Abstract

Purpose

To systematically evaluate the application of artificial intelligence (AI) techniques in X-ray sensor-based coronary angiography for cardiovascular disease (CVD) diagnosis by (i) mapping publication trends and geographic and thematic hotspots through bibliometric analysis and (ii) critically reviewing disease-specific AI methodologies and their performance, in order to inform future research and clinical integration. Non-angiographic inputs were considered only when angiography served as the reference standard or when the algorithm was explicitly integrated into an angiography-based workflow.

Methods

A two-part approach was undertaken. In Part I, we performed a bibliometric analysis of English-language original research articles and reviews published between 1 June 2010 and 1 June 2025 and retrieved from Web of Science, Scopus, and PubMed. Records were screened according to PRISMA 2020, and the 123 eligible records were analyzed with CiteSpace v6.3.R1 to identify annual publication trends, country contributions, co-authorship networks, and keyword clusters. In Part II, we conducted a structured literature review of the AI methods reported in these studies, organizing findings into three major clinical categories (acute myocardial infarction, ischemic cardiomyopathy, and unstable angina) and extracting model architectures, data sources, and diagnostic performance metrics (accuracy, sensitivity, specificity, and AUC).

Results

Bibliometric analysis revealed three publication phases: a formative period (2010–2017) with 1–3 papers/year; rapid growth from 2018 onward, culminating in a peak of 28 papers in 2022; and sustained interest into 2025. The United States (n = 39) and China (n = 34) led contributions, and keyword clustering highlighted central themes around “artificial intelligence,” “coronary artery disease,” and “computed tomography angiography.” In the disease-specific review, convolutional neural networks (CNNs) and CNN–LSTM hybrids predominated, and reported AUCs across all model types ranged from 0.724 to 0.997. For acute myocardial infarction detection, accuracies ranged from 75% to 95%, with AUCs up to 0.997; for ischemic cardiomyopathy differentiation, accuracies ranged from 75% to 98%, with AUCs up to 0.93; and for unstable angina prediction, overall accuracies ranged from 72.5% to 94.7%. Classical machine-learning models (XGBoost and random forest) also showed robust performance (AUC 0.77–0.94). Key challenges include dataset heterogeneity, limited multicenter validation, and limited model interpretability.

Conclusion

AI, particularly deep-learning frameworks, can substantially enhance the accuracy and efficiency of CVD diagnosis via X-ray coronary angiography. However, current evidence is constrained by small single-center datasets, limited external validation, inconsistent leakage safeguards, and scarce calibration/decision-curve reporting. To advance clinical adoption, future efforts should emphasize large-scale, multicenter validation studies, development of explainable AI models, and seamless integration into cardiology workflows.

Keywords

Introduction

Cardiovascular diseases (CVDs) remain the leading cause of mortality worldwide, accounting for an estimated 17.9 million deaths annually and imposing a substantial economic burden on healthcare systems. 1 Among these, coronary artery disease (CAD) is particularly prevalent, characterized by the narrowing or blockage of coronary arteries due to atherosclerotic plaque formation. 2 Early and accurate diagnosis of CAD is critical for guiding therapeutic interventions, reducing the risk of adverse cardiac events, and improving patient outcomes. 3 Although a variety of noninvasive modalities—such as electrocardiography, echocardiography, and computed tomography—offer valuable insights into cardiac function and vessel patency, X-ray sensor-based coronary angiography remains the clinical gold standard for the direct visualization of coronary anatomy and lesion severity. 4

Despite its diagnostic utility, conventional angiographic interpretation relies heavily on manual review by experienced cardiologists and radiologists, which introduces interobserver variability and prolongs procedural time.5,6 Small vessel caliber, overlapping anatomical structures, motion artifacts, and low-contrast lesions further complicate accurate assessment of stenosis severity. 7 In recent years, artificial intelligence (AI) techniques, including machine learning and deep learning, have shown considerable promise in addressing these challenges through automated image processing, quantitative analysis, and decision support. 8 By extracting high-dimensional features from angiographic sequences, AI algorithms can enhance image quality, delineate vessel boundaries, and classify pathological changes with improved consistency and efficiency. 9

Early AI approaches to coronary angiography were rooted in classical machine-learning methods, which used handcrafted feature extraction and statistical classifiers for vessel segmentation and lesion detection. Although these methods achieved moderate success in controlled settings, their reliance on manually engineered features limited generalization across variable imaging conditions and patient populations.5,8 The advent of convolutional neural networks (CNNs) and other deep architectures shifted the paradigm toward end-to-end learning, enabling models to learn hierarchical representations directly from raw angiographic images. State-of-the-art frameworks incorporate U-Net-based segmentation networks, residual learning, attention mechanisms, and generative adversarial networks (GANs) to improve pixel-level accuracy, suppress noise, and synthesize realistic vascular patterns for data augmentation. 10

Several systematic reviews have critically appraised the evidence base for AI in medicine.11,12 Although cardiology AI reviews and technique-oriented summaries exist, they typically survey broader cardiovascular imaging or specific algorithmic pipelines and do not couple a field-level mapping with a disease-focused performance synthesis restricted to X-ray sensor-based coronary angiography. Our work addresses this gap by integrating (i) a bibliometric analysis that charts publication growth, geography, collaboration, and thematic hotspots from 2010 to 2025 with (ii) a structured, disease-specific appraisal of models for acute myocardial infarction (AMI), ischemic cardiomyopathy (ICM), and unstable angina, including standardized extraction of architectures, data sources, and diagnostic metrics. This dual design is motivated by the lack of a unified, systematic appraisal of research evolution and clinical efficacy in angiography-focused AI and by the central clinical role of coronary angiography as the reference standard.

Materials and methods

The article is organized into two distinct parts. Part I presents a bibliometric analysis13,14 of AI techniques for CVD diagnosis via X-ray sensor-based coronary angiography, carried out in accordance with Donthu's five-stage framework. We first defined the study's objectives and scope—focusing exclusively on AI-driven CVD diagnosis through X-ray sensor angiography—and then retrieved records from major scientific databases. Non-English articles and document types other than original research or comprehensive reviews were excluded, after which the remaining studies were manually screened for direct relevance. The full workflow is schematized in Figure 1. Part II comprises a detailed literature review of the AI methods identified in Part I, organized by specific cardiovascular conditions. For each disease category, we examine the algorithmic approaches employed, summarize diagnostic performance metrics, discuss data-processing pipelines, and highlight clinical implications and future research directions. We have registered this review with PROSPERO (Registration ID: CRD420251143757, available at https://www.crd.york.ac.uk/PROSPERO/view/CRD420251143757).

Study selection flowchart.

Data sources

A search was conducted in three leading bibliographic databases—Web of Science (Core Collection) (Clarivate Analytics), Scopus (Elsevier), and PubMed (NCBI)—to capture the entire spectrum of research on AI-driven CVD diagnosis via X-ray sensor-based coronary angiography published over the past 15 years (1 June 2010 to 1 June 2025). This period was chosen to encompass the formative phase of AI in medical imaging—when machine learning approaches first began to appear in clinical contexts—and its subsequent rapid expansion into deep-learning and advanced algorithmic methods. 15

Search strategy

We performed a systematic literature search in Web of Science Core Collection (WoS), Scopus, and PubMed to identify all English-language articles and reviews published between 1 June 2010 and 1 June 2025 that applied AI techniques to X-ray sensor-based coronary angiography in CVD. Three concept groups (AI methods, angiographic modalities, and cardiovascular conditions) were combined using Boolean operators. The complete, database-specific search strategies (Web of Science, Scopus, and PubMed) are provided in Supplemental Table S1. Search results were limited to peer-reviewed articles and reviews in English. The study-selection process is summarized in the PRISMA-style flowchart (Figure 1), which yielded 123 articles for subsequent bibliometric analysis. Two reviewers independently screened all records at both the title/abstract and full-text stages against the predefined eligibility criteria.
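The three-group Boolean design can be sketched as a small query builder: synonyms within a concept are joined with OR, and the concepts are joined with AND so that a record must match all three. The terms below are hypothetical placeholders for illustration only; the actual database-specific strategies are those in Supplemental Table S1, and field tags and truncation syntax differ between Web of Science, Scopus, and PubMed, so a real query would need per-database adaptation.

```python
# Hypothetical example terms; the actual strategies are in Supplemental Table S1.
AI_TERMS = ["artificial intelligence", "machine learning", "deep learning", "neural network"]
ANGIO_TERMS = ["coronary angiography", "angiogram", "fractional flow reserve"]
CVD_TERMS = ["coronary artery disease", "myocardial infarction", "angina"]


def or_group(terms):
    """Quote each phrase and join synonyms with OR inside one parenthesized group."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(*groups):
    """AND the concept groups so a record must match at least one term from every group."""
    return " AND ".join(or_group(group) for group in groups)


query = build_query(AI_TERMS, ANGIO_TERMS, CVD_TERMS)
print(query)
```

OR within a group keeps recall high for each concept, while AND between groups restricts results to records where all three concepts co-occur.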

Eligibility criteria and study selection

We prespecified eligibility criteria following PRISMA 2020. For the bibliometric corpus (Part I), we included English-language, peer-reviewed journal articles or reviews published between 1 June 2010 and 1 June 2025 that applied AI methods to X-ray sensor-based coronary angiography (including invasive coronary angiography, quantitative coronary angiography (QCA), and coronary CT angiography/FFR-CT) in the context of CVD. Non-angiographic inputs were considered only when angiography served as the reference standard or when the algorithm was explicitly integrated into an angiography-based workflow. We excluded conference abstracts/proceedings, preprints, theses, editorials, letters, commentaries, non-English items, and records not indexed as “Article” or “Review.” When both a conference version and a subsequent peer-reviewed journal article existed, only the journal version was retained; duplicates were removed by DOI/PMID, title, and author cross-checking. For the disease-specific review (Part II), additional inclusion thresholds required (i) reporting at least one diagnostic performance metric (e.g. AUC, accuracy, or sensitivity/specificity) and (ii) sufficient methodological detail on model architecture and data sources to permit appraisal. Studies leveraging non-angiographic signals (e.g. ECG or hemodynamic surrogates) were eligible only if angiography provided the reference standard, the outcome was angiography-confirmed, or the method was explicitly integrated into an angiography-based workflow. We excluded purely technical/image-processing reports without diagnostic evaluation against a clinical reference, simulation-only work, and studies lacking quantitative performance. Two reviewers independently screened titles/abstracts and full texts against these criteria; any disagreements were resolved through discussion between the two reviewers. The PRISMA flow diagram (Figure 1) summarizes the selection process, which yielded 123 records for analysis.
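The deduplication step (DOI/PMID, title, and author cross-checking) can be sketched as follows. The record fields (`doi`, `pmid`, `title`, `first_author`) are assumed names for illustration and the example records are invented. The sketch keeps whichever record appears first, so journal versions would have to be sorted ahead of conference versions to reproduce the journal-version rule, and borderline matches would still need manual checking.

```python
import re


def normalize_title(title):
    """Lowercase and drop everything except letters and digits so trivially different titles match."""
    return re.sub(r"[^a-z0-9]", "", title.lower())


def record_keys(rec):
    """Identifier keys for one record; the field names are assumptions about the export format."""
    keys = {("title", normalize_title(rec["title"]), rec.get("first_author", "").lower())}
    if rec.get("doi"):
        keys.add(("doi", rec["doi"].lower()))
    if rec.get("pmid"):
        keys.add(("pmid", str(rec["pmid"])))
    return keys


def deduplicate(records):
    """Keep the first record for any shared DOI, PMID, or (normalized title, first author)."""
    seen, unique = set(), []
    for rec in records:
        keys = record_keys(rec)
        is_duplicate = bool(keys & seen)
        seen |= keys  # remember every identifier, including those of dropped duplicates
        if not is_duplicate:
            unique.append(rec)
    return unique


# Invented records: the second matches the first by DOI, the third matches the second by PMID.
records = [
    {"doi": "10.1000/abc", "title": "Deep learning for angiography", "first_author": "Lee"},
    {"doi": "10.1000/ABC", "pmid": 123, "title": "Deep Learning for Angiography.", "first_author": "Lee"},
    {"pmid": 123, "title": "A re-indexed copy", "first_author": "Lee"},
    {"doi": "10.1000/xyz", "title": "A different study", "first_author": "Wu"},
]
unique = deduplicate(records)
```

Remembering the identifiers of dropped duplicates matters: the third record shares only a PMID with the second, which was itself a duplicate, yet it is still caught.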

Risk of bias

To evaluate potential sources of bias, two independent reviewers performed a methodological quality assessment. For randomized and comparative studies, the Cochrane Risk of Bias Tool, version 2, was applied. For diagnostic accuracy studies, risk of bias was assessed using the QUADAS-AI 16 instrument—an artificial intelligence–specific adaptation of the original QUADAS-2 17 framework. QUADAS-AI evaluates four key domains: (1) patient selection, assessing whether participants were consecutively enrolled and representative of the target population; (2) index test (AI), determining whether the AI model was pre-specified, free from data leakage, and validated externally; (3) reference standard, verifying whether the reference test (e.g. coronary angiography or clinical diagnosis) was reliable and independently applied; and (4) flow and timing, assessing whether all participants were included in the analysis and whether the time interval between the index test and the reference standard was appropriate. An overall judgment of risk of bias was subsequently derived for each included study.
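The text does not state how the four domain judgments were combined into the overall judgment. The sketch below assumes one common convention adapted from QUADAS-2 practice: "high" if any domain is high, "low" only if every domain is low, and "unclear" otherwise. The domain keys and the example ratings are hypothetical.

```python
DOMAINS = ("patient_selection", "index_test", "reference_standard", "flow_and_timing")
VALID = {"low", "high", "unclear"}


def overall_risk(ratings):
    """Combine four domain-level judgments into one overall risk-of-bias judgment.

    Assumed rule (not stated in the review): 'high' if any domain is high,
    'low' only if every domain is low, otherwise 'unclear'.
    """
    for domain in DOMAINS:
        if ratings.get(domain) not in VALID:
            raise ValueError(f"missing or invalid rating for {domain!r}")
    values = [ratings[d] for d in DOMAINS]
    if "high" in values:
        return "high"
    if all(v == "low" for v in values):
        return "low"
    return "unclear"


# Hypothetical study: external validation not reported, so the index-test domain is unclear.
example = {"patient_selection": "low", "index_test": "unclear",
           "reference_standard": "low", "flow_and_timing": "low"}
print(overall_risk(example))
```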

Data analysis and visualization

All retrieved records were exported in plain-text format—including complete bibliographic entries and their cited references—and imported into CiteSpace v6.3.R1 (64-bit) Basic, a bibliometric visualization tool developed by Chaomei Chen at Drexel University, USA. 18 CiteSpace applies citation analysis to pinpoint seminal works, emerging research hotspots, and influential authors, and it constructs temporal network maps that depict the evolution of a given field. 19 These graphical representations of knowledge structures are commonly referred to as scientific knowledge maps. 20

Results

Global trends in publications and citations

Analysis of annual publication output on AI-based coronary angiographic imaging reveals three distinct phases between 2010 and mid-2025 (Figure 2). During the formative period from 2010 to 2017, scholarly activity was minimal and relatively flat, with yearly article counts oscillating between one and three (mean = 1.9 publications per year). Beginning in 2018, the field entered a phase of sustained expansion: output rose from three articles in 2018 to five in 2019, nine in 2020, and 12 in 2021. This upsurge culminated in a first major peak of 28 publications in 2022. Although output contracted temporarily to 13 articles in 2023, it recovered strongly to 23 publications in 2024. Partial-year data for 2025 (through the 1 June cutoff) already record 16 articles, indicating that scholarly interest remains high.
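The growth phase can be quantified from the annual counts reported above. A compound annual growth rate (CAGR) is not reported by the authors, so the sketch below is only a derived illustration. With counts this small it is sensitive to single-year fluctuations such as the 2023 dip, and 2025 is excluded because it covers only part of the year.

```python
# Annual counts for 2018-2024 as reported in the text; 2025 is a partial year and is left out.
counts = {2018: 3, 2019: 5, 2020: 9, 2021: 12, 2022: 28, 2023: 13, 2024: 23}


def cagr(start, end, years):
    """Compound annual growth rate between two counts `years` apart."""
    return (end / start) ** (1 / years) - 1


growth_2018_2022 = cagr(counts[2018], counts[2022], 2022 - 2018)
year_over_year = {y: counts[y] - counts[y - 1] for y in sorted(counts) if y - 1 in counts}

print(f"CAGR 2018-2022: {growth_2018_2022:.1%}")
print(year_over_year)
```

For 2018 to 2022 this gives a CAGR of about 75% per year, which puts a number on the "rapid growth" phase.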

Annual publication trends for artificial intelligence (AI)-driven coronary angiographic analysis from 2010 through mid-2025.

Distribution of publications across countries and regions

Analysis of geographic contribution revealed that the United States (n = 39) and China (n = 34) were the most prolific sources of publications, followed by Italy (n = 17), Germany (n = 14), South Korea (n = 13), the Netherlands (n = 12), Spain (n = 10), Canada (n = 9), Japan (n = 8), and Switzerland (n = 8) (Figure 3(a)). These 10 countries together accounted for the majority of articles in the field; their combined count (164) exceeds the 123 records in the corpus because multinational papers are credited to each contributing country. International collaboration patterns were visualized via a country-level co-authorship network (Figure 3(b)). In this network, node size is proportional to each country's publication count, and edge thickness corresponds to the number of joint publications between two countries. The United States and China emerged as the principal hubs, exhibiting the largest nodes and the highest degree of connectivity. A densely interconnected European cluster, including Italy, Germany, the Netherlands, Spain, Switzerland, and the United Kingdom, was also apparent. Strong bilateral ties were observed between the United States and Canada, the United States and Germany, and China and South Korea. In addition, East Asian contributors such as Japan and Singapore, along with Middle Eastern countries (notably Israel), featured prominently within the global collaboration network.
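The node and edge weights described above can be sketched from per-paper lists of author countries. This is a minimal illustration that assumes full counting (each country credited once per paper). The country lists are invented, and CiteSpace's own counting and pruning settings are not reproduced here.

```python
from collections import Counter
from itertools import combinations


def country_network(papers):
    """Node weights (papers per country) and edge weights (joint papers per country pair).

    Each paper is a list of author countries; a country counts once per paper
    (full counting), and each unordered country pair counts once per paper.
    """
    nodes, edges = Counter(), Counter()
    for countries in papers:
        unique = sorted(set(countries))
        nodes.update(unique)
        edges.update(combinations(unique, 2))
    return nodes, edges


def degree(edges):
    """Number of distinct partner countries for each country."""
    deg = Counter()
    for a, b in edges:
        deg[a] += 1
        deg[b] += 1
    return deg


# Invented affiliations, for illustration only.
papers = [["USA", "Canada"], ["USA", "Germany", "USA"], ["China", "South Korea"],
          ["USA", "China"], ["Italy"]]
nodes, edges = country_network(papers)
deg = degree(edges)
```

Full counting makes country totals exceed the number of papers whenever papers are multinational. Fractional counting, which splits each paper's credit among its countries, is a common alternative when totals should sum to the corpus size.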

Global output and collaboration in artificial intelligence (AI)-driven coronary angiography. (a) Top-10 countries by publication count (2010–2025): the USA 39, China 34, Italy 17, Germany 14, South Korea 13, The Netherlands 12, Spain 10, Canada 9, Japan 8, and Switzerland 8. (b) Country co-authorship network: node size ∝ output, edge thickness ∝ collaboration. The USA and China are the main hubs; Europe forms a dense cluster with additional bridges across East Asia and the Middle East.

Distribution of keywords

The keyword co-occurrence network (Figure 4(a)) was constructed from 726 unique terms appearing in the 123 records; only keywords with ≥10 occurrences were retained for visualization. Nodes represent keywords, node size reflects frequency, and link thickness indicates co-occurrence strength. Burst-detection analysis identified the top 25 keywords with the strongest citation bursts between 2010 and 2025 (Figure 4(b)). Early bursts (2010–2013) included “triage,” “acute myocardial infarction,” and “blood flow,” reflecting an initial emphasis on emergency care and hemodynamic assessment. Mid-period bursts (2014–2018), such as “classification,” “diagnostic performance,” and “guidelines,” coincided with the rise of algorithmic validation and clinical protocol development. In the most recent interval (2019–2025), “machine learning” and “deep learning” exhibited the strongest bursts, underscoring the rapid adoption of advanced neural architectures. Figure 4(d) summarizes the frequency of the top 10 keywords: “coronary artery disease” (n = 31), “myocardial infarction” (n = 23), “artificial intelligence” (n = 17), “acute coronary syndrome” (n = 17), “machine learning” (n = 16), “prevention” (n = 16), “stent” (n = 14), “guidelines” (n = 13), “diagnosis” (n = 13), and “disease” (n = 11). The prominence of CAD-related terms alongside AI-methodology keywords highlights the dual focus on specific clinical conditions and computational approaches. Cluster analysis, with cluster labels generated by the log-likelihood ratio (LLR) algorithm, yielded 10 major clusters (Figure 4(c)): #1 emergency department (purple), #2 accuracy (yellow), #3 coronary artery disease (green), #4 coronary CT angiography (light green), #5 AI (red), #6 prognosis (teal), #7 coronary angiography (blue), #8 echocardiography (navy), #9 prevention (orange), and #10 databases (magenta). Cluster #5 (AI) was the largest (n = 152 nodes), followed by clusters #3 and #4, indicating that AI methods and their application in CAD and CT-based imaging constitute the central themes of the field.
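The counting behind the co-occurrence map and the LLR cluster labels can be sketched as follows. This is an illustration, not CiteSpace's implementation. It assumes each record contributes a set of keywords and applies the frequency threshold before building edges. It then scores a candidate label term with Dunning's log-likelihood ratio (G²), where a higher value means the term is more specific to the cluster. The toy records and the threshold of 3 (rather than 10) are invented for the example.

```python
import math
from collections import Counter
from itertools import combinations


def keyword_network(records, min_freq):
    """Keyword frequencies and pairwise co-occurrence counts, keeping keywords seen >= min_freq times."""
    freq, pairs = Counter(), Counter()
    for keywords in records:
        unique = sorted({k.lower() for k in keywords})  # count each keyword once per record
        freq.update(unique)
        pairs.update(combinations(unique, 2))
    kept = {k: n for k, n in freq.items() if n >= min_freq}
    kept_pairs = {p: n for p, n in pairs.items() if p[0] in kept and p[1] in kept}
    return kept, kept_pairs


def _binom_ll(k, n, p):
    """Binomial log-likelihood of k hits in n trials, skipping 0*log(0) terms."""
    return (k * math.log(p) if k else 0.0) + ((n - k) * math.log(1 - p) if n - k else 0.0)


def llr(k_in, n_in, k_out, n_out):
    """Dunning's log-likelihood ratio (G^2): is a term over-represented inside a cluster?

    k_in of n_in records in the cluster contain the term; k_out of n_out outside do.
    """
    p_in, p_out = k_in / n_in, k_out / n_out
    p_all = (k_in + k_out) / (n_in + n_out)
    return 2 * (_binom_ll(k_in, n_in, p_in) + _binom_ll(k_out, n_out, p_out)
                - _binom_ll(k_in, n_in, p_all) - _binom_ll(k_out, n_out, p_all))


records = [["AI", "CAD"], ["ai", "cad", "stent"], ["AI", "stent"], ["CAD"]]
kept, kept_pairs = keyword_network(records, min_freq=3)
```

A term that appears at the same rate inside and outside a cluster scores zero. The more skewed its distribution toward the cluster, the higher its G², which is why the top-scoring term serves as the cluster label.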

Keyword structure and trends in artificial intelligence (AI)-driven coronary angiography (2010–2025). (a) Co-occurrence map of author keywords from 123 papers; node size = frequency, edge weight = co-use. Coronary artery disease, myocardial infarction, and artificial intelligence are central terms. (b) Citation-burst keywords indicating periods of rapid attention. (c) Ten thematic clusters (colors) summarize major topics. (d) Top-10 keyword frequencies; leading terms include coronary artery disease (31), myocardial infarction (23), and artificial intelligence (17). (e) Timeline view showing a shift from early procedural terms (e.g. stent and fractional flow reserve) to AI-related terms (machine/deep learning and validation) after ∼2018.

The timeline view of keyword co-occurrence (Figure 4(e)) reveals topic evolution over time. In the 2010–2012 slice, procedural and device-oriented terms (e.g. “stent,” “fractional flow reserve (FFR),” and “coronary angiography”) dominated. Between 2013 and 2017, keywords such as “atherosclerosis,” “intervention,” and “computed tomography angiography” gained traction. From 2018 onward, the network is increasingly driven by AI-centric terms—“artificial intelligence,” “classification,” “deep learning,” and “validation”—reflecting a clear shift toward methodological innovation and performance evaluation in coronary angiographic analysis.

Disease-specific analysis of AI techniques in X-ray sensor-based coronary angiography

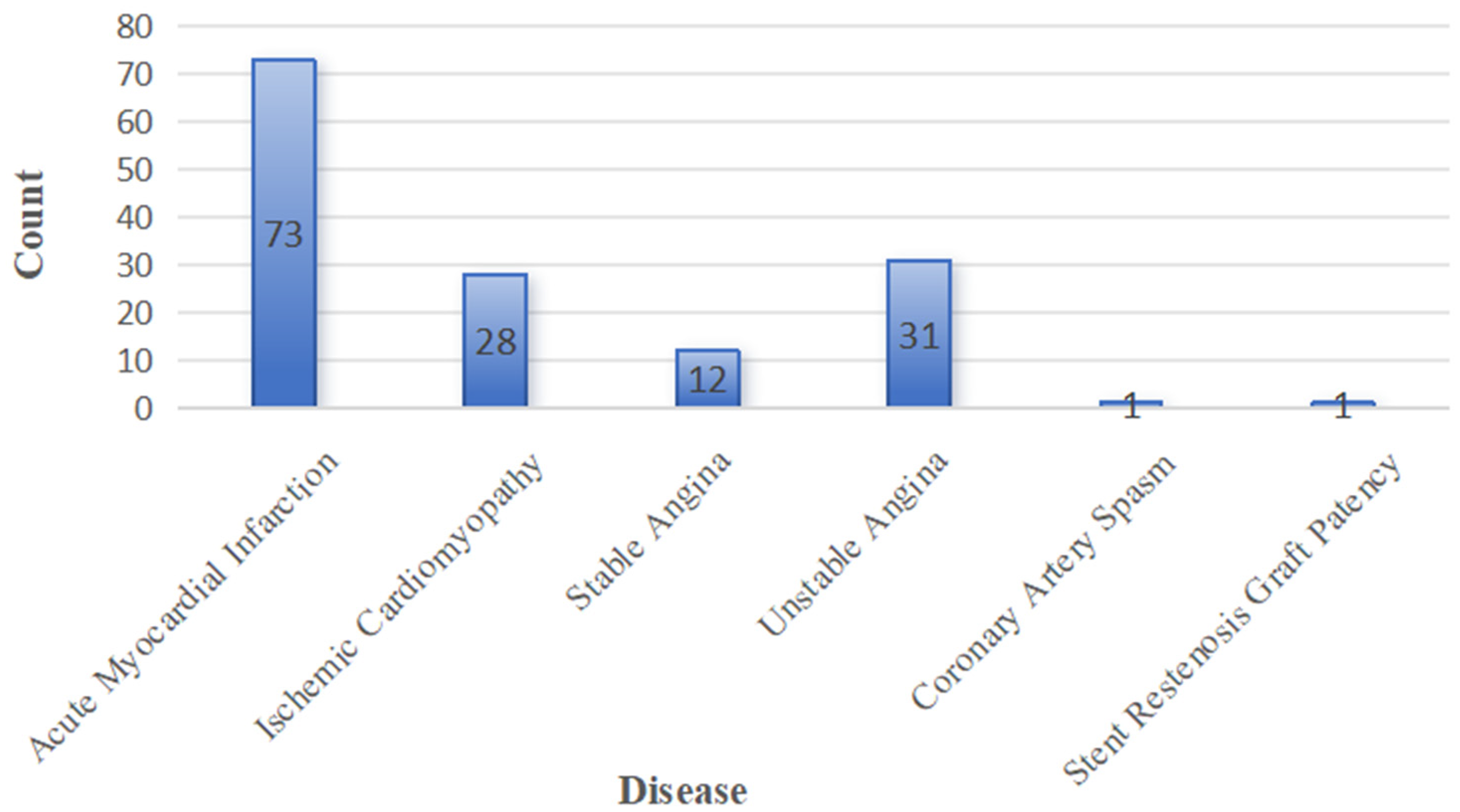

Figure 5 shows the number of cases in which various coronary pathologies were identified using AI techniques applied to X-ray sensor-based coronary angiography. Acute myocardial infarction accounted for the largest share (n = 73), followed by unstable angina (n = 31), ICM (n = 28), and stable angina (n = 12). Less frequent conditions included coronary artery spasm and stent restenosis/graft patency (one case each), indicating that AI applications extend beyond common presentations to rarer vascular conditions, although evidence for the latter remains sparse.

Distribution of cardiovascular diseases diagnosed by artificial intelligence (AI)-enhanced X-ray sensor-based coronary angiography.

As the volume of publications addressing acute myocardial infarction, ICM, and unstable angina is particularly large, we refined our corpus to include only those studies that provided explicit AI model performance metrics (accuracy, sensitivity, specificity, AUC, etc.). In the following subsections, we present a detailed, study-by-study analysis of each of these three disease categories.

(1) Diagnosis of acute myocardial infarction

A total of 22 studies applied AI algorithms to the diagnosis of AMI or related acute coronary syndromes, drawing on diverse data sources, including X-ray sensor-based coronary angiography (CAG) images, 12-lead ECGs, coronary CT angiography (CCTA), hybrid imaging, and hemodynamic parameters (Table 1; full study-by-study details are provided in Supplemental Table S2). CNNs and their extensions were the most frequently employed architectures. Lee et al. used a CNN model on 18,697 ECGs both to detect ST-segment-elevation myocardial infarction (STEMI) and to trigger catheterization-laboratory activation, achieving 92.1% accuracy, 95.4% sensitivity, and 91.8% specificity. Goktekin et al. introduced a multi-input, multi-scale ConvMixer network trained on 1321 twelve-lead ECGs, yielding 88.7% accuracy in automated STEMI detection.

Concise summary of AI tasks, data modalities, and reported performance in AMI/CAD.

Rows summarize typical applications, data sources, and representative model families. Performance values are ranges reported in the included studies.

AI: artificial intelligence; AMI: acute myocardial infarction; CAD: computer-aided diagnosis; ECG: electrocardiogram; OHCA: out-of-hospital cardiac arrest; CT: computed tomography; OCT: optical coherence tomography; STEMI: ST-segment–elevation myocardial infarction; CNN: convolutional neural network; LSTM: long short-term memory; DLM: deep learning model; AUC: area under the curve; BP-NN: back propagation neural network; DNN: deep neural network; FFR: fractional flow reserve.

Beyond pure CNN frameworks, several studies combined convolutional architectures with temporal or specialized network modules. Wu et al. developed a CNN–LSTM hybrid system using 506 control and 377 STEMI ECGs, which achieved an AUC of 0.99 for both STEMI identification and culprit vessel prediction. Liu et al. constructed a deep-learning model on 287 CAG-confirmed AMI ECGs and over 140,000 non-AMI controls, reaching an AUC of 0.997, with 98.4% sensitivity and 96.9% specificity. Sawano et al. applied a DNN to 10 frames from each of 5923 CAG video clips (572 patients) to estimate vascular age—a surrogate for acute coronary syndrome risk—and obtained an AUC of 0.839 (sensitivity 74%, specificity 76%, and overall accuracy 75%). Choi et al. leveraged a ResNet-based model on ECG traces extracted during CAG to distinguish AMI from obstructive CAD, reporting 88.5% accuracy (sensitivity 76.9%, specificity 92.1%, and F1 = 0.758).
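The metrics quoted throughout this section all derive from the same 2×2 confusion matrix. The helper below is a generic sketch with invented counts, not data from any included study. It also shows why accuracy alone can mislead: accuracy is weighted by class prevalence, so when negatives far outnumber positives it tracks specificity more closely than sensitivity. This is consistent with the 88.5% accuracy reported above alongside 76.9% sensitivity and 92.1% specificity.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Binary diagnostic metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)  # true-positive rate (recall)
    specificity = tn / (tn + fp)  # true-negative rate
    ppv = tp / (tp + fp)          # positive predictive value (precision)
    npv = tn / (tn + fn)          # negative predictive value
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    f1 = 2 * ppv * sensitivity / (ppv + sensitivity)  # harmonic mean of PPV and sensitivity
    return {"sensitivity": sensitivity, "specificity": specificity, "ppv": ppv,
            "npv": npv, "accuracy": accuracy, "f1": f1}


# Hypothetical counts: 100 diseased and 200 non-diseased cases.
metrics = diagnostic_metrics(tp=80, fp=20, tn=180, fn=20)
print({name: round(value, 3) for name, value in metrics.items()})
```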

Classical machine-learning approaches based on engineered features also demonstrated robust diagnostic performance. Wu et al. applied a LASSO regression to 180 automated ECG features, achieving AUCs of 0.92 and 0.98 in internal and external validations, respectively. Zheng et al. combined echocardiographic measurements with angiography-derived FFR (caFFR) and index of microcirculatory resistance (caIMR) in a random forest model for STEMI patients (n = 157), yielding an AUC of 0.85. XGBoost classifiers were used by Shi et al. to predict chronic total occlusion (AUC = 0.724) and by Pareek et al. to identify culprit lesions in out-of-hospital cardiac-arrest survivors (AUC = 0.89). Early neural-network work by Forberg et al. validated an artificial neural network on 560 prehospital ECGs for STEMI triage, achieving 97% sensitivity and 68% specificity (AUC = 0.94). Although constrained by handcrafted features and modest specificity, this study exemplifies the formative stage of AI applications in coronary angiography, paving the way for subsequent deep-learning-based approaches with superior robustness and scalability. Across these modalities and model types, reported AUCs ranged from 0.724 to 0.997 and overall accuracies from 75% to 95%, underscoring the strong potential of AI-driven systems to support rapid and reliable AMI diagnosis.
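LASSO's built-in feature selection is what allows it to reduce a large pool of automated ECG features to a sparse subset. A minimal coordinate-descent solver illustrates this behavior. This is a generic sketch, not the implementation used by Wu et al. For simplicity it uses a squared-error loss, whereas a binary diagnostic outcome would normally be fit with an L1-penalized logistic model. The two-feature data set is invented.

```python
def soft_threshold(z, lam):
    """Proximal operator of the L1 penalty: shrink z toward zero by lam, clipping at zero."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0


def lasso_cd(X, y, lam, n_iter=100):
    """Minimize (1/2n)*||y - Xb||^2 + lam*||b||_1 by cyclic coordinate descent.

    Assumes the columns of X and the target y are already centered (no intercept)
    and that no column is constant.
    """
    n, p = len(X), len(X[0])
    b = [0.0] * p
    col_sq = [sum(row[j] ** 2 for row in X) / n for j in range(p)]
    resid = list(y)  # y - Xb, with b starting at zero
    for _ in range(n_iter):
        for j in range(p):
            # Correlation of feature j with the residual that excludes feature j's own contribution.
            rho = sum(X[i][j] * (resid[i] + X[i][j] * b[j]) for i in range(n)) / n
            new_bj = soft_threshold(rho, lam) / col_sq[j]
            delta = new_bj - b[j]
            for i in range(n):
                resid[i] -= X[i][j] * delta
            b[j] = new_bj
    return b


# Toy data: only the first (hypothetical) feature drives the outcome.
X = [[1, 1], [-1, 1], [1, -1], [-1, -1]]
y = [2, -2, 2, -2]
coef = lasso_cd(X, y, lam=0.5)
```

With `lam=0.5`, the uninformative second feature is set exactly to zero while the first is shrunk from 2 to 1.5. Raising `lam` to 3.0 zeroes both, which shows how the penalty strength controls how many features survive.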

Comparative synthesis (AMI). CNNs and CNN–LSTM hybrids achieve the highest performance on large ECG or cine-angiography datasets with clear reference standards (AUC up to ∼0.99–1.00). Temporal models (e.g. CNN–LSTM) outperform static CNNs on sequential signals/frames. CNNs on CCTA/OCT also show strong discrimination (AUC ≈ 0.98). Classical ML (LASSO/XGBoost/RF) is competitive on structured clinical/physiology features, typically with AUC ≈ 0.72–0.89, and improves when caFFR/caIMR or other physiology-derived variables are included.
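Because the synthesis above compares studies mainly by AUC, it helps to recall what the statistic measures. AUC is the probability that the model ranks a randomly chosen diseased case above a randomly chosen non-diseased one, and it does not depend on any decision threshold. The quadratic-time sketch below uses invented scores. AUC also says nothing about calibration, which would need to be assessed separately.

```python
def auc_mann_whitney(pos_scores, neg_scores):
    """AUC as the probability that a random positive case scores higher than a random negative one.

    Ties count as one half; this equals the Mann-Whitney U statistic divided by n_pos * n_neg.
    """
    wins = 0.0
    for sp in pos_scores:
        for sn in neg_scores:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


# Invented model outputs for three positive and four negative cases.
auc = auc_mann_whitney([0.9, 0.8, 0.4], [0.7, 0.3, 0.2, 0.4])
print(auc)
```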

(2) Diagnosis of ICM

A total of nine studies applied machine-learning and deep-learning algorithms to the identification and characterization of ICM and related heart-failure phenotypes using echocardiographic, electrocardiographic, and hemodynamic data (Table 2; full study-by-study details are provided in Supplemental Table S3). Zhou et al. leveraged an XGBoost classifier trained on echocardiographic measurements from 200 dilated cardiomyopathy (DCM) and 199 ICM patients, achieving a sensitivity of 72%, specificity of 78%, overall accuracy of 75%, and an AUC of 0.934 for automated differentiation between ICM and DCM. In a similarly focused comparison, Han et al. applied variational mode decomposition combined with higher-order spectral analysis to ECG recordings from 75 subjects (38 DCM and 37 ICM), reporting an accuracy of 98.21%, sensitivity of 98.22%, and specificity of 98.19%.
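Accuracy is a prevalence-weighted average of sensitivity (Se) and specificity (Sp), which provides a quick consistency check on the figures reported by Zhou et al. The calculation below assumes that the 199 ICM patients form the positive class and the 200 DCM patients the negative class. With classes this balanced, swapping them changes the result only in the fourth decimal place.

$$
\mathrm{Acc} = \frac{\mathrm{Se}\, n_{+} + \mathrm{Sp}\, n_{-}}{n_{+} + n_{-}}
= \frac{0.72 \times 199 + 0.78 \times 200}{399}
= \frac{299.28}{399} \approx 0.750
$$

This agrees with the reported 75% accuracy.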

Concise summary of data modalities, tasks, and performance for ischemic cardiomyopathy (ICM) diagnosis.

Performance values are ranges reported in the included studies.

Efforts to predict heart-failure severity and related clinical outcomes in ICM or post-AMI cohorts have employed both explainable and recurrent network models. Guo et al. developed a TabNet-multilayer perceptron framework using multidimensional clinical features from 1574 AMI patients to stratify heart-failure severity, yielding an AUC of 0.827. Wang et al. proposed a long-term recurrent convolutional network (LRCN) that integrates convolutional and LSTM layers to classify serum-electrolyte disorders in 169 heart-failure patients, delivering 89.0% accuracy, 91.7% sensitivity, and 81.5% specificity. Otaki et al. applied a deep-learning model to single-photon emission computed tomography myocardial-perfusion images from 3578 patients with suspected CAD, achieving an AUC of 0.83 for the detection of obstructive lesions.

Machine-learning classifiers trained on waveform and exercise-ECG features have demonstrated moderate-to-high diagnostic performance. Razavi et al. used a CatBoost decision-tree model on aortic-pressure waveform parameters from 497 STEMI patients to predict prolonged hospitalization after PCI, reporting 77% accuracy (sensitivity 76%, specificity 78%, and AUC 0.77). Zhang et al. employed XGBoost on high-frequency QRS analyses of cyclic exercise ECGs in 140 hospitalized patients and 59 healthy volunteers, achieving 70% sensitivity and 80% specificity for angiographic CAD prediction.

Finally, ANNs have been utilized for prognostic modeling in post-resuscitation and biomarker studies. Chiu et al. trained an ANN on 580 out-of-hospital cardiac-arrest patients who achieved ROSC and underwent targeted temperature management, yielding an AUC of 0.87, accuracy of 86.7%, sensitivity of 77.7%, and specificity of 88.0%. Kalay et al. investigated VPO1 and ATF4 as biomarkers of endoplasmic-reticulum and oxidative stress in 80 patients stratified by single-, double-/triple-vessel, and no-vessel disease; their ANN model attained an AUC of 0.82 for distinguishing disease extent. Collectively, these studies (Table 2) highlight the versatility of AI approaches in differentiating ICM from other cardiomyopathies, stratifying heart-failure severity, and predicting key clinical outcomes.

Comparative synthesis (ICM). Tree-based ML (e.g. XGBoost/CatBoost) shows strong discrimination on structured echocardiographic/clinical features for ICM versus DCM or CAD severity (AUC ≈ 0.77–0.93). Deep-learning models on imaging signals (e.g. SPECT MPI) achieve AUC ≈ 0.83, while sequential/temporal architectures (e.g. LRCN) perform well on time-series tasks (accuracy ≈ 89%). Signal-processing + DL/ML pipelines (e.g. VMD + higher-order spectra) report very high accuracies (∼98%) on small ECG datasets. ANN/ML prognostic models for post-resuscitation or biomarker-based stratification yield AUC ≈ 0.82–0.87. Overall, tree-based models excel with heterogeneous structured features, whereas DL and recurrent models are preferable for imaging or sequential signals.

(3) Diagnosis of unstable angina

Six studies evaluated machine-learning and deep-learning approaches for the diagnosis and functional characterization of unstable angina (Table 3). Jamthikar et al. employed an ensemble-learning-driven algorithm on baseline clinical and echocardiographic features from 459 patients, demonstrating superior performance over conventional machine-learning methods in predicting cardiovascular events (including coronary artery disease and acute coronary syndromes), with a sensitivity of 91.8%, specificity of 85.1%, positive predictive value of 91.1%, negative predictive value of 87.1%, F1-score of 88.5%, and overall accuracy of 89.2%. Al’Aref et al. trained an XGBoost classifier on qualitative and quantitative CT plaque characteristics across 864 lesions in 234 non-ACS patients, achieving a specificity of 89.3% and an AUC of 0.774 for identifying precursors of culprit lesions in ACS.

Summary of artificial intelligence (AI) models, input data, applications, and performance metrics for unstable angina diagnosis.

Juan-Salvadores et al. applied a random-forest model to clinical data from 506 patients to predict major adverse cardiovascular events, obtaining an AUC of 0.79 and outperforming logistic-regression benchmarks in variable selection and risk stratification. Choi et al. compared traditional logistic regression with multiple machine-learning techniques in a cohort of 1893 patients and reported that the ML-based model achieved an AUC of 0.81 and an accuracy of 72.5% while identifying the most critical clinical predictors.

Beyond event prediction, recent work has focused on deriving functional indices from imaging and hemodynamic inputs. De Filippo et al. developed an AI-based application to compute FFR and instantaneous wave-free ratio (iFR) directly from conventional coronary angiograms in 389 patients, achieving 87.3% accuracy with 82.4% sensitivity and 92.2% specificity. Zhang et al. implemented a fully automated AI pipeline for CCTA image processing in 1801 adults, reporting 94.7% accuracy in lesion detection and classification without manual intervention. Collectively, these findings underscore the versatility of modern AI frameworks, from ensemble tree-based classifiers to end-to-end automated systems, in improving the diagnostic precision and functional evaluation of unstable angina.
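For readers less familiar with the indices that AI-based angiographic tools approximate, the definitions can be sketched as pressure ratios. The code shows only the conventional wire-based definitions, applied to invented pressure samples. The method De Filippo et al.'s application uses to estimate these values from angiograms is not described here and is not reproduced. The cut-offs (FFR ≤ 0.80, iFR ≤ 0.89) are the thresholds commonly used to flag hemodynamically significant stenoses.

```python
def mean(values):
    return sum(values) / len(values)


def ffr(pd_hyperemia, pa_hyperemia):
    """FFR: mean distal coronary pressure / mean aortic pressure during maximal hyperemia."""
    return mean(pd_hyperemia) / mean(pa_hyperemia)


def ifr(pd_wave_free, pa_wave_free):
    """iFR: the same ratio at rest, restricted to samples from the diastolic wave-free period."""
    return mean(pd_wave_free) / mean(pa_wave_free)


def is_significant(ffr_value=None, ifr_value=None, ffr_cutoff=0.80, ifr_cutoff=0.89):
    """Flag each available index against its commonly used ischemic cut-off."""
    flags = {}
    if ffr_value is not None:
        flags["ffr"] = ffr_value <= ffr_cutoff
    if ifr_value is not None:
        flags["ifr"] = ifr_value <= ifr_cutoff
    return flags


# Invented pressure samples in mmHg.
ffr_val = ffr([70, 72, 74], [95, 96, 97])  # 72 / 96 = 0.75
ifr_val = ifr([84, 85, 86], [94, 95, 96])  # 85 / 95, about 0.895
print(is_significant(ffr_val, ifr_val))
```

In this invented example the two indices disagree: the FFR falls below its cut-off while the iFR sits just above its own. Discordant cases like this are one reason angiography-derived tools are validated against a specific reference index.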

Comparative synthesis (UA). End-to-end automated CCTA pipelines show the highest accuracy for lesion detection/classification (94.7%) on large imaging cohorts. AI-based FFR/iFR estimation from conventional coronary angiograms attains balanced discrimination (accuracy 87.3%, specificity ≈ 92%) when hemodynamic surrogates are derived from angiograms. On structured clinical/echocardiographic/CT-plaque features, tree-based ML (ensemble learning/XGBoost/RF) performs consistently in the upper-mid range (AUC ≈ 0.77–0.81; accuracy up to 89%) and outperforms logistic regression for risk prediction and variable selection. Overall, image-centric, fully automated pipelines excel on large imaging datasets, whereas ensemble/tree-based models are competitive for structured feature sets and event-risk prediction.

Discussion

This systematic review highlights the growing impact of AI techniques on the diagnosis of CVDs via X-ray sensor-based coronary angiography. Our bibliometric analysis revealed distinct growth phases in research output, indicating escalating scholarly interest, particularly from 2018 onward. This rising interest aligns with the increased use and validation of DL methods, notably CNNs, which have frequently been reported to outperform traditional manual interpretation.

In our detailed literature review, CNN-based frameworks were notably prominent, achieving high accuracy, sensitivity, and specificity across various cardiovascular conditions, including AMI, ICM, and unstable angina. Similarly, CNN–LSTM hybrid models demonstrated exceptionally high diagnostic performance, with AUC values as high as 0.99 (Wu et al., 2022), underscoring the robustness of hybrid DL architectures that integrate spatial and temporal angiographic features. Classical ML techniques also displayed substantial diagnostic potential, especially when combined with clinical data and engineered imaging features. For example, XGBoost and random forest algorithms provided reliable predictive performance in identifying chronic total occlusions and culprit lesions, respectively, highlighting the continued relevance of traditional ML techniques in contexts where interpretability and computational efficiency are prioritized. Beyond CNN-based pipelines, attention-centric models, including Vision Transformers (ViTs)62 and hierarchical variants such as Swin Transformers,63 are gaining traction in medical imaging tasks that benefit from global receptive fields and long-range dependency modeling.64 Although still underrepresented within X-ray sensor-based coronary angiography, early applications and evidence from adjacent cardiovascular imaging indicate competitive or superior performance on classification and segmentation tasks, particularly when data are sufficiently large or when self-supervised pretraining is available.
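The division of labor in CNN–LSTM hybrids (spatial features per frame, temporal integration across the cine sequence) can be illustrated with a minimal NumPy sketch: a single convolutional layer with ReLU and global average pooling summarizes each frame, a standard LSTM cell aggregates those summaries over time, and a logistic layer produces a probability. All sizes, weights, and function names here are hypothetical and untrained; the sketch shows data flow only and is not the architecture of Wu et al. (2022) or any other cited study.

```python
import numpy as np

rng = np.random.default_rng(0)


def conv2d_valid(frame: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """Single-channel 'valid' cross-correlation: (H, W) x (K, k, k) -> (K, H-k+1, W-k+1)."""
    k = kernels.shape[-1]
    windows = np.lib.stride_tricks.sliding_window_view(frame, (k, k))  # (H-k+1, W-k+1, k, k)
    return np.einsum("ijab,cab->cij", windows, kernels)


def frame_features(frame: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """Toy spatial encoder: one conv layer, ReLU, global average pooling -> (K,)."""
    fmap = np.maximum(conv2d_valid(frame, kernels), 0.0)
    return fmap.mean(axis=(1, 2))


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def lstm_last_state(xs, W, U, b, hidden: int) -> np.ndarray:
    """Run a standard LSTM over a sequence of feature vectors; return the final hidden state."""
    h = np.zeros(hidden)
    c = np.zeros(hidden)
    for x in xs:
        z = W @ x + U @ h + b  # stacked gate pre-activations, shape (4*hidden,)
        i, f, o, g = np.split(z, 4)
        i, f, o, g = sigmoid(i), sigmoid(f), sigmoid(o), np.tanh(g)
        c = f * c + i * g  # cell state carries information across frames
        h = o * np.tanh(c)
    return h


def cnn_lstm_probability(frames: np.ndarray, params: dict) -> float:
    """Per-frame spatial features -> LSTM across frames -> logistic output."""
    feats = [frame_features(fr, params["kernels"]) for fr in frames]
    h = lstm_last_state(feats, params["W"], params["U"], params["b"], params["hidden"])
    return float(sigmoid(params["w_out"] @ h + params["b_out"]))


# Hypothetical sizes and random (untrained) weights, for shape illustration only.
n_kernels, k, hidden = 4, 3, 8
params = {
    "kernels": rng.normal(size=(n_kernels, k, k)),
    "W": rng.normal(scale=0.1, size=(4 * hidden, n_kernels)),
    "U": rng.normal(scale=0.1, size=(4 * hidden, hidden)),
    "b": np.zeros(4 * hidden),
    "hidden": hidden,
    "w_out": rng.normal(scale=0.1, size=hidden),
    "b_out": 0.0,
}
cine = rng.random((12, 32, 32))  # 12 synthetic 32x32 frames standing in for a cine run
print(cnn_lstm_probability(cine, params))
```

Real systems use deep, often pretrained encoders and are trained end to end in a deep-learning framework; the sketch only makes explicit where spatial and temporal information enter the model.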

The disease-specific evidence synthesized in the “Disease-specific analysis of AI techniques in X-ray sensor-based coronary angiography” section, while encouraging, warrants cautious interpretation because several included studies are constrained by small, single-center cohorts that are inherently vulnerable to overfitting and optimism bias. Examples include a VMD-higher-order spectral ECG classifier reporting ∼98% accuracy in only 75 subjects for ICM-DCM discrimination, a setting in which effect sizes are unlikely to be stable without independent testing; a U-Net segmentation study with 71 patients; and a CT-FFRML comparison in just 33 patients, all of which limit precision and transportability of estimates. Importantly, the “Disease-specific analysis of AI techniques in X-ray sensor-based coronary angiography” section also contains several moderate or borderline results that temper expectations of uniformly high discrimination: prediction of pre-CAG chronic total occlusion (AUC 0.724), long-term prognosis in non-obstructive CAD (AUC 0.74), severe coronary calcification (AUC 0.715), angiographic severity by SYNTAX score (AUC 0.725), and coronary occlusion among OHCA cohorts (AUC ∼0.77). Even angiography-anchored pipelines such as CAG-based “vascular age” estimation demonstrated only mid-range performance (AUC 0.839), underscoring task- and context-dependence of gains and the need to quantify clinical utility beyond accuracy or AUC alone. True external validation was uncommon; a notable exception is the LASSO-based STEMI ECG model that reported both internal and external AUCs (0.92 and 0.98), illustrating good practice that should become routine.
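To illustrate why accuracies near 98% from cohorts of about 75 subjects are imprecise, the following sketch computes a Wilson score 95% confidence interval for an observed accuracy. The counts are hypothetical (the included study's confusion matrix is not reproduced here), and the Wilson interval is one standard choice among several binomial confidence intervals.

```python
import math


def wilson_interval(correct: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion such as accuracy (z = 1.96 gives ~95%)."""
    if n <= 0 or not 0 <= correct <= n:
        raise ValueError("need n > 0 and 0 <= correct <= n")
    p = correct / n
    denom = 1.0 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


# Hypothetical counts: the same observed accuracy (about 97%) at two sample sizes.
for correct, n in [(73, 75), (730, 750)]:
    lo, hi = wilson_interval(correct, n)
    print(f"{correct}/{n} = {correct / n:.3f}  95% CI [{lo:.3f}, {hi:.3f}]  width {hi - lo:.3f}")
```

With identical point estimates, the interval for n = 75 is several times wider than for n = 750, which is why single-center results from small cohorts should be reported with uncertainty and confirmed on independent data.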

In relation to established clinical benchmarks, the performance of current AI models warrants a more nuanced interpretation. While several CNN- or hybrid-based frameworks report AUCs approaching 0.99, these figures should be contextualized against conventional diagnostic standards such as QCA, FFR, or expert visual interpretation, for which interobserver agreement typically ranges from κ = 0.65 to 0.75 and diagnostic AUCs from 0.80 to 0.85.65,66 In this light, many reported “AI gains” may represent incremental rather than transformative improvements, particularly when evaluated in retrospective or single-center cohorts. Moreover, most models were optimized for discrimination without examining calibration, decision thresholds, or clinical endpoints such as revascularization success, time-to-diagnosis, or major adverse cardiovascular events (MACEs). As a result, statistical superiority of an algorithm may not translate directly into clinical benefit. These gaps underscore the importance of moving beyond AUC-based reporting toward comprehensive benchmarking against standard-of-care pathways and evaluation of workflow integration, interpretability, and clinical impact.
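Because the interobserver benchmark above is expressed as Cohen's κ, a minimal sketch of the unweighted statistic may help when comparing it with accuracy-type metrics: κ discounts the agreement expected by chance given each reader's marginal rates. The reader calls below are hypothetical; ordinal stenosis grades would usually call for a weighted κ instead.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Unweighted Cohen's kappa: chance-corrected agreement between two raters."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal proportion, summed over categories.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n**2
    if expected == 1.0:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (observed - expected) / (1.0 - expected)


# Hypothetical stenosis calls (1 = significant) by two readers on eight lesions.
reader_1 = [1, 1, 0, 0, 1, 0, 1, 0]
reader_2 = [1, 0, 0, 0, 1, 0, 1, 1]
print(round(cohens_kappa(reader_1, reader_2), 3))  # → 0.5
```

Here the readers agree on 75% of lesions, yet κ is only 0.5 because half of that agreement would be expected by chance, so κ values and AUCs are not directly interchangeable benchmarks.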

Despite these promising advancements, our findings underline several limitations that must be addressed for broader clinical integration. Future work should prioritize preregistered, adequately powered multicenter prospective cohorts with harmonized acquisition protocols (e.g. frame rate, contrast dosing, and vendor settings) and standardized, blinded endpoint adjudication. To ensure transportability, models should be evaluated with patient-level splits and geographically and temporally external test sets, including cross-vendor and cross-scanner validation to mitigate domain shift. Reporting should extend beyond discrimination to include calibration, decision-curve/net benefit, and uncertainty (confidence intervals), with transparent handling of class imbalance, missingness, and threshold selection. Negative or inconclusive findings and subgroup performance (sex, age, comorbidity, rhythm status, and body habitus) should be presented explicitly to reduce optimism and publication bias. Reproducibility can be strengthened through model cards, release of code/checkpoints, and participation in externally curated benchmarks where privacy constraints permit. Finally, prospective impact and cost-effectiveness evaluations embedded in angiography workflows are needed to determine whether observed performance translates into improved clinical decision-making and outcomes.
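The calibration and decision-curve metrics recommended above are simple to compute once predicted probabilities are available. The sketch below implements the Brier score and the standard decision-curve net-benefit formula, NB = TP/n − FP/n × p_t/(1 − p_t), at a single risk threshold p_t; a full decision curve repeats this over a range of clinically plausible thresholds and compares the model against treat-all and treat-none (NB = 0). The outcome labels and probabilities are hypothetical, and treating a case as positive when risk ≥ p_t is an assumed convention.

```python
from typing import Sequence


def brier_score(y_true: Sequence[int], probs: Sequence[float]) -> float:
    """Mean squared difference between predicted probabilities and 0/1 outcomes (lower is better)."""
    return sum((p - y) ** 2 for y, p in zip(y_true, probs)) / len(y_true)


def net_benefit(y_true: Sequence[int], probs: Sequence[float], threshold: float) -> float:
    """Decision-curve net benefit of intervening when predicted risk >= threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, probs) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, probs) if p >= threshold and y == 0)
    # False positives are penalized by the odds of the threshold probability.
    return tp / n - fp / n * threshold / (1.0 - threshold)


def net_benefit_treat_all(y_true: Sequence[int], threshold: float) -> float:
    """Reference strategy: intervene in every patient regardless of the model."""
    return net_benefit(y_true, [1.0] * len(y_true), threshold)


# Hypothetical outcomes and model probabilities for four patients.
y = [1, 1, 0, 0]
probs = [0.9, 0.4, 0.6, 0.1]
print("Brier:", round(brier_score(y, probs), 3))
print("NB(model) at 0.2:", round(net_benefit(y, probs, 0.2), 4))
print("NB(treat all) at 0.2:", round(net_benefit_treat_all(y, 0.2), 4))
```

A model is clinically useful at a given threshold only if its net benefit exceeds both reference strategies there, a question that AUC alone cannot answer.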

Beyond headline accuracy and AUC, the methodological rigor and real-world utility of many included studies remain limited. Sample sizes were often modest and designs predominantly single-center and retrospective, increasing risks of selection and spectrum bias. Data-splitting strategies were inconsistently described, and safeguards against data leakage (e.g. patient-level rather than image-level splits, nested cross-validation, and a locked, untouched test set) were not consistently reported. True external validation on geographically or temporally distinct cohorts was uncommon, and few studies assessed calibration (e.g. calibration curves or Brier score) or clinical utility using decision-curve analysis at clinically relevant thresholds. Moreover, head-to-head comparisons with standard-of-care pathways and evaluations of downstream impact (time-to-diagnosis, resource utilization, or patient outcomes) were rare. Future research should prioritize adequately powered, multicenter prospective designs with pre-specified analysis plans; transparent handling of missing data and class imbalance; rigorous external validation; and explicit assessments of calibration, net benefit, and workflow integration to support safe clinical adoption.
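As an illustration of the patient-level splitting safeguard, the sketch below assigns whole patients, not individual images, to the training or held-out test partition, so near-duplicate frames or views from one patient cannot appear on both sides. The function name, the "P01"-style identifiers, and the split fraction are hypothetical; in practice the test partition would also be locked before model development, with any nested cross-validation run within the training patients only.

```python
import random
from typing import Hashable, Sequence


def patient_level_split(patient_ids: Sequence[Hashable], test_fraction: float = 0.2, seed: int = 42):
    """Return (train_indices, test_indices) with every record of a patient on the same side."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be in (0, 1)")
    unique = sorted(set(patient_ids), key=str)  # deterministic order before shuffling
    if len(unique) < 2:
        raise ValueError("need at least two distinct patients")
    rng = random.Random(seed)
    rng.shuffle(unique)
    n_test = min(max(1, round(test_fraction * len(unique))), len(unique) - 1)
    test_patients = set(unique[:n_test])
    train_idx = [i for i, pid in enumerate(patient_ids) if pid not in test_patients]
    test_idx = [i for i, pid in enumerate(patient_ids) if pid in test_patients]
    return train_idx, test_idx


# Hypothetical dataset: several angiographic images per patient.
records = ["P01", "P01", "P01", "P02", "P02", "P03", "P04", "P04", "P05", "P05"]
train, test = patient_level_split(records, test_fraction=0.4, seed=7)
print("train patients:", sorted({records[i] for i in train}))
print("test patients: ", sorted({records[i] for i in test}))
```

An image-level random split of the same records would typically place frames from one patient in both partitions and inflate test performance; scikit-learn's `GroupShuffleSplit` and `GroupKFold` provide equivalent group-aware splitting.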

An additional issue arising from our bibliometric findings is the strong geographic skew in research output, with the United States and China contributing the majority of publications. Such concentration risks perpetuating regional data biases, as AI models trained predominantly on cohorts from these countries may underperform in underrepresented populations, particularly those from low- and middle-income regions with different demographic, genetic, and clinical profiles. Limited geographic diversity in training data may therefore constrain model generalizability, reduce diagnostic robustness, and exacerbate inequities in access to reliable AI-assisted cardiovascular care. Addressing this imbalance will require coordinated international efforts to establish large, multicenter datasets that capture broader ethnic, socioeconomic, and clinical heterogeneity, thereby ensuring that future AI systems are equitably designed and validated across diverse populations.

Additionally, interpretability remains a significant barrier to clinical adoption. Although AI methods demonstrate impressive predictive performance, the “black-box” nature of deep-learning algorithms may hinder clinical trust and integration into routine diagnostic workflows. Future research should thus prioritize developing explainable AI models to enhance clinician confidence and facilitate transparent decision-making processes. Ultimately, despite impressive numerical performance, the current generation of AI-driven angiography models remains largely confined to proof-of-concept demonstrations, with limited evidence of measurable impact on clinical decision-making or patient outcomes.

Conclusion

Artificial intelligence—particularly deep-learning approaches such as CNNs and CNN–LSTM hybrids—continues to show substantial promise for augmenting diagnostic accuracy, standardizing measurements, and streamlining workflows in X-ray sensor-based coronary angiography (including CAG, QCA, and CCTA/FFR-CT). Yet, based on the heterogeneous and often small, single-center evidence synthesized in this review, clinical adoption remains premature. To move from promising prototypes to reliable clinical tools, future studies should prioritize adequately powered, preregistered multicenter prospective cohorts with geographically/temporally external test sets, cross-vendor validation, and patient-level splits that minimize leakage and domain shift. Evidence standards should extend beyond discrimination to include calibration, clinical-utility metrics, uncertainty quantification, and transparent thresholding with subgroup reporting (sex, age, rhythm status, comorbidity, and body habitus).

Equally important are implementation and governance considerations: reproducible pipelines (code, model cards, and—where feasible—weights), robust data stewardship and privacy protections, human-factors evaluation for workflow fit, and prospective impact and cost-effectiveness studies embedded in angiography pathways. Negative or inconclusive findings should be reported explicitly to reduce optimism and publication bias, and participation in externally curated benchmarks can help establish fair comparisons. In sum, while current AI methods hold clear potential to enhance angiographic decision support, routine clinical use should await rigorous multicenter validation demonstrating generalizability, safety, calibration, and net clinical benefit.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076261417142 - Supplemental material for Artificial intelligence techniques for cardiovascular disease diagnosis via X-ray sensor-based coronary angiography: A bibliometric and systematic review

Supplemental material, sj-docx-1-dhj-10.1177_20552076261417142 for Artificial intelligence techniques for cardiovascular disease diagnosis via X-ray sensor-based coronary angiography: A bibliometric and systematic review by Hao Ren, Fengshi Jing, Yan Fang and Weibin Cheng in DIGITAL HEALTH

sj-docx-2-dhj-10.1177_20552076261417142 - Supplemental material for Artificial intelligence techniques for cardiovascular disease diagnosis via X-ray sensor-based coronary angiography: A bibliometric and systematic review

Supplemental material, sj-docx-2-dhj-10.1177_20552076261417142 for Artificial intelligence techniques for cardiovascular disease diagnosis via X-ray sensor-based coronary angiography: A bibliometric and systematic review by Hao Ren, Fengshi Jing, Yan Fang and Weibin Cheng in DIGITAL HEALTH

sj-pdf-3-dhj-10.1177_20552076261417142 - Supplemental material for Artificial intelligence techniques for cardiovascular disease diagnosis via X-ray sensor-based coronary angiography: A bibliometric and systematic review

Supplemental material, sj-pdf-3-dhj-10.1177_20552076261417142 for Artificial intelligence techniques for cardiovascular disease diagnosis via X-ray sensor-based coronary angiography: A bibliometric and systematic review by Hao Ren, Fengshi Jing, Yan Fang and Weibin Cheng in DIGITAL HEALTH

Footnotes

Ethics approval and consent to participate

Not applicable. This study is a review of previously published literature and does not involve any new studies with human participants or animals.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Guangzhou Municipal Science and Technology Project (Grant Nos. 2024A03J1074, 2023A03J0286, 2024A03J0927, 2024A03J1067, and 2025B03J0110), the Science and Technology Development Fund (FDCT) of the Macao Special Administrative Region (Grant No. 0064/2024/RIB2), the Guangdong Provincial Key Laboratory of Integrated Communication, Sensing and Computation for Ubiquitous Internet of Things (Grant No. 2023B1212010007), the Guangdong Provincial Medical Science Research Fund (Grant No. B2024030), and the Science and Technology Development Fund (FDCT) of the Macao Special Administrative Region (No. 0004/2025/AKP).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
