Abstract

Objective

To evaluate the performance of machine learning (ML)-based survival models for 10-year cardiovascular disease (CVD) risk prediction using large-scale electronic health records (EHRs). The study benchmarks these models against the QRISK3 score and conventional Cox proportional hazards (CoxPH) models currently used in UK primary prevention, with the aim of assessing their potential to capture complex risk patterns beyond traditional approaches.

Methods

This study utilized individual-level data from the CPRD Aurum, covering 40 million UK primary care records from 2011 to 2021. A total of 469,496 patients aged 40–85 was analysed. Predictor variables were selected based on QRISK3 definitions, with additional phenotyping for comorbidities and pre-stratified risk scores. ML models, including deep neural networks (e.g., DeepSurv and DeepHit) and ensemble survival models (e.g., random survival forest [RSF] and gradient boosting), were developed for CVD risk prediction. Model performance was assessed using calibration and discrimination metrics, with ‘spatial external validation’ conducted using a London-held dataset.

Results

A total of 849,651 records were analysed, including 117,421 for ‘spatial validation’ and 732,230 for development. QRISK3 scores effectively differentiated CVD patients, particularly among females, showing stronger predictive performance. Ensemble methods and neural networks outperformed CoxPH models, with RSF achieving the best discrimination and calibration: AUROC values of 0.738 (95% CI: 0.723–0.752) for males and 0.778 (95% CI: 0.762–0.793) for females, with Brier scores of 0.088 and 0.055.

Conclusion

ML models enhance CVD risk prediction, outperforming conventional approaches in calibration and discrimination. Integrating pre-stratified risk scores further improves performance, highlighting the value of augmenting tools like QRISK.

Keywords

Introduction

Cardiovascular disease (CVD) remains the leading public health challenge worldwide and in the UK, affecting 7.6 million individuals as of 2024. In 2022, CVD accounted for 26% of all UK deaths, resulting in approximately 174,884 fatalities. 1

Additionally, major modifiable risk factors for CVD often lack sufficient patient awareness. In the UK, an estimated 6–8 million people have undiagnosed or uncontrolled high blood pressure (BP), and 850,000 people have undiagnosed diabetes. Approximately half of adults have cholesterol levels above the recommended guideline.1,2

Regular screenings and timely counselling with medical interventions can address behavioural risk factors, reduce CVD onset and severity, improve health outcomes, and decrease health inequalities. 3 In the UK, individuals aged 40 and above are routinely invited by their general practitioner (GP) for a CVD risk assessment every five years as part of a general screening strategy, while those aged 25 to 84 with specific risk factors, such as type 2 diabetes, are assessed using the risk score to evaluate their 10-year CVD risk. 4

Several CVD risk scores have been developed to estimate 10-year CVD risk in primary care for primary prevention. The QRISK3 5 and Pooled Cohort Equation (PCE) 6 are widely used in the UK and USA, respectively, guiding statin prescriptions and other therapies in asymptomatic individuals. These models are recommended by guidelines such as National Institute for Health and Care Excellence (NICE) 4 in the UK and American College of Cardiology/American Heart Association (ACC/AHA) 7 in the USA.

Researchers have enhanced these algorithms by adding new predictors and conducting external validations.8,9 Despite both using Cox proportional-hazard models (CoxPH), some studies suggest these algorithms may misestimate risks for certain subpopulations, leading to controversial outcomes for minor ethnicity groups.10,11

Machine learning (ML), including deep learning (DL), is pivotal in the evolving field of CVD primary prevention and management, employing sophisticated algorithms to analyse diverse datasets and uncover complex patterns that traditional methods may overlook. ML leverages both structured and unstructured electronic health records (EHRs) to discern nuanced correlations within clinical data.12,13

Recent studies indicate that ML/DL techniques provide superior predictive capabilities for long-term CVD risk, specific cardiovascular events, and time-to-event (TTE) analysis, often surpassing traditional algorithms such as QRISK and Atherosclerotic CVD (ASCVD) risk calculators in both discrimination and calibration. 14 Integrating conventional risk scores into ML algorithms could further enhance performance and ensure that established, guideline-endorsed methods are effectively leveraged for primary prevention.15–17 Moreover, ML models demonstrate an improved ability to accommodate patient heterogeneity, particularly among individuals with comorbid conditions. 12

Our objective is to develop and benchmark ML models for TTE analysis using longitudinal EHR data, with the ultimate aim of informing the development of risk scores that could be implemented in primary care settings to support real-world clinical decision-making.

Specific goals include:

Stratifying individual CVD risk using QRISK3. Developing ML algorithms, including ensemble and neural network survival models, to predict 10-year CVD risk. Comparing and validating model performance in terms of both discrimination and calibration.

To support transparency and reproducibility, we leveraged EHR data with phenotyping techniques to ensure the relevance of predicted outcomes, and systematically documented key technical procedures including variable definitions, data harmonization, and hyperparameter optimization. Model development and reporting were guided by the TRIPOD + AI 18 framework. By adhering to these principles, we aim to produce clinically grounded, methodologically robust, and replicable ML-based survival models that advance the field of CVD risk prediction.

Methods

This study is conducted in accordance with the guidelines outlined in the TRIPOD + AI statement, 18 an extension of the TRIPOD statement specifically for AI/ML prediction models (Supplementary file).

Study design and participants

This study employs a prospective open cohort design using retrospective individual-level data from UK EHRs, specifically the CPRD Aurum (study registration protocol No: 21_000346). CPRD provides longitudinal, routinely collected de-identified patient data from primary care GPs that use Egton Medical Information Systems (EMIS) Web®, an electronic patient record system.

The CPRD Aurum, 19 updated to the January 2022 version 2.7, is a comprehensive EHR dataset widely used in cardiovascular research and represents a suitable source for developing ML-based risk prediction models for primary prevention. This dataset includes over 40 million research-acceptable patients, with current patients representing approximately one-fifth of the UK's population and covering 1359 GP practices, equating to 16.62% of all UK GPs. The median follow-up time for currently registered patients is 8.74 years, ensuring a comprehensive longitudinal dataset. Further information regarding this dataset can be found in the data specification document available on the CPRD website. 20

We included a stratified random sample of 469,496 patients aged 40 to 85 years, registered between 2011 and 2021 in England, from the CPRD Aurum database. Sampling was stratified by GP and calendar year to ensure temporal and geographic representativeness. This approach was adopted due to the large size of the full Aurum population which would pose considerable computational challenges for model development, especially when training DL models. The source data consisted of eight structured components: ‘patient’, ‘practice’, ‘staff’, ‘consultation’, ‘observation’, ‘problem’, ‘referral’, and ‘drug issue’ files. These were linked using CPRD-provided unique patient identifiers to reconstruct complete longitudinal primary care records for each individual.

From the given dataset (Figure 1), we first conducted a validity check for individual patients to ensure they had an eligible 10-year follow-up horizon with accurate registration, consultation, and clinical observation dates. We then extracted the required features for patients with valid records that included any 10-year duration, starting from ages 40 to 75, in 5-year intervals. This approach allows participants in our study to have multiple entries, provided they are free from CVD at the year of interest, treating these entries as independent 10-year records.

Workflow for study population and records extraction.

We used a multi-entry cohort design with 5-year intervals to include individuals aged 40 to 85 at multiple time points. This setup allows the model to learn from a broader age range and across different life stages, while increasing the number of usable 10-year follow-up windows. It also ensures appropriate handling of censoring within each prediction horizon, rather than excluding individuals with shorter follow-up. The design reflects how cardiovascular risk is assessed in clinical practice, where patients may undergo repeated risk evaluations over time. In line with this, UK NICE guidelines 4 recommend reassessing risk every 5 years in adults aged 40 to 85. Conceptually, this approach is consistent with landmark modelling methods, 21 where individuals contribute multiple fixed-horizon prediction episodes anchored at different time points. Consistent with this framework, we did not model longitudinal trajectories directly but instead constructed multiple baseline entries, mirroring how risk prediction tools are reapplied in practice.

In 2023, approximately 11% of UK adults were living with CVD, with an annual incidence of 1.5 per 1000 for major cardiovascular events such as stroke or myocardial infarction. 22

We confirmed that the sample size was sufficient for model development by applying the framework proposed by Riley et al., 23 which considers shrinkage, calibration, and precision criteria for prediction modelling (Supplementary file). Our final cohort substantially exceeded the minimum required sample size and event count, ensuring stable risk estimation and low risk of overfitting over a 10-year prediction window.

Predictor variables and cardiovascular disease outcomes

We used the same set of risk predictors as published by QRISK3, 5 as shown in Table 1. CPRD contains demographic characteristics, diagnoses, symptoms, drug prescriptions, and dosages, using MedCode ID as unique identifiers for labelling medical terms and codes, primarily captured using the EMIS, which is a widely adopted EHR system in UK GP. MedCode ID can be mapped to other controlled clinical terminologies, including Read Code and SNOMED CT.

Summary of predictor variables and cardiovascular disease outcomes in QRISK3 and expanded stratified risk set.

NB: “Y” indicates that the predictor or outcome is included in the respective set.

We utilized the phenotype definitions available in the HDRUK Phenotype Library, 24 which include demographic information, measurement procedures, and drug medications. For the diagnosis of comorbidities, we used the phenotype collection from CALIBER. 25 Supplementary Table 1 shows the descriptions and corresponding ICD-10 codes used to identify comorbidities. We also documented all the MedCode IDs used to identify predictor variables, mapping them to other controlled terminologies for cross-quality checks, future updates, and transparency (Supplementary file).

We maintained one set of predictor variables based on the exact QRISK definitions. Additionally, we included a separate set incorporating the pre-stratified risk score in both continuous and categorical formats (Table 1) to evaluate whether this approach could improve performance. No further feature selection was performed, as all predictors were pre-specified by the validated QRISK model, ensuring consistency and fairness in model comparisons.

For variables that remain constant during the records (gender, ethnicity, family history, deprivation), we extracted them whenever they existed in the records. For variables that needed to assert the status at the year of entry, such as smoking status, we extracted them within a certain acceptable range (within 6 months before and after the year of entry). If no recent smoking status records were within the acceptable range, we identified the individual as a former smoker if there was a previous history before the year of entry.

For relevant medication use including anti-hypertensive, corticosteroid, and antipsychotic medications, we used the same approach if records showed they existed within the acceptable range around the start year. We labelled them as existing if they appeared at any time in previous records. For disease comorbidities, we labelled them as existing if they occurred in previous records before the start year within a half-year acceptable range. We assumed that no records indicated no disease occurrence rather than missing data.

For continuous variables like body mass index (BMI), systolic blood pressure (SBP), standard deviation (s.d.) of SBP, and total cholesterol/high-density lipoprotein (HDL) value, if no available readings were within the acceptable range, we used previous records to estimate them using linear regression if there were more than three readings within 2 years before and after the acceptable range.

We extracted all the above-mentioned QRISK predictor variables based on the start year when the patient was recruited into the data and used them to create two additional predictor variables based on the calculated QRISK score: one in continuous format and one categorized into 0–5%, 5–10%, 10–20%, and 20%+. The threshold selection was based on the 10% 10-year risk threshold used in NICE guidelines 4 to recommend statin initiation. For individuals with stage 1 hypertension, the same threshold may also inform decisions around initiating antihypertensive treatment, 26 depending on overall cardiovascular risk.

For this study, in line with the scope of primary prevention, outcomes were defined as first incident CVD events, which are the basis for preventive treatment decisions in clinical practice and consistent with established tools such as QRISK. We used phenotype definitions from HDRUK Phenotype Library 24 and the CALIBER 25 to identify relevant diagnoses from CPRD primary care records using MedCode IDs. These codes were mapped to ICD-10 categories for reporting clarity (Supplementary Table 2). Specifically, we captured coronary heart disease (I20–I25), including angina and myocardial infarction; stroke (I63–I66, I69); transient ischemic attack (TIA) (G45–G46); and other cardiovascular conditions such as heart failure (I50), abdominal aortic aneurysm (AAA) (I71), and peripheral arterial disease (PAD) (I73–I74).

We labelled an occurrence as a CVD outcome when one of the aforementioned types of CVD is observed before the end of the 10-year follow-up, with an acceptable range of six months afterward. If no record was found, we assume the condition did not exist rather than considering it as missing data. We also recorded the TTE information for the CVD outcomes.

Statistical analysis

As previously mentioned, we utilized QRISK3 to pre-calculate the individual CVD risk at the year of entry. First, we preprocess our data by removing outliers using the interquartile range (IQR) method and iteratively imputing the missing data using the multiple imputation by chained equations (MICE) method, with a random forest regressor as the estimator. We then predict the risk using an R programming package 27 that exactly replicates the QRISK3 algorithms based on the official open-source implementation released by ClinRisk Ltd under the GNU Lesser General Public License (LGPL).

To interpret the results, we compared the difference in risk scores between records with CVD and those without CVD. We evaluated the calibration by calculating the Brier score and producing the calibration curve. We use the c-statistic to measure discrimination performance, with a 95% confidence interval (CI) calculated using the bootstrap technique. Additionally, we compare the baseline threshold selection of risk scores for different age-risk groups separately for both genders and identify the optimal baseline threshold that yields the best F1 score, ensuring a balance between precision and recall.

We selected random survival forest (RSF), gradient boosting survival analysis (GBSA), extreme gradient boosting for survival analysis (XGBS), and two NN-based survival models (DeepSurv, 28 a deep NN implementation of the CoxPH; and DeepHit, 29 a DL model designed for survival analysis with competing risks) as potential ML/DL models for training. DeepSurv, as an extension of the CoxPH model, focuses more on modelling time-dependent survival probabilities, which may allow it to better capture overall trends. In contrast, DeepHit is more oriented toward directly predicting the probability of events, making it potentially more sensitive to subgroup-specific characteristics, such as those associated with gender differences. Although DeepHit is capable of modelling competing risks, we implemented it in a single-endpoint setting consistent with the other models. The choice of these models is based on findings from previous systematic reviews, which indicate that ensemble methods and NN outperform other ML models.30–34 CoxPH from two Python packages (Lifelines 35 and scikit-survival 36 ) were used as baseline models, with interaction terms included following the QRISK3 structure for both packages. All models are derived and trained separately by gender.

Initially, we removed outliers using the IQR method. For categorical variables, we created dummy variables using one-hot encoding and scaled continuous variables with MinMaxScaler. We applied the MICE method to impute missing data, particularly to accommodate ML classifiers that do not natively handle incomplete inputs.

For the implementation of ensemble-based survival methods, we used the scikit-survival package, 36 while for NN-based survival models, we used the PyCox package, which is based on PyTorch. 37 The dataset was randomly split into training, validation, and testing sets with a 70–15–15 ratio (Figure 1).

During the model tuning process on the training set, we employed Optuna, 38 a hyperparameter optimization framework based on Bayesian optimization and the Tree-structured Parzen Estimator, to identify the optimal combination of hyperparameters for each model, aiming to balance performance and computational efficiency. The performance of each model is reported separately for the training, validation, and test sets, stratified by gender, using the best hyperparameter combination that achieved the highest F1 score on the validation set. Detailed information on the hyperparameter selection process is provided in Supplementary Table 3.

To evaluate and compare model performance, we selected the best-performing model configuration based on hyperparameter optimization. Calibration plots were generated to compare predicted probabilities with observed event rates in the test set, and Brier scores were calculated to assess overall calibration. AUROC scores and their 95% confidence intervals were computed to quantify each model's discriminatory ability and the associated uncertainty. These metrics were then directly compared across models by gender. Internal validity was assessed using 10-fold cross-validation. Additional performance metrics, including accuracy, specificity, and recall, were reported based on the optimal risk threshold identified by maximizing the F1 score.

We additionally applied SHapley Additive exPlanations (SHAP) to the best-performing models to interpret predictor contributions and assess whether the relative importance and direction of association of variables were consistent with established clinical risk factors.

To assess generalizability in underrepresented populations, we performed ‘spatial external validation’ using a held-out dataset comprising patients from GP in London (Figure 1), a region with the highest proportion of ethnic minority groups in the UK (Table 2). This ‘spatial validation’ set was completely unseen during model training and internal evaluation. All performance metrics described above were also reported for the external dataset.

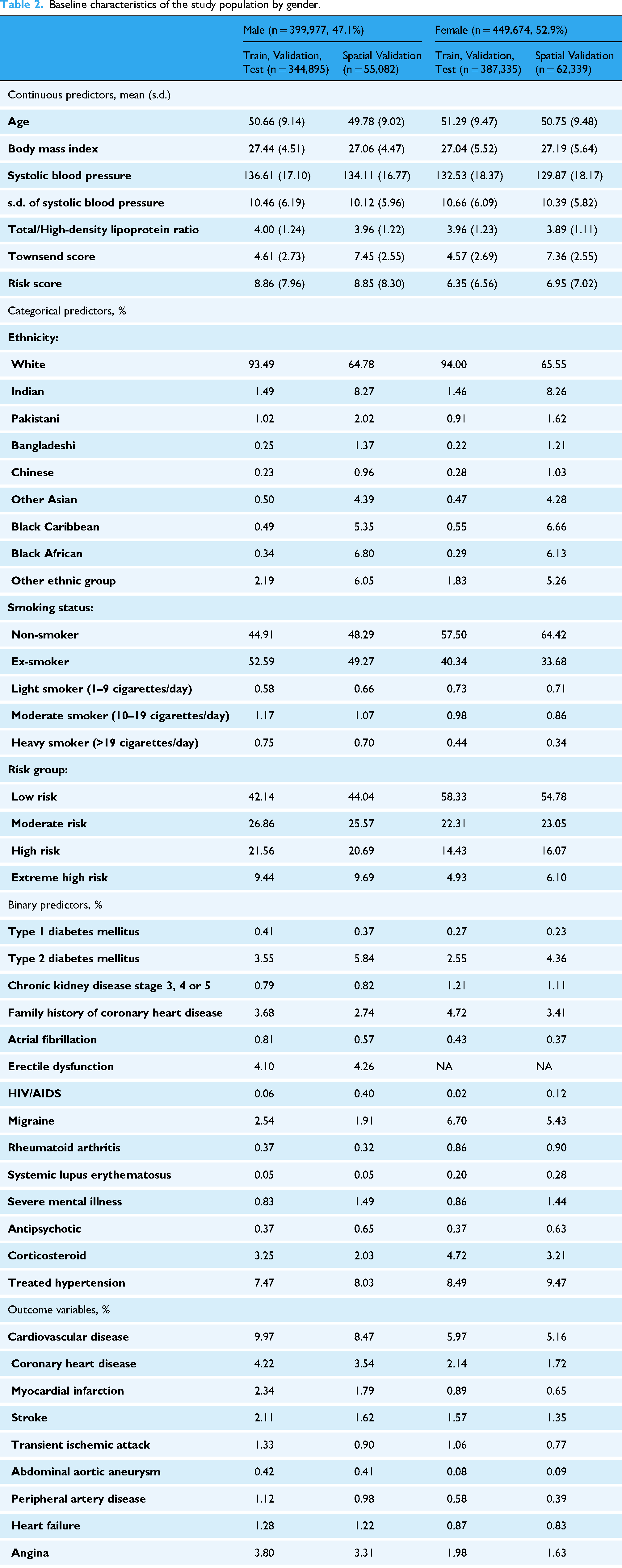

Baseline characteristics of the study population by gender.

Results

Baseline characteristics of study population

We extracted 909,848 records from 469,496 patients across 1476 practices in the England (Figure 1). After a quality check, we retained 849,651 valid records for our model development. We held out 117,421 (13.8%) valid records from practices in London as our ‘spatial validation’ set. For the remaining 732,230 (86.2%) records, we used them as the training, validation and test set by randomly splitting them 70:15:15.

Table 2 presents the characteristics of the baseline training, validation, test set, and the ‘spatial validation’ set, disaggregated by gender. The analysis reveals that most comorbidities and CVD outcomes are more prevalent among males, highlighting the importance of developing gender-specific models. Furthermore, the data indicates a higher proportion of minority ethnic groups in the London held-out set compared to the training/validation/test set. The mean individual deprivation score in the London set is also higher than that of the training and test sets, with men showing slightly higher scores than women. This suggests that selected individuals in the Greater London area experience greater material deprivation compared to those in other parts of the England. The demographic distribution supports the use of the London region as a spatial held-out set for evaluating the model's performance in managing minority ethnic groups.

Distribution and performance of QRISK3

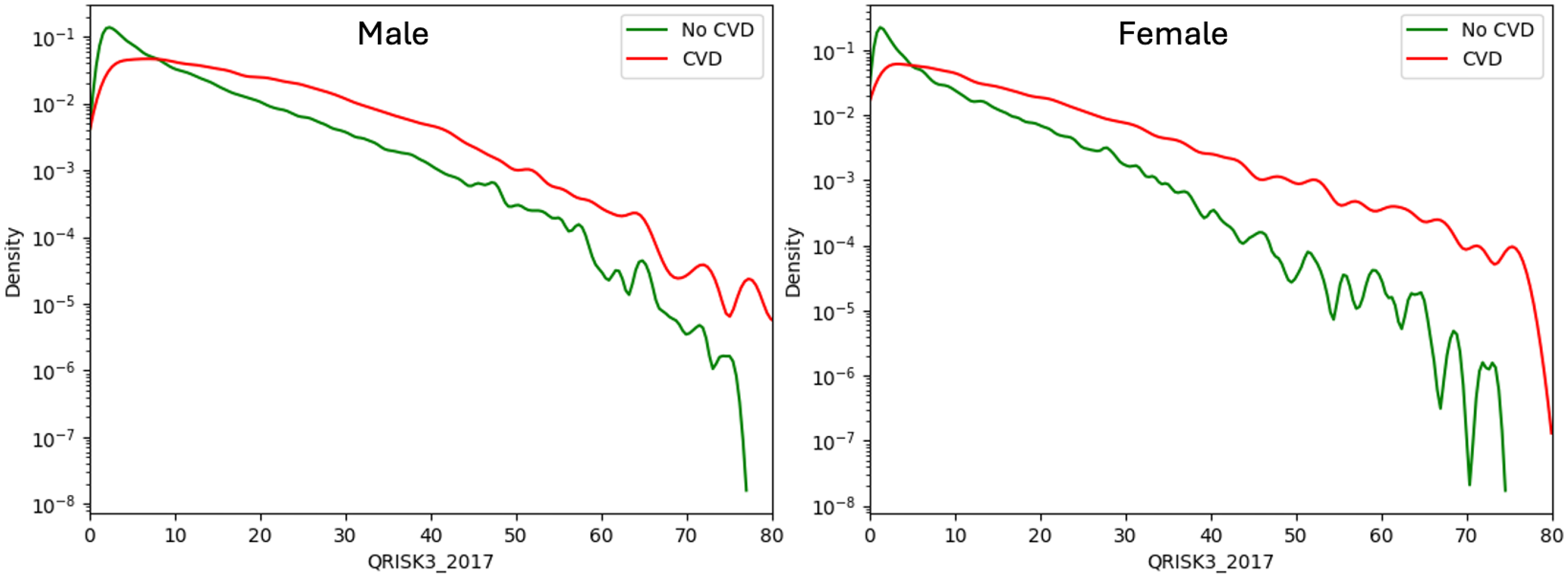

Figure 2 presents gender-specific density plots comparing QRISK3 score distributions between patients with and without subsequent CVD. Overall, QRISK3 scores effectively distinguish between the two groups, with higher scores linked to greater CVD risk. This separation is more pronounced in females, particularly at scores above 20%, suggesting stronger predictive utility. However, for both genders, scores near 5% show limited discriminative ability.

Density plot of QRISK3 scores for patients with and without cardiovascular disease (CVD) by gender.

Figure 3 further supports these findings. Many future CVD cases, particularly among females, fall within the low-to-moderate risk groups. Females, especially those under 60, generally have lower risk scores, limiting the score's discriminative power in younger age groups. In contrast, risk scores are more informative in older populations. Males tend to have higher scores overall, and an appropriate baseline threshold to capture most CVD cases may be >10% for males and <10% for females.

Percentage distribution of patients with cardiovascular disease by age-risk group and gender. Left: Percentage of patients with CVD within each age risk group. Middle: Percentage of CVD patients within each age group, summing to 100%. Right: Percentage distribution of CVD patients across all age risk groups, summing to 100%.

As shown in Table 3, the optimal thresholds for QRISK scores were 12.5% for males and 9.3% for females, highlighting the need for lower thresholds in women. Notably, the optimal threshold for the full population matched the 10% NICE guideline, suggesting it may be suitable at the population level but suboptimal for specific subgroups. QRISK performance was slightly better in females (AUROC: 0.747, 95% CI: 0.745–0.749) than in males (AUROC: 0.727, 95% CI: 0.725–0.729), with a lower Brier score in females (0.069 vs. 0.104), indicating better calibration. These findings are also reflected in the calibration plots (Figure 4).

Calibration plot of QRISK3 predicted vs. observed cardiovascular disease (CVD) risk by gender.

QRISK3 performance metrics by gender.

Model predictive performance and comparison

In general, ensemble-based survival methods consistently outperformed CoxPH and QRISK3 baselines across discrimination and calibration metrics, with RSF showing the strongest overall performance. DL models also achieved competitive results, particularly in the female subgroup.

Table 4 displays the performance metrics of five ML-based survival models and two CoxPH baselines using both the original QRISK3 variable set and the expanded stratified risk variable set on the test and ‘spatial validation’ sets. Full results including training and internal validation sets are reported in Supplementary Table 4.

Performance metrics of machine learning models by gender and variables set.

NB: In each cell, test set: first value; ‘spatial validation’ set: second value

Overall, during the model development stage, the same ML models using the expanded stratified risk variable set generally outperform those using the original QRISK3 variable set in terms of classification, discrimination, and calibration (Supplementary Table 4).

Figure 5 shows the AUROC comparison across seven models using both the QRISK3 variable set and the expanded stratified risk set in the test dataset. AUROC scores using QRISK3 are used as benchmark baselines for comparison. Again, these results confirm that models trained on the expanded stratified risk set achieve better discrimination performance than those trained on the QRISK3 variable set.

AUROC comparison across models in test dataset by gender (with 95% CI).

All five ensemble-based survival methods and the NN-based models demonstrate superior performance in classification and discrimination compared to the two CoxPH baselines in the test set (Table 4, Figure 5), which show nearly identical performance across genders. Specifically, CoxPH (lifelines) achieves an AUROC of 0.724 (95% CI: 0.717–0.730) for males and 0.760 (95% CI: 0.752–0.767) for females, while CoxPH (sksurv) attains an AUROC of 0.723 (95% CI: 0.717–0.730) for males and 0.759 (95% CI: 0.751–0.766) for females. Minor differences are expected due to variations in package defaults such as tie handling and baseline estimation.

Among all models, RSF demonstrates the strongest performance in terms of both discrimination and calibration in the test set, as shown in Table 4 and Figure 5. It achieves an AUROC of 0.738 (95% CI: 0.723–0.752) for males and 0.778 (95% CI: 0.762–0.793) for females. It also has the lowest Brier scores among all models: 0.080 for males and 0.055 for females.

Two other ensemble-based methods, GBSA and XGBS, also perform well in the test set (Table 4). GBSA achieves an AUROC of 0.728 (95% CI: 0.713–0.742) for males and 0.751 (95% CI: 0.735–0.766) for females, with corresponding Brier scores of 0.091 and 0.058, respectively. XGBS reports an AUROC of 0.736 (95% CI: 0.730–0.743) for males and 0.762 (95% CI: 0.754–0.769) for females, with Brier scores of 0.095 and 0.059, respectively.

The two NN-based models perform marginally worse than the ensemble methods in the test set (Table 4). DeepSurv achieves an AUROC of 0.727 (95% CI: 0.721–0.733) for males, slightly outperforming DeepHit's AUROC of 0.725 (95% CI: 0.719–0.732) in the same setting. However, DeepHit performs better in females, with an AUROC of 0.763 (95% CI: 0.756–0.771) compared to 0.758 (95% CI: 0.750–0.765) for DeepSurv.

RSF achieves the best performance among ensemble methods in the training dataset but shows a noticeable decline in the test dataset. Despite this drop, RSF maintains overall strong performance, with consistent results in the validation and test sets, suggesting no signs of overfitting. In contrast, GBSA and XGBS demonstrate more stable performance across the training, validation, and test datasets, with smaller declines from training to testing (Table 4, Supplementary Table 4). The NN-based models, DeepSurv and DeepHit, show slightly lower overall performance compared to ensemble methods but remain competitive. The CoxPH models serve as baselines, showing consistent but lower performance than the ML-based models. These results highlight the superior discrimination ability of ensemble methods such as RSF, GBSA, and XGBS, while also demonstrating their robustness across datasets. Overall, the models exhibit stable performance across the training, validation, and test datasets, with no clear evidence of overfitting for any of the methods evaluated (Table 4, Supplementary Table 4).

Figure 6 presents the calibration curves for all seven models using the expanded stratified risk variable set in the test datasets. Most models, particularly RSF, align closely with the best-fit line, indicating good calibration. In contrast, XGBS consistently lies above the diagonal, suggesting a tendency to overestimate risk probabilities. This may be attributed to its aggressive gradient boosting optimization, which, while effective for discrimination, can introduce calibration challenges. Other models, including DeepSurv, DeepHit, and the CoxPH baselines, exhibit relatively stable calibration across the test datasets, generally following the best-fit line without substantial deviation. These results indicate that most models are well-calibrated, though the overestimation pattern observed in XGBS warrants further investigation.

Calibration curves for model performance in test dataset by gender. NB: Calibration plots are truncated at 0.40 to improve visual clarity. Predictions above this threshold are rare and have limited impact on interpretation.

In addition, the result tables and plots show that all five ML-based survival models tend to perform better in females than in males in the test dataset. The optimal F1 score–based thresholds for females are also generally lower than those for males. These findings highlight the importance of implementing gender-specific thresholds in ML-based models to improve classification performance in clinical settings, rather than relying on a uniform threshold across genders.

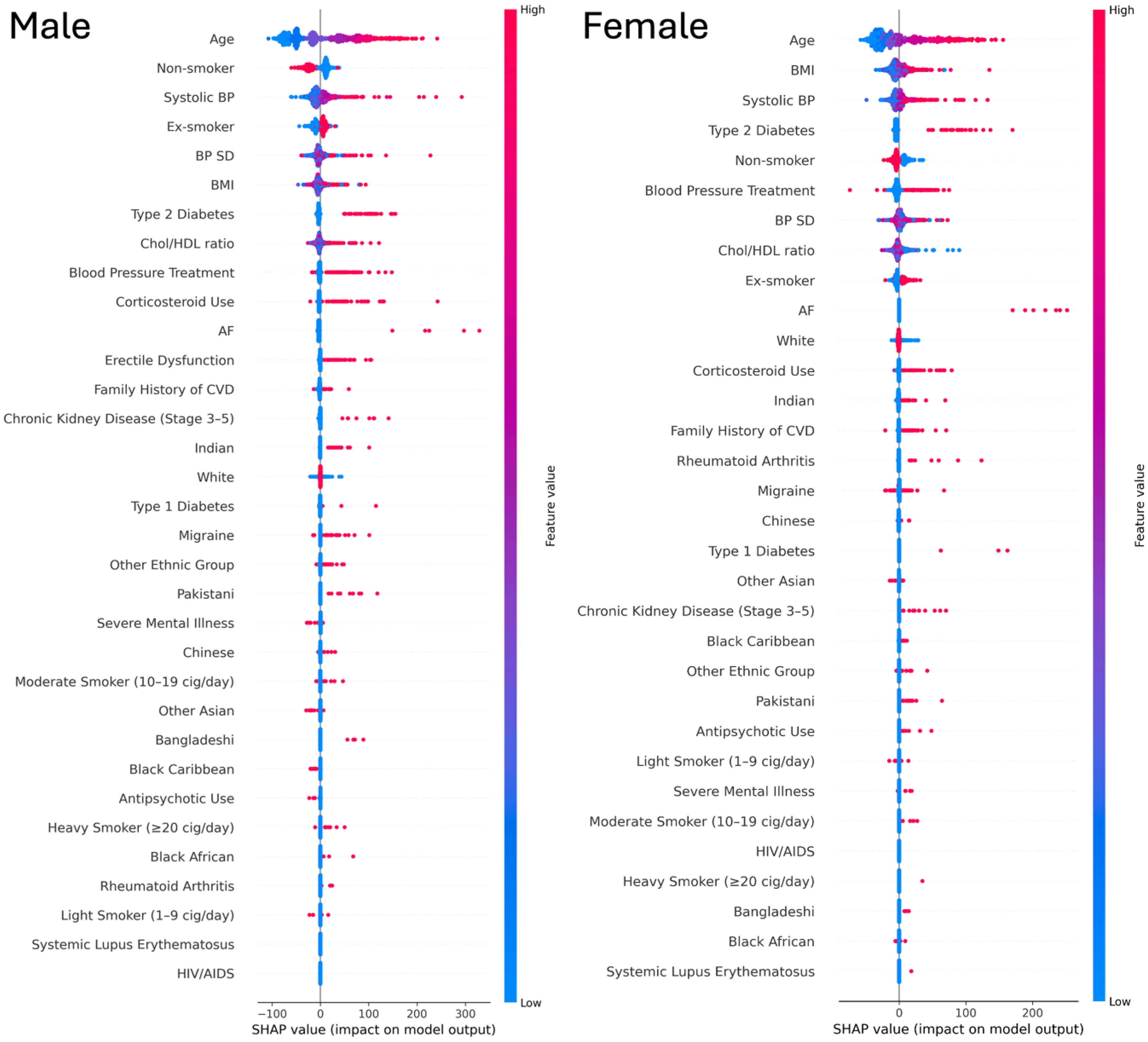

To further interpret model predictions, we examined feature contributions using SHAP applied to the best-performing model (RSF). The summary plots (Figure 7) show that age, SBP, and BMI were consistently the strongest continuous predictors of CVD risk in both men and women, with higher values associated with greater risk. Smoking was particularly influential in men, with ex-smokers and increasing categories of current smoking showing progressively higher risk contributions compared with non-smokers. Ethnicity effects were clear, for instance South Asian groups (Indian and Pakistani) showed higher risk contributions. Among comorbidities, diabetes, especially type 2, was the strongest contributor, while AF and CKD also showed significant importance compared with other conditions. BP treatment and corticosteroid use also had notable impact in both genders.

SHAP summary plots of Random survival forest model by gender. NB: Red indicates higher feature values and blue indicates lower feature values. The x-axis shows SHAP values (impact on model output).

Model performance in the ‘spatial validation’ set

All models using the expanded stratified risk set demonstrate improved performance compared to those using the original QRISK3 feature set in the ‘spatial validation’ set, as shown in Table 4.

The CoxPH baselines perform consistently well, with stable AUROC values that occasionally exceed those of more complex models. CoxPH (Lifelines) achieves an AUROC of 0.725 (95% CI: 0.719–0.731) for men and 0.769 (95% CI: 0.762–0.776) for women, while CoxPH (scikit-survival) reports 0.724 (95% CI: 0.717–0.730) for men and 0.768 (95% CI: 0.761–0.776) for women. Both methods also show strong calibration (Figure 8), with Brier scores of 0.078 for men and 0.045 for women.

Model performance in ‘spatial validation’ dataset by gender. NB: Calibration plots are truncated at 0.40 to improve visual clarity. Predictions above this threshold are rare and have limited impact on interpretation.

Among the more advanced models, NN–based survival methods (DeepSurv and DeepHit) demonstrate stable performance across datasets, with minimal degradation in the ‘spatial validation’ set. In several cases, these models outperform traditional ensemble methods, highlighting their generalizability.

Within the ensemble models, GBSA performs particularly well in the ‘spatial validation’ set. It achieves the highest AUROC among ensemble methods for both genders, with 0.725 (95% CI: 0.719–0.731) for men and 0.761 (95% CI: 0.753–0.768) for women and maintains reliable calibration (Figure 8).

In general, model performance is slightly better for women than for men in terms of discrimination (Table 4). Calibration curves (Figure 8) for women also show closer alignment with the ideal calibration line, particularly at lower predicted CVD risk levels. These findings underscore the value of gender-specific calibration and thresholding when translating model predictions into clinical decision-making.

Finally, calibration curves (Figure 8) reveal that most models are well-calibrated for lower predicted risks (below 20%) but tend to underestimate actual CVD risk in the higher range (20–40%). This underestimation is especially noticeable in RSF and DeepHit, whose calibration curves remain consistently below the diagonal in the ‘spatial validation’ set.

Discussion

Using a prospective open cohort design based on retrospective large-scale EHR data, we find that ensemble-based survival methods, particularly RSF, perform well in terms of calibration and discrimination. Numerous model development papers have shown similar results, indicating that these ML models yield better performance.33,34 Previous systematic reviews also indicate that ensemble methods may produce superior results.30–32

The performance of some of the selected models, particularly RSF, declines in the London held-out dataset, especially for higher predicted risks. This decline is attributed to the different distribution of minority ethnic groups in the validation set. Future efforts should focus on recalibrating for minority ethnic groups. Despite this, the models still show good calibration when the predicted risk is at a low to moderate level below 20%. The hypothesis for this performance drop suggests that predictions still heavily rely on age, with older individuals tending to have higher scores that overestimate the actual CVD risk. In clinical practice, particularly in primary prevention tasks, a higher risk score may not be necessary for decision-making, although achieving accurate predictions across all risk levels is desirable. For primary prevention, it is crucial to identify potential CVD outcomes for patients at an early stage, ensuring the validity of predictions at lower to moderate risk levels.

The increasing use of large-scale comprehensive EHR datasets in model development and validation has become a recent trend. 13 We observed that recent studies using EHR as data sources for long-term CVD prediction are typically rich in the number of predictors and data size compared to study based on conventional cohort. 39 Using CPRD as an extensive and diverse EHR data source with longitudinal medical history and diagnoses ensures consistency during model development and maintains data quality and fairness. 40 Our study underscores the potential of ML on EHR to refine and improve CVD risk prediction.

We utilized QRISK to pre-calculate risk scores before training the ML-based models, aiming to gain deeper insights into individual risk stratification and to leverage the advantages of conventional statistical models. Although QRISK4 has been published recently, 41 its code is currently unavailable, and it has not yet been adopted by UK guidelines. Therefore, QRISK3 remains the basis of our work.

The AUC/C-statistic performance of QRISK in our study may appear lower than reported elsewhere, likely due to the stricter alignment between our predictors and outcomes over a true 10-year period. However, QRISK demonstrates excellent calibration, which is particularly critical for tasks like this. Our ultimate goal is not merely to surpass the performance of conventional models but to achieve more accurate predictions of patients’ CVD outcomes by integrating their strengths. We also identified studies that incorporated the ASCVD risk score as a predictor in their ML models, achieving improved performance. This highlights the potential of combining conventional and ML-based approaches for better risk prediction.15–17

Our analysis showed consistent differences in optimal classification thresholds between males and females, with thresholds typically lower for females, reflecting differences in baseline event rates and predicted risk distributions. It may also be useful to differentiate thresholds for different age groups or set a more personalized baseline, considering additional features. However, this must balance computational cost and generalizability. These thresholds were not intended as clinical cut-offs, but to optimise model performance. While they operate on the same risk scale as clinical guidelines (e.g., NICE's 10% threshold), their purpose is to maximize classification metrics, whereas clinical thresholds consider factors such as cost-effectiveness, feasibility, and policy simplicity. We suggest that male and older age groups may benefit from higher thresholds to indicate moderate risk, but further clinical validation is needed. These findings should be viewed as a step toward clinical translation, which remains outside the scope of this study. Consistent with these threshold differences, the direction of predictor outcome associations in our models aligned with established clinical knowledge. SHAP analysis further showed that the relative impact of several risk factors differs by gender, reinforcing the rationale for gender-specific modelling and thresholding. Our model identified age, SBP, and BMI as strong predictors of CVD risk. Notably, the South Asian groups (Indian and Pakistani) showed higher risk contributions of CVDs. These findings are consistent with previous literature.42,43 However, the sex-specific variations of these associations warrant further investigation to better understand potential gender and ethnic disparities in CVD risks.

Developing and comparing ML models is common, but these efforts are often limited to specific methodologies, lacking external validation or practical implementation. 44 Unlike other model comparison papers or systematic reviews, which often use different validation approaches between ML and classical statistics, making results hard to interpret, our work focuses on fairly comparing widely used ML-based survival models that handle censoring and TTE outcomes. We ensure that our models are both clinically relevant and technically robust. While the “black box” issue of ML models persists, we prioritize transparency in mapping predictors and CVD outcomes, as well as in replicating and validating our models. Adhering to the TRIPOD + AI guidelines, 18 we used standardized methods and terminology to define and extract predictor variables and outcomes, employing validated, publicly available phenotyping to enhance clinical relevance. By reporting detailed mapping, techniques, and hyperparameters, we ensure transparency and replicability, reinforcing model robustness.

Limitations

The primary limitation of our study is the absence of a clinical utility analysis. For our work to be practically applied and potentially replace conventional risk scores, it must demonstrate clinical applicability. 45 Moreover, evaluating ML-based CVD risk scores is crucial to ascertain their substantial benefits for clinical decision-making and consequent improvement in patient outcomes. This evaluation should encompass considerations of ethical implications, legal aspects, economic cost-effectiveness, and the impact on health inequality. 46

Our study design may introduce potential sampling and measurement bias, as multiple records from the same individuals were included. This was an intentional choice to simulate real-world clinical scenarios, where patients’ cardiovascular risk is typically reassessed every five years. The resulting structure is conceptually aligned with landmark modelling approaches, 21 which use repeated entry points and fixed prediction windows to capture evolving risk over time. Consistent with this framework, we did not model full longitudinal trajectories directly but instead focused on repeated baseline entries, mirroring how risk tools are reapplied in clinical practice. While more advanced time-series approaches could provide additional insights, these fall outside the scope of the present benchmarking study. To mitigate bias, we applied a consistent 5-year re-entry framework and constructed fixed 10-year prediction windows for each entry. We also incorporated an acceptance range for predictor and outcome definitions (e.g., a six-month margin) to accommodate potential underreporting or right censoring in EHRs. Given the long prediction horizon, small temporal gaps are unlikely to meaningfully affect the modelling of an individual's CVD risk trajectory.

We focused solely on CVD outcomes recorded in GP within primary care, consistent with our primary prevention goals where the aim is to estimate the risk of first incident events in order to guide early detection, management, and intervention. Mortality data can provide valuable supplementary information, but its inclusion is not essential for this benchmarking objective, which was designed to evaluate baseline prediction of incident CVD events.

Furthermore, our dataset is specifically constructed with a 10-year gap between predictors and outcomes to ensure robust temporal alignment. While this approach may result in lower classification performance compared to other studies, we observed strong calibration, which is more critical for ranking risk and ensuring actionable predictions.

We did not include individuals under the age of 25, despite the trend of earlier CVD onset, as we believe younger age group warrant separate analysis. Our study focuses on middle-aged individuals, where risk trajectories are more distinct and clinically relevant.

Despite their predictive advantages, ML-based models face practical limitations that hinder clinical integration. They are more susceptible to performance heterogeneity due to variations in hardware, software, and implementation, which can compromise reproducibility and deployment stability. 47 Their limited interpretability further reduces alignment with clinical requirements for transparency and accountability. As a result, conventional models remain more widely used in practice, as they are more stable, more comprehensible, and better suited to settings that require oversight and explainability. 48

Furthermore, there is no consensus among researchers, including ourselves, regarding model selection and the detailed technical methods. Identifying the optimal ML models and establishing baseline thresholds for risk scores necessitates collaboration between researchers and physicians within a regulated environment, guided by clearly defined standards or recommendations from authoritative bodies. In the UK, progress is being made in the AI healthcare sector, such as the TRIPOD/PROBAST work group. 49

Another significant limitation concerns model fairness. The CPRD is a primary dataset based in the UK, predominantly including non-Hispanic white individuals. 50 Although we tested the model on minor ethnicities, issues of fairness persist. It is essential to consider additional socio-economic position (SEP) variables during analysis to enhance the model's fairness. 32

External validation also poses a challenge. Conducting external validation using datasets from the same country is difficult because the CPRD largely represents the UK general population. Acquiring data from Europe or North America may not adequately account for minor ethnic groups. Datasets from Asia or Africa may be non-existent or of relatively poor quality and size, requiring significant adjustments to fit the original model. This limits the potential for genuine external validation. Open access platforms such as Kaggle 51 and UCI data repositories 52 could be beneficial, but currently, no datasets match the required structure and lack of features and CVD outcomes definition. This underscores the necessity for open-source datasets to allow researchers to externally test their models and ensure validity. 13

Access to advanced health technology remains a significant issue in less developed regions. 53 While EHR data sharing could benefit these areas, practical challenges in clinical settings persist. Advances in technology and reduced computational costs could facilitate the use of trained models at lower costs. However, it remains uncertain whether this technology will exacerbate health inequalities. Furthermore, there is a pressing need for an open platform that allows researchers to disseminate their findings and validate the work of others, ensuring transparency and replicability. Such a platform would facilitate the development of a consensus on the applicability of data-driven ML-based models in clinical practice.

Conclusion

This study presents a large-scale analysis of a comprehensive longitudinal EHR dataset, evaluating 10-year health risk prediction for approximately 500,000 CVD patients. We benchmark multiple ML-based survival models against traditional CoxPH models and the widely used QRISK3 score. Our findings demonstrate that ML-based survival models consistently outperform CoxPH, with ensemble-based methods achieving the highest AUC scores of 0.778 for females and 0.738 for males, surpassing NN-based models in both predictive performance and calibration. Given their strong discriminative power, stability, and partial interpretability, ensemble-based approaches emerge as preferable alternatives to NN models for clinical applications. Furthermore, we show that integrating pre-stratified individual risk into ML architectures enhances predictive accuracy, highlighting the value of refining rather than replacing established tools like QRISK3.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251408534 - Supplemental material for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records

Supplemental material, sj-docx-1-dhj-10.1177_20552076251408534 for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records by Tianyi Liu, Andrew Krentz, Lei Lu, Yanzhong Wang and Vasa Curcin in DIGITAL HEALTH

Supplemental Material

sj-docx-2-dhj-10.1177_20552076251408534 - Supplemental material for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records

Supplemental material, sj-docx-2-dhj-10.1177_20552076251408534 for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records by Tianyi Liu, Andrew Krentz, Lei Lu, Yanzhong Wang and Vasa Curcin in DIGITAL HEALTH

Supplemental Material

sj-pdf-3-dhj-10.1177_20552076251408534 - Supplemental material for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records

Supplemental material, sj-pdf-3-dhj-10.1177_20552076251408534 for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records by Tianyi Liu, Andrew Krentz, Lei Lu, Yanzhong Wang and Vasa Curcin in DIGITAL HEALTH

Supplemental Material

sj-zip-4-dhj-10.1177_20552076251408534 - Supplemental material for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records

Supplemental material, sj-zip-4-dhj-10.1177_20552076251408534 for Benchmarking survival machine learning models for 10-year cardiovascular disease risk prediction using large-scale electronic health records by Tianyi Liu, Andrew Krentz, Lei Lu, Yanzhong Wang and Vasa Curcin in DIGITAL HEALTH

Footnotes

Registration protocol and ethical approval

This work is based in part on CPRD Aurum data, with a protocol (No: 21_000346) that has been submitted to CPRD for ethics approval by its Independent Scientific Advisory Committee (ISAC) for research using anonymized patient data.

Patient consent and involvement

No patient consent was required for this study, and there was no patient involvement during the design, conduct, reporting, interpretation, or dissemination.

Contributorship

TL developed the machine learning model, performed statistical analysis, created the figures, and drafted the initial version of the manuscript with support from VC.

AK, LL and YW offered valuable feedback and revisions to the manuscript. All authors critically reviewed the initial version of the article and approved the final draft for publication.

Availability of data, code, and other materials

Funding

V.C. is supported by the Engineering and Physical Sciences Research Council (EPSRC)-funded King's Health Partners Digital Health Hub (EP/X030628/1). T.L. is a PhD student at KCL's DRIVE-Health CDT supported by Metadvice Ltd and the National Institute for Health Research (NIHR) Biomedical Research Centre based at Guy's and St Thomas’ NHS Foundation Trust and King's College London (IS-BRC-1215-20006). The views expressed are those of the author(s) and not necessarily those of the NHS, the NIHR or the Department of Health.

Engineering and Physical Sciences Research Council, Guy's & St Thomas' Foundation, (grant number EP/X030628/1, IS-BRC-1215-20006).

Conflicts of interest

AK is the Chief Medical Officer at Metadvice, a precision medicine technology company.

Guarantor

VC.

Supplemental material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.