Abstract

Background

Alzheimer's disease (AD) poses a significant public health challenge to China's aging population. Patients and their families increasingly turn to short-video platforms such as Douyin and Bilibili for information. However, the quality and reliability of AD-related content on these platforms have not been systematically analyzed, leaving a critical gap in understanding this emerging information ecosystem.

Aim

To systematically evaluate the quality and reliability of AD-related videos on Douyin and Bilibili, and to analyze the relationships among content themes, upload sources, and user engagement metrics.

Methods

Using “Alzheimer's disease” as the keyword, we retrieved the top 100 videos from each platform. Videos were categorized by uploader type and content. Two qualified researchers assessed their reliability and quality using the JAMA benchmark criteria, the modified DISCERN instrument (mDISCERN), and the Global Quality Score (GQS). Data were analyzed with nonparametric statistical methods. Correlation analysis and ordered logistic regression were used to explore factors that may influence video quality.

Results

This study analyzed a total of 171 videos. Compared to Douyin, videos on Bilibili scored higher across multiple quality evaluation metrics (GQS: 2.0 (1.0–2.0) vs. 1.0 (1.0–2.0); mDISCERN: 2.0 (2.0–2.0) vs. 2.0 (2.0–2.0); JAMA: 2.0 (1.0–2.0) vs. 1.0 (1.0–2.0); p < 0.001). This disparity may be attributed to Bilibili's longer video format, which allows for more in-depth content, and its user base that tends to favor detailed, knowledge-oriented media. Regarding uploader identity, videos posted by professionals (e.g. physicians) demonstrated superior quality compared to nonprofessional sources (e.g. patients). However, patient-uploaded videos exhibited stronger engagement metrics (e.g. likes, comments). Content-wise, videos focusing on disease prevention and treatment consistently achieved the highest overall quality (all comparisons p < 0.05). Correlation analysis indicated that while interaction metrics showed strong internal correlations, they did not significantly correlate with JAMA, mDISCERN, or GQS scores. Ordered logistic regression analysis indicated that uploader identity, content classification, and presentation format were the three key factors influencing video quality.

Conclusion

This study reveals a pronounced “quality-dissemination paradox” in AD content across mainstream short-video platforms: While scientifically rigorous content published by medical professionals receives high quality ratings, it significantly underperforms in user engagement metrics compared to nonprofessional content centered on patient narratives and lived experiences. This highlights a severe disconnect between scientific rigor and public participation within algorithmic dissemination ecosystems. To address this, platforms should optimize algorithms to enhance the visibility of authoritative content, encourage collaboration between professional and nonprofessional creators to boost content appeal, and strengthen health media literacy education for the public—particularly older adults—to improve their ability to discern information.

Introduction

Alzheimer's disease (AD), the most common type of dementia, is posing an increasingly severe public health challenge to Chinese society against the backdrop of a rapidly aging population. A recent systematic review indicates that as of 2023, the overall prevalence of AD among the elderly population in China was approximately 3.48%, with an incidence rate of 7.90 per 1000 person-years. 1 Other studies suggest that the prevalence of AD in adults aged 60 and above is around 3.20%, rising markedly to 12.04% among those aged over 80. 2 A broader population-based estimate places the AD prevalence at approximately 5% (95% CI: 4%–6%). 3 It is projected that by 2035, the number of people aged 60 and above in China will exceed 400 million, 4 suggesting that this demographic transition will lead to a continually increasing burden of AD. Beyond the loss of individual cognitive function, AD imposes a substantial social and economic burden. A meta-analysis indicates that the current annual economic cost per person with dementia in China is approximately ¥20,893 (US $3104), 5 while the total annual cost of dementia in the country has risen exponentially since 1990—from US $0.9 billion to a projected US $114.2 billion by 2030. 6 In addition to direct medical expenses, caregiving expenditures, productivity losses, and the opportunity costs borne by families further amplify this societal burden. Against this backdrop, China is rapidly developing what is termed the “silver economy,” 4 encompassing industries such as medical care, wellness, nursing, and health consumption, which represent a large and expanding market. Therefore, enhancing public awareness of AD, improving early identification, and promoting scientifically informed care are not only medical imperatives but also critical to the national health strategy and sustainable socioeconomic development.

However, public awareness of AD remains inadequate. Many families dismiss early memory decline as “normal aging” or lack a clear understanding of the irreversible progression of the disease, perceiving AD merely as a distant threat. 7 Within China's high-pressure healthcare system, limited doctor–patient communication time often leaves caregivers without access to systematic health education or scientific guidance. Consequently, the public increasingly relies on online health resources, with short-form video platforms becoming a major channel for medical information against the backdrop of widespread smartphone adoption. 8 This trend underscores the growing influence of digital platforms in shaping health perceptions and behaviors. As of June 2025, the number of internet users in China had reached 1.123 billion, representing an internet penetration rate of 79.7%. 4 Within this digital landscape, platforms such as Douyin and Bilibili have become instrumental in health communication. Douyin, which is algorithm-driven and emphasizes short, intuitive, and immediate content delivery, enjoys broad reach among middle-aged and older audiences. In contrast, Bilibili, known for its longer video formats and strong community interaction, is particularly popular among younger users and professional content creators. 9 Compared to text-based formats, short videos simplify complex medical issues through audiovisual integration, improving comprehension and retention, which is particularly critical for audiences such as AD patients and their families. However, Douyin's recommendation algorithm and Bilibili's community-driven nature and coin-based reward mechanism may incentivize clickbait titles and sensationalized presentations, resulting in inconsistent quality of health information. Previous studies have identified widespread deficiencies in professional rigor and evidence-based support in short videos related to conditions such as migraine, 10 cancer, 9 ankle sprains, 11 and other illnesses.

There remains a lack of systematic analysis in the current literature specifically addressing the quality and reliability of AD-related videos in China. This gap is critical, as understanding the current information ecosystem is the first step toward improving it. This study therefore aims to perform a cross-sectional evaluation of the accuracy and comprehensiveness of AD-related videos on Douyin and Bilibili. We seek to examine how source types influence content quality and offer practical recommendations for improving the standard of online medical communication, thereby supporting public health education and informing policy development.

Search strategy and data collection

In this cross-sectional investigation focusing on AD-related content, we collected publicly available short videos from two major Chinese platforms—Douyin (China's counterpart to TikTok, http://www.douyin.com) and Bilibili (http://www.Bilibili.com). A detailed introduction of these platforms, including their founding date, general description, and user interaction patterns, is available in Supplementary Table 1.

Video retrieval was carried out on 22 August 2025, in Tianjin, China, using a MECHREVO computer running Windows 11 (Version 24H2) and Microsoft Edge browser (Version 139.0.3405.111). To minimize the potential impact of algorithmic personalization, all browser history, cached files, cookies, and autocomplete data were thoroughly cleared before initiating the search. Since Bilibili does not require login for basic video access, no user account was used. For Douyin, although login is not mandatory for viewing, we employed a new account registered with an unused mobile number to ensure no prior usage data influenced content display.

The search term “阿尔兹海默症” (“Alzheimer's Disease” in Chinese) was used to retrieve the top 100 videos by default ranking on each platform. Previous studies have confirmed that content beyond the top 100 entries on such platforms has minimal impact on analytical results, as the highest-ranked videos sufficiently represent the most accessible and viewed content in the target domain. 12,13 To ensure the relevance and quality of the collected videos, the following exclusion criteria were rigorously applied: (1) explicit advertisements or promotional content; (2) videos not substantially related to AD; (3) content delivered in languages other than Mandarin Chinese (including dialects); and (4) duplicate videos within the same platform.

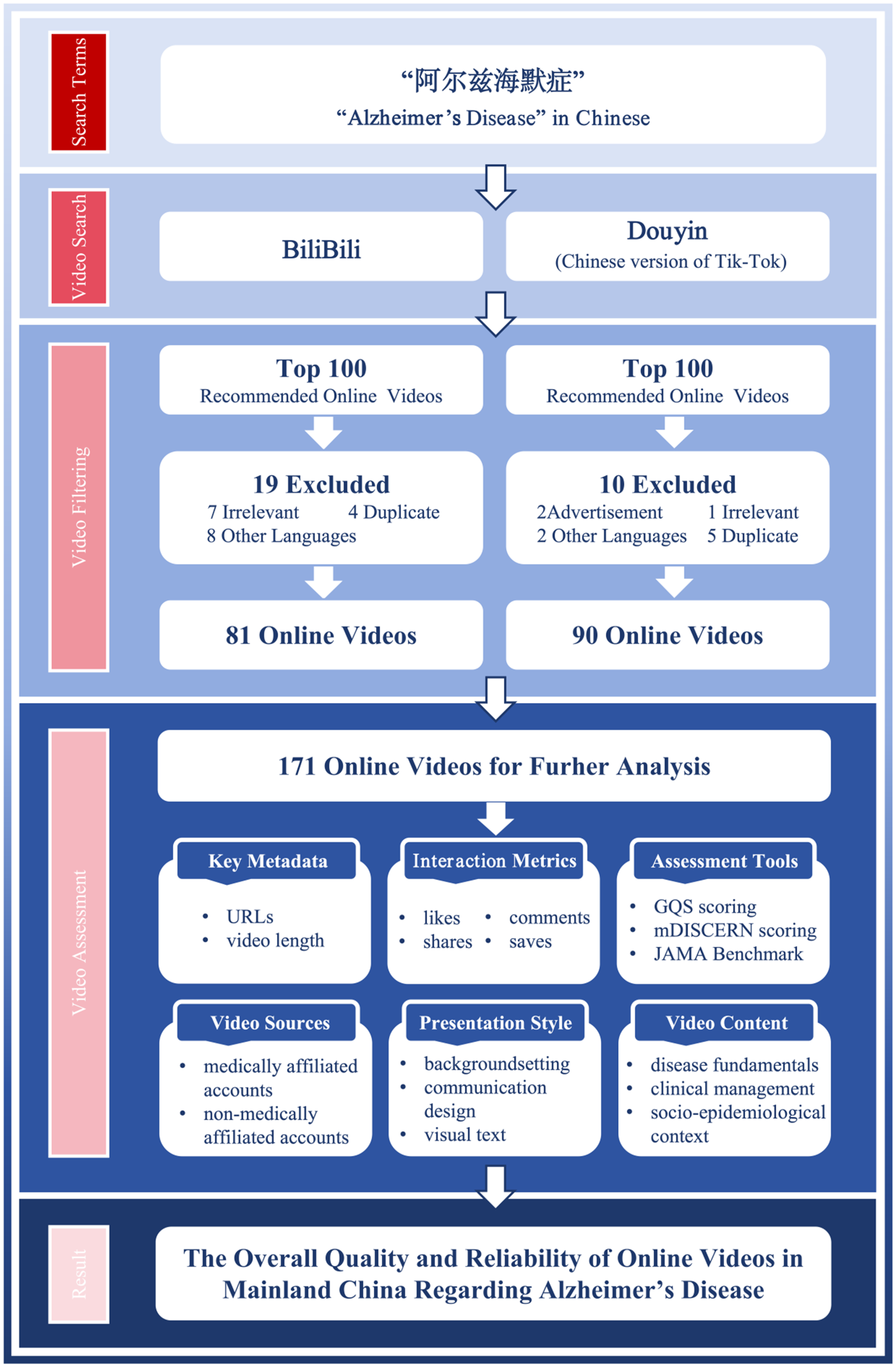

A final corpus of 171 videos was included for in-depth analysis following a structured screening process. The exclusion criteria led to the removal of 29 videos in total, comprising 2 that were advertisement-related, 8 that were off-topic, 10 presented in non-Mandarin languages and 9 duplicates. From each video, key metadata were extracted, including URL, duration, and audience interaction metrics (such as likes, comments, shares, and saves). All collected data were systematically logged into Tencent Docs spreadsheets to facilitate real-time collaboration and ensure data consistency. The complete video screening and inclusion process is depicted in Figure 1 using a detailed flowchart.
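The screening arithmetic described above can be checked with a short sketch. The counts are those reported in the text; the variable and function names are illustrative only and are not taken from the study's own workflow.

```python
# Screening tally using the counts reported in the text.
# All identifiers here are hypothetical, chosen for illustration.
RETRIEVED_PER_PLATFORM = 100
PLATFORMS = ("Douyin", "Bilibili")

exclusions = {
    "advertisement": 2,
    "off_topic": 8,
    "non_mandarin": 10,
    "duplicate": 9,
}


def included_count(retrieved, excluded):
    """Return how many videos remain after removing every excluded video."""
    return retrieved - sum(excluded.values())


total_retrieved = RETRIEVED_PER_PLATFORM * len(PLATFORMS)
total_excluded = sum(exclusions.values())
final_corpus = included_count(total_retrieved, exclusions)

print(total_retrieved, total_excluded, final_corpus)
```

With 200 retrieved videos and 29 exclusions, the tally reproduces the 171 videos included in the analysis.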

Search strategies and video screening process for Alzheimer's disease.

Classification of videos

Video sources were classified primarily based on the professional background of the uploader, categorizing them into two overarching groups: (1) medically affiliated and (2) nonmedically affiliated accounts. The medically affiliated group was further subdivided into: (1) neurologists, (2) non-neurologist clinical physicians, (3) medical students, and (4) hospitals & public health organizations. The nonmedically affiliated category included: (1) other public service organizations, (2) science communicators, and (3) patients & their families. A full description of each category is available in Supplementary Table 2.

The video presentation style was analyzed across three key aspects: background setting, communication design, and use of text. Background settings were classified as: (1) hospital settings, (2) nonhospital settings, or (3) virtual backgrounds, following the criteria outlined in Supplementary Table 3. Video communication design was assessed based on its visual and auditory components, with detailed classifications provided in Supplementary Table 4. Visual communication was defined as the method used to present information about AD and categorized as follows: (1) text-based presentation, (2) static schematic graphics, or (3) 2D/3D animation. Auditory design was classified as: (1) background ambience, (2) AI voiceover, or (3) human voiceover. Additionally, the presence of a physician in the video was noted as either (1) physician presence or (2) no physician presence. Visual text was categorized simply as: (1) subtitled or (2) nonsubtitled. Detailed criteria for these classifications are provided in Supplementary Table 5.

Video content was evaluated across three primary thematic dimensions: disease fundamentals, clinical management, and socioepidemiological context. The first dimension, disease fundamentals, encompassed the nature of AD, including its etiology, pathophysiology, and symptomatology, as well as established diagnostic criteria. The second dimension, clinical management, covered interventions and supportive care strategies. This included treatment options (both pharmacological and surgical approaches), detection and examination methods (e.g. cognitive tests, biomarker assays), and nursing practices. The third dimension, socioepidemiological context, addressed population-level and psychosocial aspects, such as incidence rates, prevention strategies, and portrayals of daily living experiences of individuals with AD. All content falling within these dimensions was systematically documented for subsequent analysis.

Video assessment

Two qualified researchers (Jingyu Li and Xinyi Xu), each with a solid background in medical education, independently evaluated all videos using three established assessment tools: the Global Quality Score (GQS), the modified DISCERN instrument (mDISCERN), and the JAMA Benchmark criteria (JAMA). The GQS primarily evaluates the overall quality of videos, taking into account both the general video quality and its usefulness to patients. mDISCERN is used to assess the credibility of video information, while JAMA evaluates the accuracy of the information. Together, these three components form a comprehensive evaluation chain spanning production standards, content depth, and user experience. Detailed evaluation methods are provided in Supplementary Tables 5–8. Disagreements were resolved through discussion with a third researcher (Lan Zhao), a specialist in neurodegenerative diseases. Prior to formal scoring, the three raters conducted a comprehensive review of the questionnaires to mitigate potential biases stemming from misinterpretation of the assessment tools.

Statistical analyses

Given the non-normal distribution of the data, continuous variables were reported as the median and interquartile range (IQR). For comparisons between two independent groups, the Mann–Whitney U test was applied. When comparing three or more groups, the Kruskal–Wallis H test was used. Categorical variables were assessed for significance using chi-square tests or Fisher's exact tests. To assess inter-rater reliability, we calculated Cohen's kappa coefficient. Through Spearman's correlation analysis and ordered logistic regression analysis, we investigated the relationships among quality assessment metrics, reliability parameters, and fundamental characteristics. All data analysis was performed using R (version 4.2.3), primarily relying on the gtsummary package (version 2.0.4) for data summarization and statistical inference. Hypothesis testing was conducted using two-tailed tests, with a significance level set at α = 0.05. A p-value < 0.05 was considered statistically significant.
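As an illustration of the multi-group comparison described above, the following is a minimal sketch of the Kruskal–Wallis H statistic with the usual tie correction, written in plain Python. It is not the authors' R code. The example scores are invented, and the sketch returns only the statistic, so a chi-square lookup with k − 1 degrees of freedom would still be needed for a p-value.

```python
from collections import Counter


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_h(*groups):
    """Tie-corrected Kruskal-Wallis H statistic for two or more groups."""
    pooled = [v for group in groups for v in group]
    n = len(pooled)
    ranks = average_ranks(pooled)
    weighted_rank_sums = 0.0
    start = 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        weighted_rank_sums += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12.0 / (n * (n + 1)) * weighted_rank_sums - 3 * (n + 1)
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - tie_term / (n ** 3 - n)
    if correction == 0:  # every observation identical: no evidence of a shift
        return 0.0
    return h / correction


# Hypothetical GQS-like scores for three uploader groups (not study data).
h_stat = kruskal_wallis_h([1, 2, 2], [2, 3, 3], [3, 4, 4])
print(round(h_stat, 3))
```

Ordinal scores such as GQS contain many ties, which is why the tie correction is included rather than the uncorrected formula.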

Results

Video characteristics

The two researchers demonstrated excellent agreement on the JAMA, GQS, and mDISCERN scores, with Cohen's kappa values of 0.934, 0.859, and 0.844, respectively. As summarized in Table 1, a total of 171 short videos were included in the analysis. The median (IQR) video duration (in seconds) and numbers of likes, comments, saves, and shares were 207.0 (122.0–286.0), 6519.0 (655.0–28,000.0), 436.0 (63.0–1499.0), 763.0 (164.0–2392.0), and 1027.0 (119.0–3836.0), respectively. These indicators differed significantly between the two platforms (all p < 0.001). Although videos on Douyin were notably shorter (median duration of 159 s), the platform demonstrated substantially higher performance across all interaction metrics, including likes (14,000), comments (1169), saves (866), and shares (2448), compared to Bilibili.
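For readers unfamiliar with the agreement statistic reported above, the following is a minimal sketch of unweighted Cohen's kappa. The source does not state whether a weighted variant was used, so the unweighted form is an assumption, and the two rating lists are invented for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty item set.")
    n = len(rater_a)
    # Observed agreement: share of items given identical scores.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal proportions.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1:  # both raters used a single identical category
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical scores from two raters on ten videos (not study data).
scores_rater_1 = [1, 2, 2, 3, 1, 2, 3, 3, 1, 2]
scores_rater_2 = [1, 2, 2, 3, 1, 2, 3, 2, 1, 2]
kappa = cohens_kappa(scores_rater_1, scores_rater_2)
print(round(kappa, 3))
```

In this toy example the raters agree on nine of ten videos (observed agreement 0.90) against a chance agreement of 0.35, giving a kappa of about 0.85.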

Characteristics of videos on Douyin and Bilibili.

Overall, nonmedically affiliated accounts (63.7%) contributed more videos than medically affiliated sources (36.3%). Among uploaders, patients and their families (35.7%) posted the most frequently, followed by science communicators (21.6%), non-neurologist clinical physicians (21.1%), neurologists (10.5%), other public service organizations (6.4%), hospitals and public health organizations (2.9%), and medical students (1.8%), as shown in Figure 2.

Distribution of uploader types for Alzheimer's disease-related videos. (A) The pie chart shows the overall distribution of uploader types across all platforms (n = 171). (B) The stacked percentage bar chart compares the distribution differences among various uploader types across the Bilibili and Douyin platforms.

Regarding the visual background environment, 22.8% of videos were filmed in hospital settings, 69.6% in nonhospital settings, and 7.6% used a virtual background. For visual communication design, 5.8% incorporated 2D/3D animation, 32.2% employed static schematic graphics, and 62.0% used text-based presentation only. In terms of auditory communication design, 87.8% featured a human voiceover and 15.2% used an AI voiceover. Physician presence was observed in 36.3% of the videos, while 63.7% had no physician presence. All evaluated videos contained subtitles.

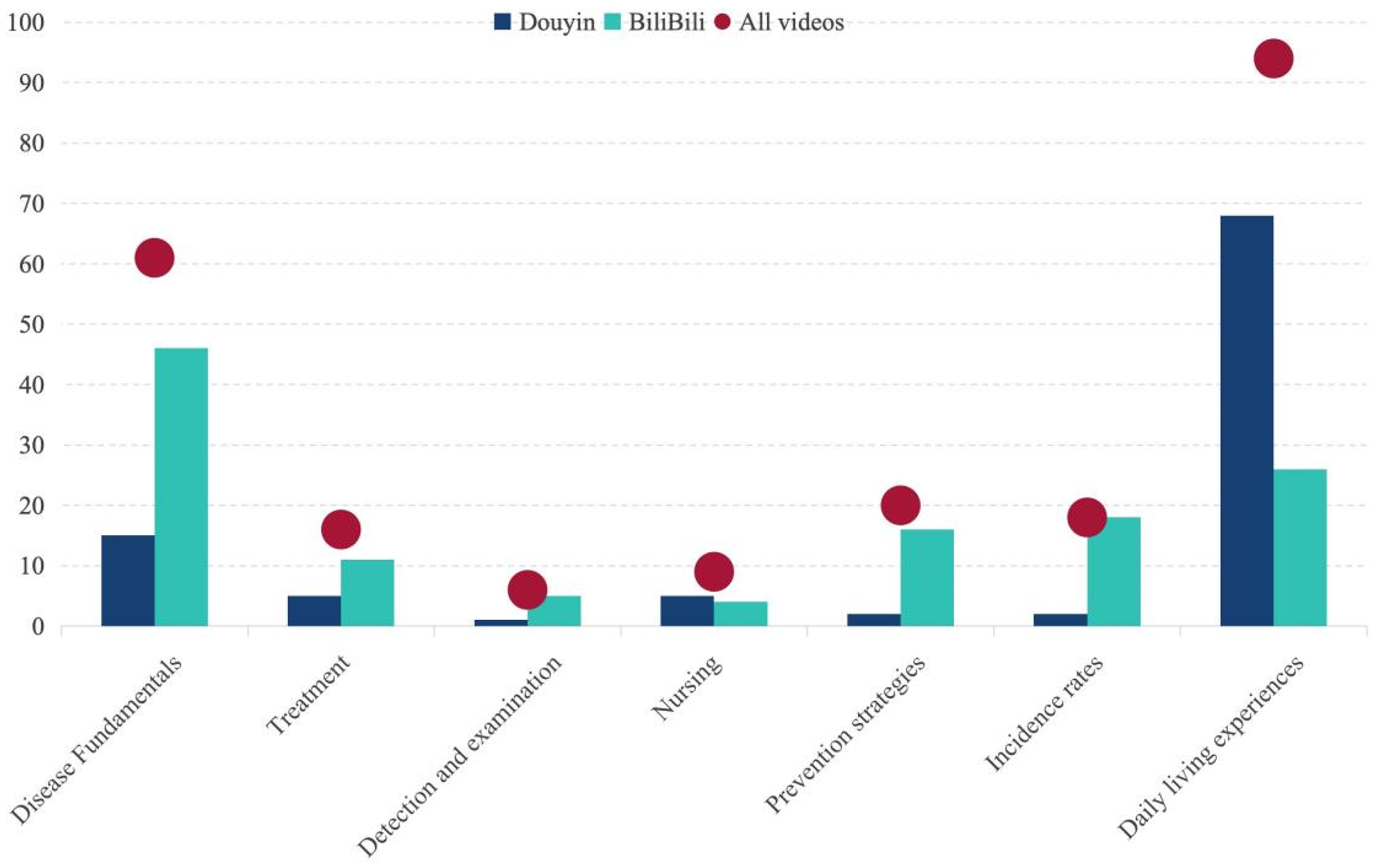

In thematic analysis, 32.7% of videos addressed disease fundamentals, and 9.9% covered aspects of clinical management. Among the latter, treatment options were discussed in 9.4%, detection and examination methods in 3.5%, and home-based caregiving practices in 4.7%. Additionally, 57.3% of videos included socioepidemiological context, with 11.7% mentioning incidence rates, 10.5% covering prevention strategies, and 55.0% portraying daily living experiences of individuals with AD.

The overall sample analysis showed that video quality and reliability were at a moderately low level: the median score was 1.0 (1.0–2.0) for JAMA, 2.0 (2.0–2.0) for mDISCERN, and 2.0 (1.0–3.0) for GQS. Cross-platform comparisons revealed that Douyin scored lower than Bilibili on both the JAMA and GQS dimensions (both p < 0.05). Although the median and IQR of the mDISCERN score were identical for both platforms (2.0 (2.0–2.0)), the Mann–Whitney U test indicated a statistically significant difference in their overall distributions (p = 0.007). This suggests that while the central tendency of mDISCERN scores was similar, the score distributions of the two platforms were not identical.
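A toy example may help show how two samples can share the same median and IQR yet differ under a rank-based test. The sketch below uses invented scores, not the study data, and computes only the Mann–Whitney U statistic by direct pair counting. It does not compute a p-value.

```python
from statistics import median, quantiles


def median_iqr(scores):
    """Return (median, Q1, Q3) using inclusive linear interpolation."""
    q1, _, q3 = quantiles(scores, n=4, method="inclusive")
    return median(scores), q1, q3


def mann_whitney_u(x, y):
    """U statistic for x: pairs with x_i > y_j count 1, ties count 0.5."""
    return sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in x for b in y)


# Hypothetical scores: both platforms are dominated by 2s, but the
# minority scores fall on opposite sides (not study data).
platform_a = [1, 1] + [2] * 10
platform_b = [2] * 10 + [3, 3]

summary_a = median_iqr(platform_a)
summary_b = median_iqr(platform_b)
u_a = mann_whitney_u(platform_a, platform_b)
u_null = len(platform_a) * len(platform_b) / 2  # expected U if no shift

print(summary_a, summary_b, u_a, u_null)
```

Both samples summarize to 2.0 (2.0–2.0), yet U for the first sample is 50 against a no-shift expectation of 72. The test responds to the scores in the tails, which the median and IQR do not capture.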

Comparison of uploaders on different platforms

To gain a deeper understanding of these short videos, we conducted a comparison based on the uploaders’ identities (Table 2). All short videos included in the analysis (n = 171) were divided into seven groups: neurologists (n = 18), non-neurologist clinical physicians (n = 36), medical students (n = 3), hospitals and public health organizations (n = 5), other public service organizations (n = 11), science communicators (n = 37), and patients and their families (n = 61). The results of the Kruskal–Wallis test showed that videos uploaded by different types of uploaders exhibited statistically significant differences in user interaction metrics (likes, comments, saves, shares), video duration, and all three information quality scores (all p-values < 0.05). Detailed data are presented in Table 2.

Video features included by uploader.

In terms of user interaction metrics, the identity of the uploader significantly influenced the level of user interaction. Videos uploaded by patients and their families received the highest number of likes (median = 16,000) and comments (median = 1240). Videos uploaded by science communicators received the highest number of saves (median = 1792), while videos uploaded by other public service organizations received the highest number of shares (median = 2309). In contrast, videos posted by neurologists and non-neurologist clinicians had lower levels of all engagement metrics.

Video duration also varied across uploader types (p = 0.024). Videos posted by medical students had the longest duration (median = 665 s), while videos posted by neurologists were the shortest (median = 87 s). Regarding video quality and reliability, the uploader's professional background was closely associated with information quality. Videos posted by neurologists, non-neurologist clinicians, and hospitals and public health organizations performed best on the JAMA score (median = 2). Videos posted by medical students scored highest on the mDISCERN and GQS scores (median = 3 for both), but due to the small sample size (n = 3), these results should be interpreted with caution. Videos posted by patients and their families consistently received the lowest scores across all three quality metrics (median = 1 for JAMA, mDISCERN, and GQS), indicating poor information reliability and overall quality. Videos posted by science communicators also had relatively low quality scores.

Video content analysis

In Figure 3, video content was first classified into three broad thematic categories: disease fundamentals (n = 58), clinical management (n = 16), and socioepidemiological context (n = 97). These categories were further broken down into seven specific content themes to capture more nuanced informational emphasis: disease fundamentals (n = 61), treatment (n = 16), detection and examination (n = 6), nursing (n = 9), daily living experience (n = 94), prevention strategies (n = 18), and incidence rates (n = 20).

Information about AD-related video content from Bilibili and Douyin. AD: Alzheimer's disease.

The results of the Kruskal–Wallis test showed that videos across different themes exhibited statistically significant differences in the majority of user interaction metrics, video duration, and quality scores (p < 0.05), with the exception of the “saves” category, where intergroup differences did not reach statistical significance (p = 0.216). Detailed data are presented in Table 3.

Video content characteristics on Bilibili and Douyin.

In terms of user interaction, videos with the theme “daily living experience” performed the most prominently, with median values for likes (median = 15,000), comments (median = 1169), and shares (median = 1990.0) significantly higher than all other theme categories (p < 0.001). In contrast, videos under the “treatment” and “detection and examination” themes had relatively lower interaction metrics. Notably, while the overall between-group difference in “saves” counts did not reach statistical significance (p = 0.216), videos under the “incidence rates” theme had the highest median “saves” count (2208.0). There were significant differences in video duration across themes (p < 0.001). Videos under the “incidence rates” (median = 490 s) and “prevention strategies” (median = 301 s) themes were typically the longest in duration and contained more detailed content. In contrast, videos under the “nursing” and “daily living experience” themes were shorter in duration, with medians below 210 s.

Video themes were significantly correlated with information quality scores (p < 0.001). A notable trend was that videos under the “Daily living experience” theme received the lowest scores across all three quality assessments (JAMA, mDISCERN, GQS), with medians of 1.00 or 2.00, indicating poor information reliability and overall quality. Conversely, videos on the “Prevention strategies” and “Incidence rates” themes received the highest quality scores, particularly in the GQS score (median = 4.00), indicating that these videos have higher structural, design, and information quality. Videos on the “Treatment” theme also scored higher in the mDISCERN score (median = 3.00), indicating better information credibility.

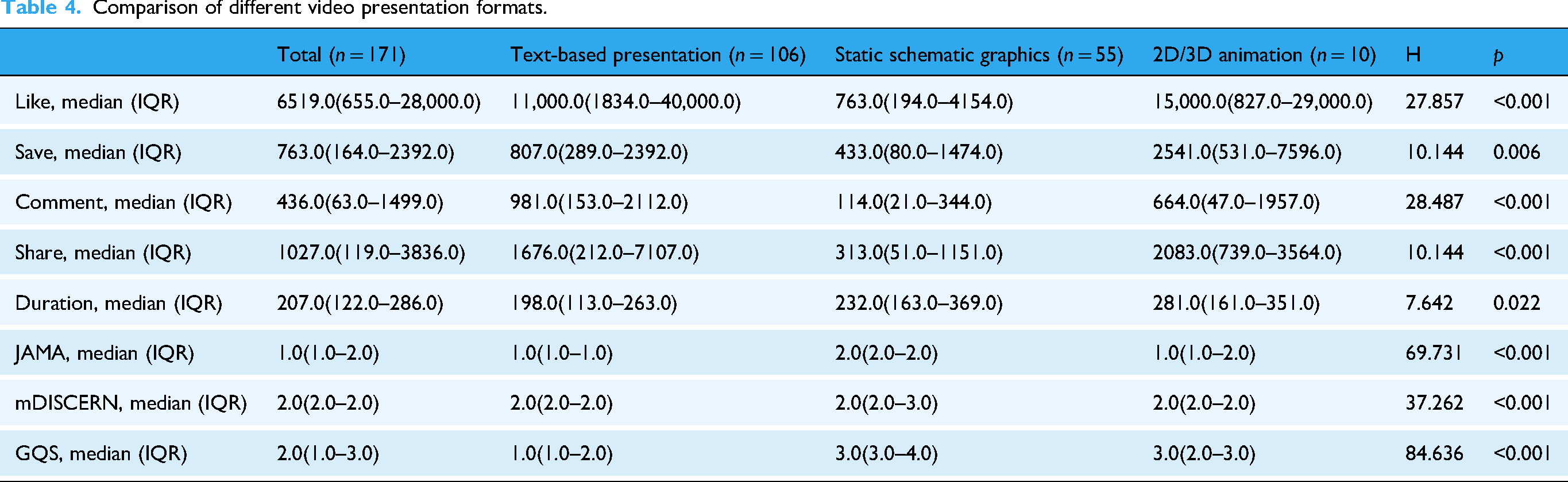

Comparison of different video presentation formats

Based on the presentation style of the videos, the 171 videos were divided into the following three groups: text-based presentations (n = 106), static schematic graphics (n = 55), and 2D/3D animations (n = 10). The differences in user interaction metrics, video duration, and quality scores among the groups are shown in Table 4. In terms of user interaction metrics, text-based videos performed the best in terms of likes, comments, and shares, with median values significantly higher than the other two groups. 2D/3D animated videos had the highest number of saves. In contrast, static schematic graphics videos had the lowest median values for all four interaction metrics.

Comparison of different video presentation formats.

In terms of video duration, there were significant differences among the three groups (p = 0.022). 2D/3D animated videos had the longest duration (median: 281.00 s), followed by static schematic graphics videos (median: 232.00 s), while text-based videos had the shortest duration (median: 198.00 s).

In terms of information quality evaluation, all three scoring criteria (JAMA, mDISCERN, GQS) showed highly statistically significant differences between groups (p < 0.001). Static schematic graphics videos scored the highest in all quality scores (JAMA: 2.0 (2.0–2.0); mDISCERN: 2.0 (2.0–3.0); GQS: 3.0 (3.0–4.0)), indicating the best information quality, reliability, and overall production standards. Text-based videos scored the lowest (JAMA: 1.0 (1.0–1.0); mDISCERN: 2.0 (2.0–2.0); GQS: 1.0 (1.0–2.0)). The GQS score for 2D/3D animated videos (median: 3.0 (2.0–3.0)) was higher than that for text-based videos but comparable to that for static schematic graphics videos.

Correlation analysis and regression analysis

Ordered logistic regression results (Table 5) revealed that uploader identity, content classification, and presentation format were significant predictors of video quality, albeit with varying effects across the three evaluation metrics (JAMA, mDISCERN, GQS). Uploader identity: Compared to the reference group (science communicators), videos uploaded by medical professionals, including neurologists (JAMA: β = 1.536, p = 0.014), non-neurologist clinical physicians (JAMA: β = 1.378, p = 0.012), medical students (JAMA: β = 2.333, p = 0.008), and hospitals & public health organizations (JAMA: β = 1.831, p = 0.018), were associated with significantly higher JAMA scores, indicating superior information accuracy. Conversely, videos from patients and their families were significantly associated with lower GQS scores (β = −0.718, p = 0.019), reflecting poorer overall video quality. Content classification: Videos focusing on socioepidemiological context (β = −1.459, p < 0.001) and clinical management (β = −0.881, p = 0.014) were significantly associated with lower GQS scores compared to those on disease fundamentals, suggesting these themes were linked to inferior production quality and user experience. Presentation format: The use of static schematic graphics was a strong positive predictor across all three quality metrics (JAMA: β = 1.011, p = 0.003; mDISCERN: β = 1.026, p = 0.001; GQS: β = 0.849, p = 0.003), underscoring its association with higher information accuracy, credibility, and overall quality compared to simple text-based presentations. Additionally, videos featuring a hospital setting (β = 0.935, p = 0.049) or employing an AI voiceover (β = 0.746, p = 0.024) were associated with higher GQS scores, while the absence of a physician in the video was linked to significantly lower GQS scores (β = −0.07, p = 0.038).
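To make the coefficients above easier to interpret, they can be converted to odds ratios. The sketch below assumes the reported β values are log-odds coefficients from a proportional-odds model, parameterized so that a positive β means higher odds of a better score category. The source does not state this explicitly, so the conversion is illustrative only, and the labels are shorthand for the comparisons in the text.

```python
import math


def odds_ratio(beta):
    """Convert a log-odds coefficient to an odds ratio (assumed scale)."""
    return math.exp(beta)


# Coefficients as reported in the text; dictionary keys are shorthand.
reported_betas = {
    "Neurologists vs. science communicators (JAMA)": 1.536,
    "Static schematic graphics vs. text-based (GQS)": 0.849,
    "Patients and families vs. science communicators (GQS)": -0.718,
}

for label, beta in reported_betas.items():
    print(f"{label}: beta = {beta:+.3f}, OR = {odds_ratio(beta):.2f}")
```

Under that assumption, a β of 1.536 corresponds to roughly 4.6-fold higher odds of falling in a higher JAMA category, whereas a β of −0.718 corresponds to roughly half the odds of a higher GQS category.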

Ordered logistic regression analysis of factors affecting JAMA, GQS and mDISCERN.

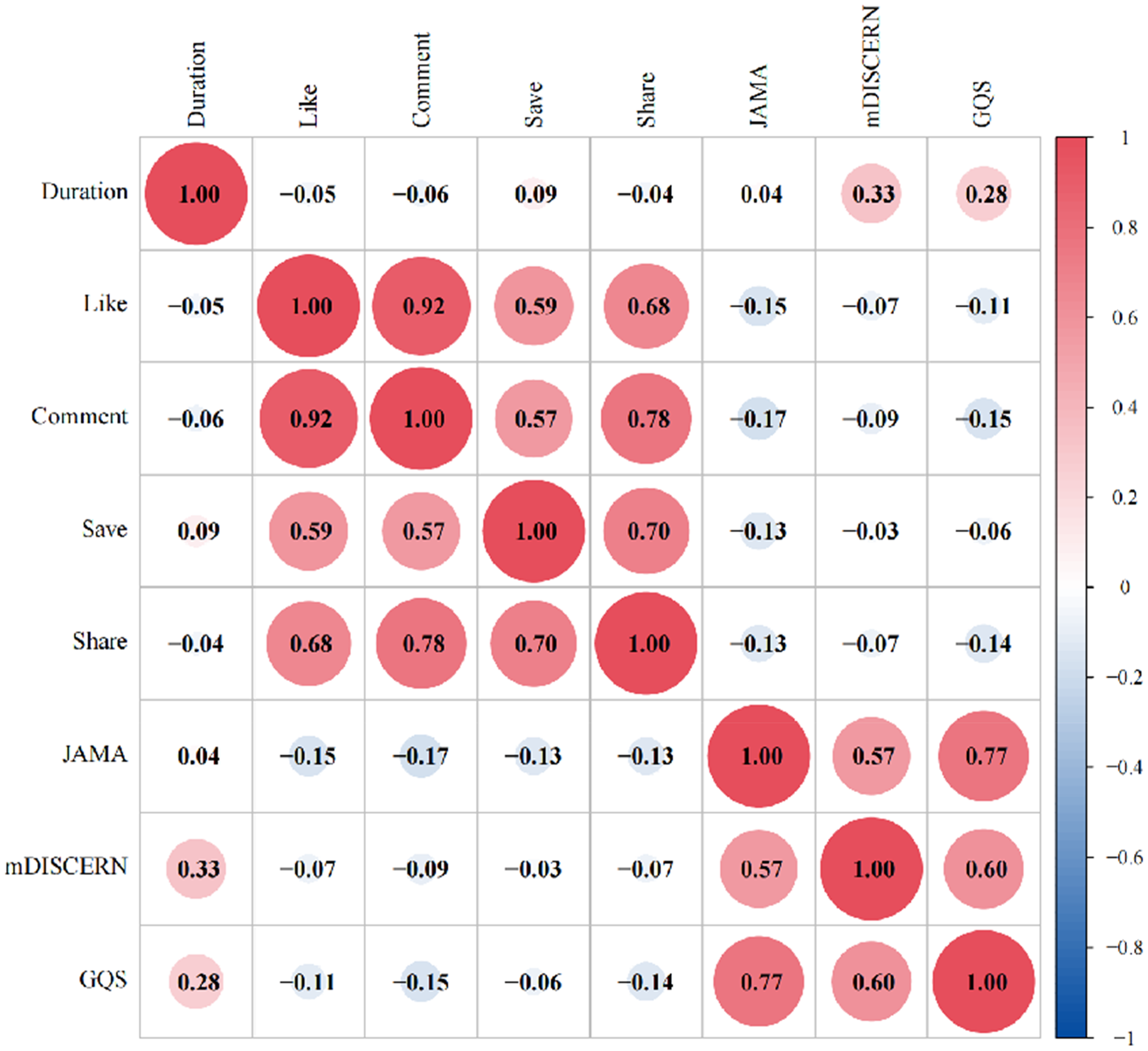

The correlation analysis is shown in Figure 4. The JAMA score was strongly and significantly correlated with GQS (r = 0.77, p < 0.001) and with mDISCERN (r = 0.57, p < 0.001); its correlation coefficients with short video likes, comments, saves, shares, and duration were −0.15, −0.17, −0.13, −0.13, and 0.04, respectively. The GQS score was also strongly and significantly correlated with mDISCERN (r = 0.60, p < 0.001); its correlation coefficients with short video likes, comments, saves, shares, and duration were −0.11, −0.15, −0.06, −0.14, and 0.28, respectively. The correlation coefficients between mDISCERN scores and short video likes, comments, saves, shares, and duration were −0.07, −0.09, −0.03, and −0.07, respectively. The correlation coefficients between short video duration and likes, comments, saves, and shares were −0.05, −0.06, 0.09, and −0.04, respectively. The correlation coefficients between shares and likes, comments, and saves were 0.68, 0.78, and 0.70, respectively. The correlation coefficients between saves and likes, and between saves and comments, were 0.59 and 0.47, respectively. The correlation coefficient between likes and comments was 0.92.
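As a reminder of what these coefficients measure, the following is a minimal sketch of Spearman's rank correlation, computed as the Pearson correlation of average ranks so that the tied values common in ordinal scores are handled. It is written in plain Python rather than the R workflow used in the study, and the sample inputs are invented.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson_r(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("Correlation is undefined for a constant variable.")
    return cov / math.sqrt(var_x * var_y)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation applied to average ranks."""
    if len(x) != len(y):
        raise ValueError("Inputs must have the same length.")
    return pearson_r(average_ranks(x), average_ranks(y))


# Hypothetical values with one tie in the first variable (not study data).
rho_tied = spearman_rho([1, 2, 2, 3], [1, 3, 2, 4])
print(round(rho_tied, 3))
```

Because the calculation uses ranks only, a handful of viral videos with very large like or share counts cannot dominate the coefficient, which suits the heavily skewed engagement data described in the Results.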

Spearman correlation analysis between AD video variables, GQS, JAMA, and mDISCERN scores. GQS: Global Quality Score; AD: Alzheimer's disease.

Discussion

Since 1999, China has recognized the impact of dementia and has gradually developed policies to address it. The National Action Plan for Responding to Dementia (2024–2030), issued by China's National Health Commission, explicitly identifies reducing the growth rate of dementia prevalence among older adults as a key indicator of elderly care services, underscoring the elevated importance of dementia prevention and control within national policy. 14 However, while policy efforts advance, patients and families increasingly turn to online platforms for information and support. Although “brain fog” is not a clinical term for AD, it offers a vivid metaphor for the cognitive confusion experienced by patients. This study examines a parallel phenomenon: an “info fog” of low-quality and misleading video content that deepens the challenges confronted by individuals living with AD and their families. In this context, ensuring the reliability of widely accessible health information becomes not only a clinical concern but also an emerging public health priority.

Overall video evaluation

Our study indicates that the overall information quality and reliability of online AD-related videos in China range from failing to poor, with significant heterogeneity and critical shortcomings observed. The median GQS score was 2.0 (IQR: 1–3), with only 14.6% of videos meeting the high-quality threshold (GQS ≥ 4). The median mDISCERN score was 2.0 (IQR: 2–2), reflecting generally moderate reliability, and merely 8.2% of videos were rated as reliable (mDISCERN ≥ 4). The median JAMA benchmark score was 1.0 (IQR: 1–2), with no video meeting the reliability standard (JAMA ≥ 4). These results reveal serious deficiencies in authorship transparency, attribution, currency, and disclosure practices, substantially undermining the credibility of the information presented. In summary, our analysis highlights considerable room for quality improvement in widely circulated health education short videos. There is an urgent need to develop content standards, enhance creator training, and establish authoritative review mechanisms to improve reliability and overall quality, thereby better serving public health literacy.

Our study identified a significant gap in the current public communication landscape concerning AD. Although public awareness is considerable, support from national policies and higher-level healthcare systems for disseminating information on this topic remains inadequate. This pattern is reflected in our data: videos from medically affiliated accounts constituted only 36% of the sample, a proportion similar to that of videos produced by patients and their families. Notably, nonmedically affiliated accounts consistently achieved higher user engagement across all metrics—videos from patients and their families received the most likes and comments, those from science communicators garnered the most saves, and content from other public service organizations received the highest number of shares. This finding contradicts intuitive expectations, as medical accounts, with their professional authority, would be expected to attract more public recognition and interaction—a view consistent with previous studies indicating public preference for content from publishers with medical backgrounds. 15 Yet, our results demonstrated the opposite. The same pattern has been observed in a recent evaluation of YouTube as an educational resource for AD, which similarly reported that videos produced by healthcare professionals and institutional sources—despite their higher reliability and informational quality—did not achieve higher engagement than those from nonprofessional creators. 16 At the same time, videos posted by medically affiliated accounts were significantly superior in both quality and reliability compared to those from patients and their families as well as science communicators—an anticipated outcome which is consistent with previous studies. 17 Further correlation analysis revealed weak negative correlations between established evaluation metrics (JAMA scores, GQS, and mDISCERN) and engagement indicators (likes, comments, saves, and shares). 
Collectively, these results underscore a compelling paradox: more scientific and reliable content did not lead to broader public engagement; instead, nonprofessional sources, despite their relatively lower rigor, exhibited stronger communicative appeal. Previous studies have reported similar findings: while public engagement metrics are often positively correlated with each other, their relationship with quality ratings remains inconsistent. For instance, research on knee osteoarthritis educational content found no significant correlation 18 ; studies on ankle sprains 11 and migraine treatment 10 indicated weak positive correlations; and work on atopic dermatitis demonstrated a more distinct positive association. 19 Furthermore, our research revealed that online AD-related videos are highly diverse in content; however, those focusing on “daily living experiences” were the most prevalent. Notably, this category of content garnered significantly higher likes, comments, and shares compared to other types. Nevertheless, these videos received the lowest scores across quality assessment metrics such as JAMA, mDISCERN, and GQS. Unsurprisingly, the majority of these videos were created by patients and their families.
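The kind of rank-based correlation discussed above can be sketched as follows, assuming Spearman's rho is the statistic of interest (a common nonparametric choice for ordinal quality scores and skewed engagement counts; the text here does not name the exact coefficient). The helper names and all toy values are hypothetical and are not drawn from the study data.

```python
from statistics import mean


def average_ranks(values):
    """Assign 1-based ranks, giving tied values the average of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the window over all items tied with the one at `start`.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        tied_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = tied_rank
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho, computed as the Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical values for six videos (illustrative only, not study data).
toy_likes = [120, 4500, 300, 98000, 15, 2100]
toy_comments = [10, 380, 25, 7600, 2, 150]
toy_gqs = [3, 2, 4, 1, 3, 2]

rho_engagement = spearman_rho(toy_likes, toy_comments)  # engagement vs. engagement
rho_quality = spearman_rho(toy_likes, toy_gqs)          # engagement vs. quality
print(rho_engagement, rho_quality)
```

The toy data are constructed so that the two engagement metrics rise together while the quality score moves in the opposite direction; the magnitudes are arbitrary and say nothing about the strength of the associations reported in this study.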

Media-communication lens: the paradox of video quality vs. engagement

This phenomenon may be attributed to several factors. First, professional medical content often employs technical language and follows a formally structured narrative, whereas nonmedical creators tend to use emotional storytelling and personal experiences—strategies that foster a stronger sense of connection. Second, algorithmic recommendation mechanisms likely prioritize “popularity” and “engagement” over informational accuracy and public value, thereby systematically promoting content that is emotionally charged or entertaining. Furthermore, the general public's foundational understanding of AD remains limited, which may lead individuals to place greater trust in intuitive personal accounts rather than in abstract medical explanations.

This engagement can be better understood through the lens of media communication theory. According to uses and gratifications theory, audiences actively select content that meets psychological and social needs, such as emotional resonance, identification, and social belonging, 20 rather than seeking purely informational accuracy. Patient-generated narratives, which emphasize shared experiences and emotional authenticity, therefore align more closely with audience motivations than do fact-dense clinical explanations. 21 Moreover, cognitive load theory suggests that highly technical or information-heavy videos may exceed viewers’ working memory capacity and discourage sustained engagement. 22 In contrast, applying narrative frames or segmented storytelling can reduce extraneous cognitive load and enhance comprehension. 23 In addition, social proof theory explains how content accumulating likes, shares, or comments is perceived as more credible and relevant—regardless of actual quality—by signaling social consensus and endorsement. 24 Visible engagement metrics act as heuristics that invite trust. Once a patient story gains early engagement, algorithms interpret this as a signal of user preference, further boosting its visibility and creating a self-reinforcing cycle. 25

Our study also identified substantial thematic disparities beyond the quality-engagement gap. Notably, several cutting-edge areas emphasized in clinical practice and academia were largely absent from high-engagement videos. These omissions included AI applications in AD and related brain disorders,26–29 novel drug-based therapies such as targeted nanomedicine,30–32 multi-omics technologies, 33 and even traditional Chinese medicine interventions for AD.34,35 The specialized nature of these topics may explain why they are valued by medical professionals yet overlooked by the public and general content creators. This disconnect can be understood through established communication theories. From the perspective of uses and gratifications theory, these technical subjects often lack the immediate emotional resonance that drives audience engagement. Furthermore, cognitive load theory suggests their complexity may overwhelm viewers’ working memory, reducing appeal to a general audience. Despite these challenges, these innovative research areas hold significant potential for public engagement if communicated effectively. Bridging this gap between clinical advancement and public understanding represents a promising and underexplored direction for future health communication efforts.

Video presentation style

Our study examined various aspects of video presentation style. The majority of videos (69.6%) were filmed in nonhospital settings, while only 22.8% were filmed in hospital settings. Additionally, a physician appeared on screen in 36.3% of the videos. These findings align with the relatively low proportion of videos from medically affiliated sources (36%) in our sample. In terms of how information about AD was presented, the majority of videos (62.0%) relied primarily on verbal narration. A smaller segment incorporated static schematic graphics, and only 5.8% employed 2D/3D animation. Schematic graphics and animations can, to some extent, enrich audiovisual content and enhance the viewing experience. In our study, such professionally produced visual content was predominantly published by physicians, with only one example coming from patients and their families. Regarding auditory communication design, the vast majority of videos (87.8%) used a human voiceover, while a smaller proportion (15.2%) adopted an AI-generated voiceover. Evidence suggests that although AI-synthesized voice technology has become increasingly prevalent, genuine human voices are often perceived as more authentic and approachable. 36 While high-quality synthetic voices have achieved considerable naturalness, they still slightly underperform in conveying authenticity and emotional nuance, particularly in contexts involving kinship or caregiving. 37 This suggests that in online healthcare videos, human voiceovers may be more effective in establishing a sense of closeness with the audience. The same principle applies to the visible presence of physicians in videos: when a doctor appears on screen and speaks in their own voice, it simulates a face-to-face communication dynamic.
Empirical research further suggests that the visual presence of an instructor not only enhances social presence and learner engagement but also helps modulate cognitive load—that is, the mental effort required to process information. 38 This is particularly beneficial for individuals with lower working memory capacity, who show greater reliance on nonverbal cues from the speaker. 39 Given that individuals with AD often exhibit reduced cognitive capacity, this audience may derive particular benefit from such presenter-guided video communication. It is also worth noting that all videos included in our analysis incorporated subtitles, which is an encouraging finding. Subtitles improve accessibility for viewers with hearing impairments, 40 aid comprehension of complex information, facilitate understanding in sound-sensitive or noise-prone environments, and enhance overall information retention and engagement. 41

Reflections from an international perspective

While this study focuses on the Chinese short-video ecosystem, the dynamics it reveals cannot be analyzed in isolation. The dissemination of medical information on social media is a global challenge, shaped by intersecting factors of platform architecture, regulatory governance, audience culture, and algorithmic priorities.

A key difference across platforms concerns content provenance and verification. Platforms such as YouTube and Facebook feature a higher proportion of institutional or organizational accounts, which are often affiliated with hospitals, universities, or public health agencies. This association is linked to a higher average quality of content. However, the absence of systematic pre-publication review still allows inaccurate or misleading content to circulate widely, including videos with incomplete or outdated information.42,43 By contrast, short-video platforms such as TikTok and Instagram Reels prioritize brevity and virality, often elevating user-generated narratives that emphasize personal experience over evidence-based communication. 44 These structural features contribute to significant variability in content quality across platforms and complicate efforts to establish standardized information quality benchmarks. Cultural and demographic context modulates which credibility cues are weighted, but effects vary by platform and audience. Cross-national work shows that national culture predicts trust in online health information and shapes evaluation strategies, suggesting that institutional/source cues tend to be emphasized in some Western samples, 45 while heuristic cues are frequently reported by Chinese social media users—especially older WeChat users—during credibility appraisal. 46 Meanwhile, the regulatory environment adds another layer of complexity. In the United States, regulatory actions by the Food and Drug Administration's Office of Prescription Drug Promotion and the Federal Trade Commission primarily target sponsored health claims and deceptive advertising practices.47–49 This scope includes the use of social media and influencer disclosures by commercial sponsors, yet it largely excludes ordinary user-generated content from direct oversight. 
In the European Union, the Digital Services Act imposes obligations on Very Large Online Platforms—including conducting public-health risk assessments, implementing mitigation measures, enhancing algorithmic transparency, and providing data access to researchers. These provisions are poised to influence platform-level systems, potentially reshaping content ranking and labeling practices. 50

Limitations

This study has several important limitations. First, it is inherently cross-sectional, representing a single snapshot of the information landscape on 22 August 2025. Short-video platforms evolve rapidly: recommendation systems, user behaviors, health campaigns, and viral trends all change over time, so our findings may not fully reflect subsequent developments. Future studies should adopt longitudinal designs with repeated sampling to capture temporal dynamics and algorithmic drift. Second, analyzing only the top 100 videos ranked by each platform's default algorithm introduces a notable sampling bias. Although this approach is widely used and generally considered sufficient, it inevitably excludes much of the available content, including potentially high-quality but less visible videos. Advertisements and sponsored content were also excluded, yet they constitute a significant part of real-world exposure. As a result, our findings reflect the algorithmically curated content most users see, rather than the full spectrum of AD-related material online. Third, the opacity of platform algorithms poses additional methodological constraints. The specific weighting of ranking signals (such as engagement metrics, personalization features, and content attributes) is proprietary and undisclosed. Even under “default” conditions, algorithmic personalization may have influenced which videos appeared, reducing reproducibility and potentially introducing bias. Finally, our analysis is geographically and linguistically limited to Chinese short-video platforms and Chinese-language content. While this focus provides valuable insights into China's rapidly developing digital health ecosystem, it limits the international applicability of our findings.
The impact of self-media on medical information is a global challenge, and factors such as regulatory frameworks, user demographics, and platform governance differ substantially across platforms such as YouTube, TikTok, and Instagram. Comparative, cross-cultural studies are needed to understand these differences and develop globally relevant strategies. Additionally, our quality assessment tools (GQS, mDISCERN, JAMA) measure general information quality but not AD-specific accuracy, guideline concordance, or misinformation severity. Some key engagement variables (e.g. follower counts, posting time, and paid promotion) and behavioral metrics (e.g. watch time, completion rate) were unavailable, and clustering effects from repeated videos by the same uploader could not be addressed statistically. These factors, combined with the cross-sectional, algorithmically biased, and platform-specific nature of the study, should be considered when interpreting our results.

Future suggestions

Dementia is not only a medical condition but also a critical social challenge. Effective prevention and management strategies require multidimensional collaboration across medical, social, and policy sectors rather than efforts from a single discipline or institution. 51 Future work should therefore extend beyond traditional clinical research to include longitudinal and repeated-sampling studies that track how evolving algorithms, health campaigns, and viral trends shape public understanding over time, as well as broader and more representative sampling strategies that include lower-ranked and promotional content to capture the full spectrum of user exposure.

In addition, several practical strategies can strengthen the effectiveness of online dementia communication. Given that videos incorporating static schematic graphics are consistently associated with higher quality, platforms could actively support healthcare professionals by developing ready-to-use visual templates that standardize presentation, improve clarity, and reduce production barriers. At the same time, since patient narratives consistently attract high levels of engagement, they could be paired with expert commentary or cocreated with medical professionals to enhance scientific credibility without diminishing emotional resonance. Finally, platforms should consider integrating verified medical accounts into their ranking algorithms, assigning greater weight to content produced by authoritative sources to improve the visibility of accurate, evidence-based information. Together with sustained digital literacy initiatives that equip older adults and caregivers to critically assess online health content, such measures would transform the digital ecosystem into one that truly supports dementia prevention, management, and care.
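As a purely illustrative sketch of the last suggestion, the idea of weighting verified medical accounts in ranking can be expressed as a multiplicative boost applied to an engagement-based score. Everything here is hypothetical: the `Video` fields, the `rerank` function, and the `verified_boost` value are assumptions for illustration, and real platform ranking systems are proprietary and far more complex.

```python
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    engagement_score: float  # hypothetical engagement-based score in [0, 1]
    verified_medical: bool   # hypothetical flag for a verified medical account


def rerank(videos, verified_boost=1.5):
    """Sort videos by engagement score multiplied by a boost for verified medical accounts."""
    def weighted(video):
        boost = verified_boost if video.verified_medical else 1.0
        return video.engagement_score * boost

    return sorted(videos, key=weighted, reverse=True)


# Hypothetical feed (illustrative only, not study data).
toy_feed = [
    Video("Caregiver diary", 0.80, False),
    Video("Physician explains early symptoms", 0.60, True),
    Video("Lifestyle vlog", 0.50, False),
]

ranked_titles = [v.title for v in rerank(toy_feed)]
unboosted_titles = [v.title for v in rerank(toy_feed, verified_boost=1.0)]
print(ranked_titles)
print(unboosted_titles)
```

With a boost of 1.0 the ordering follows engagement alone, so the size of the boost is the design choice that trades visibility of verified content against visibility of high-engagement narratives.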

Conclusion

This study systematically evaluated the quality and dissemination of AD-related content on China's mainstream short-video platforms. The overall information quality and reliability of AD-related videos are generally low, and the results reveal a significant “quality-dissemination paradox”: scientifically rigorous content published by medical professionals receives significantly higher quality scores, yet lags far behind nonprofessional content centered on patient narratives and daily life experiences in user engagement. This paradox highlights a severe disconnect between the scientific rigor of content and public engagement within the current algorithm-driven dissemination ecosystem. These findings hold clear implications for public health communication and policy development. First, platforms should assume primary responsibility for governing the information environment by optimizing algorithms to enhance the visibility of authoritatively verified medical content. Second, healthcare professionals should be encouraged to collaborate with nonprofessional creators, integrating scientific evidence into relatable narrative formats to balance rigor with communicative impact. Finally, public education on health media literacy, particularly for older adults and their caregivers, should be strengthened to improve their ability to critically evaluate online health information.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076251398464 for Quality and reliability of Alzheimer's disease videos on Douyin and Bilibili: A cross-sectional content analysis study by Jingyu Li, Jingshu Zhang, Xinyi Xu, Lu Xiao, Yanjun Ling, Shuzhen Liu, Ying Gao, Lan Zhao and Hui Jia in DIGITAL HEALTH

Supplemental material, sj-xlsx-2-dhj-10.1177_20552076251398464 for Quality and reliability of Alzheimer's disease videos on Douyin and Bilibili: A cross-sectional content analysis study by Jingyu Li, Jingshu Zhang, Xinyi Xu, Lu Xiao, Yanjun Ling, Shuzhen Liu, Ying Gao, Lan Zhao and Hui Jia in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors express their gratitude to the participants who contributed to the study.

Ethical approval

This study does not involve clinical data, human specimens, or laboratory animals. It exclusively utilizes publicly available video content from Douyin and Bilibili, which was accessed in accordance with the platforms’ Terms of Service at the time of data collection. All data extracted were in the public domain and excluded any nonpublic user information. Furthermore, the research did not involve any interaction with users. Therefore, ethical review was not required, and individual consent from content creators was not sought.

Contributorship

LZ and HJ contributed to conceptualization, methodology, formal analysis, and writing—review & editing. JL, JZ, XX, and LX contributed to software, writing—original draft preparation, data curation, and visualization. SL, YL, and YG contributed to software, writing—original draft preparation, data curation, and visualization.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
