Abstract

Objective

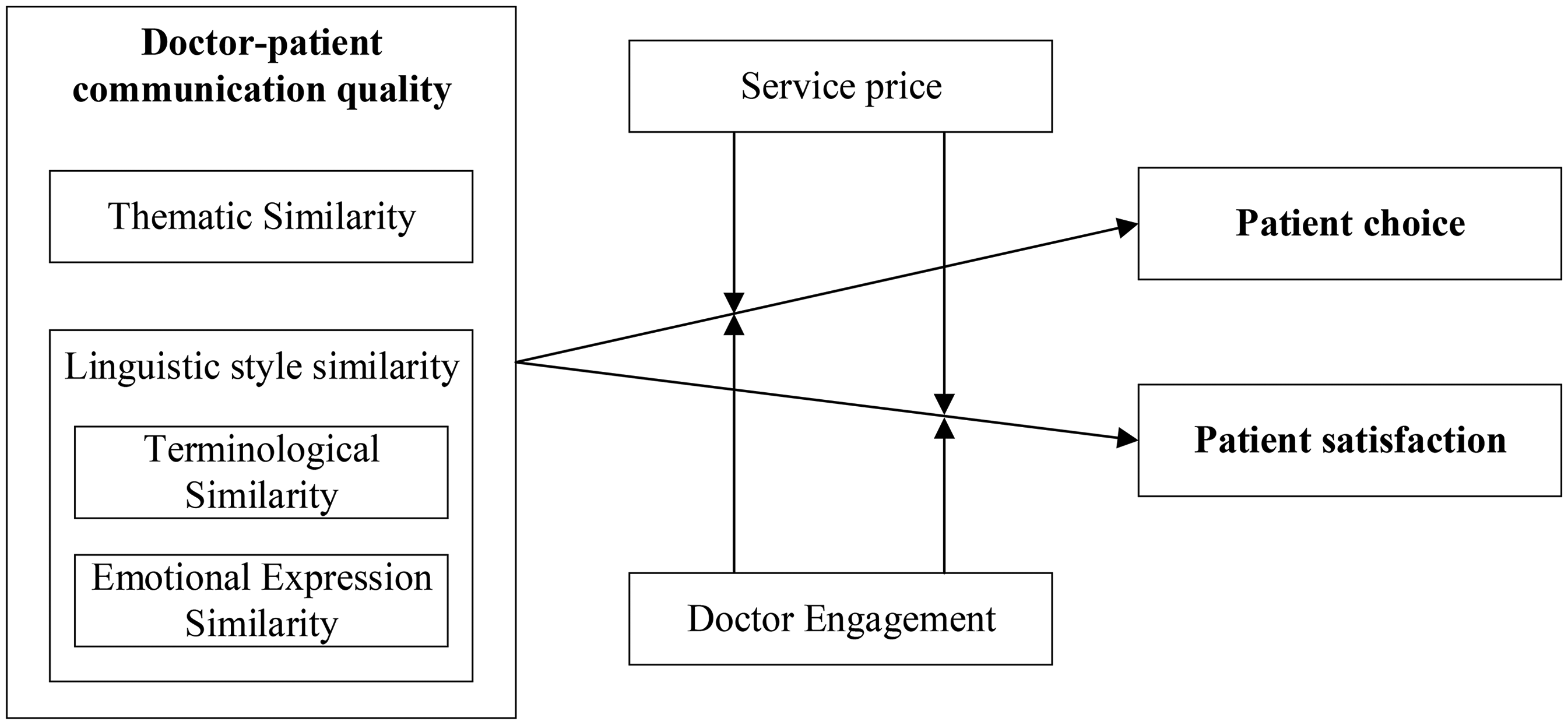

Building upon information quality assessment frameworks and social exchange theory, this study quantifies online doctor–patient communication quality across three dimensions using text analytics methods. Focusing on online consultations for gynecological cancers, we examine how communication quality affects patient choice and satisfaction, and investigate the moderating roles of service price and doctor engagement.

Methods

This study collected 19,392 doctor–patient interaction records from a leading Chinese online health platform through web crawling. Employing an interdisciplinary methodology integrating natural language processing, machine learning, and sentiment analysis for variable measurement, this study conducted empirical analyses using multivariate linear regression models.

Results

The study reveals that all three communication quality dimensions (thematic similarity, terminological similarity, and emotional expression similarity) exert significant positive effects on both patient choice and satisfaction. Furthermore, online service price and doctor engagement are found to positively moderate the relationship between communication quality and doctor performance, suggesting that higher-priced services and more engaged doctors amplify the beneficial impact of quality communication.

Conclusions

This research contributes to the theoretical understanding of doctor–patient communication in digital healthcare by extending existing frameworks and providing robust empirical evidence. The findings offer new insights into the mechanisms underlying online medical interactions and present fresh perspectives for optimizing communication models. From a practical standpoint, the study provides actionable recommendations for platform operators, including the implementation of targeted communication training programs for doctors to enhance their ability to deliver relevant and comprehensible information, as well as the development of terminology conversion systems to facilitate patient understanding of medical jargon, thereby improving overall communication quality.

Keywords

Introduction

The rapid development of internet-based healthcare has established online consultation services as a vital complement to traditional in-person medical care. This innovative healthcare delivery model facilitates remote doctor–patient communication through digital platforms, gaining increasing patient acceptance due to its convenience, cost-effectiveness, and privacy protection advantages.1,2 However, the virtual environment uniquely exacerbates pre-existing communication challenges, particularly information asymmetry between doctors and patients.3,4 This asymmetry is rooted in a fundamental discrepancy in cognitive frameworks and communication styles. Cognitive frameworks refer to the distinct knowledge structures and mental models held by doctors, which are shaped by professional training, and by patients, which are built upon personal experience.

While discrepancies in terminology usage, where doctors employ professional jargon and patients use colloquial expressions, constitute a general feature of healthcare communication, the online context intensifies their impact. This study is grounded in Information Asymmetry Theory, which posits that transactions where one party possesses more or better information than the other lead to market inefficiencies. In healthcare, this manifests as a gap between medical professionals’ expertise and patients’ lay understanding. The text-based and asynchronous nature of many online interactions critically amplifies this asymmetry by stripping away the non-verbal cues (e.g., gestures, tone of voice) and immediate clarifications that help bridge communication gaps in face-to-face settings.

The primary challenge in this context is effectively evaluating communication quality to mitigate this asymmetry. Existing research has primarily adopted two approaches: (1) scale-based methods, which are subjective and lack granularity,5–7 and (2) feature analysis of doctor response texts, which risks overlooking patient-side expectations and the interactive nature of dialogue.8,9 These methodological limitations directly reflect the core problem outlined by Information Asymmetry Theory: the lack of a tool to objectively and bidirectionally assess whether the information exchange successfully bridges the knowledge gap.

To address this theoretical and methodological gap, our study introduces the information quality assessment methodology 10 as our analytical lens. This framework, which operationalizes the reduction of information asymmetry through dimensions of relevance (needs alignment) and comprehensibility (information accessibility), allows us to move from describing the problem to quantitatively measuring its mitigation. However, building on clinical communication theory,11,12 we refine this conceptualization by distinguishing between two key processes in effective medical communication: (1) Alignment - establishing mutual understanding by building upon the patient's existing knowledge and concerns, and (2) Guidance - the professional responsibility to introduce new medical concepts and correct misconceptions when necessary. Our similarity-based approach primarily captures the Alignment dimension, which serves as the foundational layer for building trust and understanding before Guidance can be effectively delivered. Therefore, this study is guided by the following research question: How does the alignment aspect of online doctor–patient communication, measured bidirectionally through relevance and comprehensibility, influence patient choice and satisfaction?

We choose to investigate this question in the critical context of gynecological cancers. This domain is characterized by high information sensitivity, complex treatment decisions, and a significant need for ongoing support, making the effective reduction of information asymmetry through high-quality communication particularly consequential.13–15 Like patients with other chronic conditions, individuals facing gynecological cancers demonstrate high reliance on online health communities for sustained emotional support and medical guidance throughout their care journey. The rising incidence and younger onset trends of these cancers, especially in China, further underscore the importance of accessible, high-quality online consultation services.

By leveraging real-world data from the Chunyu Doctor platform and employing natural language processing techniques, we implement a novel bidirectional assessment of medical dialogues. This approach directly translates our theoretical framework into an empirical methodology. Our study aims not only to validate the practical utility of the information quality assessment framework but also to contribute back to information asymmetry theory by demonstrating how specific communication qualities can alleviate its negative effects in digital healthcare markets. Furthermore, by contextualizing our similarity metrics within the Alignment-Guidance framework, we provide nuanced insights into how initial communicative alignment serves as a precursor to effective clinical guidance. We further examine the moderating roles of price and engagement to provide a nuanced understanding of the mechanisms at play.

Theoretical background and hypotheses development

Information quality assessment framework

The information quality assessment (AIMQ) framework developed by Lee et al. (2002) establishes a comprehensive evaluation system that combines subjective information utility with objective technical attributes, forming a four-dimensional assessment structure comprising intrinsic quality, contextual quality, representational quality, and accessibility quality. Intrinsic quality reflects the fundamental attributes of information, including accuracy and objectivity. Contextual quality evaluates the degree to which information matches user needs. Representational quality assesses the comprehensibility of information presentation forms, while accessibility quality examines the convenience and security of information acquisition. Specifically, intrinsic information quality emphasizes that information should possess inherent value, manifested in aspects such as accuracy, reliability, and objectivity. Contextual information quality highlights the close connection between information quality and objectives, where relevance, timeliness, completeness, and appropriate quantity serve as key indicators to ensure information meets specific contextual needs. Representational information quality focuses on the level of information expression and form, where understandability, interpretability, conciseness, and consistency are crucial technical dimensions of information structure that determine whether information can be clearly conveyed. Accessibility quality characterizes information obtainability, manifested in availability and security safeguards that ensure information can successfully reach users.

In the healthcare domain, the quality of online doctor–patient communication is of paramount importance, with the information quality of bilateral communication constituting its core element. The online consultation process necessitates active participation from both doctors and patients, as unilateral information provision by medical professionals alone is insufficient to ensure optimal outcomes.16,17 The AIMQ framework provides a multidimensional operational approach for measuring online doctor–patient communication quality. The contextual and representational quality dimensions are particularly valuable for evaluating communication effectiveness, as they encompass information relevance and comprehensibility. Information relevance directly determines whether patient needs can be adequately addressed, whereas information comprehensibility significantly influences patients’ understanding and acceptance of medical advice. To operationalize these concepts for quantitative analysis, we leverage natural language processing techniques. We propose that thematic similarity between a patient's inquiry and a doctor's response serves as a measurable proxy for relevance (a core aspect of contextual quality), as a high degree of topic alignment indicates that the doctor's information directly addresses the patient's expressed concerns. Similarly, we use linguistic style similarity (e.g., convergence in vocabulary complexity and sentence structure) as an indicator of comprehensibility (a key element of representational quality), under the rationale that a doctor who adapts their linguistic style to be more aligned with the patient's is likely making a conscious effort to enhance understanding.18,19 However, following the clinical communication literature,11,12 we emphasize that these similarity metrics primarily capture the Alignment dimension of communication quality. While essential for building rapport and ensuring basic understanding, effective medical communication may also require guidance interventions that could temporarily reduce similarity metrics while ultimately improving clinical outcomes. Our study therefore examines the important but specific role that communicative Alignment plays in the broader clinical communication process. Accordingly, this study selects two key dimensions from the AIMQ framework, relevance (measured by thematic similarity) and comprehensibility (measured by linguistic style similarity), to quantitatively examine online doctor–patient communication quality.

While the AIMQ framework provides a robust multidimensional structure for assessing information quality, its application to dynamic, interactive dialogues in online consultations requires careful adaptation. The original framework was designed for evaluating static information systems or content. A key adaptation in this study is our focus on relational and comparative metrics—thematic and linguistic style similarity—to capture the bidirectional nature of communication quality, which is a departure from the framework's traditional focus on unilateral information assessment. This approach allows us to operationalize the core dimensions of contextual and representational quality in a way that is specifically tailored to the interactive context of online medical dialogues. Moreover, by integrating the Alignment-Guidance perspective from clinical communication theory, we enhance the AIMQ framework's applicability to healthcare contexts where both information transfer and relationship building are crucial. Thus, our study not only applies the AIMQ framework but also extends its utility to a new and critical domain.

Social exchange theory (SET)

SET, as proposed by Blau,20 posits that social behaviors result from rational actors’ cost-benefit calculations involving both material resources (e.g., money, services) and non-material resources (e.g., recognition, trust). Such exchange behaviors typically emerge through interactive processes between parties 21 and have been applied to understand various phenomena including consumer behavior 22 and information sharing.23 Research has demonstrated that linguistic style similarity between communicating parties can enhance communication effectiveness.24

Online medical consultations essentially constitute a resource exchange process. Through interactive text communication, patients seek health-related information while doctors provide professional medical knowledge. In this exchange, doctors may gain enhanced reputation, social recognition, improved patient satisfaction, increased consultation volume, and financial rewards. 25 Studies have shown that the social and emotional support doctors convey during online interactions positively impacts patient satisfaction. 26 In this study, online doctor–patient communication quality is conceptualized as the resource provided by doctors, while patient choice and satisfaction represent the reciprocal resources returned to doctors. Building upon this foundation, the current research employs SET to deeply investigate the impact of online doctor–patient communication quality.

Applying SET to online consultations offers a powerful lens for understanding the reciprocal dynamics between doctors and patients. However, it is important to acknowledge that the ‘exchange’ in healthcare is not purely transactional. Critics might argue that reducing medical communication to a cost-benefit calculus could overlook its ethical and fiduciary dimensions, where the doctor's primary obligation is to the patient's well-being. Nonetheless, SET remains highly relevant for explaining the sustained engagement and relationship-building that characterize successful online consultations. By conceptualizing communication quality as a ‘benefit’ provided by the doctor, our study leverages SET to hypothesize how this benefit fosters patient reciprocity (loyalty, satisfaction), thereby contributing to a sustainable online healthcare ecosystem. This application highlights the theory's value in explaining the socio-economic underpinnings of digital health platforms.

The impact of online doctor–patient communication quality

Previous studies have confirmed the feasibility of measuring online doctor–patient communication quality through text similarity analysis. 27 Based on the dimensions of information relevance and comprehensibility in the AIMQ framework, this study selects thematic similarity and linguistic style similarity as quantitative indicators.27–29 Thematic similarity refers to the consistency of communication content between doctors and patients, 29 while linguistic style similarity indicates the degree of matching in communication styles.28,30

Thematic similarity serves as an indicator for evaluating the relevance of textual content.31,32 By assessing the similarity or relevance of topics during communication, it determines whether both parties engage in discussions around similar themes,27 thereby measuring communication quality.27 Within the Alignment dimension of communication quality, higher thematic similarity indicates better alignment in discourse topics between doctors and patients 27 and stronger information relevance. For patients, higher thematic similarity means the information provided by doctors better matches their health needs, making it easier to reach consensus on treatment plans and consequently improving patient satisfaction. Moreover, higher thematic similarity helps better meet patient needs and provide superior resources,29 thereby attracting more patients and increasing consultation volume. Therefore, this study proposes: H1a/1b: Thematic similarity has a positive impact on patient choice/patient satisfaction.

Linguistic style similarity reflects the comprehensibility of doctors’ responses to patients. According to previous research, linguistic style can be categorized into terminology usage and emotional expression.28,30 As a characteristic of language during communication, doctors’ linguistic style influences patients’ understanding, perception, and judgment of information. 28 As a key component of communicative Alignment, higher linguistic style similarity can enhance patients’ acceptance, comprehension, and understanding of doctors’ information, thereby improving satisfaction and consultation volume.

Terminological similarity refers to the degree of matching in the proportion of professional medical terminology used between doctors and patients during communication.28 Patients not only care about information relevance but also need to understand the content, making information comprehensibility crucial for them.33 Research shows that information comprehensibility significantly affects consumers’ cognitive load,34 and lower comprehensibility leads to worse patient experience.35,36 For patients, higher terminological similarity results in better information acceptance and higher satisfaction, making it easier to attract patients and increase consultation volume. Therefore, this study proposes: H2a/2b: Terminological similarity has a positive impact on patient choice/patient satisfaction.

Emotional expression similarity reflects the degree of matching in emotional polarity and intensity between doctors and patients. 28 When there is significant disparity in emotional polarity and low matching (i.e., low emotional expression similarity), two scenarios may occur: first, doctors maintain an optimistic attitude while patients are negative, indicating patients’ emotional needs remain unmet with persistent psychological stress; second, doctors show concern while patients remain blindly optimistic, reflecting patients’ lack of sufficient awareness about disease severity and prognosis, which may affect treatment outcomes. 37

Emotional expression similarity characterizes how well doctors respond to patients’ emotional needs. For patients, higher emotional expression similarity indicates doctors better satisfy their emotional needs, reducing pressure in information understanding and judgment while enhancing trust,28,36 thereby improving satisfaction and making it easier to attract patients and increase consultation volume. Therefore, this study proposes: H3a/3b: Emotional expression similarity has a positive impact on patient choice/patient satisfaction.

The moderating effects of online service price and doctor engagement

Price serves both as a carrier of quality signals and a manifestation of consumer acquisition costs. In the dimension of quality signaling, research indicates a positive correlation between price and perceived quality, with consumers generally exhibiting a “high price-high quality” cognitive bias. 38 This effect is particularly pronounced in the consumption of intangible services characterized by information asymmetry (e.g., healthcare services), where price plays an especially prominent role as a key quality evaluation indicator.39,40

Online consultation services are a typical information commodity, in which patients act as service consumers while doctors serve as providers. Grounded in consumer behavior theory, price functions as a core quality signal 41 that directly influences patients’ expectations regarding doctor–patient communication quality. Specifically, higher price levels are often interpreted by patients as indicative of doctors’ professional competence. This perception reduces patients’ perceived risk, elevates their expectations of communication quality, and ultimately enhances satisfaction levels. However, it is important to note that these expectations primarily concern the Alignment dimension of communication quality measured by our similarity metrics. Therefore, this study proposes: H4a/4b/4c: Service price positively moderates the impact of thematic similarity/terminological similarity/emotional expression similarity on patient choice. H5a/5b/5c: Service price positively moderates the impact of thematic similarity/terminological similarity/emotional expression similarity on patient satisfaction.

In online consultation services, doctor engagement represents a core metric that patients prioritize, directly affecting their evaluation of doctors’ professional competence and treatment outcomes. Active doctor–patient interactions facilitate patients’ acquisition of health knowledge, thereby improving healthcare choices and satisfaction.26 This effect is particularly crucial in online settings. Higher engagement typically signifies that doctors provide more health information and support, which reduces patients’ perceived risk while elevating their expectations of communication quality and satisfaction. From the doctor perspective, high engagement reflects service proactiveness; by delivering more comprehensive and higher-quality information, doctors can attract more patients and consequently increase consultation volume. This engagement effect is particularly relevant for enhancing the impact of communicative Alignment on patient outcomes. Therefore, this study proposes: H6a/6b/6c: Doctor engagement positively moderates the impact of thematic similarity/terminological similarity/emotional expression similarity on patient choice. H7a/7b/7c: Doctor engagement positively moderates the impact of thematic similarity/terminological similarity/emotional expression similarity on patient satisfaction.

The research model is presented in Figure 1.

Figure 1. Research model.

Methods

Research context and data collection

The data for this study were obtained from the Chunyu Doctor platform (established in July 2011), which boasts over 140 million registered users, more than 660,000 registered doctors, and 300 million health records, with a daily average of 360,000 health consultations processed. The research employed the Python programming language within the VSCode and Jupyter Lab development environments for data collection and processing, primarily utilizing the pandas library for data cleaning and text preprocessing.

Data collection was conducted in two phases: doctor profile information and doctor–patient interaction texts were acquired in August 2024, followed by additional doctor information retrieval in November 2024. The sampling criteria selected the most recent 30 interaction records per doctor. To facilitate communication quality measurement, Python was used to consolidate multiple texts from each consultation into separate doctor text corpora and patient text corpora.

Following data cleaning procedures, which excluded missing data due to website issues and supplemented hospital location information (city and province), the final valid sample comprised: 1616 unique doctors corresponding to 19,392 doctor–patient communication texts (9696 doctor texts and 9696 patient texts).

Measurement of variables

This study employs natural language processing and machine learning methods to conduct quantitative analysis of unstructured doctor–patient interaction texts. The detailed steps of the data processing workflow are shown in Figure 2. From data collection, cleaning, and preprocessing to model construction, training, and optimization, and finally to output and analysis of results, each step is systematically interconnected. Together, they provide effective technical support for an in-depth investigation into doctor–patient interaction texts and the mechanism of how online doctor–patient communication quality affects doctor performance.

Figure 2. Variable measurement process.

The research model is specified as follows: the independent variable is online doctor–patient communication quality (thematic similarity, terminological similarity, and emotional expression similarity); the dependent variables are patient choice behavior and patient satisfaction, measured through doctor consultation volume and positive rating rate respectively; the moderating variables are online consultation service price and doctor engagement level. Control variables include: doctor characteristics (professional title, years of practice), institutional characteristics (department, hospital rank), and regional characteristics (GDP level and medical resource level). The variable descriptions are presented in Table 1.

Table 1. Variable definitions and descriptions.

Patient choice was operationalized as the increase in total consultation volume for each doctor between August 2024 and November 2024. The linguistic features for measuring communication quality were derived from the 30 most recent interaction texts available in August 2024, ensuring that the measurement of communication quality preceded the period during which the change in patient choice was observed.

Thematic similarity measurement

This study employed the Latent Dirichlet Allocation (LDA) model to quantify thematic similarity in doctor–patient texts.29 The specific implementation procedures were as follows:

a) Data preprocessing

A medical terminology dictionary was constructed, incorporating the Chinese version of the International Classification of Diseases (ICD-10), a lexicon of commonly used doctor terms, a comprehensive medical vocabulary database, and a compendium of domestic and international drug names as the corpus for word segmentation. The jieba segmentation tool was used for text segmentation, and a stop-word list was applied to filter irrelevant terms. The bag-of-words model was employed for term frequency vectorization, with a minimum term frequency threshold of 10 and a maximum document frequency of 50%.

b) Model training

The optimal number of topics was determined to be 9 based on perplexity metrics, yielding topic probability distributions for doctor and patient texts. The cosine similarity metric was then applied to compute the degree of topic alignment between doctor and patient texts,27 thereby assessing the consistency of communication themes.

c) Method validation

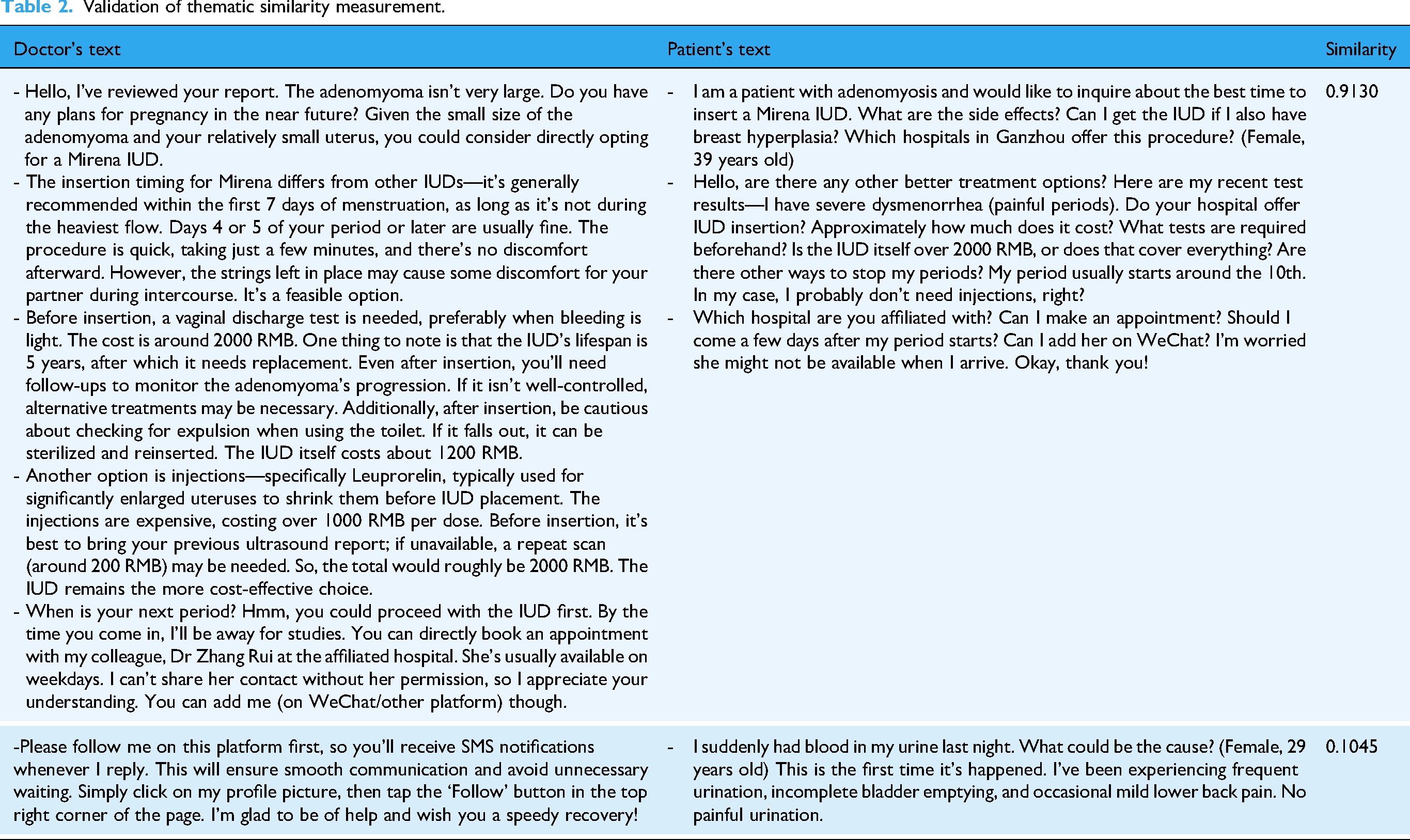

To verify the effectiveness of the proposed similarity measurement approach, a comparative validation framework was established. Two doctors were invited to conduct manual annotation and screening, selecting 10 sets of doctor–patient interaction texts with high thematic congruence and 10 sets with evident mismatches and low congruence. Similarity scores were computed for each sample group to evaluate the method's efficacy. The results demonstrated that the high-congruence group achieved an average similarity score of 0.9130, significantly higher than the low-congruence group's score of 0.1045 (Table 2), confirming the method's robust discriminative validity.

Table 2. Validation of thematic similarity measurement.
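The similarity step and the two-group validation described above can be illustrated with a minimal sketch. It assumes the LDA topic distributions have already been computed; the function names, the 3-topic vectors, and the zero-vector convention are hypothetical and chosen only for illustration (the study itself uses 9 topics and real consultation texts).

```python
import math
from statistics import mean


def cosine_similarity(p, q):
    """Cosine similarity between two equal-length topic-probability vectors."""
    if len(p) != len(q):
        raise ValueError("topic vectors must have the same length")
    dot = sum(a * b for a, b in zip(p, q))
    norm_p = math.sqrt(sum(a * a for a in p))
    norm_q = math.sqrt(sum(b * b for b in q))
    if norm_p == 0.0 or norm_q == 0.0:
        return 0.0  # assumption: an all-zero vector counts as no overlap
    return dot / (norm_p * norm_q)


def group_mean_similarity(pairs):
    """Mean similarity over (patient_topics, doctor_topics) pairs."""
    return mean(cosine_similarity(p, d) for p, d in pairs)


# Hypothetical topic distributions for manually labeled groups.
high_congruence = [
    ([0.80, 0.10, 0.10], [0.70, 0.20, 0.10]),
    ([0.10, 0.85, 0.05], [0.15, 0.80, 0.05]),
]
low_congruence = [
    ([0.90, 0.05, 0.05], [0.05, 0.05, 0.90]),
    ([0.05, 0.90, 0.05], [0.90, 0.05, 0.05]),
]

high_score = group_mean_similarity(high_congruence)
low_score = group_mean_similarity(low_congruence)
print(f"high-congruence mean: {high_score:.4f}")
print(f"low-congruence mean:  {low_score:.4f}")
```

A clear gap between the two group means is what the validation step looks for; the toy numbers here carry no empirical meaning.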

Terminological similarity measurement

This study employed the term frequency-inverse document frequency (TF-IDF) method to calculate terminological similarity in doctor–patient interaction texts.42

a) Text preprocessing

The jieba segmentation tool was applied in conjunction with a custom medical dictionary to identify professional terminology, ensuring accurate segmentation of medical terms. Simultaneously, the Harbin Institute of Technology stop-word list was used to remove irrelevant vocabulary and punctuation from texts, thereby enhancing analytical precision. Processed doctor and patient texts were separately stored in doctor text corpora and patient text corpora.

b) Feature extraction

The TfidfVectorizer was utilized to transform textual data into numerical feature matrices. The TF-IDF algorithm computed the importance weight of each term within texts, generating matrix tfidf0 for patient texts and matrix tfidf1 for doctor texts. To focus analyses strictly on medical terminology, feature terms were filtered based on a pre-constructed medical dictionary, retaining only TF-IDF values belonging to medical terms. This yielded purified medical term frequency matrices.

c) Cosine similarity computation

The paired_cosine_distances function first calculated cosine distances between doctor–patient term frequency matrices. These distances were then converted into final cosine similarity scores via the formula (1 - cosine distance). This metric effectively reflects the alignment in professional term usage between parties, maximizing distances between samples with divergent terminology patterns while minimizing distances for similar patterns. For each doctor, terminological similarity scores across all interaction orders were averaged to derive their overall terminological similarity index. This measurement approach provides robust technical support for assessing communication quality by accurately capturing importance variations among professional terms through TF-IDF weighting and quantifying alignment via cosine similarity computation.
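The pipeline above (TF-IDF weighting, filtering to medical terms, paired cosine similarity, averaging per doctor) can be sketched using only the standard library. This is an illustrative approximation, not the study's code: it assumes texts are already segmented into tokens, fits one shared vocabulary over patient and doctor texts, and uses a smoothed IDF of the same form as scikit-learn's default. All names and the English toy tokens are hypothetical.

```python
import math
from collections import Counter, defaultdict
from statistics import mean


def fit_idf(documents):
    """Smoothed inverse document frequency over tokenized documents."""
    n_docs = len(documents)
    doc_freq = Counter()
    for tokens in documents:
        doc_freq.update(set(tokens))
    return {t: math.log((1 + n_docs) / (1 + df)) + 1.0
            for t, df in doc_freq.items()}


def tfidf_vector(tokens, idf, keep_terms):
    """Sparse TF-IDF weights for one document, restricted to keep_terms."""
    counts = Counter(t for t in tokens if t in keep_terms and t in idf)
    return {t: c * idf[t] for t, c in counts.items()}


def cosine_similarity(u, v):
    """Cosine similarity (1 - cosine distance) between sparse dict vectors."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # assumption: no medical terms on one side means no alignment
    return dot / (norm_u * norm_v)


def doctor_terminology_similarity(consultations, medical_terms):
    """Average per-consultation similarity for each doctor.

    consultations: list of (doctor_id, patient_tokens, doctor_tokens).
    """
    all_docs = [toks for _, p, d in consultations for toks in (p, d)]
    idf = fit_idf(all_docs)
    per_doctor = defaultdict(list)
    for doctor_id, patient_tokens, doctor_tokens in consultations:
        pv = tfidf_vector(patient_tokens, idf, medical_terms)
        dv = tfidf_vector(doctor_tokens, idf, medical_terms)
        per_doctor[doctor_id].append(cosine_similarity(pv, dv))
    return {doc: mean(vals) for doc, vals in per_doctor.items()}


# Hypothetical, already-segmented toy data.
medical_terms = {"cervical", "hpv", "biopsy", "chemotherapy"}
consultations = [
    ("dr_a", ["hpv", "positive", "worried", "biopsy"], ["hpv", "biopsy", "recommended"]),
    ("dr_a", ["cervical", "pain"], ["cervical", "exam", "needed"]),
    ("dr_b", ["hpv", "result"], ["chemotherapy", "schedule"]),
]
scores = doctor_terminology_similarity(consultations, medical_terms)
print(scores)
```

Filtering to the medical dictionary before computing the cosine means that everyday words shared by both parties do not inflate the score; only overlap in professional terms counts.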

Emotional expression similarity measurement

This study adopted a sentiment lexicon-based approach to calculate emotional expression similarity in doctor–patient texts, evaluating communication quality by quantifying the alignment of both sentiment polarity and intensity. A multi-level sentiment analysis framework was developed:

a) Base sentiment lexicon construction

Five authoritative sentiment lexicons were integrated: HowNet Sentiment Dictionary, Boson Sentiment Dictionary, NTUSD (National Taiwan University Sentiment Dictionary), Dalian University of Technology Sentiment Ontology, and Tsinghua Sentiment Dictionary. For polarity conflicts between dictionaries, a majority-voting mechanism combined with manual validation was implemented (e.g., “fluctuation” was consistently labeled negative in clinical contexts). Domain-specific adaptations were made to general sentiment words using 19,392 medical dialogues (e.g., “stable” required contextual numerical interpretation).

b) Medical domain-specific lexicon development

A clinical sentiment lexicon was built using TF-IDF and word embedding techniques. Part-of-speech filtering and TF-IDF weighting extracted feature terms, while Word2Vec (cosine similarity threshold = 0.8) expanded semantically related expressions. The final lexicon contained 28,561 entries, including clinical phrases like “lesion shrinkage”, with dual manual verification ensuring terminological precision.

c) Sentiment scoring

Sentiment scores were computed based on the constructed lexicons. Texts were segmented, with word-level scores aggregated into document-level metrics. Euclidean distance measured doctor–patient emotional divergence, whose reciprocal defined the final similarity index. Each doctor's score represented the mean across all consultations.

For contextual adaptation, the model was optimized for the characteristics of medical text (high terminology density, structural formality, and implicit affect), which differ from those of social media text. Nine windowing schemes (2-5 word spans, bidirectional/unidirectional) were empirically tested. The forward 3-word window demonstrated optimal performance: a 12.3% accuracy gain for clinical expressions (e.g., “marked relief”) and an 8.7% error reduction for complex negations (e.g., “not entirely uncontrollable”), balancing analytical precision and robustness in clinical sentiment analysis. Contextual modifier window settings are shown in Table 3.

Contextual modifier window settings.

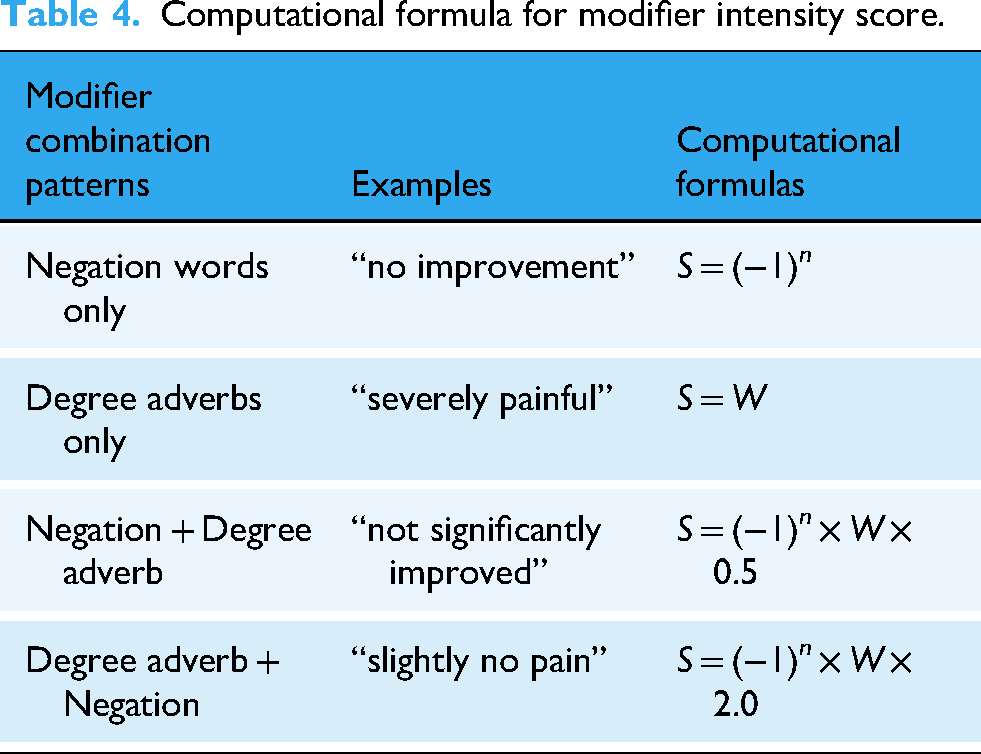

The modifier intensity score (S) for each sentiment word was determined by the count of adjacent degree adverbs and negation words, with scoring rules detailed in Table 4, where S represents the modifier intensity score, n denotes the count of negation words, and W indicates the weight of degree adverbs.

Computational formula for modifier intensity score.

The emotional scores in this study were computed from the sentiment scores of the individual segmented words in doctor–patient texts. The word-level sentiment scoring formula is shown in equation (1), where Emotion_word_score represents the emotional intensity score of the segmented word, S denotes the modifier intensity score of the sentiment word, and M indicates the emotional polarity of the sentiment word.
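The word-level scoring described above can be illustrated with a minimal sketch. The scoring rules of Table 4 and equation (1) are not reproduced in this text, so the sketch rests on assumptions: S is taken as (-1)^n multiplied by the product of the degree-adverb weights W found in the window, the word score is taken as S × M, and the "forward 3-word window" is read as the three tokens preceding the sentiment word. The tiny lexicons and weights are hypothetical placeholders, not entries from the study's lexicon.

```python
# Hypothetical mini-lexicons for illustration only (not the study's lexicon).
POLARITY = {"relief": 1.0, "worsening": -1.0, "pain": -1.0}     # M: polarity
DEGREE_WEIGHT = {"marked": 1.5, "very": 2.0, "slightly": 0.5}   # W: adverb weights
NEGATIONS = {"not", "no", "never"}
WINDOW = 3  # assumed: inspect the three tokens before each sentiment word


def modifier_intensity(context):
    """Assumed rule: S = (-1)^n * product of degree weights in the window."""
    n = sum(1 for tok in context if tok in NEGATIONS)
    weight = 1.0
    for tok in context:
        weight *= DEGREE_WEIGHT.get(tok, 1.0)
    return (-1) ** n * weight


def word_scores(tokens):
    """Return (word, score) pairs, assuming Emotion_word_score = S * M."""
    scores = []
    for i, tok in enumerate(tokens):
        if tok in POLARITY:
            context = tokens[max(0, i - WINDOW):i]
            scores.append((tok, modifier_intensity(context) * POLARITY[tok]))
    return scores


print(word_scores(["marked", "relief"]))       # [('relief', 1.5)]
print(word_scores(["not", "very", "pain"]))    # [('pain', 2.0)]
```

Under these assumed rules a negation flips the sign of the polarity and a degree adverb scales its magnitude; the actual rules in Table 4 may differ.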

Through doctor annotations, we observed that expressions of negative emotions by doctors in online consultations (e.g., “very concerned about your condition worsening”) essentially reflect professional empathy rather than negative service attitudes. The overall emotional scores for doctors and patients were calculated by summing the sentiment scores of all segmented words, as illustrated in equation (2).

The Euclidean distance between doctors’ and patients’ emotional scores was computed to preserve both polarity and intensity information. The reciprocal of this distance yielded the final emotional expression similarity metric.
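As a rough sketch of this aggregation, the snippet below sums word-level scores into a text-level score, takes the Euclidean distance between the doctor's and the patient's scores (for scalar scores this is the absolute difference), and uses its reciprocal as the similarity, averaged per doctor as described for the doctor-level measure. The floor `EPS` for identical scores and the input layout are assumptions introduced here; the text does not say how a zero distance was handled.

```python
from collections import defaultdict
from statistics import mean

EPS = 1e-6  # assumed floor on distance to avoid division by zero


def text_score(word_scores):
    """Text-level emotional score: sum of word-level sentiment scores."""
    return sum(word_scores)


def emotion_similarity(doctor_score, patient_score, eps=EPS):
    """Reciprocal of the Euclidean distance between two scalar scores."""
    distance = abs(doctor_score - patient_score)
    return 1.0 / max(distance, eps)


def doctor_mean_similarity(consultations):
    """consultations: iterable of (doctor_id, doctor_word_scores, patient_word_scores)."""
    per_doctor = defaultdict(list)
    for doctor_id, doc_words, pat_words in consultations:
        sim = emotion_similarity(text_score(doc_words), text_score(pat_words))
        per_doctor[doctor_id].append(sim)
    return {doctor_id: mean(sims) for doctor_id, sims in per_doctor.items()}


# Hypothetical word-level scores for three consultations.
example = [
    ("d1", [1.5, -0.5], [-1.0]),
    ("d1", [2.0], [1.0]),
    ("d2", [0.5], [-1.5]),
]
print(doctor_mean_similarity(example))  # {'d1': 0.75, 'd2': 0.5}
```

Because the reciprocal grows without bound as scores converge, the choice of floor (or another bounded transform) affects the scale of the resulting variable.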

Using sentiment lexicons, we calculated emotional polarity and intensity scores for doctor–patient texts. Manual validation on 1000 randomly sampled annotations demonstrated an average sentiment classification accuracy of 85.1%, as presented in Table 5.

Accuracy of emotional expression similarity computation.

Text classification-based measurement of patient satisfaction

a) Data preprocessing and manual annotation

This study measured patient satisfaction by extracting sentiment orientation and semantic features from patient-generated texts. Specifically, a deep learning-based text classification model was constructed. In the data preparation phase, 2000 text samples were randomly selected from the scraped dataset of 19,392 interactions as the experimental dataset for classification modeling. Two board-certified doctors independently performed annotation tasks.

According to previous research, patients typically express satisfaction by affirming doctors’ competence, recognizing the practicality of medical advice, or praising treatment outcomes. For example, patients express satisfaction when doctors’ recommendations align with their needs, when they experience post-treatment improvement, or when they appreciate doctors’ approachability and professionalism (e.g., clear explanations of conditions). Satisfied patients often use phrases like “Thank you, doctor” or “Very professional”. 43

After thoroughly familiarizing themselves with typical satisfaction expressions in doctor–patient interactions, the two annotators labeled each patient text as 1 (clearly reflects satisfaction with the doctor) or 0 (neutral or dissatisfied). Because explicit dissatisfaction samples were few, they were merged with neutral cases into a single category. Immediately after annotation, the labels were cross-checked; for inconsistent labels, the two annotators discussed until consensus was reached to minimize potential bias. In the final dataset, 91.75% of samples were labeled as satisfied.

b) Model construction and training

Patient satisfaction identification was treated as a binary text classification problem. After annotation, the 2000 labeled samples were divided by stratified sampling into a training set (70%, 1400 texts), a validation set (20%), and a test set (10%), and a Bi-LSTM model was trained on the training set. Bi-LSTM achieves bidirectional semantic encoding of sequential data by integrating forward and backward LSTM layers. Through bidirectional information fusion and LSTM's gating mechanisms, it enhances contextual understanding and robustness in sequence modeling tasks, and is particularly suited to complex scenarios requiring global semantic awareness, such as medical text analysis.
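A stratified 70/20/10 split of this kind can be sketched in plain Python as follows. The function name, the seed, and the use of integer percentages are illustrative choices rather than details from the study; only the sample count (2000) and the class balance (91.75% satisfied, i.e., 1835 of 2000) come from the text.

```python
import random
from collections import defaultdict


def stratified_split(labels, percents=(70, 20, 10), seed=42):
    """Split sample indices into train/validation/test sets per label class.

    Each class is shuffled and cut separately, so every subset keeps
    roughly the same label proportions as the full dataset.
    """
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)

    train, val, test = [], [], []
    for indices in by_label.values():
        rng.shuffle(indices)
        n_train = len(indices) * percents[0] // 100
        n_val = len(indices) * percents[1] // 100
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# 2000 annotated texts: 1835 labeled satisfied (1), 165 labeled otherwise (0).
labels = [1] * 1835 + [0] * 165
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

Because each class is cut with integer division, subset sizes can differ from the nominal 1400/400/200 by a sample or two; the class ratio is preserved in each subset, which matters here given how few non-satisfied samples there are.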

c) Model performance testing and patient satisfaction prediction

After multiple rounds of hyperparameter tuning, model accuracy initially increased, stabilized with minor fluctuations, then declined. Peak accuracy occurred at batch_size = 64. Final performance metrics: Accuracy = 0.89, Precision = 0.87, Recall = 0.80, F1-score = 0.83. These results met our classification requirements. The trained Bi-LSTM model was then applied to classify all patient texts (satisfied = 1, otherwise = 0). Doctor-level satisfaction scores were derived by averaging predictions across each doctor's patients.
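As a quick consistency check and illustration, the sketch below recomputes the F1-score from the reported precision and recall and shows one way the doctor-level satisfaction score could be derived by averaging binary predictions. The input layout `(doctor_id, label)` and the example predictions are hypothetical.

```python
from collections import defaultdict


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def doctor_satisfaction(predictions):
    """Average binary predictions (1 = satisfied, 0 = otherwise) per doctor.

    predictions: iterable of (doctor_id, label) pairs.
    """
    totals = defaultdict(lambda: [0, 0])  # doctor_id -> [sum of labels, count]
    for doctor_id, label in predictions:
        totals[doctor_id][0] += label
        totals[doctor_id][1] += 1
    return {doctor_id: s / n for doctor_id, (s, n) in totals.items()}


# Reported precision 0.87 and recall 0.80 give the reported F1 of 0.83.
print(round(f1_score(0.87, 0.80), 2))  # 0.83

# Hypothetical predictions for two doctors.
preds = [("d1", 1), ("d1", 1), ("d1", 0), ("d2", 1)]
print(doctor_satisfaction(preds))
```

The resulting doctor-level score is a proportion between 0 and 1, which is why a bounded-outcome model is a natural robustness check for this variable.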

d) Validation of satisfaction calculation accuracy

The trained Bi-LSTM model predicted satisfaction labels for all 9696 patient texts, with 92.3% classified as satisfied. This closely matched the manual annotation rate (91.75%), indicating that the predicted label distribution is consistent with the manual annotations.

Results

Descriptive statistics and correlation analysis

Table 6 presents the descriptive statistics of the variables. The results indicate that the correlation coefficients between variables are relatively small, and all VIF values are below 2, suggesting a low degree of multicollinearity.

Descriptive statistics and correlations.

Empirical results

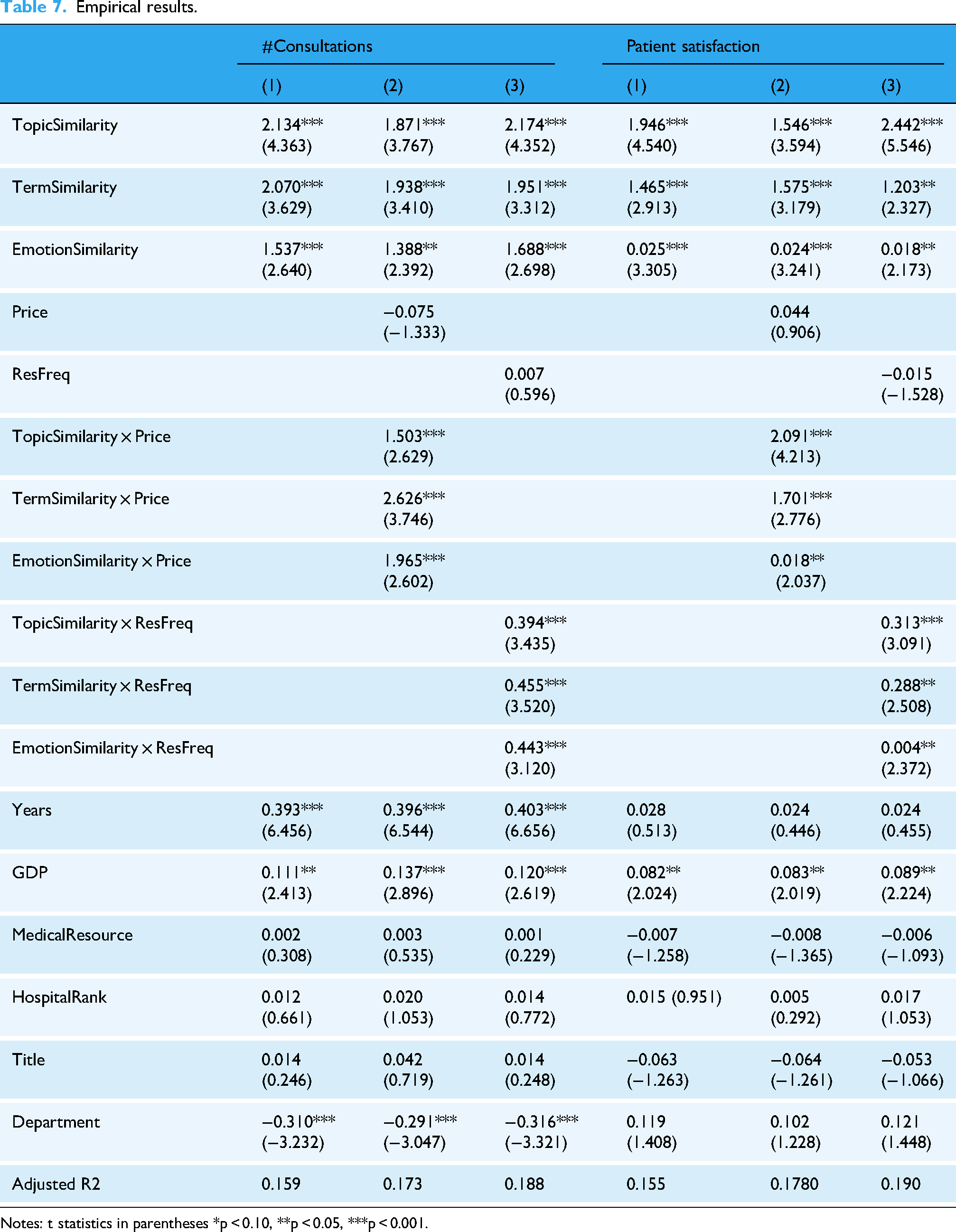

The results are presented in Table 7. Thematic similarity, terminological similarity, and emotional expression similarity all had significant positive effects on doctor consultation volume (β = 2.134, p < 0.001; β = 2.070, p < 0.001; β = 1.537, p < 0.001), supporting hypotheses H1a, H2a, and H3a. Furthermore, thematic similarity, terminological similarity, and emotional expression similarity significantly and positively influenced patient satisfaction (β = 1.946, p < 0.001; β = 1.465, p < 0.001; β = 0.025, p < 0.001), confirming hypotheses H1b, H2b, and H3b.

Empirical results.

Notes: t statistics in parentheses; *p < 0.10, **p < 0.05, ***p < 0.001.

Online service prices positively moderated the effects of thematic similarity, terminological similarity, and emotional expression similarity on doctor consultation volume (β = 1.503, p < 0.001; β = 2.626, p < 0.001; β = 1.965, p < 0.001), supporting hypotheses H4a, H4b, and H4c. Similarly, online service prices positively moderated the effects of thematic similarity, terminological similarity, and emotional expression similarity on patient satisfaction (β = 2.091, p < 0.001; β = 1.701, p < 0.001; β = 0.018, p < 0.05), validating hypotheses H5a, H5b, and H5c.

Doctor engagement positively moderated the effects of thematic similarity, terminological similarity, and emotional expression similarity on doctor consultation volume (β = 0.394, p < 0.001; β = 0.455, p < 0.001; β = 0.443, p < 0.001), supporting hypotheses H6a, H6b, and H6c. Likewise, doctor engagement positively moderated the effects of thematic similarity, terminological similarity, and emotional expression similarity on patient satisfaction (β = 0.313, p < 0.001; β = 0.288, p < 0.05; β = 0.004, p < 0.05), confirming hypotheses H7a, H7b, and H7c.
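To make the interpretation of a positive moderation coefficient concrete, the sketch below builds an interaction term from mean-centered variables (as the notes to Table 9 describe for the beta regression; whether the main models were centered the same way is an assumption) and computes the implied effect of similarity at different moderator levels. It assumes the usual specification outcome = b0 + b1·similarity + b2·moderator + b3·(similarity × moderator); the coefficient values used are hypothetical round numbers, not estimates from Table 7.

```python
from statistics import mean


def center(values):
    """Subtract the sample mean from each value."""
    m = mean(values)
    return [v - m for v in values]


def interaction_term(similarity, moderator):
    """Product of the mean-centered similarity and moderator variables."""
    return [s * z for s, z in zip(center(similarity), center(moderator))]


def simple_slope(b_similarity, b_interaction, moderator_centered):
    """Effect of similarity on the outcome at a given centered moderator value.

    d(outcome)/d(similarity) = b_similarity + b_interaction * moderator
    """
    return b_similarity + b_interaction * moderator_centered


# Hypothetical data: similarity scores and service prices for three doctors.
print(interaction_term([1, 2, 3], [10, 20, 30]))  # [10, 0, 10]

# Hypothetical coefficients: b1 = 2.0, b3 = 1.5.
for z in (-1.0, 0.0, 1.0):
    print(z, simple_slope(2.0, 1.5, z))  # 0.5, 2.0, 3.5
```

With a positive interaction coefficient, the slope of similarity rises as the moderator rises above its mean, which is what "positively moderated" means in the results above.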

Robustness checks

This study employed alternative independent and dependent variables to verify the robustness of the results (see Table 8). For the main effect model, context-aware thematic similarity measured by the Sentence-BERT model was used as an alternative independent variable, addressing limitations inherent to the LDA approach. 44 The results remained consistent with the initial regression findings. Similarly, when the dependent variable was replaced with platform-calculated patient satisfaction scores (derived from patient ratings), the regression outcomes aligned with the prior results.

Robustness checks using alternative variable measurements.

Note: t statistics in parentheses; *p < 0.10, **p < 0.05, ***p < 0.001.

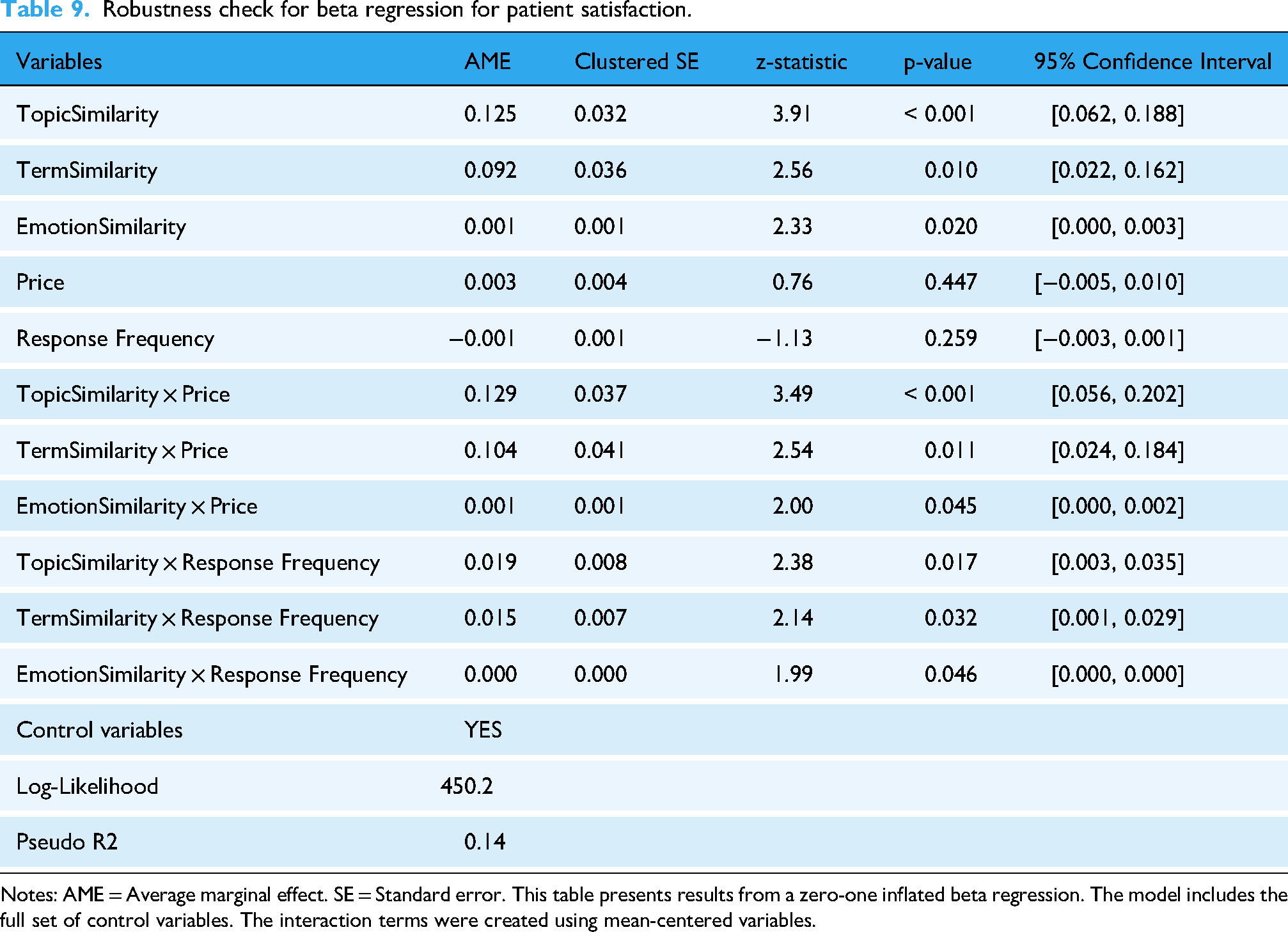

In addition, we re-estimated our models using a zero-one inflated beta regression. The results, presented in Table 9, report average marginal effects (AMEs) with standard errors clustered at the hospital level, and they corroborate our main findings. The AMEs indicate the change in the probability of patient satisfaction (on a 0 to 1 scale) for a one-unit increase in each predictor. As shown, the AMEs for TopicSimilarity (AME = 0.125, p < 0.001), TermSimilarity (AME = 0.092, p = 0.010), and EmotionSimilarity (AME = 0.001, p = 0.020) remain positive and statistically significant. The moderating effects of price and doctor engagement (response frequency) are also robust across all similarity dimensions. For example, the significant positive interaction TopicSimilarity × Price (AME = 0.129, p < 0.001) indicates that the positive effect of topic alignment on satisfaction is strengthened for higher-priced consultations, consistent with H5a. The consistency of these results with our primary OLS models underscores the reliability of our conclusions.

Robustness check for beta regression for patient satisfaction.

Notes: AME = Average marginal effect. SE = Standard error. This table presents results from a zero-one inflated beta regression. The model includes the full set of control variables. The interaction terms were created using mean-centered variables.

Discussion and conclusion

Result analysis

This study examines the mechanisms through which online doctor–patient communication quality influences patient choice and satisfaction, grounded in SET and information quality theory. The results demonstrate that three dimensions of communication quality (thematic similarity, terminological similarity, and emotional expression similarity) all exert significant positive effects on both patient choice and satisfaction. This indicates that both the relevance and comprehensibility of information in online medical consultations critically shape doctor outcomes. Furthermore, consultation service price and doctor engagement were found to positively moderate these effects, enhancing patients’ perceived communication quality.

First, thematic similarity reflects the alignment between doctors’ and patients’ discussion content regarding core topics. When doctors adhere to evidence-based guidelines by focusing on core clinical themes (e.g., symptom presentation), patients can articulate their conditions more precisely. This enables doctors to leverage clinical decision support systems for accurate diagnoses and personalized treatment plans—key factors in evidence-based medicine. 45 Such focused dialogue not only increases consultation volume by attracting more patients but also boosts satisfaction as patients perceive scientifically grounded care.

Second, terminological similarity also plays a crucial role in online doctor–patient communication. Medical jargon often creates communication barriers. Doctors who adapt their language to patients’ literacy levels (e.g., explaining “myocardial infarction” as “heart attack”) improve treatment adherence through better understanding. This linguistic alignment enhances medication compliance and follow-up rates while generating positive word-of-mouth—both driving consultation demand and satisfaction.

Finally, emotional expression similarity plays a unique and critical role in online doctor–patient communication. Patients experiencing illness endure physical-psychological distress. Doctors demonstrating empathic alignment (e.g., acknowledging anxiety about symptom recurrence) foster trust through emotionally attuned responses. This therapeutic connection encourages patients to disclose critical health details, improving diagnostic accuracy while positively influencing both consultation volume and satisfaction metrics.

Additionally, this study reveals the moderating effects of online consultation prices and doctor engagement. Regarding consultation prices, higher prices signal higher quality, enhancing patients’ perception of communication quality. For doctor engagement, when doctors respond more frequently, patients perceive this as a clear indicator of the doctor's high involvement in their treatment process. Frequent responses imply the doctor's close attention to their condition, subconsciously building a stronger connection between doctor and patient and significantly enhancing patients’ trust perception toward the doctor.

Theoretical contributions

This study makes the following contributions.

First, this research comprehensively considers both thematic and linguistic style dimensions, using text similarity to measure online doctor–patient communication quality, thereby addressing both the relevance and comprehensibility of information in medical communication. This approach enriches theoretical research on online doctor–patient interaction and communication quality quantification in digital health platforms. When measuring online communication quality, this study focuses on interactive texts from both doctors and patients, employing a combination of supervised and unsupervised learning algorithms to quantify quality across two dimensions: thematic content and linguistic style (including terminological usage and emotional expression). By calculating thematic similarity and linguistic style similarity, we effectively capture the essence of online doctor–patient communication quality. Utilizing natural language processing methods, this study analyzes unstructured Q&A data from online consultations, expanding the methodological approaches for measuring communication quality and addressing the excessive subjectivity prevalent in prior research.

Second, grounded in SET, this study constructs a theoretical model examining the impact of online doctor–patient communication quality on doctor performance, confirming both the main effects and the moderating roles of price and doctor engagement. Existing studies predominantly investigate the effects of communication quality from the patient perspective, whereas this research shifts focus to doctor outcomes. By exploring how communication quality influences doctor performance, this study provides actionable recommendations for both clinicians and platform development.

Practical contributions

Our study offers the following practical contributions. For doctors, the first recommendation is to review the patient's medical history before each consultation to identify priority issues, so that the discussion concentrates on disease-critical themes. For example, with gynecologic cancer patients, doctors can focus on key topics such as tumor staging, treatment options (e.g., surgery vs. chemotherapy), and symptom management (e.g., pelvic pain or abnormal bleeding). This strengthens topic similarity, improves clinical relevance, and enhances both consultation efficiency and patient satisfaction.

Second, optimize terminological expression. Regularly train in plain-language communication to adapt complex oncology terminology into patient-friendly explanations. Borrow techniques from health literacy materials to simplify concepts. For instance, when explaining BRCA gene mutations to ovarian cancer patients, use analogies: “BRCA genes act like spellcheckers for cell growth—when they’re faulty, errors accumulate, increasing cancer risk.” This elevates terminology similarity and ensures information accessibility.

Third, emphasize emotional expression training. Attend workshops on empathetic communication to master active listening and distress-alleviating responses. When patients express fears about prognosis, validate emotions—e.g., “I hear your concerns about recurrence. Let's discuss monitoring plans to address this together.” Such responses promote emotional expression similarity, build trust, and improve satisfaction scores among cancer patients.

For OHC platforms, the first recommendation is to optimize pricing strategies. Platforms could implement a tiered pricing system to guide doctors’ price-setting practices, accounting for disease complexity and doctor qualifications. For common conditions, platforms could offer baseline-priced services; for complex cases, premium pricing should correspond to in-depth consultations with specialists. Each tier must transparently outline service inclusions (e.g., detailed case analyses or multiple follow-ups) to align with patient expectations for communication quality. In addition, platforms could regularly analyze patient feedback and market data to adjust prices dynamically according to doctors’ communication quality ratings and patient preferences. For doctors who consistently receive high satisfaction scores, prices may be moderately increased.

Second, platforms should enhance doctor incentive mechanisms. For example, platforms could establish a doctor response-tracking system that rewards timely and frequent engagement, such as ranking doctors within the same specialty by response rate. This motivates participation and amplifies the positive impact of communication quality on performance metrics.

Limitations and future directions

This study has several limitations that suggest avenues for future research. First, the exclusive reliance on data from a single platform may constrain the generalizability of our findings. Future research should directly test the model's applicability across diverse platforms, specialties, and national contexts. Second, the measurement of online doctor–patient communication quality could be enhanced by employing more sophisticated algorithms, such as multimodal fusion techniques. Third, our measure of terminological similarity focuses on lexical alignment as a precursor to shared understanding. It does not capture the pedagogical quality of a doctor's language, such as the skillful simplification of complex terms. Future research could valuably incorporate readability metrics to explore this important aspect of communication quality. Finally, while this study utilized cross-sectional data, longitudinal panel data could provide more robust evidence in future investigations.

Conclusion

This study empirically validates that the quality of online doctor–patient communication, measured through thematic, terminological, and emotional similarity, is a critical determinant of patient choice and satisfaction. These insights extend the application of information quality and social exchange theories to the digital health context. For practitioners, our results underscore the necessity for platforms to implement targeted training and tools that enhance doctors’ communicative competence, ultimately fostering more effective and satisfactory online medical interactions.

Footnotes

Acknowledgements

This study was supported by grants from the National Natural Science Foundation of China (No. 72404094).

Ethical approval

No clinical data, human specimens, or laboratory animals were involved in this study. All information used in this study was obtained from publicly released online health communities, and none of the data involved personal privacy. In addition, the study did not involve any interaction with users; therefore, no ethics review was required.

Author contributions

Ping Li: Conceptualization, Methodology, Data Curation, Formal Analysis, Writing - Original Draft.

Yilan Hu: Conceptualization, Resources, Project Administration, Writing - Review & Editing.

All authors have read and agreed to the published version of the manuscript.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China (grant number 72404094).

Data availability

The data that support the findings of this study are available on request from the corresponding author.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Guarantor

**.

Contributorship

All authors were involved in data collection, data analysis, and writing the manuscript. All authors reviewed and edited the manuscript and approved the final version.