Abstract

Objectives

This study aims to address a current research gap: the lack of a comprehensive systematic mapping study (SMS) on the effectiveness of artificial intelligence (AI) machine learning algorithms in the diagnosis of speech and language disorders (SLDs), and on the extent to which such algorithms can automate this diagnostic process.

Methods

An SMS was conducted following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines; 19,774 research papers were screened, resulting in 70 studies that met purpose-designed inclusion and exclusion criteria.

Results

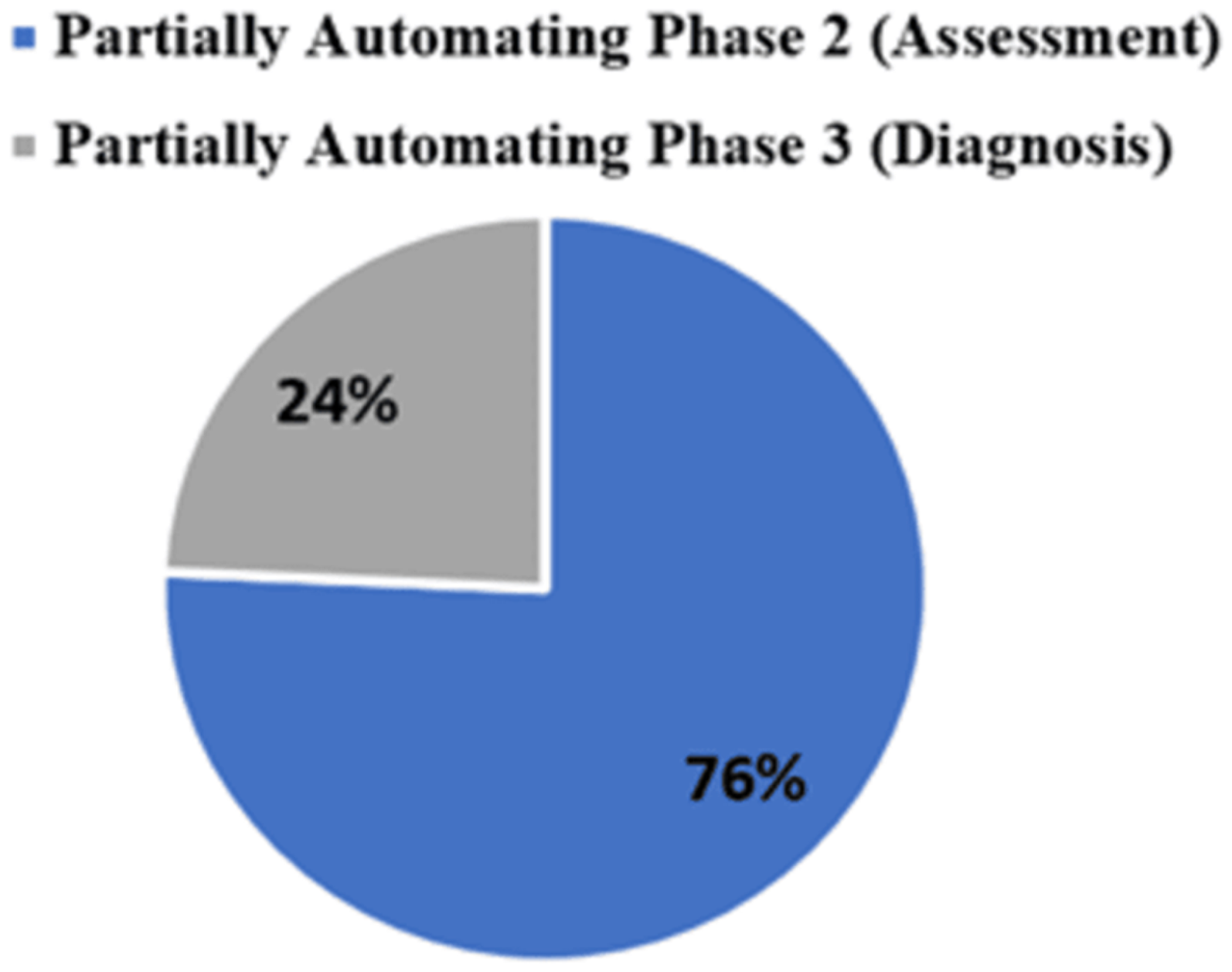

The findings revealed multiple research gaps, including a substantial divide in the application of AI machine learning algorithms between speech disorders and language disorders, which account for 91.43% and 8.57% of the studies, respectively. This divide is further exacerbated by the absence of AI machine learning algorithms for diagnosing prevalent language disorders, such as developmental language disorder in children. Furthermore, most AI machine learning algorithms for diagnosing SLDs focus on binary classification, for example, healthy versus pathological voices, rather than providing detailed diagnostics such as the impaired aspects and the severity of SLDs in context. Finally, most AI machine learning algorithms partially automate only the assessment phase of the SLD diagnostic process (76%), whereas fewer extend partial automation to the diagnosis determination phase (24%).

Conclusion

The effectiveness of AI machine learning algorithms in automating SLD diagnosis cannot be established without larger population datasets. This highlights a research gap in developing AI models that automate all four phases of the SLD diagnostic process and link them to treatment protocols in clinical settings.

Keywords

Introduction

Speech and language disorders (SLDs) significantly affect a wide range of populations, impairing their communication abilities and leading to long-term difficulties in academic performance, social well-being, and mental health, thus reducing their quality of life. 1 According to the National Institute on Deafness and Other Communication Disorders, developmental language disorder (also known as specific language impairment) has a prevalence of 7% among children, while speech sound disorders (articulation disorders or phonological disorders) have a prevalence of 8–9% in young children. 2

An informative assessment of SLDs that yields a highly accurate diagnosis should adhere to five key principles: it should be valid, reliable, tailored to the individual patient, based on a variety of assessment modalities, and thorough. Clinicians usually combine several methods to assess these disorders. Norm-referenced tests are standardized tests that allow the clinician to compare the patient's performance with that of a larger normative group. Criterion-referenced tests reveal what a patient can and cannot do relative to a predefined criterion, whereas authentic assessment (though similar to criterion-referenced testing) evaluates these disorders in context. Although these methods are valuable for the diagnosis of SLDs, they have limitations: norm-referenced and criterion-referenced tests lack individualization, and such decontextualized testing is not representative of real-life situations, as it evaluates isolated skills without taking other contributing factors into account. Authentic assessment, in turn, can lack objectivity, reliability, efficiency, and validity, and may require extensive planning and experience. 3

Given these limitations, there is a growing need to develop diagnostic approaches that address them and improve the efficiency, reliability, and validity of the diagnostic process for SLDs. One response to this need is the use of artificial intelligence (AI) techniques, which have been integrated into the diagnosis of SLDs for almost two decades. AI techniques have been increasingly used across healthcare domains, including medical imaging, diagnostic services, virtual patient care, medical search and drug discovery, and patient rehabilitation. 4 Along the speech and language patient journey, AI has been applied in particular by incorporating automatic speech recognition systems into augmentative and alternative communication devices, and in the evaluation of voice and speech problems in patients with head and neck cancer.5,6

However, several questions remain unanswered regarding the use of AI in diagnosing SLDs, including which disorders have been assessed and diagnosed using AI, which AI algorithms and techniques have been employed in the diagnostic process, and how well these algorithms and techniques perform.

Additionally, the current literature lacks a comprehensive systematic mapping study (SMS) of the effectiveness of AI machine learning algorithms in the diagnosis of SLDs and of the extent to which such algorithms can automate this diagnostic process. The closest related publication, Brahmi et al., 7 is one of the 70 publications included in this SMS, as discussed in the “Discussion” section. Although that study identified speech disorders investigated in the context of diagnosis and treatment (combined), it included conditions that are not classified as speech disorders, such as Parkinson's disease, 8 facial palsy, 9 dysphagia, 10 and aphasia. 11 In addition, Brahmi et al. did not map AI machine learning algorithms to their role in the diagnosis, treatment, or combined diagnosis and treatment stages, but described them in general terms. Their study also does not consider the extent to which the diagnosis of speech disorders has been automated. Finally, it does not include a systematic review of the role of AI machine learning algorithms in the diagnosis of language disorders.

Therefore, this SMS aims to answer these questions and thus to address the research gap pertaining to the use of AI in the diagnosis of SLDs through a formal methodological review. This study reports on the types of SLDs that have been assessed and diagnosed using AI and machine learning algorithms, and on the effectiveness of these algorithms in the diagnosis of SLDs. Finally, it evaluates the extent to which AI and machine learning algorithms have automated the task of diagnosing SLDs.

To achieve the aim and objectives of the present study, a systematic mapping literature review was conducted on the use and effectiveness of AI in the diagnosis of SLDs, in accordance with the guidelines of the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement for systematic reviews. 12

Background

Speech disorder, or speech impairment, is a condition in which a person has difficulty producing sounds, which affects communication with others. 13

Speech disorders include the following:

Speech sound disorders: These are disorders that interfere with the ability to produce sounds clearly. Their assessment relies on guiding the client to say a list of words while the speech-language pathologist examines the client's oral production and determines whether there are any errors in the production of different speech sounds. The decision depends heavily on the therapist's experience, making the diagnostic process highly subjective. 14

Speech sound disorders include the following categories: 13

Articulation disorder: Difficulty producing speech sounds because of inaccurate speed, pressure, placement, timing, or flow of movement of the tongue, lips, or throat.15,16 The diagnosis of articulation disorder is, however, very difficult and relies on the expertise of the speech-language pathologist. 16

Phonological disorder: Rule-based errors that affect multiple speech sounds within the same class. 17

Neuromotor speech disorders/motor speech disorders: Disorders characterized by difficulty moving the muscles responsible for speech production because of muscle weakness or reduced coordination. 18

These disorders could include the following:

Apraxia of speech/verbal apraxia: A disorder characterized by difficulty in the motor planning of speech production in the absence of muscle weakness. It may be developmental, known as childhood apraxia of speech, or acquired.3,18

Dysarthria: A disorder characterized by difficulty in speech production due to muscle weakness. Dysarthria has multiple types according to the site of lesion, including flaccid, spastic, unilateral upper motor neuron, hypokinetic, hyperkinetic, ataxic, and mixed dysarthria.3,18 However, the examination of this disorder is subjective and based on the therapist's perceptual analysis capabilities and experience, so diagnostic results can be inconsistent and unquantifiable. 19

Fluency disorders: Interruptions in the flow of speech that may include atypical disfluencies, atypical rhythm, or atypical rate of speech. 20

Fluency disorders include the following:

Stuttering/stammering: A disorder that causes interruptions in the flow of speech through certain types of disfluencies, which may include repetitions, prolongations, or blocks. 20

Stuttering could be developmental, acquired neurogenic, or acquired psychogenic. 21

Traditional assessment of stuttering depends on counting speech disfluencies manually; this method is arbitrary, inconsistent, time-consuming, and error-prone. 22

Cluttering: A disorder characterized by an abnormally rapid speech rate, excessive disfluencies, reduced awareness of the fluency problem, and other characteristics that result in reduced speech intelligibility and clarity. 20

Voice disorders: Disorders characterized by changes in voice quality, pitch, and loudness that are not appropriate for the individual's age, gender, cultural background, or geographical location. 23

These disorders include the following types:

Organic voice disorder: Voice disorders that result from physical or structural changes in the vocal tract. 24

Neurogenic voice disorder: Voice disorders that result from problems within the central or peripheral nervous system innervation to the larynx. 24

Functional voice disorder: Voice disorders that result from improper or insufficient use of the vocal tract in the absence of any structural or neurological abnormality in the vocal tract. These disorders include muscle tension dysphonia and psychogenic voice disorder.24,25

However, automated diagnostic methods are needed for voice disorders, as current diagnostic procedures lack standardized methods and equipment. 26

Language disorders are disorders manifested by impaired comprehension and/or use of spoken language, written language, or other language modalities. 27

Language disorders include the following:

Spoken language disorders: Disorders characterized by persistent difficulties in the acquisition and use of speaking and listening skills in any of the following language aspects: phonology, morphology, semantics, syntax, and pragmatics. 28

These disorders include the following types:

Developmental language disorders: Also known as specific language impairment, this is a spoken language disorder that is a primary disability, with no known cause or associated medical condition, and that persists into school age and beyond. 28

Language disorders associated with [condition]: A spoken language disorder that is categorized as secondary to another condition or diagnosis, such as autism. 28

Acquired neurogenic language disorder/aphasia: A disorder resulting from damage to brain areas associated with language comprehension or production, leading to a loss of language function. Aphasia has several types according to the damaged area of the brain, including Broca's aphasia, transcortical motor aphasia, isolation aphasia, global aphasia, Wernicke's aphasia, conduction aphasia, transcortical sensory aphasia, and anomic aphasia. 3

However, accurately diagnosing aphasia is difficult and an “error prone medical task” due to linguistic ambiguity and uncertainty, the diversity of and disagreement in the description of aphasic symptoms, and the large number of test items. 29

Overview of diagnosing SLDs

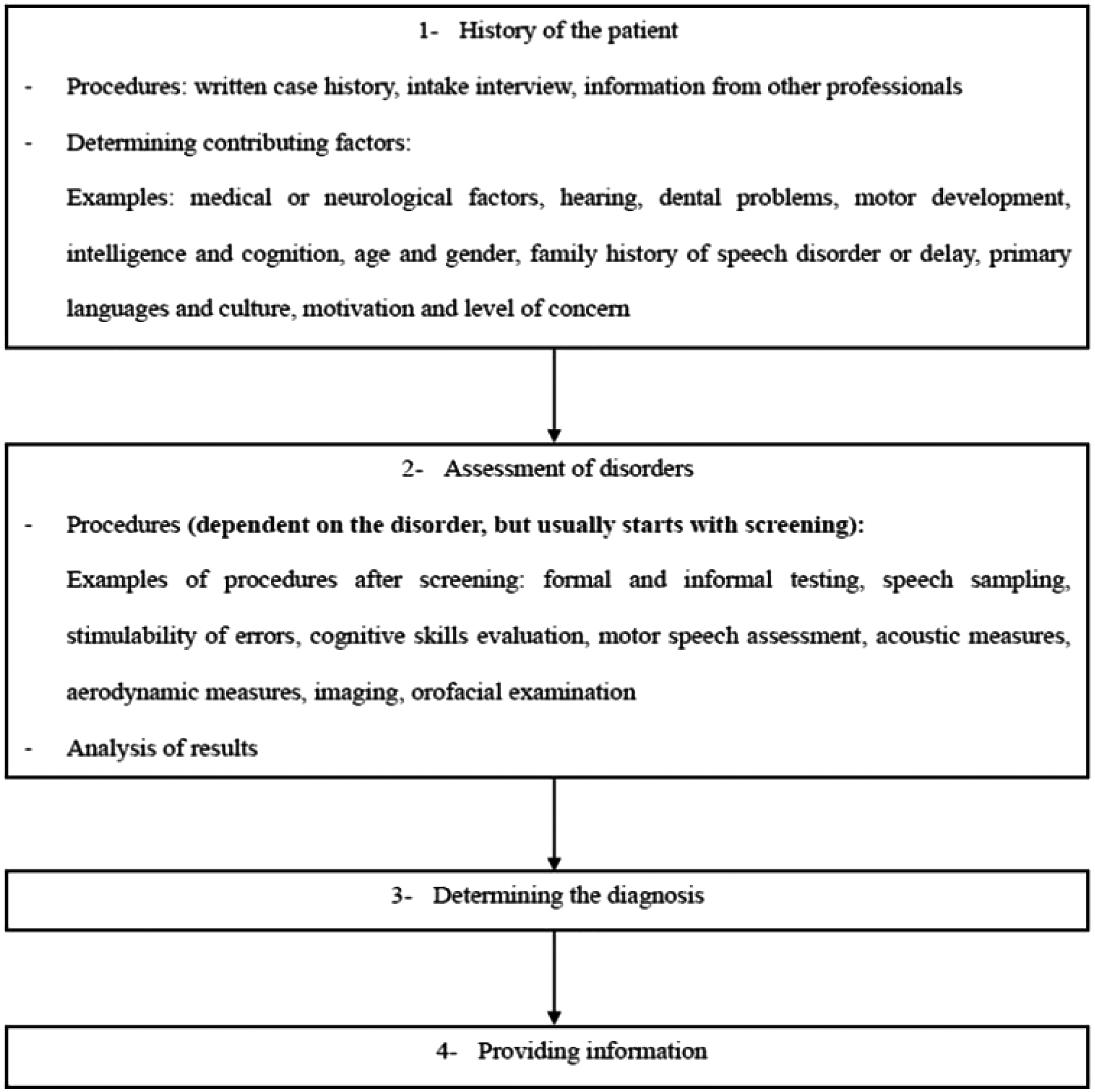

The general diagnostic process of SLDs goes through four primary phases as represented in Figure 1:

Patient history intake: The first phase of the diagnostic process involves collecting comprehensive background information about the patient through multiple means, such as written case history forms, interviews with the patient or their caregiver, or information from other professionals involved in the patient's care journey. This phase also helps professionals identify potential contributing factors to the disorder, such as neurological factors, medical conditions, age and gender, and psychological factors. 3

Assessment of disorders: This phase usually starts with a screening procedure to detect the presence or absence of a speech or language disorder. After screening, professionals perform multiple formal and informal diagnostic tests specialized to the suspected disorder. This phase also includes analyzing the test results to determine the features and severity of the disorder and the patient's stimulability, enabling professionals to fully understand the patient's condition. 3

Diagnosis determination: After analyzing the assessment results, professionals interpret the findings to decide the most relevant diagnosis. 3

Providing information: After determining the diagnosis, professionals convey the findings to the patient or their caregiver in different ways, such as an information-giving session or a detailed assessment report that documents the whole diagnostic process and includes recommendations for the patient's care and management. 3

General overview of the diagnostic process of speech and language disorders.

Methods

This study employs an SMS to explore the effectiveness of AI in the diagnosis of SLDs. As the primary objective of this paper is to address evidence pertaining to a specific research topic rather than to answer detailed research questions, a systematic mapping methodology was applied rather than a systematic literature review (SLR).

Systematic mapping studies are a broader form of SLR that use a comprehensive collection of primary studies to pinpoint existing gaps within specific research areas and to identify potential trends for further investigation. 30

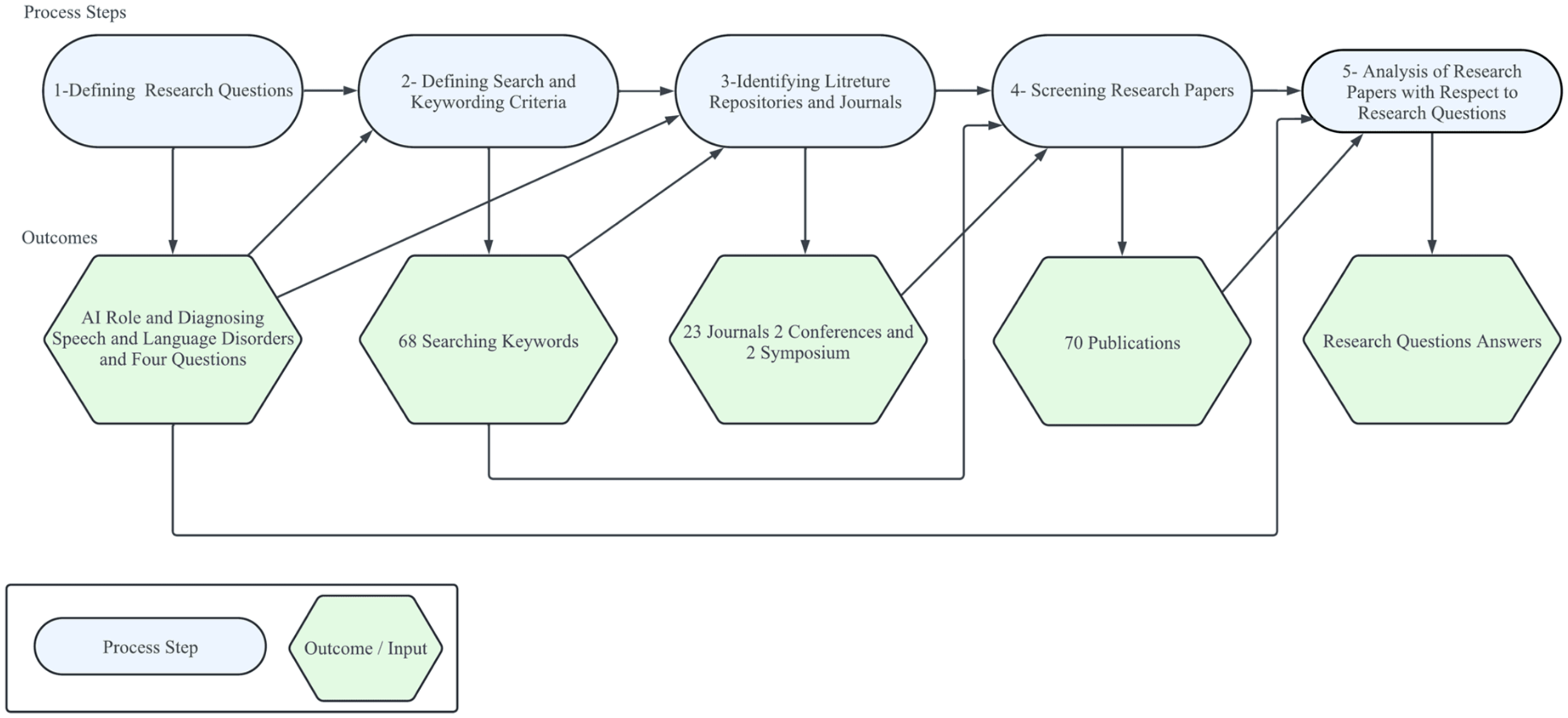

This SMS adheres to the guidelines outlined by Petersen et al., 31 which encompass five steps, as depicted in Figure 2.

The systematic mapping research process applied to artificial intelligence (AI) in the diagnosis of speech and language disorders.

Defining the research questions

This SMS presents a detailed investigation into the use and effectiveness of AI methods (and algorithms) in the diagnosis of SLDs. It also explores the extent to which the reported AI machine learning algorithms have automated the process of diagnosing SLDs, as well as the effectiveness of such automation. Accordingly, the following research questions were formulated to guide the adapted SMS in the context of this aim:

RQ1: What are the different types of SLDs that have been assessed and diagnosed using AI machine learning algorithms?

RQ2: What are the AI and machine learning algorithms that have been utilized in the diagnosis of SLDs?

RQ3: To what extent have the AI and machine learning algorithms automated the task of diagnosing SLDs?

RQ4: How effective are the AI machine learning algorithms (reported in answering RQ2) in the diagnosis of SLDs?

Defining search and keywording criteria

A literature search was conducted to gather all studies relevant to the role of AI in the diagnosis of SLDs. Since the topic of this paper covers the three fields of “speech disorders,” “language disorders,” and “artificial intelligence,” the derived keywords have been semantically articulated as shown in Table 1. The Boolean operators “AND” and “OR” were used in forming the search strings, for example: “Speech Disorder” AND (“Artificial Intelligence” OR “Machine Learning” OR “Deep Learning” OR “Neural Networks” OR “Convolutional Neural Networks” OR “Recurrent Neural Networks” OR “Clustering Algorithms” OR “Support Vector Machine” OR “Automatic Speech Recognition System” OR “Natural Language Processing” OR Automatic OR Automated).

Keywords used in the application of AI in speech and language disorders diagnosis in systematic mapping study.
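The Boolean search strategy described above can be sketched in Python as a small query builder. This is a minimal illustration, not the authors' tooling: the term lists reproduce only the example string given in the text (the full keyword set is in Table 1), and the helper names `quote`, `or_group`, and `build_query` are hypothetical. Multi-word phrases are quoted and single words are left bare, matching the example.

```python
# Minimal sketch of the Boolean search-string construction; helper names are hypothetical.
# Only the terms from the example string in the text are listed; Table 1 holds the full set.
DISORDER_TERMS = ["Speech Disorder"]
AI_TERMS = [
    "Artificial Intelligence", "Machine Learning", "Deep Learning", "Neural Networks",
    "Convolutional Neural Networks", "Recurrent Neural Networks", "Clustering Algorithms",
    "Support Vector Machine", "Automatic Speech Recognition System",
    "Natural Language Processing", "Automatic", "Automated",
]


def quote(term: str) -> str:
    """Wrap multi-word phrases in double quotes; leave single words bare."""
    return f'"{term}"' if " " in term else term


def or_group(terms: list) -> str:
    """Join synonyms with OR, adding parentheses only when the group has several terms."""
    joined = " OR ".join(quote(t) for t in terms)
    return f"({joined})" if len(terms) > 1 else joined


def build_query(*groups: list) -> str:
    """Combine one OR-group per field (e.g. disorder, AI technique) with AND."""
    return " AND ".join(or_group(g) for g in groups)


if __name__ == "__main__":
    print(build_query(DISORDER_TERMS, AI_TERMS))
```

A query for the language-disorder field could be produced the same way by swapping the first group for a hypothetical `["Language Disorder"]` list.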

Identifying the research literature repositories and associated research papers

The electronic search space for this SMS was determined by identifying the most prominent repositories (or databases) covering the diverse interests in the field of applying AI to the diagnosis of SLDs. The literature search space included the following Scopus- and CrossRef-indexed research repositories:

Mendeley: https://www.mendeley.com/
IEEE: https://www.ieee.or
ScienceDirect: https://www.sciencedirect.com/

Furthermore, only journal papers indexed in Scopus and identified as relevant to the domain of AI in the diagnosis of SLDs were included. These journals publish in English, and the search covered the period 2000–2024, as the application of AI in SLD assessment appears to have first been reported in the literature in 2000. 32

The following journals were included in the systematic mapping literature search:

Journal of Communication Disorders
Journal of Biomedical Informatics
International Journal of Speech-Language Pathology
International Journal of Medical Informatics
Computers in Biology and Medicine
Diagnostics
Journal of Intelligent Systems
Neurocomputing
Computer Speech and Language
IEEE Access
International Journal of Speech Technology
Speech Communication
The Journal of the Acoustical Society of America
International Journal of Advanced Computer Science and Applications
Digital Signal Processing: A Review Journal
Journal of Voice
Journal of Signal and Information Processing
International Journal of Electrical and Computer Engineering
Engineering Applications of Artificial Intelligence
IEEE Transactions on Audio, Speech and Language Processing
Psychology Research and Behavior Management
IEEE/ACM Transactions on Audio, Speech, and Language Processing
Journal of Speech, Language, and Hearing Research (JSLHR)

Screening research papers

The selection of publications for the SMS followed the PRISMA protocol, as depicted in Figure 3. After the candidate papers were identified in the selected databases, they were exported to Rayyan, a free web application designed to help researchers with the screening process for literature review projects. 33 Duplicate papers were removed automatically using Rayyan before screening began. The remaining papers were then screened manually by title, abstract, and keywords against the inclusion and exclusion criteria set out below. Finally, the obtained papers were screened against the journals included in this SMS, yielding a final set of 70 highly relevant papers from different databases and journals.

Protocol-based paper selection process.
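Rayyan performed the duplicate removal in this study. For readers reproducing the step without Rayyan, the sketch below shows one simple approach, assuming each exported record carries a title and, optionally, a DOI. The `Record` type and the matching rule are illustrative assumptions, not Rayyan's algorithm: a record is treated as a duplicate if it shares a DOI or a normalized title with an earlier record.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class Record:
    """Hypothetical exported search record."""
    title: str
    doi: Optional[str] = None
    source: str = ""  # e.g. "Mendeley", "IEEE", "ScienceDirect"


def normalize_title(title: str) -> str:
    """Lowercase and collapse punctuation/whitespace so trivially different titles match."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def dedup_keys(rec: Record) -> Set[str]:
    """Keys under which a record may be recognized as a duplicate."""
    keys = {"title:" + normalize_title(rec.title)}
    if rec.doi:
        keys.add("doi:" + rec.doi.strip().lower())
    return keys


def deduplicate(records: List[Record]) -> List[Record]:
    """Keep the first occurrence; drop later records sharing a DOI or normalized title."""
    seen: Set[str] = set()
    unique: List[Record] = []
    for rec in records:
        keys = dedup_keys(rec)
        if keys & seen:  # any shared key marks a duplicate
            continue
        seen |= keys
        unique.append(rec)
    return unique
```

Title-only matching can merge distinct papers that happen to share a title, so flagged pairs are best confirmed manually, as reviewers would do in Rayyan.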

Inclusion and exclusion criteria

Inclusion criteria

Inclusion criterion 1:

Articles published in journals, conferences, or symposiums

Inclusion criterion 2:

Articles that focus on incorporating AI and machine learning algorithms and approaches into SLD assessment and diagnosis since the early 2000s, when natural language processing and speech signal processing started to aid cognitive-linguistic assessments. 34

Exclusion criteria

Exclusion criterion 1:

Publications that focus only on the application of AI and machine learning algorithms in SLDs therapy and rehabilitation.

Exclusion criterion 2:

Publications that focus on diseases not related to SLDs.

Exclusion criterion 3:

Publications with private access

Exclusion criterion 4:

Publications not published in the English language.
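The inclusion and exclusion criteria can be expressed as explicit checks applied to each record's screening judgements, which also yields the exclusion reasons needed for a PRISMA flow diagram. This is a sketch under assumptions: the `Candidate` fields are hypothetical reviewer judgements made from the title, abstract, and keywords, and the 2000–2024 window is taken from the search period stated above.

```python
from dataclasses import dataclass
from typing import List, Tuple

VENUES = {"journal", "conference", "symposium"}  # Inclusion criterion 1


@dataclass
class Candidate:
    """Hypothetical screening judgements for one paper."""
    venue_type: str
    year: int
    language: str
    open_access: bool
    ai_for_assessment_or_diagnosis: bool  # Inclusion criterion 2
    therapy_only: bool                    # Exclusion criterion 1
    about_sld: bool                       # Exclusion criterion 2 (applies when False)


def screen(c: Candidate) -> Tuple[bool, List[str]]:
    """Return (included?, reasons for exclusion); a paper is included only if no reason applies."""
    reasons: List[str] = []
    if c.venue_type not in VENUES:
        reasons.append("IC1: not a journal, conference, or symposium paper")
    if not (c.ai_for_assessment_or_diagnosis and 2000 <= c.year <= 2024):
        reasons.append("IC2: no AI-based SLD assessment/diagnosis in 2000-2024")
    if c.therapy_only:
        reasons.append("EC1: therapy/rehabilitation only")
    if not c.about_sld:
        reasons.append("EC2: disease not related to SLDs")
    if not c.open_access:
        reasons.append("EC3: private access")
    if c.language.lower() != "english":
        reasons.append("EC4: not in English")
    return (not reasons, reasons)
```

Recording every failed check, rather than stopping at the first, makes it easy to tally exclusion reasons per criterion.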

Data coding and synthesis

In this SMS, data coding and synthesis were performed by multiple reviewers using predesigned data coding templates that specified the variables to be extracted from the 70 included publications. The extracted outcomes are presented in Tables A1–A7 in Appendix A. Reviewers coded the data independently and then jointly reviewed the outcomes. To ensure consistency, reviewers regularly cross-checked their work, and any discrepancies were discussed and resolved through consensus meetings.
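The cross-checking step can be supported by a simple comparison of two reviewers' coding sheets that lists every disagreement for discussion at a consensus meeting. A minimal sketch, assuming each sheet maps a paper identifier to the coded variables; the variable names used in the usage below (`disorder`, `automation_phase`) are hypothetical stand-ins for those in the data coding templates.

```python
from typing import Dict, List, Optional, Tuple

Coding = Dict[str, Dict[str, str]]  # paper id -> {variable: coded value}


def find_discrepancies(
    coder_a: Coding, coder_b: Coding
) -> List[Tuple[str, str, Optional[str], Optional[str]]]:
    """List (paper, variable, value_a, value_b) wherever the coders disagree or one value is missing."""
    issues = []
    for paper in sorted(set(coder_a) | set(coder_b)):
        a, b = coder_a.get(paper, {}), coder_b.get(paper, {})
        for var in sorted(set(a) | set(b)):
            if a.get(var) != b.get(var):
                issues.append((paper, var, a.get(var), b.get(var)))
    return issues


if __name__ == "__main__":
    a = {"P01": {"disorder": "voice", "automation_phase": "assessment"}}
    b = {"P01": {"disorder": "voice", "automation_phase": "assessment+diagnosis"}}
    for row in find_discrepancies(a, b):
        print(row)  # each row becomes an agenda item for the consensus meeting
```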

Analysis of results

Overview of studies

A total of 50,696 papers were retrieved using the search keywords in the preselected repositories. After removing duplicates and applying the inclusion and exclusion criteria outlined in the “Screening research papers” section, 50,626 papers were excluded, leaving 70 papers in this systematic mapping review. Table 2 shows the number and percentage of publications per year of publication: publications on the role of AI in diagnosing SLDs have increased markedly since 2018, peaking in 2020 and 2022 with nine papers each. Figure 4 shows how the number of publications on this subject has risen and fallen over the years.

Number of publications in the role of artificial intelligence (AI) in diagnosing speech and language disorders per year.

Publication tendency of the role of AI in diagnosing speech and language disorders, sorted by year of publication.

Furthermore, Figure 5 shows the classification of the publications by source type: journals, conferences, and symposiums. Journals were the most common type, accounting for 50.00% of the publications, followed by conference publications at 47.14%, whereas symposium publications were the least common, accounting for 2.86%.

Types of publications included in the systematic mapping study.

Answering the research questions

RQ1: What are the different types of SLDs that have been assessed and diagnosed using AI and machine learning algorithms?

Table 3 presents the distribution of SLDs identified across the 70 publications included in this systematic mapping review, in which AI and machine learning algorithms were used to diagnose and assess these disorders. Voice disorders emerge as the predominant area, comprising 50.00% of the included publications (35 publications). Stuttering follows with 10 publications (14.29%), and dysarthria accounts for eight publications (11.43%). Aphasia and speech sound disorder each account for six publications (8.57% each). Articulation disorder and speech disorders in children with cleft lip and palate each account for two publications (2.86% each). Lastly, multiple speech disorders are addressed in a single publication (1.43%).

Number of publications per type of speech or language disorder.
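The percentages above can be recomputed from the per-disorder counts, which sum to the 70 included publications. The sketch below uses only counts stated in the text; treating aphasia as the sole language disorder follows the Background section and reproduces the 91.43% versus 8.57% split reported in the Abstract. The source-type counts (35, 33, 2) are inferred from the reported percentages of 70 and are an assumption.

```python
from typing import Dict, Set, Tuple

# Per-disorder publication counts as reported for RQ1 (total 70).
DISORDER_COUNTS = {
    "Voice disorders": 35,
    "Stuttering": 10,
    "Dysarthria": 8,
    "Aphasia": 6,
    "Speech sound disorder": 6,
    "Articulation disorder": 2,
    "Cleft lip and palate speech disorders": 2,
    "Multiple speech disorders": 1,
}
# Aphasia is the only language disorder among the included studies.
LANGUAGE_DISORDERS = {"Aphasia"}
# Source-type counts inferred from the reported percentages (assumption).
SOURCE_COUNTS = {"Journal": 35, "Conference": 33, "Symposium": 2}


def percent_shares(counts: Dict[str, int]) -> Dict[str, float]:
    """Share of each category as a percentage of the total, rounded to two decimals."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 2) for name, n in counts.items()}


def speech_vs_language(counts: Dict[str, int], language: Set[str]) -> Tuple[float, float]:
    """Percentages of speech-disorder and language-disorder studies."""
    total = sum(counts.values())
    lang = sum(n for name, n in counts.items() if name in language)
    return round(100 * (total - lang) / total, 2), round(100 * lang / total, 2)


if __name__ == "__main__":
    print(percent_shares(DISORDER_COUNTS))
    print(speech_vs_language(DISORDER_COUNTS, LANGUAGE_DISORDERS))
    print(percent_shares(SOURCE_COUNTS))
```

Percentages are rounded rather than truncated, so each set sums to 100% up to rounding.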

It is evident that AI and machine learning algorithms have been predominantly used in the assessment and diagnosis of speech disorders, particularly voice disorders, rather than language disorders. This may be attributed to the quantifiable, measurable acoustic changes caused by voice disorders, such as changes in intensity, pitch, jitter, and shimmer, which can be analyzed by AI machine learning algorithms to facilitate automated diagnosis. 98 Conversely, certain SLDs, such as apraxia of speech, spoken language disorders, cluttering, and phonological disorder, are absent from the 70 included publications. Their absence, together with the limited use of AI machine learning algorithms for the other disorders present in the included publications, may be attributed to the complexity of these disorders and possibly to current limitations of AI implementation: these disorders involve complex interactions between cognitive, motor, and linguistic processes, which makes identifying their diagnostic markers, and thus automating their diagnosis, more challenging. 99 Additionally, the symptoms of these disorders are highly variable and individual, making it more challenging to develop a generalizable AI model to diagnose them. 99
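To illustrate why voice disorders lend themselves to automated analysis, the sketch below computes two of the acoustic measures named above, local jitter and local shimmer, using their common definitions: the mean absolute difference between consecutive glottal cycles, divided by the mean over all cycles. It assumes the cycle periods and peak amplitudes have already been extracted by a pitch tracker, which is the harder step in practice; the function names are illustrative.

```python
from typing import Sequence


def _local_perturbation(values: Sequence[float]) -> float:
    """Mean absolute difference between consecutive values divided by the mean value."""
    if len(values) < 2:
        raise ValueError("need at least two cycles")
    diffs = [abs(a - b) for a, b in zip(values, values[1:])]
    mean_diff = sum(diffs) / len(diffs)
    mean_value = sum(values) / len(values)
    return mean_diff / mean_value


def local_jitter(periods_ms: Sequence[float]) -> float:
    """Cycle-to-cycle variation in period (pitch instability), as a fraction."""
    return _local_perturbation(periods_ms)


def local_shimmer(amplitudes: Sequence[float]) -> float:
    """Cycle-to-cycle variation in peak amplitude, as a fraction."""
    return _local_perturbation(amplitudes)


def mean_f0_hz(periods_ms: Sequence[float]) -> float:
    """Mean fundamental frequency (pitch) from cycle periods given in milliseconds."""
    return 1000.0 / (sum(periods_ms) / len(periods_ms))
```

A perfectly periodic voice gives zero jitter, while alternating 10 ms and 11 ms cycles give a jitter of about 9.5%; values like these become the input features for the classifiers discussed under RQ2.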

RQ2: What are the AI and machine learning algorithms that have been utilized in the diagnosis of SLDs?

AI machine learning algorithms can be classified by learning approach into two main categories: primary approaches and hybrid approaches. Primary approaches include supervised learning, which is used when the data consist of input variables paired with output target values; examples include support vector machines, decision trees, and random forests (RF). Such algorithms learn a function that maps inputs to outputs. Primary approaches also include unsupervised learning, which is used when only input data are available, without corresponding output values; examples include fuzzy rule-based approaches and k-means clustering. Such algorithms learn patterns in the data to characterize its structure. Finally, primary approaches include reinforcement learning, which is used when the aim is to make a series of decisions that lead to a final reward; examples include Q-learning and proximal policy optimization.

Hybrid approaches include three main learning approaches: (1) semisupervised learning, which combines supervised and unsupervised learning; (2) self-training learning, a form of unsupervised learning in which the training data are labeled automatically; and (3) self-taught learning, which is used when both labeled and unlabeled data are available but the unlabeled data do not belong to the same classes as the labeled data.100,101
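The difference between these learning approaches can be made concrete with a small pure-Python sketch on hypothetical two-dimensional voice features (for example, jitter and shimmer in percent). For brevity, a nearest-centroid classifier stands in for the supervised algorithms named above (it is not an SVM, decision tree, or RF), k-means illustrates unsupervised learning, and a simple pseudo-labeling loop illustrates self-training. All data, thresholds, and function names are illustrative assumptions.

```python
import math
import random
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, ...]


def centroid(points: Sequence[Point]) -> Point:
    """Coordinate-wise mean of a non-empty set of points."""
    return tuple(sum(p[i] for p in points) / len(points) for i in range(len(points[0])))


# Supervised: inputs come with labels, so learn a mapping from input to label.
def fit_nearest_centroid(X: Sequence[Point], y: Sequence[str]) -> Dict[str, Point]:
    return {c: centroid([x for x, lab in zip(X, y) if lab == c]) for c in sorted(set(y))}


def predict(model: Dict[str, Point], x: Point) -> str:
    return min(model, key=lambda c: math.dist(model[c], x))


# Unsupervised: no labels; k-means alternates cluster assignment and centre updates.
def kmeans(X: List[Point], k: int, iters: int = 20, seed: int = 0) -> List[Point]:
    centres = random.Random(seed).sample(X, k)
    for _ in range(iters):
        clusters: List[List[Point]] = [[] for _ in range(k)]
        for x in X:
            nearest = min(range(k), key=lambda i: math.dist(centres[i], x))
            clusters[nearest].append(x)
        # Keep the previous centre if a cluster ends up empty.
        centres = [centroid(c) if c else centres[i] for i, c in enumerate(clusters)]
    return centres


def is_confident(model: Dict[str, Point], x: Point, ratio: float) -> bool:
    """Confident when the nearest centroid is at most `ratio` times as far as the runner-up."""
    d = sorted(math.dist(c, x) for c in model.values())
    return len(d) < 2 or d[0] <= ratio * d[1]


# Hybrid (self-training): fit on labeled data, label confident unlabeled points automatically, refit.
def self_train(X_lab: Sequence[Point], y_lab: Sequence[str], X_unlab: Sequence[Point],
               ratio: float = 0.5, rounds: int = 5) -> Tuple[Dict[str, Point], List[Point]]:
    X, y, pool = list(X_lab), list(y_lab), list(X_unlab)
    for _ in range(rounds):
        model = fit_nearest_centroid(X, y)
        confident = [x for x in pool if is_confident(model, x, ratio)]
        if not confident:
            break
        for x in confident:
            X.append(x)
            y.append(predict(model, x))  # pseudo-label assigned by the current model
        pool = [x for x in pool if x not in confident]
    return fit_nearest_centroid(X, y), pool  # final model and still-unlabeled points
```

Self-training leaves ambiguous points, such as one lying midway between the two groups, unlabeled rather than forcing a guess.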

Tables A1–A7 in Appendix A briefly describe the AI machine learning algorithms reported to have been implemented in the 70 publications identified by the SMS in relation to the diagnosis of SLDs.

The main AI machine learning algorithms used in the diagnosis of SLDs are briefly discussed below:

Voice disorders:

Support vector machines: This algorithm has been used to distinguish between normal (healthy) and pathological voices.44,45,47

Neural networks: Multilayer perceptron (MLP) neural networks have been widely used to recognize pathological voices and to classify speech as normal or pathological. 40

Additionally, feedforward convolutional networks have been used to classify specific types of voice disorders, such as hyperfunctional dysphonia, phonotrauma, vocal fold palsy/paralysis, and laryngeal neoplasm. 89

Hidden Markov models: These models have been used to recognize voice pathologies and are often combined with support vector regression or deep neural networks.42,55

Aphasia:

Fuzzy rule-based approaches: Fuzzy rule-based models, such as hierarchical fuzzy rule-based systems and adaptive neuro-fuzzy inference systems, have been applied in aphasia diagnosis to distinguish between different types of aphasia.38,29

Convolutional neural networks: Convolutional neural networks (CNNs) have been used to assess speech impairments in aphasic patients. 71

Gaussian mixture models and deep neural networks: These models have been utilized to recognize aphasic speech for clinical assessment purposes. 55

Dysarthria:

Recurrent neural networks: Long short-term memory (LSTM) networks, a type of recurrent neural network, have been widely applied to distinguish between healthy and dysarthric speech samples. 61

Hybrid models: Combinations of CNNs and LSTM networks have been used to detect dysarthric speech. 82

Stuttering:

Deep learning models: Bidirectional LSTM (bi-LSTM) networks and deep residual networks (ResNet) have been used to detect and classify stuttering events, such as repetitions and prolongations.22,67 Additionally, CNNs have been used to identify different types of stuttering events.86,93

Hybrid models: Pipelined deep learners, which combine convolutional autoencoders with dual classifiers, have been used to detect and classify stuttering events. 94

Speech sound disorders:

Support vector machine, RF, and gradient boosting: These algorithms have been used to detect correct and incorrect pronunciations in children's speech to diagnose speech sound disorders. 60

Siamese networks: These networks have been used to evaluate pronunciation correctness to diagnose speech sound disorders. 63

Articulation disorder:

Speech disorders in children with cleft lip and palate:

Support vector machine: This algorithm has been used to detect phonetic errors in children with cleft lip and palate. 43
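Several of the studies above use support vector machines to separate healthy from pathological voices. As a hedged illustration of the technique only, the sketch below trains a linear SVM with the Pegasos stochastic sub-gradient method in pure Python. The two features (for example, jitter and shimmer in percent), the toy data, and the hyperparameters are assumptions; the cited studies may have used different kernels, features, and libraries.

```python
import random
from typing import List, Sequence, Tuple


def _dot(w: Sequence[float], x: Sequence[float]) -> float:
    return sum(a * b for a, b in zip(w, x))


def train_linear_svm(X: Sequence[Sequence[float]], y: Sequence[int],
                     lam: float = 0.01, epochs: int = 1000, seed: int = 0) -> List[float]:
    """Pegasos: minimise lam/2*||w||^2 + mean hinge loss. Labels: +1 pathological, -1 healthy."""
    rng = random.Random(seed)
    # Append a constant 1.0 so the last weight acts as a (regularised) bias term.
    data: List[Tuple[List[float], int]] = [(list(x) + [1.0], label) for x, label in zip(X, y)]
    dim = len(data[0][0])
    w = [0.0] * dim
    avg = [0.0] * dim  # average of iterates over the second half of training, for stability
    total_steps, n_avg, t = epochs * len(data), 0, 0
    for _ in range(epochs):
        rng.shuffle(data)
        for x, label in data:
            t += 1
            eta = 1.0 / (lam * t)
            violated = label * _dot(w, x) < 1  # inside the margin or misclassified
            w = [(1.0 - eta * lam) * wi for wi in w]  # shrink: gradient of the regulariser
            if violated:
                w = [wi + eta * label * xi for wi, xi in zip(w, x)]  # hinge sub-gradient step
            if t > total_steps // 2:
                avg = [a + wi for a, wi in zip(avg, w)]
                n_avg += 1
    return [a / n_avg for a in avg]


def classify(w: Sequence[float], x: Sequence[float]) -> int:
    """Return +1 (pathological) or -1 (healthy) from the sign of the decision function."""
    return 1 if _dot(w, list(x) + [1.0]) >= 0 else -1
```

A linear decision boundary is the simplest SVM variant; kernel SVMs, as provided by standard machine learning libraries, would be the usual choice when features are not linearly separable.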

RQ3: To what extent have the AI and machine learning algorithms automated the task of the diagnosis of SLDs?

The investigation into the role of AI machine learning algorithms in diagnosing SLDs revealed that full automation of this process, in which no human involvement is required, has not yet been achieved. According to the review of the 70 publications included in this SMS, outlined in Tables A1–A7 in Appendix A, most of the applied AI machine learning algorithms have primarily automated the screening procedure, which is part of the second phase (assessment) of the diagnostic process, to discriminate between healthy and disordered individuals. Figure 6 shows the distribution of publications by the phase of the diagnostic process they automate. Around 76% of the included publications (53 publications) partially automated the second phase (assessment). This partial automation was achieved by automating the screening procedure, the assessment procedure itself, or the analysis of the patients' responses. Fully automating the second phase would further facilitate the diagnostic process by expediting assessment procedures and saving time in analyzing patient responses.

Figure 6. Distribution of publications in relation to the automation phase of the diagnostic process of speech and language disorders.

Around 24% of the included publications (17 publications) partially automated the second phase (assessment) together with part of the third phase (diagnosis determination). Partial automation of the third phase has been achieved for certain disorders. For voice disorders, AI machine learning algorithms automatically classify vocal pathologies into subtypes such as vocal fold cysts, unilateral vocal fold paralysis, and vocal fold polyps.26 Similarly, for aphasia, AI machine learning algorithms have been reported to categorize primary progressive aphasia into two subtypes: semantic dementia (SD) and progressive nonfluent aphasia (PNFA).50 Full automation of the third phase, however, would reduce human bias and variability in decision-making, improving the consistency and objectivity of the diagnostic process.

Furthermore, this SMS revealed that none of the 70 included publications automated the first phase (patient history) or the fourth phase (providing information) of the SLD diagnostic process. Yet automating the first phase would contribute significantly to the diagnostic process by eliminating manual data entry and processing, which would save time and reduce potential documentation errors in this phase. Likewise, fully automating the fourth phase would optimize time management by automatically generating patient assessment reports and treatment plans, enabling the diagnostic results to be documented and communicated immediately to patients and their caregivers.
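As a quick arithmetic check, the phase percentages reported above follow from the publication counts out of the 70 included publications (rounded to two decimal places):

```latex
\begin{aligned}
\text{Phase 2 (assessment) only:}\quad & \frac{53}{70} \approx 75.71\% \approx 76\%\\
\text{Phase 2 plus part of phase 3:}\quad & \frac{17}{70} \approx 24.29\% \approx 24\%\\
\text{Phase 1 or phase 4:}\quad & \frac{0}{70} = 0\%\\
\text{Check:}\quad & 53 + 17 = 70
\end{aligned}
```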

RQ4: How effective are AI and machine learning algorithms in the diagnosis of SLDs?

Tables A1–A7 in Appendix A report the effectiveness of the AI machine learning algorithms used for diagnosing SLDs in the 70 publications included in this SMS. Effectiveness was assessed using common performance metrics for AI algorithms:

Accuracy: The proportion of all cases, healthy and disordered, that the AI machine learning algorithm classifies correctly, calculated by dividing the sum of true positives and true negatives by the total number of cases.102

Sensitivity: The ability of the AI machine learning algorithm to correctly identify disordered patients, calculated by dividing the true positives by the sum of true positives and false negatives.102

Specificity: The ability of the AI machine learning algorithm to correctly identify healthy cases, calculated by dividing the true negatives by the sum of true negatives and false positives.102
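The three definitions above can be expressed directly in code. The following is a minimal Python sketch, not taken from any of the reviewed studies: the `ConfusionCounts` type and helper names are hypothetical, labels are assumed to be 0 for healthy and 1 for disordered, and the screening data are invented for illustration. The usage example shows why accuracy alone can mislead on imbalanced data: a model that labels every case as healthy reaches 90% accuracy with 0% sensitivity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Binary screening outcomes, where "positive" means "disordered"."""
    tp: int  # disordered cases correctly flagged as disordered
    tn: int  # healthy cases correctly flagged as healthy
    fp: int  # healthy cases wrongly flagged as disordered
    fn: int  # disordered cases wrongly flagged as healthy


def counts_from_labels(y_true: list[int], y_pred: list[int]) -> ConfusionCounts:
    """Tally the confusion matrix from 0/1 labels (1 = disordered)."""
    pairs = list(zip(y_true, y_pred))
    return ConfusionCounts(
        tp=sum(1 for t, p in pairs if t == 1 and p == 1),
        tn=sum(1 for t, p in pairs if t == 0 and p == 0),
        fp=sum(1 for t, p in pairs if t == 0 and p == 1),
        fn=sum(1 for t, p in pairs if t == 1 and p == 0),
    )


def accuracy(c: ConfusionCounts) -> float:
    # (TP + TN) / total number of cases
    return (c.tp + c.tn) / (c.tp + c.tn + c.fp + c.fn)


def sensitivity(c: ConfusionCounts) -> float:
    # TP / (TP + FN); raises ZeroDivisionError if there are no disordered cases
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    # TN / (TN + FP); raises ZeroDivisionError if there are no healthy cases
    return c.tn / (c.tn + c.fp)


if __name__ == "__main__":
    # Hypothetical imbalanced screening set: 90 healthy and 10 disordered cases,
    # scored by a degenerate model that labels every case as healthy.
    y_true = [0] * 90 + [1] * 10
    y_pred = [0] * 100
    c = counts_from_labels(y_true, y_pred)
    print(f"accuracy={accuracy(c):.2f} "
          f"sensitivity={sensitivity(c):.2f} "
          f"specificity={specificity(c):.2f}")
    # prints: accuracy=0.90 sensitivity=0.00 specificity=1.00
```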

However, as shown in Tables A1–A7 in Appendix A, many of the 70 included publications did not report the performance of their AI machine learning algorithms using accuracy, sensitivity, and specificity. Instead, they used other metrics such as the area under the curve, correlations, and the equal error rate.
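Unlike accuracy, sensitivity, and specificity, which are computed from one set of hard decisions, the area under the ROC curve and the equal error rate summarize a model's continuous scores across all decision thresholds, so figures reported in one family of metrics cannot be converted directly into the other. The Python sketch below illustrates both under stated assumptions: higher scores are assumed to mean "more likely disordered", the function names and scores are hypothetical, AUC is computed as the probability that a randomly chosen disordered case outscores a randomly chosen healthy case (ties count half), and the equal error rate is approximated by sweeping thresholds over the observed scores rather than by interpolating the ROC curve as dedicated toolkits typically do.

```python
def auc(pos_scores: list[float], neg_scores: list[float]) -> float:
    """Probability that a random disordered case outscores a random healthy one.

    Equivalent to the area under the ROC curve; ties count as half a win.
    """
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


def equal_error_rate(pos_scores: list[float],
                     neg_scores: list[float]) -> tuple[float, float]:
    """Approximate EER by sweeping thresholds over the observed scores.

    A case is flagged as disordered when its score >= threshold. Returns
    (eer, threshold) at the threshold where the false positive rate and the
    false negative (miss) rate are closest; the EER is their average there.
    """
    best_gap, best_eer, best_t = float("inf"), 1.0, 0.0
    for t in sorted(set(pos_scores) | set(neg_scores)):
        fpr = sum(s >= t for s in neg_scores) / len(neg_scores)  # healthy flagged
        fnr = sum(s < t for s in pos_scores) / len(pos_scores)   # disordered missed
        if abs(fpr - fnr) < best_gap:
            best_gap, best_eer, best_t = abs(fpr - fnr), (fpr + fnr) / 2, t
    return best_eer, best_t


if __name__ == "__main__":
    # Hypothetical model scores; higher means "more likely disordered".
    disordered = [0.9, 0.8, 0.7, 0.4]
    healthy = [0.6, 0.3, 0.2, 0.1]
    print(auc(disordered, healthy))               # 0.9375
    print(equal_error_rate(disordered, healthy))  # (0.25, 0.6)
```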

The following lists up to three of the most effective AI machine learning algorithms for each type of speech or language disorder, as assessed using accuracy, sensitivity, and specificity:

Voice disorders:

Gaussian mixture models: Achieved an accuracy, sensitivity, and specificity of 100% in study 35, which distinguished pathological from healthy voices using the Massachusetts Eye and Ear Infirmary (MEEI) Database. This database was developed by the MEEI Voice and Speech Lab and contains more than 1400 recorded voice samples.26

Support vector machine: Achieved an accuracy, sensitivity, and specificity of 99.96% in study 26, which distinguished voices affected by vocal fold cysts, unilateral vocal fold paralysis, and vocal fold polyps from healthy voices using the MEEI Database.

Multilayer perceptron neural networks: In study 40, this algorithm distinguished pathological from healthy voices with an accuracy of 96.3%, a sensitivity of 99%, and a specificity of 82% when using running speech, and with an accuracy of 93.8%, a sensitivity of 97%, and a specificity of 78% when using sustained vowels.

Aphasia:

Adaptive neuro-fuzzy inference system: Achieved an accuracy of 94.6% in diagnosing anomic, global, Broca's, and Wernicke's aphasia in study 29, which used 265 Aachen Aphasia Test (AAT) profiles of aphasic patients. Sensitivity and specificity were not reported.

Hierarchical fuzzy rule-based: Achieved an accuracy of 91.30% using spontaneous speech data and 93.61% using comprehensive language test data in study 38, which also used 265 AAT profiles of aphasic patients. Sensitivity and specificity were not reported.

Random forest: Achieved an accuracy of 84.85% in identifying the presence or absence of primary progressive aphasia, and 75.59% in classifying its two subtypes (SD and PNFA), in study 50. Sensitivity and specificity were not reported.

Dysarthria:

Hybrid CNNs and LSTM: This model achieved an accuracy of 99.59% in study 82, which detected the presence of dysarthric speech using the TORGO Dataset; this dataset contains audio recordings from patients with cerebral palsy (CP) and amyotrophic lateral sclerosis.82 Sensitivity and specificity were not reported.

Support vector machine with wav2vec 2.0 model: Achieved an accuracy of 93.95%, with a sensitivity of 93% and a specificity of 95%, in study 88, which detected the presence of dysarthric speech using the UA-Speech Database; this database contains audio recordings from dysarthric patients with CP and from healthy cases.

Convolutional neural network with wav2vec2-LARGE-2 features: Achieved an accuracy of 95.79%, with a sensitivity of 94% and a specificity of 98%, in study 96, which distinguished dysarthric patients from healthy cases using the UA-Speech Database.

Stuttering:

Deep learning Bi-LSTM: In study 22, which used the University College London Archive of Stuttered Speech Dataset (UCLASS), this model achieved an accuracy of 98.67% in detecting disfluent speech (prolongation), with a sensitivity of 97.5% and a specificity of 98.12%. For syllable repetition, it achieved an accuracy of 97.5%, a sensitivity of 92.5%, and a specificity of 98.12%; for word repetition, an accuracy of 97.19%, a sensitivity of 87.5%, and a specificity of 99.37%; and for phrase repetition, an accuracy of 97.67%, a sensitivity of 97.5%, and a specificity of 96.87%. Finally, it achieved an accuracy of 97.33% in detecting fluent speech, with a sensitivity of 90% and a specificity of 98.75%.

Support vector machine: Achieved an accuracy of 96.67% ± 0.37% in study 51, which classified speech disfluencies (repetitions and prolongations) using UCLASS. Sensitivity and specificity were not reported.

Deep residual network (ResNet) and Bi-LSTM: In study 67, which also used UCLASS, this model achieved classification accuracies of 84.10% for sound repetition, 96.60% for word repetition, 95.54% for phrase repetition, 97.14% for revision, 81.40% for interjections, and 94.08% for prolongations, with an average classification accuracy of 91.15%. Sensitivity and specificity were not reported.

Speech disorders in children with cleft lip and palate:

Automatic detection based on time-domain waveform difference analysis: In study 64, this model achieved an accuracy of 88.9%, with a sensitivity of 70.89% and a specificity of 91.86%, in automatically detecting consonant omissions in children with cleft palate.

Hybrid approach: Study 43 used a combination of the machine learning algorithms reported in Appendix A5 to develop semiautomatic and fully automatic evaluations of five common speech disorders (hypernasality in vowels, nasalized consonants, pharyngealization, laryngeal placement, and weakened pressure consonants) in children with cleft lip and palate. For the semiautomatic evaluation, the study reported accuracies of 94.2% to 99.6% at the frame level (sensitivity 56.8% to 71.1%), 95.6% to 99.6% at the phoneme level (sensitivity 62.9% to 76.9%), and 82.5% to 98.2% at the word level (sensitivity 60.6% to 75.8%). For the fully automatic evaluation, it reported accuracies of 94.2% to 99.1% at the frame level (sensitivity 52.6% to 71.1%), 88.5% to 99.6% at the phoneme level (sensitivity 56.9% to 76.9%), and 68.6% to 98.2% at the word level (sensitivity 50.7% to 75.8%). Specificity was not reported.

Articulation disorder:

Support vector machine: Achieved an overall accuracy of 88.89% in study 16, which detected articulation disorder in speakers with substitution errors only. Sensitivity and specificity were not reported.

Multilayer perceptron neural network: Achieved an overall accuracy of 79.86% in study 41, which detected articulation disorder in speakers with substitution errors only. Sensitivity and specificity were not reported.

Speech sound disorders:

Siamese recurrent network with bidirectional gated recurrent units: Achieved an accuracy of 94.1%, with a sensitivity of 91%, in study 63, which detected speech sound disorder in children by classifying pronunciation correctness using the CUChild127 speech corpus; this corpus contains speech data from 1500 Cantonese-speaking children aged 3–6 years. Specificity was not reported.

Siamese recurrent network with gated recurrent units: Achieved an accuracy of 93.3%, with a sensitivity of 90%, on the same task and corpus in study 63. Specificity was not reported.

Blending situation awareness with machine learning: Achieved an accuracy of 92.5%, measured against the therapist's evaluation, in study 60, which classified children's speech as correct or incorrect to detect speech sound disorder. Sensitivity and specificity were not reported.
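Several of the stuttering results above report sensitivity and specificity separately for each disfluency type (for example, prolongation or word repetition) rather than for a single healthy-versus-disordered split. One common way to obtain such per-class figures from a multi-class confusion matrix is one-vs-rest: the class of interest is treated as positive and all other classes as negative. The reviewed studies' exact procedure is not stated in this section, so the Python sketch below is an assumption; the function name, class labels, and counts are hypothetical.

```python
def one_vs_rest(matrix: list[list[int]], k: int) -> tuple[float, float, float]:
    """Accuracy, sensitivity, and specificity of class k against all other classes.

    matrix[i][j] counts samples whose true class is i and predicted class is j.
    """
    total = sum(sum(row) for row in matrix)
    tp = matrix[k][k]
    fn = sum(matrix[k]) - tp                 # truly class k, predicted as another class
    fp = sum(row[k] for row in matrix) - tp  # predicted as class k, truly another class
    tn = total - tp - fn - fp                # neither truly nor predicted class k
    return (tp + tn) / total, tp / (tp + fn), tn / (tn + fp)


if __name__ == "__main__":
    # Hypothetical 3-class confusion matrix (rows = true, columns = predicted).
    labels = ["fluent", "prolongation", "repetition"]
    matrix = [
        [40, 5, 5],
        [2, 18, 0],
        [3, 1, 26],
    ]
    for k, name in enumerate(labels):
        acc, sens, spec = one_vs_rest(matrix, k)
        print(f"{name}: accuracy={acc:.3f} sensitivity={sens:.3f} specificity={spec:.3f}")
```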

Discussion

The findings of this SMS on the use of AI machine learning algorithms in diagnosing SLDs identify multiple gaps in the current research landscape. Of the 70 reviewed publications, 64 focused on speech disorders and only 6 addressed language disorders. This indicates a substantial divide in the application of AI machine learning algorithms to diagnosing SLDs: 91.43% of the reported research relates to speech disorders, compared with 8.57% for language disorders. The divide is further exacerbated by the absence of AI machine learning algorithms for diagnosing prevalent language disorders, such as developmental language disorders, which affect many children.2 This may be related to the complexity of the signals the algorithms must analyze: diagnosing language disorders relies on assessing multiple aspects, such as pragmatics, phonology, semantics, syntax, and morphology, while taking into account the developmental milestones of the patient, whereas diagnosing speech disorders relies more on analyzing the acoustic features of the signal.
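The 91.43% versus 8.57% split follows directly from the publication counts (rounded to two decimal places):

```latex
\frac{64}{70} \approx 91.43\%, \qquad \frac{6}{70} \approx 8.57\%, \qquad 64 + 6 = 70
```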

This study also revealed that most AI machine learning algorithms used in diagnosing SLDs focus on binary classification, such as classifying a voice sample as healthy or pathological,35,44,47 a distinction that trained professionals can often make through traditional methods such as auditory perception. A more useful application of AI in diagnosing SLDs would involve models capable of providing detailed diagnostics, such as identifying the impaired aspects and the contextual severity of the disorder.

Furthermore, this study found that the generalizability of findings on the effectiveness of AI machine learning algorithms in diagnosing SLDs has not yet been established, as it is limited by several factors. Many AI models have been trained and tested on datasets specific to certain languages, dialects, age groups, and/or disorder subtypes, which limits their applicability to broader populations.16,41,42,55,56,76,95 For instance, in study 49, the AI model was trained on six subtypes of voice disorders (vocal fold cysts, laryngopharyngeal reflux disease, spasmodic dysphonia, sulcus vocalis, vocal fold nodules, and vocal fold polyps) to detect the presence of a voice disorder, which limits its ability to detect other voice disorders. Additionally, this SMS covered a wide range of SLDs that differ significantly in their diagnostic characteristics and evaluation details, which may limit how well our findings apply to every individual SLD. Future research should therefore focus on targeted SLDs. Similarly, a recent study investigated the role of an AI model (ChatGPT) in supporting speech-language therapists. Although it showed high accuracy in tasks such as writing assessment reports and making clinical decisions, it identified important risks, such as linguistic limitations and the absence of real-world clinical validation.103 These results are in line with our observation that, in the diagnosis of SLDs, differences in language, dialect, and culture affect the generalizability of AI models.

Moreover, although our review did not include a classical, detailed quality assessment to complement the SMS methodology in the SLD context, we incorporated elements of quality evaluation indirectly. This is reflected in Tables A1–A7, where we report sample size in the renamed “Population: Sample Size Diversity” column and assess the transparency of key performance metrics (such as accuracy, sensitivity, and specificity) in the renamed “Performance Analysis: Reported Metrics” and “Performance Analysis: Missed Metrics” columns, in the context of the research questions. These aspects were reviewed and assessed by two independent reviewers, who reached consensus on the outcomes and documented them in Tables A1–A7. This approach was intended to provide a degree of methodological scrutiny and transparency without unduly restricting the limited but growing body of research on AI-assisted SLD diagnosis available for synthesis, while recognizing the diverse and emerging nature of research in AI for SLDs.

More broadly, and as a direction for future research, adopting a more formal quality assessment framework, such as a tailored version of tools like CASP 104, is expected to reinforce the domain-specific criteria applied in this research. This would allow researchers and practitioners to distinguish varying levels of methodological rigor and potential bias, leading to a more critical understanding of how to employ AI tools most effectively for SLDs.

Additionally, this review would benefit from complementing the descriptive analysis of the 70 included publications with a comparative analysis, to deepen and strengthen the insights behind our conclusions. This review also included only English-language publications, which may have introduced a linguistic bias into our findings; expanding the inclusion criteria to publications in other languages would enhance the generalizability and applicability of results in future work. Furthermore, while this review followed an internal protocol to guide its methodological approach, the absence of preregistration in a public external registry such as PROSPERO 105 or OSF 106 remains a limitation, although we do not consider the transparency and replicability of this SMS to be compromised. Finally, although data coding in this work was performed primarily by multiple reviewers using coding templates with routine cross-checks, incorporating statistical measures of inter-rater reliability would further improve reproducibility and transparency.
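The paragraph above does not name a specific inter-rater reliability measure. Cohen's kappa is one widely used option for two reviewers making categorical decisions: it compares the observed agreement p_o with the agreement p_e expected by chance from each reviewer's label frequencies, kappa = (p_o - p_e) / (1 - p_e). The Python sketch below assumes two reviewers labelling the same records as include or exclude; the function name and screening decisions are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters assigning categorical labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty list of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1.0 - p_e)


if __name__ == "__main__":
    # Hypothetical title/abstract screening decisions for ten records.
    reviewer_1 = ["include", "include", "include", "exclude", "exclude",
                  "exclude", "exclude", "exclude", "include", "exclude"]
    reviewer_2 = ["include", "include", "exclude", "exclude", "exclude",
                  "exclude", "exclude", "include", "include", "exclude"]
    print(f"kappa = {cohens_kappa(reviewer_1, reviewer_2):.3f}")  # kappa = 0.583
```

Here the reviewers agree on 8 of 10 records (p_o = 0.80), but chance alone would produce p_e = 0.52 agreement, so kappa is noticeably lower than raw percentage agreement.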

Although detailing all the algorithms referenced across the 70 publications presents significant challenges, particularly because our review covers a broad range of SLDs rather than a specific condition, we believe it is more useful to emphasize the most effective AI machine learning techniques for each specific SLD. This approach not only enhances the clarity and relevance of our discussion but also provides a clearer pathway for future research. Moving forward, we plan to incorporate these insights into subsequent studies by designing diagnostic tools built on the most promising AI algorithms for targeted SLDs, with the aim of improving precision and effectiveness in clinical applications.

Conclusion

This study has addressed the lack of a comprehensive systematic study of the literature on the effectiveness of AI machine learning algorithms in the diagnostic process of SLDs and the extent to which they automate it, with detailed emphasis on the role of these algorithms in diagnosing specific disorders. The SMS identified 70 publications that met the inclusion and exclusion criteria established in its research design. Furthermore, this study contributes to the SLD field a comprehensive account of the AI machine learning algorithms reported for diagnosing SLDs since 2000, including the types of AI algorithms applied to each specific SLD, the datasets used to train and test those algorithms, and their effectiveness as measured by metrics such as accuracy, sensitivity, and specificity. Finally, this SMS reported on the extent to which the SLD diagnostic process has been automated.

This study has confirmed a substantial divide in the application of AI machine learning algorithms to diagnosing SLDs: 91.43% of the research output addresses speech disorders versus 8.57% for language disorders. Several questions therefore ought to be addressed. First, what current shortcomings of AI machine learning algorithms limit research into their adoption for the automatic diagnosis of language disorders, especially developmental language disorders, which affect many children? Second, more research is needed to determine whether the level of funding for AI-based research on language disorders is a key contributor to this divide between speech and language disorder diagnosis using AI machine learning algorithms.

Furthermore, this study confirmed that AI machine learning algorithms have been applied primarily to partially automate the second phase (assessment) of the SLD diagnostic process, accounting for around 76% of the included publications, while around 24% of the included publications extended this to partially automate the third phase (diagnosis determination). This points to a further research gap: the need to develop AI models capable of automating the whole SLD diagnostic process, encompassing all four phases, with subsequent links to treatment protocols.

Finally, the findings of this study highlight the top-performing AI machine learning algorithms in relation to their roles in the diagnostic process of SLDs. However, a significant gap remains in reporting the performance of these algorithms using common performance metrics, particularly sensitivity, specificity, and accuracy. This SMS has thus highlighted a broader challenge in the field: the lack of standardized, structured quality assessment protocols tailored to AI research applied in clinical contexts such as SLD diagnosis. In the SLD context, the absence of such protocols hinders the formal evaluation of the robustness, reproducibility, and comparability of research studies that evidence-based practice requires. This underscores the urgent need for standardized quality assessment protocols for AI-based SLD studies that explicitly incorporate measures of data collection quality, formal reporting of AI algorithm transparency, ethical adherence, and performance reporting standards.

As a follow-on from this SMS, we are developing formal, structured quality assurance protocols for the development and deployment of AI algorithms and tools across the SLD care journey. These protocols aim to pave the way for rapid, standardized regulatory approval processes and, hence, to strengthen stakeholders’ confidence in support of more effective clinical implementation.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251379769 - Supplemental material for The role of AI in the diagnosis of speech and language disorders: A systematic mapping study

Supplemental material, sj-docx-1-dhj-10.1177_20552076251379769 for The role of AI in the diagnosis of speech and language disorders: A systematic mapping study by Dina Tbaishat, Rand Al-Shafei and Mohammed Odeh in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors are grateful to Zayed University, Dubai, UAE, for funding and supporting this research, and to Global Academy for Digital Health for research support.

Contributorship

DT and MO contributed to conceptualization and supervision. All authors contributed to the methodology, investigation, and validation of the study. RS and MO were responsible for the software, while RS also handled data curation, formal analysis, and writing the original draft. DT oversaw project administration and secured funding. Both DT and MO contributed to reviewing and editing the manuscript.

Funding

The authors express their gratitude to Zayed University for supporting this study through the Research Incentive Fund (RIF Grant No. 23010), with DT as the principal investigator.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
