Abstract

Purpose

This study examined meta-data, source, type of informational content, understandability, and actionability of YouTube content related to speech and/or language disorders.

Method

The 100 most widely viewed videos related to children with speech and/or language disorders were obtained. Meta-data and sources of each upload were identified. Type of informational content within the videos was analyzed. The Patient Education Material Assessment Tool for Audiovisual Materials was used to assess understandability and actionability.

Results

A significant difference between video source groups was found for length of video, thumbs-up, and thumbs-down, but not for number of views. The YouTube videos related to speech and/or language disorders covered a range of issues, although a majority of the content focused on signs/symptoms and treatment. Videos had close-to-adequate understandability (i.e. 68%), although poor actionability scores (i.e. 32%) were noted. Videos uploaded by professionals were superior to other upload sources in understandability, but no difference was noted between video source for actionability.

Conclusions

Study insights about meta-data, source, type of informational content, understandability, and actionability of YouTube videos may help professionals understand the nature of online content related to speech and/or language disorders. Study implications and recommendations for further research are discussed.

A substantial number of parents seek Internet-based information and support related to their child’s health, development, or disorder.1–3 This shift from only seeking information and support from professionals to also seeking Internet-based information and support warrants close examination to understand the nature of online content relative to unique populations. As noted by Greenberg et al., 4 professionals and other stakeholders are concerned about the accessibility and quality of health information from Internet-based sources. However, there are limited mechanisms to ensure a standard of reporting of health-related information online. As such, it is critical that professionals evaluate Internet-based materials so they may guide patients to helpful, accurate, and accessible information. 5

YouTube is the main public online video-sharing site currently available and provides an outlet for health-related information developed by professionals, health organizations, patients, and/or families and friends of patients. 6 Gabarron et al. 7 examined the most frequently used methods to evaluate video quality of healthcare videos. Expert ratings were the primary method, but due to the volume of online videos, expert ratings may not be feasible. The second most frequently used evaluation was popularity (e.g. public ratings such as view count). Unfortunately, popularity of videos may be manipulated or misleading. Finally, meta-data (e.g. video length, number of views) was noted as another feature to measure quality. Examining the video length relative to other meta-data (e.g. thumbs-up, thumbs-down) may provide information regarding how populations interact with the videos during searches or viewing. 8

There are several validated tools available to evaluate various health literacy constructs in text information (e.g. readability, understandability, suitability, comprehensibility). 9 However, these tools do not include analysis of audio-visual information. One validated tool for analyzing both print and audio-visual information is the Patient Education Material Assessment Tool (PEMAT). The PEMAT is a free, publicly available tool developed for the Agency for Healthcare Research and Quality to assess understandability and actionability of patient education materials. 10 Understandability refers to health information that can be understood by health consumers from diverse backgrounds and with varying levels of health literacy. Actionability refers to health information that enables patients to easily identify what they need to do. There are two versions of the PEMAT. The PEMAT-P is used to evaluate printed material and the PEMAT-AV is used to evaluate audiovisual materials. Strong internal consistency, reliability, and construct validity of the PEMAT-AV were established by Shoemaker et al. 11 The PEMAT-AV has recently been used to evaluate information directed at a patient audience.12–14

YouTube content in communication disorders

Internet-based health-related video information has been evaluated in multiple healthcare areas. In a systematic review of health-related information on YouTube, Madathil et al. 15 reported that YouTube information is unregulated and may contain misleading information. Furthermore, which videos are retrieved depends on the healthcare search term used, and the probability of a non-professional finding misleading or low-quality healthcare content is high because of the variety and type of search terms used. That said, Madathil et al. 15 reported that videos from government organizations and professional associations contained trustworthy and high-quality information.

Only recently has work examining video information in communication disorders been conducted. Three studies have been published. Within the area of autism, Kollia et al. 16 identified source of upload and content portrayed in the 100 most-viewed videos on Autism Spectrum Disorder (ASD). Results indicated that videos with the most views were uploaded by non-professionals and provided content (i.e. personal videos and television show clips). Bellon-Harn et al. 17 examined meta-data, source, content, understandability, and actionability of ASD-related information contained in the most widely viewed videos uploaded to YouTube. Video content covered a range of issues, although a majority of the content focused on signs and symptoms of ASD. Poor understandability and actionability scores were reported for all videos regardless of video source. These videos primarily were personal videos and television show clips. Bellon-Harn et al. 17 reported that the mean of the number of views of videos uploaded by professionals was notably greater than consumer and Internet-based videos and concluded that families of children with ASD lean toward watching videos that may have a higher degree of scrutiny (i.e. professional) than other videos. Basch et al. 18 examined information about tinnitus contained in 100 of the most widely viewed videos on YouTube. Of those, most were uploaded by non-professionals and mainly consisted of personal experiences about tinnitus.

Summary and study aim

YouTube is one media platform through which healthcare content is shared. Examining information from YouTube to which clients are exposed will help professionals guide patients to helpful, accurate, and accessible information. Video quality can be evaluated through multiple methods and tools (e.g. meta-data, source, PEMAT-AV). Evaluating online information across multiple dimensions increases the strength of the evaluation. 9 Recently, video information on YouTube about autism and tinnitus has been examined. This study extends this work in communication disorders. The purpose of this study was to examine the meta-data, source, type of informational content, understandability, and actionability of information about children with speech and/or language disorders (S/LDs) contained in 100 of the most widely viewed YouTube videos.

Method

Data extraction

The study was deemed exempt from review by the Lamar University Institutional Review Board (IRB) since all information was publicly available. We followed the same procedure to extract data as other studies examining online content.17,19–21 A panel of two speech-language pathologists who served children with a S/LD, nine parents of children with a S/LD, and three early childhood teachers were asked to provide keywords that might be used when searching for information related to S/LDs. They provided 30 unique keywords or phrases they considered most likely to be used when searching for information on the Internet. Parents used phrases such as, “How to help my child with speech delay,” “Help my child use words to communicate,” or “Why isn’t my toddler talking.” Teachers and speech-language pathologists used keywords such as child language disorder, speech delay, and child expressive language disorder. Words and phrases were entered in Google Trends (www.google.com/trends), which compiles the relative frequency of keywords in the search engine over time. The three most frequent keywords (i.e. speech delay, expressive language disorder, child language disorder) identified from Google Trends were used to search YouTube for English-language videos about children who have S/LDs. YouTube presents search results differently depending on the (a) type of Internet browser, (b) time of search, and (c) whether the researchers have logged in to their personal YouTube (or Gmail) account. Hence, to minimize user-targeted search results, the browser history was deleted, cookies were cleared, and the search was performed in private mode on the Mozilla Firefox browser (Version 62.0.3).

All videos included were in English. Videos were excluded if they did not include informational content related to S/LDs. A total of 46 videos were excluded. Twenty-four were home videos of children without context, information, or explanation. Eleven were not in English, eight were not about children or S/LDs, two were repeated videos, and one was an advertisement. Once the sample of 100 videos was compiled, basic descriptive data were recorded: the title, uniform resource locator (URL), date of upload, length of the video, total number of views of the video, as well as the number of thumbs-up (likes) and thumbs-down (dislikes). The sample was collected during February–March 2019.

Coding the video source and content

Content of each video was categorized and coded. First, the sources of upload were recorded and grouped into the following categories: (a) consumer (i.e. member of the lay public); (b) professional (i.e. a person with credentials who is qualified to discuss the topic, or a professional body); or (c) Internet-based clip (i.e. any clip that originated from an Internet channel or website). Second, videos were coded for type of information. To determine the types of information considered relevant to families seeking information regarding S/LDs, categories from fact sheets for parents from the National Institute on Deafness and Other Communication Disorders were compiled (i.e. https://www.nidcd.nih.gov/health/specific-language-impairment). These included the following categories.

Signs and symptoms: this refers to behavior, sensory, communication, social, and play indicators of S/LDs.

Causes: this refers to genetic and environmental etiologies of S/LDs.

Treatment: this refers to information about treatments associated with S/LDs.

Diagnosis: this refers to explanations on how to obtain a diagnosis of a S/LD including descriptions of multidisciplinary teams, qualified professionals, and components of the diagnostic protocols.

Services: this refers to how families can obtain services for their child with a S/LD (e.g. early childhood intervention).

Research: this refers to mention of evidence-based practice or research related to S/LDs.

Policy: this refers to information related to eligibility and access to early intervention services and Individuals with Disabilities Education Act (IDEA).

Associated disorders: this refers to commonly associated disorders of S/LD (e.g. Attention Deficit Hyperactivity Disorder [ADHD]).

Resources: this refers to mention of other organizational contacts that may provide additional resources about S/LDs.

Meta-data

Meta-data extracted from the videos included number of views, length of videos, thumbs-up, and thumbs-down. The number of views, video length, thumbs-up, and thumbs-down were recorded from the page containing the video.

Understandability and actionability

The understandability and actionability of each YouTube video were evaluated using PEMAT-AV.10 The PEMAT-AV has 17 items, with 13 items related to understandability and four items related to actionability; the items are listed in Appendix 1. Two items (items 12 and 19) were not included in our ratings as they were not relevant to the video content we reviewed. Thus, there were 11 items rated for understandability. Each item was scored as agree ( = 1), disagree ( = 0), or not applicable.

The percentage understandability and actionability sub-scale scores were calculated by dividing the number of items scored as one (i.e. agree) by the number of items rated. Items that were identified as not applicable were not included in the calculation. Areas potentially rated as not applicable included use of text and/or visual aids if no text and/or visual aids were used. For example, for a specific video, if 10 out of 13 items in the understandability sub-scale were rated and three were not applicable, the calculation would include 10 total items rated. Of the 10, if five items were rated as agree, the understandability score would be 50% (i.e. score of five out of 10 items rated, 5/10 = 50%).
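The scoring rule described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the authors' procedure; the function name `pemat_subscale_score` and the use of `None` to mark not-applicable items are assumptions made for this example.

```python
from typing import Optional, Sequence


def pemat_subscale_score(ratings: Sequence[Optional[int]]) -> float:
    """Return the percentage score for one PEMAT-AV sub-scale.

    Each rating is 1 (agree), 0 (disagree), or None (not applicable).
    Not-applicable items are dropped from both numerator and denominator.
    """
    rated = [r for r in ratings if r is not None]
    if not rated:
        raise ValueError("no applicable items were rated")
    return 100.0 * sum(rated) / len(rated)


# Worked example from the text: 13 items, three not applicable,
# five of the remaining 10 rated as agree.
example = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0, None, None, None]
score = pemat_subscale_score(example)
print(f"{score:.0f}%")  # 50%
```

Excluding not-applicable items from the denominator means a video is not penalized for lacking features (e.g. on-screen text) that it never attempted to use.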

First, two Master’s level graduate students in speech and hearing sciences and an author who holds a PhD in communication disorders utilized the user’s guide provided by the Agency for Healthcare Research and Quality (https://www.ahrq.gov/ncepcr/tools/self-mgmt/pemat.html) to familiarize themselves with PEMAT-AV. Next, the team evaluated 10 videos within the area of speech and hearing sciences (i.e. aphasia) to calibrate their responses on the PEMAT-AV and reach consensus. They followed the five steps presented in the guide: (a) read through the PEMAT-AV and user’s guide; (b) read or view patient education material; (c) decide if the PEMAT-AV is appropriate to use; (d) go through each PEMAT-AV item one by one; and (e) rate the material on each item as you go through them.

Finally, one graduate student completed analysis of all 100 videos and the other graduate student completed analysis of a randomly selected 20% of the videos. The intraclass correlation coefficient (ICC) was calculated to examine the inter-rater reliability for PEMAT-AV sub-scale ratings. ICC values <0.5, between 0.5 and 0.75, between 0.75 and 0.9, and >0.90 are indicative of poor, moderate, good, and excellent reliability, respectively. 22 The ICCs for the understandability and actionability sub-scales were 0.77 and 0.85, respectively, suggesting good inter-rater reliability for PEMAT-AV.
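The interpretation bands for the ICC can be written as a small lookup, shown here as a minimal sketch. The function name is hypothetical, and the handling of values that fall exactly on a boundary (0.5, 0.75, 0.9) is an assumption, since the bands as stated share their endpoints.

```python
def icc_reliability_band(icc: float) -> str:
    """Map an ICC value to the qualitative band described in the text.

    Assumption: a value exactly on a shared boundary is assigned to the
    higher band at 0.5 and 0.75, and 0.9 itself is still "good".
    """
    if icc < 0.5:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.9:
        return "good"
    return "excellent"


# The two sub-scale ICCs reported in this study.
for name, value in [("understandability", 0.77), ("actionability", 0.85)]:
    print(name, icc_reliability_band(value))
```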

Data analysis

Statistical analysis was conducted using IBM SPSS Software Version 24. The descriptive statistics were examined. Normality tests were performed on the videos’ meta-data (i.e. number of views, length of videos, thumbs-up, thumbs-down) and PEMAT-AV scores (i.e. understandability sub-scale scores, actionability sub-scale scores). The Shapiro-Wilk test and visual examination of normality plots suggested that all of these variables violated the assumption of normality. Hence, non-parametric tests were used for further analysis.

The Kruskal-Wallis H test was used to examine whether the meta-data and the PEMAT-AV scores varied across the video source (i.e. consumer, professional, Internet-based). A pairwise analysis was performed using the Bonferroni post-hoc test for the variables that reached significance in the Kruskal-Wallis H test. Spearman’s correlation was performed to examine the correlation between the meta-data variables. Manually coded video content was converted into multiple binary variables (i.e. coded as zero if the video did not include information about a category and coded as one if the video did present information about a category). A significance level of 0.05 was used for interpretation of results. When conducting the Bonferroni post-hoc test, SPSS provides a p-value for each pair of means that is already adjusted so that it can be compared directly to 0.05.
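For readers unfamiliar with the Kruskal-Wallis H statistic, the following is a minimal pure-Python sketch of how it is computed from pooled ranks, including the standard correction for ties. The analysis in this study was run in SPSS; the function names and the toy data below are hypothetical and are not study data.

```python
from collections import Counter
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    sorted_vals = sorted(values)
    rank_of = {}
    for v in set(values):
        first = sorted_vals.index(v) + 1   # 1-based position of first tie
        count = sorted_vals.count(v)       # number of tied observations
        rank_of[v] = first + (count - 1) / 2
    return [rank_of[v] for v in values]


def kruskal_wallis_h(groups: Sequence[Sequence[float]]) -> float:
    """Return the tie-corrected Kruskal-Wallis H statistic.

    Raises ZeroDivisionError if every pooled value is identical.
    """
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * total - 3 * (n + 1)
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n ** 3 - n))


# Toy data for three hypothetical source groups (not study data).
h = kruskal_wallis_h([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(round(h, 2))  # 7.2
```

With three groups, H is compared against a chi-square distribution with two degrees of freedom, which is why the results below are reported as chi-square values.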

Results

Video source and popularity

Of the 100 most viewed videos identified on YouTube, 24 were created by consumers, 58 were created by professionals, and the remaining 18 were Internet-based. Table 1 presents the descriptive data of the popularity-based meta-data for the videos. The collective number of views of the videos was 4,165,406. The combined length of all 100 videos was 760 min (i.e. 12 h 40 min), with the shortest video being 44 s and the longest video being 61.19 min. The total numbers of thumbs-up (i.e. likes) and thumbs-down (i.e. dislikes) for these videos were 26,478 and 1,414, respectively.

Descriptive statistics of meta-data (i.e. number of views, video length, thumbs-up and thumbs-down) in 100 most viewed childhood speech and/or language disorder (S/LD) YouTube videos in English by their source (consumer = 24; professional = 58; Internet-based = 18)

SD: standard deviation.

The Kruskal-Wallis H test was conducted to compare the meta-data between the three video sources. No significant differences between the source groups were found for number of views (Chi square = 1.45, p = 0.48). However, a significant difference with a moderate effect size was found between video source groups for length of video (Chi square = 8.43, p = 0.02, η2 = 0.066), thumbs-up (Chi square = 7.30, p = 0.03, η2 = 0.055), and thumbs-down (Chi square = 7.73, p = 0.02, η2 = 0.059). For video length, pairwise comparisons with Bonferroni post-hoc tests showed that Internet-based videos were significantly shorter when compared to professional (p = 0.03) and consumer (p = 0.03) videos, but no statistically significant difference was found between professional and consumer videos. For thumbs-up and thumbs-down, the pairwise comparisons showed that Internet-based videos had significantly fewer thumbs-up and thumbs-down when compared to consumer videos (p = 0.02 and p = 0.03, respectively), but no other differences were statistically significant.
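The text does not state how the effect sizes were derived. As a hedged illustration, the reported values are consistent with a commonly used rank-based formula, η2 = (H − k + 1)/(n − k), where H is the Kruskal-Wallis statistic, k = 3 is the number of source groups, and n = 100 is the number of videos. The sketch below assumes that formula; the function name is hypothetical.

```python
def eta_squared_from_h(h: float, k: int, n: int) -> float:
    """Effect size for a Kruskal-Wallis test: (H - k + 1) / (n - k).

    Assumption: this is the formula behind the reported values; the
    source does not say which one was used.
    """
    return (h - k + 1) / (n - k)


# H statistics reported in the text for the three significant variables.
reported_h = {"video length": 8.43, "thumbs-up": 7.30, "thumbs-down": 7.73}
for variable, h in reported_h.items():
    print(variable, round(eta_squared_from_h(h, k=3, n=100), 3))
# video length 0.066
# thumbs-up 0.055
# thumbs-down 0.059
```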

The Spearman’s correlation analysis was conducted to examine the relation between variables number of views, video length, thumbs-up, and thumbs-down. Number of views had a strong positive correlation with thumbs-up (rs = 0.81, p < 0.01) and thumbs-down (rs = 0.86, p < 0.01). Video length had a small positive correlation with thumbs-up (rs = 0.26, p < 0.01). Also, thumbs-up had a strong positive correlation with thumbs-down (rs = 0.82, p < 0.01). No other statistically significant associations were noted.
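Spearman’s coefficient is the Pearson correlation computed on ranks rather than raw values, which is why it suits skewed counts such as views and likes. The following is a minimal pure-Python sketch under that definition; the study's values came from SPSS, and the function names and toy data here are hypothetical.

```python
from math import sqrt
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    sorted_vals = sorted(values)
    rank_of = {}
    for v in set(values):
        first = sorted_vals.index(v) + 1
        count = sorted_vals.count(v)
        rank_of[v] = first + (count - 1) / 2
    return [rank_of[v] for v in values]


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of the rank-transformed inputs."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


# Toy view and like counts for five hypothetical videos (not study data).
views = [10, 20, 30, 40, 50]
likes = [1, 3, 2, 5, 4]
print(round(spearman_rho(views, likes), 2))  # 0.8
```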

Video content

The percentages of videos categorized by type of informational content are shown in Table 2. Over 50% of the videos presented information about signs and symptoms and about treatment. Fewer videos presented content about causes and diagnosis of S/LDs (i.e. 17% and 13%, respectively). Only 5% or less of the video content was related to services, research, policy, associated disorders, and resources. No difference between source categories was identified for most content categories. However, professional videos included content related to causes, whereas the consumer videos did not address causes at all. None of the sources provided much information about services available for S/LDs.

Percentage of videos presenting type of informational content in the 100 most viewed speech and/or language disorder (S/LD) YouTube videos by their source and content.

Understandability and actionability

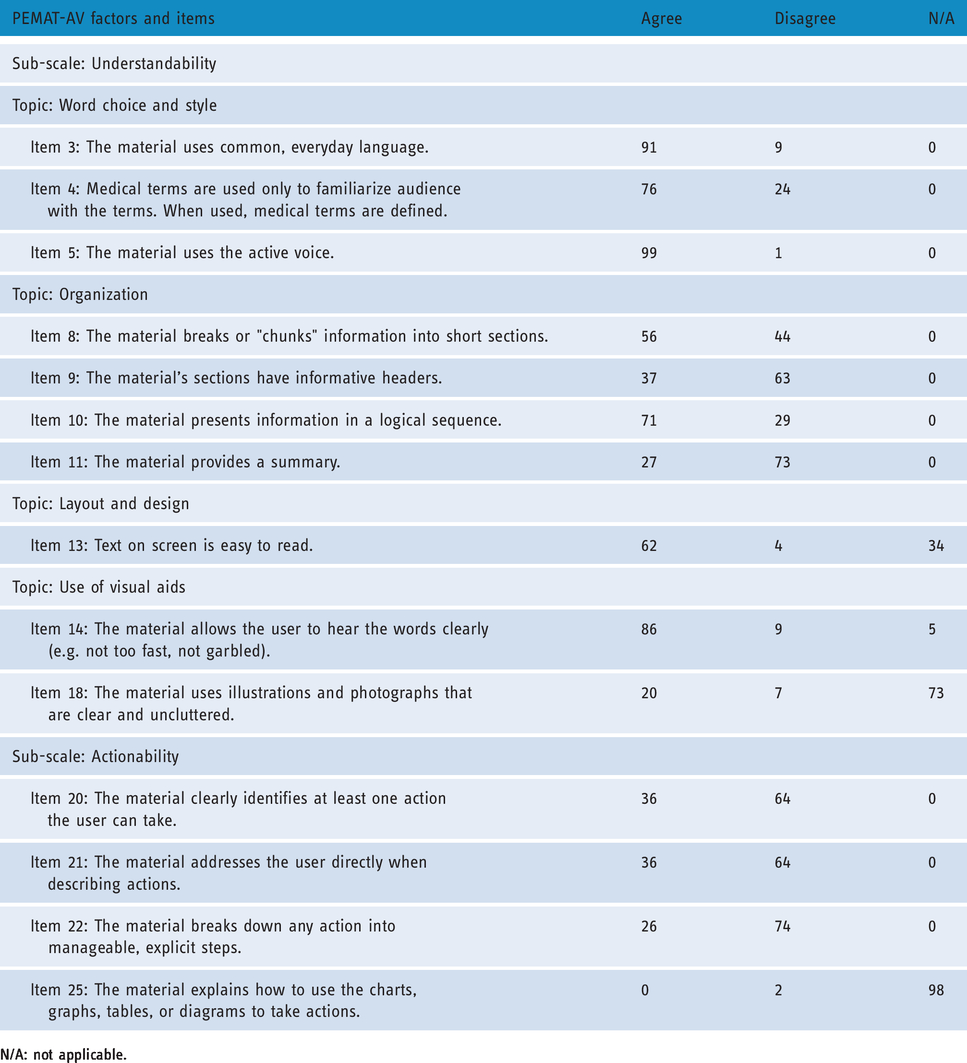

Table 3 presents the frequency of responses to the PEMAT-AV individual item ratings.

Frequency of responses to the Patient Education Material Assessment Tool-Audiovisual Material (PEMAT-AV) items.

N/A: not applicable.

With regard to items contributing to the understandability sub-scale, the frequency of agree responses was highest for the use of common language (item 3) and active voice (item 5), at 91% and 99%, respectively. A majority of videos received agree responses for audio clarity (item 14, 86%), use of medical terms with explanation (item 4, 76%), use of a logical sequence (item 10, 71%), clarity of text (item 13, 62%), clarity of purpose (item 1, 60%), and chunking the information (item 8, 56%). A majority of videos received disagree responses for use of informative headers (item 9, 63%) and provision of a summary (item 11, 73%). A majority of videos did not use illustrations or photographs, so a majority of videos were rated as not applicable on item 18 (73%).

A majority of videos were rated as “disagree” within the sub-scale actionability items. Sixty-four percent of the videos did not identify a minimum of one action the user could take (item 20). A majority did not address the user directly (item 21, 64%) and did not break down any action into manageable, explicit steps (item 22, 74%). A majority of videos did not use charts, graphs, tables, or diagrams, so a majority of videos were rated as not applicable on item 25 (98%).

Table 4 presents the descriptive statistics of PEMAT-AV scores across video source categories. Higher percentages indicate greater understandability and actionability. Scores under 70% indicate that the information has poor understandability or actionability. 11 The overall understandability and actionability scores for the videos were 68% and 32%, respectively. Overall, these scores indicate poor understandability and actionability (i.e. below 70%), although understandability scores were close to being considered acceptable. The Kruskal-Wallis H test was performed to examine the differences in understandability and actionability scores based on video source categories. The results of the Kruskal-Wallis H test showed a significant difference with a moderate effect size in understandability scores between videos from different sources (Chi square = 7.94, p = 0.02, η2 = 0.061), but no significant difference in actionability scores between videos from different sources (Chi square = 5.67, p = 0.06). The pairwise comparisons of understandability scores with Bonferroni post-hoc tests showed that professional videos had significantly higher understandability when compared to consumer videos (p = 0.02), but no statistically significant difference was found between professional and Internet-based videos or between consumer and Internet-based videos.

Patient Education Material Assessment Tool-Audiovisual Material (PEMAT-AV) scores across video source categories (consumer = 24; professional = 58; Internet-based = 18).

CI: confidence interval; SD: standard deviation.

Discussion

Because families of children with disabilities seek information and support through the Internet, this study sought to examine the source, content, understandability, and actionability of S/LD-related information contained in the most widely viewed videos uploaded to YouTube. Results indicated that the number of views was 4,165,406 and viewers overwhelmingly liked the videos. The majority of videos were created by professionals. However, consumer-uploaded videos were viewed more frequently, although this difference was not statistically significant, and had more thumbs-up than professional videos and Internet-based videos. Internet-based videos were shorter than videos from other sources and had the fewest thumbs-up. Taken together, results point toward the popularity of consumer videos. As noted by Van den Eynde et al., 8 the comparison of views across video source provided some valuable information. As noted by Madathil et al., 15 videos from professionals may contain more trustworthy information than other sources. Even though more videos were uploaded by professionals, viewers lean toward consumer-based videos, which may have a lesser degree of scrutiny than other videos. These findings are somewhat consistent with Kollia et al. 16 and Basch et al. 18 who reported that the most popular videos were from consumers. Viewers may have a preference for viewing personal experiences. However, this is inconsistent with Bellon-Harn et al. 17 who reported that the number of views of videos uploaded by professionals was notably greater.

Some items related to understandability were rated positively, which contributed to the overall understandability score (i.e. 68%). Videos used common language, active voice, and clear audio, which may facilitate accessibility of the information. That said, collectively this body of videos did not meet the threshold for overall adequacy. No strengths were identified in actionability. Videos uploaded by professionals were superior in understandability to videos from other sources, which is consistent with previous research. However, viewers tended to view consumer videos more frequently than professional videos, which suggests that they are viewing videos with inadequate understandability. There was no difference in actionability between video sources, indicating that all videos were lacking in enabling individuals to easily identify what they need to do. In part, this may be because the majority of information described signs and symptoms, which is consistent with Bellon-Harn et al. 17 However, an equal percentage of videos were related to treatment. It is disconcerting that actionability was inadequate because it suggests that these videos did not empower the viewer to take an action. Overall, the poor scores in both actionability and understandability ratings indicate that there is a need for significant improvement. This is consistent with Bellon-Harn et al. 17 who reported that videos about children with ASD did not reach adequate levels.

By understanding the information from various sources to which clients are exposed, professionals can understand the presuppositions that clients may have during clinical encounters. This is essential in developing appropriate and evidence-based information directed towards them. Professionals have an opportunity to generate video resources that have good levels of understandability and actionability. For example, Conti-Ramsden et al. 23 launched a YouTube channel to share information about terms associated with children’s language impairment and to raise awareness of language impairment in children. More information should be developed across all content areas, but in particular, the area of diagnosis is limited. Overall, video resources should include a clear purpose and suggest an action by viewers, including tangible tools to take that action.

The use of online information by families of children with a S/LD impacts the professional’s role and provides opportunities for professionals to support their clients’ eHealth literacy. 24 Professionals can educate their clients on how to seek, find, understand, and critically evaluate information from electronic sources (e.g. identify good search terms and credible sources). As noted, the phrases used by parents did not appear in Google Trends as frequently as the specified search terms used by teachers and speech-language pathologists. Furthermore, professionals can overcome barriers to discussing online information with their clients (e.g. clients’ concern about professional’s reactions) by sharing online information that may be beneficial. Finally, professionals need to contribute to the digital landscape by generating evidence-based, accessible information across diverse content. One strategy to improve or develop audiovisual materials is to use the PEMAT-AV as a guide. For example, professionals can identify the action(s) that the user can take that are included in the video. If there are none, or if the actions are underspecified, the professional can rectify the issue.

Study limitations and further research

The study was focused on the meta-data, source, type of informational content, understandability, and actionability of YouTube videos related to S/LDs. However, several limitations are noted. First, the context in which a video was uploaded was not considered. This is a major drawback as the context can influence the content. Second, some of these videos may have misinformation related to S/LDs. However, this was not considered in the current study and reliability was not obtained on type of informational content. Future studies can examine and quantify the misinformation by mapping the content to the evidence base in the academic literature. Third, the PEMAT-AV was designed to be used by lay people and health professionals alike. The small number of raters in this study were faculty and graduate students with a background in the area of communication disorders. Consequently, they rated the videos with background knowledge. Future studies should include non-clinical individuals and families of children with S/LDs. Fourth, while the PEMAT-AV is a credible tool for rating the video content, the binary nature (yes/no) of the rating scale may not have captured the degree to which each element of the understandability and actionability was met. Fifth, cultural context and appropriateness were not considered. Future studies should examine the relationship among cultural appropriateness, understandability, and actionability. Sixth, YouTube users may choose the videos based on their interest rather than the popularity of the videos. Hence, it would be interesting to study the content of more relevant YouTube videos based on specific topics (e.g. diagnosis, management, anti-vaccination), rather than their popularity. Seventh, potential search terms were generated from a panel of speech-language pathologists, parents, and early childhood teachers. However, the composition of the panel may have influenced the search terms used. Finally, the background of the consumer who uploaded the video may have contributed to the degree of understandability and actionability. For example, a consumer with a limited language background may contribute to low understandability. That said, the video may include good information regarding some aspect of S/LDs (e.g. illustration of symptom, example of intervention strategy).

Conclusions

This study found that the YouTube videos directed to families of children with S/LDs originated from varying sources. Results indicated that, overwhelmingly, the content covers information about the signs and symptoms as well as treatment with less emphasis on diagnosis. The low actionability and somewhat low understandability ratings indicate that there is room for much improvement in these areas. Professionals and members of healthcare organizations need to create additional high-quality resources and inform families of children with S/LDs about reliable and valid online resources. Finally, healthcare professionals need to take an active role in evaluation of online materials so that high-quality information can be disseminated.

Footnotes

Authors’ Note

Shriya Shashikanth is also affiliated with the Department of Speech and Hearing Sciences, Lamar University, USA.

Contributorship

MBH and VM researched literature and conceived the study. SS was involved in data collection and analysis. VM completed all statistical analysis. MBH wrote the first draft of the manuscript. All authors reviewed and edited the manuscript and approved the final version of the manuscript.

Conflict of interest

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical approval

The study was deemed exempt from review by the Lamar University IRB since all information was publicly available.

Guarantor

MBH.

Peer review

This manuscript was reviewed by reviewers; the authors have elected for these individuals to remain anonymous.