Abstract

Objective

The primary objective of this study was to assess the potential of artificial intelligence techniques, in conjunction with transthoracic echocardiography (TEE) examinations, to forecast postoperative mortality outcomes in patients undergoing moderate-to-high-risk noncardiac surgeries.

Methods

This is a secondary retrospective analysis of data from the BioStudies public database, which includes patients from two medical centers. We partitioned the dataset into training and test groups at a 7:3 ratio. The models evaluated encompassed both machine learning and deep learning algorithms: logistic regression, gradient boosting decision tree (GBDT), XGBoost, LightGBM, CatBoost, linear support vector classification, multilayer perceptron classifier, Gaussian naive Bayes, AdaBoost, recurrent neural network, convolutional neural network, Bayesian neural network, and probabilistic Bayesian neural network. To evaluate model performance, we used multiple metrics, including the receiver operating characteristic curve, accuracy, precision, F1 score, recall, calibration curve, and clinical decision curve.

Results

The present study included a total of 1453 patients. Ranked by variable importance with the GBDT algorithm, the top five variables were creatinine (Cr), creatinine exceeding twice the upper limit (Cr > 2), creatinine clearance, left ventricular end-diastolic internal diameter, and hemoglobin. Among the algorithms, only GBDT yielded satisfactory results in both the training and test groups. In the training group, GBDT had an area under the curve (AUC) of 0.904, accuracy of 0.984, and precision of 1; in the test group, GBDT had an AUC of 0.835, accuracy of 0.984, and precision of 0.5. However, the GBDT algorithm performed suboptimally in terms of recall and F1 score. Finally, we developed an online prediction webpage that uses the GBDT algorithm and TEE data.

Conclusions

Gbdt represents an optimal approach for predicting postoperative mortality among patients undergoing non-cardiac surgery with moderate-to-high risk.

Introduction

As a non-invasive modality for cardiac assessment, transthoracic echocardiography (TEE) has assumed a pivotal role in the evaluation of cardiac patients. 1 Because TEE allows simultaneous evaluation of cardiac structure and function, it has proven invaluable in cases with uncertain cardiac pathology. 2 Anesthesiologists are increasingly integrating TEE into their perioperative management. 3 In recent years, advances in TEE examination methods have improved its clinical applicability and diagnostic precision. 4 As a frequently used cardiac imaging modality, TEE has a prominent position in medical research, in the formulation of diagnostic and therapeutic approaches, and in clinical management, and is therefore widely used in perioperative cardiac evaluation. 5 Menon et al. reported that TEE facilitates the identification of potential risk factors in hospitalized patients and prompted changes in the medical management of 90.5% of these individuals. 6 Another prospective study demonstrated that the use of TEE in high-risk noncardiac surgical patients could give anesthesiologists additional information and thereby alter hemodynamic management during anesthesia. 7

In recent years, artificial intelligence (AI) technology has been widely applied in the field of healthcare, capable of effectively predicting postoperative complications, postoperative outcomes, and bone metastasis, among other conditions.8–10 Moreover, AI technology has powerful capabilities in terms of data processing and pattern recognition. It also exhibits a distinctive advantage in handling voluminous datasets and intricate interrelationships, thereby enabling the extraction of valuable insight, the identification of potential correlations and trends, and the construction of precise predictive models. 11 Furthermore, the inherent learning and optimization abilities of AI empower predictive models to perpetually improve their performance. By means of continual data input and iterative learning, predictive models can achieve real-time updates, elevating their predictive accuracy. 12

However, there is still a lack of information on how AI methods can be applied to transthoracic echocardiography (TEE) parameters to predict postoperative outcomes in patients undergoing moderate- to high-risk non-cardiac surgical procedures. Furthermore, there is a paucity of research on the use of AI methods to predict postoperative mortality in patients undergoing high-risk non-cardiac surgery. In light of this gap, the primary objective of this study was to assess the potential of AI techniques, in conjunction with TEE examinations, to predict postoperative mortality in patients undergoing moderate- to high-risk non-cardiac surgery.

Methodology

Patient information

This retrospective study encompassed a cohort of 1453 patients from two medical centers who had undergone abdominal or orthopedic surgical procedures. Raw data can be obtained from the BioStudies public database (https://www.ebi.ac.uk/biostudies/europepmc/studies/S-EPMC6483349). All patients underwent TEE within the 3 months before surgery. Using the modified Lee Index 13 (Supplementary Table 1) as a reference, the research team evaluated the following predictive factors: diabetes mellitus, blood creatinine (Cr) level ≥2 mg/dL, heart failure, history of cerebrovascular accident or transient ischemic attack, intra-abdominal or suprainguinal vascular surgery, and ischemic heart disease. Inclusion criteria were a modified Lee index greater than 0, age over 18 years, and moderate-to-high-risk abdominal or orthopedic surgery. The patients' TEE findings were recorded, and cases with inadequate image quality were excluded. Measured parameters included left ventricular ejection fraction (LVEF), left ventricular mass index (LVMi), and left atrial volume index (LAVi). Mitral inflow was evaluated by the early (E) and late (A) peak velocities and the E/A ratio. Pulsed tissue Doppler imaging was used to obtain peak systolic velocities (s′) and early diastolic velocities (e′) at the mitral annuli. The ratio of early diastolic transmitral flow velocity (E) to early diastolic mitral annular velocity (e′) (E/e′) was calculated from the mean of the septal and lateral e′ values (mean E/e′ = E/[(e′ septal + e′ lateral)/2]). An LVEF of less than 40% was defined as a categorical variable. All enrolled patients were followed for 56 days after surgery, and the primary endpoint was all-cause mortality.
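The mean E/e′ calculation described above can be written out as a short function. This is a minimal sketch, assuming the two annular sites are the septal and lateral walls (the usual convention); the function name and the example velocities are hypothetical and are not taken from the dataset.

```python
def mean_e_over_e_prime(e_velocity, e_prime_septal, e_prime_lateral):
    """Return E divided by the mean of the septal and lateral e' velocities."""
    mean_e_prime = (e_prime_septal + e_prime_lateral) / 2.0
    if mean_e_prime <= 0:
        raise ValueError("e' velocities must be positive")
    return e_velocity / mean_e_prime


# Hypothetical velocities in cm/s, chosen only for illustration.
ratio = mean_e_over_e_prime(80.0, 7.0, 9.0)
print(ratio)  # 10.0
```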

AI algorithms

In this study, the predictive models were constructed in Python. The algorithms encompassed both machine learning and deep learning methods: logistic regression, GBDT (gradient boosting decision tree), XGB (XGBoost), LGBM (LightGBM), CatBoost, Linear SVC (linear support vector classification), MLPC (multilayer perceptron classifier), gnb (Gaussian naive Bayes), adab (AdaBoost), RNN (recurrent neural network), CNN (convolutional neural network), BNN (Bayesian neural network), and PBNN (probabilistic Bayesian neural network). Using this range of algorithms was intended to improve the processing and analysis of complex medical data and, in turn, the accuracy and precision of our predictions.

During data preprocessing, we standardized the variables to a common scale so that differences in scale would not influence the model outcomes. Missing values were imputed with SimpleImputer (from the scikit-learn library), improving both the completeness of the data and the robustness of the models.
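As an illustration of this preprocessing step, the sketch below chains imputation and standardization with scikit-learn. The median imputation strategy, the toy matrix, and the variable names are assumptions made for the example; the study does not state which imputation strategy was used.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Toy feature matrix with missing entries (hypothetical values).
X = np.array([
    [1.0, 50.0],
    [np.nan, 60.0],
    [3.0, np.nan],
    [5.0, 70.0],
])

# Impute missing values first, then rescale each column to zero mean and unit variance.
# strategy="median" is an assumption; SimpleImputer defaults to the mean.
preprocess = make_pipeline(SimpleImputer(strategy="median"), StandardScaler())
X_clean = preprocess.fit_transform(X)
```

In practice the pipeline would be fitted on the training group only and then applied to the test group, so that no information from the test data leaks into the scaling or imputation values.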

In our study, we partitioned the dataset utilizing a 7:3 ratio, allocating 70% of the data for the training group and reserving the remaining 30% for the test group. This division scheme was adopted to ensure the model's reliability and generalizability. Next, employing AI algorithms, we leveraged the vast amount of data in the training group to construct a highly accurate prediction model through the process of learning and training. To assess the model's performance, we used a 5-fold cross-validation technique. This entailed dividing the training dataset into five subsets, of which four subsets were utilized for model training, while the remaining subset served as a validation set. By conducting multiple cross-validations, we were able to comprehensively evaluate the model's performance and gauge its robustness.
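The split and cross-validation procedure can be sketched as follows. This is not the study's code: the data are synthetic, and the stratified split, the fixed random seeds, and the use of AUC as the cross-validation score are assumptions added so that a rare outcome appears in every fold.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split

# Synthetic, imbalanced stand-in for the patient data (about 5% positives).
X, y = make_classification(
    n_samples=1000, n_features=10, weights=[0.95, 0.05], random_state=0
)

# 7:3 split into training and test groups; stratify keeps the event rate similar in both.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# 5-fold cross-validation inside the training group only.
model = GradientBoostingClassifier(random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
cv_auc = cross_val_score(model, X_train, y_train, cv=cv, scoring="roc_auc")
```

The test group is left untouched until a final model has been chosen, which is what allows it to serve as an estimate of generalizability.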

To further analyze and optimize the model, we employed the Gbdt algorithm to analyze and assign weights to each variable utilized in the model construction. This facilitated the identification of variables that exerted the greatest influence on the prediction outcomes, thereby guiding us in the feature selection and optimization procedures. Additionally, we conducted Pearson correlation analysis and LIME (Local Interpretable Model–Agnostic Explanations) to explore the relationships among the variables, enabling us to gain insight into the interactions and relative importance of the variables in the model.
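A minimal sketch of these two analyses is shown below, using scikit-learn's impurity-based importances and a Pearson correlation matrix. The three feature names and the synthetic outcome are hypothetical and are constructed so that the first feature drives the outcome; LIME is omitted because it requires a separate package.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier

rng = np.random.default_rng(0)
n = 500
feature_names = ["creatinine", "hemoglobin", "noise"]  # hypothetical columns
X = np.column_stack([
    rng.normal(1.0, 0.3, n),
    rng.normal(13.0, 1.5, n),
    rng.normal(0.0, 1.0, n),
])
# Synthetic outcome that depends almost entirely on the first column.
y = (X[:, 0] + rng.normal(0.0, 0.1, n) > 1.3).astype(int)

# Fit a gradient boosting model and rank features by importance (importances sum to 1).
model = GradientBoostingClassifier(random_state=0).fit(X, y)
ranking = sorted(
    zip(feature_names, model.feature_importances_),
    key=lambda item: item[1],
    reverse=True,
)

# Pearson correlation between every pair of features (columns are variables).
corr = np.corrcoef(X, rowvar=False)
```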

To thoroughly evaluate the model's performance, we employed multiple metrics, including the receiver operating characteristic curve, accuracy, precision, F1 score, recall, calibration curve, and clinical decision curve. These metrics could provide a comprehensive assessment of the model's classification accuracy and performance.
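To make the threshold-dependent metrics and the AUC concrete, the sketch below computes them with scikit-learn on ten hypothetical predictions. The labels, probabilities, and the 0.5 cut-off are invented for illustration and do not come from the study.

```python
from sklearn.metrics import (
    accuracy_score,
    f1_score,
    precision_score,
    recall_score,
    roc_auc_score,
)

# Hypothetical labels (1 = death) and predicted probabilities.
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
y_prob = [0.05, 0.10, 0.20, 0.15, 0.30, 0.40, 0.25, 0.60, 0.35, 0.80]

# Accuracy, precision, recall, and F1 need hard labels, so a cut-off is applied first.
y_pred = [int(p >= 0.5) for p in y_prob]

accuracy = accuracy_score(y_true, y_pred)    # 0.8
precision = precision_score(y_true, y_pred)  # 0.5
recall = recall_score(y_true, y_pred)        # 0.5
f1 = f1_score(y_true, y_pred)                # 0.5

# AUC is computed from the probabilities and does not depend on the cut-off.
auc = roc_auc_score(y_true, y_prob)          # 0.875
```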

To address data imbalance in this study, we took several measures. We adjusted the classifier's decision threshold and applied regularization. From an algorithm perspective, we selected algorithms that are relatively insensitive to data skew, such as tree models. From an evaluation perspective, we monitored and adjusted values such as area under the curve (AUC), accuracy, and precision. We also used 5-fold cross-validation and other methods, including self-encoding algorithm adjustments.
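One way to adjust a classifier's decision threshold for imbalanced data is sketched below. The criterion used here (maximizing F1 over the observed probabilities) and the helper names are assumptions; the study does not specify how its threshold was set.

```python
import numpy as np


def f1_at_threshold(y_true, y_prob, threshold):
    """F1 score when probabilities at or above `threshold` are labelled positive."""
    y_true = np.asarray(y_true)
    pred = np.asarray(y_prob) >= threshold
    tp = np.sum(pred & (y_true == 1))
    fp = np.sum(pred & (y_true == 0))
    fn = np.sum(~pred & (y_true == 1))
    denom = 2 * tp + fp + fn
    return 0.0 if denom == 0 else float(2 * tp / denom)


def best_threshold(y_true, y_prob):
    """Try each observed probability as a cut-off and keep the one with the highest F1."""
    candidates = np.unique(y_prob)
    scores = [f1_at_threshold(y_true, y_prob, t) for t in candidates]
    best = int(np.argmax(scores))
    return float(candidates[best]), scores[best]


# Hypothetical validation-set labels and probabilities.
y_true = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1]
y_prob = [0.02, 0.05, 0.08, 0.10, 0.12, 0.20, 0.45, 0.15, 0.30, 0.70]

best_t, best_f1 = best_threshold(y_true, y_prob)
default_f1 = f1_at_threshold(y_true, y_prob, 0.5)
print(best_t, best_f1, default_f1)  # 0.15 0.75 0.5
```

The cut-off should be chosen on validation folds rather than on the test group; otherwise the reported test performance becomes optimistic.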

General statistical methods

In the data analysis phase, we used R for general statistical analysis. Count data were presented as percentages. For numerical variables, a normality test was conducted first. If all groups satisfied normality, the mean (standard deviation) was used for descriptive statistics and t-tests were used for intergroup comparisons. Otherwise, the median (interquartile range) was used for descriptive statistics and nonparametric tests were used for intergroup comparisons.
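The decision rule for numerical variables can be sketched as below. The study performed this step in R; the example uses Python with SciPy for consistency with the other sketches, and the choice of the Shapiro-Wilk test, the Mann-Whitney U test, and the 0.05 cut-off are assumptions, since the specific tests are not named.

```python
import numpy as np
from scipy import stats


def compare_groups(a, b, alpha=0.05):
    """Use a t-test if both groups pass Shapiro-Wilk, otherwise Mann-Whitney U."""
    normal = all(stats.shapiro(group).pvalue > alpha for group in (a, b))
    if normal:
        return "t-test", stats.ttest_ind(a, b).pvalue
    return "Mann-Whitney U", stats.mannwhitneyu(a, b, alternative="two-sided").pvalue


# Hypothetical, strongly skewed samples, so the nonparametric branch is taken.
rng = np.random.default_rng(0)
skewed_a = rng.exponential(1.0, 200)
skewed_b = rng.exponential(1.5, 200)
test_name, p_value = compare_groups(skewed_a, skewed_b)
```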

Results

The present study included a total of 1453 patients who had undergone moderate-to-high-risk noncardiac surgery. A total of 24 patients died. In this dataset, the completeness of the IVSd (interventricular septal thickness) index is 92.36%, and the completeness of the EDV (end-diastolic volume) index is 97.52%. See Supplementary Figure 1 for the confusion matrix of the Gbdt model.

No statistically significant differences were observed in terms of age or BMI between the groups of patients who had experienced postoperative death and those who had not, in either the training or the test groups (Table 1).

Clinical information and prognostic information of patients undergoing intermediate- and high-risk non-cardiac surgery.

BMI: body mass index; CAD: coronary artery disease; CCr: clearance of creatinine; CHF: heart failure; CKD: chronic kidney disease; EDV: end-diastolic volume; LEE: Lee's revised cardiac risk index; LVEF: Left ventricular ejection fraction; LVMi: left ventricular mass index; LAVi: left atrial volume index; LVIDs: left ventricular systolic diameter; LVIDd: left ventricular diastolic diameter; IVSd: interventricular septal thickness.

In the correlation analysis of clinical variables associated with postoperative mortality, the three most negatively correlated variable pairs were EF and LVIDs (−0.809), ESV and EF (−0.753), and creatinine clearance (CCr) and age (−0.678); the three most positively correlated pairs were ESV and LVIDs (0.914), E′ mean and E′ lateral (0.937), and EDV and left ventricular end-diastolic internal diameter (LVIDd) (0.965). Chronic kidney disease (CKD) and Lee's revised cardiac risk index were positively associated with postoperative mortality; CCr and hemoglobin (Hb) were negatively associated with postoperative mortality (Figure 1). The SHapley Additive exPlanations (SHAP) dependence plot also shows a negative correlation between LVIDd and Cr (see Supplementary Figure 2).

Thermogram of the results of correlation between various clinical information of patients undergoing moderate- and high-risk non-cardiac surgery.

Age and surgery duration exhibited a positive correlation with postoperative pulmonary complications, while serum albumin demonstrated a negative correlation with such complications. Using the GBDT algorithm, a weighted feature engineering approach revealed that Cr, Cr > 2, CCr, LVIDd, and Hb were the top five factors influencing postoperative mortality outcomes (Figure 2).

Presenting the weights of various clinical variables on the outcome of mortality based on the gradient boosting decision tree (GBDT) algorithm.

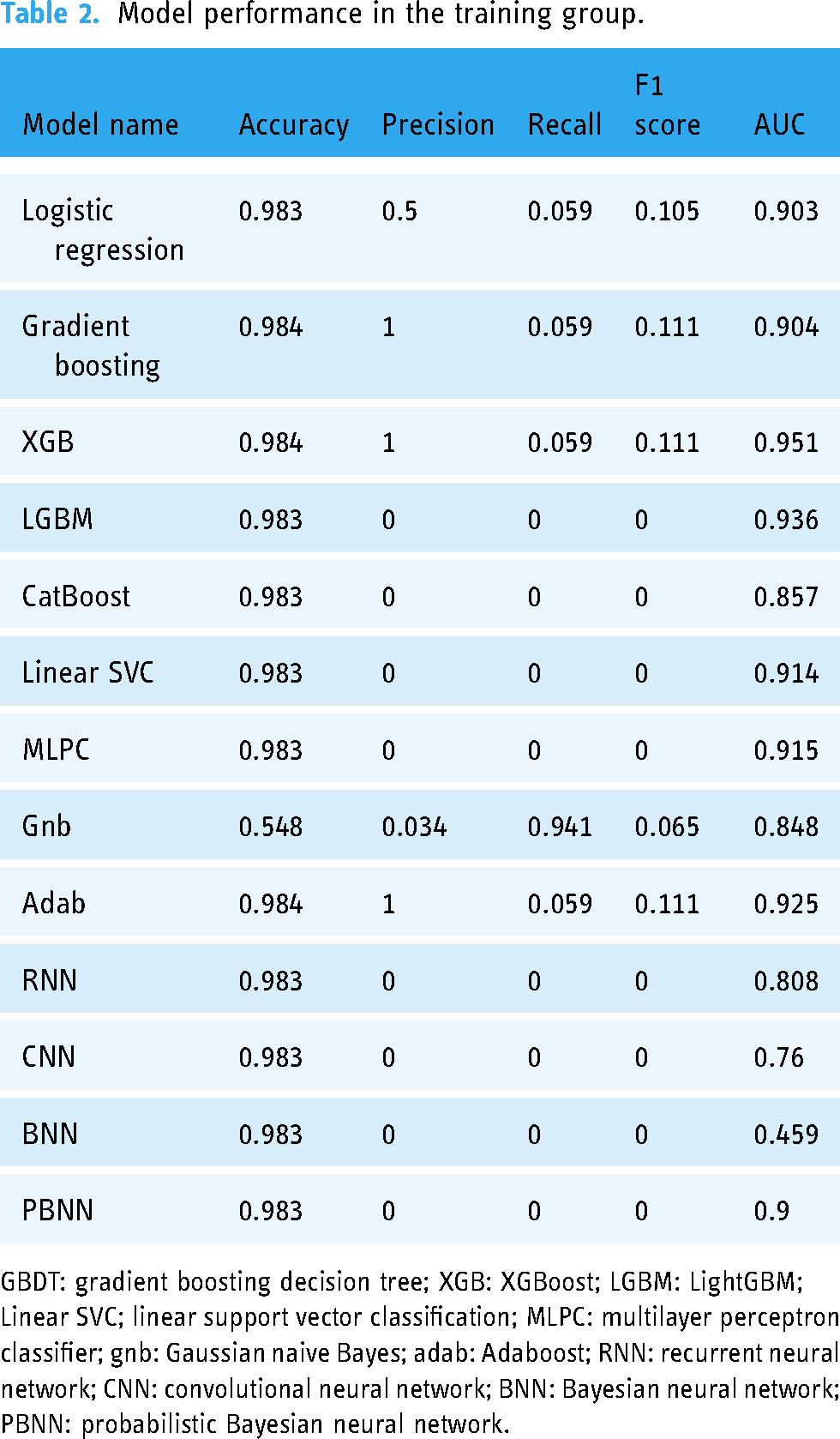

Regarding AI algorithms utilized for predicting postoperative mortality within the training group, the highest performing algorithms were: gradient boosting (0.984), XGB (0.984), and adab (0.984) in terms of predictive accuracy; gradient boosting (1), XGB (1), and adab (1) in terms of precision; gnb (0.941) in terms of recall; gradient boosting (0.111), XGB (0.111), and adab (0.111) in terms of F1 score. Additionally, for AUC, XGB, LGBM, adab, MLPC, linear SVC, gradient boosting, logistic regression, and PBNN exhibited AUC values surpassing 0.9 (Figure 3 and Table 2).

Receiver operating characteristic curves of the different algorithms in the training group. GBDT: gradient boosting decision tree; XGB: XGBoost; LGBM: LightGBM; Linear SVC: linear support vector classification; MLPC: multilayer perceptron classifier; gnb: Gaussian naive Bayes; adab: Adaboost; RNN: recurrent neural network; CNN: convolutional neural network; BNN: Bayesian neural network; PBNN: probabilistic Bayesian neural network.

Model performance in the training group.

GBDT: gradient boosting decision tree; XGB: XGBoost; LGBM: LightGBM; Linear SVC: linear support vector classification; MLPC: multilayer perceptron classifier; gnb: Gaussian naive Bayes; adab: Adaboost; RNN: recurrent neural network; CNN: convolutional neural network; BNN: Bayesian neural network; PBNN: probabilistic Bayesian neural network.

In the case of the test group, the AI algorithms exhibited the following prediction results: In terms of accuracy, all algorithms, except for the gnb algorithm, achieved values greater than 0.970. Regarding precision, the two most proficient algorithms were Gradient Boosting (0.5) and gnb (0.023). As for recall, the two highest performing algorithms were Gradient Boosting (0.143) and gnb (0.714). In terms of the F1 score, the two highest-ranking algorithms were Gradient Boosting (0.222) and gnb (0.045). Finally, with regard to the AUC, the Gradient Boosting algorithm performed best, achieving a value of 0.835 (Figure 4 and Table 3).
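The reported test-group F1 scores follow directly from the reported precision and recall, and the arithmetic also shows why accuracy alone says little here. The sketch below recomputes F1 from the rounded values in the text and, for comparison, the accuracy of a trivial rule that predicts survival for every patient, using the overall counts given in the Results (24 deaths among 1453 patients, across both groups rather than the test group alone). The function name is hypothetical.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall (0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Rounded test-group values as reported in the text.
gbdt_f1 = f1_from_precision_recall(0.5, 0.143)
gnb_f1 = f1_from_precision_recall(0.023, 0.714)

# Accuracy of always predicting "no death" on the whole cohort.
trivial_accuracy = (1453 - 24) / 1453

print(round(gbdt_f1, 3), round(gnb_f1, 3), round(trivial_accuracy, 3))
# 0.222 0.045 0.983
```

Because deaths are rare, an accuracy near 0.98 is reachable without identifying a single death, so recall, precision, and F1 are the more informative figures for this outcome.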

Receiver operating characteristic curves of the different algorithms in the test group. GBDT: gradient boosting decision tree; XGB: XGBoost; LGBM: LightGBM; Linear SVC: linear support vector classification; MLPC: multilayer perceptron classifier; gnb: Gaussian naive Bayes; adab: Adaboost; RNN: recurrent neural network; CNN: convolutional neural network; BNN: Bayesian neural network; PBNN: probabilistic Bayesian neural network.

Model performance in the testing group.

GBDT: gradient boosting decision tree; XGB: XGBoost; LGBM: LightGBM; Linear SVC: linear support vector classification; MLPC: multilayer perceptron classifier; gnb: Gaussian naive Bayes; adab: Adaboost; RNN: recurrent neural network; CNN: convolutional neural network; BNN: Bayesian neural network; PBNN: probabilistic Bayesian neural network.

Compared to other algorithms, it was found that only Gbdt can achieve better results in both the training group and the test group. At the same time, the calibration curve of the Gbdt model is not very close to the reference line, indicating that there is a certain distance between the predicted probabilities and the actual probabilities (see Supplementary Figure 3). Moreover, the clinical benefit population range can be observed in Supplementary Figure 4.
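A calibration curve compares binned predicted probabilities with the observed event rate in each bin. The sketch below uses scikit-learn's `calibration_curve` on synthetic data that are deliberately miscalibrated (the true event rate is set to half the predicted probability), so the curve sits away from the reference line; none of the numbers come from the study.

```python
import numpy as np
from sklearn.calibration import calibration_curve

rng = np.random.default_rng(0)
n = 2000

# Synthetic predicted probabilities and outcomes; events occur at half the predicted rate.
y_prob = rng.uniform(0.0, 1.0, n)
y_true = (rng.uniform(0.0, 1.0, n) < 0.5 * y_prob).astype(int)

# Observed event fraction and mean predicted probability in each of five bins.
frac_pos, mean_pred = calibration_curve(y_true, y_prob, n_bins=5)

# Average vertical distance from the diagonal reference line.
gap = float(np.abs(frac_pos - mean_pred).mean())
```

A well-calibrated model would give a gap close to zero; a larger gap corresponds to the distance between predicted and actual probabilities described above.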

LIME is an interpretability method for black-box machine learning models, aiming to provide local interpretability for the predictions. In the figure, the red feature has a negative impact on the prediction result, and the light green feature has a positive impact. The feature importance ranking is shown in the Supplementary Figure 5.

Finally, we launched an online prediction webpage that uses the GBDT algorithm and integrates TEE data (https://zhouchengmao-streamlit-app-2-g-st-app-gdbt-aiwtptpopuncs-i6hi0 l.streamlit.app/). The underlying model was built using all the variables in this study, and the webpage uses it to compute a predicted outcome.

Discussion

TEE holds considerable significance in the evaluation of cardiac functional status prior to anesthesia surgery. By utilizing an ultrasound detector's high-frequency oscillations, it provides a clear visualization of cardiac motion, blood flow, and alterations in the cardiac wall. This enables physicians to accurately appraise a patient's cardiac functional status and identify any potential cardiac complications beforehand. The findings from TEE empower physicians to make more informed decisions regarding anesthesia procedures, and to adopt appropriate precautions to mitigate surgical risks, promoting the safety and success of surgery. Our findings indicate that Gradient Boosting demonstrates the best performance and stability in predicting mortality outcomes following non-cardiac surgery with moderate-to-high risk when AI algorithms are implemented in conjunction with TEE. Gradient Boosting, an advanced machine learning algorithm, iteratively trains a series of weak learners, harnessing their collective strength to construct a robust predictive model. This algorithm possesses enhanced capabilities in capturing potential risk factors and complex associations pertinent to the prediction of mortality outcomes following non-cardiac surgery. This method offers several notable advantages. Firstly, it efficiently handles high-dimensional and intricate medical data, while adeptly accommodating nonlinear relationships and patterns. Secondly, it automates feature selection and parameter tuning, thereby improving prediction accuracy and the model's generalizability. Moreover, Gradient Boosting exhibits robustness and interpretability, facilitating its comprehension and acceptance by both medical practitioners and clinical researchers within the medical domain.

Furthermore, the GBDT algorithm's weighted feature engineering highlighted several key factors that influence mortality outcomes following noncardiac surgery. These factors include Cr, creatinine exceeding twice the upper limit (Cr > 2), CCr, LVIDd, and Hb.

Multiple studies have underscored the significance of Cr fluctuations in predicting the risk of mortality following surgery. The rate at which Cr levels decrease holds predictive value in assessing long-term outcomes in living donor kidney transplantation, postoperatively. 14 Conversely, even mild elevations in postoperative Cr levels are associated with heightened mortality rates and prolonged hospital stays. 15 Furthermore, alterations in plasma cystatin C and peak Cr levels exhibit a strong correlation with the risk of death following cardiac surgery. 16

Several other studies have investigated additional factors that are correlated with the risk of postoperative mortality. For instance, an integer-type composite risk score based on the International Normalized Ratio, bilirubin, Cr, and complications graded on postoperative day 3 has demonstrated accurate prediction of mortality at the 90-day mark following hepatic resection. 17 Moreover, there is a correlation between even minor fluctuations in plasma Cr levels and the risk of death within 30 days following open surgery. 18

Cardiac metrics also play a pivotal role in predicting the risk of postoperative mortality. For instance, following the implantation of a left ventricular assist device (LVAD), a larger LVIDd is associated with reduced survival rates. 19 Furthermore, elevation of the LVMi signifies the presence of left ventricular hypertrophy and has been linked to an increased risk of death in patients with chronic obstructive pulmonary disease. 20 Additionally, the left ventricular diameter holds predictive value in risk stratification for sudden cardiac death. 21

Additionally, preoperative anemia and subsequent reduction in Hb concentrations following surgery have been linked to the risk of cardiovascular events and postoperative mortality.22,23 Studies have also reported that higher postoperative Hb levels are associated with reduced hospital stays and lower readmission rates, although they do not affect mortality rates or motor function indicators. 24

Nevertheless, certain limitations remain that warrant attention and further research. In this study, there was a wide disparity in the number of outcomes between the two groups, leading to imbalanced data. However, imbalanced data are common in the real world, and in such scenarios it is necessary to choose the most suitable algorithm. Furthermore, the number of outcome events in this study was small. Although the study focuses on rare events, the paucity of outcomes still challenges the stability of the model, and the constrained sample size introduces inherent uncertainty in the model's interpretive and predictive capabilities. XGB and other algorithms have been effective in predicting patient outcomes in multiple studies, 25 but in this study, the precision and recall of XGB in the test group were both 0. Only the GBDT algorithm achieved good results in both the training group and the test group. Parameter and tuning differences between GBDT and XGB that could lead to performance differences include learning rate, number of estimators, maximum depth, minimum leaf size, and regularization. Additionally, the process of converting echocardiography results from textual reports to a structured format is of great importance. 26 The use of alternative algorithms can extract more effective features, which may also improve model performance. Moreover, this study is limited to an analysis of existing database data. Consequently, to assess and forecast the probability of the target events more accurately, future studies should prioritize expanding the sample size, which will yield more reliable and consistent findings and enhance the model's validity and generalizability.

While the combination of AI algorithms and TEE has demonstrated promising performance in predicting mortality outcomes following noncardiac surgery, further validation is needed to establish their correlation with actual clinical outcomes. The absence of large-scale multicenter studies introduces the potential for variations in both datasets and sample sizes. In turn, this may impact the models' performance. Therefore, it is imperative to conduct additional prospective, multicenter studies with larger sample sizes to accurately evaluate and validate the efficacy of implementing these models in clinical practice. Additionally, AI algorithms combined with TEE still face challenges in terms of technology diffusion and operational standardization. The implementation of this method requires trained operators, and there are no uniform operational guidelines or standardized procedures. 27 Therefore, in order to widely promote and apply this method in clinical practice, standardized operation guidelines and training systems are needed. 28

The current findings have several clinical implications. The AI prognosis model based on echocardiography (TEE) data provides a novel method for predicting the postoperative results of patients undergoing non-cardiac surgery. First, through personalized risk assessment, the model can help doctors formulate individualized treatment plans for each patient's specific situation, so as to optimize postoperative results. Second, accurate prognosis evaluation may promote treatment compliance, since patients and their families may participate more actively in treatment and rehabilitation plans after learning their individualized postoperative risks. Third, the prognosis model may help promote related clinical research, evaluate the benefits and costs of different treatment schemes, and provide new strategies for postoperative management. Fourth, because the AI model can be deployed and accessed remotely, it can provide postoperative risk assessment services for patients in remote areas and thus help bridge regional differences. Finally, this prognosis model could be used for patients' long-term health monitoring: by continuously collecting and analyzing data, potential risk factors can be discovered and timely interventions facilitated, which may improve patients' quality of life and prolong their survival. It is worth noting that although the prospects of this study are encouraging, the model's accuracy and safety still need further verification and testing before it can gain widespread adoption.

The key point to be emphasized is that in different clinical scenarios, machine learning algorithms may be unstable in predicting the postoperative outcomes of non-cardiac surgery patients. Specifically, which algorithm is more effective depends on the specific dataset and problem at hand. The reasons are as follows: (1) Data Features: Different machine learning algorithms have different sensitivities to data features. Some algorithms may be more suitable for processing specific types of data. (2) Model Complexity: The complexity of machine learning algorithms varies. Some algorithms may be more suitable for handling simple and clear problems, while other algorithms may be more suitable for dealing with more complex and variable problems. (3) Subgroups of Patients and Special Diseases: For example, dividing patients into elderly and young people, men and women, and whether they have asthma, atrial fibrillation, heart failure, or other serious diseases. In this case, it may lead to exploring the effectiveness of other machine learning algorithms. Overall, due to various factors such as data quality, problem complexity, model adaptability, and patient subgroups, there is no fixed machine learning algorithm that can best combine TEE to predict the postoperative outcomes of all non-cardiac surgery patients in all situations. Therefore, it is necessary to select the most suitable algorithm based on the specific circumstances.

Conclusions

Based on our findings, we conclude that Gradient Boosting represents an optimal approach for predicting postoperative mortality outcomes among patients undergoing non-cardiac surgery with moderate-to-high risk. This model is clinically significant for several reasons, including personalizing medical care, improving treatment compliance, promoting clinical research and assisting in decision support, telemedicine, and long-term health monitoring. However, further verification and research are needed to determine the accuracy, reliability, and practical applications of the model. Future work should include verification set expansion, model optimization and improvement, external verification and practical evaluation, clinical implementation and treatment guidance, as well as follow-up and results evaluation, so as to further promote research and application in this field. Nevertheless, the preliminary results of this study provide a new direction and hope for postoperative management of patients and should facilitate the optimization of patients’ postoperative prognosis and quality of life.

Supplemental Material

sj-doc-1-dhj-10.1177_20552076241261921 - Supplemental material for An AI-based prognostic model for postoperative outcomes in non-cardiac surgical patients utilizing TEE: A conceptual study by Yu Zhu, Renrui Liang and Cheng-Mao Zhou in DIGITAL HEALTH

sj-doc-2-dhj-10.1177_20552076241261921 - Supplemental material for An AI-based prognostic model for postoperative outcomes in non-cardiac surgical patients utilizing TEE: A conceptual study by Yu Zhu, Renrui Liang and Cheng-Mao Zhou in DIGITAL HEALTH

sj-doc-3-dhj-10.1177_20552076241261921 - Supplemental material for An AI-based prognostic model for postoperative outcomes in non-cardiac surgical patients utilizing TEE: A conceptual study by Yu Zhu, Renrui Liang and Cheng-Mao Zhou in DIGITAL HEALTH

sj-doc-4-dhj-10.1177_20552076241261921 - Supplemental material for An AI-based prognostic model for postoperative outcomes in non-cardiac surgical patients utilizing TEE: A conceptual study by Yu Zhu, Renrui Liang and Cheng-Mao Zhou in DIGITAL HEALTH

sj-doc-5-dhj-10.1177_20552076241261921 - Supplemental material for An AI-based prognostic model for postoperative outcomes in non-cardiac surgical patients utilizing TEE: A conceptual study by Yu Zhu, Renrui Liang and Cheng-Mao Zhou in DIGITAL HEALTH

sj-doc-6-dhj-10.1177_20552076241261921 - Supplemental material for An AI-based prognostic model for postoperative outcomes in non-cardiac surgical patients utilizing TEE: A conceptual study by Yu Zhu, Renrui Liang and Cheng-Mao Zhou in DIGITAL HEALTH

sj-doc-7-dhj-10.1177_20552076241261921 - Supplemental material for An AI-based prognostic model for postoperative outcomes in non-cardiac surgical patients utilizing TEE: A conceptual study by Yu Zhu, Renrui Liang and Cheng-Mao Zhou in DIGITAL HEALTH

Footnotes

Acknowledgments

None.

Contributorship

CMZ, RL, and YZ wrote the main manuscript text. RL and YZ prepared figures. CMZ, RL, and YZ reviewed the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

Article 32 of the Chinese National Health Education Commission’s Document No. 2023/4 relieves the need for ethical review and informed consent for secondary data analysis on public databases.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Guarantor

Not applicable.

Availability of data and materials

Raw data can be obtained from the BioStudies public database (https://www.ebi.ac.uk/biostudies/europepmc/studies/S-EPMC6483349)29. Some parameter codes of machine learning can be found in ![]() .


Supplemental material

Supplemental material for this article is available online.

References
