Abstract

Object

This review evaluates the use of smartphone-based voice data for predicting and monitoring depression.

Methods

A scoping review was conducted, examining 14 studies from Medline, Scopus, and Web of Science (2010–2023) on voice data collection methods and the use of voice features for minitoring depression.

Results

Voice data, especially prosodic features like fundamental frequency and pitch, show promise for predicting depression, though their sole predictive power requires further validation. Integrating voice with multimodal sensor data has been shown to improve accuracy significantly.

Conclusion

Smartphone-based voice monitoring offers a promising, noninvasive, and cost-effective approach to depression management. The integration of machine learning with sensor data could significantly enhance mental health monitoring, necessitating further research and longitudinal studies for validation.

Introduction

Depression is a common mental disorder affecting approximately 3.8% of the global population, including 5% of adults (4% of males and 6% of females), according to the World Health Organization. 1 Individuals with depression experience persistent feelings of sadness and a loss of interest or pleasure in their activities. 2 If left untreated, depression can lead to functional impairment affecting various aspects of life, including social relationships, family, work, and school. Early and objective identification of and intervention for depression can help mitigate its negative impact on individuals’ overall well-being and prevent adverse effects on communities and societies.

Current mental health assessment methods rely primarily on self-reporting based on individual retrospective memory and clinical interviews. However, these methods often lead to delayed intervention, as depressive symptoms may reach a clinically significant level before therapeutic approaches are initiated, thereby hindering early intervention. Moreover, relying on retrospective memory can introduce bias, and the ecological validity of such assessments is limited.3,4 Furthermore, among individuals undergoing treatment, there is little follow-up after sessions for psychotherapy and medication therapy. 5 As a result, patients are not monitored in a way that could help detect symptom exacerbation or prevent relapse.

Recent advancements in digital technology, such as smartphones and smartwatches, have led to increased utilization of sensor-data-based monitoring approaches in the field of mental health. By integrating the functionalities of sensors embedded in smartphones, objective behavioral markers related to individual activities and functioning can be extracted and collected ecologically. Studies conducted thus far have demonstrated associations between depressive symptoms and levels of individual mobility, activity, and social interaction features extracted through sensors such as GPS, accelerometers, message logs, and phone call logs. These features significantly predict depressive symptoms as behavioral markers.3,6–8

Recently, voice features have emerged as key characteristics for distinguishing and diagnosing mental health disorders. Several studies have shown differences in linguistic patterns between individuals with and without depression, highlighting the potential of utilizing smartphones’ built-in microphones as sensors to collect speech and voice patterns and investigate their relationship with depression. 9

Previous reviews, such as those conducted by Flanagan et al. 10 and Or et al., 11 primarily focused on the voice characteristics of patients with bipolar disorder and predicted bipolar symptoms based on these characteristics. Flanagan et al. 10 broadened their focus to mood disorders and found compelling evidence demonstrating the high potential of using smartphone voice data to monitor and detect mood disorders in real time. However, among the 13 studies included in the review by Flanagan et al., 10 only one targeted depression. Therefore, there is a lack of review studies that consolidate information on the use of voice features to predict depression.

This literature review focuses on studies exploring the association between voice features and depression using smartphones. Specifically, the objectives of this study are to: (1) summarize the characteristics of studies using voice data to diagnose, monitor, or understand the relevance of depression using smartphone devices and (2) identify specific methods of voice data collection and extracted variables to provide objective indicators of the utility and validity of voice feature information for predicting depression.

Methods

Design

Search strategies

A scoping review is a methodology used to explore the major concepts, materials, and evidence within a specific research field and to identify gaps in research, allowing for a comprehensive overview of a broader scope of studies. 12 Our research topic is a rapidly developing area. Thus, a scoping review is appropriate to identify the key research topics and unexplored areas within this field. Furthermore, adherence to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines 13 ensures the quality of the literature review and enhances the transparency and reproducibility of the research.

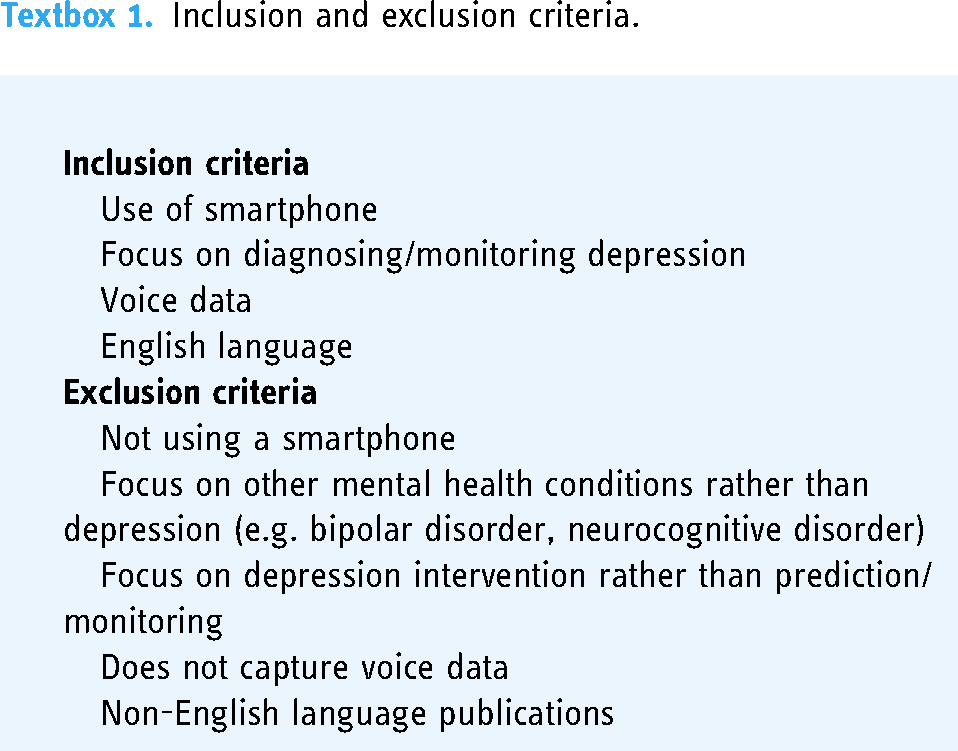

The search strategies employed Medline, Scopus, and Web of Science to identify studies on depression and smartphone-based voice features, published between 2010 and October 18, 2023. The search was limited to academic sources, excluding grey literature and nonacademic databases to ensure the integrity of the search. Keywords including “depression,” “depressive disorder,” “smartphone,” “mobile,” “voice,” “audio,” “speech,” “monitor,” and “predict” were used. These keywords were expanded using the Boolean operator “OR” and the wildcard “*” where needed, and were combined with “AND” for a comprehensive and targeted search. This approach captured all relevant studies, enhancing the thoroughness and relevance of the review. The search was confined to studies published in English. Textbox 1 describes the inclusion and exclusion criteria.

Inclusion and exclusion criteria.

Search outcomes

The study selection process is described using the PRISMA flowchart (Figure 1). After removing duplicates, decisions were made regarding inclusion or exclusion from the review. The lead author (JS) performed the screening of all titles and abstracts for 648 articles. Following a review of the titles, abstracts, and keywords of the articles, 43 studies underwent the first round of screening. Two reviewers (JS and SMB) independently engaged in the review process, retrieving and reading the full texts of 43 studies selected for in-depth review. Additionally, relevant studies that were not identified through the database searches were manually reviewed, and papers within the systematic literature review that were identified during the search process were selected to extract relevant studies.

Flow diagram for screening and inclusion of relevant articles.

Data extraction and analysis

The data was summarized by JS and entered into an Excel spreadsheet format. To ensure the accuracy of the process, the information entered was verified by the reviewer, SMB, and no significant discrepancies or errors were found. The content of the study was systematically categorized into general characteristics (year, design, sample size, study aim), methods (duration, type of data collected, audio data processing techniques), and results (assessment tools, key findings). This categorization allowed for a detailed description of the characteristics of the studies included in the review and prepared the groundwork for comprehensive analysis. A narrative synthesis method was employed to examine and analyze the characteristics of each study, explore the methods used to analyze voice features, and evaluate the predictive efficacy of these voice features for predicting depression.

Results

Characteristics of included studies

Of the initially identified 664 studies, 13 were included in the literature review. One study was added based on a suitability judgment during the literature review process. Most excluded studies were not included because they did not involve the use of smartphone devices or did not focus on monitoring or predicting depression. The publication years of the included studies were targeted at the past 10 years to consider recent advancements in the field. Finally, the studies from 2015 to 2022 were included. According to the Global System for Mobile Communications, the worldwide smartphone penetration rate is expected to exceed 70% in 2020, indicating a gradual increase in smartphone use. This rapid expansion is reflected in the growing number of smartphone sensor-based research studies within the last decade. Furthermore, high-quality microphones are now equipped in all smartphones, making it easier to collect voice data. 14

General information (year published, sample size, diagnosis), study aim, data capture methods, study duration, clinical evaluations, voice data captured, and the key findings of each study are summarized in Table 1.

Results of included studies.

Characteristics of data capture

Length of voice data capture

The duration of voice data capture ranged from two weeks7,15 to 24 months. 16

Methods of data capture

The methods for capturing voice data can be broadly classified into three categories: First, manual capture involves activating the microphone at regular intervals (e.g. every 2 or 5 min)6,7 to record ambient sounds or automatically record voice data during phone calls.16–18

Second, the method involves recording predetermined speech, which includes tasks such as counting simple text and number sequences (e.g. counting from 1 to 40) and reading emotionally neutral or stimulating passages.15,19 In large-scale datasets collected by Sonde Health, both predetermined and spontaneous speech were included.20–22

Third, capturing intentional (nonpassive) spontaneous speech involves recording audio while responding to prompts. Studies employing this method required participants to speak for at least 10 s about the presented images (three positive images, three negative images, and seven neutral images) as a response to prompts. 23 Additionally, in studies in which participants were asked to provide audio samples of fixed lengths on a weekly basis, they primarily recorded audio diaries about their mental health status24,25 or engaged in conversations via applications on topics related to health, work, and life. 26

In summary, among the 14 studies, five employed manual capture methods, two utilized predetermined speech, and four involved spontaneous speech. Additionally, three studies used a combination of predetermined and spontaneous speech datasets.

Timing of data capture

Data can be categorized into studies that use voice data as markers to track symptoms in clinical populations and studies that use voice data as markers to monitor depressive symptoms before clinical symptom manifestation. Among the studies targeting clinical populations, one explored the response to medication in individuals diagnosed with major depressive disorder, 23 and another tracked symptoms in individuals diagnosed with depression and posttraumatic stress disorder. 25

In studies monitoring depressive symptoms, participants included perinatal women at risk of depressive symptoms, 15 adolescents and young adults diagnosed with cancer, 26 and cohorts including individuals experiencing exam stress or unemployment. 19

Studies have targeted both college students and general adults,6,7,16–22 as well as a clinical population consisting of general adults and individuals with major depressive disorder, bipolar disorder, anxiety disorders, and psychiatric patients simultaneously. 24

Voice data captured

Numerous studies have utilized prosodic features of speech. Prosodic features, such as pitch, speed, timing, and tone, have been successfully used in previous studies to distinguish between patients with and without depression. These features are typically extracted using open-source signal processing and feature extraction tools such as openSMILE and Praat. Extracting multiple features from speech data allows for their quantification and machine learning, providing insights into how accurately speech features can be used to monitor and predict depressive symptoms.15,17–19,21,25

Studies utilizing prosodic features have pointed out a limitation where the analysis treats all frames equally by dividing speech into fixed frames (10 to 20 ms), potentially including frames with relatively less information. Two studies utilized large data from Sonde Health to count sudden changes in articulation as speech landmark events, classifying depressed speakers.20,22

Some studies have conducted semantic analysis of speech using automatic speech recognition software (Google Speech-to-Text). One study analyzed the participants’ words based on linguistic and psychological dimensions using Linguistic Inquiry and Word Count (Version 2015; Text Analysis Portal for Research, University of Alberta) software to assess the relevance of the detected theme words to depressive symptoms. 7 In more advanced research, both acoustic analysis of prosodic features and semantic analysis have been integrated. However, this study did not provide statistical results, including the predictive power for depressive symptoms. 26

There were also studies estimating speaking time in everyday life 6 or calculating the proportion of silence in spoken sentences to elucidate the relationship with depressive symptoms.16,23

Clinical outcome measurement and key findings

Clinical outcome measurement

Most studies used self-report measures such as the Patient Health Questionnaire (PHQ),6,7,20–22,26 Montgomery–Åsberg Depression Rating Scale (SIGMA-MADRS), 23 Beck Depression Inventory,16,24 Hospital Anxiety and Depression Scale (HADS), 15 and Hamilton Depression Rating Scale (HAMD) 19 to measure depressive symptoms. Some studies have categorized emotions into two dimensions, valence and arousal, and presented correlations using the Depression Scale (HADS). 17 Studies have measured only some aspects of depressive symptoms 25 and those with no specific information on measuring depression. 18

Relationship between voice data and depression

Studies investigating the relationship between prosodic features of speech and depression as well as the predictive capacity of these features for depressive symptoms have yielded intriguing results. In Braun et al.'s study,

19

fluctuations in F0 amplitude were construed as indicators of speech “richness.” A narrow F0 distribution suggests a lack of emotion and empathy, whereas a wider distribution indicates liveliness and thoughtfulness. They reported a close correlation, observed in 65–75% of cases, between HAMD-17 scores and speech sound characteristics, with a coefficient of

Furthermore, it has been suggested that the predictive power for depression in deep learning analysis improves when a sufficient length of speech data is provided, particularly when utilizing vocal tract coordination. 21 Additionally, in studies using Sonde Health (SH2) data collected via smartphones, landmark-based performance using abrupt changes in articulation as speech landmark events demonstrated performance with F1 scores of 0.77 in SH2 data's free speech and 0.64 in SH2 data, presenting a new set of speech features for depression prediction, in addition to existing prosodic features.20,22

Research investigating the relationship between depression and speech characteristics such as speech rate or the proportion of silence during speech has observed reduced depressive symptoms associated with increased speech activity, particularly in relation to antidepressant medication intake.

23

Moreover, the findings suggested a significant association (

Studies assessing participants’ vitality levels based on speech rate have consistently observed lower vitality values in patients with depression than in healthy individuals, with vitality values showing a wider dispersion among healthy individuals. Additionally, females exhibited lower vitality and mental activity than males, which is consistent with the tendency of females to have higher rates of depression. 16

Although there are studies that provide protocols for extracting voice data to predict and monitor depressive symptoms, these studies primarily focus on introducing extraction methods without presenting statistical results regarding the relationship with depression.15,26 Similarly, some studies have evaluated the feasibility of smartphone apps and participant compliance but lacked statistical results regarding their relationship with depression.18,24

Discussion

Principal findings

This review synthesizes the existing research on the use of smartphone-based voice data for predicting and monitoring depression. Collecting voice data through smartphones, which are widely used by the population, has high accessibility and the potential for real-time monitoring and prediction of individual depression.

Previous studies that reviewed the scope of smartphone voice data use for mood disorders primarily included research on bipolar disorder, resulting in limited findings on the utility of voice data for depression. 10 This scoping review included 14 studies selected from searches of major databases, such as Medline, Scopus, and Web of Science, focusing on relevant studies that utilized smartphone voice data to explore its association with depression.

Each study varied in duration (e.g. two weeks to 24 months) and method of capturing voice data (e.g. manual capture, predefined text speech, and spontaneous speech). Manual capture methods involve users activating smartphone microphones to record everyday speech or phone calls, which poses potential privacy concerns. While some studies have suggested that reading fixed texts may be more advantageous for depression prediction, 27 recent research indicates the potential applicability of spontaneous speech, such as free conversations or interviews, for more autonomous and ecological monitoring of mood disorders. 10

Several prosodic features extracted from voice data are associated with depression. Studies on prosodic features revealed that fundamental frequency (F0), representing the vocal fold vibration rate, was significantly correlated with depressive state, 19 and combinations of pitch information demonstrated predictive power for depression. 25 Moreover, studies also explored combining multiple prosodic features 17 or utilizing sudden changes in articulation as speech landmarks to predict depression.20,22 Combining prosodic features to create new data representations appears more promising and valid than monitoring depression using a single dimension of vocal features because individuals naturally exhibit various changes and characteristics during speech. Therefore, it is difficult to claim that a single-level unimodal prosodic feature provides sufficient information as a clinical indicator of the broad clinical state of depression; a multilevel approach using combinations of voice data is necessary.

Some studies have examined the association between depressive symptoms and fluctuations in speech volume 6 and the ratio of silence during speech,16,23 as well as studies extracting semantic information from speech to explore the relationship between the frequency of specific words and depressive symptoms. 7

However, there are currently insufficient accumulated research results to conclude promising voice features for predicting depression using voice data. While some studies included in our review developed applications and proposed methods for collecting voice data to monitor depression, they did not provide statistical metrics regarding the relationship between depression and the predictive power of voice data. These studies introduced methods for collecting voice data for depression monitoring and explicitly evaluated user engagement and compliance with applications.15,18,24,26 This suggests that depression monitoring and detection using voice data are still in the early stages. Once these applications are implemented, data are collected, and results reporting the predictive power for depression accumulate, allowing more integrated conclusions to be drawn about which voice data features are superior for predicting depression.

Furthermore, several studies included in this research not only collected voice data but also handled various sensors (e.g. GPS and accelerometers) together.6,15,18,23–25 However, most studies have primarily examined the predictive capabilities of individual sensor data for depression in isolation, without integrating diverse sensor inputs.6,23,25 In contrast, Hong's 28 study adopted a multimodal approach, combining activity data from location tracking, sleep patterns, and facial expression markers to predict depression. The findings revealed a significant enhancement in predictive accuracy through multimodal data analysis compared to single data analysis. While Osmani's 29 study wasn't specifically focused on depression, it investigated behavior changes in patients with bipolar disorder to see if the onset of bipolar episodes could be predicted through sensor data. The research found that combining different sensor modalities, including call sound analysis, accelerometer data, and location data, resulted in the highest accuracy (97.4%) in predicting the start of bipolar episodes. 29 Consequently, there is a pressing need for further research to explore multimodal methodologies that integrate voice data with other sensor inputs to improve depression prediction.

Implications for practice

The results of this review suggest that smartphone-based voice monitoring has a significant potential for evaluating and managing depression. When data generated by such monitoring systems are provided to users and clinicians in a timely manner, they allow immediate assessment and intervention, moving away from retrospective self-reporting and clinical interviews. Additionally, because it does not require face-to-face interaction, it offers advantages in terms of cost reduction and accessibility owing to the widespread use of smartphones.

However, although smartphone-based voice monitoring provides a certain level of statistical validity, it remains challenging to conclude whether voice data alone provide sufficient predictive power for mood disorders. Therefore, rather than using smartphone-based voice data as the sole method for clinical management, it can serve as an additional tool for clinicians to detect relapse and remission in patients. 10

The emerging body of research underscores the potential of smartphones as tools for detecting and monitoring mental health conditions. By integrating a variety of smartphone-based sensor data, including but not limited to voice data, a more comprehensive approach to mental health monitoring may be achieved.3,8

Advanced machine learning techniques play a pivotal role in this integration, offering not only the means to predict mood disorders but also the ability to identify which sensor data are most valuable for the assessment and management of such disorders. 30 Particularly, the application of machine learning in conjunction with digital sensor data has shown promise in predicting mood states among individuals with mood disorders, like major depressive disorder and bipolar disorder. This integration extends to wearable, environmental, and smartphone-based passive sensors, highlighting the significance of digital markers such as location data, conversation frequency, and physical activity in understanding mood disorders. 31

The convergence of digital technology's rapid advancement with the capabilities of machine learning algorithms heralds a new era in psychiatry and mood disorder management. By harnessing these technologies, there's a substantial opportunity for groundbreaking improvements in how mental health conditions are detected, monitored, and treated.

To further understand the link between voice data and depression, it's crucial to develop technologies that can more accurately classify voice features related to depressive symptoms. 32 Additionally, longitudinal studies that track the effectiveness of voice-based monitoring over time are necessary. 33 This approach can lead to more consistent outcomes in identifying depression's relevance and predictability through voice data.

Limitations

This study has some limitations. First, while conducting a review of studies examining the relationship between voice data and depression using a systematic paper identification method, it is important to note that relevant research is still in its early stages, with ongoing development and pilot smartphone applications, including voice data.15,26 Therefore, new publications might have been overlooked. Additionally, owing to the evolving nature of this field, varying terminology and a lack of conceptual consensus among researchers may have resulted in the omission of relevant papers owing to limitations in the search methods.

Second, the ethical and acceptability aspects of accessing and extracting voice data from smartphones were not reviewed. User resistance to the extraction and use of voice data can affect the practical implementation of such technologies. While some studies 19 have mentioned privacy protection measures such as avoiding collection by timestamps for privacy concerns, discussions on handling voice data are limited in most studies.

Bauer et al. 32 noted that while leveraging technology to analyze behavioral data obtained from tracking people's digital activities in mental health treatment presents a new direction for healthcare, it also poses challenges to patient privacy and security. There is a need for discussion on data handling and privacy protection within studies, and future reviews should incorporate agreed-upon methods of data collection when utilizing voice data for research purposes.

In particular, the practical implementation of voice data collection carries several privacy and ethical concerns that must be seriously considered to maximize the potential benefits and minimize risks. Slavich et al. 34 outlined requirements for minimizing risks, including: (a) clearly informing users about which devices transmit and evaluate voice data and the potential risks involved; (b) enabling users to easily activate or deactivate the listening function of devices as they wish; (c) allowing users to physically control the listening function of devices; (d) ensuring users can control who accesses their data and how it is used; (e) permitting users to utilize devices within their personal environments; and (f) providing users with the option to opt-out of voice recording or analysis.

Future research exploring the use of voice data and applications within smartphones would benefit from incorporating data handling protocols aligned with the recommendations outlined by Slavich et al. 34 This will ensure that the development and deployment of such technologies are in alignment with ethical standards and privacy considerations, thereby safeguarding user data while maximizing the utility and safety of voice data applications. It's important for researchers and developers to consider these guidelines carefully when designing their studies or applications to ensure they adhere to best practices for data privacy and security.

Conclusions

This study aims to comprehensively review the current status of research utilizing smartphone-based voice data to monitor and diagnose depression. This comprehensive review aimed to activate research that utilizes voice data as a behavioral marker for depression and influences mental health service providers to identify and respond to patients at risk of depression early. Globally, several research teams are developing smartphone-based tools for diagnosing and monitoring a wide range of mental disorders, 8 and the utilization of voice data among them could be a promising extension of existing digital phenotypic data for mental disorders.

Footnotes

Author contributions

Shin designed the study, analyzed the data, wrote the first draft of the manuscript, and edited the final version of the paper. Bae contributed to the design of the study, supervised the study, and edited the final version of the paper. All authors have read and agreed to the published version of the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Ministry of Education of the Republic of Korea and the National Research Foundation of Korea (Grant No. NRF-2022S1A5A2A03050428).

Institutional Review Board Statement

The study was approved by the Institutional Review Board of Dankook University (IRB No. 2024-001).