Abstract

Objective

Mental health self-report and clinician-rating scales with diagnoses defined by sum-score cut-offs are often used for depression screening. This study investigates whether machine learning (ML) can detect major depressive episodes (MDE) based on screening scales with higher accuracy than best-practice clinical sum-score approaches.

Methods

Primary data was obtained from two RCTs on the treatment of depression. Ground truth were DSM 5 MDE diagnoses based on structured clinical interviews (SCID) and PHQ-9 self-report, clinician-rated QIDS-16, and HAM-D-17 were predictors. ML models were trained using 10-fold cross-validation. Performance was compared against best-practice sum-score cut-offs. Primary outcome was the Area Under the Curve (AUC) of the Receiver Operating Characteristic curve. DeLong's test with bootstrapping was used to test for differences in AUC. Secondary outcomes were balanced accuracy, precision, recall, F1-score, and number needed to diagnose (NND).

Results

A total of k = 1030 diagnoses (no diagnosis: k = 775; MDE: k = 255) were included. ML models achieved an AUCQIDS-16 = 0.94, AUCHAM-D-17 = 0.88, and AUCPHQ-9 = 0.83 in the testing set. ML AUC was significantly higher than sum-score cut-offs for QIDS-16 and PHQ-9 (

Conclusions

ML augmented depression screenings could potentially make a major contribution to improving MDE diagnosis depending on questionnaire (e.g., QIDS-16). Confirmatory studies are needed before ML enhanced screening can be implemented into routine care practice.

Introduction

In mental health care, an accurate, psychometrically sound assessment, and diagnosis in a timely manner is the key to treatment and optimized health care pathways.1–5 To achieve this goal, different assessment and diagnosis approaches exist ranging from structured clinical interviews (e.g. SCID) 6 to self-report instruments (e.g. nine-item Patient Health Questionnaire (PHQ-9))7,8 and clinician rating scales (e.g. 16-item version of the Quick Inventory of Depressive Symptomology (QIDS-16)).4,9 Given the economic pressure of many health care systems,10–12 time-consuming diagnostic assessments like SCID seem difficult which may contribute to the issue of under-recognition.13–16 A feasible time-saving alternative to SCID is the use of self-report or clinician-rated screening instruments.1,15,16

For such screening instruments, traditionally, a cut-off value (e.g. for the sum scores of all items of the instrument) is determined by balancing the sensitivity and specificity (e.g. optimal Youden Index in a Receiver Operating Characteristic (ROC) curve).7,17,18 An individual participant data analysis with

In recent years, machine learning (ML) has been applied in many areas accompanied by a paradigm shift away from a priori human-defined solutions for problems like prediction tasks towards algorithmic solutions by ML methods automatically determining parameters to reach an optimal solution.21,22 In short, such ML algorithms try to find an optimal function to map the observed data and features to an output, while bias (=prediction error) and variance (=performance difference between known data—training data, and unknown data—testing data) are balanced.22–24 To achieve this, various ML models (e.g. random forest, neural nets) and their hyperparameters (e.g. learning rate) are usually tested and tuned. Doing so, ML has been shown to achieve high accuracy in many prediction tasks21,22 and may also offer a way towards optimized diagnostic procedures.

Given its high prevalence2,25 and impact on global health,26–29 ML-based diagnosis has been studied in particular for depression. However, while studies based neuroimaging, 30 microRNA, 31 RNA, 32 peripheral blood markers, 33 and other biomarkers 34 show promising results in some cases, the necessary data for such biomarkers is often obtained with resource and cost-expensive methods. Hence, the development of ML prediction models based on data, which is easily and cost-efficiently obtainable, may offer a more promising way to improve diagnosis. As such, frequently available screening instruments like self-report questionnaires or clinician-rated instruments could be used in ML classifiers. However, currently, it is unclear to which extent their performance can be optimized by ML compared to existing best practice clinical cut-offs.

Therefore, the present study evaluates the potential of ML classifiers to detect a diagnosis of a major depressive episode (MDE) based on the items of commonly used screening instruments and evaluates their performance compared to established best-practice clinical sum-score approaches in which a diagnosis is given if a score threshold is exceeded. Given the impact of the chosen threshold on the accuracy (e.g. lower thresholds increase sensitivity and decrease specificity), both performance metrics across the spectrum of all possible thresholds and performance metrics for a single best-practice threshold (i.e. optimal balance between sensitivity and specificity) need be addressed. Accordingly, the following research questions will be answered:

Primary research question: Is there a significant difference between the Area Under the Curve (AUC) of the ROC across all possible class prediction thresholds in ML models and sum-score thresholds of the questionnaires? Secondary research question: Is there a difference in the balanced accuracy, precision, recall, F1-score, and number needed to diagnose (NND) between ML models and sum-score approaches, if, respectively, the best threshold is applied (e.g. best class probability threshold compared to best-practice sum-score cut-off)?

Methods

Study design and sample

The present study is a secondary analysis of the large-scale pragmatic, observer-blinded randomized controlled trials WARD-BP (

All procedures have been approved by the ethics committee of the Albert-Ludwigs-University of Freiburg, Germany, and the data security committee of the German Pension Insurance. All participants provided written informed consent for participation in the studies. The studies were registered in the German Register for Clinical Trials (DRKS: DRKS00009272, registered on 14 September 2015; DRKS00007960, registered on 12 August 2015). Further details on the studies are provided in their study protocols and main result publications.35–38 The present study followed the STARD 39 and TRIPOD 40 reporting guidelines (see checklists in supplement 1).

Measures and outcomes

Analysis

We trained a range of candidate models to find the best mapping from the included features (e.g. questionnaire items) to the binary outcome of MDE status. Candidate models varied in: (a) model specifications (e.g. random forest, logistic regression); (b) feature sets (e.g. original items, extended features, demographic variables); (c) dimension reduction steps (e.g. principal component analysis); and (d) data imbalance handling (e.g. down sampling). Please see below for a detailed description. A stepwise combination from (a)–(d) resulted in a total of 261 candidate models for each questionnaire. The preprocessing and analysis code are published in the open science framework (https://osf.io/3hnvz/).

The ROC AUC of the best ML models after training was compared in the testing set against the AUC of varying sum scores for each of the PHQ-9, HAM-D-17, and QIDS-16 instruments according to their respective scoring procedures.7,9,42 DeLong's test for two correlated ROC with

Firstly, all three questionnaire have established clinical cut-offs.51,52 However, there is an ongoing debate on the best sum score cut-offs for the instruments.17,53,54 For the present secondary analysis, we have chosen the following cut-offs PHQ-9 ≥ 10,7,19 HAM-D-17 ≥ 8, 54 and QIDS-16 ≥ 6. 9

Secondly, since the optimal cut-offs for PHQ-9, HAM-D-17 and QIDS-16 might be different in the present sample, we additionally determined the secondary outcome metrics for best-sample-specific cut-offs for the questionnaires in the training sample. Cut-offs were determined using the Youden Index.18,55

Thirdly, we calculated the optimal ML prediction threshold for the best ML candidate in the training set using Youden Index18,55 and calculated the secondary outcomes in the testing set based on this threshold.

Software

All analyses were conducted in R. 56 The tidymodels framework was used for ML training and analysis. 46 For detailed session information for all R packages and versions see online supplement 5. Analysis code is available at: https://osf.io/3hnvz/.

Results

The total number of

Included cases from WARD-BP and PROD-BP.

Primary outcome

Clinician-rated instruments

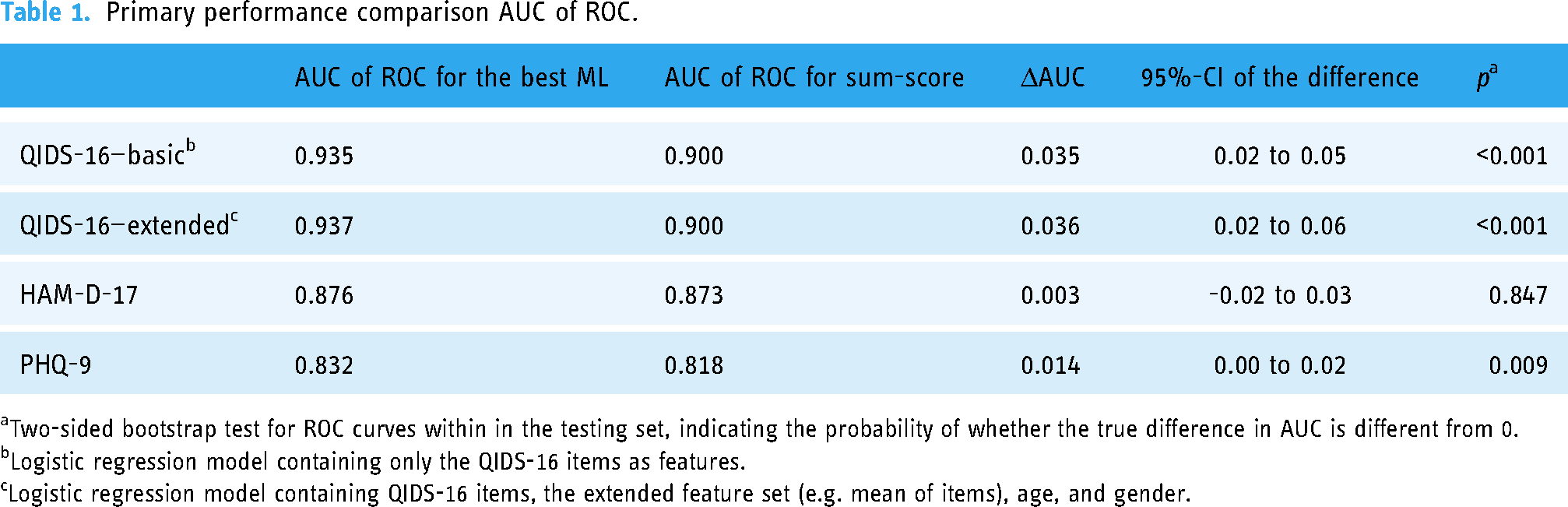

The trained QIDS-16 classifier with only the QIDS-16 items as predictors yielded an AUC = 0.935 in the testing set. In comparison, the AUC using the traditional QIDS-16 sum score achieved an AUC = 0.901. DeLong's test showed a significant difference between the AUC favoring the ML approach (ΔAUC = 0.04, 95%-CI: 0.02 to 0.05,

For the HAM-D-17, a random forest model with the original HAM-D-17 items achieved the best performance of all candidate models. While the ROC AUC of the HAM-D-17 ML model was also higher (AUC = 0.876) in the testing set compared to the sum-score approach (AUC = 0.873), the difference was not significant (ΔAUC = 0.00, 95%-CI: −0.02 to 0.03,

Self-report instrument:

For the PHQ-9, a Naïve Bayes model based on the PHQ-9 items and the extended features set significantly outperformed the traditional cut-off approach: AUC for the Naïve Bayes model was AUC = 0.83 compared to an AUC = 0.82 for sum scores (ΔAUC = 0.01, 95%-CI: 0.00 to 0.02,

See Table 1 for an overview of the primary outcome across all three questionnaires.

Primary performance comparison AUC of ROC.

Two-sided bootstrap test for ROC curves within in the testing set, indicating the probability of whether the true difference in AUC is different from 0.

Logistic regression model containing only the QIDS-16 items as features.

Logistic regression model containing QIDS-16 items, the extended feature set (e.g. mean of items), age, and gender.

Secondary outcomes

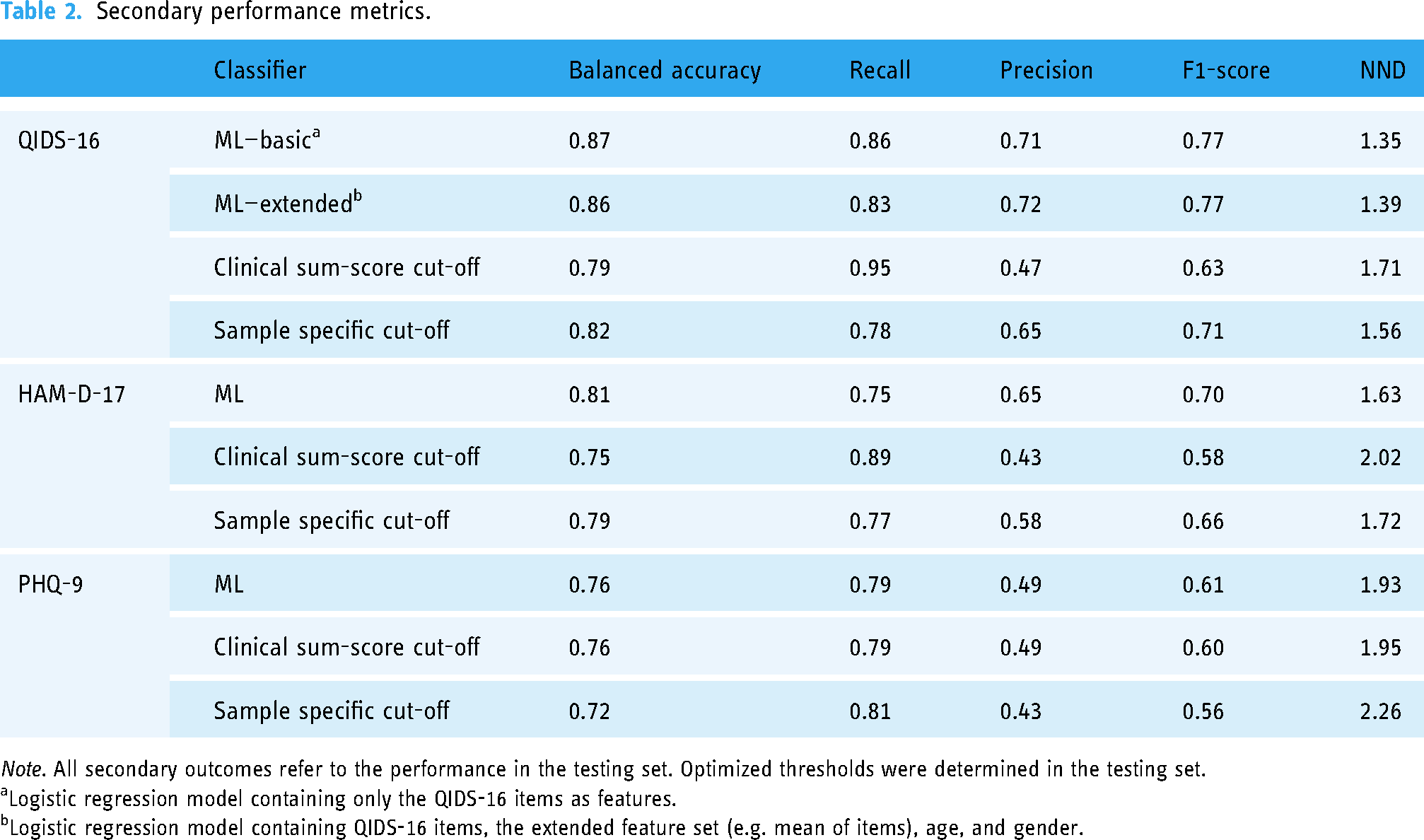

For further comparison the balanced accuracy, precision, recall, F1-score, and the NND were determined for the performance in the testing set following the rationale of best clinical thresholds, best sample-specific thresholds (QIDS-16 ≥ 9, HAM-D ≥ 11, PHQ-9 ≥ 9), and best class probability thresholds in the training set (QIDS-16: 0.28, HAM-D-17: 0.32, PHQ-9: 0.01).

Overall, the balanced accuracy achieved by the ML classifiers outperformed established clinical cut-offs. While precision or recall as a stand-alone metric favored established cut-offs in some cases, the harmonic mean of precision and recall (=F1-score) and balanced accuracy always favored the ML classifiers. Additionally, the NND favored the ML classifiers. For a summary of all secondary outcomes, see Table 2. For the calculation of additional performance metrics, the confusion matrix can be found in supplement 6.

Secondary performance metrics.

Logistic regression model containing only the QIDS-16 items as features.

Logistic regression model containing QIDS-16 items, the extended feature set (e.g. mean of items), age, and gender.

Discussion

The present study evaluated the potential of ML to enhance the performance of clinical assessment tools to detect MDE based on clinician-rated (QIDS-16, HAM-D-17) and self-report (PHQ-9) questionnaire data obtained in a pragmatic health care setting. The present results demonstrated that ML models like penalized logistic regression or naïve Bayes models can significantly increase the AUC of the ROC curve for the clinician-rated QIDS-16 (

A key issue in the field limiting ML in achieving high accuracy and potentially explaining the differences in the findings across the here evaluated questionnaires, is the noise and measurement error of the underlying questionnaires and items (e.g. caused by social desirability bias, recall bias, systematic and random measurement error bias, or confirmation bias).57–59 In particular, patient reported outcome measures are prone to some biases (e.g. social desirability) compared to instruments rated by trained clinicians (e.g. QIDS-16), which may contribute to the difference of improvement by ML based on the QIDS-16 and PHQ-9. However, eliminating some sources of bias by a clinician rating does not guarantee a reliable assessment. For instance, the reliability of the HAM-D-17 has been shown to be questionable, in particular for some items.44,60,61 Contributing to this, the HAM-D-17 is characterized by less precise wording regarding the frequency and duration of symptoms when compared against other instruments like the PHQ-9 or QIDS-16 (e.g. HAM-D-17 mood refers to if feeling states of sadness are reported without categorization of frequency or duration, compared to PHQ-9 and QIDS-16 specified responses whether sadness occurs “at all”, “several times”, “more than half the times”, “nearly all the time”).9,42 In addition, core symptoms of depression according to the DSM-5 6 or ICD-11 62 (i.e. hypersomnia or hyperphagia) are missing in the HAM-D-17 potentially further explaining an increase in noise and measurement error compared to PHQ-9 and QIDS-16.4,9,42 Applying more precise and highly reliable assessments in clinical practice like computer-adaptive tests may provide an opportunity to increase measurement quality of questionnaires and thereby potential of ML in future.63–66

Furthermore, the present study could be expanded by including other data sources like electronic health records, molecular biosignatures (e.g. epigenomics), environmental data (e.g. lifestyle), physiological data, and smart-sensing and digital phenotyping data providing unbiased objective behavioral data using sensors in our daily life from omnipresent smart devices (e.g. in smartphones, smart watches and other wearables), might be promising to further augment ML algorithms in their predictive accuracy and maximize the potential of complex data and ML.34,67–75 However, this goes hand in hand with higher effort and costs to collect such data. In particular, the QIDS-16 might be suited to provide ML-optimized MDE predictions even without such an extension. That said, for health care providers (e.g. physicians), who have access to various sources of health records, the combination of different sources in an ML augmented expert system assisting them in the diagnosis of mental (and other) disorders could be feasible and promising. 75

In future, the application ML in the screening of depression and providing an instant prediction of the depression status based on the questionnaire data alone (e.g. based on QIDS-16)—or further augmented by objective sensing data, health records data, or biological parameters—may offer a way towards an accurate, time-efficient and thus feasible depression screening leading to earlier diagnosis and treatment initiation in resource-limited mental health care.10,11,13,14 If proven to be effective, this could have a major impact on highly prevalent mental disorders like depression and public health.

However, some limitations of the present study must be considered and targeted in future studies before the implementation of ML-optimized depression screening in clinical settings. Most importantly, the present study is an exploratory secondary analysis. We used 10-fold cross-validation in the present study.76,77 Accordingly, the algorithms were trained on a subset of the data (exploratory) and the final performance of the trained models was evaluated on previously unknown data (confirmatory). While this procedure mirrors the logic of a confirmatory study to some extent, it cannot replace the necessity of confirmatory experimental studies eliminating other potential sources of bias and effects. Clinical randomized controlled trials (e.g. comparing ML-enhanced routine care screening of MDE against screening as usual) are of utmost importance before it comes to ML-augmented screening tools or expert systems in routine care settings. Also, it must be ensured that any ML-enhanced medical application derived is fair and unbiased.78–82 While the exploration of age and gender as additional features in the present analysis provided no meaningful improvements and is speaking for the generalizability, further evidence is needed that the benefit of ML in screening accuracy and performance holds across different gender, ages, ethnic and cultural populations. Speaking of generalizability, the setting of the included RCTs also needs to be considered. While the diagnoses and data of the present study were obtained in a pragmatic healthcare setting and the exploration of ML-enhanced screening procedures based on real-world healthcare data is a strong suit of this study, the healthcare setting at hand is also very specific: All participants were recruited in orthopedic rehabilitation centers.35–38 The replication of the present findings in different settings is highly needed. Nonetheless, by highlighting the potential of ML-enhanced screening tools for some questionnaires widely used in clinical practice (i.e. QIDS-16), the present study makes an important first step toward clinical trials and lays the foundation for future clinical implementation. Building on this, future studies could also move away from a binary classification (e.g. depression-free vs. MDE) towards a multi-classification approach to also support the differential diagnosis (e.g. distinguishing between various mental disorders with similar symptoms). However, it also needs to be highlighted, that the current nosology in clinical practice only insufficiently reflects the heterogeneous nature of mental disorder.83–85 For instance, over 1000 unique symptom profiles with a frequency of 1.8% of the most common profile have been reported in 3703 outpatients. 84 Furthermore, the poor reliability of diagnoses 86 and the high comorbidity as a potential indicator of unitary disorders being split up into various diagnoses 83 pose major limitations for research and practice. Investigating how ML can be applied in different systems (e.g. Hierarchical Taxonomy of Psychopathology 83 or the Extended Evolutionary Meta-Model87,88) to predict clinical relevant symptomology and inform treatment processes would be a very valuable addition to this study.

Conclusions

The present study evaluated the potential of ML to increase the performance of screening instruments for MDE. ML improved the performance of clinician-rated and self-report instruments (QIDS-16, PHQ-9). In particular, the optimization of the QIDS-16 indicated high clinical relevance. If proven to be effective in confirmatory studies, implementing ML-enhanced screening tools in clinical practice could significantly improve diagnostic procedures. Given that a timely and efficient diagnosis is key in healthcare, ML applications in mental health care may lay the foundation for optimized mental healthcare in future. However, further research how the potential of ML can be exploited (e.g. by including more data sources like socio-demographic, health records, biomarkers, and smart sensing data) and the implementation into routine care is highly needed.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076231194939 - Supplemental material for Optimizing the predictive power of depression screenings using machine learning

Supplemental material, sj-docx-1-dhj-10.1177_20552076231194939 for Optimizing the predictive power of depression screenings using machine learning by Yannik Terhorst, Lasse B Sander, David D Ebert and Harald Baumeister in DIGITAL HEALTH

Footnotes

Acknowledgement

Contributorship

Conceptualization: YT and HB; Methodology: YT; Software: YT; Validation: YT, HB; Formal Analysis: YT; Investigation: YT, HB, LBS, DDE; Resources: HB, DDE; Data Curation: YT, LBS, HB, DDE; Writing—Original Draft: YT; Writing—Review and Editing: YT, LBS, DDE, HB; Visualization: YT; Supervision: HB; Project Administration: YT, HB; Funding Acquisition: HB, DDE, see funding statement for details.

Ethical approval

The present study is a secondary analysis of the large-scale pragmatic, observer-blinded randomized controlled trials WARD-BP and PROD-BP.35–38 All procedures have been approved by the ethics committee of the Albert-Ludwigs-University of Freiburg, Germany, and the data security committee of the German Pension Insurance. All participants provided written informed consent for participation in the studies.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The present study was self-funded by the authors. The included RCTs providing the primary data were funded by the German Federal Ministry of Education and Research (grant number: 01GY1330A).35–38

Data availability statement

The primary data obtained from the RCTs can be provided by the principal investigator of these trials (HB) on reasonable request. Data-sharing agreements (including the researchers and institutions from the two RCTs) may have to be signed depending on the request. Data and analysis script are available under CC-By Attribution 4.0 international at ![]() . Requests regarding the analysis should be directed at the corresponding author (YT). Support from HB and the corresponding author is depending on available resources.

. Requests regarding the analysis should be directed at the corresponding author (YT). Support from HB and the corresponding author is depending on available resources.

Supplemental material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.