Abstract

Stories about ‘intelligent machines’ have long featured in popular culture. Existing research has mapped these artificial intelligence (AI) narratives but lacks an in-depth understanding of (a) narratives related specifically to weaponised AI and autonomous weapon systems and (b) whether and how these narratives resonate across different states and associated cultural contexts. We speak to these gaps by examining narratives about weaponised AI across publics in France, India, Japan and the US. Based on a public opinion survey conducted in these states in 2022–2023, we find that narratives found in English-language popular culture are shared cross-culturally, although with some variations. However, we also find culturally distinct narratives, particularly in India and Japan. Further, we assess whether these narratives shape the publics’ attitudes towards regulating weaponised AI. Although respondents demonstrate overall uncertainty and lack of knowledge regarding developments in the sphere of weaponised AI, they assess these technologies in a negative-leaning way and mostly support regulation. With these findings, our study offers a first step towards further investigating the extent to which weaponised AI narratives circulate globally and how salient perceptions of these technologies are across different publics.

Introduction

Interest in how artificial intelligence (AI) 1 is imagined in different narrative forms has grown significantly. AI narratives found across various popular culture products can shape what kind of benefits and risks publics associate with AI (Cave and Dihal, 2023c; Cave et al., 2020a). Most of the existing AI narratives literature has centred on categorising the diverse types of stories which have (and have not) been told about these technologies, especially in the context of the Anglosphere. Researchers interpret the narrative content of diverse works of popular culture to see if their frequently utopian or dystopian themes have influenced the views, discourse and actions of different stakeholder groups (Cave and Dihal, 2019, 2023c; Cave et al., 2018; Chubb et al., 2024; Dillon and Schaffer-Goddard, 2023; Hudson et al., 2023).

Our paper connects to this literature and identifies two knowledge gaps. First, narratives that specifically concern weaponised AI or autonomous weapon systems (AWS) 2 have not received much attention therein. By this we mean popular culture depictions of the real-world integration of autonomous and AI technologies into the targeting processes of weapon systems (Boulanin and Verbruggen, 2017). Increasing state investments into weaponised forms of AI underline that the military application of these technologies is not science fiction (Bode et al., 2023; Garcia, 2023). While these applications serve different purposes in various contexts, the use of AI in military targeting decisions could lead to extreme forms of harm, destruction and insecurity. Due to their political and societal significance, narratives of weaponised AI require their own dedicated analysis.

Second, whilst the AI narratives literature identifies what types of stories have been told about intelligent machines, there has been less empirical study of how AI narratives have been received and mediated. We currently know relatively little about whether (and if so, how) these AI narratives influence the perceptions of different publics. This connects to a larger research gap around understanding public opinion on weaponised AI/AWS. Studies generally focus on single publics, for example, those of the UK (Cave et al., 2019), the US (Horowitz, 2016; Rosendorf et al., 2022, 2024; Young and Carpenter, 2018; Zhang and Dafoe, 2019) or other Western states (Sartori and Bocca, 2023; Selwyn and Gallo Cordoba, 2022). Further, the literature does not cover these issues in a comparative perspective. It remains unclear both to what extent the currently most studied popular culture AI narratives are salient internationally and whether other AI narratives, originating in different states and associated cultural contexts, shape public thinking.

As a result, we lack an understanding of how AI narratives that circulate in popular culture can shape, destabilise and reproduce the public's views on the ‘appropriateness’ of developing and using weaponised AI (Bode and Huelss, 2022). Narratives about complex phenomena like ‘AI’ serve as a means of simplifying these into plots, characters and settings. Through this simplification process, they encapsulate and communicate certain ways of envisioning ‘AI’, including in the military domain, with the potential to reinforce or shift public, societal and stakeholder perceptions of what is ‘appropriate’ behaviour when using and developing these technologies. Exploring these narratives, and how they are perceived by different publics, therefore has important implications for both the research and policy debates around AI.

Our paper speaks to these gaps by presenting the results of a new questionnaire survey 3 that, to our knowledge, provides the first comparative analysis of the salience of popular weaponised AI narratives amongst the French, Indian, Japanese and US publics. 4 We present findings in answer to three research questions: (a) To what extent, if any, are depictions of weaponised AI derived from narratives catalogued by researchers Stephen Cave and Kanta Dihal in Anglophone popular culture relevant to the French, Indian, Japanese and US publics? (b) Are perceptions of weaponised AI across these four states shaped by alternative weaponised AI narratives, and if so, what are they? And (c), to what extent is there evidence of a link between narratives and publics’ attitudes towards regulating the weaponisation of AI technologies?

The remainder of the paper is structured as follows: first, we review the current literature on AI narratives in the context of broader sets of narratives concerning malicious uses of technology. We adapt the eight AI narratives catalogued by Cave and Dihal (2019) to focus specifically on depictions of and concerns about weaponised AI as our analytical framework. Second, we present methodological information about the questionnaire we conducted (via YouGov) with French, Indian, Japanese and US publics in December 2022–January 2023. Our study follows an interpretive research design and does not aim at generalisability or causality. We acknowledge that the selection of states is limited, and that specific views expressed in dominant narratives do not directly translate into specific understandings about regulation. Rather, we present initial insights about cross-cultural public perceptions of weaponised AI narratives that do show differences across the surveyed states, encouraging further research. Third, we outline the findings of the questionnaire surveys and fourth, discuss them. Finally, we offer a critical conclusion, inviting further research on global perceptions of weaponised AI.

Narratives about weaponised AI in the context of stories about malicious uses of technology

The stories told about military uses of intelligent machines long predate the development of the earliest category of AWS during the 1970s (Bode, 2023) and are embedded within a wider collection of imaginaries concerning malicious uses of technology. 5 Within the Anglosphere, novels such as Aldous Huxley's Brave New World and George Orwell's 1984, for instance, depicted the use of technology to sustain totalitarian social orders (Gruenwald, 2013). This genre of dystopian novels is often premised on a determinist understanding of technology as an ‘autonomous force’ that reshapes, seemingly independently, the values and structure of society itself (Beauchamp, 1986). The stories told about weaponised AI/AWS are embedded in and framed by these larger dystopian narratives. 6 From the mid-twentieth century onward, the dramatised depiction of AI capable of causing physical harm has emerged as a common Hollywood trope. These cinematic portrayals of malicious intelligent machines have generally fallen into one of three categories: (a) disembodied superintelligences of the type first popularised in the character HAL 9000 featured in 2001: A Space Odyssey; (b) anthropomorphised and often gendered (Hermann, 2022) robots, such as Ava (Ex Machina), capable of replicating human behaviours; and (c) ‘robotic soldiers’ popularised through film franchises such as The Terminator and The Matrix (Horowitz, 2016: 2). Whilst the cultural reach and influence of the US could suggest that awareness of these narratives has travelled globally, this assumption requires further empirical investigation.

As the starting point for our cross-cultural analysis of stories portraying weaponised AI, it is important to note that the cinematic portrayal of these technologies is more varied than the seemingly simple imaginary of ‘killer robots’. It can involve the multifaceted depiction of these technologies as being capable of conducting both helpful and harmful actions. Dystopian narratives form part of a wider science fiction ‘mega-text’ that sits alongside more utopian portrayals of these technologies (Hermann, 2023: 321). The ‘Good Terminator’ (Carpenter, 2016) featured in Terminator 2: Judgement Day, for instance, protects its human companions from physical harm, enacts the commands of human decision makers, and is shown using force with superhuman levels of precision (Watts and Bode, 2024). Narratives about weaponised AI can be embedded within broader narratives about malicious uses of technology. They should not be analytically conflated, however, because narratives about military applications of AI may feature both techno-dystopian and utopian portrayals of AI. Moreover, as a type of ‘cultural artefact’ (Hermann, 2023: 319), narratives are open to multiple interpretations. It cannot be assumed that academic perspectives of their meaning are shared with other audiences (Young and Carpenter, 2018).

As reflected in the perceived AI ‘story crisis’ pervading Anglophone culture (Chubb et al., 2024), portrayals of weaponised AI and AWS have emerged as one of the principal reference points for popular thinking about AI in general. The Terminator franchise, for instance, is understood to have ‘shap[ed] the public perception of AI since 1984’ (Cave et al., 2018: 16). We acknowledge that the stories told about weaponised AI and AWS form part of a wider corpus of utopian and dystopian thinking about technology. But we hold that this specific category of stories warrants further investigation given its cultural salience in Anglophone cultures and the currently under-investigated possibility that the narrative content and perception of these technologies varies across different states and associated cultural contexts.

As a conceptual framework for studying how audiences come to know about and form opinions on these technologies, scholars from across the humanities and social sciences have developed the study of AI narratives, broadly defined as the stories told about intelligent machines in works of fiction, non-fiction and in the media (Cave et al., 2018: 5). Studying these narratives requires certain qualifications. Popular culture depictions of AI should not be taken literally. In Western cinema, AI technologies are regularly depicted in highly dramatised and futuristic ways to tell stories about real-world socio-political issues (Hermann, 2023). The unrealistic depiction of super-intelligent forms of AI not only distracts from the more mundane real-world uses of these technologies (Hudson et al., 2023: 198) but erases the role played by human decision-makers and corporate interests in sustaining the real-world development of these technologies (Johnson and Verdicchio, 2017). AI narratives are conceptualised as communicating culturally specific sets of interpretations regarding AI and its potential or already existing impacts on society (Cave and Dihal, 2023c). They are argued to influence the perceptions and agency of audiences including AI developers, policymakers and the general public (Cave and Dihal, 2019: 74, 2023b: 3–4; Cave et al., 2020b: 7–10; Dillon and Schaffer-Goddard, 2023).

In the most comprehensive cataloguing of these stories to date, 7 Cave and Dihal have identified eight common AI narratives based on a review of 300 chiefly Western, English-language fiction and non-fiction works (2019) (Table 1). Whilst not the sole focus, these materials include many stories featuring weaponised forms of AI and AWS. 8 In collaboration with Coughlan, Cave and Dihal have surveyed the awareness of and emotional response to these narratives in a nationally representative survey of the UK public (Cave et al., 2019). Subsequent research has built on this categorisation of common AI narratives by examining their circulation amongst specific audiences including national general publics (Sartori and Bocca, 2023; Selwyn and Gallo Cordoba, 2022), the media (van Noort, 2024), academic researchers (Hudson et al., 2023) and statements made as part of the international regulatory debates on AWS (Watts and Bode, 2024). Cave, Dihal and other researchers associated with the Leverhulme Centre for the Future of Intelligence are not the only authors to have examined the various ‘myths’ (Natale and Ballatore, 2020) and ‘imaginaries’ (Bareis and Katzenbach, 2022) connected with AI. The use of Cave and Dihal's categorisation of AI narratives as the foundation of our study serves two important functions. First, it provides a framework for structuring and interpreting our survey findings. Second, it enables us to extend the growing debates in this area by examining both whether these stories matter and whether different stories are told in different states and cultural contexts.

‘Hopeful’ AI narratives present intelligent machines as a transformative cause of human immortality, ease, and gratification, and as a vehicle for preserving the dominance of certain utopian ways of life. These stories often take cues from the science fiction writer Isaac Asimov, more specifically from the Three Laws of Robotics, which hold that machines can be programmed with ‘ethical safeguards’ to prevent them from harming humans (Ingersoll et al., 1987: 68–9). ‘Fearful’ AI narratives, in contrast, explore the idea that AI will trigger inhuman behaviours, produce human obsolescence and social alienation, and be the catalyst of a machine uprising in which these technologies overthrow their human creators. Such narratives generally draw from the motif of ‘machines as monsters’ that can trace its literary roots to Mary Shelley's Frankenstein (Cave et al., 2018: 8; Hermann, 2023: 322; Hudson et al., 2023: 197).

Major AI narratives in English-language popular culture (based on Cave et al., 2019).

AI: artificial intelligence.

We situate our study of narratives about weaponised AI in the context of this literature. As with the wider scholarship in this area, our analysis is predicated on three understandings. First, that popular culture provides a repository of stories told across multiple mediums (film, television, literature, video games and comic books) that may shape shared perceptions of how ‘desirable’ developing and fielding weaponised forms of AI is. Second, that the AI narratives presented in Anglophone popular culture are shaped by nationally specific ‘histories, philosophies, ideologies, religions, narratives traditions and economic structures’ (Cave and Dihal, 2023b: 4). And third, extending a key insight developed in the International Relations literature on popular culture and world politics, that we cannot automatically assume that academic interpretations of the narrative meaning of stories portraying intelligent machines are universally shared. Instead, ‘we need to look to how audiences receive and understand the media that they consume’ (Young and Carpenter, 2018: 574), in our case, through the use of a survey.

For this purpose, we adapted Cave and Dihal's typology of AI narratives to focus on narratives related to the weaponisation of AI. We concentrate on only four narratives that speak directly to characterisations or concerns relevant to imagining weaponised AI: dominance, uprising, alienation and gratification. Table 2 summarises these narratives, adapting descriptions of the narratives that Cave, Coughlan and Dihal used in their survey of the UK public (2019: 333). Whilst derived from the study of principally Anglophone works of popular culture, these descriptions provide a framework through which to both examine the salience of these narratives across the four surveyed publics and identify distinct narratives about weaponised AI.

Narratives of weaponised AI (based on Cave et al., 2019).

AI: artificial intelligence.

Through surveying and comparing audiences in France, India, Japan and the US, we continue the ongoing empirical examination of the content, history and societal impact of weaponised AI narratives across contexts. Cross-cultural comparisons remain limited to a few exceptions, such as Bareis and Katzenbach's study of the imaginaries featured in the national AI strategies of China, France, Germany and the US (2022). Their findings suggest that there may be cultural variation in the types of stories told about military AI and AWS. As they understand it, whilst national AI strategies share an understanding that technical advances in this field are inevitable and will be highly disruptive, US, French, Chinese and German AI strategies reflect the cultural, political and economic contrasts between the four states (Bareis and Katzenbach, 2022: 855). This suggests that the processes of narrative sense-making regarding military applications of AI are not consistent across different states and associated cultural contexts.

Our data is derived from an online questionnaire survey conducted in December 2022–January 2023. 9 Our research design, including the handling of survey data, followed a qualitative-interpretivist approach. We therefore do not present a statistical analysis but aim at understanding survey responses as contextual forms of discursive meaning-making (Schwartz-Shea and Yanow, 2012).

Sample

The surveys were conducted via YouGov with a representative sample of French, Indian, Japanese and US citizens aged 18+. Standard YouGov panel practice gave respondents ‘points’ for participating. Each country sample comprised just over 500 respondents (France: 508, India: 510, Japan: 508, US: 511). 10 The survey took 3–4 min on average. The survey was anonymous, but asked about gender, age and geography to ascertain the sample's diversity (see data in the online supplemental materials). Across the four states, respondents were almost equally distributed into female and male (the questionnaire included two options for gender). Respondents aged 45+ or 55+ (YouGov uses different age categories for different states) were slightly more represented than other age groups. Respondents came from different regions of each state. As we are interested in examining the discursive substance of the responses, we did not correlate such demographic factors with responses.

We used three criteria to select France, India, Japan and the US as cases for the survey and the wider research project it is embedded in. First, these four states are prominently positioned as potential developers of weapon systems integrating AI technologies due to large financial investments or advanced robotics sectors (Garcia, 2023; Haner and Garcia, 2019). Second, these states have actively participated in the AWS debate at the Convention on Certain Conventional Weapons and advocated a variety of regulatory positions. The US and India oppose new international law on AWS, France is more moderate, while Japan initially supported a ‘wait and see’ approach but now aligns with the US. Third, we chose these states’ publics because they allow us to compare English-language AI narratives that often emanate from the US with those of less surveyed states like France, India and Japan.

Questions

The 10-question survey was divided into three parts: Part 1 addresses basic understandings and visual ways of imagining weaponised AI (see full questionnaire in the online supplemental materials); Part 2 examines familiarity with and the salience of the four weaponised AI narratives identified in Section 1 and alternative narrative themes; and Part 3 probes attitudes towards regulation. The empirical findings of this paper focus on the five questions in Parts 2 and 3 because only these addressed AI narratives and the issue of regulation (Table 3).

Survey questions.

AI: artificial intelligence.

Q8 coding categories of ‘other substantive answers’. 16

AI: artificial intelligence.

The survey followed a mixed-structure approach, including five multiple-choice and five open-ended questions. The open-ended questions were a particularly important part of our survey design, contrasting with many other existing surveys of international public opinion on AWS which use multiple choice questions (Deeney, 2019; Horowitz, 2016; Ipsos, 2021; Van der Loos and Croft, 2015). By using open-ended questions, we aimed to develop a deeper understanding as to why respondents may hold a positive/negative attitude towards weaponised AI. We pursued an inductive approach to coding responses to the open questions (Q8 and Q10) and iteratively discussed and adjusted our coding themes, for example, via going through examples and comparing coding approaches.

Our research and survey design deviates from methods conventionally associated with survey experiments in that we conducted an interpretivist study. In this, some of our survey questions include references to our conceptual terminology (namely ‘story’) that are potentially challenging for respondents to understand. Aware of this, throughout the questions we used the more common notion of ‘story’ rather than of ‘narrative’ to ground understanding (Table 3). The notion of ‘story’ should make intuitive sense to respondents because it is part of a common vernacular. We also acknowledge that the design of some of our survey questions demanded a lot from respondents as they required a high degree of introspection, for example, Q7 or Q10. 11 As a potential consequence of this, especially towards the end of the survey, some questions across the four surveyed publics included significant percentages of ‘unsure’ responses. At the same time, however, the significant percentage of ‘unsure’ responses could also be consistent with the ambiguity that characterises how AI has been understood in both popular culture and policy contexts (Hudson et al., 2023).

Summary of findings

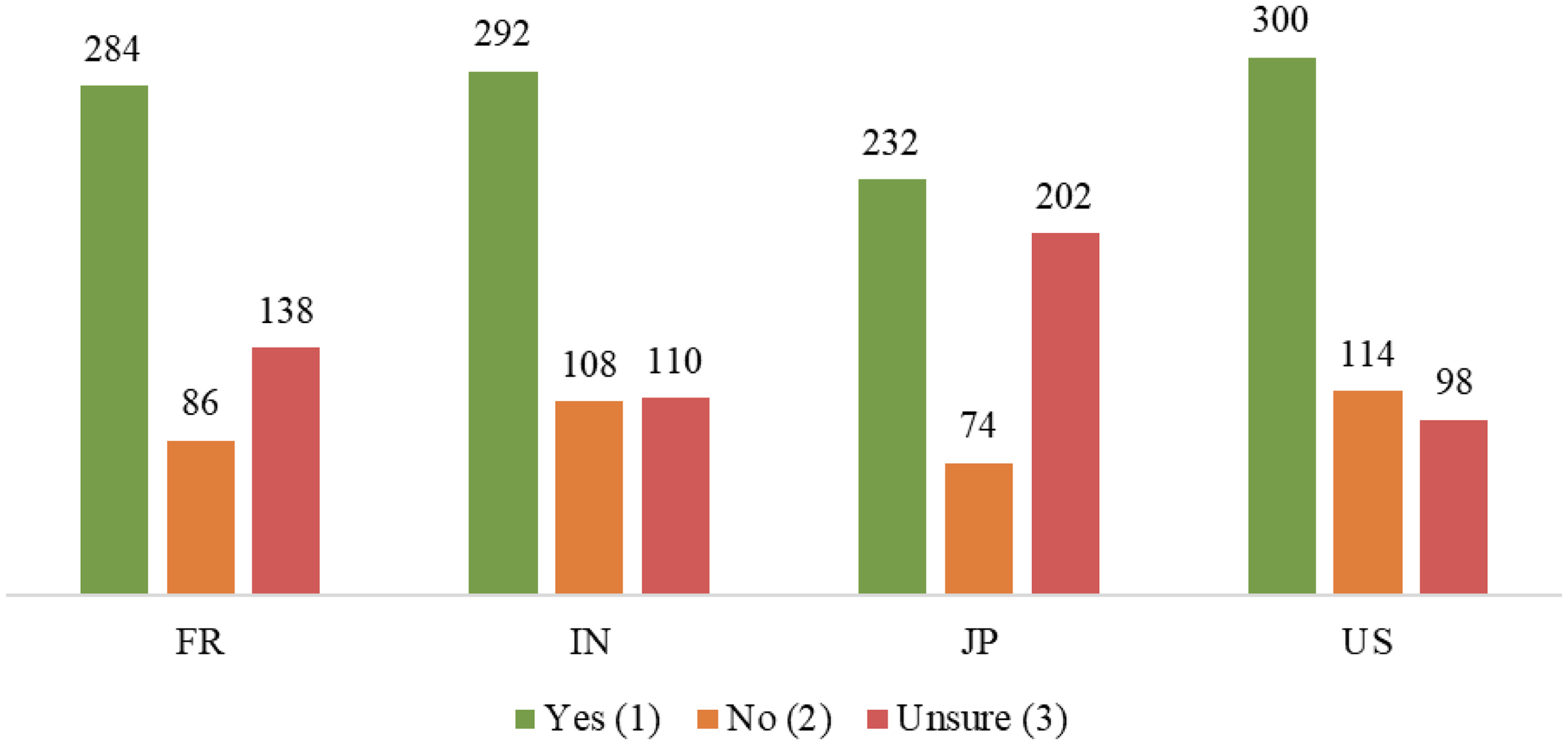

Q6: Familiarity with weaponised AI narratives

Most respondents in France, India, Japan and the US are familiar with the four weaponised AI narratives we surveyed (Figures 1–4). Across all four publics, most respondents knew the dominance narrative, followed by the alienation and uprising narratives. The gratification narrative was least known. This may be because weaponised AI, in comparison to other forms of AI technologies, such as disembodied voice/virtual assistants, is not as clearly linked with the gratification storyline.

Familiarity with dominance narrative.

Familiarity with uprising narrative.

Familiarity with alienation narrative.

Familiarity with gratification narrative.

There are notable differences between the surveyed publics. Japanese respondents chose ‘unsure’ much more often than French, Indian and US respondents, most frequently (48%) in relation to the gratification narrative. In comparison, Indian and US respondents used ‘unsure’ least often and were overall most familiar with the four narratives. This finding is insightful because the weaponised AI narratives we surveyed originate in English-language works and may resonate more strongly in English-speaking states. Yet, as we describe in Q8, Indian respondents also suggested several additional popular culture works, especially movies, as sources of narratives. Thus, the Indian respondents may overall be more familiar with certain popular culture products and the weaponised AI narratives these feature.

Across the four publics surveyed, the dominance narrative shaped their thinking on weaponised AI the most, followed by uprising (with Japan as an exception). The alienation and gratification narratives were least influential (Figure 5). These results reflect respondents’ familiarity with the four narratives (Figures 1–4). French and US respondent groups are similar, while Indian and Japanese responses differ. Japanese respondents to Q7 indicate that alienation, gratification and uprising have little impact. Given its status as the ‘touchstone narrative’ of weaponised AI in English-language popular culture (Cave et al., 2020b: 5), uprising's modest impact among Japanese respondents is notable. In keeping with this, 34% of Japanese respondents indicated that none of the narratives influenced their thinking. The dominance narrative clearly stands out among Indian respondents, although the other three narratives are also influential. Only 9% of Indian respondents noted that none of these narratives influence their thinking—the lowest percentage among the publics surveyed.

Q7 results.

Q8 asked about culturally specific, alternative viewpoints on weaponised AI, cognizant that the four narratives whose familiarity we surveyed originate in largely English-language popular culture.

Figure 6 shows responses to the open question 8. 12 There were few ‘Yes’ responses without explanation throughout the four respondent groups (range: 3% Japan to 13% India). More respondents answered ‘No’ without explaining (range: 17% Japan to 31% US), suggesting that Q6's four narratives do not overlook additional perceived implications of weaponised AI and that there is nothing to add. Another group of respondents answered ‘I don’t know’ or ‘I’m not sure’ without further details. Japanese respondents are particularly uncertain (43%), as seen throughout the survey. French respondents also provided a large proportion of ‘do not know’ responses (31%), which is also consistent with their overall survey responses. Additionally, 31% of US participants do not think the four stories in Q6 overlook other essential AI features, but do not explain their answers.

Q8 overview of responses.

Q9 overview of responses.

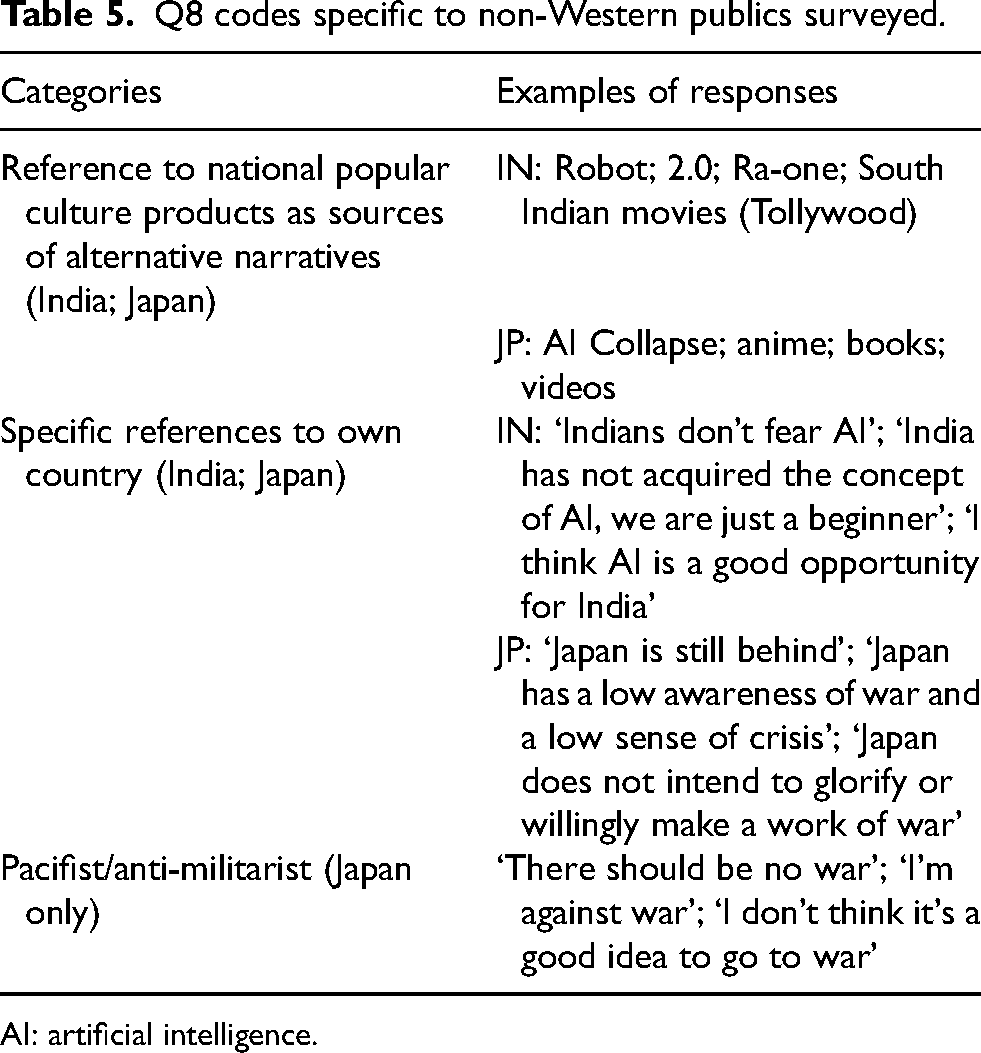

The category ‘other substantive answers’ includes responses of various lengths and themes. We developed 10 broad categories to code these responses for structured analysis (Table 4). These categories include narratives not or only partly covered by Q6's four weaponised AI narratives (Tables 1–2). Other categories include AI applications across civilian and military domains. Further, we characterised assessments of AI as positive/negative rather than hopeful/fearful because the former captures a more comprehensive set of evaluations. Coding across all 10 categories demonstrates the diversity of responses: no single category is prevalent either within or across the publics surveyed.

In addition to these 10 codes, Table 5 shows responses that are particular to either one or both non-Western publics surveyed. Japanese and Indian respondents linked popular culture products to alternate weaponised AI narratives. Answers differed in that many Indian respondents offered names of concrete movies, for example, ‘Robot’ [Enthiran], while Japanese responses pointed to larger genres of science fiction, for example, that ‘it's already been featured in Japanese anime’. Notably, no French respondents referred to specific Francophone narratives on weaponised AI. Some, however, mentioned the French Renaissance writer François Rabelais and the phrase ‘science without conscience is only ruin of the soul’ attributed to him. French and US respondents largely followed the stories found by Cave and Dihal in English-language popular culture. We found that Indian and Japanese respondents made specific references to their countries, in contrast to French and US respondents. Despite the recent reemergence of great power competition as an organising framework of US foreign policy (Bode et al., 2023), US respondents notably did not refer to other states such as China. While other US responses were covered by the 10 codes above (Table 4), it is noteworthy that ‘malice’ concerned many respondents. We explain these responses below.

Q8 codes specific to non-Western publics surveyed.

AI: artificial intelligence.

We use two major themes from the four surveyed publics to structure the summary of our findings: (a) negative assessments of AI (including ‘other AI narratives’, ‘malice’, ‘malfunctions’) and (b) uncertainty. We emphasise codes that were particularly important to the respective respondent group.

France: The French respondents display an overall negative assessment of AI technologies in Q8. They are especially concerned with the loss of human control over AI, whether weaponised or not. Examples include: ‘The technology is evolving very rapidly and we can lose control’ and ‘I fear that AI will surpass its creators, they will no longer be masters of what they have created’. Such fears are in line with Cave and Dihal's uprising narrative or the fear of ‘humankind's unnatural creations rising up against us’ (Cave et al., 2018: 8; Coeckelbergh, 2020: 21). Moreover, many French respondents fear the obsolescence of humans as AI plays an increasingly important role across various sectors of society, for example, ‘If we replace humans by AI, what will be AI's place in society? There is also a big risk of dependence and loss of know-how’, ‘I think that they [AI technologies] are helping us to not think by ourselves, limiting our natural evolution’. While there are some respondents pointing out positive implications of AI, such as ‘the power of helping and saving humans’, concerns about AI predominate. Such results are consistent with previous public opinion studies revealing French ‘technophobe’ tendencies (Berthier, 2016). A recent Ipsos poll in France found 54% of respondents unfavourable or sceptical of AI (Latrille and Teinturier, 2023). They also match the overall uncertainty which 30% of French respondents expressed in previous Ipsos surveys about AWS (Ipsos, 2021).

French respondents’ concerns do not only relate to AI itself but to potential malicious uses of AI, often framed as almost inevitable given humans’ history of technological use: ‘It's like any technology, malicious use remains a possibility or even a certainty given the few unconscious power-hungry people’ or ‘Like any digital tools, AI will be misused for malicious purposes’. Thus, there seems to be not only mistrust towards AI but also towards humanity, for example, ‘Given the efficacity of humans to kill each other has never had limits (cf. torture museums), I doubt that there are no other implications’. Finally, some respondents noted that more research or information is needed about the topics, for example, ‘Need for more research for better understanding of the limits of AI’ or ‘I haven’t observed anything about this. But I think that the general public doesn’t know anything about AI in warfare’.

India: Negative assessments of AI feature among Indian respondents, often reflecting a general level of concern: ‘use of AI for destruction is not palatable for me’ or ‘as the capabilities of using AI increase infinitely, there can be unimaginable consequences of using AI in war’. Some of the concerns raised also draw on broader AI narratives categorised by Cave and Dihal, such as alienation: ‘many have concerns about how advances in AI will affect what it means to be human, to be productive and to exercise free will’. But the Indian public appears to follow a more ‘nuanced’ approach in the sense of recognising both positive- and negative-leaning presentations of weaponised AI, for example, ‘I think there are both risks and benefits available over these technologies’ or ‘AI in war can be helpful and protective but there can be bad effects’.

In addition, some Indian respondents propose entirely positive views of using AI both in civilian and in military contexts: ‘its significant impact on human lives is resolving some of society's most pressing issues’ or ‘AI would be very popular whether in common people technology or in the modern weapons because it requires less manpower and is guided by the engineers with a lot of distance’. Compared to other survey groups, Indian respondents appeared to convey a greater sense of excitement about the positive visions attached to AI in war: ‘AI is very helpful to our Indian Army for protecting our people from the war’. Such responses broadly match previous polls on AWS, for instance the Ipsos surveys commissioned by the Campaign to Stop Killer Robots (2018 and 2020). In both cases India is the only country with a majority supporting autonomous weapons, with 56% in 2020 and 50% in 2018 (Deeney, 2019; Ipsos, 2021). Moreover, in significant contrast to, for example, the Japanese respondents, the Indian public surveyed only spoke of uncertainty or futurism on rare occasions, for example, ‘weaponised AI is a step ahead of what we are used to in the current usage of technology, for, for example, usage of robots in warfare is unheard of still’.

Two further themes stand out: first, more than 25% of Indian respondents named popular culture products, mostly films, to offer alternative weaponised AI narratives, especially Robot, 2.0 and Ra-one. These films echo English-language narratives of weaponised AI like uprising (Mahalakshmi and Rajendran, 2023). Many respondents referred to South Indian movies or Tollywood, an industry that is known for producing fantastical, ‘out-there’ themes (Bode and Mohan, forthcoming). Second, Indian respondents also reflected about where their country sits in the global development of weaponised AI: ‘India doesn’t have this much of technology till now. But surely it will go that level some day’. This also features some reflection about, as perceived by some respondents, the relative lack of narratives about weaponised AI in India: ‘In Indian movie, we not like AI based story we like human power stories’.

Japan: Most Japanese responses feature a negative/fearful assessment of weaponised AI: ‘AI is becoming more common in everyday life, but it would be a catastrophe in a large-scale war, so I think we are missing out on the importance of AI’. Others offered specific examples, drawing on themes reminiscent of general AI narratives identified by Cave and Dihal, such as inhumanity (‘we underestimate the parts that affect the human spirit, manipulating high-level things that humans cannot do, activities in dangerous places etc.’) or obsolescence (‘as AI evolves further, we will understand the stupidity of human beings. Even if AI is understood, humans won’t know it’). Only one response featured a positive assessment of weaponised AI (‘I think it is possible for AI to attack the enemy accurately, quickly and efficiently without emotion’), while others commended AI's efficiency in industry and the economy. These findings match results from the 2018 and 2020 Ipsos surveys where respectively 48% and 59.4% of Japanese respondents were somewhat or strongly opposed to AWS (Deeney, 2019; Ipsos, 2021). The Japanese respondents’ overall tendency to express their uncertainty also features in their responses to Q8, for example, ‘Isn’t that the case with drones? I’m not sure’. Further, some Japanese respondents identify weaponised AI as a future issue, for example, ‘the mass media may not be interested in this kind of story yet, only some nerds’.

Two further coded themes stand out among Japanese responses. First, references to Japan lacking military AI development and investment: ‘Japan is too conservative and lagging behind, so it gets overlooked’ or ‘Japan has no army and is very behind in terms of leadership’. We were surprised by responses about Japan's lack of AI development given the prominence of robotics in Japanese society and popular culture (Nakao, 2014). These differences may be due to Japanese investment and focus on industrial robotics and AI software development. Given that the survey deals with weaponised AI specifically, some of the responses draw attention to Japan as a special case: ‘I have the impression that the technology exists, but we’re being careful about using it’; ‘I think we are somewhat out of touch with AI, which is not being used as a weapon under Japan's Peace Constitution, and I think information on AI weapons should be gradually reported’. These responses reference the country's post-World War II antimilitarist turn (Shuichi, 2010). In their anti-war responses (‘I am against war’, ‘There should be no war’), some Japanese respondents appear to reiterate pacifist or antimilitarist views.

US: US respondents showed a strong level of negative viewpoints towards AI. Important codes include ‘malice’ – being concerned about AI used by the ‘wrong’ people – and ‘malfunctions’ – concerns about unreliable and untrustworthy technology. The US public expressed general negative sentiments regarding AI (‘I think this is bad’); concerns about the effect on humans (‘It's making us lazier and dumber’); or concerns about negative economic impacts (‘Many, but the one top of mind is that broader use of AI will fundamental alter the economy, potentially increasing inequality and creating a society we are unfamiliar with’; ‘If you don't tie universal basic income to profits made by machines you will throw a majority of the population into poverty. Millions are suffering and dying under this collapsing system because of greed’). Other US responses were in line with the categories developed by Cave and Dihal, such as obsolescence: ‘I absolutely think that we need to be more careful with AI development and in how it translates to our needs, as well as the level(s) to our dependence on it’ or ‘Losing trust in ourselves as humans and what we can do with our own brains and hands – I think it leads to an existential crisis beyond “what is life”’. Some responses also highlight positive aspects, for example in terms of ease: ‘Yes, the ability of simpler AI capabilities in day to day life such as QA on production lines, building smart cities, etc’; ‘At present, artificial intelligence can provide convenience and reduce unnecessary casualties for human beings’. However, the US survey was clearly dominated by negative viewpoints when it comes to open responses. This finding confirms the tendency outlined by Ipsos where 21% of US respondents somewhat and 34% strongly opposed the use of AWS (Ipsos, 2021).

Unlike other respondents, the US participants rarely expressed uncertainty. US respondents, as well as Indian respondents, were less likely to choose ‘don’t know’ than French or Japanese respondents. Only a few responses to the open question said they lacked sufficient knowledge or information: ‘They probably do overlook certain things but I'm not familiar enough with the topic to definitively say’.

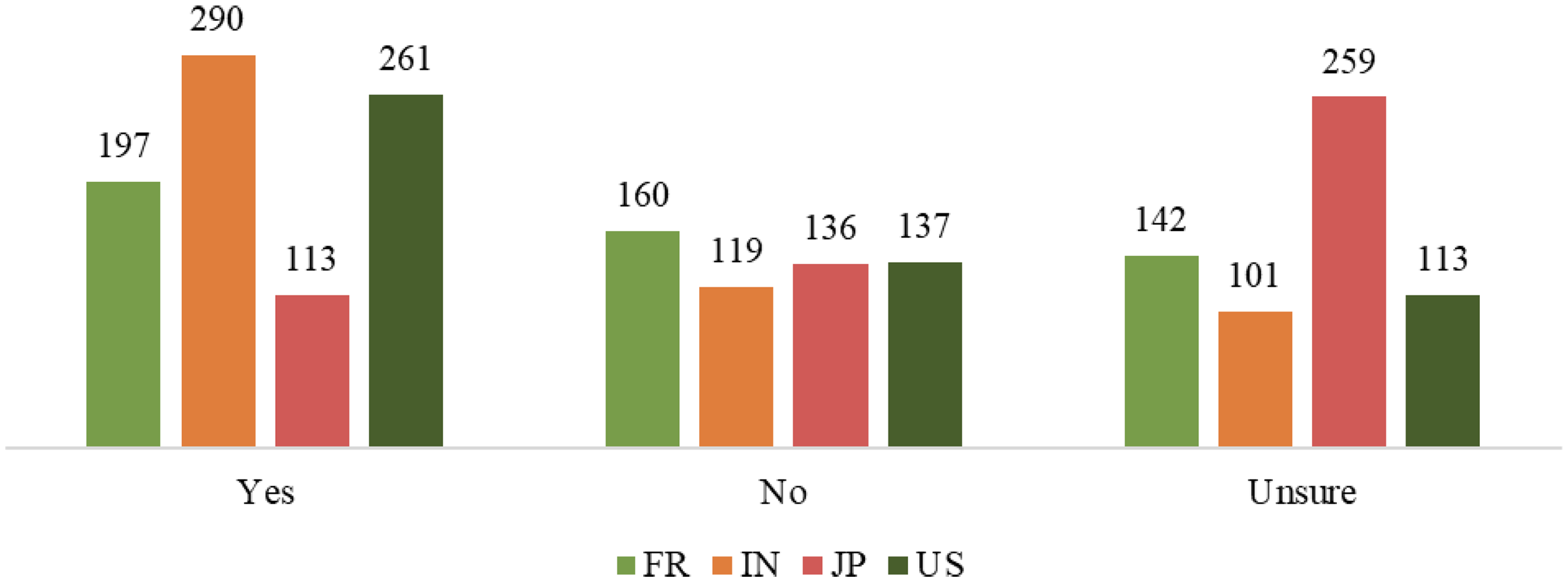

Most Indian (57%) and US (51%) respondents said ‘yes’ in Q9, while 51% of Japanese respondents were unsure. There is no majority for any option among French respondents, although 39% agree that portrayals of weaponised AI in popular culture influenced their views on regulation (Figure 7).

Q10: Weaponised AI narratives and attitudes towards regulation

Figure 8 shows responses to the open question 10. Most French (58%) and Japanese (76%) respondents as well as substantial numbers of Indian (50%) and US (42%) answered ‘I don’t know’. 15% of Indian respondents offered non-valid answers, including copy/pasted sentences from online publications and what appear to be AI-generated answers. 13 This makes it difficult to determine how respondents link portrayals of (weaponised) AI in popular culture to their regulatory opinions. However, as in Q8, we identified eight categories of responses in the ‘other substantive answers’ category, which run across many or all states surveyed (Table 6).

Figure 8. Q10 overview of responses.

Table 6. Q10 coding categories of ‘other substantive answers’. 17

AI: artificial intelligence.

France: Consistent with their responses to Q8, French respondents perceive AI technologies negatively. A minority of respondents referred to specific works of popular culture, and some explained in detail how these influenced their thinking, for example: ‘In the Swedish series [Real Humans, or Äkta människor] on Arte, thanks to the story I could understand all the difficulties of inventing a new intelligence, its benefits, its disadvantages and limits. By discovering these questions I understood the need for laws and rules to control the abuses of AI as well as human ones’.

However, most French answers either express general negative-leaning opinions of AI (‘substituting human decision-making’, ‘risks to national security’ or simply ‘it is dangerous’) or make general arguments for the need to regulate different uses of AI, for example, ‘we need laws to prohibit certain uses such as drone surveillance, which could violate privacy’ or ‘we need to define a limit to the use and autonomy of AI’. Overall, French responses are pro-regulation and highlight threats they associate with AI, although they rarely explicitly relate these threats to representations of AI in French popular culture. There are, however, references to other fiction, such as mentions of Asimov: ‘There are regulations governing the world of robots: Isaac Asimov's three laws. It's distressing that the military lobby is prepared to violate these laws’. Overall, the French respondents’ pro-regulation attitude is consistent with results from other surveys conducted in France on AI technologies (Mercier, 2023).

India: Indian respondents demonstrate, to some extent, links between popular culture and their attitudes towards regulation with statements such as: ‘The biggest movies and series invariably show the harmful side of weaponised AI violating the three laws of robotics [of Isaac Asimov]’, ‘It has shown the negative side, so it makes me think’. Only one Indian respondent [out of 510] clearly opposes regulation (Table 6), but overall, unfavourable views of AI technologies are less pronounced than among, for example, French respondents. Some Indian respondents say AI is ‘a risky investment whether it is in monetary terms of intellectual and emotional ways’, or that ‘one day robots will take over us instead of helping us’. Many respondents merely remark that AI ‘must be regulated’ without clearly tying it to popular culture: ‘AI should be weaponised to save the people rather than kill and spread Chaos, there should be an international regulatory body for AI moderation’. Across Q10, Indian respondents were more positive in their assessments of AI than other groups, with many stating that AI ‘is good for the army’ and ‘helps reduce loss of life’, while weaponised AI ‘is useful to protect higher officials’.

Japan: Japanese respondents identify clear regulatory needs when it comes to weaponised AI, for example, ‘I think regulations are necessary so that they do not exceed the limits of coexistence in real life’ or ‘without regulation, the war will expand rapidly and become more like a game’. Few Japanese respondents identified popular culture as influential in shaping their thinking on regulation (Q9), but many answers to Q10 explicitly mention popular culture: ‘there is no guarantee that AI will function as flawlessly as depicted in pop culture so I think it's important to regulate it in our lives’. Many respondents supported regulation while pointing out that popular culture depictions of weaponised AI are unrealistic: ‘Even in pop culture, there's a chance that we realise it with our current technology, so it needs to be regulated’. In line with Q8, the Japanese respondents are overwhelmingly critical of weaponised AI, even when not mentioning regulation directly: ‘I realised that AI is dangerous if it is not under human control’; ‘AI should not be used as a weapon. Pop culture is a fantasy world’; or ‘It's scary to think that there an AI runaway like in a movie’. The few positive assessments of AI pertained chiefly to the civilian context: ‘I want it to be used effectively outside of war’ and ‘I think it's good to build robots that use AI, but I think the international community should regulate the production of robot soldiers and weapons’. We also see anti-war responses: ‘There are no winners or losers in war. Whether it's AI or not, war ought never be started’.

US: Q10 responses show substantial negative views on AI, both generally and in relation to the perceived need to govern AI. US respondents often expressed fear: ‘They are too advanced and will wipe out populations’; ‘AI is a powerful weapon that should be developed and used very carefully. One powerful person having control of weaponised AI could be very dangerous’. Also, respondents felt influenced by popular culture in their view that AI is a risk that needs to be regulated: ‘They let us imagine possible scenarios and prepare for them so they should be regulated’; ‘Most fiction of this genre is centred on the potential issues and negative impacts. It raises doubts about the safety of such programs’. Many respondents believed AI should be regulated without citing popular culture: ‘if not carefully regulated AI could very easily get out of control’; ‘I hate to say it, but everything needs to have some regulation in order for it to work in a civilised culture’. Some culturally-specific responses expressed negative views on regulation and government involvement, such as ‘whatever the government is involved in it's not good for the country’.

Responses across the four groups show significant diversity in the salience of weaponised AI narratives, especially in the substantive answers to open-ended survey questions. Recognising that diversity, we draw out seven major observations that we identified as noteworthy in light of our research questions across/within respondent groups.

First, there appears to be an overall lack of substantive knowledge and awareness of weaponised AI and associated narratives across respondent groups, especially noticeable in the open-ended questions. A substantial number of respondents, especially in France (31%) and Japan (43%), answered ‘I don’t know’ in Q8, making it challenging to gather substantive replies. In Q10, the ‘Don’t know’ option was chosen by at least half of the respondents in France, India and Japan as well as about 40% of US respondents. This suggests either a lack of knowledge or an unwillingness to engage with the question. Moreover, across the substantive responses to Q8 and Q10, we did not find as many specific references to weaponised AI narratives as expected. This raises questions about the extent to which the general public is aware of how AI technologies are already integrated into present-day weapon systems and the debates surrounding these technological developments. These findings also raise issues about the extent to which public opinion can be a major argument for claiming that AWS go against the Martens Clause. 14 The Campaign to Stop Killer Robots and Human Rights Watch use results from the 2018 and 2020 Ipsos surveys to argue that AWS should be banned based on ‘dictates of public conscience’ (Docherty, 2018). But the uncertainty expressed by our respondents invites further research about the level of public knowledge on weaponised AI (see also Horowitz, 2016: 7; Rosendorf et al., 2024).

Second, survey respondents share an overall negative assessment of AI technologies, especially in France, Japan and the US, and to a lesser extent in India. Across the responses to Q8 and Q10, respondents tend to highlight their concerns about AI technologies and the risks they associate with them, although with different kinds of emphasis. This is an important finding for several reasons. It demonstrates that cultural practices and attitudes regarding robotics and AI technologies in the civilian space do not necessarily translate into broader public understandings of appropriateness when it comes to robotic weapon systems. Japanese society, for example, is often characterised as combining a high level of technological development in robotics with a complex ‘affinity’ towards robots who have entered homes, hospitals, offices and schools fulfilling social functions (Hornyak, 2006; Robertson, 2018; Sone, 2017). Japanese AI narratives feature iconic representations of robot characters such as the cuddly tetsuwan atomu (Astro Boy) who helped humans in fighting ‘for the ultimate goal of postwar defeated Japan – peace’ (Schodt, 2007: 4). Pacifist responses are clearly visible among Japanese respondents, while there is an overwhelmingly negative attitude towards weaponised AI.

Third, the narratives of weaponised AI that Cave and Dihal categorised are clearly salient across the different publics surveyed. The extent of their salience differs, but such narratives appear to be relevant reference points for respondents in France, India, Japan and the US. Interestingly, respondents across the four states readily identified, for example, the uprising narrative with specific Anglophone popular culture franchises such as the Terminator. This underlines the apparent salience of such weaponised AI narratives beyond their original cultural spheres.

Fourth, some weaponised AI narratives appear to be more salient than others. Some respondents across survey groups mentioned hopeful AI narratives in terms of ‘ease’ or ‘gratification’. But overall, sentiments expressed aligned more closely with ‘fearful’ rather than ‘hopeful’ narratives of weaponised AI, including among US respondents. Although there are both hopeful and fearful AI narratives in US popular culture (Cave and Dihal, 2023a), only the fearful narratives appear to be salient among the surveyed US public. Positive visions of AI in the sense of ‘the Californian feedback loop – the idea that technology can overcome all problems encountered in the pursuit of unfettered growth’ (Cave and Dihal, 2023a: 156) may be overemphasised in the literature mapping AI narratives.

Our survey's responses also demonstrated a need to further unpack existing categories of AI narratives. While the uprising narrative specified by Cave and Dihal (2019) was mentioned, respondents also identified malfunctioning and unreliable weaponised AI as major concerns. Specifically, responses showed an interesting variation of the salient ‘dominance’ narrative as distinct from Cave and Dihal's categorisation. There was little evidence of the dominance narrative's ‘hopeful’ side being salient, that is, the understanding that AI provides a potential opportunity to dominate other countries militarily. Instead, respondents, especially in the US but also across other groups, expressed concern about humans using AI for ‘bad’ purposes. A ‘malice/bad intentions’ narrative comes out as salient, often in combination with concerns about malfunctioning technology. This human-centric view of risks attached to weaponised AI establishes a counter-narrative to the fearful ‘uprising’ of technology. Our survey therefore suggests that we require further differentiation of weaponised AI narratives. The popular Terminator image might be less influential than widely assumed.

Fifth, our survey findings suggest a public ambiguity concerning culturally specific weaponised AI narratives (Hudson et al., 2023). We had expected respondents to go beyond the narratives categorised by Cave and Dihal, especially in surveyed publics such as Japan, known globally for its rich and distinct cultural narrative tradition of stories featuring robots (Šabanović, 2014). However, only a few respondents either referred to culturally specific popular culture products or identified culturally specific weaponised AI narratives.

Sixth, respondents identified popular culture as shaping their impression of the risks associated with weaponised AI, with some explicitly stating that popular culture had shaped their regulatory attitudes. Most respondents supported regulation but did not mention popular culture as specifically influential. We are thus unable to make any firm judgments regarding the degree to which regulatory attitudes are impacted by popular culture.

Seventh, cultural variations, specifically in terms of how certain groups respond in surveys, can be used to explain some of the more pronounced tendencies within survey groups. For instance, research indicates that East Asians ‘avoid extreme responses’ in polls (Wang et al., 2008: 2). This could have contributed to the fact that Japanese respondents were less certain and made fewer severe claims than respondents from other survey groups. Relatedly, some survey studies also observe that people from more individualist cultures ‘seek to achieve clarity in their explicit verbal statements’, in contrast to collectivist cultures where there is ‘less emphasis on individual opinions’ (Johnson et al., 2005: 267).

Conclusion

This article has explored the cross-cultural salience of narratives of weaponised AI via a public opinion survey conducted in France, India, Japan and the US. Building upon the existing AI narratives literature, we examined (a) whether and/or to what extent narratives related specifically to weaponised AI are salient across different states and associated cultural contexts; (b) whether there are specific, alternative narratives which the publics in these states identify as influential in their thinking about weaponised AI; and (c) whether these narratives influence these publics’ views of regulating these technologies.

Our findings suggest that French, Indian and Japanese respondents are familiar with English-language popular culture narratives. Certain narratives, like dominance and uprising, appear to be more salient than others. Indian, Japanese and US respondents also identified some alternative narratives of weaponised AI, including ‘malice/bad intentions’. Yet some of the culturally distinct narratives pointed out by respondents share motifs also found in English-language popular culture. Fearful AI narratives and a lack of knowledge about AI technologies and their implications are evident in responses from all four publics. Indian respondents seem more confident in their knowledge, while Japanese respondents demonstrate strong uncertainty. Most respondents in India and the US, and a sizeable share in France (39%), say they are influenced by popular culture when thinking about regulation (Q9), but few make direct links between weaponised AI narratives and regulating AI technologies in real life (Q10). Respondents from all four states share negative-leaning perspectives of both civilian and military applications of AI technologies and favour their regulation.

Our findings must be considered along with the limitations of this study, specifically issues such as the relatively large proportion of invalid responses to open-ended questions. The survey's geographical scope also limits its cross-cultural representation. A larger survey, conducted especially in the Global South, could provide a more nuanced examination of global narratives on weaponised AI. 15 The Global AI Narratives project is a first step in this direction (Cave and Dihal, 2023c), but it does not explicitly focus on weaponised AI or map the extent to which the global AI narratives identified across different states are possibly salient among national publics.

Integrating AI technologies into the military targeting process is one of the most concerning areas of AI applications. Given the global ramifications of these developments, the conversation should be a multi-stakeholder dialogue which takes public opinion into account (Garcia, 2023; Holland Michel, 2023). More research on how global publics understand weaponised AI narratives, including through surveys, is vital.

Supplemental Material

Supplemental material, sj-docx-1-bds-10.1177_20539517241303151 for Cross-cultural narratives of weaponised artificial intelligence: Comparing France, India, Japan and the United States by Ingvild Bode, Hendrik Huelss, Anna Nadibaidze and Tom FA Watts in Big Data & Society

Supplemental material, sj-xlsx-2-bds-10.1177_20539517241303151 for Cross-cultural narratives of weaponised artificial intelligence: Comparing France, India, Japan and the United States by Ingvild Bode, Hendrik Huelss, Anna Nadibaidze and Tom FA Watts in Big Data & Society

Footnotes

Acknowledgements

We are grateful for the feedback received during the Many Worlds of AI Conference (2023) at the University of Cambridge and the Sixth Convention of the Association pour les études sur la guerre et la stratégie (2023), where earlier drafts of this article were presented. We would also like to thank Shimona Mohan for her insights on the Indian case study. The survey upon which this article is based has been conducted with the help of YouGov.

Consent to participate

Informed consent to participate in this survey was given in written form via the YouGov platform.

Data availability

The datasets generated by the survey research analysed in this study are available as online supplemental materials.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Ethical considerations

The survey analysed in this article is part of the research project entitled ‘Weaponised Artificial Intelligence, Norms and Order’ (AutoNorms), which has passed the review of the Research Ethics Committee at the University of Southern Denmark (approval code 21/2141).

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research is part of the AutoNorms project, which has received funding from the European Research Council under the European Union's Horizon 2020 research and innovation programme (grant agreement no. 852123). Dr Tom F.A. Watts’ contribution to this paper was supported by a Leverhulme Trust Early Career Research Fellowship (ECF-2022-135).

Statements and declarations

The authors have no competing interests to declare that are relevant to the content of this article.

Supplemental material

Supplemental material for this article is available online.
