Abstract

Artificial intelligence (AI) is increasingly employed to support decision-making in the public sector, yet it exacerbates the “problem of many hands and eyes” in public accountability. Using a vignette experiment with a 3 × 3 factorial design, we investigated how the configuration of account givers and holders in AI accountability affects perceived legitimacy among citizens. Our findings indicate that human agents are perceived as more legitimate account givers than AI systems, with bureaucratic operators outperforming technological developers. Additionally, citizens are perceived as more legitimate account holders than political leaders and experts. We also explored the interactive effects between account givers and holders. This study responds to calls for empirical evidence on AI accountability, expands the conceptual framework of accountability in the AI era, and highlights the crucial roles of bureaucrats and citizens.

Introduction

In recent years, artificial intelligence (AI) has been increasingly adopted by the public sector, with the expectation of facilitating internal management, improving public services, and generating public value (Wang et al., 2024; van Noordt and Misuraca, 2022). In particular, AI has been actively involved in public decision-making practice through a human–AI collaborative approach, which is considered to improve the accuracy, efficiency, and consistency of decisions (Choudhary et al., 2023; Wang et al., 2023). However, numerous AI-related accidents in the public sector, including decision errors, systematic biases, and malfunctions, have led to substantial public value failures. Against this backdrop, AI-involved decision-making poses significant challenges to public accountability, drawing considerable scholarly attention to emerging accountability gaps and calling for the establishment of AI accountability (Bracci, 2023; Busuioc, 2020; Novelli et al., 2023; Rinta-Kahila et al., 2022).

Public accountability is defined as “a relationship between an actor and a forum, in which the actor has an obligation to explain and to justify his or her conduct, the forum can pose questions and pass judgement, and the actor may face consequences” (Bovens, 2007). While accountability is hailed as a cornerstone of democratic governance, its effective functioning depends on transparent information, timely detection, credible incentives, traceable behavior, and clear responsibility (Pérez-Durán, 2023). In this regard, since AI functions as a “black box” with autonomy, the introduction of AI has undermined safeguards necessary for accountability, resulting in “compounded informational problems, the absence of adequate explanation or justification of algorithm functioning, and ensuing difficulties with diagnosing failure and securing redress” (Busuioc, 2020). Therefore, AI inevitably causes, if not exacerbates, “the problem of many hands and eyes” in accountability (Agostino et al., 2022; Santoni De Sio and Mecacci, 2021), leading to the critical question: who should assume the role of account givers and who should assume the role of account holders?

Imagine a case where AI is used to automate the processing of welfare applications (Rinta-Kahila et al., 2022). If the AI system makes an error, how should such a mistake be addressed? The fundamental question is: among political leaders, citizens, and experts (Bovens, 2007), who should act as the account holders to oversee and demand an explanation? Similarly, among AI systems, technological developers, and bureaucratic operators (Loi et al., 2021), who should serve as the account givers, bearing the responsibility to respond and be held accountable? The advent of AI has highlighted the lack of a comprehensive accountability system. Therefore, it is crucial to identify what constitutes a legitimate accountability arrangement by examining the configurations of account givers and holders. We approach this from the public's perspective, as the public represents the ultimate principals in the democratic chain of delegation (Strøm, 2000). Their expectations of accountability are not merely a normative concern; they carry substantial implications for institutional trust and organizational reputation (Busuioc and Lodge, 2016).

Given that public accountability is inextricably linked to broader questions of legitimacy and that the public plays an important role in upholding the ethical values of AI governance (Busuioc, 2020; Wilson, 2022), this study investigates the impact of different configurations of account givers and account holders in AI accountability on citizens’ perceived legitimacy. To do so, we designed a 3 × 3 repeated-measures vignette experiment and analyzed responses from 978 participants recruited through a Chinese survey platform. Within a fictional scenario of AI-involved decision-making for social welfare, we manipulated the account givers (AI systems vs. technological developers vs. bureaucratic operators) and account holders (political leaders vs. experts vs. citizens). The results were analyzed using the Generalized Estimating Equation (GEE) method.

This study contributes to the literature in three ways. First, responding to the call for empirical evidence on AI accountability (Busuioc, 2020; Wessels, 2024), our analysis provides insights into accountability arrangements in AI-involved public decision-making. Second, following the conceptual framework of accountability (Bovens, 2007), our analysis expands the set of account givers in the AI era and tests for political, social, and professional accountability. Third, our analysis bridges the literature streams on AI, public accountability, and perceived legitimacy, providing insights into broader AI governance in the public sector, especially from the perspective of citizens (Ulnicane et al., 2021; Wilson, 2022).

The rest of the article is organized as follows. First, we introduce the concept and theory of accountability and its relationship to legitimacy. We then describe the challenges that AI poses for accountability and formulate the corresponding research hypotheses. Thereafter, we present the experimental design, data collection, and analytical approach, and empirically test the hypotheses. Finally, we conclude the study with a discussion of the findings, implications, and limitations.

Public accountability and perceived legitimacy

Public accountability has long been considered as a virtue of good governance. It is a “magic concept” that evokes an image of trustworthiness, legitimacy, and responsiveness (Papadopoulos, 2023; Pérez-Durán, 2023). Along with its normative concerns, accountability is empirically understood as a social relational mechanism linking the account givers and the account holders (Bovens, 2007). For instance, in a classic manifestation of this relationship, a parliament (the account holder) might question a government minister (the account giver) about budget overruns, demanding a public justification for the spending. Currently, there are two main theoretical assumptions about public accountability.

One is the principal–agent account. This perspective argues that accountability between account holders and account givers takes place along principal–agent relationships, in the opposite direction of the “democratic chain of delegation” (Strøm, 2000). Specifically, voters delegate sovereignty to their elected representatives, who subsequently delegate substantial authority further down the political hierarchy. As a result, civil servants at the bottom of this chain are accountable to their ministers, and through the political accountability chain, ultimately to the voters at the other end. Given the principal's authority over audits, inquiries, and sanctions toward the agent, accountability is essential for reducing information asymmetries, controlling moral hazards, and reconciling conflicting interests. This ensures that the bureaucracy functions in alignment with democratic, constitutional, and learning principles (Bovens, 2007; Papadopoulos, 2023). Therefore, the principal–agent account is guaranteed by the vertical and hierarchical transmission of political accountability, which underlines the notion of the “primacy of politics” and the system of representative democracy (Brummel and de Blok, 2024; Hansen et al., 2024).

The other is the reputation management account. This perspective posits that accountability between account holders and account givers is interdependent in a network of reputation (Busuioc and Lodge, 2016). Reputation comprises a set of beliefs about an organization's capacities, roles, and obligations embedded in a network of multiple audiences, including performative, moral, procedural, and technical dimensions (Carpenter and Krause, 2012). To maintain or enhance their reputational uniqueness and protect against reputation-damaging humiliation, account givers selectively apply strategies to manage their day-to-day appearances in front of audiences (Busuioc and Lodge, 2016). These strategies are tailored to the core nature of the organization and the preferences of the differentiated audience, such as engaging in voluntary accountability (de Boer, 2023; Doering et al., 2021) or employing blame avoidance tactics (Moynihan, 2012). Therefore, the reputation management account represents an informal and noninstitutional form of public accountability, such as social and professional accountability, characterized by horizontal dynamics with external stakeholders (Brummel and de Blok, 2024; Hansen et al., 2024).

From a citizen's perspective, the principal–agent and reputation management accounts both offer explanatory power and complement each other to form a “hybrid accountability arrangement” (Michels and Meijer, 2008; Mizrahi and Minchuk, 2019). On the one hand, in the context of hierarchical political accountability following the principal–agent model, ministers may be reluctant to impose severe penalties on their subordinates due to concerns about the reputation of the organization (Busuioc and Lodge, 2016). On the other hand, in the context of horizontal social and professional accountability, where reputation management is central, the account givers voluntarily engage in accountability practices that can yield strategic political benefits, a process that is deeply rooted in the principal–agent dynamic (de Boer, 2023).

Political, social, and professional accountability all aim to enhance the legitimacy of the administration (Bovens, 2007; Brummel and de Blok, 2024). Based on Weber (Weber, 2012), legitimacy refers to public recognition of the authority's actions (Waldman and Martin, 2022). Broadly, legitimacy relates to “a generalized perception or assumption that the actions of an entity are desirable, proper, or appropriate within some socially constructed system of norms, values, beliefs, and definitions” (Tyler and Huo, 2002). Legitimacy is thus endowed with normative moral criteria and lays the foundation for capturing public perceptions of legitimacy from a subjective perspective, which generally include public acceptance, trust, and perceptions of fairness (Brummel and de Blok, 2024).

Public perceptions of legitimacy are fundamental for democracy, which is particularly relevant in the context of AI application in the public sector (Starke and Lünich, 2020). Given the moral and ethical risks associated with AI, public perceptions of legitimacy determine their compliance with these technological arrangements, including their willingness to provide personal data for AI training and to accept decisions made with AI involvement (Waldman and Martin, 2022). Therefore, public perceptions of legitimacy largely determine the success of AI adoption in the public sector. Given the close relationship between legitimacy and accountability, many studies have proposed establishing accountability to ensure the legitimacy of AI-involved decision-making (Busuioc, 2020; Novelli et al., 2023; Waldman and Martin, 2022). Next, we elaborate on the accountability dilemma posed by AI and the impact of different accountability configurations on public perceptions of legitimacy.

Artificial intelligence and public accountability

Artificial intelligence refers to an intelligent system equipped with the capacity to process information, infer patterns, and make decisions, thereby simulating cognitive processes and performing tasks like humans (Minkkinen et al., 2023). Recently, AI has been widely deployed in the public sector to automate and assist decision-making in areas such as fraud detection, teacher appraisals, crime prediction, video surveillance, and medical diagnosis (van Noordt and Misuraca, 2022; Wang et al., 2023).

While AI holds great promise for enhancing efficiency, it also introduces complex dynamics into public accountability. Under the “narrow” conception of accountability (Bovens, 2007), AI creates ambiguity about which actors are responsible, and in what way, for explaining behaviors and facing consequences, leading to the “problem of many hands” and the “problem of many eyes” (Santoni De Sio and Mecacci, 2021). Consequently, the use of AI in public decision-making generates emerging accountability gaps in areas involving information management, justification of actions, and understanding of consequences (Busuioc, 2020). First, the complexity and opacity of AI systems, together with their noninterpretability and inaccessibility, make them akin to “black boxes.” This creates a significant information asymmetry that undermines the necessary informational basis for accountability. Second, the inherent “black box” nature, as well as the implicit value trade-offs and context-dependent use of AI systems, complicates the process of justifying their actions, making the interrogation of accountability challenging. Third, the nonretroactivity of AI decisions, along with unclear evaluation criteria and unreliable human oversight, impedes the imposition of consequences in accountability (Bignami, 2022; Bracci, 2023; Busuioc, 2020; Novelli et al., 2023; Wessels, 2024; Zhou et al., 2022).

Against this backdrop, a pressing question arises: who should assume the role of account givers when AI is deployed in public decision-making? This question delves into complex moral philosophy. A prominent viewpoint holds that AI systems do not fulfill the traditional criteria for moral agency, such as freedom and consciousness, and thus cannot be held responsible. Instead, it is argued that humans who develop, operate, and oversee AI systems should bear the responsibility (Coeckelbergh, 2020; Johnson, 2006). However, an alternative perspective contends that it is unjust to hold humans accountable for processes and outcomes beyond their control or comprehension. Advocates of this view argue that AI can assume moral agency with electronic personhood, thus taking on responsibility (Avila Negri, 2021; Stahl, 2006; Sullins III, 2006). To bridge these divergent arguments, a conceptual solution of distributed responsibility has been proposed to address distributed agency (Taddeo and Floridi, 2018). Yet, this approach cannot address the practical challenges of assigning responsibility, as the contributions and interactions of various agents often remain opaque (Coeckelbergh, 2020).

In the context of public decision-making involving AI, many people find it acceptable for AI to assume the role of account givers (Lima et al., 2021; Simmler, 2024). This is because AI systems are capable of executing complex tasks and making autonomous decisions, frequently operating in a “black box” manner that limits external oversight (Wessels, 2024). This accountability is increasingly possible with advances in Explainable AI technologies, which allow AI systems to self-interpret their decisions (de Bruijn et al., 2022). Accordingly, people are predisposed to treat AIs as social entities, thereby extending social norms and expectations, such as accountability, to them, in line with the assumptions of the “Computer as Social Actor” paradigm (Nass and Moon, 2000). However, things may be different in the public sector, given the nature of publicness to achieve public value (Moulton, 2009). In public decision-making, human agents are considered essential safeguards for the dignity of those impacted by these decisions (Horvath et al., 2023). The public generally shows greater tolerance for human bias and intuitive judgments than for the unknown risks of AI, which can introduce additional uncertainties (Mirowska and Mesnet, 2022). In addition, when mistakes are made in decision-making, there is a risk that humans may utilize AI to create a “moral buffer” for blame avoidance, following automation bias and selective bias (Alon-Barkat and Busuioc, 2022). However, considering that accountability is crucial for making timely responses and improving future governance, placing humans at the center of accountability ensures that this core purpose is fulfilled (Busuioc, 2020). Therefore, AI should serve as a tool to enhance accountability rather than a shield for it, and it is proposed that the accountability of human agents is more acceptable to citizens.

Furthermore, human agents in this respect are typically categorized into two distinct roles: technological developers and bureaucratic operators. The technological developers are primarily responsible for designing, constructing, and programming AI systems, which significantly influences how these systems function and make decisions. They are in a unique position to understand the intricacies of AI and could be held accountable for decision-making (Busuioc, 2020; Dubber et al., 2020). However, the connection between the developer and decision-making is not readily apparent to the public, leading to ambiguity in the accountability structure (Busuioc, 2020). Conversely, the bureaucratic operators are responsible for inputting data and adjusting outputs of AI systems, which is referred to as “human in the loop” or “human as gatekeeper” (Ostheimer et al., 2021). This direct involvement in the decision-making process places them at the center of oversight (Harrison and Luna-Reyes, 2020; Loi et al., 2021). Despite the transition of their workplace from traditional street-level to more screen-level or systems-level, operators remain the frontline bureaucrats (Bullock, 2019). They engage directly with stakeholders affected by public decisions. Consequently, operators are more visibly linked to the actions of AI systems, rendering their role in accountability more tangible and directly associated with the professionalism and ethical standards expected of bureaucrats. This argument is also consistent with the emphasis on the bureaucratic operators in the legal frameworks (Bignami, 2022). Therefore, among human agents, accountability of the bureaucratic operator is more acceptable to citizens.

H1: In AI-enabled decision-making, human agents as account givers are more acceptable to citizens than AIs.

H2: Among human agents, a bureaucratic operator as an account giver is more acceptable to citizens than a technological developer.

Given the widespread concern “from agency drift to forum drift” (Schillemans and Busuioc, 2015), another crucial question concerns the role assignment of account holders, with particular attention to political, social, and professional accountability (Bovens, 2007; Schillemans, 2011). It has been found that, compared to political accountability, social accountability can increase citizens’ perception of legitimacy (Brummel and de Blok, 2024), as social accountability makes authorities responsive to stakeholders directly influenced by their decisions, which aligns with the theory of participatory democracy that emphasizes greater citizen involvement in public affairs (Cronin, 1988; Hansen et al., 2024). Similarly, given the complexities of administrative expertise, professional accountability can serve as a form of external scrutiny to promote professional standards and values in the public sector (Mulgan, 2000). These conclusions can be reinforced in contexts where AI is integrated into public decision-making. In general, the adoption of AI in the public sector is largely efficiency-driven, with fewer ethical considerations (Fatima et al., 2021). Consequently, stakeholder involvement is increasingly seen as essential for preserving public value and building a democratic mandate (Wilson, 2022). In addition, notable AI-related accidents in practice have led to public value failures, significantly eroding public trust in the public sector and raising questions about the effectiveness of political accountability within bureaucratic systems (Giest and Klievink, 2022; Rinta-Kahila et al., 2022; Schiff et al., 2022). As a result, it can be assumed that citizens are inclined to resort to social accountability mechanisms.

Furthermore, the theory of stealth democracy posits that while citizens value direct participation for ensuring accountability, they are not interested in engaging in the political process. They prioritize efficient and objective problem-solving over the complexities of political negotiations and compromises. In this regard, nonelected experts are often seen as more suitable agents for achieving social accountability (Bengtsson and Mattila, 2009; Mohrenberg et al., 2021). Moreover, in the context of AI-enabled decision-making, the intricate technologies embedded in AI systems may exceed the understanding of ordinary citizens, potentially limiting their substantive participation in social accountability mechanisms. Therefore, experts may be better equipped to guarantee social accountability, including scrutinizing account givers’ explanations, posing critical questions, and enforcing consequences. This expertise is essential for maintaining the legitimacy of decision-making processes and outcomes (Bovens, 2007). Overall, it is proposed that the public might prefer to involve experts rather than ordinary citizens as account holders for reasons of both willingness and capability.

H3: In AI-enabled decision-making, social stakeholders as account holders are more acceptable to citizens than political leaders as account holders.

H4: Among social stakeholders, experts as account holders are more acceptable to citizens than citizens themselves as account holders.

In addition to examining the direct impact of both the account givers and account holders on perceived legitimacy (as shown in Figure 1), this study also analyzes their interactive effects. As the analysis of these interaction effects is exploratory, the corresponding results are illustrated and interpreted in the following sections.

Figure 1. Analytical framework.

Method

Experimental design

To test the above hypotheses, this study employed a randomized vignette experiment. A vignette is a brief, carefully constructed description of a scenario, which is used to elicit participants’ reactions to manipulated factors within a controlled setting (Atzmüller and Steiner, 2010). Therefore, the vignette experiment can reduce endogenous interference and improve internal validity, thus capturing valid and robust causal effects (Brummel and de Blok, 2024). In addition, the vignette experiment is embedded in a concrete and realistic context, thus extending the external validity of a vignette experiment at least to the target population of the survey and serving as a basis for linking the external validity of fieldwork (Schillemans, 2016; Steiner et al., 2016). Due to these methodological advantages, studies on AI have extensively employed vignette experiments (Schiff et al., 2025; Waldman and Martin, 2022; Wang et al., 2022).

Our vignette experiment is set up as 3 × 3 with three types of account givers (AI systems, technological developers, and bureaucratic operators) and three types of account holders (political leaders, citizens, and experts). The experimental context is an AI-involved public decision on social welfare, as this is a real-life example of AI applications (Giest and Klievink, 2022), and the respondents are people with a basic understanding of AI applications. These measures help to improve the ecological validity of the experiment. After review by a group of PhD candidates, the original description of the vignette was improved, and the final version is presented below (translated from Chinese). “Please imagine the following scenario: You are soon to receive a social welfare subsidy, but the exact amount is yet to be determined. To resolve this, the Social Security Bureau in your city has implemented an artificial intelligence system named “Welfare Calculation,” developed by the “Smart Platform” company. You discover that by simply entering your name into the system, it can automatically calculate the subsidy amount you're eligible for based on relevant data. This calculated amount will then be confirmed by staff members at the Social Security Bureau. However, you might have concerns about this process: “Who oversees it? How are my rights protected if there are mistakes?” Through further inquiry, you find out that there is a supervisory group made up of

After reading the vignette, respondents were required to identify the account giver and holder in the scenario as an attention check. Then, the dependent variable of perceived legitimacy was measured. Since perceived legitimacy is difficult to measure directly, we followed the empirical strategy of recent research (Brummel and de Blok, 2024; de Fine Licht et al., 2022) and operationalized perceived legitimacy as perceived fairness, decision acceptance, and trust, each measured on a 7-point scale. Finally, each respondent was asked to complete three vignette experiments, each of which was randomized to ensure the robustness of the repeated measures (Selten et al., 2023).
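The assignment procedure described above can be sketched in a few lines of Python. This is only an illustration: the article states that each of the three rounds was randomized, but not whether a respondent could see the same configuration twice, so sampling without replacement is an assumption here, as are the seed and the respondent identifiers.

```python
import itertools
import random

# Factor levels as named in the experimental design.
ACCOUNT_GIVERS = ["AI system", "technological developer", "bureaucratic operator"]
ACCOUNT_HOLDERS = ["political leaders", "citizens", "experts"]

# All 3 x 3 = 9 giver-holder configurations.
CONDITIONS = list(itertools.product(ACCOUNT_GIVERS, ACCOUNT_HOLDERS))


def assign_vignettes(rng, n_rounds=3):
    """Draw n_rounds distinct configurations for one respondent.

    Assumption: sampling is without replacement, so a respondent never
    sees the same configuration twice. The source does not specify this.
    """
    return rng.sample(CONDITIONS, n_rounds)


rng = random.Random(2024)  # arbitrary seed, used only for reproducibility
# Hypothetical respondent IDs 1..5, each mapped to three vignettes.
schedule = {pid: assign_vignettes(rng) for pid in range(1, 6)}

for pid, rounds in schedule.items():
    print(pid, rounds)
```

Because every respondent rates only three of the nine cells, each cell is compared partly within and partly between respondents, which is why the responses are later treated as clustered by respondent.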

Data collection

The data were collected in China. China is one of the global leaders in the development and application of AI, especially in public decision-making. In addition, while China has moved promptly on ethical AI development, it is still perceived as prioritizing efficiency over ethical aspects. Therefore, the investigation of AI accountability among the Chinese population is highly relevant to our research purpose.

Specifically, the data were collected through “Surveyplus,” a reputable online survey platform in China. The sample was recruited from the platform's diverse panel of respondents. To ensure data quality, we implemented a screening question at the beginning of the survey, restricting participation to individuals who reported having a basic understanding of AI. Before proceeding, all participants were presented with an informed consent form, which outlined the study's purpose, the voluntary nature of their participation, and the assurance of anonymity and confidentiality. To incentivize careful completion, each respondent received compensation of USD 0.80 upon finishing the survey.

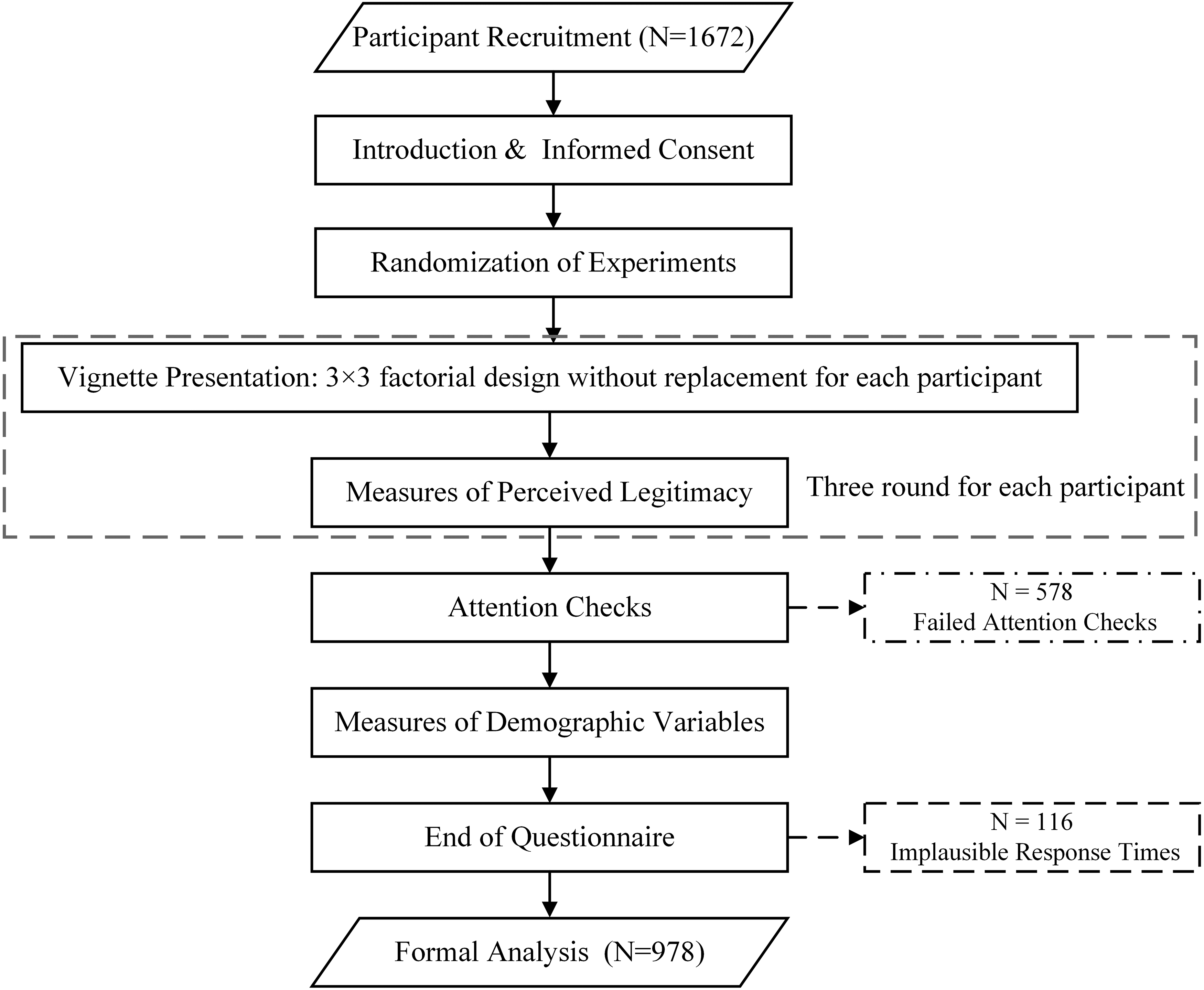

A total of 1672 participants were recruited for our study; however, 694 participants were disqualified and excluded from formal analyses, including 578 participants who failed the attention check, and 116 participants who failed the speed check. Finally, 978 participants (2934 data points) made up the analytical sample.
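As a quick consistency check on the figures reported above, the exclusion arithmetic can be reproduced directly. The variable names are ours; the numbers are those stated in the text.

```python
# Figures as reported in the data collection section.
recruited = 1672
failed_attention_check = 578
failed_speed_check = 116
rounds_per_respondent = 3

excluded = failed_attention_check + failed_speed_check
analytical_sample = recruited - excluded
data_points = analytical_sample * rounds_per_respondent

print(excluded, analytical_sample, data_points)  # 694 978 2934
```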

After completing three rounds of vignette experiments, the respondents were asked to answer questions related to psychological and sociodemographic factors. The descriptive statistics are presented in Table 1. Among them, AI knowledge was assessed using a quiz, with one point awarded for each correct answer out of five questions (Horvath et al., 2023). The perceived importance of accountability was measured by averaging a two-item scale: “How important do you think it is that government organizations (1) explain decisions to citizens and (2) gather citizens’ opinions when making and implementing decisions,” with responses ranging from “not important at all” = 1 to “very important” = 5 (Brummel and de Blok, 2024). Other variables are coded as follows: gender (female = 0, male = 1), education (junior high school and below = 1 to postgraduate and above = 5), subjective socioeconomic status (significantly below average = 1 to significantly above average = 5), trust in government (very distrustful = 1 to very trustful = 5), and trust in AI (very distrustful = 1 to very trustful = 5). The full questionnaire is available in the Appendix, and the survey flow is shown in Figure 2.
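The two composite measures described above can be scored as in the following minimal sketch. The answer key and the example responses are hypothetical placeholders, not items from the actual questionnaire; only the scoring rules (one point per correct quiz answer, mean of two 1 to 5 items) come from the text.

```python
from statistics import mean


def score_ai_knowledge(answers, answer_key):
    """One point per correct answer on the five-question quiz (range 0-5)."""
    if len(answers) != len(answer_key):
        raise ValueError("answers and answer_key must have the same length")
    return sum(a == k for a, k in zip(answers, answer_key))


def score_accountability_importance(explain_item, consult_item):
    """Mean of the two items, each rated from 1 to 5."""
    for value in (explain_item, consult_item):
        if not 1 <= value <= 5:
            raise ValueError("items must be on the 1-5 scale")
    return mean([explain_item, consult_item])


# Hypothetical answer key and respondent, for illustration only.
answer_key = ["b", "a", "d", "c", "a"]
quiz_answers = ["b", "a", "c", "c", "a"]

knowledge = score_ai_knowledge(quiz_answers, answer_key)
importance = score_accountability_importance(4, 5)
print(knowledge, importance)  # 4 4.5
```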

Figure 2. The structure of the survey.

Table 1. Demographic statistics.

According to Table 1, the sample has an average age of 30.54 years, with slightly more women than men. The average education level is between junior college and undergraduate, with a medium socioeconomic status. The sociodemographic structure of the sample is largely representative of China's Internet ecosystem, which improves the external validity of the results. In terms of psychological variables, respondents have a high level of trust in both the government and AI. Their level of knowledge about AI and the perceived importance of accountability are relatively high, indicating that our sample is well suited to the research purpose, which supports the internal validity of the results.

Analytical approach

As previously discussed, our experiment adopts a mixed design, in which each participant evaluated three randomly assigned vignettes from a 3 × 3 factorial structure. This design strikes a balance between statistical efficiency and respondent manageability, as it leverages within-subject comparisons to reduce individual-level noise, while preserving the between-subjects variation needed for estimating main and interaction effects. However, a key limitation is that the repeated observations for each participant introduce intraindividual correlation, violating the independence assumption required for standard regression methods such as Ordinary Least Squares.

To address this issue and appropriately account for the nested data structure, we employed the GEE framework, which provides robust estimates of population-averaged effects while adjusting for the nonindependence of repeated responses. Because GEE relies on quasi-likelihood estimation, it does not require strict distributional assumptions and applies to a wide range of data types. It also offers the flexibility to specify correlation structures and provides consistent estimates even if the working correlation structure is incorrectly specified (Liang and Zeger, 1986; Selten et al., 2023). In light of the data structure in our analysis, we selected a Gaussian family for the response distribution and used an exchangeable correlation structure to account for intracluster correlation.
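For readers less familiar with the method, the estimator can be written out in its generic textbook form (Liang and Zeger, 1986). The notation below is ours and is not taken from the article: $Y_i$ is the vector of three ratings from respondent $i$, $X_i$ the corresponding design matrix of condition indicators, $\beta$ the regression coefficients, $\phi$ a scale parameter, and $\alpha$ the common within-respondent correlation. The GEE solves

$$
\sum_{i=1}^{N} D_i^{\top} V_i^{-1}\bigl(Y_i - \mu_i(\beta)\bigr) = 0,
\qquad
D_i = \frac{\partial \mu_i}{\partial \beta^{\top}},
\qquad
V_i = \phi\, A_i^{1/2} R(\alpha)\, A_i^{1/2}.
$$

With a Gaussian family and identity link, $\mu_i = X_i \beta$, $D_i = X_i$, and $A_i = I_3$. The exchangeable working correlation assumes one shared correlation between any two ratings from the same respondent:

$$
R(\alpha) =
\begin{pmatrix}
1 & \alpha & \alpha \\
\alpha & 1 & \alpha \\
\alpha & \alpha & 1
\end{pmatrix}.
$$

Standard errors are typically based on the robust sandwich estimator,

$$
\widehat{\operatorname{Var}}(\hat{\beta}) = B^{-1} M B^{-1},
\qquad
B = \sum_{i=1}^{N} D_i^{\top} V_i^{-1} D_i,
\qquad
M = \sum_{i=1}^{N} D_i^{\top} V_i^{-1} \bigl(Y_i - \hat{\mu}_i\bigr)\bigl(Y_i - \hat{\mu}_i\bigr)^{\top} V_i^{-1} D_i,
$$

which remains valid when $R(\alpha)$ does not match the true correlation, provided the mean model is correctly specified.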

Results

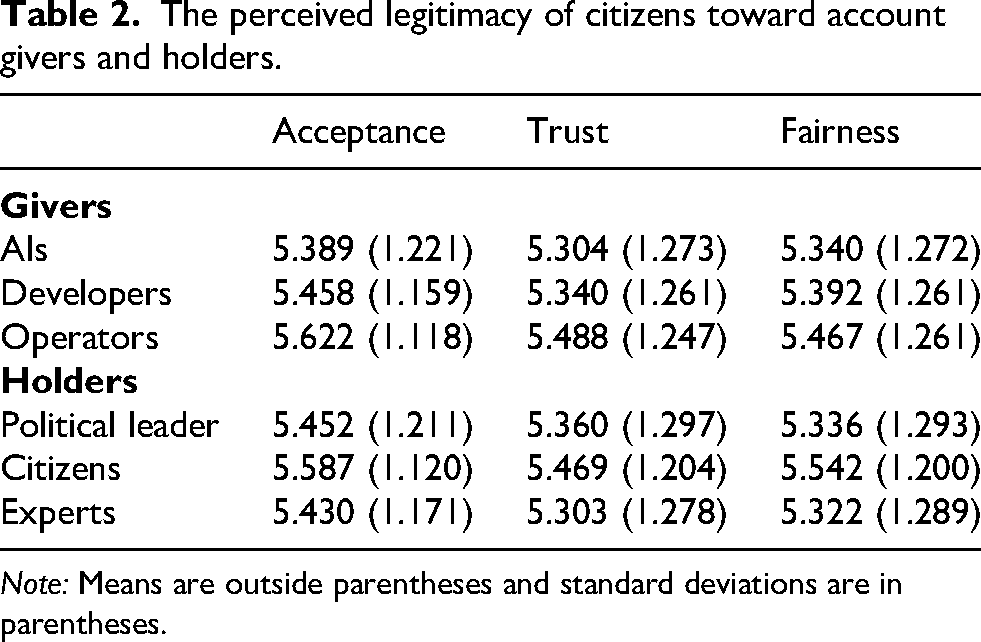

This study analyzes how different configurations of accountability, including the account givers (AI systems vs. technological developers vs. bureaucratic operators) and the account holders (political leaders vs. citizens vs. experts), affect citizens’ perceived legitimacy of AI-involved public decisions. Table 2 describes the acceptance, trust, and perceived fairness of citizens toward different account givers and holders. The results for acceptance, trust, and perceived fairness (the three elements of perceived legitimacy) are highly consistent, with AIs rated lowest and operators highest as account givers, and experts rated lowest and citizens highest as account holders. Next, we take trust as an example for a more detailed analysis using GEE; the analyses of acceptance and perceived fairness are reported in the Appendix (Brummel and de Blok, 2024).

Table 2. The perceived legitimacy of citizens toward account givers and holders.

Note: Means are outside parentheses and standard deviations are in parentheses.

Table 3 provides the results of the GEE, where we changed the baseline group as needed to test each research hypothesis. In terms of account givers, the results indicate that, compared to AI systems, human agents (developers and operators) as account givers significantly improve the perceived legitimacy of AI-involved public decisions (β = 0.099, p < 0.05, Cohen's d = 0.028). Therefore, H1 is supported. Furthermore, bureaucratic operators as account givers have higher perceived legitimacy than technological developers (β = 0.298, p < 0.01, Cohen's d = 0.146), which is in line with our expectation outlined in H2. In terms of account holders, citizens as account holders significantly increase perceived legitimacy compared to political leaders (β = 0.117, p < 0.01, Cohen's d = 0.087), but experts do not. Therefore, H3 is only partially supported. H4 is not supported either: rather than increasing it, experts as account holders significantly reduce perceived legitimacy compared to citizens (β = −0.183, p < 0.01, Cohen's d = −0.134).

Hypothesis test through GEE.

Note: Standard errors in parentheses, * p < 0.1, ** p < 0.05, *** p < 0.01.
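
For readers less familiar with the effect sizes reported alongside the GEE coefficients, the following is a minimal sketch of one common definition of Cohen's d: the difference between two group means divided by the pooled standard deviation. It is an illustration only. The exact formula applied to the study's repeated-measures data is not stated here, and the ratings and the names `cohens_d`, `trust_citizens`, and `trust_experts` are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference of group_a relative to group_b.

    Uses the pooled sample standard deviation as the denominator.
    """
    n_a, n_b = len(group_a), len(group_b)
    # Pool the two sample variances, weighting each by its degrees of freedom.
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Hypothetical trust ratings on a 5-point scale; NOT the study's data.
trust_citizens = [4, 5, 3, 4, 4]
trust_experts = [3, 4, 3, 4, 3]

d = cohens_d(trust_citizens, trust_experts)
print(f"Cohen's d (citizens vs. experts): {d:.3f}")
```

Swapping the two groups flips the sign of d, which is why a comparison with citizens as the baseline can be reported as a negative value. By Cohen's conventional benchmarks, values around 0.2 are usually read as small, so a coefficient can be statistically significant while its standardized magnitude remains modest.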

In addition, analyses of acceptance and perceived fairness yield similar results, with the only difference being that developers as account givers do not significantly improve perceived fairness compared to AI systems. The results are shown in Tables A1 and A2 of the Appendix.

Although randomization in the experiment minimizes threats to causal inference, we included control variables as a robustness check. As shown in Table A3 of the Appendix, the inclusion of these controls did not substantively alter the coefficients or their significance levels, reinforcing the robustness of our findings.

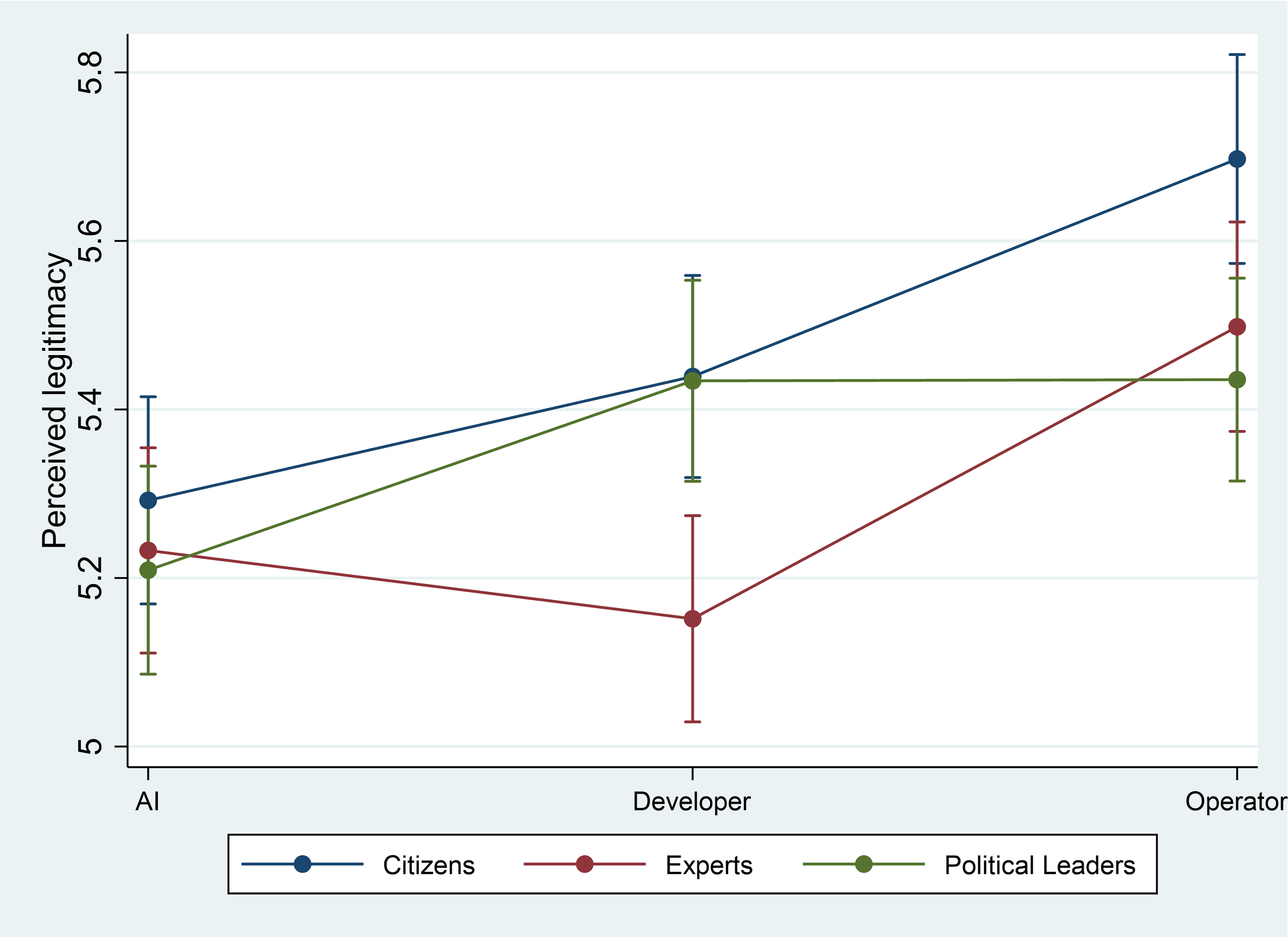

We further explored the interactive effects between account givers and holders, as presented in Figure 3. First, citizens as account holders are considered the most legitimate for any account giver. Second, political leaders as account holders are perceived as most legitimate when developers are account givers and least legitimate when AI systems are account givers. Third, compared to citizens or political leaders, experts as account holders have no relative advantage for any account giver.

Interactive effects of account givers and holders.

Conclusion and discussion

The inclusion of AI in public decision-making has raised significant challenges and concerns about public accountability. Against this backdrop, our analysis provides insights into the impact of account givers and holders in accountability arrangements on perceived legitimacy. The results yield three core conclusions.

First, when it comes to assigning the role of account givers, human agents are perceived as more legitimate than AI systems, with bureaucratic operators perceived as more legitimate than technological developers. This finding contradicts the proposition that AI can be viewed as a moral agent, at least within the public sector (Avila Negri, 2021; Stahl, 2006; Sullins Lll, 2006). Consequently, it supports the argument for placing humans at the center of accountability (Novelli et al., 2023; Santoni De Sio and Mecacci, 2021). Furthermore, as anticipated, bureaucratic operators are considered more legitimate account givers than technological developers. This is because they are the primary actors in asserting the legitimacy of the administrative state, with a more direct and visible link to public decision-making (Busuioc, 2020). Therefore, to avoid a potential legitimacy crisis, the introduction of AI in the public sector should be accompanied by a governance framework that explicitly holds bureaucrats accountable (Bignami, 2022). In this regard, two contrasting situations warrant particular attention. On the one hand, bureaucrats may use the appearance of technological legitimacy to obscure their psychological tendencies toward “automation bias” and “selective adherence,” thereby adopting blame-avoidance strategies in the face of failures (Alon-Barkat and Busuioc, 2022; Selten et al., 2023). On the other hand, bureaucrats may confront the proprietary, nontransparent, and noninterpretable nature of AI systems, which prevents them from controlling the decision-making and ultimately reduces them to scapegoats for failures (Busuioc, 2020; Santoni De Sio and Mecacci, 2021).

Second, in terms of the account holders, citizens are perceived as more legitimate than political leaders and experts. This finding resonates with existing research on the positive effects of social accountability (Brummel and de Blok, 2024) and further provides empirical evidence on social, professional, and political accountability in the AI era (Bovens, 2007). Broadly, this finding confirms the claim for a citizen-centered approach to AI governance, as citizens not only provide the democratic mandate but are also the ultimate recipients of AI decisions (Wilson, 2022). However, while the role of citizens as account holders for AI-involved decision-making is theoretically warranted, it remains doubtful whether this proposition can be put into practice, as citizens lack concrete channels for substantive involvement and struggle to reach meaningful solutions (Wang and Liang, 2024). Therefore, future research can analyze the feasibility and effectiveness of different account holders in AI-involved decisions. In addition, the finding on the relationship between experts and perceived legitimacy is remarkable and contrasts with our hypothesis. In our theoretical hypothesis, experts can better understand the rationale behind AI-involved decisions and make objective and effective judgments, making them well suited to take the role of account holders (Bovens, 2007; Schillemans, 2011). The inconsistent results may be attributed to the Chinese context. As experts in China are more or less connected to the government, citizens may assume that experts are as concerned with efficiency as the government, rather than with ethical and moral risks in accountability. Therefore, they have less trust in experts and hold a populist tendency that the will of the people should take precedence over expert opinion (Shao and Ieong, 2024). The aftermath of the COVID-19 crisis may have further strengthened citizens’ populist tendencies and low trust in experts.

Third, our exploratory analysis suggests that citizens, as account holders, are perceived with the highest legitimacy in interactions with any account giver. Notably, political leaders, as account holders, achieve a similar level of perceived legitimacy only when technological developers act as account givers. This finding deepens the view of populism mentioned earlier, supporting direct democracy instead of stealth democracy (Mohrenberg et al., 2021). Considering the complexity of AI systems and the specialization of technological development, a more robust ethical standard with transparency and interpretability is a necessary prerequisite for citizens’ engagement in AI accountability (Ulnicane et al., 2021). Otherwise, there could be extensive “forum drift” (Schillemans and Busuioc, 2015). In addition, the positive perceived legitimacy of political leaders overseeing technological developers underscores the importance of public–private partnerships in AI implementation (Mikhaylov et al., 2018). To support such partnerships, some departments have introduced ICT technocrats, such as Chief Information Officers, to overcome informational dilemmas and accelerate project management. However, it is important to note that these technocrats often have limited awareness of the ethical implications of AI, which may hinder effective AI accountability (Criado and De Zarate-Alcarazo, 2022). Generally speaking, considering that public accountability hinges on the relationship between account givers and holders, our finding enhances the understanding of the configurations between account holders and givers (Bovens, 2007). This aspect has been underexplored in previous studies because the role of the giver was fixed and clear. However, in the AI era, it becomes increasingly significant, as AI complicates the determination of who should be held accountable (Busuioc, 2020; Santoni De Sio and Mecacci, 2021).

It is important to acknowledge that although the main findings of this study are statistically significant, the effect sizes are relatively modest. This reflects adequate statistical power in our experiment, but may raise questions about the practical significance of the results. We believe the relatively small effect sizes are partly due to the design of our vignettes, which depicted neutral, procedural scenarios of AI-assisted welfare distribution without introducing any explicit failure or adverse consequence. This design choice is critical for interpreting the findings, as prior research suggests that public demands for accountability tend to intensify significantly in response to focusing events, which are salient, often negative incidents that draw attention to policy failures or institutional shortcomings (Birkland, 1997). Real-world examples also show that errors in AI systems can trigger severe political repercussions, such as government compensation, legal liability, and even cabinet resignations (Rinta-Kahila et al., 2022). In this context, our findings are best understood as reflecting a precautionary baseline: citizens expect accountability mechanisms even in the absence of visible problems. These effects likely represent a lower bound that would become more pronounced if actual failures occurred. This insight has important practical implications: accountability systems should not be designed only as reactive responses to crises, but rather as proactive safeguards. We therefore encourage future research to explore public attitudes toward accountability arrangements in more realistic, high-stakes scenarios involving AI-related failures, in order to assess the full scope and intensity of public expectations.

Our study also comes with some limitations. First, the generalizability of the findings may be constrained by our experimental design. The decision scenario (AI-assisted allocation of social welfare subsidies) is closely tied to personal interest, which may have heightened citizens’ perceived stakes and influenced their preferences (Mizrahi and Minchuk, 2019). It remains uncertain whether similar patterns would emerge in less self-relevant domains. Moreover, while our mixed design combines within- and between-subject elements to enhance statistical efficiency, it may also introduce biases such as demand characteristics or consistency-seeking behavior, as participants respond to multiple vignettes. Although randomization helps mitigate some risks, others may persist and subtly shape responses. Future research could refine and expand on our findings through more diverse scenarios and tailored experimental designs.

Second, given the hypothetical nature of the vignette experiment, our findings face the problem of external validity, making it uncertain to what extent they can be applied to real-life scenarios. While our results suggest that citizens are best suited to act as account holders, they may encounter significant barriers in practice, such as a lack of engagement channels and insufficient capabilities to participate effectively (Wang and Liang, 2024). Also, placing frontline bureaucrats at the center of accountability may lead to negative consequences, such as blame avoidance or passive inaction, which could reduce the effectiveness of citizen–state interactions (Aleksovska et al., 2019). Generally speaking, theoretically determined approaches to improving legitimacy may not work best in practice (Schillemans, 2016). The complex field setting may involve multifaceted aspects that our experimental setting may not fully capture. Future research should consider real events related to AI accountability, examining their causes, processes, and outcomes. Qualitative research could play a crucial role in this regard, providing in-depth insights into the nuances of accountability mechanisms and their practical implications.

Third, cross-national differences in institutional and political contexts may substantially shape how citizens perceive accountability. Some of our findings may be closely tied to the Chinese context, including the previously mentioned populist tendencies, relatively low levels of trust in experts, and the centralized administrative structure. In other countries, citizens may rely more heavily on professional expertise or elected representatives as legitimate account holders, depending on prevailing governance norms and civic culture. Additionally, the forms of human–AI collaboration in the public sector and the sources of bureaucratic legitimacy can also vary considerably across different countries (Meijer et al., 2021; Weber, 2012). Future studies should replicate and extend this research using more pluralistic experimental designs across diverse national settings, ideally incorporating context-sensitive variations in institutional structure, civic expectations, and AI deployment models. Such comparative approaches would help to assess the robustness and boundary conditions of our findings.

Lastly, while the perceived legitimacy of the public is crucial in accountability, this focus can lead to an analysis that lacks a bureaucratic perspective. A bureaucratic perspective is essential for establishing effective AI accountability systems and for understanding broader bureaucratic behaviors related to AI. For example, accountability arrangements can significantly affect the extent to which bureaucrats adopt AI technologies and how those technologies are implemented in practice. In this regard, principal–agent and reputation management frameworks can provide insights to explain bureaucratic perceptions and behaviors regarding accountability (Busuioc and Lodge, 2016). Future research should continue to explore these dimensions, using qualitative and quantitative methods to capture the nuanced interactions between AI technologies, bureaucratic practices, and accountability frameworks. The nature of the algorithm, including its transparency, interpretability, and effectiveness, can also be taken into account (König et al., 2024; Wang et al., 2023).

Supplemental Material

sj-docx-1-bds-10.1177_20539517251392100 - Supplemental material for When artificial intelligence meets accountability: Who holds legitimacy as account givers and holders?

Supplemental material, sj-docx-1-bds-10.1177_20539517251392100 for When artificial intelligence meets accountability: Who holds legitimacy as account givers and holders? by Shangrui Wang, Yuanmeng Zhang, Yiming Xiao and Zheng Liang in Big Data & Society

Footnotes

Ethical approval

This study involved a virtual experiment with no physical interaction, posing no risk of harm to participants. Informed consent was obtained from participants to publish their anonymous information.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by National Science and Technology Major Project (2023ZD0121700); Key Project of the National Natural Science Foundation of China (No. 72434002); China Postdoctoral Science Foundation funded project (2023M743362); the 2024 Philosophy and Social Science Planning Project of Guangdong Province (GD24YGL20); Tsinghua University Independent Research Program (20223080026); and Beijing Innovation Center of Humanities and Social Sciences.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The data are available upon request.

Supplemental material

Supplemental material for this article is available online.

