Abstract

This commentary examines the inherent contradictions between participation in artificial intelligence (AI), controversy studies, and AI narratives.

This article is part of the special theme on Analysing Artificial Intelligence Controversies. A full list of all articles in this special theme is available at: https://journals.sagepub.com/page/bds/collections/analysingartificialintelligencecontroversies

The rising awareness of the harms that “artificial intelligence” (AI) may cause for individuals, communities, and the planet (Acemoglu, 2021; Buolamwini and Gebru, 2018; Dhar, 2020; Noble, 2018; Tufekci, 2015) continues to manifest in some of the controversies surrounding AI. These controversies are often subsumed by totalizing narratives about the wholesale remaking of society by AI. Within these frames, “participation” is offered as a way to fix AI's exclusionary and harmful nature (Birhane et al., 2022; Sloane et al., 2022). The nascent field of AI participation ranges from formalized approaches to AI governance and policy (such as the European Commission's “EU AI Alliance”) to explorations of stakeholder participation in the design of AI systems (more akin to “co-design” in machine learning (Donia and Shaw, 2021)). The latter can take the form of conceptual inquiries, for example via workshops at AI conferences such as the International Conference on Machine Learning (Zhou et al., 2020), or of more concrete, domain-specific design specifications, for example for humanitarian aid (Berditchevskaia et al., 2021). These interventions occur against the backdrop of long-held imaginations and beliefs about AI that circulate in society by way of collectively shared narratives. This article examines the relationship between these narratives, forms of AI participation, and the distribution of power vis-à-vis the AI controversy frame. It proposes that participation is an AI controversy that “unblackboxes” AI and considers the implications of this dynamic.

AI, participation, and inequality

The term “participation” has historically been vague and beset by definitional questions. It is perhaps best delimited by its multilayered nature as a concept (for politics), a procedure (for equitable decision-making), and an experience (of becoming a collective), and by its deep enrollment in the project of representative democracy (Kelty, 2020). Despite the growing interest in participation as an emancipatory, inclusive aspiration across all kinds of higher-order design projects, whether in science, innovation, or policy, design inequality persists (Sloane, 2019). Though designed objects are not always used the way designers intend, design practices and ontologies manifest alongside dominant social structures. Therefore, design inequality (as ontology, practice, and social superstructure) occurs at the intersections of race, class, gender, sexuality, disability, coloniality, and other identifiers and social ordering mechanisms. For example, despite a growing movement towards “decolonizing design” (Schultz et al., 2018; Tlostanova, 2017), design at universities is still taught from Anglo-Eurocentric perspectives (Ansari, 2019). This enrolls students and practitioners in harmful dichotomies (Escobar, 2018), locates the origin story of design in the industrial revolution, and others Indigenous design as “craft” (Sbravate, 2022).

Examples of design inequality include public transport systems that remain inaccessible to people with disabilities (Bezyak et al., 2017; Ndebele, 2020); medical devices that still embody the practice of “race-norming” (Braun, 2014); poor people being over-surveilled and over-policed, whether digitally (Eubanks, 2018) or physically (Sloane et al., 2016), which perpetuates class stigma; and product design that reflects gender stereotypes (Mathers, 2017; Perez, 2021). These problems also arise in AI participation. AI is hailed as a highly specialized field that produces a technology so complex that even technical experts (e.g. statisticians, computer scientists, or machine learning experts) have difficulty understanding it fully. Against that backdrop, a narrative unfolds in which participation seems exceedingly hard to realize for people who are not (only) technical experts but who hold other significant expertise (e.g. professional training, lived experience, or literacy in relevant concepts such as feminism (Browne et al., 2023; McQuillan, 2022) or decolonization practices (Mohamed et al., 2020), or a mix thereof (Hamraie and Fritsch, 2019)).

Yet AI is already deeply participatory, since AI is, by design, only possible through data production by ordinary technology users and their continuous optimization of AI systems. Both forms of participation are extractive and do not serve equitable representation or co-determination. Given this extractive nature of AI (as a technology and a business proposition), it is difficult to imagine AI participation that resists defaulting to (technology) design's complicity with inequality (Sloane, 2019), centers community-led practices (Costanza-Chock, 2020), and avoids forms of AI participation best understood as “participation-washing” (Sloane et al., 2022). That said, acknowledging the structural socio-political and technical challenges to AI participation is important but does not explain the relationship between AI participation and larger AI controversies. For that, one must examine how AI narratives facilitate or hinder a more equitable distribution of power.

The relationship between AI narratives and AI controversies

Over the past decades, AI has mobilized collective imagination, futuring, and a generous flow of capital. Despite AI summers and winters, the dream of intelligent machines has been a constant over the past 3000 years and still influences AI development today (Bareis and Katzenbach, 2022; Cave et al., 2018; Cave and Dihal, 2018). Stories of intelligent machines can be traced back to Roman and Greek myths and are often enmeshed in hopes and fears connected to religious beliefs (Liveley and Thomas, 2022; Singler, 2019). Both AI narratives and their “transhistorical, transcultural imaginative” history (Cave et al., 2022) contribute to the emergence of AI controversies because they scaffold rising AI disputes. Put differently, AI narratives, born of “ancient tales of intelligent machines” (Liveley and Thomas, 2022), form a script for contemporary AI epistemology. AI leverages our most profound fears and hopes, individually and collectively, most of which are historically recycled. AI renews questions about ethics and about how we should imagine society's future. In other words, AI narratives make AI controversies possible. It therefore seems unsurprising that AI controversies rarely take the form of dispute and instead often appear deliberate and cultivated.

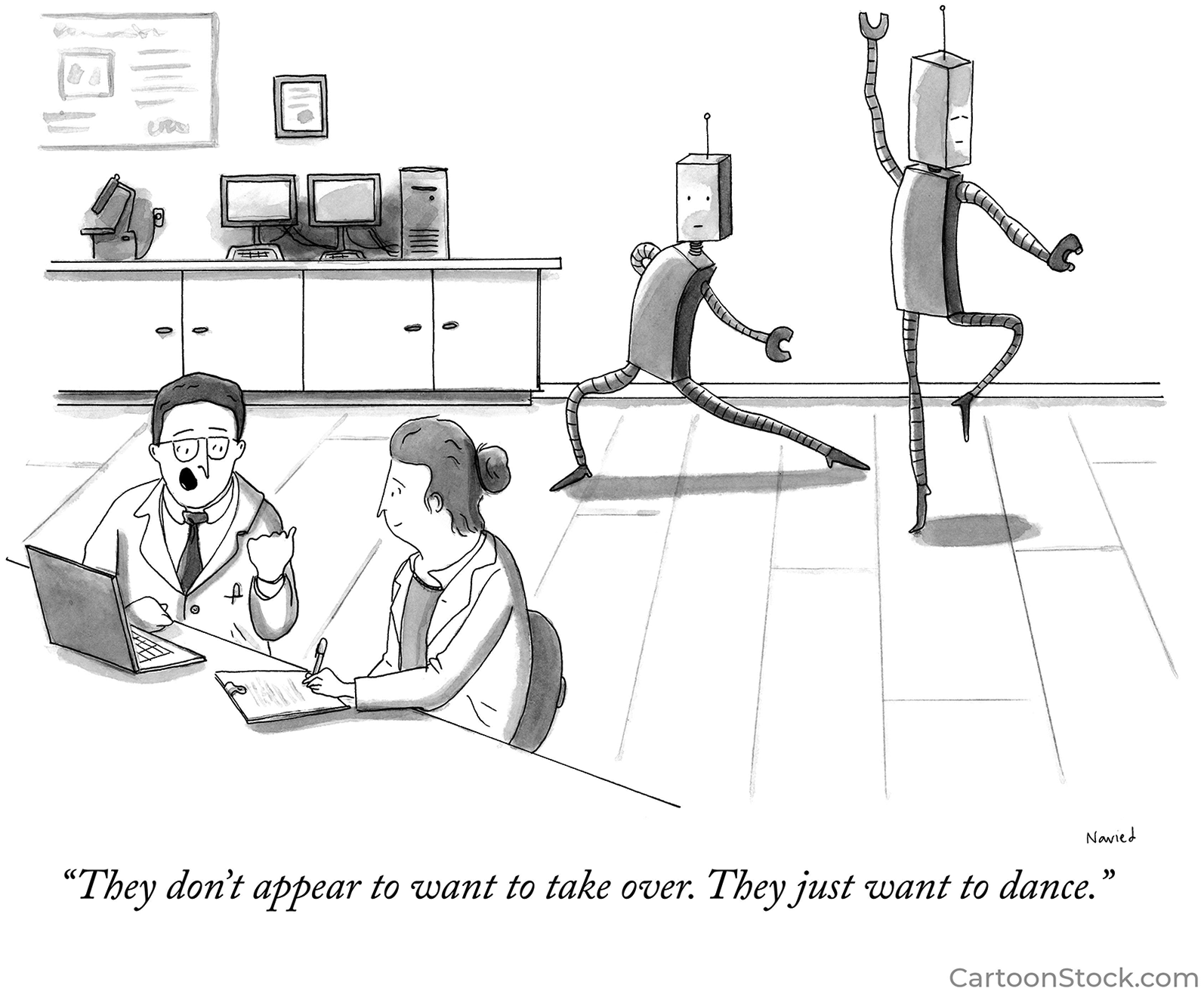

AI narratives tend to follow the path of genius-induced innovation, centering an often male, lonely thinker, inventor, and entrepreneur. Even our AI fears are in “singularity land”: we imagine one coherent superintelligence that becomes our artificial overlord (Bostrom, 2014). Rarely do AI fears manifest as an ever-emergent ecosystem of many different AIs that sprawl in unpredictable, surprising ways. This is astonishing given the significance attributed to (dangerously capricious yet singular) machine agency in AI imaginations and fears (as lampooned in a New Yorker cartoon), as opposed to loosely linked, special-purpose tools. Centering the “singularity” in AI narratives (Singler, 2019), in both the machine and its creator, is infrastructurally exclusionary. It leaves no room for diversity, multiplicity, unpredictability, and everything else that “participation” might bring to the table. This oxymoronic relationship between dominant AI narratives and AI participation materializes in the field of AI (Figure 1).

Figure 1. The New Yorker cartoon by Navied Mahdavian.

Often, controversies in AI relevant to participation lead to ex-post attempts to fix problems that AI applications cause. These include concerns around bias, privacy, value alignment, safety, existential risk, workforce disruption, and de-skilling. Here, the scope of participation is constrained because it addresses specific concerns around a previously designed AI, such as defining fairness, surfacing embodied values for a system, or constraining various functions or data collection practices. On the one hand, this narrows the role of participation to individuals and groups able to declare somewhat coherent normative preferences; on the other hand, it narrows it to AI systems that can enact those preferences. Technically, this means, for example, that users are observed or surveyed so that an AI system can learn their values, or that a fairness metric is specified so that a system can be optimized to meet it. This is a thin form of participation, because it is limited to existing designs with pre-existing purposes. Human preferences are rarely clear and are seldom understood by monitoring behavior. Furthermore, asking participants to help better understand uses for existing AI forecloses participation in deciding what function AI serves, or whether it should be used at all. In other words, participation does not feature in our narratives and imaginations of AI design and innovation.

Against that backdrop, one could argue that AI companies use participation to make products they already have more palatable and less likely to attract lawsuits or restrictive regulation. Participation, in that sense, is part of the marketization of AI (Muniesa et al., 2007). But taking “controversies,” understood as knowledge-making “involving struggles between old and new ideas” (Jasanoff, 2019), at face value in the context of “participation-washing” and its origins raises a more profound question: What is new about AI? AI systems are commonly designed to facilitate decision making, save time and resources, and increase productivity and efficiency. In that sense, AI is a scaling technology. It follows in the footsteps of other technologies that employ classification and categorization to make bureaucratic processes more efficient. These scaling technologies aim to minimize the need for individual judgment. This is only possible by deploying a set of rules, an ancient technique for ordering social life and one that underpins AI. As Lorraine Daston (2022) argues, algorithms are “thin rules” that “assume a predictable, stable world in which all possibilities can be foreseen,” and for the idea of AI to emerge in the late 19th and early 20th centuries, “the reduction of intelligence to a form of calculation had to seem both possible and desirable.” AI is neither a new idea, nor are its premise and underlying logic controversial per se. This also holds for novel AI applications such as “generative AI,” which produces new content like text, images, or audio, since it, too, is nothing more than a blackboxed set of rules. Because we neither see the process that produces results nor know its governing rules, we mistake the resulting opaqueness for “intelligence” (Daston, 2022). Again, the lethargy and predictability of many AI controversies may be explained by this relationship: there is nothing “new” defying the old.

AI participation as controversy

Interestingly, AI narratives feel more like controversies when they challenge the dominant “singularity” narrative through interventions that transcend the typical participation script: that is, through actual participation (or its visible failure) by people who are marginalized by AI creation, particularly when this participation focuses on “unblackboxing” AI, which demands that a more diverse set of actors outline the technical configurations and design of AI systems and identify their limitations. A recent, prominent case of this phenomenon is the controversy induced by the firing of Google researcher Timnit Gebru, a Black woman, over her work on AI ethics, specifically her work debunking natural language processing systems (Bender et al., 2021; Metz and Wakabayashi, 2020). Institutionally, these “real” AI controversies prompt practices of variously ignoring, shunning, or selectively championing “participants.” These include systematically overlooking critical voices demanding inclusion, shunning critics who challenge corporate positions that may give them power over AI products, or using the employment of people from minority groups for “diversity-washing” (Baker et al., 2022). More recently, they can also include the co-optation of critical AI discourse, steering away from warnings about inequitable AI designs and outcomes and towards warnings about sentient machines that may dominate and harm society. The “godfather” of AI, Geoffrey Hinton, framed his departure from Google, after a decade at the company that bought his AI firm for $44 million, as a necessary step to warn society of AI risks. According to him, these risks include large-scale unemployment following AI-driven automation and uncontrollable AI-driven weapons, or “killer robots” (Metz, 2023).
Here, a prominent and powerful actor re-conscripts AI into what Finn Brunton calls “fucking magic”: a process whereby AI becomes “a perfect container for our own anxieties about consciousness, intelligence, control, agency” (Brunton, 2019). The “fucking magic” of AI tends to be practiced by powerful actors whose authority flows from technical expertise and who shape AI narratives at a high level. AI experts invoking AI's “fucking magic” aim to shape “Big Futures,” which imply fundamental, inevitable, and large-scale changes to the status quo (Michael, 2017). They frequently sit opposite the unscripted and often unwanted participation in shaping AI narratives by experts who “unblackbox” AI by showing how specific risks flow from specific AI design features that map onto structures of inequality.

On the one hand, AI narratives that are infrastructural to our collective imagination, practices of innovation, and public discourse have little room for the multiplicitous perspectives and complexity that (co-deterministic) participation requires. On the other hand, little about AI is new, and the illusion of its “blackboxed” nature (which continues, rather than innovates, bureaucratic automation) tends to be disturbed only by participation in AI discourse that attacks AI's “fucking magic” and refocuses on linking specific design decisions to real AI harms and systemic inequalities. This relationship maps onto more recent critiques of participation and design. Having morphed into a “formatted procedure” that creates “calculated consensus” (Kelty, 2020), participation has lost power over time. Similarly, design has been accused of failing to de-emphasize designers’ ideologies and to produce socially beneficial outcomes through participatory design, co-design, or social design (Busch and Palmås, 2023). If participatory design is in crisis, and traditional “controversy studies” give little consideration to the intersections of history, power, and AI narratives, how can we produce new, more meaningful approaches for foregrounding the effects of AI innovation on society? A first step could be deliberately tracing AI narratives and their cultural genealogies vis-à-vis the structural inequalities mirrored in how we order and organize society (including in and through forms of design). A second could be acknowledging that participation “unblackboxes” AI, a dynamic that will always disrupt AI's desired status as a quasi-magical entity. A third could be deliberately reframing participation as unscripted, unpredictable, and necessarily beyond the grasp of bureaucratic control. Squaring this with AI's nature as a bureaucratic project will be challenging, but necessary and worthwhile.
It will lead to routine examinations of AI's cultural assumptions and the social position of those who tell and re-tell AI stories.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the NYU Center for Responsible AI.