Abstract

Artificial intelligence has become an issue in public policy. Multiple documents issued by public sector actors link artificial intelligence to a wide range of issues, problems or goals and propose corresponding measures and interventions. While there has been substantial research on national and supranational artificial intelligence strategies and regulations, this article is interested in unpacking the processes and priorities of artificial intelligence policy in the making. Conceptually, this article takes a controversy studies lens onto artificial intelligence policy, and complements this with concepts and insights from policy studies. Empirically, we investigate the emergence of German artificial intelligence policy based on content analyses of policy documents and expert interviews. The findings reveal a late, but then powerful institutionalisation of artificial intelligence policy in German federal politics. Artificial intelligence policy in Germany focuses on funding research and supporting industry actors in networked configurations, much more than addressing societal concerns on inequality, discrimination or political economy. With regard to controversies, we observe that German policy is evading controversies by normalising artificial intelligence both with regard to taking artificial intelligence integration in all sectors of society for granted, as well as by accommodating artificial intelligence issues into the routines and institutions of German policy.

Keywords

This article is part of the special theme on Analysing Artificial Intelligence Controversies. A full list of articles in this special theme is available at: https://journals.sagepub.com/page/bds/collections/analysingartificialintelligencecontroversies

Introduction

Although the notion of ‘Artificial Intelligence’ (AI) denotes a wide array of technologies and systems, this vagueness has not hindered its remarkable uptake in technological, public, and political debates and a massive global roll-out of resources, products, and policies (Berente et al., 2021; Gasser and Almeida, 2017; Jordan, 2019). Political actors in particular have demonstrated great interest in funding, governing, and fundamentally shaping technological and social developments of AI. Recent scholarly work focuses strongly on high-profile regulatory initiatives such as the European AI Act, the AI convention by the Council of Europe, or the US Executive Order (Hacker, 2023; Tallberg et al., 2023). However, many national governments have already been involved in AI governance for years, drafting strategic documents as well as AI policies and regulations that precede inter- and transnational initiatives. There is increasing scholarship that analyses these national AI strategies and policies, yet mostly as documents containing specific narratives and setting regulatory agendas (Bareis and Katzenbach, 2022; Ossewaarde and Gulenc, 2020; Paltieli, 2021; Radu, 2021; Ulnicane et al., 2021).

This article takes a different approach: rather than considering AI policy and documents as consolidated endpoints of a political process, we are interested in unpacking the processes and priorities of national AI policy in the making. From this perspective, AI strategies and similar documents act not only as instruments to manifest and realise specific policies and regulations, but also enact diverse functions. Asdal (2023) has demonstrated in the context of environmental policy how policy documents organise political action, including by preparing the ground for how an issue is handled and by inviting or ignoring stakeholders and the public. Given that the vague notion of AI functions as an ‘umbrella term’ (Rip and Voß, 2019) that mediates between developments in science and technology, and societal and political concerns, this unpacking of policy processes is even more important in the context of AI. Analysing AI policy in the making sheds light on how this mediation happens, which issues are made to matter, and how they are framed. It pokes at the ‘uncontroversial thingness’ of AI (Suchman, 2023) by looking into what policy issue AI is taken to be. ‘[T]here is a tendency across sectors to contend with AI as something inevitable, [but] it is also still the object of controversy and contestation’ (Jobin and Katzenbach, 2023: 51). So while there are certainly controversies in public debates and policy spaces with regard to AI, we lack systematic investigation into which topics become controversial (Marres et al., 2024) and whether that also means that they are actually being addressed in the regulatory process.

This paper seeks to unpack this contingent formation of AI policy with a conceptual framework building on controversy studies rooted in Science and Technology Studies, as well as policy studies. Empirically, we investigate the emergence of German AI policy based on content analyses of policy documents and expert interviews. A key theme in this investigation will be the delicate and ambiguous balancing of the controversiality of AI. In many ways, the policy sector, at least for the German case, displays strong characteristics of ‘cold controversies’ as Dandurand et al. (2023) have identified them for Canadian media reporting on AI, instead of articulated ‘hot controversies’ (Callon, 1998; Venturini and Munk, 2021). Upon closer inspection, some AI policy issues are quite controversial, despite controversies being invisible at the level of documents. In our analysis, we use the notion of ‘controversies covered’ to denote this ambivalence of some issues being formally addressed (negotiations did cover this aspect) yet not being resolved, and finally being intentionally excluded from resulting policy documents (results cover, in the sense of hiding, the controversial character of these issues).

The following parts will, first, resort to controversy analysis and policy studies as theoretical perspectives that will help to understand the formation of AI policy in Germany. We will then, second, situate our own methodological approach and position the illustrative case of AI policy in Germany. We then continue, third, with the presentation of the German case and discuss its broader implications. This includes the temporal and actor dynamics of AI policy in Germany, the framing and issue formation, and the modes of governance being discussed. We conclude the discussion with a reflection on the role of controversies. We observe that German policy is evading hot controversies by normalising AI both with regard to taking AI integration in all sectors of society for granted, as well as by accommodating AI issues into the routines and institutions of German policy.

Studying AI controversies and public policy in the making

This article takes a controversy studies lens onto AI policy, and complements this with concepts and insights from policy studies. This combination of approaches allows us to develop a processual perspective on AI policy in the making, accommodating both an analysis of the policy documents and findings from interviews with policy-makers and experts involved in the process. Specifically, we will build on the observation that policy in general, and AI policy in particular, is not always a space of ‘hot controversies’ (Callon, 1998; Venturini and Munk, 2021), but is rather characterised by a distinct balance of issues that are problematised and transformed into controversies on the one hand, and topics that are being kept out of political controversies on the other.

Controversy studies and technologies

The study of controversies is rooted in Science and Technology Studies (STS), and it approaches scientific controversies as a process that is inherent to scientific and technological practices and that can operate on different levels of dispute (Pinch and Leuenberger, 2006). In general, controversies can be assessed as disagreements between actors, mostly emerging when conventional beliefs are challenged (Venturini, 2010: 261–262). The most prevalent form of controversy studies in the last two decades has certainly been the empirical method based on the cartography of controversies, as proposed by Bruno Latour in order to realise actor-network theory methodologically and in increasingly digital contexts (Latour, 2005; Venturini, 2012). In this approach, controversies are assessed as ‘situations where actors disagree’ (Venturini, 2012: 1), and the complexity of such situations is acknowledged by exploring and ordering extensive material in cartographic research studies. Producing a map of a controversy is then based on recognising all viewpoints of a debate while accounting for their representativeness, influence, and interest, which can allow for choosing focal points and actors to represent the controversy in an adequate way (Venturini, 2012: 2–4). At the same time, ‘social cartography does not require any specific theory or methodology’ (Venturini, 2010: 259). Data analysis can encompass a wide range of different techniques like visualisation (Beck and Kropp, 2011), supported by different quantitative and qualitative coding strategies (Burgess and Matamoros-Fernández, 2016) of documents, media coverage, websites, involved actors, social media data, and so on.

With this processual perspective of controversy studies, scholars have grappled with a plethora of technological advancements in the past. These studies approached their subjects from different angles and through different approaches, depending on the involved actors, contexts, and the technology as such. For instance, through studying the so-called ‘Dieselgate’ scandal, Marres (2021) scrutinises the way that public controversies changed to a ‘genre of publicity’ (Marres, 2021: 12) in light of digital marketing strategies of enterprises that employ beta-testing as a way to reframe a controversy to claim organisational learning. And, in accordance with the subject of this study, Cardon et al. (2018) provided a detailed account of the chronological development of the controversy within the AI research community through explicating how the field has continued to be divided into two communities (connectionist vs. symbolic research traditions), each pursuing different approaches, while both falling under the broader category of ‘Artificial Intelligence’.

From controversy studies to issue mapping and framing

While this strand of research initially focused strongly on technological and epistemological controversies, it has increasingly adopted a more pronounced political approach, assessing how scientists and science shape political processes as well as, conversely, how politics impact science and knowledge production (Pinch and Leuenberger, 2006). The study of public controversies has, for example, highlighted how problematisations of certain technological developments challenge the way ‘innovation’ is worshipped in society (Marres, 2021: 4). In this setting, processes of closure in political controversies are mostly induced through economic and political interests (Pinch and Leuenberger, 2006).

Conceptually, this more pronounced political approach has also led to a shift in epistemological sensitivity and attention, in particular towards not taking controversies for granted as robust entities but focusing more on their emergence and situatedness. Most prominently, Marres (2015) argues that empirical analyses should develop from controversy mapping to issue mapping, particularly in the context of digital media environments but with implications beyond. Annemarie Mol had already recommended shifting the entry point for empirical analyses from established controversies to tensions and issues, highlighting that tensions often ‘do not turn into controversies but get distributed over different sites’ (Mol, 2003: 107). Consequently, instead of classic controversy mapping that starts with a solid controversy and goes from there, scholars interested in the emergence of issues and controversies should leverage the openness of issue mapping and discern patterns of issue formation from a processual perspective, Marres argues (2015: 672). Focusing on issues instead of controversies allows one ‘to get beyond the loudest voices and binary oppositions, to reveal the multi-sidedness and intersectionality’ (Burgess and Matamoros-Fernández, 2016: 93) of issues and controversies.

These conceptual movements coincide with approaches from policy and communication studies that address the question of whether and how issues enter the political arena. Specifically, literature on political agenda setting, politicisation, and framing is helpful for this article's ambition to understand how AI is coming into being as an issue in policy, as these strands of literature foreground the role of discourse in policy making. Scholars in political science and agenda-setting theory (McCombs and Shaw, 1972; Russell Neuman et al., 2014) have long investigated how topics become political issues (Downs, 1972) and which actors and stakeholders shape the political agenda (Kingdon, 2014: 15–16). More recent work highlights the performative role of discourses and processes of politicisation. Issues do not simply transfer from smaller arenas to the political agenda, but are transformed and only properly constituted in the process of politicisation and in connection with parliamentary-administrative processes and resources (Haunss and Hofmann, 2015: 33). In this process, changes in narratives are considered crucial for issues entering the political agenda (Baumgartner and Jones, 1991: 117). With such narratives, one can challenge or safeguard the social order, determine causal or responsible agents, empower actors to tackle the problem, and establish new alliances (Stone, 1989: 295). For instance, emphasising positive aspects of AI, its potential for economic growth or presenting it as a technological solution to societal problems (Katzenbach, 2021) may result in different outcomes compared to highlighting the risks and the need for strict regulation. Therefore, the type of attention given to an issue – paired with its definition and negotiation – is significant in untangling how policy actors make decisions about an issue. The concept of framing focuses on the contingency and politics of potential perspectives on an issue (D’Angelo, 2002). While agenda-setting contributes to the availability of an issue, framing attaches specific information or ideas to an issue and thus makes it applicable (Tewksbury and Scheufele, 2019). Agenda-setting thus affects whether individuals and collectives prioritise and contemplate an issue, whereas framing affects how they contemplate it (Scheufele and Tewksbury, 2007). Through the introduction and utilisation of specific frames, actors can influence how the public but also policymakers perceive the origin, overall importance, and possible treatments of issues on the agenda (Jungherr et al., 2019). This struggle for the definition of an issue is crucial in determining whether previously disinterested parties will become engaged, and when combined with institutional control, whether it can lead to rapid change. Taken together, controversy studies, issue formation, and framing allow for a processual approach to unpack AI policy in the making, with a sensitivity for the temporal dynamics and actors, topical priorities and framings that are dominant in this process.

Controversies and AI

In this article, we focus on questions of issue formation, framing and controversies in the emergence of AI policy in Germany. The role of controversies in AI development and regulation has been considered differently in existing research: In general, AI is broadly considered a highly disruptive technology, radically changing established ways of knowing and doing (Roberge and Castelle, 2021). This discourse ties into long-standing narratives of AI being ‘revolutionary’ and triggering fast-paced change (Bareis and Katzenbach, 2022; Edwards, 1996). Critical scholars have pointed to the fundamental harms to society and the planet that this technological and social development brings about (Crawford, 2021), including the perpetuation of inequalities and discrimination (Bechmann and Bowker, 2019). More recently, Marres et al. (2024) have observed industry appropriation of the controversy discourse on AI, arguing that these strategic assertions of AI's controversiality by tech entrepreneurs and major companies help them to reinforce the deep integration of AI into society and to keep a firm grip on the public understanding of AI in the face of growing criticism and regulation. Somewhat ironically, this powerful assertion of the controversiality of AI firmly manifests the ‘uncontroversial thingness’ of AI (Suchman, 2023), consolidating AI as a necessary and decisive ingredient of contemporary societies.

In controversy studies, a distinction between hot and cold controversies is widely established (Venturini, 2010). This distinction builds on Callon's characterisation of hot and cold situations. In hot situations, everything becomes controversial, including legitimate forms of knowledge, frames of references, as well as actors and their interests (Callon, 1998: 260–261). In contrast, in cold situations tensions can be resolved within established routines and institutions: ‘actors are identified, interests are stabilised, preferences can be expressed, responsibilities are acknowledged and accepted’ (Callon, 1998: 262). Venturini (2010: 7) has since urged researchers to ‘avoid cold controversies’ in their study of technoscientific problems because ‘actors [already] agree on the main questions’. As discussed, Marres (2015) and Mol (2003) have convincingly argued for a more symmetric approach to the study of controversies and tensions that includes cold controversies in the analysis. Yet, the distinction between hot and cold might still be of analytical value. In the context of the Canadian debate on AI, Dandurand et al. (2023) have shown that the absence of hot controversies does not necessarily indicate a stable consensus and routine institutionalisation of an issue. In their analysis of Canadian media reporting on AI, they rather observe a ‘freezing out’ of controversies by a confluence of routine economic reporting and prioritisation of few expert voices to ‘close debates and obfuscate contrasting expectations’ (Dandurand et al., 2023: 3). This yields interesting ramifications for understanding the formation of AI as a key sociotechnical institution (Jobin and Katzenbach, 2023), including the role of different actors, issues, framings, and modes of governance for AI, which we seek to unpack in this article for the case of German AI policy.

Methodology: Understanding AI policy in Germany

Against this background, this article studies the formation of AI policy-making in Germany in the time period from 2012 to 2021. This time period is critical as it constitutes the phase in which AI has not only experienced its most recent ‘summer’ (Markoff, 2016) but, much more importantly, is being powerfully integrated into our societies by an unprecedented allocation of economic and political resources, while AI debates and developments remain vague and unreliable (Narayanan and Kapoor, 2024). Germany, as Europe's major economic force, is a key country for the global roll-out of AI governance, and an interesting case for potential controversies given the traditionally strong concerns about privacy and data protection (Hornung and Schnabel, 2009; Kozyreva et al., 2021) as well as stronger regulatory interventions on technology in the light of a risk-averse society (Hofstede, 2001; Lee et al., 2013).

Building on the presented conceptual literature on controversies, issue formation, and framing, we are particularly interested in four dimensions of AI policy-making in Germany: (1) First, we examine the temporal dynamics, key actors, and topical priorities of AI policy-making in Germany in order to achieve a systematic overview of this field, and to better understand driving forces and actors. (2) Second, we are interested in the issue formation and framing of AI-related topics, revealing the specific character of AI as a political issue and its context in Germany. (3) Third, we focus on modes of governance as a key dimension of how AI technologies are being dealt with in German politics. (4) Finally, we are interested in the role of controversies in the formation of AI in German policy-making.

Empirically, we work with a multi-methods approach that combines large-scale content analysis of policy documents with interviews with experts involved in AI policymaking in Germany (cf. Figure 1). We first map AI policies and controversies based on policy documents, and then situate these policies and the underlying processes through interviews with actors involved in the process. This approach reflects the paper's ambition to more symmetrically and systematically understand the formation of AI policy-making in Germany. Given that documents have very different functions in the policy and political process (Asdal, 2023; Schmidt, 2002), and might thus be hard to interpret without context, the interviews serve to situate the documents in the political and procedural context.

Figure 1. Methodology of AI controversy analysis.

The first part of our approach is a content analysis of AI policies in Germany. To build the corpus, we retrieved policy documents containing the term ‘AI’ in German and English in its different grammatical inflections from government bodies on the national level for the time period 2012 to 2021. We understand ‘policy documents’ to include documents such as laws, regulations (including proposals), whitepapers, ethical guidelines, and strategic documents. We only include policy documents issued by state actors that have the ability to make authoritative decisions, as the ‘primary agent[s] of public policy’ (Howlett and Cashore, 2020: 10). We cross-checked the resulting list with the OECD AI Observatory Dashboard (OECD AI Policy Observatory, 2023). As a result, we built a database with the identified 147 documents and their metadata (e.g. scope, issuer, and type of document, cf. Table 1). After performing initial data cleaning (e.g. removing chapters and documents without topical affinity to AI), we applied named-entity recognition (NER) to identify actors in AI policy documents. For that, we used the NLP library Flair, which includes a four-class NER model based on document-level XLM-R embeddings. In addition, we employed a qualitative coding of the policy documents (Karppinen and Moe, 2019). On the first dimension, we coded the policy documents on the sentence level with regard to their function (Hall, 1993; Knill and Tosun, 2020; Weible, 2018), including (1) goals and intentions, (2) suggested measures and actions, and (3) demands, recommendations, and evaluations. Accounting for these elements allows for tracing how policy actors approach an issue, which policy instruments are considered, and – more generally – how they are put into practice. The second dimension of coding builds on a topical codebook, developed from both existing literature and iterative coding of our corpus. The resulting codebook comprised eight first-level codes (economy, labour/work, technology, research, environmental issues, sectors of AI application, concepts/principles, and modes of governance), together with multiple levels of subcodes (cf. Table 1).
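The corpus-building step described above, retrieving documents that contain the term ‘AI’ in German and English in different inflections, can be illustrated with a minimal sketch. The search pattern, the function names `mentions_ai` and `filter_corpus`, and the toy documents are assumptions made for illustration; they are not the query or tooling used in this study.

```python
import re

# Assumed search pattern (not the authors' actual query): the abbreviations
# "AI"/"KI" are matched case-sensitively, the spelled-out terms
# case-insensitively, with German adjective endings (-e, -en, -er, -em, -es).
AI_TERM = re.compile(
    r"\b(?:AI|KI)\b"
    r"|(?i:\bartificial\s+intelligence\b)"
    r"|(?i:\bkünstliche[nrms]?\s+intelligenz\b)"
)


def mentions_ai(text: str) -> bool:
    """Return True if the text contains at least one AI-related term."""
    return AI_TERM.search(text) is not None


def filter_corpus(documents: list[dict]) -> list[dict]:
    """Keep only documents whose text mentions an AI-related term."""
    return [doc for doc in documents if mentions_ai(doc["text"])]


# Toy documents, invented for illustration only.
sample = [
    {"id": "d1", "text": "Strategie Künstliche Intelligenz der Bundesregierung"},
    {"id": "d2", "text": "Förderung der künstlichen Intelligenz im Mittelstand"},
    {"id": "d3", "text": "Bericht zur Energiepolitik"},
    {"id": "d4", "text": "Funding programme for AI-based systems"},
]
selected_ids = [doc["id"] for doc in filter_corpus(sample)]
```

Matching the abbreviations case-sensitively is a deliberate choice in this sketch: it avoids false positives on ordinary lowercase words, at the cost of missing unconventional spellings. A manual check of borderline documents, as in the data cleaning described above, would still be needed.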

Table 1. Overview of classification and coding of policy documents.

The second part of our empirical research is an interview study with federal ministries and the German parliament's Enquiry Commission on AI as pivotal actors in German AI policy-making. Based on a preliminary analysis of our policy corpus, we identified the Federal Ministry for Economic Affairs and Climate Action (formerly the Federal Ministry for Economic Affairs and Energy), the Federal Ministry for Education and Research, and the Federal Ministry of Labour and Social Affairs as the most relevant ministries. Within those ministries, we identified the relevant units with the help of organisational charts, and contacted them with interview requests. In addition, we reached out to 11 out of 19 expert members of the Parliamentary AI Enquiry Commission, which was active between 2018 and 2020. As a result, we conducted nine semi-structured expert interviews (Flick, 2018). Each interview was conducted by two researchers.

Dynamics of AI policy in Germany

In the first layer of analysis, the results of our quantitative and qualitative content analysis reveal the dynamics, key actors, and topical priorities of AI policy making in Germany. With regard to the temporal distribution, policy documents in German federal policy-making related to AI only appeared as late as 2016 with a significant uptake from 2017 to 2020 (cf. Figure 2). While this finding confirms previous scholarship on AI attention cycles in Germany and beyond (Köstler and Ossewaarde, 2021; Lemke et al., 2024), the timing of document releases in our corpus also coincides with the fourth grand coalition government (2017–2021) between the CDU/CSU and SPD, led by Chancellor Angela Merkel. This was the first German federal government to substantially address AI technologies. A significant wave of publications commenced in the latter half of 2018, triggered by the announcement of the government-issued German AI strategy 2018 (Die Bundesregierung, 2018). This AI strategy initiated the publication of numerous follow-up documents to implement the strategy plan across federal ministries. The coverage gradually increased, reaching its peak in the second half of 2020, which marked the beginning of the final year of the government's term.

Figure 2. Documents published per issuer over time.

With regard to key actors, at the level of producing AI policy documents, the Federal Ministry for Economic Affairs and Energy issued the highest number of documents (48), followed by the Federal Ministry of Education and Research and the German Federal Government, each with 17 publications. However, all German ministries have in fact published AI policy documents during this time period, incorporating AI into their strategic plans. As a result of the 2018 AI strategy, AI is widely established in the German government throughout all policy fields, not solely limited to the research and economic ministries responsible for science and technology policies. When looking at the attention and visibility of actors and stakeholders being referenced in federal AI policy (Figure 3), the most prevalent actor types are EU actors (EU-POL), the German government including all ministries and the Federal Government (GOV), other German offices and executive instances (EXE) as well as various international organisations (INT-DIV). However, in the first phase (2017–2019) of AI policy documents in Germany, private sector actors (PRIV) and research actors (RESEARCH) still feature prominently but lose salience from 2020 onwards. In contrast, international and global institutions (EU-POL, INT-DIV, and UNO) clearly increase their prominence in the corpus in 2020 and 2021.

Figure 3. Actor type mentions over time (for all issuers).
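To make the aggregation behind such an actor-type overview concrete, the following minimal sketch computes the share of each actor type among all actor mentions per year. It assumes that NER output has already been mapped to actor categories such as GOV, EU-POL or PRIV; the function name `actor_shares_by_year` and the toy mentions are hypothetical and do not reproduce the study's data or results.

```python
from collections import Counter, defaultdict


def actor_shares_by_year(mentions):
    """Compute, for each year, the share of every actor type among all mentions.

    `mentions` is an iterable of (year, actor_type) pairs, for example one pair
    per recognised entity after mapping entities to actor categories.
    """
    counts = defaultdict(Counter)
    for year, actor_type in mentions:
        counts[year][actor_type] += 1

    shares = {}
    for year in sorted(counts):
        total = sum(counts[year].values())
        shares[year] = {
            actor_type: n / total for actor_type, n in counts[year].items()
        }
    return shares


# Toy mentions, invented for illustration only (not the study's data).
toy_mentions = [
    (2018, "PRIV"), (2018, "PRIV"), (2018, "GOV"), (2018, "EU-POL"),
    (2020, "EU-POL"), (2020, "EU-POL"), (2020, "GOV"), (2020, "PRIV"),
]
toy_shares = actor_shares_by_year(toy_mentions)
```

Reporting shares rather than raw counts is one possible design choice: it keeps years with very different numbers of documents comparable, which matters when document output peaks in particular years.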

In sum, these dynamics of AI policy reveal a late, but then powerful institutionalisation of AI policy in German federal politics. The Federal Ministries responsible for economic and research policy are leading this process, but remarkably all Federal Ministries are participating in this development. This strong adoption and integration of AI into the political system is also reflected in the analysis of actors and stakeholders referenced in our data, showing a strong dominance of political actors over economic actors. Asked about the emergence of AI as a topic in the Federal Ministries, interviewees stressed the continuity of the most recent AI governance debates both with general issues of digital policy but also longstanding debates on AI (INT-BMWK).

Thus, in this first step of analysis with a view to broader dynamics, our research suggests that the German political system has integrated AI into its routine machinery, with strong leadership by existing powerful ministries and institutions and with only little organisational adaptation. In this way, the most recent wave of debates on AI and governance has been put firmly on the agenda, with initially stronger corporate and research references in the documents indicating external triggers, yet with a quick absorption by the political system. With that, German political institutions seem to have avoided a situation in which the structures and the division of labour between political institutions become the object of controversy and re-negotiation (Callon, 1998: 260), that is, a ‘hot controversy’, and have instead forcefully institutionalised AI into their trajectory of internet policy and digital policy (Pohle et al., 2016).

Framing and issue formation in German AI policy making

In the second step, we are looking at which issues were prevalent in the German policy documents to better understand AI governance in Germany and its controversies. Our results reveal a strong focus on framing AI policy as questions of competition and innovation, in line with a focus on funding and industry-academia collaborations. A recurrent topic across documents is the global economic competition when it comes to AI, and the positioning and protection of the German economy, particularly vis-a-vis the US and China. ‘Digitalisation in the United States is the story of dominant, unregulated private corporations. And in China, “big data” primarily stands for “big brother”: comprehensive state control of its citizens. We are against both. Our counter-model to the USA and China is a regulatory model that demands data sovereignty and security, open standards and access within a democratically legitimised regulatory framework’ (Bundesministerium für Wirtschaft und Energie, 2020). 1

This coincides with a dominant ‘need for innovation’ frame and much attention dedicated to Germany-based start-ups in policy documents. This is rather unusual in the German context given traditionally low self-employment and start-up founding rates (Lehrer, 2000). The interviews confirm this finding, with an NGO-based expert observing ‘innovation and competition capacity of the German economy as central frame’ (INT-NGO: 12) in German AI policy discourse. On the policy-making level, this translates into fostering cooperation between research and economic actors in ‘transfer networks’. Such clusters are established to facilitate knowledge transfer from AI research institutes to small- and medium-sized companies (SMEs). This industry strategy has been applied since the 1990s in German innovation policy (Koschatzky, 2000: 10), and is now repurposed for specifically promoting regional clusters of companies and research institutions (Figure 4).

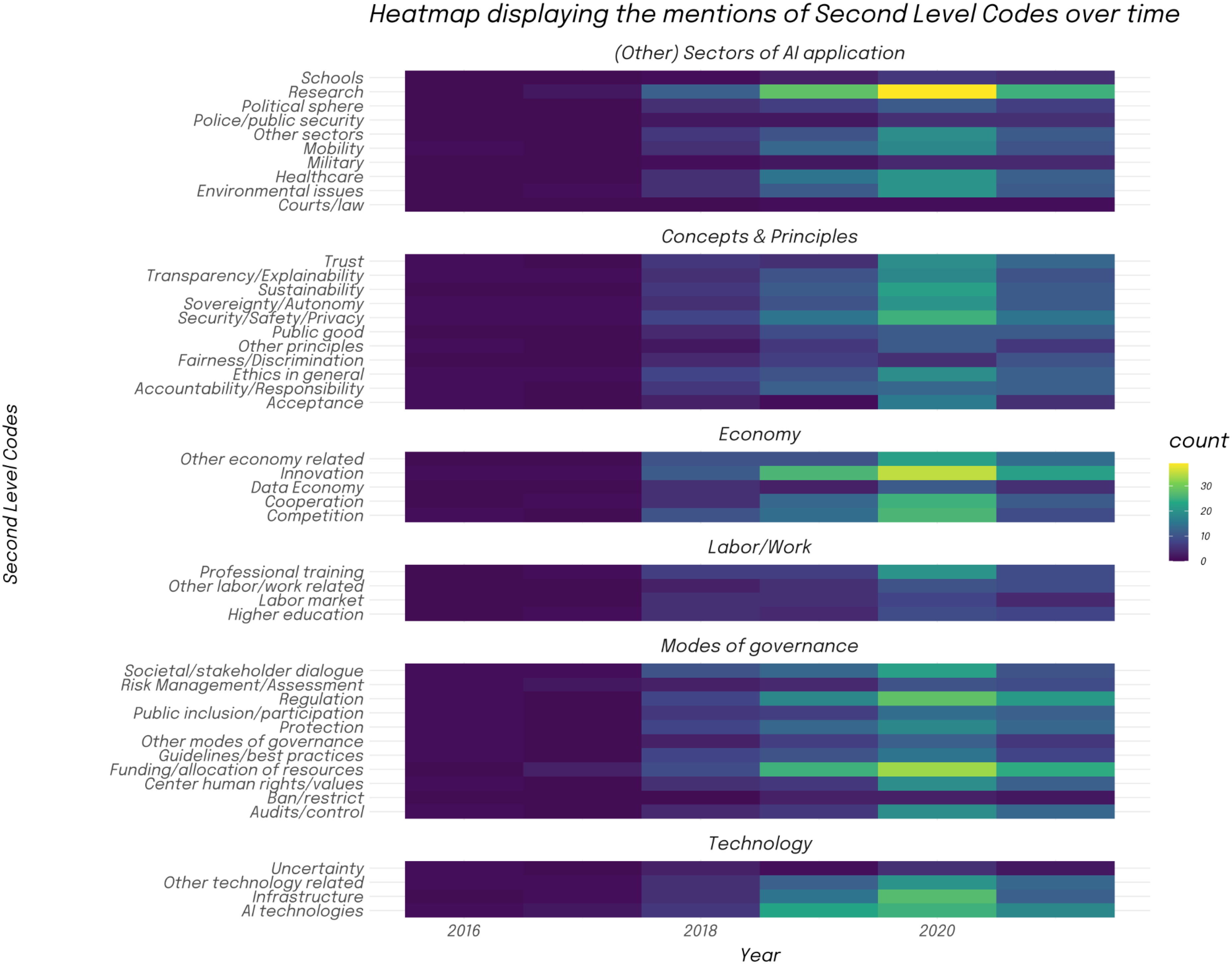

Figure 4. Heatmap of thematic codes over time.

To better understand the framing of AI policy, we also analysed policy documents with regard to underlying concepts and principles. Key reference points are security and safety, as well as privacy. These themes dominate the framing of normative considerations in the documents. Coinciding with the theme of global competition, there is also a recurrent framing of AI developments and policy in terms of sovereignty and autonomy. As a strategy document by the national AI stakeholder platform puts it: ‘The development of AI skills is a key factor for the digital sovereignty and competitiveness of Germany and Europe. This is particularly about the competencies in companies, public administration and specialists to evaluate, review and apply AI technologies and application of AI technologies’ (Bundesministerium für Bildung und Forschung and Plattform Lernende Systeme, 2020: 12). Increasing attention is being dedicated to the theme of sustainability in more recent documents. In addition, there are broad references to ethical considerations, but remarkably few mentions of more concrete ethical and normative concepts, such as public good, fairness or discrimination.

In terms of dynamics and timing, the 2018 national AI strategy is clearly an anchor document in the formation of German AI policy-making. It has shaped Germany's AI policy trajectory with its focus on economic rationales, research funding and supporting industry actors in networked configurations. Led by the three federal ministries of economy, research, and labour and social policy, the AI strategy set the framing and priorities for the years to come. In our data, most thematic codes already appeared in the 2018 national strategy and then stabilised over the following years in documents across all included federal ministries and political institutions. The strong focus on economic and research aspects is set on the agenda here. The first four priority areas identified in the AI strategy are all related to the economy and research (research for innovation, competitive innovation clusters in Europe, strengthening SMEs, and start-up culture). These thematic priorities run across our full corpus of German AI policy documents and have institutionalised this economic framing of AI policy. Although interviewees insisted that AI policy had a history and continuity before the initiation of the national AI strategy, they confirmed that the strategy both broadly set the topics for future AI policy-making (INT-MIN-1) and functioned as a concrete working basis to structure and organise follow-up policy processes (INT-MIN-2).

In many ways, these results indicate that most AI policy documents are starting points rather than end points in AI issue formation and controversies around AI. They set the protagonists and frame the objects of contestation (Latour, 2005; Marres, 2015).

Asdal highlighted, in the context of climate policy, that policy documents do complex work: they must ‘strictly direct action (or the opposite, preclude action) and yet be sufficiently open as to include multiple divergent interests’ and actors (Asdal, 2023: 247). While this certainly does not close key controversies on the role of AI in society, it does substantial work in framing such controversies.

Modes of governance in German AI policy

A closer look into the modes of governance gives further insights into what type of policy issue AI is framed to be. Our results include a broad spectrum of modes, including the allocation of funding and resources, new regulations, risk assessments or technology bans, the establishment of best practices, and the strengthening of public participation. Given their occurrence in the German AI policy documents under study, they constitute plans or concrete suggestions for normative policy instruments. As a consequence, they do not simply describe different ways to govern AI, but explicitly state how AI should be governed from a German perspective.

We have analysed policy instruments mentioned in our documents and tracked their co-occurrence with the institutions that published the document as well as with the sectors concerned. Overall, they correspond, in number and distribution, approximately to the publication dynamic of documents (cf. Figure 5). The more documents are issued in one year, the more mentions of policy instruments can be observed, and the more documents a particular ministry has published, the more policy instruments are mentioned.
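The co-occurrence tracking described above can be sketched in a few lines of code. The following is a minimal illustration only, not the coding procedure used in this study: the records, institution names, and instrument and sector labels are hypothetical placeholders, and the sketch assumes that each coded document is counted once per pairing.

```python
from collections import Counter
from itertools import product

# Hypothetical coded documents. All labels and values are illustrative
# placeholders, not data from the corpus analysed in this article.
documents = [
    {"year": 2018, "institution": "Ministry A",
     "instruments": ["funding", "regulation"], "sectors": ["health", "research"]},
    {"year": 2019, "institution": "Ministry A",
     "instruments": ["funding"], "sectors": ["mobility"]},
    {"year": 2019, "institution": "Commission B",
     "instruments": ["ban", "regulation"], "sectors": ["security"]},
]


def as_list(value):
    """Wrap single codes in a list so all fields can be treated alike."""
    return value if isinstance(value, list) else [value]


def cooccurrence(docs, left_key, right_key):
    """Count in how many documents each (left, right) pair of codes co-occurs."""
    counts = Counter()
    for doc in docs:
        lefts = set(as_list(doc[left_key]))
        rights = set(as_list(doc[right_key]))
        # Each pair is counted at most once per document.
        for pair in product(lefts, rights):
            counts[pair] += 1
    return counts


# Policy instruments by publishing institution and by sector concerned.
instrument_by_institution = cooccurrence(documents, "instruments", "institution")
instrument_by_sector = cooccurrence(documents, "instruments", "sectors")

# Number of instrument mentions per publication year.
mentions_per_year = Counter()
for doc in documents:
    mentions_per_year[doc["year"]] += len(set(doc["instruments"]))

print(instrument_by_institution.most_common())
print(mentions_per_year)
```

Counting each pair once per document, rather than once per coded passage, is a design choice of this sketch; counting at the passage level would weight long documents more heavily.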

Modes of governance.

Allocation of (more) funding to AI is the most frequent mode of governance suggested in German public policy documents. Many ministries propose to allocate more resources to AI. The Ministry of Education and Research is its most dedicated advocate, privileging more funding over all other modes of governance and highlighting the need for resources in 16 of its 17 publications. ‘By funding and promoting the central key technologies, we want to create value, lay the foundations for our future prosperity and […] secure our national and European sovereignty and technological independence’ (Bundesministerium für Bildung und Forschung, 2019). Such statements illustrate how the allocation of resources is positioned to be crucial for governing AI for strategic interests. In addition, the policy instrument of funding often occurred together with the sectors of health, mobility, innovation, and research. This suggests a targeted approach privileging funding and AI development in sectors associated with technological promises of AI like self-driving cars, diagnostic systems, and care robots. Moreover, this strategic focus aligns with Germany's broader economic and technological goals and with solving ‘German problems’ such as the ageing population and decreasing work skills (Ossewaarde and Gulenc, 2020).

Similarly remarkable is the absence of a ban on technologies in almost all German AI policy documents. As a mode of governance, the banning or even restriction of artificial intelligence is suggested only four times across all documents. The instrument of banning or restricting AI came up first in 2019, with the other mentions in 2020. The context of this policy instrument is mostly data protection and data ethics. For example, the data protection conference discussed interventions in data use with AI technologies that have potentially discriminatory effects (Datenschutzkonferenz, 2019), the AI Enquiry Commission recommended restricting AI-enabled political microtargeting, as well as the uncontrolled use of the so-called ‘upload filters’ (Deutscher Bundestag, 2020), and the data ethics commission demanded a total ban of systems with unacceptable potential for damage (Datenethikkommission der Bundesregierung, 2019).

This low level of salience for technology bans in the policy documents is especially remarkable in the context of intense public and political debate on such issues, especially in military and public security contexts. Specifically, the military use of the so-called ‘Autonomous Weapon Systems’ (AWSs) by German forces has been the object of intense public and political controversies (Bächle and Bareis, 2022; Sauer, 2021). Our interviewees have indeed highlighted issues of military use of AI as one of the most controversial issues (INT-RES-1, INT-RES-2, and INT-NGO-1). ‘All issues of AI in the military context were controversial, without consensus’ (INT-RES-1). The policy documents under study do not include bans on AWS, while the Enquiry Commission explicitly included bans because of the controversiality of AWS.

In contrast to common interpretations of controversies, the omission of technology bans in German AI policy indicates that negotiations here are potentially still ‘hot’ in the sense of Callon (1998) rather than ‘cold’. Given that AWSs are in many ways at odds with existing frames of accountability and legitimacy in warfare and in the political institutions concerned, especially regarding questions of autonomy (Bächle and Bareis, 2022; Sartor and Omicini, 2016), their political processing exhibits exactly the kinds of overflow characteristic of hot situations, with actors and frames of reference not yet being stabilised (Callon, 1998). The usual methods of controversy mapping, which analyse the relations and content of published material and documents, are not able to surface such hidden policy controversies. In line with Marres’ and Mol's plea for entering the study of issues not through apparent controversies, but rather through a more symmetric observation of discourses and practices in the field under study (Marres, 2015; Mol, 2003), our combination of content analysis with expert interviews allowed us to reveal the highly controversial nature of this issue, despite controversies being invisible at the level of documents.

Controversy covered, not closed? The normalisation of AI

More broadly, AI policy in Germany seems to be characterised much more by prioritising and normalising AI than by controversies. While the narrative of the ‘AI Tsunami’ (Roberge and Castelle, 2021), which stresses the disruptive and revolutionary character of AI technologies, is quite dominant both in German media and in German AI policy (Bareis and Katzenbach, 2022), our analysis of policy documents and interviews indicates a strong ambition to normalise AI policy and integrate it into existing frameworks and institutions. When controversies arise, such as in the case of AWSs, they are often carved out from routine procedures and essentially excluded from final reports, as in the case of the final report of the AI Parliamentary Enquete Commission. While this process is different from the ‘freezing out’ of controversies observed by Dandurand et al. (2023) in the context of Canadian media reporting on AI, its effect is similar. The specific underlying controversy on AWS is covered rather than closed. There is no consensus on adequate frames of reference, and potentially competing principles and diverging interests are not balanced.

As a result, German AI policy is normalising AI in two ways. First, it takes AI for granted and constructs it as inevitable. In line with earlier research on the German National AI strategy (Bareis and Katzenbach, 2022; Hälterlein, 2024), we find that AI and its widespread deployment across all sectors of society are taken for granted and considered a necessary and inevitable development. For example, none of the (ministerial) interviewees questioned the raison d'être of AI. The ‘uncontroversial thingness’ of AI (Suchman, 2023) has also taken hold of German politics. AI is not primarily presented as a problem that needs to be addressed but as a tool that is ready to be used. Second, our research shows that AI is being normalised into the routines and institutions of German policy. After the exceptional efforts to articulate a national AI strategy and to discuss broader ramifications of AI and necessary regulation in the Parliamentary Commission, the German political system has processed AI mostly as a routine matter. Despite fundamental technological developments and the rhetoric of an AI revolution, German politics evaded ‘hot situations’ with regard to AI policy that would have demanded the re-negotiation of fundamental principles as well as of political and organisational structures. Although there was clearly potential for such hot controversies, German political actors kept AI controversies cold.

Conclusion

In this article, we have sought to unpack the formation of AI policy in Germany, and understand the role of controversies in this process. The empirical findings have revealed a late, but then powerful institutionalisation of AI policy in German federal politics. Lagging behind the dynamic of public discourse (Köstler and Ossewaarde, 2021), the uptake of German policy only substantially started in 2018. The Ministries for Economy, for Research, and for Labour and Social Affairs strongly shaped this development, also positioning their economic framing and funding priorities successfully in the German AI agenda. The 2018 German AI strategy clearly constitutes an ‘anchor document’ that is forcefully framing the future AI trajectory in Germany with a focus on funding research and supporting industry actors in networked configurations. As a result, the framing of AI in German policy is much more that of a technology and of tools that need to be leveraged than that of a problem that needs to be addressed. Regarding normative debates, we have seen surprisingly few normative controversies around questions of discrimination or inequality. Instead, security and privacy dominate the normative dimension in German policy documents. In addition to the strong economic framing, themes of global competition, sovereignty and autonomy have been recurrent throughout our material.

With regard to controversies, we have observed that substantial controversies are repeatedly deferred rather than closed. We have used the notion of ‘controversies covered’ to denote the observed ambivalence of some issues being formally addressed yet not being resolved in the process, and finally being intentionally excluded from resulting policy documents. While controversial issues were not salient in policy documents, our interviews did reveal substantially controversial issues, such as autonomous weapon systems and how to regulate them. In many ways, these results indicate that most of these AI policy documents have served as starting points rather than end points in AI issue formation and controversies around AI. They set the protagonists and frame the objects of contestation (Latour, 2005; Marres, 2015). Instead of closing controversies or regulating issues of concern, we observe how policy documents do complex work here. As Asdal (2023) noted for climate policy, they must balance the need to direct (or preclude) action with being open enough to include multiple, and possibly divergent, interests. In German AI policy, this results in covering rather than closing key controversies on the role of AI in society. But it does substantial work in framing such controversies, for example, by clearly prioritising economic rationales, funding opportunities, and national sovereignty over issues of inequality and discrimination. We have not found much overlap with the problematisations and structural concerns with AI across epistemic, political, economic, and ethical dimensions as identified by Marres et al. (2024) in their study on AI controversy elicitation. As a result, AI policy in Germany seems to cover rather than close controversies, and to normalise AI as a routine issue for policy and political institutions. This process is different from the ‘freezing out’ of controversies in Canadian media reporting on AI (Dandurand et al., 2023), but its effect is similar. It normalises AI instead of articulating and negotiating issues of concern. AI is being normalised in German AI policy both by taking AI proliferation and integration into all sectors of society for granted and by accommodating AI issues into the routines and institutions of German policy.

In the end, German AI policy formation has presented itself as a surprisingly uncontroversial process. While our empirical investigation has indeed surfaced issues of a substantially controversial nature, we must conclude that these controversies have not shaped the political processing of AI and the resulting policy agenda in Germany. On these grounds, we join the chorus of colleagues arguing that there is much to do in countering the normalisation of AI (Suchman, 2023) and in eliciting substantial controversies on AI (Dandurand et al., 2023; Marres et al., 2024). With regard to policy, the process of negotiating the EU AI Act seems to have addressed such concerns and controversies much more, but key settings for the future course of AI governance remain to be addressed in its detailed implementation (Hacker, 2023). This makes the active integration and participation of social sciences and humanities scholars in policy processes an even more important endeavour, even when affirming that our role is more to ask questions and raise issues than to provide regulatory solutions.

Supplemental Material

Supplemental material, sj-docx-1-bds-10.1177_20539517241299725 for Situating AI policy: Controversies covered and the normalisation of AI by Laura Liebig, Anna Jobin, Licinia Güttel and Christian Katzenbach in Big Data & Society

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Deutsche Forschungsgemeinschaft (grant number 440899634).

Supplemental material

Supplemental material for this article is available online.
