Abstract

Against a backdrop of widespread interest in how publics can participate in the design of AI, I argue for a research agenda focused on AI incidents – examples of AI going wrong and sparking controversy – and how they are constructed in online environments. I take up the example of an AI incident from September 2020, when a Twitter user created a ‘horrible experiment’ to demonstrate the racist bias of Twitter's algorithm for cropping images. This resulted in Twitter not only abandoning its use of that algorithm, but also disavowing its decision to use

This article is part of the special theme on Analysing Artificial Intelligence Controversies. A full list of the articles in this special theme is available at: https://journals.sagepub.com/page/bds/collections/analysingartificialintelligencecontroversies

In September 2020, a Twitter user posted a tweet that he described as ‘a horrible experiment’. He tweeted two long images, with former U.S. president Barack Obama at one end, and Republican senator Mitch McConnell at the other. The tweet (shown in Figure 1) presented an implied test of Twitter's algorithm for cropping images, as it would need to ‘pick’ which face to centre and which to crop out to make the images fit within the tweet. In both images, the algorithm cropped out the face of Obama and centred the face of McConnell. The tweet went viral, amplifying accusations of racist bias around the world, and sparking a meme into being through which many other people sought to ‘test’ the algorithm. Soon after, Twitter announced it would abandon the cropping algorithm, claiming not just that the algorithm was harmful, but that they were wrong to use any algorithm for deciding whose faces are visible on their platform (Chowdhury, 2021). Against a backdrop of widespread interest in how publics can participate in and transform AI systems (Birhane et al., 2022; Bunz, 2022), this ‘horrible experiment’ suggests that interacting with algorithms – for example, testing them – may have the capacity to alter the design of algorithms and their influence on society.

Figure 1. Visual explainer of how the Twitter user created a format to test the cropping algorithm.

In this short commentary, I argue for a research agenda focused on AI incidents like this one – defined as examples of AI going wrong in deployment and acquiring political capacities to alter the way that the AI system works – and how they enable participation in AI. I take up the controversy over Twitter's cropping algorithm to argue that such a research agenda must pay attention to how individuals and algorithms interact, how those interactions are circulated and framed as incidents in online environments, and how others (e.g., online publics and technology companies) come to know and come to care, so that changes are made to the AI system.

To develop this argument, I first propose we understand the ‘tests’ of Twitter's cropping algorithm as an example of ‘algorithm trouble’: ‘everyday encounters with artificial intelligence [that] manifest, at interfaces with users, as unexpected, failing, or wrong event[s]’ (Meunier et al., 2021). However, I further develop the notion of ‘trouble’ by asking how trouble is

Trouble and troublemaking

Trouble

In recent years, examples of AI going wrong and sparking controversy – what I am referring to as AI incidents – have become both widespread and influential. AI incidents have included an AI model offensively labelling a photo of an African-American couple as ‘gorillas’, and Google translating the genders of job roles (e.g., doctor and nurse) in terms of sexist stereotypes (Crawford, 2017). Thousands of incidents have been documented through initiatives such as the AI Incident Database (2023) and have been used to critique algorithms’ design and underlying logic (e.g., Benjamin, 2019), often resulting in successful pressure on technology companies to alter their AI systems.

Meunier et al. (2021) propose we think of AI incidents as ‘algorithm trouble’: ‘unsettling events that may elicit, or even provoke, other perspectives on what it means to live with algorithms’. Their framing suggests that AI incidents are not simply a question of AI going wrong but also individuals and institutions coming to be

In this commentary, I argue that trouble can be further elaborated as

Troublemaking

Ahmed takes up troublemaking as a central concept in her feminist theory (Ahmed, 2017) to describe how interactions can be deliberately disrupted to critique and challenge harmful systems. Her account is helpful for an understanding of AI incidents because it offers a theoretical framework for how individuals can deliberately controversialise elements of social life such that they are experienced as troubling by others and in need of remedy. As a result, this theoretical account can provide questions to ask as part of an analysis of how AI incidents are

Ahmed presents three techniques for troublemaking: pointing out invisible structures, orientating actors around them, and problematising the harm they cause. The first technique – pointing – involves bringing features of a social environment into view, specifically those that are usually invisible, in order that they can be challenged. In Ahmed's (2017, 255) words, some people are ‘bruised by structures that are not even revealed to others’. Troublemaking involves pointing out those ignored instances of harm in social settings to direct attention to them (Ahmed, 2017). This could include pointing out that a joke is sexist, or that someone is being discriminatorily excluded. ‘Making feminist points, antiracist points, sore points,’ Ahmed writes, ‘is about pointing out structures that many are invested in not recognizing’ (Ahmed, 2017, 158). It is by pointing out those invisible structures that others can come to be troubled by them. From this insight, we can take a question for AI incidents:

The second technique involves achieving a shared orientation. For Ahmed, trouble is experienced when actors become collectively oriented around a harmful element of a social environment (such as a sexist joke) and achieve a shared experience. Often this relies on emotive provocation. By focusing attention on harmful features of social life, a troublemaker can disturb or possibly outrage those present to acquire their collective attention. This can lead to interactions breaking down: previously people were laughing at the joke's punchline; now they’re feeling uncomfortable about its sexism. Provoking breakdown offers a technique for bringing actors into a shared experience in which issues can be articulated and debated. This is not to say that those present agree with each other, but that

The third technique is problematising harm. Ahmed's account of troublemaking is specifically interested in exposing harms, with the act of making trouble being designed to contest discriminatory social structures. In this respect, Ahmed translates Garfinkel's ethnomethodology into a political tactic. Garfinkel famously taught his students to ‘[s]tart with familiar scenes and ask what can be done to

To sum up, troublemaking is a technique for pointing out and orienting actors around harmful structures by deliberately disrupting interactions. And it prompts us to ask of AI incidents: How did actors point out something about an algorithm that was previously invisible? How did actors use breakdown to achieve a shared orientation towards it? And how did they frame it as harmful? In the following section, I show how these questions can be useful for analysing AI incidents that unfold in networked environments such as social media, by applying them to Twitter's image cropping AI incident.

Networked trouble

In this section, I suggest that the ‘horrible experiment’ (Figure 1) can be understood as a form of troublemaking. Drawing on Ahmed, I ask: how did actors point, mutually orientate, and problematise harm? I argue that because these interactions unfolded in networked environments, meaning actors are connected across disparate spaces and times rather than in the same room (Cetina, 2009), trouble unfolded in particular ways. It relied on networked media, a format for participation, and the production of inscriptions. These features, which I elaborate in this section, point towards characteristics of troublemaking that unfolds in networked environments such as social media, or what I am calling ‘networked trouble’.

How did actors point?

The first observation we can make about the cropping AI incident is that it required the networking of media. The Twitter user networked his tweet with his followers; his followers networked it with their followers through likes, retweets and memetic remixes; and journalists embedded screenshots of these tweets in their news stories. The troublemaking was networked, then, in the sense that it depended on networking media (photos, tweets, screenshots and news articles) with audiences to bring the previously invisible cropping algorithm into public awareness. This ‘networked pointing’, where people pointed at the algorithm's unrecognised biases through networked media, was necessary for coordinating the attention of actors who were fragmented across the spaces and times of digital environments. This in turn set the stage for disagreement and intervention by leveraging the affordances of networked environments to bring the previously invisible algorithm and its stakes into view.

How did actors achieve a shared orientation?

Shared orientation was made possible by the Twitter user's discovery of a

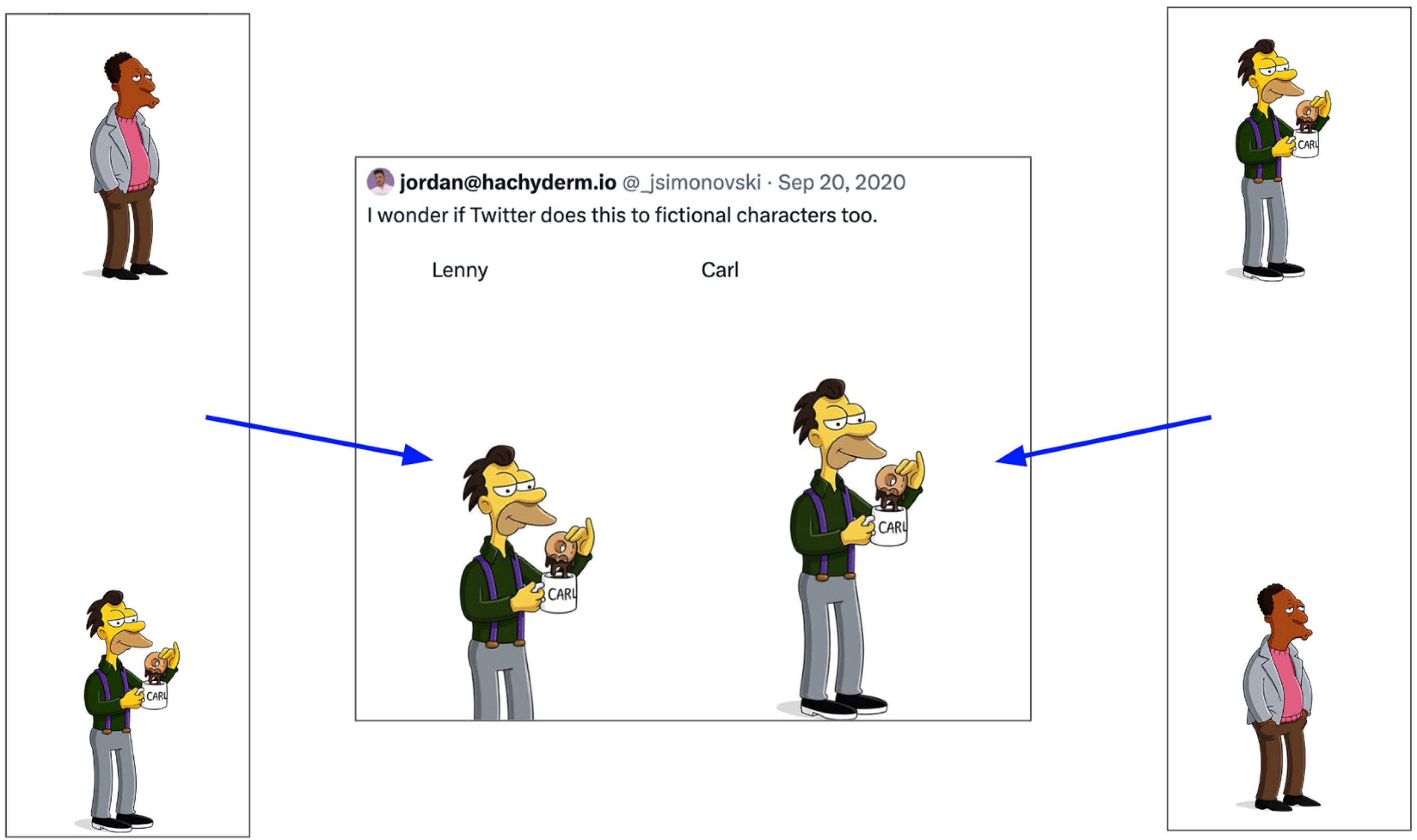

Figure 2. A visual explainer of the format for trouble applied to two characters from the cartoon TV series The Simpsons.

How did actors problematise harm?

Central to the ‘horrible experiment’ was the production of an

In sum, I have presented three hypotheses for the study of ‘networked trouble’ in AI incidents: that it relies on i) the networking of media and audiences, ii) a

Networked trouble in AI incidents

To close, I argue that we require a research agenda to understand

As a final reflection, I suggest it can be helpful to think of AI as a participant in its own transformation, because it plays an important role in generating inscriptions that demonstrate its potential harms. This has important consequences for institutional and activist efforts to enable participation in the development of AI. AI may be an ally in its own contestation or refusal, as actors are increasingly coordinating with harmful algorithms outside of institutionally managed exercises, using networked formats such as ‘tests’. We might usefully consider AI as

Acknowledgements

I am very grateful to Professor Noortje Marres and Dr. Jonathan Gray for their helpful feedback on early drafts of this paper.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Arts and Humanities Research Council.