Abstract

Healthcare provision, like many other sectors of society, is undergoing major changes due to the increased use of data-driven methods and technologies. This increased reliance on big data in medicine can lead to shifts in the norms that guide healthcare providers and patients. Continuous critical normative reflection is called for to track such potential changes. This article presents the results of an interview-based study with 20 German and Swiss experts from the fields of medicine, life science research, informatics and humanities of digitalisation. The aim of the study was to explore expert opinions regarding current challenges and opportunities related to data-driven medicine and medical research and to provide a methodological framework for empirically grounded, continuous normative reflection. To this end, we developed a heuristic tool to map and structure empirical findings for normative analysis. Using this tool, our interview material points to a polarisation between individualistic and collectivistic orientated argumentations among experts. The study shows that a multilevel analysis is required to deal with complex normative implications of data-driven approaches in medical research and healthcare.

Keywords

Introduction

There is an increased use in medical research and healthcare of data-driven methods and technologies. Data-driven methods include in various combinations digital technologies as well as mathematical and computational techniques. 1 Whereas terms such as ‘digital health’ and ‘digital medicine’ remain rather broad, vague and normatively neutral, the term ‘data-driven’ puts the accent on the methodological approach. Following Shah and Tenenbaum (2012), Torkamani et al. (2017) and Hummel and Braun (2020), we understand data-driven medicine to be ‘medical research and care that rests upon consideration of large amounts of data and deploys algorithmic tools to guide prediction, prevention, diagnosis and treatment, for example in attempts to steer towards precision medicine […]’ (Hummel and Braun, 2020: 2, italics in original). As this working definition indicates, the data-driven approach in medicine and medical research is closely linked to a specific goal: the implementation of so called ‘precision’ or ‘personalised’ medicine. The concept of ‘precision’ or ‘personalised’ medicine has been promoted since the late 1990s and 2000s in the context of human genetics (Prainsack, 2017: 89). Thus, the data-driven approach, which nowadays includes not only biomedical data but also increasingly data from mobile health devices (‘mHealth’) such as wearables for clinical monitoring (Lucivero and Jongsma, 2018), goes hand in hand with the promise of ‘personalised’ and ‘precision’ medicine. Demand is high for the generation and permanent storage of more personal biomedical and health data to feed algorithm-driven data analysis. With ever-expanding troves of biomedical data from genomics, molecular genetics, digital patient healthcare and other sources in clinical and non-clinical contexts, massive data repositories are now available to developers of personalised medicine (PM). Those massive data and data-driven methods are expected by some observers to give rise to a new scientific paradigm in medicine (Moerenhout et al., 2018). Others speak of the ‘datafication of health’, expressing a more sceptical stance regarding data-driven healthcare (Ruckenstein and Schüll, 2017: 262). From this perspective, the ‘datafication of health’ is associated with a kind of ‘dataism’, ‘dataveillance’ or ‘data-driven capitalism’ (Sadowski, 2019). These terms imply that data analysis is taking on a role ‘between scientific paradigm and ideology’ (van Dijck, 2014). Whereas commentators from politics and economics as well as media figures in medical informatics are strongly enthusiastic about this development, ‘evidence is scare for successful implementation of products, algorithms and services arising that make a real difference to clinical care’ (Car et al., 2019: 1).

Continuous critical normative reflection

As with every new technology, normative ethics should subject the data-driven approach to continuous critical normative reflection (CCNR) about its advantages, disadvantages, opportunities, risks, limitations and potential alternatives. This applies all the more because new digital technologies and methods are being developed at a rapid pace. In the following we provide a short outline about our understanding of CCNR. CCNR should not only identify and evaluate current ethical, political and social issues, impacts and concerns (‘normative oversight’) – all induced by the development and implementation of new technologies – but also always demand ‘Ethical Foresight Analysis’ (Floridi and Strait, 2020). Furthermore, CCNR should be sensitive to two further dimensions: identifying shifts in basic assumptions and concepts and addressing public communities’ and stakeholders’ perspectives (c.f. Schicktanz et al., 2012) to capture differing national, cultural and historical contexts, and mentalities to ensure epistemic justice and normative contexualism. For this, literature review and empirical-participatory studies engaging with stakeholders’ and public communities’ views are considered complementary approaches enriching each other within the context of CCNR. For continuous reflection, both approaches ought to be conducted at regular intervals. A crucial objective of CCNR, at least to our understanding, is to identify and categorise key normative areas where technological and respective methodological developments are progressing. CCNR can provide a ‘big picture’ or ‘landscape’ of phenomena and normative issues as well as principles and values at different normative levels (from the individual to the collective) involved. Within the scope of CCNR, those big pictures bear a temporal and spatial index, for example, indicating an overview of issues at a certain time within a particular spatial context. In sum, we consider CCNR an approach to be conducted collaboratively by different scholars, discourses and studies such as biomedical ethics, data ethics, science and technology studies (STS), sociology and philosophy of technology, and technology assessment (TA), bundling individual-centred and collective-oriented perspectives and arguments together.

Aim and outline

The primary objective of this article is to contribute to CCNR by reporting an interview study we conducted with 20 experts from Germany and Switzerland. These individuals have been recognised for their leading expertise on digital society, interdisciplinary research and medicine in the German speaking context. The German context is peculiar as it has been identified as ‘front runner’ country among other European countries and WEIRD states 2 with regard to data protection regulation (Custers et al., 2017: 241) – a fact that might be relevant when it comes to data-driven medical research and healthcare. The purpose of our study was to explore the perceptions, appraisals and attitudes of this group regarding data-driven methods and technologies in clinical practice, medical research and regarding ‘PM’ generally. The results allow us to draw a picture of a selection of current normative issues and their assessment with a focus on the German-speaking expert community on data-driven methods in medicine. Since the topic is very broad, the methodological challenge of our analysis was how to integrate heterogeneity productively while reducing the complexity of the analytical and normative dimensions to be addressed. To do so, we developed a heuristic tool allowing us to map, categorise and evaluate complex and diverse statements in a systematic way. The introduction and application of this heuristic tool, which we consider a suitable method to support collaborative CCNR, is a secondary aim of this article.

We proceed in four steps below. First, we start with the description of six subject areas, which we consider as currently being intensively discussed in the biomedical data ethics literature, and which we focused on in our interview-study. Second, we explain our methodological approach. Third, we present our mapping of empirical findings on six ‘levels of normative analysis’ (LoNA), inspired by the mapping approach of Morley et al. (2020), which itself is inspired by the method of ‘levels of abstraction’ (LoA) by Luciano Floridi (Floridi, 2008). Finally, we discuss our main findings: two overarching normative argumentation patterns that show (a) how individual and collective considerations and goals are addressed and (b) there is a considerable stress on collective challenges among experts’ statements. We conclude with some reflection on how this might require going beyond established schemes in bioethics primarily focussing on an individual-centred perspective.

Background

Various attempts have been made in the last years to sketch out overviews of the ‘ethical landscape’ of data-driven approaches. The term ‘ethical landscape’, widely used in ethical analysis, indicates the diversity of normative issues arising in a specific subject of inquiry. The landscape metaphor also underscores that the subject matter can be transformed, (re-)designed and further explored, so bears a temporal index.

Previous work on mapping the normative issues

Two widely quoted examples for ‘mapping studies’ are provided by Mittelstadt et al. (2016) and Mittelstadt and Floridi (2016). Mittelstadt et al. (2016: 2) aimed to ‘map the ethical problems prompted by algorithmic decision-making’ in data-intensive approaches. In their literature review, they identified ‘six types of ethical concerns raised by algorithms’: (a) ‘inconclusive evidence’, (b) ‘inscrutable evidence’, (c) ‘misguided evidence’, (d) ‘unfair outcomes’, (e) ‘transformative effects’ and (f) ‘traceability’ (p. 4, Figure 1). The first three ‘concerns’ are characterised as ‘epistemic concerns’, the last three as ‘normative concerns’. In their systematic meta-analysis of academic bioethics and ‘big data’-related literature, Mittelstadt and Floridi (2016) identified ‘five key areas of concern’: ‘(a) informed consent, (b) privacy (including anonymisation and data protection), (c) ownership, (d) epistemology and objectivity and (e) “Big Data Divides”’ (p. 303). Six additional topics relevant in the near future were identified by consulted experts: ‘(f) the dangers of ignoring group-level ethical harms, (g) the importance of epistemology in assessing the ethics of Big Data, (h) the changing nature of fiduciary relationships that become increasingly data saturated, (i) the need to distinguish between “academic” and “commercial” big data practices in terms of potential harm to data subjects, (j) future problems with ownership of intellectual property generated from analysis of aggregated datasets and (k) the difficulty of providing meaningful access rights to individual data subjects who lack necessary resources’ (p. 303). A third widely quoted overview of normative issues and relevant values was provided by Xafis et al. (2019); this was developed by a working group of experts with feedback cycles. They identified ‘six key domains of big data in health and research’ for which they proposed ‘an Ethics Framework’. These are: (a) ‘openness in big data and data repositories’, (b) ‘precision medicine and big data’, (c) ‘real-world data to generate evidence about healthcare interventions’, (d) ‘AI-assisted decision-making in healthcare’, (e) ‘big data and public-private partnerships in healthcare and research’ and (f) ‘cross-sectoral big data’ (Xafis et al., 2019: 234).

Normative landscape of data-driven approaches

For our interview study above cited studies by Mittelstadt et al. (2016), Mittelstadt and Floridi (2016) and Xafis et al. (2019) functioned as a starting point for selecting relevant areas to be addressed in our German interview study. A literature research was additionally conducted to integrate recent trends, including the German context. After a second stage of condensation, we decided that eight normative topics are crucial for our study as they cover general, recent topics: (1) the notion and vision of ‘PM’, (2) the use of digital mobile measurement devices (wearables and health apps), (3) the practice or goal of linking medical and non-medical data, (4) the use of algorithmic systems and artificial intelligence technologies for clinical decision-making, (5) the eventual paradigm shift in medical knowledge and the concepts of health and disease and (6) requirements for regulation and social transformation (see also Table 2). Two more additional topics in the interviews were (7) ‘consent issues in data ethics’ and (8) ‘citizen science’. For our article here, we focus on topics (1) to (6), discussed in order below. Topics (7) and (8) proved to be so extensive and complex that we decided to present and discuss our results separately elsewhere.

‘Personalised medicine’ is the vision of tailoring diagnosis and treatment of diseases to the individual patient’s genetic and molecularorganic dispositions. It stands in opposition to traditional ‘one size fits all’-medicine, by which for example the same drug and dosage is prescribed to patients who have a similar diagnosis although they have different characteristics and medical backgrounds. However, the concept remains vague and has been disputed for years. For some, it remains more a buzzword than a concept, ‘[p]romising to make health care more effective and efficient’ (Schleidgen et al., 2013: 9). 3 For others, personalisation in medicine indicates the already implemented strategy of stratifying patients into medical subgroups (e.g., of types of immunological reactions to tumours) with the consequence that treatments are differentiated by subgroups. Suggestions to modify the term ‘personalised’ with ‘precise’ or ‘precision’, ‘predictive’ or ‘participative’ (c.f. Flores et al., 2013; Hood and Friend, 2011) are attempts to resolve concerns about conceptual deficiency. An important question remains whether personalised medicine has the potential to become a general approach in healthcare.

Normative subject areas, main topics in questionnaire and categories ( = first-order codes) for deductive coding and analysis.

The use of digital mobile measurement devices such as wearables and health apps allow patients and research participants to be monitored in clinical contexts as well as during daily activities. The use of mobile digital technologies, also called ‘mHealth’, generates massive amounts of individual health, location, social and other data. Since mHealth intensifies the amount and diversity of data in a substantial and new way, some speak of a ‘mHealth revolution’ (Ganasegeran et al., 2017; Lucivero and Jongsma, 2018; Waegemann, 2010). Key ethical issues are privacy, autonomy, data ownership, storage, codification, accountability, security, bystanders, third-party use, the role and power of tech companies and transparency about the data collected by these mobile devices (Schmietow and Marckmann, 2019).

Data linkage of heterogenous, individual health and non-health data follows the idea of combining different types of data and data sources to obtain denser datasets for analysis at the individual and population level. As Harron et al. (2014: 1) note that in research ‘the success of such data linkage depends on data quality, linkage methods and the ultimate purpose of the linked data’. While data linkage has a large potential as a tool in observational research (Bohensky et al., 2010), it can also be employed by health insurance companies and private tech-actors to construct predictive models for individual risk profiles. As data linkage uses personal data from different data sources and thus involves heterogenous data actors (such as tech firms, research institutions), two key concerns are privacy and consent (Boyd, 2007; Xafis, 2015).

The use of algorithmic systems and artificial intelligence technologies such as machine learning for clinical decision-making regarding diagnosis and treatment raises a plethora of epistemic as well as ethical questions of reliability, explainability, opacity, paternalism, automation bias, trustworthiness, the potential traceability of supposedly anonymous data, fairness and moral responsibility (Floridi et al., 2018; Goddard et al., 2012; Grote and Berens, 2020; Mittelstadt et al., 2016). From an ethical point of view, crucial questions revolve around what role algorithmic systems ought to play in clinical decision-making, and the consequences of integrating (opaque) algorithmic systems into the doctor–patient relationship (McDougall, 2019).

The application of data-driven methods may lead to a paradigm shift in the generation of medical knowledge. This raises epistemological questions, with normative implications, because the use of data-driven methods may change our concepts of health and disease. One key controversy points to the potential of an overall shift from causation to correlation in our expectations about what scientific knowledge is, with a corresponding transformation of the goals, methods and implications of scientific inquiry (Skopek, 2018).

Taking all these subject areas into consideration, overall questions of the requirements for legal regulation and social transformation arise. What changes in society are necessary such that we are able to anticipate and manage the rapid digitalisation of the healthcare system? To what extent should data-driven developments in medicine and medical research follow societal needs, and what is necessary for it to do so? Samerksi and Müller (2019) note in this context the claimed need for a special kind of data literacy, termed as ‘digital health literacy’, for managing the digital transformation healthcare system successfully.

Methods

As previously described, the three frequently quoted mapping and framework studies and our own literature review informed the development of an interview guideline for expert interviews. We employed a semi-structured expert interview design as a form of ‘exploratory expert interview’ (Bogner et al., 2014), since the field is currently changing rapidly and is influenced by various disciplines.

Our study is a part of a larger German research project tasked with evaluating, from an ethical and political perspective, a research initiative of the German Federal Ministry of Education and Research (BMBF) that is intended to strengthen medical informatics in German clinics and university medical centre hospitals. 4 This initiative comes late relative to other industrialised countries such as Great Britain, where biobanks were established in 2007, or Sweden, where the electronic health record was established in 2012. Thus, our interviews allowed us to explore expert views and attitudes regarding a still-nascent transformation process as currently occurring in the German-speaking countries. Ethics approval was obtained from the Local Ethics Committee on Human Research at University Medical Centre Göttingen (No. 28/7/18).

Population and recruitment

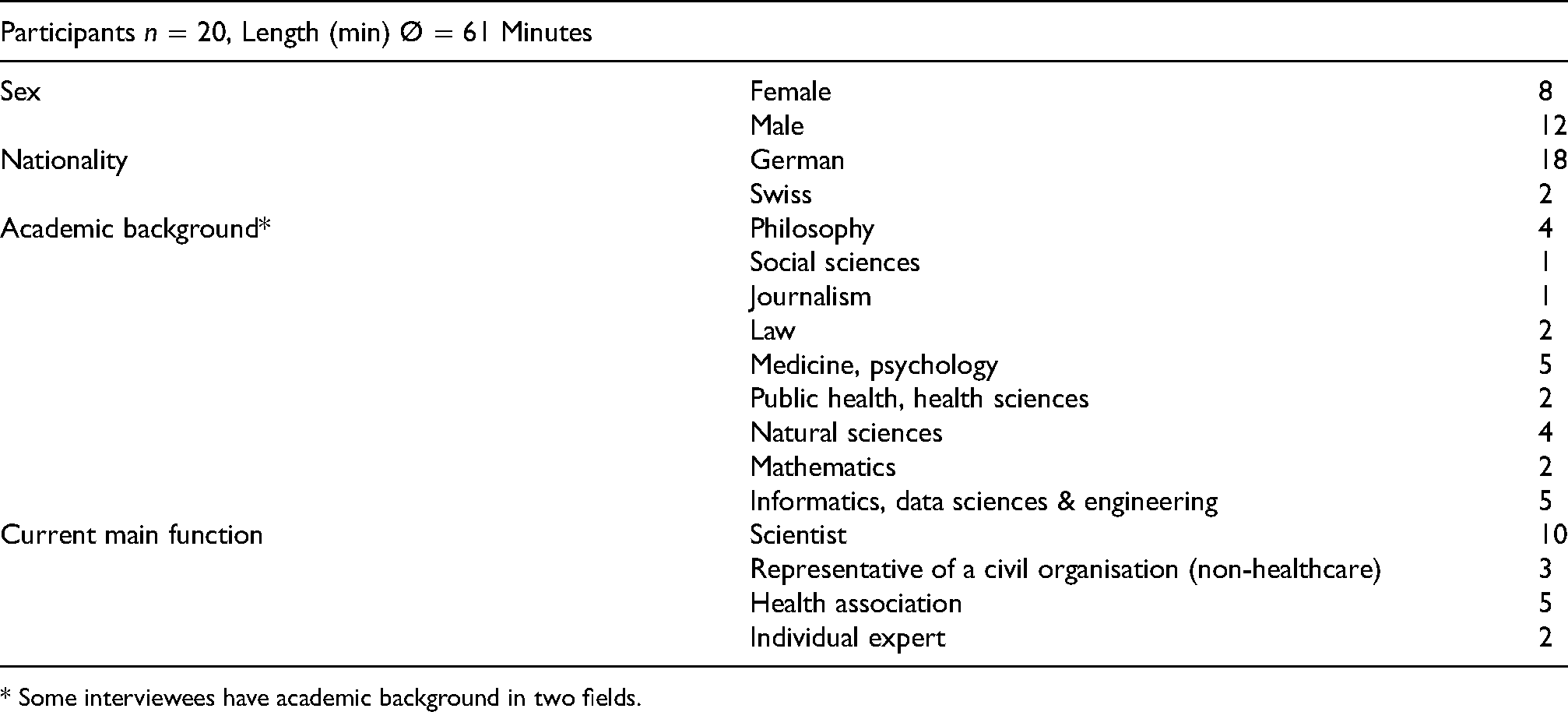

Experts were selected on the basis of our own assessment of their prominence in public and expert discussions (e.g. members of public policy advisory boards). We selected individuals covering different disciplines: natural sciences, medicine informatics, data sciences, philosophy and ethics, sociology and law. Additionally, we searched for representatives of civic organisations or advocacy groups with wide competency in digital society, digital medicine and health. The experts were identified through Internet and literature research. Thirty-three potential interview partners were contacted. Three could not participate due to conflicting appointments or illness, four referred us to other persons, one person was not interested and four did not respond. All interviewees are experts insofar as their practice and knowledge centres on in data-driven approaches and applications in medical research, clinical practice or digital society (Grundmann, 2017). The interviews were conducted between August 2019 and March 2020 (Table 1).

Characteristics of the interviewees.

* Some interviewees have academic background in two fields.

All interviewees received the same invitation letter and signed a written consent form. If requested, the questionnaire was sent in advance. The interview guide consisted of four questions regarding professional background, the aforementioned six topics (see above) and four questions concerning consent models in medical research and citizen research, but these two latter topics are evaluated elsewhere. The interviews were conducted by telephone or, in three cases, in person. The interviews lasted 61 min on average and were held in German. Afterwards, the interviews were transcribed and interviewees’ personal information removed.

Content analysis

We analysed the transcripts of the interviews by methods of qualitative content analysis drawing on Mayring and Fenzl (2019) and Kuckartz (2018). We focused deductively on six thematic codings (‘categories’) representing the six subject areas mentioned above. The categories were differentiated systematically by subcodes (i.e. opportunities and challenges) and complemented with inductive codes. Additionally, thematic categories were supplemented by the category of academic background of the interviewee. The coding list was checked for validity and consistency by two peers using parallel peer coding (Table 2).

The method of mapping the LoNA

Our empirical-normative analysis consisted of two steps. First, we mapped our empirical findings from the interviews using a grid that deploys different Levels of Normative Analysis (‘LoNA grid’). Second, we highlighted the most relevant findings with regard to a normative analysis (see Supplemental Material 1) and illustrated them with selected quotations from expert interviews.

Our analysis revealed a wide range and complexity of normative positions on each of the main topics. To encompass the various ‘dimensions’ of normative analysis, we paraphrased experts’ statements and collated them into the six main topics and into analytical levels. In the sense of the ‘mapping review’ or ‘systematic map’ (Grant and Booth, 2009: 97–98), this approach can be characterised as ‘mapping analysis’. Analytical levels were drawn from the typology proposed by Morley et al. (2020: 7). Referring to Floridi’s (2008) ‘Method of Levels of Abstraction (LoA)’ and to Grant and Booth’s (2009) explication of the ‘mapping review’, they proposed six different ‘LoA’: (a) individual, (b) interpersonal, (c) group, (d) institutional, (e) sectoral and (f) societal. Morley et al. (2020: 2–3) describe LoAs ‘as an interface that enables one to observe some aspects of a system analysed, while making other aspects opaque or indeed invisible’. We kept this methodological matrix, but in the light of our normative analysis, we call the stratified classifications ‘LoNA’ instead of ‘LoA’. We define the distinct levels, starting from the individual and personal level and covering several collective levels as follows.

‘Individual’ addresses the individual as person with fundamental rights and moral autonomy, and as a subject taking a certain social role (here: patient, physician) ‘Interpersonal’ addresses the relationship between two persons (here: doctor–patient relationship) ‘Group’ addresses the social collective constituted by individuals sharing a certain characteristic (e.g. chronic patients, elderly people and medical professions) ‘Institutional’ addresses commonly shared rules, regulations and practices ‘Sectoral/organisational’ addresses aspects of social sectors (e.g. healthcare, insurance) and the activity of large organisations (e.g. companies, big tech firms) ‘Societal’ addresses the society as a whole or general aspects of society

By additionally subdividing the LoNA according to ‘opportunities’ or ‘benefits’, ‘challenges’ and ‘risks’ (or ‘requirements’ for the topic of regulatory and social requirements), we obtained a grid for each subject area that compiles the empirical findings in a clearly structured way. When interviewees repeated their appraisals regarding certain topics within the interview, it is registered as one statement to avoid bias due to verbal repetitions. However, the grid not only mapped the empirical findings but also prepared and contributed to the empirical-normative analysis. Thus, the grid itself served as a tool for normative analysis and reflection. As the grid was applicable for every subject area, we named it ‘topical LoNA grids’ (see Supplemental Material 1). At the same time, the subdivision into six levels allowed a more detailed mapping and analysis, which is particularly appropriate to the complex field of data-driven medicine.

Results

Most of the interviewed experts were highly sensitised to differences among various ethical issues and subject areas. This high level of differentiation led to highly reflective evaluations of data-driven medicine; adamantly pro or contra positions were not evident. This held true across disciplinary and stakeholder boundaries.

After mapping the empirical findings using the LoNA grid, we created six tables that provide an overview of exemplary interviewee statements in each subject area (see Supplementary Material 1). The essential results for each area are presented below. In view of the richness of the material, we decided to focus at maximum on four main findings. We illustrate central findings with experts’ quotations, which are translated by the authors from the German.

Concept of PM

Strong reservations about the concept of PM as a general approach for clinical practice

The promise of the PM approach was seen to lie in the more precise diagnosis and treatment of previously neglected groups and subgroups through the analysis of molecular and genetic data. However, many interviewees articulated reservations, specifically in terms of feasibility of PM as a general, non-stratified account in medical practice. PM was widely judged to be not applicable for all patients and for all type of diseases and thus was not considered a valuable approach at the institutional level of clinical practice. A number of interviewees stated that PM is hardly being implemented yet, and some even doubted that PM is being used in Germany at all, calling it a ‘vision for the day after tomorrow’ (D92_21:48). Some experts remarked also the wide gap between the promise of PM and its actual implementation. Many stressed that the concept and goals of PM are limited in two specific respects. First, the individual analysis of molecular genetics makes sense, if at all, only for a limited range of diseases and fields, such as oncology and pharmacology. In those limited domains, PM might help patients and doctors to optimise individual therapeutic decisions. Second, the term ‘personalised’ is misleading because the underlying methodological approach is based on statistical analyses of data from large numbers of patients, not on ‘individual’ data belonging to one person. One interviewee put it this way: I would always prefer to use the term ‘individualised medicine’, because it’s about the human person. ‘Personalised’ does have ‘person’ in it, but actually it’s the mask, so to speak, where something comes through. What was always meant by ‘personalised’ medicine in the first years was to be as genomically informed as possible…. (D101_16:40)

Use of digital mobile measurement devices (DMD)

With regard to the application of DMD, a number of issues were identified by our interviewees. A widespread view among them was that DMD have clear benefits for healthcare on the individual and on the group level, that is, for individual patients, physicians and patient groups. However, these benefits are associated with new challenges for everyone involved and for the institutional arrangement of clinics and research institutes, as the findings presented below indicate.

DMD have much potential to empower patients and support physicians

For the patient, the possibility of self-monitoring via DMD would, so the expressed view, increase health awareness and could improve chronic patients’ self-regulating disease management. It could also motivate patients in general and certain patient groups to adopt healthier lifestyles. The opinion was repeatedly articulated that the use of DMD ‘activates’ patients and strengthens their role in healthcare. [S]o the role changes from the somewhat passive role of the patient to a more active role. The patient becomes, so to speak, his own co-therapist through these possibilities. He not only has the…data, he also intervenes concretely. There are very different functions. (D91_09:27)

Dealing with massive amounts of health data as challenge on several levels

Many expected benefits associated with the application of DMD in healthcare were counterbalanced by a number of perceived challenges on the individual and interpersonal level. For example, many experts considered correct and appropriate interpretation of MHD to be a major challenge. The absence of routines and experience with massive amounts of health data could lead to dependency, uncertainty or even ‘actionism’, that is, taking action only for the sake of acting, as one interviewee called it (D74_22:21). This could negatively affect the patient, the doctor and the doctor–patient relationship. Insecurity and uncertainty were seen as becoming increasingly acute, and the more evaluation and assessment of data become the focus of medical decision-making. The following comment illustrates this point: The doctor-patient relationship changes. Because it makes both of them more dependent on data collected by others, evaluated by others and which could also represent a kind of independent or supposedly independent authority. And with that, both are unsure about what the interpretation, the evaluation, the consequences of these data sets could be. (D74_01:36)

Establishing data literacy as a collective practice as condition for success

A common view among interviewees was that the plethora of MHD is creating more epistemic and cognitive uncertainty, which has to be countered by strengthening data literacy. Strengthening data literacy was seen as a pragmatic response to uncertainty. It was considered to be critically necessary that literacy of MHD accompany the overall approach of data-driven medicine and healthcare. To formulate this positively: if health data literacy is high, MHD could support the physician in diagnosing and treating a disease and evaluating its course, thus having particular benefits at the individual level. However, concerns about data literacy were expressed in manifold ways. Improving health data literacy means not only – as several interviewees stated – a call for changes on the individual and inter-personal level but also challenges for healthcare institutions and for the healthcare sector. Certain deep-rooted conventions and professional cultures would have to change because establishing data literacy requires the ability to generate individual and shared interpretations, strategies for dealing with deviations and appropriate, effective communication of results. For this, as multiple interviewees stated, strong interdisciplinary and interprofessional collaboration would be necessary. One interviewee put it this way: This cooperation has already taken place but can certainly take place much more strongly in the future and perhaps new professions or new training courses must also be created at this interface in order to ensure this translation of expectations. This is sometimes very, very difficult, because you come from different ways of thinking and you have a different language and you have different research methods, and it is very challenging to derive a common denominator. (D102_17:56)

Challenge for the medical sector due to undetermined role of big tech companies

The advantages of health data literacy are less likely to be realised, however, if no high-quality data are available, if data repositories are insecure and if data storage and use are not transparently regulated. Addressing the last point, a recurrent concern in the interviews was the lack of transparency at the organisational level, concretely about the role and power of DMD providers (see Supplemental Material 1, Table 2).

Data linkage

In their remarks about the linkage of medical and non-medical data, opinions differed regarding which stakeholder groups might profit the most from it.

Data linkage holds the promise of benefits for individuals and research but comes with risks

As with DMD, the advantages and disadvantages mentioned spanned the individual, organisational and sectoral levels. Several experts assessed that data linkage will mainly bring advantages in clinical practice (diagnosis, treatment, prevention) for individuals, since an enriched data base would enable doctors and patients to make better decisions. As one interviewee put the patient's benefit: So of course, if you have a comprehensive database, you can always say that the benefit for the patient is there. Because you have a larger decision-making basis. Of course, that is an advantage. (D86_05:10)

Strong reservations, however, were expressed about data linkage conducted by third parties such as health insurances or tech companies, such as Google or Apple, providing the technical infrastructure for data storage, e.g., Apple's iCloud. First, there is always the risk that individuals may become traceable within such data sets. Second, by making it possible to link data of specific behaviours to risk-dependent insurance pricing, there is the risk that the principle of solidarity – foundational to the German public health system – could be undermined. While the former risk would steadily increase due to improved algorithmic data analysis systems (e.g. machine learning), the latter risk would become a reality when health insurance companies start ‘personalising’ their insurance contracts. Pricing based on personal risk would decrease costs for socially advantaged groups, so it would possibly benefit some individuals and groups, but because it would also increase costs for the socially disadvantaged, it would disadvantage others. This risk must be seen in the national and historical context of the German health insurance system, as one interviewee considered: It is important that the principle of solidarity in health insurance not be undermined. And we have to think very carefully about what forms of insurance we want to decouple [from the principle of solidarity] and what forms we don’t want to decouple. This is speaking very, very generally and is naturally a German way of seeing things. (D83_03:16)

Algorithmic-based assistive systems (AAS)

When the interview partners were asked about opportunities and risks associated with the application of algorithmic assistive systems in clinical practice, one distinct advantage and a number of critical issues were identified. Interestingly, concerns expressed about AAS replicated and reinforced the interviewees’ concerns about digital mobile measurement devices, MHD and data linkage.

AAS substantiate concerns regarding digitalisation

The positive side of AAS, in the judgement of many interviewees, is the potential for supporting clinical decision making, whether it be in diagnostics or in health prediction. It was stated that AAS would in particular support physicians in diagnostics by providing a kind of second opinion and accelerating the diagnostic process. A number of interview partners mentioned that the machine learning systems currently in use for pattern recognition (in dermatology and radiology for example) have demonstrated good performance. A few interviewees even attributed to AAS the potential of compensating for human fallibility. As one interviewee said: I believe that in order to work around these deficits we need AI and Big Data very urgently, because I have seen, more than once, mistakes made because someone did not perceive or wrongly perceived certain things, which through Big Data and AI possibly would have been avoided. So, I think if we say yes to digitalisation in diagnostics and therapy, we also have to say yes to digitalisation in the evaluation of these findings. (D85_04:25)

With regard to the perceived overall benefit, a slight ambiguity is present in the answers. Some interviewees stated that AAS could support conversation and dialogue between physicians and patients, in this way having a ‘personalising’ effect, and provide a benefit for the interpersonal level. Doctors would be able to give more accurate advice on the individual level, and the interpersonal relationship would profit from using assistive systems. Others considered the application of ASS as factor for ‘automation bias’ (D95_20:24) at the individual level stemming from over-reliance on standard models to the neglect of a patient's physical and personal individuality. Automation bias was explained by one interviewee as follows: […] [T]he problem of automation bias […] means the tendency of people to rely on what is displayed to them without necessarily understanding it (D95_20:25).

Many experts also mentioned concerns at the institutional, respective regulatory level. First, there is a substantial problem with quality and control of training data used for training and preparing ASS before implementation in clinical practice.

Second, ASS are still prone to considerable error rates related to specificity and sensitivity (false positive and false negative results). These limit the performance of ASS. In sum, not only digitisation and the explosion of health data were seen as a challenge for all stakeholders and professionals in healthcare, but also the computational methods of their analysis (see Supplemental Material 1, Table 4).

Medical knowledge and concepts

When interview partners were asked about potential changes in the way medical knowledge is produced with support of data-driven methods, some interviewees emphasised that these methods could help to discover previously undiscovered correlations. Others noted that such correlations cannot replace knowledge about causal relationships and should be used with caution.

New resource for knowledge generation but on shaky foundations

A few interviewees saw epistemological value in correlations and models computed by machine learning methods. However, the majority of our interview partners stated that data-driven methods would change knowledge production profoundly. The interviewees evaluated the actual and potential impact of data-driven methods on the conceptualisation of health and disease differently. Overall, there was a sense that a change in the conceptualisation of ‘health’ and ‘disease’ had already started with human genomics and had led to a kind of transforming the dichotomical arrangement of the concepts of health and disease into a polar continuity. As one interviewee said: I think that a change is also occurring maybe now not only through Big Data but generally through technology, that you start thinking of being healthy as only for the time being, being latently ill. (D102_17:22)

Requirements for regulation and social transformation

One dominant theme of almost all interviews was the need for broad societal deliberation on digitalisation in general and public discussion about the effects and governance of the digitalisation of medicine and healthcare in particular.

Urgency of public discourse on data-driven medicine

The need for juridical and legislative regulation was often mentioned, such as registration and authorisation for health devices and (international) standardisation of health data quality and measurements. Beyond consideration of technical aspects such as encryption, interoperability and disclosure of application programming interfaces (APIs), many experts emphasised the need for an intense public discourse about data-driven medicine. Public deliberation should deal with questions such as data ownership, profit and transparency. One participant described these concerns as follows: But in fact, for me this is not at all a purely legal question or a question of data security. If one wants to advance this Big Data model in medicine, I really see a responsibility for society as a whole. […] Because the sober, legal view alone doesn’t get us any further at this point. As a lawyer you might say, well, since the patient has been transparently informed, [data gathering] must be ok. But the question is what does that do to our society? What does that do to the individual patient? (D86_05:15)

One interviewee stated that data-driven medicine should not be taken ‘as a purely medical phenomenon […] but rather, [as] a social process […] which also, and rather belatedly, is gaining a foothold in medicine’ (D85_19:30). The interviewee added that for him ‘the ethical discussion about this has long reached the public and politics’, and questions on ‘regulations for medicine’ are not in the ‘foreground’ but rather are embedded in general debates on the ‘possibilities’ that ‘AI and big data offer us [and] that we have to learn to deal with’ (D85_19:40). Another interviewee stated that is important to reconsider the whole subject of data-driven approaches in medicine in the light of societal justice and related issues of discrimination and inequalities: Questions of how all these digital technologies change accessibility, what new forms of discrimination or bias there are, and how existing injustices or inequalities in the health care system [can] be remedied if necessary (D95_40:13).

Results: current normative benefits and challenges across normative levels of analysis and along six subject areas.

Discussion

We turn now to two observations that stand out clearly from the interview material. The first observation is that the argumentation patterns regarding potential opportunities and problems follow a classical methodological and social-ontological division in political theory: the individual versus the collective.

Individualistic and collective orientations in the argumentation

Our mapping analysis revealed that the German experts interviewed showed a tendency to focus on the individual, institutional and sectoral/organisational levels in their statements. The societal level was also addressed, but less often. Interestingly, the interpersonal (doctor–patient relations) and the group levels were addressed less often. Especially regarding digital mobile measurement devices (i.e. wearables) and MHD, interviewees focused on the individual level (see Table 2 in Supplemental Material 1), mentioning individual benefits for the patient and the doctor. Furthermore, our findings indicate a remarkable tendency among the participating experts to point out problematic issues and possible negative consequences on the institutional and organisational levels. So, there are two normative orientations present in the experts’ answers. First, the interviewees engaged in individual-centred argumentation and evaluation of benefits, chances and drawbacks for the individual (e.g. ‘empowerment’ of the patient, physician support in diagnostics). On the other hand, interviewees’ arguments focused in substance on the impacts on and challenges for institutional and organisational structures and their normative pillars (i.e. solidarity, justice and equality). This collectivity-orientated argumentation was in a few cases explicitly underlined by references on the ethical and political principles of ‘solidarity’, ‘justice’ and ‘public discussion’. To our understanding, these political-ethical principles as well as the focus on the individual served as background for problematising the long-term implications of data-driven medicine.

Individual benefits and collective challenges

In bioethics and data ethics literatures, there seems to be a tendency to follow those health policy and technology-focussed campaigns that claim that data-driven methods will improve healthcare and offer great potential for medical research. Unquestioned are the underlying assumptions that society needs high-performance digital technology and tech companies need to produce new healthcare solutions. In terms of our matrix, these benefits would be located at the institutional and sectoral levels. Ethical reflection in this strand of discussion is primarily directed at identifying and problematising ethical implications and dangers for the individual (patient, user). These individual challenges and risks are examined in the light of the classical principles of medical ethics (Beauchamp and Childress, 2012), namely ‘respect for autonomy’, ‘beneficence’, ‘non-maleficence’ and ‘justice’. The data ethics literature recently added a fifth principle, ‘explicability’ (Floridi and Cowls, 2021). The expected emphasis would be then mostly on the individual-related principles of respect for the autonomy of the patient (translated as the imperative of respect for individual privacy and self-determination) and individual harm.

Interestingly, the argumentation of our interview partners showed exactly the opposite tendency: potential benefits were mainly identified on the individual level, while for the collective level, significant challenges – and fewer benefits – were noted in all subject areas. In general, their evaluations were predominantly oriented towards collective and institutional concerns.

The German author Julie Zeh's novel Corpus Delicti (2009) illustrates well the inversion of perspectives observable in our German interview material. In Corpus Delicti, which is now taught in schools in Germany, a futuristic, benevolent state dictates individuals’ behaviour and bodies to achieve the common good of a healthy collective. The system is viewed critically by Mia, a biologist, who comes to the realisation that the state not only invades privacy but also makes mistakes at the cost of human lives and individual rights. The novel can be seen as dystopian comment on the collective objectives of health, data sharing and a misinterpreted solidarity that overrides individual interests, rights and benefits. Hence, the novel presents a classically liberal warning about the collective benefits provided by technology: they necessarily collide with individual benefits and rights. In contrast, our expert study points in another direction, namely that collective benefits are in no way inevitable.

In sum, our findings indicate a need for bridging the assessment of individual benefits and drawbacks and the assessment of institutional, organisational and societal impacts when reflecting on the various facets of data-driven medicine (c.f. Williams, 2005). This result broadly supports the relevance of work that considers the collective implications, for example, ‘community solidarity’ in healthcare (among many others, see Biller-Andorno and Zeltner, 2015; Davies and Savulescu, 2019). It is therefore not surprising that in recent bioethics literature, PM has itself become a target of a critique that affirms the foundational – and not merely the institutionalised – role of the principle of solidarity in many European healthcare systems (Martinović, 2019; Prainsack, 2017).

Common goods and goals

To our understanding, the classical liberal–communitarian debate in political ethical theory shines through here (c.f. Christensen, 2012; Taylor, 2003). In brief, this debate deals with the question of whether society can and should agree on a substantive formulation of the common good in order to form a just and peaceful social life (a communitarian position would say yes, a liberal position would say no). When applying this perspective to our topic, it raises the following questions. First, is there some common or collective good to be realised through using data-driven medicine? Second, if so, how we should weight individual goods (e.g. empowerment and self-determination) relative to collective goods (e.g. saving costs in public health or being internationally successful in medical research)? Finally, how should we deliberate about these questions (cf. van Beers et al., 2018)? Our interviewees clearly expressed their perception of a need for societal deliberation to clarify the concrete goals of data-driven medicine and technologies such as artificial intelligence. Furthermore, issues such as (health) data literacy were conceptualised by the experts both as individual empowerment and as collaborative practice and thus as a kind of collective good.

Individualistic ethics, which builds on the premise of the autonomous individual, having a focus on individual benefits, and which discounts the effects of social connectedness, reaches its limits in the confrontation with inherently collective phenomena (cf. Callahan, 2003; Etzioni, 2011). Future normative approaches should strengthen the theoretical conception of individual benefits and costs linked to the instrumental and enabling powers of collective actors (Beier et al., 2016).

Conclusions

After a decade of discussion of the ethical and social implications of PM, and after about half a decade debating the data-driven approach and various digital technologies in use now, we need a more refined assessment of their normative dimensions. In recent contributions to social science and social theory, the advance of new forms of normativity has been identified as ‘digital normativity’ (Fourneret and Yvert, 2020) or ‘algorithmic normativities’ (Grosman and Reigeluth, 2019). Interestingly, many interviewed experts expressed the similar idea that data-driven medicine would produce new kinds or accents of normativity in the medical and health context. Against this backdrop and to round off our contribution for CCNR, we propose transferring Fourneret and Yvert's concept of ‘digital normativity’ to the medical sphere as ‘digital health normativity’. The results of our interview study indicate what it covers. First, there is the risk of automation bias as described and explained by one interviewee in situations of clinical decision-making. Second, there is a risk that with the application of data-intensive devices (i.e. wearables) as well as AAS (i.e. machine learning and AI systems) in diagnostics and treatments, patients’ individual health status and norms might be overlooked due to an overreliance on statistical models. The temptation is strong to give statistically ‘normal’ values the status of medical norms, thereby overriding individual values and subjective perceptions regarding health or body status. Third, public debate should influence the fields of application, the roles and the purposes of high-performance technologies in medical care and research.

Our interview-based study was designed to help to trace current normative assessments in order to make an empirically based contribution for the ongoing critical examination of data-driven approaches in medicine. The method of the LoNA grid helped in describing and mapping the complex normative landscape in six subject areas within the broad field of data-driven medicine and along six LoNA, revealing underlying patterns and orientations in interviewees’ arguments. Our LoNA- grids revealed a strong motivation among experts to reflect on the consequences of the data-driven method for the individual, as well as to reflect on transformative effects in (medical) science, in the organisations of healthcare and health insurance and in society as whole. Furthermore, our study showed that there is high demand among experts for public deliberation about subjects concerning data-driven medicine far beyond legal regulations and ethical recommendations on data security and technical standardisation.

We conclude with a methodological proposal. We suggest that CCNR on data-driven medicine and healthcare would benefit from the following three directives (see also Supplemental Material 2):

Reflect on basic notions and contested concepts that pre-structure various lines of argumentation. In our study, the focus was on PM and the concepts of health and disease, but it also includes a political dimension: which actors make which concepts practically relevant and to what extent do certain conceptualisations express certain interests? Cover and integrate several subject areas related to technological and methodological developments. In our study, we covered six subject areas in the context of data-driven medical research and healthcare. Subjects that stretch across two or more areas should be addressed as well. Elaborate different LoNA allows one to identify underlying (social) ontological patterns and normative frameworks in argumentation. We suggested six levels – individual, interpersonal, group, institutional, organisational and societal – and we identified individualistic as well as collective and societal orientated argumentation patterns.

The LoNA grid provides a heuristic tool to approach systematically normative analysis with these three rules of analysis. Bioethics and data-ethics research should critically and continuously examine existing and imminent developments in the field of data-driven methods and technologies.

Supplemental Material

sj-pdf-1-bds-10.1177_20539517221092653 - Supplemental material for Individual benefits and collective challenges: Experts’ views on data-driven approaches in medical research and healthcare in the German context

Supplemental material, sj-pdf-1-bds-10.1177_20539517221092653 for Individual benefits and collective challenges: Experts’ views on data-driven approaches in medical research and healthcare in the German context by Lorina Buhr and Silke Schicktanz in Big Data & Society

Supplemental Material

sj-pdf-2-bds-10.1177_20539517221092653 - Supplemental material for Individual benefits and collective challenges: Experts’ views on data-driven approaches in medical research and healthcare in the German context

Supplemental material, sj-pdf-2-bds-10.1177_20539517221092653 for Individual benefits and collective challenges: Experts’ views on data-driven approaches in medical research and healthcare in the German context by Lorina Buhr and Silke Schicktanz in Big Data & Society

Footnotes

Acknowledgements

The interview study was conducted within the Work Package ‘Ethics & Stakeholders’ as a part of the HiGHmed: Heidelberg – Goettingen – Hannover Medical Informatics consortium (HiGHmed). Scott Stock Gissendanner deserves a special thank for his thorough language editing.

Funding

This work was supported by the HiGHmed consortium, which is funded by the German Federal Ministry for Education and Research (Bundesministerium für Bildung und Forschung, BMBF) (Grant No. 01ZZ1802B). We acknowledge support by the Open Access Publication Funds of the Göttingen University.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

Notes

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.