Abstract

Corporate actors have been actively shaping the normative framing and socio-material infrastructure related to AI governance. We show how they achieve this by analysing documents from corporate actors headquartered in China, Germany and the US from 2017 to 2023, a pivotal period in the formation of public understanding of the topic. Drawing on conceptual work around ‘socio-technical imaginaries’ and corporate strategic behaviours, the analysis identifies ‘hedging’ imaginaries such as ‘Responsible AI’, ‘Trustworthy AI’ and ‘AI for Good’ through communication and stakeholder-networking as a key strategy used by corporate actors to negotiate socio-political differences and navigate regulatory pressure in their interests. In doing so, we highlight the need for critical reflection on current AI governance discourse in relation to public imagination and the making of future socio-technical orders.

Introduction

Since the coinage of the term ‘Artificial Intelligence’ (AI), strategic ambiguity has surrounded its meaning, fuelling cycles of hype and disillusionment (Markoff, 2016). Despite growing public attention, AI remains a notoriously fluid and contested concept. Yet this very vagueness drives its formation: AI functions as a cultural myth (Natale and Ballatore, 2017) and an ‘umbrella term’ that aligns scientific, political and commercial interests (Rip and Voß, 2013). More recently, ‘AI governance’ itself has emerged as a similarly encompassing term to organise normative debates around AI's social integration (Butcher and Beridze, 2019; Dafoe, 2018; Mäntymäki et al., 2022).

In this process, private technology companies have become central actors not only by building infrastructures and allocating resources (Dijck et al., 2018; Lehdonvirta, 2022; van der Vlist et al., 2024), but also by shaping public discourses on AI's benefits and risks (Brause et al., 2023; Brennen et al., 2018; Mao and Shi-Kupfer, 2023; Zeng et al., 2020). They have been liberally using slogans such as ‘Responsible AI’, ‘Trustworthy AI’ and ‘AI for Good’ to promote visions of AI governance. Yet, despite some early mappings of corporate ethics principles (Fjeld, 2020; Jobin et al., 2019; Lütge, 2020), we still know little about how corporate actors have shaped these normative discourses and their broader implications for AI governance.

We address this research gap by examining the communications about AI governance from corporate actors pushing the frontier of technological development. Because China, Germany and the US have emerged as jurisdictions with both ambitious AI strategies and distinctive regulatory approaches to digital technologies (Bradford, 2023), we conduct a comparative study by analysing 102 documents from 30 corporate actors headquartered in these countries, published between January 2017 and December 2023. Since 2017, the ethical and regulatory dimensions of AI technologies have gained public attention, and ethics principles issued by governments, companies, advocacy groups and multi-stakeholder initiatives have proliferated (Fjeld, 2020). The period under study therefore marks a pivotal time in shaping public understanding and imagination around AI governance globally.

Building on the concept of ‘socio-technical imaginaries (SIs)’ (Jasanoff and Kim, 2009, 2015) and analysis of corporate strategic behaviours (Meckling, 2015), our study shows that corporate actors from the three countries have been strategically constructing imaginaries through communication, stakeholder-networking and institution-building. Although they constructed situated imaginaries based on local contexts to respond to national policy agendas and public sentiment, they largely share imaginaries that grant them increasing agency and power in the materialisation of future AI governance processes. In particular, we identify ‘hedging imaginaries’ – simultaneously promoting multiple visions of attainable and desirable futures for AI development and governance – as a key strategy used by corporate actors to negotiate socio-political differences and navigate regulatory pressure in their interests. In doing so, we highlight the need for critical reflection on current AI governance discourses in relation to public imagination and the making of future socio-technical orders.

Conceptual underpinnings: SIs and corporate strategy

SIs of AI

Jasanoff and Kim (2009, 2015) proposed the concept of ‘socio-technical imaginaries’ to explain the factors driving the diverse and contingent paths charted by societies in their uptake processes of science and technology. The concept was defined as ‘collectively held, institutionally stabilised, and publicly performed visions of desirable futures, animated by shared understandings of forms of social life and social order attainable through, and supportive of, advances in science and technology’ (Jasanoff and Kim, 2015: 6). By putting analytical focus on how meaning-making in the form of discourse has concrete effects on how technologies are designed, implemented and governed, this concept enables not only cross-cultural comparison, but also insights into the contestation between social actors in the pursuit of techno-scientific projects.

In the case of AI, recent scholarship has documented both shared global narratives and culturally embedded differences in how AI futures are imagined (Cave and Dihal, 2023). Governments have mobilised AI imaginaries tied to national competitiveness, legacy and ideology (Bareis and Katzenbach, 2021; Hälterlein, 2024), while corporate actors have assumed an increasing role in shaping dominant imaginaries, thereby taking over public institutions’ capacity to govern these futures, with material consequences for sociopolitical processes (Mager and Katzenbach, 2021).

However, existing research pays little attention to the networks and power dynamics through which states, corporations and other stakeholders jointly construct and disseminate these imaginaries. After all, the competition between, and prominence of, certain SIs reflect not just the appeal of particular visions, but their function as ‘crucial reservoir[s] of power and action, [that] lodg[e] in the hearts and minds of human agents and institutions’, where ‘imagination's skilled implementation requires putting in play the intricate networks’ (Jasanoff and Kim, 2015: 25). The limited attention to these networked processes has also left the strategic behaviour of actors under-theorised. How do companies actively deploy imaginaries to build coalitions, negotiate policy solutions and shape the socio-material futures for AI development and governance?

Corporate strategies shaping public discourse and responding to regulatory pressure

Economic sociologist Jens Beckert (2016) takes fictional expectations from consumers, investors and corporations as an analytical starting point to study market dynamics. He argues that imaginaries drive modern economies and are, in fact, a core feature of capitalism. However, corporate actors often wield significantly greater power in this dynamic by employing various communication strategies to shape both public imaginaries and actual public policy concerning science and technology. For example, scholars have observed how corporate actors leverage the performative function of ‘hype’ to translate and popularise imaginaries of digital technologies in specific cultural contexts (Hockenhull and Cohn, 2021). Corporate discourse around applications such as the ‘smart city’ has been shown to influence and delimit public imagination, as companies propagate homogeneous, positive imaginaries of their indispensable roles, thereby ‘crowding out’ alternative visions of urban development (Sadowski and Bendor, 2019) or ‘arbitrating’ what constitutes ‘work’ in the context of machine vision and automation (Hsiao and Shorey, 2023). Even amid growing criticism of Big Tech, journalistic and popular discourse often continues to echo and amplify corporate elite narratives, thus reinforcing corporate discursive power (Lucia et al., 2023).

Despite these valuable insights, research on corporate power in the development of digital technologies would benefit from deeper integration with scholarship on corporate strategy in other policy domains. In particular, we draw on the work of environmental politics scholar Jonas Meckling, whose framework for analysing strategic corporate behaviour offers valuable tools for interpreting the patterns observed in our empirical study of corporate imaginaries. Meckling (2011) traces how transnational coalitions between companies and NGOs played a key role in establishing emissions trading as a central mechanism in global climate governance. Moreover, building on earlier scholarship that links business policy preferences to both material interests and institutional environments, he develops a typology of four corporate strategic responses to regulations: (1) opposition – when compliance costs are high and regulatory pressure is low; (2) hedging – when costs are high and regulatory pressure is high; (3) support – when benefits are high and regulatory pressure is high; and (4) non-participation – when benefits are high and regulatory pressure is low (Meckling, 2015). In light of the current high regulatory pressure on AI and high compliance costs, what is particularly relevant for this study is the hedging strategy, in which companies advocate for alternative policy instruments or lobby for low-cost designs of existing instruments, especially at the agenda-setting stage of the policy cycle, in order to minimise compliance costs or create a level playing field across global markets (Meckling, 2015: 23).
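The two-dimensional logic of this typology can be made explicit as a simple lookup. The following is only an illustrative sketch of the four combinations as summarised above, not an implementation from Meckling's work or part of our coding procedure; the names `Effect`, `Pressure`, `TYPOLOGY` and `expected_strategy` are hypothetical labels introduced here for illustration.

```python
from enum import Enum


class Effect(Enum):
    """Dominant distributional effect of a regulation on a firm (hypothetical label)."""
    COSTS = "high compliance costs"
    BENEFITS = "high benefits"


class Pressure(Enum):
    """Level of regulatory pressure the firm faces (hypothetical label)."""
    LOW = "low"
    HIGH = "high"


# The four combinations as summarised in the text (after Meckling, 2015).
TYPOLOGY = {
    (Effect.COSTS, Pressure.LOW): "opposition",
    (Effect.COSTS, Pressure.HIGH): "hedging",
    (Effect.BENEFITS, Pressure.HIGH): "support",
    (Effect.BENEFITS, Pressure.LOW): "non-participation",
}


def expected_strategy(effect: Effect, pressure: Pressure) -> str:
    """Return the strategic response the typology associates with a combination."""
    return TYPOLOGY[(effect, pressure)]


if __name__ == "__main__":
    # The situation this study treats as characteristic of AI regulation:
    # high compliance costs combined with high regulatory pressure.
    print(expected_strategy(Effect.COSTS, Pressure.HIGH))
```

Treating both dimensions as binary is a simplification made for the sketch; in practice, costs, benefits and regulatory pressure are matters of degree and of interpretation.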

While Meckling's theory on corporate strategic responses to regulation provides a systematic framework, it does not address the meaning-making process or how discursive influence shapes policy and governance at large. As recent research has shown, corporations do not simply respond to regulations; instead, they actively shape narratives around global challenges such as climate change, thereby influencing which policy options are considered viable (Huf et al., 2022). By bringing this strand of scholarship to studies on SIs, this paper advances conversations on both sides: it seeks to understand how corporate actors strategically respond to regulatory pressures by deploying imaginative discourse that influences sense-making and normative framings, which ultimately shape the socio-material infrastructure of AI governance.

Methodology

This study adopts a case study approach, covering AI governance-related documents and communications from ten corporate actors, including both companies and industry associations, in each of China, Germany and the US. The actors were selected based on two factors: their prominence in AI development and their presence in AI governance-related communications. In China, the selected companies are those officially endorsed as part of the ‘National AI Team’ that have each published at least one document addressing AI governance. For the US, the sample includes four of the so-called ‘Big Five’ technology companies – Google, Amazon, Microsoft and Facebook/Meta (at the time of data collection, Apple had not published relevant AI governance materials and was therefore excluded). Additionally, we included AI-focused companies such as OpenAI and Palantir, as well as technology companies – Intel, IBM and SAS – that had a notable presence in AI governance discourse through reports and ethics principles. In the German context, the selection includes major technology companies that have published AI-related ethical guidelines and/or policy positions. Aleph Alpha, considered a leading European AI startup during the data collection period, was included due to its active engagement in shaping debates on ‘sovereign’ and trustworthy AI. The KI Bundesverband (German AI Association) was also included to represent the growing role of start-ups and mid-sized enterprises in the debates.

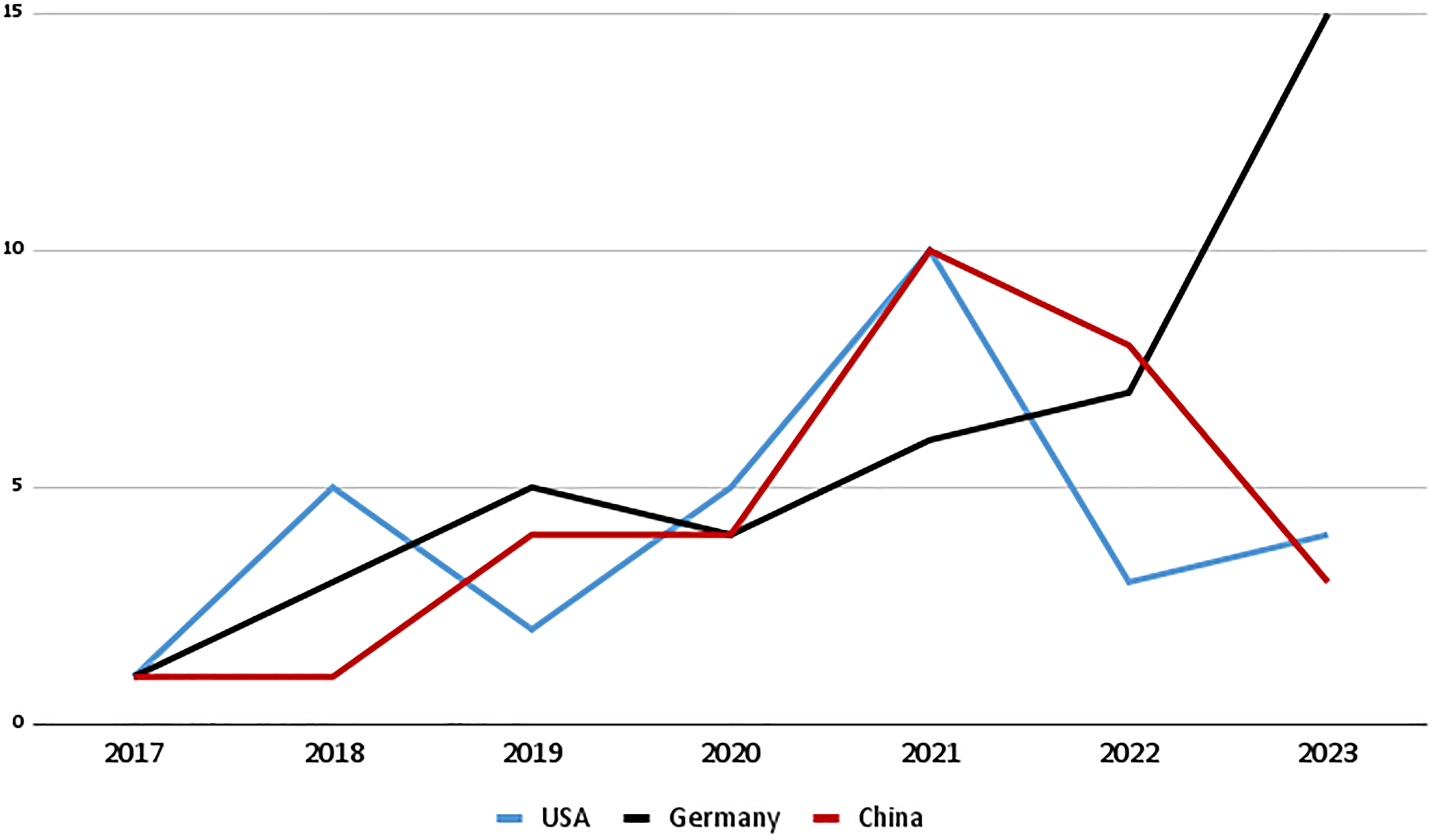

The materials were identified through a targeted web search based on keywords around AI governance in Chinese, German and English,¹ using the search engines Google and Baidu. The final corpus includes 102 texts such as principles, reports, white papers, standards, corporate blogs, public relations documents and website content (see Supplemental material file for the full list of companies and documents). The earliest documents identified date back to 2017, followed by a steady increase in publications over time. In China and the US, a significant uptick occurred in 2021, indicating intensified discussions on the topic. In Germany, a similar spike appeared later, in 2023 (see Figure 1 for the number of publications and Figure 2 for a timeline of key milestone documents).

Figure 1. AI governance documents per country (2017–2023).

Figure 2. Overview of AI governance communication from Jan 2017 to Dec 2023.

Based on an analytical framework developed to identify SIs in communication materials (Brause et al., 2025), we employed a coding system (see Supplemental material file) to identify the communicators, communication type, and narrative elements that form imaginaries. These elements were identified through statements on AI development, its benefits, beneficiaries, risks and negatively impacted entities, as well as statements on value orientations, timeframe, geographical area, and recommended responsible stakeholders and actions for AI governance. We conducted the coding process with the software MAXQDA to identify different imaginaries at the document level. We then drafted memos to analyse each company's imaginaries. After the original coding, the documents for each company were cross-analysed to identify shifts and continuities in companies’ communicated imaginaries over time. The approach was further combined with a critical discourse analysis (Wodak and Meyer, 2015) to contextualise specific longitudinal developments and to emphasise the developmental and processual nature of imaginary formation.

Several limitations of this study should be acknowledged. First, while the selected corporate actors represent influential companies and associations actively engaged in AI governance discourses, the sample does not capture the full diversity of corporate actors in each country. To mitigate potential selection bias, we attempted to identify documents from smaller companies, such as AI startups, but found relatively few publicly available materials. This observation may suggest that AI governance discussions are currently dominated by more established players. However, the imaginaries and governance discourses emerging from smaller companies remain an important area for future research. Second, the data corpus is based on publicly available corporate communications and documents, which primarily reflect companies’ outward-facing narratives. As such, the analysis may not fully capture internal deliberations, lobbying activities, or informal modes of influence that also shape AI governance processes. Research into these will require other methodologies and empirical evidence. Additionally, we acknowledge potential temporal bias of collecting online documents and website content, as documents can be deleted and website content changes. Therefore, our data should be viewed as representing a snapshot in time.

The following sections reflect our analytical process. We first provide an overview of the most prominent imaginaries identified and contextualise them with major policy developments in each country (section ‘Corporate AI governance imaginaries in China, Germany and the US’), before diving deeper into the strategies used by corporate actors (section ‘Corporate strategies for shaping AI governance’).

Corporate AI governance imaginaries in China, Germany and the US

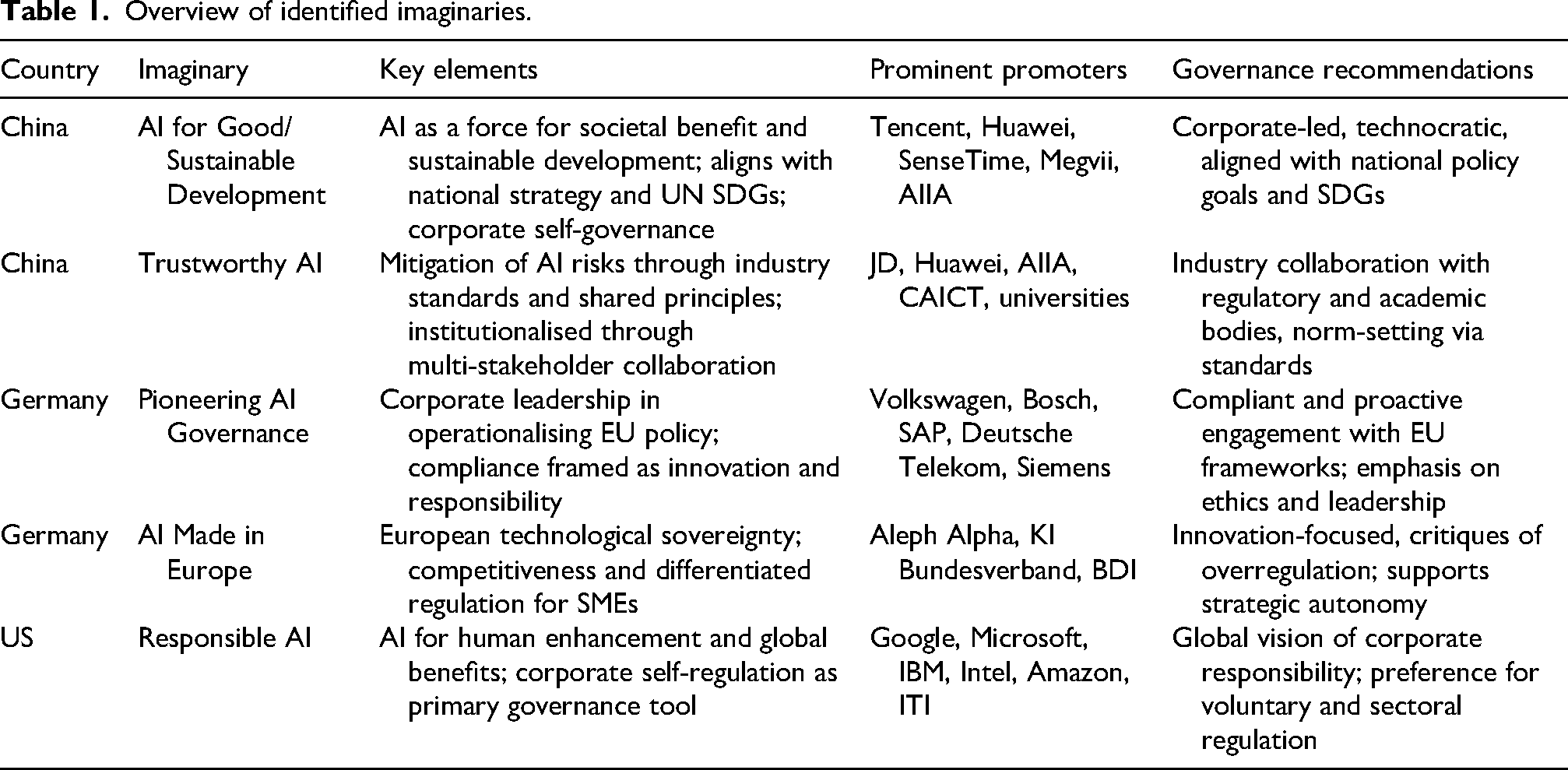

See Table 1.

China

The digital platform companies Alibaba, Baidu, Tencent and JD.com, the ICT company Huawei, the AI startups SenseTime, Megvii, iFLYTEK and Cambricon, and China's Artificial Intelligence Industry Alliance (AIIA) became active in publishing AI ethics and governance-related materials from around 2018. These include both lengthy technical reports targeted at policymakers and professionals and social media content for the general public. Two prominent and distinctive imaginaries emerged from these materials: ‘AI for Good/Sustainable Development’ and ‘Trustworthy AI’, both of which co-evolved remarkably closely with major government policies.

Table 1. Overview of identified imaginaries.

In 2017, the Chinese government released an ambitious national AI strategy. The document outlined a digital development process in three phases – ‘digitisation’, ‘networkisation’ and ‘intelligentisation’ – envisioning the gradual incorporation of digital infrastructure and data analytics into all aspects of life and the transformation into an ‘intelligent society’ (PRC State Council, 2017). Building on the strategy, companies began to shape the imaginary ‘AI for Good/Sustainable Development’, which elaborates on the vague concepts in the national strategy with interpretations of how AI applications can benefit people and businesses. The narrative of an AI-led technological revolution and the sweeping socio-economic change ensuing from it was prevalent in most Chinese corporate communication materials. For example, Tencent wrote that AI has ‘created a huge wave, and everyone will be swept up in it’ (Tencent, 2020: 1).² SenseTime claimed that AI, together with 5G and Internet of Things (IoT), will ‘comprise the basis for 4th Industrial Revolution […] driving force behind public service upgrades and intelligent societies, leading toward a shared future for the full benefit of mankind’ (SenseTime, 2020: 5). Huawei, in its hundred-page report ‘Intelligent World 2030’, put forward detailed imaginations of AI in various sectors, ‘improving the quality of life […] prevent people from suffering from traffic congestion and urban environmental pollution […] leaving repetitive and dangerous tasks to robots, so that people can devote more time and energy to meaningful and creative work and interests’ (Huawei, 2021b: 14).

Alongside highly positive visions of AI development, companies positioned themselves as capable stewards of utopian AI futures through ethical guidelines and technical reports. Shortly after China's national AI strategy, Tencent emerged as an early corporate voice shaping AI governance by co-publishing a book with the Chinese Academy of Information and Communications Technology (CAICT), a government think tank with significant regulatory roles (Tencent, 2017). The book surveyed AI applications and governance issues, highlighting Tencent's advisory role in policy-making. Its 2019 report, ‘Technology Ethics in the Age of Intelligence’, outlined the company's perspective on the normative foundations of the AI era and framed the company's vision as ‘Tech for Good’ (Tencent, 2019). Other communications promoted AI's benefits in healthcare during COVID-19, smart cities, economic growth and aiding vulnerable populations, while rarely addressing those negatively impacted by AI. Risks were primarily discussed in technical reports on explainability (Tencent, 2022), value-alignment in big models (Tencent, 2023), and algorithm regulation (Tencent, 2022a). These technical proposals, however, fundamentally enlarged corporate roles in AI governance.

Some companies have further extended the narrative of corporate stewardship in steering AI for good by linking it directly to the UN's Sustainable Development Goals. For example, SenseTime advocated for ‘AI Sustainable Development’ in several reports, listing the potential of AI applications to address urgent issues such as resource shortages, public health crises and gender equality (SenseTime, 2022; SenseTime, 2022a). These extremely positive visions illustrate what we identify as the core features of the imaginary ‘AI for Good/Sustainable Development’: it portrays AI as a highly desirable technology that brings individual and social benefits and should be adopted as widely as possible, while trust in technology companies’ self-regulation and advanced technical capabilities is promoted as the means to mitigate AI risks.

Alongside promoting this imaginary, some of the same companies, in collaboration with government agencies and academia in China, also led the propagation of the imaginary ‘Trustworthy AI’.³ Two major platforms for these collaborations are AIIA, which consists of over 500 member companies, local governments and academic institutions, and CAICT. Although AI is also envisioned as a revolutionary force in this imaginary, in contrast to ‘AI for Good/Sustainable Development’ it focuses on the risks associated with AI and is constructed around a set of shared norms and standards at the industry level. In 2019, AIIA issued a ‘Draft Code of Conduct for AI’. The Code proposed five sets of principles for industry's self-discipline to ensure socially beneficial AI: (1) safety and controllability, (2) transparency and explainability, (3) privacy, (4) responsibility and (5) diversity and inclusivity. These principles were later listed as the core requirements in the ‘Trustworthy AI Implementation Guide’, together with a specific evaluation framework for assessing whether these principles are implemented in the research and development process (AIIA, 2020).

In 2021, CAICT and the Chinese e-commerce company JD issued the ‘Trustworthy AI White Paper’, which further elaborated on the above-mentioned requirements in terms of techniques, standards and organisational culture for industry actors. The white paper detailed five types of risks in AI systems: (1) physical or information security risks in the deployment of AI systems, such as accidents in autonomous driving or data leaks; (2) risks of opaque decision-making caused by ‘black-box’ algorithm models; (3) biased and discriminatory decision-making caused by datasets; (4) risks of unclear liability caused by complicated decision-making processes and (5) privacy risks caused by over-collection of user data (JD and CAICT, 2021). It then proposed the ‘Trustworthy AI Framework’, which included industry practices and an institutional framework to ensure the mitigation of these risks. Details of these practices and institutions will be elaborated in section ‘Imaginary-based stakeholder-networking and infrastructure-building’.

This imaginary was further propagated at the World AI Conference in 2021, where over 20 companies, universities and research institutions, including Huawei, Ant Group, Tsinghua University, Shanghai Jiao Tong University and the China Branch of the BRICS Future Network Research Institute, signed the ‘Pledge for the Development of Trustworthy AI’, reaffirming the above-mentioned values and pledging to collaborate in constructing a ‘Trustworthy AI’ ecosystem. Altogether, these actors and initiatives showcased an AI governance imaginary that is based on a set of principles and institutionalised through the establishment of industry standards. As various types of stakeholders and foreign actors gradually bought into this imaginary, it became the dominant AI governance imaginary in China, demonstrating how networks between government agencies, companies, and academia underwrote the emergence of a coherent imaginary.

In the Chinese corporate discourse, these two dominant imaginaries both envision AI as a revolutionary technology, but lead to distinctive proposals and implications for AI governance. The ‘AI for Good/Sustainable Development’ imaginary focuses predominantly on the benefits of AI applications, leaving their risks unaddressed. While seeking to align with the existing domestic imaginary of an ‘intelligent society’ or the global UN SDGs and their associated institutions, its proponents emphasise corporate self-governance and imply no clear institutionalisation patterns for the external regulation of AI. In contrast, the ‘Trustworthy AI’ imaginary is constructed upon considerations of risks and has clear institutionalisation patterns through industry standards collaboratively developed among stakeholders. However, the companies promoting the first are in fact also participating in the construction of the latter. This illustrates the strategy of ‘hedging’ between different imaginaries to navigate regulatory and public pressure, which will be elaborated in section ‘Corporate strategies for shaping AI governance’.

Germany

Deutsche Telekom, Siemens, SAP, Bosch, Volkswagen, Mercedes-Benz, Lufthansa, the AI startup Aleph Alpha, the German AI association (KI Bundesverband) and the Federation of German Industries (BDI) became actively engaged in the ethical and regulatory discussions around AI in 2018. While industry associations published reports and position papers, individual companies often adopted formats such as corporate blogs and website articles to promote visions in the voices of leading individuals. From these materials, two prominent corporate imaginaries with discernible differences emerged: ‘Pioneering AI governance’ and ‘AI made in Europe’, both of which evolved closely around the imaginary of ‘Trustworthy AI’ promoted by the EU.

Unlike in China and the US, where companies driving AI are mostly digital platform companies and AI-focused tech companies, the German counterparts active in the AI governance discourse are mostly established corporations from traditional sectors like telecommunications, transportation and manufacturing. Their early initiatives portrayed AI as products and services that could add value for existing industries and customers, framing it as ‘a key technology in the future’ that should nonetheless ‘serve people as a tool’ (Bosch, 2020), as an ‘instrument’ for bringing a positive force to society (SAP, 2022), or as technologies to ‘transform the efficiency and performance of industries’ (Siemens, 2023c). Although companies such as Siemens occasionally invoked the ‘4th industrial revolution’, as companies in China did, they focused on automating production to meet individual customer needs efficiently and at competitive prices (Siemens, 2023d).

The vision of AI as a neutral tool often includes recognising both its benefits and risks. Deutsche Telekom, for instance, stated that ‘AI is initially just a tool and is neutral in itself. It is therefore up to us to use it positively without ignoring the risks’ (Deutsche-Telekom, 2018b: 2). Risks cited typically involve discrimination and bias in datasets, lack of human oversight, privacy concerns and labour market impacts. Bosch referenced ‘dark science fiction’ scenarios where AI overtakes human intelligence or causes job losses but argued that future labour shortages would necessitate AI-human collaboration (Bosch, 2020c). Despite acknowledging potential risks, most companies avoided specifying affected groups, beyond commitments to prohibit discrimination based on factors like ‘culture, race, ethnicity, religion, age, gender, sexual orientation, [or] disability’ (SAP, 2022a: 1).

Highlighting both benefits and risks of AI, some German companies present themselves as pioneers in steering the technologies for social good. For example, Volkswagen stated that, ‘It's not just about technical feasibility (…) We also deal intensively with ethical aspects. For us, the use of AI is not an end in itself. It must always serve people in a meaningful way’ (Volkswagen, 2017). Mercedes-Benz claimed to be ‘the first automobile manufacturer’ to establish principles for AI development and deployment (Mercedes-Benz, 2023a). Early ethical statements, however, were mostly general promissory statements such as ‘We are responsible’ (Deutsche-Telekom, 2018b) and ‘We strive for transparency and integrity in everything we do’ (SAP, 2022). Following the release of the European Commission's AI policies, such as the High-Level Expert Group's Ethics Guidelines (2019) and the White Paper on AI (2020), communications from companies converged around the set of values and goals promoted in these policies. Nevertheless, two imaginaries with subtle and yet consequential differences emerged.

In the imaginary ‘Pioneering AI governance’, companies position themselves as both compliant participants and leaders in the EU's regulatory agenda. Lufthansa promoted its AI-as-a-service solutions by emphasising compliance with European Commission ethics guidelines (Lufthansa, 2022). Volkswagen founded the nonprofit Ethical and Trustworthy Artificial and Machine Intelligence (ETAMI) in collaboration with companies and academia for ‘translating European and global principles for ethical AI into actionable and measurable guidelines, tools and methods’ (Volkswagen, 2021). SAP claimed to have been the first European tech company with AI guidelines, in 2018, and to be ‘one step ahead of the legislation’ through its role in the EU's relevant expert committees (SAP, 2022b). Similarly, Bosch, with its code of ethics, stated it was ‘well in advance of binding EU standards’ and actively addressing ethical questions raised by AI (Bosch, 2020: 4). By publicly communicating compliance and influencing the implementation of EU policies, German companies present themselves as actively contributing to the materialisation of the EU's vision of ‘Trustworthy AI’. Underlying these narratives is a clear message: companies can be trusted and should be central in steering AI governance.

In contrast to the social-guardrail focused imaginary, the second prominent imaginary ‘AI made in Europe’ emphasises economic and geopolitical goals, and was promoted by AI startups and industry associations. This imaginary foregrounds the EU's policy goal to achieve ‘excellence’ and ‘competitiveness’ in AI (The EU Commission, 2018). For example, the German AI association, comprised mostly of small and medium-sized companies, advocated for ‘digital sovereignty’ through investment in AI infrastructures like chips, cloud services and data (KI Bundesverband, 2021b). Concerned about US and Chinese dominance in large AI language models, the association championed its ‘OpenGPT-X’ project as a solution to ensure Europe's digital sovereignty and market independence (KI Bundesverband, 2021a). It recommended reducing regulatory pressures to foster local innovation, stating, ‘If Germany wants to take a leading position in the next industrial revolution, it needs a better understanding of young AI companies’ (KI Bundesverband, 2021: 1). Aleph Alpha's CEO echoed this, arguing that while big players can afford regulation, startups would struggle under excessive rules (Aleph Alpha, 2023a).

Nevertheless, this imaginary calls upon European policymakers to provide a counterpoint to the US and Chinese approaches through European values, and is therefore not inherently opposed to external regulation. The EU's ideal of ‘trustworthy AI’ becomes an ambivalent goal: while it is often seen as the key to competitive development, it also becomes an obstacle to innovation if regulation is too stifling. According to this corporate discourse, the key is differentiated regulation. In a statement on the EU's Ethics Guidelines for Trustworthy AI, BDI argued that ‘the guidelines do not yet take sufficient account of the fact that the ethical boundary conditions of AI systems differ considerably depending on the field of application. Particularly for industrial applications ethical questions often play only a minor or very context-specific role. An insufficient differentiation regarding the criticality of AI applications may lead to undifferentiated red lines, which unnecessarily restricts Europe's competitiveness’ (BDI, 2019: 1). Just as the imaginary is used by AI startups to argue for policy support for small businesses, it is also used by companies developing AI in industrial settings to argue for reduced regulation.

Overall, in Germany, these two corporate AI governance imaginaries share common elements, depicting AI as a neutral tool and mostly as business or industrial applications. The ‘Pioneering AI governance’ imaginary advanced trust in companies to lead in governance, precariously balanced between regulatory compliance and leadership in shaping EU regulation. The ‘AI made in Europe’ imaginary emphasised technological and economic competition and called for differentiated regulation, which essentially meant lowered requirements for AI in industrial settings and for SMEs. However, companies have shifted between the two, and companies promoting ‘Pioneering AI governance’ were also members of the associations promoting ‘AI made in Europe’. Here again, we observe the phenomenon of ‘hedging’ imaginaries.

US

Amazon, Facebook/Meta, Google, Microsoft, IBM, Intel, OpenAI, Palantir and SAS, as well as the Information Technology Industry Council (ITI), a trade association and advocacy organisation that represents the ICT industry, have been forerunners in shaping domestic and global AI governance discourse through corporate communications. These include corporate blogs, reports, position papers on AI policies and social media posts. Analysis of these materials revealed a remarkably consistent and unified imaginary, ‘Responsible AI’, which foregrounds corporate responsibility.

As early as 2017, ITI laid the groundwork for ‘Responsible AI’ with its ‘AI Policy Principles’, emphasising the industry's role in fostering responsible AI development with government support. The document highlighted AI's potential to ‘enhance human productivity’, ‘empower people’ and address societal challenges, focusing on AI's positive impacts and urging companies to integrate principles beyond legal compliance (ITI, 2017: 1). Similar narratives celebrating AI's ability to ‘augment human intelligence’ and ‘empower humans’ appeared in communications from Microsoft (2018), Google (2018) and IBM (2023a). Unlike the industrially focused visions of Chinese and German companies, these narratives centred around human potential, portraying it as boundlessly expandable through AI. This emphasis on ‘humans’ implicitly transcends nationalities and geographies. Together with the stated ambitions of using AI to ‘solve societal problems at a global scale’ (ITI, 2017), ‘address global challenges related to public health, humanitarian assistance, sustainability, and disaster response’ (Google, 2021), and ‘address the global need for digital skills development’ (Microsoft, 2018), these grand visions underpin the ‘Responsible AI’ imaginary, which imagines AI as a transformative force capable of delivering boundless benefits for humanity on a global scale.

Building upon these aspirational visions for human futures, American companies position themselves as pioneers ‘more advanced than governmental regulations in terms of AI governance’ (ITI, 2017). Microsoft, for instance, acknowledged the need for AI ethics and regulations but argued that AI ‘needs to develop and mature’ before rules can be crafted, suggesting that only after a consensus about social values and ‘best practices’ to govern AI had been established would governments be in a position to legislate (Microsoft, 2018: 14). In subsequent years, Microsoft published reports, assessment guidelines, standards and open-source tools detailing its implementation of ‘Responsible AI’ (Microsoft, 2021, 2022a, 2022b). This narrative reflects the logic shared by many American Big Tech companies: companies should be given priority to innovate, and due to their technical expertise, they are also better suited than governments to design regulations.

Google's communications also illustrated the ‘Responsible AI’ imaginary, but with stronger lobbying efforts and expectations for policymakers and society. Following public backlash against its military AI project ‘Maven’, Google released AI principles in 2018, outlining its commitment to responsible development and specific areas it would not pursue (Google, 2018: 1). In the following years, the company released reports advancing policy recommendations for governments, including on ongoing AI legislation efforts in the EU (Google, 2019, 2019a, 2021, 2021a). These reports highlighted Google's internal governance structures and computational techniques for interpretability, privacy, security and fairness. One report likened corporate actors to musicians adapting techniques for different audiences, arguing that governments and societies must ultimately decide how to harness AI's benefits and set development frameworks (Google, 2019b: 3). These narratives position Google as a leader in AI governance, yet with a deliberately neutral and limited role. It is noteworthy that Google's report ‘Responsible Regulatory Framework for AI’ states that policymakers also need to be ‘responsible’ by refraining from obstructing AI's development with burdensome regulations, and advises against horizontal regulation like the EU's AI Act (Google, 2021a). Instead, it advocated for sectoral regulation that is risk and context-based, as well as reliance on internationally recognised industry standards, such as those from IEEE and ISO.

While communications from companies such as Amazon, Palantir and OpenAI also featured elements of the ‘Responsible AI’ imaginary, at times they have also shown an attempt to align with other AI governance imaginaries. For example, IBM advocated for ‘Trustworthy AI’ and promoted ‘fairness, robustness, privacy, explainability and transparency’ as its pillars, which correspond to many of the principles of ‘Trustworthy AI’ advocated by the European Union's High-Level Expert Group on Artificial Intelligence (2019). The company explicitly acknowledged the impact of ‘concerns around reputational risk and a growing set of regulations’ (IBM, 2021: 1) on AI development, yet considered businesses’ internal assessment and management processes as the ‘outermost guardrails for trustworthy AI’ (IBM, 2021: 4). Despite the use of the label ‘Trustworthy AI’, this fundamentally differs from the European approach that sets laws as the defining guardrails, and therefore demonstrates a rather superficial attempt to hedge different imaginaries.

Another example of a company seeking to buy into different imaginaries in its communications is best observed in the shift of Intel's corporate website from ‘AI for Good’ to ‘Responsible AI’ during this research's data collection. According to data retrieved using the Wayback Machine Internet Archive, the company established the website under the banner ‘AI for Social Good’ in approximately April 2020, listing its vision statement ‘to advance uses of AI that most positively impact our world’ and several social responsibility projects and beneficial applications (Intel, 2020). However, a sub-page ‘Responsible AI’ was later added, showcasing the company's media resources on human–AI interactions, and research on techniques related to privacy preservation and safety. In late 2023, the banner ‘AI for Good’ on the website was replaced entirely by the ‘Responsible AI’ page (Intel, 2023). This shift demonstrates not only a company's changing narratives but also that companies in the US have converged around the same imaginary.

Overall, there is a highly coherent ‘Responsible AI’ imaginary in corporate communications in the US. The core elements that form this imaginary include: (1) AI's strong desirability for ‘human enhancement’, an empowerment that justifies continued innovation and cautious regulation; (2) American companies’ leadership in technologies, which supports their superior position in steering AI governance; and (3) the idea that technological risks can be solved by technological means, such as practices that ensure fairness, transparency and explainability, as well as by corporate management processes such as risk assessments and internal audits. Although companies do signify their alignment with other prominent imaginaries, their own imaginaries often encapsulate visions and policy recommendations targeted at a global scale and in the name of humanity. This grand vision ultimately hints at an ambition that requires other stakeholders to adopt solutions and frameworks from the companies themselves.

Corporate strategies for shaping AI governance

Hedging imaginaries to navigate socio-political differences and regulatory pressure

Our analysis of corporate discourse through the lens of SIs and our tracing of their building elements reveal remarkably similar elements in the proposals for AI governance, despite their different socio-political embeddedness. The prominent imaginaries present companies as leaders in technical know-how and moral authority, and therefore justify their role as the actors responsible for steering societies towards utopian futures. Among American companies, the dominant ‘Responsible AI’ imaginary strongly foregrounds private ordering as the preferred mode of governance over public regulation, through the creation of dedicated ethics teams within corporations and the development of techniques, processes and standards to operationalise normative principles. In Germany, this element is shared by both established companies in their ‘Pioneer AI Governance’ imaginary and AI startups and industry associations in their ‘AI made in Europe’ imaginary, emphasising their roles in both developing frontier technologies and actively contributing to the EU's AI agenda. In China, this element is less prevalent, and when articulated in the imaginary ‘AI for Good/sustainable development’, it is strongly connected to the beneficial impacts of AI applications.

These similar imaginaries are embedded in highly divergent levels of control in the country-specific state-market relations, with the Chinese government maintaining the strongest level of control over the private sector, the US remaining laissez-faire and Germany, as part of the EU, covering a middle ground (Bradford, 2023). At the same time, these imaginaries reflect broader public attitudes toward digital technologies within each cultural context. In China, not only has a sense of ‘techno-optimism’ motivated a particular mode of innovation as public–private collaboration (Lindtner, 2020), but public acceptance of technologies, even controversial ones such as facial recognition, contact tracing apps and the social credit system, has also remained higher than in Western nations (Kostka, 2023). In Germany, public debates and concerns over privacy, data protection and ethical considerations have shaped perceptions of technological innovation since the 1980s (Hornung and Schnabel, 2009; Kozyreva et al., 2021). In the United States, greater tolerance for risk fosters the development of new ideas and innovative products (Lee et al., 2013; Prim et al., 2017; Srite and Karahanna, 2006), with a pronounced emphasis on maintaining a competitive edge in global innovation instead of focussing heavily on regulation.

Pfotenhauer and Jasanoff's analysis of ‘travelling imaginaries of innovation’ in different parts of the world has shown the global circulation of a universalist vision for what good innovation is, how it works and who should get involved (Pfotenhauer and Jasanoff, 2017). In our research, the vision of corporate leadership, which transcends national boundaries and cultural differences, demonstrates another dimension of these ‘travelling imaginaries’. However, tracing how corporate actors construct these imaginaries in their communications over time and comparing them across jurisdictions reveals that these imaginaries do not ‘travel’ as spontaneous forces, but as the result of corporate strategies to pursue their interests.

Our study identifies ‘hedging imaginaries’ as a key strategy employed by corporate actors, whereby they simultaneously promote multiple visions of attainable and desirable futures for AI development and regulation. By analysing the content of the imaginaries in combination with the ‘hedging strategy’, which refers to both defensive and supportive strategies from corporate actors towards political and social demands (Meckling, 2015), we uncover more nuanced ways in which corporate actors discursively shape AI governance pathways. In our empirical analysis, we observe that companies hedge imaginaries in several ways. A substantive approach is exemplified by Chinese companies simultaneously promoting the ‘AI for Good/sustainable development’ imaginary and participating in the promotion of the government-led ‘Trustworthy AI’ imaginary with lengthy reports. While corporate self-governance seemingly contradicts a government-led approach of regulation through standards, this hedging effort enabled them to project an image of leadership and advocate for corporate self-governance, while participating in shaping industry standards to address AI's potential risks. Similarly, some German companies, while championing ‘Pioneer AI Governance’, were also members of the associations promoting ‘AI made in Europe’. This enabled them to publicly endorse the EU regulations while lobbying, in a more anonymous way, against overly stringent rules that could impact their operations. In both cases, by engaging in the promotion of different imaginaries, companies have been able to advance their interests in the face of growing regulatory pressure, whether by minimising compliance costs or by levelling those costs across the industry.

In comparison, a more superficial approach to the hedging strategy is exemplified by companies’ communications mentioning terms such as ‘Responsible AI’, ‘Trustworthy AI’ or ‘AI for Good’ without substantiating what these visions mean, or without coherent interpretations of these visions. For example, in Facebook/Meta's communication ‘Collaborating on the future of AI governance in the EU and around the world’, the company is said to create ‘AI for Good partnerships like (…) AIxSDGs project to explore how AI can help meet the United Nations’ Sustainable Development Goals’, ‘help define best practices around developing trustworthy AI’ and ‘turn broad responsible AI principles into practical steps that both companies and policymakers can implement’ (Facebook/Meta, 2020). Packing all of these positive AI-related terms into one short article, the communication appears aimed mainly at fostering a positive image.

Although one can question whether all corporate communicators are aware of the differences between these terms and of the evolving connotations and obligations they imply, it is clear that some are. In Siemens’ ‘Artificial Intelligence Glossary’, ‘Responsible AI’ is defined as a ‘topic [that] deals with and questions the responsible use and development of AI applications in a way that they meet ethical and moral standards’. In comparison, ‘Trustworthy AI’ is defined as being ‘introduced in the EU AI Act from the European parliament, trustworthy AI should respect all applicable laws and regulations (lawful), respect ethical principles and values (ethical) and take its social and technical environment into account (robust)’ (Siemens, 2023a). If this distinction between the focus on the obligations of developers and users to follow soft standards, and the emphasis on the additional requirement to follow laws, is clear to the companies, then IBM's attempt, as mentioned above, to advocate for ‘Trustworthy AI’ while arguing that businesses’ internal technical assessment and management processes are the ‘outermost guardrails for trustworthy AI’ seems contradictory and exemplifies another attempt to superficially hedge different imaginaries (IBM, 2021: 4). This strategy, in both its substantive and superficial forms, enabled corporations to describe and prescribe future pathways to govern AI and to navigate competing demands that align with national priorities and public sentiments, while maintaining flexibility to influence emerging frameworks.

Imaginary-based stakeholder-networking and infrastructure-building

By conceptually synthesising SIs and ‘hedging strategy’, ‘hedging imaginaries’ deepens our understanding of how meaning-making facilitates network and coalition building between stakeholders shaping governance practices and infrastructure on a global scale. The concept of ‘sociotechnical imaginary’ was originally developed to pull ‘together the normativity of the imagination with the materiality of networks’ and also links ‘concepts more closely (…) to policy as well as politics’ (Jasanoff and Kim, 2015: 27). This emphasis on normative imagination enriches analytical perspectives beyond the corporate choices of supporting or opposing regulation (Meckling, 2015), allowing insights into how actors justify their alternative proposals, why actors align, and more importantly, which parts of governance infrastructures they shape towards their own interests and power.

The examples from Germany – ‘Pioneer AI Governance’ and ‘AI made in Europe’ – illustrate how interpretations of what European leadership in AI means enable companies to achieve their strategic goals while swaying the German/EU regulatory agenda. They revealed complex negotiations of interests and power in charting pathways of regulatory futures. Unlike their counterparts in China, who closely collaborate with governments, or their US counterparts, who enjoyed expansive autonomy, German companies strategically interpreted their imaginaries based on the EU's ‘Trustworthy AI’, but with a different emphasis. These imaginaries do not simply support or oppose the EU's regulations. Established industrial conglomerates leverage their existing reputations and market advantages to foreground the ‘Pioneer AI Governance’ imaginary, which emphasises proactive guardrails and advanced standards. These companies position themselves as key actors in shaping national and EU-level policymaking by promoting initiatives like the ETAMI voluntary standards and labelling systems (Etami, 2021). This allows them not only to craft an image of leadership but also, by building networks and shaping communities of practice around this imaginary, to institutionalise standards, minimise their compliance costs and maintain their competitive edge.

Start-ups in a more precarious market position adopted another strategy to rally around the ‘AI made in Europe’ imaginary, which stresses the economic benefits of AI innovation and the imperative of maintaining European competitiveness. These startups interpret ‘Europeaness’ and ‘digital sovereignty’ as not only adherence to EU values such as ethics, explainability and data protection regulations but also as retaining autonomy to make their own strategic decisions. Through initiatives like the ‘KI Gütesiegel’ certification scheme, they also sought to institutionalise their vision of AI governance. Their strategy reflects their need to carve out a niche and influence the regulatory agenda in a manner that ensures space for SME innovation while reducing the dominance of large players.

Both sides offered future visions to govern AI that are deeply imbricated with the German/European identity. However, their different levels of resources and status drive their strategic construction of different imaginaries interpreting European leadership in AI governance, and associated alliance networks. Nevertheless, the two camps converge on emphasising the central role of Europe's indigenous infrastructure in materialising both imaginaries. For example, both established conglomerates and tech startups have supported Gaia-X, an initiative by Franco-German governments and European companies to develop a federated secure data infrastructure for Europe, as a prime example to secure Europe's AI endeavours and digital sovereignty (BDI, 2021).

The two imaginaries in Germany also show clear coherence with the regulatory orientation of the EU AIA. While both emphasise the importance of technical standards and certification schemes as governance instruments, as well as differentiated regulatory obligations, researchers have observed that lobbying efforts by corporate actors ultimately contributed to a ‘watered-down’ version of the AI Act with more lenient requirements, a more finely distinguished set of regulated AI application categories (Corporate Europe Observatory, 2023), and a reliance on standards, certification schemes and conformity assessments mostly conducted by providers themselves, which in practice make the Act a model of ‘co-regulation’ (Cantero Gamito and Marsden, 2024). This exemplifies how divergent imaginaries can intersect in their proposals, revealing a complex interplay between stakeholders’ imaginaries and parts of the governance infrastructure.

We use the term ‘governance infrastructure’ (Johnston, 2010) to refer to structures that extend beyond regulatory texts and include technical means to address issues such as privacy (for example, federated-learning techniques), technical standards, and regulatory practices such as the algorithm registry in China (Sheehan and Du, 2022). Since the regulatory frameworks in the three jurisdictions are still nascent (even in the EU, the details of implementation and interpretation of the AI Act are still under discussion), our analysis highlights mainly how corporate actors have shaped the technical infrastructure for AI governance (techniques and standards). In fact, the very phenomenon of primary reliance on these technocratic means of governance across the three regimes illustrates the consequence of corporate influence. However, tracing the influence of corporate governance imaginaries on policy initiatives as they mature remains an important avenue for future research, one for which we hope our conceptual and empirical contributions provide a useful starting point.

In China, the two dominant imaginaries – ‘AI for Good/Sustainable Development’ promoted by individual companies, and ‘Trustworthy AI’ promoted in close collaboration with government agencies and universities – together undergird a collection of technologies and systems, organisations, policies, practices and relationships that interact to form the emerging AI governance framework in China. This framework is best illustrated by a figure in JD and CAICT's ‘Trustworthy AI White Paper’ (2020: 9) (see Figure 3 for an adapted version).

Figure 3. General framework of trustworthy AI. Source: adapted from CAICT and JD Explore Academy's White Paper on Trustworthy AI Governance (2020).

This framework is structured across multiple levels of organisational policies and practices. At its foundation lies ‘industry trustworthiness practices’ alongside the establishment of a ‘system of Trustworthy AI standards’. The intermediate level comprises ‘corporate trustworthy practices’. At the apex of this hierarchy are national ethics frameworks, regulations and laws. Although state regulations are placed firmly at the top, the main parts of this framework rely on industry and corporate practices and technologies. This demonstrates how corporate actors, by actively contributing to the drafting of governance proposals, are able to expand their roles even within China's state-centric system.

Through hedging imaginaries, Chinese companies were also able to articulate regional and global ambitions beyond domestic governance initiatives. A good example is SenseTime's efforts to align AI development with the UN SDGs. As SIs themselves (Fritzbøger, 2020; Jasanoff, 2022), the SDGs provide a widely recognised and institutionalised framework that facilitates integration into global networks of actors, including consortia, UN agencies and NGOs. SenseTime's establishment of the ‘AI Shared Future Alliance’ in 2020 – a coalition of industry, academia and NGOs across Asia aimed at ensuring adherence to ‘AI sustainable development principles’ and mitigating associated risks – exemplifies this strategy. By adopting an imaginary that emphasises empowerment for disadvantaged communities and the Majority World, historically marginalised in industrial and economic development, SenseTime was able not only to align its vision with established global frameworks promoting the positive outcomes of AI, but also to strategically circumvent commitments to new regulatory institutions or deeper accountability mechanisms for AI's risks and implications.

The prominence of the ‘AI for Good/Sustainable Development’ imaginary among Chinese companies may also be a strategic response to counterbalance Western-led collaborations in AI governance institutions, such as the EU-US Trade and Technology Council (TTC) and the Global Partnership on AI (GPAI). These institutions serve as multi-stakeholder forums aimed at advancing transatlantic leadership and promoting democratic values in AI development. In contrast, Chinese actors have increasingly gravitated toward the United Nations as their preferred arena for negotiating global AI policy, leveraging its multilateral framework to influence global norms and challenge Western dominance (Bradford, 2023; Cantero Gamito, 2023).

In the US, tracing longitudinally the rise of ‘Responsible AI’ points to the important role of the IT industry association ITI as a network that diffused this imaginary among the American big tech companies, and eventually led to its hegemonic position in the US for guiding AI governance approaches. The influence of this corporate imaginary over government policy initiatives was thoroughly demonstrated in July 2023 when the Biden-Harris Administration secured voluntary commitments from these companies to develop ‘Responsible AI’. In doing so, the US government formally became a promoter of the imaginary without significantly rewriting the core narrative of industry leadership (The White House, 2023). In the absence of a shared industry standard and government regulations, American companies have taken the lead in implementing their envisioned AI governance imaginaries through a mix of material and social inventions. For example, IBM developed ‘AI Explainability 360’, an open-source toolkit of algorithms that ‘support the interpretability and explainability of machine learning models’, and invites developers to use and contribute to it ‘to help advance the theory and practice of responsible and trustworthy AI’ (IBM, 2019). Microsoft's ‘Responsible AI Standard’ introduced tools and practices to enhance accountability and transparency, such as the impact assessment guide, documentation requirements and internal checkpoints. Google claimed to be ‘working intensely to advance the areas of AI interpretability and accountability, through open-sourcing tools and publishing research’ such as the ‘TensorFlow Lattice’ and ‘Building blocks of interpretability’ to illustrate ‘how different techniques can be combined to provide powerful interfaces for explaining neural network outputs’ (Google, 2019b: 3).
Although these tools are presented as open-source and ‘best practices’, they also represent strategic efforts to shape governance infrastructure through establishing de facto standards, and follow a logic of using technical means to solve social issues. The capabilities to first develop advanced algorithms and resources to put organisational structures in place gave these companies advantages to influence practices elsewhere and gain dominance globally.

More than ethics washing: strategising AI governance imaginaries

While some scholars have criticised the flurry of AI ethics frameworks and principles from companies as forms of ‘ethics washing’, ‘ethics shopping’, or ‘ethics shirking’ that deflect regulation while preserving reputations (Floridi, 2019; Greene et al., 2019; Wagner, 2018), others view them as private ordering with public impact, akin to corporate rule-making in areas such as social media governance (Chinen, 2023; Klonick, 2018). Our analysis, through the lens of SIs and corporate strategies, extends these debates by uncovering how corporate actors strategically construct and deploy imaginaries such as ‘Responsible AI’, ‘Trustworthy AI’ and ‘AI for Good’. By identifying how companies hedge different imaginaries, build stakeholder networks, shape regulatory agendas and institutionalise these visions through the establishment of practices and technologies, we show that these imaginaries are not merely rhetorical. They concretely shape socio-material infrastructures and governance pathways, embedding corporate interests and power into the future governance of AI.

To be clear, mobilising imaginaries of ‘Responsible AI’ does not necessarily shape these pathways in responsible ways, and the fact that major technology companies chime in with the ‘AI for Good’ discourse does not secure future AI developments driven by public interests. Similar to how social media companies have discursively responded to public and policy pressure to take responsibility for misinformation and hate speech (Katzenbach, 2021), AI companies embrace and drive such imaginaries to communicate responsiveness. While companies might articulate ethics guidelines and public interest missions, these imaginaries manifest rather than challenge dominant ideologies and existing inequalities in digital society. This partially allows industry appropriation even of the normative and critical discourse on AI (Marres et al., 2024).

But the detailed investigation of these strategies and imaginaries does make a difference. Firstly, the corporate appropriation of terms such as ‘Responsible AI’ and ‘AI for Good’ calls for a more critical examination of their use in public discourse. Rather than uncritically adopting such idealised narratives, meaningful debates on AI governance need to interrogate the underlying logics, power dynamics and exclusionary practices embedded in these imaginaries. Future research needs to connect these strategic global narratives more closely with concrete controversies and tensions, such as the case of Timnit Gebru, when an internal Google team identified and criticised how actual social harms misaligned with expressed values (Phan et al., 2022). This could help to build robust governance frameworks that genuinely serve societal interests rather than perpetuate corporate dominance. Secondly, focusing on the socio-technical dimensions of these imaginaries reveals how normative discourses and institutional power intersect in corporate AI proposals. These proposals often foreclose alternative solutions that could address AI risks, favouring approaches reliant on technical infrastructure and corporate management. By foregrounding these dynamics, this study highlights how future-oriented articulations of AI norms are deeply intertwined with material infrastructures and practices that shape governance and social outcomes. We must not forget that we are currently building the infrastructure for the 21st century (Jobin and Katzenbach, 2023). We hope that this paper helps to emphasise that we do have choices, and that it is possible to identify the sites and actors making these decisions.
Finally, the concept of SIs, which interrogates ‘the complex topographies of power and morality as they intersect with the forces of science and technology’ (Jasanoff and Kim, 2015: 33), offers a valuable lens for understanding different stakeholders’ influence in AI governance. By mapping the development of AI imaginaries across jurisdictions and over time, future studies can contribute not only to identifying how powerful actors navigate public expectations and policymaking to institutionalise their visions of governance, but also to better understanding how we can bring more attention to marginal voices and their proposals. We need to open up our futures again, with or without AI.

Supplemental Material

Supplemental material, sj-docx-1-bds-10.1177_20539517251400727 for Strategising imaginaries: How corporate actors in China, Germany and the US shape AI governance by Yishu Mao, Vanessa Richter and Christian Katzenbach in Big Data & Society

Footnotes

Acknowledgements

The authors wish to thank members of the Lise Meitner research group “China in the Global System of Science” at the Max Planck Institute for the History of Science and the anonymous reviewers for providing helpful feedback on earlier versions of this paper, Fiona Bewley for her editorial assistance, and Wei Feng and Robin Ketterer for graphic design assistance. ‘DeepL Write’ and ‘ChatGPT 4o’ were used to reduce segments of the text for succinctness, but not in ways that violate the standards for rigorous scholarship.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Max Planck Gesellschaft's Lise Meitner Excellence Program and the German Research Foundation (DFG) under Grant 450649594 (‘Imaginaries of AI’).

Declaration of conflicting interest

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: One author is a member of the Meta/Facebook Expert Board ‘Actor and Behaviour Policies’ and receives an annual compensation of USD 6000 for this role. There has not been any connection between work on this paper and the work within the board.

Data availability

Data made available as supplemental materials.

Supplemental material

Supplemental material for this article is available online.

Notes

References
