Abstract

Can presidential misinformation affect political knowledge and policy views of the mass public, even when that misinformation is followed by a fact-check? We present results from two experiments, conducted online and over the telephone, in which respondents were presented with Trump misstatements on climate change. While Trump’s misstatements on their own reduced factual accuracy, corrections prompted the average subject to become more accurate. Republicans were not as affected by a correction as their Democratic counterparts, but their factual beliefs about climate change were never more affected by Trump than by the facts. In neither experiment did corrections affect policy preferences. Debunking treatments can improve factual accuracy even among co-partisans subjected to presidential misinformation. Yet an increase in climate-related factual accuracy does not sway climate-related attitudes. Fact-checks can limit the effects of presidential misinformation, but have no impact on the president’s capacity to shape policy preferences.

Introduction

Long-standing literature in political science makes clear that political elites can shape the factual beliefs and policy attitudes of the mass public, especially co-partisans (Lenz, 2013; Zaller, 1992). Yet whether this capacity extends to sitting presidents who disseminate misinformation and who can be fact-checked is an open question. On the one hand, it is possible that political elites can play a dominant role in shaping factual beliefs and policy attitudes even in the presence of fact-checks, with effects most acute among co-partisans. Indeed, some scholars have argued that presidents in particular can shape the political attitudes of the mass public, if only for a short amount of time (Canes-Wrone, 2005; Cavari, 2013; Ragsdale, 1984). On the other hand, it could be the case that fact-checks effectively reduce beliefs in falsehoods propagated by political elites, even when a respondent and the political elite come from the same political party. After all, the power of presidents to shape mass attitudes appears much more limited than popularly imagined (Edwards, 2003; Franco et al., Unpublished). In an age of fact-checking (Graves, 2016), can presidents use the bully pulpit to spread misinformation—and can they use that misinformation to bring Americans closer to their policy preferences?

Here, we present results of two experiments that aggressively measure the capacity of the current president to affect factual beliefs and policy attitudes, with and without the presence of fact-checking. In both experiments, subjects were exposed to misinformation advanced by President Trump about climate change, with some subjects subsequently exposed to fact-checks of this misinformation. In general, correcting misinformation about science can be difficult (Pluviano et al., 2017). As others have recently found, climate issues in particular may provoke people to reject factual information (Cook and Lewandowsky, 2016; Ma et al., 2019). Citizens, especially political independents, may conflate short-term weather with long-term climate trends (Hamilton and Stampone, 2013); political controversies around climate change also inhibit agreement with the facts about the topic (Bolsen and Druckman, 2018; Nisbet et al., 2015).

In addition to testing a difficult issue, we also incorporated subjects who, according to prior research (Guess et al., 2019), might prove especially susceptible to the lure of misinformation. While much of the recent scholarship in this area has utilized opt-in online subjects, we conducted both experiments simultaneously over an opt-in online platform (Amazon Mechanical Turk) and via a random-digit-dialer (RDD). Our use of the RDD afforded us older, more conservative subjects than we would have been able to reach just with Mechanical Turk alone—subjects who prior research (Guess et al., 2019) suggests often have difficulty distinguishing false from factual information. In addition, unlike the Mechanical Turk subjects, RDD subjects were not paid for their participation. The absence of compensation removes a possible accuracy incentive for the RDD respondents. Theoretically, even though we did not pay for accuracy, Mechanical Turk subjects could have believed their compensation would be affected by the accuracy of their responses. Compensation for accuracy can increase factual accuracy about polarizing political issues (Prior et al., 2015).

In addition, while previous research has investigated the effects of fact-checks targeting US presidential candidates (Wood and Porter, 2017) and senators (Benegal and Scruggs, 2018), little work has examined whether the capacity of US presidents to affect factual beliefs and policy attitudes can be mitigated by fact-checks. In sum, our experiments tested the effects of fact-checks about a particularly vexing policy topic, including on subjects who might generally resist fact-checks and who were not compensated for their participation, thereby removing a potential accuracy incentive. The fact-checks targeted the current president. Given these conditions, can fact-checks limit the ability of the sitting president to shape factual beliefs and policy attitudes?

Answers to this question have considerable implications for scholars and policymakers alike. For the last several years, the public has been awash in misinformation and “fake news,” and this paper contributes to the emerging study of this important topic (Lazer et al., 2018). Over the same period, media organizations have invested heavily in fact-checking (Graves, 2016), to uncertain ends (Chan et al., 2017; Nyhan et al., 2019). Some posit that subjects reject factual information, effectively “backfiring” (Ma et al., 2019

In both experiments, and across samples, corrections made the average subject more factually accurate. While Republicans were not as affected by a correction as other partisans, their factual beliefs about climate change were never more affected by Trump than by the facts. However, in neither experiment did factual corrections affect related policy attitudes. Corroborating prior work, factual accuracy can be enhanced without affecting related policy attitudes (Barnes et al., 2018). We found that this was the case even when the factual corrections target the president.

Design

We tested two Trump misstatements. Experiment 1 corrected a Trump misstatement about climate change science, while Experiment 2 corrected a Trump misstatement about climate change policy. In both experiments, subjects were randomly assigned to be exposed to a misstatement, or a misstatement paired with a correction, or neither. Afterwards, all were asked to state their level of agreement with Trump’s misstatement and to answer a question about their attitudes toward environmental regulatory policy. We gathered respondents’ party identification and other covariates prior to treatment. The complete text of both experiments is in the Online Appendix.
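The three-arm assignment described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' procedure: the condition labels, the `assign_conditions` helper, the equal assignment probabilities, and the sample size of 600 are all hypothetical.

```python
import random
from collections import Counter

# Hypothetical labels for the three arms described in the text.
CONDITIONS = ("items_only", "misstatement", "misstatement_plus_correction")


def assign_conditions(subject_ids, seed=0):
    """Independently assign each subject to one arm with equal probability.

    Simple (not blocked) randomization is an assumption made for illustration.
    """
    rng = random.Random(seed)  # fixed seed keeps the illustration reproducible
    return {sid: rng.choice(CONDITIONS) for sid in subject_ids}


# Hypothetical sample of 600 subjects, NOT the study's sample size.
assignments = assign_conditions(range(600), seed=42)
arm_counts = Counter(assignments.values())
print(arm_counts)
```

Under simple randomization the arm sizes vary slightly from run to run; a blocked design would instead fix them in advance.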

We administered each experiment simultaneously online and over the telephone. For the online survey experiments, we recruited subjects using Amazon’s Mechanical Turk service. Mechanical Turk is in wide use across the social sciences (Kuziemko et al., 2015), and experimental results observed on the platform have been found to closely approximate those observed elsewhere (Mullinix et al., 2015). Turk samples, however, often skew younger and more liberal (Huff and Tingley, 2015). To enlist more conservative and older voters in our experiments, we also relied on an RDD. The surveys were run by an external vendor using an automated calling system with pre-recorded questions, which respondents answered by pressing numbers on their telephones.

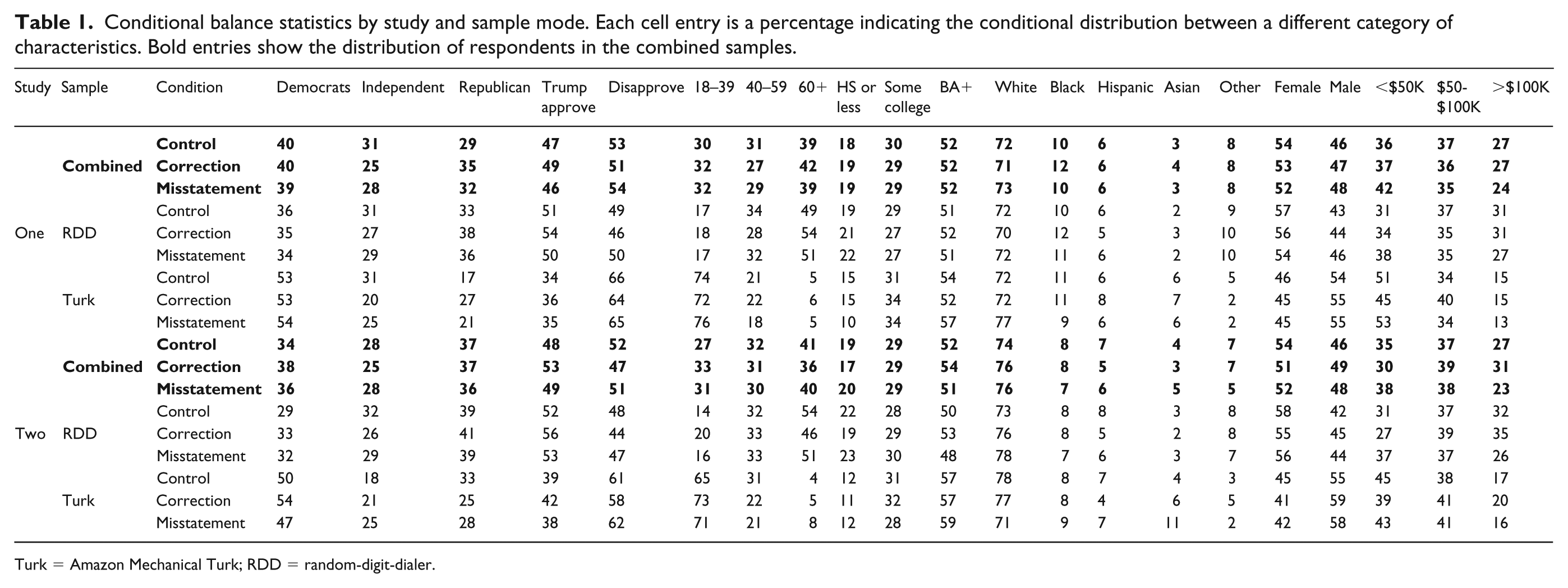

While previous work has looked at the correspondence between results gathered over Mechanical Turk and via RDD (Simons and Chabris, 2012), to the best of our knowledge this is the first paper in the study of factual corrections to take such an approach. We recruited subjects via RDD for two primary reasons. First, while Turk samples tend to be younger and more liberal, those who answer telephone surveys may be comparatively older and more conservative. Age and conservatism are both positively correlated with distribution of misinformation (Guess et al., 2019). As is evident from Table 1, combining multiple experimental modes substantially increased the number of older respondents.

Table 1. Conditional balance statistics by study and sample mode. Each cell entry is a percentage indicating the conditional distribution between a different category of characteristics. Bold entries show the distribution of respondents in the combined samples.

Turk = Amazon Mechanical Turk; RDD = random-digit-dialer.

Therefore, and in no small part because our fact-checks targeted President Trump, reaching out to subjects via RDD increased the probability that we would observe subjects backfiring or rejecting the facts.

Second, we did not compensate RDD subjects for their participation. While we did not tie Mechanical Turkers’ payment to their accuracy, we did pay them for participation, and we worried that some Turkers may have mistakenly believed we were paying them for accuracy. Connecting compensation to accuracy can increase the latter (Prior et al., 2015). Researchers who field Mechanical Turk studies can withhold payment after completion for any reason; of course, we did not do this, but if subjects had reason to believe we might do so, they might have worked harder to achieve accuracy. Because they were not compensated, our RDD subjects would not even have had this misperceived accuracy incentive.

In Experiment 1, we tested a false Trump claim about the scientific trends related to climate change. In response to a question about climate change, Trump said that “[climate change] wasn’t working out too well, because it was getting too cold all over the place. The ice caps were going to melt, they were going to be gone by now, but now they’re setting records . . . they’re at a record level.”

We randomly exposed some subjects to the claim alone and randomly exposed others to a correction of Trump’s claim, which pointed to National Aeronautics and Space Administration (NASA) data to inform subjects that ice caps are at record low levels. By pointing to NASA data, we were emulating the correction that popular fact-checking sources applied to this particular misstatement (Greenberg, 2018); we did this to increase external validity. Other subjects were randomly exposed to neither a misstatement nor a correction and were only asked our outcome questions. We then asked all subjects to rate their level of agreement with Trump’s misstatement.

In Experiment 2, we tested a false statement Trump made while announcing America’s withdrawal from the Paris Climate Accord. Trump claimed that the accord would prohibit America from building new coal plants while giving permission to China and India to build them. We did not have NASA data to fall back on; instead, we could only tell subjects assigned to a correction that Trump was wrong, and that the Paris Accord only set non-binding emissions targets. Again, our correction mirrored those provided by prominent fact-checkers (Kessler and Lee, 2017). Because source credibility can influence public opinion (Druckman, 2001), the absence of a source in the correction made Experiment 2 an especially demanding test of factual receptivity. In Experiment 2, like Experiment 1, some subjects were randomly exposed to both the false statement and the correction, others just to the false statement, and still others to the outcome items alone, with the last condition serving as a control. We once again asked all subjects to rate their level of agreement with Trump’s misstatement.

In both experiments, after measuring factual beliefs we asked a question meant to measure the effects on proximate policy attitudes. The question concerned attitudes toward environmental regulation and read as follows: “Some people think we need tougher government regulations on business to protect the environment. Others think that the current regulations are already too burdensome. Which of the following statements comes closest to your view?” Respondents could then choose between: “We need tougher regulations to protect the environment / Regulations are already too much of a burden / Not sure or don’t know.” While this policy attitude question does not perfectly map onto the underlying factual issue tested in either experiment, responses should reflect subjects’ views about Trump’s policy preferences, which have tended to oppose environmental regulation. By asking this question, we were testing whether presidential misstatements can compel subjects to align their preferences with the president disseminating the misinformation. If respondents supported regulations more after a fact-check, this would indicate that fact-checks can reduce the ability of presidents to use the bully pulpit to spread misinformation in service of their policy preferences. Conversely, if we were unable to detect effects of fact-checks on policy preferences, this would point to a limitation in the capacities of fact-checks vis-à-vis the presidential bully pulpit.

Results

The simultaneous administration of fact-checking experiments on both Mechanical Turk and RDD subjects constitutes one of the primary contributions of this paper. To that end, as we display in Table 1, the RDD samples were substantially older and friendlier toward Trump than the Mechanical Turk samples. For example, in Study 1, 54% of RDD subjects who heard a correction were over 60 years old. In that same study, only 6% of Mechanical Turk subjects assigned to a correction were over 60. In Study 2, 41% of RDD subjects who heard a correction were Republicans; in that study, only 25% of Mechanical Turk subjects who heard a correction were. Conditional differences by study and mode, and associated p-values, appear in the Online Appendix as Figure 4.

The experimental results are displayed in Figure 1. The accompanying regression results, which reflect ordinary least squares regressions with binary variables standing in for treatment conditions and omit the items-only (control) conditions, appear in Table 2. The figure and table aggregate results across modes. As the left two panels of Figure 1 show, on average, being corrected about both Trump misstatements led to gains in factual accuracy. For the first experiment, randomly being exposed to a correction led to a 0.29 increase in factual accuracy on a 5-point scale, compared to those who only saw the misstatement. In the second, along the same scale, corrections yielded a 0.35 increase in accuracy. Without a correction, Trump’s false statements caused a reduction in accuracy.
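The comparison between corrected subjects and those who saw only the misstatement can be illustrated with a minimal sketch. With a single binary regressor, the ordinary least squares slope equals the difference in group means, which is how a coefficient such as the 0.29 reported above can be read. The `ols_binary` helper and the accuracy scores below are hypothetical and are not data from the study; the published models may include additional terms.

```python
from statistics import mean


def ols_binary(outcomes, treated):
    """Closed-form OLS fit of outcome = intercept + slope * treated (treated is 0/1)."""
    d_bar = mean(treated)
    y_bar = mean(outcomes)
    cov = sum((d - d_bar) * (y - y_bar) for d, y in zip(treated, outcomes))
    var = sum((d - d_bar) ** 2 for d in treated)
    slope = cov / var
    intercept = y_bar - slope * d_bar
    return intercept, slope


# Hypothetical 5-point accuracy scores, NOT data from the study.
misstatement_only = [2, 3, 2, 4, 3]
with_correction = [3, 4, 3, 4, 3]

outcomes = misstatement_only + with_correction
treated = [0] * len(misstatement_only) + [1] * len(with_correction)

intercept, slope = ols_binary(outcomes, treated)
diff_in_means = mean(with_correction) - mean(misstatement_only)
print(round(intercept, 3), round(slope, 3), round(diff_in_means, 3))
```

In this toy example the intercept is the mean of the misstatement-only group and the slope is the gain associated with the correction.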

Figure 1. Experimental effects on factual understanding (in Panels 1 and 2) and environmental regulation preference (in Panel 3). Positive values indicate improved accuracy and increased support for environmental regulation. Significant differences (p < .05) are labeled. These results reflect the models in Table 2.

Table 2. The first and third models report the effect of corrections on factual accuracy, with larger values indicating increased accuracy. Models two and four report the effect of corrections on preferences for environmental regulation, with larger values indicating a preference for more regulation. Auxiliary quantities report contrasts by experimental condition, with Tukey-adjusted p-values for a family of three estimates. The first set of quantities focuses on the correction effects, while the second set focuses on the misinformation effects.

Regs.: regulations; Flex.: flexibility.

*p < .05; **p < .01; ***p < .001.

Yet whether subjects were compensated for their participation, as with the Turk experiments, or not, as with the RDD experiments, corrections increased mean factual accuracy. Even Republicans who were reached over the phone and were not compensated did not backfire or otherwise become less accurate after being exposed to a correction.

While corrections can prompt considerable gains in factual accuracy, policy attitudes prove more stubborn. As the right two panels of Figure 1 illustrate, in neither study did we observe corrections changing views about environmental regulation. While people were more factually accurate after a correction, their views on regulation were indistinguishable from those who did not receive a correction. In line with other work on misperceptions (Nyhan et al., 2019), in neither experiment did we see corrective information having persuasive power on policy views.

To what extent did partisanship affect receptivity to factual information? Figures 2 and 3 showcase the effects by party. For both figures, Column 1 isolates the misinformation effect, differencing those who answered the outcome items only from respondents who were also assigned to be exposed to a Trump misstatement. Column 2 shows the correction effect, differencing those who saw the outcome items only from subjects who were exposed to a Trump misstatement and a factual correction. Finally, Column 3 computes the difference in these two effects, inclusive of all partisans. The accompanying regression results, which used binary variables to account for treatment conditions and interacted those conditions with partisanship while omitting the items-only conditions, can be found in Table 3. Figures 2 and 3 and Table 3 aggregate responses across modes.
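One way to write down the three quantities described above is sketched here. The notation is an assumption made for illustration and is not taken from Table 3: M_i indicates assignment to the misstatement alone, C_i indicates assignment to the misstatement plus correction, P_i is a numeric partisanship score, and the items-only condition is read as the omitted reference category.

```latex
\begin{align}
Y_i &= \alpha + \beta_M M_i + \beta_C C_i + \gamma P_i
       + \delta_M (M_i \times P_i) + \delta_C (C_i \times P_i) + \varepsilon_i \\
\text{Misinformation effect at } P = p &: \quad \beta_M + \delta_M\, p \\
\text{Correction effect at } P = p &: \quad \beta_C + \delta_C\, p \\
\text{Difference at } P = p &: \quad (\beta_C - \beta_M) + (\delta_C - \delta_M)\, p
\end{align}
```

Under this reading, the first two contrasts correspond to Columns 1 and 2 of Figures 2 and 3, and the third to Column 3, each evaluated at the partisanship values labeled in the figures.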

Figure 2. Treatment effects conditioned on partisanship for Experiment 1. Ribbons depict 95% confidence intervals, and labels indicate the expected values of these differences for strong Democrats, Independents, and strong Republicans. These estimates summarize the regression models in Table 3.

Figure 3. Treatment effects conditioned on partisanship for Experiment 2. Ribbons depict 95% confidence intervals, and labels indicate the expected values of these differences for strong Democrats, Independents, and strong Republicans. These estimates summarize the regression models in Table 3.

Table 3. Regression models interacting experimental conditions with respondents’ partisanship. These models provide the estimates reported in Figures 2 and 3. The first and third models report the effect of corrections on factual accuracy: respectively, whether respondents report an accurate understanding of the polar ice caps’ size and whether they understand the stipulations of the Paris Climate Accord. Larger values indicate improved factual understanding. Models two and four report the effect of corrections on preferences for environmental regulation. Larger values indicate a preference for more environmental regulation. Auxiliary quantities report contrasts by experimental condition and respondent partisanship, with Tukey-adjusted p-values for a family of three estimates. The first set of quantities focuses on the correction effects, while the second set focuses on the misinformation effects.

Regs.: regulations; Flex.: flexibility.

*p < .05; **p < .01; ***p < .001.

In both experiments, Democrats exhibited the largest gain in accuracy. The difference between the correction and misinformation effects was always largest for Democrats (though not always significantly so). However, in Experiment 2, the strongest Republicans demonstrated responsiveness to factual interventions, immediately after exposure to misinformation propagated by their co-partisan president (though not significantly so). In line with prior work (Hornsey et al., 2016), partisanship informs beliefs about climate change, but does not determine them.

Discussion

Despite an aggressive attempt by industry to sow confusion in the mass public (Oreskes and Conway, 2010), a large majority of Americans acknowledge the scientific consensus on climate change (Hamilton et al., 2015). Trump’s presidency raises concerns that any gains in accurate climate knowledge may be short-lived. As president, he can generally shape the beliefs of his co-partisans (Lenz, 2013). Existing research suggests that political independents in particular may struggle to evince factually accurate beliefs about climate change (Hamilton and Stampone, 2013). In sum, Trump as a Republican president may be especially powerful at convincing Republicans to disbelieve climate change facts; and given the evidence that political independents lack firm factual beliefs about this issue, he may be able to sway them too.

Our experiments make clear that fact-checks largely undermine Trump’s ability to reduce accurate knowledge. Across samples, when a Trump misstatement was followed by a fact-check, no average member of a partisan subgroup was made less accurate by the fact-check. This was the case even in the RDD sample, which recruited subjects who previous research suggests may have less interest, compared to other members of the population, in factual information. If his misstatements are followed by corrections, Trump’s ability to instill false climate beliefs is quite limited. To be sure, on their own, his misstatements degrade factual accuracy. But on average, corrections markedly improved accuracy. Though the effects of corrections were more muted for Republicans, in neither experiment did Republicans reject the correction for the word of the president. The increasingly common journalistic practice of fact-checking can have meaningful effects on mass political knowledge. However, while fact-checks can curtail the president’s capacity to shape political knowledge, we are unable to conclude that they can affect his ability to move people’s policy preferences.

Prior research into the effectiveness of fact-checking has often depended on compensating subjects for participating (Nyhan et al., 2019; Wood and Porter, 2017), introducing a possible misperceived accuracy incentive. In the experiments presented here, even non-compensated subjects were, on average, made more accurate by a correction. This was true despite our experiments’ focus on scientific misinformation that can be notoriously difficult to correct. However, even when they are made more accurate by a correction, people on average do not change their attitudes. Previous research has concluded that public support for climate change mitigating policies may play an essential role in their enactment (Stokes and Warshaw, 2017). Our experiments found that disabusing the public of inaccurate information will likely not, on its own, increase support for such policies.

In neither experiment did we witness as much effect heterogeneity by partisanship as some of the existing literature might lead one to expect. While we cannot rule out the possibility that this finding is an idiosyncratic byproduct of our samples, some recent evidence suggests that treatment effect heterogeneity is rare in both convenience and non-convenience samples (Coppock et al., 2018). When it comes to fact-checking, available evidence shows that partisan responses are often not especially distinct (Wood and Porter, 2017). Theoretically, it may be the case that factual information is simply not important enough to inspire the kind of motivated reasoning that would lead to more marked partisan differences. Motivated reasoning requires cognitive effort, and is usually a function of how important the subject judges the object to be (Taber and Lodge, 2006). Counter-intuitive though it might sound, people may be made more accurate by fact-checks across partisan lines precisely because they do not regard facts as particularly important. We note, however, that this is only theoretical speculation.

We would be remiss not to underline several limitations of the present study. We do not know whether the increases in accuracy observed among the subjects endured, and if so, for how long. Indeed, especially for the older, more conservative voters we contacted via RDD, it may have been the case that corrections increased accuracy only briefly. Such subjects may have media diets that broadcast Trump’s misstatements without subsequently fact-checking those misstatements, as we did here. However, because they were not compensated, we do not believe such subjects’ responses can be attributed to misperceptions about compensation for accuracy or other kinds of demand effects; but it still may have been the case that accuracy increases were short-lived. More work is needed to precisely measure the longevity of accuracy effects caused by fact-checks.

Even more broadly, as president, Trump is deeply unusual for many reasons, not least of which is his seeming unconcern with trafficking in untruths. According to one media account, Trump made more than 8000 false or misleading claims within two years of assuming office (Kessler et al., 2019). Fact-checking a different president, one less prone to mistruths, might have led to different results. Our decision to fact-check President Trump increases the external validity of our findings, but may diminish their generalizability.

With those limitations in mind, it is helpful to circle back to fundamental debates about the power of political elites generally, and the president in particular, to affect the mass public. When false claims are followed by a fact-check, the president’s capacity to degrade mass factual knowledge is rather limited. However, the same fact-checks do not have discernible impact on people’s policy views, including among co-partisans, suggesting that the president’s ability to shape policy preferences is, at most, orthogonal to his ability to affect knowledge.

Supplemental Material

Supplemental material, Appendix for Can presidential misinformation on climate change be corrected? Evidence from Internet and phone experiments by Ethan Porter, Thomas J. Wood and Babak Bahador in Research & Politics

Footnotes

Acknowledgements

We thank Nina Kelsey, Leah Stokes, and Will Youmans for their comments. Mark McKibbin, Jonathan Riddick, and Amanda Menas provided excellent research assistance. All errors are our own.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Notes

Carnegie Corporation of New York Grant

The open access article processing charge (APC) for this article was waived due to a grant awarded to Research & Politics from Carnegie Corporation of New York under its ‘Bridging the Gap’ initiative.
