Abstract

Recent work has suggested that media reporting about the public’s policy preferences may be self-reinforcing, contributing to greater policy conformity. This article presents additional evidence in support of this theory and adds new detail about the conditionality of these effects. Results from two experiments are described in which respondents are presented with excerpted news stories containing varying polling information about six separate issues. Findings indicate that (a) exposure to such poll results can elicit differences in support for the issue by as much as 10–15 percentage points; (b) the magnitude of these effects varies systematically and inversely in relation to overall attitudinal intensity levels for each issue; and (c) the opinions of specific subgroups referenced in polls matter, producing larger or smaller effects depending on how salient the group is to receivers of the information. Taken together, these results underscore why news reporting about public attitudes deserves greater attention as an important factor in the policy process.

For as long as scientific surveys have been fielded and their results publicly reported on, they have been viewed suspiciously by citizens and policymakers alike for their potential to influence the public’s preferences (Igo, 2008). Efforts to test how poll results might move public opinion have largely focused on “bandwagon effects” during electoral match-ups or other campaign-related attitudes and behaviors (see Ansolabehere and Iyengar, 1994; Goidel and Shields, 1994; Mutz, 1995, 1997). Far less scholarship has examined the impact of polls on policy preferences, where people’s attitudes are less likely to be conditioned by partisan cues and where ambivalence and lack of information are widespread.

These effects, should they exist, have major implications for policymakers, journalists, and scholars who refer to opinion polls as evidence of the public’s ostensibly independent policy demands distinct from, for example, “the extreme claims of pressure groups” (Key 1942: 653) who seek to convince leaders of the popularity of their favored agendas. A past president of the American Association for Public Opinion Research, for example, has described polls as a “public utility” and a “continuing ballot box” with the potential to “make government more responsible to the electorate, and consequently, more democratic” (as quoted in Frankovic 2012: 132). To the extent survey results are shaped by exposure to previous polls or other information about public opinion, media reporting about these preferences may well be self-reinforcing, contributing to greater policy conformity and impeding democratic processes.

This article presents results from two experiments that add to the mounting evidence that media reports about polls have the capacity to do just that. The findings detailed below show not only that policy conformity effects occur and can be sizeable in scope, but also important elements about the conditionality of how and when such effects occur.

Previous studies have found isolated cases of policy-related bandwagon effects for “emergent” issues (Lang and Lang, 1984) such as policies surrounding the AIDS epidemic during the 1980s (Dearing, 1989), but also highly contentious policy debates such as abortion (Marsh, 1985) or Quebec sovereignty (Nadeau, Cloutier and Guay, 1993). One recent experiment on a diverse nationally representative sample by Rothschild and Malhotra (2014) indicated that polls may shift people’s stated preferences but that effects vary considerably from issue to issue. Two specific hypotheses flow from these past studies:

Less narrowly focused on polls, a separate literature has also developed in recent years concerning the influence of group norms and social identity on shaping individual attitudes (e.g., Mason, 2018; Suhay, 2015), which suggests an important causal explanation for why poll results might affect policy views. This work differs from past research on social influences on public opinion, such as Huckfeldt and Sprague (1995), which primarily focuses on the diffusion of information through discussion networks. Rather, this recent work revives psychological explanations for social and partisan conformity, drawing on social identity theory (Turner, 1991) and the notion that people regularly engage in what is called “social reality testing” (Festinger, 1950), surveilling the opinions of people around them for evidence of the “subjective validity” of beliefs that otherwise cannot be physically tested. Individuals build confidence in their opinions by comparing them with others’, relying in part on the received wisdom of in-group and out-group views as evidence of “correct” and “incorrect” thinking. This social reality testing makes staking out a minority position at odds from the larger group cognitively and emotionally burdensome. This psychological framework leads to a third hypothesis, which is tested in the second of the two studies presented below:

The two experiments described here are innovative for several reasons. First, this research goes beyond past studies by examining policy-related bandwagon effects across a larger number of issues of differing salience and intensity-level to respondents. This variation enables a more thorough examination of the relationship between attitudinal intensity and the effects of exposure to polls. Second, unlike past work examining the effects of polls, Study 2 specifically examines the heterogeneous impact of poll results pertaining to specific subgroups. That is, a moderately large sample is used to examine how different types of polling information may impact different people differently. Third, the experiments themselves make use of a novel, more naturalistic design that better captures the presentation of polling information in daily life. By embedding polling information in excerpts from simulated news stories about policy debates, the experiments effectively recreate the social reality testing dynamic, inviting respondents to weigh opposing arguments against each other and make use of polling information as evidence for or against the “subjective validity” of either side’s position.

Experimental design

To test these hypotheses, two survey experiments were conducted on January 9–14, 2015 (Study 1) and August 5–12, 2015 (Study 2). The first of these experiments examined the effects of polling information about the policy preferences of the general public, while the second experiment examined the influence of relevant subgroup preferences revealed through polls. This section details the experimental designs of both studies.

Data

Both survey samples were recruited from Amazon’s Mechanical Turk (MTurk) platform. Recruits were screened according to their MTurk “reputation” (Peer, Vosgerau and Acquisti, 2014), and were required to be at least 18 years old and U.S. citizens using computers located in the United States. The resulting samples were comparable to the larger population overall, albeit more highly educated, more liberal, and less ethnically diverse (Table 1). Respondents also skewed younger, with half between 26 and 43, but those older than 55 made up 10% of the samples and ranged as high as 82 and 70 in Study 1 and Study 2, respectively. Each respondent who completed the survey earned US$0.50, yielding samples of 1261 participants (Study 1) and 775 (Study 2).

Summary of sampled respondents in each study.

Note: Population estimates for 2012 from the American National Election Studies (ANES).

Concerns about data quality on MTurk have declined over time as the site has improved screening methods and multiple studies have validated its samples as a source for survey participants (e.g., Berinsky, Huber and Lenz, 2012; Clifford, Jewell and Waggoner, 2015; Peer, Vosgerau and Acquisti, 2014). While non-probability samples may be problematic for some survey research tasks, these concerns are reduced when using surveys for experiments with random assignment.

Treatments

Both experiments involved exposing study participants to information about public opinion, but unlike most past studies (Ansolabehere and Iyengar (1994) is an exception), polling information was embedded in excerpts from simulated news reports about various policy debates. These excerpts were accompanied by graphics adapted from a prominent U.S. cable news network. A sample treatment stimulus is provided in Figure 1; the remainder are provided in Online Appendix E of the supplementary appendices. Presenting polling information in this way more naturalistically approximates how people typically encounter it, reducing possible demand effects associated with presenting poll results in the question prompt itself. This approach also ensured that all respondents, including those in control conditions, were presented with a shared baseline of information about the policy debates surrounding each issue.

A sample of the experimental treatments in the two studies.

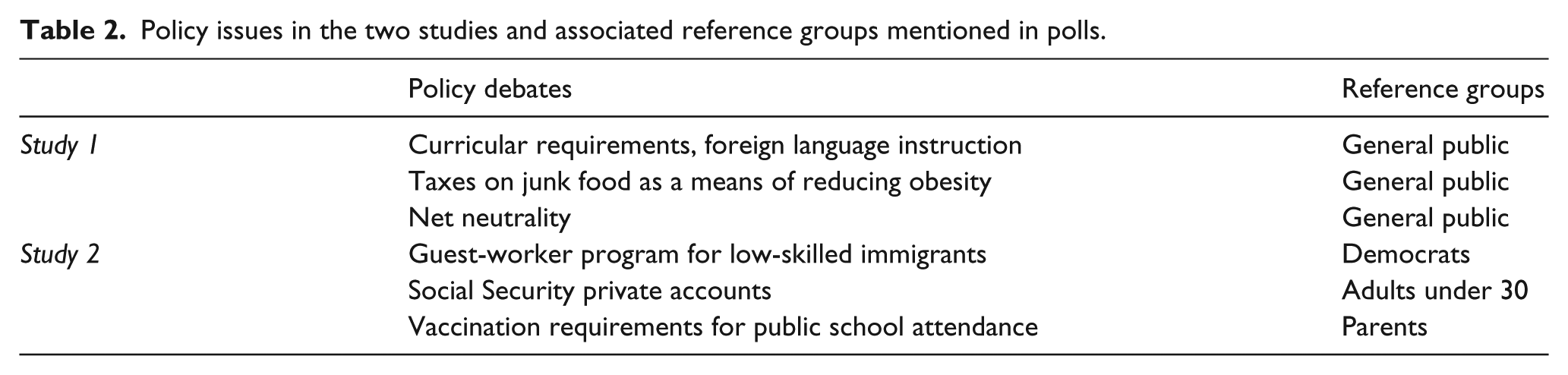

The news excerpts used across the two experiments separately described six policy debates (summarized in Table 2). Issues were selected to achieve variation in salience, but highly polarized issues such as Obamacare, where partisanship might otherwise subsume all other effects, were avoided. In both studies, treated groups received information about public opinion on the issue. In Study 1, overall public opinion among registered voters was referenced, whereas in Study 2, the preferences of issue-specific reference groups were provided, such as the views of parents concerning school vaccination requirements, the views of Democrats on a guest-worker program for immigrants, or the views of adults under 30 on social security privatization.

Policy issues in the two studies and associated reference groups mentioned in polls.

In each experiment, respondents received information about each issue in randomized order, and for each issue they were randomly assigned into treatment or control groups. Study 1 included: (1) control groups in which respondents received news excerpts about the issue debates but no polling information, (2) treatment groups in which news excerpts also included poll results showing the public closely divided on the issue (ranging from 49–51% favoring it), and (3) treatment groups in which news excerpts included poll results showing support for the issue was far more lopsided (with 65–67% in favor). Study 2 included a single control group and one treatment condition in which respondents were told the relevant subgroup was overwhelmingly opposed to the issue. These groups are summarized in Table 3. Actual polls at the time on the issues in Study 1 showed the public closely divided whereas the information provided in Study 2 intentionally contrasted with reported poll results about reference groups’ opinions.

Description of treatment and control groups in the two studies.
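
The assignment procedure described above can be sketched roughly as follows. This is a minimal illustration, not the author's survey code: it assumes each condition is equally likely (the article does not state assignment probabilities), and the condition labels and the helper name `assign_respondent` are hypothetical.

```python
import random

# Conditions as described in the text; the labels themselves are illustrative.
STUDY1_CONDITIONS = ["control", "poll_closely_divided", "poll_lopsided_support"]
STUDY2_CONDITIONS = ["control", "subgroup_opposed"]

STUDY1_ISSUES = ["foreign_language_requirements", "junk_food_tax", "net_neutrality"]
STUDY2_ISSUES = ["guest_worker_program", "social_security_reform", "school_vaccination"]


def assign_respondent(issues, conditions, rng):
    """Return one respondent's plan: issues in random order, each paired
    with an independently drawn condition (equal odds are an assumption)."""
    order = list(issues)
    rng.shuffle(order)  # randomized presentation order
    return [(issue, rng.choice(conditions)) for issue in order]


rng = random.Random(2015)  # fixed seed only so the sketch is reproducible
plan_study1 = assign_respondent(STUDY1_ISSUES, STUDY1_CONDITIONS, rng)
plan_study2 = assign_respondent(STUDY2_ISSUES, STUDY2_CONDITIONS, rng)
```

Because the condition is drawn separately for each issue, the same respondent can serve as a control for one issue and as a treated case for another.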

Dependent variable

The dependent variable used in both studies was a composite measure of respondent support or opposition to the policies derived using responses to the question, “Please tell us whether you FAVOR or OPPOSE the proposal?” crossed with three additional questions meant to gauge the attitudinal intensity of respondent preferences (as in Mutz, 1998). The three intensity questions were: “How much do you personally CARE about [the issue]?”; “How CERTAIN are you of your feelings on [the issue]?”; and “About HOW MUCH TIME have you spent thinking about [the issue]?” Such attitudinal intensity questions constitute an important, if underutilized, dimension of public attitudes (see Krosnick and Schuman, 1988; Glynn and Park, 1997). Distributions of support using these composite measures are included in Online Appendix B.

Note that respondents were not asked in a pre-test to state their prior preferences for each policy before exposure to treatment. Doing so would not only risk consistency bias but would also make little practical or theoretical sense here, as respondents would be asked to state a preference for a policy without a clear referent to the policy debate itself. That is, in order to differentiate effects of exposure to polling data from effects of exposure to media reports, all respondents were provided with the same basic excerpted news report and preferences were only measured post-treatment (or exposure to the control condition stimulus).

Estimation strategy

Several empirical strategies were employed to evaluate the three hypotheses outlined above. To test H1, or whether polls about the public’s preferences lead to greater policy issue conformity, difference-in-means tests were used to compare the average levels of support or opposition to each issue in the control and treatment groups. To assess H2, which predicted an inverse relationship between the magnitude of conformity effects and attitudinal intensity levels, average treatment effects (ATEs) were estimated using the dichotomous measure of support or opposition to each issue, calculating the difference between support for each policy in control and treatment groups. These ATEs were then examined in relation to mean attitudinal intensity for each issue, assessing whether a positive or negative linear trend appeared across the six issues. As only six issues were tested, these results are suggestive rather than dispositive.
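
To make the ATE calculation on the dichotomous measure concrete, the sketch below computes a difference in proportions with a 95% confidence interval and a two-sided p-value. It uses a two-proportion z-test, which is one common way to run a difference-in-means test on a binary outcome; whether the article used this exact test is an assumption. The function name `ate_dichotomous` and the counts in the usage line are invented for illustration and are not the study data.

```python
import math


def ate_dichotomous(support_treat, n_treat, support_ctrl, n_ctrl):
    """Difference in the share supporting a policy (treatment minus control),
    with a normal-approximation 95% CI and a two-sided z-test p-value."""
    p_t = support_treat / n_treat
    p_c = support_ctrl / n_ctrl
    ate = p_t - p_c

    # Unpooled standard error, used for the confidence interval.
    se = math.sqrt(p_t * (1 - p_t) / n_treat + p_c * (1 - p_c) / n_ctrl)
    ci = (ate - 1.96 * se, ate + 1.96 * se)

    # Pooled standard error under the null of no difference, used for the test.
    p_pool = (support_treat + support_ctrl) / (n_treat + n_ctrl)
    se_pool = math.sqrt(p_pool * (1 - p_pool) * (1 / n_treat + 1 / n_ctrl))
    z = ate / se_pool
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return ate, ci, p_value


# Hypothetical counts: 240 of 400 treated and 200 of 400 control respondents in favor.
ate, ci, p_value = ate_dichotomous(240, 400, 200, 400)
```

Multiplying `ate` by 100 gives the effect in percentage points, the unit used when effects are reported in the Results section.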

As H3 involved heterogeneous treatment effects, respondents were asked several additional demographic and attitudinal questions relevant to the treatment conditions. Closeness was measured in multiple ways. Using language adopted from the American National Election Studies (ANES), self-reported feelings of closeness were asked directly about relevant groups including “Democrats” (used in the analysis of support for the guest-worker program) and “parents of young children” (used in the analysis of the school vaccination issue). Respondents’ age was used to analyze support for social security reform. In addition, respondents were asked their party identification (using the standard 7-point scale adapted from the ANES), gender, race, education, and overall interest in politics. The effects of these potential moderating factors were then tested in linear regression models, interacting the moderating variables with indicators for treatment conditions.
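
The logic of a treatment-by-moderator interaction can be illustrated with a deliberately simplified case. The article's models use the moderators described above together with control variables; the sketch below instead assumes a binary moderator ("close" versus "not close" to the reference group) and no controls. In that saturated two-by-two case, the interaction coefficient from a linear regression equals the difference between the two subgroup treatment effects, so it can be computed from cell means alone. The function name and the toy rows are hypothetical.

```python
from statistics import mean


def interaction_effect(rows):
    """rows: iterable of (treated, close, support) tuples.
    Returns the treatment effect among 'not close' respondents, the effect
    among 'close' respondents, and their difference (the interaction)."""
    cells = {(t, c): [] for t in (False, True) for c in (False, True)}
    for treated, close, support in rows:
        cells[(treated, close)].append(support)
    cell_mean = {key: mean(values) for key, values in cells.items()}

    effect_not_close = cell_mean[(True, False)] - cell_mean[(False, False)]
    effect_close = cell_mean[(True, True)] - cell_mean[(False, True)]
    return effect_not_close, effect_close, effect_close - effect_not_close


# Toy data (not study data): support coded 1.0 = favor, 0.0 = oppose.
toy_rows = [
    (False, False, 1.0), (False, False, 0.0),
    (True, False, 0.0), (True, False, 1.0),
    (False, True, 1.0), (False, True, 1.0),
    (True, True, 0.0), (True, True, 1.0),
]
effect_not_close, effect_close, interaction = interaction_effect(toy_rows)
```

A negative interaction in a setup like this would mean that a poll showing the reference group opposed lowers support more among respondents who feel close to that group, which is the pattern H3 anticipates.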

Results

Because the two studies are similar in design, results are presented below in order of the three hypotheses.

Both studies offer considerable empirical support for H1, but the existence of effects and their magnitude (as explored in H2) varied from issue to issue. Average levels of support among treatment and control groups are plotted in Figure 2. In Study 1, difference-in-means tests showed no significant divergence in opinion between those in control groups and those receiving polling information suggesting the public was evenly divided on the issues. Significant differences were observed, however, for the second treatment group, in which respondents received polling information suggesting high levels of support for the issues: dichotomous support was 14.2 percentage points higher for foreign language requirements (p < .001) and 9.3 percentage points higher for junk food taxes (p < .01). The net neutrality issue saw no significant effects, but approximately 70% of respondents were already in favor of the issue in the control group, so there may have been ceiling effects for this issue that limited conformity.

Differing levels of support for the six policy issues presented across the two studies broken down by treatment and control groups. Plots include bands reflecting 95% confidence intervals. Higher positive numbers on the y-axis denote greater support for the issues. Significant differences between treatment and control groups denoted by * p < .10; ** p < .05; *** p < .01.

Significant differences between the treatment and control groups were observed across all three issues tested in Study 2 where treated respondents received information suggesting issue-specific relevant subgroups were largely opposed to the issues. The guest-worker program saw the largest decreases in dichotomous support, 13.3 percentage points (p < .001) whereas social security reform (8.3 percentage points, p < .05) and school vaccination requirements (5.4 percentage points, p < .10) saw more modest declines.

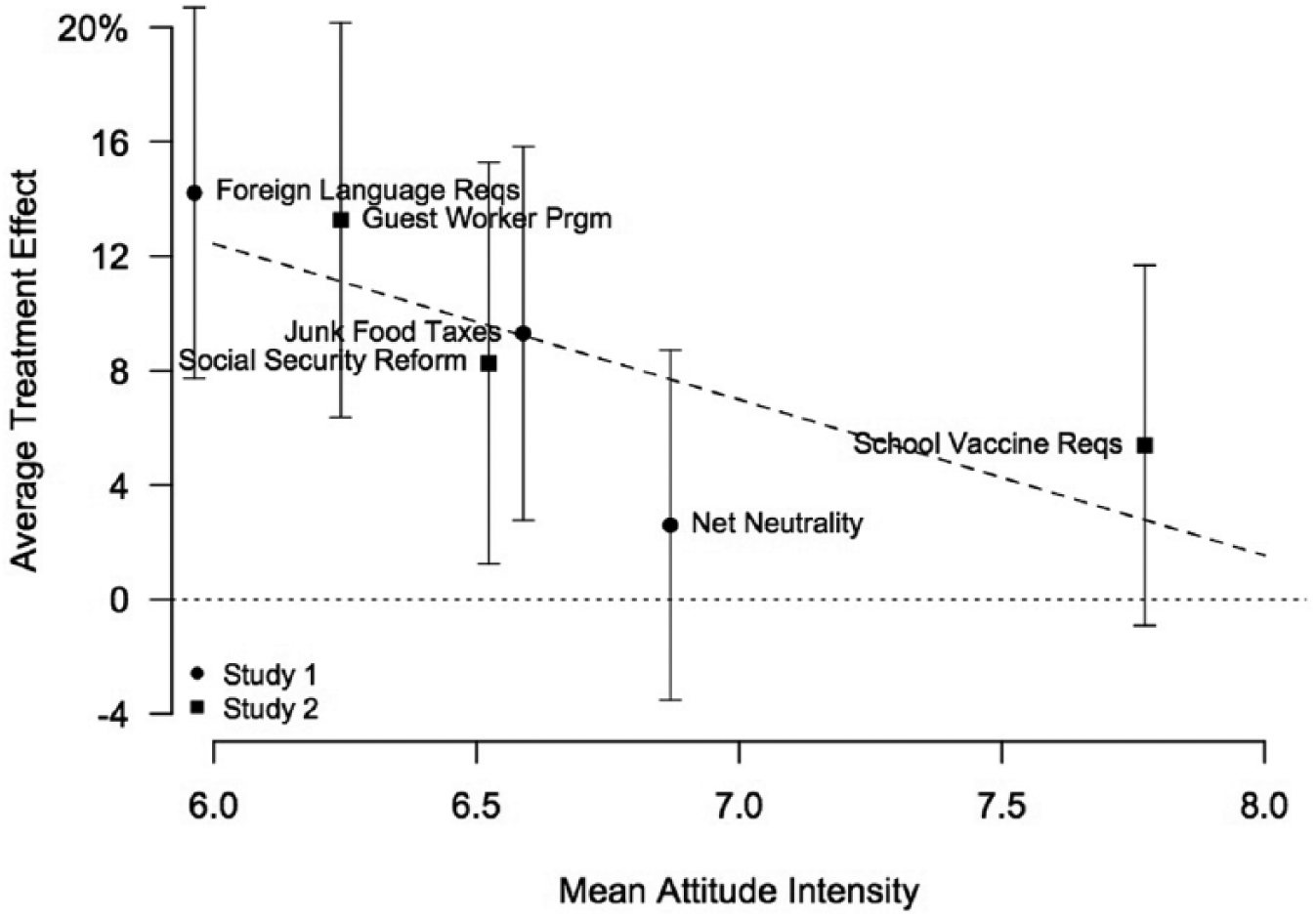

To examine the relationship between issue intensity and the magnitude of conformity effects, differences in the dichotomous measures of support between treatment and control groups are plotted in Figure 3. For comparability across the two studies, only treatment conditions in Study 1 where respondents were told the public was highly in favor of the issue are included and the signs are reversed in Study 2 since the experimental stimuli in these issues produced conformity in the opposite direction.

Relationship between intensity of attitudes and observed average treatment effects (ATEs) across the two studies. Plots include bands reflecting 95% confidence intervals. For interpretability, only the second treatment groups are shown from Study 1 where respondents were told the public is highly in favor of each policy, and in Study 2, where respondents were told that relevant reference groups were opposed to the issues, the sign on the ATEs is reversed for better comparability. The slope of the fitted line is −5.4.

A trend line across the six issues lends support for H2, indicating that on average, conformity effects are approximately five percentage points smaller for each one-point increase in average attitudinal intensity about the issue. This inverse relationship is similar to the one theorized by Rothschild and Malhotra (2014), who likewise found high variability in the effect of polling information depending on the issue being tested.

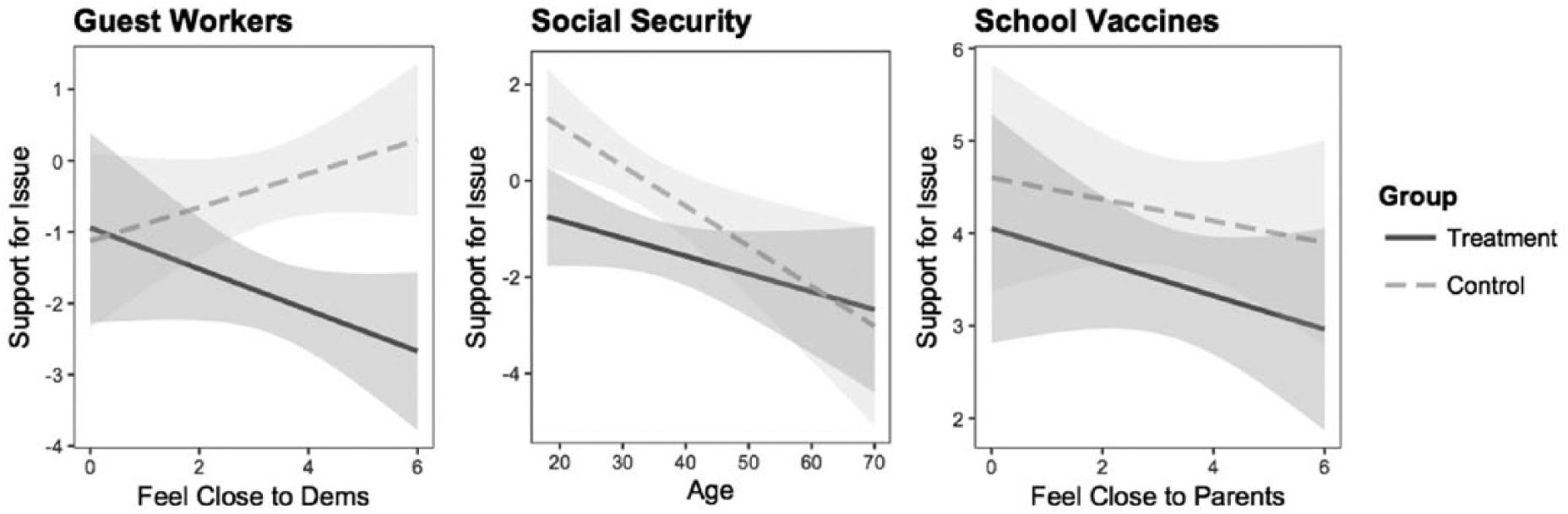
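
The trend line in Figure 3 is an ordinary least-squares fit of issue-level ATEs on mean attitudinal intensity. The sketch below shows how such a slope is computed and how the reported slope of −5.4 translates into a predicted difference in effect size. The three (intensity, ATE) points are placeholders chosen to keep the arithmetic easy to follow; they are not the six issue-level estimates from the article, which are not listed in the text.

```python
def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for a single predictor."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


# Placeholder points: mean intensity (x) and ATE in percentage points (y).
placeholder_intensity = [1.0, 2.0, 3.0]
placeholder_ate = [12.0, 7.0, 2.0]
slope, intercept = fit_line(placeholder_intensity, placeholder_ate)

# Interpreting the slope reported in the Figure 3 caption.
REPORTED_SLOPE = -5.4          # percentage points per one-point rise in intensity
intensity_gap = 0.5            # hypothetical gap between two issues
predicted_change = REPORTED_SLOPE * intensity_gap
```

With only six issues behind the actual fit, any slope of this kind is best read as a description of the pattern rather than a precise estimate.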

The three issues tested in Study 2 provide an opportunity to examine the conditionality of conformity effects. Recall in this study, treated groups received information about issue support among subgroups relevant to the policy debate rather than the public overall: Democrats with respect to levels of support for the guest-worker program, adults under 30 with respect to social security reform, and parents of school-aged children with respect to vaccinations in schools. Corresponding moderating variables were then tested to examine the conditionality of the influence of the polls: feelings of closeness to Democrats, respondents’ age, and feelings of closeness to parents. For two of the three issues, the moderating variables were significant when modeled in interactions with indicators for treatment (see Online Appendix D for model output). In Figure 4, vignettes are plotted showing how these variables moderate treatment effects, holding all other variables at their mean values.

Comparing predicted levels of support for Study 2 issues between treatment and control groups when interacted with issue-specific moderating variables. All other variables held at their mean values. Graphs depict confidence intervals at the 90% level. Models include control variables for age, education, political interest, and party identification. Full output reported in Tables D-1, D-2, and D-3 in the Online Appendices.

Support for the guest-worker program was lower among all treated respondents (told Democrats opposed the program), but support was significantly lower among those who felt closest to Democrats. The same dynamic was observed for the social security issue. Treatment effects were concentrated primarily among younger adults who were also the reference group in the experimental stimuli. Older adults, more opposed to the issue to begin with, appeared unmoved by the polling information. Feelings of closeness toward parents did not significantly influence treatment effects for the school vaccination requirements, but treatment effects for this issue were only at borderline levels of significance to begin with. Nonetheless, the difference between treatment and control groups among those who felt closest to parents as a group was slightly larger than among those who felt most distant from the reference group.

Discussion

This article detailed results from two studies demonstrating clear effects due to exposure to public opinion polls about the preferences of other citizens. Across five of the six issues tested, stated policy preferences differed significantly when comparing individuals exposed to treatment stimuli and those in control groups. The second analysis above adds to earlier findings from Rothschild and Malhotra (2014), which demonstrated varying effect sizes across issues. These studies show this variation to be systematic: that is, across the six issues, the magnitude of effects was inversely related to attitudinal intensity levels. Issues to which citizens in the aggregate have paid less attention, care less strongly about, and feel less certain about, such as requirements for foreign language instruction in public schools, were those associated with the largest shifts in response to social influence (as much as 14–15 percentage points), but even matters more central to current U.S. politics, including social security and immigration-related reforms, saw opinion shifts of 8–12 percentage points, arguably substantive enough to change perceptions of overall public opinion on the issues.

Lastly, as indicated by the second study above, policy preference conformity may be in some respects conditional on the relationship individuals have with the reference groups featured in the surveys themselves. Respondents for whom the subgroups described in polls were more salient exhibited larger effects across all three issues. That is, when polls describe the views of “people like you,” you are more likely to be influenced by them. These findings and others like them (Kertzer and Zeitzoff, 2017; Toff and Suhay, 2018) add to a growing consensus in the field of political science that social influences play an important role in shaping the formation of individual political attitudes and preferences even as the nature of those processes remains shrouded in a great deal of uncertainty.

The studies detailed in this article bring greater clarity to debates in the literature about the influence of poll results in the news. By improving on earlier efforts to measure bandwagon effects by using a more subtle and naturalistic form of experimental manipulation and offering a clearer framework for thinking about why and how social information may be salient to people, these experiments indicate a path ahead for future research regarding such social influence processes and the dynamics of social reality testing. Furthermore, as the analysis in H3 indicates, effects of polling information on news audiences are unlikely to be uniform across all segments of the public. Future studies ought to consider additional moderating factors that may further limit or exacerbate people’s susceptibility to these kinds of effects including, for example, the role of political engagement and knowledge, or individual-level differences such as self-monitoring personality characteristics, or trust in the science of survey research or media messages more generally. How quickly these effects decay or persist over time—or whether they represent enduring changes in opinion or momentary changes in “top of the head” responses to survey-takers’ questions—remains an additional and vital question for future scholarship, although Sonck and Loosveldt (2010) did find that shifts in perceptions of public opinion may last weeks after initial exposure. Disentangling these long-term effects requires studying the influence of polls over a longer time horizon.

Nonetheless, if poll results can tip the scales in a self-prophesying manner (and it appears in some cases they can), these findings have critical implications for both survey researchers and journalists who use polls to assess public attitudes. They suggest that in some instances widespread reporting on polls can produce the very results it describes, a finding that may help to explain “momentum” during party primaries (Bartels, 1988) or information cascades during citizen deliberation over policy (Hamlett and Cobb, 2006). To be sure, extrapolating from analyses of experimental effects to the “real world” presumes a degree of exposure to polls that surely differs from the way most people consume information in their day-to-day lives (Barabas and Jerit, 2010). Only select individuals are likely to be exposed to poll results in this way. On the other hand, to the extent that polling information serves to alter the perceptions of journalists who cover politics, it is not difficult to envision further feedback effects beyond those stemming from exposure to a single poll: opinion spirals in which polls influence reporters’ framing of the opinion climate, which in turn may further alter support among the public.

Supplemental Material

Supplemental material, Effects_of_Polls_-_Appendix for Exploring the effects of polls on public opinion: How and when media reports of policy preferences can become self-fulfilling prophesies by Benjamin Toff in Research & Politics

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: the research was supported by summer initiative funding from the Department of Political Science at the University of Wisconsin-Madison.

Notes

Carnegie Corporation of New York Grant

This publication was made possible (in part) by a grant from the Carnegie Corporation of New York. The statements made and views expressed are solely the responsibility of the author.
