Abstract

Deepfakes are perceived as a powerful form of disinformation. Although many studies have focused on detecting deepfakes, few have measured their effects on political attitudes, and none have studied microtargeting techniques as an amplifier. We argue that microtargeting techniques can amplify the effects of deepfakes, by enabling malicious political actors to tailor deepfakes to susceptibilities of the receiver. In this study, we have constructed a political deepfake (video and audio), and study its effects on political attitudes in an online experiment (

So-called “deepfakes,” many argue, may be a new form of disinformation that is especially challenging to society. These manipulated videos are the result of machine learning, and can make it seem as if a person says or does something, while in reality, they have never said or done anything of the sort. A so-called neural network is trained on many real examples of speech and moving images, and can then be used to create a deepfake and deceive citizens. Barack Obama, for example, was once heard and seen calling Donald Trump “a total and complete dipshit” in an online video. In reality, this never occurred. A deepfake made by Jordan Peele made it seem that way. Disinformation conveyed via deepfakes could pose a challenge during elections, since, to the untrained eye, a deepfake may be difficult to distinguish from a real video. Any political actor could try to discredit an opponent or try to incite some political scandal with the goal of furthering their own agenda. After being exposed to a deepfake, citizens may, for instance, change their attitudes toward the politician depicted in the deepfake, or toward the politician’s party. As a result, citizens then cast their votes on the basis of false information, and potentially in line with the goals of the political actor behind the deepfake. This can raise questions about the legitimacy of democratic institutions (Bennett and Livingston 2018), the quality of public debate (Xia et al. 2019), the power of citizens (Flynn et al. 2017), and the power of malicious political actors (Bradshaw and Howard 2018).

Whether people indeed “fall for” deepfakes is unclear and understudied, but not unimaginable. Deepfakes consist of largely real images, and producers only manipulate relatively small elements of the video (e.g., facial expressions, voice), which contributes to the realism of the deepfake. In this sense, a deepfake is qualitatively different from a photoshopped image: a deepfake deceives not just the eyes, but the ears as well.

There are several reasons to believe deepfake disinformation can have a detrimental societal impact, which is why studying effects of deepfake disinformation is worth the scientific scrutiny. For one, deepfakes can be realistic disinformation. Automatically generated images and sounds can be as convincing as real sounds and images. An ordinary citizen may struggle to distinguish fact from fiction. Second, deepfakes can be used to amplify existing mis-, dis- or malinformation. A producer could create a deepfake where the pope is seen and heard to endorse Donald Trump, or a public health official ostensibly seen and heard confirming that vaccinations indeed cause autism. Third, deepfakes can also be a form of efficient disinformation. If a political actor has enough training data, the actor can make many different, realistic deepfakes of the same person in a short period of time. In combination with political microtargeting (PMT) techniques, deepfakes can be especially impactful. We are not there yet. Deepfakes do not yet flood the public sphere, let alone

In this paper, we argue that it is not only the technical possibility of creating deepfakes that is troubling, but also the potential consequences of deploying deepfakes

PMT is a relatively new technique used by political campaigns worldwide (Baldwin-Philippi 2019; Dobber et al. 2017; Dommett 2019; Dommett et al. 2020; Kreiss 2016; Matsumoto 2018; Moura and Michelson 2017). PMT is “a type of personalized communication that involves collecting information about people, and using that information to show them targeted political advertisements” (Borgesius et al. 2018: 82). While tailored messages are often seen as textual messages or traditional campaigning material, we can easily imagine how a deepfake can be used to try and influence particular subgroups of the electorate.

While there is substantial literature on the technical side of deepfakes, such as detection methods (e.g., Afchar et al. 2018; Agarwal and Varshney 2019; Li and Lyu 2018; Yang et al. 2019), as of yet, deepfakes are only marginally studied in the political communication field. Vaccari and Chadwick (2020) used an existing deepfake to show that deepfakes poison the public debate by confusing people about what is real and what is not. To the best of our knowledge, effects of self-produced (microtargeted) deepfakes on people’s political attitudes have never been studied. More knowledge about these effects is needed to understand and counter the threats that deepfake disinformation poses to our democratic societies, for instance, to better inform strategies to combat deepfakes. For this study, we have produced a political deepfake ourselves (video and audio). Using an online experiment, we aim to study the effects of (microtargeted) deepfakes by answering the following key question: To what extent does a (microtargeted) deepfake meant to discredit a politician affect citizens’ attitudes toward that politician and his party?

Theoretical Background

Deepfakes as a Form of Disinformation

False information generally can be placed in one of three categories: disinformation, misinformation, or malinformation (Wardle and Derakhshan 2017). Deepfakes fit best in the disinformation category, which encompasses “manipulated content,” “imposter content,” and “fabricated content” (Wardle and Derakhshan 2017: 5). Disinformation can be seen as “intentional behavior that purposively misleads” (Chadwick et al. 2018: 4257). In contrast, misinformation differs from disinformation in the sense that the former does not imply the intention to deceive (Jack 2018), and malinformation is different from disinformation in that malinformation requires a (slim) factual basis.

Often, disinformation is meant to achieve some political goal. Actors behind disinformation can be domestic actors as well as foreign political actors. Legitimate domestic political actors can use illegitimate means such as disinformation to further their goals.

Foreign actors may try to intervene in domestic debates by injecting lies and conflict in the public sphere (Asmolov 2018; Bradshaw and Howard 2018; Lukito 2019; Xia et al. 2019). Foreign actors may even try to confuse citizens to a point where they become cynical and suspicious of legitimate information and legitimate institutions (Arendt 1951; see also Vaccari and Chadwick 2020). Therefore, disinformation is increasingly regarded as a matter of (inter)national security (see, for example, Atlantic Council 2019; European Commission 2019; Metodieva 2018).

Literature about disinformation is growing rapidly, but there is still a “substantive research gap” about its effects (Tucker et al. 2018: 57). Existing literature paints a nuanced picture. Guess et al. (2018) found that the effects of false news articles on citizens’ political attitudes are likely dampened because only a specific small group of citizens (the people with the most conservative online media diets) is exposed to misinformation. Similarly, Bail et al. (2020: 1) found no evidence for the idea that Russian trolls affected Americans’ political attitudes: those engaging with the Russian trolls “were already highly polarized.” These studies occurred in a very specific context (the U.S. context), focused on specific modes of disinformation (false news stories and Russian trolling on Twitter), and in specific time periods (October 7 to November 14, 2016; and October and November, 2017).

These studies offer insights into the limits of online disinformation campaigns. We argue that deepfake disinformation could be more impactful than Twitter trolling and false news stories. Vaccari and Chadwick (2020) found in a U.K.-based study that deepfakes confuse people about what is real, and consequently reduce trust in news on social media. Zimmermann and Kohring (2020) found that the less people trust news media, the more likely they are to fall for disinformation, which in turn can affect their voting behavior. However, the latter study did not focus on deepfake disinformation, and Vaccari and Chadwick (2020) did not measure how deepfakes affect political attitudes.

Moreover, the potential impact of deepfakes may be amplified by microtargeting. After all, the reason for Bail et al.’s (2020) conclusion of limited effects was not that the efforts in themselves were ineffective, but that they were essentially targeted at the wrong, already polarized, audience.

The Amplifying Role of PMT

The Trump-electorate example mentioned in the introduction illustrates how sending several

Our expectations about the potential amplifying role of PMT in deepfake disinformation campaigns are informed by two contrasting theoretical perspectives. First, one could argue that tailored deepfakes are perceived to be more relevant, and, thus, are more likely to be scrutinized by the receiver (which may amplify the effects of the deepfake). Second, one could also argue that a tailored deepfake would cause motivated reasoning: Confronted with incongruent information, the receiver reasons this incongruence away to “maintain their extant values, identities and attitudes” (Slothuus and De Vreese 2010: 652). Motivated reasoning likely decreases the deepfake’s effects.

Amplification

PMT allows political actors to expose people to tailored messages, which should amplify the effects of those tailored messages. The idea is that people would only receive messages that are personally relevant. People who perceive a message as relevant engage in greater message scrutiny than those who perceive a message as generic (Chang 2006; Wheeler et al. 2005). Scrutinized messages are more likely to influence citizens (Petty and Cacioppo 1986; Wheeler et al. 2008). A tailored deepfake is more likely to be perceived as relevant, which increases the chances of message scrutiny, which, in turn, increases the chances of influencing the citizen. Evidence of how PMT amplifies effects on political behavior is scarce. Endres (2019: 1) found that targeting Democratic voters on issues on which they hold similar positions with the Republican candidate “is associated with decreased support” for the Democratic candidate, and “increased abstention, and increased support for” the Republican candidate. Haenschen and Jennings (2019) found that microtargeted online ads could increase turnout among Millennial voters, but only conditionally: in competitive districts. Both studies were conducted in a U.S. context. Decreasing support for the citizen’s “own” candidate and increasing support for the opponent, as demonstrated by Endres (2019), are arguably impressive in a polarized two-party context such as the United States (Abramowitz 2013; Webster and Abramowitz 2017). In a multiparty context, citizens are more likely to switch to parties within their “consideration set” than to switch to a party outside of the consideration set or to abstain altogether (Rekker and Rosema 2019).

Highly competitive districts such as those studied by Haenschen and Jennings (2019) are difficult to find in multiparty contexts, which often use a system of proportional representation. As such, it is difficult to see how the findings of Haenschen and Jennings (2019) can be generalized to multiparty contexts.

Present research on PMT focuses on legitimate forms of communication, but not on disinformation. To further explore what happens when people are exposed to a (microtargeted) deepfake, we turn to the literature on

Gaffes and scandals

A gaffe is an “unintentional and/or inappropriate statement or behavior bringing into question his or her knowledge, wisdom, and/or politically acceptable attitudes that lead others to question a person’s judgment, ability or character” (Frantzich 2012: 4). A prominent example of a gaffe is a recording of 2012 U.S. presidential candidate Mitt Romney, who was covertly filmed when discounting 47 percent of Americans as entitled, dependent victims who will vote for Obama no matter what (Sheinheit and Bogard 2016). According to Sheinheit and Bogard (2016), this gaffe correlated with a decrease in support for the 2012 U.S. Republican candidate. A different example is the “Dean scream,” which correlated with the deterioration of the 2004 U.S. campaign of Howard Dean (Kreiss 2012).

There is little literature on gaffes, but the closely related field of political scandals is more mature. In the political scandal literature, scandals are causally associated with a decline of political attitudes toward politicians and political parties (Brody and Shapiro 1989; Chanley et al. 2000; Maier 2011). Considering literature on gaffes and scandals, we expect that

Considering the literature on the potential amplifying quality of PMT, we expect that

Inoculation

A deepfake is meant to cause an incongruence between expectation and perceived reality. Seeing a known political figure say or do something offensive or shocking can induce motivated reasoning if people identify with the same political party as the depicted politician. Partisanship plays an important role in activating motivated reasoning (Bolsen et al. 2014; Slothuus and De Vreese 2010). While partisan motivated reasoning can seem to decrease when presented with clear evidence (Parker-Stephen 2013), this type of reasoning has been shown to be highly adaptive in finding ways to still maintain one’s predispositions, despite clear evidence (Bisgaard 2015). Indeed, corrections of misleading statements made by a political candidate have been shown to have no impact on evaluations of that candidate (Nyhan et al. 2019). Moreover, partisans who are confronted with information have been found to interpret this information along party lines (Lauderdale 2016), and in line with prior beliefs (Gaines et al. 2007). However tenacious motivated reasoning may be, Redlawsk et al. (2010) demonstrated that there likely is an “affective tipping point” where citizens stop motivated reasoning. Potentially, microtargeted deepfakes can play a role in reaching that tipping point by confronting citizens with highly relevant discomforting information. Considering the literature on motivated reasoning, we formulate the following research question:

Method

Sample

The sample consisted of 278 participants. Participants were recruited by Kantar Lightspeed, a Dutch company specialized in recruitment for academic purposes, and paid a small amount for their participation. Data collection took place in October 2019. The mean year of birth in the sample was 1970 (

Experimental Design

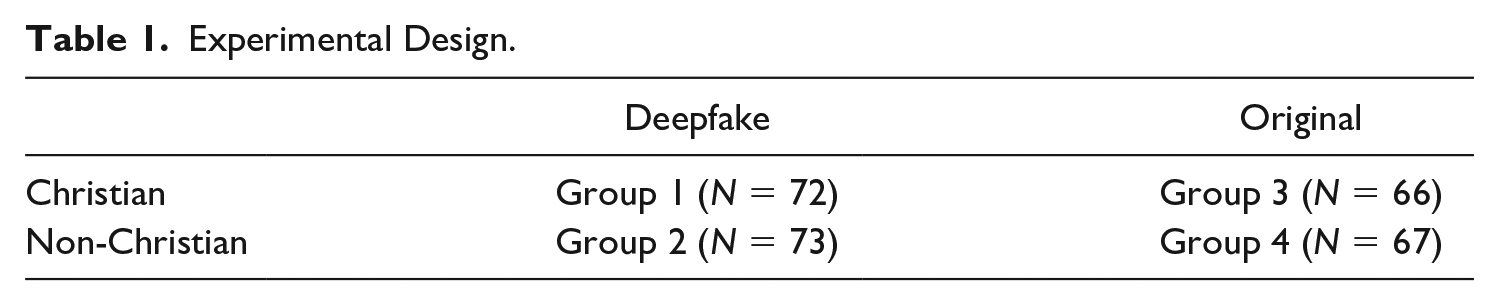

Christian religious identity serves as a blocking variable. After answering a filter question about religion, participants were first placed in either the Christian or non-Christian block and then randomly distributed into either the deepfake or the original video condition (see Table 1 for experimental design).

Experimental Design.

Independent Variables

Deepfake

The deepfake stimulus is a thirteen-second subtitled video showing a leading politician of the Dutch Christian Democrats (“CDA”), which is one of the largest center-right parties in the Netherlands. The first eight seconds of the video call for the attention of the participant and announce to the participants that they are going to see a short video of [name politician], politician of the CDA. The following five seconds are a manipulated video that makes it seem as if, in a television show, the politician jokes about Christ’s crucifixion: “But, as Christ would say: don’t crucify me for it.” It is a play on words, essentially making a joke out of Christ’s crucifixion. We expected it to move attitudes because the politician is a prominent Christian politician and the base of his party is to a large extent Christian. Figure 1 shows a screenshot from the deepfake and a screenshot from the original video.

Deepfake (L) and original video (R).

Making the deepfake

First, we produced a fake speech with the politician’s voice using a text-to-speech-based learning approach (“Tacotron2”). Then, we produced a fake “silent video” of the politician from a real video for which we modified the lip movements (frame by frame) to match the new fake speech using artificial intelligence (AI)-based lip synchronization techniques (Suwajanakorn et al. 2017).

To produce the silent video, we collected approximately twenty-five hours of publicly available videos of the politician. These videos were split into frames and used to train a deep learning model that predicts the mouth/lip shape of the politician from a given input audio. From these videos, we also extracted approximately twelve hours of audio that we then transcribed and used to train another model that generates audio with the voice of the politician (the fake speech) from a given input text. We then used our first model to predict the lip movements corresponding to the fake speech. Then, we reconstructed the lips and mouth texture for each frame and added the fake audio to produce the final video using the ffmpeg library.
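The final step, combining a silent video with a separate audio track, can be sketched as follows. This is only an illustration of one common way to do it with the ffmpeg command-line tool; the exact invocation used in the study is not reported, and the function name and all file names here are hypothetical.

```python
import shlex
from typing import List


def build_mux_command(silent_video: str, fake_audio: str, output: str) -> List[str]:
    """Build an ffmpeg command that adds an audio track to a silent video.

    Hypothetical helper: it only assembles the argument list and does not
    run ffmpeg itself.
    """
    return [
        "ffmpeg",
        "-y",                # overwrite the output file if it already exists
        "-i", silent_video,  # input 0: video with modified lip movements
        "-i", fake_audio,    # input 1: synthesized speech
        "-map", "0:v:0",     # take the video stream from input 0
        "-map", "1:a:0",     # take the audio stream from input 1
        "-c:v", "copy",      # keep the video frames as they are
        "-c:a", "aac",       # encode the audio as AAC
        "-shortest",         # stop at the end of the shorter stream
        output,
    ]


if __name__ == "__main__":
    # File names are placeholders, not the study's actual files.
    cmd = build_mux_command("silent.mp4", "fake_speech.wav", "deepfake.mp4")
    print(shlex.join(cmd))
    # To execute it: subprocess.run(cmd, check=True)
```

Building the command as a list, rather than as one string, avoids shell-quoting problems when file names contain spaces.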

Control condition

The stimulus in the control condition was the original, nonmanipulated, subtitled version of the video of the politician that was also used for the deepfake. The first eight seconds of the control video call for the attention of the participant and announce to the participants that they are going to see a short video of [name politician], politician of the CDA. The following five seconds show the original version of the television interview that was manipulated in the experimental condition.

Microtargeted or untargeted appeal

The participants who indicated in a filter question that they were Christians were regarded as a group that received a microtargeted stimulus. Christianity served as a blocking variable, and was used to simulate microtargeting. The stimulus is about Christianity, and therefore catered to the personal interest of the Christian participants—but not the nonreligious participants. People who indicated in the filter question that they were not religious were regarded as a group that received an untargeted stimulus. Hence, we speak of a microtargeted and an untargeted appeal.

Being Christian

Participants who responded affirmatively to the statement “I consider myself a Christian” were randomly placed in either the experimental or the control microtargeted group (Group 1 or 3). Participants who indicated that they were religious but not Christian were screened out. Participants who declared themselves to be nonreligious were randomly placed in either the experimental or control untargeted group (Group 2 or 4).
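The blocked assignment described above can be sketched as follows. This is a minimal illustration that assumes simple randomization with equal probability within each block, and that the group numbers map onto the conditions in the order they are named (Groups 1 and 2 experimental, Groups 3 and 4 control); the function and label names are hypothetical.

```python
import random
from typing import Optional

# Assumed mapping of (block, condition) to group number.
GROUPS = {
    ("christian", "deepfake"): 1,
    ("nonreligious", "deepfake"): 2,
    ("christian", "original"): 3,
    ("nonreligious", "original"): 4,
}


def assign_group(religion: str, rng: random.Random) -> Optional[int]:
    """Return a group number, or None if the participant is screened out."""
    if religion not in ("christian", "nonreligious"):
        # Religious but not Christian: screened out before randomization.
        return None
    # Randomize condition within the participant's block.
    condition = rng.choice(["deepfake", "original"])
    return GROUPS[(religion, condition)]


if __name__ == "__main__":
    rng = random.Random(2019)  # fixed seed only to make the demo repeatable
    for answer in ["christian", "nonreligious", "other_religion"]:
        print(answer, "->", assign_group(answer, rng))
```

Because randomization happens inside each block, Christians and nonreligious participants are each split across the deepfake and original-video conditions, which is what allows the targeted and untargeted comparisons.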

Degree of religiosity

The participants who considered themselves Christian were asked how often they pray at home, using a 7-point scale from the European Social Survey. We then dichotomized this variable into “heavy prayers”: those who pray once a week or more often (

Voted CDA

This variable was used to register the potential occurrence of motivated reasoning. Participants were asked whether they, in the past five years, ever cast their votes for the CDA (in European, national, provincial, or local elections). Participants could answer either yes or no (217 no, sixty yes, one missing).

Dependent Variables

Attitude toward politician

This dependent variable was measured after the stimulus and entailed the attitude toward the politician. The nine-item measure is derived from Boomgaarden et al. (2016). On a 7-point scale, participants were asked to assess the politician’s competence, experience, authenticity, corruptness, determination, fairness, responsibility, honesty, and friendliness (eigenvalue = 5.5; Cronbach’s α = .93). We added one item about “authenticity” to the original eight-item measure of Boomgaarden et al. (2016). Authenticity is a relevant part of the attitude when studying deepfake disinformation.
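For readers less familiar with the reliability statistic reported above, Cronbach’s α for a scale of k items equals k/(k − 1) times one minus the ratio of the summed item variances to the variance of the total score. The sketch below computes it on made-up data; it is not the study’s analysis code, and the function name is hypothetical.

```python
from statistics import variance
from typing import List, Sequence


def cronbach_alpha(rows: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items table of scores."""
    k = len(rows[0])  # number of items in the scale
    # Sample variance of each item across respondents.
    item_variances: List[float] = [
        variance([row[j] for row in rows]) for j in range(k)
    ]
    # Sample variance of each respondent's summed score.
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


if __name__ == "__main__":
    # Illustrative answers from five respondents on three 7-point items.
    toy_scores = [
        [5, 6, 5],
        [3, 3, 4],
        [7, 6, 6],
        [2, 3, 2],
        [4, 5, 5],
    ]
    print(round(cronbach_alpha(toy_scores), 2))
```

Values closer to 1 indicate that the items vary together, which is the justification for averaging them into a single attitude score.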

Attitude toward political party

This dependent variable is measured with one item, on an 11-point Likert scale. Participants were asked about their stance regarding eight political parties, including the CDA to which the politician belongs. The value 0 stood for negative and 10 stood for positive (see Seltzer and Zhang 2011).

Ethics

The experimental protocol has been approved by the ethical review board of our institution. We debriefed participants immediately after the experiment, and stressed, among other things, that the video was manipulated and that the politician in reality never made the Christ remark and that he likely never would. We also informed the participants about the Christian roots of the CDA and linked to the values page of the CDA Web site. Moreover, our experiment took place in a controlled environment and not during an election.

Procedure

Participants were contacted by Kantar Lightspeed. Kantar Lightspeed sampled from a nationally representative sample and oversampled Christians, using noninterlocking quotas to obtain enough Christians while keeping the sample representative on gender, age, and education. Before participants started with the online survey experiment, they were informed and asked for their consent. In the first part of the survey, participants were asked about their religiosity and then sorted into a group or screened out. After completing the survey, the participants were debriefed about the real purpose of the study. Participants in the experimental condition were told that what they saw was manipulated and that the politician has never made, and likely never will make, such a remark about Christ. Participants saw information about the ideology and the Christian foundation of the CDA and were offered a link to CDA’s policy positions if they wanted to read more.

Manipulation Check

Credibility

We measured the degree to which participants found the deepfake credible with two 7-point scale items (

Because the credibility of the deepfake was close to the credibility of the original video, and because only twelve of the participants actually recognized the deepfake as being a manipulated video,

Finally, at the end of the survey, we checked whether participants had ever heard of “deepfakes.” Of the experimental group, almost 75 percent had never heard of deepfakes, 20 percent had heard of them, and 4 percent did not know. To ascertain whether these three groups differed in the degree to which they recognized the deepfake as being manipulated, we used an analysis of variance (ANOVA) and found no significant differences between the groups:

Scrutiny

Scrutiny was measured using four 7-point scale items from Wheeler et al. (2005). We dropped one item to improve scale reliability. The remaining three items were “To what extent did you watch the video attentively?” “To what extent did you think deeply about the content of the video?” and “How much effort did you put into understanding the content of the video?” The three items were combined and averaged (eigenvalue = 1.54; Cronbach’s α = .79).

The experimental (

Power

For H1a and H1b, we compare a group of 144 participants with a group of 133 participants. We conducted an a priori power analysis by using G*Power (Faul et al. 2007) with a significance level of α = .05, a moderate effect size of

Randomization Check

A randomization check showed no significant differences between the experimental condition and the control condition regarding year of birth,

Results

Main Analyses

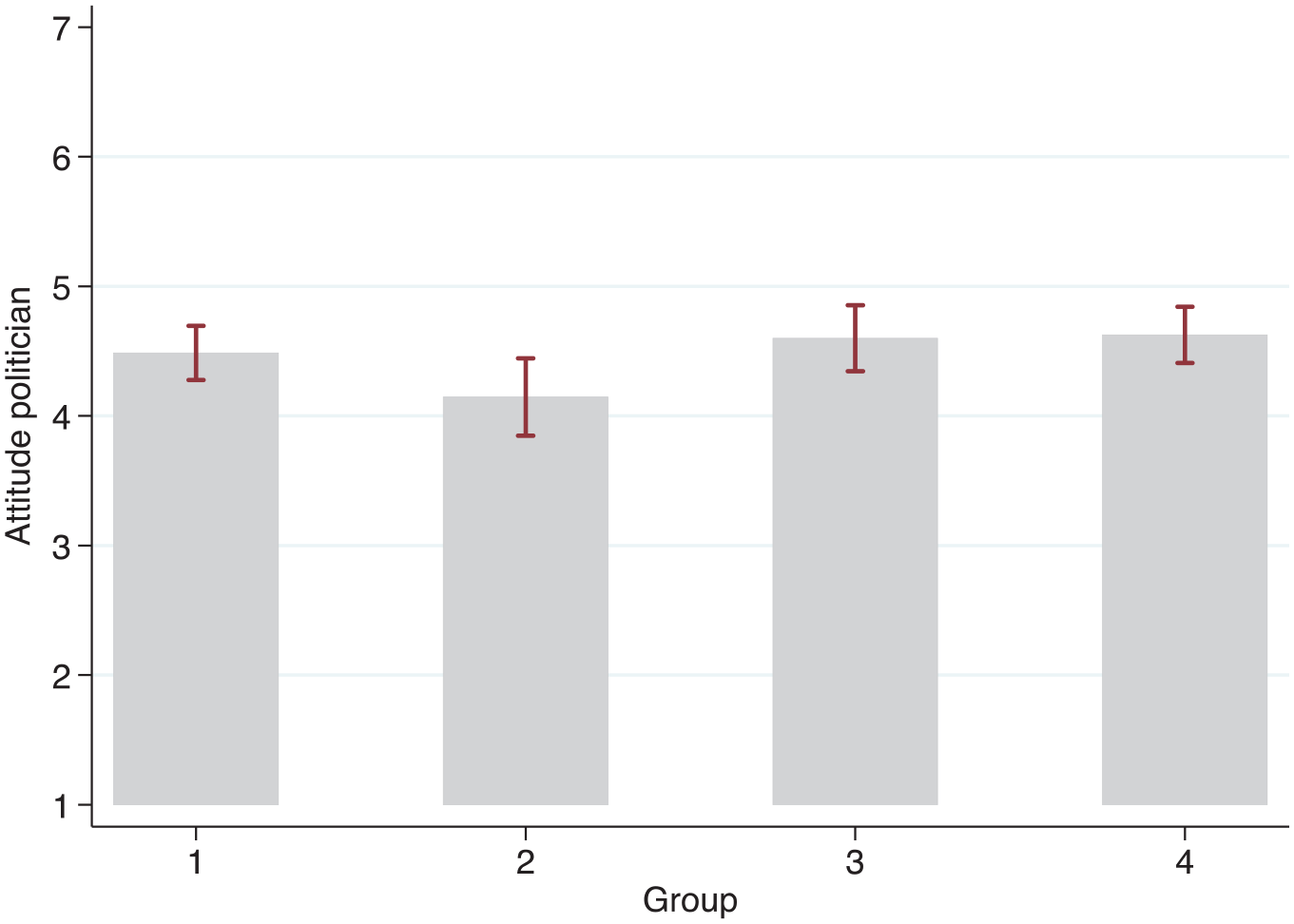

Comparing the two groups that either saw the deepfake or the control video, we find that the experimental group held significantly worse attitudes toward the politician after seeing the deepfake (

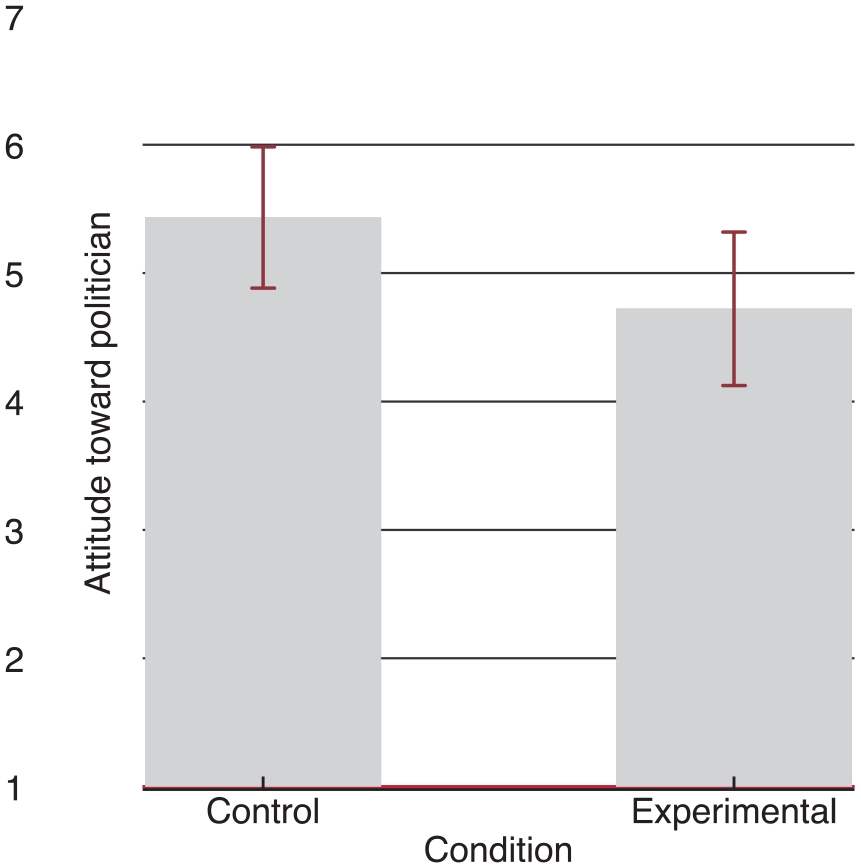

Focusing on the attitudes toward the political party of the depicted politician (CDA), the difference between the experimental group (

Zooming in and comparing the four groups, using an ANOVA, we find a significant difference between the four groups regarding attitude toward the politician:

CI plot attitude toward politician after exposure, per group.

Further testing H1c (

Hypothesis 1c.

Figure 4 displays the same subsample’s mean attitudes toward the political party and shows that the mean scores of the experimental condition are significantly lower than the mean scores of the control condition. Both these findings support Hypothesis 1c.

Small subsamples

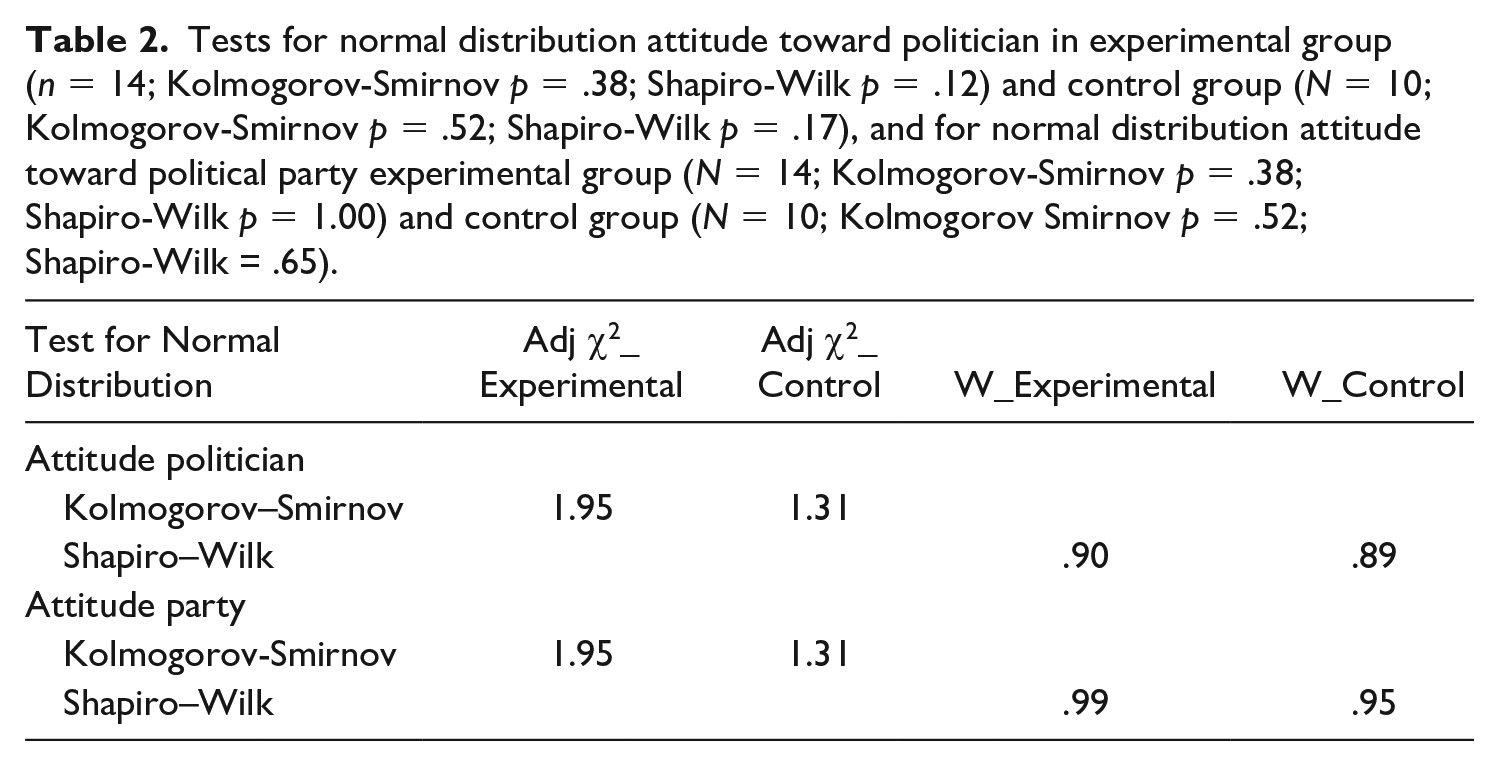

For both attitude toward the politician and attitude toward the party, a Kolmogorov–Smirnov test and a Shapiro–Wilk test were conducted. Table 2 shows that both conditions and both variables were distributed normally, making the

Tests for normal distribution attitude toward politician in experimental group (

Motivated reasoning

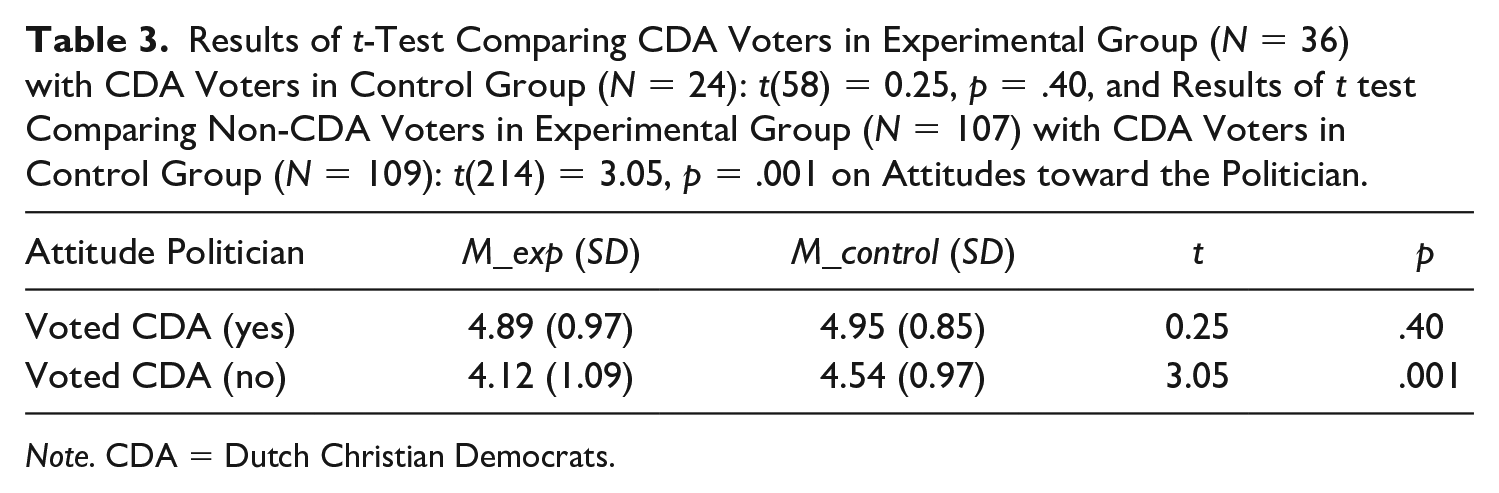

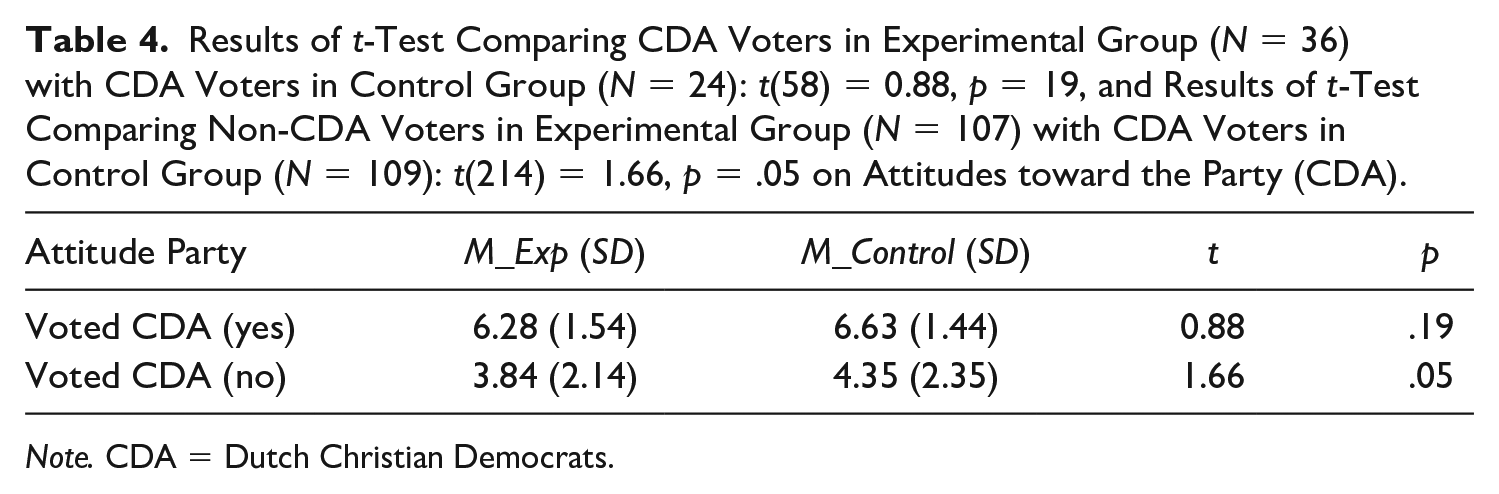

To answer the research question (

Results of

Results of

Discussion

In this study, we set out to investigate the extent to which a (microtargeted) deepfake meant to discredit a politician can affect citizens’ attitudes toward that politician and his party. This experiment indicates that it is indeed possible to stage a political scandal with a deepfake. The negative attitudinal consequences toward the politician and the party that are found in the scandal literature (Brody and Shapiro 1989; Chanley et al. 2000; Maier 2011) are found in this study as well. While the attitude toward the politician in particular is directly affected by the deepfake, attitudes toward the politician’s party are only conditionally affected. As such, our findings differ from Guess et al. (2018) and from Bail et al. (2020), who found no effects of disinformation on political behavior and attitudes. The current study provides first, cautious support for the idea that deepfakes are indeed a more powerful mode of disinformation than the false news stories studied by Guess et al. (2018) and the Russian Twitter trolls studied by Bail et al. (2020).

Amplification

We theorized that PMT could function as an amplifier that would make the deepfake more effective. Our findings suggest that PMT can indeed amplify the effects of the deepfake, but only for a much smaller portion of the sample than we expected. In particular, it turns out that the group that one needs to target to are

In sum, concordant with Endres (2019), we found that partisans can be negatively affected by a microtargeted message regarding their own candidate. In contrast with Endres (2019) and Matthes and Marquart (2015), we find that a message meant to be incongruent with the opinions of the receiver can have a significant and substantial negative attitudinal effect.

Inoculation

For part of the “mistargeted group” (the CDA voters who were not heavy prayers), it appears that motivated reasoning inoculated them from any negative effects of the deepfake (see Kahan et al. 2017 for more information on measuring motivated reasoning). Motivated reasoning is sometimes considered negative in the face of truthful information—Richey (2012: 511) even reflected on whether motivated reasoning was the “death knell of deliberative democracy.” But inoculation through motivated reasoning can be positive when facing disinformation meant to be incongruent with people’s prior beliefs.

It may be a reassuring thought that the supporters of the politician who was negatively depicted in the deepfake are to some extent protected from deepfake manipulation by their tendency to engage in motivated reasoning. Even when facing clear evidence of the incongruency occurring, the partisans indeed did not hold worse attitudes than their counterparts in the control condition did, while the nonpartisan groups did (in line with Bisgaard 2015). Still, if, for instance due to microtargeting, the message is personally relevant

Credibility

The open question about why participants did not find the deepfake too credible made it evident that only a small fraction of the sample recognized the deepfake as a manipulated video. Moreover, the open question showed that the credibility scale did not measure the credibility of the deepfake in a narrow sense, but rather in a broader sense where participants interpreted the credibility items as how credible they find the depicted politician or politics in general. One participant, for instance, explained their low credibility score as follows: “Nothing in politics is credible.” Someone else explained, “I have trouble taking politics in the Netherlands seriously.”

Frankly, the deepfake can be improved upon. The mouth movement of the politician is sometimes reminiscent of a ventriloquist’s dummy, the voice is acceptable but not good, and the video is only five seconds long. But even with these shortcomings, almost no participant raised concerns about the veracity of the video itself. This can be partly attributed to the novelty of the technique, but is also because seeing and hearing a person say something can be so realistic.

Unexpected effects

The potential threat of (microtargeted) deepfakes lies in their use by a malicious political actor with the desire to achieve some illegitimate political goal. Similar to Maarek (2003), who attributed the shocking loss of a French presidential candidate to a too professional campaign, or similar to Adams et al. (1986) who found that an antinuclear warfare television broadcast actually increased American viewers’ support for then-president Reagan instead of vice versa, our experiment shows that pursuing a goal with microtargeted deepfakes may also come with some unforeseen outcomes. For instance, it was not the general group of Christians who saw the deepfake that held significantly worse attitudes toward the politician than the control group, but rather the non-Christians who saw the deepfake. Moreover, we found that, after exposure, this experimental group of non-Christians held significantly and substantially better attitudes toward the populist party Forum voor Democratie (

Should we worry about (microtargeted) deepfakes?

Yes, we should worry, but more about deepfakes in general than about

For now, the limited, but significant main effect of our imperfect deepfake on the attitudes of the general sample is more or less aligned with the idea of “minimal effects” of political communication (see Bennett and Iyengar 2008). The idea of minimal effects in political communication was later substantiated by Kalla and Broockman (2018), who in an important meta-analysis estimated that the persuasive effects of campaign contact and advertising on American voters were zero. They also found that identifying and persuading specific subgroups of persuadable voters appears to be a successful persuasive strategy. But “identifying cross-pressured persuadable voters requires much more effort than simply applying much-ballyhooed ‘big data’” (p. 2). Similarly, while that meta-analysis is not easily generalizable to a non-U.S., multiparty, less-affectively polarized context, the current study also finds that making several, or even hundreds or thousands of

Over time, it could get easier to get accurate perceptions of what characteristics make a voter group susceptible to a tailored deepfake. But for a malicious actor operating today, taking a less subtle approach and spreading one discomforting deepfake would be the most realistic option. Worrisomely, a better quality and longer deepfake, repeated exposure and distribution in a dynamic real-life context could easily produce larger effects. Furthermore, the notion that the main barrier protecting the electorate from large persuasive effects is the difficulty of microtargeting a deepfake correctly is hardly comforting. But for now, as Karpf (2019) has argued, the largest threat of present-day disinformation does not lie in individual-level effects, but rather in the If the public is made up of easily-duped partisans, then there is no need to take difficult votes. If the public simply doesn’t pay attention to policymaking, then there is no reason to sacrifice short-term partisan gains for the public good.

Directions for Future Research

Future research should map the effects of deepfakes, potentially by comparing deepfakes with differing levels of quality, and different degrees of shock the deepfakes induce, and by comparing source effects. More importantly, future research should map ways to counter deepfakes’ effects. A regulatory focus should be directed against this potential new frontier of disinformation warfare. The surprisingly low number of participants who recognized the deepfake as being manipulated is a clear sign that public awareness and knowledge of deepfakes should improve. But informing the public about deepfakes must not lead to cynicism in citizens and in politicians.

Supplemental Material

Supplemental material, _online_supp_-_Dobber_et_al for Do (Microtargeted) Deepfakes Have Real Effects on Political Attitudes? by Tom Dobber, Nadia Metoui, Damian Trilling, Natali Helberger and Claes de Vreese in The International Journal of Press/Politics

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
