Abstract

Concerns have been raised over AI-generated deepfakes and their impact on democracy. Unlike earlier forms of disinformation relying on text or traditional video-editing techniques (cheapfakes), deepfakes employ artificial intelligence, provoking speculations that they may be even more persuasive and harder to debunk. Using an experiment with a multiple-message design (N = 2,085), we found that fake videos suggesting a sex, corruption, or prejudice scandal—but not text-only fakes—elicited substantial reputational damage for an innocent politician, regardless of whether the underlying technique was “cheap” or “deep.” This was visible in altered attitudes, emotions, and voting intentions. However, exposure to a journalistic fact-check substantially reduced and even eliminated the detrimental effects. These findings have important implications for our theoretical understanding related to the effects of and mitigation strategies for deepfakes. While clearly highlighting the significant persuasive potential of deepfakes (and visual disinformation in general), the present study paints a more nuanced picture than was previously possible.

Heated debates sparked by the recent emergence of deepfakes prompted calls for scholarly investigations into the effects of this modern form of fake video material on democracy (Ahmed 2023; Chesney and Citron 2018; Jacobsen and Simpson 2024; McCosker 2024). While the political realm is no stranger to disinformation, deepfakes add a new layer to known concerns: They can be used to make representations of politicians move “like puppets on a string” and “utter words put into their mouths” (Dan et al. 2021: 643). Deepfakes’ high realism—resulting from the use of artificial intelligence (AI)—may mean that their effects go beyond those prompted by traditional forms of fake content, such as text-based fakes and cheapfakes (Dan et al. 2021; Hameleers 2024). Cheapfakes are fake videos that do not use AI but rely on traditional, basic video-editing techniques (e.g., darkening, blurring, or slowing down authentic footage; replacing the audio track). Importantly, when distributed right before an election, deepfakes staging a political scandal may elicit false beliefs and impede voters’ ability to make informed decisions. To the extent that deepfakes are more persuasive and harder to debunk than earlier forms of disinformation, they pose an unprecedented threat to the functioning of democracy.

We report on a large-scale experiment (N = 2,085) conducted with two aims. First, we aim to test if a deepfake with fabricated evidence of scandalous behavior elicits reputational damage for an innocent politician—as visible in altered attitudes, emotions, and voting intentions. To determine whether deepfakes are truly the game changer they are purported to be, we present a systematic investigation of their impact compared with traditional forms of fake content—that is, text-based fakes and cheapfakes. This goes beyond the available scholarship, which tends to study deepfakes in isolation. Second, we aim to identify successful mitigation strategies. We test whether exposure to a journalistic fact-check mitigates noxious effects—a much-needed addition to the technological and legal responses usually discussed in the literature (McCosker 2024).

We present evidence supporting the claim that deepfakes can have substantial effects on attitudes, emotions, and voting intentions—just like those prompted by authentic videos. While highlighting the persuasive potential of deepfakes, our data also suggest that cheapfakes elicit similar noxious effects, unlike text-based fakes. This suggests that the availability of fabricated audiovisual evidence is what deceives people, not the use of AI. Moreover, our evidence also indicates that this effect can be reduced (or even eliminated) by exposure to a disconfirming journalistic fact-check. This study is the first to systematically show that the democratic threat posed by deepfakes is significant yet reversible. While these findings come with some caveats rooted in our decision to use a fictitious politician in our stimuli (see below), they do help address the challenges posed by modern forms of (AI-based visual) disinformation.

Reputational Damage as a Key Noxious Effect of Deepfakes

One important way in which deepfakes can impact democracy is by rendering politicians unelectable through the provision of fabricated evidence of scandal involvement (Chesney and Citron 2018). As this could influence the outcome of elections, studying the effects of such deepfakes has been identified as a research priority (Dan et al. 2021; Diakopoulos and Johnson 2021). Involvement in a scandal—defined as “intense public communication about a real or imagined defect that is by consensus condemned, and that meets universal indignation or outrage” (Esser and Hartung 2004: 1041)—is perhaps the greatest reputational threat (Entman 2012). When the content of deepfakes is taken at face value, misperceptions are likely to follow and so are altered attitudes, emotions, and voting intentions—what was conceptualized as reputational damage in this study. Studying reputational damage matters because this is a more enduring outcome than misperceptions: Reputational harm might outlive misperceptions (Thorson 2016; Walter and Tukachinsky 2020).

We predict that exposure to reputational threats in the form of deepfakes has a detrimental effect on the reputation of the deepfaked politician. As scandal research has intimated that involvement in a scandal can prompt negative attitudes toward a protagonist, in addition to decreased voting intentions and negative emotions (Maier 2011; von Sikorski and Herbst 2020), we pose:

H1: Deepfakes suggesting scandal involvement elicit reputational damage, as indicated by a decrease in favorable attitudes (H1.1), a decrease in voting intentions (H1.2), and an increase in negative emotions (H1.3) toward an innocent politician.

Are Deepfakes More Persuasive Compared With Earlier Forms of Disinformation?

While deepfakes are considered more persuasive than earlier forms of disinformation (Chesney and Citron 2018; Dan et al. 2021), systematic knowledge on this matter is still missing. Such comparative assessments are rooted in assumptions regarding the format in which fake evidence of allegations is provided, for instance in support of the claim that a politician transgressed a norm. Indeed, scandal research has established that norm transgressions must be convincingly documented to be damaging (Kepplinger 2018). This raises the question of what makes the documentation of a political misstep convincing to people who did not witness it themselves. Available scholarship suggests that a simple verbal claim (e.g., “I heard the mayor said that. . .”) may not be particularly convincing, but adding visual evidence of the norm breach—especially video—will make it more persuasive (see also Brennen et al. 2021).

Empirical studies on the differential effects of messages presented as text versus video—typically guided by Dual Coding Theory (Paivio 1986), the MAIN Model (Sundar 2008) or the Limited Capacity Model (Lang 2000)—provided support for the contention that “we trust those things that we can see over those that we merely read about” (Sundar 2008: 80–81; for a review, see Dan 2018a, 2018b). It appears that videos are more easily processed and recalled than text (Paivio 1979) and that—on account of being a “rich” mode of presentation, unlike “lean” text—videos are more likely to be taken at face value and processed superficially (Sundar 2008). This is the realism heuristic (Sundar 2008). Another heuristic of relevance here is the being-there heuristic: Viewers of video (more than readers of text) may feel like they witnessed the action portrayed (Sundar 2008). In visual communication, a related concept is indexicality: Since photos and video recordings are “in a sense an automatic product of the effects of light on lenses and film (or video and other electronic media), the connection between [them] and reality has a certain authenticity”; they come with “an implicit guarantee of being closer to the truth than other forms of communication are” (Messaris and Abraham 2001: 217). Differential effects have also been shown in misinformation research: People are more likely to fall for false or misleading claims when given in video rather than text or audio (Sundar et al. 2021); adding visuals to inaccurate claims makes them more credible (Hameleers et al. 2020)—something occasionally referred to as the truthiness effect (Newman and Zhang 2021).

This lends credence to the idea that visual primacy plays a role in the processing of disinformation. Visual primacy, or visual dominance, refers to “the tendency of visual information . . . to dominate perceptual and memorial judgments” (Posner et al. 1976: 158), thus to people’s overreliance on visuals “for judgments of affect and meaning” (Noller 1985: 28). This tendency to assign primacy to visual information over other types of information is particularly relevant in the context of multimodal messages (DePaulo and Rosenthal 1979). When modalities are in conflict—such that the visual stream does not match the verbal/textual stream—images are noticed and remembered better than words (Dan et al. 2020).

Fake videos, be they cheapfakes or deepfakes, would then be expected to influence viewers more than text-only fakes. But since the use of AI adds to the realism of deepfakes, it might be ill-advised to assume no differences in the effects prompted by cheapfakes and deepfakes. Indeed, scholars assume an increasing linear effect from text, through cheapfakes to deepfakes (Chesney and Citron 2018; Dan et al. 2021).

As no study to date has attempted to compare the effects of cheapfakes, deepfakes, and text-only fakes, it remains unclear whether this effect materializes. One study found that cheapfakes were more likely to deceive than deepfakes, not the other way around (Hameleers 2024). With regard to text versus deepfake, the evidence is mixed—with two studies finding no evidence that deepfakes are more persuasive than written (Hameleers et al. 2022) or spoken text (Weikmann et al. 2024) and one study finding such evidence (Lee and Shin 2021).

To summarize, the above suggests that reputational damage can be elicited via the text and the video modalities. But it is unclear whether the speculations about deepfakes’ stronger effects relative to text-based fakes or cheapfakes stand on empirical grounds. We ask:

RQ1: Is the reputational damage elicited by deepfakes (see H1) stronger than that prompted by text-only fakes (RQ1.1) and cheapfakes (RQ1.2)?

Does Deepfakes’ Technical Sophistication Matter?

Inherent to the concerns voiced in connection to deepfakes is the assumption that high-quality fakes will be more convincing compared with poor-quality ones (Chesney and Citron 2018; Dan et al. 2021). As such, deepfakes’ persuasiveness is presumed to increase as the level of sophistication increases (Dobber et al. 2021; Nightingale and Farid 2022). It could be that typical give-aways such as wobbliness, blurriness, or unnatural eye movement are more easily noticed in basic deepfakes than in those of moderate or advanced quality (Dan et al. 2021).

Such glitches would increase the chance that people are unsettled by poor-quality deepfakes, where the protagonist looks “almost human,” whereas high-quality deepfakes, in which the protagonist looks “fully human,” would not prompt repulsion (Nightingale and Farid 2022: 1–2)—much in the way people respond to robots resembling human beings, as described in the uncanny valley effect (Mori et al. 2012): As the robot looks more and more like a human, people respond increasingly positively. But when the robot looks almost, but not quite, like a human, people’s positive responses are replaced by repulsion. Finally, as it becomes harder to tell the robot apart from a human, people respond positively again. This shift in people’s response resembles a valley, because a sharp decrease in favorability (“almost human”) is followed by a sharp increase (“fully human”).

However, initial findings regarding how variations in sophistication influence people’s ability to recognize deepfakes are inconclusive (Korshunov and Marcel 2020; Rossler et al. 2019). We ask:

RQ2: Does deepfake quality matter, where advanced deepfakes damage the reputation of the protagonist more than basic and moderate deepfakes do?

How to Counteract Deepfakes: Fact-Checks as a Potential Mitigation Strategy

If deepfakes are the game changer they are purported to be, then mitigation strategies are needed. The quest for such strategies has placed great hopes on journalistic fact-checks (Dan et al. 2021). While still a relatively new “genre of journalism” (Amazeen et al. 2018: 30), fact-checking operations are proliferating around the world (Graves et al. 2016). Fact-checks are reports resulting from a systematic process through which journalists verify a widely circulated claim and give a verdict on its veracity (see Garrett et al. 2013; Young et al. 2018). Research suggests that journalistic fact-checks should be particularly effective in reducing reputational damage inflicted by deepfakes. Unlike NGO-based operations, journalistic initiatives can draw on the resources and infrastructure of the legacy newsrooms to which they belong, being able to enlist professional journalists, while also benefiting from people’s trust in and familiarity with the parent company (Graves and Cherubini 2016). As such, journalistic fact-checking initiatives generally reach considerably larger audiences than NGO-based ventures (Graves and Cherubini 2016).

While this has not yet been demonstrated empirically, fact-checks are considered able to correct false beliefs, help free politicians from false allegations, and dampen the reputational damage inflicted by deepfakes (Dan et al. 2021; Diakopoulos and Johnson 2021). On the one hand, considering fact-checks’ demonstrated ability to debunk false or misleading claims made in the body politic (Walter et al. 2020), it seems reasonable to anticipate that they may also be used to mitigate deepfakes. This would be beneficial for democracies, given that they rely on informed citizens with a shared understanding of basic facts (see Galston 2001). On the other hand, it is challenging to debunk political disinformation, regardless of its format (Chan et al. 2017), and fact-checks are not always presented in a way that attracts and holds the attention of the public (Dan 2021).

Debunking political deepfakes might be even more challenging, as this entails telling people they cannot trust their eyes and ears (Chesney and Citron 2018; Dan et al. 2021; Vaccari and Chadwick 2020). Existing work has clarified the need for a compelling format when attempting to debunk political disinformation in general (Walter et al. 2020) and deepfakes in particular (Dan et al. 2021; Nyhan and Reifler 2019), with video fact-checks appearing particularly promising (Dan and Coleman 2024; Dan and Dixon 2021; Young et al. 2018). This can be linked with preliminary evidence suggesting that literacy-based fact-checks work best (Cook et al. 2018; van der Meer et al. 2023; Vraga et al. 2022). Literacy-based fact-checks are corrective messages that go beyond simply debunking false or misleading content. They aim to empower audiences by equipping them with the knowledge and skills to identify deceitful tactics themselves. Literacy-based fact-checks can elevate people’s media literacy in a scalable way (Hameleers 2022; Schmid and Betsch 2019), illustrating how journalism can help fight misinformation (Vraga et al. 2020). These insights prompted us to use a literacy-based fact-check in video format as a stimulus in this study.

Whether (literacy-based video) fact-checks can dampen the detrimental effects of deepfakes has yet to be tested. However, initial evidence on the ability to debunk fake videos by flagging them as false (Lee and Shin 2021; Lu and Yuan 2024) or by explaining what deepfakes are (Hwang et al. 2021; Vaccari and Chadwick 2020) is encouraging. Exposure to a literacy-based fact-check may thus mitigate the detrimental effects predicted in H1. We pose:

H2: A journalistic fact-check will reduce (or possibly eliminate) the reputational damage elicited by deepfakes (H2.1) and traditional forms of disinformation, that is, text-based fakes and cheapfakes (H2.2).

Method

In this web-based experiment (N = 2,085), we employed a 5 × 2 between-subjects factorial design with two additional standalone comparison groups (i.e., an incomplete 5 × 2 + 2 design). The standalone comparison groups comprised a control group and an authentic group. The factors were the format of the reputational threat in which evidence of scandal involvement was provided (i.e., text, cheapfake, basic deepfake, moderate deepfake, advanced deepfake) and exposure to a fact-check clarifying that the evidence had been fabricated (yes, no). The control group was a standard control, exposed to a neutral video unrelated to the outcome measures. The authentic group was exposed to a real video in which a politician was shown actually engaging in the scandalous behavior he was accused of. The control and authentic groups were used as standalone comparison groups because participants allocated to these groups did not see a fact-check. This is because published fact-checks typically conclude that the fact-checked content was false or misleading.

The control and authentic groups were used as baseline comparison groups in the analysis: The control video should elicit no reputational damage; the authentic video should elicit the strongest observed effect level related to reputational threat. All other groups should theoretically lie in between.
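The structure of the incomplete 5 × 2 + 2 design can be made explicit by enumerating its cells. The following is a minimal sketch in Python; the variable and function names are illustrative assumptions and are not taken from the study materials.

```python
from itertools import product

# Factor levels as described in the Method section (names are illustrative).
FORMATS = [
    "text",
    "cheapfake",
    "basic deepfake",
    "moderate deepfake",
    "advanced deepfake",
]
FACT_CHECK = [True, False]


def build_conditions():
    """Return the twelve cells of the incomplete 5 x 2 + 2 design."""
    # Fully crossed part: every fake format with and without a fact-check.
    cells = [
        {"format": fmt, "fact_check": fc}
        for fmt, fc in product(FORMATS, FACT_CHECK)
    ]
    # Standalone comparison groups: these were never paired with a fact-check.
    cells.append({"format": "authentic video", "fact_check": False})
    cells.append({"format": "control", "fact_check": False})
    return cells


conditions = build_conditions()
for cell in conditions:
    print(cell)
```

The crossed part yields ten cells; adding the authentic and control groups gives twelve groups in total, of which only five include a fact-check before the outcome measures.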

We used a multiple-message design and prepared three versions of the reputational threat, changing the topic of the allegations from sex to corruption and prejudice. This was meant to increase external validity and the generalizability of findings. Participants were randomly assigned to groups and exposed to the Twitter feed of a fictitious politician.

Sample

An a priori power analysis guided us in purchasing a quota-based sample of the general German population from Simple Opinions (N = 2,085). Quality checks defined at the outset allowed us to gather a sample free of speeders (i.e., those completing the study in less than 10 minutes or spending under 5 seconds considering each stimulus), cheaters (same IP address), straight-liners, and inattentive participants.
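As an illustration of how such exclusion rules could be applied to raw response records, consider the sketch below. Only the two time thresholds come from the text. The record layout, the field names, the straight-lining rule (identical answers to every item), the attention-check flag, and the choice to drop every record that shares an IP address are assumptions made for this example, not a description of the actual screening procedure.

```python
from collections import Counter

MIN_TOTAL_SECONDS = 10 * 60  # from the text: under 10 minutes counts as too fast
MIN_STIMULUS_SECONDS = 5     # from the text: under 5 seconds on a stimulus


def is_straight_liner(item_responses):
    """Assumed rule: the same answer was given to every item."""
    return len(item_responses) > 1 and len(set(item_responses)) == 1


def passes_quality_checks(record, ip_counts):
    """Apply the exclusion rules to one (hypothetical) response record."""
    if record["total_seconds"] < MIN_TOTAL_SECONDS:
        return False
    # Assumption: a single stimulus viewed too briefly is enough to exclude.
    if any(t < MIN_STIMULUS_SECONDS for t in record["stimulus_seconds"]):
        return False
    # Assumption: all records sharing an IP address are dropped.
    if ip_counts[record["ip"]] > 1:
        return False
    if is_straight_liner(record["item_responses"]):
        return False
    return record["passed_attention_check"]


def clean_sample(records):
    """Return only the records that survive every quality check."""
    ip_counts = Counter(r["ip"] for r in records)
    return [r for r in records if passes_quality_checks(r, ip_counts)]
```

Counting IP addresses once, before filtering, keeps the duplicate check independent of the order in which records arrive.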

Quotas were set up for age, gender, education, and political orientation. Our sample was diverse in terms of age (M = 46.25, SD = 15.59), gender (55.5% male, 44.5% female), and education (48.3% had not completed high school, 28.6% had a high school diploma, and 23.1% had a university degree). About one-third of the participants belonged to each of the three ideological groups, self-identifying as liberal (n = 692, 33.2%), moderate (n = 698, 33.5%), and conservative (n = 695, 33.3%).

Experimental Manipulation

Groups

We used eleven experimental conditions and a control group, resulting from our incomplete 5 × 2 + 2 design. All groups saw the same fifteen introductory posts in the Twitter feed of Martin W., a fictitious local politician. In the next and last post shown to participants in the experimental groups, another user, tweeting @MartinW, claims to have heard a conversation between the politician and another man in a restaurant, while seated at a neighboring table. The other user offers an account of this conversation, accusing Martin W. of having said something inappropriate.

The participants who were randomly assigned to the text/fact-check group (n = 184) saw the fifteen introductory tweets before being exposed to the incriminatory tweet in text format. Thereafter—and thus, before the outcome measures were recorded—these participants saw a fact-check ruling the incriminatory tweet inaccurate.

Participants in the text/no fact-check group (n = 180) saw the same stimuli as those in the text/fact-check condition, except for the fact-check. To be precise, to avoid depriving these participants of a media literacy intervention, the fact-check was shown to them at the end of the study, after all outcome measures. For brevity, when describing the other “no fact-check” groups below, we refrain from specifying this, stating simply that those participants did not receive a fact-check.

Participants assigned to the cheapfake/fact-check group (n = 183) saw the fifteen introductory tweets before receiving the incriminatory tweet. The latter tweet consisted of the same re-narration of the events presented to participants in the text groups in addition to what was presented as a two-minute video recording of this exchange, with subtitles, but was, in fact, a cheapfake. Thereafter, the fact-check was shown to these participants. Participants allocated to the cheapfake/no fact-check group (n = 186) were exposed to the same stimuli as those in the cheapfake/fact-check condition, except for the fact-check.

Participants assigned to the basic deepfake/fact-check group (n = 183) saw the same stimuli as those in the cheapfake/fact-check condition, but with a basic deepfake instead of the cheapfake. Participants in the basic deepfake/no fact-check group (n = 189) received all stimuli shown to those in the basic deepfake/fact-check condition, except for the fact-check.

The participants allocated to the moderate deepfake/fact-check group (n = 189) saw the same stimuli as those in the basic deepfake/fact-check condition, but a moderate deepfake replaced the basic deepfake. Participants in the moderate deepfake/no fact-check group (n = 180) were exposed to the same stimuli as those in the moderate deepfake/fact-check condition, without the fact-check.

The participants assigned to the advanced deepfake/fact-check group (n = 181) received the same stimuli as those in the moderate deepfake/fact-check condition, but an advanced deepfake replaced the moderate deepfake. Participants allocated to the advanced deepfake/no fact-check group (n = 186) were exposed to the same stimuli as those in the advanced deepfake/fact-check condition, without the fact-check.

Participants in the authentic video/no fact-check group (n = 183) saw the same fifteen introductory tweets as the other groups. Thereafter, they were exposed to the same re-narration of events presented to the rest of the participants, which was now backed up by an authentic video recording of the fictitious politician uttering the words he was reproached for in the text body of the tweet. This was a “real” video.

We used a multiple-message design to increase confidence in the generalizability of the findings (see OSM Table 1 in the Online Supplemental Material Document). This means that participants in each of the experimental groups were randomly assigned to receive one of three versions of each of the incriminating posts. Therein, the topic of the allegations was sex, corruption, or prejudice. This strategy allowed us to disentangle the format in which an allegation was presented from the (topic of the) alleged misconduct, addressing a key limitation in extant work.

Participants in the control group (n = 61) were exposed to the fifteen introductory tweets, followed by a neutral post from the same user as in the other conditions. The user stated that he had overheard a conversation between the mayor and another man in a restaurant and that he had recorded the conversation from a neighboring table. Neither the text nor the video component of this post contained incriminating material; it referred to the mayor’s intention to renovate a run-down park.
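The group sizes reported in this section can be checked against the total sample size with simple arithmetic. The short Python sketch below collects the reported n per group (the dictionary keys are illustrative labels) and sums them.

```python
# Group sizes as reported in the text; keys are illustrative labels.
CELL_SIZES = {
    ("text", "fact-check"): 184,
    ("text", "no fact-check"): 180,
    ("cheapfake", "fact-check"): 183,
    ("cheapfake", "no fact-check"): 186,
    ("basic deepfake", "fact-check"): 183,
    ("basic deepfake", "no fact-check"): 189,
    ("moderate deepfake", "fact-check"): 189,
    ("moderate deepfake", "no fact-check"): 180,
    ("advanced deepfake", "fact-check"): 181,
    ("advanced deepfake", "no fact-check"): 186,
    ("authentic video", "no fact-check"): 183,
    ("control", "no fact-check"): 61,
}

total_n = sum(CELL_SIZES.values())
print(len(CELL_SIZES), "groups, total N =", total_n)
```

The twelve groups sum to 2,085, which matches the reported N. The control group is roughly one-third the size of each of the other groups.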

Stimuli

Where past studies had to rely on “imperfect” stimuli (Dobber et al. 2021: 85), we created ours with the help of a video company and professional actors. So as not to reveal the fictitious nature of our stimuli—something Vaccari and Chadwick (2020) described as a weakness in their study and that applies to most studies to date—our stimuli were modeled after actual political scandals, some caused by hidden-camera footage. We attempted to imitate them here, because doing so is believed to increase the persuasiveness of false claims (see Dan et al. 2021: 649), since actors spreading disinformation mimic design elements of true information and people are suspicious of material deviating from expectations about how something should look (Metzger and Flanagin 2013). Thus, hidden-camera fake videos were reasoned to be more externally valid than those in which protagonists say scandalous things on camera or on record.

Our stimuli, which can be found on OSF, consisted of three parts. These were fifteen posts introducing the fictitious politician to participants without reputational threat (OSM Table 2), an incriminatory post claiming that he had done something reprehensible conceptualized as a reputational threat (OSM Tables 3–4), and a fact-check meant to set the record straight (OSM Table 5).

Posts Without Reputational Threat

Fifteen posts provided insight into the everyday life of the fictitious politician, from the tasks that must be mastered to work in the town hall (see OSM Table 2). Thirteen tweets consisted of text and a photo; two included authentic videos of the politician, lasting about 20 seconds in total. Participants were shown the videos under the assumption that prior exposure to Martin W.’s voice and image would enable them to distinguish between fake and authentic videos later in the study.

Post With Reputational Threat

We implemented the worst-case scenario for a politician. Specifically, we presented alleged evidence that Martin W., a fictitious politician running for re-election “next Sunday,” behaved in a condemnable way, suggesting he was unworthy of office. We prepared several forms of this alleged evidence, representing the six levels of our first factor, and thus, variation in the levels of skill and criminal effort or resourcefulness required to create them. This allowed us to contrast the effects of text-only allegations with those of cheapfakes, three sophistication levels of deepfakes, and authentic videos while keeping the content constant. Our ability to keep every element of the stimuli constant as needed was a positive corollary of our decision to self-produce stimuli surrounding a fictitious politician. This goes beyond earlier studies that compared the effects of authentic recordings with those of manipulated recordings without keeping the content constant, which represents a confounder.

For the text conditions, we prepared Twitter threads at about 560 characters each. Therein, another user tweeting @MartinW claimed to have eavesdropped on a conversation between the politician and another man in a restaurant, while seated at a neighboring table. This other user offered his account of the conversation, accusing Martin W. of having said something inappropriate.

Per our multiple-message design, incriminating tweets were prepared for three topics: sex, corruption, and prejudice. The scenarios are in line with those discussed in the political-scandal literature (Entman 2012) and the literature on the impact of deepfakes on politicians’ reputation (Diakopoulos and Johnson 2021). In addition, to ensure plausibility, the scenarios implemented here used recent real-life verbal derailments from three countries as templates. The scenario for the sex scandal was inspired by Donald Trump’s comments about women on the Access Hollywood tape in 2016 and various so-called “macho jokes” by German politicians. Here, it was said that Martin W. admitted to having cheated on his wife with a journalist, whom he reduced to her appearance, and to sexually harassing women. The corruption scenario was inspired by the Austrian Ibiza affair of 2019, which involved preferential government contracts. Here, it was claimed that Martin W. promised a businessman that the businessman would win open competitive biddings for the restoration of local buildings in exchange for a generous party donation. The prejudice scenario was inspired by Mitt Romney’s disparaging comments about the US Democrats’ base in 2012 and hateful comments from representatives of the German far-right party AfD. It was alleged that Martin W. expressed resentment toward welfare recipients and migrants.

The script and setting of the videos were kept constant across conditions, such that their protagonists—whether in cheapfakes, deepfakes, or authentic videos—uttered the same statements in the same setting. In the cheapfake conditions, the audio of a video showing Martin W. talking to a man in a restaurant was replaced with inappropriate statements voiced by an impersonator, such that the mouth movements were not in line with the audio. The decision to create cheapfakes by altering the audio of authentic video footage was meant to mirror the cheapfakes of politicians encountered online. While even “cheaper” cheapfakes (i.e., of poorer quality) are conceivable, our goal was not to create stimuli that may elicit large effects but be a seldom occurrence in real life. Rather, we aimed to create stimuli resembling as much as possible what participants may encounter outside the research lab.

For the deepfake conditions, we prepared AI-based video manipulations of varying skill levels. We used existing photos and recordings of Martin W. to allow the AI to identify the intricacies of his face, and based on this, to create a synthesized image of him, which was then transferred to video recordings of an impersonator saying something inappropriate. The variation in the skill level was implemented using two techniques. First, we altered the time given to the AI algorithm for the creation of the deepfake: 10 minutes (basic) versus 60 minutes (moderate) versus 16 hours (advanced). Giving more time to an algorithm to learn the intricacies of somebody’s appearance translates into considerably more computing resources and more training material needed. Second, the video agency we partnered with varied the amount of manual retouching used after the AI had generated the videos: not at all (basic), minor edits such as color and light adjustments (moderate), and more considerable edits such as improving on face contours (advanced).

Participants in the basic-deepfake conditions saw a manipulated video of Martin W. in which his face looked wobbly and blurry. Those in the moderate-deepfake conditions saw a manipulated video of Martin W. in which his face looked somewhat blurry and wobbly if one took a closer look. Those in the advanced-deepfake conditions saw a manipulated video of Martin W. in which his face looked realistic and the movements natural. For the authentic-video conditions, we recorded videos of the actor playing our fictitious politician. In them, he actually uttered the statements he was accused of having said.

All this raises the question of whether we might have tried too hard to persuade participants that the actions depicted actually took place. We grant that creating fakes such as those used in this study takes time, resources, criminal effort, and some wit. How likely is it that a malicious actor would possess and invest these things in the creation of fakes? This is difficult to say. It appears that scholars and pundits alike consider this to be a real option, given the widespread concern that deepfakes could influence the outcome of elections (Chesney and Citron 2018; Diakopoulos and Johnson 2021; Ecker et al. 2024). Our aim here was to present participants with credible and sophisticated fakes, as plausibility was shown to play a major role in deception (Hameleers et al. 2024a; 2024b).

Fact-Check

The literacy-based fact-checking video lasted about two minutes and was produced with the help of a video company. Two journalists working at major fact-checking institutions in Germany approved of our concept after an initial discussion. The fact-check clarified that the last tweet seen was fabricated, thereby refuting the alleged evidence of misconduct. Using material from this study, it explained and demonstrated various tactics used to mislead people, spanning in skill level and criminal engagement from textual allegations, through cheapfakes, to deepfakes (i.e., the levels of our first experimental factor). The fact-check ended with links to tips on recognizing disinformation.

Measures

For all dependent variables except attitudes, our measures employed multi-item seven-point Likert scales with response options ranging from “strongly disagree” (1) to “strongly agree” (7). For attitudes, we used a multi-item bipolar scale, with negative characteristics on the left (1) and positive ones on the right (7). Sociodemographic characteristics (age, gender, education) were recorded using standard measures. Political ideology was assessed using a self-categorization measure.

Reputational Threat

Attitudes

We used a bipolar scale with seven items (Boomgaarden et al. 2016). Participants were asked about their “opinion about this politician in general terms,” with response options ranging from 1 (bad, negative, unsympathetic, immoral, unprincipled, unfair, arrogant) to 7 (good, positive, personable, moral, principled, fair, humble). Higher mean scores denote more positive attitudes toward the politician, M = 3.79, SD = 1.60, α = 0.95, skewness = 0.08, kurtosis = −0.82.

Voting Intentions

We measured voting intentions using five items on a seven-point Likert scale. We asked participants to imagine that the politician was running for re-election; the election would take place the following Sunday, and the participants were entitled to vote. We then asked participants to specify their agreement with five statements (e.g., “I tend toward voting for this local politician.”). Items were taken from a study by Dan and Arendt (2021). Higher scores indicate stronger voting intentions, M = 3.31, SD = 1.92, α = 0.97, skewness = 0.28, kurtosis = −1.13.

Negative Emotions

Negative emotions were recorded using a seven-point Likert scale with four items: “When I think of Martin W., I get angry,” “I am sad when I think of Martin W.,” “When I think about Martin W., I feel concerned,” and “Martin W. disgusts me” (Arceneaux and Johnson 2013). Higher scores indicate stronger negative emotions, M = 3.69, SD = 1.84, α = 0.86, skewness = 0.11, kurtosis = −1.02.
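The scale construction described above (item means as scale scores, Cronbach's α as the reliability estimate) can be illustrated with a minimal sketch. The response matrix below is hypothetical and is not data from this study; the function names are likewise assumptions made for illustration.

```python
from statistics import mean, variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(rows[0])
    items = list(zip(*rows))                              # one tuple per item
    item_var_sum = sum(variance(col) for col in items)    # sum of item variances
    total_var = variance([sum(r) for r in rows])          # variance of sum scores
    return k / (k - 1) * (1 - item_var_sum / total_var)


def scale_score(row):
    """Scale score as the mean of a respondent's item scores."""
    return mean(row)


# Hypothetical responses of five participants to a four-item, seven-point scale.
responses = [
    [2, 3, 2, 3],
    [5, 5, 6, 5],
    [4, 4, 3, 4],
    [6, 7, 6, 6],
    [1, 2, 1, 2],
]

alpha = cronbach_alpha(responses)
scores = [scale_score(r) for r in responses]
print(round(alpha, 2), scores)
```

Higher α values indicate that the items covary strongly relative to their individual variances, which is the sense in which the reported coefficients indicate internally consistent scales.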

Other Measures

Political Ideology

Participants self-categorized into groups, prompted by the question, “What is your political orientation?” Response options included “left of center/left” (n = 692, 33.2%), “middle” (n = 698, 33.5%), and “right of center/right” (n = 695, 33.3%). We used political ideology as an additional quota variable to ensure a diverse sample; without such a quota variable, (highly educated) liberals would have been overrepresented, as our experience in recruiting German participants indicated.

Procedure

We informed participants that the purpose of the study was to understand how people evaluate politicians and which criteria they use in doing so. We conveyed that they would be shown excerpts from a local politician’s Twitter feed and then asked questions. Upon providing informed consent, participants were exposed to the stimuli and answered questions recording the measures reported above. The order of the questions was randomized. Finally, participants were debriefed and compensated at the survey firm’s usual rates. The participants took about 20 minutes to complete the study, M = 21.52, SD = 8.85. For ethical reasons, another precaution was taken: Any participants exposed to fake videos who terminated their participation in the study early were contacted by the survey firm and retroactively received the fact-check video and the written debriefing (Greene et al. 2023: 147).

Ethics Statement

The ethics committee of the LMU Munich approved this study (ID: GZ20-05; dated 03/05/2021).

Results

Detrimental Effects of Deepfakes on Reputation

H1 predicted that deepfakes suggesting scandal involvement cause reputational damage, as indicated by a decrease in favorable attitudes (H1.1), a decrease in voting intentions (H1.2), and an increase in negative emotions (H1.3) toward an innocent politician. To test this, we used only data from participants who were not exposed to a fact-check (n = 1,165), including the five fake material exposure groups and the two comparison groups (control, authentic). We used one-factorial ANOVAs, with the format of the alleged evidence set as the between-subjects factor, supplemented with planned contrasts to compare the effects of each evidence format with the control and authentic groups, respectively. For descriptive statistics and exact values for the planned contrasts, see OSM Tables 6–8.
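As a minimal sketch of the analysis strategy just described, a planned contrast in a one-factorial design can be computed from the group means and the pooled within-group error term. The toy scores, group labels, and function name below are hypothetical; only the degrees-of-freedom check at the end uses figures from the text (seven groups, n = 1,165).

```python
from math import sqrt
from statistics import mean


def planned_contrast(groups, weights):
    """Contrast estimate, t value, and error df using the pooled ANOVA error term.

    groups:  dict mapping group name -> list of scores
    weights: dict mapping group name -> contrast weight (weights sum to zero)
    """
    means = {g: mean(v) for g, v in groups.items()}
    n_total = sum(len(v) for v in groups.values())
    df_error = n_total - len(groups)
    ss_within = sum((x - means[g]) ** 2 for g, v in groups.items() for x in v)
    mse = ss_within / df_error                      # pooled error variance
    estimate = sum(weights[g] * means[g] for g in groups)
    se = sqrt(mse * sum(weights[g] ** 2 / len(groups[g]) for g in groups))
    return estimate, estimate / se, df_error


# Hypothetical attitude scores (1-7) in three of the groups.
toy = {
    "control": [5, 6, 5, 6],
    "text_only": [4, 5, 5, 4],
    "deepfake": [3, 4, 3, 4],
}
# Compare the deepfake group with the control group; text_only gets weight 0.
weights = {"control": -1, "text_only": 0, "deepfake": 1}

estimate, t_value, df_error = planned_contrast(toy, weights)
print(estimate, round(t_value, 3), df_error)

# Error degrees of freedom implied by the design described in the text.
paper_df_error = 1165 - 7
print(paper_df_error)
```

The error degrees of freedom implied by seven groups and 1,165 participants agree with the denominator degrees of freedom of the F tests reported in this section.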

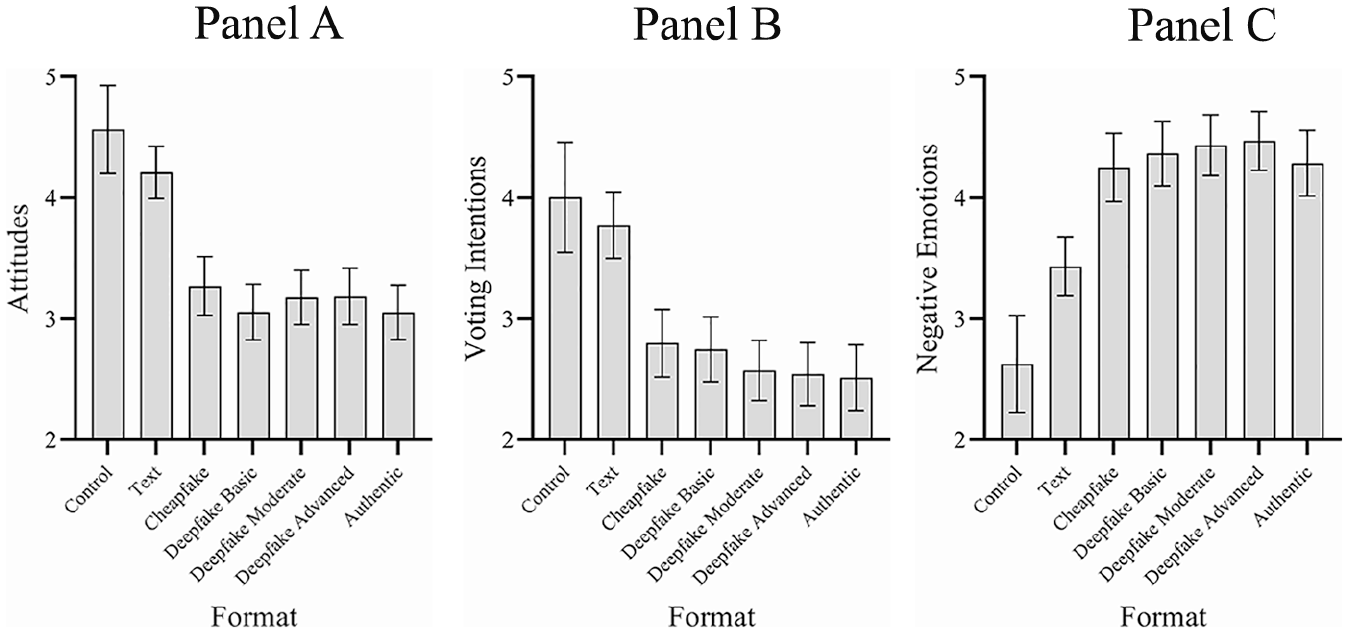

Consistent with the hypotheses, the analysis indicated significant main effects of experimental groups on attitudes toward the politician, F(6, 1158) = 18.09, p < .001, η2 = 0.09, voting intentions, F(6, 1158) = 14.24, p < .001, η2 = 0.07, and negative emotions, F(6, 1158) = 14.76, p < .001, η2 = 0.07. As shown in Figure 1, compared with participants in the control group, those in the three deepfake groups showed decreased positive attitudes (Panel A), decreased voting intentions (Panel B), and increased negative emotions (Panel C), supporting H1. The reputational damage was substantial. Means were not significantly different when compared with the authentic video, which showed a “real” misstep (see overlapping confidence intervals [CIs] in Figure 1).

Figure 1. Effects of the format of fabricated incriminating “evidence” on outcomes indicative of reputational damage. (A) Attitudes. (B) Voting Intentions. (C) Negative Emotions.
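For readers who wish to check the effect sizes reported above, η2 in a one-factorial ANOVA can be recovered from the F statistic and its degrees of freedom, since η2 = (F × df_effect) / (F × df_effect + df_error). The sketch below applies this identity to the three reported F values; the function and variable names are illustrative assumptions.

```python
def eta_squared_from_f(f_value, df_effect, df_error):
    """Eta squared implied by an F statistic in a one-factorial ANOVA."""
    return f_value * df_effect / (f_value * df_effect + df_error)


# F values as reported in the text, each with df = (6, 1158).
reported_f = {
    "attitudes": 18.09,
    "voting intentions": 14.24,
    "negative emotions": 14.76,
}

recovered = {
    outcome: round(eta_squared_from_f(f, 6, 1158), 2)
    for outcome, f in reported_f.items()
}
print(recovered)
```

Rounded to two decimals, the recovered values agree with the reported η2 of 0.09, 0.07, and 0.07.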

Persuasiveness of Deepfakes Compared With Text-Only Fakes and Cheapfakes

RQ1 asked whether the reputational damage elicited by deepfakes is stronger than that prompted by text-only fakes (RQ1.1). To address this, we compared the effects in the text-only group with those in the three deepfake groups. Planned contrasts showed that effects on all three outcomes were weaker in participants exposed to text-only allegations than in those exposed to deepfakes. This is also indicated by the nonoverlapping CIs in Figure 1: Participants in the groups exposed to a deepfake showed attitudes toward the politician that were considerably less positive (Panel A), as well as lower voting intentions (Panel B) and more negative emotions (Panel C), compared with those exposed to text-only fakes. This answers RQ1.1: The reputational damage elicited by deepfakes was stronger than that prompted by text-only fakes.

When compared with the control group, text-only fakes elicited significant reputational damage only when considering negative emotions as the outcome, M∆ = 0.80, 95% CI [1.32, 0.29], p = .002. Although descriptively moving in the predicted direction, text-only fakes did not elicit significant detrimental effects in terms of attitudes and voting intentions as outcomes (see the overlapping CIs of the control and text-only groups in Figure 1).

RQ1 also asked whether the reputational damage elicited by deepfakes is stronger than that prompted by cheapfakes (RQ1.2). Planned contrasts indicated no significant differences among the cheap- and deepfake groups. This is supported by visual inspection, as the overlapping CIs in Figure 1 indicate that no significant differences existed between deepfakes (basic, moderate, advanced) and cheapfakes, addressing RQ1.2.

When compared with the control group, cheapfakes elicited significant reputational damage in terms of attitudes, M∆ = 1.29, 95% CI [1.74, 0.84], p < .001, voting intentions, M∆ = 1.20, 95% CI [1.74, 0.67], p < .001, and negative emotions, M∆ = 1.62, 95% CI [1.11, 2.14], p < .001. This is also indicated by visual inspection (see the nonoverlapping CIs of the control and cheapfake groups in Figure 1).

Levels of Technical Sophistication in Deepfakes and the Associated Reputational Damage

RQ2 asked whether the technical quality of deepfakes matters, such that advanced deepfakes damage the reputation of the protagonist more than basic and moderate deepfakes do. As indicated by planned contrasts and the overlapping CIs in Figure 1, attitudes (Panel A), voting intentions (Panel B), and negative emotions (Panel C) of participants exposed to advanced deepfakes were not significantly different from those prompted by basic and moderate deepfakes. All video materials, fake videos as much as authentic videos, elicited a similar amount of reputational damage (see the overlapping CIs in Figure 1).

Summary

Deepfakes suggesting scandal involvement (H1), regardless of their technical sophistication (RQ2), elicited reputational damage for an innocent politician. This was visible in less favorable attitudes and lower voting intentions, as well as increased negative emotions. However, cheapfakes elicited similar reputational damage to deepfakes, and all fake videos outperformed text-only fakes (RQ1). This highlights the persuasiveness of fake videos in general.

Journalistic Fact-Checks Represent a Potent Mitigation Strategy

H2 predicted that a journalistic fact-check would mitigate the reputational damage elicited by deepfakes (H2.1) and traditional forms of disinformation (H2.2). Here, we analyzed only the data of participants exposed to fake incriminating evidence (n = 1,841); half received a fact-check, and half did not. The main hypothesis tested the 5 × 2 factorial design using two-way ANOVAs, with the format of the alleged fake evidence and fact-check exposure set as the between-subjects factors. Given that this design only includes fake content groups, a significant main effect of fact-check exposure would indicate that this exposure elicited a significant effect. A significant interaction would indicate that the effect of fact-check exposure depended on the specific fake format to which participants were exposed. Again, we used the two standalone comparison groups (control, authentic) to interpret effect patterns by relying on independent sample t-tests and Cohen’s d to estimate effect sizes.
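The degrees of freedom implied by this 5 × 2 between-subjects design can be worked out as a short sanity check. The sketch below assumes a standard full-factorial two-way ANOVA with both main effects and the interaction in the model; the function name is hypothetical.

```python
def two_way_df(levels_a, levels_b, n_total):
    """Degrees of freedom in a full-factorial two-way between-subjects ANOVA."""
    return {
        "factor_a": levels_a - 1,
        "factor_b": levels_b - 1,
        "interaction": (levels_a - 1) * (levels_b - 1),
        "error": n_total - levels_a * levels_b,   # N minus number of cells
    }


# Five fake formats x two fact-check conditions, n = 1,841 (figures from the text).
df = two_way_df(levels_a=5, levels_b=2, n_total=1841)
print(df)
```

Under these assumptions, the fact-check main effect is tested on 1 and 1,831 degrees of freedom and the interaction on 4 and 1,831, which is consistent with the F tests reported in this section.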

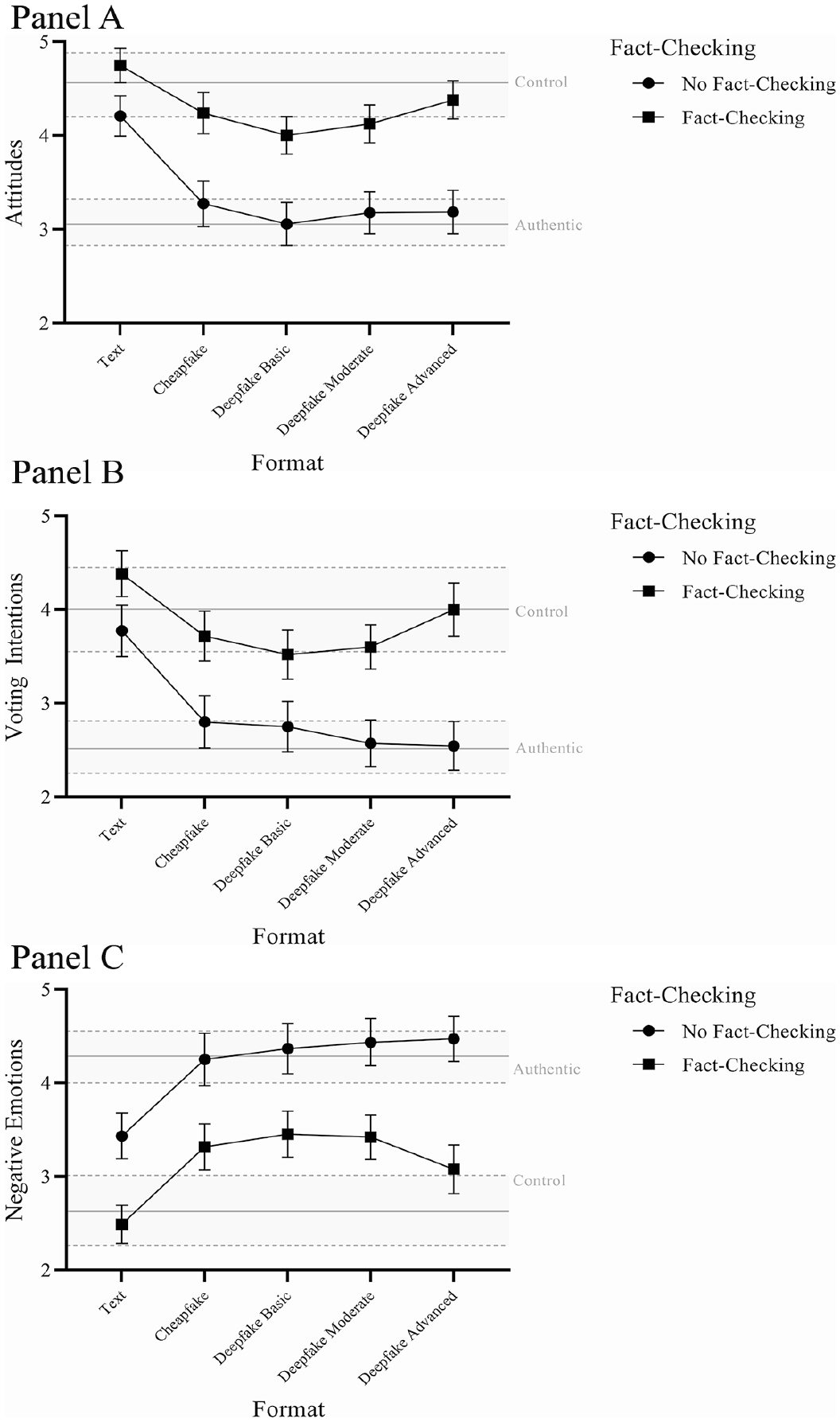

The fact-check had a significant main effect on attitudes toward the politician, F(1, 1831) = 175.68, p < .001, η2 = 0.09, voting intentions, F(1, 1831) = 128.94, p < .001, η2 = 0.07, and negative emotions, F(1, 1831) = 169.31, p < .001, η2 = 0.08. Specifically, it helped mitigate the noxious effects of fake accusations. Figure 2 illustrates these findings.

Figure 2. Effects of fact-checking on mitigating outcomes indicative of reputational damage. (A) Attitudes. (B) Voting Intentions. (C) Negative Emotions.

For orientation, we insert the values obtained for the two standalone comparison groups—control and authentic—in the figure, along with their bootstrapped 95% CIs. Thus, the gray areas in Figure 2 illustrate the effects under scrutiny in the baseline groups, allowing us to assess the effects of fact-checking relative to control (no reputational threat) and authentic (legitimate, “real” reputational threat). Regarding the latter, t-tests confirmed that differences between the two groups were statistically significant; in the control group, attitudes and voting intentions were higher and negative emotions were lower compared with the authentic group. Cohen’s d was estimated at values above 0.80, indicative of a large effect: attitudes, t(242) = 6.76, p < .001, d = 0.99; voting intentions, t(242) = 5.47, p < .001, d = 0.81; negative emotions, t(242) = −6.24, p < .001, d = −0.92.
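As an illustration of how such effect sizes are obtained, Cohen's d for two independent groups divides the mean difference by the pooled standard deviation, and it relates to the independent-samples t statistic through t = d / sqrt(1/n1 + 1/n2). The sketch below uses hypothetical scores, not data from this study, and the function names are assumptions.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(a, b):
    """Cohen's d for two independent samples, using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var)


def t_from_d(d, na, nb):
    """Independent-samples t statistic implied by d and the two group sizes."""
    return d / sqrt(1 / na + 1 / nb)


# Hypothetical attitude scores (1-7) in a control and an authentic-video group.
control = [5, 4, 6, 3, 5, 4]
authentic = [3, 4, 2, 5, 3, 4]

d = cohens_d(control, authentic)
t = t_from_d(d, len(control), len(authentic))
print(round(d, 3), round(t, 3))
```

By the conventional benchmark used in the text, absolute values of d above 0.80 are read as large effects.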

As shown in Figure 2, the fact-check brought attitudes (Panel A) and voting intentions (Panel B) back up, mostly reaching the values of the control group (notice the overlapping CIs); thus, it largely eliminated the detrimental effects of the reputational threat. However, for negative emotions (Panel C), the fact-check only reduced the detrimental effects of text-based allegations and those of the advanced deepfake (as these groups’ CIs now overlap with those of the control group). Participants who did not receive a fact-check generally reported attitudes (Panel A), voting intentions (Panel B), and negative emotions (Panel C) that were not significantly different from those obtained for the groups that saw the authentic incriminating evidence (see overlapping CIs). The only exception to this trend was found in the text-based allegations, whose effect on negative emotions was limited to begin with. Detailed descriptive statistics are reported in OSM Table 9.

As an additional analysis, we tested whether the evidence format of the allegations interacted with the existence of a fact-check in influencing the outcomes of interest. We found a small but significant interaction effect for voting intentions, F(4, 1831) = 2.89, p < .05, η2 = 0.006, yet not for attitudes toward the politician, F(4, 1831) = 2.32, p = .05, or negative emotions, F(4, 1831) = 1.27, p = .279. The small interaction effect indicated that the effect was different between those with and those without fact-checking exposure among the five fake format groups: The difference appeared to be smaller (but still significant; see nonoverlapping CIs) in those reading the text-based fake content, possibly because the text-based fake content could not elicit large reputational damage to begin with. The difference was relatively large in those who viewed the advanced deepfakes. However, we refrain from overinterpreting this effect, because the general pattern was similar across the three outcomes: Exposure to fact-checking decreased and often even eliminated the detrimental effects elicited by the fake content.

Taken together, the evidence is consistent with the idea that fact-checks can reduce (and sometimes eliminate) the reputational damage elicited by deepfakes (H2.1) and traditional forms of disinformation—that is, text-based fakes and cheapfakes (H2.2). Journalistic fact-checking emerged as a potent mitigation strategy.

Discussion

The impetus for this study was the heated debate over the potential negative effects of deepfakes on democracy. Because deepfakes are created using AI, which can generate real-looking audiovisual forgeries, it is often assumed that their realism will translate into a capacity to deceive audiences exceeding that of earlier forms of disinformation, such as text-based fakes and cheapfakes (Ahmed 2023; Chesney and Citron 2018; Jacobsen and Simpson 2024; McCosker 2024). If such forgeries are mistaken for accurate representations, citizens’ thoughts, feelings, and behaviors could be altered; this could change the outcomes of elections and thus poses a democratic threat.

The present study provided evidence consistent with the idea that fake videos suggesting involvement in a sex, corruption, or prejudice scandal, but not text-only fakes, elicited substantial reputational damage for an innocent politician, regardless of whether the underlying technique was “cheap” or “deep.” However, exposure to a journalistic fact-check substantially reduced the detrimental effects, showing that fact-checking is a successful mitigation strategy. These two major findings have important implications for our theoretical understanding of this modern form of disinformation and how to counteract it.

Detrimental Effects Elicited by Deepfakes and Cheapfakes

Earlier writings have acknowledged the importance of investigating deepfakes’ impact on outcomes relevant to democracy. They have speculated that, with their use of AI, deepfakes are more persuasive and harder to debunk compared with earlier forms of disinformation. However, a systematic comparison of deepfakes with traditional forms, asking whether their effects would be different or similar, was missing from the literature. Such a juxtaposition would allow us to put deepfakes’ effects into context, enabling a thorough theoretical understanding of the effects of deepfakes and an evidence-based verdict on whether their characterization as a game changer is appropriate.

We found that fake videos suggesting scandal involvement, but not text-only fakes, elicited substantial reputational damage for an innocent politician, regardless of whether the underlying technique was “cheap” or “deep.” Thus, consistent with prevalent concerns, deepfakes elicited a substantial negative impact. However, cheapfakes provoked a similar effect. Consequently, the differential treatment of deepfakes (vs. cheapfakes) in public discourse seems unwarranted. It appears that the concept of visual primacy, as theorized above, is fundamental to understanding the strength of the negative effects. Meanwhile, text-only fake material did not elicit a comparable effect, and deepfakes’ technical sophistication did not influence the size of the detrimental effect. The latter finding is consistent with our result that deepfakes and cheapfakes are similarly damaging. It seems that the visual mode mattered most: A detrimental effect exceeding that of text fakes was observed even for unsophisticated fake videos (cheapfake, basic deepfake).

Our evidence contributes to visual research in political communication, demonstrating the persuasiveness of videos. On a basic level, the finding that fake videos were as damaging as authentic videos, and much more so than text-only fakes, corroborates concerns related to visual disinformation (Ecker et al. 2024). This finding can be attributed to the fact that fake videos share many of the properties of authentic videos, seeming to record reality.

The lack of significant differences between the various forms of fake videos (i.e., cheapfakes, deepfakes) and sophistication levels of deepfakes (i.e., basic, moderate, advanced) suggests that forgeries do not need to be AI-enabled to cause misperceptions; simple tricks suffice (i.e., using authentic footage but swapping the audio; see Hameleers 2024). While it initially seemed reasonable to assume that the various formats would entail a varying number of clues to the fabricated nature of the material (e.g., more clues in cheapfakes than in advanced deepfakes)—often giving reasons to doubt the authenticity of fake videos—this was not the case. This finding can help substantiate discussions about deepfakes, which are routinely described in apocalyptic terms despite limited evidence for such descriptions.

Our study makes a theoretical contribution, clarifying that modality drives persuasion. We conclude that concerns over the negative impact of deepfakes are appropriate (Ahmed 2023; Chesney and Citron 2018; Dan et al. 2021; Jacobsen and Simpson 2024; McCosker 2024) but that it is equally fitting to worry about the effect of cheapfakes. As such, more scholarly attention to visual disinformation seems warranted.

Journalistic Fact-Checking as a Potent Mitigation Strategy

Knowledge of successful mitigation strategies for disinformation contributes to a more thorough theoretical understanding of detrimental effects. We found that exposure to a literacy-based fact-check in video format substantially reduced, and often eliminated, detrimental effects in terms of reputational damage elicited by fake content. This differs from what work on belief echoes (Thorson 2016) and the continued influence of misinformation (Walter and Tukachinsky 2020) would have suggested, as the politician featured was no longer sanctioned, and measures were largely brought back to baseline.

This suggests that the democratic threat posed by fake videos is real, but journalism can help shield democracy from it. Debunking fake videos is not the hard sell it is assumed to be; this finding informs media literacy efforts and is consistent with findings from other domains showing that fact-checks can set the record straight (Walter et al. 2020). Reassuringly, fact-checks appear to be able to debunk deepfakes and cheapfakes in similar ways. Democracies rely on informed citizens who can agree on basic facts (Galston 2001), and such citizens will likely benefit from systematic fact-checking.

At this point, it seems fitting to reiterate a concern voiced in earlier writings (Diakopoulos and Johnson 2021): Internalizing the notion that content is easily fabricated may prompt citizens to challenge all media content. Carried to excess, such skepticism could prove useful to dishonest actors wishing to deny the occurrence of real events and discount the veracity of authentic videos, granting them a “liar’s dividend” (Chesney and Citron 2018: 1758). “Liar’s dividend” refers to the various benefits that individuals, particularly politicians, can reap by exploiting an environment rife with misinformation and uncertainty. This allows them to deny wrongdoing convincingly and may allow them to evade accountability. While also known from other contexts, the liar’s dividend is an often-cited yet not empirically shown effect of deepfakes; it is up to future studies to determine whether it truly exists in this context.

Ethical Considerations

This study was designed to test the most-feared scenario for the use of deepfakes in politics: that fake videos staging a political scandal might be distributed right before an election, affect voting behavior, and potentially put the “wrong” politician in office. This meant that experimental stimuli had to put a politician in severe scandalous situations. As explained above, we used recent real-life verbal derailments as templates (see “Post With Reputational Threat”). This goes beyond what other studies have done, such that a different ethical approach was necessary. We deliberated extensively on how to balance risk and reward, and considered unintended consequences. Primarily, we considered the risk of “influencing [participants’] voting choices,” the risk that the “highly-shareable” stimuli might circulate outside the study context, and the risk of elevating distrust in science (Greene et al. 2023: 139–141). We arrived at the conclusion that using a fictitious politician would be best—an approach described as “the most obvious way to circumvent ethical issues with misinformation exposure” (Greene et al. 2023: 140).

The alternative, using real politicians in our stimuli but focusing on “topics with low risk of harm rather than high-risk topics” (Greene et al. 2023: 140), would have rendered the study unable to test the scenario that inspired it, which does imply severe allegations able to render a politician unelectable. Furthermore, putting severe statements into the mouth of a real politician means risking forfeiting some of the plausibility of stimuli. This was something we wanted to prevent, especially considering research findings that the further away from reality the content of a deepfake is, the more likely people are to recognize it as fake (Hameleers et al. 2024a, 2024b). Finally, unlike our actor, who consented to the creation of deepfakes of him, an actual politician would likely not have, at least not with allegations as severe as those studied here. Manipulating videos to create the impression that real politicians said something offensive, illegal, or immoral could harm their careers and might also represent a false light invasion of privacy, protected by law. Thus, using a real politician seemed ill-advised.

Furthermore, the use of a fictitious politician allowed us to have two baselines for comparison in this study: a neutral control and an authentic video. The latter baseline is crucial for our theory-building efforts and helped us ensure internal validity in a way that would not have been possible when using a real politician: Fake videos should arguably be considered dangerous when they are as harmful as real videos with the same content.

However, observing these ethical concerns meant making some sacrifices regarding external validity. For the first study attempting to isolate the effects of deepfake technology versus other forms of visual and textual disinformation, and authentic videos with the same content, we considered this acceptable. The controlled design allowed us to determine cause and effect. Future research could conduct field experiments instead.

This also meant that the fact-check used was fictitious in the sense that it did not attempt to debunk disinformation concerning real politicians. While this does mean that our findings regarding the success of fact-checks must be interpreted with caution, especially in comparison with findings on the effects of fact-checks on disinformation actually circulating online, we attempted to approximate real fact-checks as much as possible.

Limitations

This study has important limitations. First, our use of an artificial Twitter (now X) feed as a stimulus may have limited external validity. However, meta-analytical evidence indicates an absence of differences between real and artificial contexts, at least when it comes to correcting misperceptions (Walter and Tukachinsky 2020), as attempted in the second part of our study.

Relatedly, due to the nature of our stimuli, our findings may not be generalizable to real-life settings. Indeed, to prevent harming the reputation of real politicians, our stimuli featured a fictitious politician. While we grant that our fake videos show fake versions not of a real politician, but rather of a fictitious politician and that “Martin W.” did not have any “cultural baggage,” we did make a special effort to create stimuli as realistic as possible. We introduced the fictitious politician to respondents in some depth, ensuring that a story was created around him. Also, the fifteen introductory posts shown to study participants were intended to simulate reputation building as much as possible when using a fictitious politician, and to familiarize participants with the appearance and speech of the political actor. (See these stimuli in the folder “1—Twitter posts without reputational threat” under “Stimuli” in the OSF repository, at https://osf.io/zhwm8/?view_only=8e3ec3ce9eb54ff8a9d344ef31c3e9d3). Participants’ positive response to the politician in the control group (Figure 1) suggests that this attempt was successful. Nonetheless, we grant that building and then deteriorating reputations with claims of scandal involvement is likely to be a more volatile process when it concerns a local politician unknown to participants than when making up one’s mind concerning an actual politician.

While we focused on key outcomes from a politician’s perspective, reputational damage can be measured in other ways. For instance, future studies could distinguish between skill-specific reputation (i.e., the ability to perform the job, affected by a financial scandal) and character reputation (i.e., affected by a sex scandal). Future work, especially that using a longitudinal design, could also explore the societal effects of exposure to fake videos and associated corrections. Of these, a potential increase in across-the-board disenchantment with politicians (e.g., perceived as dishonest or having poor character), increased cynicism, or decreased political efficacy seem most relevant.

Another source of concern might be our decision to use stimuli of reasonable quality throughout (i.e., in the cheapfake, basic, and moderate deepfake conditions). Yet our goal was to create stimuli imitating political fake videos encountered outside the research lab. While the lack of differences among the video conditions may be attributable to this decision (as we had not provided participants with many clues to detect the differential quality of each condition), we consider this approach meaningful. Using stimuli of lesser quality may well have translated into an ability to expose larger effects, but the meaningfulness of such an endeavor seemed questionable in the absence of a real-life equivalent of such fake videos. Relatedly, this study did not explore why videos were more convincing than text. Future work could ascertain whether this is due to videos’ elevated ability to command and hold audiences’ attention, to sear into memory, to provide a sense of being there, or to boost the credibility of the false claim, or due to their being easier to process or processed more superficially. As for effect moderators, we propose individual differences in media literacy, need for cognition, analytic thinking, involvement, and political interest.

We studied short-term effects and are not able to make claims about the duration of observed effects or whether they fade with time. It is even possible that the credibility of (debunked) fake videos, and thus, the harm they pose, may increase rather than decrease over time, as the sleeper effect suggests (Eagly and Chaiken 1993).

Because we used a video fact-check as a stimulus, we cannot know if other formats (e.g., text only) can elicit similar beneficial effects. While existing research suggests the superiority of video over text fact-checks (Dan and Coleman 2024; Young et al. 2018), designing the former requires more resources. The following question then emerges: Are lower-priced alternatives, such as text fact-checks, also able to debunk fake videos? Relatedly, future studies could investigate the mechanism through which video fact-checks exert their power when debunking fake videos. This may be due to the fact that video material discredits the video that persuaded the participants, essentially fighting one modality of disinformation with that same modality in the fact-check. Alternatively, the video fact-check may have worked by increasing audiences’ awareness of the presence of deepfakes or by clarifying the importance of media literacy. We also acknowledge that our findings may encourage readers to overstate the mitigating effects of fact-checks because all our participants were exposed to one, something that is unlikely in real life. Future studies could use selective-exposure designs to arrive at a more accurate estimate of the power of fact-checking. Critics might also point out that our decision to include visuals demonstrating how we created the fakes used in the study departs from what a traditional fact-check looks like. While including “making of” footage might not always be possible, numerous fact-checks encountered online employ this strategy (e.g., The Washington Post 2019). Nonetheless, future research is advised to use a demonstration of how fakes other than those used as stimuli were created. Finally, our decision to use a fictitious politician reduces our ability to generalize based on the way our participants responded to the fact-check. While the correction worked, our participants’ lack of real-life knowledge about the politician and his ideology may be a source of concern. Indeed, our ability to replace old information (i.e., the reputational threat) with new information (i.e., the correction in the fact-check) could merely be a recency effect. Future studies should test the ability of fact-checks to debunk fake videos of actual politicians.

Conclusion

This study spoke to how the proliferation of fake videos is beginning to complicate the provision of accurate information for the public and the lives of those aiming to safeguard public discourse from undue influences. It illustrated the elevated persuasiveness of the video format compared with text and identified modality as the driver of effects. Concerns over the negative impact of deepfakes are appropriate, but it is equally fitting to worry about cheapfakes. Visual disinformation needs special scholarly attention, whether the underlying technique is “cheap” or “deep.” This study advances our understanding by suggesting that, while deepfakes (and cheapfakes) pose a democratic threat, fact-checking can help mitigate this threat. This paints a somewhat different and more nuanced picture than previously possible.

Supplemental Material

Supplemental material, sj-pdf-1-hij-10.1177_19401612251317766 for Deepfakes as a Democratic Threat: Experimental Evidence Shows Noxious Effects That Are Reducible Through Journalistic Fact Checks by Viorela Dan in The International Journal of Press/Politics

Footnotes

Acknowledgements

Thorough feedback provided by Cristian Vaccari, Briony Swire-Thompson, Dannagal G. Young, and Michelle A. Amazeen has significantly improved the research reported here. This research was conducted while the author was a researcher in residence at the Center for Advanced Studies of the LMU Munich (CASLMU).

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The author gratefully acknowledges the generous financial support provided by the LMU Munich through the LMU Excellent Program in the Young Talent Fund, by CASLMU, and the Munich University Society.
