Abstract

Background:

Previous studies have identified useful endoscopic ultrasonography (EUS) features to predict the malignant potential of gastrointestinal stromal tumors (GISTs). However, the results of the studies were not consistent. Artificial intelligence (AI) has shown promising results in medicine.

Objectives:

We aimed to build a risk stratification EUS-AI model to predict the malignancy potential of GISTs.

Design:

This was a retrospective study with external validation.

Methods:

We developed two models using EUS images from two hospitals to predict the GIST risk category. Model 1 was the four-category risk EUS-AI model, and Model 2 was the two-category risk EUS-AI model. The diagnostic performance of the models was validated with external cohorts.

Results:

A total of 1320 images (880 were very low-risk, 269 were low-risk, 68 were intermediate-risk, and 103 were high-risk) were finally chosen for building the models and test sets, and a total of 656 images (211 were very low-risk, 266 were low-risk, 88 were intermediate-risk, and 91 were high-risk) were chosen for external validation. The overall accuracy, sensitivity, specificity, positive predictive value (PPV), and negative predictive value (NPV) for the four-category risk EUS-AI model in the external validation sets by tumor were 74.50%, 55.00%, 79.05%, 53.49%, and 81.63%, respectively. The accuracy, sensitivity, specificity, PPV, and NPV for the two-category risk EUS-AI model for the prediction of very low-risk GISTs in the external validation sets by tumor were 86.25%, 94.44%, 79.55%, 79.07%, and 94.59%, respectively.

Conclusion:

We developed a EUS-AI model for the risk stratification of GISTs with promising results, which may complement current clinical practice in the management of GISTs.

Registration:

The study has been registered in the Chinese Clinical Trial Registry (No. ChiCTR2100051191).

Introduction

Subepithelial lesions (SELs) are most frequently found in the stomach, and most gastric SELs are gastrointestinal stromal tumors (GISTs). 1 GISTs have varying degrees of malignancy potential. For example, micro-GISTs (<10 mm) have almost no malignancy potential, whereas large GISTs and GISTs with a high mitotic count have a high metastatic probability and recurrence rate. 2 Numerous systems have been proposed for the risk stratification of GISTs. The most widely used systems are the National Institutes of Health (NIH) criteria, modified NIH criteria, and Armed Forces Institute of Pathology (AFIP) criteria.3–6 The variables included in these systems are mainly tumor size, mitotic index, and tumor location.

The mitotic index is obtained from histopathologic sections of the resected tumor or from endoscopic ultrasonography-guided fine needle aspiration or biopsy (EUS-FNA/B). If we are able to predict the malignancy potential of a suspected GIST, it could aid clinical decision-making, especially for small GISTs. Previous studies have attempted to identify useful EUS features to predict the malignant potential of GISTs and found that large size, cystic change, surface ulceration, extraluminal border, depth, heterogeneity, irregular borders, and a nonoval shape were potential predictors of malignancy.7–11 However, these predictors were not consistent across studies; moreover, except for tumor size, the assessment of these predictors was somewhat subjective. Artificial intelligence (AI) via deep convolutional neural networks (DCNNs) has shown promising results in medicine.12–14 Seven et al. 15 built a deep learning algorithm using EUS images to predict the malignancy potential of gastric GISTs with good accuracy. Their study used the AFIP criteria as the reference, had a relatively small sample size, and lacked external validation. A previous study indicated that the modified NIH criteria were the best criteria to identify a single high-risk group for consideration of adjuvant therapy. 2 Hence, we aimed to build a risk stratification EUS-AI model to predict the malignancy potential of GISTs in reference to the modified NIH criteria with a larger sample size and external validation.

Methods

Clinical data and EUS image collection

The EUS images used to build the risk stratification EUS-AI model and used for external validation were from our previous study, in which we successfully built a EUS-AI model to differentiate GISTs and non-GISTs with good accuracy. 16 EUS images obtained from The Sixth Affiliated Hospital, Sun Yat-sen University, and Guangdong Second Provincial Central Hospital were consecutively collected to build the model. For external validation, we collected EUS images from four other hospitals (Fudan University Shanghai Cancer Center, the Fourth Hospital of Hebei Medical University, Zhoushan Hospital of Zhejiang Province, and Yangjiang Hospital of Traditional Chinese Medicine). The inclusion criteria for the collected images were as follows: good-quality EUS images showing the tumors; confirmed histopathology of gastric GISTs (obtained by endoscopic resection, surgery, or FNA); and an obtainable modified NIH criteria risk category. The exclusion criteria were poor-quality images and repeated images. The following information about the patients was also collected: age, sex, EUS results, and histopathology results. The collected images were categorized based on the modified NIH criteria risk category.

EUS procedures

EUS was performed by endosonographers who each had experience with more than 500 cases of SELs. The echoendoscopes that were used in the models and the external validation sets are shown in Supplemental Tables S1 and S2. The utilized frequency was 5–20 MHz.

Image processing and development of the deep learning models

The image processing, augmentation, and development of the deep learning models were supported by the Tianjin Jinyu Artificial Intelligence Medical Technology Co., Ltd. The image processing was the same as in our previous study. After selecting the qualified images, two experts in EUS marked the border of the tumor with LabelMe software (an open polygonal annotation tool developed by the Massachusetts Institute of Technology, Computer Science and Artificial Intelligence Laboratory), and the marked tumors were regarded as the regions of interest (ROIs). Afterward, the engineers cropped the images to squares or rectangles that precisely fit the ROI. For images with measuring lines or measuring marks, Adobe Photoshop (Version 13.0; Adobe Systems Software Ireland Ltd., Dublin, Ireland) was used to erase the lines or marks while preserving the original appearance of the images as much as possible. Image augmentation techniques, such as mirror flip, horizontal flip, and rotation of the EUS images by certain angles, were applied. The preprocessed images were then converted to three-channel RGB format for use as the model input.
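The cropping and augmentation steps described above can be illustrated with a minimal sketch. It is not the authors' pipeline: the list-of-lists grayscale image, the function names, and the 90-degree rotation angle are all assumptions made for illustration, since the source does not specify the rotation angles or the image representation.

```python
from typing import List, Tuple

Image = List[List[int]]   # rows of grayscale pixel values (hypothetical representation)
Point = Tuple[int, int]   # (x, y) vertex of the annotated tumor outline


def crop_to_roi(image: Image, polygon: List[Point]) -> Image:
    """Crop the image to the axis-aligned bounding box of the ROI polygon."""
    xs = [x for x, _ in polygon]
    ys = [y for _, y in polygon]
    # Clamp the box to the image so a vertex on the edge cannot overrun it.
    x0, x1 = max(min(xs), 0), min(max(xs), len(image[0]) - 1)
    y0, y1 = max(min(ys), 0), min(max(ys), len(image) - 1)
    return [row[x0:x1 + 1] for row in image[y0:y1 + 1]]


def horizontal_flip(image: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def rotate_90(image: Image) -> Image:
    """Rotate the image clockwise by 90 degrees (angle chosen for illustration)."""
    return [list(column) for column in zip(*image[::-1])]


def to_rgb(image: Image) -> List[List[Tuple[int, int, int]]]:
    """Replicate the gray value into three channels to form an RGB input."""
    return [[(p, p, p) for p in row] for row in image]


# Toy 4 x 5 image and a triangular outline standing in for a LabelMe polygon.
image = [[r * 10 + c for c in range(5)] for r in range(4)]
polygon = [(1, 1), (3, 1), (2, 2)]
roi = crop_to_roi(image, polygon)
augmented = [roi, horizontal_flip(roi), rotate_90(roi)]
model_inputs = [to_rgb(a) for a in augmented]
```

In practice an imaging library would do this work on arrays; the pure-Python form is used here only so the sketch runs without dependencies.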

We developed two models in this study. Model 1 was a four-category risk EUS-AI model, and Model 2 was a two-category risk EUS-AI model. In Model 1, we classified the images into four categories based on the modified NIH criteria: very low-, low-, intermediate-, and high-risk. In Model 2, we classified the images into two categories: very low- and non-very low-risk (the latter included low-, intermediate-, and high-risk categories). The process was mainly implemented by Python (Version 3.7; Python Software Foundation, Wilmington, DE, USA) and PyTorch (Version 1.7.1; Facebook artificial intelligence research institute, Menlo Park, CA, USA). The chosen images were randomly divided into training sets and test sets at a ratio of 7.5:2.5, and 10-fold cross-validation was used.
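The relationship between the two label schemes and the 7.5:2.5 split can be sketched as follows. The function names and the random seed are hypothetical; the image counts are those reported in the Results. How the 10-fold cross-validation was combined with this split is not described in the source, so it is not shown.

```python
import random
from typing import List, Sequence, Tuple

FOUR_CATEGORIES = ["very low", "low", "intermediate", "high"]


def to_two_category(label: str) -> str:
    """Collapse a four-category label into the Model 2 label scheme."""
    if label not in FOUR_CATEGORIES:
        raise ValueError(f"unknown risk category: {label}")
    return "very low" if label == "very low" else "non-very low"


def split_train_test(items: Sequence, test_fraction: float = 0.25,
                     seed: int = 0) -> Tuple[List, List]:
    """Randomly split items into training and test sets (seed is an assumption)."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


# Image counts per category as reported for the model-building dataset.
counts = {"very low": 880, "low": 269, "intermediate": 68, "high": 103}
labels = [name for name, n in counts.items() for _ in range(n)]

train, test = split_train_test(labels)
two_category = [to_two_category(label) for label in labels]
```

With 1320 images, a 25% test fraction gives 990 training and 330 test images, which matches the split sizes reported in the Results.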

We used the DCNN classifier known as ResNeSt-50 to train Model 1 (Amazon and University of California, Davis, Davis, CA, USA). ResNeSt was developed by Zhang et al., 17 and it showed a superior accuracy and latency trade-off on image classification when compared with other backbones. 17 The Adam optimizer was used, which is a popular optimizer that combines the ideas of AdaGrad and RMSProp. 18 The initial learning rate was set to 0.003, and cosine annealing was adopted as the learning rate decay method. This method decays the learning rate along a cosine curve within each period and resets it to the maximum value at the start of the next period. 19 Training was stopped after 300 epochs, at which point the learning rate was 3e−7.
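A cosine-annealed learning rate can be written as a short function. The sketch below shows a single annealing period and assumes that the reported final learning rate (3e−7) is the minimum of the schedule and that the period spans all 300 epochs; the source mentions periodic resets but does not give the period length, so restarts are not modeled here.

```python
import math


def cosine_annealed_lr(epoch: int, total_epochs: int = 300,
                       lr_max: float = 0.003, lr_min: float = 3e-7) -> float:
    """Learning rate at a given epoch under single-period cosine annealing.

    lr_max is the initial rate from the text; treating 3e-7 as lr_min and
    300 epochs as one period are assumptions made for illustration.
    """
    cosine_term = 1 + math.cos(math.pi * epoch / total_epochs)
    return lr_min + 0.5 * (lr_max - lr_min) * cosine_term


schedule = [cosine_annealed_lr(epoch) for epoch in range(301)]
```

The rate starts at 0.003, passes the midpoint between the two bounds at epoch 150, and reaches 3e−7 at epoch 300. For Model 2 the same function would be called with `total_epochs=500` and `lr_min=9e-8`, under the same assumptions.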

As Model 1 addressed a four-category (multicategory) task, a deeper model was needed to learn the characteristics. Model 2 was a two-category model, for which ResNeSt-34 was sufficient. The Adam optimizer was again used. The initial learning rate was set to 0.003, and cosine annealing was adopted as the learning rate decay method. Training was stopped after 500 epochs, at which point the learning rate was 9e−8.

The output of each model was the predicted probability (ranging from 0 to 100%) of each risk category for the GIST. The category with the highest probability was interpreted as the final risk category.
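Selecting the final category from the model output can be sketched as below. The source does not state how raw network outputs were converted to probabilities; a softmax is assumed here, and the logit values are invented for illustration.

```python
import math
from typing import Dict, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw scores to probabilities (softmax is an assumption here)."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_category(logits: Sequence[float],
                     categories: Sequence[str]) -> Tuple[str, Dict[str, float]]:
    """Return the most probable category and the per-category percentages."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    percentages = {c: 100 * p for c, p in zip(categories, probs)}
    return categories[best], percentages


categories = ["very low", "low", "intermediate", "high"]
hypothetical_logits = [2.0, 0.5, -1.0, 0.1]  # invented values
predicted, percentages = predict_category(hypothetical_logits, categories)
```

For Model 2 the same function would be called with two categories, very low and non-very low.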

Statistical analysis

Data are presented as the mean (standard deviation, SD) for normally distributed continuous variables and as the median (range) for nonnormally distributed continuous variables. Categorical variables are expressed as numbers (percentages). A receiver operating characteristic (ROC) curve was plotted, and the area under the curve (AUC) was calculated. The sensitivity, specificity, positive predictive value (PPV), negative predictive value (NPV), and accuracy of the risk category of the EUS-AI model, as well as the respective 95% confidence intervals (CIs), were calculated. These calculations were performed using the Scikit-learn package in Python. The reporting of this study conforms to the Standards for Reporting Diagnostic Accuracy (STARD) 2015 statement. 20 The study has been registered in the Chinese Clinical Trial Registry (No. ChiCTR2100051191).
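The five diagnostic measures follow directly from the counts of true and false positives and negatives, as the sketch below shows. The counts in the example are back-calculated from the percentages reported in the Results for the two-category model in the external validation sets by tumor (80 tumors, 36 of them very low-risk); they are an inference, not figures stated by the authors. The source does not name the confidence interval method, so the Wilson score interval used here is an assumption.

```python
import math
from typing import Dict, Tuple


def diagnostic_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Accuracy, sensitivity, specificity, PPV, and NPV from a 2 x 2 table."""
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """95% Wilson score interval for a proportion (method assumed, not from the source)."""
    p = successes / n
    denominator = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denominator
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denominator
    return centre - half_width, centre + half_width


# Back-calculated counts; "positive" means very low-risk.
metrics = diagnostic_metrics(tp=34, fp=9, fn=2, tn=35)
sensitivity_ci = wilson_interval(successes=34, n=36)
```

With these counts the function returns 86.25%, 94.44%, 79.55%, 79.07%, and 94.59% after rounding, which agrees with the reported values for that analysis.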

Results

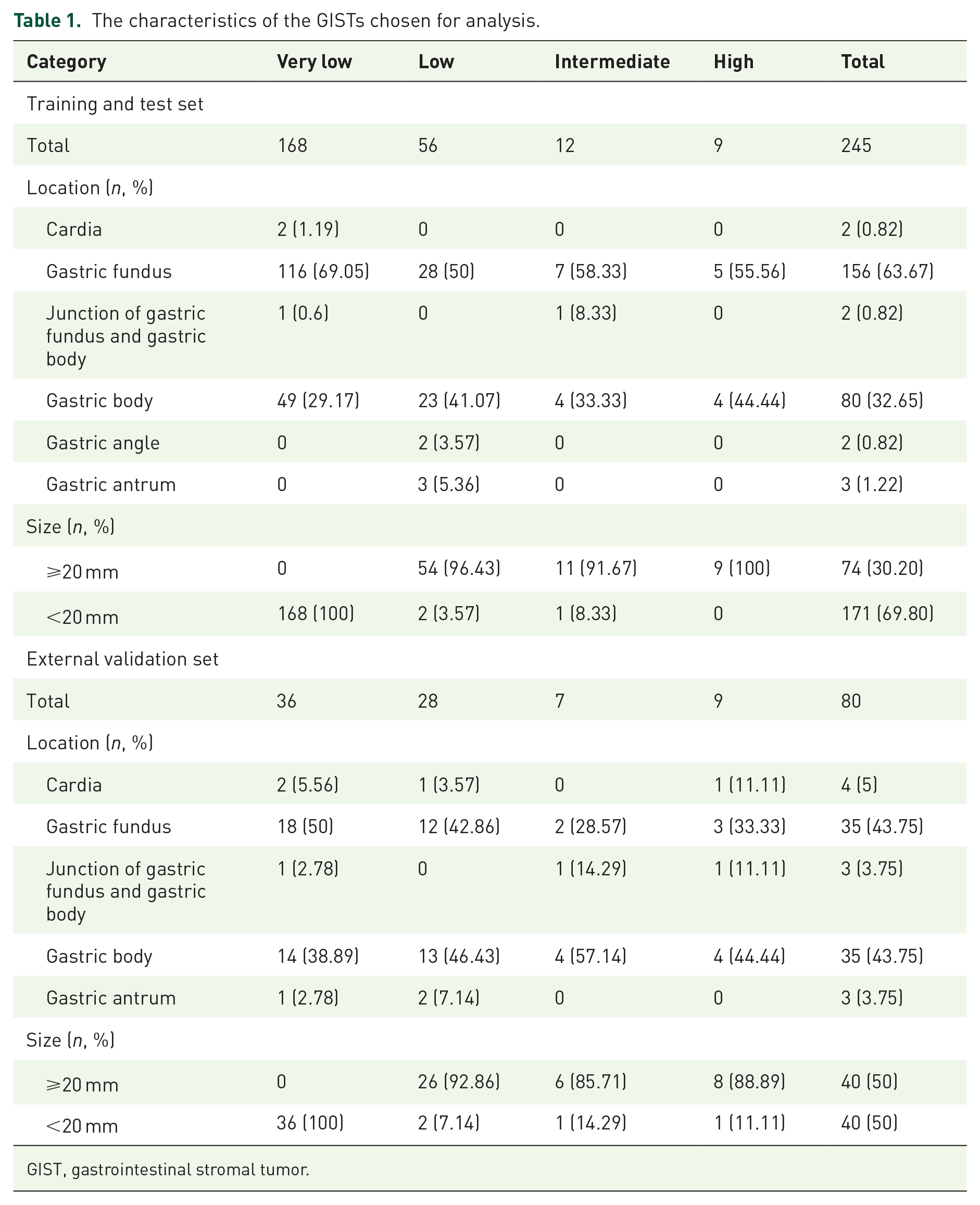

Basic information about the training sets and the test sets

A total of 1320 images (the number of images for very low-risk, low-risk, intermediate-risk, and high-risk was 880, 269, 68, and 103, respectively) from 243 patients (245 GIST cases, of which 168 were very low-risk, 56 were low-risk, 12 were intermediate-risk, and 9 were high-risk) were chosen for analysis. The median age of the patients was 56 (29–81) years, and 104 patients (42.80%) were male. A total of 990 images were randomly divided into the training sets, and 330 images were randomly divided into the test sets. The characteristics of the chosen GISTs are summarized in Table 1.

Table 1. The characteristics of the GISTs chosen for analysis.

GIST, gastrointestinal stromal tumor.

Basic information for the external validation sets

A total of 656 images (the number of images for very low-risk, low-risk, intermediate-risk, and high-risk was 211, 266, 88, and 91, respectively) from 79 patients (80 GISTs, of which 36 were very low-risk, 28 were low-risk, 7 were intermediate-risk, and 9 were high-risk) were finally chosen in the external validation sets (Table 1). The number of images and tumors selected from each hospital are presented in Supplemental Table S3.

Diagnostic performance of the four-category risk EUS-AI model for prediction of the risk category in the test sets

The accuracy, sensitivity, specificity, PPV, and NPV for the four-category risk EUS-AI model in the test sets by images were 81.21%, 75.48%, 90.98%, 93.45%, and 68.52%, respectively, for very low-risk; 83.64%, 64.86%, 89.06%, 63.16%, and 89.76%, respectively, for low-risk; 85.15%, 55%, 87.1%, 21.57%, and 96.77%, respectively, for intermediate-risk; and 94.85%, 82.14%, 96.03%, 65.71%, and 98.31%, respectively, for high-risk (Supplemental Table S4). The overall accuracy, sensitivity, specificity, PPV, and NPV were 83.15%, 72.42%, 90.75%, 79.95%, and 77.52%, respectively. The ROC curves for each type versus other types are shown in Figure 1(a), and the confusion matrices showing the pairwise comparison (number of images) in the test sets are presented in Figure 1(d).
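The per-category figures above are one-versus-rest measures, and the overall figures appear to be averages of them weighted by the number of images in each category. The sketch below checks this. Both the counts and the averaging rule are inferences: the counts are back-calculated from the reported percentages and the 330-image test set, and the source does not state how the overall values were computed.

```python
from typing import Dict, Tuple

Counts = Tuple[int, int, int, int]  # (tp, fp, fn, tn) for one category versus the rest

# Back-calculated one-versus-rest counts for the 330 test images (inferred).
COUNTS: Dict[str, Counts] = {
    "very low": (157, 11, 51, 111),
    "low": (48, 28, 26, 228),
    "intermediate": (11, 40, 9, 270),
    "high": (23, 12, 5, 290),
}


def class_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """One-versus-rest measures for a single category."""
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def support_weighted_average(counts: Dict[str, Counts]) -> Dict[str, float]:
    """Average each measure across categories, weighted by true class size."""
    total = sum(tp + fn for tp, _, fn, _ in counts.values())
    overall = {name: 0.0 for name in
               ("accuracy", "sensitivity", "specificity", "ppv", "npv")}
    for tp, fp, fn, tn in counts.values():
        weight = (tp + fn) / total
        for name, value in class_metrics(tp, fp, fn, tn).items():
            overall[name] += weight * value
    return overall


overall = support_weighted_average(COUNTS)
overall_percent = {name: round(100 * value, 2) for name, value in overall.items()}
```

Under these assumptions the weighted averages round to 83.15%, 72.42%, 90.75%, 79.95%, and 77.52%, the same as the reported overall values. The weighted sensitivity equals the fraction of all images assigned to their correct category (239 of 330).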

Figure 1. ROCs of the four-category risk EUS-AI model for prediction of the GISTs in the (a) test sets by images, (b) external validation sets by images, and (c) external validation sets by tumors, and confusion matrices of the pairwise comparison in the (d) test sets by images, (e) external validation sets by images, and (f) external validation sets by tumors.

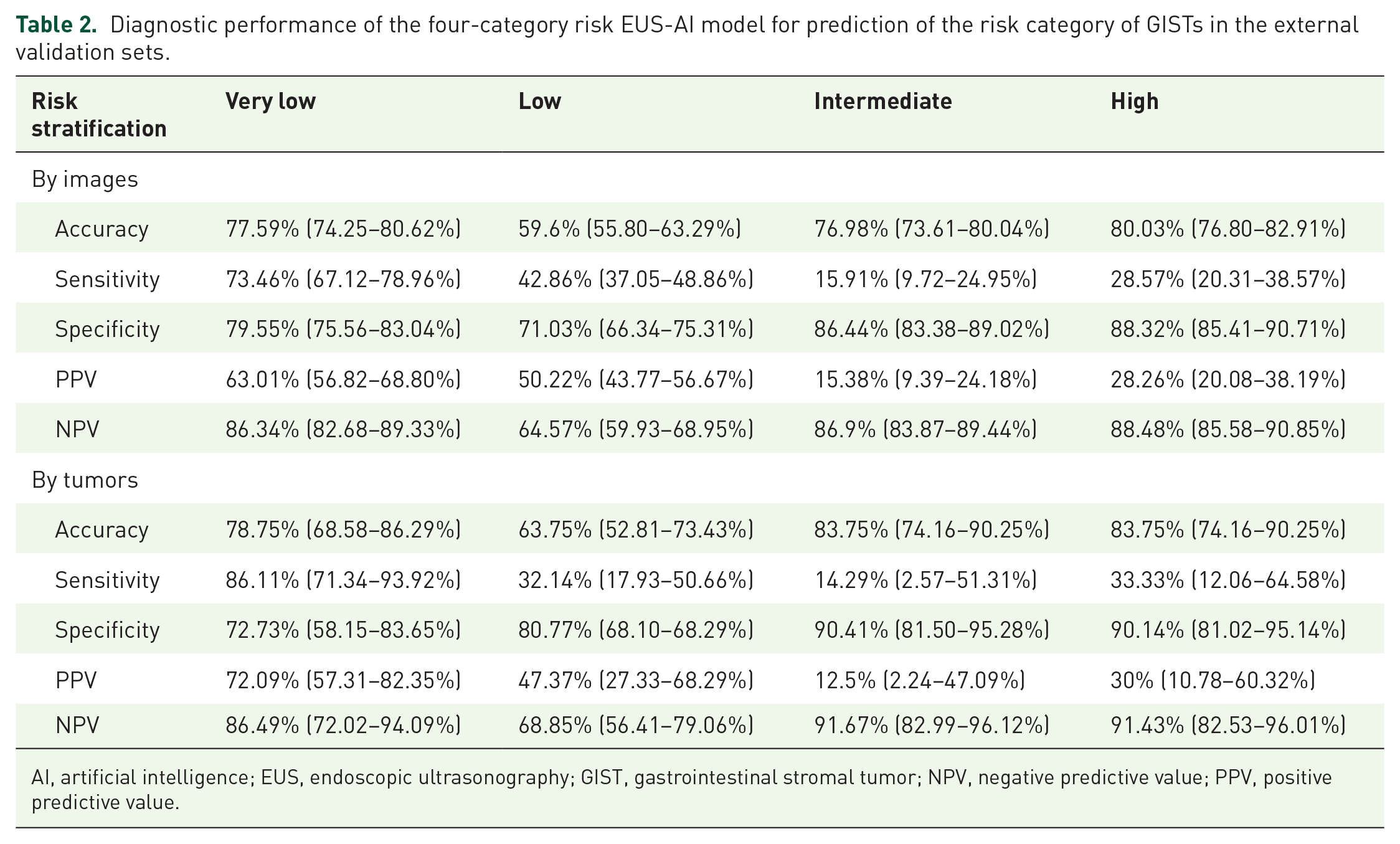

Diagnostic performance of the four-category risk EUS-AI model for prediction of the risk category in the external validation sets

The accuracy, sensitivity, specificity, PPV, and NPV for the four-category risk EUS-AI model in the external validation sets by images and by tumors are shown in Table 2. The overall accuracy, sensitivity, specificity, PPV, and NPV by images were 70.55%, 47.10%, 78.23%, 46.61%, and 77.88%, respectively. The overall accuracy, sensitivity, specificity, PPV, and NPV by tumor were 74.50%, 55.00%, 79.05%, 53.49%, and 81.63%, respectively. The ROC curves and the confusion matrices showing the pairwise comparison results are presented in Figure 1.

Table 2. Diagnostic performance of the four-category risk EUS-AI model for prediction of the risk category of GISTs in the external validation sets.

AI, artificial intelligence; EUS, endoscopic ultrasonography; GIST, gastrointestinal stromal tumor; NPV, negative predictive value; PPV, positive predictive value.

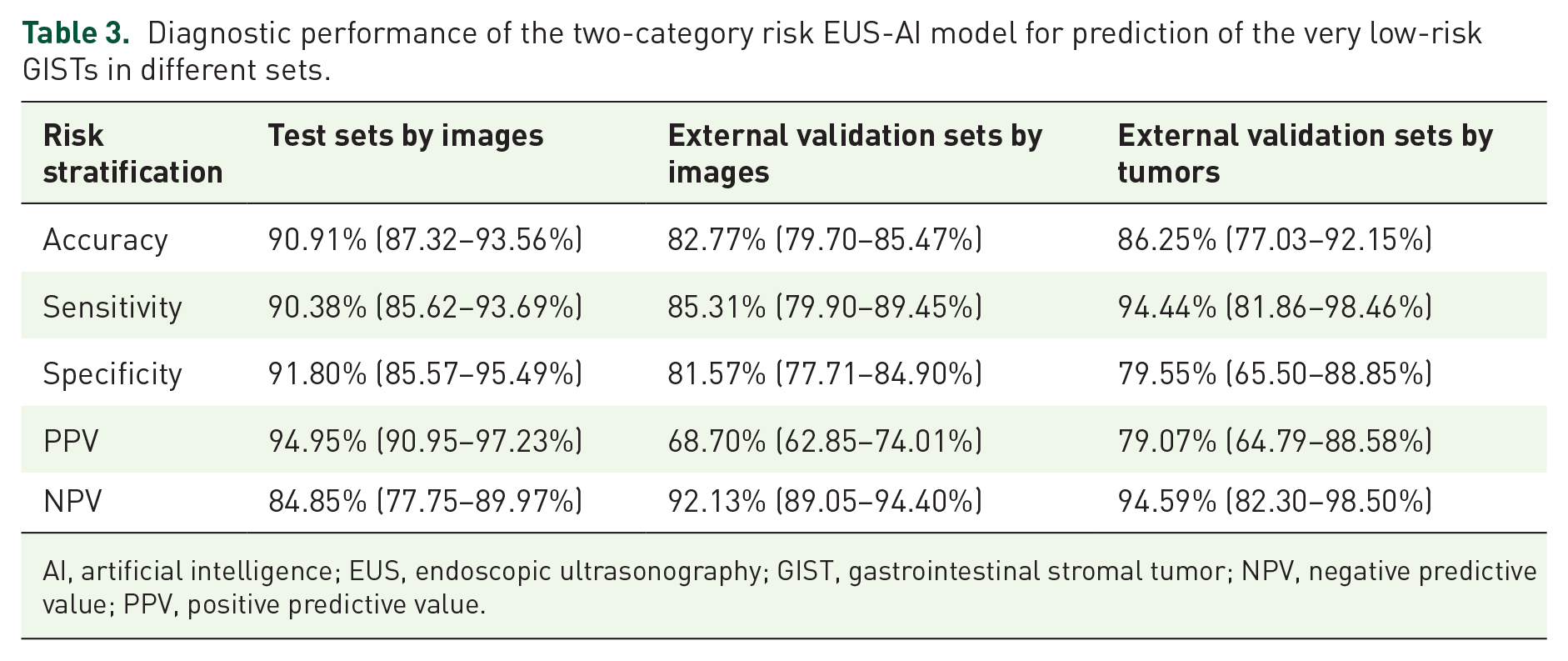

Diagnostic performance of the two-category risk EUS-AI model for prediction of the risk category in the test sets

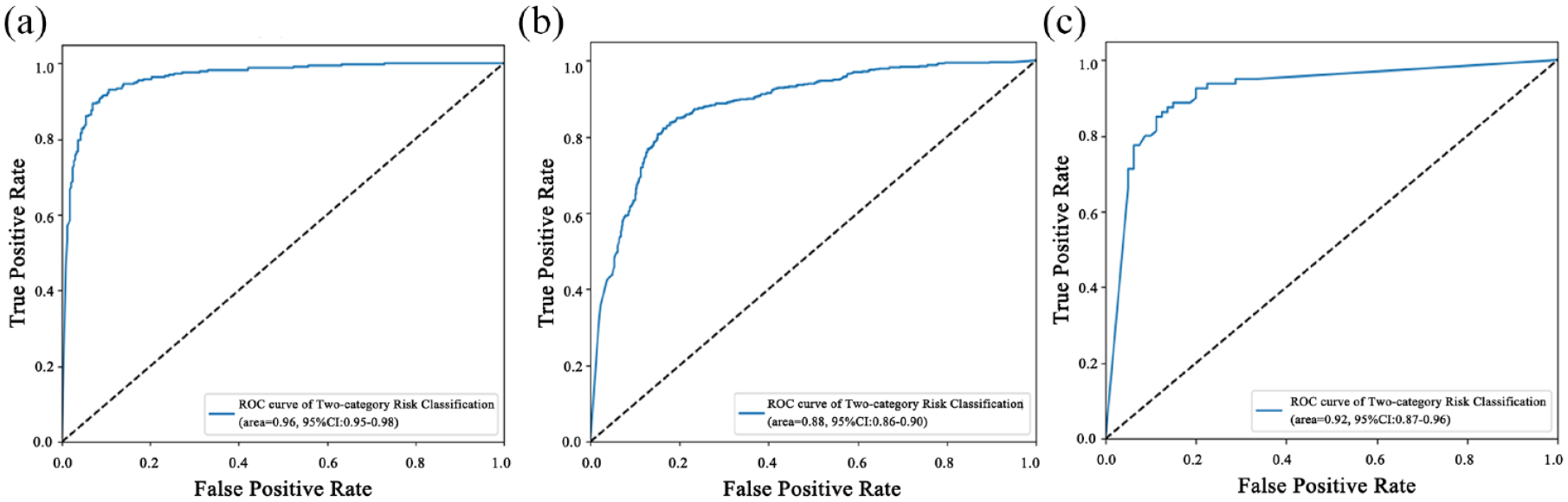

In Model 2, we classified the images into two categories: very low and non-very low-risk. The accuracy, sensitivity, specificity, PPV, and NPV for the two-category risk EUS-AI model for the prediction of very low-risk GISTs in the test sets by images were 90.91%, 90.38%, 91.80%, 94.95%, and 84.85%, respectively (Table 3). The ROC curve is presented in Figure 2(a).

Table 3. Diagnostic performance of the two-category risk EUS-AI model for prediction of the very low-risk GISTs in different sets.

AI, artificial intelligence; EUS, endoscopic ultrasonography; GIST, gastrointestinal stromal tumor; NPV, negative predictive value; PPV, positive predictive value.

Figure 2. ROCs of the two-category risk EUS-AI model for prediction of the very low-risk GISTs in the (a) test sets by images, (b) external validation sets by images, and (c) external validation sets by tumors.

Diagnostic performance of the two-category risk EUS-AI model for prediction of the risk category in the external validation sets

The accuracy, sensitivity, specificity, PPV, and NPV for the two-category risk EUS-AI model for the prediction of very low-risk GISTs in the external validation sets by image were 82.77%, 85.31%, 81.57%, 68.70%, and 92.13%, respectively, and those by tumor were 86.25%, 94.44%, 79.55%, 79.07%, and 94.59%, respectively (Table 3). The ROC curves are presented in Figure 2(b) and (c).

Discussion

In this study, we built two risk stratification EUS-AI models to predict the malignant potential of GISTs in reference to the modified NIH criteria. The accuracy of the four-category risk EUS-AI model was not satisfactory; however, the two-category risk EUS-AI model performed much better in external validation.

Risk stratification of GISTs aims to evaluate the risk of poor outcomes and to select patients who may benefit from adjuvant therapy. 21 Independent prognostic factors for GIST include the mitotic index, tumor size, tumor location (gastric versus non-gastric), and tumor rupture. 2 Patients in the low-risk group generally have favorable outcomes and may not require adjuvant therapy or frequent surveillance. In the past few years, several studies have explored AI applications in GISTs, including not only the differentiation of GISTs from non-GISTs,13,14,22–24 but also risk stratification and prediction of prognosis. 15 Most previous studies of AI applications in GIST risk stratification were computed tomography (CT)-derived analyses, and only a few have examined EUS-derived images. Two major machine learning methodologies, radiomic analysis and DCNNs, have shown promise in pattern classification in previous studies.15,25

Seven et al. 15 investigated AI via DCNNs in predicting the malignant potential of GISTs. The overall sensitivity, specificity, and accuracy of the AI system for predicting four-category malignancy risk based on AFIP criteria in their study were 83%, 94%, and 82%, respectively, in the training dataset, and 75%, 73%, and 66%, respectively, in the validation cohort. When patients were divided into two categories: low-risk (including very low-risk and low-risk groups) and high-risk groups (including intermediate-risk and high-risk groups), the sensitivity, specificity, and accuracy in the validation cohort increased to 99.7%, 99.7%, and 99.6%, respectively.

In our study, the overall accuracy, sensitivity, and specificity of the four-category risk EUS-AI model by tumor in the external validation set were 74.50%, 55.00%, and 79.05%, respectively, which is comparable to the results of the validation cohort in Seven’s study. The overall performance of the four-category risk EUS-AI model was not satisfactory because the sensitivity was relatively low. One possible reason is that the distribution of patients across the risk groups of the training set was not balanced: there were more patients in the very low-risk group than in the other three groups. With such unbalanced classes, the model may not have learned enough about the underrepresented classes to produce optimal results. This imbalance is inherent in real-life medical practice. Intermediate- and high-risk GISTs were usually larger and accompanied by ulceration, so they could be diagnosed by biopsies alone in most cases; thus, EUS was not required for diagnosis and preoperative management. Additionally, some of the patients with intermediate- or high-risk GISTs underwent surgery directly after CT examinations without additional EUS in our center. These factors can explain the low number of patients in the higher-risk groups.
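The degree of imbalance can be quantified from the image counts reported in the Results. The sketch below uses the counts for the whole model-building dataset (training and test sets combined); the class composition of the 990-image training split alone is not reported, so using the combined counts is an assumption.

```python
# Image counts per risk category in the model-building dataset (from the Results).
image_counts = {"very low": 880, "low": 269, "intermediate": 68, "high": 103}

total_images = sum(image_counts.values())

# Share of each category, in percent.
shares = {name: round(100 * n / total_images, 2) for name, n in image_counts.items()}

# Ratio between the largest and the smallest category.
imbalance_ratio = max(image_counts.values()) / min(image_counts.values())
```

About two thirds of the images are very low-risk, and the largest category holds roughly 13 times as many images as the smallest.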

When patients were divided into very low-risk and non-very low-risk groups according to modified NIH criteria, the two-category EUS-AI model that we built showed better accuracy, sensitivity, and specificity of 86.25%, 94.44%, and 79.55%, respectively, by tumor in the external validation sets. Previous studies15,25 have combined the very low-risk and low-risk groups, which was different from our study. According to European guidelines, 26 very low-risk GISTs probably do not require routine follow-up. National Comprehensive Cancer Network guideline 2022 version 27 also mentioned that less frequent surveillance is acceptable for very small tumors (<2 cm), which is consistent with the modified NIH criteria for the very low-risk group. These recommendations indicate that the very low-risk group has a much lower risk of recurrence compared to the other risk groups; therefore, dividing patients into very low-risk and non-very low-risk groups may be of more clinical significance because the management and follow-up strategy would be different. Our two-category EUS-AI model demonstrated encouraging results in differentiating very low-risk GISTs from other risk groups, which may benefit clinical practice.

In the study of Li et al., 25 a EUS-derived radiomics model was developed to differentiate GISTs of the higher-risk classification (intermediate-risk and high-risk) from the lower-risk classification (very low-risk and low-risk). The accuracy, sensitivity, and specificity were 82.3%, 81.3%, and 82.6%, respectively. However, they did not specify which classification criteria were used in their study. The radiomics model has been investigated in many previous studies for the risk stratification of GISTs; however, most of the studies 28 were focused on the performance of AI using CT images. Li’s study demonstrated that a EUS-derived radiomics model could increase preoperative diagnostic accuracy and provide a valuable reference in physicians’ decision-making. Radiomics analysis involves first extracting numerous handcrafted imaging features, followed by feature selection and machine learning-based classification. However, handcrafted features are limited to the current knowledge of medical imaging, which may limit the potential of the predictive model.

Deep learning improves on these handcrafted features by automatically learning discriminative features directly from images. 29 A previous study 30 demonstrated that a hybrid structure that includes different features selected with a radiomics model and DCNNs (AUC: 0.882, 95% CI: 0.816–0.947) outperformed independent radiomics (AUC: 0.807, 95% CI: 0.724–0.892) and CNN (AUC: 0.826, 95% CI: 0.795–0.856) approaches for the classification of GISTs at CT. Moreover, deep learning also has advantages and applications in other related fields, such as sequence methods and synthesis generation. Future studies that explore AI models integrating radiomics and DCNN features for the risk classification of GISTs using EUS images would likely add valuable information to this topic.

Our study had several limitations. First, this was a retrospective study, and we did not perform prospective validation. It would be better to validate the EUS-AI model in a prospective cohort prior to its application in clinical practice. Second, as mentioned above, the number of intermediate- and high-risk GISTs was too small relative to that of very low-risk GISTs. In future studies, we need to enroll more patients with non-very low-risk GISTs to reduce the dataset imbalance and make the model more stable. Despite these limitations, our results are of value in that our EUS-AI model was trained on a relatively large dataset and validated in an external validation cohort including four hospitals.

In conclusion, we developed a EUS-AI model for the risk stratification of GISTs with promising results. The accuracy and sensitivity of the two-category risk EUS-AI model were high with external validation, which may complement current clinical practice in the management of GISTs.

Supplemental Material

sj-docx-1-tag-10.1177_17562848231177156 – Supplemental material for Artificial intelligence in endoscopic ultrasonography: risk stratification of gastric gastrointestinal stromal tumors

Supplemental material, sj-docx-1-tag-10.1177_17562848231177156 for Artificial intelligence in endoscopic ultrasonography: risk stratification of gastric gastrointestinal stromal tumors by Yi Lu, Lu Chen, Jiachuan Wu, Limian Er, Huihui Shi, Weihui Cheng, Ke Chen, Yuan Liu, Bingfeng Qiu, Qiancheng Xu, Yue Feng, Nan Tang, Fuchuan Wan, Jiachen Sun and Min Zhi in Therapeutic Advances in Gastroenterology

Footnotes

Acknowledgements

The authors thank all the members of Tianjin Economic-Technological Development Area (TEDA) Yujin Digestive Health Industry Research Institute who have made contributions to this program.

Declarations

Supplemental material

Supplemental material for this article is available online.

