Abstract

Context

Increasing dissatisfaction with existing methods of assessment in the workplace alongside a national drive towards outcomes-based postgraduate curricula led to a recent overhaul of the way Intensive Care Medicine (ICM) trainees are assessed in the United Kingdom. Programmatic assessment methodology was utilised; the existing ‘tick-box’ approach using workplace-based assessment to demonstrate competencies was de-emphasised and the expertise of trainers used to assess capability relating to fewer, high-level outcomes related to distinct areas of specialist practice.

Methods

A thematic analysis was undertaken investigating attitudes from 125 key stakeholders, including trainees and trainers, towards the new assessment strategy in relation to impact on assessment burden and acceptability.

Results

This qualitative study suggests increased satisfaction with the transition to an outcomes-based model with capability judged by educational supervisors. Reflecting frustration relating to current assessment in the workplace, participants felt assessment burden has been significantly reduced. The approach taken was felt to be an improved method for assessing professional practice; there was enthusiasm for this change. However, this research highlights trainee and trainer anxiety regarding how to ‘pass’ these expert judgement decisions of capability in the real world. Additionally, concerns relating to the impact on subgroups of trainees due to the potential influence of implicit biases on the resultant fewer but ‘higher stakes’ integrative judgements became apparent.

Conclusion

The move further towards a constructivist paradigm in workplace assessment in ICM reduces assessment burden yet can provoke anxiety amongst trainees and trainers requiring considered implementation. Furthermore, the perception of potential for bias in global judgements of performance requires further exploration.

Introduction

Dissatisfaction with the current assessment system; the death knell of competency-based medical education?

The widespread discontent associated with workplace-based assessment in postgraduate medical education amongst trainees and trainers is well described. Often perceived as a reductionist exercise of questionable educational value, workplace-based assessments (WPBAs) are commonly used incorrectly and variably accepted within the clinical environment. 1

Part of this dissatisfaction and misuse comes not necessarily from the tools themselves, which when used well are likely to provide an authentic, holistic picture of practice in the clinical environment, but instead from the requirement to use these assessments to evidence multiple competencies associated with competency-based curricula in their current format.2–7

Competency-based medical education (CBME) was originally described as encompassing a focus on curriculum outcomes rather than processes, an emphasis on abilities and de-emphasis on time-based training, and a focus on learner-centredness. Competency-based medical education was a fundamental change from the ‘apprentice’ model of training that preceded it, driven initially in the 1960s and 1970s by a perceived decline in clinical skills and academic achievement, alongside a demand for ‘competence’ from public health leaders in the USA.8,9 A similar response occurred in the UK in the 1990s; competency-based curricula were perceived to protect the public, reduce subjectivity and maintain standards at a time when failings of self-regulation within the medical profession were demonstrated by a number of high-profile cases.10,11

Workplace-based assessments became ubiquitous as tests of postgraduate medical competence alongside increased focus on reliability and validity of assessments.12,13 Detailed and structured checklists were introduced as part of WPBAs and objective structured clinical examinations. These were perceived to reduce examiner subjectivity and create standardisation. 14

However, there were unanticipated consequences related to this focus on objective measures of assessment in workplace-based assessment design. In the desire to measure and demonstrate an objective assessment of each competency and thereby demonstrate overall competence in an area, a level of reductionism in assessment design was created that was arguably at odds with the overarching aims of CBME.5,15

Crossley and Jolly encapsulate these concerns: ‘…in essence, scraping up the myriad evidential minutiae of the subcomponents of the task does not give as good a picture as standing back and considering the whole’. 16

The original Intensive Care Medicine (ICM) curriculum, like many postgraduate curricula, used a CBME approach in a manner which required evidence of assessments in the workplace linked to a significant number of individual competencies, with judgement at the point of assessment. The numbers of assessments required were extensive, with evidence required from trainees of assessments in the workplace demonstrating 96 individual competencies, many of which were repeated for each of three stages of training. 17 Furthermore, ICM trainees commonly dual train with other specialities such as anaesthesia, emergency or internal medical specialities, requiring the separate evidence of additional competencies and resulting in a considerable burden on trainee and trainer over the course of postgraduate training.

Standards for postgraduate curricula and the outcome-based model

A new era in postgraduate medical curriculum design in the UK stemmed from the publication of the report ‘Excellence by Design’ in 2017 which set the standards postgraduate curricula had to meet in order to be able to confer entry onto the specialist register. 18 Significantly this report emphasises the need for postgraduate curricula to utilise high-level outcomes, described by the GMC as an ‘outcomes-based curriculum’. The GMC describe an outcome-based curriculum as focussing on what specialist and generic capabilities doctors will have by the end of training as opposed to the processes by which those capabilities are achieved.19,20 Ten years after the inception of the first standalone ICM curriculum in the UK, a redesign was required to meet these new standards.

Readers will find similarity in the theories of competency-based and outcome-based medical education (OBE). Originally described by Spady (1988) in the educational school system in the USA as ‘organising for results’, OBE was later adopted throughout various school systems around the world and used as a framework for the design of undergraduate medical curricula. 20 Emphasis is placed on the intended educational outcomes of the lesson, course, year or programme and in turn the educational programme is designed and evaluated considering these educational outcomes. 21 Simplicity is a key positive of this theory, with limited ‘exit outcomes’ allowing, at a glance, an overview of what the student will be able to do at the end of the programme. The outcomes-based approach is also holistic, as outcomes inevitably involve not only skills and knowledge, but appropriate values and behaviours.

Historically, it is of interest that Spady describes a key driver of OBE as ‘success for all’, or, ensuring that all students are able to demonstrate the ability to ‘face the challenges and opportunities of the outside world’ considering that this may require adaptation of teaching tools and methods. 21 Equality and inclusivity are increasingly important considerations in postgraduate education and it is of note that this perhaps plays a larger part in the core theory behind OBE than in that of CBME. 22

Clearly, however, there is significant overlap between the andragogical theories of CBME and OBE despite the differences in origins of the terms and drivers for implementation, one based on protecting patients and standardisation, the other based on equality in attainment in the US school system. Perhaps a shift towards using the terminology of OBE in the UK could be conceptualised as an attempt to hold true to the guiding principles of CBME whilst stripping curricula of the term ‘competence’ due to its loaded reductionist associations with UK medical education. Considering this, a move towards an outcomes-based approach and change in terminology alone was felt unlikely to solve the frustrations associated with postgraduate assessment without careful redesign of the assessment process and detailed analysis thereafter.

Programmatic assessment and a new outcomes-based assessment strategy

Development of a new assessment strategy for the ICM curriculum was carried out following critical review of the previous assessment strategy, considering recent GMC guidance, and guided by a literature review focused on reducing assessment burden and using assessments in a more meaningful way.

Differing forms of assessments have varied strengths and weaknesses, for example, in relation to their reliability, validity, acceptability and feasibility. It is increasingly recognised that different assessments can, however, be used to synthesise a valid global impression of capability rather than each standalone assessment requiring a pass/fail judgement. 23 The theory of programmatic assessment encapsulates this move towards making global judgements based on multiple sources of information and holds that considering the utility of the assessment programme as a whole may be more appropriate than focussing on the psychometric validity of each assessment individually.24,25

Moving away from judgements at the point of assessment was felt to help to provide more meaningful feedback from assessors and reduce the known biases associated with summative assessments by single assessors, for example, outlying leniency or stringency, or halo effects relating to single assessments.23,26–28 Judgements of performance were ‘decoupled’ from individual assessment. 24 This was considered a step towards embracing the subjectivity of individual trainers and appreciating different perspectives on performance. Triangulating feedback from multiple assessments (with variation in trainer, environment and assessment type) then informs a more reliable judgement of performance. 29 Therefore it was considered that the educational or clinical supervisor, with the benefit of direct supervision and access to the trainee’s portfolio at the end of the placement was in the best position to identify patterns of performance across tasks and contexts, including identifying outlying aspects of performance. 30 This ‘integrative judgement’ 31 related to each of fourteen high-level outcomes is then reviewed yearly to assess progress.

Although in theory programmatic assessment is widely accepted amongst the educational community and established within medical school curricula, in practice few postgraduate medical curricula currently incorporate programmatic assessment in this manner.32,33 A thematic analysis (TA) of participant feedback was performed to gain detailed insight into attitudes towards the proposed changes and potential problems in implementation.

Methods

To appropriately ensure the relevance of findings from the TA it was essential that relevant stakeholders were approached.34,35 This included patients and carers, trainees, trainers (including clinical and educational supervisors (ES), heads of school and training programme directors), NHS employers and clinical commissioners.

A qualitative analysis was performed to uncover areas of success, any significant areas for immediate or future research, and to highlight how best the assessment strategy might be implemented within the curriculum and share learning with the wider medical education community.

Research governance and ethics

In consultation with Health Education England best practice guidelines, an evaluation of the project was carried out to establish the appropriate requirement for research governance and ethics approval. 36 A formal application was made to the Health Education England Research Governance Group and granted.

Data collection

The SARS-CoV-2 pandemic made face-to-face contact impossible and furthermore placed particular strain on ICM, making online focus groups impractical. Therefore, data were collected using online methods. However, this meant feedback could be gathered from a larger number of stakeholders and could include a wider range of roles. An electronic questionnaire was employed following publication of the draft assessment strategy on the FICM website. 37 This is likely to increase the applicability of the results across the four nations of the UK compared to local focus groups and improve the analysis of equality in relation to the assessments and decisions made by including both trainees and trainers.

Analysis of data

Due to the unanticipated change in data capture techniques from focus groups to predetermined questions and comments based on these, a phenomenological approach or grounded theory approach was unlikely to be successful in reliably and robustly generating new theories relating to the primary data. Thematic analysis as a qualitative technique is a method of analysing a wide variety of qualitative data in order to report patterns (themes) within it. 38 Thematic analysis is recognised as an effective and flexible technique in its own right which can be performed by small research groups. 39 Given that the aims of this research were to explore attitudes towards significant changes in the curriculum, use of TA was felt most appropriate.

A step-by-step process was carried out when performing the TA as described by Nowell et al. (2017). 39 The analysis of data was an iterative process; coding was repeated as themes emerged, and previous passages were then re-analysed considering the new ideas generated by the researcher. Familiarisation with the data and generation of codes and themes therefore happened as a continual process. A reflexive approach was taken, considering how background, stage of training, professional role and views and beliefs may affect interpretation of the data at each stage, as described by Patnaik (2013). 40 This potentially mitigated missing or misrepresenting key themes during the TA.

Data collection

The data corpus consisted of the responses to a series of questions relating to the new assessment strategy both using Likert scales and free text responses in addition to data gathered in relation to equality and diversity. The data used for the TA consisted of free text responses by all participants in the comments in relation to the following statements:

• The burden of assessment has been suitably reduced in the new curriculum.
• It is clear what is required at each stage of training in the assessment strategy.
• Will the ES/ARCP Panel be able to form a valid judgement on the trainee’s level of attainment for progression purposes?
• Do you feel the proposed new curriculum is all encompassing, without disadvantaging any particular groups of patients or doctors?

Results

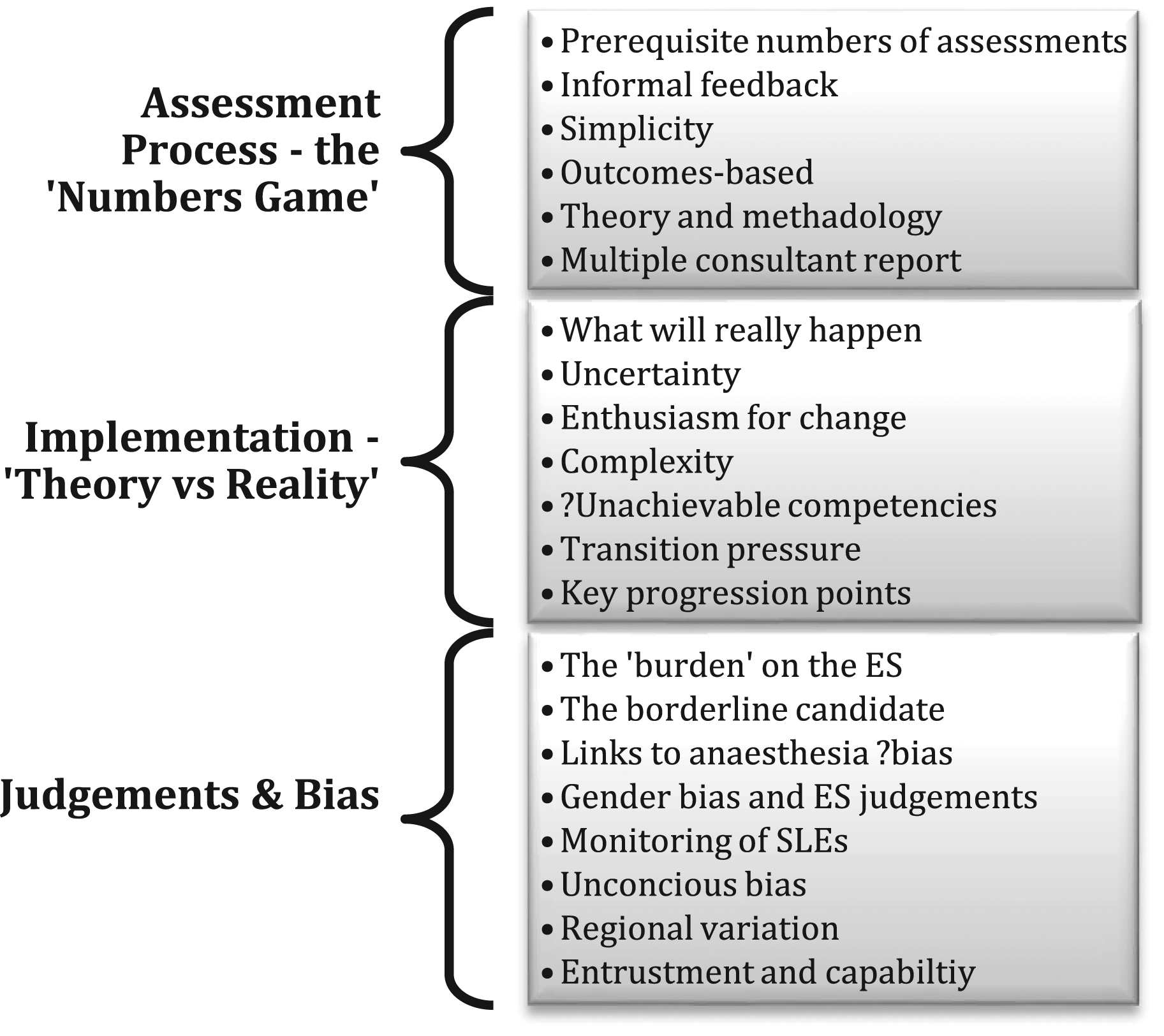

Three final themes were identified based on review of initial coding, generation of preliminary themes, followed by reanalysis of the initial data considering the themes synthesised, as summarised in Figure 1.

Figure 1. Visual representation of the thematic analysis and a summary of codes within each theme.

Assessment process – the ‘Numbers Game’

At present there is an arbitrary number of Workplace-based Assessments (WPBAs) stipulated as required annually (P95, Trainee)

There is significant strength of feeling amongst trainees and trainers regarding the current implementation of assessment within CBME, typified here as the ‘numbers game’.

Assessment burden comes not in how [Supervised Learning Events (SLE)] are linked to a curricula (which currently is a terrible system) but in notching up enough SLE’s in the first place. Most of the important useful feedback is informal (P62, Trainee)

Comments use terminology that suggests the underlying feeling is more than simply concern regarding the numbers of assessments required but also concern regarding the process and purpose of conducting assessments. The new assessment strategy aimed to remove arbitrary numeric requirements and focus on quality of evidence to support capability judgements. Participants described this as a positive step forward.

Very welcome step in right direction. As [Faculty Tutor], I always felt that competency based assessment was not always able to produce a new consultant who can deal with non technical factors / or able to put all his skills in cohesive manner to make a final decision (P29, Faculty Tutor)

[I] prefer the high level learning outcomes and the scrapping of the requirements to link something to every single competency (P63, Trainee)

There was clearly the impression of reduced assessment burden without an associated decrease in the quality of the assessment process.

I think there will always be an element of assessment burden. I think this will reduce this burden without reducing quality and so worth ‘giving a go’ to see and manage the teething problems (P47, ICM Training Programme Director)

Potentially will reduce the number of assessments to address specific curriculum items. (P68, Trainee)

For the trainee in theory there is less running around getting the prerequisite number of WBAs and overall performance may be judged in line with the curriculum by MSF etc... It looks simpler (P27, Faculty Tutor)

However, there is concern that removing the requirement for a set number of assessments to ‘pass’ generates anxiety.

I suspect ... a lack of clarity as to what is ‘required to pass’ (P75, Trainee)

[you are] removing the very detailed and structured competencies and replacing them with vague and nebulous capabilities (P37)

Trainees and trainers are hesitant about removing the psychological safety of knowing that ‘all the boxes are ticked’ irrespective of how useful these assessments are. Uncertainty and therefore anxiety is apparent in relation to where the ‘bar is set’ to pass the integrative judgements in relation to high-level outcomes.

Judgement and bias

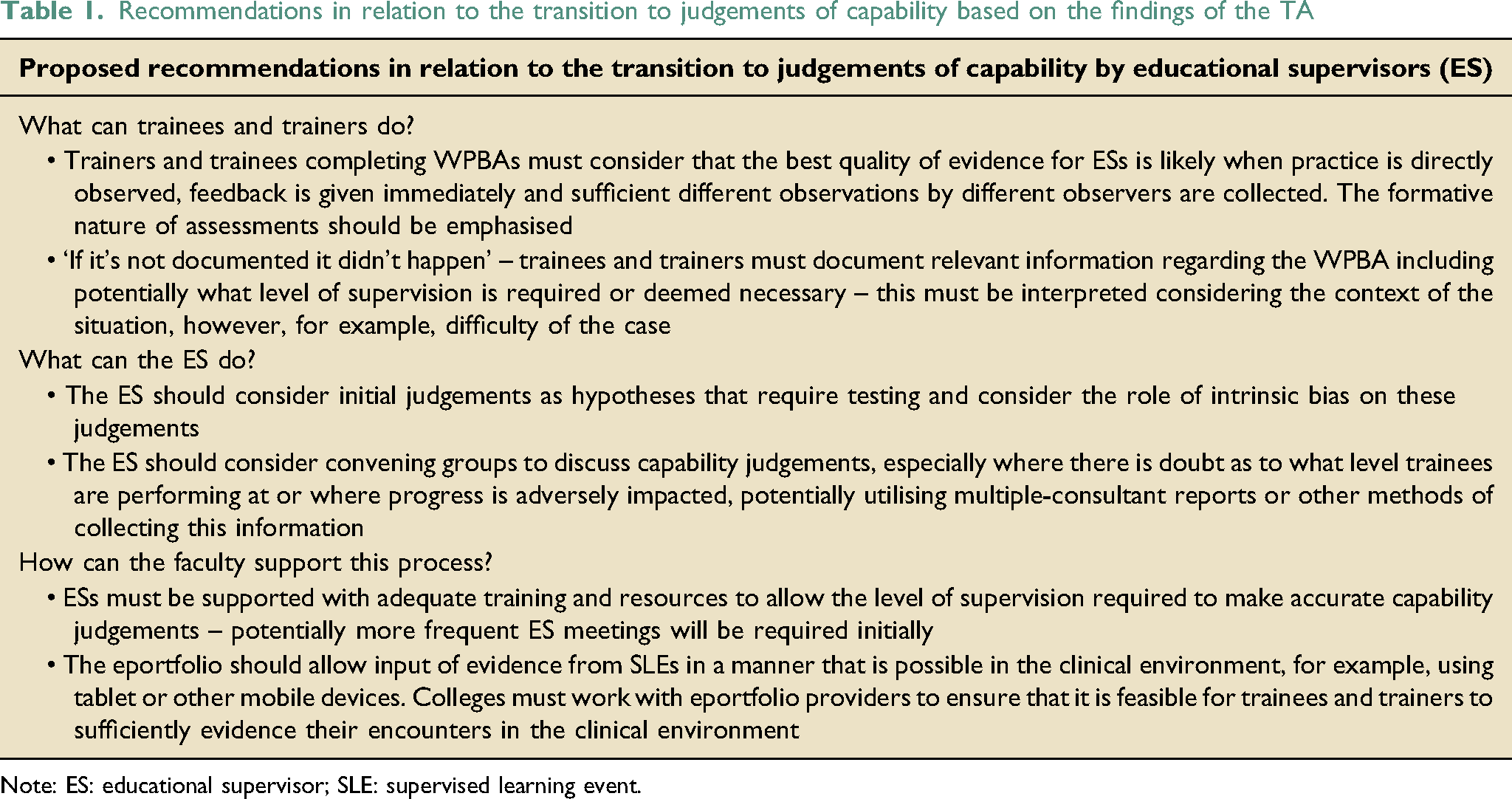

The second theme, entitled judgement and bias, provided unexpected feedback in relation to the new method of assessment. Participants describe feelings relating to a shift towards a constructivist rather than positivist view of the use of supervision during assessments:

The burden of assessment has potentially been reduced, but at the expense of an increase in the burden of supervision to the ES and the trainers (P37, ICM Head of School)

The burden on individual assessors is perceived to be reduced; assessors are now asked ‘only’ to use their experience to provide summative feedback to trainees and document this. In contrast, there is a perceived increase in workload and responsibility on the ES:

The ES may become biased unless a mentorship scheme runs in parallel. The trainees will need mentoring in order to navigate the HiLLOs (P27, ICM Faculty Tutor)

The Faculty should consider developing, and making mandatory for clinical, education supervisors and examiners, unconscious bias training (P122)

I think that we may convene small groups of trainers/ESs on our unit to discuss trainees and sign offs the ensure the process is fair and robust (P8, ICM Consultant)

Participants highlight that the role of the ES has shifted and this needs to be acknowledged and planned for accordingly. Although the ES should be provided with evidence to make judgements concerning capability there is concern that bias may impact these ‘high stakes’ decisions and disadvantage groups of trainees as demonstrated by the quotes above. There is concern that further work needs to be done to ensure that the judgements the ES makes are fair, in addition to the practicalities of ensuring there is adequate evidence to base these decisions on.

Implementation – ‘Theory versus Reality’

The third theme relates to the implementation of the new assessment strategy. There is a certain level of disbelief apparent in relation to whether there will be any perceivable change to the process of assessment even if in theory it is more appropriate, particularly among the trainees.

This will ultimately depend on how the educational hierarchy choose to implement both processes and summative assessment (P77, Trainee)

It remains to be seen whether individual deaneries or supervisors will impose some sort of requirements for specific evidence for a particular HiLLO sign off - which defeats the purpose of this new, flexible, simplified curriculum (P86, Trainee)

Conversely there is a sense of optimism that change is needed:

The new curriculum can’t come soon enough (P71, Trainee)

This theme highlights not only a certain level of disillusionment with the current system but also the importance of a process of implementation that results in the assessment strategy being rolled out and used as intended. Participants, even if positive about the new curriculum, are sceptical that the theory will be translated into reality.

Discussion

Acceptability and subjectivity

An emphasis on the use of assessments as formative tools at the point of assessment is welcomed and a perceived decrease in assessment burden is felt. However, trainees and trainers describe disillusionment relating to WPBAs as tools for learning. Many have explored this area in great detail, and it has been a longstanding issue with WPBAs since their inception.1,3,41,42 One should be cautious in expecting a rapid change in attitudes towards the use of assessments simply because the assessment document describes their use as ‘low stakes’ or tools for learning. It may take many years to overturn the entrenched ‘tick-box’ culture associated with, and reason for conducting, assessments. Furthermore, the shift towards a constructivist approach to assessment may require further explanation outside of medical education circles; trainees and trainers are used to a ‘pass/fail’ approach to assessments in the workplace and this research highlights anxiety regarding a move away from this positivist approach, even with associated decreased assessment burden.

Judgement & bias

The most unexpected findings highlighting a requirement for further research stemmed from the second theme, ‘judgement and bias’. Historically we must consider that the shift towards ‘objective’ assessment was felt to drastically reduce the risk of bias inherent with supervisor judgements despite the now apparent difficulties with this model of assessment. This study highlights concern regarding how implicit bias may affect the ‘high stakes’ judgements of performance made by ESs.

There are countless examples of inequality in relation to healthcare and medical training. Within medical education we have been aware that black and minority ethnic doctors perform less well than their peers in postgraduate assessments. 43 This differential attainment occurs across all specialities even accounting for previous academic performance, socioeconomic status or language skills. 44 Additionally, we know that in ICM women make up less than 25% of the consultant workforce and are under-represented in leadership roles. 45 Furthermore, despite the National Health Service often being perceived as an LGBT+ friendly work environment, 12% of LGBT doctors reported a form of discrimination that they felt adversely impacted their employment or studies (for example, access to opportunities or pastoral support). 46 Although clearly it is of benefit to reduce assessment burden on trainers and trainees, it is imperative that we strive towards an equal and just system of assessment that values and supports all trainees equally.

We must therefore consider how shifting the judgement of capability ‘back’ to ESs may affect various groups of trainees and ensure that this process is done fairly. Judgements made by supervisors are made within their socio-cultural framework and unconscious or implicit bias is likely to impact these decisions. Even if we consider that the idiosyncrasies of supervisors and context-dependent assessment are important for learning in the clinical environment, we still know very little in relation to bias in making these judgements in medical education. 28 However, extensive research in other specialities by social scientists has suggested that demographic similarity affects perceived similarity between supervisors and subordinates. 47 These factors are in turn linked to positive performance ratings in management literature. 48 We must consider that similar bias may be active in longitudinal relationships such as between ES and trainee.

However, while the decision to move judgements of capability to a single ES may increase the risk of bias given the factors above, we must consider that ESs are a more tangible, smaller group in comparison to the wider group of trainers previously asked to provide summative feedback. This may allow for more effective targeting of training in relation to bias to those making judgements of performance. Additionally, having significant judgements made by a supervisor with a more longitudinal relationship with the trainee allows time for a more concrete awareness of skills, knowledge, and attitudes to develop, rather than assessment based on single judgements which are potentially more prone to these unconscious biases. Thus, the ES may be best placed to make significant judgements which may impact progression, but with the caveat that the findings of this research indicate that it is likely to require that time and training be provided to the ES, and that a shift in resource allocation and responsibility is required alongside implementation of this assessment strategy.

Implementation

The final theme related to concerns regarding implementation with participants feeling that transition to the new strategy would result in stress and anxiety. This specifically included trainees who are out of programme, for example, for research.

Recommendations in relation to the transition to judgements of capability based on the findings of the TA

Note: ES: educational supervisor; SLE: supervised learning event.

Limitations

The credibility of the findings must be considered carefully given the required change in methodology part way through the project. Although the data set analysed provided sufficient detail to elaborate on several important themes guiding the focus for implementation and training, the original design of the study involved focus groups of trainees. Focus groups would have allowed for a greater depth of discussion from which to draw conclusions. However, the SARS-CoV-2 pandemic has made such focus groups impossible for the time being and alternative methods of conducting research must be embraced. Dependability of the results described has been ensured by detailing the research process including a clear description of codes, themes and quotes (all are traceable to the original anonymised source). The generalisability of the results is a particular strength, involving significant stakeholder groups and a wide spread of geographic variation and a balance of feedback from trainees and trainers.

Conclusions

This qualitative study suggests increased satisfaction with the transition to an outcomes-based model with capability within high-level learning outcomes judged by ESs. Despite disillusionment with current assessment processes, participants were positive that assessment burden was likely to be reduced by the new strategy. There was agreement with the process of summative judgements being made in relation to capability within fewer high-level outcomes (representing units of practice within ICM) rather than for individual competencies. Many participants felt the new assessment strategy was an improvement and there was enthusiasm for change.

However, trainees and trainers were unexpectedly nervous regarding the move to a more constructivist paradigm of assessment in the workplace – ‘but how do I/they pass?’ Furthermore, there is currently insufficient evidence examining the role of bias in these judgements made by supervisors and this research highlights this as a potential issue; this is a priority for further evaluation and research.

Footnotes

Acknowledgements

The author would like to acknowledge Dr Tom Gallagher and the FICM Curriculum Working Party for their enthusiasm, kind support and assistance with this research.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.