Abstract

Over the past decade, representation based classification methods have received considerable attention in the pattern recognition community. The recently proposed non-negative representation based classifier (NRC) achieved strong recognition results in diverse pattern classification tasks. However, NRC does not fully exploit the discriminative information of the training data, which undermines its classification performance in practical applications. To address this problem, we introduce a decorrelation regularizer into the formulation of NRC and propose a discriminative non-negative representation based classifier (DNRC) for pattern classification. The decorrelation regularizer reduces the correlation between the representation results of different classes, thus promoting competition among them. Experimental results on benchmark datasets validate the efficacy of the proposed DNRC, which can outperform some state-of-the-art deep learning based methods. The source code of DNRC is available at https://github.com/yinhefeng/DNRC.

Keywords

Introduction

Recent years have witnessed the success of representation based classification methods (RBCM) in a variety of classification tasks. In face recognition, the most influential work is the sparse representation based classification (SRC). 1 SRC treats all the training samples as a dictionary and sparsely codes a test sample over this dictionary; classification is then performed by checking which class yields the least reconstruction error. Naseem et al. 2 proposed a linear regression classification (LRC) algorithm, which represents the test sample as a linear combination of class-specific training samples. In essence, LRC is the nearest subspace classifier (NSC) 3 with down-sampled features. Due to the principle of

Xu et al. 14 pointed out that the coefficients obtained by SRC or CRC contain negative elements, which may result in the misclassification of the test sample. Motivated by non-negative matrix factorization (NMF), 15 Xu et al. 14 proposed a non-negative representation based classifier (NRC), which imposes a non-negative constraint on the coding vector. Extensive experiments on diverse classification tasks demonstrate the superiority of NRC over many existing RBCM, including SRC, CRC and ProCRC. Nevertheless, NRC ignores the discriminative information of the training data, which limits its classification performance. To tackle this problem, we propose a discriminative NRC (DNRC), which incorporates a decorrelation regularizer into the formulation of NRC. The decorrelation regularizer reduces the correlation between the representation results of different classes, thus promoting competition among them. Competition means that when the training samples of one class contribute greatly to representing the test sample, the other classes contribute relatively less.

Our contributions are summarized as follows:

A discriminative non-negative representation based classifier (DNRC) is presented by introducing a decorrelation regularizer into the formulation of NRC.

The alternating direction method of multipliers (ADMM) 16 algorithm is employed to efficiently solve the optimization problem of DNRC.

Experiments on diverse standard datasets indicate that the proposed DNRC outperforms conventional RBCM as well as some deep learning based methods on face databases, a human action dataset and fine-grained visual datasets.

Related work

Suppose we have

Sparse representation based classification

In SRC, 1 a test sample

Collaborative representation based classification

SRC and its variants have achieved encouraging results in diverse pattern classification tasks. Nevertheless, Zhang et al. 8 argued that it is the collaborative representation mechanism rather than the

Non-negative representation based classification

SRC and CRC have become two representative RBCM. However, the coding vector of conventional RBCM contains negative entries, whereas the test sample would be better expressed by homogeneous samples with non-negative representation coefficients. Moreover, Lee and Seung 15 pointed out that it is unsuitable to approximate the test sample by allowing the training samples to cancel each other out through complex additions and subtractions. Therefore, Xu et al. 14 proposed the following non-negative representation model by imposing a non-negative constraint on the coding vector:
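As a rough illustration of non-negative coding followed by class-wise residual classification, the sketch below assumes the model is a least-squares fit of the test sample under a non-negativity constraint on the coding vector. The symbols `X` (training samples as columns), `y` (test sample) and `c` (coding vector), the projected-gradient solver, and the function names are assumptions made for this example; this is not the implementation of Xu et al.

```python
import numpy as np


def nnls_projected_gradient(X, y, n_iter=500):
    """Approximately solve min_c ||y - X c||_2^2 subject to c >= 0.

    Projected gradient descent is an assumed, simple solver choice.
    """
    # Step size 1/L, where L is the largest eigenvalue of X^T X.
    step = 1.0 / (np.linalg.norm(X, 2) ** 2)
    c = np.zeros(X.shape[1])
    for _ in range(n_iter):
        grad = X.T @ (X @ c - y)              # gradient of 0.5 * ||y - X c||^2
        c = np.maximum(c - step * grad, 0.0)  # project onto the non-negative orthant
    return c


def nrc_classify(X, labels, y):
    """Assign y to the class whose training samples reconstruct it best."""
    c = nnls_projected_gradient(X, y)
    classes = np.unique(labels)
    # Class-wise residual: keep only the coefficients belonging to class k.
    residuals = [
        np.linalg.norm(y - X[:, labels == k] @ c[labels == k]) for k in classes
    ]
    return classes[int(np.argmin(residuals))], c


if __name__ == "__main__":
    # Toy data: columns 0-1 belong to class 0, columns 2-3 to class 1.
    X = np.array([[1.0, 0.9, 0.0, 0.1],
                  [0.0, 0.1, 1.0, 0.9],
                  [0.1, 0.0, 0.1, 0.0]])
    labels = np.array([0, 0, 1, 1])
    y = np.array([0.95, 0.05, 0.05])
    predicted, coding = nrc_classify(X, labels, y)
    print(predicted, coding)
```

In practice the columns of `X` and the test sample are commonly normalized to unit length before coding; that step is left out here for brevity.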

Discriminative non-negative representation based classifier

In this section, first we introduce the formulation of our proposed DNRC, then we present its optimization procedures. Finally, we give the classification scheme of DNRC.

Proposed model

According to the mechanism of RBCM, we know that

By minimizing

Optimization

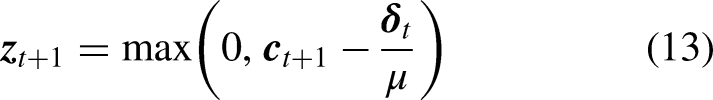

We adopt an alternating strategy to solve the DNRC model. By introducing an auxiliary variable

Update

Update

Solve Equation (7) via ADMM.
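As a hedged illustration of the variable-splitting idea, the sketch below applies ADMM to the plain non-negative least-squares problem, with an auxiliary variable `z` that carries the non-negativity constraint. It deliberately leaves out the decorrelation regularizer, because the exact form of Equation (7) is not restated here. The names `admm_nonneg_ls`, `rho` and `n_iter` are assumptions for this example, and the code should not be read as the authors' solver.

```python
import numpy as np


def admm_nonneg_ls(X, y, rho=1.0, n_iter=300):
    """ADMM sketch for min_c 0.5 * ||y - X c||^2 subject to c >= 0.

    The constraint is moved onto an auxiliary variable z with c = z,
    and u is the scaled dual variable.
    """
    n = X.shape[1]
    # The system matrix of the c-update is the same in every iteration.
    A = X.T @ X + rho * np.eye(n)
    Xty = X.T @ y
    z = np.zeros(n)
    u = np.zeros(n)
    for _ in range(n_iter):
        # c-update: unconstrained regularized least squares.
        c = np.linalg.solve(A, Xty + rho * (z - u))
        # z-update: projection onto the non-negative orthant.
        z = np.maximum(c + u, 0.0)
        # Dual update on the constraint c = z.
        u = u + c - z
    return z


if __name__ == "__main__":
    X = np.eye(3)
    y = np.array([1.0, -2.0, 3.0])
    print(admm_nonneg_ls(X, y))
```

Returning `z` rather than `c` guarantees that the output is non-negative even if the iterations are stopped early.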

Classification

For the test sample

Our proposed discriminative non-negative representation based classifier (DNRC) algorithm.

Rationale of DNRC

Our proposed DNRC model (7) introduces a decorrelation regularizer into the formulation of NRC. As mentioned earlier, the decorrelation regularizer

Since DNRC is closely related to NRC, we make a comparison between the two approaches. On the AR database, seven non-occluded images per subject in Session 1 are used for training, and one face image in Session 2 from the 98th class is used for testing. Figures 1 and 2 show the representation results (i.e.,

Representation results of all classes of non-negative representation based classifier (NRC).

Representation results of all classes of discriminative non-negative representation based classifier (DNRC).

Experiments

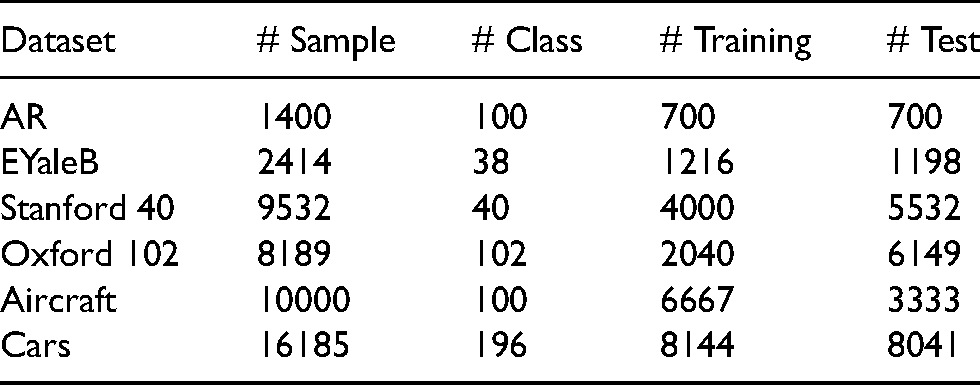

In this section, we assess the classification performance of DNRC on diverse benchmark datasets: two face databases, namely the AR 17 and Extended Yale B 18 databases; the Stanford 40 Actions dataset 19 for action recognition; and three fine-grained object datasets, namely the Oxford 102 Flowers dataset, 20 the Aircraft dataset 21 and the Cars dataset. 22 The details of these datasets are summarized in Table 1. We compare the classification accuracy of DNRC with NSC, 3 linear SVM, SRC, 1 CRC, 8 CROC, 10 ProCRC 13 and NRC. 14 In addition, on the Aircraft and Cars datasets, we also compare DNRC with some state-of-the-art deep methods, such as Symbiotic, 23 FV-FGC 24 and B-CNN. 25 The parameter of our method, that is,

Details of datasets used in our experiments. The columns from left to right are the names of datasets, total number of samples, number of classes, number of training samples, and number of test samples.

Experiments on the AR database

The AR database 17 contains more than 4000 color images of 126 subjects (70 men and 56 women). These images have variations in facial expression, illumination conditions and occlusions; example images from this database are shown in Figure 3. Following the experimental settings of Xu et al., 14 we use a subset with only illumination and expression changes that contains 50 male subjects and 50 female subjects. For each subject, the seven images from Session 1 are used as training samples, and the seven images from Session 2 as test samples. All the images are first cropped to 60

Example images from the AR database.

Recognition accuracy (%) of competing approaches on the AR database.

SRC: sparse representation based classification; NSC: nearest subspace classifier; SVM: support vector machine; CRC: collaborative representation based classification; CROC: collaborative representation optimized classifier; DNRC: discriminative non-negative representation based classifier; ProCRC: probabilistic collaborative representation based classifier; NRC: non-negative representation based classifier.

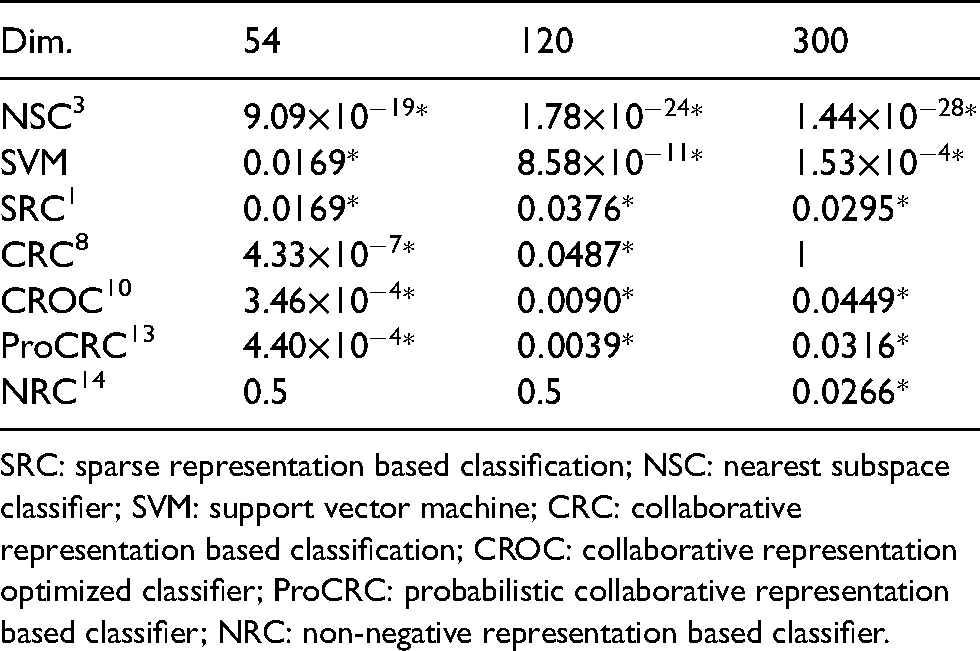

To assess whether the improvement of DNRC over the other methods is statistically significant, we conduct McNemar’s test (a non-parametric significance test) 26,27 on the results shown in Table 2. The significance level, that is,

SRC: sparse representation based classification; NSC: nearest subspace classifier; SVM: support vector machine; CRC: collaborative representation based classification; CROC: collaborative representation optimized classifier; ProCRC: probabilistic collaborative representation based classifier; NRC: non-negative representation based classifier.
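McNemar’s test, used above for the comparison based on Table 2, works on the paired per-sample outcomes of two classifiers and only counts the samples on which they disagree. The sketch below assumes the continuity-corrected chi-square variant with one degree of freedom; the text does not state which variant was used, and the function name and inputs are hypothetical.

```python
import math


def mcnemar_test(correct_a, correct_b):
    """Continuity-corrected McNemar's test on paired per-sample outcomes.

    correct_a[i] and correct_b[i] say whether classifier A and classifier B
    labeled test sample i correctly. Returns (statistic, p_value).
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("both classifiers must be scored on the same samples")
    # Only discordant pairs carry information about a difference.
    b = sum(1 for a_ok, b_ok in zip(correct_a, correct_b) if a_ok and not b_ok)
    c = sum(1 for a_ok, b_ok in zip(correct_a, correct_b) if not a_ok and b_ok)
    if b + c == 0:
        return 0.0, 1.0  # the classifiers never disagree
    statistic = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(statistic / 2.0))
    return statistic, p_value


if __name__ == "__main__":
    # A is right and B wrong on 10 samples, the reverse on 2, both right on 5.
    a = [True] * 10 + [False] * 2 + [True] * 5
    b = [False] * 10 + [True] * 2 + [True] * 5
    print(mcnemar_test(a, b))
```

The difference between the two classifiers is declared significant when the returned p-value falls below the chosen significance level.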

Experiments on the Extended Yale B database

The Extended Yale B database 18 consists of 2414 face images from 38 individuals, each having 59–64 images. These images have illumination variations; example images from this database are shown in Figure 4. The original images are of 192

Example images from the Extended Yale B database.

Recognition accuracy (%) of competing approaches on the Extended Yale B database.

SRC: sparse representation based classification; NSC: nearest subspace classifier; SVM: support vector machine; CRC: collaborative representation based classification; CROC: collaborative representation optimized classifier; DNRC: discriminative non-negative representation based classifier; ProCRC: probabilistic collaborative representation based classifier; NRC: non-negative representation based classifier.

Experiments on the Stanford 40 Actions dataset

The Stanford 40 Actions dataset 19 contains 40 different classes of human actions, for example, brushing teeth, cleaning the floor, reading a book and throwing a Frisbee. Example images from this dataset are shown in Figure 5. It contains 9532 images in total, with 180–300 images per action. Following the training-test split scheme of Yao et al., 19 we randomly select 100 images per class as the training images and use the remaining images as the test set. Features are extracted using the pre-trained VGG19 network, 28 and the feature dimension for each image is 4096. Experimental results are shown in Table 5, the balancing parameter

Example images from the Stanford 40 Actions dataset.

Recognition accuracy (%) of competing approaches on the Stanford 40 Actions dataset.

SRC: sparse representation based classification; NSC: nearest subspace classifier; SVM: support vector machine; CRC: collaborative representation based classification; CROC: collaborative representation optimized classifier; DNRC: discriminative non-negative representation based classifier; ProCRC: probabilistic collaborative representation based classifier; NRC: non-negative representation based classifier.

Experiments on the Oxford 102 Flowers dataset

The Oxford 102 Flowers dataset 20 includes 8189 images of 102 different flowers. Each flower class has over 40 images. These flower images are captured under diverse lighting conditions, flower poses, and image scales. Example images from this dataset are shown in Figure 6. Features are extracted using the pre-trained VGG19 network. Table 6 summarizes the classification accuracy of the compared methods, the balancing parameter

Example images from the Oxford 102 Flowers dataset.

Recognition accuracy (%) of competing approaches on the Oxford 102 Flowers dataset.

SRC: sparse representation based classification; NSC: nearest subspace classifier; SVM: support vector machine; CRC: collaborative representation based classification; CROC: collaborative representation optimized classifier; DNRC: discriminative non-negative representation based classifier; ProCRC: probabilistic collaborative representation based classifier; NRC: non-negative representation based classifier.

Experiments on the Aircraft dataset

The Aircraft dataset 21 includes 10,000 images of 100 different aircraft model variants, with 100 images per class. These aircraft vary in appearance, scale, and design structure, making this dataset very challenging for visual recognition. Example images from this dataset are shown in Figure 7. We adopt the training-testing split protocol provided by Maji et al. 21 to design our experiments, and features are extracted via a pre-trained VGG-16 network. 28 We also compare with Symbiotic, 23 FV-FGC 24 and B-CNN. 25 Recognition accuracy of competing approaches is presented in Table 7, the balancing parameter

Example images from the Aircraft dataset.

Recognition accuracy (%) of competing approaches on the Aircraft dataset.

SRC: sparse representation based classification; NSC: nearest subspace classifier; CRC: collaborative representation based classification; CROC: collaborative representation optimized classifier; DNRC: discriminative non-negative representation based classifier; ProCRC: probabilistic collaborative representation based classifier; FV-FGC: fisher vector for fine-grained classification; B-CNN: bilinear CNN; NRC: non-negative representation based classifier.

Experiments on the Cars dataset

The Cars dataset 22 has 16,185 images of 196 car classes. Each car class contains about 80 images with different scales and heavily cluttered backgrounds, making this dataset very challenging for visual recognition. Example images from this dataset are shown in Figure 8. We use the same split scheme provided by Krause et al., 22 in which 8144 images are used as the training samples and the other 8041 images as the testing samples. Features are extracted via a pre-trained VGG-16 network. We also compare with Symbiotic, 23 fisher vector for fine-grained classification (FV-FGC) 24 and bilinear CNN (B-CNN). 25 Recognition accuracy of competing approaches on this dataset is recorded in Table 8, the balancing parameter

Example images from the Cars dataset.

Recognition accuracy (%) of competing approaches on the Cars dataset.

SRC: sparse representation based classification; NSC: nearest subspace classifier; CRC: collaborative representation based classification; CROC: collaborative representation optimized classifier; DNRC: discriminative non-negative representation based classifier; ProCRC: probabilistic collaborative representation based classifier; FV-FGC: fisher vector for fine-grained classification; B-CNN: bilinear CNN; NRC: non-negative representation based classifier.

Parameter analysis

In this subsection, we investigate how the balancing parameter

Classification accuracy (%) of DNRC with varying parameter

Conclusions

In this paper, we designed a DNRC for pattern classification. By incorporating the decorrelation regularizer into the formulation of NRC, training samples of different classes are encouraged to compete in representing the test sample. Therefore, the coefficient vector obtained by DNRC contains more discriminative information than that of NRC. The DNRC model is solved efficiently via the ADMM algorithm. Experimental results on face databases, a human action dataset and fine-grained datasets demonstrate that DNRC is superior to NRC and traditional RBCM, and that it also outperforms some deep learning based approaches.

DNRC is an improvement on NRC, and both are shallow models. In our future work, we will compare DNRC with graph convolutional networks and design a deep version of DNRC.

Footnotes

Acknowledgements

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Key Project of Jiangsu Vocational College of Information Technology (grant no. JSITKY201905), project of high-level specialty group construction of higher vocational education of Jiangsu province (grant no. [2021] 1), general project fund for natural science research of colleges and universities in Jiangsu province (grant no. 18KJD510011), project of high-level backbone specialty construction of Jiangsu province (grant no. [2017] 17), and in part by the National Natural Science Foundation of China (grant no. 61672263).