Abstract

The use of artificial intelligence (AI) in health research on both communicable and non-communicable diseases has been shown to improve diagnosis, reduce researchers’ workload, and facilitate real-time data analysis. However, several ethical concerns regarding public trust, privacy, accountability and fairness in access to AI have been raised, as these may expose the users of AI to harm. Whereas AI technologies continue to grow rapidly in health research, there is limited knowledge of research ethics committees’ (RECs) current practices and the challenges they experience when reviewing AI health research in low resource settings like Uganda. This study examined the current practices and challenges experienced by ethics committees during the review of AI health research. We adopted a qualitative exploratory approach, in which in-depth interviews were conducted with 12 REC members and 6 REC administrators between May and September 2024. A thematic approach was used to analyze the data. Three themes emerged from the data: current practices of RECs, challenges experienced by REC members when reviewing AI health research proposals, and proposed solutions to these challenges. Of interest, respondents expressed concerns about limited training and expertise in the field of AI, inadequate guiding and reference tools, and unreasonable demands from researchers. Therefore, it is essential to build capacity for REC members and to develop comprehensive guidelines and standard operating procedures that support efficient and constructive feedback to researchers.

Keywords

Introduction

Artificial intelligence (AI) is increasingly being used in the collection and analysis of data in health research (Schwalbe and Wahl, 2020). The collected data has the potential to promote individuals’ healthy living, provide health education, prompt behavioral change, and contribute to policy-making (Bour, 2023; López Pérez, 2022). However, the recent focus on ethical AI has revealed several ethical concerns, including public trust in AI systems, the need for accountability, responsibility and transparency of the technology, and the explainability of algorithms. Questions about the privacy of data subjects, and about fairness and justice regarding access to AI, have also been regularly raised (Blasimme and Vayena, 2019). While the development and use of AI tools is rapidly increasing in low resource settings, there remain concerns about the unclear laws and regulations governing the use of AI, and data access, sharing, and ownership (Fjeld et al., 2020; Mittelstadt, 2019; Yeung et al., 2020).

Recent studies have reported that research ethics committees (RECs) lack the resources, expertise and training to appropriately address the risks that may arise from AI-related research (Petermann et al., 2022; Samuel et al., 2021; Yuan et al., 2020). Furthermore, a study conducted among REC members across 20 sub-Saharan African countries reported several challenges that hinder effective ethical oversight, for example a lack of regulatory frameworks to govern data sharing practices, inadequate informed consent practices for sharing patients’ data, and an absence of mechanisms for protecting data owners’ privacy and confidentiality (Cengiz et al., 2024). In recent decades, RECs in developing countries have relied on both international and national legal and ethical frameworks to evaluate potential ethical issues arising from biomedical research (Scherzinger and Bobbert, 2017; Seralegne et al., 2023). For example, the framework proposed by Ezekiel Emanuel has been a widely used guide for evaluating ethical research, particularly in low resource settings (Emanuel et al., 2008). While there is some evidence that this model has worked well in REC evaluations of biomedical research in African research communities (Tsoka-Gwegweni and Wassenaar, 2014), the unique and novel challenges posed by digital technologies and AI may render traditional ethics oversight mechanisms and practices inadequate (Ferretti et al., 2022; Friesen et al., 2021). For example, unlike traditional research, AI systems may perpetuate or amplify social inequities if trained on non-representative data. Secondly, data privacy, potential misuse of AI technologies, and the risk of harm due to flawed predictions or recommendations raise significant concerns (Mühlhoff, 2023). Furthermore, ensuring meaningful informed consent can be challenging when participants do not fully understand how their data will be used.
In many cases, AI research projects rely on data sourced from websites through scraping, which automates data extraction. This process challenges traditional notions of informed consent and raises questions about whether researchers are in a position to assess all the risks of the research to participants (Taylor and Pagliari, 2018). In some instances, researchers may not be able to identify the people whose data has been collected, and thereby lack the relational dynamic that is essential for understanding the participants’ needs and interests and the risks the research poses to them. In other cases, AI researchers may use publicly available datasets stored in online repositories, which may be repurposed for reasons that differ from those for which they were originally collected. Despite these challenges, there is limited literature on the current practices and challenges experienced by REC members reviewing AI health research in low resource settings like Uganda. This study examined the current practices and challenges experienced by ethics committees during the review of AI health research. We hope that findings from this study will inform national research ethics guidelines for AI health research.

Materials and methods

Study design

This study employed an exploratory qualitative approach (Butterfield, 1989; Mays and Pope, 2000; Pope et al., 2002) involving semi-structured interviews.

Study setting

The study was conducted among research ethics committees (RECs) in Uganda with experience in reviewing AI research proposals involving human participants. Currently, Uganda has 35 RECs accredited by the Uganda National Council for Science and Technology (UNCST). Of these, only six RECs had ever reviewed AI health research proposals. Some of the research studies involved developing AI tools for predicting COVID-19 waves and transmission, AI diagnostic tools for malaria, tuberculosis and cervical cancer, and AI tools for supporting treatment of mental health conditions. This study was conducted between May and September 2024.

Research team

The research team comprised bioethicists (SN and ESM), a computer scientist (WW), a social scientist with experience in collecting and analyzing qualitative data (AT), and two research assistants.

Study participants

We enrolled 18 participants. We purposively selected 12 REC members from the six RECs. Six were REC chairpersons and the rest were members of the RECs representing the research communities. Four REC chairpersons preferred to nominate a member of their REC who was more knowledgeable in the field of study. We also selected six administrators of the RECs.

Study procedure

We downloaded a list of accredited RECs in Uganda from the Uganda National Council for Science and Technology. The list comprised the name and address of each REC and the names and contact details of the REC chairpersons and administrators. We then contacted each REC administrator to ask whether their REC had reviewed any research proposal with an AI component. Only six RECs had done so and were included in this study. Before conducting the study interviews, the research team was trained on the protocol to ensure that they understood the study well. Participants were contacted via an email that contained a brief description of the study and a request to schedule an appointment for the interview. Expression of interest to participate was recorded as a positive response to the email, after which a consent form was shared. Written informed consent was obtained from all participants, and they were assured of confidentiality. Interviews were moderated by SN, AT, and a research assistant. A note taker was present throughout the discussions. The interviews were audio-recorded and lasted between 30 and 40 minutes.

Research tools

The interview guides were developed from the literature (Ferretti et al., 2022; Sridharan and Sivaramakrishnan, 2025). The questions in the interview guide were related to current practices, challenges experienced during the review process of AI health research proposals, and proposed solutions to challenges. The guides were first piloted with two REC members who were excluded from the study. However, their feedback was used to improve the interview guides. The research team held debriefing meetings at the end of each interview to identify new perspectives that were not initially captured by the tool. Data were collected until no new information or insights were revealed.

Data analysis

Data were analyzed continuously throughout the study using a thematic approach (Braun and Clarke, 2006; Fereday and Muir-Cochrane, 2006). All audio recordings were transcribed verbatim. All transcripts were verified for accuracy and checked for spelling errors by reading them word by word while listening to the audio recordings. Two authors (SN and AT) selected three transcripts for open coding. These transcripts were read line by line to generate the first set of codes. The codes generated from the independent readings were iteratively discussed among the authors, and codes expressing similar ideas were merged. Differences in coding among the independent coders were resolved by consensus. We then developed a coding framework. All the transcripts were then imported into NVivo version 12 (International-Pty-Ltd Q, 2018) and coded by two authors (SN and AT). Two authors (WW and ESM) examined the patterns of the emerging themes for consistency until consensus was achieved on the final themes.

All the authors compared the emergent themes with the existing literature to confirm that the final themes accurately represented the respondents’ perspectives. We also returned some transcripts to the participants to verify whether the information in the transcripts was a true reflection of their statements on the subject matter and to ensure credibility of the study findings. The key findings were summarized and presented in Table 2. The final codebook was continuously refined to establish the themes presented in the results section. Regarding research reflexivity, the research team was aware of the need to remain neutral throughout the interviews. We acknowledge our potential biases arising from prior knowledge of REC activities and from existing relationships between the respondents and the research team, and we sought to mitigate them by prioritizing listening to the interviewees’ perspectives. We report our findings based on the consolidated criteria for reporting qualitative research (COREQ; Tong et al., 2007).

This study obtained ethics approval from the Mildmay Uganda Research Ethics Committee (0501-2024) and Uganda National Council for Science and Technology (SS 2558ES).

Results

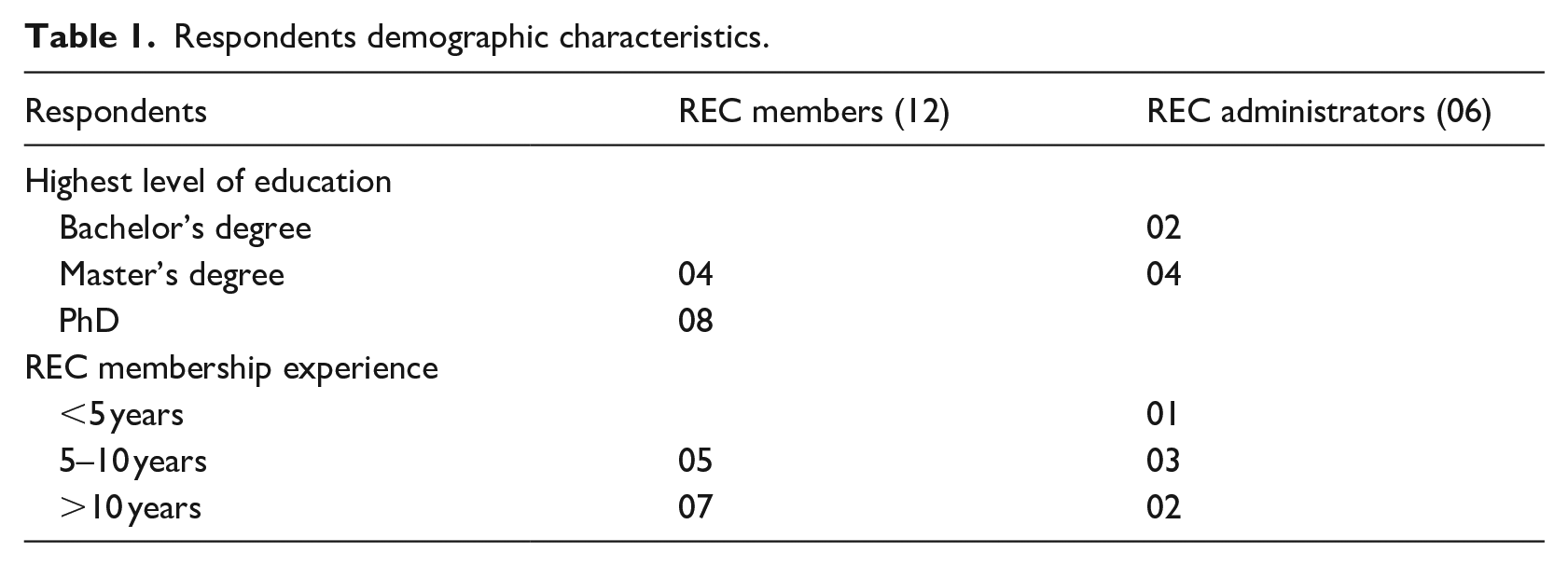

Demographic characteristics

The demographic characteristics of the respondents are presented in Table 1. A total of 18 respondents participated in this study. The majority had more than 5 years’ experience in reviewing health research proposals.

Table 1. Respondents’ demographic characteristics.

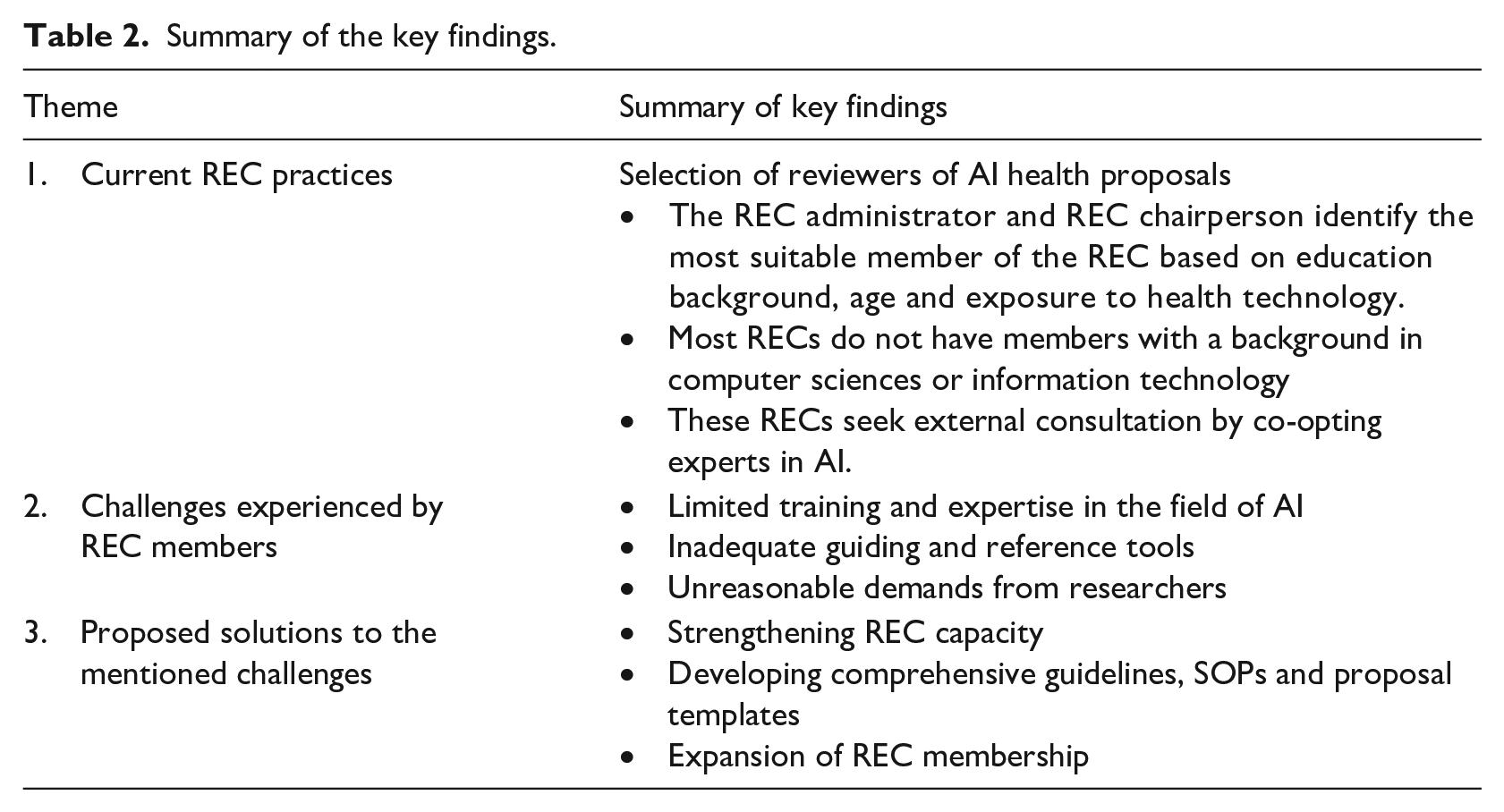

Summary of the themes and key findings

Three key themes emerged from the data collected as described in Table 2.

Table 2. Summary of the key findings.

Current REC practices of reviewing AI health research proposals

Respondents shared their experiences and current practices on how reviewers of AI health studies are selected and the guiding tools used during the review of the proposals.

Selection of reviewers of AI health proposals

The majority of the respondents (15) said that their committees did not have a member with training in computer sciences. Only one of the six RECs had two members with a background in computer sciences, who are often the primary and secondary reviewers of the AI health proposals.

We currently don’t have any member with a background in computer sciences or IT [information technologies]. Maybe because we have received very few AI proposals so far. . . [Administrator 3]

We have two members with a background in computer sciences. We [administrator and REC chairperson] often allocate proposals with an AI component to them. . . One is either the primary reviewer and the other is the secondary reviewer [Administrator 6]

For all the six RECs, the REC chairperson and administrator are tasked with selecting the primary and secondary reviewers of AI health proposals. They indicated that their criteria for reviewership are based on the REC members’ exposure to technology, age, field of specialty, and research experience.

The REC chairperson and myself consider certain factors when selecting the primary and secondary reviewers. . . . For example, some of our members are technology-biased and have not fully welcomed the idea of artificial intelligence. They prefer the old traditional way of doing research, the way things were done back then in the 1990s. . . . [Administrator 2]

Some members have a lot of interest in AI and the upcoming technologies in health care and research. We often select such members to review the AI health proposals. [Administrator 3]

Alternatively, the committees seek external consultation through co-opting experts in computer sciences, information technologies and health fields.

Most AI protocols have too many jargon words that we [REC members] also can’t understand. So what we do, we seek out for a computer scientist or an IT person who can understand those things. . . [Administrator 1]

. . . . we also prefer to pair up the reviewers. . . for example if the study is about AI in women health, we shall select the primary reviewer from the REC membership preferably a gynecologist and a secondary reviewer can be co-opted from the field of computer sciences. This helps us to get a better understanding of the risks in the study. . . . because you can’t easily find someone with both qualifications. . . . [Administrator 6]

Challenges experienced by members of REC during review of AI health research

When asked about the challenges experienced when reviewing AI health proposals, respondents pointed out several challenges as described below.

Limited training and expertise in the field of AI

Almost all REC members opined that limited knowledge, training and expertise in AI and health technologies were their main challenge. Four REC members described struggling to apply the traditional ethical principles when reviewing AI proposals in which the researcher has no direct interaction with participants.

It is very hard to review something you are not well conversant with. . . .it is really hard and takes a lot of time because you constantly have to check the internet, and dictionary. . . I can’t easily tease out the risks that could be involved when there is no direct interaction between the researcher and the participant. . . . [REC member 4]

Six REC members expressed concerns about the uncertainties and unforeseen future risks that could arise during and after the development of the AI tools.

Sometimes the harm that may result from the developed AI products may not be foreseen at the point when the proposals are being reviewed. . ., and yet, we sometimes don’t have time to conduct a monitoring visit and see what’s going on. . . . [REC member 6]

Two other REC members expressed concern about how to balance individual rights and societal benefits.

You see. . . ., most times these AI products are not addressing needs of individuals, but rather societal needs. . .that’s why we talk about “big data”. This means that some individualistic principles may not apply. . . take an example the clash we may experience for group consent to come up with something that will help many people versus individual consent. Sometimes we need to consider social benefit versus individual rights. . . [REC member 10]

I wonder in the context of AI where many third parties that are not known to the participants want to use their data.. How should broad consent look like? Does it still care about the participant’s interest as an individual? [REC member 7]

The majority of the REC members (10) reported that the AI proposal write-ups used unfamiliar technical jargon. They expressed concerns about whether informed consent could be adequately sought from participants.

. . .Me who is educated, I can’t understand certain AI terms. They are too technical. How can you expect a participant to understand that information. . . . [REC member 3]

One REC member revealed that there are misconceptions about the application of AI.

. . .Maybe the challenge here, I am still old school. . . I don’t really understand what AI is and what it is not, what it can do and what it can’t do. . . I may have some misconceptions which need to be addressed before I can fully trust AI things. . . [REC member 9]

The REC members expressed concerns about the limited personnel with experience in ethical guidance of AI. One REC chairperson mentioned that it is sometimes difficult to co-opt a reviewer who is not conflicted.

. . .AI is still new here in Uganda and one time I struggled to find someone who can provide constructive feedback to this [AI research study] proposal. The person I thought about was conflicted, he was part of the investigation team so I couldn’t ask him. The other person I thought about was too busy. . .so we took almost four months to give feedback to the researcher. . . . [REC member 7]

Inadequate guidance and reference tools

The majority of the REC members (11) spoke about absent or inadequate guiding tools, for example the national research ethics guidelines. Most REC members (11) mentioned that they rely on international ethical guidelines to ascertain the risks involved in a study, although these guidelines may not consider local communities’ needs and values.

Our UNCST guidelines do not provide a clear direction on how these research studies of these emerging technologies should be well reviewed. . . . . . So, we often get stuck. . . . [REC member 9]

I know there are many international guidelines and frameworks for ethical AI, but those guidelines are usually not contextualized to our setting. . . . We may pick up a few aspects from them but not much can work well for us. . . . [REC member 12]

None of the RECs had SOPs specific to AI research, despite acknowledging that there are differences in the application of the ethical principles.

One big challenge is that we don’t have SOPs specific to AI research, yet there are differences between the risks exposed to a ‘data subject’ and the risks exposed to a ‘research subject’. . . . [REC member 6]

Four REC members also mentioned that the proposal and informed consent templates shared by the REC administration with researchers are inadequate.

The current templates shared with researchers are generic and not specific to capture the key aspects related to the risks and benefits of AI. . . [REC member 2]

One REC member reported seeking guidance from the internet and not necessarily normative guidance.

. . .I would like to be informed about the risks that AI can present. Because it [AI-research] is still new to us, many guidelines out there may not be meeting our contextual needs. So, I like to use google scholar, even read online articles. . . . I know that some literature out there can be useful to inform me about the harms. . .of course considering risks on a case by case. . . . [REC member 2]

Unreasonable demands from researchers

Two REC administrators mentioned that some researchers in the field of AI have limited experience with the REC processes, while others don’t think they need to have REC approvals prior to study implementation since they have no direct contact with participants.

Some researchers think AI studies present minimal risks to participants and so the review process should not be long. . . . They keep calling me every day asking for feedback. . . [REC administrator 4]

One researcher told me that he does not understand why he needs to secure approvals before he conducts his study because all he needed were datasets and no interactions with patients or participants. . . . [REC administrator 2]

Proposed solutions to the challenges experienced by REC members

Respondents proposed several solutions that may mitigate the challenges experienced by REC members.

Strengthening REC capacity

The majority of the respondents (12) opined that training REC members will equip them with skills and knowledge on how to efficiently provide constructive and timely feedback to researchers in the field of AI.

We need training. . . we need skills. . . we need the knowledge if we have to give constructive feedback to our researchers. . . [REC member 7]

Developing comprehensive guidelines, SOPs and proposal templates

The majority of the respondents (16) mentioned that research regulators should develop well-informed guidelines or frameworks to provide a clear direction on how to determine risks in AI health proposals.

Without having formal guidance, it is also difficult to review proposals very well. . . The UNCST should work together with experts in AI and ethics to come up with guidelines. This will simplify our work especially us who don’t know much about AI. [REC member 2]

In addition, more than half of the REC members recommended developing SOPs and proposal templates specific to AI health research.

We are currently revising our SOPs, it’s the right time to come up with a specific SOP for AI studies. . . . [REC member 10]

Expansion of REC membership

Most REC members also advocated for expansion of REC membership to include computer scientists since AI and other health technologies are increasingly being used in Uganda today.

. . . . We should expand our committees and have a computer scientist or someone from IT (information technologies) to join RECs. The world is changing very fast, we need to become dynamic in how we do things. . . . [REC member 4]

Discussion

This study reports the current practices and the key challenges experienced by REC members when reviewing AI health research proposals. Of interest, the main challenges identified were limited training, experience and expertise, inadequate guidance and reference tools, and unreasonable demands from investigators conducting AI health research. Respondents suggested possible solutions to overcome these challenges.

Members of the RECs acknowledged that they had limited experience in reviewing AI health research proposals and inadequate expertise in applying the ethical principles when reviewing such proposals. In fact, the absence of personnel trained in computer sciences or health technologies not only affected REC activities, but also slowed down the process of obtaining ethical and regulatory clearances, which in turn delays the implementation of research activities. The current practice, in which RECs co-opt experts in AI to provide constructive feedback to researchers, is also challenging, especially when the experts are unavailable or conflicted. Artificial intelligence technology is evolving rapidly and it is important that RECs keep pace (Nebeker et al., 2019). To overcome this challenge, the majority of the REC members expressed willingness to attend trainings and increase their technical competence. Our findings are consistent with the results of a Swiss qualitative study that recommended training of RECs to strengthen their oversight role in research (Ferretti et al., 2022). Continuous training may equip REC members with the skills and knowledge to identify unforeseen risks that could arise during the development and use of AI products and to propose mitigation strategies. In addition, given the current trends of emerging technologies in health research, RECs should consider expanding membership to include individuals with a background in computer sciences and health technologies.

Consistent with the views of the REC members, other studies have reported that insufficient or absent ethical and legal regulatory guidance, national guidelines and institutional standard operating procedures undermine consistency in the feedback given to researchers (Favaretto et al., 2020; Vitak et al., 2017). For example, a qualitative cross-border study that interviewed REC members from 20 countries in sub-Saharan Africa (SSA) reported that the absence of specific ethical guidance for data-intensive health research, such as research involving AI and digital technologies, hinders adequate research oversight (Cengiz et al., 2024). Members of research ethics committees are challenged by the application and interpretation of the existing ethical principles, theories and frameworks when reviewing AI health research proposals, which creates confusion and can result in meaningless feedback to researchers. Moreover, certain factors such as age and exposure to technology may greatly influence REC members’ opinions or judgment toward certain AI study designs, as mentioned by one of the REC chairpersons. As some REC members highlighted, there is a tendency to be more sensitive to some ethical considerations and implications than to others, for example when balancing the application of the ethical principles at the individual level versus the group level. Our findings are similar to those of a study that reported RECs being challenged by the application of traditional ethical frameworks when evaluating big data projects (Ienca et al., 2018). While some REC members rely on the existing traditional research ethics guidelines, online literature and institutional templates, others expressed concern about having to make judgments on a case-by-case basis about the risks that might be presented to the participants and end-users of the AI products.
These diverging opinions might lead to subjective approaches to ethical review, which reduce RECs’ confidence and transparency and thus negatively affect researchers’ trust in the oversight system (Ballantyne and Schaefer, 2020; Dove and Garattini, 2018). In addition, the lack of specific guidance on AI adds to the burden of review because members may have to do extra background research in order to complete their reviews. Respondents opined that developing national guidelines and institutional SOPs that are tailored to the Ugandan context may provide direction to REC members and improve the quality of the feedback given to researchers. The guidelines should also explicitly define the categories of research studies that may receive a waiver of REC review, to reduce the unreasonable demands from researchers.

This study has two main limitations. First, AI health research is in its infancy in Uganda and consequently the authors found only a small number of RECs that had reviewed AI research proposals. Therefore, our study findings may not be generalizable. Secondly, there was a possibility of researcher bias given that three of the authors are REC members. However, most interviews were moderated by a qualitative researcher and a research assistant. SN also moderated some interviews, with REC members who do not sit on the same REC as SN.

Conclusion

Our study reports the main challenges experienced by REC members when reviewing AI health research proposals. Limited training and expertise in the field of AI and inadequate guiding documents hinder constructive feedback to researchers. If not adequately addressed, these challenges may continue to hamper research in low resource settings like Uganda. First, we recommend building the capacity of REC members in the field of AI so that they can efficiently identify the ethical concerns that may harm research participants and end-users of AI products, including policy makers and the public. Secondly, we recommend educating AI researchers about the ethics of AI health research. Lastly, we recommend developing comprehensive guidelines and frameworks that respect individuals’ and communities’ rights, interests and values, and that address the needs of the end-users.

Footnotes

Acknowledgements

This work was done under the fellowship awarded by the Global Forum for Bioethics in Research (GFBR-2023), which received supplementary funding from the NIH/FIC to SARETI, a project of the University of KwaZulu-Natal (UKZN).

Author note

The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Ethical considerations

This study obtained ethics approval from the Mildmay Uganda Research Ethics Committee (0501-2024) and Uganda National Council for Science and Technology (SS 2558ES).

Consent to participate

Written informed consent was sought from each individual prior to participation.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the Fogarty International Center of the National Institutes of Health under Award Number D43TW011240.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data will be made available upon request.