Abstract

One of the key criticisms of the ethical review process is the time taken to decision, and associated resource use. A key source of delay is that most submissions are required to respond to at least one request for further information or clarification from the Human Research Ethics Committee (HREC). This study audited the request letters of a single Australian public health HREC using content analysis. Twenty-four submissions were analysed, including 355 individual request elements. Most submissions received a single request letter. There was a mean number of 14.2 (SD = 5.5) elements per letter for the first request and a mean of 2.1 (SD = 1.2) for subsequent requests. Administrative errors were the most common source of request for further information, occurring in all submissions. The second most common theme was the content of the Participant Information and Consent Form, occurring in 79% of submissions. Other common themes, present in over 50% of submissions, concerned: data collection and study procedures; general ethical considerations; recruitment and consent; site, setting or patient pool; research design and methodology; and data management and security. In terms of the general purpose of the HREC comments, 44% were direct corrections or specific requests for changes, 42% were asking for more information or clarification of existing information, and 14% were the HREC expressing concerns about an element of the study, without directly suggesting a change. Overall, the study provides some evidence to show that the quality of the submission (ensuring correct attachments, up to date documents, clear information etc.) could account for a significant proportion of the burden and delay associated with ethical review.

In many countries, ethical review of human research is mandatory. In Australia, all human research conducted within public health institutions requires ethical review by a certified Human Research Ethics Committee (HREC), known in some countries as an Institutional Review Board (IRB). The primary purpose of ethical review is to ensure the protection of research participants.

Despite this important function, the process of ethical review has attracted significant criticism from the scientific community. Some have argued that mandatory ethical review, resulting in a binary outcome of approval or non-approval, can drive maladaptive attitudes in researchers. Colnerud (2015) contends that it turns the focus away from considered dialogue about ethical issues, towards getting a ‘rubber stamp’ of approval. The ethics review process is often perceived as ‘red tape’ by researchers, or even worse, a barrier to conducting research, rather than an important process for protecting research participants (Burris and Welsh, 2007; Guta et al., 2013). Key criticisms of the process include role creep (Angell et al., 2008; Guta et al., 2013), an increasing regulatory approach (Guta et al., 2013), perceived lack of competence with some research approaches (Colnerud, 2015; Guta et al., 2013), variability between HRECs with the same submission (Coleman and Bouesseau, 2008; Colnerud, 2015; Glasziou and Chalmers, 2004), unnecessary review of scientific merit (Angell et al., 2008; Page and Nyeboer, 2017) and lack of transparency of decision making (Klitzman et al., 2020; Glasziou and Chalmers, 2004; Guta et al., 2013; Lynch, 2018).

However, the key criticism of HRECs is the time for approval and the resource use associated with lengthy approval periods (Barnett et al., 2016; Guta et al., 2013; Maskell et al., 2003). Some commentators have debated the controversial possibility that mandatory ethical review is killing patients because of delays to important interventional research (Christie et al., 2007; Hunter, 2015; Whitney and Schneider, 2011), the assumption being that the time and resources devoted to the ethical review process do not always advance the primary function of protecting participants.

One of the key sources of delays and demands upon resources is that it is uncommon for a decision to be reached upon first review (Cleaton-Jones, 2016; Happo et al., 2017). Most submissions will be required to answer at least one request for further information or provide clarification for the HREC. The researchers then need time to develop a response to the request, which then needs to be reviewed again by the HREC, which can add months to study timelines (Page and Nyeboer, 2017). This is a major factor in lengthy review timelines and extra resource use, meaning that requests for further information are undesirable for both parties.

Quantifying the common reasons that ethics applications are returned to researchers could be useful for understanding reasons why delays are occurring and helping to minimise them. Despite the many criticisms of the HREC review process, few studies have investigated the functioning and evaluation of HRECs in an empirical manner, especially across multiple submissions (Nicholls et al., 2015; Sherzinger and Bobbert, 2017). A significant portion of the literature on this topic takes the form of researcher commentary or case studies that describe review of a single submission/project. This may be, in part, because HRECs can be reluctant to participate in research which evaluates their activities (Klitzman et al., 2020).

Several studies have investigated common reasons for request of further information from multiple submissions to HRECs across a range of countries (see, for instance: Bueno et al., 2009; Butler et al., 2020; Cleaton-Jones, 2016; Martín-Arribas et al., 2012; van Lent et al., 2014), but none have been undertaken in the Australian public health setting. In addition, many of these studies analysed comments to a low degree of specificity, often using only 4–10 broad categories like ‘informed consent’ or ‘study sample’.

This study aimed to complete a detailed audit of the reasons for requests for further information across multiple submissions in a single Australian public health HREC. The study aimed to gain an understanding of the common issues, in order to help researchers and HRECs to understand if and how these requests can be minimised, for example through tailored education. It also aimed to provide information on the foci of HREC review, contributing to a better understanding of the role of HRECs in their core mission of protecting the rights of participants.

Methods

Requests for further information letters from an Australian public health service HREC in 2019 were investigated through retrospective audit. This particular HREC reviews most research ethics applications for the health service district, which covers 600,000 residents and includes two major hospitals (790 and 400 beds). Some multisite research occurring in the district may have been reviewed by another HREC through Australia’s national mutual acceptance scheme (New South Wales Health, 2020), and thus would not be included in the study.

Upon first review of an application, the HREC can either approve, reject or send a request for further information to the researcher. For requests for further information, this HREC separates each element of the request into dot points.

Content analysis was used to code each element of the request (usually each dot point) inductively into categories related to the content of the request. Each element was also coded deductively into the following three predefined categories relating to the purpose of the request:

‘More information or clarification’ was used where the HREC’s comment was related to the fact information was not present, or the information that was provided was not clearly understood by the HREC.

‘Concern’ was used where, unlike the above category, the information was present and understood by the HREC, but there was a concern about an aspect of the study. The expected outcome of these queries was usually a justification or change of approach.

‘Correction or request’ was also related to instances where there was concern with present and understood information, but the committee directly required or requested a specific change.

Initial coding was completed in Microsoft Excel by two authors (CB and ST), and all codes were reviewed by all three authors in regular coding review meetings. Coding finalisation was completed by one author (CB) and a subset was checked by the other two authors. Coders were either research ethics professionals, or researchers, with a minimum of 5 years of experience. After coding was completed, codes were summarised quantitatively.

Quantitative information was also collected on number of reviews, and number of elements per request for further information.
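The quantitative summary described above can be illustrated with a minimal sketch. The records, field layout and function names below are hypothetical and are not the study data or the authors' actual procedure (coding was done in Microsoft Excel). The sketch assumes each coded element is stored with its submission, letter number, theme and purpose, and that the reported SD is a sample standard deviation.

```python
from collections import Counter
from statistics import mean, stdev

# Hypothetical toy records, NOT the study data:
# (submission_id, letter_number, theme, purpose)
coded_elements = [
    ("S01", 1, "Administrative errors", "Correction or request"),
    ("S01", 1, "PICF content", "Correction or request"),
    ("S01", 1, "Data management and security", "More information or clarification"),
    ("S01", 2, "Administrative errors", "Correction or request"),
    ("S02", 1, "Administrative errors", "More information or clarification"),
    ("S02", 1, "Recruitment and consent", "Concern"),
]


def pct(count, total):
    """Percentage rounded to one decimal place."""
    return round(100 * count / total, 1)


def summarise(elements):
    """Summarise coded request elements by theme, purpose and letter size."""
    n_elements = len(elements)
    n_submissions = len({sub for sub, _, _, _ in elements})

    # Number of request elements falling under each theme.
    theme_counts = Counter(theme for _, _, theme, _ in elements)
    # Number of distinct submissions in which each theme appears at least once.
    theme_subs = Counter(
        theme for _, theme in {(sub, theme) for sub, _, theme, _ in elements}
    )
    # Number of request elements in each purpose category.
    purpose_counts = Counter(purpose for _, _, _, purpose in elements)
    # Elements per first request letter, one value per submission.
    first_letter_sizes = list(
        Counter(sub for sub, letter, _, _ in elements if letter == 1).values()
    )

    return {
        "theme_pct_elements": {t: pct(c, n_elements) for t, c in theme_counts.items()},
        "theme_pct_submissions": {t: pct(c, n_submissions) for t, c in theme_subs.items()},
        "purpose_pct": {p: pct(c, n_elements) for p, c in purpose_counts.items()},
        "first_letter_mean": mean(first_letter_sizes),
        # Sample SD is an assumption; the source does not say which SD was used.
        "first_letter_sd": stdev(first_letter_sizes),
    }


summary = summarise(coded_elements)
print(summary["theme_pct_submissions"])
print(summary["purpose_pct"])
```

Keeping "share of all elements" and "share of submissions" as separate tallies mirrors the two ways themes are reported in the Results, since a theme can appear in every submission while still making up a minority of all request elements.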

Results

It was originally intended that 12 months of data would be analysed (approx. 100 submissions); however, withdrawal of management support for the project meant that only 24 consecutive submissions could be analysed. These data were nonetheless rich enough to complete the analysis, although the small sample size may limit interpretability. There was minimal crossover in investigator teams in the sample. Out of a total of 117 investigators, one was Principal Investigator on two projects, and three were Associate Investigators on two or three projects.

Of these 24 submissions, all had requests for further information after initial review. Eighty-three percent had only one request for further information from the HREC, while 17% had more than one request, which included administrative requests (one study had an email administrative request prior to HREC review, and three had further, mostly administrative requests after HREC review). There was a mean of 14.2 (SD = 5.5) elements per request for the first request for further information. Subsequent requests had substantially fewer elements, with a mean of 2.1 (SD = 1.2).
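As a quick consistency check, the reported percentages can be related back to whole submissions. The sketch below assumes that the four submissions mentioned in the parenthetical (one plus three) are the ones that received more than one request. The count of 20 single-request submissions is inferred from that assumption and is not stated directly in the text.

```python
total_submissions = 24

# One study had an administrative request before HREC review and three had
# further requests after review (assumed to be four distinct submissions).
multiple_requests = 1 + 3
single_request = total_submissions - multiple_requests  # inferred, not reported


def whole_pct(count, total):
    """Percentage rounded to the nearest whole number."""
    return round(100 * count / total)


print(single_request, whole_pct(single_request, total_submissions))
print(multiple_requests, whole_pct(multiple_requests, total_submissions))
```

Under that assumption, 20 of 24 and 4 of 24 submissions round to the reported 83% and 17%.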

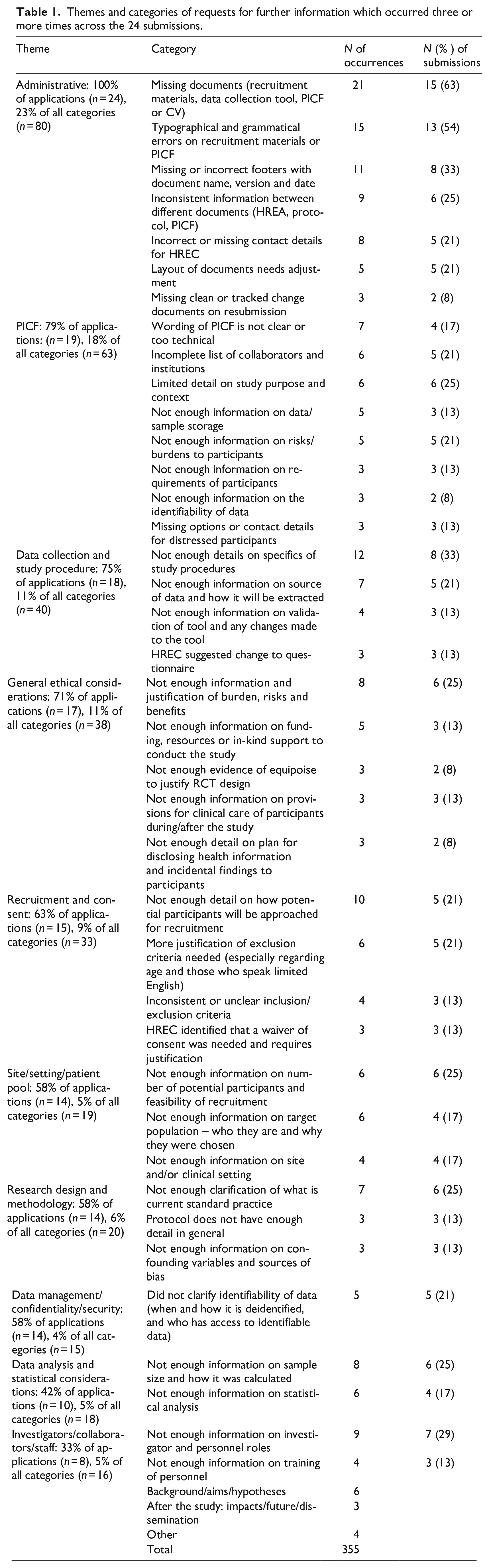

In total, there were 355 elements of requests across 24 projects. Table 1 shows all broad themes and categories with three or more occurrences across the 24 submissions. Data for all categories can be requested from the authors.

Table 1. Themes and categories of requests for further information which occurred three or more times across the 24 submissions.

Administrative errors were the most common source of request for further information, occurring in 100% of submissions and making up 23% of all request elements. The most frequent form of administrative error, which appeared in 63% of projects, was missing documents, most commonly missing recruitment materials (6) and data collection tools (6), followed by Participant Information and Consent Forms (PICF: 4), and CVs (3). The second most common administrative error, occurring in over 50% of projects, was typographical or grammatical errors in participant-facing documents like recruitment materials and the PICF. The next most frequent error was a missing or incorrect footer. Other common errors related to inconsistent information between different documents, and incorrect/missing HREC details on the PICF.

The second most common theme was the content of the PICF, occurring in 79% of submissions and comprising 18% of all elements. The most common issues were that the wording of the PICF was unclear or too technical, there was an incomplete list of collaborators or institutions, or limited detail on study purpose and context.

Other common themes, present in over 50% of submissions, concerned: data collection and study procedures; general ethical considerations; recruitment and consent; site, setting or patient pool; research design and methodology; and data management and security.

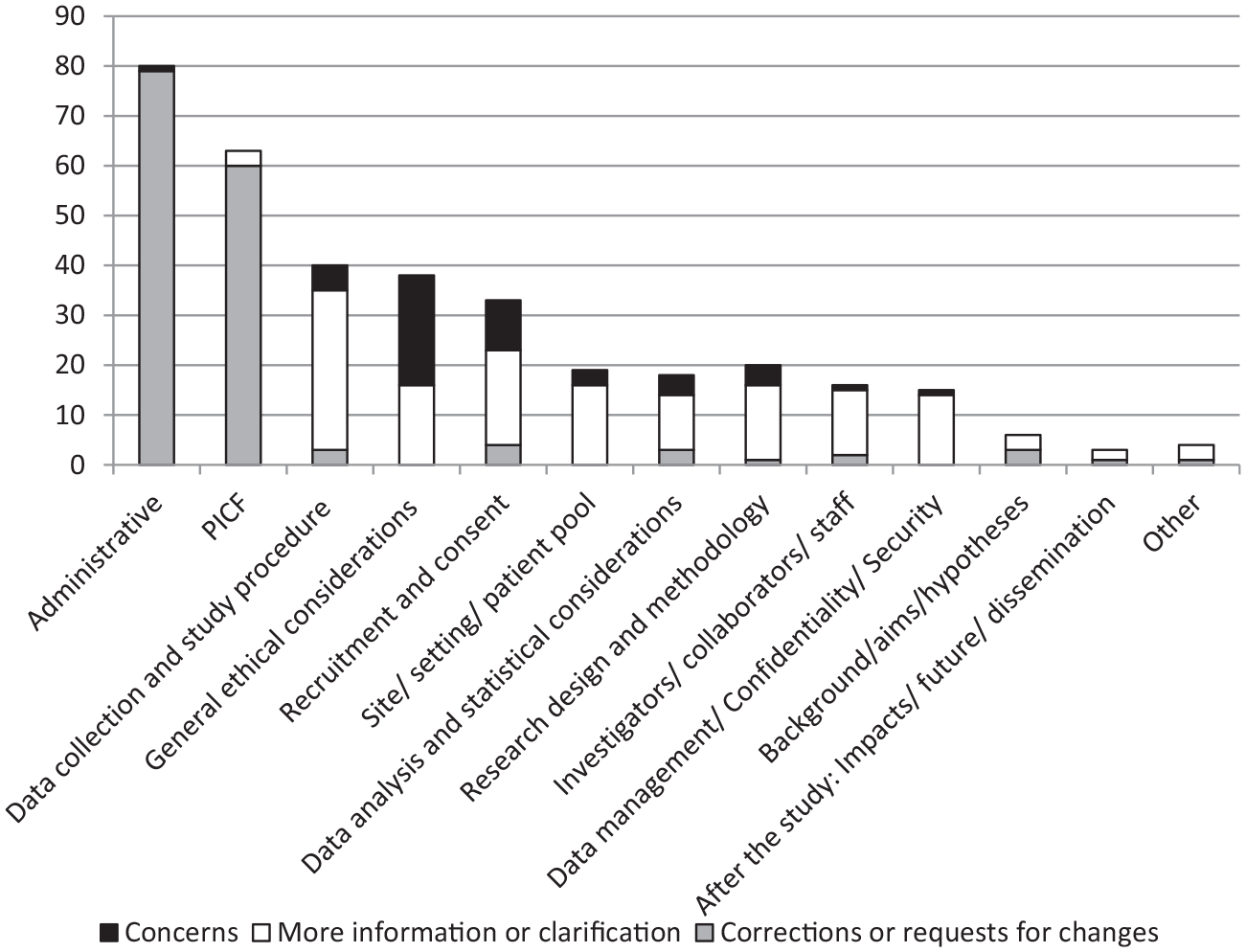

In terms of the general purpose of the HREC comments, 44% were direct corrections or specific requests for changes, 42% were asking for more information or clarification of existing information, and 14% comprised HREC concerns about an element of the study, without directly suggesting a change. Notably, for comments related to administrative or PICF themes, the purpose was generally to make a correction or request a change (Figure 1).

Figure 1. Frequency of general purpose of HREC comments, separated by broad theme.

Discussion

The mean number of reasons for return per letter (14.2) was substantially higher than Bueno et al.’s (2009) mean of 2.2 per letter and Martín-Arribas et al.’s (2012) median of 4. The reasons for this large difference are unclear and may relate to differences in the nature of the submissions or in ethical review standards and processes between sites. Importantly, this study did not investigate the value of the requests in protecting participants, so the usefulness of having a more detailed review is unknown (Angell and Dixon-Woods, 2009; Butler et al., 2020).

One of the main findings of this study was that administrative issues, such as missing documents or textual errors, were common. This finding is reflected in other studies (see, e.g. Angell and Dixon-Woods, 2009; Bueno et al., 2009; Butler et al., 2020; Cleaton-Jones, 2016; Davies, 2020; van Lent et al., 2014). As raised by Angell and Dixon-Woods (2009), it is unclear whether administrative corrections are helpful in advancing the core remit of HRECs to protect participants, or act as one of the contributors to researchers opining that HRECs are ‘nit-picky’ and focused on controlling elements of little importance (Burris and Welsh, 2007).

However, many of the administrative corrections noted in this study were related to missing documents, which are of obvious importance to thorough ethical review. This supports the idea that many elements of requests for further information from HRECs can be easily avoided when the researcher submits high quality, complete documentation in the first instance (Page and Nyeboer, 2017). In the (anecdotal) experience of the authors, researchers sometimes submit documents which are of lower quality in order to get their submission in the system as early as possible, with the expectation that errors will be picked up upon review. In Australia, the national guiding document on ethical review is currently being revised to address this (NHMRC, 2020), with the sentence ‘Researchers should be aware that the submission of poor quality proposals for review may delay the review, ethical approval and/or institutional authorisation process, with consequent impact on potential participants in the research or the community’. However, it should be noted that every submission in this study also had requests related to other categories, so the absence of administrative errors would not have removed the need for a request for further information.

The next most common category related to issues with the PICF, a finding again reflected in many similar studies (Bueno et al., 2009; Butler et al., 2020; Cleaton-Jones, 2016; Martín-Arribas et al., 2012; van Lent et al., 2014). This is unsurprising, given the mandate of ethics committees to protect the rights of human participants, a key element of which is informed consent. Standardised PICF templates are useful in avoiding many issues although, in this case, these were already in use by this health service district. Other options to help minimise PICF-related issues may be specific education or guidance materials for developing PICFs, increasing awareness of the importance of the PICF, and a research culture which values health consumer engagement.

The purpose of most HREC requests was either for more information and clarification of existing information, or direct requests for changes. Cleaton-Jones (2016) also found that missing information, discrepancies and ‘slip ups’ (small errors requiring correction) accounted for a large volume of requests, with missing information an issue in almost 50% of submissions. These findings imply that some submissions lacked sufficient information or presented it unclearly, and that some requests for further information could be avoided with detailed, well-written protocols. However, a key complaint from researchers is that ethical review is a ‘black box’, and it is unclear what the HREC requires from them (Fitzgerald et al., 2006; Page and Nyeboer, 2017). Thus, both researchers and HRECs may have a role to play in avoiding requests related to insufficient information or lack of clarity. Our health service and others in Australia have found value in providing a protocol template alongside the national ethics form. However, as it is designed for all research, it only serves to ensure the basic protocol sections are included and could be more specific for different research designs.

Also of note, most of the direct requests for changes were related to administrative errors and changes to the PICF. The HREC rarely gave direct suggestions for changes on other matters like methodology, data collection and recruitment. On one hand, this appears to contradict the view held by many researchers that HRECs overstep their role in ethical review by requiring or requesting that changes be made to the scientific design of the study (Angell and Dixon-Woods, 2009; Angell et al., 2008). On the other hand, some researchers feel the responses from HRECs are vague and unhelpful, and would prefer direct advice on how to address the issues (Colnerud, 2015).

Limitations

An important limitation of this study is that it did not investigate the perceived or actual value of the requests in improving the quality of a study or ensuring the protection of participants. A similar study (Butler et al., 2020) also analysed the researchers’ responses to the HREC and found that 72% of the researchers had made changes to their protocol based on the review. However, another study (Wynn et al., 2014) found that of those researchers who had been recommended significant modifications to their protocol, only 35% felt the changes were helpful to the quality of their research, 40% regarded the changes as neutral, and 25% as actually detrimental to the quality of their research. In terms of whether the changes would protect the welfare of the research participants, only 20% gave a positive response; 10% said the changes requested were detrimental to protecting the welfare of participants and 70% were neutral (Wynn et al., 2014). It appears that from the researcher’s perspective, the changes made as a result of requests for further information could have low value in improving either research quality or protection of participants. However, this needs to be investigated more thoroughly, and from more perspectives, including a health consumer perspective. The study was also limited in that it did not collect information on time to approval, which could have provided useful information on the impact of requests for further information on study timeframes.

It should be noted that the generalisability of these results is limited by the small sample size, and the fact that all applications were to a single Australian HREC. In the sample of 24 submissions, there was a variety of research designs and research populations, but there were insufficient data to determine any trends related to these variables. Future research in this area should endeavour to sample more submissions across multiple sites.

Conclusions

Overall, this study provides some evidence to show that the quality of the submission (ensuring correct attachments, up to date documents, clear information) could account for a significant proportion of the burden and delay associated with ethical review. Many of the issues raised were administrative or a result of unclear or insufficient information, rather than principal ethical concerns. As requests for further information negatively impact review times and resource use, it is important to develop ways to minimise them and remain focused on elements which are directly related to the protection of participants.

Page and Nyeboer (2017) proposed that we need change and education at three levels: the HREC, the institution and the researcher. While the literature has placed a lot of focus on the first two, there has been less focus on how researchers might minimise issues related to the quality and completeness of the submission. Possible ways of improving this range from very simple, for example, checklists of administrative requirements, to more complex, like improved ethics training for researchers, or more specific guidance from HRECs or national ethics bodies (for instance, standard guidance on data management/storage for the institution).

The results of this project will be used locally to guide the education of researchers. However, they may also be relevant more widely, through the creation of a coding frame for other HRECs who wish to conduct a similar audit, and a set of results for comparison. In broad terms, this study supports the notion that it is the responsibility of both researchers and HRECs (which are, after all, mostly made up of fellow researchers) to work together to improve the process and minimise requests for further information, for the benefit of both parties and society in general.

Footnotes

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.