Abstract

While empirical studies of research ethics and integrity are increasingly common, few have aimed at national scope, and even fewer at current results from Central and Eastern Europe. This article introduces the results of the first national research integrity survey in Estonia, which included all research-performing organisations in Estonia and was inclusive of all disciplines and all levels of experience. A web-based survey was developed and carried out in Estonia with a call sent to all accredited Estonian research institutions. The results indicate that the vast majority (89%) of respondents consider research ethics and integrity issues important and view falsification, fabrication, and plagiarism (FFPs) as the most severe forms of misconduct. Self-reporting of FFPs (6.2%) is generally comparable to levels published in other studies. Gift authorship (41%) and hampering the work of a colleague (32%) were the problematic practices most often noticed among colleagues. At the same time, two of the noticed questionable research practices (QRPs) – salami-slicing and misuse of research funding – were seen as less severe, hinting at the existence of counter-norms that career advancement rules and structural factors like funding policies may encourage. The availability of research ethics and integrity guidelines was considered good. Ethical aspects of studying potentially stigmatising data in a very small research community are discussed in the article and results are analysed through counter-norms and normative dissonance frames.

Introduction

Adhering to standards of research ethics and integrity (REI) is a prerequisite for good science. Shared values such as honesty and transparency underpin general prevailing societal norms, but REI is also central to ensuring mutual trust between society and the scientific community, as well as within the scientific community itself (ALLEA, 2023; Resnik, 2011). Ethical research underpins the epistemic goals of science, and shared norms are a prerequisite for a successful research career as they provide a basis for ethical conduct and enable cooperation (National Academies of Sciences, Engineering, and Medicine, 2017).

In our changing world, science is also changing, and this dynamic is reflected in developments in REI. Innovative forms of collaboration, interdisciplinarity, methodological diversity; the rise of open data and data sharing practices as part of open science; new regulations on research (e.g. the EU General Data Protection Regulation) as well as new challenges (e.g. the replication crisis); the explosive growth in the number of scientific publications; and online publication practices are all trends that have been around for some time and influence how, with whom, and what is researched.

Research ethics pertains to adherence to codes of conduct and guidelines, especially regarding the protection of research participants, as well as methodological transparency and responsibility. Research integrity addresses the broader ethical duties of researchers as professionals – towards other researchers, research participants and society (Iphofen, 2020; Lõuk and Sutrop, 2023; Science Europe, 2015). Debates are ongoing as to the precise overlaps and differences between the concepts of research ethics and integrity (Kolstoe and Pugh, 2023; Meriste et al., 2016). In this article we use the umbrella term ‘research ethics and integrity’ (REI), although we maintain that RE and RI each have their distinct analytical purposes. Our use of the term REI is related to the broad focus of our national cross-disciplinary study and to pragmatic concerns, as many training formats incorporate both aspects. In addition, in several languages (e.g. Estonian and Finnish) there is just one term (‘research ethics’) to signify both aspects. Attempts have been made in Estonia to find an equivalent to ‘research integrity’ (including within this survey) but no consensus has been reached.

In recent decades, an increasing volume of published research has focused on research ethics, both empirically as well as theoretically (Bouter et al., 2016; DuBois et al., 2013; Ess, 2019; Hofmann et al., 2020, 2023; Horbach and Halffman, 2017; Iphofen, 2017). Research misconduct has traditionally been understood as falsification, fabrication, and plagiarism (FFP) – the three black ‘devils’ of research (Fanelli, 2011). There are, however, other practices that fall into the ‘grey area’, known as ‘questionable research practices’ (QRPs) (Fanelli, 2009). While FFPs have become a standard research focus in studies, there is less consensus in researching QRPs, as they might be less regulated and vary due to disciplinary and methodological differences (Ravn and Sørensen, 2021; Xie et al., 2021). Several authors have emphasised that although FFP has been at the centre of many scandals, QRPs, due to their prevalence, might impact researchers even more (De Vries et al., 2006; John et al., 2012).

At least one meta-study has referred to an increase in misconduct and QRPs in the last decade (Xie et al., 2021). This trend might not be directly caused by an increase in misconduct and questionable practices in research; it may be a result of greater awareness of these issues. The need for REI studies is linked to the realisation that monitoring is important for a better understanding of scientific misconduct as a phenomenon, and for the planning of both national and institutional activities that support good research practices. The ‘bad apple’ paradigm, which assigns blame to an individual, has given way to a more nuanced and contextually informed perception of the elements that facilitate or impede FFP and QRPs (Redman, 2013). Many of these factors concern the role of institutions in supporting and fostering research integrity (Bouter, 2023; Forsberg et al., 2018; Mejlgaard et al., 2020).

The objective of the study was to develop an appropriate methodology and conduct an inclusive, cross-disciplinary survey. The initial choice of topics and preliminary questions were based on selected published national surveys. While the literature on empirical REI studies has burgeoned in recent years, many have focused on particular disciplines, institutions, specific researcher groups, or particular QRPs or threats to REI (e.g. Armond and Kakuk, 2022; Hofmann et al., 2020; Ljubenković et al., 2021; Pupovac et al., 2017). A recent international study offers a comparative view on organisational research integrity policies (Allum and Reid, 2021). In the European research context we found the following recent national surveys serving as a starting point for developing methodology: the Dutch survey provided aspects pertaining to the research environment (Gopalakrishna et al., 2022); the Finnish and Lithuanian surveys had questions for mapping awareness of relevant norms and guidelines, training programmes, values and possible threats to REI (Ozolinčiūtė et al., 2020; Salminen and Pitkänen, 2020); and the Norwegian survey included aspects of evaluating the prevalence of FFP and QRPs (Kaiser et al., 2022).

As elsewhere, the national research integrity infrastructure (e.g. the existence of guidelines, procedures, institutional support systems) in Estonia is continuously developing (see more in Parder et al., 2022 and the Estonian Code of Conduct for Research Integrity 2017). The fact that Estonia is a small country (1.3 million inhabitants) raises additional challenges for the study – from dealing with conflicts of roles and interests to ensuring anonymity of research participants. In addition, we were interested in what are considered to be threats to REI, as well as in mapping the diversity of authorship criteria. Our study also included a section on the awareness and accessibility of REI infrastructure (guidelines, procedures, etc.) as well as training opportunities and experiences.

In this article we present the overall quantitative results of the national REI survey of Estonia and discuss its main outcomes and implications. For unpacking the results, particularly in addressing divergences between research norms and perceptions of misconduct and QRPs, we utilise the frameworks of counter-norms (Mitroff, 1974) and normative dissonance (Anderson et al., 2007). The former is appropriate for assessing the dynamic of changing research norms, as new norms arise beside, and possibly supersede, old ones. The latter indicates more serious REI issues, as norms are seen as important and yet perceived as not being followed.

Methodology

Study design and development

A web-based questionnaire was developed, combining both quantitative and qualitative questions in order to reach as many researchers in Estonia as possible. The questionnaire consisted of 7 sections: (1) background information; (2) authorship criteria; (3) FFP and QRPs; (4) risks for REI; (5) elements of the REI infrastructure; (6) training; and (7) ethical awareness vignettes (not discussed in this article). Sections 4, 5 and 6 were adapted from the Finnish and Lithuanian surveys and modified for the Estonian context. 1

For FFP and QRPs we decided to use the method utilised in the Norwegian survey (Kaiser et al., 2022) of posing three questions per practice. The first question in the set asked about respondents’ attitude towards a particular activity and whether they found it problematic. The second question asked whether they had noticed such behaviour or activities among their colleagues in the past 5 years. 2 The final question asked whether they themselves had practised these activities in the past 5 years.

After compiling our first draft, we included various stakeholders – representatives from the Estonian Research Council, REI advisors, and international experts – to pinpoint the most relevant topics for the Estonian context and to comment on the cognitive and wording aspects of the survey. The questionnaire was developed in both Estonian and English (to accommodate the many foreign researchers and PhD students working in Estonian research institutions). 3

Authorship practices have evolved over time and, especially in recent decades, they have been influenced by the increasing pressure within the academic community to publish and cite extensively. These changing dynamics have prompted researchers to re-evaluate and adapt their approaches to authorship. In the questionnaire, we proposed 10 criteria for determining authorship commonly used in the literature (Breet et al., 2018; ICMJE, n.d.; Uijtdehaage et al., 2018).

The objective of our study was to encompass all academic disciplines and offer a comprehensive national assessment. Due to the discipline-specific nature of several QRPs, we had to restrict their inclusion to manage the questionnaire’s size effectively. This resulted in 13 FFP and QRPs included in the survey (see Table 2).

The questionnaire was validated following Collingridge’s (2023) validation steps: (1) REI experts (10 in total) from different disciplines tested the initial version of the questionnaire; (2) the sampling included the entire research community in Estonia, with the final sample consisting of 354 respondents; (3) the online survey platform generated the initial tables with results, and open questions were anonymised; (4) factor analysis was not conducted (due to the small sample size), but content responses were grouped into sections (except background information and ethical vignettes); (5) the validity of the central topics was checked with Cronbach’s alpha (CA) on items that measured the same aspect of practices.
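To illustrate the internal-consistency check in step (5), the sketch below computes Cronbach’s alpha for a block of items that are meant to measure the same aspect. It is only an illustration under stated assumptions: the function name `cronbach_alpha` and the example scores are hypothetical and are not taken from the survey data or from the authors’ own analysis.

```python
from statistics import pvariance


def cronbach_alpha(rows):
    """Cronbach's alpha for `rows`, a list of respondents' item scores.

    Each inner list holds one respondent's numeric answers to the same k items.
    """
    k = len(rows[0])
    if k < 2 or any(len(r) != k for r in rows):
        raise ValueError("need at least two items and equally long rows")
    # Variance of each item across respondents
    item_variances = [pvariance([r[i] for r in rows]) for i in range(k)]
    # Variance of each respondent's summed score
    total_variance = pvariance([sum(r) for r in rows])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical answers of five respondents to three items on a 1-4 scale
example_scores = [
    [4, 4, 3],
    [3, 3, 3],
    [2, 3, 2],
    [4, 3, 4],
    [1, 2, 1],
]
alpha = cronbach_alpha(example_scores)
print(f"Cronbach's alpha: {alpha:.2f}")
```

Because alpha depends only on a ratio of variances, using sample rather than population variances would give the same value, provided the choice is applied consistently to the items and to the total score.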

Recruitment and data collection

As in the Dutch survey (Gopalakrishna et al., 2022), the inclusion criterion was that the respondents’ workload included at least 8 hours of research work in a full-time work week (at least a 0.2 research position based on self-report). As a result, the sample also included doctoral researchers, who often hold the position of junior researcher, and any other employees in research institutions whose responsibilities may be divided between teaching, administrative work, and research.

Researchers from all accredited research institutions in Estonia were invited to answer the online questionnaire. To compile the sample, the snowballing technique was used, based on the network of REI experts available at the Centre for Ethics at the University of Tartu and the Estonian Research Council. These experts first received an e-mail informing them of the upcoming survey and asking for their cooperation in filling out and further distributing the survey within their institutions. This step allowed potential respondents to get back to us with any questions or concerns they might have had regarding the survey. Data was collected over 3 weeks (15 March to 4 April 2023).

The limitation of this kind of sampling is that since the survey was designed to be completely anonymous, it was not possible to track the respondents and evaluate the response rate. In addition, it has been difficult to estimate the most recent size of the total population of researchers as there are no conclusive statistics about all the people who do research in Estonia. The UNESCO Science Report estimates the number of researchers in Estonia to be 3755 (in 2018), which would make our sample about 9.4% of that population (Schneegans et al., 2021). We can also offer information about the proportion of respondents to overall population size (bearing in mind that Finland has almost twice as many researchers per number of inhabitants as Estonia and Lithuania (Schneegans et al., 2021)). Per 1.3 million inhabitants in Estonia, there were 354 respondents; the Lithuanian survey (Ozolinčiūtė et al., 2020) had 384 respondents per 2.8 million inhabitants, and the Finnish survey (Salminen and Pitkänen, 2020) had 1246 respondents per 5.5 million inhabitants.
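The respondent counts above become easier to compare when expressed on a common scale. The sketch below normalises them to respondents per 100,000 inhabitants, using only the rounded figures quoted in the text; the helper name `per_100k` is hypothetical, and the comparison remains rough because the three countries differ in how many researchers they have per inhabitant.

```python
# Respondents and (rounded) inhabitants as quoted in the text
surveys = {
    "Estonia": (354, 1_300_000),
    "Lithuania": (384, 2_800_000),
    "Finland": (1246, 5_500_000),
}


def per_100k(respondents, inhabitants):
    """Number of survey respondents per 100,000 inhabitants."""
    return respondents / inhabitants * 100_000


rates = {country: per_100k(n, pop) for country, (n, pop) in surveys.items()}
for country, rate in rates.items():
    print(f"{country}: {rate:.1f} respondents per 100,000 inhabitants")
```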

Data analysis

Data analysis began with cleaning up the data tables. The exclusion criteria were the following: (1) we removed all answers where the respondent’s workload was less than 0.2 (33 responses, about 7.6%); (2) we removed all responses (47) where the respondents answered only questions about their background; and (3) we removed responses where fewer than half of the substantive questions were answered. The total dropout rate was about 19%, with the final sample size being N = 354. For quantitative data analysis we used the statistics programme R. All questions were analysed based on different respondent groups: level of education, institution type, position, experience, and research field. We used mainly descriptive statistics, and all differences between groups that are presented in the article were statistically significant at the significance level of 0.05. To test whether groups differed from each other in the distribution of categorical variables, we used mainly the chi-squared test or Fisher’s Exact Test.
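For readers unfamiliar with the two tests, the following sketch shows how they work on a 2×2 table of counts. It is written in Python with the standard library only and is not the authors’ R code; the function names and the example counts are invented for illustration. The chi-squared version here applies no continuity correction, so its p-value can differ slightly from the default output of statistical packages that apply one to 2×2 tables.

```python
from math import comb, erfc, sqrt


def chi2_2x2(a, b, c, d):
    """Pearson chi-squared test (no continuity correction) for [[a, b], [c, d]].

    Returns (statistic, p_value) with 1 degree of freedom.
    Assumes every row and column total is greater than zero.
    """
    n = a + b + c + d
    stat = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # Upper tail of the chi-squared distribution with 1 df: erfc(sqrt(x / 2))
    return stat, erfc(sqrt(stat / 2))


def fisher_exact_2x2(a, b, c, d):
    """Two-sided Fisher's exact test p-value for [[a, b], [c, d]]."""
    row1, col1, n = a + b, a + c, a + b + c + d

    def prob(x):
        # Hypergeometric probability of x in the top-left cell, margins fixed
        return comb(col1, x) * comb(n - col1, row1 - x) / comb(n, row1)

    lowest, highest = max(0, row1 + col1 - n), min(row1, col1)
    p_observed = prob(a)
    # Add up all tables that are no more likely than the observed one
    return sum(
        p for p in map(prob, range(lowest, highest + 1))
        if p <= p_observed * (1 + 1e-9)
    )


# Invented counts: rows = less / more experienced respondents,
# columns = rated a practice "problematic" / "not problematic"
table = (40, 10, 25, 25)
stat, p_chi2 = chi2_2x2(*table)
p_fisher = fisher_exact_2x2(*table)
print(f"chi-squared = {stat:.2f}, p = {p_chi2:.4f}; Fisher p = {p_fisher:.4f}")
```

Fisher’s exact test is usually preferred when some expected cell counts are small, which is plausible for subgroup comparisons in a sample of this size.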

Ethical considerations

Although a survey of this nature does not formally require an ethics review in Estonia, we acquired the approval of the University of Tartu Research Ethics Committee due to several considerations. 4 As the survey included open-ended questions, there was a possibility of incidental findings that might include identifiable information. Due to the very small research community in Estonia, special attention was given to keeping the answers anonymous and decreasing the likelihood of indirect identification. The questionnaire and the survey platform did not ask for or collect any personal data (no directly identifying data, metadata, or IP-addresses were collected). We also did not collect some background information that is often included in similar surveys, for example gender, name of the research institution, specific field, and position. Again, this was due to the danger of indirectly identifying people in the dataset (e.g. there may be only one female professor in field X, or only a very small number of PhD students studying a subject in a particular institution). To minimise the risks, instructions were added to open-ended questions not to include identifiable information, and prior to data analysis one team member anonymised the data from open comments by deleting all the words that referred to specific people or institutions. Both the ethics committee and the survey testing group endorsed the measures implemented to ensure anonymity.

Responding to the questionnaire was voluntary and the respondents could stop filling out the questionnaire at any time. Information about the survey and the rights of the respondents were described in detail at the beginning of the questionnaire.

Results

Respondents

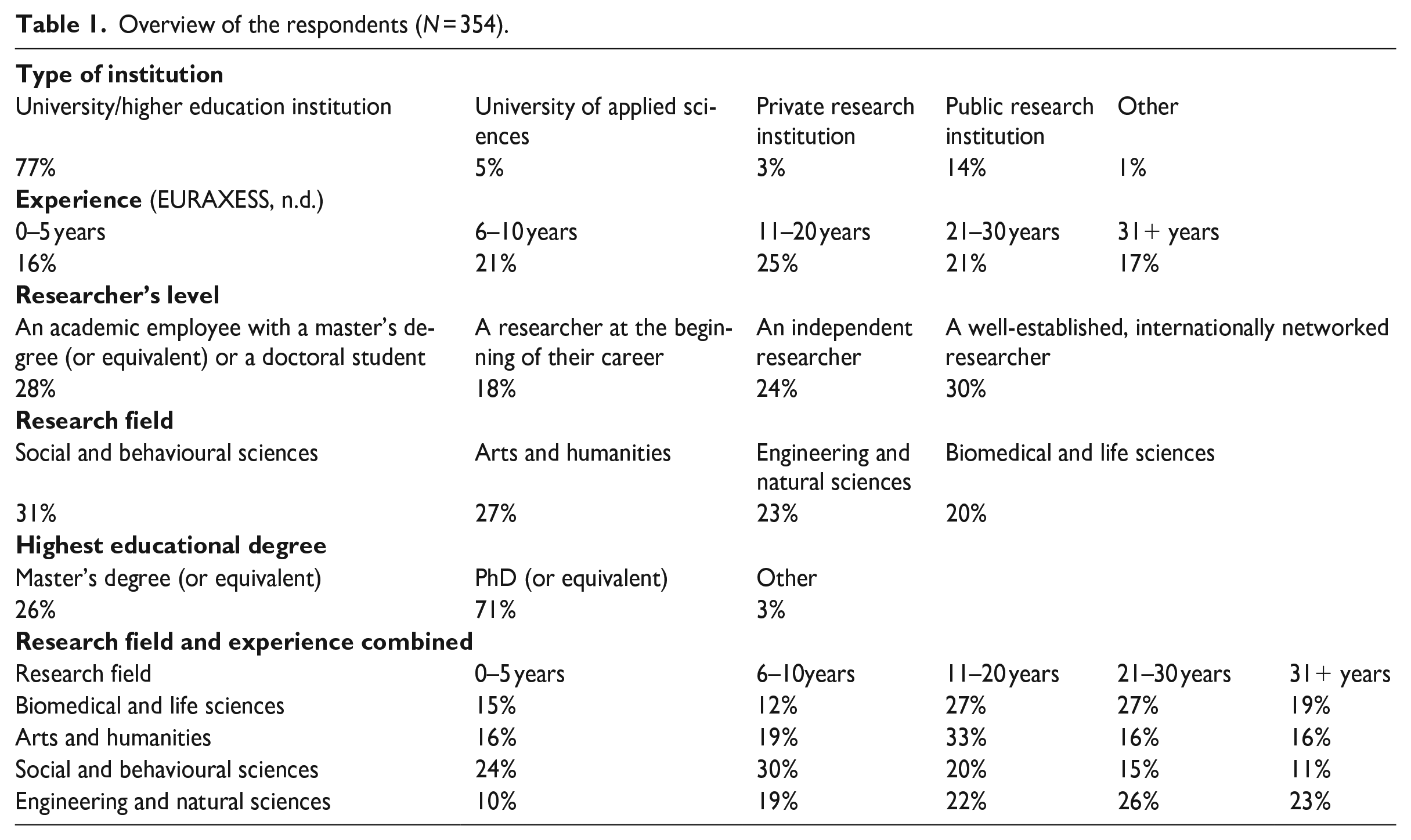

The total sample of the survey included 354 responses. There are altogether 22 accredited research-performing organisations in Estonia, including six public and one private universities/higher education institutions. An overview of the background information of participating researchers is presented in Table 1.

Table 1. Overview of the respondents (N = 354).

Misconduct and questionable research practices

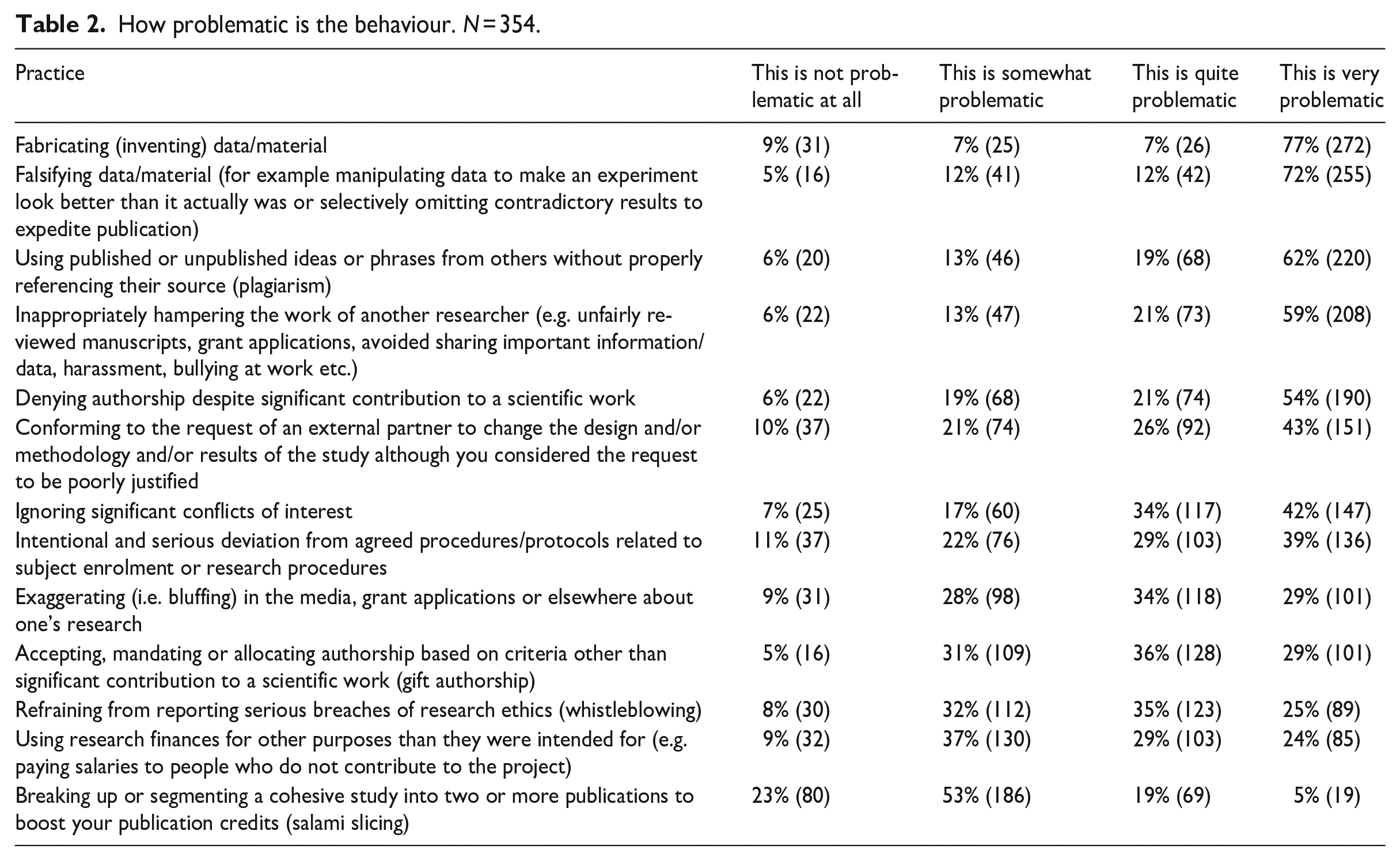

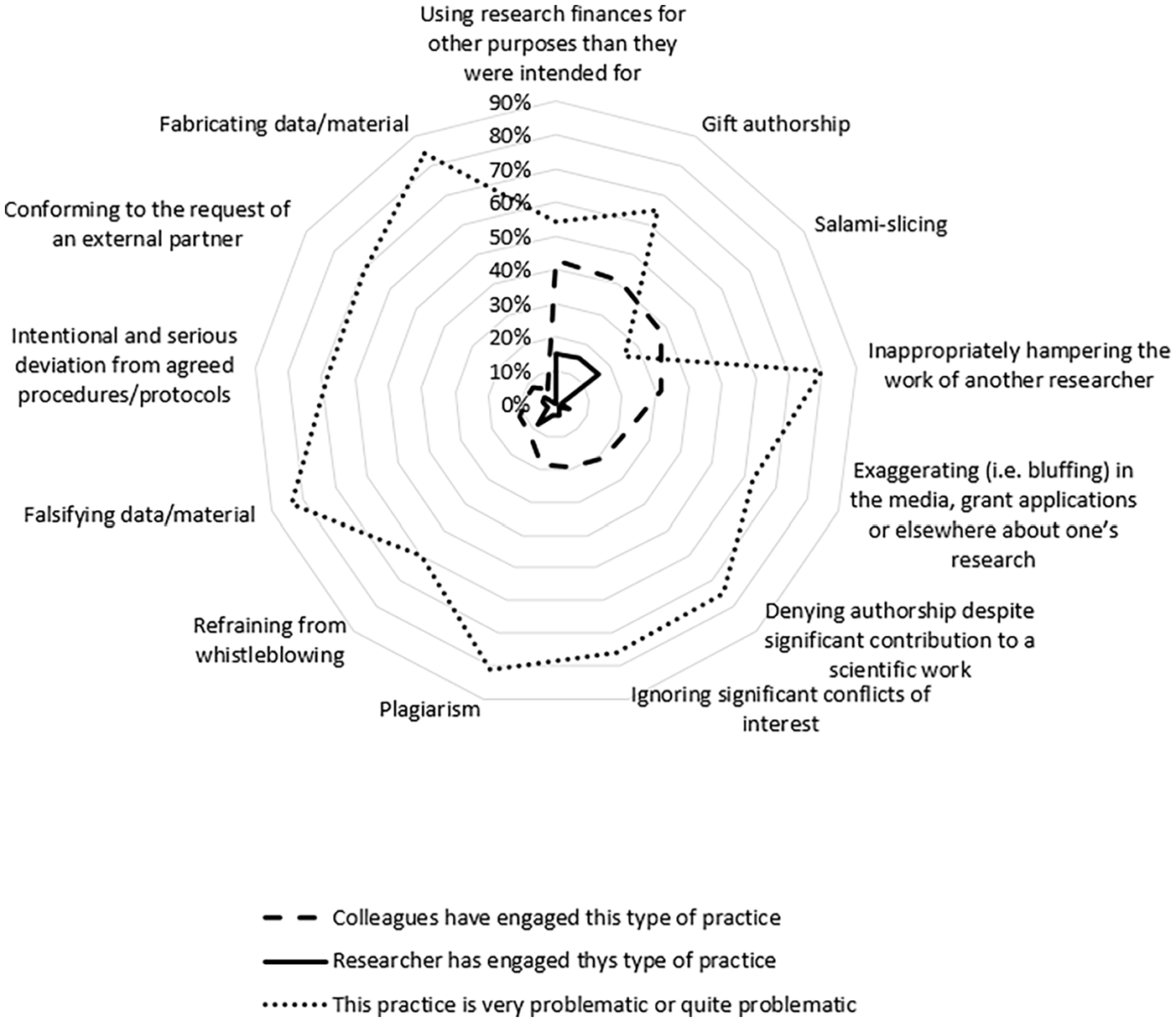

Table 2 summarises how problematic the respondents found FFP and various QRPs. Four activities stood out as being considered very problematic by the respondents: fabrication (77% very problematic), falsification (72% very problematic), plagiarism (62% very problematic) and hampering the work of another researcher 5 (59% very problematic). The three least problematic activities according to the respondents were salami-slicing (23% viewed it as not problematic at all and 53% as somewhat problematic), serious deviation from research protocol (11% not problematic at all), and conforming to the request of third parties (10% not problematic at all). Cross-sectional analysis revealed that younger and less experienced researchers viewed several QRPs as more problematic than other groups did (e.g. refraining from whistleblowing, misuse of research finances, ignoring conflicts of interest).

Table 2. How problematic is the behaviour? N = 354.

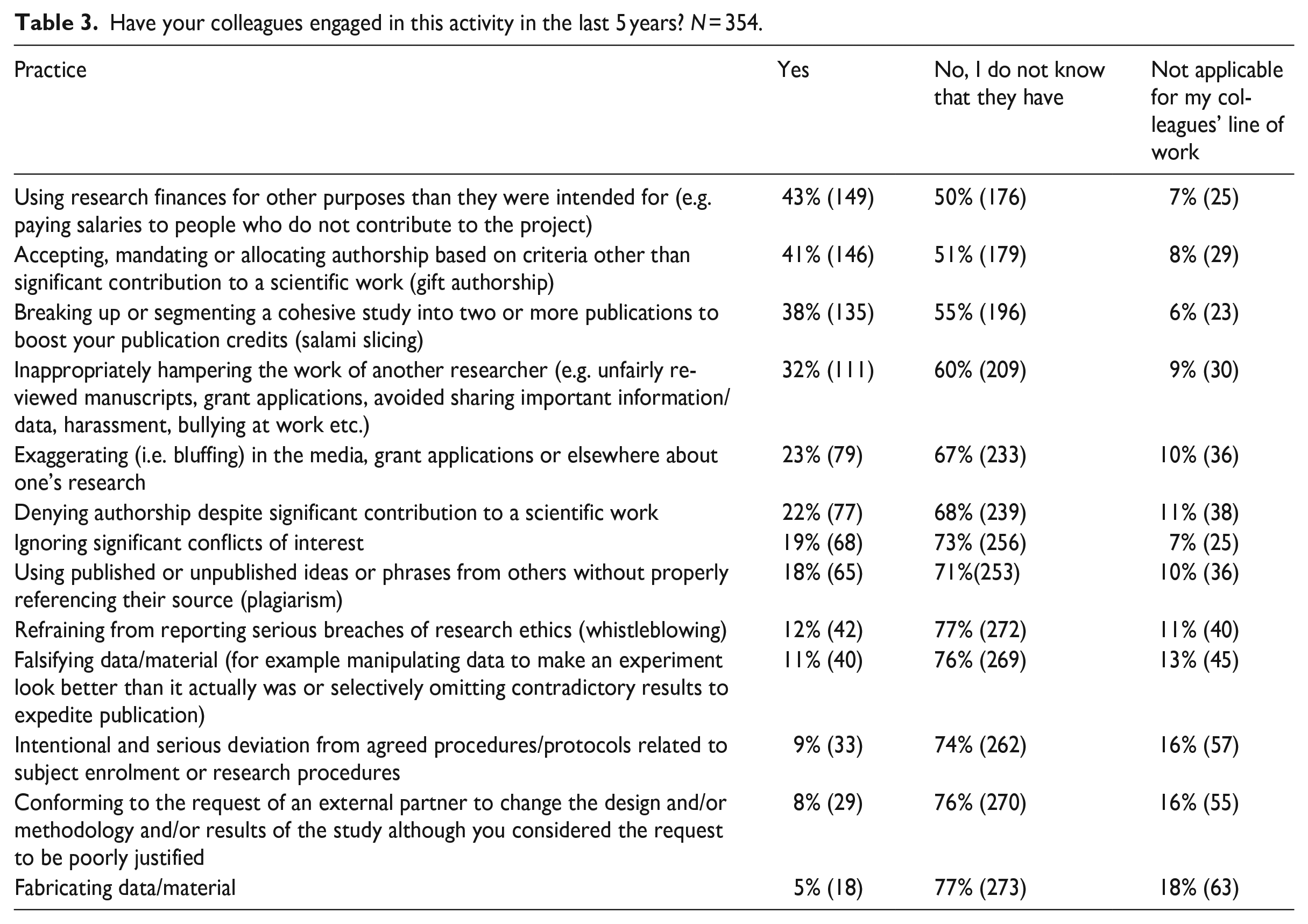

Table 3 summarises the responses to the question ‘Have your colleagues engaged in any of the following FFPs or QRPs over the past five years?’ Here four activities stood out: misusing research finances (43% had noticed this practice), gift-authorship (41%), salami-slicing (38%), and hampering the work of another researcher (32%). In terms of FFP, fabrication was reported in colleagues’ work by 5% of the respondents, falsification by 11%, and plagiarism by 18%.

Table 3. Have your colleagues engaged in this activity in the last 5 years? N = 354.

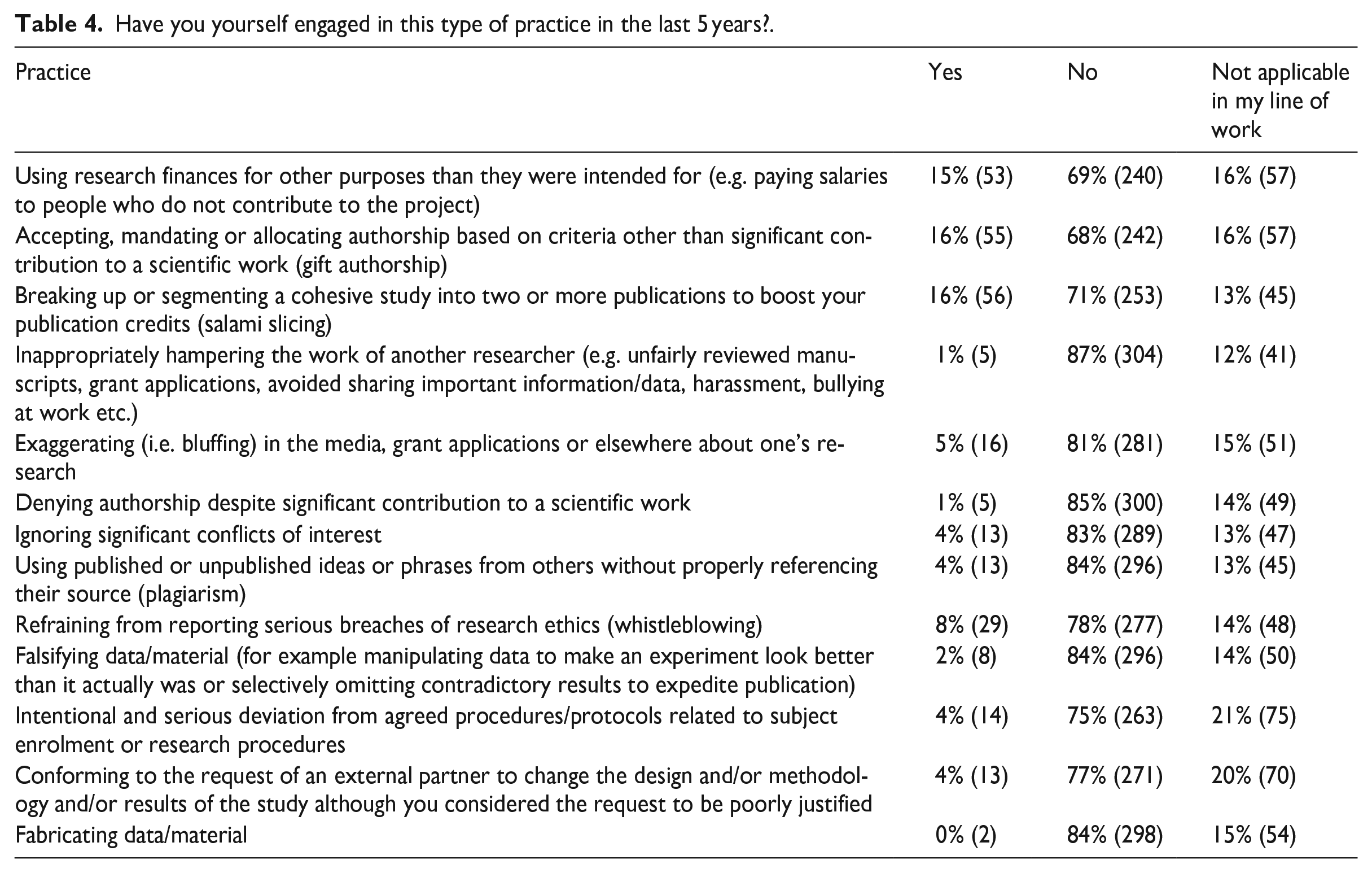

Table 4 summarises self-reported activities and three practices stood out there: gift-authorship (admitted by 16%), salami-slicing (16%), and misuse of research funding (15%). In terms of FFP, fabrication was reported by fewer than 1%, falsification was reported by 2%, and plagiarism by 4% of the respondents.

Table 4. Have you yourself engaged in this type of practice in the last 5 years?

When the results for how problematic an activity was considered, how much it had been noticed among colleagues, and how much it was self-reported by the respondents are combined, several trends can be identified. Firstly, the practices noticed among colleagues and those committed by the respondents themselves largely correspond to each other, the exception being ‘hampering the work of a colleague’, which was observed rather frequently among colleagues (32%) but was barely self-reported by respondents (1% said they had done this in the past 5 years). Secondly, the practices that were considered to be less problematic received more self-reporting – this is especially true for salami-slicing (see more in Figure 1). On the other hand, activities that were considered to be more problematic were self-reported less – this includes falsification, fabrication, and plagiarism, but also hampering the work of a colleague.

Figure 1. Overlap between attitude, behaviour of the colleagues, and self-reported behaviour.

Threats to research ethics and integrity

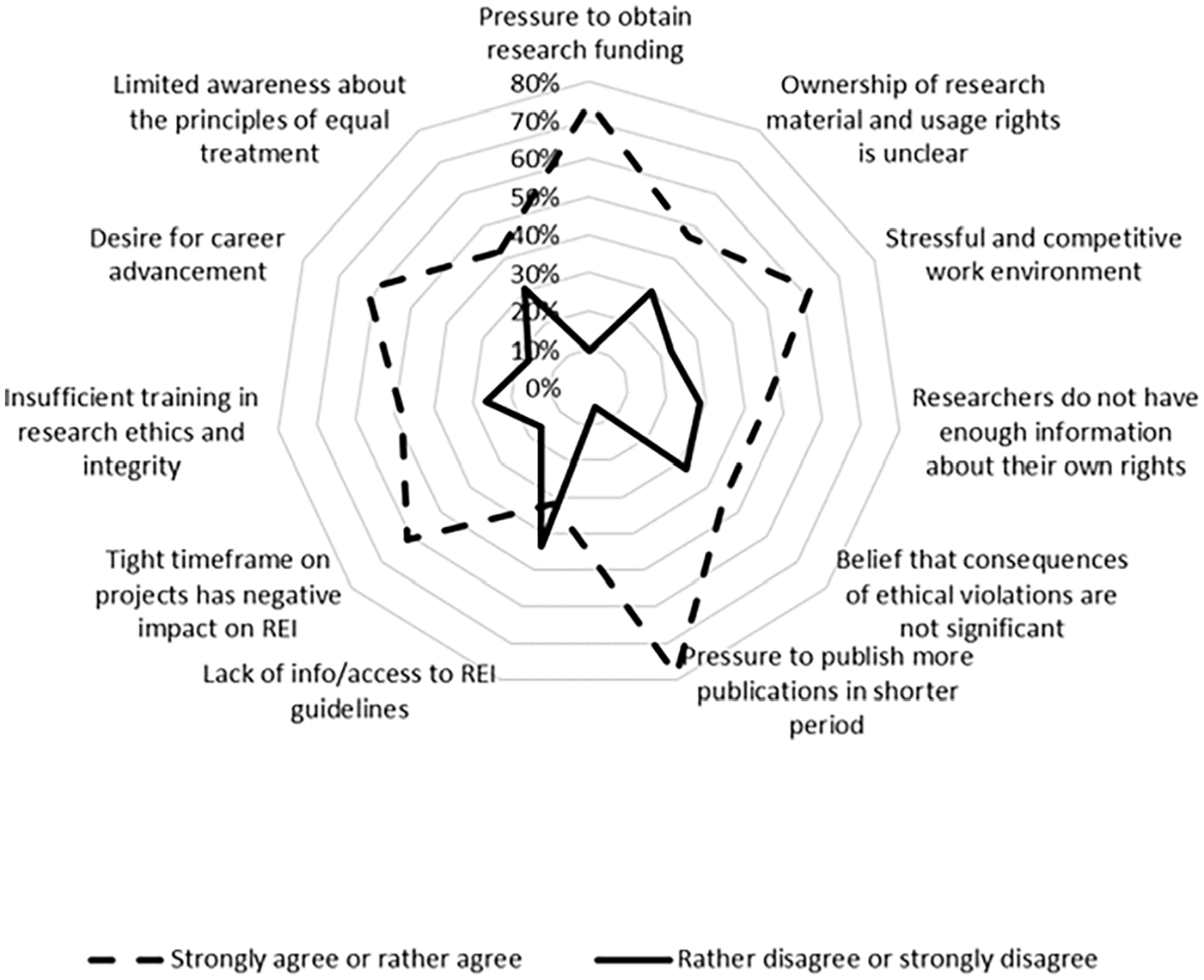

The study also mapped aspects that were considered to be threats to REI in Estonia. Potential threats to REI are shown in Figure 2.

Figure 2. Threats to research integrity, rated on a five-point Likert scale from strongly agree to strongly disagree.

More than half of the respondents identified several factors as significant contributors to jeopardising REI: (1) publishing pressures, (2) funding pressures, and (3) a stressful and competitive work environment. These were closely followed by two further factors: the desire for career advancement and overly tight timeframes for research projects.

Over half of the respondents considered four main factors to be significant in terms of avoiding research misconduct: (1) a trustworthy research team atmosphere that promotes research integrity (87%); (2) the impact of good upbringing and socialisation into shared values (73%); (3) the quality of mentoring (62%); and (4) an institutional system that values research ethics and integrity (57%). Cross-sectional analysis revealed that less experienced researchers valued the availability of guidelines and access to training significantly more highly than experienced researchers.

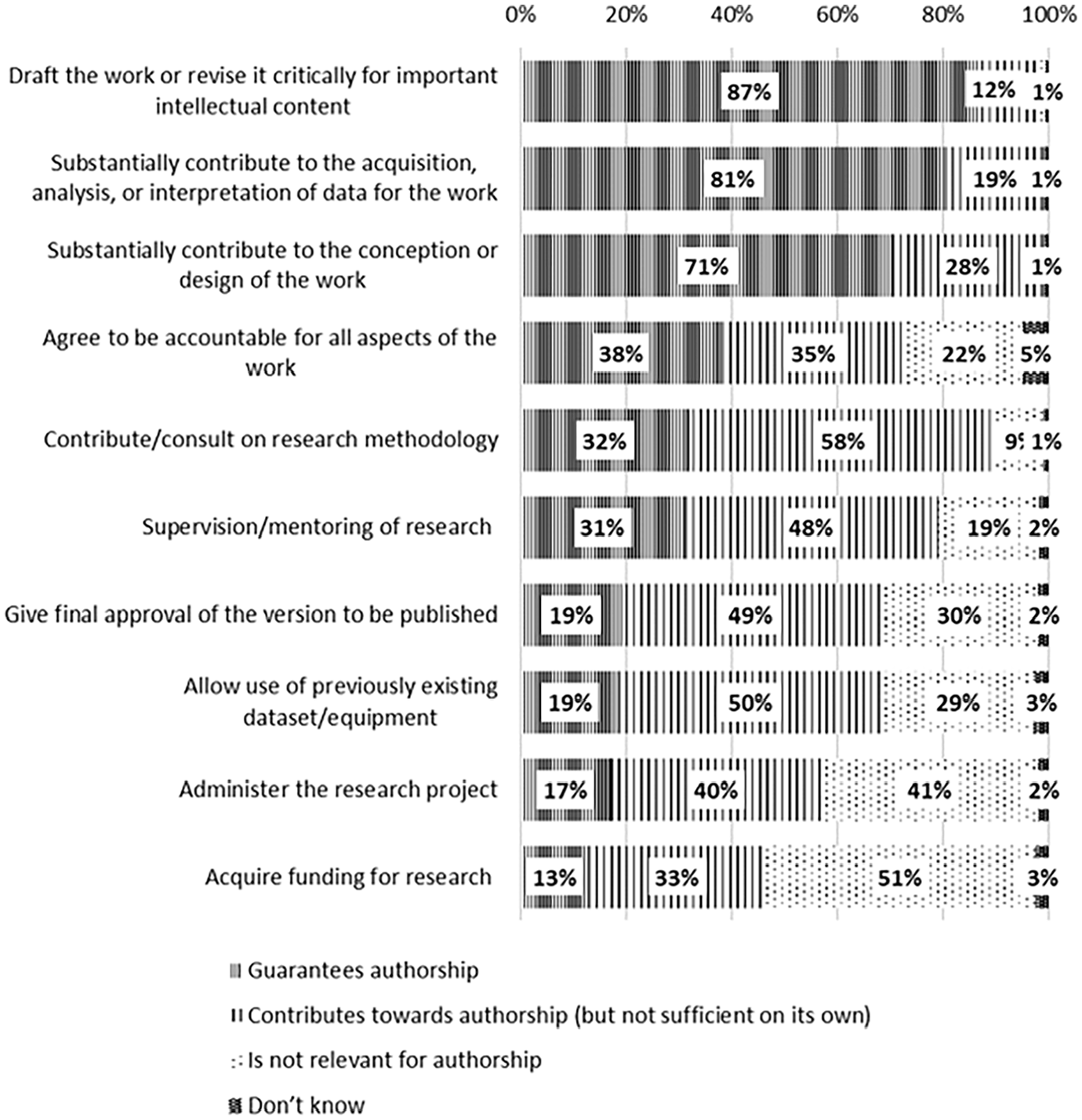

Authorship

The study also investigated perceptions related to authorship criteria. Figure 3 summarises the respondents’ views on the necessary components for becoming an author.

Figure 3. Criteria for becoming an author.

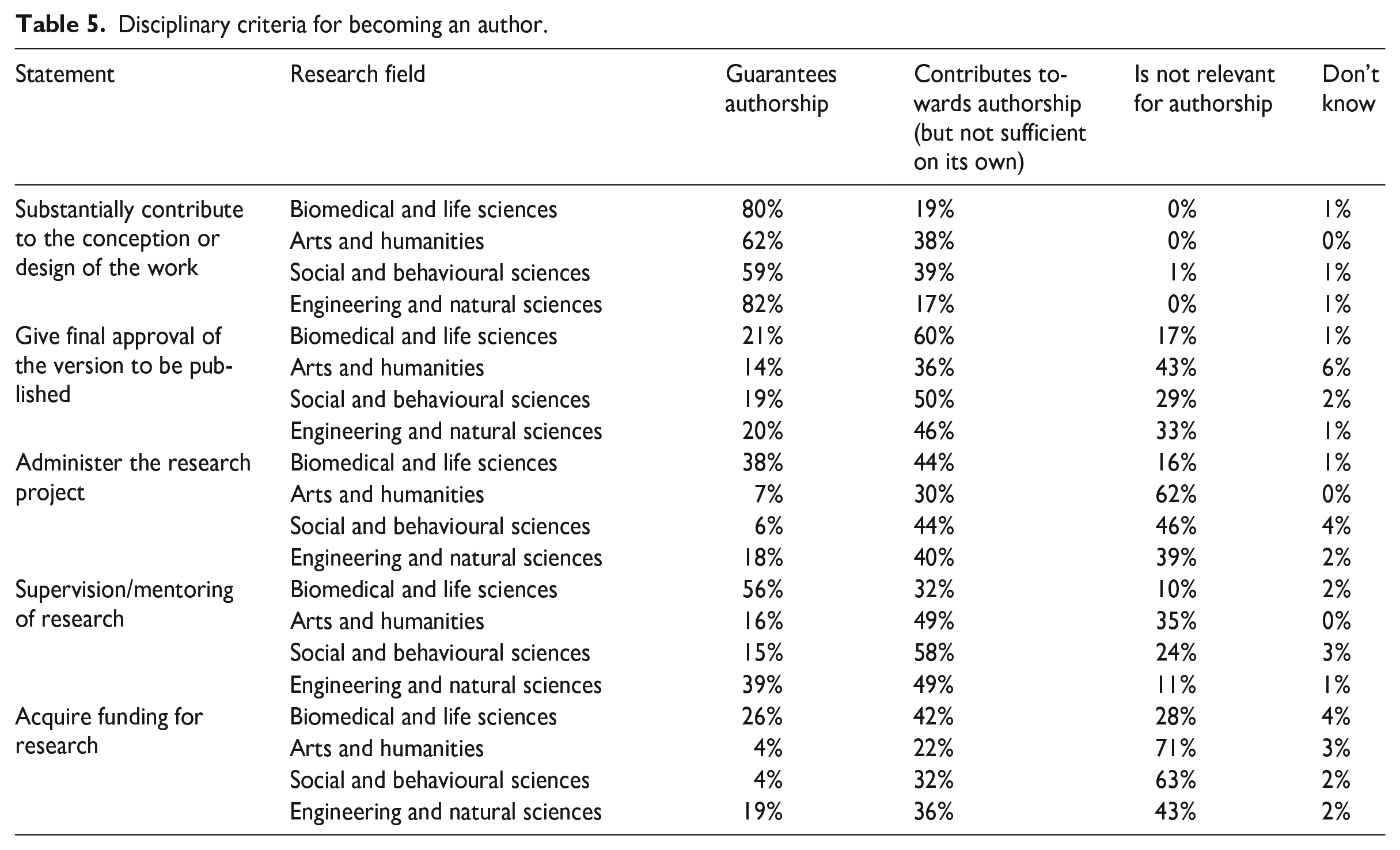

Diverse research practices and methodologies across various disciplines have contributed to the development of distinct viewpoints regarding what constitutes a meaningful contribution to a research article. The survey results allowed us to classify the authorship criteria into two categories: those considered essential for ensuring authorship by most respondents, and those deemed less important. Table 5 presents data about the disciplinary differences between authorship criteria. As an example, in the biomedical field and life sciences, 56% of respondents considered mentoring or supervising to be enough to become an author whereas in the social and behavioural sciences and humanities, only 15% and 16% respectively considered mentoring sufficient to become an author. In general, social scientists and humanities scholars tended to regard research project coordination and ensuring research funding as irrelevant to the authorship of resulting articles.

Table 5. Disciplinary criteria for becoming an author.

REI infrastructure and training

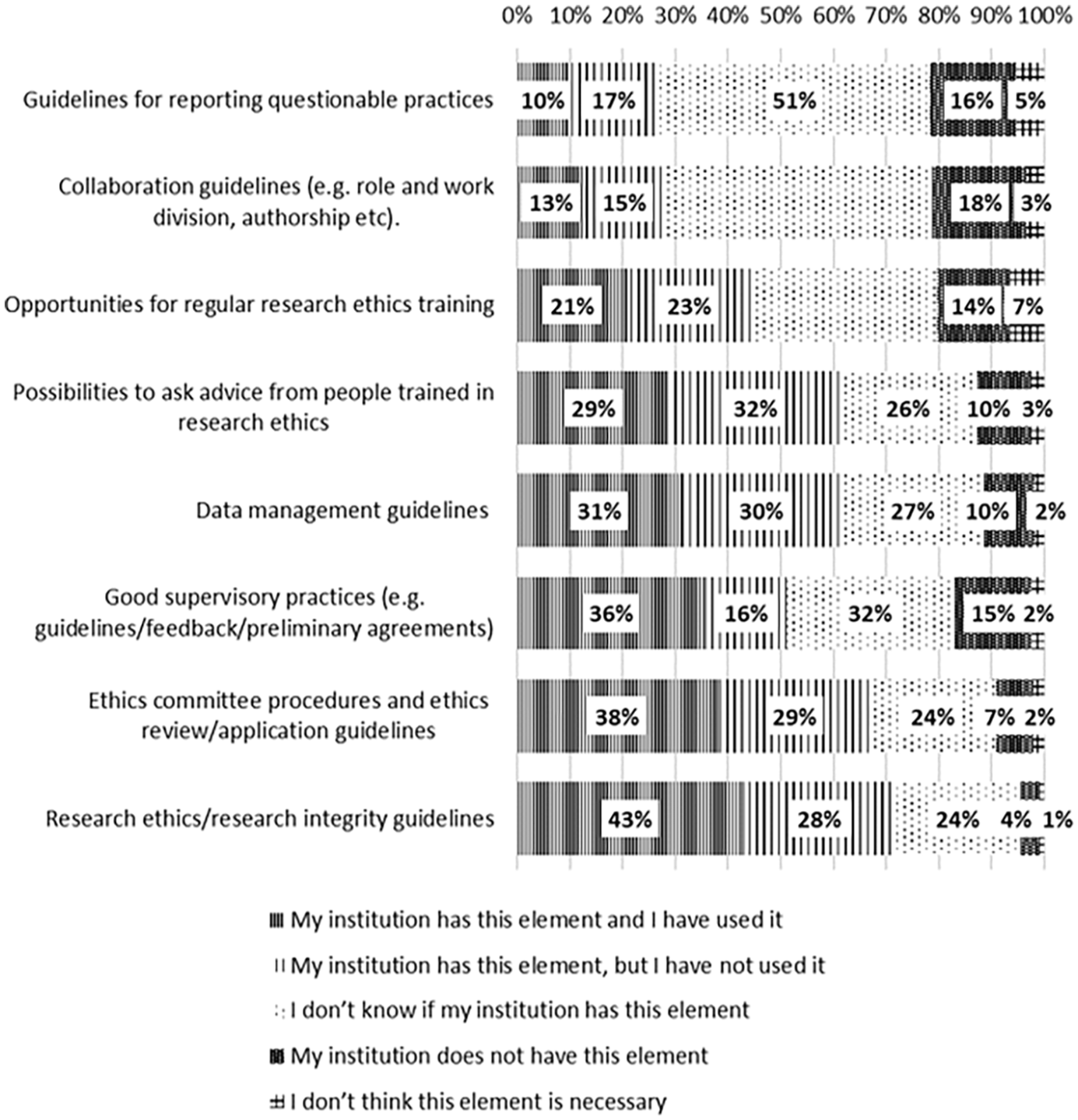

Eighty-nine percent of respondents considered issues pertaining to research ethics and integrity to be important, while 2% thought the topics were not important. In terms of REI infrastructure, the study mapped several elements. We asked whether respondents were aware of the elements of REI infrastructure, and whether they had used them. The results are captured in Figure 4.

Figure 4. Knowledge of REI infrastructure.

The respondents were asked to elaborate why they had or had not participated in REI training. Forty-nine percent of those who had not participated in training said that they had sufficient competence in REI issues. Thirty-nine percent said that training had not been available, and about a quarter said that REI issues were not a priority to them at that moment. Most respondents (75%) who had participated in training said that every researcher must be familiar with the most recent topics of REI, and 38% said that they had felt incompetent when dealing with REI topics. Also, since there is no Estonian equivalent for the term ‘research integrity’, we asked respondents for suitable translations. 6

Discussion

This study mapped the current status of REI in Estonia in terms of research misconduct (both FFP and QRPs) as well as issues related to authorship, research infrastructure, training, and threats to REI. The most prevalent misconduct activities based on self-reporting were gift authorship, salami-slicing, and misusing research finances. An additional activity stood out among colleagues’ practices – hampering of the work of a colleague – an activity that very few respondents reported having engaged in.

The results of the study highlight both structural problems associated with the particularities of the Estonian research system as well as tensions outlined by international research integrity studies (e.g. gift authorship, salami-slicing). The national research funding context can be associated with the relatively high misuse of targeted research funds as a symptom of highly competitive funding in an overall research-resource scarce environment (e.g. lack of resources for teaching in higher education tends to be covered by funding from research grants). In 2020 Estonia dedicated 1.79% of its GDP to R&D, although the policy objective was to reach 3% (SA Eesti Teadusagentuur, 2022). The average success rate in national research funding across disciplines 2018–2021 was 22.9%, with medical research having the highest likelihood of receiving funding at 32.7% and humanities and arts with the lowest at 16.1% (SA Eesti Teadusagentuur, 2022). Further research is needed to validate these explanations. Further research is also needed to study the results around the QRP of hampering the work of a colleague, where a third of respondents claimed to have seen it take place, yet almost nobody admitted to doing it.

International comparisons

Compared to other similar studies on research misconduct, 7 self-reporting of combined FFP in Estonia was 6.2% (those who admitted at least one of the FFPs). International surveys indicate 1.97% (Fanelli, 2009; that survey did not include plagiarism, which amounted to 4% in our survey) or, according to a more recent meta-survey, 2.9% (Xie et al., 2021: 41) of self-reported FFP. In the Netherlands (Gopalakrishna et al., 2022), data falsification was reported by 4.2% and fabrication by 4.3% of respondents, with a prevalence estimate of those two activities at 8.3% (no plagiarism included). The Norwegian survey indicated that salami-slicing was self-reported by 8% of the respondents, while in Estonia the self-reporting rate was 16%. Pertaining to whistleblowing, the Lithuanian survey (Ozolinčiūtė et al., 2020) asked about behaviour after noticing questionable practices and 49% of participants said that they had not reported the case, while in Estonia the rate was 21%.

Respondents were aware of the research integrity infrastructure (guidelines, counselling, training opportunities, etc.), although this did not directly translate into confidence in dealing with matters of REI. This is probably related to the fact that more than half of respondents had not participated in REI trainings. One of the explanations provided by respondents was that there were no suitable trainings available. Comparing our results with those of other similar studies, we can see that in Finland, about 70% know about relevant REI guidelines, about 50% are aware of RI advisors, and 56% have participated in trainings (Salminen and Pitkänen, 2020). In Estonia, 70% of researchers know about REI guidelines, 61% know about RI advisors (29% have used their help), and 44% of people say that training is available. Awareness of REI resources can also decrease, as the recent consecutive Lithuanian surveys have demonstrated (Ozolinčiūtė et al., 2020; Umbrasaitė and Ozolinčiūtė, 2022). Participation in REI training programmes was the lowest in Estonia, with about 60% of researchers not having participated in any training in the past 5 years. In Finland, 35% of researchers have not participated in a training (Salminen and Pitkänen, 2020), while in Lithuania the figure is 41%, and in Norway 37% (Hjellbrekke et al., 2018).

Normative dissonance or counter-norms?

The largest gap between research ideals (i.e. activities considered problematic) and actual practices among colleagues pertained to hampering the work of another researcher and ignoring conflicts of interest. On the other hand, salami-slicing (16%) and misuse of funds (15%) were considered not so problematic (problematic to a degree or not at all problematic) and were relatively widely self-reported; these activities were noticed even more among colleagues (salami-slicing 38%, misuse of funds 43%).

Depending on whether or not the variation between REI norms and actual attitudes or practices is seen as problematic, there are broadly two analytical approaches to unpacking these results. One option is to interpret significant variance between established REI norms and actual practices in the work environment as normative dissonance that arises when ideals and reality misalign considerably, and this is felt and perceived to be a serious problem (Anderson et al., 2007). High normative dissonance has been associated with persistent work stress, and in the study results such dissonance appeared strongest in relation to observed gift authorship practices and the hampering of the work of colleagues.

Another option is to interpret these variations through the frame of counter-norms. The existence of counter-norms has been associated with competitive work environments (Mitroff, 1974) and it characterises the process of changing scientific norms over time (with counter-norms potentially becoming new norms). While counter-norms can be seen as problematic (and thus may contribute towards similar distress as normative dissonance), variance from established REI norms might also be seen as less problematic and more a case of ill-fitting norms (thus more of a formal, rather than a substantive issue of norm variance).

How can one decide whether the variation between ideals and practices is to be seen as negative (normative dissonance) or potentially positive, or at least in neutral terms (as when counter-norms develop into new norms)? Evidence of a counter-norm has been linked to ‘documenting non-trivial levels of support for a principle that is directly opposed to a recognized normative principle’ (Anderson et al., 2007: 5). Since almost a quarter (23%) of respondents did not consider salami-slicing to be a problem, this might be considered a candidate for a rising counter-norm. Significant changes in publishing practices in recent decades (electronic publishing, the increasing importance of peer-reviewed articles compared to books, quantification of research results in career advancement, etc.) have led to the emergence and increasing acceptance of a ‘new norm’ of ‘quantity matters’.

Limitations

One methodological challenge for a national research ethics survey lay in constructing a questionnaire that was cross-disciplinary (i.e. addressing general concerns like publishing pressures, mentoring shortcomings, predatory journals), yet inclusive of a variety of disciplinary or methodologically specific practices. Our survey asked the same questions of each respondent. An alternative would have been to develop separate survey sections and ask respondents to self-identify according to discipline. However, given the extent of interdisciplinary research and the spread of methodological diversity across research fields, such self-identification might have guided respondents into forced choices. Instead, offering the response option ‘not applicable in my line of work’ was seen as a solution.

Given the very small size of the research community in Estonia, we needed to pay extra attention to issues like indirect identification of respondents and potential stigmatisation of disciplines and institutions. Being aware of the difficulties of researching this topic (De Vrieze, 2020), we were conscious of the fact that the first national research ethics survey needed to support the motivation of potential respondents and create trust towards the future collection and exploitation of such sensitive data. Therefore, we considerably decreased the background data that we asked for (no gender or specific institution’s name, experience level rather than position, very broad research field rather than discipline). This resulted in a somewhat less granular dataset while still fulfilling the aim of producing a national overview of the research integrity landscape. Also, the public and academic deliberation that has followed the publication of the official report raises hopes that the topic can be investigated in the future (Harrik, 2023; Oidermaa, 2023).

Our recruitment method did not allow us to determine the response rate; therefore, it is hard to evaluate whether the study was representative of the Estonian research community. As the questionnaire was distributed by snowballing through the REI contacts in Estonia, it was not possible to verify whether the questionnaire was shared in all research institutions. A gatekeeper (e.g. the head of a faculty or institute) may have decided not to share the invitation with their team, thus hampering access to the survey.

In addition, with an online self-reported survey, it should be kept in mind that self-selection bias might emerge, as people more engaged in the topic may be more motivated to answer the questionnaire, while people who think REI is not important were probably less motivated to fill it in. Caution is needed when interpreting the results of self-reported questionnaires, as people might not be honest in responding to questions related to their own questionable activities (Fanelli, 2009; Martinson et al., 2005). Moreover, perception can bias how well events are remembered (especially those that happened several years ago) or how well they were understood (assessment of colleagues’ activities). It is also possible that respondents gave socially desirable answers to the questions.

Conclusions

Creating a survey methodology to investigate sensitive and potentially stigmatising data within a very small research community posed unique challenges. We refrained from including several standard background questions as respondents could potentially be indirectly identified. Omitting more specific institutional and disciplinary data may have contributed to a higher response rate.

Results of the national cross-disciplinary survey of research ethics and integrity in Estonia highlighted that the majority of respondents consider REI issues important and that, as in other surveys, the most severe types of misconduct are fabrication, falsification, and plagiarism. The self-reporting of combined FFPs was 6.2%. The data also underscored areas of potential counter-norms (especially salami-slicing) and identified practices where deviations from REI principles are perceived as particularly concerning (e.g. hampering the work of another researcher). The complementary frameworks of counter-norms and normative dissonance are valuable for understanding the dynamics of normativity in the REI context and should be considered, as not all changes in research practices are necessarily problematic.

Supplemental Material

sj-docx-1-rea-10.1177_17470161241239791 – Supplemental material for National cross-disciplinary research ethics and integrity study: methodology and results from Estonia

Supplemental material, sj-docx-1-rea-10.1177_17470161241239791 for National cross-disciplinary research ethics and integrity study: methodology and results from Estonia by Kadri Simm, Mari-Liisa Parder, Anu Tammeleht and Kadri Lees in Research Ethics

Footnotes

Acknowledgements

We thank all the researchers working in Estonia who kindly took the time to participate in the survey.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.

Funding for the study was provided by the Estonian Research Council through the European Regional Development Fund programme RITA.

Ethics approval

The study was approved by the Research Ethics Committee of the University of Tartu (approval 374/T-21, dated 20 February 2023).

Supplemental material

Supplemental material for this article is available online.
