Abstract

Evidence suggests that the incidence of research misconduct is not in decline despite efforts to improve awareness, education and governance mechanisms. Two responses to this problem are favoured: first, the promotion of an agent-centred ethics approach to enhance researchers’ personal responsibility and accountability, and second, a change in research culture to relieve perceived pressures to engage in misconduct. This article discusses the challenges for both responses and explains how normative coherence through values alignment might assist. We argue that research integrity and research ethics convey mixed messages, which are likely to contribute to a form of normative confusion. For the successful adoption of an agent-centred approach, normative coherence is needed between the two. To facilitate normative coherence, we propose that research ethics and research integrity be underpinned by a shared set of moral values that can be enacted via codes and guidelines and imbue research environments. Furthermore, to facilitate culture change, the same normative coherence is necessary at all levels of an institution. Only via values alignment between institutional aims, management, institutional practices and researchers can an ethical culture become truly embedded in research institutions.

Introduction

In January 2023, Nature News reported that ‘Young physicists say ethics rules are being ignored’ (Naddaf, 2023). This claim was based on two surveys of young American physicists, commissioned by the American Physical Society in 2003 and 2020. Comparison between the findings from 2003 and 2020 revealed that the incidence of most forms of research misconduct (RM) was largely unaffected by significant improvements in ethics education and awareness building (Houle et al., 2023). The number of respondents who reported witnessing data falsification had increased from 3.9% to 7.3%.

These figures are alarming given that in 2003, 61% of respondents reported that they had no formal ethics training and by 2020, only 5% had received no formal training. While these findings are disturbing, they are not unusual.

A systematic review and meta-analysis of the prevalence of self-reported RM by Xie et al. (2021) included 42 articles, spanning 571 studies from different academic disciplines and 18 countries. Their pooled estimate for self-confessed prevalence of at least one type of RM (falsification, fabrication and plagiarism) was 2.9%. Their estimate for witnessing of others committing at least one type of RM was much higher at 15.5%. Furthermore, they reported that the incidence of RM varied significantly according to publication date with articles published after 2011 indicating a higher prevalence. It appears that current endeavours to eradicate RM are not working.

Over the past 15–20 years, as awareness and incidence of RM have increased, so have calls for the adoption of agent-centred ethics approaches such as virtue ethics (MacFarlane, 2010; Tolich and Tumilty, 2020). The importance of an agent-centred ethics approach for trustworthy research is increasingly recognised (Banks, 2018; Resnik, 2012) as this can serve to promote a sense of personal responsibility and accountability (Mogodi et al., 2019).

There also appears to be an emerging consensus that a change in research culture is needed (The Royal Society, 2017). To this end, the Committee on Publication Ethics (COPE) ‘aims to move the culture of publishing towards one where ethical practice becomes a normal part of the culture itself’ (COPE, 2023: n.p.).

In this article, we propose two hypotheses to help explain why RM is not declining after decades of increased awareness-raising, ethics education and sustained governance efforts, namely that current practices create (i) normative confusion and (ii) values misalignment. We also outline some of the challenges for the cultivation of an agent-centred ethics approach in research, as well as for the development of an ethical research culture. To address these challenges, we suggest that a coherent values system is needed to underpin research ethics (RE), research integrity (RI) and institutional management. We begin by explaining what we mean when we refer to agent-centred ethics in research.

An agent-centred ethics approach

The broad field of ethics is commonly divided into three areas 1 : meta-ethics, normative ethics and applied ethics. Meta-ethics concerns the status, foundations and scope of moral values; it questions the nature, and indeed the very existence of morality. ‘Applied ethics’ is a term that broadly refers to the use of philosophical methods to examine specific issues for the purpose of moral decision-making. In this article, we focus on normative ethics as the theoretical framework or approach that is used to determine whether something is right or good. Normative ethics itself is usually divided into various sub-branches such as deontological (rule-based) theories, consequentialist theories, feminist theories and agent-centred theories (Hursthouse, 2022).

The origins of western, agent-centred ethics theories lie in ancient Greece with virtue ethics as articulated by Aristotle (384–322 BCE) in Nicomachean Ethics (Aristotle, 2009). In his legendary treatise, Aristotle explains that rational human beings have the potential to live a good life and achieve happiness (eudaimonia) through the acquisition of virtues. There are two main types: ‘Some virtues are intellectual and others moral, [for instance] philosophic wisdom and understanding and practical wisdom being intellectual, liberality and temperance moral’ (I.13, 1103a5). During childhood (and beyond), individuals must develop good habits. As the capacity for reasoning develops, they must also acquire practical wisdom (phronêsis). In the main, the development of intellectual virtues requires teaching, whereas the development of moral virtues relies upon habit. Eventually, through much practice, habits become character traits of the virtuous individual; none of the virtues arise in us naturally (Aristotle, 2009). All virtues are expressions of the mean between two extremes; for instance, the virtue of courage represents the mean between cowardice and rashness: ‘For the man who flies from and fears everything and does not stand his ground against anything becomes a coward, and the man who fears nothing at all but goes to meet every danger becomes rash’ (II.3, 1104a19).

The entirety of Nicomachean Ethics Book V is devoted to the nature of justice, which, according to Aristotle, is the greatest and ‘the most complete virtue’ (V, 1129b32). Indeed, aside from his general theory of virtue, Aristotle identifies and describes a range of specific moral virtues (like courage and temperance). The naming and description of specific virtues, together with the notion that properly guided individuals who are not themselves virtuous can perform virtuous acts, helps to delineate an agent-centred virtue ethics from an agent-based virtue ethics (Slote, 2013).

According to Slote (2013), agent-based approaches regard the moral or ethical status of actions as entirely derivative from the (virtuous) motives, dispositions or inner life of the agent. In other words, an agent-based approach holds that the virtuous person does not deliberate upon and select virtuous actions. Rather, their actions are deemed virtuous because they arise from their virtuous inner state (Russell, 2008). The rightness (or wrongness) of an act depends upon the agent’s motives and disposition; there is no independent criterion for rightness to which the virtuous person appeals, and no external characteristic of rightness that the agent sees (Brady, 2004). In this article, we focus expressly upon an agent-centred approach, rather than the more radical agent-based account of virtue ethics. Moreover, we are open to other interpretations (of which there are many) of Aristotle’s virtue theory as an agent-centred approach. For this inquiry, the important points of note include the following:

In common with many other ethicists, we hold that an agent-centred approach is vital for RE and RI because rules and principles-based systems, without the inclusion of agent-centred virtues/values, can lead to heteronomy of action and alienation (Foot, 2002).

We advocate for an agent-centred 2 rather than an agent-based approach, accepting the need for ethical deliberation and that moral virtues/values can be enacted by persons who are not wholly virtuous (in the Aristotelian sense).

We maintain that an agent-centred approach to ethics can be entirely compatible with a rules-based approach.

Regarding the last point, we acknowledge the works of Banks (2018) and Resnik (2012), who argue for the complementarity of a principles/rules-based approach and a virtue-based approach: ‘Virtue-based and principle-based approaches to ethics are complementary because they focus on different aspects of ethical conduct’ (Resnik, 2012: 338). However, while Resnik aligns a principle-based approach with a rules-based approach, Banks states that virtues and principles are different types of values, with ‘abstract principles’ being distinct from ‘specific rules’. Differences in the use of terminology make it difficult (even for the ethicist) to make sense of teachings about research ethics, and we believe that this can contribute to a form of normative confusion, as outlined in the next section. We will return to what we mean by values and their place in an agent-centred approach later.

Normative confusion

In everyday life, people draw upon different normative approaches to varying degrees when making decisions about ethical issues. Likewise, people draw upon a range of approaches in research. Regulatory compliance (rules), the ability to weigh harms and benefits (consequences) and trustworthy behaviour (agent-centred ethics) are all expected from researchers. Drawing upon different normative approaches for ethical decision-making does not, per se, create normative confusion. Rather, our hypothesis is that normative confusion stems from multifarious messages about what it takes to be an ethical researcher and that this normative confusion might contribute to research misconduct.

For instance, there is a general lack of consistency in the terminology that is used in ethics literature as well as across guidelines and codes of conduct, as Peels et al. (2019) highlight: ‘. . . something that is described as a value in one code might show up as a principle or a responsibility in another.’ ‘. . . single codes typically use a wide variety of terms: . . . some are organized around core “values,” others contain long lists of “rules” or “principles,” sometimes accompanied by more concrete “applications,” yet others talk about “duties” or “responsibilities,” . . . as well as various combinations of these items’ (p. 1).

Additionally, there is little consensus about the most important virtues (principles/values/responsibilities) and different organisations propose various lists (Tomić et al., 2022). For instance, the Singapore Statement on Research Integrity (WCRI, 2010; henceforth Singapore Statement) specifies the four principles of honesty, accountability, professional courtesy and fairness, and good stewardship, plus 14 responsibilities 3 ; the revised European Code of Conduct for Research Integrity (ALLEA, 2017) specifies four fundamental principles: reliability, honesty, respect and accountability; while Tomić et al. (2022) identified the virtues of honesty, integrity, accountability, criticism and fairness as the most essential for good scientific research practice.

A further source of confusion stems from the distinction that is drawn between RE and RI. While both are concerned with morality in research, RE and RI are generally addressed as if they are discrete entities with separate journals, 4 separate conferences, 5 separate professional networks, 6 and often separate training units. 7 In the broadest sense, the term ‘research ethics’ is applied to all issues of a moral nature that are associated with the planning, conduct, dissemination and impacts of research, as well as the ethics governance and regulation of research. The term ‘research integrity’, on the other hand, has a more demarcated meaning, relating to ‘the conduct of research in ways that promote trust and confidence in all aspects of the research process’ (UKRIO, 2023). Specifically, violations of RI involve deliberately or knowingly engaging in some form of RM (like fabrication, falsification or plagiarism), as opposed to errors or honest mistakes (Poff, 2014).

There may be some practical advantages to distinguishing between RE and RI (for example, bespoke governance mechanisms), but the impression that they exist as discrete entities compounds confusion about what it takes to be an ethical researcher. For instance, different training approaches can promote the idea that there are distinctions at the normative ethics level, with RE being more about compliance with ethics approval requirements, while RI is more about being a good person. Indeed, the promotion of virtues-based training for RI (see, for instance, Evans et al., 2021; Tomić et al., 2022), while laudable, might inadvertently convey the message that this is more relevant for RI than RE. Certainly, agent-centred ethics is less well promoted for RE than it is for RI, and complex, technocratic governance mechanisms for RE do little to foster moral agency. Take, for example, the International Compilation of Human Research Standards. This is a listing of over 1000 standards for research participant protections in 131 countries and from many international organisations. The standards include laws, regulations and/or guidelines (HHS.gov, 2023) and many are grounded in the four principles approach (Beauchamp and Childress, 2001), which draws upon rules-based and consequentialist ethics, rather than an agent-centred approach. While Beauchamp and Childress (2001) accept that ‘often, what counts most in the moral life is not consistent adherence to principles and rules, but reliable character, good moral sense and emotional responsiveness’ (p. 26), the place of agent-centred ethics within the framework of the four principles (and thus RE) is not easy for researchers to comprehend. Of course, adherence to principles and rules is demanded for RI as well as RE and there are a great many guidance documents, at international, national and institutional levels. Nevertheless, the shift in emphasis from research integrity (the integrity of the research practice) to researcher integrity (the integrity of the researcher; Banks, 2018) has not been mirrored by an equivalent shift in emphasis from research ethics to researcher ethics.

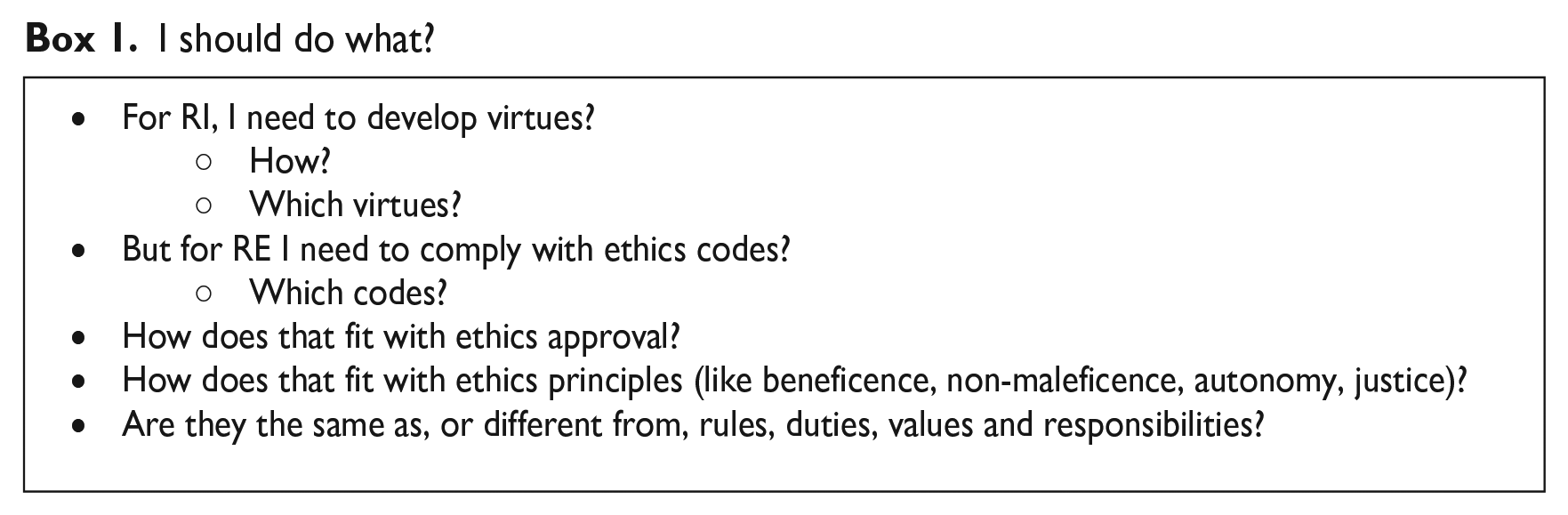

Even though there may appear to be clear differences between RE and RI, there is significant overlap between the two, and neither can exist in isolation. Research ethics and RI are inextricably linked, not least via the values, intentions and actions of the agent who is performing the research (the researcher). Still, there are mixed messages and obvious confusion about what underpins RE and RI at the normative ethics level. Together with the lack of consistency in terminology, and lack of consensus about the most important constituents, these factors can lead to a type of normative confusion (see Box 1).

Box 1. I should do what?

If RE and RI are to be embraced fully by researchers, they need to understand what it means to be an ethical researcher and this is difficult when there is normative confusion. Hence, an important question to consider is how we might promote normative coherence across RE and RI, and whether this could lead to improvements in understanding and practice. By normative coherence we mean there is greater clarity around what underpins RE and RI at the normative ethics level so that researchers understand:

what is meant by the terminology that is used in literature, guidance documents and codes,

what is guiding their ethical decision-making in research,

the difference between agent-centred and action-centred approaches, and the interplay between them, and

how RE and RI are connected as well as why and how there are some differences in practice.

We do not mean to propose that normative coherence will make RE and RI easy for researchers or help to resolve ethical dilemmas from a singular standpoint. Even with the above measures, there will be times when different action-centred rules clash, or agent-centred virtues/values clash. Normative coherence does not sidestep incommensurability in ethical decision-making. However, normative coherence might help to improve understanding of what is at odds and why, which in turn will aid ethical deliberation.

Culture, performance and values

In recent years, there has been growing interest in research culture and environment, and the impact this has on both researchers and the integrity of their work. We know from studies across many different fields that there is an association between workplace culture and the outcomes/outputs of an organisation. For instance, in healthcare, Braithwaite et al. (2017) demonstrated ‘a consistently positive association held between culture and outcomes across multiple studies, settings and countries’ (p. 1). A positive workplace culture has a positive impact upon performance and behaviour.

In their report, The Culture of Scientific Research in the UK, the Nuffield Council on Bioethics (2014) concluded that researchers do not have a clear set of rules for every situation and can sometimes be tempted by rewards such as career progression, which might override being scientifically rigorous. Further, the Wellcome Trust (2020) found that many researchers believe that they are subjected to ‘overwhelming expectations’ in institutions that attach ‘more value to metrics than research quality’ (p. 12).

According to Immanuel Kant, human beings can distinguish between right and wrong quite easily, but they tend to rationalise exceptions for themselves (Sticker, 2017). Researchers are no exception; common sense determines that researchers will already know that it is wrong to manipulate or falsify data, even without special training. Nevertheless, RM happens, so how are researchers rationalising this? Kant invented a test for self-rationalisations termed the categorical imperative. For Kant, this is a matter of pure reason (Neumann, 2000). 8

If everybody always did as you do, would the ‘kingdom’ that you are part of still be the same? In other words, if everybody always falsified or fabricated data in a significant manner, would the kingdom of science still exist? Of course, the answer is ‘no’. The field of science would lose all credibility and therefore its purpose: ‘In rationalizing, we misuse our capacity of reason in order to construct the illusion according to which we are not bound to the absolute demand of the moral law, but rather subject to exceptions and excuses’ (Noller, 2022: 175).

While the motivations for rationalising exceptions may vary, the propensity to engage in RM is often associated with external pressures (Grimes et al., 2018). Qualitative data from the aforementioned survey (Houle et al., 2023) revealed pressures that influenced ethical decision-making for the 2020 cohort of early career physicists. These included pressures to publish, to acquire funding, to achieve a high citation count and to obtain significant results. There were also indications that these pressures may be increasing. In the 2003 survey, 7.7% of respondents reported that they had felt pressure to violate professional ethical standards; in the 2020 survey, that percentage had risen to 12.5%. It is not that researchers do not have a sense of personal and professional ethics, rather that this is ‘constantly undermined by disciplinary, institutional and government drivers towards fulfilling goals and targets, even those ostensibly intended to promote ethics’ (Metcalfe et al., 2020: 7). It is likely that these pressures encouraged rationalisations in the Kantian sense. Given that there is general agreement that organisational culture can have a significant impact upon researcher behaviour, further scrutiny of what we mean by ‘culture’ and where it comes from is warranted.

There is no consensus in the literature about how organisational culture should be defined, but most descriptions emphasise the centrality of values, beliefs and norms. For instance, organisational culture is described by Scott-Findlay and Estabrooks (2006: 499) as giving ‘a sense of what is valued and how things should be done within the organization’, and by Marker (2009) as ‘our shared values’. Sleutel (2000) refers to organisational culture as the ‘normative glue, preserving and strengthening the group, adhesing its component parts, and maintaining its equilibrium’ (p. 54). At the normative level, organisational cultures are underpinned by values; ‘individuals draw from their values to guide their decisions and actions, and organisational value systems provide norms that specify how organisational members should behave’ (Edwards and Cable, 2009: 655). Organisational values influence the culture of an organisation (Martins and Coetzee, 2011), which in turn has an impact upon corporate performance (Ofori and Sokro, 2010), job stress and job satisfaction (Mansor and Tayib, 2010), as well as business performance and competitive advantage (Crabb, 2011).

There is also agreement that leaders in scientific institutions play a pivotal role in shaping the institution’s culture (The Royal Society, 2017), but, crucially, a distinction is often drawn between organisational values that are espoused and those that are enacted or lived (Kabanoff and Daly, 2002). Shanafelt et al. (2019) describe espoused values as those that are ‘manifested in mission statements, the communications shared across the organization or profession, publicly stated values, and even advertising and promotional messaging’ (p. 1157). They represent what senior managers believe their organisations to be like, or what they would like their organisations to be like (Kabanoff and Daly, 2002). But for values to act like a ‘common glue’, the core values have to be ‘lived’ throughout the organisation and internalised by individuals (Minbaeva et al., 2018). This supposition underpins our second hypothesis, namely that misalignment between individual and organisational values acts as a barrier to the development of an ethical research culture and by implication might contribute to research misconduct.

Having explained our two hypotheses, we now turn to our proposal for how the associated challenges might be addressed. This involves the adoption of moral values as an agent-centred approach at the normative level and the alignment of institutional values with these personal moral values. In the next section we explain what we mean by moral values, why we emphasise values rather than virtues and suggest specific values for the promotion of normative coherence and values alignment.

The role of values

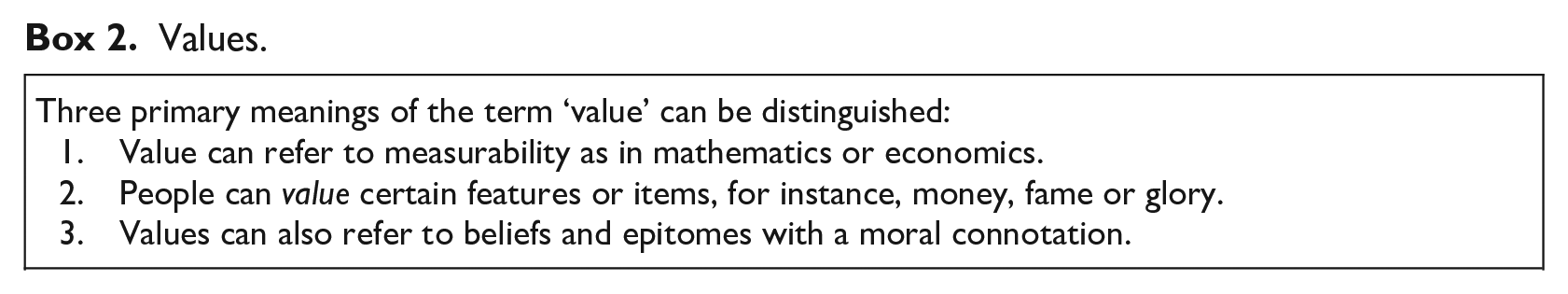

Values are integral to human experience (Ogletree, 2004) and references to values abound in all areas of human life and work. With vast numbers of internet sites proffering advice on matters such as values we should live by, deciding what is important in life, how to know who you really are and achieving success through values, it is obvious that values have a key role to play in the way people view themselves, and the way they choose to live (Schroeder et al., 2019). The term ‘value’ can be used in many different ways (see Box 2). The values that we are concerned with are moral values, or values that are, by definition, morally positive. 9 As Ogletree (2004) highlights, values guide our decision-making; they dispose us towards choosing one course of action over another. Thus, our moral values guide our ethical decision-making. If we hold the value of compassion, for example, we will strive to be compassionate in our decision-making. Another key feature of personal values is that they are motivational, and those with explicit moral connotations are regarded as the most important (Schwartz, 2012). People hold their moral values in high esteem and are strongly influenced by them (Schroeder et al., 2019).

Box 2. Values.

Current discourse and efforts to promote agent-centred ethics for RI are primarily focussed upon the cultivation of certain virtues that are deemed important (Evans et al., 2023) rather than values. Virtues are so embedded that they can be regarded as part of a person’s character and their development requires effort, time, positive role models, appropriate feedback and experience. While the development of the virtuous researcher is an admirable goal, it might feel exclusionary to the young researcher who has yet to gain any practical wisdom. Fortunately, there is an easier and speedier way to enhance personal responsibility and accountability that resonates with even the novice researcher.

A values approach to agent-centred ethics involves the explicit adoption of specific moral values, which serve to guide decision-making and dispose the agent towards choosing one course of action over another. Moreover, if researchers strive to act with fairness (an example value) regularly, eventually, fairness might become an enduring character trait as required by Aristotelian virtue ethics. In other words, by cultivating the value of fairness, eventually it might become a virtue of that person; virtues being embedded moral values. In that sense, a values and a virtues approach might be regarded as differing points along the same trajectory. 10

What we describe as a values approach appears to be similar to what Banks (2018) describes as researcher integrity as an ordinary quality of character rather than as a complex virtue. Accepting that researcher integrity can be quite demanding of researchers, Banks suggests the following ‘intermediary’ between simple compliance with standards and excellence of character: ‘A researcher exhibits ordinary good character by showing ordinary commitment to the mission, values, principles and standards of codes of ethics etc. and a capacity to interpret and act on those principles etc.’ (p. 9).

This version of researcher integrity, involving ordinary commitment to values, seems much more achievable than a version that requires researchers to become extraordinary. Of course, it does not rule out the possibility that some researchers may indeed become extraordinary and achieve excellence of character. Likewise, the adoption of a values approach does not rule out the potential for the development of a virtuous nature. The primary difference is that a values approach can tap into (hopefully) existing moral values that resonate with the researcher and feel more attainable.

A values-based framework for RE and RI

Given our assertion that RE and RI are undermined by normative confusion, we propose that a unifying and overarching moral framework is required. We also propose that this moral framework be structured around values, rather than virtues, to promote inclusivity, accessibility and the development of healthy work cultures. But how do we decide what those shared values should be?

One moral framework, based upon the values of fairness, respect, care and honesty, is already used globally in RE. These four values (henceforth TRUST values) are foundational for the TRUST (2018) Code, which seeks to guide equitable research partnerships. The TRUST ethics code, launched in 2018, has been espoused enthusiastically around the world. For instance, it has been adopted by the European Commission, two of the top African universities (WITS and UCT), the European and Developing Countries Clinical Trials Partnership (EDCTP) and Nature Portfolio, to name a few (Global Code of Conduct, 2023). Nature have adopted the code as the foundation of their inclusion and equity policy because: ‘It’s a framework that’s based on four values of fairness, respect, care, and honesty . . . [T]hese are actually the elements that drew us to the code – the fact that they took such a broad, consultative approach, that they integrated the perspective of vulnerable populations, and that it is designed to be relevant across multiple disciplines’ (Swaminathan, 2022: n.p.).

There are a number of potential reasons why the TRUST values and the TRUST code have been adopted broadly: these four values are easy to understand, they do not require technical knowledge, they were identified via a bottom-up process, 11 they are not biased towards high-income country thinking, and the code was developed by a global team that included representatives from vulnerable populations (Schroeder et al., 2019). Importantly, because they resonate globally, they leave no room for confusion.

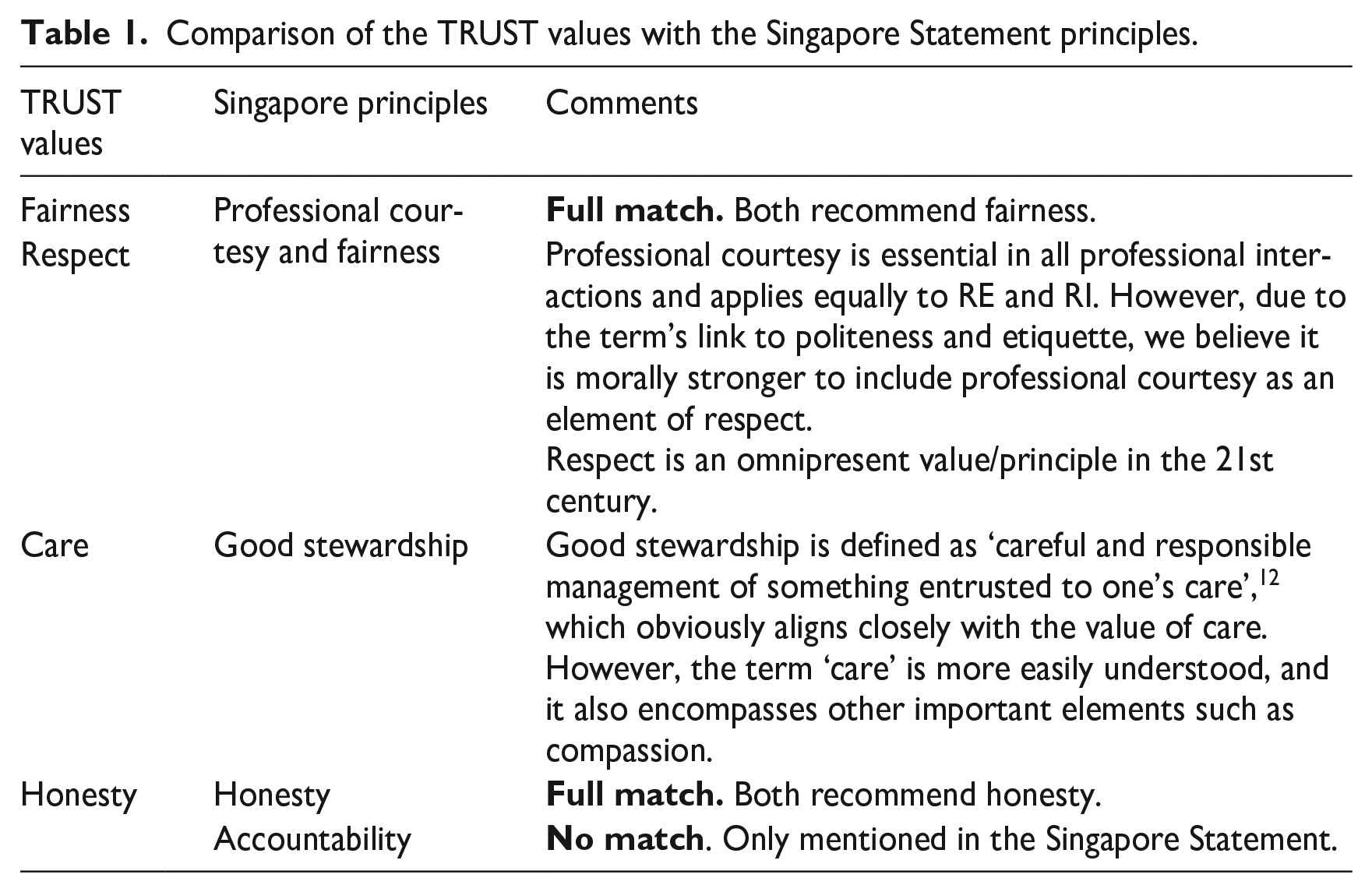

While the broad acceptance and uptake of the TRUST values can be taken as an indication that they provide a useful framework for RE, to enable normative coherence the framework of values must also be fitting for RI. To help explore this as a possibility, we compared the TRUST code with a sister code/statement that seeks to guide researchers towards RI. Such a code exists in the Singapore Statement on Research Integrity (2010), which has been successfully guiding researchers around the world since its inception in 2010. Table 1 summarises how far associations can be made between the TRUST values and the Singapore principles.

Table 1. Comparison of the TRUST values with the Singapore Statement principles.

Aside from the fact that the TRUST Code was developed for RE and the Singapore Statement for RI, there are three obvious distinctions:

1. The TRUST Code refers to values whilst the Singapore Statement refers to principles. For the purpose of this analysis, we remain impartial on this distinction (see Box 3).

2. There is a full match for the TRUST values of fairness and honesty, but there is only a partial match for the values of respect and care. Additionally, the Singapore Statement includes the principle of accountability which has no direct counterpart in the TRUST code. Regarding respect and care, as noted in Table 1, we believe that the Singapore Statement principles of professional courtesy and good stewardship are encompassed by their broader TRUST code counterparts. Regarding accountability, this mismatch requires further consideration.

Box 3. Values versus principles.

The Singapore Statement is not the only code to mention the importance of accountability for RI. As noted previously, the revised ALLEA (2017) code also specifies accountability as a fundamental principle. Additionally, accountability has been identified as an essential virtue for good scientific research (Tomić et al., 2022). One solution to this mismatch would be to add accountability to the four TRUST values, thereby creating a framework of five essential moral values for RE and RI. Certainly, accountability is also important for RE. However, we reason that this is not necessary because responsibility is a (hoped for) consequence of the adoption of agent-centred ethics, and the answerability element is encompassed by the values of honesty and fairness, as explained below.

Accountability can be defined as ‘an obligation or willingness to accept responsibility or to account for one’s actions’. 13 Thus, it is generally regarded as having two major attributes: responsibility and answerability (Fry, 2003). Leaving aside the long-debated issue of how responsibility can/should be attributed, 14 one might interpret ‘responsibility’, in the context of RE and RI, as the ability of a researcher to connect with their moral agency, to recognise the interrelationship between their actions and the subsequent outcomes, and to act accordingly. These qualities are encouraged by the adoption of an agent-centred approach to ethical conduct. In fact, across a range of domains, agent-centred approaches are increasingly promoted as a means to encourage responsible conduct (Šljivo et al., 2017; Steen et al., 2021). Personal responsibility can be considered a desired outcome of the adoption of an agent-centred approach in general.

The second attribute of accountability, namely answerability, entails a relationship between at least two entities, whereby one is held to account for their activities by the other (Romzek and Dubnick, 2018). According to MacIntyre (1999), ‘for each [socially constructed] role there is a range of particular others, to whom, if they [persons] fail in their responsibilities, they owe an account that either excuses or admits to the offence and accepts the consequences’ (p. 316). Answerability requires honesty of the person being held to account; it may also involve some type of justice/fairness. 15 Thus, answerability is addressed when honesty and fairness are enacted.

3. Each guidance article in the TRUST code is aligned with a certain value, while the relationship between guiding principles and individual responsibilities has not been made explicit in the Singapore Statement. Agent-centred approaches may be necessary, but on their own they are insufficient for ensuring ethical conduct for all but the most extraordinary researchers. Even well-intentioned researchers can unwittingly cause harm if they don’t have the skills, knowledge or understanding for the particular scenario in which they are operating. Consequently, we advocate for codes and rules for the operationalisation of values. However, normative confusion can be exacerbated when codes, rules and guidelines are developed independently from values, or where the links between the two are not obvious. A recent trend to make underpinning values/principles more visible at the front of a code (as in the Singapore Statement and the ALLEA code) helps to make the links more obvious. Nevertheless, we believe that normative confusion for novice researchers might be reduced even further if individual ethics requirements were linked to specific values, as in the TRUST Code. It would then be easier for the researcher to understand the relationship between their values/virtues and action-guiding codes of conduct.

Towards normative coherence and values alignment

At the start of this article, we proposed two hypotheses to help explain why RM is not declining after decades of increased awareness-raising, ethics education and sustained governance efforts, namely that current practices create (i) normative confusion and (ii) values misalignment. In common with all hypotheses, these proposals need to be subjected to further interrogation; hypotheses are necessarily suppositional because they are based upon limited evidence. In this article we have attempted to describe our reasoning and how we have integrated available evidence and scholarly discourse to arrive at these hypotheses.

First, we proposed that normative confusion acts as a barrier to the reduction of RM. This is exacerbated by multivarious messages about what it takes to be an ethical researcher as well as the management of RE and RI as if they are entirely separate entities. While we can’t say for sure that there is a correlation between normative confusion and RM, there is evidence that deficiencies in understanding contribute to RM. For instance, in the qualitative interview study with scientists by Cairns et al. (2021), half of the participants referenced a lack of understanding of ethics as being a cause of unethical behaviour.

To promote normative coherence, the factors that lead to normative confusion would need to be remedied. This includes greater care around use of terminology. For instance, the word ‘principles’ is sometimes used to describe an action-centred, rules-based approach and sometimes as more akin to virtues or values (see Box 3). It would be helpful for researchers to understand the interplay between agent-centred factors and action-centred requirements and this type of difference in usage can create confusion around how decision-making is guided. Authors of codes and guidelines could also take greater care when deciding how they refer to virtues, values, principles, rules, responsibilities etc. rather than simply focussing upon which virtues, principles etc. are to be included. Additionally, normative coherence could be promoted across RE and RI via shared agent-centred education/training. While the specific, rules-based requirements for RE and RI might differ, the agent (the researcher) remains the same. Research integrity and research ethics may have different rule books, but researcher integrity and researcher ethics should be regarded as one and the same.

To ensure compliance with key requirements and guidance, research institutions have developed research governance systems, which normally include policies, standards of conduct and oversight committees. However, the question remains, how can researchers be inspired to act ethically rather than being policed to do so? In common with many other contemporary scholars, we have argued for an agent-centred approach, albeit actioned via values rather than virtues. Additionally, we suggest that a values approach, based upon one set of values, might help to promote normative coherence and we recommend that, at least in the first instance, the TRUST values of fairness, respect, care and honesty could be adopted as a unifying moral framework for RE and RI. The choice of values may change or be added to over time, but these values are tried and tested in RE; they are also very similar to the principles that underpin the Singapore Statement for RI.

Our proposal that values should be embraced to improve ethics culture within institutions is not new. More than 20 years ago, Treviño et al. (1999) undertook the first large-scale empirical investigation into what helps and what hinders corporate ethics compliance management with a survey of over 10,000 employees from six American companies, spanning a variety of industries. The findings from the study indicated that across all six companies, it was clearly most important to have an approach that the employees perceived as values-based. Where employees perceived a values-based approach to ethics compliance, unethical behaviour was lower, awareness of ethical issues was higher, they were more willing to report ethics violations and more committed to the organisation (Treviño et al., 1999). As Tyler et al. (2008) point out, the effectiveness of a values-based approach, in which organisations seek to motivate employees to develop and act on ethical values for managing compliance, is well supported by empirical research.

We have also noted the association between RM and perceived pressures in the workplace. In the face of pressures, some researchers will rationalise exceptions for their unethical behaviours. The nature of the workplace culture is widely regarded as the most important component for unethical behaviour in an organisation; business scholars have long since turned their attention away from the study of the characteristics of individual transgressors (bad apple approach) toward the organisational context in which the unethical behaviour occurs (bad barrel approach; Kaptein, 2011). Consequently, we propose that for the development of a culture in which RE and RI can thrive, overtly moral values that resonate with individual employees must infuse the institution. To build supportive research environments, researchers need to feel that their personal moral values are aligned with those that imbue the environments in which they work.

When institutional and individual values are aligned, there are numerous organisational benefits including positive employee attitudes and commitment (Sullivan et al., 2001), as well as reduced staff turnover (Caldwell et al., 1991). Both clarity of organisational values, and personal values congruence, have been found to benefit factors such as employee commitment, satisfaction, motivation, anxiety, work stress and ethical conduct at work (Posner, 2010). There are also benefits for the individuals, as well as the organisation, including improved job satisfaction and fulfilment within the workplace (Edwards and Cable, 2009; Verplanken, 2004). Where there is misalignment between individual values and those of an organisation, people operate via objectives and obligations rather than by preference; where there are shared values that are embodied by all (or most) employees there is less need for explicit management and control (Branson, 2008). In other words, when people work in an environment that is reflective and supportive of their own values, they assume greater personal responsibility and wellbeing is increased.

Therefore, alongside the need for normative coherence through the adoption of values, we underscore the importance of supportive research environments for ethical researcher conduct. For maximum impact, people need to feel values alignment. Values alignment has a simple message: If institutional values do not align with the values of employees, detrimental effects may occur; the organisation can lose the trust and confidence of its workers as well as external credibility (Guillemin and Nicholas, 2022). Values set the tone for institutional culture. When moral values infuse a research institution, the foundations are laid for a research culture in which researcher ethics and integrity can thrive. Interestingly, in their large-scale survey of American employees, Treviño et al. (1999) found that what helped most was consistency between policies and actions and dimensions of the organisation’s ethical culture. Researchers who seek to act with integrity and to respect ethical norms can feel at odds and unsupported when their institutional values do not align with their personal moral values. Similarly, we suspect that researchers will be less tempted to engage in RM if this is clearly at odds with the values that imbue their work environment. Values alignment is needed in the research world.

In institutions where multiple different values are used for different purposes, this can appear to be contradictory, causing confusion and a lack of engagement. If we want researchers to aspire to particular moral values, the same values should permeate the environments in which they work. Of course, we can’t expect every institution to adopt exactly the same values, but if research institutions cannot embrace (at least) the values of fairness, respect, care and honesty, how can we expect to achieve trustworthy research? For effective operationalisation of values, there needs to be ‘buy in’ and adoption of the values at different levels of the institution. Organisational values need to be evident in institutional policies, procedures and processes. Employees and other stakeholders need to see the values in action in order to believe that they are authentic and lived rather than simply espoused (Shanafelt et al., 2019).

Finally, we appreciate that researchers may be exposed to many different sets of values, such as professional, cultural, familial and societal values, but we have focussed specifically upon personal values because they are key to understanding oneself as a moral agent. As MacIntyre (1999) stresses, ‘. . . the powers of moral agency can only be exercised by those who understand themselves as moral agents, and, that is to say, by those who understand their moral identity as to some degree distinct from and independent of their social roles’ (p. 320). Nevertheless, the importance of congruence between the values of individual employees and their organisations should not be underestimated (Edwards and Cable, 2009). Governance systems within institutions can have complex requirements and this can result in researchers not understanding or fully engaging with RE and RI. Simplifying systems to be based around a core set of values which apply to both, and that are closely aligned to personal values, could help to promote both normative coherence and values alignment within a culture that is underpinned by moral values.

Footnotes

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Kate Chatfield is co-Editor in Chief of Research Ethics. She was not involved in any handling of the manuscript submission process, including peer review. Kate Chatfield was also a member of the team who developed the TRUST Code.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.

Funded by the European Union for the Prepared project. UK participants in Horizon Europe Project Prepared are supported by UK Research and Innovation grant number 10048353 (University of Central Lancashire). Views and opinions expressed are however those of the author(s) only and do not necessarily reflect those of the European Union or the Research Executive Agency or UKRI. Neither the European Union nor the granting authority nor UKRI can be held responsible for them.

Ethical approval

None required.