Abstract

In this paper, we present a study that models and measures the competencies of higher education students in business and economics within and across countries. To measure student competencies validly and reliably, we used the Test of Understanding in College Economics, which assesses microeconomic and macroeconomic competencies. The test was translated into several languages and adapted for different university contexts. In the present study, the test contents were also compared with the educational standards and university curricula in Russia and Germany. Our cross-national analysis suggests one strong general factor of economic competence that encompasses micro- and macroeconomic dimensions. This points to a stronger interconnection between learning and understanding economic content than previous research suggests. It also has far-reaching consequences for curricula and instruction in higher education economics and raises needs for further research, both of which are discussed in this paper.

Keywords

Introduction

The international importance of students’ economic competencies in higher education and beyond is undisputed. In the context of ongoing trends such as the increasing internationalization of economic degree programs across the globe (OECD, 2020) and the globalization of financial markets (Dombrovska, 2020), there is an urgent need for competence-oriented models and instruments that allow for the valid and comparative assessment of economic knowledge and skills among students from different countries.

In recent years, efforts in this regard have increased in the field of international assessments in higher education (for an overview, see Zlatkin-Troitschanskaia et al., 2018). The largest international study to date, the Assessment of Higher Education Learning Outcomes (AHELO), also tested the economic competencies of university students from nine countries (OECD, 2013). However, the test instrument used in AHELO was found to have numerous shortcomings in the validity and reliability of its measurements across countries (OECD, 2012). This was one of the reasons why the study was not continued.

Germany and Russia have cooperated intensively for many decades, both in economics and in (higher) education. Furthermore, there are numerous undergraduate and graduate exchange programs between universities in the two countries (International Study and Training Partnerships, DAAD, 2021). However, no representative study of students’ competencies in economics in the two countries has been conducted so far. While Russia was part of the AHELO study, Germany was not (OECD, 2012), and Russia has not yet participated in other assessments of economic competence in higher education (Zlatkin-Troitschanskaia et al., 2018). To the best of our knowledge, the study presented here is therefore the first comparative assessment of economic competence among university students in the two countries.

Our study was conducted as part of the international collaborative research project WiWiKom, which aims to model and measure the economic competencies of higher education students within and across countries (for an overview, see Zlatkin-Troitschanskaia et al., 2014). To measure university students’ competencies validly and reliably, we used the fourth edition of the Test of Understanding in College Economics (TUCE), which assesses microeconomic and macroeconomic knowledge (Walstad et al., 2007). The test was originally developed by the National Council on Economic Education (NCEE) to measure students’ knowledge and understanding of fundamental economic principles and concepts. It has since become established as a reliable and valid assessment tool for introductory economics courses (Walstad and Rebeck, 2008). The test has been translated and adapted for several languages and university contexts (e.g., Germany and Japan), and the functional and measurement equivalence of the adapted versions has been demonstrated in several studies. This makes the test suitable for international comparisons of student performance (Brückner et al., 2015a,b; Förster et al., 2015; Zlatkin-Troitschanskaia et al., 2016).

For comparative analyses of economic competencies in higher education, the test must be curriculum-based and instructionally valid in all participating countries (Berman et al., 2020). This in turn requires that the curricular and instructional approaches (1) cover essential parts of the test content and (2) are comparable across countries. Therefore, comparative analyses of the curricula and of university economics teaching in both countries are necessary, especially with regard to the test-relevant content (here: micro- and macroeconomics). At the same time, the comparability of the samples and of the test administration procedures needs to be ensured (for details, see the Test Adaptation Guidelines (TAGs) of the International Test Commission (ITC), 2018).

This paper therefore focuses on fundamental comparability analyses, following the recommendations of the recent report “Comparability of Large-Scale Educational Assessments: Issues and Recommendations” by the National Academy of Education (2020). The ultimate goal of cross-country comparisons is that the test measures the same “construct,” that is, students’ knowledge and/or abilities. It also requires that a given score, that is, test performance, corresponds to the same level of knowledge and/or abilities in each country. Hence, the (functional and measurement) equivalence of construct and test is of particular importance. Construct equivalence means that the construct (here, economic competence) is defined in the same conceptual and theoretical way and that the understanding of its content and structure is comparable between the countries. In this context, the teaching-learning environment, with its curricular and further-reaching (e.g., sociocultural) aspects, must also be explicitly considered in the comparability analysis (Berman et al., 2020). Analyses of this kind are fundamental prerequisites for more in-depth cross-national comparisons, such as the investigation of students’ economic competence levels in Russia and Germany.

Prior international comparative studies have shown that the challenges of conducting equivalent assessments are tremendous when cultures, languages, and educational systems are as distinct as the German and Russian ones. These challenges are even greater in higher education, which is characterized by greater structural and curricular diversity than secondary education. In this context, fundamental questions arise. On which facets of economic competence can the construct be validly measured across (or within) countries? How, and by what criteria, can the comparability of construct and test be demonstrated? What conceptual and empirical evidence is needed to support which practical uses and interpretive claims? This paper aims to address these fundamental questions.

Rationale for economic competence assessments within and across countries

For comparative analyses of the economic competence of university students between two countries, it is necessary to analyze the respective teaching-learning environments. For this purpose, the first step is an in-depth examination of the national educational standards and practices around economic competence in the two countries.

In the comparability analysis presented here, we first elaborate on the reasons for conducting an international assessment of economic competence among higher education students in Germany and Russia. In preparing this study, it became evident that all participating universities shared a similar rationale for a comparative assessment. This is due in particular to the lack of defined standards for economic education and the lack of evidence on students’ level of fundamental economic knowledge and skills in both countries (for details, see below).

According to the fundamental “Curriculum-Instruction-Assessment Triad” model by Pellegrino et al. (2001), effective learning opportunities and other support measures for students in higher education economics depend on a defined relationship between two elements. The first is the educational standards described in economics degree programs (curricula), in particular at the level of fundamental economics courses. The second is students’ actual economic competence. The educational standards should include both the intended student learning outcomes (competencies) to be achieved in economics degree programs and the actual learning outcomes of these programs (i.e., (final) study grades).

With regard to standards for teaching economics in Russian higher education, the Russian Federal State Educational Standards in Economics (FSES) describe the levels of professional generic and domain-specific competencies quite broadly. This gives higher education institutions a high degree of flexibility in developing their own bachelor’s programs. In particular, three areas of professional competencies need to be interpreted and clarified by formulating specific intended learning outcomes:
- area 1 (apply knowledge of economic theory in solving applied problems);
- area 3 (analyze and meaningfully explain the nature of economic processes at the micro- and macrolevel);
- area 4 (propose economically and financially justified organizational and management solutions in professional activities).

The participants in this study come from three of the four campuses of the Higher School of Economics (HSE). For these campuses, our analysis shows that HSE’s Educational Standards for Economics are generally higher than the Russian FSES and specify the main content areas of the discipline. In particular, the key educational standard for Economics (the student demonstrates solid knowledge and understanding of microeconomics, macroeconomics, and econometrics) involves mastering micro- and macroeconomics at the intermediate level as separate disciplines. However, the wording of the educational standards neither defines the terms “economics” or “economic theory” nor describes “key sections” or “key concepts.” The level of economic competence expected of undergraduate students is implied rather than clearly defined. Without a common understanding of the topics covered and the expected degree of mastery of the principles of economics, it is impossible to meaningfully discuss whether students’ professional higher education “complies” with educational standards.

The analysis of standards for teaching economics in Germany reveals a remarkably similar situation. Most federal states have no formally binding economics curriculum at the secondary school level, and there are also no binding nationwide core curricula or standards for universities. A comparative study by the German Council of Science and Humanities (2012) indicates the consequence: student learning outcomes in higher education economics, as reflected in students’ bachelor’s grades, are hardly comparable even within Germany.

This initial analysis of the educational standards indicates a shared rationale for a cross-country assessment of economic competence among higher education students in the two countries (for a more in-depth analysis, see section “Analysis of approaches to teaching economics in Germany and Russia”). A large-scale assessment can provide the evidence needed to improve the effectiveness of economics teaching and learning in higher education.

To this end, an objective and valid test instrument to assess the level of learning outcomes achieved is required. The test results constitute the basis from which minimum/basic standards of economic competence can be derived. The test can be routinely used to measure the extent to which students have achieved a certain competence level and to compare the level of economic competence across time and space (within and between countries).

Assessment framework for the comparative study and research questions

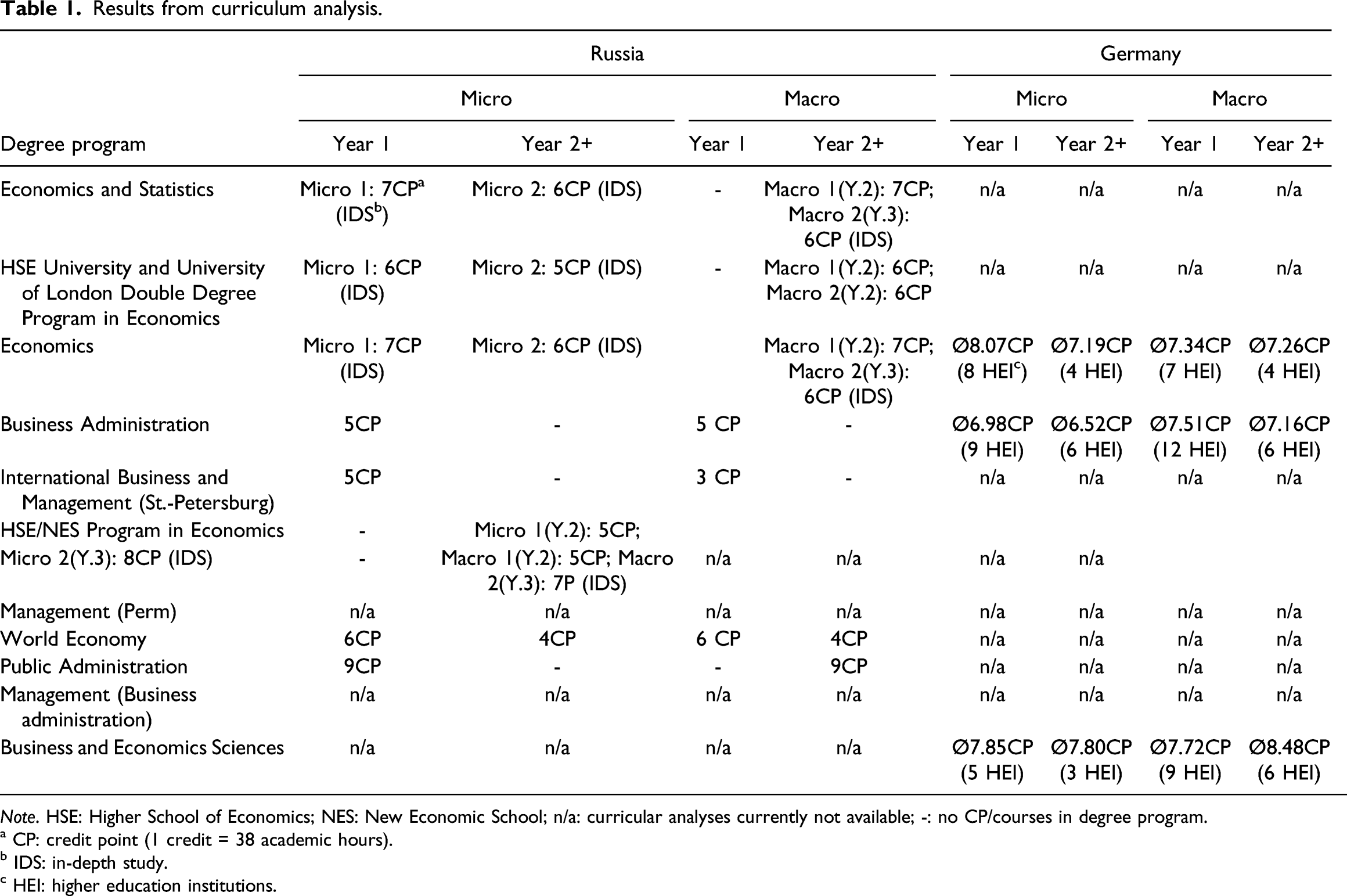

Results from curriculum analysis.

Note. HSE: Higher School of Economics; NES: New Economic School; n/a: curricular analyses currently not available; -: no CP/courses in degree program.

a CP: credit point (1 credit = 38 academic hours).

b IDS: in-depth study.

c HEI: higher education institutions.

For national and international comparisons, the Council for Economic Education (CEE) has developed 20 Core Content Standards (CEE, 2010), which are considered essential for comparing economic content in higher education. The CEE standards have been the basis for many comparative analyses with the United States (Brückner et al., 2015a,b; Förster et al., 2015; Zlatkin-Troitschanskaia et al., 2016). The internationally standardized WiWiKom test is based on the CEE standards (Zlatkin-Troitschanskaia et al., 2014, 2019). This assessment framework therefore makes it possible to operationalize the content-related and cognitive dimensions of economic competence in both countries in a comparable way. It also allows their level to be validly measured among economics students within and between the two countries.

This study is therefore based on this assessment framework. The German adaptation of the U.S. test (TUCE) was further adapted and validated for the Russian context. This followed the Test Adaptation Guidelines of the International Test Commission (ITC) (2014, 2018) and the recent report of the National Academy of Education (2020). In this process, the linguistic and functional equivalence of the three versions (the U.S. original and the German and Russian versions) was determined. Sociocultural, instructional, and curricular aspects of economic education in both countries were also taken into account. Based on these theoretical considerations, the construct “economic competence” was then tested empirically. The comparability analyses of the underlying educational standards and curricula in both countries provided the starting point. The next step was to explore two questions: to what extent the expected (economic) content and its structure can be identified in the empirical data (i.e., students’ test responses), and whether this content structure of the construct “economic competence” is equivalent between the two countries (for construct equivalence, see the Introduction). For the subsequent analyses, we therefore focus on three research questions (RQs):

RQ1: To what extent is economic education in Russia comparable with economic education in Germany, particularly in terms of teaching approaches and curricula?

RQ2: Based on curricular analyses in both countries, what content structure of the construct “economic competence” can be assumed (one- or multidimensional)?

RQ3: In the empirical cross-country comparison, what content structure of the construct “economic competence” can be identified?

Analysis of approaches to teaching economics in Germany and Russia

In the next step, we focus on two main levels of economic education in the two countries, secondary and higher education.

Secondary education

The federal educational standards for secondary education in Russia regulate the teaching and learning of economics at school in three formats: economics at a basic level, economics at an advanced level, and economics as part of the subject “social studies.” An analysis of existing practices in secondary schools shows that the vast majority of Russian students are taught economics in “social studies” classes. The only exceptions are so-called “profile” schools (or classes), in which economics is taught in depth. In most schools, however, economics is rarely part of the regular curriculum as a separate subject.

The popularity of “social studies” is explained by the fact that many universities require it as a Unified State Exam (USE) subject for admission to their degree programs. On average, 2–3 h a week are allocated to “social studies” in high school. If economics is studied as a separate subject at an advanced level, it is taught for 1–2 h per week during the last 1–2 years of school (grades 10–11).

In Germany, educational policy is organized federally. Thus, individual federal states bear responsibility for their respective school systems, which results in educational structures and curricula differing substantially between the 16 German federal states. In the German school systems of (upper) secondary education, economic education is not standardized. There are significant differences in the curricular integration of economic content in secondary schools. In most cases, economic content is taught in “social and political science” classes, but there are also federal states, such as Baden-Württemberg, which have recently introduced the subject of economics in schools (Oberrauch and Kaiser, 2020).

In contrast, economics is a standard curricular component of vocational education in both countries. Unlike upper secondary schools, vocational schools also teach vocation-specific subjects, for example, economics and/or business administration. The curricular extent of these subjects differs greatly between vocations. Here, however, the two countries differ. In Germany, a significant proportion of higher education students complete non-academic vocational training beforehand (up to 25%; Commission of Experts for Research and Innovation (EFI), 2014). In Russia, going to university after completing vocational training is much less common (e.g., less than 1% at HSE; see Table 1).

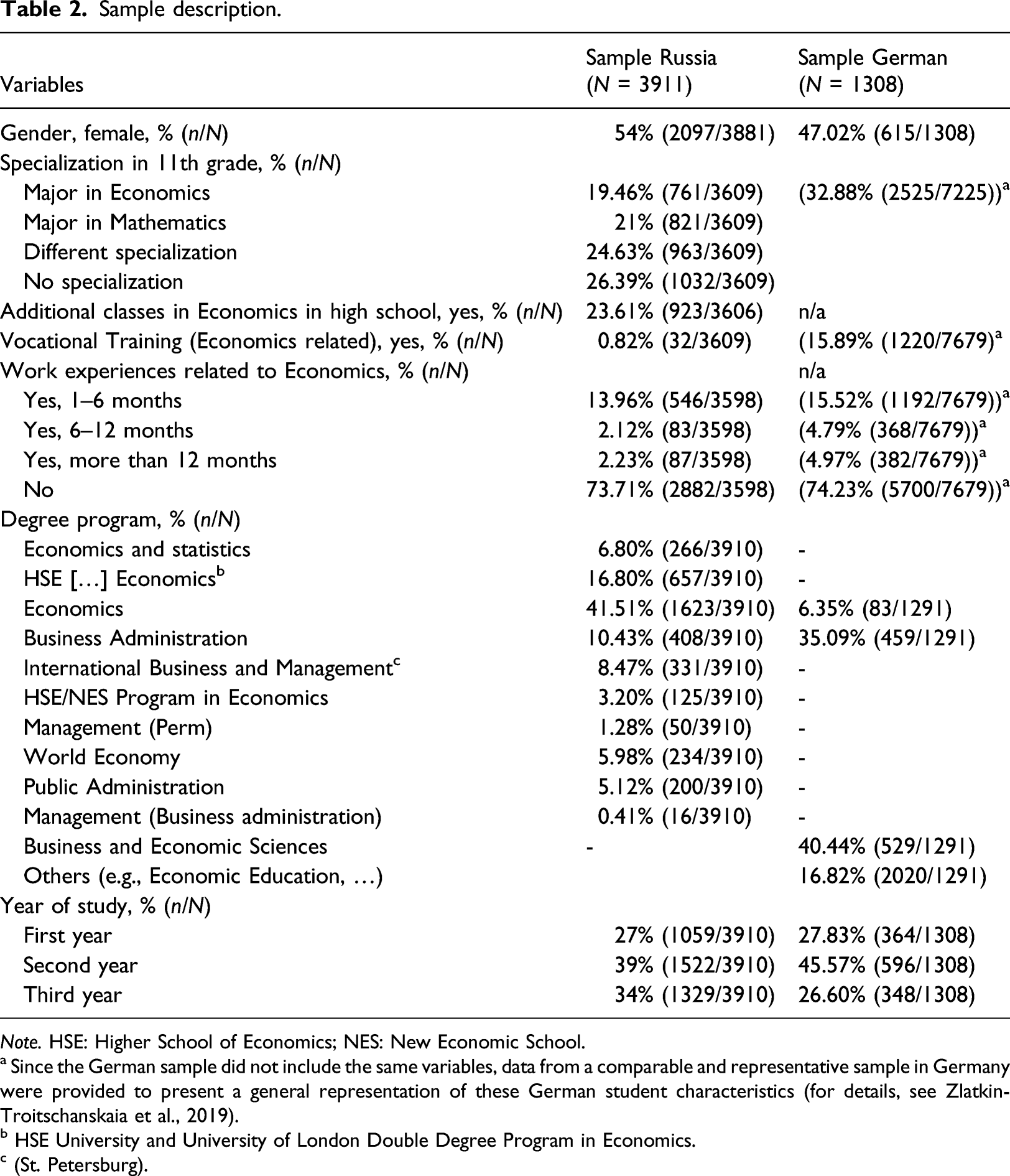

Sample description.

Note. HSE: Higher School of Economics; NES: New Economic School.

a Since the German sample did not include the same variables, data from a comparable and representative sample in Germany were provided to present a general representation of these German student characteristics (for details, see Zlatkin-Troitschanskaia et al., 2019).

b HSE University and University of London Double Degree Program in Economics.

c (St. Petersburg).

Higher education

Next, we focus on the fundamental level of higher education economics in both countries, which is also addressed in the assessment framework (see the next section).

In both countries, the division of economics into the content areas (thematic units) of macro- and microeconomics generally follows the U.S. tradition of teaching economics courses (Brewer and Picus, 2014). In terms of economic competence development, however, such a division does not seem obvious or mandatory at the introductory level, either in Germany or in Russia. For instance, both non-economics and economics higher education programs usually offer a single course as a general introduction to economics (for details, see Table 1). Nevertheless, most introductory textbooks retain the sequential presentation from microeconomics to macroeconomics. Similarly, students’ knowledge in these content areas is often assessed sequentially and independently. Well-established economics tests (GCSE, AS and A-levels; Sen et al., 2005) reflect concerns about the interdependence of macro- and microeconomics in their specifications. Despite these concerns, understanding of the principles of economics is usually assessed within separate content blocks.

In economics degree programs, introductory courses in macro- and microeconomics are longer than in non-economics programs. They are also usually taught by different teachers/lecturers and in different teaching formats. This results in substantial differences in approaches and methods between the two subdisciplines (Reardon, 2014). At the same time, experienced teachers may emphasize the common theory and methodology of economic science. For instance, modern macroeconomics rests on strong microeconomic foundations, which, however, are bypassed in introductory courses. Traditionally, macroeconomic treatments of the balance of payments and exchange rates implicitly assume knowledge of microeconomics. For example, imported goods are assumed to be normal goods (a microeconomic term), and the concept of partial equilibrium is applied to the foreign exchange market (where the commodity traded is a unit of foreign currency). Additionally, the price elasticity of demand is used to analyze the impact of exchange rate changes on net exports. This concept is treated as “general economic knowledge” and requires a certain level of skill in mathematical analysis. Economic competence requires a high level of quantitative reasoning but is clearly distinct from mathematical competence (Shavelson et al., 2019). Given this, there is largely a consensus in both countries that economic thinking and understanding need to be developed at the introductory level. This helps bridge the methodological gap between partial and general equilibrium.

Micro- and macroeconomics are the main content areas of the TUCE. This initial, cursory analysis of how they are taught in higher education in Russia and Germany shows considerable similarities between the two countries. The similarities concern the learning opportunities provided for acquiring (micro- and macro-)economic knowledge and understanding, in particular at the introductory level. For more differentiated insight, the curricula of all degree programs included in this study were analyzed (Table 1).

Curriculum analysis of degree programs included in this study

To draw valid conclusions about the assessment and its cross-national comparability and dimensionality in both countries, it must first be ensured that the teaching and learning opportunities in economics at universities are aligned with the test contents assessed and are comparable. In this context, the (micro- and macro-)economic courses offered are explicitly considered, as the relevant contents are covered by the assessment.

The curricular analyses 1 of the Russian degree programs cover all 11 HSE degree programs from which the participating students were drawn. Business programs (e.g., management) were also included in the analysis (see Table 1), as basic economic principles can be assumed to be taught in these programs as well. The number of credit points (CPs) to be acquired per module 2 reflects the average student workload. HSE uses a credit point system that most other Russian universities also use. In this system, 1 CP corresponds to 38 Russian academic hours. Since 1 academic hour is 40 min (rounded to 0.67 h), 1 CP amounts to 38 × 0.67 ≈ 25.46 h.

For the curricular analysis of (micro- and macro-)economic education in German higher education, a total of 35 bachelor’s degree programs at 27 universities participating in the German assessment were analyzed. All of these programs can be assigned to one of three broad classifications:
- business administration or microeconomics;
- political economy or macroeconomics;
- economic sciences.

These are the most popular degree programs in the domain of economics. Students of less popular degree programs, such as business statistics, usually take the same key courses as students of the three major degree programs. Therefore, only business administration, political economy, and economic sciences were included in the curricular analysis. The analysis is based on the annotated undergraduate course catalogs and examination regulations available for every degree program in Germany. These documents specify the central content and the objectives (i.e., intended learning outcomes) of all courses in the program. The credit points per course were taken from these materials and averaged across all included universities. Credit points in Germany are based on the European Credit Transfer and Accumulation System (ECTS), in which one credit point corresponds to approximately 30 h of work.

Since the two countries’ systems differ slightly, the German credit points were converted to the Russian system for better comparability. The conversion factor of 1.178 corresponds to the ratio 30 h / 25.46 h. The results of the curricular analysis for both countries are presented in Table 1. Using Economics as an example, the table reads as follows: eight of the universities examined offered microeconomics courses recommended for the first year of study, and on average, 8.07 credit points can be earned by attending these courses.
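The workload conversion can be reproduced with a few lines of arithmetic. The sketch below assumes the following: the factor of 1.178 is the ratio of the ECTS workload (about 30 h per credit) to the HSE workload (38 academic hours × 0.67 h ≈ 25.46 h per CP), and German ECTS credits are multiplied by this factor. The constant and function names are illustrative and are not taken from the study’s materials.

```python
# Minimal sketch of the credit-point conversion (assumed arithmetic; names are illustrative).
RU_ACADEMIC_HOURS_PER_CP = 38    # HSE: 1 CP = 38 Russian academic hours
HOURS_PER_ACADEMIC_HOUR = 0.67   # 40 min, rounded to 0.67 h as in the text
ECTS_HOURS_PER_CREDIT = 30       # approximate ECTS workload per credit point


def hours_per_russian_cp() -> float:
    """Clock hours of workload behind one Russian CP (38 x 0.67 = 25.46 h)."""
    return RU_ACADEMIC_HOURS_PER_CP * HOURS_PER_ACADEMIC_HOUR


def conversion_factor() -> float:
    """Russian CP per ECTS credit, i.e., 30 h / 25.46 h (about 1.178)."""
    return ECTS_HOURS_PER_CREDIT / hours_per_russian_cp()


def ects_to_russian_cp(ects_credits: float) -> float:
    """Express a German ECTS credit total in Russian CP (assumed direction of conversion)."""
    return ects_credits * conversion_factor()


print(round(hours_per_russian_cp(), 2))  # 25.46
print(round(conversion_factor(), 3))     # 1.178
print(round(ects_to_russian_cp(6), 2))   # 7.07
```

With the unrounded 40/60 h per academic hour, one CP would correspond to about 25.33 h and the factor to about 1.184. The reported 1.178 is consistent with the rounded 0.67 h.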

Overall, in the Russian degree programs, microeconomic content seems to be taught in all phases of study, whereas macroeconomic content tends to be taught more frequently from the second year onward. In the German degree programs, there seems to be no such difference between the two content areas, and many courses covering both are already offered in the first year of study. In both countries, the extent to which the content is covered differs slightly depending on the degree program. A comparison of the German and Russian curricular analyses shows that students in German programs seem to earn slightly more credit points on average. This implies a higher time investment than for students in Russia.

Based on these analyses, we conclude the following with regard to RQ1. Despite certain structural and curricular differences, economic education in Russia is comparable with economic education in Germany, particularly in terms of teaching approaches and curricula. In addition, the test content is covered by the respective curricula in both countries. The identified differences that may affect the level of students’ (micro- and macro-)economic competence will be specifically controlled for in the cross-country assessment.

With regard to RQ2, the curricular analyses in both countries also indicate a high interdependence between the content areas (micro-/macroeconomics), in particular at the introductory level. Therefore, a one-dimensional content structure of the construct “economic competence,” that is, “general economic competence,” can be assumed for both countries.

WiWiKom economic competence assessment

Test instrument

The TUCE consists of 60 items in a closed-response (multiple-choice) format, each with one correct answer option and three distractors. The test draws on a well-established taxonomy of learning objectives (Anderson and Krathwohl, 2001) and distinguishes three cognitive levels:
- (a) recognition and understanding of economics;
- (b) explicit application of knowledge to solve economic problems;
- (c) implicit application of knowledge to solve economic problems.

The TUCE is divided into two subdomains, microeconomics and macroeconomics, each represented by 30 items (Walstad et al., 2007). Overall, the test covers 12 content categories (six for introductory microeconomics and six for macroeconomics), each of which comprises several individual topics and concepts (for more details, see the test manual: https://www.econedlink.org/wp-content/uploads/2018/09/TUCE-4th.pdf).
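The following sketch makes the test structure concrete. It represents a 60-item blueprint and scores one response set dichotomously into micro- and macroeconomic subscores. The item numbering (items 1–30 microeconomics, 31–60 macroeconomics), the answer key, and the names `Item`, `build_blueprint`, and `score` are hypothetical; the actual TUCE key and item order are documented in the test manual. With four options per item, blind guessing would yield an expected score of 60 × 1/4 = 15 points.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    item_id: int
    subdomain: str  # "micro" or "macro"
    key: str        # the single correct option; the other three are distractors


OPTIONS = ("A", "B", "C", "D")
N_ITEMS, N_PER_SUBDOMAIN = 60, 30
CHANCE_LEVEL = N_ITEMS / len(OPTIONS)  # expected score under blind guessing: 15.0


def build_blueprint(keys: list) -> list:
    """Hypothetical layout: items 1-30 are microeconomics, items 31-60 macroeconomics."""
    if len(keys) != N_ITEMS or any(k not in OPTIONS for k in keys):
        raise ValueError("expected 60 keys drawn from A-D")
    return [
        Item(i + 1, "micro" if i < N_PER_SUBDOMAIN else "macro", k)
        for i, k in enumerate(keys)
    ]


def score(blueprint: list, responses: dict) -> dict:
    """Dichotomous scoring: 1 point per keyed answer, 0 for wrong or missing answers."""
    totals = {"micro": 0, "macro": 0}
    for item in blueprint:
        if responses.get(item.item_id) == item.key:
            totals[item.subdomain] += 1
    totals["total"] = totals["micro"] + totals["macro"]
    return totals


# Usage with a made-up key and a student who always picks "A".
keys = ["A", "B", "C", "D"] * 15
print(score(build_blueprint(keys), {i: "A" for i in range(1, 61)}))
# {'micro': 8, 'macro': 7, 'total': 15}
```

Separate subscores are kept only to show the two subdomains. Whether they are better treated as one dimension or two is an empirical question that the scoring itself does not answer.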

The test specification thus defines “economic competence” as a set of cognitive skills and abilities required to solve problems in micro- and macroeconomics. In this regard, the WiWiKom test construct shifts the emphasis from purely declarative knowledge to procedural knowledge, demonstrated by applying it to a concrete case scenario. The situations described in the test items do not explicitly refer to a specific topic or area of economics. However, the test specification describes the content underlying the concepts “typical introductory level economics course” and “general understanding of the basic principles of economics,” and it explicitly distinguishes between macro- and microeconomics.

In accordance with this test definition, the WiWiKom test question format is not explicitly tied to the subject (sub)area, as it is case-based rather than content-based. The test questions are descriptions of practical situations, whereby the identification and understanding of the underlying problem are viewed as a component of economic competence. Furthermore, questions on macro- and microeconomics are mixed, requiring an analysis of the applicability of the relevant economic knowledge and skills in the respective areas for answering each question. Under these conditions, questions for the implicit and/or explicit application of economic knowledge without specifying a subject area implement the idea of integrated assessment of economic competence. Implicit application of economic models is embedded in about a quarter of the test questions, which in this context also reflects elements of critical thinking (in particular, comparing alternatives and identifying hidden assumptions) and, in some tasks, quantitative reasoning (for details, Shavelson et al., 2019).

Test adaptation

Adapting test questions to national and university specifics is a complex and subtle challenge that requires input from highly qualified experts and (local) teachers (Berman et al., 2020). To ensure the validity and reliability of an adapted test, the distribution of questions by content and by level of cognitive complexity must be maintained as far as possible. The latter implies outcome-based learning (OBL) standards on the fundamental principles of economics. It also implies formulating “cross-curricular” intended learning outcomes at the course level rather than on a topic-by-topic basis (Phuc et al., 2020). The assumed depth of learning of the fundamental principles of economics reflects the cognitive dimension of the taxonomy of learning objectives. The hierarchy of cognitive levels in the test design makes it possible to judge whether a certain level of economic competence has been reached overall.

Therefore, the test adaptation process involved a team of experts in higher education economics. During the adaptation, the economics teachers/lecturers modified the original questions in accordance with the specifications, that is, at three basic levels of cognitive complexity: (1) recognition and understanding, (2) explicit application, and (3) implicit application of the analytical approaches of economics to practical situations (Walstad et al., 2007).

The adaptation of the German version for Russia strictly followed the TAGs of the International Test Commission (ITC) (2018). The quality of the adapted version was extensively tested according to these standards, including expert ratings, cognitive labs, and small pilot studies. Subsequently, measurement equivalence between the two test versions was established.

Data and method

Test administration

The Russian data were collected at the beginning of June 2020, after the end of the spring term. Students were tested at the end of their first, second, or third year of study in a computer-based format under controlled conditions. The test consisted of 60 items administered in randomized order, and the testing time was 60 min.
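When items are presented in randomized order, each response must be mapped back to a canonical item ID before items can be compared across students. The minimal sketch below assumes a hypothetical scheme that derives a reproducible per-student permutation from a base seed and the student ID. Neither the seed value nor the function names come from the study.

```python
import random
from typing import Dict, List, Optional

N_ITEMS = 60


def presentation_order(student_id: int, base_seed: int = 2020) -> List[int]:
    """Reproducible random permutation of item IDs 1..60 for one student.

    Seeding a private generator with the base seed and the student ID is a
    hypothetical scheme; any approach works as long as the order is logged.
    """
    rng = random.Random(f"{base_seed}-{student_id}")
    order = list(range(1, N_ITEMS + 1))
    rng.shuffle(order)
    return order


def to_canonical(
    order: List[int], answers_as_presented: List[Optional[str]]
) -> Dict[int, Optional[str]]:
    """Re-key answers from screen position to canonical item ID."""
    if len(order) != len(answers_as_presented):
        raise ValueError("order and answers must have the same length")
    return dict(zip(order, answers_as_presented))


# Usage: only the first displayed item was answered.
order = presentation_order(17)
by_item = to_canonical(order, ["C"] + [None] * (N_ITEMS - 1))
```

A dedicated `random.Random` instance is used instead of the module-level generator. This keeps each student’s order independent of any other random calls in the program.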

In Germany, the survey took place in the 2013–2014 winter semester, and a booklet design was used. Students in their first, second, and third years of study participated in the assessment. The German item bank of 60 items was divided into six booklets of 10 items each. Each German participant received between one and three booklets, so the final dataset contains responses that are missing by design. The test was administered under controlled conditions, and the testing time was approximately 45 min.

In both countries, all missing responses (except those missing by design) were scored as incorrect, on the assumption that respondents left an item unanswered because of a lack of knowledge, since they were given enough time to respond to all items (for details, Schlax et al., 2020).
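The scoring rule amounts to a small recoding step. In this Python sketch, the marker for items missing by design and the use of None for omitted responses are assumptions chosen for illustration:

```python
NOT_ADMINISTERED = "NA"  # hypothetical marker for items missing by design

def score_row(raw):
    """Omitted items count as incorrect (0); items missing by design stay
    missing (None) so that they are ignored in estimation."""
    scored = []
    for r in raw:
        if r == NOT_ADMINISTERED:
            scored.append(None)    # missing by design: not scored
        elif r is None:
            scored.append(0)       # administered but omitted: incorrect
        else:
            scored.append(int(r))  # 1 = correct, 0 = incorrect
    return scored

example = score_row([1, None, 0, NOT_ADMINISTERED, 1])
# example == [1, 0, 0, None, 1]
```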

In addition to the economic competence test, questionnaires were administered in both countries to assess previous education and socio-demographic backgrounds (Table 2).

Sample

The Russian sample consisted of 3911 undergraduate students at three campuses of the national research university “Higher School of Economics.” The German sample consisted of 1308 undergraduate students at 22 German universities. Students in both countries were studying economics and were in the first, second, or third year of their bachelor program. Only students who answered at least 50% of the items were included in the analyses below. For further information on the German sample, see Zlatkin-Troitschanskaia et al. (2019) and Schlax et al. (2020).

A comparison of the two samples reveals differences in demographic characteristics (gender ratio) and previous education, as well as in the distribution across degree programs and years of study. These differences must be taken into account when interpreting the results (see the last section).

Data analysis

To analyze the economics test data, we used Item Response Theory (IRT) models (Van der Linden, 2018). IRT presumes that both test takers and items differ in their latent parameters, such as persons’ latent abilities (here, economic competence) and items’ difficulty parameters; the probability that a person responds correctly to an item is a function of these parameters. More specifically, we used Rasch (1993) and 2PL (Lord and Novick, 2008) models. Since Rasch models are nested within 2PL models, we used a likelihood ratio test to examine whether the unrestricted (2PL) model fits significantly better than the restricted (Rasch) model, given its additional parameters.
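For readers less familiar with the two models, the following Python sketch writes out the Rasch and 2PL item response functions and a likelihood ratio test for nested models. The chi-square tail probability is computed from the regularized upper incomplete gamma function (series and continued-fraction forms, as in standard numerical texts) so that the block needs only the standard library; the log-likelihood values in the usage line are made up. How many extra parameters the 2PL adds depends on the parametrization: if, as is common, the Rasch model estimates the ability variance and the 2PL fixes it to 1, then with I items the difference is I - 1.

```python
import math

def p_rasch(theta, b):
    """Rasch model: P(correct) depends only on ability minus item difficulty."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))

def p_2pl(theta, a, b):
    """2PL model: adds an item discrimination a; with a = 1 it equals the Rasch curve."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def upper_gamma_reg(s, z):
    """Regularized upper incomplete gamma function Q(s, z)."""
    if z <= 0.0:
        return 1.0
    log_prefix = s * math.log(z) - z - math.lgamma(s)
    if z < s + 1.0:
        # Series for the lower function P(s, z); then Q = 1 - P.
        term = total = 1.0 / s
        k = 1
        while abs(term) > 1e-15 * total:
            term *= z / (s + k)
            total += term
            k += 1
        return 1.0 - math.exp(log_prefix) * total
    # Continued fraction for Q(s, z), evaluated with the modified Lentz method.
    tiny = 1e-300
    b = z + 1.0 - s
    c, d = 1.0 / tiny, 1.0 / b
    h = d
    for i in range(1, 10_000):
        an = -i * (i - s)
        b += 2.0
        d = an * d + b
        d = tiny if abs(d) < tiny else d
        c = b + an / c
        c = tiny if abs(c) < tiny else c
        d = 1.0 / d
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < 1e-14:
            break
    return math.exp(log_prefix) * h

def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with df degrees of freedom."""
    return upper_gamma_reg(df / 2.0, x / 2.0)

def lr_test(loglik_restricted, loglik_full, extra_params):
    """LR test of a restricted model (e.g., Rasch) nested in a fuller one (e.g., 2PL)."""
    stat = 2.0 * (loglik_full - loglik_restricted)
    return stat, chi2_sf(stat, extra_params)

# Made-up log-likelihoods, only to show the call.
stat, p_value = lr_test(loglik_restricted=-1000.0, loglik_full=-990.0, extra_params=3)
```

A small p-value indicates that freeing the item discriminations improves fit by more than would be expected from the extra parameters alone.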

In the analysis, we used unidimensional, multidimensional, and bifactor models to investigate RQ3, that is, whether a general factor of economic competence can be extracted. While the unidimensional model assumes that a single person parameter is sufficient to describe differences in latent ability between all participants across the entire test, the multidimensional model assumes that multiple person parameters are needed to describe such differences in the content dimensions of the test (i.e., micro- and macroeconomics; Reckase, 1997). Moreover, multidimensional models allow the correlations between latent person parameters to be estimated directly. Bifactor models, in turn, assume a “common cause” behind the correlations between the latent traits of the multidimensional model (Huang et al., 2013). They allow this common cause to be extracted from multiple lower-order dimensions and enable us to estimate the residual variance of those dimensions.
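Written out for the three model types, assuming the usual slope-intercept parametrization, the models can be stated as follows. The symbols are introduced here for illustration: $p$ indexes persons, $i$ items, $a$ denotes slopes (all fixed to 1 in the Rasch variants), $\beta_i$ is an item location parameter, and $d(i) \in \{\text{micro}, \text{macro}\}$ is the content dimension of item $i$.

```latex
\begin{aligned}
\text{Unidimensional:}\quad & \operatorname{logit} P(X_{pi}=1) = a_i\,\theta_p - \beta_i \\
\text{Multidimensional (between-item):}\quad & \operatorname{logit} P(X_{pi}=1) = a_i\,\theta_{p,d(i)} - \beta_i \\
\text{Bifactor:}\quad & \operatorname{logit} P(X_{pi}=1) = a_{ig}\,\theta_{pg} + a_{is}\,\theta_{p,d(i)} - \beta_i
\end{aligned}
```

In the multidimensional model, the correlation between $\theta_{p,\text{micro}}$ and $\theta_{p,\text{macro}}$ is estimated freely. In the bifactor model, the general factor $\theta_{pg}$ and the two specific factors are mutually uncorrelated, so the specific factors carry only the variance left after the general factor has been extracted.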

To evaluate global model fit, we analyzed absolute and relative model fit indices. For absolute global fit, we used the standardized root mean square residual (SRMSR; Hu and Bentler, 1999), following the recommendations of Maydeu-Olivares (2013) and Shi et al. (2020), and the standardized mean residual (SRMR). These statistics describe the average deviation of the observed correlations of item pairs from the model-implied correlations. Models with SRMR and SRMSR values lower than 0.08 are considered acceptable (Hu and Bentler, 1999). To evaluate relative model fit, we compared the models using the Akaike (1974) and Bayesian (Schwarz, 1978) information criteria (AIC and BIC). Models with lower values for all indices are preferable.
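A minimal Python sketch of these indices, assuming the observed and model-implied item correlation matrices are already available. Following the description above, SRMR is taken here as the mean absolute residual correlation over item pairs and SRMSR as the root mean square residual correlation; this reading is an assumption, and software packages may define or scale them slightly differently. The numbers in the usage lines are made up.

```python
import math
from itertools import combinations

def residual_fit(obs_corr, exp_corr):
    """Return (SRMR, SRMSR): mean absolute and root mean square residual
    correlation over all item pairs i < j (matrices as nested lists)."""
    n = len(obs_corr)
    resid = [obs_corr[i][j] - exp_corr[i][j] for i, j in combinations(range(n), 2)]
    srmr = sum(abs(r) for r in resid) / len(resid)
    srmsr = math.sqrt(sum(r * r for r in resid) / len(resid))
    return srmr, srmsr

def information_criteria(loglik, n_params, n_persons):
    """AIC and BIC from a maximized log-likelihood; lower values are preferable."""
    aic = -2.0 * loglik + 2.0 * n_params
    bic = -2.0 * loglik + n_params * math.log(n_persons)
    return aic, bic

# Made-up three-item example.
observed = [[1.0, 0.30, 0.20], [0.30, 1.0, 0.40], [0.20, 0.40, 1.0]]
expected = [[1.0, 0.25, 0.20], [0.25, 1.0, 0.30], [0.20, 0.30, 1.0]]
srmr, srmsr = residual_fit(observed, expected)
acceptable = srmr < 0.08 and srmsr < 0.08
aic, bic = information_criteria(loglik=-100.0, n_params=5, n_persons=100)
```

Under these definitions, SRMSR can never be smaller than SRMR for the same residuals, because a root mean square is at least as large as a mean absolute value; a few large residual correlations therefore push SRMSR above the cutoff sooner.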

To estimate all models, we applied the marginal maximum likelihood estimator (Bock and Aitkin, 1981) as implemented in the TAM package (Robitzsch et al., 2020) for R.
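The quantity that marginal maximum likelihood maximizes can be sketched for the Rasch case: each person's likelihood is averaged over an assumed normal ability distribution, approximated on a fixed grid of nodes, and items missing by design are skipped. This Python sketch only evaluates the marginal log-likelihood for given difficulties; it is not TAM's implementation, and the Bock-Aitkin EM steps that update the parameters are omitted. The grid range, node count, and data are illustrative choices.

```python
import math

def normal_grid(n_nodes=41, lo=-6.0, hi=6.0, sd=1.0):
    """Equally spaced ability nodes with weights proportional to a N(0, sd^2) density."""
    step = (hi - lo) / (n_nodes - 1)
    nodes = [lo + k * step for k in range(n_nodes)]
    dens = [math.exp(-0.5 * (t / sd) ** 2) for t in nodes]
    total = sum(dens)
    return nodes, [d_k / total for d_k in dens]

def rasch_marginal_loglik(responses, difficulties, nodes, weights):
    """Sum over persons of log(sum_q w_q * L(row | theta_q)) under the Rasch model.
    Entries of None (not administered) do not contribute to a person's likelihood."""
    total_ll = 0.0
    for row in responses:
        marginal = 0.0
        for theta, w in zip(nodes, weights):
            like = 1.0
            for x, b in zip(row, difficulties):
                if x is None:
                    continue
                p = 1.0 / (1.0 + math.exp(-(theta - b)))
                like *= p if x == 1 else 1.0 - p
            marginal += w * like
        total_ll += math.log(marginal)
    return total_ll

nodes, weights = normal_grid()
# Hypothetical data: three persons, four items, one item not administered to person 2.
responses = [[1, 1, 0, 0], [1, None, 1, 0], [0, 0, 0, 1]]
ll = rasch_marginal_loglik(responses, [-1.0, 0.0, 0.5, 1.0], nodes, weights)
```

Because abilities are integrated out rather than estimated per person, the number of parameters stays fixed as the sample grows, which is one reason MML is standard for calibrating large samples such as the ones here.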

Results

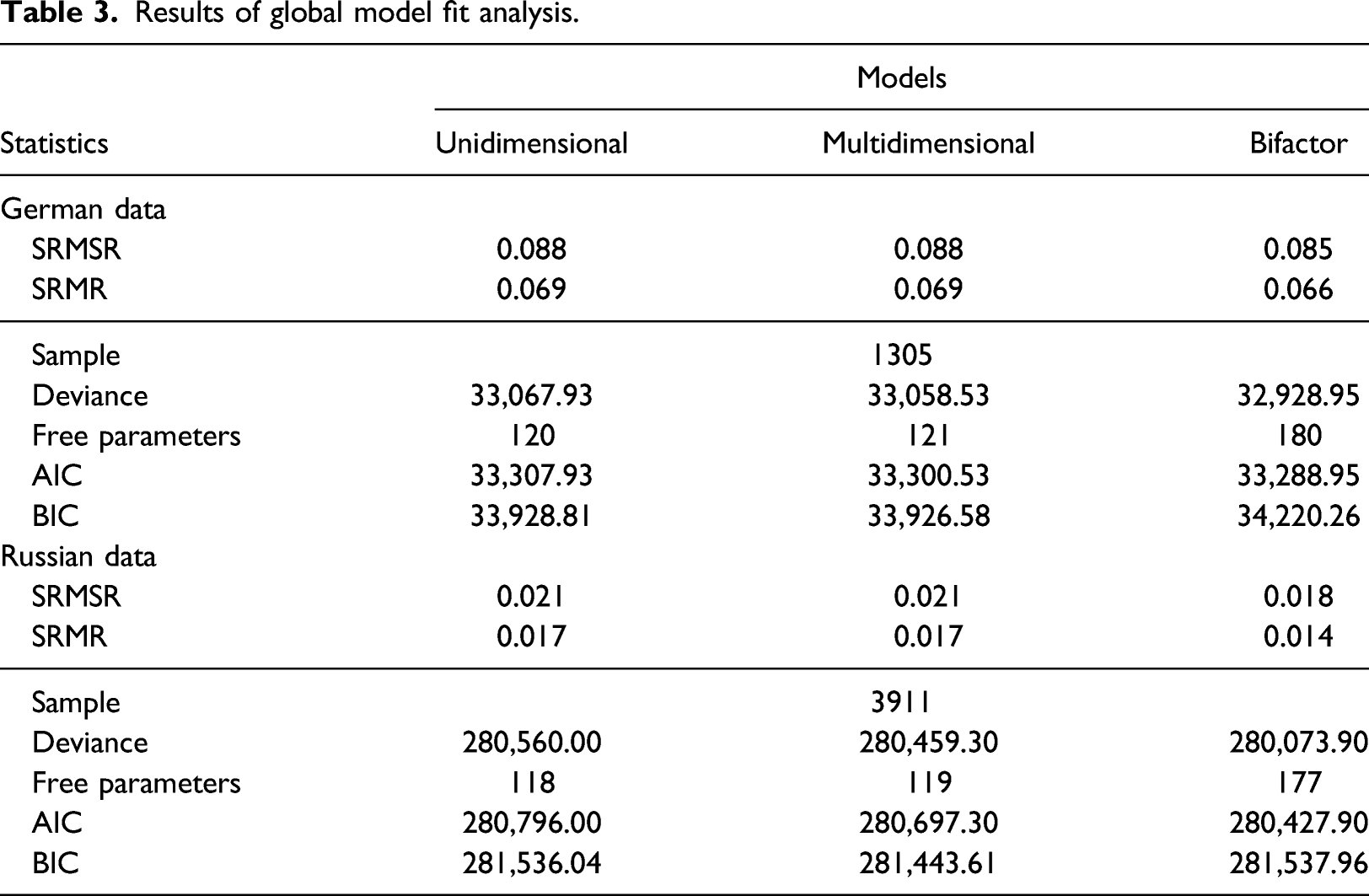

Results of global model fit analysis.

As Table 3 shows, according to the SRMSR statistics, all models fit the German data rather poorly. The SRMSR statistics indicate some degree of local item dependence (conditional on the person parameters) in the German data. However, according to the SRMR statistics, all models show an acceptable fit. Moreover, neither statistic varies much across the calibrated models, implying that the local item dependence is not related to the content classification of the items. For the Russian data, all models fit well according to both SRMSR and SRMR. Thus, all models can be treated as well-fitting in terms of absolute fit.

We therefore proceeded with the analysis of relative model fit, for which the AIC and BIC indices yielded mixed results: the bifactor model fits best according to AIC, whereas the multidimensional model fits best according to BIC. Selecting the model that best represents the dimensionality of the test is therefore challenging. However, the bifactor model exhibits a better fit in terms of absolute fit. Considering all results of the relative and absolute model fit analyses, we conclude that the bifactor models fit the respective data better. This means that it is possible to extract a “common cause” of item responses across micro- and macroeconomics.

To estimate the person-level reliability of the general factor of economic competence, we used EAP reliability. The individual-level EAP reliability for the general factor was 0.842, suggesting that the general factor of economic competence is suitable for reporting individual test scores.
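One common definition of EAP reliability divides the variance of the EAP ability estimates by that variance plus the average posterior variance. The Python sketch below illustrates this definition for a simple unidimensional Rasch model with a standard normal prior on a fixed grid. The study's value refers to the general factor of a bifactor model, which this simplification does not reproduce, and the difficulties and response patterns are made up.

```python
import math
from statistics import fmean, pvariance

def posterior_moments(row, difficulties, n_nodes=61, lo=-6.0, hi=6.0):
    """EAP estimate and posterior SD of ability for one response pattern under a
    Rasch model with a N(0, 1) prior (None = item not administered)."""
    step = (hi - lo) / (n_nodes - 1)
    nodes = [lo + k * step for k in range(n_nodes)]
    post = []
    for theta in nodes:
        w = math.exp(-0.5 * theta * theta)  # unnormalized prior density
        for x, b in zip(row, difficulties):
            if x is None:
                continue
            p = 1.0 / (1.0 + math.exp(-(theta - b)))
            w *= p if x == 1 else 1.0 - p
        post.append(w)
    total = sum(post)
    eap = sum(t * w for t, w in zip(nodes, post)) / total
    var = sum((t - eap) ** 2 * w for t, w in zip(nodes, post)) / total
    return eap, math.sqrt(var)

def eap_reliability(rows, difficulties):
    """Var(EAP) / (Var(EAP) + mean posterior variance)."""
    moments = [posterior_moments(r, difficulties) for r in rows]
    var_eap = pvariance([eap for eap, _ in moments])
    mean_post_var = fmean([sd * sd for _, sd in moments])
    return var_eap / (var_eap + mean_post_var)

difficulties = [-1.0, 0.0, 1.0]
rows = [[0, 0, 0], [1, 0, 0], [1, 1, 0], [1, 1, 1]]
rel = eap_reliability(rows, difficulties)
```

With only three items the posterior variances remain large, so the reliability of this toy example is far below the value reported for the full test.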

Overall, with regard to RQ3, the empirical results suggest a one-dimensional content structure for the construct “economic competence” in the cross-country analysis.

Conclusions

Discussion

In the comparability analyses, the empirical results indicated a high correlation between the two underlying factors corresponding to the content dimensions of micro- and macroeconomics. The research question (RQ3) of whether these dimensions can be merged into a single general factor of economic competence was tested using IRT. The dimensionality analysis suggests one strong general factor of economic competence. This indicates the one-dimensionality of the WiWiKom test, which therefore measures “general economic competence.” We conclude that this single general factor of economic competence encompasses the micro- and macroeconomic dimensions.

This finding might indicate a stronger interconnection between learning and understanding the economic contents than previously assumed in the literature. Therefore, this result needs to be viewed in the context of the curricular and instructional conditions in higher education economics in both countries to explain this high correlation between the macroeconomics and microeconomics parts of the WiWiKom test.

Generally, curricula of basic economics designate macroeconomics and microeconomics as separate subdisciplines of economics. As indicated by the curricular analyses in both countries and with reference to the test manual, the curricula in both countries (i.e., the “intended curricula”) cover the vast majority of the TUCE items. However, substantial differences in educational standards and practices, as well as peculiarities in the teaching of economics (i.e., the “implemented curricula”), become evident both within and between countries. For instance, introductory macroeconomics and microeconomics courses are (most often) taught by different instructors using different teaching approaches. Besides this possible “teacher and instruction effect,” most native-language (here, Russian and German, respectively) and translated textbooks used in introductory economics courses draw explicit distinctions between the two parts of economics. At the introductory level, describing how macroeconomics and microeconomics differ in their objects of study is methodologically important and a widely justified teaching approach, as it allows students to “recognize” questions related to the different content (sub)areas.

Given the cognitive specification of the WiWiKom test, which differentiates between three cognitive levels, achieving the basic level of general economic competence (recognition and understanding) depends on the ability to “recognize” a specific area of economics in the wording of a question, to “recall” economic definitions and models, and to “relate” them to the question. Since questions on macro- and microeconomics were mixed in the WiWiKom test and nothing explicitly tied a question to a particular content area, this ability to “recognize and understand” the question can be understood as an integral characteristic of an individual student’s general economic competence. Since most students draw on this competence when taking the test, this can explain the high correlation between the macro- and microeconomics parts of the test.

The use of the WiWiKom test for the “integral” assessment of “general economic competence,” however, requires specifying the boundaries of the subject area. Looking at the rather detailed list of 12 content categories, with a breakdown of the individual topics and concepts covered by the test questions, the division of test questions into the subject areas of micro- and macroeconomics, let alone into thematic sections, is often tentative. Market failures, coordination failures, and public goods are illustrative examples. In the WiWiKom test specification, the assignment of at least two topics (“International Economics” and “The Role of the State in the Market Economy”) to the respective sections of economics is rather conventional and allows for variation. In both countries, in teaching practice at the introductory level, such a division is determined by the overall duration of the course, the choice of the main textbook, and the lecturer’s research interests. The coverage of the topics and the distribution of the teaching load among them may differ considerably from the test specifications. For instance, the issues of international trade (an area of microeconomics) and international finance (an area of macroeconomics) are combined in the WiWiKom test in a common section (international economics). Traditionally, treating the balance of payments and exchange rates in the macroeconomics block implicitly assumes knowledge of microeconomics. For example, imported goods are assumed to be normal goods (a term from microeconomics), and the concept of partial equilibrium is applied to the foreign exchange market (where the commodity traded is a foreign monetary unit). Assigning individual questions to one section or the other is thus a challenging task, as questions often deal with two or more principles: correct statements may refer to one topic and incorrect statements to another.

Therefore, the results of the WiWiKom test cannot and should not be interpreted as the degree of competence in individual subject areas, but as “general economic competence.”

Implications: Applying the WiWiKom assessment and its results to teaching economics

The cross-country comparative analysis of economic competence presented here provides some initial implications for educational practice in economics, which, in view of the limitations, should be critically examined in further research. First, the results of the current study indicate that the format of regular exams focusing on economic competence, especially at the introductory level or for summative assessment, need not be rigidly tied to a specific subject area. Even when teaching and learning approaches separate economics content, students’ general economic competence seems to develop over the course of study. At the same time, the one-dimensionality of the competence construct measured here indicates that both subareas (micro- and macroeconomics) are sufficiently interrelated, which can also be taken up in teaching economics. Further, test questions that describe practical situations can be seen as potentially more suitable for measuring “lasting knowledge” as a crucial component of general economic competence.

Second, when planning the curricula of educational programs in economics, it is reasonable at the introductory level to address general issues of economics within a single course that introduces basic concepts and principles without having to distinguish between separate parts (macro- and microeconomics). The development of the general methodology of modern economics allows for the transition to teaching general principles of economics in a single course. For instance, modern macroeconomics rests on strict microeconomic foundations. Although the problem of aggregation is addressed in more advanced courses, economic thinking and understanding at the introductory level need to be developed to help bridge the methodological gap, for example, between partial and general equilibrium. The overlap of (economic) topics and even subject areas in the test questions, as well as the shift in assessment from declarative to procedural knowledge, requires appropriate teaching methods (in the sense of the triad model by Pellegrino et al., 2001). Pedagogical design in this case should focus more strongly on integral intended learning outcomes at the course level that center on situational analyses and relevant problems (case-based or problem-based learning, PBL; McMillan and Little, 2020).

One fundamental aspect of the WiWiKom test is that it uses an absolute scale that can ensure the inter-temporal comparability of assessment results. Because the WiWiKom test questions are posed as practical situations, their link to the curricula is less rigid. This allows a “quality of learning” indicator to be formulated and quantified in an outcome-based education paradigm. However, one should be cautious in interpreting measurement results as evidence of the quality of teaching. The accuracy of conclusions about the dynamics of learning outcomes depends on many factors that are not always observable and can hardly be determined by statistical methods. Therefore, interpreting the results of the measurement of general economic competence across faculties, universities, and countries also requires prior analysis with the identification of control variables reflecting students’ (prior) general cognitive abilities and domain-specific skills as well as their actual teaching and learning environments and means inside and outside of higher education (e.g., self-directed learning using the Internet).

Since economic competence is considered an immanent characteristic of a professional in the field, objective assessments using valid tests should be carried out at different stages of higher education. In this case, the concept of “lasting knowledge” can be transformed into the concept of “life-long competences.” The WiWiKom economic competence measurement tool is based on the analysis of practical situations rather than on the reproduction of theoretical postulates taken out of context. This makes it possible to “neutralize” the factor of students “forgetting material” over time when interpreting the results. The “material” in this case is precisely the procedural knowledge measured with cognitive constructs at the level of “practical application” (explicit and implicit), which are embedded in the test. Therefore, the measurement of “lasting knowledge” among undergraduate students using the WiWiKom test can be considered to be equivalent to the measurement of general economic competence.

Overall, given the broad interpretation of economics across the professional education fields that require a certain level of economic competence, this standardized, valid test with detailed specifications can provide valuable evidence on the quality of economic education and its actual learning outcomes within and across countries.

Limitations and further developments

In addition to the overarching limitations mentioned above, some conditions restricted the administration of the study, such as test conditions that were not fully identical (e.g., the test time limit). However, this is unlikely to have had a decisive influence on test performance, since even the shorter testing time (45 min) far exceeds the time usually required (30 min) and was therefore generous.

As with any domain-specific test, a general limitation of the WiWiKom test is that its tasks and content are not “timeless” and should be updated continuously. In particular, the lack of consensus on the curricula of a conventionally “standard” introductory economics course requires discussion within and across both countries. The change in economic policy approaches in recent decades has also entailed a change in the content of economics as an academic subdiscipline. This is also reflected in the current version of the WiWiKom test. For instance, with the establishment of the neoclassical synthesis, the confrontation between the Keynesian, monetarist, and neoclassical schools is no longer relevant. The calculation of multipliers, the choice of targeting variables within monetary rules, and the fundamentals of economic growth theory should be approached more cautiously. Some popular textbooks written by renowned academics dispense with the aggregate supply and demand model when describing macroeconomic principles. The WiWiKom test has taken these changes into account when formulating questions on macroeconomics, thus pointing in the direction of reforming the content of introductory economics curricula. However, the test needs to be adapted regularly by involving teachers as experts. In this context, the requirement for test validity and reliability may conflict with the need for ongoing updating. In this regard, the research and educational purposes served by the WiWiKom test may not be fully congruent.

With regard to the curricular analyses, it should be noted that the Russian analysis included a large number of study programs that, although based in different locations, are all part of a single university. The German curricular analysis included various higher education institutions, but only the three most popular study programs in the economics domain were examined. The degree of comparability is thus limited. In addition, it is uncertain to what extent students actually use the time allocated by credit points (which is divided proportionally into classroom teaching and self-study). The curricular analyses based on the module descriptions therefore only allow for indications of the “intended” curriculum, but not of the actually “implemented” curriculum. The actual implementation of curricula (i.e., “implemented” curricula) in higher education needs to be investigated in greater depth to draw further conclusions. For example, it can be assumed that micro- and macroeconomic content is also taught in the business and management courses in Russia, even though the selected keywords did not yield any matches in the curricular analyses presented here.

In the interviews with experts in higher economics education in Germany and Russia, however, the experts confirmed the ecological validity of the results of the curricular analyses. With regard to the “implemented” curriculum, they pointed out a “teacher and instruction effect,” according to which the content actually taught depends on the teachers and their instructional approach and can therefore differ greatly between institutions. In this context, it is all the more important to validly measure students’ “core” economic competence and to compare its level and structure, as done in this study.

The involvement of lecturers in the necessary continuous updating of the test can create an incentive to move from content-based teaching to modern OBL methods. This can also be facilitated by the format of the test questions, which are phrased as descriptions of situations that require economic problem solving and decision-making. Such well-organized institutional “adaptation” procedures can “translate” standards for assessing economic competence into standards for teaching economic principles, while also preserving teachers’ competence to choose content and their flexibility in the use of effective teaching methods.

While the assessment and dimensionality of economic competence and its relationship to curricula and learning opportunities were considered in this cross-national comparative study, the domain-related preconditions at study entry in Russia and Germany as well as other personal and contextual factors need to be examined in more detail. Based on the present study, for example, the heterogeneity of the study entry characteristics—which can be assumed considering the heterogeneous nature of preuniversity education—could be specifically examined given an appropriate measurement instrument and comparable university teaching and learning context.


Acknowledgments

We would like to thank the anonymous reviewers and the editor for providing constructive feedback and helpful guidance in the revision of this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The article was prepared in the framework of a research grant awarded by the Ministry of Science and Higher Education of the Russian Federation (grant ID: 075-15-2020-928) and a research project funded by the German Federal Ministry of Education and Research (grant ID: 01PK15001A).