Abstract

Robotic telepresence is an emerging technology with great potential in applications such as assistance to the elderly, telework, and surveillance. It relies on a combination of information and communication technologies and mobile robotics to provide a person with the autonomy to move and interact in a remote environment. The application environments range from homes to factories and even large outdoor spaces, and the types of users involved in particular application contexts also vary. This leads to great heterogeneity in the design of the robotic platforms, communication infrastructures, and interfaces found across robotic telepresence applications. This article analyzes the sources of such heterogeneity, defines a modular architecture that provides a suitable solution for generic applications, and presents an instantiation of the architecture as a web-based solution for robotic telepresence. The main advantages of the solution presented are its open-source nature and its cross-platform compatibility. We report a pilot experience that shows how a functional robotic telepresence system based on this solution has been successfully deployed in an office environment and intensively tested by inexperienced participants over the Internet.

Introduction

Robotic telepresence arises as a promising approach for diverse applications, including assisting elderly people, telework, and remote surveillance. A robotic telepresence system results from the combination of information and communication technologies (ICTs) and mobile robotic (MR) solutions. On the one hand, ICTs permit users to remotely control mobile robots within realistic environments, overcoming the still limited performance of autonomous robots in these scenarios. 1,2 On the other hand, mobile robots help enhance the capabilities of traditional ICTs, enabling users to interact within the robot environment as if they were physically present. 3,4

A successful robotic telepresence system must face a number of issues that can be grouped into three main categories: (i) providing accessible and easy-to-use interfaces to facilitate the telepresence experience, (ii) integrating robust multimedia features and real-time communications under different network conditions, and (iii) considering efficient and adaptable solutions to provide the requested robotic abilities.

These issues are normally addressed by implementing solutions tightly bound to the particular requirements at hand, that is, a specific robotic platform, software architecture, and so on. This option hinders the portability of the solution and normally prevents its extension to a different robotic telepresence system. The use of current open-source tools, like WebRTC and HTML5, provides the flexibility needed to cope with such heterogeneity, but at the expense of an extra effort in specifying and designing a complete functional architecture.

The contributions of this article are (i) identifying the sources of heterogeneity found in robotic telepresence systems, (ii) defining a generic and modular architecture that deals with such heterogeneity, with special emphasis on the convenient integration of multimedia and robotic control streams, and (iii) presenting an instantiation of the proposed architecture as a web-based robotic telepresence application.

Our approach addresses the aforementioned issues as follows. First, it integrates efficient and robust middleware communications based on Internet of Things (IoT) protocols like HTTP, WebSockets, and MQTT. The use of these standards provides well-structured communication mechanisms and permits the inclusion of third-party components. Second, it provides an open-source, web-based user interface (UI) for robot teleoperation, which enriches the solution with features like cross-platform compatibility, accessibility, and maintainability. The web application presented relies on NodeJS, HTML5, and WebRTC technologies to enable these features. Third, the implemented robotic telepresence system features self-localization. To that end, the system includes interfaces with robotic control architectures (RCAs), based on, but not limited to, the widely used ROS 5 and MOOS 6 robotic frameworks.

As a result, a functional robotic telepresence system has been successfully deployed and tested by inexperienced participants who accessed the robot through their personal devices and Internet connections. The pilot experience enrolled 64 participants, who were asked to remotely explore an unknown working office environment without the intervention of any technical staff. During the exploration, the users had to approach some objects and, at the end of the experience, dock the robot in the recharging station. The reported tests cover the following components: two mobile robot platforms running different operating systems (OSs), that is, Windows and Linux, and different robotic frameworks, that is, ROS and MOOS, as well as different user devices for teleoperating the robots, OSs, browsers, and Internet connections. The aim of this pilot experience is to demonstrate the suitability of our approach for deploying a functional and practical robotic telepresence system under heterogeneous conditions, not to provide a thorough user study. The results that support the viability of our work are based on the number of exploration tasks accomplished by inexperienced users who received only a few indications on how to guide the robot.

The article concludes with a discussion about the benefits and limitations of the current study and with the planned future work. Demonstration videos of the trials conducted during the pilot experience are available at: https://mapir.isa.uma.es/work/telepresence.

Related work

The first combinations of videoconferencing systems and teleoperated robots emerged in the mid-1990s, with PRoP being one of the pioneers. 3,7 Since then, robotic telepresence prototypes have evolved significantly, 8 and promising application areas have been identified, including ambient assisted living (AAL), 9 telework, 10 robotic video surveillance, 11 and remote monitoring of risky environments. 12

In AAL, the focus is on the safe performance of mobile robots in domestic environments and the social abilities to be delivered. 13 Systems like Vgo 14 and Giraff 15 (see Figure 1(a)) are commercial solutions that have proven their suitability for this type of application. 16,17

Figure 1. Examples of commercial robotic telepresence systems: (a) Giraff, (b) iRobot Ava 500, and (c) SMP Rover S5.

Robotic telepresence applications aimed at creating innovative telework scenarios must deal with the particularities of a different context. Solutions in this field emphasize the mobility of the robot in office environments and the interaction capabilities needed to support remote collaboration between coworkers. Some examples are BeamPro, 18 Anybots QB, 19 and iRobot Ava 500. 20

Environmental monitoring is another important niche for robotic telepresence systems. Applications in this field shift the focus onto the robotic abilities required to explore industrial environments, public spaces, and/or risky environments 21 and pay less attention to the social context around the robot. Therefore, the features most valued in this case are the mobility, autonomy, and dependability of the telepresence robot in large and/or outdoor environments, rather than face-to-face communication. Meaningful systems and use cases are presented by Guzman et al., 22 and SMP Robotics 23 and Robot Security Systems 24 are examples of proprietary systems that propose robot mobility as an added value to traditional monitoring environments with a set of static cameras.

The diversity in the workspaces, type of users, communication infrastructures, goals of the robotic telepresence applications, and the role played by the robot has led to a high heterogeneity in robotic telepresence designs. Some works have studied this heterogeneity from different perspectives but focusing only on specific areas. For example, Zalud 25 deals with aspects of control for heterogeneous robotic platforms, and Doisy et al. 26 focus on the techniques for camera and motion control of a teleoperated robot. Other works study the heterogeneity from a more general perspective, providing insights into common aspects that affect the design and development of robotic telepresence systems. Desai et al. 27 provide an overview of essential technical features, Tanaka et al. 28 propose seven design dimensions that are key in the design of robotic telepresence, and Portugal et al. 29 present a review of the features required for the delivery of intelligent services through the robot. The work presented here also addresses the heterogeneity in telepresence systems from a general perspective but differs from other works in proposing a practical, generic control architecture that integrates open-source tools suitable for implementing a functional application with videoconference and robot teleoperation in real time.

Additionally, a key aspect affecting robotic telepresence designs, which is in fact part of their heterogeneous nature, is the role of the telepresence robot. These roles are discussed by Tanaka et al., 30 who compare video, avatar, and robot-mediated communications to evaluate the pros and cons of robotic embodiment. The main difference between avatar and robot-mediated communications is the following: avatar communications are enabled by intelligent agents installed in the telepresence robot to interact with users (located nearby or in remote locations), whereas robot-mediated communications are a direct human–human interaction by means of a teleoperated telepresence robot, which works as a passive artifact aimed at providing the remote operator with mobility (or other action capabilities) within the local workspace.

On the one hand, approaches considering telepresence robots as mobile avatars emphasize the robotic and cognitive abilities required by the robot to interact as an autonomous assistant, partner, or coworker. Their distinctive features are a set of decision-making abilities that enable the robot to act on its own initiative in specific tasks. They also account for the basic features of robotic telepresence (i.e. communicating with other ICT systems and remote users) but, although most of them can connect people nearby with remote users via videoconference, these approaches do not, in general, consider real-time teleoperation features. The remote operator is a passive interlocutor and the robot acts as a third agent supporting the remote human–human interaction. Relevant projects following this approach are SPENCER 31 (http://www.spencer.eu; FP7 Program), where the robot is responsible for passenger guidance and help in busy airports, as well as Social Robot (http://mrl.isr.uc.pt/projects/socialrobot/) and Grow Me Up (http://www.growmeup.eu; from FP7 and H2020 European Research Programs, respectively), where the Social Robot Platform 29 provides care services for the independent and active living of older persons within a smart environment.

On the other hand, telepresence robots playing the role of teleoperated robots are considered robotic embodiments of the remote operator in the local workspace. The focus is placed on the positive effects that the physical embodiment of the operator can bring to remote assistance services (e.g. health-care and monitoring services), and the goal is to make both the operator and the user near the robot feel, in some way, that they are sharing the same physical space. In general, current robotic telepresence systems achieve this by combining videoconference with a wide field of view and the initiative given to the operators through the movements they can command to the robot using real-time teleoperation features. Thus, the specific challenges in these approaches are to generate an adequate level of situation awareness on both sides and to enable the remote operator to actively visit the robot environment while ensuring its safety. The correct integration of videoconference and robot teleoperation features is at a premium in these approaches, as is the usability of the robot controllers, which also includes the study of visualizations and autonomous navigation abilities that assist the operator in complex maneuvers. Examples of projects following this approach are ExCITE 32 (http://www.aal-europe.eu/projects/excite; Active and Assisted Living Program) and GiraffPlus 16 (http://www.giraffplus.eu/; FP7 Program).

In this work, we have addressed the problem of developing an open-source solution able to conveniently integrate efficient videoconferencing and robotic control features, and we present a particular instantiation as a web-based application for robotic telepresence playing the role of an avatar. However, note that the inclusion of robotic control components in the modular architecture also makes it suitable for covering other options, for example, intelligent robots enabling robot-mediated communications.

Most of the solutions found in the literature tackle robotic telepresence by relying on proprietary software (e.g. Skype or VSee) in combination with specific plug-ins to integrate robotic control commands, 33 which is the approach followed by the Social Robot and GiraffPlus platforms. The strength of these solutions lies in the efficient management of networking, videoconference, and real-time communications provided by expert companies, but this strategy has several disadvantages when it comes to the design of features for research prototypes like those mentioned above. In general, prototypes following this approach are excessively influenced by the application programming interfaces (APIs) provided by manufacturers, which, in most cases, leads to slow development cycles and hinders the reusability of the system features achieved.

Thus, a common alternative followed by researchers in the area is to avoid the use of these proprietary multimedia services and stream the audiovisual data using technologies and protocols ready to use with state-of-the-art RCAs like, for example, the ROS web video server application (http://wiki.ros.org/web_video_server). The main problems of this approach are the low efficiency in multimedia communications and their limited networking features, which reduce the validity of the solution to operators and robots that are connected within the same local area network (LAN).

We propose a third alternative that solves the trade-off between these two approaches. The alternative relies on the integration of the open-source WebRTC technology, HTML5 apps, standard IoT protocols, and state-of-the-art RCAs. This combination provides efficient multimedia communications in real time and permits a compact integration of multimedia and robotic control features. The drawback with respect to other options is the additional effort in the specification and design of the system architecture, which is one of the contributions of this article.

Heterogeneity in robotic telepresence architectures

Independently of the application domain, robotic telepresence systems generally comprise three environments (see Figure 2): the robot environment, where an MR platform endowed with a videoconferencing set enables the virtual visit to the robot workplace; the visitor environment, where a networked device provides the visitor with an interface to interact with the robot; and the network environment, which supports the communication between visitors and robots.

Figure 2. Robotic telepresence systems entail three environments: visitor, network, and robot environments.

Network environment configurations can vary according to the requirements of particular application contexts. The main distinguishing aspects are the signaling procedure between end points, the communication channel, and the network topology.

Connectivity between visitor and robot environments requires different signaling processes depending on their accessibility to the network. For example, in small LANs, messages can be issued using local Internet Protocol (IP) addresses. However, in large networks or public connections, the devices operate behind network address translators (NATs) and/or security firewalls, which prevent direct communication. Under these circumstances, the connection requires networking techniques like Interactive Connectivity Establishment (ICE) and additional network elements providing Session Traversal Utilities for NAT (STUN) and Traversal Using Relays around NAT (TURN) services.
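
As a rough illustration of how ICE ranks the connection options mentioned above, the following Python sketch computes candidate priorities with the formula and the recommended type preferences given in RFC 8445. It is not part of the system described in this article: the `Candidate` class, the `rank` helper, and the addresses (taken from IP ranges reserved for documentation) are hypothetical, and a real WebRTC stack performs this step internally.

```python
from dataclasses import dataclass

# Type preferences recommended by RFC 8445 (a higher value is preferred).
TYPE_PREFERENCE = {"host": 126, "prflx": 110, "srflx": 100, "relay": 0}


@dataclass(frozen=True)
class Candidate:
    kind: str                # "host" (local), "srflx" (learned via STUN), "relay" (via TURN)
    address: str             # hypothetical address, for illustration only
    local_pref: int = 65535  # preference among local interfaces (0..65535)
    component_id: int = 1    # media component (1..256)


def priority(c: Candidate) -> int:
    """RFC 8445: 2^24 * type preference + 2^8 * local preference + (256 - component ID)."""
    return (TYPE_PREFERENCE[c.kind] << 24) + (c.local_pref << 8) + (256 - c.component_id)


def rank(candidates):
    """Order candidates from most to least preferred."""
    return sorted(candidates, key=priority, reverse=True)


candidates = [
    Candidate("relay", "203.0.113.7:3478"),    # path through a TURN relay
    Candidate("host", "192.168.1.20:50000"),   # address usable inside a small LAN
    Candidate("srflx", "198.51.100.4:50000"),  # public address discovered through STUN
]
ranked = rank(candidates)
```

With these preferences, a direct LAN address is tried first, the STUN-discovered address second, and the TURN relay only as a last resort, which matches the progression from small LANs to NAT-restricted networks described in the text.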

Communication channel specifications also vary for different applications. Thus, in cases where the information exchanged is personal or confidential, the communication must incorporate security mechanisms. In applications with intensive exchange of data and/or bandwidth limitations, the transmission efficiency is an issue to be considered. Lastly, the topology of the network infrastructure required also affects the design of the communication channel, ranging from connections between a unique robot and one possible visitor to large networks enabling many-to-many communications between multiple robots and visitors. In most cases, a centralized server provides cloud services to coordinate the connections across the network topology.

The robot environment is, in turn, the major source of heterogeneity. The structure and elements of the workspace where the robot operates largely condition the design of the robotic platform, including hardware, that is, sensors and actuators, and software, that is, navigational algorithms, control architectures, and so on. Not only the physical environment but also the type of interaction required and the end users coexisting with the robot (if any) affect the design.

Common particularities can be identified according to the application field considered. In the context of assisted living, the robot environment usually includes narrow, cluttered, and dynamic spaces where the robot has to behave in the presence of sensitive users with limited physical or cognitive skills, who might be technologically illiterate. Thus, the design must focus on the simplicity and dependability of the robotic platform, which should feature smooth and gentle movements and a noninvasive appearance. In telework applications, workspaces tend to be larger, less cluttered, and populated with technologically skilled users. However, these applications demand higher levels of interaction since the final purpose is to allow remote coworkers to collaborate efficiently. To achieve that, telepresence robots must be enhanced with additional ICT features like screen sharing, document presentation utilities, and laser pointers.

The visitor environments are also varied and multiple features can be required to develop a successful interface to enable real-time robot control and engage the visitor with a certain sense of presence in the robot environment. The objective of developing a simple, easy-to-use, and effective interface to fulfill these purposes is usually challenged by, for instance, the technological skills of the visitors involved, the level of interaction required with the robot environment, and the multiple visitor devices and platforms targeted by the interface.

For example, AAL and telecare applications usually deal with nontechnical visitors who require an interface that is as intuitive and easy to use as possible. In contrast, professional applications focused on environmental monitoring or mobile video surveillance allow for training the visitors to control more complex interfaces; thus, the focus is not on the ease of use of the interface but on its robustness and efficiency in supporting the operator's task.

A robotic telepresence architecture

The inherent heterogeneity of robotic telepresence systems requires a generic architecture to structurally deal with the combinations of the network, robot, and visitor environments. In order to provide a model to cope with such heterogeneity, we first group the functionality of the identified components into essential building blocks and, then, establish the connections between these blocks and their interfaces.

Three main components (Figure 3) enable the system functionality: the network infrastructure, the telepresence robot, and the visitor device. The structure of each component is divided into four levels: hardware, service, application, and middleware. In a nutshell, hardware levels contain physical devices and firmware features, while service levels comprise the interfaces with the hardware level and the processes and utilities required to support the execution of the application. In turn, application levels include the logic and process management features. Finally, middleware levels are responsible for enabling the communication between the different environments.

Figure 3. Generic-purpose architecture for robotic telepresence systems.

Network infrastructure

The network infrastructure provides functionality to establish the conditions for communicating visitors and robots, support the connectivity and communications across the network, and provide security mechanisms and data persistence.

To accommodate different configurations within a generic structure, the network infrastructure is organized into the following levels.

Hardware level

The hardware level is composed of the physical devices in charge of processing server operations. Multiple configurations can be considered, ranging from a single central computer to distributed computer networks.

Service level

This level encapsulates the features required to support the server application. Firewall/NAT traversal, relayed communications, and data persistence are common services operating at this level. They can run on a particular server machine or be integrated into the main application from cloud services provided by external parties.

Application level

It includes the application that exploits the services provided by the service level and integrates the logic and interfaces required to coordinate robot and visitor operations. In our model, two main blocks are envisaged: the session manager, which deals with concurrency, authentication, authorization, and persistence; and the data manager, in charge of enabling the transmission of audio/video (A/V) streams and robot commands. Services for connection recovery, error logging, and data management are also considered at this level.

Middleware level

It ensures the accessibility of the server application to visitors and robots through a set of APIs. These APIs enable a communication bus across the network elements connecting visitors and robots (i.e. gateways, switches, and routers). This bus integrates two types of communication: point-to-point and server-relayed communications. Point-to-point communication channels exploit the optimal path available across the network, while server-relayed channels extend the point-to-point path with at least one additional end point to provide an alternative network path if direct connections between visitors and robots are not available.
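
The fallback behavior of this bus can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the names `ChannelKind`, `select_channel`, and `build_path`, as well as the `relay-server` label and the connectivity probe passed as a callable, are hypothetical and do not correspond to any API of the architecture.

```python
from enum import Enum
from typing import Callable, List


class ChannelKind(Enum):
    POINT_TO_POINT = "point-to-point"
    SERVER_RELAYED = "server-relayed"


def select_channel(direct_path_available: Callable[[], bool]) -> ChannelKind:
    """Prefer the direct path; fall back to the relay when it cannot be established."""
    try:
        if direct_path_available():
            return ChannelKind.POINT_TO_POINT
    except OSError:
        # A failed connectivity probe is treated the same as "no direct path".
        pass
    return ChannelKind.SERVER_RELAYED


def build_path(visitor: str, robot: str, kind: ChannelKind,
               relay: str = "relay-server") -> List[str]:
    """Return the end points traversed; the relayed path adds one intermediate end point."""
    if kind is ChannelKind.POINT_TO_POINT:
        return [visitor, robot]
    return [visitor, relay, robot]


# Example: the probe reports that no direct connection could be found.
kind = select_channel(lambda: False)
path = build_path("visitor-1", "robot-1", kind)
```

Keeping the decision in a single function means that the rest of the application can send data without knowing which of the two channel types is in use.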

Telepresence robot

The telepresence robot provides the visitor with the sensing and acting capabilities required to act within the robot environment and to achieve a certain feeling of presence in it. It consists of an MR base endowed with a videoconferencing set; a network adapter; and, in some cases, additional sensors, actuators, and interfaces for human–robot interaction. Our architecture considers the heterogeneity in the functionality and component configurations that can be required in telepresence robots by grouping them into hardware, service, application, and middleware levels.

Hardware level

The hardware level entails the hardware devices, electronics, and computer firmware working without the need of an OS. Cameras, microphones, MR bases, screens, and additional sensors/actuators are included in this level.

Service level

This level represents the OS that provides the programs running in the application level with basic interaction with the hardware. In addition, this level also includes possible software components like specific hardware drivers.

Application level

The application layer includes the so-called telepresence robot application that integrates the components required to interpret sensory inputs, control the robot actuators, and expose multimedia and robotic capabilities to external interfaces. The main blocks of a telepresence robot application are: A/V media, RCA, and UI. The A/V component processes local and remote media streams, which involves coding/decoding, failure handling, and interface mechanisms. The RCA covers the software architecture that enables the particular robotic capabilities of the system. Finally, the UI component consists of the mechanisms and APIs required to detect user events and transform the inputs into convenient commands.

Middleware level

In this case, the middleware connects the robot to the network infrastructure, exposing the Robot API to visitor interfaces and communicating with the server.

Visitor device

The visitor device aims at presenting the robot environment data and translating the intentions of the user into robot commands. Thus, the essential elements of the visitor device are the mechanisms to capture the visitor inputs, the transformation of such inputs into Robot API commands, and the data structure to be exchanged between visitors and robots. Notice that, given the close relation between the visitor device and the telepresence robot, both models are equally structured.

Hardware level

In this case, the hardware elements are mainly desktop/mobile devices with screens of different sizes and varied input elements (e.g. keyboards, mice, or touch screens). They also include cameras and microphones for enabling videoconference, which is a common requirement in applications considering users in the robot environment.

Service level

Services in this case are reduced to the core features of the OS but, in most cases, the process followed to install the main application extends the system features with small pieces of software, that is, plug-ins.

Application level

The application level accesses the hardware features through service level APIs and enables adequate interfaces between visitors and robots. It relies on two main components: controllers and UIs. A/V and RCA controllers manage the traffic of A/V and robotic control streams, and UI features aim at representing these streams and handling visitor inputs.

Middleware level

The middleware level connects the visitor device to the network infrastructure and communicates with robot and server sides through their APIs.

A web-based robotic telepresence application

The architecture presented in section “A robotic telepresence architecture” is generic enough to cover a wide range of robotic telepresence requirements. In this section, we present an instantiation for a given application that imposes a number of restrictions within the three environments, so as to deal with the most typical heterogeneity issues. The presented robotic telepresence application belongs to the monitoring/surveillance context, in which visitors steer a mobile robot to accomplish a particular task without the need to interact with the people around it. Concretely, the characteristics of each environment in this application are as follows.

The network environment exhibits diverse requirements in terms of connectivity, security, and network topology. Our implementation deals with connections over LANs and the World Wide Web, coping with firewall/NAT traversal issues. If necessary, the communication channels can be secured, and multiple configurations in the network topology are considered, that is, one-to-one/one-to-many/many-to-many connections.

The robot environment is a structured, noncontrolled scenario where a number of people share the space with the robot. The presented implementation considers two different robot configurations, including different platforms and hardware (Giraff/Pioneer), OSs (Windows/Linux), and robotic control architectures (OpenMora/ROS). This is possible because the middleware and interface levels within the architecture are easily adaptable and cross-platform compatible.

The visitor environment considers multiple devices (visitors’ own PCs, smartphones, or tablets) and different technological skills. Our implementation addresses this situation by providing users with a cross-platform and adaptable web interface that operates without installing any software.

Figure 4 depicts the structure of the presented robotic telepresence application. The next sections provide an in-depth description of its implementation.

Figure 4. Generic-purpose robotic telepresence system based on web technologies.

Network infrastructure

The network infrastructure relies on a client/server architecture to support the communications between visitors and robots. According to the model of the network environment presented in section “A robotic telepresence architecture,” it has been instantiated as follows.

Hardware

The network infrastructure is supported by a conventional PC that acts as a centralized server. This server is publicly addressable through its IP and stores the application data.

Services

The central server integrates both cloud and local services. Concretely, it relies on two cloud services, namely, the STUN and TURN services provided by Google’s open servers.

It also implements two local services, namely, a Message Broker enabling the server application to communicate with clients using the publish/subscribe messaging pattern 34 and a database to store application data and session logs. The utilities selected to implement these services are the Mosquitto MQTT Message Broker and the MongoDB Database.

Mosquitto MQTT Broker is an open-source message broker that implements the MQTT protocol, providing compatibility for TCP/IP and WebSockets. In addition, it provides security (optional) and different levels of quality of service.
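
To make the publish/subscribe pattern more concrete, the following Python sketch implements a tiny in-memory broker with MQTT-style topic filters, where `+` matches exactly one topic level and `#` matches all remaining levels. It is a simplified stand-in written for illustration, not Mosquitto itself: it ignores quality of service, retained messages, and topics beginning with `$`, and the class name `InMemoryBroker` and the topic names are hypothetical.

```python
from typing import Callable, List, Tuple


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Check an MQTT-style filter against a concrete topic name."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True  # multi-level wildcard: matches the rest, including zero levels
        if i >= len(topic_levels):
            return False  # the filter is longer than the topic
        if level != "+" and level != topic_levels[i]:
            return False  # literal level differs ("+" accepts any single level)
    return len(filter_levels) == len(topic_levels)


class InMemoryBroker:
    """Delivers each published message to every subscriber whose filter matches."""

    def __init__(self) -> None:
        self._subscriptions: List[Tuple[str, Callable[[str, str], None]]] = []

    def subscribe(self, topic_filter: str, callback: Callable[[str, str], None]) -> None:
        self._subscriptions.append((topic_filter, callback))

    def publish(self, topic: str, payload: str) -> int:
        """Publish a message and return the number of subscribers reached."""
        delivered = 0
        for topic_filter, callback in self._subscriptions:
            if topic_matches(topic_filter, topic):
                callback(topic, payload)
                delivered += 1
        return delivered


broker = InMemoryBroker()
received = []
# A robot-side client listens for commands addressed to any robot (hypothetical topics).
broker.subscribe("robots/+/cmd", lambda topic, payload: received.append((topic, payload)))
broker.publish("robots/giraff/cmd", "forward")
```

The publisher never addresses the subscriber directly; both only agree on topic names, which is what allows third-party components to be attached to the broker without changing existing clients.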

MongoDB is an open-source database with cross-platform capabilities. MongoDB relies on JSON documents with dynamic schemas, which facilitates the integration of data. We have implemented a structured database classifying these documents into four collections: user profiles, robot profiles, session data, and system logs.
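
The idea of classifying schema-free JSON documents into four collections can be sketched as follows. This Python example uses an in-memory stand-in rather than MongoDB, and every identifier in it (the collection names, the `DocumentStore` class, and all document fields) is a hypothetical choice made for illustration; the actual document layout of the system is not specified in the text.

```python
import json

# The four collections named in the text; the identifiers are hypothetical.
COLLECTIONS = ("user_profiles", "robot_profiles", "session_data", "system_logs")


class DocumentStore:
    """In-memory stand-in for a document database with dynamic schemas."""

    def __init__(self):
        self._data = {name: [] for name in COLLECTIONS}

    def insert(self, collection, document):
        if collection not in self._data:
            raise KeyError(f"unknown collection: {collection}")
        # Round-trip through JSON to check that the document is serializable
        # and to store an independent copy.
        self._data[collection].append(json.loads(json.dumps(document)))

    def find(self, collection, **criteria):
        """Return the documents whose top-level fields equal all given criteria."""
        return [
            doc for doc in self._data[collection]
            if all(doc.get(key) == value for key, value in criteria.items())
        ]


store = DocumentStore()
# Two robot profiles with different fields: no fixed schema is enforced.
store.insert("robot_profiles", {"name": "robot-a", "os": "Windows", "rca": "MOOS"})
store.insert("robot_profiles", {"name": "robot-b", "os": "Linux", "rca": "ROS",
                                "sensors": ["laser"]})
store.insert("session_data", {"user": "visitor-1", "robot": "robot-b", "status": "closed"})
linux_robots = store.find("robot_profiles", os="Linux")
```

Because documents in the same collection may carry different fields, a new robot configuration can be registered without migrating the existing profiles.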

Server application

The server application is implemented in NodeJS, instantiating the centralized server of our model. NodeJS is a runtime environment for developing server-side JavaScript applications, and it offers a rich set of readily available utilities through a package ecosystem, called Npm, structured into packages and modules. Npm packages are accessible directories, Uniform Resource Locators (URLs), and/or repositories containing Npm modules. Modules are folders or files containing JavaScript code that can be run by a NodeJS program. Our implementation relies, among others, on the following Npm packages. The MQTT package integrates an MQTT client library enabling NodeJS apps to communicate with the MQTT broker from the service layer. The EasyRTC package implements a WebRTC signaling server based on the socket.io library for enabling the connectivity and relaying A/V media streams between WebRTC clients. The Express package provides lightweight and robust tools to develop HTTP servers, particularly focused on single-page and hybrid applications. The Passport package contains an extensive set of authentication strategies prepared to be integrated with Express applications. Finally, the Mongoose package includes effective utilities to integrate NodeJS apps with MongoDB. This package has been used to provide data persistence, for example, of server status, session logs, and visitor/robot information.

Middleware

The middleware provides convenient interfaces for allowing visitor and robot clients to connect with the HTTP server in the server application, sign up/authenticate in the database to gain access to the network infrastructure, retrieve the web resources (i.e. media, HTML, JavaScript, and CSS files), discover and connect to other clients connected to the network infrastructure, broadcast/receive robotic control data using the MQTT publish/subscribe mechanism, and broadcast/receive relayed A/V streams when two clients cannot communicate directly.

Communications

Communications consist of a message bus comprising a point-to-point and a server-relayed channel. Point-to-point communications are performed through a websocket channel exclusively used for exchanging multimedia streams. This channel allows efficient bidirectional communications over a single socket when a direct communication between clients is found during the session establishment.

On the other hand, server-relayed communications are composed of (i) the HTTP channel, used to communicate with the server application through request/response messages; (ii) the MQTT channel that establishes a bidirectional communication between visitors and robots transmitting robot commands and sensory data; and (iii) the relayed-media channel, included as a fallback mechanism for exchanging multimedia streams when point-to-point communications are not available.
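The channel structure described above can be summarized as a simple routing rule. The sketch below is only a schematic reading of the text: the names `MediaChannel`, `select_media_channel`, and `route_message`, as well as the message kinds, are hypothetical and do not correspond to identifiers of the actual implementation.

```python
from enum import Enum


class MediaChannel(Enum):
    POINT_TO_POINT = "point-to-point"
    SERVER_RELAYED = "server-relayed"


def select_media_channel(direct_path_found: bool) -> MediaChannel:
    """Prefer the direct channel; fall back to the relayed-media channel."""
    if direct_path_found:
        return MediaChannel.POINT_TO_POINT
    return MediaChannel.SERVER_RELAYED


def route_message(kind: str, direct_path_found: bool) -> str:
    """Return the name of the channel that carries a given kind of message.

    kind is one of the hypothetical labels:
      'media'      -> A/V streams (direct if possible, relayed otherwise)
      'robot_data' -> robot commands and sensory data over MQTT
      'request'    -> request/response exchanges with the server over HTTP
    """
    if kind == "media":
        return select_media_channel(direct_path_found).value
    if kind == "robot_data":
        return "mqtt"
    if kind == "request":
        return "http"
    raise ValueError(f"unknown message kind: {kind}")
```

Note that, in this reading, only multimedia streams ever change channel during session establishment; robot control data and HTTP traffic always pass through the server.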

Telepresence robot clients

According to the model presented in section “A robotic telepresence architecture,” our implementation of the telepresence robot client is organized as follows.

The hardware level is composed of the sensors/actuators, processors, and firmware that work at the lowest level. The service level includes the OS, a WebRTC compatible web browser, and the additional drivers that can be required to integrate additional robotic hardware. For the application level, we rely on a hybrid structure that combines a web application with off-the-shelf robotic control architectures. Finally, the middleware level communicates the robot client with the web server from the network infrastructure through HTTP request/responses, transmits and receives A/V data over websockets using the WebRTC API, and relies on the MQTT data channel enabled by the central message broker to exchange robotic control data.

To prove the suitability of our approach to cope with heterogeneity in the robot environment, in this work, we present two implementations considering two robotic platforms. One is the so-called Rhodon, a mobile robot built upon a Pioneer PatrolBot base, endowed with a robotic arm, a videoconferencing set, a range laser scanner, and a Linux PC. Rhodon is controlled using ROS and includes self-localization abilities.

The second platform is the commercial telepresence robot Giraff, which is built upon a manufacturer-developed base and endowed with a videoconference set and a tilting head. The hardware of the standard Giraff has been enhanced with a laser scanner. It is controlled through a MOOS-based robotic architecture instead of ROS, because the drivers provided by the manufacturer are only available for Windows. The MOOS architecture includes a controller for the robotic base, MQTT-compatible communications, and self-localization capabilities. Table 1 summarizes similarities and differences in the implementations for each robot.

Summary of the features available in Rhodon and Giraff platforms.

RCA: robotic control architecture; OS: operating system.

Visitor clients

The implementation of the visitor client also instantiates the four-level structure described in section “A robotic telepresence architecture.” The hardware level, in this case, faces the heterogeneous nature of the visitor devices (i.e. laptops, desktops, and tablets), including different peripherals to capture the user events (i.e. keyboards, mice, and touch screens) and media displays with varied sizes and resolutions. The service level integrates the OS and a WebRTC compatible web browser; no additional plug-ins or drivers are required. An HTML5 web application is the only component operating at the application level and, lastly, the middleware level, which is encapsulated by the web application, connects the app to the public APIs offered by the central server and the robots.

The implementation of the visitor client focuses on the application level, providing the following features:

Cross-platform compatibility without installing additional plug-ins or software: Based on the core features enabled by current web browsers (e.g. WebSockets, WebRTC, and multimedia APIs), our web-based visitor interface deals efficiently with hardware, visualization, and communication issues.

Instant access and updates: Since the user interface is delivered online by the central server, visitors can retrieve the most recent version by just accessing the server URL.

Simple and responsive design suitable for multiple sizes and resolutions in the visitor device: The interface provides different views, automatically adapted to the client device, and integrates controllers for keyboard, mouse, and touch-screen events.

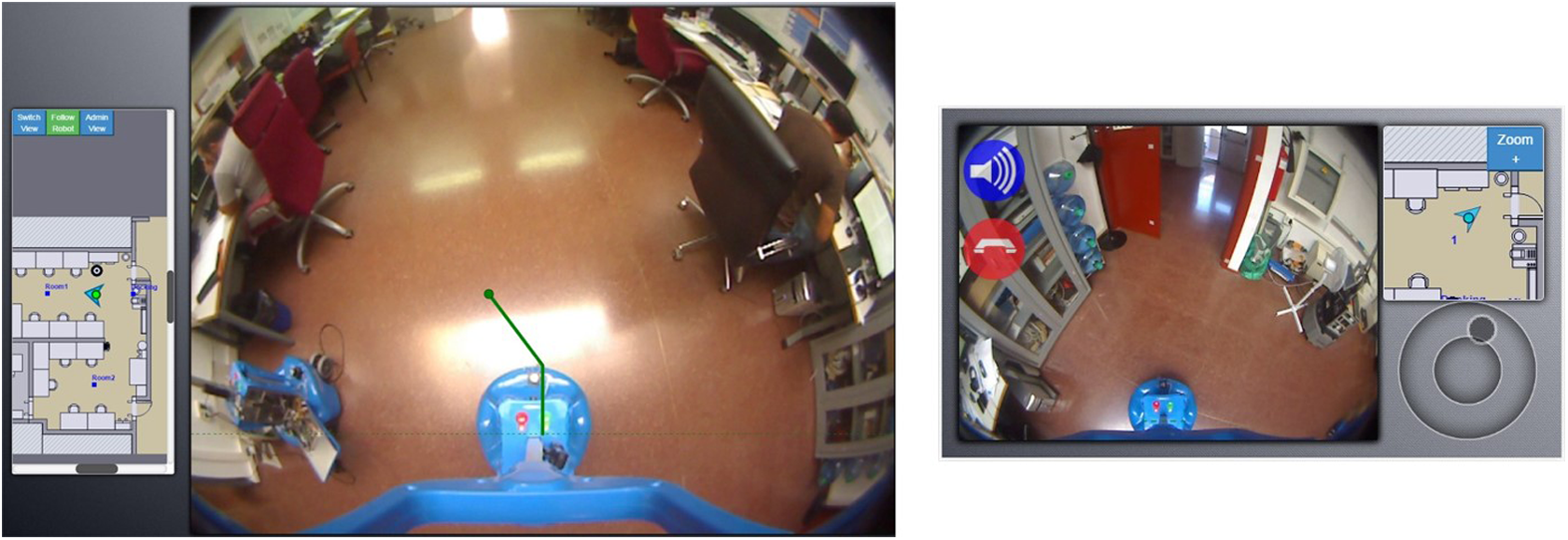

Figure 5 displays a view of the visitor interface. Presentation elements comprise a minimalist layout composed of two viewports. The main one renders the video stream received from the robot environment and overlays some elements, for example, graphic helpers, application alerts, and/or status messages. The secondary viewport displays a schematic map of the robot environment with the aim of improving the visitor situation awareness. The map includes a floor plan of the robot environment enriched with some structural elements, along with the robot pose. Additionally, the map can be hidden, maximized, centered over the robot position, or scrolled to display a specific region.

Visitor UI layout. (Left) layout for medium and large media devices. (Right) layout for small media devices.

Finally, three different controllers can be chosen for steering the robot: (i) a keyboard controller, (ii) a mouse controller, and (iii) a touch-screen controller. The keyboard controller commands linear and angular robot speeds, while the mouse and touch-screen controllers generate a trajectory to the selected destination on the main viewport, enabling smoother movements than the keyboard controller.
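To illustrate how a keyboard controller can command linear and angular speeds, the following sketch maps the set of currently pressed keys to a velocity pair. The key bindings, the speed limits `MAX_LINEAR` and `MAX_ANGULAR`, and the function name `keys_to_velocity` are assumptions made for this example; the values used by the actual interface are not reported here.

```python
from typing import Dict, Iterable, Tuple

# Hypothetical speed limits (not taken from the article).
MAX_LINEAR = 0.4    # m/s
MAX_ANGULAR = 0.8   # rad/s

# Hypothetical bindings: each key contributes a (linear, angular) direction.
KEY_BINDINGS: Dict[str, Tuple[float, float]] = {
    "ArrowUp": (1.0, 0.0),      # forward
    "ArrowDown": (-1.0, 0.0),   # backward
    "ArrowLeft": (0.0, 1.0),    # turn counterclockwise
    "ArrowRight": (0.0, -1.0),  # turn clockwise
}


def keys_to_velocity(pressed: Iterable[str]) -> Tuple[float, float]:
    """Combine the pressed keys into a (linear, angular) speed command."""
    linear = 0.0
    angular = 0.0
    for key in pressed:
        d_linear, d_angular = KEY_BINDINGS.get(key, (0.0, 0.0))
        linear += d_linear
        angular += d_angular
    # Clamp each direction to [-1, 1] before scaling to physical units.
    linear = max(-1.0, min(1.0, linear))
    angular = max(-1.0, min(1.0, angular))
    return (linear * MAX_LINEAR, angular * MAX_ANGULAR)
```

A controller of this kind produces step changes in speed whenever a key is pressed or released, which is one plausible reason why trajectory-based mouse and touch-screen control yields smoother movements.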

Pilot experience: Novice participants using the telepresence robot within an unknown office environment

In this section, we describe an experience with the robotic telepresence application deployed in a varied set of realistic and heterogeneous conditions. The purpose of this preliminary study is to rapidly and informally assess, from a multidisciplinary perspective, the overall suitability of the architecture, implementation, and technology selection presented in this work to cope with the heterogeneity in telepresence robot applications. To that aim, we have designed a web experiment that enabled us to perform intensive, unsupervised tests with users from outside the research laboratory, facilitating the access to novice users (a total of 64 participants) and ensuring a high variability in the devices and Internet connections involved. Three specific objectives were set for the results of the trials as follows: to prove the suitability of the proposed architecture based on open-source technologies (HTML5, WebRTC, IoT protocols, and RCAs) to integrate videoconferencing and robotic control features in real time; to demonstrate the cross-platform compatibility of the solution deployed; and to intensively test the application in a challenging test environment with novice users, multiple client devices, and uncontrolled network conditions.

Next, we describe the setup done to conduct the trials as well as the collected data. Then, the section concludes with a discussion on the objectives proposed for the experience, limitations of the current study, and lines of future work.

Setup

A full-stack web application was designed to open our telepresence system for public use during 1 month. Visitors accessed the application by clicking on a public link on our group’s home page; they were then requested to register in the user database and/or log in to gain access to the robot. Once the visitor had logged in, the application displayed some illustrations giving instructions on the robot controls and the task to be carried out. After that, the visitor could take control of the robot and freely steer it to accomplish the specified task.

The robot environment was composed of two interconnected rooms and a corridor. Two doors connected the rooms and the corridor; the visitor had to steer the robot to pass through both of them. Additionally, one of the doors was sometimes intentionally closed, so the visitor had to find an alternative path to complete the task. To help the visitors, a floor map of the robot workspace and the real-time robot localization were displayed during the session (see Figure 6).

Schematic map of the robot workspace. The green dotted line illustrates the path sequence suggested in Rhodon explorations, and the red dashed line, the path for Giraff. The Rhodon and Giraff labels mark the charging points for each robot.

The exploratory task considered consists of starting at checkpoint 0 (charging station), passing through checkpoints 1–4, and going back to checkpoint 0 to dock the robot. To guide the visitors, the interface displays messages and visual cues to indicate the next step. In addition, a secondary task is considered for the Giraff robot: finding a mirror near checkpoint 4, turning the robot to face it, and taking a snapshot of the robot’s reflection by means of an emerging element that the interface renders automatically at this point of the task. The exploration task ends when the participant accomplishes the docking maneuver at the charging station or after a predefined timeout.
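The task logic described above (an ordered list of checkpoints, a next-step cue, and two end conditions) can be sketched as a small state tracker. This is an illustrative model only: the class `ExplorationTask`, its fields, and the timeout value are hypothetical, and reaching checkpoint 0 at the end is treated here as equivalent to completing the docking maneuver.

```python
from dataclasses import dataclass
from typing import Optional

# Start at the charging station (0), visit checkpoints 1-4, return to 0.
CHECKPOINT_SEQUENCE = [0, 1, 2, 3, 4, 0]


@dataclass
class ExplorationTask:
    timeout_s: float          # predefined timeout (value is an assumption)
    next_index: int = 1       # the robot starts at checkpoint 0
    finished: bool = False
    timed_out: bool = False

    def next_checkpoint(self) -> Optional[int]:
        """Checkpoint the interface should cue next, or None when finished."""
        if self.finished:
            return None
        return CHECKPOINT_SEQUENCE[self.next_index]

    def update(self, reached_checkpoint: Optional[int], elapsed_s: float) -> None:
        """Advance the task given the checkpoint just reached (if any)."""
        if self.finished:
            return
        if elapsed_s >= self.timeout_s:
            self.finished = True
            self.timed_out = True
            return
        # Only the expected checkpoint advances the task; others are ignored.
        if reached_checkpoint == CHECKPOINT_SEQUENCE[self.next_index]:
            self.next_index += 1
            if self.next_index == len(CHECKPOINT_SEQUENCE):
                self.finished = True
```

Under this model, a session ends either with `finished` set and `timed_out` unset (successful docking) or with both set (time limit exceeded).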

Note that the robots considered in our evaluation were equipped with laser scanners for self-localization, but no collision detection/avoidance algorithms were included in the RCA. Thus, the visitor was responsible for not crashing the robot into the furniture or the people around it.

A web form was presented to the participants inviting them to evaluate the experience. Form responses were automatically stored and attached to their user profiles in the database.

Data collected

Specific features were developed for the central server and, more specifically, for the NodeJS application described in section “Network infrastructure” to perform data validation, storage, and task control operations. The server application was able to record data from visitors through initial and final questionnaires, and robot session logs, by storing the states of the RCA in real time. The information we collected and stored in MongoDB was structured as follows.

Visitor profiles

During the registration process, the server stored user data and their skills on using ICTs through an online web form (see Table 2). In subsequent sessions, visitors could directly access the system using the given credentials. In those cases, the ICT form was skipped and a new session was stored attached to the ID of the visitor logged in.

Questions for visitor registration.

In total, 64 users were registered, 52 men and 12 women. The youngest user was 6 and the oldest one 62 years old. The range from 15 to 30 years represents 56% of the participants, followed by the range from 30 to 45, which represents 24%. Participant ages out of the two main intervals are 12%, while 8% decided not to report the birth year. Regarding the questionnaire on the usage of common ICTs, most of the participants answered they use computers, smartphones, web browsers, and instant messaging applications on a daily basis, while the answers for videoconferencing tools and video games are heterogeneous. In the case of videoconferencing, 20% selected daily usage, while more than 40% selected one of the options below once a month. The percentage of daily usage answers provided by registered users is reduced to 11% in the case of video games, and about 60% of them answered they play video games once a month or less.

In this first exploratory study, participant profiles were used only to check their variability as well as to validate and refine the registration features of the platform for further studies.

Exploration tasks

A set of internal states of the whole web application was stored while participants performed the second step, the exploration task. The states were registered and stored automatically by the server application, and each session included the following data:

Visitor ID. ID of the registered visitor driving the robot.

Visitor device. Data of the visitor device in the session. Visitor’s device information contains user-agent and x-forwarded-for “IP” parameters of the interface HTTP requests. These parameters allow the server to distinguish the OS, web browser, and public IP of the visitor device (except in cases where the user or the network in the visitor environment hides this information for privacy reasons). The registered sessions include connections from 51 different IPs and multiple visitor device configurations. Table 3 shows a summary of the stored configurations.

Robot ID. The telepresence robot used: Rhodon or Giraff.

RCA states. A set of time-stamped data from the RCA, including the robot pose, charging status, speed, and so on. These states are sent at a frequency of 4 Hz.

Mirror snapshot. Image obtained when the visitor performs the mirror subtask.

User commands. This field registers the visitor’s connectivity and the input method used to steer the robot at any moment.
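As an illustration of how the visitor device fields listed above can be derived from the request headers, the sketch below extracts the client IP from `x-forwarded-for` and makes a coarse guess of the OS from `user-agent`. The function names and the substring rules are assumptions for this example, header names are assumed to be lowercase, and the matching is deliberately simplistic compared with a full user-agent parser.

```python
from typing import Dict, Optional


def client_ip(headers: Dict[str, str]) -> Optional[str]:
    """Return the originating IP from an x-forwarded-for header, if present.

    By convention the header holds a comma-separated list whose first entry
    is the original client; later entries are intermediate proxies.
    """
    forwarded = headers.get("x-forwarded-for", "")
    first = forwarded.split(",")[0].strip()
    return first or None


def guess_os(user_agent: str) -> str:
    """Coarse OS guess from a user-agent string (illustrative rules only)."""
    ua = user_agent.lower()
    # Order matters: Android strings also contain "linux", and iOS strings
    # also contain "mac os x".
    if "android" in ua:
        return "Android"
    if "iphone" in ua or "ipad" in ua:
        return "iOS"
    if "windows" in ua:
        return "Windows"
    if "mac os x" in ua:
        return "Mac OS"
    if "linux" in ua:
        return "Linux"
    return "Unknown"
```

Both values are supplied by the client side or by intermediaries, so they can be missing or altered, which is consistent with the caveat about users or networks that hide this information.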

OSs and web browsers used to access the system.

OS: operating system.

We have registered a total of 307 sessions, which are grouped into two sets (see Figure 7) as follows:

A first set includes 176 trials carried out with Rhodon. The aim of this set was to test the overall performance of the system and to validate its integration with the ROS architecture installed on Rhodon. For security reasons, we did not allow inexperienced users free access to the Rhodon platform.

A second set includes 127 exploration tasks belonging to the open pilot study conducted on the Giraff platform. The Giraff platform is more suitable to be teleoperated by novice users as it is specifically designed for domestic environments. The trials include 116 successfully completed sessions, accumulating a total of 2.6 km in 9 h 40 min. The 11 sessions that were not completed include 6 sessions that exceeded the time limit, 3 sessions in which the A/V connection was refused because of a corporate firewall, and 2 sessions in which the users canceled before ending the task.
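The aggregate Giraff figures can be turned into per-session averages with simple arithmetic. The sketch below assumes that the reported totals (2.6 km and 9 h 40 min) refer to the 116 completed sessions, which the text suggests but does not state explicitly; the variable names are illustrative.

```python
# Figures reported for the Giraff pilot study.
giraff_sessions = 127
completed_sessions = 116
total_distance_m = 2600.0             # 2.6 km
total_time_s = 9 * 3600 + 40 * 60     # 9 h 40 min = 34,800 s
rca_state_rate_hz = 4                 # RCA states are logged at 4 Hz

# Derived values (assuming the totals cover the completed sessions only).
completion_rate = completed_sessions / giraff_sessions        # about 0.913
mean_distance_m = total_distance_m / completed_sessions       # about 22.4 m
mean_duration_s = total_time_s / completed_sessions           # 300 s (5 min)
mean_speed_m_s = total_distance_m / total_time_s              # about 0.075 m/s
states_per_session = mean_duration_s * rca_state_rate_hz      # about 1,200
```

Under this assumption, a typical completed session lasted about 5 min, covered roughly 22 m at an average speed of about 0.075 m/s, and produced on the order of 1,200 logged RCA states.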

Trajectories followed by Rhodon (a) and Giraff (b) robotic platforms.

Final questionnaire

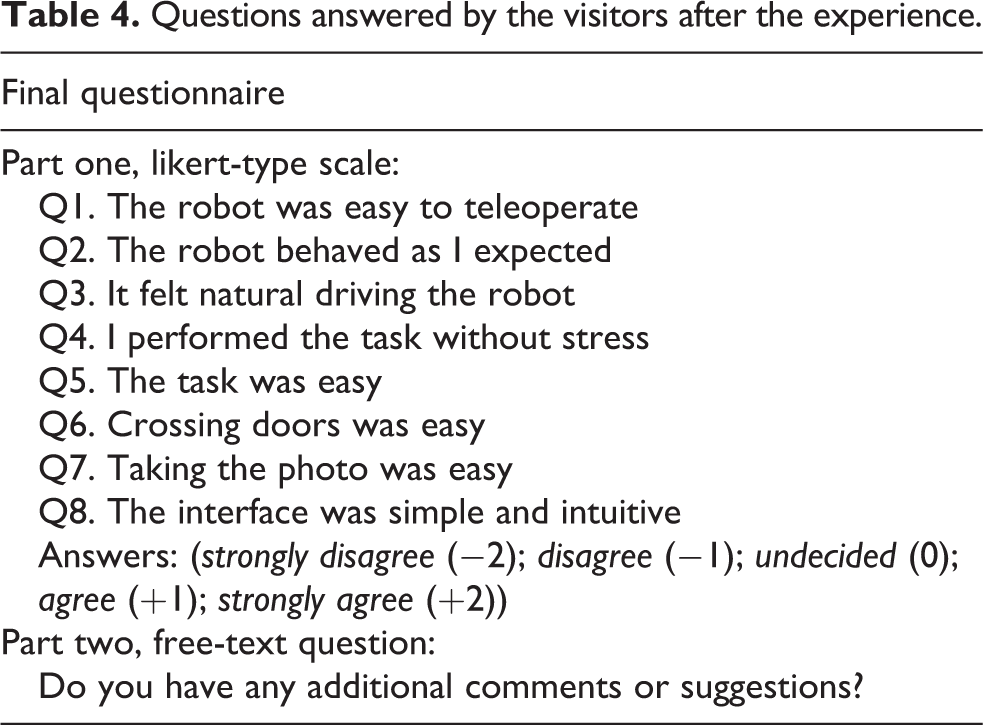

As a final step, users were encouraged to evaluate the experience. Visitors had to evaluate eight positive statements about the experience (see Table 4). Statements 1–3 evaluate robot teleoperation aspects. Through their responses to these statements, visitors rated the overall performance of the teleoperation features and, in particular, the design of the controller developed to drive the robot and the consistency of the robot navigation behavior. Statement 4 evaluates how stressful the execution of the task was. The group of statements 5–7 evaluates the overall complexity of the task proposed (Q5) and how difficult it was to deal with two specific maneuvers: door crossing and mirror snapshot (Q6 and Q7, respectively). Lastly, in statement 8, visitors evaluated the design of the user interface by rating how simple and intuitive its use was. A five-point scale was used to rate the statements, in which the lowest score, Strongly disagree, is scored as −2, while the most favorable one, Strongly agree, is scored as +2.

Questions answered by the visitors after the experience.

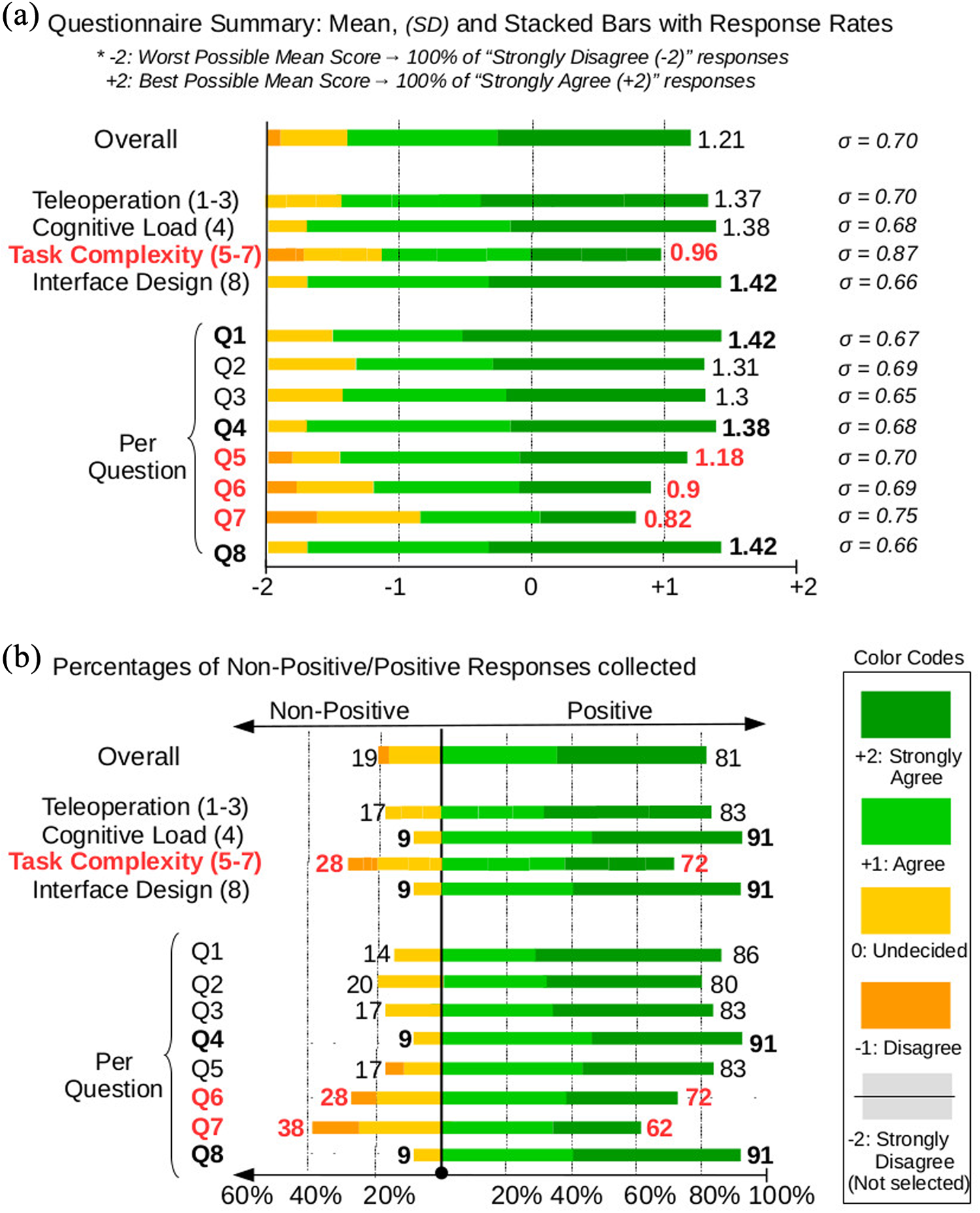

Table 5 provides a detailed summary of the responses given by visitors. The first row of the table presents the overall results of the questionnaire; the next four rows include the results of the statements grouped by topic: teleoperation, cognitive load, task complexity, and interface design. The remaining rows present the specific results obtained for each statement. The descriptors presented in the columns of the table are organized into two groups. The first group (left side) includes the percentage of responses given by visitors for each scale value. The second group (right) describes the central tendency and spread of the responses by showing their mean, standard deviation (SD), and two confidence intervals (CIs; 95% and 99%). The central tendency in the overall results of the questionnaire is located slightly above the scale value +1 (“Agree”). Its mean is 1.21, and the rest of the descriptors (SD = 0.7, CI 95 = 0.17, and CI 99 = 0.21) indicate that the evaluations collected are closely grouped around this value. The 81/19% balance between positive and nonpositive answers is another indicator of the good results achieved in general terms.
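For readers who wish to reproduce descriptors of this kind from raw responses, the following sketch computes the mean, the sample standard deviation, CI half-widths, and the share of positive answers for a list of scores on the [−2, +2] scale. It assumes that the CIs are half-widths obtained with the normal approximation (z = 1.96 and z = 2.576), which is one common convention and not necessarily the method used for Table 5; the function name `summarize` and the sample data in the usage note are hypothetical.

```python
import math
from statistics import mean, stdev
from typing import Dict, Sequence

Z_95 = 1.96    # normal quantile for a two-sided 95% interval
Z_99 = 2.576   # normal quantile for a two-sided 99% interval


def summarize(scores: Sequence[int]) -> Dict[str, float]:
    """Summarize Likert scores coded from -2 (Strongly disagree) to +2."""
    n = len(scores)
    sd = stdev(scores)                 # sample standard deviation (n - 1)
    sem = sd / math.sqrt(n)            # standard error of the mean
    positive = sum(1 for s in scores if s > 0) / n
    return {
        "mean": mean(scores),
        "sd": sd,
        "ci95": Z_95 * sem,            # half-width of the 95% interval
        "ci99": Z_99 * sem,            # half-width of the 99% interval
        "positive_share": positive,    # answers strictly above "Undecided"
    }
```

For example, calling `summarize([2, 1, 1, 0, 2, 1, -1, 2])` on this made-up sample yields a mean of 1 and a positive share of 0.75; the "Undecided" and negative answers together form the nonpositive share used in the balance figures.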

Summary of final questionnaire responses.

−2: Strongly Disagree; −1: Disagree; 0: Undecided; +1: Agree; +2: Strongly Agree.

However, despite the overall positive feedback, a more critical visitor perspective emerges when the results are organized by topic or examined individually. More specifically, the group of statements evaluating the complexity of the task is the worst rated and the only one that accounts for some negative ratings (8.7%). The central tendency in this group of answers is close to 0.4 points lower than those obtained for the rest of the topics (that is, about 10% of the [−2, +2] scale). The balance between nonpositive and positive answers (28/78) is also worse than the value presented by other topics, and the spread of the responses within this group is higher. Statement Q7 (taking the photo was easy), contained within this group, is clearly the worst scored, with a central tendency of 0.82 (Q7 and Q6 are the only two statements with an average score lower than “Agree”) and a balance between nonpositive/positive answers of 38/62. To improve data readability and highlight these aspects, Figure 8 shows a graphical representation of the results. Responses are organized in charts of stacked bars where the different colors of the bars represent the rate of responses given by visitors per scale value.

These two charts summarize the visitor evaluations collected through the final questionnaire. In (a), bar lengths indicate the mean value of the responses and stacked colors represent the rate of answers received per scale value. In (b), the chart represents the balance between nonpositive and positive responses. All bars have the same length, which represents 100% of the responses collected. Color lengths represent the percentage of responses received per scale value.

The results obtained are coherent with our initial expectations. The current version of the telepresence application and the experiment setup do not focus on the ease of teleoperation but on proving the correct overall performance of the features described in this work. Thus, it makes sense that the statements evaluating generic aspects of the experience (Q1, Q4, and Q8) are the best scored, whereas the ratings decrease as statements relate to driving aspects (Q2 and Q3) and challenging maneuvers (Q5, Q6, and Q7). The results are also coherent in the variability of the responses, which comes from the heterogeneity of our study in multiple aspects such as visitor skills, network stability, users’ devices, and so on.

Limitations and open issues

HRI studies are always complex due to their demanding requirements to set up a convenient evaluation environment 35 (i.e. participant selection, robot autonomy assessment, study length, replicability, and appropriate selection of statistics). They combine interdisciplinary aspects from technical and social fields and it is hard to follow/develop an effective methodology to cover all of them with accuracy. The scope of the study done in this first stage of development is limited, aiming to illustrate the feasibility of our approach and to identify open issues to be addressed in next iterations. Both the system and its evaluation can be significantly improved in multiple aspects, from which we highlight the following:

To conduct more accurate, specific user studies: Now that a preliminary feasibility study has been carried out with satisfactory results, the next step is to go in depth with more specific evaluations. To that aim, it is necessary to focus on specific aspects of the targeted application and, in this line, an important step is to involve potential users in the evaluation loop, for example, health-care professionals or families in AAL applications. A convenient participant selection with detailed user profiles and balanced samples is essential to obtain relevant insights, draw formal conclusions, and generate useful inputs for next iterations.

To increase the complexity of the environments and situations where the application is evaluated: The experiences have demonstrated the validity of the system to operate in a relevant test environment with multiple realistic conditions, and the next step is to address its deployment in more ambitious environments and more complex situations. Taking the telepresence robot to real homes and evaluating remote social interactions with the primary user are the extensions planned in this line.

To characterize the effects of the communication channel on the application performance: A deeper study on the effects of delays, packet loss, media quality, and video/control synchronization on the telepresence experience is an important step toward the achievement of a successful application. To conduct this study, it is necessary to develop specific application features to monitor data streams, visitor actions, and robot states conveniently. The design of a test environment to obtain unbiased results is also a challenging aspect in this study. Setting up environments to, for instance, compare the performance of different protocols in real networks or to assess how factors like delay, jitter, packet loss, and network latency affect the telepresence experience are two interesting extensions to this work.

To increase the robot autonomy and develop adaptive user interfaces: The enhancement of the robotic platform with autonomous features and assisted maneuvers is the basic means to ease the teleoperation task. In addition, given the different visitor teleoperation skills, the design of adaptive interfaces providing specific configurations for different user profiles or situations is the adequate complement to autonomy in order to improve the teleoperation experience. Autonomous navigation to specific points, assisted driving, and autonomous docking are some of the most relevant features to be integrated in the next iteration.

To overcome the novelty effects: The use of new technologies always requires climbing a learning curve. Exposing the application to potential users to analyze their evolution until they feel comfortable with the technology is an essential step to determine which aspects of performance are part of an acceptable learning process and which ones demand a better design. However, this type of evaluation is one of the most challenging given the vast efforts needed to find a convenient environment to maintain the setup for a long period and a selection of participants committed to collaborate on a regular basis.

Discussion

The experience enabled us to test the overall performance of the web-based application developed in a relevant test environment. The application instantiates the architecture proposed in this work and the results achieved fulfill our initial objectives. To prove that, we have presented a pilot experience with a considerably high number of successful exploration tasks and a high variability in the characteristics of the users, devices, and Internet connections involved. The good rate of successful task executions and the positive evaluation received from users confirm that, in general terms, we conveniently tackled the challenges addressed, which means that our approach is suitable to build a full-stack solution for robotic telepresence based on open-source technologies, accessible over the web, and with cross-platform compatibility for visitor and robot environment applications.

The setup of the test environment was also a valuable experience that allowed us to put into practice the flexibility provided by the modular design of the core application. It enabled the easy integration of extensions to model a particular context or use case, specifically, the set of application specifics required to automate the web experiment. The core application was endowed with user registration, mechanisms to design self-guided teleoperation tasks, and automated survey collection with an effort equivalent to ≈ 0.5 person-months. The major part of this flexibility is enabled by the separation of concerns provided by the modular architecture presented in section “A robotic telepresence architecture,” the cross-platform compatibility of the core technologies used in the implementation, and the rich, well-documented ecosystem of ready-to-use extensions that are available for the open-source technologies selected.

After these general remarks, we proceed with some specific ones. We discuss aspects related to each of the objectives set for the experience, highlighting the pros and cons of the features applied in the presented approach.

Objective 1

To prove the suitability of the proposed architecture based on open-source technologies (HTML5, WebRTC, IoT protocols, and RCAs) to integrate videoconferencing and robotic control features in real time.

As stated at the beginning of the discussion, the number of successful executions in the exploration tasks, achieved under heterogeneous conditions in the devices, Internet connections, and visitor skills, proves the correct performance of our approach to support videoconferencing and teleoperation features in real time. The exploration task included accurate maneuvers typical of real-world contexts, such as going through doorways, approaching a specific location, and docking the robot to the charging station. These maneuvers are unattainable when applications lack a correct integration of media and control streams; hence, we can confirm that the approach presented is correct in this aspect. However, it is important to consider the following pros and cons before adopting this approach.

On the one hand, although our proposal based on open-source solutions is convenient for small and medium robotic applications that can benefit from cost-free videoconferencing services and full control of the system design, applications aimed at reaching a robust, efficient videoconference with optimized A/V quality at large scale should still rely on professional videoconferencing services. The efforts required to enhance our application with the scalability and reliability of purpose-specific services are not worthwhile for multidisciplinary teams focused on multiple aspects of robotic telepresence applications. Thus, in those cases the focus should be placed on finding the most convenient and flexible professional ICT infrastructure into which to integrate robotic streams.

On the other hand, applications with relaxed requirements in terms of quality and real-time performance, aimed to operate within LANs, can avoid the efforts required to set up the WebRTC services presented in this work. In such contexts, the communications and image-streaming features enabled by state-of-the-art RCAs can be effective to evaluate simple prototypes. However, the cost of this simplicity is the reduced accessibility caused by the lack of convenient features to offer the networking and efficient data exchange required in real-world deployments.

The combination of open-source technologies presented in our work is a third approach that offers a balanced solution which, at the cost of an initial setup to integrate WebRTC services, provides a suitable technical integration that is adaptable, cost free, and ready to be deployed in relevant contexts of robotic telepresence.

Objective 2

To demonstrate the cross-platform compatibility of the web solution developed.

In general terms, the results of the experience permit us to evaluate positively the cross-platform performance of the developed application. First, the visitor interface successfully performed for a wide range of configurations: different versions of five OSs (Windows, Linux, Mac OS, Android, and iOS) and four web browsers (Chrome, Firefox, Chromium, and Opera). Second, communications across the distributed architecture and local system integration at each environment were effectively supported by the IoT standard protocols. Concretely, the NodeJS server, the web visitor interface, and two different RCAs (i.e. ROS on Linux and MOOS on Windows) were able to share data using the MQTT clients through a simple API. Third, although we only used one server configuration in the experience (Windows + NodeJS), it is important to mention that the NodeJS environment is available for Windows, Mac OS X, and Linux platforms. NodeJS extensions are also cross-platform and ready to install from generic JavaScript package managers like the open Npm repository (www.npmjs.com). Thus, the portability of the server-side solution between Windows, Mac OS X, and Linux machines is straightforward.

However, although web-based solutions are, in general terms, the most effective approach to develop cross-platform compatible and rapidly accessible solutions, such generalization is not always possible and, in general, it is achieved at the cost of specific limitations in the overall application performance. Specifically, direct access to local resources and efficient management of the hardware are the main limitations of pure web solutions in comparison to approaches that include native components. Thus, although native applications require specific downloads and operate on a single platform, applications targeting the intensive use of the local hardware and efficient computing should rely on this conservative approach. Hybrid applications are the third alternative to deal with these aspects; they are web applications wrapped by native software components. This approach offers a balanced solution between the advantages and disadvantages of the other two. Thus, to evaluate the suitability of the hybrid approach, a detailed analysis of the client application requirements and native wrapper specifications is required in order to find out whether all the features required are available.

Objective 3

To intensively test the application in a challenging test environment with novice users, multiple client devices, and uncontrolled network conditions.

The objective was to explore the suitability of the application to operate in a test environment as realistic as possible. We did so by testing the application with novice users using personal devices from their home locations to control the robot in a realistic office environment, which stressed the technical integration achieved and helped us to rapidly assess the overall correctness of the system. The results of the experience were satisfactory, with a fairly high number of sessions (176 with expert and 127 with novice users) where (i) the control features were executed on multiple platforms including desktop and mobile devices, (ii) the visitor client application was served to a great number of IPs, (iii) the interface performed reasonably well with expert and novice users, and (iv) a high rate of success was achieved in the execution of accurate maneuvers like door crossing, approaching an object (mirror), and docking the robot to the charging station. As expected, the uncontrolled variables in visitor and network environments and the challenging maneuvers to be performed during the teleoperation task enabled us to detect some implementation issues and design failures that remained unnoticed during lab tests. As outputs of the experience, novice visitors evaluated the performance of the application developed with quite positive ratings and highlighted some weaknesses of the system, and the analysis of their responses revealed a relevant set of open issues to be addressed in future work. Although the preliminary nature of the user study does not allow formal conclusions to be drawn, the study establishes a valuable initial reference to conveniently design the extensions of this work for the next stage of development.

Future work

Our future work will iterate on the development cycle to overcome the limitations reported in section “Limitations and open issues,” with a focus on robotic telepresence applications for AAL. We aim to enable our application to operate in field environments within this context and, in this regard, the path laid out by state-of-the-art research projects in the field will guide the design of extensions to this work.

A first extension is the enhancement of the RCA with autonomous behaviors to increase its navigation performance, assist visitors in driving tasks, and reduce maintenance effort. Collision avoidance, collaborative control, and autonomous docking are the features planned for integration in the next stage. These features are especially relevant to AAL contexts, where the telepresence robot operates within the personal space of sensitive users and, thus, safety is at a premium. In the same direction, given that robotic telepresence is applied as an assistive technology with the aim of improving certain aspects of the personal routines and daily life activities of people with special needs, the maintenance effort should be minimal for its use to be worthwhile. Projects like Spencer 31 and GiraffPlus 16 deal with these aspects of robotic telepresence and provide features that have been tested in real-world deployments, and our solution is compatible with them. Thus, a next step is to integrate such features in our RCA. The integration of an intelligent avatar remains beyond our goals for the next development cycle.

Based on the new abilities developed for the control architecture, we will address the design of an adaptive, flexible user interface able to cover the needs of multiple visitor and local user profiles. Client applications will be extended with modular control components to conveniently support visitor operations. In line with the developments presented in the studies by Kiselev 33 and Gonzalez-Jimenez et al., 36 at least three default configurations will be considered: novice, expert, and technical user profiles. To that end, we will address the design of an adequate test environment and suitable user studies, which is one of the most challenging and essential parts of our future work. This topic is the subject of active research, and projects like Social Robot, 37 Alfred, 38 and Miraculous-Life 39 have provided useful guidelines and recommendations based on real-world experiences that we will take as a starting point in our study.

Lastly, with regard to the third environment, the network environment, the next step is to align the architecture and technical features presented with the standard ICT infrastructures of AAL deployments. That means extending the middleware to support other application frameworks in the field such as, for instance, UniverAAL. 40 Enhancing the current network infrastructure with features to discover, manage, and configure robotic telepresence services is relevant to increasing the robustness and flexibility of applications. The study of security and privacy issues across the distributed communication infrastructure is another aspect to be addressed. The research projects MPOWER, 41 Co-Living, 42 and GiraffPlus 43 define and implement effective middleware solutions for AAL contexts, and we will take them as initial references.

Conclusion

In this article, we have analyzed the sources of heterogeneity in robotic telepresence designs and proposed a modular architecture to cope with such heterogeneity. We have examined the most common application domains and reviewed a collection of state-of-the-art systems in the field. According to the information reviewed, robotic telepresence contexts have been presented as a combination of three distributed environments: robot, visitor, and network environments. Our work deals with generic aspects of robotic telepresence designs in each environment but, in particular, we placed our focus on the limited availability of open-source solutions enabling the integration of videoconference and robotic control features in real time. WebRTC, a recent open standard for web real-time communications, provides effective mechanisms to perform such integration, and we have proposed a suitable approach based on this technology. The approach combines WebRTC communications with an HTML5 web interface, a set of IoT protocols, and two state-of-the-art RCAs (i.e. ROS and MOOS). Based on this approach, we have developed a functional open-source web application for robotic telepresence and put it into practice through a pilot experience. The application was intensively tested by inexperienced participants over the Internet, and it performed successfully across multiple Internet connections, devices, web browsers, and two state-of-the-art RCAs. We also reported the limitations of the current work (e.g. the lack of a formal user study) and the set of open issues that will be addressed in the next stage of development. Throughout the general discussion, we explained the pros and cons of the presented approach with respect to the alternatives available in the field. Lastly, given that our future work will focus on applications of robotic telepresence in AAL contexts, we described our plan for the next iterations of the development cycle.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the research projects DPI2014-55826-R and MOVECARE by the Spanish Government and EU-H2020, respectively.