Abstract

Qualitative research methods had to adapt quickly to online platforms during the COVID-19 pandemic to limit in-person interactions. Online platforms have been used extensively for interviews and focus groups, but workshops with larger groups requiring more complex interactions have not been widely implemented. This paper presents a case study of a fully virtual social innovation lab on bioplastics packaging, which was adapted from a series of in-person workshops. A positive outcome of the online setting was a more diverse range of participants. Highly interactive activities such as icebreakers, networking, bricolage, and prototyping were particularly challenging to shift from in-person to online using traditional web conferencing platforms like Zoom. Creative use of online tools, such as Gather.Town and Kahoot!, helped unlock more innovative thinking by employing novel techniques such as gamification. However, challenges such as adapting facilitation for an online environment and exclusion of groups that do not have consistent access to internet and/or computers still need to be addressed. The reflections and lessons learned from this paper can help researchers adapt qualitative methods to virtual environments.

Keywords

Introduction

The use of online platforms for conducting qualitative research has become more popular in recent years, particularly after the start of the COVID-19 pandemic due to social distancing (Roberts et al., 2021). Numerous studies have been published on online methods for individual or small-group interactions such as interviews or focus groups (Halliday et al., 2021; Howlett, 2021; Khan & MacEachen, 2022; Lathen & Laestadius, 2021; Lobe et al., 2020; Nobrega et al., 2021; Oliffe et al., 2021; Roberts et al., 2021; Tuttas, 2015). However, established methods for implementing larger and more interactive gatherings such as workshops have yet to be developed in virtual environments (Shamsuddin & Sheikh, 2021; Urbinati et al., 2021). Emergent research on transitioning to virtual tools for interactive applications such as group model building (Brown et al., 2022; Wilkerson et al., 2020; Zimmermann et al., 2021), evaluation (Nobrega et al., 2021), co-design (Kennedy et al., 2021; Zhang et al., 2022), and participatory systems mapping (Penn et al., 2022) is increasing in popularity and showing promise. However, there have been no documented examples in the literature of social innovation labs that were conducted completely virtually at the time of this publication.

While social innovation is generally a contested term with various definitions (Tracey & Stott, 2017), it is primarily focused on addressing systemic issues or deep-rooted problems. According to Westley and Laban (2015), a social innovation is any initiative (product, process, program, project, or platform) that challenges and, over time, contributes to changing the defining routines, resource and authority flows or beliefs of the broader social system in which it is introduced.

A social innovation lab is a participatory research method that brings people together to work on holistic and practical solutions that consider critical features of the broader system elements that may go unnoticed when products are developed in techno-centric silos. Due to their potential to create radical and systemic change, social innovation labs have become more prevalent globally in recent years to address various complex problems (Kieboom, 2014; McGann et al., 2018; Wellstead et al., 2021).

While social innovation labs have grown in popularity, there are also some challenges. Social innovation labs often work on complex problems, which may be hard to define or lack a consistent definition amongst those affected (Martin et al., 2017; Moore & Westley, 2011). This is reflected in the case of policy innovation labs, which so far have not shown strong empirical evidence of leading to more effective policymaking but appear to perform well in some elements such as problem identification and solution testing (Brock, 2021; McGann et al., 2018; Wellstead et al., 2021). Since social innovation labs have risen in popularity and attracted large amounts of funding, they are also under pressure to produce results (Kieboom, 2014). Labs can develop tunnel vision and focus too much on finding solutions, which in the end may not work (Kieboom, 2014). These challenges underline the importance of using lab processes that build trust, enable participants to meaningfully collaborate, and overcome barriers that can block deep engagement with complex problems and development of innovation.

Activities used in social innovation labs to foster meaningful collaboration between participants and promote different ways of thinking are normally designed for in-person settings (Weinlick & Velji, 2016; Westley & Laban, 2015). Virtual tools can replicate some elements of in-person interactions through use of video conference or collaboration applications (Lobe et al., 2020; Nobrega et al., 2021; Shamsuddin & Sheikh, 2021). However, there are limitations such as only being able to see facial expressions instead of full body language (Halliday et al., 2021; Lo Iacono et al., 2016). Although virtual white boarding tools can be used for visualizing collaboration (Shamsuddin & Sheikh, 2021), there are limitations to how much can be seen by a participant on a small computer screen instead of larger physical media such as maps and flipchart papers (Penn et al., 2022). Furthermore, activities that require a three-dimensional environment such as physical movement (e.g., icebreaker activities, role playing) or interaction with tangible objects (e.g., bricolage, prototyping), present additional challenges when adapting to a virtual environment.

This paper presents our reflections on adapting a social innovation lab to an online environment. This study is the first of its kind to employ the social innovation lab methodology in a completely virtual setting. While existing publications cover online adaptations of some elements of social innovation labs such as co-design (Kennedy et al., 2021; Zhang et al., 2022) and systems mapping (Penn et al., 2022), activities that involve more complex interactions which are characteristic of social innovation labs have not yet been explored. This paper contributes new knowledge on facilitating these more complex interactions online. First, we present general insights on participant recruitment, facilitation, and evaluation in an online environment. We then focus on lessons learned from three types of activities that were particularly challenging to adapt from in-person to online: (1) networking and icebreaker activities; (2) bricolage; and (3) prototyping.

While the original intent of this project was not to study the social innovation lab method, but rather to apply a social innovation lab methodology to address the issue of bioplastic packaging, the lessons learned from redesigning a social innovation lab for a completely virtual environment can be of use to researchers interested in this method.

Lab Context

In March 2020, at the start of the COVID-19 pandemic, implementation of an in-person social innovation lab on bioplastic packaging was being planned. The topic of bioplastic packaging was selected because it is considered a “wicked problem”, a problem that is complex and whose solution is not clearly defined (Rittel & Webber, 1973). Bioplastics have risen in popularity as an alternative to fossil-fuel based plastics. However, the problems associated with bioplastics span their entire lifecycle. This includes the ecological footprint of their production, their use across the food supply chain, confusion on how to handle these products at the end of life, and a lack of infrastructure for processing waste management systems (Brizga et al., 2020). The convening question for the social innovation lab was: “What role can bioplastics have as a competitive alternative to fossil-fuel based plastics in a truly circular and equitable food system?”

Workshop Planning and Design

To keep the project on schedule, the social innovation lab method had to be adapted to an online environment. The design of the social innovation lab was driven by a combination of the desired outcomes and practical considerations. The desired outcomes of the lab were to deepen the understanding of the key issues related to social and environmental impacts of bioplastic packaging from multiple points of view, facilitate connections between participants who typically would not be connected, and generate ideas for interventions that could address the social and environmental impacts of bioplastic packaging. As the target participants for this lab came from different backgrounds and for the most part, had not met before or only had limited interactions, an approach that included tools for relationship-building, peer-learning, and co-creation was important.

The method created by the Waterloo Institute for Social Innovation and Resilience was considered the best fit for the desired lab outcomes (Westley & Laban, 2015). This method organizes a social innovation lab into three workshops with a variety of interactive activities: 1) seeing the system; 2) designing solutions; and 3) prototyping (Westley & Laban, 2015). A workshop facilitation plan was created based on these activities, with adaptations for an online environment (see Supplementary Material 1).

A key practical consideration during the pandemic was time scarcity of different participant groups. For example, government staff who were seconded to frontline roles had limited availability for meetings or a constantly changing schedule. Due to school closures, many parents were working from home while watching over their children during the workday. Online meeting fatigue was another concern (Kennedy et al., 2021) since many potential participants were meeting virtually already. As such, the team recognized that holding an all-day event, as is usually planned for in-person social innovation labs, would make the process less accessible. We surveyed potential participants to assess their preferences and selected time slots that worked for most of them. Based on our consultation, we split each workshop into two or three separate sessions of two to three hours each, spaced one to two weeks apart. This shortening of sessions is consistent with other studies that transitioned from in-person to online workshops (Kennedy et al., 2021; Penn et al., 2022; Zimmermann et al., 2021).

Participant Recruitment

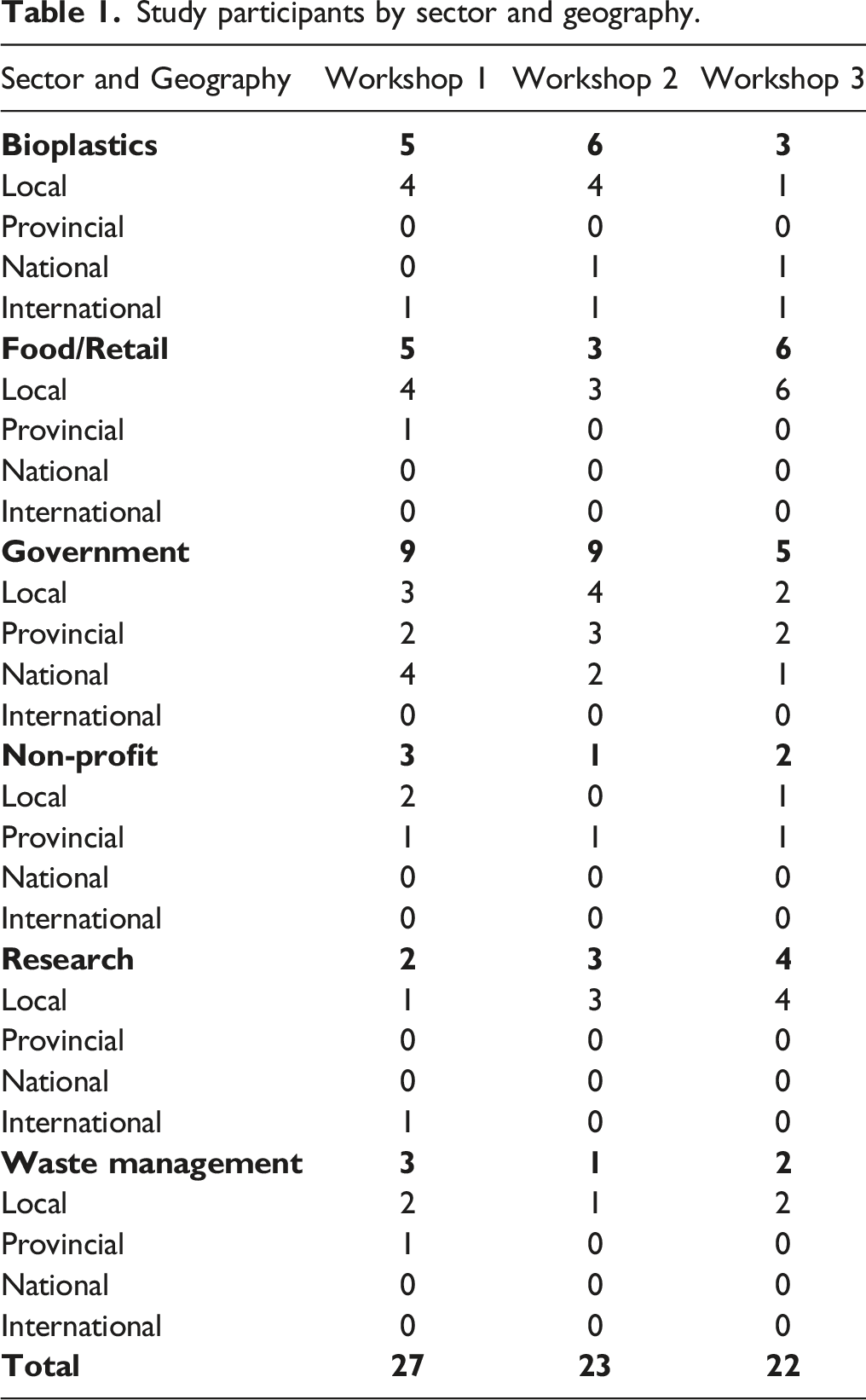

Study participants by sector and geography.

Workshop Implementation and Virtual Facilitation

The lab workshops took place between October 2020 and April 2021. Ahead of each workshop session, the research team met to review the agenda. This served both as a training session for team members who were not familiar with the social innovation lab method, and a trial run of the adapted activities. The plans for the workshop were revised based on the feedback from these meetings, such as clarifying instructions, changing time allocations, or modifying activities for better flow.

For each workshop session, the online platform was opened approximately 10 minutes before the start time to allow participants to sign in, test their audio and video, and become familiar with the platform if it was new to them. The opening of the session included a territorial land acknowledgement, a summary of the previous session (in the case of the first session, a project introduction), and an icebreaker game for participants to introduce themselves. The main activities of the session (see Supplementary Material 1) then followed. Participants were divided into two or three breakout groups of approximately four to eight people for these activities, each with one facilitator and one or two student research assistants who took notes and offered general logistics support. The lead facilitator managed the overall technical logistics, coordinated the breakout groups, and managed the timing of each activity. The research team used a Slack channel for internal communications during the workshop sessions for logistics and troubleshooting.

Workshop Evaluation

Online feedback forms were provided to participants at the end of the first and second workshops to obtain anonymous feedback on the content of the workshops and their experience with the virtual lab process (see Supplementary Material 2). Due to low response rates, the responses were not used for analysis, although they were discussed during the researcher debrief sessions. An online feedback form was not circulated for the third workshop. The evaluation of the lab process was based primarily on the research team's reflections and observations of participants, noted during debrief sessions that took place after each workshop, and on workshop outputs such as notes from participant discussions and shared whiteboards. Notes from debrief sessions and workshop outputs were grouped into lessons learned by phases of the overall lab process (e.g., recruitment, facilitation, evaluation) and by workshop activity type.

Reflections and Lessons Learned

Participant Recruitment

One advantage of holding virtual workshops was that participants could be recruited from a broader geography and offered different perspectives (Archibald et al., 2019; Wilkerson et al., 2020). We were able to expand recruitment of participants beyond the local region where the in-person workshops would have been held. Participants from the federal government, international businesses, and international organizations were able to attend online workshops but would have likely not participated in-person due to the additional travel time and expense.

For social innovation labs, greater diversity helps represent a variety of viewpoints and increases the potential for innovation (Marcelloni, 2019; Westley & Laban, 2015). For example, the federal government plays an important role in setting policy for bioplastic feedstock and product development, but staff members would have needed to take a five-hour flight to attend the workshop in-person. An unexpected outcome of switching to online workshops was that participants who would otherwise have had very little reason to connect gained unexpected insights. One participant representing the federal government commented in the closing of the first session of Workshop 1: "It's great to hear from people working on the infrastructure side of the issue and learn from them. Learning that throughput for composting systems is only 20–30 days is huge...and to be reminded that compost is a product with value, not a waste stream."

By scheduling the workshops as a series of shorter sessions, we also improved accessibility for participants who were joining from different time zones. For example, a full-day session in Western Canada would end in the late evening for a participant from Eastern Canada, where the local time is 3 hours ahead. However, a half-day morning session in Western Canada would translate to an afternoon session in Eastern Canada and therefore fit within the workday for both time zones. This shift in design, from workshops needing to be dedicated single or multi-day events to shorter, distributed events, could be useful to reduce barriers in participation as more global collaboration takes place across multiple time zones.

Online Facilitation

Virtual social innovation lab facilitation was a new experience for the research team. Much of our focus in redesigning the workshops was on the participants' experience and we overlooked how an online environment would affect facilitators. In the debrief after the first session of Workshop 2, one of the facilitators commented: "There was too much going on. I was trying to focus on my group's conversation, and at the same time monitor the comments coming in the chat, and then I saw I missed a bunch of Slack messages."

It became clear that the facilitators were doing too much at once and feeling overextended. Even though our resourcing approach was similar to other studies that had notetakers assigned to each group to ease the burden of notetaking responsibilities, as well as a dedicated technology support person who helped with technology issues (Kennedy et al., 2021; Nobrega et al., 2021; Penn et al., 2022), we could have benefited from an even higher facilitator-to-participant ratio.

In an in-person session, facilitators can solely focus on holding the space for the group’s conversation, which itself can be a challenging task if there are polarizing opinions. In a virtual environment, where participants cannot read the body language of other participants as well, or have visual cues on when to speak (Nobrega et al., 2021), facilitators needed to take a more active role in moderating the discussion by using tools like the hand raising function to create a speakers' list so participants are not talking over each other. While these types of tactics are also used in-person, added technological issues such as participants forgetting to mute or unmute themselves, needing to repeat what they say due to a poor internet connection, or missing a part of a discussion due to a dropped connection all add frustration and stress to a discussion or reduce engagement (Khan & MacEachen, 2022; Lathen & Laestadius, 2021; Oliffe et al., 2021), which the facilitator then needs to manage.

Another element of virtual facilitation that is different from in-person is the chat function. A benefit of a chat function is that participants who were having poor internet connections or felt more comfortable typing their responses could still communicate with the group. However, this sometimes created two parallel conversations that the facilitator then needed to integrate. Monitoring multiple chat messages in real-time while carrying on a conversation was challenging for facilitators because they felt like they could not keep up. At times, there was frustration from the participants using the chat function because it appeared as if their opinions were being ignored.

Lab Evaluation

For in-person social innovation lab workshops, evaluation usually took the form of a paper feedback form at the end of the event. We converted the paper feedback form into an online feedback form and expected a response rate similar to that of in-person workshops, but instead had a very low response rate. We identified two factors that may have contributed to this low response rate: (1) we shortened the workshop closing (when feedback was requested) to make up for running behind schedule; and (2) participants could easily leave the workshop by closing the application window.

Since the feedback form was available online, we prioritized having more workshop activity time when we were running behind schedule as a trade-off with time for participants to fill out the feedback form. The rationale was that participants could fill out the feedback form later if they didn’t have enough time during the workshop. Even though the feedback forms from Workshops 1 and 2 were very short (five multiple-choice questions and three open-ended questions that were all optional and anonymous), we only received five responses to each. Reminder emails were sent out to prompt participants to complete the feedback forms after the event but these did not result in any more responses. Due to these low response rates, we did not create a feedback form for Workshop 3.

We observed that during the workshop closing, participants had already started leaving the workshop before we prompted for feedback. This contrasted with our previous experiences with in-person workshops, where participants typically stayed until the end (and therefore completed the feedback forms). Quietly dropping out of a workshop by closing an application window is less disruptive than walking out of a room, making it easier for participants to skip completing a feedback form.

Networking and Icebreaker Activities

Icebreaker activities are useful for building rapport and connections between participants, as well as giving participants an opportunity to practice using the online tools (Shamsuddin & Sheikh, 2021; Wilkerson et al., 2020). Similarly, informal networking and unstructured time at events can be beneficial for participants to find common interests (Budd et al., 2015). Within the Zoom platform, networking is difficult to implement and can only be accomplished through sending private chat messages. Some icebreaker activities are more easily adaptable to a virtual environment, such as small group discussions or online games. However, for in-person social innovation labs, icebreaker activities and informal networking time often require some type of physical movement, such as going for a walk, having a coffee or meal break together, or playing a game.

To emulate physical movement in a meeting space, we used Gather.Town, an online meeting platform that allowed participants to move around and interact in a virtual space like a video game (see Figure 1). Each participant had an avatar that they could move around the screen and interact with other participants' avatars. Based on the proximity of the avatars to each other, they could interact with each other through voice and/or video. This meant virtually sitting together at the same table, listening to a speech given from a virtual podium, or going to a virtual “coffee and food” table to network with people nearby.

Figure 1. Screenshot from Gather.Town.

Participants had varying levels of technical ability, so there was extra troubleshooting at the beginning of the session, which reduced the time for conducting the workshop itself. However, during the time it took to resolve technical issues, participants were able to roam around and network in the absence of a formal program. Conversations were still happening in small clusters scattered around the Gather.Town space so participants could keep themselves occupied instead of disengaging. Another unintended positive consequence was the opportunity for building connections during the troubleshooting process (Archibald et al., 2019) when participants who were more familiar with Gather.Town helped other participants who were learning the platform. As one participant who had more technical challenges at the start commented at the closing of this session: "Once we learn this platform it'll be great!"

For the icebreaker activity, which served a dual purpose of finding connections between participants and familiarizing them with the platform, we played ‘vote with your feet’. This type of facilitation technique is commonly used at in-person workshops to get participants moving around in a room, as well as to get to know other participants by seeing how they ‘vote’. In an in-person setting, participants are instructed to walk to different parts of the room based on their reaction or response to a statement or question from the facilitator. For example, one wall of the room is for participants who ‘strongly agree’, the opposite wall is for those who ‘strongly disagree’, and the middle is for those who are ‘neutral’; participants can position themselves anywhere along the continuum. In Gather.Town, we instructed participants to move their avatar towards the left or right side of the screen, depending on how much they agreed with practice prompts such as “Do you like pineapple on pizza?” Some participants initially had challenges with moving their avatars. Members of the research team were able to assist these participants and by the end of this activity, all the participants were able to fully participate.

Bricolage with a Shared Whiteboard

In a traditional bricolage, participants work in teams to construct a physical representation of their solution with different objects (Westley & Laban, 2015), such as items collected by the participants or craft supplies provided by the facilitators (see Figure 2, left, from a previous social innovation lab). We looked for options to create a three-dimensional experience for participants to do bricolage collaboratively online but could not find a platform that was web-based and easy to learn for a new user. Therefore, to conduct bricolage virtually, we worked in two dimensions and used the shared whiteboard feature of Gather.Town, which could be co-edited by each participant in a breakout room. These shared whiteboards essentially functioned like a piece of flipchart paper and the drawing tools were like having markers, paint brushes, and craft supplies in the middle of a table for an in-person session. In this case, participants could edit the shared whiteboard using a variety of drawing tools to create a collective “picture” of their solution (see Figure 2, right).

Figure 2. In-person (left) versus virtual (right) bricolage.

The shared whiteboard feature of Gather.Town allowed participants to co-create on a common canvas with different drawing tools, like working together in-person on the same table with craft supplies. However, using a shared whiteboard was more chaotic and less collaborative than doing bricolage with craft supplies. The online drawing tools experienced a few glitches and drawing with a track pad or mouse was difficult for some participants. On a shared whiteboard, each participant was an anonymous arrow, and the only communication was through audio and video. The video windows showing other participants were very small because the whiteboard took up most of the screen, and as such, body language could not be read easily during the bricolage process. We observed multiple times when participants accidentally (or perhaps, intentionally) drew over or erased another participant's work. In real-life bricolage, the activity of collectively building is more thoughtful as a participant would need to physically remove, reshape, or destroy another participant's piece without anonymity. In other words, each participant has more accountability since other participants can see what they are doing. This difference can be seen in how the mental models of participants are represented in virtual bricolage versus real-life bricolage. One facilitator who experienced both types of bricolage noted that there was less creativity and focus in the virtual setting because participants could draw by freehand and write text to convey ideas instead of being constrained to using a fixed set of materials. In real life, the physical constraints “forced” participants to innovate together, which led to representations that reflected one or two key ideas with more depth to implementation realities. In contrast, there were more ideas discussed in the virtual setting, but it was challenging to get participants to focus and turn the ideas into a tangible solution. From a social innovation perspective of transforming ideas into solutions, the virtual setting worked well for generating the ideas, but was limiting for further developing these ideas into potential solutions.

Prototyping with Gamification

The objective of prototyping in a social innovation lab is to get feedback on a potential solution (or elements of it) before implementing it in real life (Westley & Laban, 2015). Prototyping can be done in a variety of ways, depending on what the solution is. Some prototyping methods are more conceptual, such as sensitivity testing or computer modelling (Westley & Laban, 2015). Other methods are more tangible, such as building models with cardboard or Lego (Tawalbeh et al., 2017). The latter was considered for one of the solutions identified in this lab (i.e., improving labels to distinguish bioplastic packaging from other types of plastic packaging).

During the design phase, we witnessed disagreements and heated discussions over potential solutions raised by the participants. It was very challenging to facilitate and deal with these more animated discussions online due to the time limitations and the structure of the Zoom platform. As such, we decided to try gamification for rapid prototyping. Gamification is the application of game design concepts to contexts that are not necessarily game-based (Deterding et al., 2011). Gamification was selected so participants could abstract themselves from their real-life professional representation as members of certain industries or departments, and instead take on the role of players on a team in a game while still being able to simulate real-life situations. This mindset change can improve engagement and collaboration (Zhang et al., 2022). In the prototyping phase, we added gamification as a new approach to “play with” potential solutions within the “container” of the lab. We also wanted to take advantage of virtual tools that were available for game design.

One game that we created was called “Can You Sort It?” and served as a simulation to see in real-time how well participants could categorize plastics based on a set of icons. In the first session, each group came up with a visual design (e.g., icons) for four types of plastics (e.g., compostable, recyclable, biodegradable, other). Between the two sessions, the research team created graphics of various plastic products with these icons. Three multiple-choice games (one per group) were then created on Kahoot!, an online game creation platform. In this game, each player needed to guess the category of the plastic in each question based on its icon (i.e., the labels designed by the different groups) within 5 seconds. In the second session, each group played the game created by a different group. In each breakout group, participants played the game as individual players, using their own smartphone or another browser window on their computer as a game controller to select their answer (one of the four buttons) while the group facilitator shared their screen with the question (see Figure 3). The participant with the highest score won the game.

Figure 3. Screenshot from Kahoot!

Most participants did not have experience with the Kahoot! gaming platform. To level out the learning curve across participants, we ran a few practice rounds before proceeding to the actual game. We observed quick uptake on using the game controller. However, some participants commented that the buttons on the controller (which were set as basic colors like a physical video game controller) were a bit confusing because they differed from the colors of the symbols in the pictures of packaging (e.g., compostable is blue on the controller and green on the packaging, see Figure 3). As one participant reflected in the activity debrief: “I found the colors to be really a challenging thing. Because you literally couldn’t just let your brain automatically sort. You literally had to, like stop your brain from doing what it wanted to do, transfer it to some other pathway, and then click the button.” Interestingly, another participant commented on how this type of confusion reflects real-life situations: “So, yeah, I just kept on getting mad at myself because like, I know, I know, that goes there. But I know, that’s like the whole point of the research. People only take 6 seconds to recycle this sort of thing.”

Although there was initial frustration in learning the game, many of the participants commented that they could see the utility of this approach for their work in bioplastics product design and waste management. At the end of the game, one participant commented: “I love it. We do a sorting game for [name of organization]. I think I’m gonna use this. I’m gonna use this in the virtual class, just for quickly mapping out symbols. I think that’s one of the most effective quiz games I’ve seen for this.”

Discussion

Consistent with other studies, virtual engagement enabled the diversification of representation from other provinces and countries without travel (Archibald et al., 2019; Wilkerson et al., 2020). However, virtual workshops also required participants to have a stable internet connection, access to a computer, and basic knowledge in using video conferencing software. While most of the target participants (i.e., working professionals) had these resources and skills, some groups that we had originally planned to invite (e.g., unhoused and/or low-income communities) may not have been able to participate.

One potential solution to this challenge is to hold multi-site (hybrid) events in which participants attend in person and are then linked virtually to other participants (van Ewijk & Hoekman, 2021). However, a hybrid event would require more funding for a workshop space, catering, and additional facilitators to oversee parallel activities in person and online.

Due to the additional multi-tasking involved in facilitating online, more facilitators or support staff should be recruited for virtual social innovation labs. This can help mitigate facilitator burnout and the sense of being overwhelmed by juggling multiple platforms while being present for the diverse needs of the participants. Additional staff can also serve as back-up for facilitators who lose their internet connection or have technological issues during the workshops.

A key limitation of the workshop evaluation was the limited feedback that we collected from participants. The key lesson was that we should have made completion of the survey part of the workshop, before the session closed, although that would have cut into workshop activity time. To keep participants engaged, embedded live polls within the video conferencing software could be used to capture feedback instead of sending participants to a separate survey link. This form of real-time feedback does not just apply to evaluating the lab but can also be used as a “pulse check” for gathering participants' opinions or feelings on the topics being discussed, or their well-being as activities are taking place.

The biggest difference in using Gather.Town over a more conventional video conferencing platform like Zoom is that it brought back the “hallway time” of conferences and events where participants can network. McElroy (2002) argues that because innovation is a social process, social learning and networking are critical. On the Zoom platform, informal networking can be a challenge to facilitate. Gather.Town enabled these side conversations and informal networking opportunities, which are valuable for participants to connect, discuss topics of common interest, and find ways to collaborate despite being in a virtual space (Budd et al., 2015; McIntyre et al., 2007). In Gather.Town, participants have the agency to “walk up to” another participant and start a conversation, join conversations, or just talk to the people who are in their proximity.

In adapting from in-person to virtual facilitation, one of the biggest challenges was shifting bricolage from a tactile, three-dimensional experience to something more abstract in two dimensions. There were several challenges in using the shared Gather.Town whiteboard, such as participants drawing over each other, as well as glitches. While establishing more rules or facilitation may help overcome some of these challenges, more supervision may also remove some of the creative elements of bricolage. This example demonstrates some of the limitations of virtual environments, where it is more difficult to emulate a real-life experience. There may be other collaborative 3D design tools outside of Gather.Town that would better serve the bricolage purpose. For example, instead of a completely open whiteboard, tools with building blocks or constraints on the “supplies” available could be used. However, this would require us to leave the meeting platform altogether and may not be as user-friendly for participants.

The introduction of gamification was a completely new element that was not originally planned by our team. The use of gamification for co-design in an online environment has also shown promising results (Zhang et al., 2022). We needed to shift the design mindset for the workshops and adapt based on lessons learned from the dynamics of the group which was simultaneously juggling divergent opinions. Originally, our approach to redesign was adaptation. We used virtual tools as substitutes for what would have been done for in-person sessions. We changed our approach and decided to use the virtual environment as the starting point. Instead of asking ourselves “What can we use to simulate this in a virtual environment?”, we asked “What can we take advantage of in a virtual environment to get to our desired outcome?”. This change in mindset opened up possibilities that we would have otherwise not considered (e.g., a waste sorting video game), and is a valuable lesson learned that could be applicable to other situations where the existing approaches are not going as planned. Overall, the game seemed to have worked in getting participants to reflect on the real-world implications of a potential solution (e.g., better labelling of plastics) and generated a richer discussion than what we observed in previous virtual workshop sessions.

Conclusion

Through adapting the social innovation lab method from an in-person setting to a virtual one, we gained insights on elements that work well or do not align with virtual tools. While there may be advantages to hosting social innovation labs online, such as diversifying the geographical base of participants, we noted that online facilitation required more support. With regard to equity concerns, we have yet to explore hybrid events that would enable participation of those who do not have access to a computer and internet or skills in using virtual tools. We found that certain tools such as Gather.Town helped emulate the physical aspect of engaging in the same room together and allowed for informal networking and conversations that would otherwise have been difficult in Zoom. We also found that gamification helped address the tense dynamic between participants when identifying solutions by broadening their horizons and having them engage with bioplastics as consumers. Although the lessons learned from the examples illustrated in this paper are focused on virtual social innovation labs, they can also be applied to other qualitative methods, such as focus groups and workshops. The key takeaways from this study are that with any methodological adaptation, there is a learning phase which needs to be incorporated into design, and the trade-offs between its advantages and disadvantages need to be considered.

Supplemental Material

Supplemental Material - Reflections From Implementing a Virtual Social Innovation Lab

Supplemental Material for Reflections From Implementing a Virtual Social Innovation Lab by Belinda Li, Tammara Soma, Nadia Springle and Tamara Shulman in International Journal of Qualitative Methods

Footnotes

Acknowledgments

The authors would like to thank the social innovation lab participants for their valuable insights and the research assistants for their support in facilitation and notetaking.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Trans-Atlantic Platform Social Innovation, Social Sciences and Humanities Research Council 2002-2019-0003.

Supplemental Material

Supplemental material for this article is available online.

References
