Abstract

Despite the novel educational opportunities of chatbots, their integration into teaching and learning settings is still in the early stages. Understanding the interplay of teachers’ perceptions, attitudes, and intentions to use chatbots can provide insight into the sustainable integration of chatbots in K-12 education. However, little is known about teachers’ acceptance of chatbots in this context. This study proposes a framework to better understand teachers' acceptance of educational chatbots, based on the Technology Acceptance Model (TAM). We extended TAM by incorporating trust, subjective norms, and perceived innovativeness. Data were collected from 482 K-12 teachers with experience using the EBA Assistant chatbot. The study used a mixed-methods approach, combining quantitative structural equation modeling (SEM) with qualitative interviews from 54 participants. Results show that more innovative teachers perceive chatbots as useful educational tools. Teachers’ trust in chatbot outcomes and positive attitudes toward ease of use also increase their intent to use chatbots. Trust in the chatbot's privacy and pedagogical reliability, along with social pressure from colleagues and administrators, further influences their adoption. This study reveals that acceptance of educational chatbots depends on both individual characteristics (e.g., trust) and sociocultural factors (e.g., subjective norm). These findings provide insights that can help guide the integration of chatbots in K-12 education.

Introduction

The rapid development of artificial intelligence (AI) as an emerging computer science field has led to the widespread popularity of AI-based tools (Nguyen et al., 2022). The integration of such AI-based tools (e.g., chatbots, automatic scoring applications, and intelligent tutoring systems) in education is expeditiously expanding. Among these tools, chatbots are regarded as one of the most prevalent AI technologies with the potential to optimize teaching and learning activities (Chiu et al., 2023).

This optimization stems from the personalized learning and teaching opportunities offered by chatbots. More specifically, chatbots might provide learners and teachers with immediate and adaptive feedback anywhere and anytime (Hwang and Chang, 2021). Due to personalization, chatbots can support knowledge construction by increasing student engagement with the learning process (Wu et al., 2020). Further, chatbots serve as facilitators for answering frequently asked questions from students (Chen et al., 2020), in turn reducing teachers’ and administrators’ workload with instructional and administrative tasks (Celik et al., 2022; Hwang and Chang, 2021). For instance, chatbots have the potential to support teachers with managing instructional work plans (Shawar and Atwell, 2007), and students with reminders of assignments (Ashfaq et al., 2020). A novel advantage of chatbots is that they might have human-like characteristics (e.g., empathy and self-disclosure), making the conversation more authentic and natural (Lee and Choi, 2017; Liu and Sundar, 2018).

Despite the novel educational opportunities of chatbots, their integration in teaching and learning settings is still in the early stages. In fact, the utilization of chatbots in education lags further behind other fields such as business (Luckin and Cukurova, 2019). Further, harnessing chatbots in the K-12 context has received less consideration than in higher education (Wu et al., 2020). One of the main reasons underlying the limited integration of chatbots in K-12 education is that less attention has been paid to the role of teachers in the use of educational chatbots (Luckin et al., 2022). Their perceptions, attitudes, and intentions to use chatbots are especially important (Al Darayseh, 2023; Chocarro et al., 2021). This is because teachers are regarded as one of the most crucial stakeholders in the development and integration of AI-based technologies (Luckin et al., 2022). Understanding the interplay of teachers’ perceptions, attitudes, and intentions to use chatbots might provide insight into the sustainable integration of chatbots in K-12 education (Seufert et al., 2021). Studying the acceptance of any technology involves revealing possible factors (e.g., perceptions about usefulness and ease of use) contributing to the intentional use of such technology (Davis, 1989). Therefore, teachers’ acceptance of educational chatbots can inform the development of strategies aimed at fostering chatbot integration in education. However, little is known about teachers’ acceptance of chatbots in the K-12 context (Chen et al., 2020; Müller et al., 2019; Rese et al., 2020).

Researchers have analyzed the adoption or acceptance of various technologies drawing upon different theoretical frameworks. The Technology Acceptance Model (TAM) is regarded as one of the most robust and widespread adoption frameworks in predicting user acceptance of emerging technologies (Arpaci, 2016). The current study suggests a research framework to better understand teachers’ acceptance of educational chatbots based on the TAM. User perceptions of usefulness and ease of use are the key components of TAM and the key determinants of attitudes toward using a certain technology (Davis, 1989). The TAM, while effective in predicting user behavior, has been criticized for its narrow focus on perceived usefulness and ease of use, potentially overlooking other critical factors (e.g., personal innovativeness) influencing technology adoption in complex environments (Al-Emran et al., 2018). Expanding TAM allows us to account for additional dimensions that are particularly relevant in educational settings, where decisions are influenced by more than just the perceived functionality of the technology. Hence, to improve the predictive power of the TAM, we extended it by incorporating three research variables: trust, subjective norm, and perceived innovativeness. Trust might be a crucial determinant of teachers’ decision to use chatbots for instructional purposes since it influences social interactions between end-users and automated systems (Boateng et al., 2016; Choi et al., 2022). We also argue that subjective norms might serve as a significant predictor of teachers’ intention to use chatbots. In other words, if teachers observe that others who are important to them benefit from chatbots, they are likely to utilize chatbots for their teaching (Chang et al., 2022; Venkatesh et al., 2012). Moreover, it is worth investigating the impact of perceived innovativeness on teachers’ behavioral intentions for using chatbots, as earlier empirical results indicate that individuals’ innovativeness characteristics play an important role in the acceptance of chatbots (Kasilingam, 2020).

The aim and contributions of the current study

Teacher-AI collaboration is essential for effective teaching progression in the era of AI (Chiu et al., 2023). Therefore, teachers should collaborate and interact with AI-based tools such as chatbots for the overall effectiveness of instruction (Celik, 2023; Hwang and Chang, 2021). For effective collaboration of teachers with chatbots, it is necessary for teachers to accept chatbots as an instructional technology (Seufert et al., 2021). Although there has been growing interest in the use of AI and chatbots in education, most research has focused on higher education settings (Luckin and Cukurova, 2019; Wu et al., 2020), with limited attention given to K-12 environments. Studies that specifically examine K-12 teachers’ engagement with and acceptance of educational chatbots are particularly scarce. For example, while some research has explored teachers’ general attitudes toward technology (Chocarro et al., 2023), few studies have investigated the specific factors that influence their acceptance of chatbots in instructional practices. Additionally, prior research often overlooks the sociocultural factors, such as trust and subjective norms, that play a critical role in teachers’ technology adoption (Müller et al., 2019). Considering this gap, the current study aims to reveal the key determinants influencing teachers’ attitudes toward and intentions to use educational chatbots. To this end, we developed and tested a model of hypothesized relations using three main TAM constructs (perceived usefulness, attitude, and ease of use), extended with trust, subjective norm, and perceived innovativeness.

The key contribution of the present paper is to ascertain new determinants through an integrated model and to investigate their role in affecting teachers’ decisions to exploit chatbots in teaching and learning settings. For instance, despite the significant role of trust in accepting chatbots in other fields (e.g., business) (Müller et al., 2019), its effect has yet to be tested in education from teachers’ perspective. Another novelty of this study is to present a unified understanding of how both individual and sociocultural factors are related to educational chatbot use. The acceptance of emerging technology is associated not only with individual factors but also with sociocultural features (Mun et al., 2006). However, previous research has ignored the considerable influence of social, psychological, and cultural factors in shaping teachers’ acceptance of chatbots (Kizilcec, 2023). In light of this gap, we analyzed teachers’ willingness to use chatbots from various aspects. Namely, trust and personal innovativeness together might be considered individual characteristics of teachers, whereas subjective norm is a sociocultural concept (Fishbein and Ajzen, 1975; Gansser and Reich, 2021). Previous research maintains that prior experiences with certain technologies influence teachers’ acceptance of such technology for their teaching (Gartner et al., 2022). However, Kizilcec (2023) reported that studies on AI-based technology acceptance did not focus on any specific type of AI tool in the instructional process, and researchers attempted to evaluate teachers’ perceptions of AI-based technologies in general (e.g., Al Darayseh, 2023; Choi et al., 2022; Du and Gao, 2022). In response to this gap, the current study analyzed teachers’ acceptance after their actual use of a specific chatbot. The current study also contributes to teachers’ integration of chatbots into education by using both quantitative and qualitative methodologies.

Literature review and theoretical framework

Artificial intelligence and chatbots

AI is not a single technology; rather, it is a technical term. AI is defined as learning to perform a task associated with humans by reasoning about and engaging with the environment (Russell and Norvig, 2010). That is, AI-powered technologies can collect data from their environment and analyze such data with an automated process. Then, they produce adaptive outputs specific to the environment (Celik, 2023). AI is an umbrella concept that includes various analytical sub-fields. The most well-known sub-fields are machine learning, natural language processing, and deep learning (Zawacki-Richter et al., 2019). Chatbots as AI-based technologies benefit mainly from natural language processing. Chatbots serve as conversational or virtual agents allowing users to interact with a machine to achieve a goal (Shawar and Atwell, 2007). Users interact with chatbots through text-based or voice-based input (Luo et al., 2019). ELIZA, developed by Joseph Weizenbaum in 1966, is seen as the first chatbot (Califf and Mooney, 2003). Massive data sets on Internet platforms (e.g., social media posts) are the source of information for chatbots when they respond to a user inquiry (Goyal et al., 2018). Nowadays, chatbots are deployed in a variety of fields (e.g., business, health, and education) for numerous purposes (Chang et al., 2022; Luo et al., 2019). For instance, chatbots are used to get daily forecasts, news updates, and recommendations during online shopping and flight booking (Kasilingam, 2020). Further, some chatbots with sophisticated sensors capture multi-modal data from users (e.g., movements) and their environment (e.g., brightness and temperature) (Dale, 2016); ultimately, they offer timely and adaptive support for users’ tasks, such as recommending suitable exercise activities (Hancock et al., 2011).

Chatbots offer many advantages to teachers for their instruction. For instance, the utilization of chatbots might help teachers to promote students’ engagement and increase their interest in learning topics (Wu et al., 2020). For language learning, chatbots provide real-time conversational practice, allowing students to engage in authentic dialogue outside the classroom (Wu et al., 2020). In mathematics and science, chatbots can offer personalized tutoring, giving immediate feedback and adjusting content based on students’ learning progress (Ashfaq et al., 2020). Further, chatbots answer students’ inquiries about the process of instruction (e.g., exam dates and assignment details), giving the teacher more time for better interaction with students (Chiu et al., 2023). Teachers can also interact with chatbots to get technical and pedagogical support before their lectures during online or hybrid teaching (Hwang and Chang, 2021; Lee and Choi, 2017). In particular, teachers can benefit from chatbots when they search for educational materials to use in their lectures (Liu and Sundar, 2018). Moreover, chatbots enable students to authentically apply their knowledge in language learning. After the application process, teachers can use some metrics from chatbots for better student assessments (Celik, 2023; Wu et al., 2020).

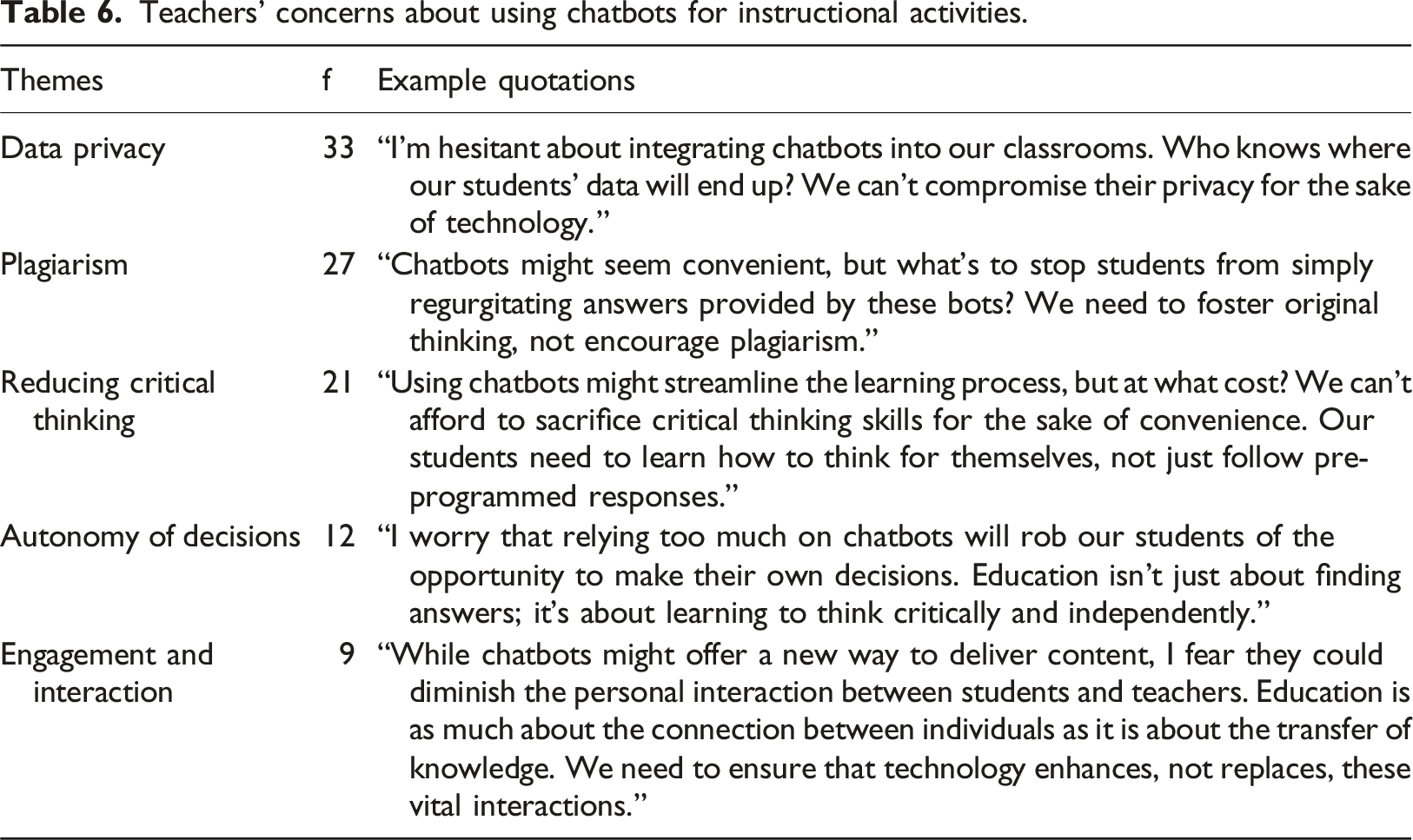

However, despite these successes, there are challenges. Teachers often report difficulties in integrating chatbots into their curriculum due to technical issues, such as limited customization options or chatbot responses that lack depth (Chen et al., 2020). Additionally, concerns around data privacy, plagiarism, and the potential over-reliance on automated systems for critical thinking tasks are significant barriers to wider adoption (Liebrenz et al., 2023). These challenges highlight areas for improvement, particularly in enhancing chatbot capabilities to better align with pedagogical needs and ensuring robust data security measures.

Technology acceptance model (TAM)

TAM is a robust framework validated to explain the determinants of the acceptance of information systems (Davis, 1989). The TAM is grounded in Fishbein and Ajzen’s Theory of Reasoned Action (TRA). According to the TRA, an individual’s adoption behavior is predicted by behavioral intentions, which are in turn explained by attitudes toward the behavior (Fishbein and Ajzen, 1975). Researchers have employed TAM constructs to understand users’ behavior regarding the acceptance of new technology. Perceived ease of use and perceived usefulness are the main constructs that directly or indirectly predict behavioral intention to use technology (Davis et al., 1989). In addition, usage behavior for a new technology is directly tied to behavioral intention, which is formed by the person’s attitude. In the present study context, perceived usefulness refers to the degree to which a teacher believes that using educational chatbots enhances their teaching performance (Davis, 1989). Meanwhile, ease of use is defined as the degree to which a teacher perceives the chatbot as being effort-free to use (Davis, 1989). Attitude is the teacher’s negative or positive feeling about the use of educational chatbots during instruction. We argue that teachers’ perceptions of usefulness and ease of use play a significant role in shaping their attitudes toward using chatbots, in turn contributing to their behavioral intention to use chatbots.

Trust, subjective norm, and personal innovativeness

Trust might have a considerable impact on the acceptance and adoption of technologies (Muir, 1987). In fact, end-users might have a sustainable intention to use any technology if they have sufficient trust in such technology (Chiou et al., 2020). The degree of credibility, confidence, and predictability of a person’s behavior is an indicator of improved trust among people (Cassell and Bickmore, 2000; Mayer et al., 1995). As AI becomes more widespread in everyday technology use, revealing the consequences of individuals’ trust in AI becomes increasingly crucial for the acceptance of AI (De Sousa et al., 2019; Gao and Waechter, 2017). In AI settings, trust refers to the extent to which an AI-based technology’s decisions and outputs are perceived as trustworthy and reliable (Shin, 2021). In this study, we defined trust as teachers’ confidence in the reliability and trustworthiness of the suggestions and decisions offered by educational chatbots. Trust in using educational chatbots depends on privacy issues and the educational appropriateness of the chatbot’s suggestions (Celik, 2023).

Agarwal and Prasad (1998) suggested personal innovativeness as a significant determinant of accepting new technology. It refers to the degree to which a person is comparatively earlier in accepting new ideas than other people within a social system (Rogers, 2006). Accordingly, the more innovative people become, the more willing they are to interact with new technologies. Further, innovative people with a high tolerance for uncertainty are encouraged to experiment with new things (Powell et al., 2014). Therefore, the acceptance level of new technology is likely to be higher among innovative users (Crespo & del Bosque, 2008). In educational contexts, innovative teachers tend to take a leading role in implementing new pedagogical methods and strategies and integrating emerging technologies (e.g., chatbots) (Powell et al., 2014).

According to Fishbein and Ajzen (1975), subjective norms refer to social pressure on an individual to perform a certain behavior. In other words, a person’s decision to use a technology may be influenced by the choices of people close to them (e.g., family and colleagues) (Venkatesh et al., 2012). Prior psychological research has provided empirical evidence that subjective norms are positively associated with behavioral intention to use new technology (Herrero et al., 2008; Yi et al., 2006). Therefore, subjective norm might lead to an increase in the acceptance of AI-based technologies such as chatbots.

Hypothesis development

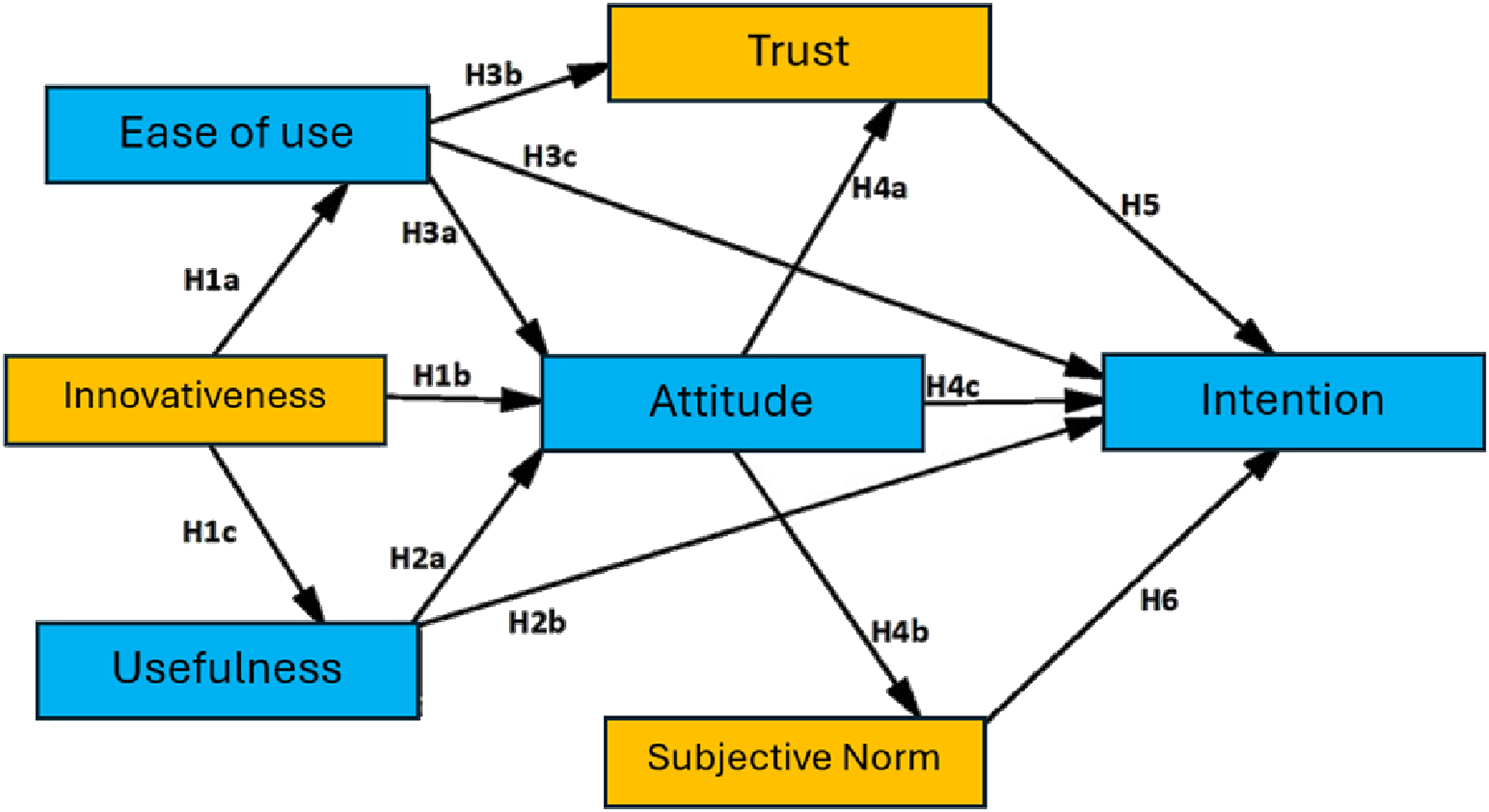

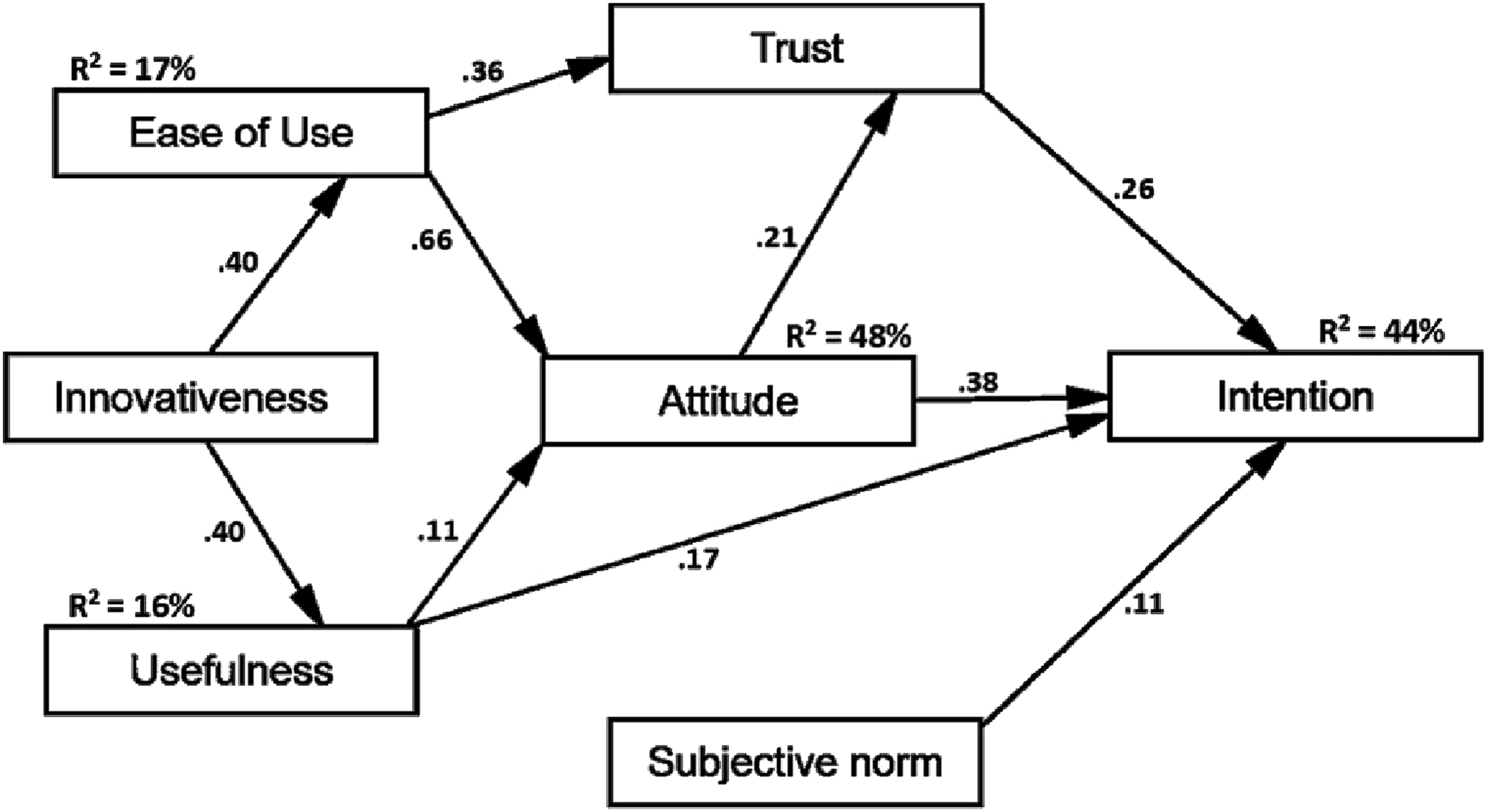

Based on the theoretical foundations detailed above, six main hypotheses were determined to develop a research model to investigate the interplay of the TAM constructs and three additional variables, that is, perceived innovativeness, trust, and subjective norms (see Figure 1).

The research model (TAM constructs illustrated in yellow, additional variables in blue).

Effect of perceived innovativeness

Research has shown that individuals with higher perceived innovativeness are more likely to perceive new technology as being useful and easy to use. Further, higher perceived innovativeness is associated with a positive attitude toward trying new technologies (Agarwal and Prasad, 1998). Innovative teachers are expected to be more interested in and to interact more with AI-based technologies (Wang et al., 2012). For instance, as they interact with educational chatbots, they can recognize the technical and pedagogical affordances of chatbots (Celik et al., 2024; Chen et al., 2016). Such an interaction process might enable teachers to use educational chatbots with less effort. As innovative teachers are open to experimenting with new ideas, they can perceive that utilizing chatbots in their instruction reduces their workload (Roh and Choy, 2018). Further, perceived innovativeness generally increases teachers’ curiosity about and familiarity with various technologies, thus leading teachers to use technologies with less effort (Schweitzer et al., 2016). Therefore, we hypothesize the following:

Perceived innovativeness positively influences ease of use.

Perceived innovativeness positively influences attitude.

Perceived innovativeness positively influences perceived usefulness.

Effect of perceived usefulness

Usefulness is one of the key reasons for having a positive attitude toward, and a stronger intention to use, new technology (Kasilingam et al., 2020). Teachers are more likely to be willing to use educational chatbots in their teaching when they notice the instructional advantages of chatbots (Chen et al., 2020; Chocarro et al., 2023). In other words, teachers with positive attitudes will accept chatbots as an instructional technology when they understand that chatbots have the potential to foster student motivation and engagement (Ashfaq et al., 2020). The main affordances of chatbots, such as enhancing communication skills, facilitating material search, and answering students’ frequently asked questions, allow teachers to have more time for interacting with students; ultimately, these pedagogical advantages may motivate teachers to utilize chatbots. Perceived usefulness was found to be positively associated with teachers’ affective response to the use of educational chatbots (Chocarro et al., 2023). Hence, we assume the following:

Perceived usefulness positively influences attitude.

Perceived usefulness positively influences the intention to use.

Effect of ease of use

User-friendly interfaces might facilitate technology adoption by avoiding technological confusion (Davis et al., 1989). In other words, teachers are expected to accept chatbots if they can easily understand how to execute a task through chatbots (Celik et al., 2022). Teachers might have positive or negative feelings when they engage with educational chatbots. Such feelings can stem mainly from the usability of chatbots (Wu et al., 2020). Teachers might be encouraged to interact with chatbots if they perceive them as being easy to utilize (Liebrenz et al., 2023). If chatbots are easy to use, authentic, and more human-like in nature, end users might tend to have more trust in using them (Lee and Choi, 2017). Teachers can consider chatbots more trustworthy if they use them without any technical challenges or delays in responses to their inquiries (Choudhury and Shamszare, 2023; Seeger and Heinzl, 2018). In light of these, the following can be suggested:

Ease of use positively influences the attitude.

Ease of use positively influences trust.

Ease of use positively influences the intention to use.

Effect of attitude

The positive relationship between attitude toward chatbots and behavioral intention to use them has been reported in many fields (e.g., medicine and business) (Shuhaiber and Mashal, 2019; Yoo et al., 2018). Further, Chocarro et al. (2023) found that teachers’ positive feelings about educational chatbots positively affected their behavioral intentions. The lack of positive attitudes toward chatbots among teachers may prevent the development of trust in chatbot-teacher interaction (Nundy et al., 2019). In other words, teachers with negative attitudes toward chatbots are less likely to trust the decisions of chatbots in terms of privacy and educational appropriateness (Laranjo et al., 2018). A positive association of attitude toward chatbots with subjective norms might be expected in the acceptance process. Namely, social pressure on teachers to use educational chatbots in their teaching can create positive feelings about using them. Hence, we formulated the following hypotheses:

Attitude positively influences trust.

Attitude positively influences the subjective norm.

Attitude positively influences the intention to use.

Effect of trust

Prior studies suggested that trust could shape human interactions with emerging technologies (Taddeo, 2010; Viberg et al., 2024). Trust may be a crucial factor in the decreased acceptance of educational chatbots if teachers have privacy concerns and are less confident in the pedagogical appropriateness of chatbots (Wang et al., 2024). It is important to note that chatbots are data-driven technologies that demand data from users to answer their questions and train their algorithms. In this process, some teachers might hesitate to share personal and sensitive information, which may cause unwillingness to use chatbots (Liebrenz et al., 2023). However, teachers might sometimes tend to overtrust the decisions of AI-based technologies, which makes them more willing to utilize chatbots (Seeger and Heinzl, 2018). Individuals’ acceptance of AI-based technology requires a certain degree of trust since they engage with a non-human counterpart (Bickmore and Cassell, 2001; Hancock et al., 2011). Thus, we assume the following hypothesis:

Trust positively influences the intention to use.

Effect of subjective norm

Prior research indicated that subjective norms have significant and positive effects on the intention to use technologies (Gansser and Reich, 2021; Kim et al., 2009). For instance, Chang et al. (2022) empirically found that social pressure from important people was a significant factor contributing to chatbot acceptance. Considering prior empirical studies, it is anticipated that teachers have a stronger intention to use educational chatbots as they become more convinced, through their colleagues, about the educational opportunities of chatbots (Sohn & Kwon, 2020). This suggests that more favorable subjective norms about educational chatbots might lead to more willingness to use these AI-based technologies. Therefore, we hypothesize the following:

Subjective norm positively influences the intention to use.
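The hypothesized relations listed in this section can be summarized compactly as a set of directed paths. The following minimal Python sketch encodes the thirteen paths stated above and groups them by outcome variable in a regression-style notation similar to that used by common SEM software. The construct abbreviations (PI, PU, EOU, ATT, TR, SN, INT) and the function name are illustrative assumptions and do not come from the study.

```python
from collections import defaultdict

# Abbreviations are illustrative labels (assumptions), not taken from the paper:
# PI: perceived innovativeness, PU: perceived usefulness, EOU: ease of use,
# ATT: attitude, TR: trust, SN: subjective norm, INT: intention to use.
# Each tuple is (predictor, outcome), following the hypotheses as worded above.
HYPOTHESIZED_PATHS = [
    ("PI", "EOU"), ("PI", "ATT"), ("PI", "PU"),
    ("PU", "ATT"), ("PU", "INT"),
    ("EOU", "ATT"), ("EOU", "TR"), ("EOU", "INT"),
    ("ATT", "TR"), ("ATT", "SN"), ("ATT", "INT"),
    ("TR", "INT"),
    ("SN", "INT"),
]


def to_regression_syntax(paths):
    """Group paths by outcome and return lines of the form 'outcome ~ a + b'."""
    predictors = defaultdict(list)
    for predictor, outcome in paths:
        predictors[outcome].append(predictor)
    return [
        f"{outcome} ~ {' + '.join(preds)}"
        for outcome, preds in predictors.items()
    ]


model_lines = to_regression_syntax(HYPOTHESIZED_PATHS)
print("\n".join(model_lines))
```

Grouping by outcome makes it easy to see that intention to use is the only construct with five hypothesized predictors, while perceived innovativeness is the only purely exogenous construct in the model.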

Methods

Chatbot: the EBA assistant

In Turkey, the Ministry of Education developed an interactive system, named the EBA Platform, to manage online distance education during the COVID-19 pandemic. All teachers in K-12 education settings used and engaged with the EBA Platform (MEB, 2020). In this process, a chatbot entitled EBA Assistant started to serve as a conversational agent for students and teachers. The EBA Assistant is known as the first intensively exploited AI-powered conversational assistant (Cbot, 2022). The chatbot was built on two main sub-fields of AI, namely natural language processing (NLP) and machine learning. With the power of NLP, the EBA Assistant comprehends free text like a human and offers an accurate answer. In other words, when a teacher engages with the EBA Assistant as they would with a friend, the chatbot can understand the meaning of the text and interact with the teacher. Meanwhile, machine learning technology enables the EBA Assistant to be constantly trained with new inquiries. Through the EBA Assistant, teachers had an opportunity to find answers to their questions on technical (e.g., setting a password and creating an account), instructional (e.g., the curriculum and exams), and management issues (e.g., meeting with their students and counterparts and accessing past lessons).

Participants

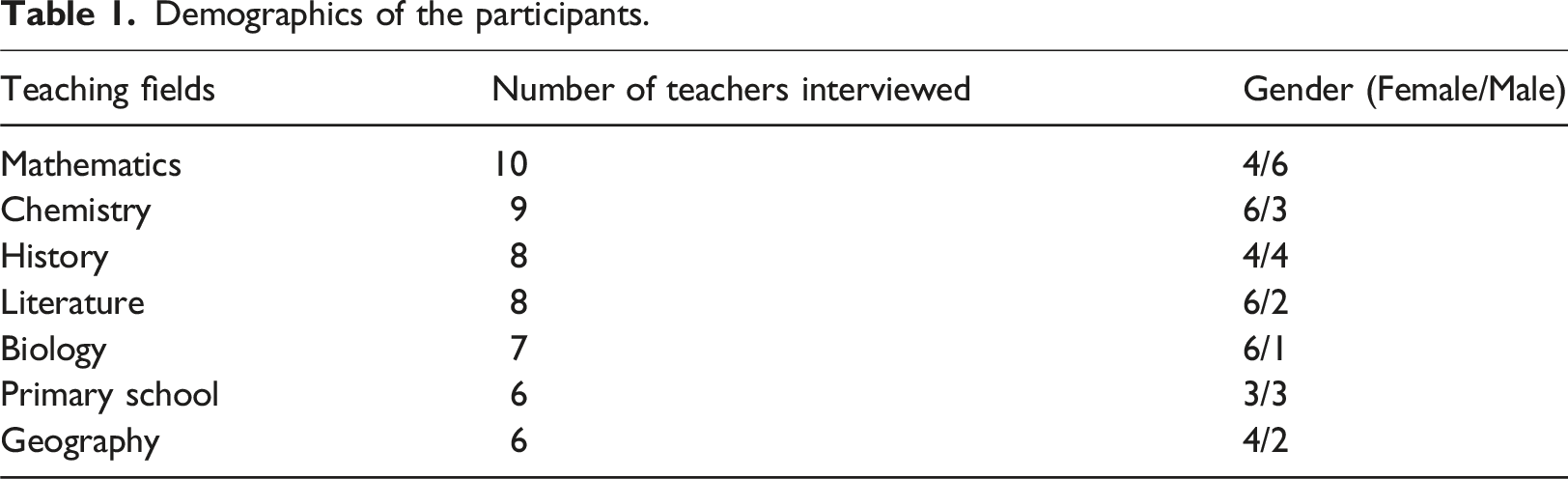

We sampled nine hundred teachers from three large cities (nCityA = 200; nCityB = 380; nCityC = 320). Participants of the current study were teachers working in K-12 settings in Turkey, and all of them had experience with using the EBA Assistant. Thus, the current study employed a purposive sampling method, targeting participants based on their relevant experience with the AI technology in focus. We sent a link to the online version of the data collection tool to the sampled group of teachers. Five hundred ninety-one teachers filled out the data collection tool. However, nine teachers did not complete the survey; therefore, their answers were excluded from the data set. After this process, we tested the hypothesized research model with the data set of 482 teachers (nfemales = 201; nmales = 281; Mage = 34.2; SDage = 3.12; Mexperience(year) = 7.4; SDexperience(year) = 3.28) from K-12 levels (fhigh school = 152; fmiddle school = 186; fprimary school = 144). At the end of the online survey, teachers were asked if they were willing to give additional insights about the usage of chatbots. Then, we sent our open-ended questions to volunteer teachers via an online form.

Demographics of the participants.

Teachers with varying years of experience, different grade levels, and both positive and negative attitudes toward educational chatbots were included to ensure a representative sample. This purposive sampling allowed us to explore specific themes that emerged from the quantitative data, such as trust and perceived usefulness. We reached these voluntary participants through follow-up emails sent after the survey, inviting those who had indicated their willingness to participate in further research.

Data collection procedure

In this study, we utilized a mixed-methods approach to analyze teachers’ acceptance of and thoughts concerning chatbots in instruction. To measure the research variables, we adapted items from prior empirical research on technology acceptance. The number of items and the source associated with each research variable are as follows: perceived ease of use (four items; Davis et al., 1989), perceived usefulness (five items; Kasilingam et al., 2020), perceived innovativeness (four items; Powell et al., 2014), trust (three items; Cheng et al., 2022), attitude (four items; Chocarro et al., 2023), subjective norm (three items; Ajzen, 1991), and intention to use (four items; Mei et al., 2018). An online survey was designed with all 27 items (please see Appendix A for sample items). Responses were recorded on a five-point Likert scale ranging from “1: strongly disagree” to “5: strongly agree.”
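To make the structure of the instrument concrete, the following minimal Python sketch encodes the item counts reported above and averages one respondent’s answers per construct, as one might do for descriptive statistics. The ordering of items within the survey, the function name, and the example answers are hypothetical assumptions; the study itself models the constructs as latent variables in SEM rather than as simple means.

```python
from statistics import mean

# Item counts per construct as reported in the text (27 items in total).
ITEMS_PER_CONSTRUCT = {
    "perceived_ease_of_use": 4,
    "perceived_usefulness": 5,
    "perceived_innovativeness": 4,
    "trust": 3,
    "attitude": 4,
    "subjective_norm": 3,
    "intention_to_use": 4,
}


def score_response(answers):
    """Split one respondent's Likert answers (1-5) into per-construct means.

    Assumes (hypothetically) that items appear grouped by construct in the
    order given in ITEMS_PER_CONSTRUCT.
    """
    total = sum(ITEMS_PER_CONSTRUCT.values())
    if len(answers) != total:
        raise ValueError(f"expected {total} answers, got {len(answers)}")
    if any(a not in (1, 2, 3, 4, 5) for a in answers):
        raise ValueError("answers must be integers from 1 to 5")
    scores, start = {}, 0
    for construct, n_items in ITEMS_PER_CONSTRUCT.items():
        scores[construct] = mean(answers[start:start + n_items])
        start += n_items
    return scores


# Hypothetical respondent: these answer values are made up for illustration.
example = [
    4, 4, 5, 4,      # perceived ease of use
    5, 4, 4, 5, 4,   # perceived usefulness
    3, 4, 3, 4,      # perceived innovativeness
    2, 3, 3,         # trust
    4, 4, 4, 5,      # attitude
    3, 3, 4,         # subjective norm
    4, 5, 4, 4,      # intention to use
]
print(score_response(example))
```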

To better understand the teachers’ purposes, expectations, and concerns regarding the use of educational chatbots, it was important to seek their views. For this purpose, three open-ended questions were asked: (1) What are your purposes for using educational chatbots? (2) What are your expectations about using chatbots for instructional activities? (3) What are your concerns about using chatbots for instructional activities? All participants provided informed consent prior to their participation. The consent form outlined the purpose of the study, the voluntary nature of participation, and the right to withdraw at any time without any negative consequences.

Data analysis

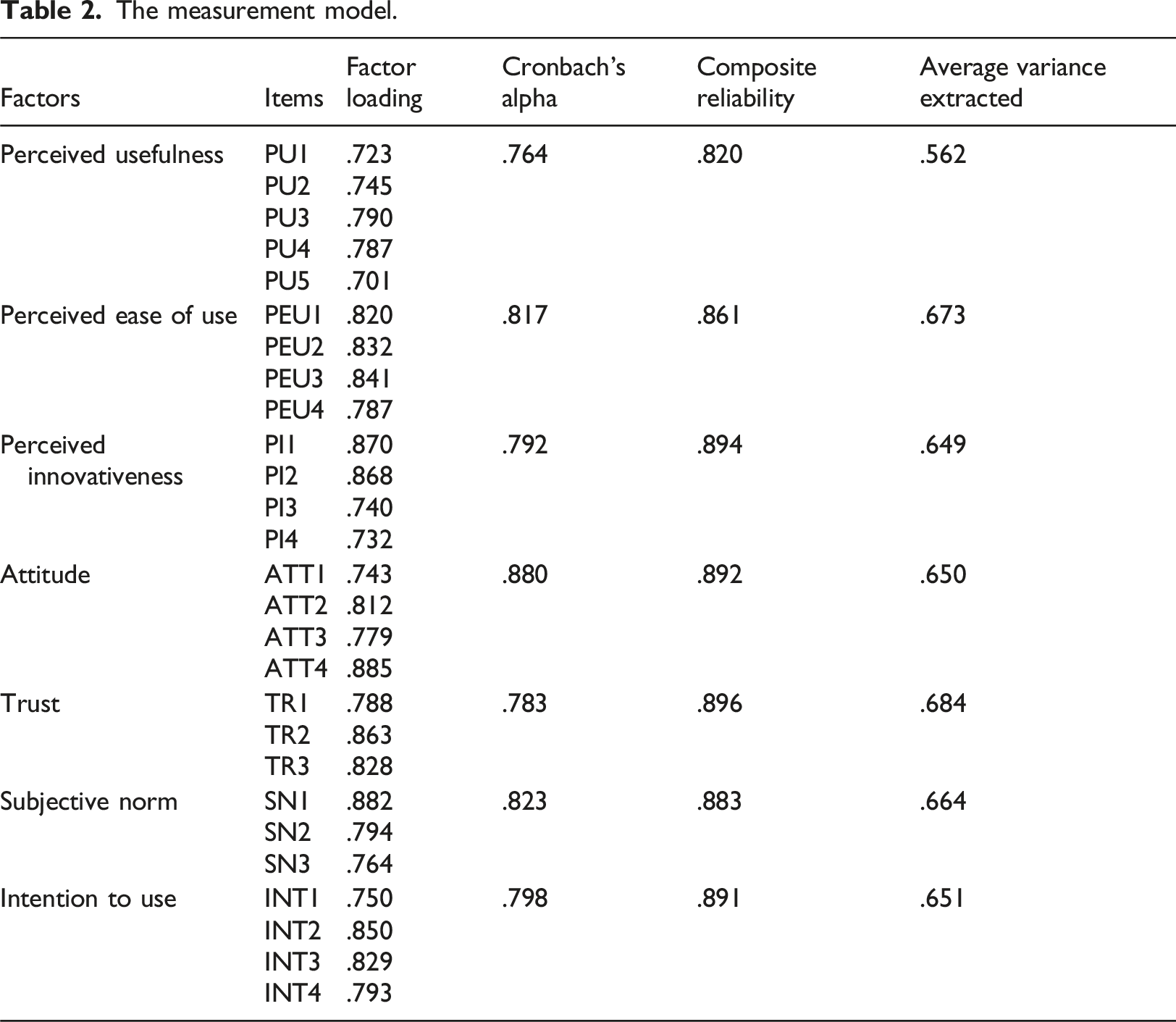

Convergent validity was assessed to check the parameters of the measurement model. Three parameters were evaluated to confirm convergent validity (Hair et al., 2006). Specifically, we first checked item reliability through factor loadings (>0.7), then the composite reliability of each factor (>0.7). Lastly, the average variance extracted (>0.5) was assessed. Structural equation modeling (SEM) was performed to test the hypothesized associations among the seven variables in the research model. SEM is defined as an analytical procedure to analyze the causal relationships among several theoretical constructs and variables (Schumacker and Lomax, 2004). In this study, the predictive relationships among the main constructs of TAM (perceived ease of use, perceived usefulness, attitude toward using chatbots, and intention to use educational chatbots) and the additional variables (perceived innovativeness, trust, and subjective norm) were analyzed using the maximum likelihood estimation method within the SEM approach. In the research model, we estimated equations linking endogenous and exogenous constructs (dependent or independent variables). Both direct and indirect relations of independent variables with dependent variables were estimated. Prior to the SEM analysis, the necessary assumptions were checked. With regard to the normality assumption, skewness and kurtosis coefficients were found acceptable, meaning that no bootstrapping was needed. In addition, missing data from nine participants was excluded. The calculated equations among research variables are shown as the standardized regression weights (betas,
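The three convergent validity criteria described above can be illustrated with a short calculation. The Python sketch below computes composite reliability and average variance extracted from standardized factor loadings using the commonly cited formulas, CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)) and AVE = Σλ² / k, and compares them with the thresholds stated in the text. The loadings in the example are hypothetical and are not results from this study; the function names are likewise assumptions.

```python
def composite_reliability(loadings):
    """CR from standardized loadings: (sum l)^2 / ((sum l)^2 + sum(1 - l^2))."""
    total = sum(loadings)
    error_variance = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error_variance)


def average_variance_extracted(loadings):
    """AVE from standardized loadings: mean of the squared loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def check_convergent_validity(loadings, loading_min=0.7, cr_min=0.7, ave_min=0.5):
    """Apply the three thresholds named in the text to one factor."""
    cr = composite_reliability(loadings)
    ave = average_variance_extracted(loadings)
    return {
        "loadings_ok": all(l > loading_min for l in loadings),
        "cr": cr,
        "cr_ok": cr > cr_min,
        "ave": ave,
        "ave_ok": ave > ave_min,
    }


# Hypothetical standardized loadings for a three-item factor (not study data).
example_loadings = [0.82, 0.79, 0.85]
result = check_convergent_validity(example_loadings)
print(result)
```

A factor passes only when all three flags are true; a single weak item can lower the average variance extracted below 0.5 even when composite reliability remains acceptable.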

For the qualitative data analysis, we employed thematic analysis as outlined by Braun and Clarke (2006). Thematic analysis was chosen because it allows for systematic exploration of patterns within the data, starting with open coding and continuing with the identification and interpretation of broader themes that provide a comprehensive understanding of the research problem. Initially, the interview data were carefully read by the first author and coded to identify recurring concepts, ideas, and patterns. These codes were then grouped into larger, overarching themes that reflected participants’ attitudes, concerns, and experiences with educational chatbots. The goal was to explore how these themes aligned with or complemented the quantitative findings, providing a richer, contextual understanding of teachers’ acceptance of educational chatbots. This method allowed us to move beyond word frequency counts, focusing instead on the underlying meanings and relationships within the data. For example, both privacy concerns and pedagogical reliability emerged as possible contributors to trust, aspects that differ from those captured by the self-reported items. As the original data were collected in Turkish, 10% of the responses were translated into English and shared with the second author. Following the principles of investigator triangulation (Denzin, 2017), the first author independently coded this translated subset of the data and identified initial codes and themes. These were then reviewed and discussed with the second author. Any disagreements were resolved through collaborative discussion, cross-checking of the coding scheme, and reference to the relevant literature.

A sample size of 482 participants is considered sufficient for SEM analysis. According to Kline (2005), SEM typically requires a sample size of at least 200 for reliable parameter estimates, with larger samples improving model stability and power. The sample of 482 exceeds this threshold, supporting adequate statistical power and stable estimates. For the qualitative component, the selection of 54 participants for interviews follows the principle of data saturation for capturing key insights (Guest et al., 2006). This number was sufficient to capture a diverse range of perspectives while also allowing for in-depth thematic analysis.

Results

The measurement model.

As shown in Table 1, convergent validity was confirmed. In particular, all factor loadings were higher than 0.7, indicating the reliability of the items. Further, both composite reliability and average variance extracted exceeded the criteria required for convergent validity.
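The three convergent validity checks can be illustrated with a minimal sketch. It assumes the conventional formulas for composite reliability and average variance extracted computed from standardized factor loadings with uncorrelated error terms; the function names and the example loadings are hypothetical and are not taken from the study's data or software.

```python
from typing import Sequence


def composite_reliability(loadings: Sequence[float]) -> float:
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Assumes standardized loadings, so each item's error variance is 1 - loading^2.
    """
    total = sum(loadings)
    error_variance = sum(1 - loading ** 2 for loading in loadings)
    return total ** 2 / (total ** 2 + error_variance)


def average_variance_extracted(loadings: Sequence[float]) -> float:
    """AVE = mean of the squared standardized loadings."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


def meets_convergent_validity(
    loadings: Sequence[float],
    loading_min: float = 0.7,  # item reliability threshold reported in the text
    cr_min: float = 0.7,       # composite reliability threshold reported in the text
    ave_min: float = 0.5,      # AVE threshold reported in the text
) -> bool:
    """Return True only if all three criteria hold for one factor."""
    return (
        all(loading > loading_min for loading in loadings)
        and composite_reliability(loadings) > cr_min
        and average_variance_extracted(loadings) > ave_min
    )


if __name__ == "__main__":
    # Hypothetical loadings for a three-item factor (illustration only).
    example = [0.8, 0.8, 0.8]
    print(round(composite_reliability(example), 3))
    print(round(average_variance_extracted(example), 3))
    print(meets_convergent_validity(example))
```

Checking the three criteria per factor, rather than pooling all items, mirrors how the thresholds are stated in the data analysis section: each factor must pass on its own loadings.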

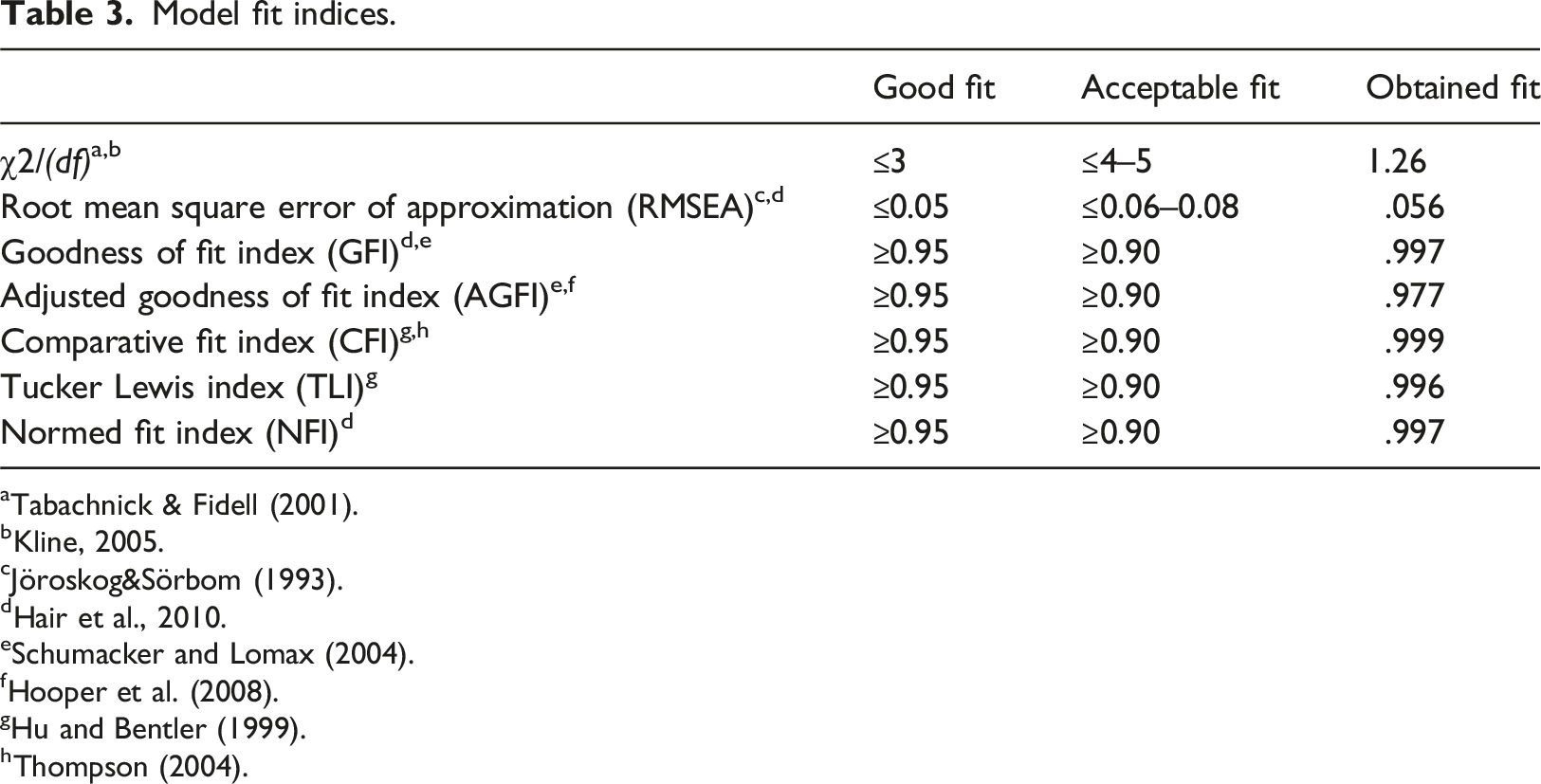

Model fit indices.

As depicted in Figure 2, perceived innovativeness was observed to have a positive effect on perceived ease of use (

The research model with standardized estimates.

Perceived usefulness and ease of use explained 48% of the variance in attitudes towards using educational chatbots. Perceived innovativeness accounted for 17% of the variance in perceived ease of use and 16% of the variance in perceived usefulness. Perceived ease of use and attitude together explained 42% of the variance in trust. Trust and subjective norm, combined with attitude, explained 44% of the variance in intentions to use educational chatbots.

(What are the teachers’ purposes for using educational chatbots?)

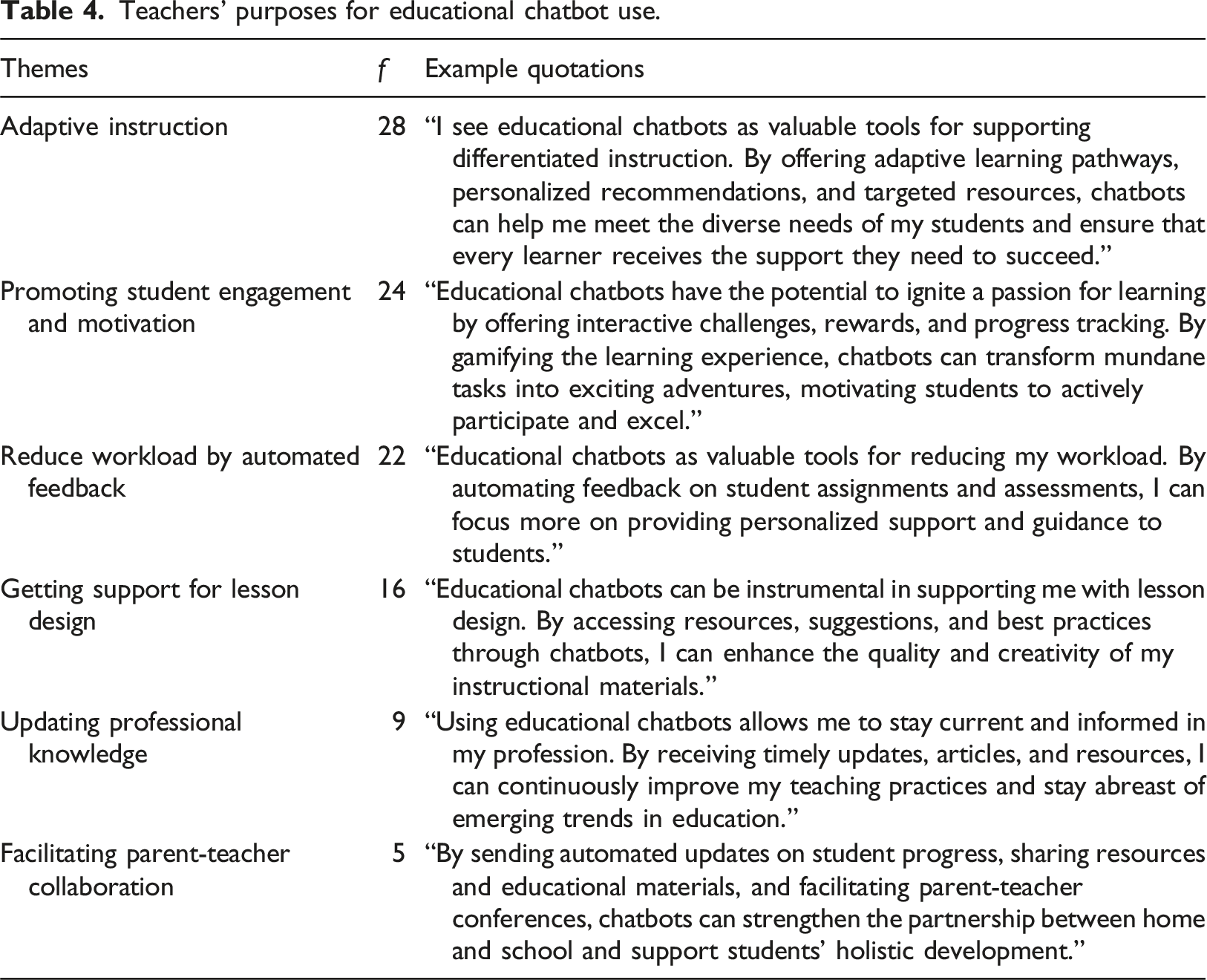

According to Table 4, we found six main purposes for using chatbots in the instructional process. Adaptive instruction appeared to be the most prevalent purpose, followed by promoting student engagement and motivation.

Teachers’ purposes for educational chatbot use.

(What are the teachers’ expectations about using chatbots for instructional activities?)

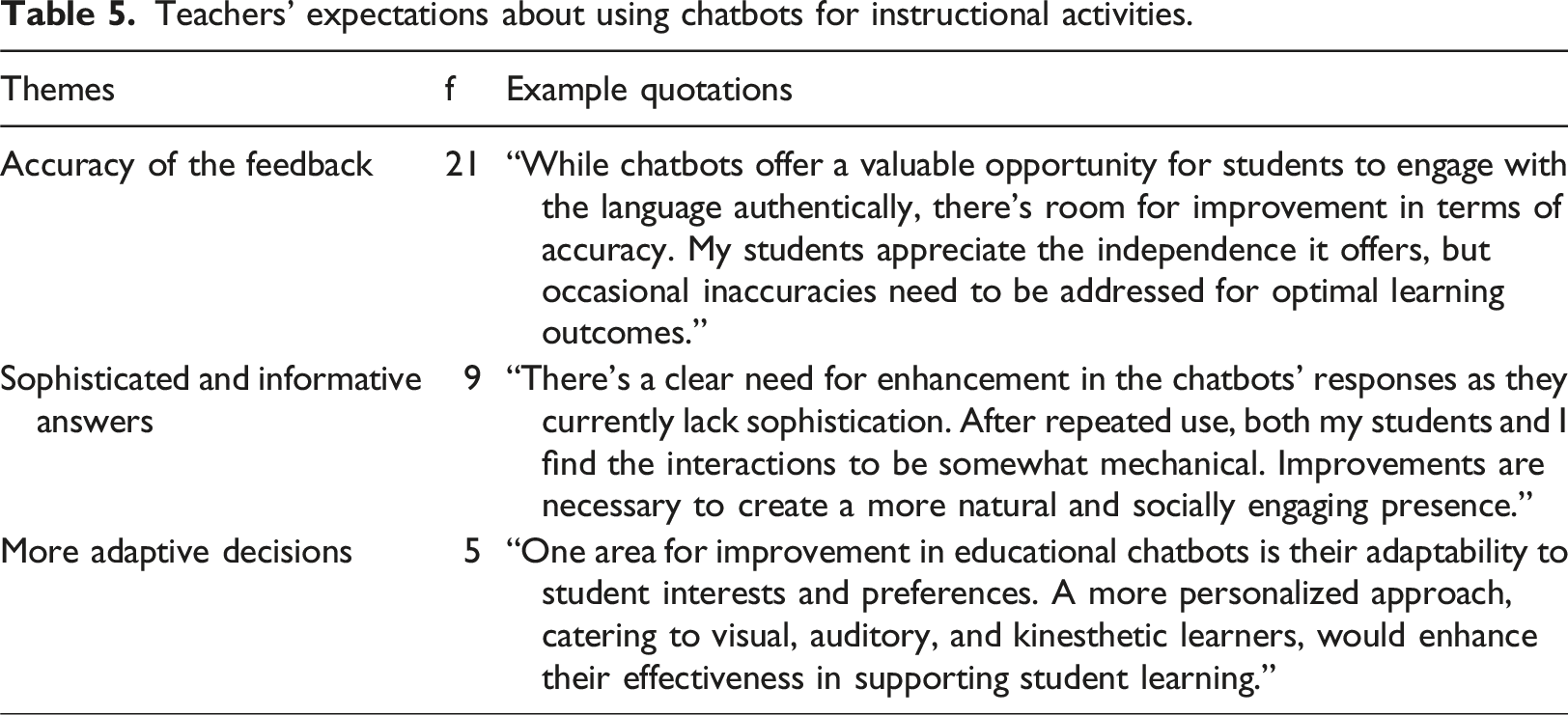

Our qualitative analysis showed that the accuracy and the quality of chatbot outcomes still need improvement. These issues are reflected in teachers’ quotations.

Table 5. Teachers’ expectations about using chatbots for instructional activities.

(What are the teachers’ concerns about using chatbots for instructional activities?)

Teachers’ concerns about using chatbots for instructional activities.

Discussion

The SEM analysis showed that perceived innovativeness contributed to perceived ease of use (H1a) and to a positive attitude towards using educational chatbots (H1b). Further, a significant and positive relationship was observed between perceived innovativeness and perceived usefulness (H1c). According to these results, more innovative teachers may find educational chatbots easier to use. Such teachers, who also hold more positive feelings about using chatbots in their instruction, better recognize the pedagogical advantages of chatbots. Prior work has reported that teachers’ perceptions of the ease of use and usefulness of a given technology depend significantly on their level of innovativeness (Vidergor, 2023), and our findings are consistent with this. Innovative teachers apply student-centered pedagogies, including adaptive feedback (Xu and Zhu, 2023). Considering that the key advantages of educational chatbots are personalized support and timely feedback (Hwang and Chang, 2021; Wu et al., 2020), innovative teachers are more likely to perceive chatbots as a useful educational technology for fostering student-centered learning. As also seen in the current study, a higher level of innovativeness is an indicator of a positive attitude towards using chatbots.

A significant relationship was found between perceived usefulness and the intention to use (H2b). This implies that as long as teachers believe that educational chatbots will foster their productivity and enhance their work performance, they will use chatbots in the instructional process. In studies of chatbot acceptance in other fields (e.g., online marketing), perceived usefulness was likewise found to be an important factor in convincing users to adopt such AI-based technology (Zhu et al., 2023). The current study indicates the crucial role of teachers’ perceptions of the usefulness of chatbots in their acceptance in educational settings. As also recommended in prior studies (e.g., Chocarro et al., 2023), the pedagogical opportunities of educational chatbots should be highlighted by technology developers and administrators. In this way, teachers will be able to recognize the affordances of chatbots and, in turn, show greater intention to use them.

Ease of use positively predicted the attitude towards using educational chatbots (H3a) and trust (H3b). Accordingly, when teachers consider chatbots effortless to use at any time and anywhere, they will have more positive affective responses to using them in their lessons. Further, from a teacher’s perspective, the chatbot’s trustworthiness will be fostered if it functions seamlessly without technical issues. Consistent with our findings, Kasilingam (2020) reported that end-users might struggle with chatbots that have limited accessibility and illegible text features; ultimately, a negative attitude towards using chatbots may appear due to their lower usability. The predictability of a technology’s functions is one aspect of end-users’ trust. In other words, users expect the same function from a technology each time they press a button on the interface (Ouriques et al., 2023). Chatbot designs that are far from user-friendly might not be predictable and, in turn, may undermine teachers’ trust. In contrast to our expectations, the SEM analysis showed a non-significant relationship between ease of use and teachers’ intention to use chatbots (H3c). This could be explained by the widespread use of chatbots in everyday life: individuals have become more familiar with chatbots from various fields (e.g., ChatGPT, Alexa, and Siri). Due to this familiarity, teachers are expected to perceive chatbots as easy to use (Hwang and Chang, 2021). Since many teachers are familiar with chatbots, ease of use may not be their priority for educational chatbots. Rather, they value the educational usefulness of chatbots in their teaching process.

Attitude towards using educational chatbots was found to be positively associated with trust (H4a) and intention to use (H4c). In other words, teachers’ positive emotional experiences with chatbots might contribute to their trust in them. Chatbots with human-like characteristics are accessible to users at flexible times and places (Lee and Choi, 2017). In addition, personal conversation is offered in the process of human-chatbot interaction (Chiu et al., 2023). These advantages are likely to result in a positive attitude towards using chatbots (Yoo et al., 2018), in turn leading to more trust in chatbots. Similarly, the results of the current study indicated that teachers’ positive attitudes towards chatbots might lead to more trust. Teachers’ positive emotions about chatbot use in education serve as a key experience in chatbot acceptance in K-12 settings (Chocarro et al., 2023). As seen from our SEM results, attitude functions as one of the main determinants of educational chatbot acceptance.

Trust might be considered an essential factor that shapes a technology user’s belief structure, and its role is critical in the acceptance of automated systems such as chatbots (Shin, 2021). For instance, ChatGPT tools have been widely used in different fields. The algorithms of ChatGPT are trained on data available on the Internet; therefore, its outputs are sometimes inaccurate or illogical (Choudhury & Shamszare, 2023). However, researchers have highlighted that an extensive lack of trust in ChatGPT might result in the underuse of this system (Liebrenz et al., 2023). Extending these results to educational chatbot use in the K-12 context, our study showed that trust serves to increase teachers’ intention to use these AI-based technologies in their teaching (H5). It can therefore be argued that if the teacher-chatbot interaction is fruitful, this is reflected in trust, which then leads to increased intention to use chatbots in education. Trust plays a crucial role in human-computer interaction, especially in educational settings where teachers need to feel confident that the technology will support, rather than hinder, their instructional goals (Nazaretsky et al., 2022). This underscores the need for educational technology developers to prioritize building reliable, user-friendly, and secure systems.

Teachers in K-12 settings collaborate with each other to share their instructional methods and strategies. In this collaboration, they might also share their views on and experiences with educational technologies. Hence, they are likely to influence their colleagues’ views of chatbots and their pedagogical affordances (Celik et al., 2022). Teachers who are informed and reassured in this way are likely to be more willing to use chatbots (Chocarro et al., 2023). In line with this, our hypothesis (H6) is supported by the data analysis, meaning that social pressure or influence has a direct and positive impact on the intention to use chatbots.

The percentages of variance explained by the different constructs provide insight into the strength of the relationships between the variables in the model. For instance, the finding that perceived usefulness and ease of use explain 48% of the variance in attitudes toward using educational chatbots indicates that nearly half of the variation in teachers’ attitudes can be attributed to these two factors. This is a strong result, as the variance explained in behavioral studies typically ranges from 30% to 50% (Davis, 1989). These results suggest that while other factors contribute to teachers’ attitudes, perceived usefulness and ease of use are key drivers in shaping their acceptance of educational chatbots.

Additionally, the finding that trust and subjective norms together explain 44% of the variance in the intention to use educational chatbots highlights the important role of both individual trust and social influences. This figure aligns with Chocarro et al. (2023), who found that subjective norms and trust explain approximately 40%–45% of the variance in behavioral intention to use AI-based educational technologies, further reinforcing the relevance of these constructs in educational contexts.

Based on the qualitative analysis, it is evident that teachers have multifaceted perspectives on the integration of educational chatbots into instructional activities. This result is consistent with prior empirical studies, which suggest that teachers have both positive perceptions and concerns regarding the use of chatbots in education (Celik, 2023; Chiu et al., 2023). The analysis shows that teachers primarily use chatbots for adaptive instruction, aiming to tailor instruction to various student needs effectively. Additionally, fostering student engagement and motivation emerged as a significant purpose, indicating the potential of chatbots to transform learning into an interactive and engaging experience. However, despite this promise, concerns and expectations persist regarding the implementation of chatbots. Teachers express hesitations regarding the accuracy of feedback and the sophistication of responses, suggesting room for improvement in chatbot functionality to meet educational objectives. Moreover, issues related to data privacy and plagiarism raise notable concerns among educators, underscoring the importance of safeguarding student privacy and promoting original thinking. Some teachers also worry about the potential impact of chatbots on critical thinking skills and student autonomy, highlighting the need for a balanced approach that leverages technology while preserving essential aspects of traditional education, such as personal interaction and independent decision-making. Overall, these findings underscore the complex considerations involved in integrating chatbots into educational settings and emphasize the importance of addressing both opportunities and challenges to harness their full potential.

While our thematic results align with existing literature, we argue that this alignment enhances the trustworthiness and transferability of our findings. Our study extends previous research by confirming these qualitative themes within the specific context of K-12 education in Turkey, focusing on teachers with direct experience using an AI-powered educational chatbot. To our knowledge, few studies have explored the nuanced perspectives of teachers following actual use of such technology in a large-scale national program.

The integration of quantitative and qualitative findings in this study provides a more nuanced understanding of teachers’ acceptance of educational chatbots. The quantitative results revealed that perceived ease of use and perceived usefulness were significant predictors of teachers’ attitudes toward using educational chatbots, which, in turn, influenced their intention to use them. Qualitative findings enriched the SEM results, with teachers consistently highlighting how chatbots reduce workload through timely feedback and administrative support, reinforcing the critical role of ease of use and usefulness in shaping positive attitudes.

Moreover, while the quantitative results indicated that trust and subjective norms explained 44% of the variance in teachers’ intention to use educational chatbots, the qualitative interviews provided deeper insight into the nature of this trust. Many teachers expressed concerns about the reliability of educational chatbot outputs, particularly regarding data privacy and pedagogical alignment, supporting the idea that trust is a key factor influencing adoption. The qualitative findings also illuminated the role of social pressure, with several teachers mentioning that they felt encouraged by colleagues or school administrators to integrate chatbots into their teaching. This complements the quantitative finding that subjective norms significantly influence teachers’ behavioral intentions.

By integrating these two sets of findings, we gain a more comprehensive understanding of how both individual and sociocultural factors interact in shaping teachers’ acceptance of educational chatbots. The quantitative results provide broad trends and relationships, while the qualitative findings offer specific examples and context, validating and enriching the quantitative data. Together, they confirm that while perceived usefulness, trust, and ease of use are significant drivers, the social environment and concerns over privacy and data security are crucial in determining teachers’ intention to use educational chatbots.

This study offers recommendations for key stakeholders. Educational technology developers should focus on improving the usability and customization of educational chatbots while ensuring robust privacy and security measures to build trust among teachers. Policymakers can support the effective integration of chatbots in K-12 education by establishing clear guidelines for ethical AI use, investing in teacher training programs that enhance digital literacy, and ensuring equitable access to the necessary technological infrastructure. By addressing these areas, both developers and policymakers can play a crucial role in promoting the successful adoption and effectiveness of educational chatbots in diverse educational contexts.

Limitations and future research

While this study provides valuable insights into teachers’ acceptance of educational chatbots, several limitations should be acknowledged. First, the study was conducted within a specific educational context, focusing on K-12 teachers in Turkey who used the EBA Assistant chatbot. This may limit the generalizability of the findings to other educational contexts or countries where different types of educational chatbots are used. Future research could expand on this by exploring the acceptance of educational chatbots across different educational systems and cultural settings.

Second, while the quantitative and qualitative data provide a comprehensive view of teachers’ perceptions and experiences, the self-reported nature of the survey responses may introduce social desirability bias, where participants provide responses they believe are expected rather than their true opinions. Future studies could address this limitation by incorporating more objective measures, such as tracking actual chatbot usage data alongside self-reported intentions.

Third, this study focused primarily on teachers’ perspectives without considering the experiences and feedback from students, who are also key stakeholders in the educational process. Understanding how students perceive and interact with educational chatbots could provide further insight into the technology’s overall effectiveness and acceptance in classrooms. Future research could explore student attitudes toward educational chatbots and how they align or differ from teachers’ perspectives.

Lastly, a limitation of this study is the lack of deeper exploration into which specific social norms are most influential in shaping teachers’ behaviors. While the influence of subjective norms was quantitatively measured, we did not fully investigate the specific sources of social pressure or the nature of these norms in the interviews. Future research could address this by focusing on the different layers of social influence—such as peer networks, institutional policies, and community expectations—that shape teachers’ attitudes toward educational chatbot use.

Conclusion

To promote the educational integration of chatbots in K-12 settings, teachers’ acceptance of these technologies is critical. However, little is known about teachers’ acceptance of educational chatbots. Based on the main constructs of the TAM framework (perceived ease of use, perceived usefulness, and attitude), we developed a conceptual model by extending TAM with trust, subjective norm, and perceived innovativeness. We conclude that more innovative teachers perceive chatbots as a useful educational technology. In addition, if teachers recognize chatbots as useful and usable tools for their teaching content, this is reflected in positive feelings about these technologies, which then leads to increased intention to use chatbots. Further, teachers might use chatbots for teaching purposes when they trust the chatbot outcomes in terms of privacy and pedagogical appropriateness. Social pressure from teachers’ environments (e.g., administrators and colleagues) might act as a motivation for teachers to use chatbots. The acceptance of educational chatbots relies not only on individual characteristics (e.g., trust) but also on sociocultural constructs (e.g., subjective norm). Therefore, future studies should investigate chatbot acceptance by adding various factors from sociocultural perspectives.

Footnotes

Acknowledgements

An earlier version of the current paper was presented at the 17th International Conference of the Learning Sciences (ICLS 2023).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.