Abstract

Recent advancements in artificial intelligence (AI) have transformed consumer services, with technologies such as AlphaGo surpassing human capabilities. OpenAI’s ChatGPT has positioned AI as a social actor. This study examines ChatGPT, an AI with strong natural language capabilities, from the perspective of social actors. We investigated user attitudes through the Unified Theory of Acceptance and Use of Technology (UTAUT) while employing the stereotype content model to assess ChatGPT’s portrayal as a social actor. Trust is an important factor when considering ChatGPT as a social actor. Our model validation revealed that performance expectancy and facilitating conditions significantly impacted ChatGPT evaluation, social influence affected usage intentions, and effort expectancy had no significant effect. In the stereotype content model, warmth and competence significantly influenced trust and performance expectancy. Trust in ChatGPT emerged as the main predictor of the evaluation. The study concludes with a discussion of the implications, accounting for differences in user experience (UX).

Plain language summary

This paper looks into how users perceive and trust ChatGPT, a program developed by OpenAI that can chat like a human. With technologies like AlphaGo showing that machines can outperform humans, ChatGPT is another step in AI, acting almost like a person in conversations. We used a well-known framework called the Unified Theory of Acceptance and Use of Technology (UTAUT) to see how people accept and use ChatGPT. We also used the stereotype content model to see how ChatGPT is seen as a kind of social character. Trust is a big part of how people see ChatGPT as a social actor. Our research found that users’ expectations about how well ChatGPT works and the conditions that make it easy to use have a strong effect on how they view it. Social influences affect how much they intend to use it, but how easy it is to use doesn’t matter much. Regarding ChatGPT being like a social character, being seen as friendly and capable is key to trust and performance expectations. Trust in ChatGPT turned out to be the main thing that affects how people evaluate it. The paper ends with a discussion of what this means for user experience, considering how different each user’s interaction with ChatGPT can be.

Introduction

In the winter of 2022, the world witnessed groundbreaking advancements in AI with the emergence of ChatGPT. Following the cognitive prowess of AlphaGo, ChatGPT demonstrated a different dimension of AI: its ability to engage in natural, human-like conversations (OpenAI, 2023). This marked a significant shift in the role of AI from a tool for specific tasks to a more socially interactive entity.

ChatGPT’s conversational capabilities have ignited global discussions, particularly concerning their implications for labor markets and technological development (Ko et al., 2023; Taecharungroj, 2023). However, this enthusiasm is tempered by concerns about ethics and risk management, highlighting the importance of fostering consumer trust in AI systems (Choung et al., 2022; Yang et al., 2023).

The acceptance of AI as a social actor requires a deeper understanding of the psychological mechanisms that govern user trust and adoption. Previous research has explored anthropomorphism and social interaction in AI systems (Bertacchini et al., 2017; Nass & Moon, 2000), emphasizing the importance of human-like qualities in user acceptance (Cheng et al., 2022). Notably, Go and Sundar (2019) and Pelau et al. (2021) found that anthropomorphic features and empathetic interaction styles in AI can enhance communication quality and foster user trust, particularly in service contexts. Similarly, Castelo et al. (2023) emphasized that the adoption of human-like design and socially responsive features in AI technologies plays a critical role in shaping consumer-technology interactions, underscoring the importance of user-centric design in value creation.

These findings align with the Stereotype Content Model (SCM), which evaluates entities based on perceived warmth and competence (Fiske et al., 2002). This two-dimensional framework has been widely applied in social evaluations, including brand perception and user interaction with anthropomorphized technology (Borau et al., 2021; Martin & Mason, 2023). However, its application to AI-driven conversational agents, such as ChatGPT, remains underexplored.

This study bridges this gap by integrating the Unified Theory of Acceptance and Use of Technology (UTAUT) with SCM to examine ChatGPT’s dual role as a functional and social entity. By investigating how warmth and competence perceptions influence user trust and adoption, this research aims to provide a comprehensive framework for understanding AI’s evolving role in human-AI interactions.

Literature Review

AI and the Unified Theory of Acceptance and Use of Technology (UTAUT)

In the realm of technology adoption, assessing a technology’s effectiveness, utility, and consumer perceptions is crucial (Castelo et al., 2023). Acceptance of technology relies on both performance and perception (Venkatesh et al., 2012). Existing studies have identified the factors influencing consumer perceptions and established validated models such as UTAUT, which consolidates prior research (Venkatesh et al., 2003). Despite extensive research on technological services, the acceptance of ChatGPT remains relatively unexplored (Vimalkumar et al., 2021).

UTAUT encompasses four core constructs (performance expectancy, effort expectancy, social influence, and facilitating conditions) and considers moderating factors such as gender, age, and experience (Venkatesh et al., 2012). Applying UTAUT to AI presents unique challenges owing to AI’s intricate nature, particularly for conversational AI systems equipped with natural language processing capabilities (Bergner et al., 2023). Thus, adaptive models are required to examine the dynamic nature of such natural conversations (Araujo, 2018; Pelau et al., 2021).

Performance expectancy refers to an individual’s assessment of the utility or value that they believe they will receive from using a technology, whereas effort expectancy refers to a user’s expectation of how easy it will be to use a new technology when it is introduced as a service or product (Venkatesh et al., 2003). Further, social influence refers to the impact of people’s expectations and attitudes on the new technology, and facilitating conditions denote the assessment of the extent to which one has the infrastructure or related resources to adopt and use the new technology (Venkatesh et al., 2012). Performance and effort expectancies reflect the user’s subjective evaluation of the technology, whereas social influence and facilitating conditions reflect the conditions and contexts in which people use the technology (Dwivedi et al., 2020).

Before the emergence of ChatGPT, AI adoption was influenced by factors such as education, social resources, user effort, and comprehensiveness (Chatterjee & Bhattacharjee, 2020). Concerns regarding privacy and security have also played a role in AI adoption (Vimalkumar et al., 2021). However, the application of UTAUT to conversational AI presents several challenges. Negative perceptions of AI can hinder its adoption (Davenport et al., 2020), highlighting the importance of building trust to foster societal acceptance (Choung et al., 2022).

Previous studies on AI anthropomorphism have lacked a comprehensive evaluation of AI as a social actor (Gursoy et al., 2019). The role of trust in shaping technology adoption has also been overlooked (Longoni et al., 2019), creating a gap in the literature. Consequently, this study sought to enhance AI acceptance by conducting an anthropomorphic evaluation of ChatGPT and using SCM to investigate the effects of warmth and competence on trust, AI evaluation, and usage intention. Perceptions of anthropomorphism significantly affect trust, AI evaluations, and usage intentions.

As an interactive chatbot, ChatGPT excels in understanding user speech and facilitating meaningful interactions (Lim et al., 2022), thereby enhancing user satisfaction. Ensuring high satisfaction involves aligning the service with user expectations because doubts about its quality can deter users (Crolic et al., 2022). Socially interactive entities are often perceived as humans; therefore, elements that appear cold or emotionally detached, akin to machines, can lead to unfavorable evaluations (Granulo et al., 2021; Longoni et al., 2019). Thus, when evaluating socially interactive technologies, it is crucial to consider social entities that move beyond a purely mechanical perspective.

The introduction of socially interactive technologies into society relies on both functionality and acceptance (Borau et al., 2021). Embracing AI as a social actor hinges on establishing and nurturing trust (Choung et al., 2022). Highlighting AI’s human-like aspects boosts its acceptance (Pelau et al., 2021). Previous research on self-driving cars has demonstrated that people may hesitate to adopt AI technologies if they perceive a lack of social responsibility (Gill, 2020). Building trust and promoting technology usage requires effective communication regarding how the technology fulfills its social responsibility, which is a critical aspect of trust (Bigman & Gray, 2018). Therefore, beyond assessing AI’s social aspects, it is crucial to define the characteristics that make it trustworthy.

Social Evaluation of AI

AI-powered information and communication technologies play a pivotal role in enhancing societal efficiency and utility, leading to improved task performance (Kellogg et al., 2020). Capitalizing on advanced capabilities, AI excels in knowledge-based tasks, problem-solving, and creative thinking (Puntoni et al., 2021; Siemon, 2022). Its integration across services empowers informed decision-making, rational solutions, and bias reduction (Burton et al., 2020; Langer & Landers, 2021). Engaging with AI enhances judgment and decision-making abilities (Toorajipour et al., 2021), aligns with the subjective assessment of its competence (Gray et al., 2007), and reinforces its standing within service evaluation.

However, AI adoption is not solely driven by service performance. Users may not find AI services satisfactory because of concerns regarding personalization and distinctiveness. For instance, in medical diagnosis, individuals may doubt an AI’s ability to consider unique patient attributes (Longoni et al., 2019). Users may hesitate to accept AI recommendations for emotional decisions, doubting their understanding (Granulo et al., 2021). Such perceptions stem from AI’s potential shortcomings in terms of the warmth dimension, which affects the service experience.

To serve social functions effectively, AI must engage users as unique entities, treat objects as social entities, and emulate human interactions (Longoni et al., 2019). In conversational AI scenarios, anthropomorphism emerges when AI comprehends consumer intent and responds accordingly, embodying its role as a social actor (Q. Zhou et al., 2023). Furthermore, AI, which functions as a functional assistant, may struggle to establish strong social relationships, especially beyond dialog (Youn & Jin, 2021). Understanding the interaction between socially interactive AI and humans requires the consideration of not only technological capabilities and outcomes but also warmth-related aspects that users can connect with and accept.

Artificial Intelligence and the Stereotype Content Model (SCM)

Humans possess the ability to assess nonhuman entities, such as technology, robots, human groups, and abstract concepts, such as God, using criteria akin to human judgments (Gray et al., 2007). This evaluative process involves mind perception and bestowing human-like attributes on nonhuman entities (Waytz et al., 2010). The theory of mind perception elucidates how individuals perceive and attribute mental qualities to nonhuman objects (Waytz et al., 2010). This understanding hinges on identifying the criteria for attributing mental attributes, with experience and agency playing pivotal roles (Gray et al., 2007). Experience involves sharing emotions, whereas agency is related to the capacity for purposeful action.

Assessing threats and intentions is common when one meets new people (Goodwin, 2015) because the formation of cooperative bonds with non-threatening individuals is crucial (Fiske et al., 2002). This assessment involves evaluating a person’s likability and utility for one’s personal goals (Goodwin et al., 2014). These mechanisms apply to the self, others, and social groups (Abele et al., 2016). SCM embodies this social cognitive assessment, associating warmth with kindness, trust, and morality, and competence with intelligence, skill, and efficacy (Fiske et al., 2007).

Balancing warmth and competence is key to positive evaluations and social prestige, as doing so portrays an individual as both trustworthy and capable (Kong et al., 2023). Observing behaviors and attitudes in these dimensions fosters trust and rapport (Fiske et al., 2007). Warmth, tied to moral evaluation, has cognitive significance (Eisenbruch & Krasnow, 2022). Warmth assesses morality, whereas competence gauges effectiveness (Cuddy et al., 2008). In socio-moral assessments related to warmth, negative information carries more weight, aligning with harm minimization strategies (Fiske et al., 2007).

Originally applied to person-to-person judgments, SCM has also been applied to brand evaluation (Kervyn et al., 2012). Its scope extends further to anthropomorphic targets, encompassing evaluations of personal possessions (Chandler & Schwarz, 2010). For instance, interactions with chatbots emphasizing warmth have been shown to elicit higher levels of brand engagement than those focusing on competence (Kull et al., 2021). Just as humans anthropomorphize objects, such as robotic vacuum cleaners, attributing intentionality and purpose to them (Hoenen et al., 2016), a similar phenomenon arises with socially interactive technologies. The acceptance of AI by society is contingent on both its technological effectiveness and the presence of human-like attributes (Pelau et al., 2021). Given the pertinence of warmth and competence evaluations of anthropomorphic entities, interactions with socially interactive AIs, such as ChatGPT, may trigger automatic anthropomorphic assessments, warranting a comprehensive evaluation encompassing both dimensions. Thus, this study explored the factors that influence consumer attitudes toward services and their usage by assessing the warmth and competence dimensions in the context of ChatGPT, a prominent natural language processing-based chatbot service.

While the integration of UTAUT and SCM in research remains limited, this study seeks to highlight their unique contributions by combining these two models to examine ChatGPT’s social and functional roles (Figure 1). To provide context and emphasize the novelty of this approach, Table 1 summarizes the key studies that have explored either UTAUT or SCM, or their related extensions, in various technological contexts. The table illustrates the research focus, key findings, and distinct contributions of each study, underscoring how this study bridges the gap by offering a comprehensive framework that addresses both technological effectiveness and social perceptions.

Figure 1. Integrated UTAUT and SCM model.

Table 1. Comparison of Studies Integrating UTAUT and SCM in Technology Acceptance Research.

Research Hypothesis

Performance Expectancy

Performance expectancy refers to users’ beliefs about the benefits of using a technology, which strongly influence their adoption decisions (Venkatesh et al., 2003). Studies have shown that when users anticipate significant gains such as increased efficiency or convenience, they are more likely to adopt a technology (Venkatesh et al., 2016). For example, T. Zhou et al. (2010) found that users are more inclined to adopt mobile banking when they perceive substantial benefits.

While extensive research has explored performance expectancy in various ICT contexts, the specific impact of AI technologies on adoption intention remains less understood (Loureiro et al., 2018). AI technologies such as ChatGPT provide unique advantages, such as improved user experience and personalized interactions, which enhance perceived benefits (Di Vaio et al., 2020). These advantages are likely to influence users’ attitudes and intentions (Figueroa-Armijos et al., 2022). Based on this, we propose the following hypothesis:

Effort Expectancy

Effort expectancy refers to users’ perceptions of how easy it is to use technology, which plays a crucial role in shaping their attitudes and adoption intentions (Venkatesh et al., 2003). Technologies perceived as easy to use are more likely to be adopted, as users tend to avoid complex systems (Gursoy et al., 2019). Research has shown that simplicity in digital services such as delivery platforms significantly improves user attitudes (Song & Schwarz, 2008).

In the context of AI, complex interfaces or functions often lead to negative user feedback, reducing the likelihood of adoption (Chatterjee et al., 2023). Conversely, users are drawn to technologies that are intuitive and require minimal effort to learn and operate (Tao et al., 2020). ChatGPT, with its conversational interface, offers the opportunity to convey effortlessness, which can positively influence user attitudes. Thus, it is essential to investigate the role of effort expectancy in the adoption of ChatGPT. Based on these insights, we propose the following hypothesis:

Social Influence

Social influence refers to the extent to which individuals perceive that others believe they should adopt a new technology (Venkatesh et al., 2003). This influence becomes particularly significant in uncertain situations, where individuals rely on others’ opinions to guide their decisions (White & Simpson, 2013). When peers or influential figures express positive attitudes toward a technology, they can significantly enhance adoption intentions, especially among those less familiar with digital technologies (Joa & Magsamen-Conrad, 2022; Kijsanayotin et al., 2009).

Research on group technology adoption highlights that individuals are more likely to adopt a technology when their social circle does so, as fear of exclusion or negative judgment reinforces conformity (Gursoy et al., 2019; van Esch et al., 2019). This dynamic is particularly relevant in AI adoption, in which social endorsements can alleviate uncertainty and build trust. Given the innovative nature of ChatGPT, understanding the role of social influence in shaping user attitudes is essential. Thus, we propose:

Facilitating Conditions

Facilitating conditions refer to the resources, infrastructure, and support available to individuals to adopt and utilize new technologies (Venkatesh et al., 2003). Even with high-quality technology, effective integration can be hindered without sufficient resources or user expertise. Studies have shown that facilitating conditions, such as technical support and training, play a critical role in overcoming adoption barriers (Blok et al., 2020). This is particularly significant for older adults, where access to resources and guidance greatly improves ICT adoption rates (Macedo, 2017).

For example, Duarte and Pinho (2019) found that social support significantly increased the intention to use health aid technologies, highlighting the importance of facilitating conditions in adoption decisions. Similarly, research on AI emphasizes that adequate resources and user training are crucial for seamless technology integration (Dwivedi et al., 2021). In the case of ChatGPT, providing users with clear instructions and support systems can enhance adoption by reducing perceived barriers. Therefore, we propose the following hypothesis:

Trust

Trust plays a pivotal role in shaping users’ attitudes toward new technologies, as individuals are more likely to adopt technologies that they perceive as trustworthy (Venkatesh et al., 2012). Numerous studies have established a strong link between trust in AI-based systems and positive user attitudes (Choung et al., 2022; Oliveira et al., 2017). Trust has been identified as a critical factor in various domains, including mobile payments (Slade et al., 2015), online therapy (Casey & Wilson-Evered, 2012), counseling chatbots (Pitardi & Marriott, 2021), and online information-seeking behaviors (Oh & Yoon, 2014).

Moreover, trust in technology significantly influences users’ perceptions of its usefulness, shaping their overall attitudes toward its adoption (Shin, 2022). Trust not only enhances perceived reliability but also emphasizes the utility and value of the technology, as users believe in its ability to deliver desired outcomes (MacInnis, 2012). In the context of AI, trust functions as a mediator, influencing adoption behaviors, such as purchasing AI-recommended products (Gefen et al., 2003; Shin, 2020).

For ChatGPT, fostering user trust is essential to ensuring its adoption as a socially interactive AI. Recognizing the importance of trust in technology adoption, we propose the following hypothesis:

How Warmth and Competence Ratings Affect Trust in AI

Warmth and competence are two fundamental dimensions that shape consumer trust in both human and nonhuman entities (Fiske et al., 2002). Empirical studies have shown that these dimensions significantly impact trust in various contexts. For instance, Xue et al. (2020) demonstrated that manipulating warmth and competence in brand evaluations influences consumer attitudes and purchase intentions through trust. Similarly, Cheng et al. (2022) found that perceptions of warmth and competence affected trust in anthropomorphic chatbots, although they did not explore their influence on satisfaction or usage intentions.

Trust is more likely to develop when consumers perceive a brand or service as both relatable and technically proficient. Authentic brands that score high in warmth and competence foster stronger consumer trust, as noted by Portal et al. (2019). This principle extends to technology services, with studies by Borau et al. (2021) and Martin and Mason (2023) highlighting the role of warmth and competence in shaping service attitudes. High ratings on these dimensions enhance relatability and foster trust by satisfying both emotional and functional needs (Querci et al., 2022).

In the context of ChatGPT, warmth relates to perceived kindness and friendliness, while competence reflects technical proficiency and reliability. Trust is built when users perceive ChatGPT as both approachable and effective in delivering accurate and helpful responses (Johnson & Grayson, 2005). Based on these insights, we propose the following hypotheses:

How Warmth and Competence Dimension Ratings Affect Performance Expectancy

Perceived warmth and competence are critical in shaping users’ emotional trust and evaluations of technological utility. ChatGPT’s human-like conversational abilities foster social bonds, making it more relatable and engaging for users (Ruijten et al., 2019). These social connections, rooted in warmth and competence, enhance perceived usefulness as users apply social behavior criteria to technology (Blut et al., 2021).

Traditionally, machines have been evaluated primarily for their task-solving capabilities. However, the integration of human-like traits such as warmth and competence has been shown to significantly improve user acceptance and trust (Bigman & Gray, 2018). Like choosing to collaborate with approachable and capable coworkers, users prefer technologies that exhibit both relational and functional strengths (Borau et al., 2021; Martin & Mason, 2023). This combination mitigates the perceived risks associated with technology adoption and strengthens positive attitudes (Terwel et al., 2009; Waytz et al., 2014).

In the context of ChatGPT, higher warmth and competence ratings are expected to increase performance expectancy by enhancing perceived utility and ease of use. Additionally, positive attitudes toward ChatGPT are likely to translate into a stronger intention to adopt the technology. Based on these considerations, we propose the following hypotheses:

Materials and Methods

Participants and Study Design

We conducted an online survey with the assistance of a specialized survey company, SouthernPost, in South Korea, to test the proposed hypotheses. The participant pool comprised 300 individuals, and consent was obtained via email and a survey platform. Before proceeding with the survey, we confirmed the participants’ familiarity with OpenAI’s ChatGPT.

The primary objective of the survey was to validate the model’s efficacy in assessing the intention to use ChatGPT among South Korean adults who were aware of the technology, even if they had not used it before. To ensure a representative sample, participants were selected based on sex and age. The sample consisted of an equal number of men and women, with approximately 25% in each age group from the 20s through the 50s. The ages of participants ranged from 21 to 59 years (average age = 39.75 years). The survey was conducted over a 6-day period from June 1 to June 6, 2023. The demographic information of the survey participants is presented in Table 2.

Table 2. Survey Participant Demographics.

The survey began with an introductory question to determine the participants’ awareness of ChatGPT. This section included questions about ChatGPT usage frequency, experience, and general AI service habits. To measure the intention to use ChatGPT, we used the UTAUT questionnaire, consisting of 15 questions categorized under performance expectancy, effort expectancy, social influence, and facilitating conditions.

Participants also answered five questions regarding their trust in ChatGPT. Furthermore, the survey included 12 questions about the warmth and competence dimensions of the Stereotype Content Model (SCM) to evaluate participants’ perceptions of the qualities of ChatGPT. In addition to the research model questions, participants provided demographic data (e.g., sex, age, socioeconomic status, education, and monthly household income) as control variables. As a token of appreciation, the participants received a modest participation fee upon completion of the survey.

Measurement

Performance Expectancy

The performance expectancy assessment was adapted from a foundational questionnaire employed in a previous study (Venkatesh et al., 2003). The items for effort expectancy, social influence, and facilitating conditions were likewise adapted from prior research and reworded to refer to ChatGPT. The performance expectancy segment comprised four questions, written to capture the participants’ perceptions of whether using ChatGPT could enhance their work and performance. A higher score on the performance expectancy scale signifies a greater belief that using ChatGPT would help improve job or task performance. The evaluation was conducted using a 7-point Likert-type scale.

Effort Expectancy

In the context of effort expectancy, a higher score indicates that learning and using ChatGPT are more straightforward. This evaluation encompassed four items derived and tailored from a previous research questionnaire utilized in Venkatesh et al.’s (2003) study. The four items assessing effort expectancy were rated on a 7-point Likert-type scale.

Social Influence

Here, we examined how the opinions of significant individuals in the participants’ lives affected their decision to use ChatGPT. A higher score indicated that more individuals in their surroundings supported and encouraged the use of ChatGPT. The evaluation of social influence involved three questions, each rated on a 7-point Likert-type scale. These questions were adapted from Venkatesh et al.’s (2003) study and tailored to the context of ChatGPT.

Facilitating Conditions

For facilitating conditions, a higher score indicated a stronger belief that the necessary resources for ChatGPT usage were in place within the organization. This assessment comprised four questions derived from Venkatesh et al.’s (2003) study and was suitably modified to align with the context of ChatGPT. Responses were provided on a 7-point Likert scale.

Trust

Drawing inspiration from Venkatesh et al.’s (2012) extension of the UTAUT model, we adapted and developed a trust measurement tool to gauge participants’ level of trust in ChatGPT. This tool consisted of five questions suited to the context of ChatGPT, each rated on a 7-point Likert scale.

Stereotype Content Model: Warmth and Competence

The SCM instrument, designed to measure participants’ perceptions of warmth and competence in relation to ChatGPT, employed elements established by prior research (Cuddy et al., 2008). The SCM instrument comprises two dimensions: warmth and competence. Each dimension was evaluated using six items, providing a comprehensive understanding of participants’ perceptions of ChatGPT’s attributes.

Perceived warmth in this context reflects users’ subjective evaluation of ChatGPT’s ability to engage in socially adaptive and contextually relevant interactions. While ChatGPT may not inherently treat each individual uniquely, its capability to tailor responses based on conversational context contributes to a sense of personalized interaction. This adaptability is essential for fostering the perception of warmth and enhancing the role of AI as a social actor.

Attitude Towards Using Technology & Intention to Use

The attitudes of survey participants toward using ChatGPT and their intention to use it were assessed by adapting items from prior research (Venkatesh et al., 2003) to specifically inquire about ChatGPT. Four statements were used to measure both attitude and intention to use, with responses gathered using a 7-point Likert-type scale.

Control Variables

The study incorporated control variables, including sex, age, subjective socioeconomic status, education level, and monthly income. Each variable was assessed using a single question. The complete list of items used to measure each construct is provided in the Appendix.

Results

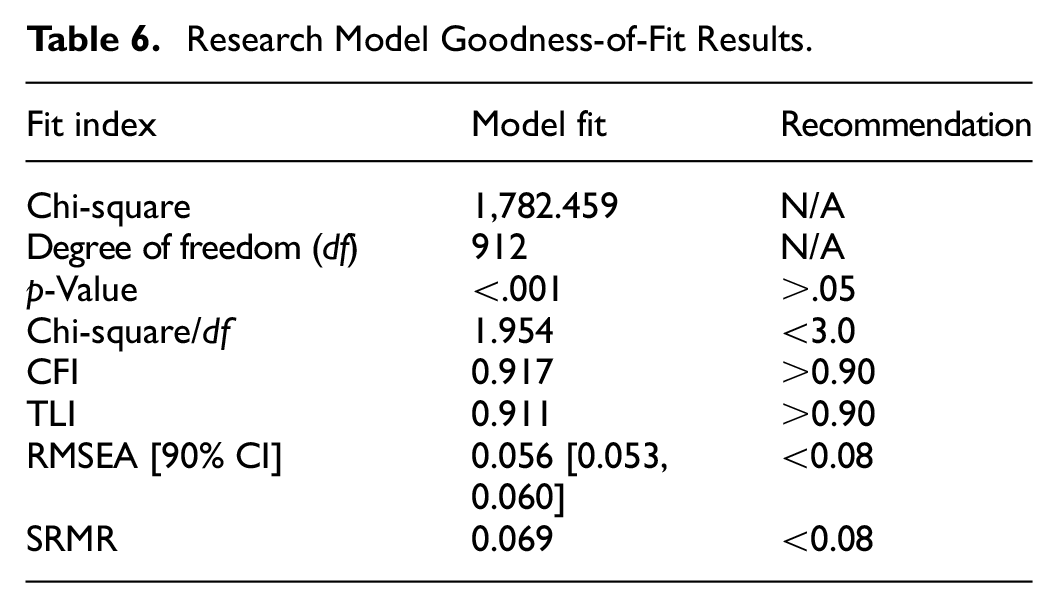

To ensure the validity of the measurement instrument and research model assumptions, we performed model validation based on confirmatory factor analysis. The analysis was performed using the R statistical program (R Core Team, 2021) and the lavaan package (Rosseel, 2012). Four evaluation criteria were employed to assess the measurement model’s fit: root mean square error of approximation (RMSEA; Browne & Cudeck, 1993), standardized root mean square residual (SRMR; Hu & Bentler, 1999), comparative fit index (CFI; Bentler, 1990), and Tucker-Lewis index (TLI; Bentler & Bonett, 1980).
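As a rough illustration of how three of these fit indices relate to the model and baseline chi-square statistics, the following Python sketch applies the commonly cited textbook formulas. It is not the authors' lavaan code; the function names and the numbers in the example are hypothetical, and some software uses N rather than N - 1 in the RMSEA denominator.

```python
from math import sqrt

def rmsea(chi2, df, n):
    """RMSEA from the model chi-square, its degrees of freedom, and sample size n."""
    return sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))

def cfi(chi2, df, chi2_base, df_base):
    """CFI: compares model misfit with the misfit of the baseline (null) model."""
    d_model = max(chi2 - df, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    return 1.0 if d_base == 0 else 1.0 - d_model / d_base

def tli(chi2, df, chi2_base, df_base):
    """TLI: based on chi-square/df ratios of the baseline and fitted models."""
    ratio_base = chi2_base / df_base
    ratio_model = chi2 / df
    return (ratio_base - ratio_model) / (ratio_base - 1.0)

# Hypothetical inputs for illustration only (not values from this study).
example = dict(chi2=100.0, df=50, chi2_base=1050.0, df_base=66)
print("RMSEA:", round(rmsea(example["chi2"], example["df"], n=301), 3))
print("CFI:  ", round(cfi(**example), 3))
print("TLI:  ", round(tli(**example), 3))
```

SRMR is omitted because it requires the full observed and model-implied correlation matrices rather than summary chi-square values.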

The factor loadings of the latent variables were greater than .50, confirming their contributions to the measurement. The CFI (.933) and TLI (.925) values of the measurement model exceeded the criterion of .90, whereas its RMSEA (.058 [90% CI: .053, .062]) and SRMR (.051) values were below their prescribed thresholds (Table 3). Reliability was evaluated using Cronbach’s alpha, composite reliability (CR), and average variance extracted (AVE), and all latent variables met or exceeded the recommended thresholds for alpha (.70), CR (.70), and AVE (.50; Table 4).
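The three reliability statistics can be sketched in a few lines of Python. This is a minimal illustration using the standard formulas for standardized loadings (CR and AVE) and raw item scores (Cronbach's alpha); the function names, loadings, and responses are hypothetical and are not taken from this study's data.

```python
from statistics import variance

def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error)

def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)

def cronbach_alpha(items):
    """Alpha from raw scores; `items` holds one list of responses per item."""
    k = len(items)
    totals = [sum(row) for row in zip(*items)]
    item_variance = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_variance / variance(totals))

# Hypothetical standardized loadings for one construct (illustration only).
loadings = [0.8, 0.7, 0.9]
cr = composite_reliability(loadings)
ave = average_variance_extracted(loadings)
print("CR meets .70:", cr >= 0.70, "| AVE meets .50:", ave >= 0.50)

# Hypothetical 7-point responses from five respondents to three items.
responses = [[5, 6, 3, 7, 4], [5, 7, 2, 6, 4], [6, 6, 3, 7, 5]]
print("alpha meets .70:", cronbach_alpha(responses) >= 0.70)
```

Computing CR and AVE from standardized loadings assumes uncorrelated measurement errors within a construct, which is the usual simplification for these indices.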

Table 3. Results of Measurement Model Fit Analysis.

Table 4. Results of Measurement Model Analysis and Reliability Validation.

To test construct validity, specifically its discriminant component, we compared the correlation coefficients among the latent variables with the square root of each variable’s AVE (Fornell & Larcker, 1981). The results of the analysis are presented in Table 5. Most relationships met the criterion, except for the correlation between facilitating conditions and the ChatGPT evaluation. Despite the potential issue in this specific relationship, the overall model fit and the other relationships were deemed reasonable for the analysis.
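The Fornell and Larcker (1981) comparison can be sketched as follows: a pair of constructs fails the check when their correlation is at least as large as the square root of either construct's AVE. The function name, construct labels, and numbers below are hypothetical placeholders, not the values reported in Table 5.

```python
from math import sqrt

def fornell_larcker_violations(ave, correlations):
    """Return construct pairs whose correlation reaches or exceeds
    the smaller of the two square roots of AVE."""
    violations = []
    for (a, b), r in correlations.items():
        if abs(r) >= min(sqrt(ave[a]), sqrt(ave[b])):
            violations.append((a, b))
    return violations

# Hypothetical AVE values and inter-construct correlations (illustration only).
ave = {"FC": 0.55, "EVAL": 0.70, "TRUST": 0.72}
correlations = {
    ("FC", "EVAL"): 0.80,
    ("FC", "TRUST"): 0.50,
    ("EVAL", "TRUST"): 0.60,
}
print(fornell_larcker_violations(ave, correlations))
```

In this made-up example only the FC and EVAL pair is flagged, because 0.80 exceeds the square root of 0.55.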

Correlation Coefficients and Validation of Measurement Variables.

After confirming the appropriate model fit and measurement instrument reliability, the research model was constructed and tested using statistical significance tests of the hypothesized paths.

The research model analysis involved establishing hypothesized paths among the latent variables identified in the measurement model while controlling for gender, age, subjective socioeconomic status, education level, and monthly household income. The fit of the research model met the same evaluation criteria as the measurement instrument (Kline, 2015). The fit of the research model is presented in Table 6. The results showed that warmth (β = .519,

Research Model Goodness-of-Fit Results.

Further, performance expectancy (β = .190,

Research Model Analysis and Hypothesis Testing.

General Discussion

Summary of Findings

The remarkable capabilities of ChatGPT have attracted attention in the field of AI advancements. Beyond its linguistic prowess, ChatGPT’s ability to understand user intentions and provide contextually relevant responses sets it apart. This capacity transforms AI from a machine into a potential social entity that resembles a communicative robot. Even video game non-player characters (NPCs) have evolved to interpret user conversations naturally, reflecting the growing trend in real-voice communication services (Forbes, 2023).

Our results confirmed the statistical significance of most of the hypotheses. Notably, the warmth and competence dimensions assessed by the SCM significantly impacted both affective trust and cognitive performance expectancy regarding ChatGPT. Additionally, performance expectancy, facilitating conditions, and trust significantly affected the evaluation of ChatGPT. Furthermore, the impact of the evaluation on the intention to use ChatGPT was considerable. These findings suggest that consumer evaluations of ChatGPT are primarily influenced by performance expectancy and facilitating conditions, with trust playing a vital role at the social level. Essentially, higher expectations of ChatGPT’s performance promote its adoption, especially under favorable contextual conditions.

Analysis of the research model with “actual experience” as the dependent variable revealed no significant difference in the model fit. However, the effect of intention to use on experience was significant (β = .204,

However, the direct effects of effort expectancy and social influence on ChatGPT evaluation were not statistically significant. Similarly, demographic variables did not significantly affect the outcomes. The lack of a significant impact of effort expectancy on attitudes may stem from participants’ reasonable access to online activities and their familiarity with ChatGPT. Notably, the effect of social influence was more pronounced on the intention to use than on ChatGPT evaluation, implying that social influence directly predicts usage rather than affecting the evaluation process.

Overall, this study contributes to the understanding of AI’s evolving social attributes and sheds light on the factors shaping the acceptance of AI technologies. The findings highlight the intricate interplay between performance expectancy, facilitating conditions, and social trust in shaping user perceptions and intentions to adopt AI services, such as ChatGPT.

Theoretical and Practical Implications

This study offers significant theoretical contributions by integrating UTAUT and SCM. This integration broadens the application of the UTAUT framework, including its extensions such as UTAUT2 (Duarte & Pinho, 2019), by incorporating a social evaluation perspective through warmth and competence dimensions. While UTAUT2 typically accounts for factors such as psychological needs and site quality, our study highlights the importance of evaluating technologies as social entities (Nass & Moon, 2000). Specifically, this study demonstrates that perceived warmth and competence significantly influence user attitudes and trust in ChatGPT, offering a novel psychological framework for understanding AI adoption.

Moreover, the findings suggest that as AI systems like ChatGPT become more sophisticated in understanding and responding to human intent, traditional factors, such as effort expectancy, may play a diminished role. This insight invites future research to further explore the combined effects of conversational quality, user expertise, and expectations on technology adoption.

On a practical level, this study addresses a critical barrier to AI adoption: the perception that AI lacks human-like understanding and empathy (Granulo et al., 2021). Our findings indicate that emphasizing warmth and adaptability in AI design can enhance user trust and acceptance. Companies adopting AI for customer service, product recommendations, or other interactions should tailor their approach based on their industry needs, focusing on warmth to foster emotional connections or competence to build trust in technical expertise (Wang et al., 2017).

Additionally, businesses should strive for a balance between cognitive and emotional trust. For instance, ChatGPT’s ability to adapt responses to conversational contexts demonstrates the potential of AI to enhance social presence. Although ChatGPT does not possess inherent human qualities, its adaptive interaction capabilities enable users to perceive it as more personable and relatable. This highlights the importance of designing AI systems that can tailor responses to conversational contexts in order to improve user satisfaction and foster long-term engagement.

These insights are vital for industries that aim to implement AI systems that are both reliable and engaging, ensuring positive user experience and sustained adoption.

Limitations and Future Research Directions

This study has several limitations that warrant acknowledgment. First, the survey participants were sourced from online research panels, which potentially limited the generalizability of the findings to a more extensive consumer population. This approach may also introduce a bias toward individuals with higher ICT-based information-processing capabilities. In addition, relying on participants’ pre-existing evaluations of ChatGPT rather than their experiences after using it may have resulted in disparities between expectations and usage experiences.

Furthermore, despite the identification of demographic variables such as gender, age, socioeconomic status, and education level, the constraints of the participant pool hindered the exploration of potential moderating effects. Subsequent research could delve into the interactions among these variables to examine whether varying socioeconomic and educational backgrounds influenced the study’s primary variables. Given that a substantial proportion of participants had prior experience with ChatGPT, personal experiences, attitudes, and usability across diverse contexts could impact their usage intentions.

Future research should consider issues related to liabilities and trust failures in AI. Trustworthy AI is accountable for its outcomes. However, prior research has indicated that failures may be ascribed differently to humans and algorithms. Anthropomorphism can influence perceptions of accountability. Therefore, it is important to investigate failures in AI services. The social ramifications of anthropomorphizing AI also require examination, particularly when considering incidents involving AI that encourage self-harm. Given that the interplay between brands and consumers shapes technology acceptance, comprehending negative attitudes toward AI technology and its implications is imperative.

Moreover, gender-specific stereotypes often influence the stereotype content model employed in social evaluations. While reducing stereotype bias is essential, service providers may need to consider user satisfaction associated with stronger gender stereotypes. As AI continues to evolve, addressing its evolving social roles and perceptions has become indispensable. Thus, addressing these limitations through further research will advance our understanding of AI-based technology adoption and its broad social implications.

Appendix

Measurement Items.

The following table presents the complete list of measurement items used in the study. All items were adapted from previously validated scales, with the original sources noted in parentheses next to each construct.

| Variables | Items |
|---|---|
| Performance expectancy (Venkatesh et al., 2003) | ChatGPT helps me get things done. |
| | ChatGPT speeds up my work. |
| | ChatGPT improves my work productivity. |
| | ChatGPT helps me manage my career. |
| Effort expectancy (Venkatesh et al., 2003) | I can easily learn how to use ChatGPT. |
| | I can clearly understand how to use ChatGPT. |
| | I find it easy to use ChatGPT. |
| | I think I can use ChatGPT skillfully. |
| Social influence (Venkatesh et al., 2003) | People I care about think ChatGPT should be adopted in my work. |
| | People who have a lot of influence on me think that I should use ChatGPT for my work. |
| | People I care about think that I should use ChatGPT for my work. |
| Facilitating conditions (Venkatesh et al., 2003) | I have the necessary resources to use ChatGPT. |
| | I have the knowledge to use ChatGPT. |
| | ChatGPT works well with other technologies I use. |
| | I can get help from others when I have difficulty using ChatGPT. |
| Attitude toward using technology (Venkatesh et al., 2003) | I am glad to see ChatGPT. |
| | I am satisfied with ChatGPT’s efficiency. |
| | I like ChatGPT. |
| | I am satisfied with ChatGPT. |
| Intention to use (Venkatesh et al., 2003) | I have the intention to use ChatGPT. |
| | I will endeavor to use ChatGPT in various parts of my daily life. |
| | I plan to use ChatGPT frequently in my work or business. |
| | I am likely to use ChatGPT. |
| Trust (Venkatesh et al., 2012) | I think I can trust ChatGPT. |
| | I trust ChatGPT. |
| | I do not doubt ChatGPT’s honesty. |
| | I trust ChatGPT and will utilize it to get the job done right. |
| | I believe ChatGPT can produce the results I want. |
| Warmth (Cuddy et al., 2008) | ChatGPT is friendly. |
| | ChatGPT has good intentions. |
| | ChatGPT is trustworthy. |
| | ChatGPT is compassionate. |
| | ChatGPT is gentle. |
| | ChatGPT is truthful. |
| Competence (Cuddy et al., 2008) | ChatGPT is competent. |
| | ChatGPT is efficient. |
| | ChatGPT is professional. |
| | ChatGPT is effective. |
| | ChatGPT is intelligent. |
| | ChatGPT is skillful. |

Ethical Considerations

Our research was reviewed and approved by the Korean Psychological Association as per the submitted research proposal. Data were collected remotely by a professional survey agency. Consequently, we believe there are no ethical concerns, as the online survey company adhered to its internal regulations for proper data collection. During the data collection process conducted by the online survey company, informed consent was obtained from all participants. This ensured that each participant was fully aware of the study’s purpose and methodology and voluntarily agreed to contribute their data.

Funding

This work was supported by the Ministry of Education of the Republic of Korea and the National Research Foundation of Korea (NRF-2024S1A5C3A03046579). The present research was conducted with the support of the Research Grant of Kwangwoon University in 2023.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated and/or analyzed during the current study are not publicly available owing to privacy considerations. However, they are available from the corresponding author upon request.