Abstract

Psychologists, with their expertise in statistics and in human perception and behavior, can contribute valuable insights to the development of innovative and useful artificial intelligence (AI) systems. Therefore, we need to raise attention and curiosity for AI among psychology students and foster their willingness to engage with it. This requires identifying approaches to integrate a general understanding of AI technology into formal psychological training and education. This study investigated to what extent psychology students currently accept and use AI and what affects their perception and usage. To this end, an AI acceptance model based on established technology acceptance models was developed and tested in a sample of 218 psychology students. An acceptable fit with the data was found for an adapted version of the model. Perceived usefulness and ease of use were most predictive of the students’ attitude towards AI; attitude itself, as well as perceived usefulness, social norm, and perceived knowledge, predicted the intention to use AI. In summary, we identified relevant factors for designing AI training approaches in psychology curricula. In this way, possible restraints regarding the use of AI can be reduced and its beneficial opportunities exploited in psychological contexts.

Hardly any other emerging technology has attracted as much attention and gained similar significance in recent years as artificial intelligence (AI; Fast & Horvitz, 2017). Advanced AI and analytics are predominant trends playing a decisive role for businesses, research, and governments who are all interested in an autonomous or semi-autonomous analysis, interpretation, and utilization of large amounts of data (Panetta, 2019). There are already numerous applications for AI in the fields of business analytics, medicine, commerce, administration, education, as well as in the work- and everyday life of most people. But still, unlike any other technology, AI seems to elicit ambiguous and mixed feelings in users (Lichtenthaler, 2020): People are worried about a loss of control, have ethical concerns, and fear a negative impact of AI on work, i.e., the feeling of being redundant. However, they also have high hopes for AI in healthcare and education (Fast & Horvitz, 2017; Cave & Dihal, 2019) and, according to Oracle’s AI@Work Study 2019, about 50% of the respondents are already using some form of AI technology at work. Furthermore, the concept of AI is very large and diffuse. People might derive multiple mental representations from it, ranging from robots and autonomous vehicles to machine learning algorithms, and their hopes and fears do not seem to relate only to specific technologies and applications, but to the impact of AI on the future of the whole society.

We, therefore, presume that people perceive, accept, and use AI-based technology differently from other technologies and that other factors play a crucial role in its adoption. In this context, psychologists can also play a fundamental role regarding the deployment of AI (Mruk, 1987), i.e., in understanding when and how people are more likely to adopt AI technology and when they rather tend to feel intimidated or subjugated by it. The opportunities and challenges of AI concern not only the field of industrial and organizational psychology, which is already involved with AI-based human resource (HR) management solutions (Kersting, 2020), but also the field of clinical psychology (Luxton, 2014). Virtual patients support novice therapists in their clinical training (Johansson et al., 2017a), machine learning algorithms provide reliable predictions based on clinical and biological data (Dwyer et al., 2018), and anti-depressive chatbots support patients in their therapy or psychological treatment (Bendig et al., 2019). However, since information technology and computer science are usually not crucial components of the formal training of psychologists, psychologists may be uncomfortable in the technological context of AI and may not take advantage of its beneficial opportunities (Eickhoff, 2020; Kersting, 2020; Landers, 2019). On the contrary, they might, like many other people without extensive knowledge or further training in computer science, consider the general idea of AI to be something mystical or threatening (Orben, 2020). With this study, we wanted to gain insights into psychology students’ attitudes towards AI, their intention to use this technology, and the factors that affect both. To this end, we developed a model combining existing approaches and research findings from psychology and computer science to explain attitudes towards AI and the intention to use it. We tested the model in a sample of psychology students. 
In doing so, we scrutinized the role of perceived usefulness, which has received considerable attention in research investigating technology use in the health and therapy sector (Edmunds et al., 2012; Holden & Karsh, 2010; Liu et al., 2015), and also explored social influences and perceived knowledge regarding AI, which have attracted little attention in these contexts up to now. Based on this research, we discuss whether psychology students need more knowledge and competencies regarding AI technology and give recommendations for the development of targeted interventions in the context of psychological training and education.

Existing Approaches and Theories Regarding Technology Acceptance

Researchers in the fields of computer science and human–computer interaction have examined and discussed concepts to explain users’ acceptance and use of recent information technology for more than 40 years. We first provide an overview of existing approaches and theories from psychology and computer science that aim at explaining users’ attitudes towards technology and their willingness to use it.

Theory of reasoned action. One prominent theory applied in the context of technology acceptance is the theory of reasoned action (TRA) by Fishbein and Ajzen (1975). These authors developed a theory with a limited set of constructs to predict and understand health-related behaviors. They postulated that the theory can be applied to any behavior of interest (Fishbein & Ajzen, 1975), including the adoption and use of new technologies. The theory postulates that the combination of attitudes and norms produces behavioral intentions, which in turn influence actual behavior (Ajzen & Fishbein, 1980). Attitudes denote people's positive or negative evaluation of performing a particular behavior. Fishbein and Ajzen (1975) differentiate three components that shape the attitude: an affective, a cognitive, and a behavioral component. Norms are built on beliefs about others’ views of a behavior and their actual performance of this behavior. In particular, whether important individuals or groups in the person's life approve or condemn the behavior determines the perceived social pressure. In his theory of planned behavior (TPB), Ajzen (1991) added a third factor influencing a person's intention to engage in a particular behavior: perceived behavioral control. This third factor corresponds to a high or low sense of self-efficacy (Bandura, 1986) and is determined by facilitating or inhibiting personal as well as environmental factors that affect the execution of the behavior (Ajzen, 1991). In 2010, Ajzen and Fishbein presented a unified conceptual framework, the reasoned action approach (RAA), which explains the strength of a person's intention to perform a specific behavior by a favorable attitude, a supportive norm, and sufficient perceived behavioral control. Within this framework, the relative importance of each factor varies with personal or external factors and the behavior in question. 
They added the variable “actual control” which includes a person's skills and abilities as well as environmental facilitators and constraints. To summarize the ideas of Fishbein and Ajzen, people form behavioral intentions based on attitudinal, normative, and control considerations. This framework has been applied successfully in many contexts. Meta-analyses investigating the predictive power report an amount of explained variance in behavioral intention ranging from 48% to 59% and of explained variance in actual behavior ranging from 24% to 33% (e.g., Hagger et al., 2018; McEachan et al., 2016; Plotnikoff et al., 2013). Although Fishbein and Ajzen provided a good general framework to explain and predict behavioral intentions, they left the possibility of adding more behavior-specific predictors explicitly open as long as a causal relationship can be assumed and there is conceptual independence of the theory's existing predictors and the added predictors (Ajzen, 2011). In accordance with this, we focused on approaches with more specific predictors to explain and predict technology use.

Technology acceptance model. Based on the TRA, Davis (1985; 1989) developed a model explaining users’ acceptance or rejection of computer systems: the technology acceptance model (TAM). According to the TAM, attitudes towards technology are determined by perceived usefulness and perceived ease of use. Perceived usefulness is defined as “the degree to which an individual believes that using a particular system would enhance his or her job performance” (Davis, 1985, p. 26) or improve his or her productivity (Davis et al., 1989). Perceived ease of use refers to “the degree to which an individual believes that using a particular system would be free of physical and mental effort” (Davis, 1985, p. 26). Perceived ease of use is hypothesized to have a direct effect on the perceived usefulness, because a system that is easier and more effective to use, is correspondingly more useful for its users (Davis, 1985). This effect has been confirmed in a considerable number of studies (e.g., Lee et al., 2003). Meta-analyses report about 48%–50% explained variance of users’ behavioral intention (e.g., King & He, 2006; Schepers & Wetzels, 2007), and 30% explained variance of actual usage (e.g., Schepers & Wetzels, 2007). The TAM has also been modified and extended, for example by Taylor and Todd (1995) who combined TAM and TRA/TPB in the augmented TAM. They added subjective norms and perceived behavioral control as further determinants of behavioral intention and actual technology use.

Unified theory of acceptance and use of technology. In 2003, Venkatesh and colleagues reviewed eight existing models explaining user acceptance and use of technology (including the aforementioned models and theories) with the main goal of identifying major determinants of intention and key moderating variables. For every included model, they found at least one temporally stable construct with a strong influence on the users’ intention to use a specific technology. They also found strong conceptual overlaps between those constructs. Based on this review, Venkatesh et al. (2003) proposed three major determinants of user intention in their unified theory of acceptance and use of technology (UTAUT): performance expectancy, effort expectancy, and social influence. Performance expectancy resembles the perceived usefulness of TAM. Effort expectancy pertains to “the degree of ease associated with the use of the system” (Venkatesh et al., 2003, p. 450), which is comparable to perceived ease of use but is also related to the perceived behavioral control component of TPB, as it includes the perceived probability of internal and external difficulties and obstacles. The third concept, social influence, is similar to the perceived norm from TRA. A fourth variable, facilitating conditions, refers to “the degree to which an individual believes that an organizational and technical infrastructure exists to support the use of the system” (Venkatesh et al., 2003, p. 453). However, Venkatesh et al. (2003) argue that facilitating conditions are non-significant predictors of intention. In their model, the authors also list key moderators influencing the relationship between the outlined variables and behavioral intention (gender, age, experience, and voluntariness of use). The UTAUT, contrary to TRA and TAM, does not include attitude as a mediating variable. Instead, it proposes direct relationships of performance and effort expectancy with behavioral intention. 
Like the TAM, the model has by now become an established approach to explaining what influences whether users intend to use a technology. Venkatesh et al. (2003) report an overall explained variance in users’ intention to use a technology of 70%. Meta-analyses by Dwivedi et al. (2011; 2019) report values of about 38%. The explained variance in actual behavior reported by Venkatesh et al. (2003) is 48%. Dwivedi et al. (2019) and Khechine et al. (2016) both found 21% explained variance in user behavior, based on 162 and 74 empirical studies, respectively. Alam et al. (2020) applied the UTAUT to explore the antecedents of the intention to use AI in recruiting. In their study with 226 HR professionals in Bangladesh, they report 36% of explained variance in the intention to use AI and 21% of explained variance in the actual use of AI in recruitment.

Framework of an AI Acceptance Model

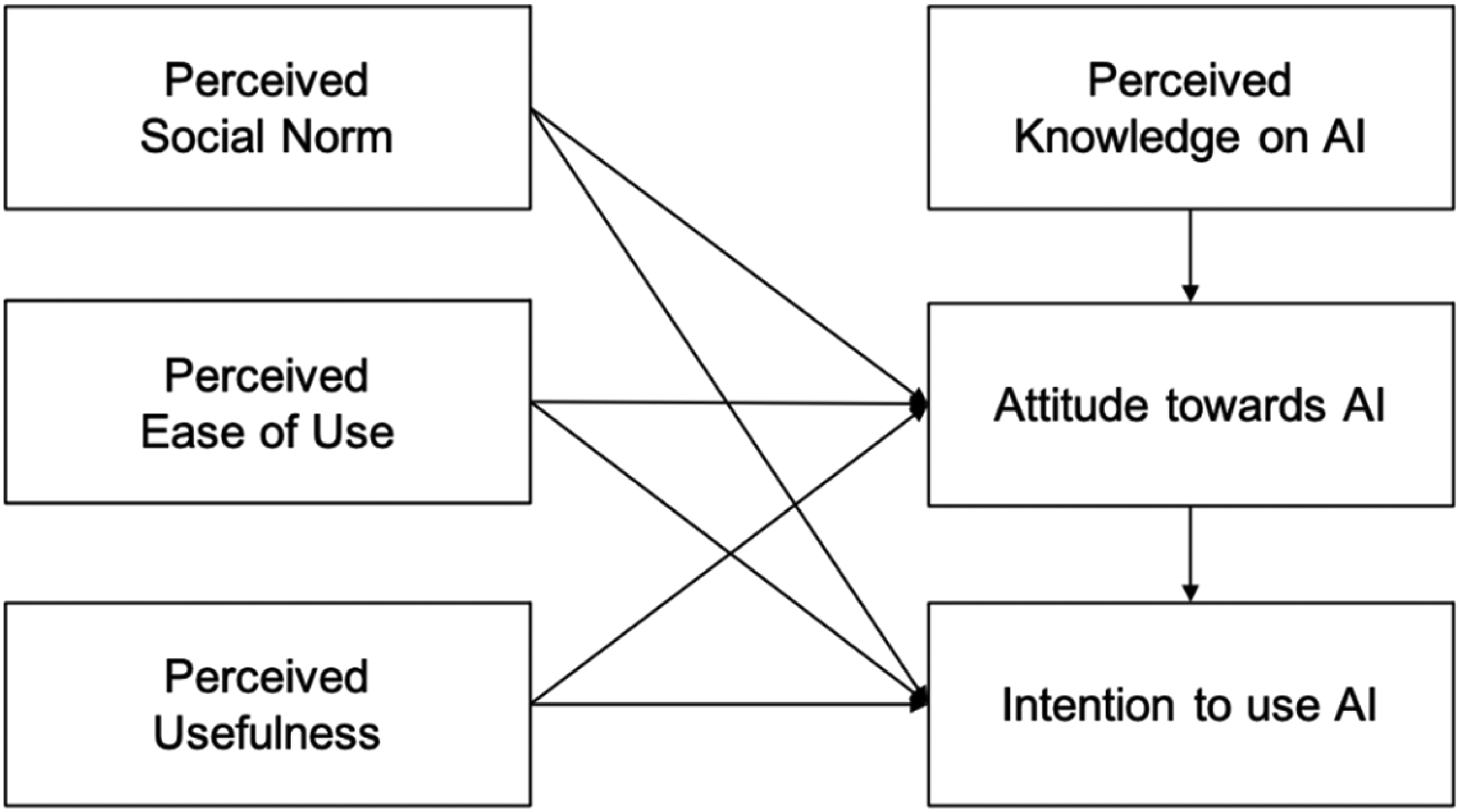

Based on the different models and their limitations, we hypothesized and tested an integrative model to explain psychology students’ AI acceptance and the circumstances that determine whether they intend to use AI. As the former models differed regarding the included variables and their relationships (e.g., the role of attitude), we combined them to investigate which factors are relevant in the AI context. The UTAUT, as the most comprehensive model, seemed to be a good starting point, and its major variables were adopted (performance expectancy/perceived usefulness, effort expectancy/perceived ease of use, social influence/perceived norm, and behavioral intention, here the intention to use AI; see the suggested integrative model in Figure 1). The intention to use AI is defined as a goal, purpose, or plan to use AI or to learn more about it. In addition, we adopted attitude towards AI technology from the TRA/TAM, which should also be influenced by perceived ease of use, perceived usefulness, and perceived social norm and should be a predictor of a student's intention to use AI technology. Because most psychology students will not have much prior conscious experience with AI, it is unlikely that their explicit attitudes towards AI would be derived from knowledge, skills, and experience. These explicit beliefs, thoughts, and assumptions about AI technology represent the cognitive component of attitudes. Rather, we expect that the affective component of attitude towards AI might play an important role in this target group. This component is mainly based on rather implicit and diffuse mental representations about what AI is and how people feel about it (Spatola & Wudarczyk, 2020). Accordingly, when operationalizing attitudes towards AI in this study, we considered affective as well as cognitive aspects of attitudes (Svenningsson et al., 2021). 
Furthermore, because AI might elicit mixed feelings, we assume that the component attitude comprises positive as well as negative subfacets.

Figure 1. Schematic representation of the integrative theoretical artificial intelligence (AI) acceptance model.

In the context of AI, perceived usefulness is defined as the degree to which an individual believes that using AI will help and benefit him or her. Perceived ease of use pertains to the perceived ability, autonomy, and control when using AI and to the perception that the AI system works well and facilitates the usage. One further variable included in the proposed model is a person's perceived knowledge of AI, because it is argued that a person's attitude towards a topic, behavior, or object also depends on his or her knowledge about it. This idea is based on the knowledge attitude practice model (KAP; Kaliyaperumal, 2004; Schwartz, 1976) and the concept of technology-related knowledge, skills, and attitudes (KSA; Seufert, Guggemos, & Sailer, 2021). Accordingly, a user's attitude towards AI may be determined not only by the perceived usefulness and ease of use of AI technology, but also by his or her knowledge about the general benefits and risks of AI. This relationship between knowledge and attitude may particularly apply to AI because, in the context of AI, ethical and moral issues play a significant role. In the model, this is included as “perceived knowledge of AI.” This knowledge can be independent of the student's individual use and may, for example, be based on information about legal, ethical, or moral aspects of AI. It also does not reflect a student's actual knowledge of AI, but his or her perceived expertise regarding AI. Considering the effects of perceived knowledge and perceived social norm on attitude is a new approach to explaining attitude towards a technology.

Objectives of the Present Study

In the current study, we tested whether the proposed model can explain psychology students’ acceptance and (intended) use of AI. According to the model, the student's attitude towards AI and his or her intention to use AI should be related to the perceived ease of use, perceived usefulness, and the perceived social norm regarding AI. Furthermore, attitude towards AI should be affected by the student's perceived knowledge and should itself influence the intention. We presume that attitude comprises a distinguishable affective component and a cognitive component.
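The hypothesized relations can be written as two linear path equations. This is only a sketch of the structure described above: the coefficient symbols β and γ and the residual terms ε are notation introduced here for illustration, and the variable abbreviations follow the note to Table 1 (Att = attitude towards AI, Int = intention to use AI, EoU = perceived ease of use, U = perceived usefulness, PN = perceived social norm, PK = perceived knowledge).

```latex
\begin{align}
\text{Att} &= \beta_{1}\,\text{EoU} + \beta_{2}\,\text{U} + \beta_{3}\,\text{PN} + \beta_{4}\,\text{PK} + \varepsilon_{\text{Att}} \\
\text{Int} &= \gamma_{1}\,\text{EoU} + \gamma_{2}\,\text{U} + \gamma_{3}\,\text{PN} + \gamma_{4}\,\text{Att} + \varepsilon_{\text{Int}}
\end{align}
```

In this notation, the indirect effect of, for example, perceived usefulness on intention via attitude corresponds to the product β₂γ₄.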

Material and Methods

Participants

A total of 218 psychology students participated in this study via an online questionnaire. This number is sufficient for a path analysis following the advice by Boomsma and Hoogland (2001) and Kline (2011), who propose a minimum sample size of 200 participants for structural equation models. The survey was distributed via mailing lists of psychological faculties and social media. Before the survey, all participants gave written informed consent in accordance with the recommendations of the Declaration of Helsinki.

Due to extreme values or skewed data, as outlined in the section on statistical methods, two respondents had to be excluded from further analyses. In the final sample (N = 216), respondents were, on average, 24.2 years old (SD = 4.5). Of these, 75.9% were female, 23.3% were male, and 0.5% indicated another gender. In total, 61.2% were graduate students (pursuing their master's degree) and 36.9% were undergraduate students (pursuing their bachelor's degree) of psychology; 1.9% studied psychology as a minor subject. Thirty-eight undergraduate psychology students from the Universities of Wuerzburg and Heidelberg participated in this study for course credit, 82 graduate psychology students from the University of Heidelberg participated in the context of a lecture, and 96 psychology students from other German universities participated voluntarily in this study. All participants were guaranteed confidentiality and were also informed that participation was voluntary and that they could withdraw at any time during the data collection.

Procedure

All participants received a questionnaire collecting demographic data (age, gender, and education) and data regarding the constructs of the suggested model (perceived knowledge of AI, attitudes towards AI, intention to use AI, perceived social norm regarding AI, perceived ease of use of AI, and perceived usefulness of AI). On average, participants needed 5.8 min (SD = 2.3) to complete this survey.

Measures

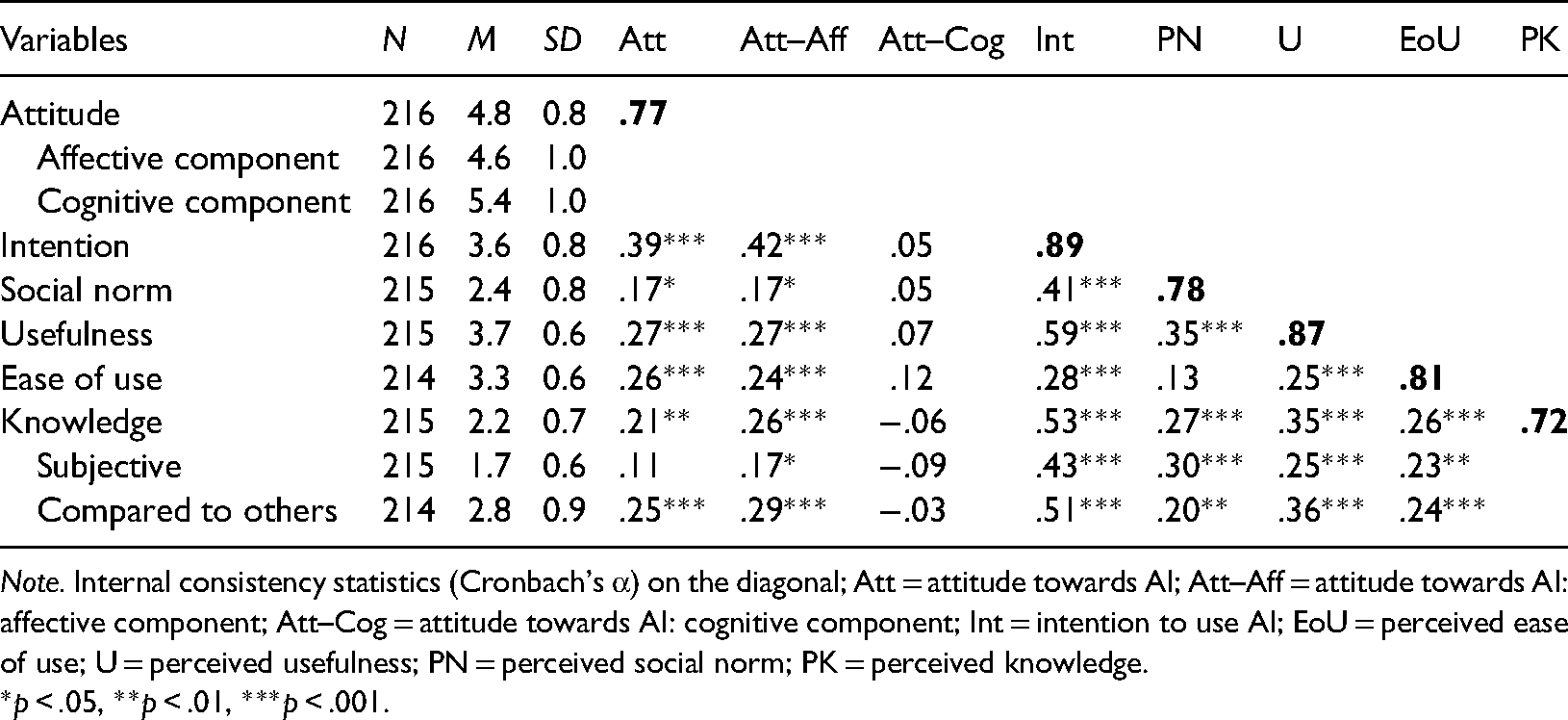

To ensure the internal validity and consistency of the measures, the measurement items for attitude towards AI, intention to use AI, perceived social norm, perceived usefulness, and perceived ease of use were adopted from existing measures derived from the technology acceptance literature (Davis, 1989; Fishbein & Ajzen, 2010; Venkatesh et al., 2003) that have been used in numerous studies before (e.g., Bach et al., 2016; Haas et al., 2013; Hoque & Sorwar, 2017; Hu et al., 1999; Khalilzadeh et al., 2017; Madigan et al., 2016; Mishra et al., 2014; Rahman et al., 2017; Rauschnabel & Ro, 2016; Robinson, 2006; Shih & Fang, 2006; Teo & van Schaik, 2012). Items from the UTAUT were mostly adapted from the German versions by Duyck et al. (2008) and Fischbach (2019). The items were modified as little as possible to adjust them to the context of this study. Nevertheless, to achieve high discriminant validity and reliability, we added some items of our own or items from other scales. The internal consistency of the scales was tested with Cronbach's α. Values are reported in Table 1. Unless otherwise specified, responses were given on a 5-point Likert scale ranging from strongly disagree (1) to strongly agree (5).

Table 1. Descriptive statistics, correlations, and internal consistency.

Note. Internal consistency statistics (Cronbach's α) on the diagonal; Att = attitude towards AI; Att–Aff = attitude towards AI: affective component; Att–Cog = attitude towards AI: cognitive component; Int = intention to use AI; EoU = perceived ease of use; U = perceived usefulness; PN = perceived social norm; PK = perceived knowledge.

*p < .05, **p < .01, ***p < .001.

Attitudes Towards AI. To assess participants’ attitudes towards AI, we used the evaluative semantic differential method with a set of eight items. The respondents received a set of bipolar pairs of adjectives and evaluated AI based on these adjectives. Adjective pairs were mainly taken from the Godspeed Scale, a scale developed by Bartneck et al. (2009) to measure attitude towards robots. Responses were given on a 7-point Likert scale. We used three items each from the subscales likeability (e.g., “AI is unfriendly (1)–friendly (7)”) and perceived intelligence (e.g., “AI is incompetent (1)–competent (7)”), and two items from the subscale perceived safety (e.g., “When it comes to AI, I feel anxious (1)–relaxed (7)”). The likeability and the perceived safety subscales encompass affective components of attitudes, whereas the perceived intelligence subscale focuses on cognitive aspects based on explicit beliefs and ideas about AI.

Intention to Use AI. Intention to use AI was measured with four items adapted from the UTAUT scale (Venkatesh et al., 2003, e.g., “I predict that I would use AI-based applications in the coming months,” or “I intend to use AI-based applications in the next few months.”). Two additional items were added for control purposes and to meet the definition of the construct in this study: One reversed control item (“I would not use AI-based applications if I could avoid it.”) and one item capturing the intention to become more involved with the topic of AI (“In the future, I intend to become more involved with AI and AI-based applications”).

Perceived Social Norm. Perceived social norm was evaluated with four items from the UTAUT scale (Venkatesh et al., 2003). We adapted the items for a university environment (e.g., “People who influence my behavior think that I should use AI-based applications.”).

Perceived Usefulness. Perceived usefulness of AI was measured with seven items derived from the UTAUT (Venkatesh et al., 2003) and TAM (Davis et al., 1989) scales. The items accounted for aspects of generally perceived usefulness (e.g., “I would find artificial intelligence useful.”), expected rises in performance, productivity (e.g., “Using the system would increase my productivity.”), effectivity, efficiency, as well as expected benefits of using AI.

Perceived Ease of Use. Perceived ease of use was assessed with five items adapted from the UTAUT scale (Venkatesh et al., 2003, e.g., “I would find AI-based applications easy to use.” or “Interacting with artificial intelligence would not require a lot of effort.”). Additionally, we added three items from the German version of the Computer User Self-Efficacy Scale (CUSE by Cassidy & Eachus, 2002; CUSE-D by Spannagel & Bescherer, 2009), which reflect a person's perceived competence and autonomy while using AI (e.g., “I would find it easy to get the artificial intelligence to do what I want it to do.” or “When using an AI-based application, I will feel that sometimes things just seem to happen and I don't know why”; with the latter being reversed).

Perceived Knowledge of AI. To assess participants’ knowledge of AI, we asked them to rate their knowledge regarding AI and machine learning on a standard assessment 5-point Likert scale for knowledge and skills, and to rate their knowledge compared to their peer group (“Please rate your knowledge of AI and machine learning [in comparison to your fellow students.]”).

Statistical Methods

Before performing statistical analyses, data cleansing and preparation measures were applied to avoid wrong conclusions due to skewed data. First, negatively worded items were reverse-coded to ensure that higher numbers represented a higher manifestation of the construct. A missing data analysis did not reveal critical accumulations of missing data: the maximum was four missing data points in one item of the perceived ease of use scale, and the overall relative frequency of missing data was 0.49%. Nonetheless, means of scales were computed only when at least half of the corresponding items were answered. Because of this procedure, most analyses were performed based on a sample of 214 persons. Afterward, we checked for response patterns indicating that participants did not answer seriously. Two participants stood out due to a conspicuously homogeneous response pattern and, additionally, showed very short response times (more than one standard deviation below the mean response time). They were excluded from further analyses to prevent incorrect or inconsistent data from distorting the results. To test whether the proposed factor structure of the distinct constructs is confirmed by the data, we conducted an exploratory factor analysis (EFA). Principal axis factoring (PAF) was used to check the factor loadings of items for constructs that are supposed to be closely related: perceived ease of use and perceived usefulness, as well as attitude towards AI and intention to use AI. Factor loadings below 0.40 were considered insufficient, and affected items were removed from the scales. Cronbach's α was then used to assess the reliability and internal consistency of each scale. Based on these scales, descriptive statistics and intercorrelations were computed.
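The scoring steps described above (reverse coding, scale means that are computed only when at least half of the items were answered, and Cronbach's α) can be sketched as follows. This is a minimal illustration in Python, not the authors' analysis script (the study used R); the function names are hypothetical, and computing α from complete response rows only is an assumption made for the example.

```python
from statistics import mean, variance

def reverse_code(score, low=1, high=5):
    """Reverse a Likert response so that higher values mean more of the construct."""
    return low + high - score

def scale_mean(items, min_share=0.5):
    """Mean of the answered items; None if fewer than min_share of the items were answered."""
    answered = [x for x in items if x is not None]
    if len(answered) < min_share * len(items):
        return None
    return mean(answered)

def cronbach_alpha(rows):
    """Cronbach's alpha from complete rows (one list of item scores per respondent)."""
    k = len(rows[0])
    item_vars = [variance([r[j] for r in rows]) for j in range(k)]
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)
```

For a respondent who answered two of four items, `scale_mean([4, None, 5, None])` still yields a scale score, whereas a respondent with only one answered item receives `None` and drops out of analyses involving that scale.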

To determine the general validity of the AI acceptance model, direct and indirect effects between the constructs were estimated. Given the sample size and the complexity of the model, path modeling instead of structural equation modeling (SEM) was used in order to achieve stable model estimates (Kline, 2011). Path analysis allows simultaneous tests of the relationships between the variables and of the overall model-fit. To obtain estimators for the regression coefficients and the explained variances, this study followed the recommendations by Rosseel (2012) and Werner et al. (2016) using R (R Core Team, 2019) and the lavaan package (Rosseel, 2012). Covariance matrices were estimated using maximum likelihood estimation with robust (Huber-White) standard errors. Because some variables (perceived social norm and intention to use AI) violated the normality assumption, a Yuan-Bentler correction of the test statistics was applied (Ullman & Bentler, 2003). To evaluate model-fit, we used the χ² statistic, which shows whether there are significant differences between the proposed model and the research data, and the comparative fit index (CFI). The CFI ranges from 0 to 1, with 1 indicating a perfect fit. Values greater than 0.95 are considered to indicate a good model-fit. The CFI is generally seen to be well suited to estimate the model-fit even in small samples (Hu & Bentler, 1999). Additionally, we report the root mean square error of approximation (RMSEA). According to Hu and Bentler (1999), values of 0.06 or less indicate a well-fitting model. To assess whether a nested model fits the data better than a full model, a χ² test based on the difference between the models’ χ² values was used. To compare non-nested models, we used the Bayesian information criterion (BIC; Schwarz, 1978). It comprises the model's likelihood function and a penalty term that increases with the number of parameters in the model and the sample size (Neath & Cavanaugh, 2012). 
The model with the lowest BIC is preferred as it represents a good compromise between an acceptable complexity of the model and little information loss. We followed the recommendations by Hoyle and Panter (1995) to report estimates for parameters with the corresponding standard errors (SE) and p-values. In an additional step, we investigated gender differences. To this end, we compared a constrained version of the model found in the previous steps, in which every path coefficient is fixed to a single value across groups, with a “free” version in which all parameters are estimated separately for each group and allowed to differ between groups. If the two models are not significantly different and the constrained model fits the data well, we can assume that there is no gender difference regarding the path coefficients.
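The fit indices and model comparisons described above follow standard textbook formulas, which can be sketched as below. This is an illustrative Python sketch, not the lavaan implementation: the function names are hypothetical, the formulas use unscaled χ² values (the study applied a Yuan-Bentler correction, so software output may differ), and the RMSEA is written with N − 1 in the denominator, although some programs use N.

```python
import math

def rmsea(chi2, df, n):
    """RMSEA from a model chi-square; uses n - 1 (some software uses n instead)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))

def cfi(chi2_model, df_model, chi2_base, df_base):
    """Comparative fit index relative to a baseline (independence) model."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    return 1.0 if d_base == 0.0 else 1.0 - d_model / d_base

def bic(log_likelihood, n_params, n):
    """Bayesian information criterion; the model with the lowest value is preferred."""
    return -2.0 * log_likelihood + n_params * math.log(n)

def chi2_sf(x, df):
    """Upper-tail probability of a chi-square value for integer df (closed-form recursion)."""
    if x <= 0:
        return 1.0
    z = x / 2.0
    if df % 2 == 1:
        a, q = 0.5, math.erfc(math.sqrt(z))   # df = 1
    else:
        a, q = 1.0, math.exp(-z)              # df = 2
    while a < df / 2.0:                        # add one term per two extra df
        q += z ** a * math.exp(-z) / math.gamma(a + 1.0)
        a += 1.0
    return q
```

With the values reported in the Results for the proposed model (χ²(3) = 41.93, N = 216), `rmsea(41.93, 3, 216)` evaluates to roughly 0.25. For a χ² difference test, the difference in χ² values and the difference in degrees of freedom are passed to `chi2_sf`; a plain difference of scaled test statistics is only an approximation.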

Results

First, we extensively tested the measures used. In the first EFA, eight items from the construct attitude towards AI and six items from the construct intention to use AI were investigated to confirm that the constructs can be seen as distinct. The Kaiser-Meyer-Olkin measure of sampling adequacy was 0.83, representing good adequacy for factor analysis. Bartlett's test of sphericity was significant (p < .001), indicating that correlations between items were sufficiently large for performing a PAF. A varimax-rotated three-factor solution accounted for 57.4% of the total variance. The scree plot suggested a one- or three-factor solution. The latter corresponds to our presumed factor structure with an intention to use AI scale, an affective component of attitudes, and a rather cognitive component of attitudes (see Supplemental Appendix A). One item of the perceived intelligence subscale of the attitude scale was dropped after the analysis due to insufficient factor loadings (“Artificial Intelligence is [responsible/irresponsible]”). One item of the intention to use AI scale (“I will not use AI applications if I can avoid it.”) could not be unambiguously assigned to the corresponding scale and was, thus, dropped as well.

In a second EFA, items from the two scales perceived ease of use and perceived usefulness were checked. The Kaiser-Meyer-Olkin measure (0.86) indicated good adequacy for factor analysis. Bartlett's test of sphericity was also significant (p < .001). Supplemental Appendix A shows a varimax-rotated factor analysis of the items, which accounted for 43.8% of the total variance. The item allocation largely confirms the postulated scales. However, one item of the perceived ease of use scale was dropped because of insufficient factor loadings (“When I use an AI application, I will feel that sometimes things seem to happen just like that, and I don't know why.”). Table 1 shows descriptive statistics and correlations for all constructs of the AI acceptance model. Values for Cronbach's α for all final scales ranged from 0.72 to 0.88, which supports acceptable internal reliability (Schmitt, 1996). To summarize, with small adaptations of existing measures, we were able to obtain reliable and valid estimates for most of the constructs in our proposed model. However, because the internal reliability was relatively poor for the perceived knowledge scale, we report descriptive statistics for its individual items as well. The first item measured the perceived subjective knowledge regarding AI and machine learning. In line with our expectations about this target group, participants indicated, on average, that they had little to no knowledge; only 7% of participants reported having advanced or excellent knowledge regarding AI. The second item measured the perceived knowledge regarding AI compared to the knowledge of the participants’ fellow students. Most estimated that they had less or as much knowledge as others; about a fifth said that they had more knowledge than others.

After we obtained our final scales, we conducted a Harman's single factor test (Harman, 1967) to test for a common method bias (Podsakoff et al., 2003) because all measures are self-reports. The total variance extracted by one factor was 24.12% and it is, thus, less than the recommended threshold of 50%. We, therefore, assume no common method bias in our data.
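The logic of Harman's single factor test can be sketched as follows. The sketch makes a simplifying assumption: it approximates the variance extracted by one unrotated factor with the largest eigenvalue of the item correlation matrix (a principal-component variant), obtained by power iteration. The function name `first_factor_share` and the correlation matrix are hypothetical; the extraction method and software used in the study may differ.

```python
import math


def first_factor_share(corr, iterations=500):
    """Share of total variance captured by the first principal component.

    corr: square correlation matrix as a list of lists.
    The largest eigenvalue is found by power iteration and divided by the
    number of items (the trace of a correlation matrix).
    """
    p = len(corr)
    # Asymmetric start vector to reduce the risk of starting orthogonal
    # to the dominant eigenvector (an assumption, not a guarantee).
    v = [1.0 / (i + 1) for i in range(p)]
    for _ in range(iterations):
        w = [sum(corr[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Rayleigh quotient gives the eigenvalue for the converged unit vector.
    cv = [sum(corr[i][j] * v[j] for j in range(p)) for i in range(p)]
    eigenvalue = sum(v[i] * cv[i] for i in range(p))
    return eigenvalue / p


# Hypothetical correlation matrix of three self-report items.
example_corr = [
    [1.0, 0.2, 0.2],
    [0.2, 1.0, 0.2],
    [0.2, 0.2, 1.0],
]
share = first_factor_share(example_corr)
# Common rule of thumb: a share below 0.50 speaks against a dominant method factor.
print(share < 0.50)
```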

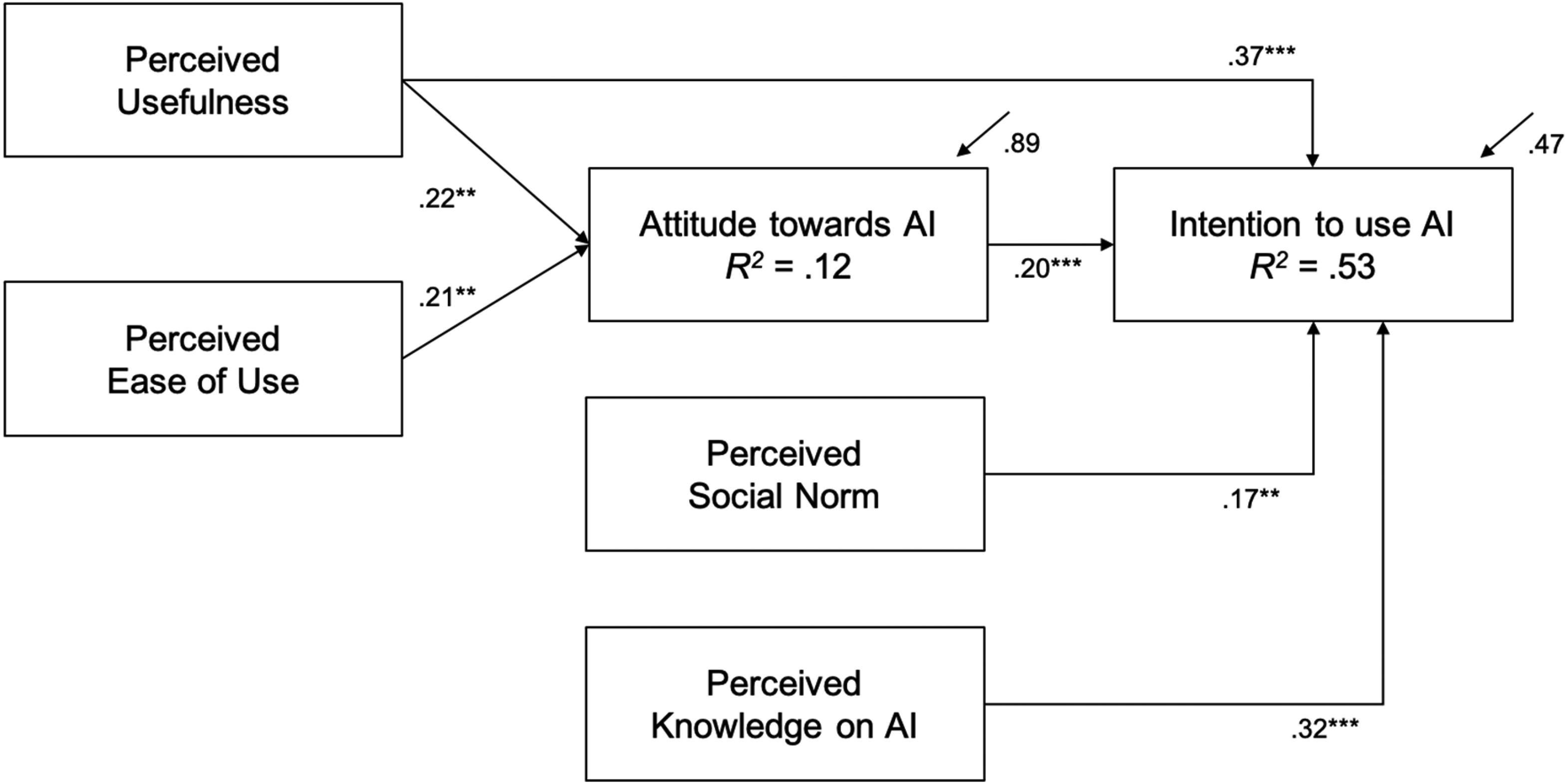

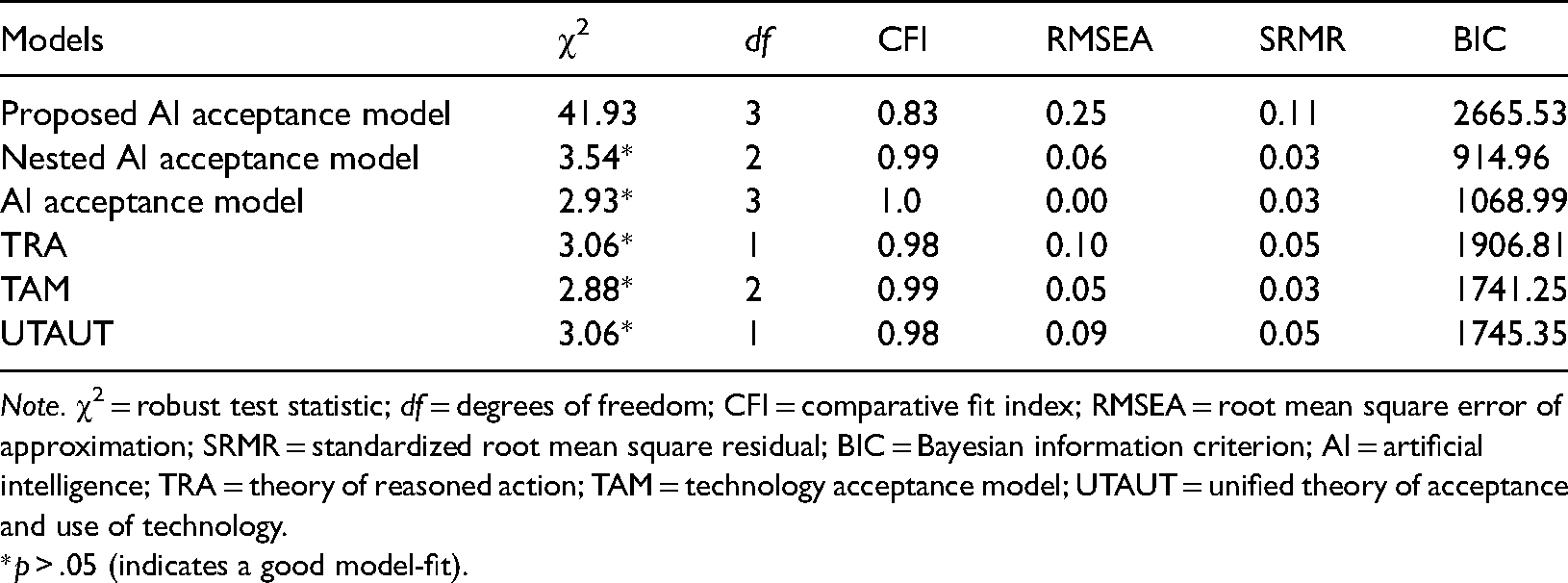

Second, we tested the proposed AI acceptance model using path analysis. The analysis revealed significant differences between our proposed model and the research data, χ2(3) = 41.93, p < .001. Since the CFI was 0.83 and the RMSEA was 0.25, fit indices for the proposed model are not satisfactory. The structural model of this path analysis can be found in Supplemental Appendix B. To improve the model-fit, we calculated a nested model that is based on the significant paths from the full model and contains, therefore, only a subset of the original parameters. The nested model is significantly better suited to explain the data structure than the originally proposed AI acceptance model, χ2(1) = 29.10, p < .001. The path coefficient from perceived knowledge of AI to attitude towards AI is non-significant in the original model and, therefore, perceived knowledge of AI was dropped for the nested model. However, based on the correlation table, perceived knowledge appears to be significantly related to intention to use AI. To check if perceived knowledge is a relevant predictor for intention to use AI, we extended the nested AI acceptance model by perceived knowledge. As the BIC score in Table 2 shows, this results in less information loss and acceptable model complexity, and it increases the amount of explained variance in intention to use AI to 53%. A structural overview of this final AI acceptance model is given in Figure 2. The path coefficients of perceived usefulness to attitude towards AI, β = 0.22, SE = 0.07, p = .001, and of perceived ease of use to attitude towards AI, β = 0.21, SE = 0.07, p = .002, are significant, which means that a person who perceives AI as useful and easy to use is more likely to have a positive attitude towards AI. Furthermore, attitude towards AI is a significant predictor for intention to use AI, β = 0.20, SE = 0.05, p < .001. Consequently, a person who holds a positive attitude towards AI is more likely to intend to use it.
Perceived social norm, β = 0.16, SE = 0.05, p = .002, and perceived knowledge of AI, β = 0.32, SE = 0.06, p < .001, are further significant predictors of intention to use AI. A perceived AI-friendly social norm is, thus, linked to a higher intention to use AI, and a person who estimates their knowledge and expertise regarding AI higher is also more likely to intend to use it. At last, perceived usefulness, β = 0.37, SE = 0.05, p < .001, is also highly significantly related to intention to use AI. Accordingly, a person who perceives AI as useful is more likely to intend to use AI. This relationship is partially mediated by attitude towards AI. The indirect effect is significant, β = 0.04, SE = 0.02, p = .011.
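The size of the indirect effect can be checked with a simple product-of-coefficients calculation. The sketch below uses the rounded path coefficients reported above and a first-order (Sobel) standard error. This is an assumption made for illustration: the text does not state which method was used for the indirect effect, so the result can only approximate the reported values. The function name `sobel_indirect` is hypothetical.

```python
import math


def sobel_indirect(a, se_a, b, se_b):
    """Indirect effect a*b with a first-order (Sobel) standard error.

    a, se_a: path predictor -> mediator and its standard error.
    b, se_b: path mediator -> outcome and its standard error.
    Returns (indirect effect, standard error, z, two-sided p).
    """
    indirect = a * b
    se = math.sqrt(b ** 2 * se_a ** 2 + a ** 2 * se_b ** 2)
    z = indirect / se
    # Two-sided p value from the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return indirect, se, z, p


# Rounded coefficients from the text:
# perceived usefulness -> attitude (a), attitude -> intention (b).
indirect, se, z, p = sobel_indirect(a=0.22, se_a=0.07, b=0.20, se_b=0.05)
print(round(indirect, 2), round(se, 2))
```

With these rounded inputs, the product is about 0.04 with a standard error of about 0.02, in line with the reported indirect effect. The resulting p value lies slightly above .01 and is therefore close to, but not identical with, the reported p = .011.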

Figure 2. Path analysis for the nested artificial intelligence (AI) acceptance model extended by perceived knowledge.

Table 2. Goodness-of-fit measures.

Note. χ2 = robust test statistic; df = degrees of freedom; CFI = comparative fit index; RMSEA = root mean square error of approximation; SRMR = standardized root mean square residual; BIC = Bayesian information criterion; AI = artificial intelligence; TRA = theory of reasoned action; TAM = technology acceptance model; UTAUT = unified theory of acceptance and use of technology.

*p > .05 (indicates a good model-fit).

Additional path analyses for the other technology acceptance models (TRA, TAM, and UTAUT) can be found in Supplemental Appendix B. Based on these analyses, we can conclude that an adapted version resembling the TAM model extended by perceived social norm and perceived knowledge fits the data well. Model-fit indices of all tested models are indicated in Table 2.

To investigate gender differences, we compared the final model shown in Figure 2 with a “free” model in which all parameters are allowed to differ between the female and the male group. The two models do not differ significantly, χ2(5) = 8.92, p = .112. We, therefore, do not assume overall gender differences. Descriptively, the most notable difference between the groups concerns the influence of perceived knowledge of AI: it is a significant predictor of intention to use AI only for female psychology students, β = 0.33, SE = 0.07, p < .001, but not in the smaller group of male students, β = 0.12, SE = 0.12, p = .319. Note that this difference could merely be an effect of the different group sizes (female: n = 163, male: n = 50).
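The p values of the χ2 tests reported in this section follow directly from the test statistic and its degrees of freedom. The following sketch computes the upper-tail probability of a χ2 distribution for integer degrees of freedom using closed-form expressions from the Python standard library. The function name `chi2_sf` is hypothetical, and the sketch treats the reported statistics as ordinary χ2 values, which is a simplification if robust (scaled) statistics were used.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability P(X >= x) of a chi-square distribution.

    Only valid for positive integer df; uses the closed-form finite sums
    that exist for even and odd degrees of freedom.
    """
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{j < df/2} (x/2)^j / j!
        term = 1.0
        total = 1.0
        for j in range(1, df // 2):
            term *= half / j
            total += term
        return math.exp(-half) * total
    # Odd df: normal tail plus a finite correction sum.
    total = 0.0
    term = math.sqrt(2.0 * x / math.pi)
    for j in range(1, (df - 1) // 2 + 1):
        if j > 1:
            term *= x / (2 * j - 1)
        total += term
    return math.erfc(math.sqrt(half)) + math.exp(-half) * total


# Gender comparison reported above: chi2(5) = 8.92.
p_gender = chi2_sf(8.92, 5)
# Fit of the originally proposed model: chi2(3) = 41.93.
p_proposed = chi2_sf(41.93, 3)
print(round(p_gender, 3), p_proposed < 0.001)
```

For the gender comparison, this reproduces a p value of approximately .11, so the constrained and the free model cannot be distinguished at the conventional .05 level.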

Discussion

The purpose of our study was to develop and test a new model that combines and extends existing theories to explain which factors are relevant for predicting psychology students’ attitude towards AI and their intention to use it. This is important because we would like to provide a profound theoretical framework for the development of targeted interventions in the context of psychological training and education. Our originally presumed model was not confirmed by the data gathered in this study. The model-fit indices indicate an insufficient theoretical basis to explain the actual relations of the variables. However, an adapted model was created based on the significant paths and correlations which yielded sufficient model-fit indices. The combined variables in the model accounted for a substantial amount of variance regarding the intention to use AI (53%), but for a noticeably smaller amount of variance in attitude towards AI (12%). As expected, intention to use AI was predicted by perceived usefulness (the degree to which an individual believes that using AI will help and benefit him or her), perceived social norm regarding AI, and attitude towards AI. But against expectations, perceived ease of use, reflecting the perceived personal ability, competence, and autonomy to use AI, did not have a considerable influence on intention. Perceived ease of use and perceived usefulness of AI were related to attitude towards AI, whereas perceived knowledge was not a significant predictor of attitude but did predict intention to use AI. The basic structure of the resulting AI acceptance model resembles the structure of the TAM. However, at least in the context of AI, perceived social norms and perceived knowledge of AI seem to be of utmost importance for the intention to use AI. The relevance of the perceived social norm has already been suggested by the TRA, RAA, and UTAUT.
Taking the BIC to compare the different models shows that the newly developed model is a good compromise between information loss and model complexity to predict attitude and behavioral intention regarding AI technology of psychology students.

In the current study, perceived ease of use and perceived usefulness were significant predictors of attitude towards AI. This is consistent with a meta-analysis by Holden and Karsh (2010) investigating technology use in the health sector and a study by Liu et al. (2015) investigating technology use by therapists. Both outlined perceived usefulness as the most important factor determining attitude towards technology and the intention to use it. In a student sample, Edmunds et al. (2012) compared the influence of work, university, and social/leisure contexts on perceived ease of use and perceived usefulness of information and communication technology (ICT). They suggest that the degree to which students perceive ICT as useful and easy to use in the work context influences both attitudes towards and take-up of ICT in general. Transferred to the current study, this means that to foster a more positive attitude towards AI among psychology students, there should be a focus on its usefulness and ease of use in psychologists’ work context; both in the development of AI-based applications as well as in their promotion. For example, virtual patients to practice psychotherapeutic methods stand out as successful applications in the clinical psychology context. Johansson et al. (2017b) reported high values on the Likeability subscale of the Godspeed Scale by Bartneck et al. (2009) also used in the current study and a high interest of therapists in using this technology and recommending it to colleagues. Similarly, AI-based tools from the context of organizational and industrial psychology, e.g., to facilitate personnel selection, are becoming increasingly popular. 
However, because perceived ease of use and perceived usefulness could explain only 12% of the variance in attitude towards AI, other variables seem to be important in determining a person's attitude towards AI technology, e.g., anxiety (e.g., Kim, 2017), perceived trustworthiness (e.g., Nadarzynski et al., 2019), availability (e.g., Alam et al., 2020), or tech-savviness (e.g., Pinto dos Santos et al., 2019). Nevertheless, even if predictors of attitude towards AI remain to a large extent unclear, attitude predicts intention to use AI. When combined with the perceived social norm, perceived knowledge, and perceived usefulness, it accounts for 53% of the variance in intention to use AI. Again, perceived usefulness is the most important factor predicting psychology students’ intention to use AI followed by attitude towards AI. The third most relevant factor is perceived knowledge of AI. Interestingly, this seems to be especially the case for female participants in the study, whereas for male participants perceived knowledge is not a significant predictor of behavioral intention. However, because of the difference in subgroup size, it is quite possible that model testing for gender difference did not provide stable estimators. Still, AI is a complex and diffuse technology, and most psychology students might not have profound knowledge regarding it. Female students in particular might experience a lack of perceived competence and self-efficacy regarding the use of AI and, therefore, have a lower intention to use it (Terzis & Economides, 2011; Venkatesh et al., 2000). Including knowledge is not a common approach in technology acceptance research, but it is common in the context of sustainability research.
Here, awareness of and knowledge about environmental problems are often considered as important cognitive preconditions for developing pro-environmental moral norms and attitudes (Bamberg & Möser, 2007), e.g., concerning the purchase of sustainable fashion products (e.g., Pagiaslis & Krontalis, 2014) or green vehicles (e.g., Mohiuddin et al., 2018) as well as the consumption of organic food (e.g., Han & Hansen, 2012). Consequently, in the context of complex and ambiguous decision-making scenarios, the inclusion of perceived knowledge as a predictor of behavioral intention can be recommended. Paltoglou et al. (2019), who investigated students’ confidence regarding statistics, emphasize that instead of focusing on knowledge, it is even more important to foster students’ perceived competence. They indicate experience and perceived confidence as decisive factors. One possible intervention to foster psychology students’ intention to use AI might consequently be short glimpses into the specific aspects and application areas of AI to raise attention and curiosity among psychology students towards AI technology and to broaden their experiences with AI. Students should learn in which way machine learning-based approaches have the potential to improve decisions related to the diagnosis, prognosis, and treatment of mental illnesses using clinical and biological data (Dwyer et al., 2018). Besides, lecturers can provide hands-on training so that students get to know the functionality of AI technology, learn when and how to use it properly, and become experienced in the interaction. One might for example show students modern therapeutic chatbots, explain how they work (their database and algorithms), and discuss potential application scenarios. Women in particular might benefit from this constant expansion of their knowledge base and become more confident with AI.
This qualification might also enable students to understand when and why people use AI systems and to contribute decisively to the user-friendly advancement of those systems.

Limitations and Future Research

As mentioned above, predictors of attitude towards AI remained to a large extent unclear. Possibly the measurement instrument was one reason for this small amount of explained variance as it differed from instruments used to measure attitude in other TAM-based studies. We combined affective and cognitive components in our attitude scale. But as can be seen in Table 1, only affective components were related to other variables in our model. One possible explanation might be that our participants indicated having little to no knowledge regarding AI. When participants did not have a sufficient cognitive representation of AI because of a lack of knowledge and experience, they might only have an affective attitude towards AI. This differentiation should be further investigated especially in the context of attitudes towards new technologies.

In our study, we used a scale combining negative and positive aspects of attitudes towards AI. This approach, however, can create method effects where the wording of items may lead to impaired results in the scale depending on a person's susceptibility to negative or positive words (e.g., Van Dam et al., 2012; Mayerl & Giehl, 2018). By using the semantic differential method, we tried to avoid this problem. Nevertheless, as pointed out in the introduction of the paper, AI also has a negative connotation for many people and in the context of technology, negative or rejective attitudes may be a decisive factor preventing people from interacting with technology (Nomura et al., 2006). To shed light on this, more research is needed regarding, first, suitable measurement instruments for attitude towards AI and their construct validity and, second, what determines a person's attitude towards technology in general and AI in particular. After our study was finished, Schepman and Rodway (2020) proposed a new General Attitudes Towards AI scale with a positive and negative subscale. This measure would probably have been a better fit in our study. Another scale affected by these issues is the perceived ease of use scale that also combines positive and negative aspects. Negatively worded items are generally used to reduce acquiescence bias. However, especially in research investigating the acceptance of a new technology among participants who are not used to this technology and might therefore be more likely to be negatively biased against it, this might impair the construct validity of scales measuring rather implicit beliefs regarding a technology. To overcome this problem, future research should carefully explore the influence of negative and positive beliefs and feelings on attitudes and on behavioral intentions especially in the context of newly evolving technologies. Similarly, influencing factors of perceived ease of use and perceived usefulness require further research.
In the original TAM, Davis (1989) proposed “external factors” as predictors of perceived ease of use and perceived usefulness. Based on existing research findings, both contextual and personal variables may be influential. For instance, a student's general self-efficacy (e.g., Rho et al., 2014), computer self-efficacy (e.g., Scherer et al., 2019), or perceived competence (e.g., Deci & Ryan, 1980; Paltoglou et al., 2019) probably have an influence. In their meta-analysis investigating the acceptance of health IT by clinicians, Holden and Karsh (2010) also emphasized the impact of self-efficacy. They further outlined the influence of perceived controllability or voluntariness of use (the feeling of having volitional control over the technology use). Empirical research is needed to investigate how perceived ease of use and perceived usefulness are affected. Holden and Karsh (2010) additionally listed the impact of facilitating conditions. Facilitating conditions are factors that impede or facilitate the use of the technology, such as the perceived complexity (e.g., Scherer et al., 2019) or the availability of complementary resources like support or assistance during the use (Venkatesh et al., 2003). Furthermore, trust in the technology itself may also affect these variables (Wu et al., 2011) as well as the perceived individual relevance of the technology (e.g., Venkatesh & Davis, 2000). A third aspect may be the technology itself. Substantial improvements might also lead to higher perceived ease of use or more perceived usefulness (Davis, 1989). These technical improvements may account for higher perceived instrumentality. Perceived instrumentality may be determined by the perceived performance of an AI system and the perceived usability.
Although usability of AI has already been thoroughly investigated (Amershi et al., 2019), its influence, as well as the influence of overall perceived AI instrumentality on perceived ease of use (or perceived usefulness), has to our knowledge not been examined. To summarize, there are many possible influencing variables whose effects are likely to vary significantly over time and in different contexts and target groups. Scherer et al. (2019), for example, found significant differences regarding the attitude towards digital technology in education between Asian and non-Asian teachers. In the study presented here, the results are based on a largely homogeneous sample (German psychology students). To attain higher generalizability, the AI acceptance model should also be tested with a more heterogeneous sample, which would allow examining moderating effects of cultural background, gender, or technology experience as proposed in the UTAUT. Lastly, as mentioned before, the concept of AI is very large and diffuse. People might associate very different devices and applications with the term “artificial intelligence” and have highly varying attitudes towards them. To acquire more precise information, future studies could restrict the questionnaire to a certain kind of AI, for example, one with potential future relevance for psychology students and professionals (like chatbots or virtual patients), or ask participants beforehand what they picture when thinking of “AI.”

A last limitation of our study is that all results are based on self-report data which may lead to response biases such as social desirability, acquiescent responding, and common method bias (Podsakoff et al., 2003). Even without the presence of a substantial common method bias as assessed by Harman's single factor test, there is reasonable doubt whether self-reports are indicative of future behavior (Dang et al., 2020). Nonetheless, self-reports allow a systematic investigation of beliefs, attitudes, or intentions that cannot be observed directly. Future studies may integrate other research methods like observations or implicit measures to verify the study results.

Conclusion

In this study, important insights into factors that are linked to the acceptance and adoption of AI technology by psychology students were provided. Based on the developed AI acceptance model, perceived usefulness of AI, attitude towards AI, perceived social norm regarding AI, and perceived knowledge of AI turned out to be important predictors of a student's intention to use AI. For psychology students, perceived usefulness of AI technology and perceived ease of use were the most important factors in determining attitude towards AI. Consequently, to generate curiosity and enthusiasm for AI among psychology students, it can be recommended to emphasize aspects where AI can be useful, to share knowledge, and educate psychology students in this field.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Author note

The development of the AI acceptance model was part of a master thesis on the interest and involvement of psychologists in the use and development of AI technology. The whole study was preregistered and approved by the ethics committee of the institute of psychology at the Julius-Maximilians-University Wuerzburg (GZEK 2020-59). Data were collected between June 15 and December 18, 2020.
