Abstract

The foundation of how students usually learn is laid early in their academic lives. However, many or even most students do not primarily rely on those learning strategies that are most favorable from a scientific point of view. To change students’ learning behavior when they start their university education, we developed a computer-based adaptive learning environment to train favorable learning strategies and change students’ habits using them. This learning environment pursues three main goals: acquiring declarative and conditional knowledge about learning strategies, consolidating that knowledge, and applying these learning strategies in practice. In this report, we describe four experimental studies conducted to optimize this learning environment (n = 336). With those studies, we improved the learning environment with respect to how motivating it is, investigated an efficient way to consolidate knowledge, and explored how to facilitate the formation of effective implementation intentions for applying learning strategies and changing learning habits. Our strategy-training module is implemented in the curriculum for freshman students at the Department of Psychology, University of Freiburg (Germany). Around 120 students take part in our program every year. An open version of this training intervention is freely available to everyone.

Why are Learning Strategies Important?

The opportunity and need to learn are present throughout people’s lives. Because learning situations become less structured once we leave formal education behind, people must increasingly self-regulate their learning as they grow older to remain fully participating members of society. The foundation of how people usually learn and which learning skills they have, however, is laid early in life. In school, pupils first learn how to learn and develop certain strategies and habits. These strategies and habits heavily influence how they learn in later life.

A problem is, however, that many or even most students in this early and decisive stage do not primarily rely on those learning strategies that are most favorable from a scientific point of view (Bjork et al., 2013; Dunlosky et al., 2013; Jairam & Kiewra, 2009; Renkl, 2008). To change students’ learning behavior at the beginning of their university education, we developed a computer-based adaptive learning environment to train learning strategies and to change habits using them. There is evidence that such a training intervention delivers positive results (e.g., Burchard & Swerdzewski, 2009). Learning-strategy training can support students, especially when they enter university (Nordell, 2009). The positive effects of strategy training are not limited to improving learning processes. Further positive consequences are more efficient learning and lower drop-out rates (Tuckman & Kennedy, 2011).

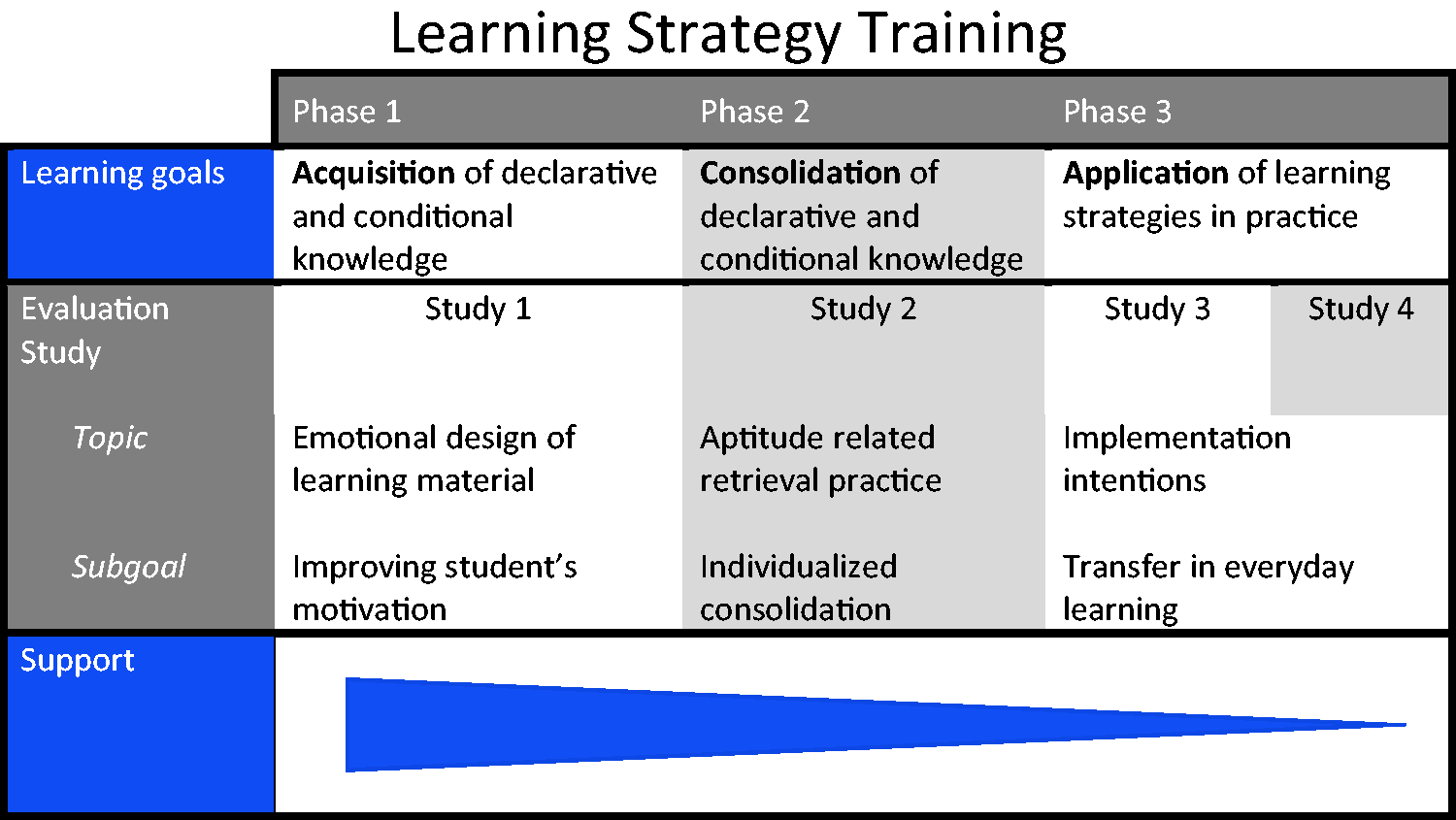

However, in everyday university life, most teachers focus on conveying the contents of the subject. In addition, instructors often lack sufficient knowledge (Morehead et al., 2016) or resources to additionally provide promising learning-strategy training. We thus developed an adaptive strategy-training course that teaches strategies mainly online and pursues several learning goals (see Figure 1). Because the materials are provided online, the number of students can rise considerably without placing additional demands on teaching resources.

Figure 1. Advance organizer of tool goals, evaluation studies, and fading of support.

Development and Implementation of Strategy Training

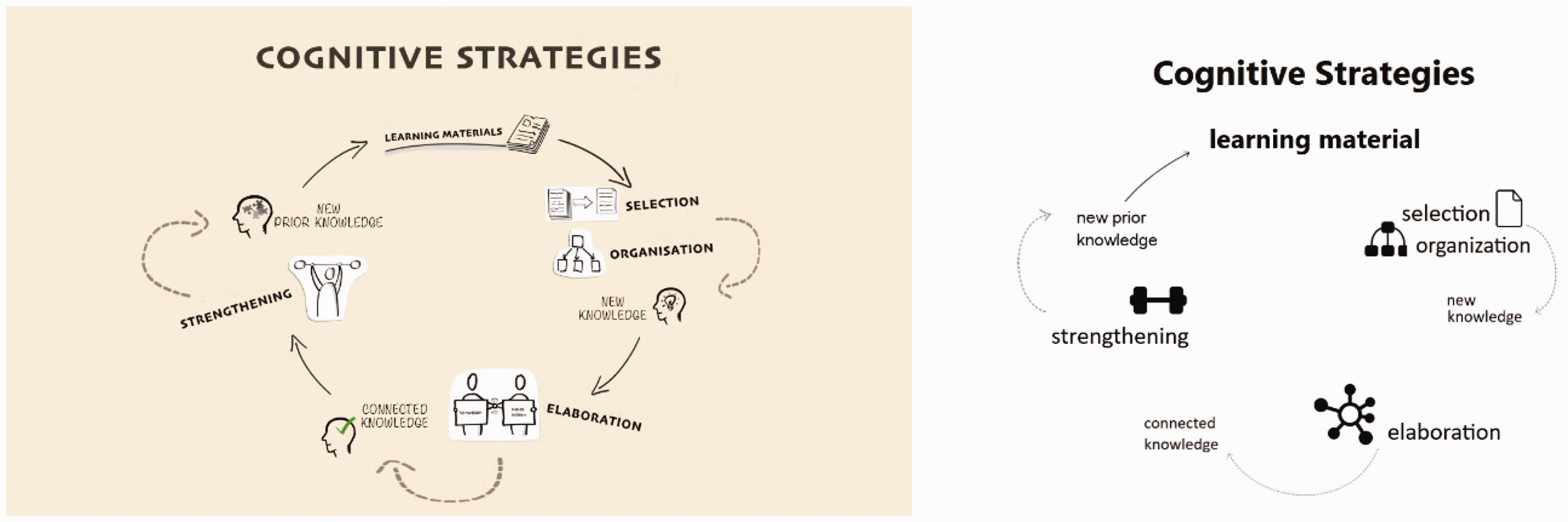

Our computer-based adaptive online strategy training is based on empirically proven general principles for the implementation of strategy-training interventions (Dinsmore, 2017; Friedrich & Mandl, 1997; Renkl, 2008). Learning strategies are cognitive or behavioral activities that learners engage in to acquire knowledge (e.g., Fiorella & Mayer, 2015). There are several learning-strategy taxonomies. According to Pintrich et al. (1991), we differentiate cognitive, metacognitive, and resource-oriented learning strategies. Cognitive learning strategies such as elaboration and organization have a direct impact on knowledge construction (i.e., learning). Metacognitive strategies help students to understand what they already know and what they should still work on. Resource-oriented learning strategies provide learners with the resources that support the actual learning process or shield it from external disturbances (Wild & Schiefele, 1994).

Our training intervention is a type of informed training. This training form focuses on teaching the advantages and disadvantages of the strategies in various application situations explicitly (conditional knowledge). In addition, students get to know different authentic application contexts to be able to independently apply the learned strategies effectively. Furthermore, the scaffolding principle is used. Students are first supported by very structured interventions to learn and practice the strategies. Gradually, more responsibility is transferred to the learners, up to independently applying the strategies in everyday learning. By this step-by-step fading of support, students begin to carry out new learning strategies independently, thus meeting the requirements of everyday life (see Friedrich & Mandl, 1997; Renkl, 2008).

Our training environment pursues three main goals: acquiring declarative and conditional knowledge about favorable learning strategies, consolidating that knowledge, and finally, applying these learning strategies in everyday life. To fulfill these goals, the course is divided into three consecutive parts: acquisition phase, practice phase, and application phase (see Figure 1).

Knowledge About Learning Strategies – the Acquisition Phase

The goal of the acquisition phase is that students acquire declarative and conditional knowledge about learning strategies. In online environments, motivating students plays an essential role. Many studies have shown that students’ motivation is essential to predicting drop-out rates and future performance in online learning environments (e.g., de Barba et al., 2016; Zheng et al., 2015). The goal when creating the learning environment was to induce a pleasant learning atmosphere by applying emotional design to increase motivation (Endres et al., 2020). We focused on situational interest as an aspect of motivation closely associated with the current learning situation.

Evaluation Experiment 1

To evaluate the acquisition phase, we focused especially on the provided videos and their design. We developed sketched explanation videos, a video format commonly used on the internet. Such videos implement emotional design by using the real or animated hands of the narrator writing letter strings, drawing pictures, or sketching symbols and figures while explaining something. Warm colors, an emotional frame story, and a friendly, young voice speaking in everyday language style are used to create a pleasant atmosphere (see Figure 2). Such videos have been used to enhance motivation (Endres et al., 2020). In our strategy training, such videos should increase motivation in particular without harming learning outcomes by including seductive details (i.e., interesting but irrelevant details; cf. Garner et al., 1989). As we wanted the learner to feel motivated right from the start, we developed the introductory videos in the form of an advance organizer (Ausubel, 1960; Korur et al., 2016).

Figure 2. Illustration of emotionally designed videos (left) and neutrally designed videos (right).

Method of Experiment 1

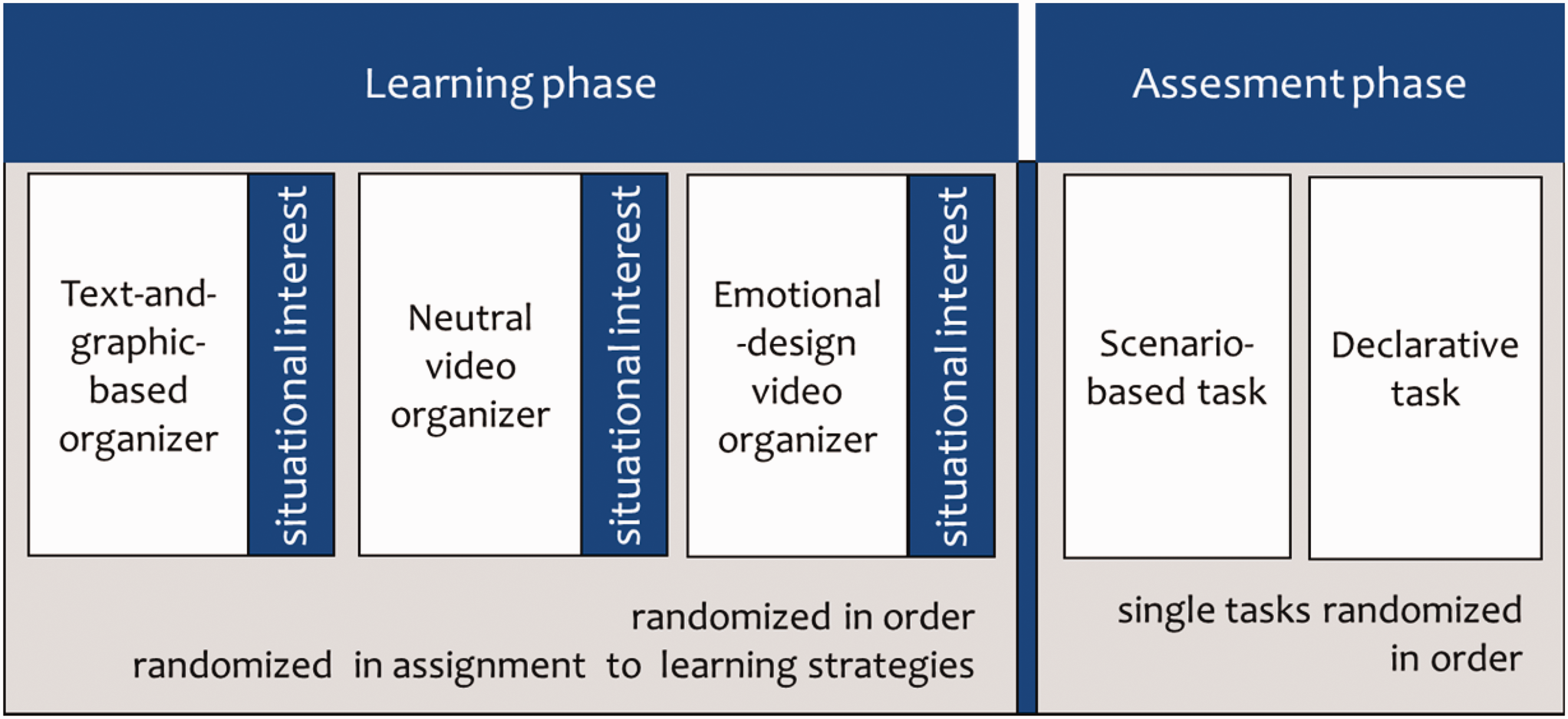

To evaluate our advance organizers, we applied a within-subjects design. We compared three types: a text and graphic-based organizer, a neutral video organizer, and an emotional-design video organizer (see Figure 3).

Figure 3. Experimental design of Experiment 1.

We constructed each type of organizer for each of the three strategy groups: cognitive, metacognitive, and resource-oriented learning strategies (see Development and Implementation of Strategy Training section). The contents of the various advance organizers were largely the same within one strategy group. The structure and length of the modules (ca. 9 min) for the different strategy groups were also held constant. The students (n = 32; age: M = 23.14 years, SD = 3.16) learned about all three strategy groups, each with a different type of advance organizer. The pairing of strategy group with type of advance organizer and the order of conditions were counterbalanced.

This experiment’s focus was on motivation evoked by the current learning situation. After each advance organizer, we assessed situational interest as an important aspect of motivation for learning. On a 9-point Likert scale, the participants rated how well the adjectives “exciting,” “entertaining,” “boring” (reverse-coded), “useful,” “unnecessary” (reverse-coded), and “unimportant” (reverse-coded) described the materials’ design (Schiefele, 1990, 2009).

The learning assessment consisted of declarative- and conditional-knowledge tasks. The declarative-knowledge task consisted of short-answer questions; learners provided open answers that were coded afterward. The maximum score for each strategy group was 12. The conditional-knowledge task consisted of scenario-based tasks built on authentic learning scenarios, in which learners evaluated different possible learning strategies. The scenarios had been rated by three experts in the field of learning strategies, and students’ answers were compared to those experts’ ratings. A similar format has been used successfully for assessing learning strategies by Schlagmüller et al. (2001) and by Schwonke et al. (2006). The maximum score for each strategy group was 54.
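The situational-interest rating described above can be illustrated with a short scoring sketch. It assumes that the 9-point scale runs from 1 to 9 and that the six adjective ratings are averaged after reverse-coding; neither detail is stated in the text, and the function name and example ratings are hypothetical.

```python
from statistics import mean

# Adjectives marked as reverse-coded in the questionnaire description.
REVERSE_CODED = {"boring", "unnecessary", "unimportant"}
SCALE_MIN, SCALE_MAX = 1, 9  # assumption: the 9-point scale is anchored at 1 and 9


def situational_interest(ratings: dict[str, int]) -> float:
    """Average the adjective ratings after reverse-coding (assumed aggregation)."""
    scored = []
    for adjective, rating in ratings.items():
        if not SCALE_MIN <= rating <= SCALE_MAX:
            raise ValueError(f"rating for {adjective!r} is outside the scale")
        if adjective in REVERSE_CODED:
            rating = SCALE_MIN + SCALE_MAX - rating  # maps 1 <-> 9, 2 <-> 8, ...
        scored.append(rating)
    return mean(scored)


# Hypothetical ratings from one participant, not data from the study.
example = {"exciting": 7, "entertaining": 8, "boring": 2,
           "useful": 6, "unnecessary": 3, "unimportant": 1}
print(situational_interest(example))  # 7.5
```

Reverse-coding before averaging ensures that a high score always means high situational interest, regardless of whether the adjective was worded positively or negatively.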

Results of Experiment 1

In all experiments in this report, we applied an alpha level of .05 and relied on two-sided testing for all statistical analyses. We determined partial eta squared (ηp2) as an effect size index. The values 0.01, 0.06, and 0.14 were considered small, medium, and large effect sizes, respectively. For certain analyses, we employed Bayesian analysis (Bayes factor; BF) to support the null hypotheses. We used a predefined uninformative JZS prior with a multivariate Cauchy distribution (Rouder et al., 2012). For all analyses, we performed direct hypothesis testing by a priori contrasts (see Rosenthal & Rosnow, 1985; Rosenthal et al., 2000).
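As a reading aid, partial eta squared can be recovered from a reported F statistic and its degrees of freedom through the standard identity ηp2 = (F × df_effect) / (F × df_effect + df_error). The following sketch applies that identity to two F tests reported in this article. The function names are hypothetical, and the labelling function simply applies the three benchmark values named above as lower bounds, which is an assumption about how they are used.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared from an F statistic and its degrees of freedom."""
    if f_value < 0 or df_effect <= 0 or df_error <= 0:
        raise ValueError("F must be non-negative and both df must be positive")
    return (f_value * df_effect) / (f_value * df_effect + df_error)


def effect_size_label(eta_p2: float) -> str:
    """Label an effect using the benchmarks .01, .06, and .14 as lower bounds."""
    if eta_p2 >= 0.14:
        return "large"
    if eta_p2 >= 0.06:
        return "medium"
    if eta_p2 >= 0.01:
        return "small"
    return "below small"


# F(1, 34) = 17.39 and F(6, 202) = 2.79 are taken from the results sections.
for f_value, df1, df2 in [(17.39, 1, 34), (2.79, 6, 202)]:
    eta = partial_eta_squared(f_value, df1, df2)
    print(round(eta, 3), effect_size_label(eta))
```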

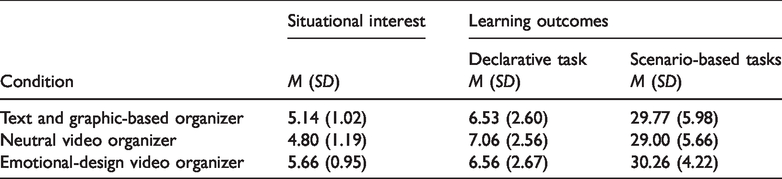

We conducted a within-subjects analysis of variance (ANOVA) with the three-condition factor “advance organizer” (see Table 1). The dependent variable was situational interest. Situational interest was significantly higher for the emotionally designed video than for the neutral design, F(1,34) = 17.39, p < .001, ηp2 = .338. This effect can be considered a large effect. We also conducted a within-subjects MANOVA (multivariate ANOVA) with the three-condition factor “advance organizer” (see Table 1). The dependent variables were the declarative task and scenario-based task performance. We detected no significant differences between text and videos with respect to learning outcomes (Bayesian repeated measures MANOVA, condition: BF01 = 17.10). This Bayes factor indicates that the data are 17.10 times more likely under the null hypothesis than under the alternative hypothesis (Rouder et al., 2012).

Table 1. Means and Standard Deviations (in parentheses) of Situational Interest and Learning Outcome Variables in all Three Conditions (Experiment 1).

Implementation in the Tool

We found that motivation can be fostered while working with the learning environment by using sketched explanation videos (i.e., video containing sketched symbols and human hands). We implemented the evaluated design features in our acquisition phase. Each group of strategies is introduced using a sketched explanation video as an advance organizer.

We also utilized emotional design in the rest of the acquisition phase. A fictitious student (as a social agent) guides the learner through the whole tool and explains the individual strategies. The learners are addressed personally, and examples are used. After the short videos, the contents are explained in more detail in text sections including pictures and videos. All sections were designed according to the principles of multimedia learning (Mayer, 2014).

Consolidation of Strategy Knowledge – the Practice Phase

The second part is the adaptive practice phase. The goal of this phase was to consolidate new knowledge about learning strategies. You cannot change students’ learning strategies by just telling them about different learning strategies. It is important that they understand when to apply the strategies and can recall them when they are applicable. In other words, their knowledge has to be strengthened and consolidated.

Evaluation Experiment 2

To reach the consolidation goal, we implemented a retrieval practice intervention (see the testing effect; Rowland, 2014). To make retrieval practice most effective, students should invest high mental effort in a retrieval task (Endres & Renkl, 2015; Pyc & Rawson, 2009) but, at the same time, succeed in recalling the contents (Carpenter, 2009; Rowland, 2014). When teachers introduce a certain retrieval task, some students might not find it challenging enough, and consolidation effects will be low. Other students may find the task too difficult and thus be unable to retrieve the content. This tension between retrieval success and retrieval effort requires that teachers find the right balance between posing high demands and facilitating a high level of success for different students. Hence, students’ heterogeneity is a major challenge for instructors, especially when many students are working in an online learning environment. An adaptable system might help tailor retrieval tasks to individual learners’ knowledge prerequisites. To evaluate our retrieval practice phase, we wanted to find out whether different retrieval practice tasks actually lead to differences in learning outcomes for students with different skill levels.

Method of Experiment 2

We developed three practice tasks for each strategy group: restudy of the material, a scenario-based-retrieval task (conditional knowledge), and a short-answer-retrieval task (declarative knowledge). The restudy material was a shortened version of the original material; we omitted the examples and part of the videos. The scenario-based task covered the same contents as the restudy material. Each scenario included a detailed description of an application situation. We expected these descriptions to function as retrieval cues. The short-answer-retrieval task comprised nine short questions asking for short answers; they covered the same contents as the restudy and scenario-based tasks. We expected that a short question would provide fewer cues for retrieval than a scenario description. Hence, retrieval in the scenario-based task was expected to be easier and as such more suitable for learners with less prior knowledge.

As there was no clear theoretical reason for assuming a certain number of groups and for determining the cut-off points for the different learning strategies, we conducted a cluster analysis. We used the Ward method to identify groups of prior knowledge for all strategy groups. We identified three groups using a dendrogram.
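To illustrate the clustering step, the sketch below implements Ward's criterion for the simplest case: one prior-knowledge score per learner. At each step it merges the two clusters whose union adds the least to the within-cluster sum of squares. It is not the analysis script used in the study. The one-dimensional input, the function name, and the example scores are assumptions, and the number of clusters is passed in directly rather than being read off a dendrogram.

```python
def ward_clusters(scores: list[float], n_clusters: int) -> list[list[float]]:
    """Agglomerative clustering of one-dimensional scores with Ward's criterion."""
    if not 1 <= n_clusters <= len(scores):
        raise ValueError("n_clusters must be between 1 and the number of scores")
    clusters = [[score] for score in scores]  # start with one cluster per learner
    while len(clusters) > n_clusters:
        best = None  # (increase in within-cluster sum of squares, i, j)
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                a, b = clusters[i], clusters[j]
                mean_gap = sum(a) / len(a) - sum(b) / len(b)
                # Ward's merge cost: growth of the within-cluster sum of squares.
                cost = len(a) * len(b) / (len(a) + len(b)) * mean_gap ** 2
                if best is None or cost < best[0]:
                    best = (cost, i, j)
        _, i, j = best
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
    return sorted(sorted(cluster) for cluster in clusters)


# Hypothetical prior-knowledge scores, not data from the study.
scores = [10, 12, 11, 25, 27, 26, 40, 42, 41]
groups = ward_clusters(scores, n_clusters=3)
print(groups)  # [[10, 11, 12], [25, 26, 27], [40, 41, 42]]
```

The boundaries between the resulting groups can then serve as cut-off points between low, medium, and high prior knowledge. For larger or multivariate data sets, a library routine such as SciPy's hierarchical clustering with the Ward linkage would be the more practical choice.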

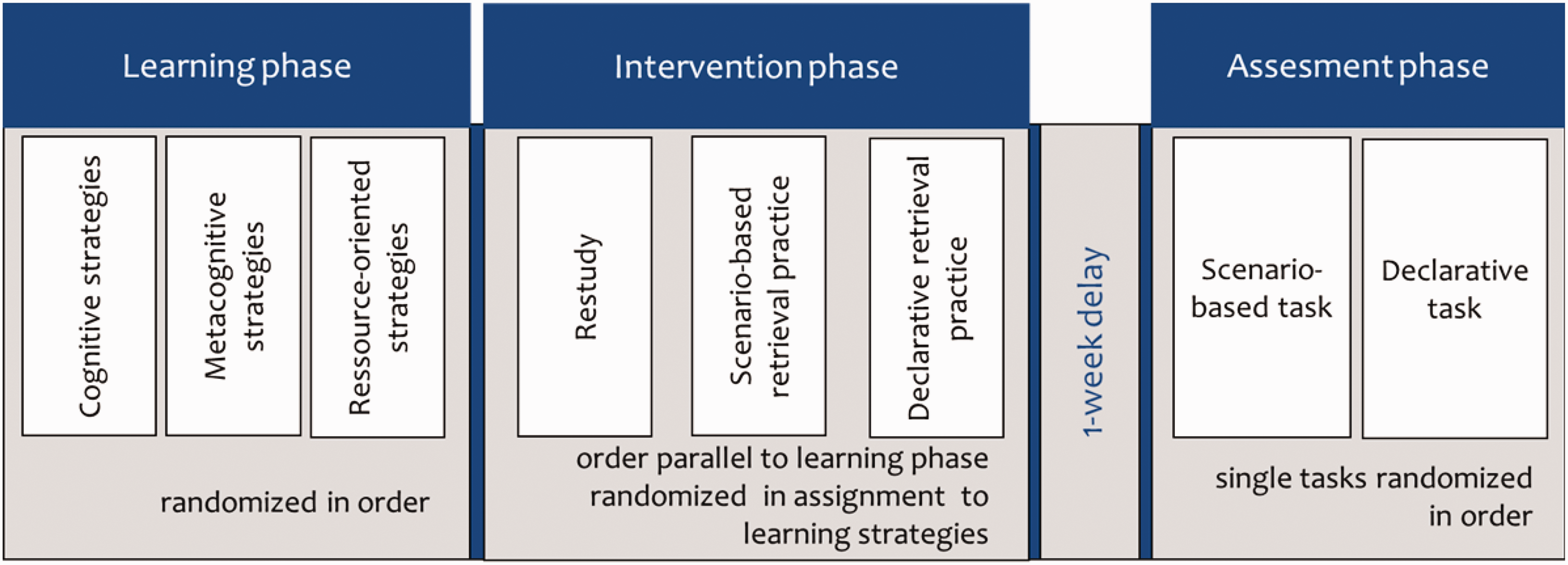

We used a mixed design (n = 54, age: M = 22.16 years, SD = 2.42; see Figure 4). The between-subjects factor consisted of the three identified prior-knowledge groups. The within-subjects factor consisted of the three practice-task conditions. First, we assessed learners’ prior knowledge using our scenario-based knowledge task. Afterward, learners studied the material introduced in our acquisition phase. After learning, we varied the type of practice task within subjects. The contents (i.e., cognitive learning strategies, metacognitive learning strategies, and resource-oriented learning strategies) and practice-task types were counterbalanced. For each participant, our design allowed us to match one-third of their posttest tasks to each of the conditions restudy, scenario-based-retrieval task, and short-answer-retrieval task. We assessed learning outcomes in a 1-week delayed posttest consisting of a declarative- and a conditional-knowledge test.
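One way to realize the counterbalancing of contents and practice-task types is to cycle participants through all six possible pairings of the three contents with the three task types. The text does not specify the exact scheme, so the rotation below, the function name, and the use of a running participant index are assumptions made for illustration.

```python
from collections import Counter
from itertools import permutations

CONTENTS = ("cognitive", "metacognitive", "resource-oriented")
TASKS = ("restudy", "scenario-based retrieval", "short-answer retrieval")
ORDERS = list(permutations(TASKS))  # all 6 ways to pair tasks with contents


def assign_practice_tasks(participant_index: int) -> dict[str, str]:
    """Map each content area to one practice task, cycling through all pairings."""
    order = ORDERS[participant_index % len(ORDERS)]
    return dict(zip(CONTENTS, order))


# Across any six consecutive participants, every content-task pair occurs twice.
pair_counts = Counter(
    pair for p in range(6) for pair in assign_practice_tasks(p).items()
)
print(assign_practice_tasks(0))
print(set(pair_counts.values()))  # {2}
```

Because every participant receives each task type exactly once, one-third of that participant's posttest items can be attributed to each practice condition.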

Figure 4. Experimental design of Experiment 2.

Results of Experiment 2

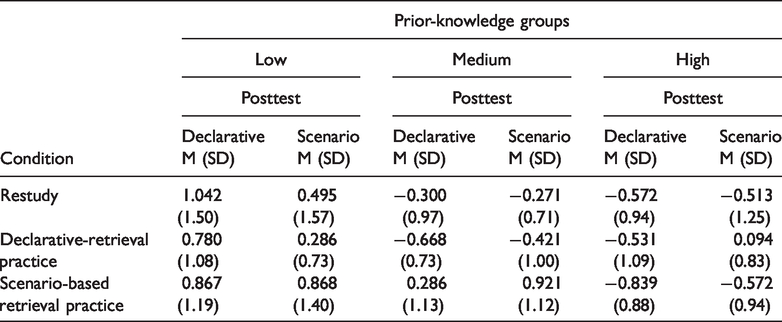

To analyze the learning outcomes, we z-standardized all tasks. We compared the conditions restudy, declarative-retrieval practice, and scenario-based retrieval practice separately for the different prior-knowledge groups (see Table 2). We detected an aptitude–treatment interaction. For the low prior-knowledge group, we expected restudy to be the best condition. This was not the case. Actually, learning outcomes did not differ between treatments (all ps > .36). Low prior-knowledge learners increased their knowledge the most, but this increase did not differ between conditions. The medium prior-knowledge group learned significantly more from scenario-based-retrieval tasks than from the other two task types, F(1,10) = 5.15, p = .046, ηp2 = .34. This effect can be considered a large effect. The high prior-knowledge group learned significantly more after short-answer-retrieval tasks than after the other two tasks, F(1,14) = 8.72, p = .010, ηp2 = .38. This effect can be considered a large effect.

Table 2. Means and Standard Deviations (in parentheses) of Learning Outcome Variables in all Three Conditions Split for Prior-Knowledge Groups (Experiment 2). Note that negative values are due to z-standardization.

Implementation in the Tool

This interaction indicates that learners on different prior-knowledge levels benefit from different retrieval practice tasks. For consolidating students’ knowledge about learning strategies, a retrieval practice-based arrangement involving different types of practice tasks for learners with different prior-knowledge levels is best. Although we identified no significant difference among the low prior-knowledge students in our experiment, we decided to give those students another restudy opportunity. In the adaptable system, learners’ prior-knowledge levels are assessed in the learning environment, and cut-off levels between low, medium, and high are used to determine each learner’s best-suited retrieval task. This procedure raises the probability that every student is mentally challenged while succeeding at retrieval.
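The adaptation rule described above can be sketched as a simple threshold lookup. The mapping of prior-knowledge levels to tasks follows the text, but the function name and the cut-off values in the example are hypothetical, since the actual cut-offs are not reported here.

```python
def select_practice_task(prior_knowledge_score: float,
                         low_cutoff: float,
                         high_cutoff: float) -> str:
    """Choose the practice task that matches a learner's prior-knowledge level."""
    if low_cutoff >= high_cutoff:
        raise ValueError("low_cutoff must be smaller than high_cutoff")
    if prior_knowledge_score < low_cutoff:
        return "restudy"                     # low prior knowledge
    if prior_knowledge_score < high_cutoff:
        return "scenario-based retrieval"    # medium prior knowledge
    return "short-answer retrieval"          # high prior knowledge


# Hypothetical cut-offs on the 54-point scenario-based prior-knowledge task.
LOW_CUTOFF, HIGH_CUTOFF = 20, 35
for score in (12, 28, 47):
    print(score, select_practice_task(score, LOW_CUTOFF, HIGH_CUTOFF))
```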

The practice phase takes place after several spaced intervals that increase in time (spacing). This spaced practice leads to better long-term consolidation (Kang et al., 2014). We used spacing intervals (1, 3, and 7 days) based on prior studies (Carpenter et al., 2012). The sequence of the questions is based on learners’ prior-knowledge level. Through this adaptation, each learner can work effectively and efficiently.
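A practice schedule with the spacing intervals named above could be generated as follows. The sketch assumes that each interval is counted from the previous session, so that the gaps between sessions grow from 1 to 3 to 7 days; the text does not say whether the intervals are measured this way or from the end of the acquisition phase. The function name and the example date are hypothetical.

```python
from datetime import date, timedelta

SPACING_DAYS = (1, 3, 7)  # spacing intervals reported for the practice phase


def practice_dates(acquisition_day: date,
                   intervals: tuple[int, ...] = SPACING_DAYS) -> list[date]:
    """Return one practice date per interval, each counted from the previous session."""
    sessions = []
    current = acquisition_day
    for gap in intervals:
        current = current + timedelta(days=gap)
        sessions.append(current)
    return sessions


# Hypothetical acquisition date.
for session in practice_dates(date(2024, 10, 14)):
    print(session.isoformat())
```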

Change of Habits – the Application Phase

In this step, we help students to apply their consolidated knowledge of favorable learning strategies in their everyday learning. For this purpose, our training is connected to a university course on developmental psychology. Even when learners know about favorable learning strategies, they often fail to apply them on their own (i.e., production deficiency). Support must usually be provided so that students can fully exploit their strategies when learning. A central problem is that students have already acquired the habit of using certain learning strategies that they find helpful. They may therefore be unwilling to change to unfamiliar strategies. In the last phase of our training, we wanted to modulate student behavior toward more favorable strategies.

Evaluation Experiments 3 and 4

There are several ways to help students apply their learning strategies. One well-evaluated method is to use strategy prompts. Prompts have been successfully used to boost students’ learning-strategy application, especially in research on journal writing (Berthold et al., 2007; Nückles et al., 2020; Roelle et al., 2017). Prompts are short sentences or questions provided by a teacher or a learning system that encourage the use of certain learning strategies. We were interested in whether we could identify another procedure that required less external guidance from, for example, a teacher. Especially in social and health psychology, the application of implementation intentions has proven useful in changing everyday habits (Gollwitzer & Sheeran, 2008). Implementation intentions are short sentences that should help to implement a certain goal behavior in a learner’s learning routine (e.g., to use certain learning strategies). For example, imagine that students are rereading content they think is important. They know that an elaboration strategy would probably help them to fully understand the contents. They decide to use more elaboration in their learning routine. In the first step, students have to choose a concrete strategy. For example, they have decided to implement their favorite elaboration strategy – one they had learned in our training module’s previous acquisition phase, namely to come up with their own example and combine it with the learning content. They set this concrete strategy as their goal behavior (second part of the implementation intention). The second step is to connect that goal behavior with a trigger condition. This trigger condition should be a situation in which the addressed behavior is favorable. To create that trigger condition, students should apply the conditional knowledge already learned. For example, the student could form the implementation intention “If I spot key content in a text (trigger condition) I’ll think of my own example (goal behavior).” In two classroom experiments, we tested whether these implementation intentions facilitated the application of learning strategies.

Method of Experiment 3

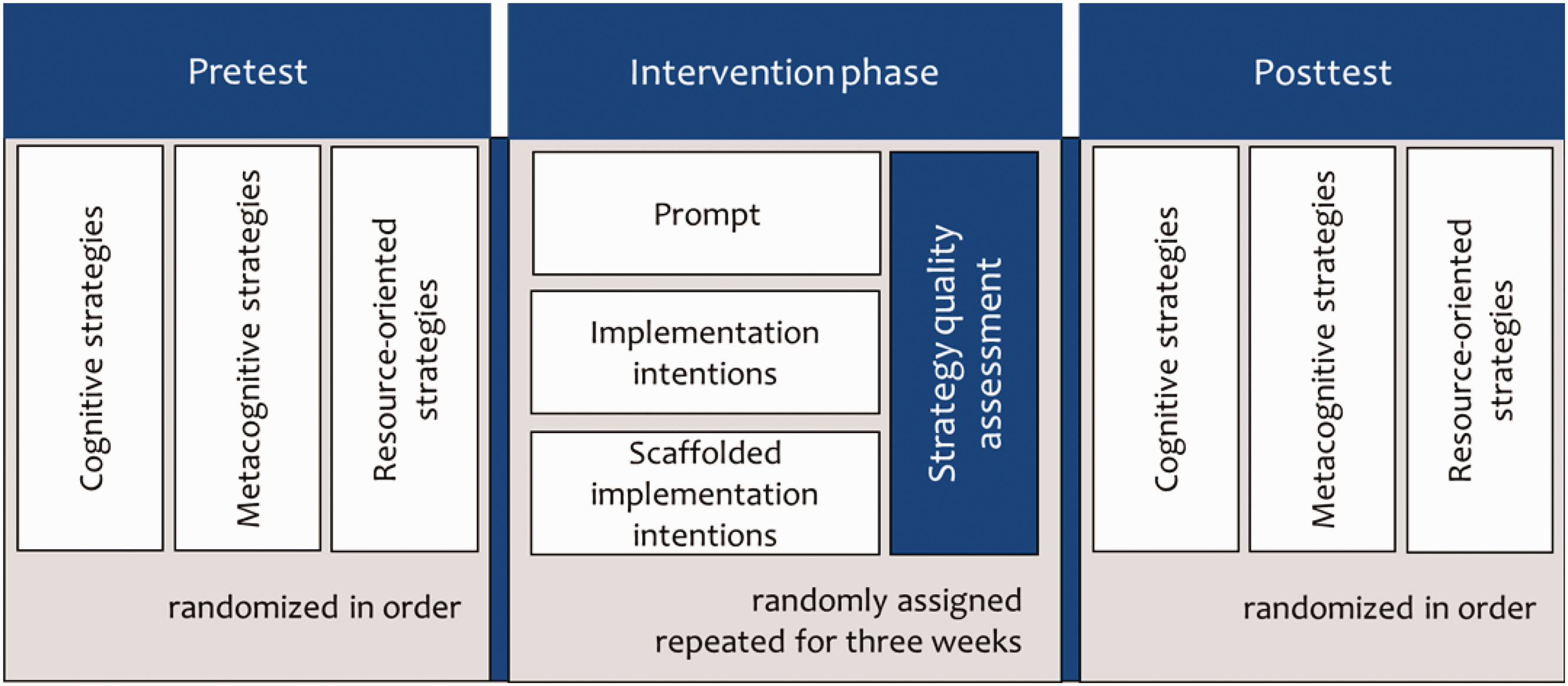

We compared three interventions that should boost students’ learning-strategy application in a between-subjects design (see Figure 5). The first was a prompting intervention. Students were provided with three types of prompts for each strategy group. Students were introduced to the concept and use of prompts via an instructional video. The second intervention was the introduction of implementation intentions. Students were introduced to the concept and use of implementation intentions via an instructional video. In addition, worked-out examples of implementation intentions were presented. Afterward, students had to form their own implementation intentions. The third intervention was a scaffolded-implementation intention intervention. In this intervention, we asked the students certain questions to help them form good implementation intentions. More specifically, they were asked what their learning goal was, what concrete strategy they planned to apply in their learning, and finally, which trigger situation they thought was appropriate for their goal behavior. After answering each question, the system came up with their implementation intentions, which they then had to learn by heart.
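The scaffolded procedure can be pictured as filling an if–then template from the learner's three answers. The sketch below is only an illustration of that idea: the class, the function, and the exact sentence template are hypothetical and do not reproduce the wording generated by the learning environment.

```python
from dataclasses import dataclass


@dataclass
class ScaffoldAnswers:
    learning_goal: str  # answer to: what is your learning goal?
    strategy: str       # answer to: which concrete strategy will you apply?
    trigger: str        # answer to: which situation should trigger it?


def compose_implementation_intention(answers: ScaffoldAnswers) -> str:
    """Combine the trigger and the goal behavior into one if-then sentence."""
    parts = {}
    for name in ("learning_goal", "strategy", "trigger"):
        text = getattr(answers, name).strip().rstrip(".")
        if not text:
            raise ValueError(f"{name} must not be empty")
        parts[name] = text
    # The learning goal guides the choice of strategy but is not part of the sentence.
    return f"If {parts['trigger']}, then {parts['strategy']}."


answers = ScaffoldAnswers(
    learning_goal="fully understand the contents",
    strategy="I will think of my own example",
    trigger="I spot key content in a text",
)
print(compose_implementation_intention(answers))
```

Requiring all three answers before the sentence is composed mirrors the step-by-step guidance of the scaffold: a learner cannot skip the choice of a concrete strategy or of a trigger situation.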

Figure 5. Experimental design of Experiment 3.

Undergraduate psychology students (n = 147, age: M = 20.09 years, SD = 3.80) were randomly assigned in a between-subjects design. Students went through the acquisition and practice phases as in previous experiments. Then students engaged in their assigned intervention for 3 weeks. The learning strategies’ application quality was assessed each week in the learning journal entries the students had to write. All learning journals were rated for quality of strategy use using a tried and tested coding scheme (Glogger et al., 2012). Before and at the end of the 3-week training module, students’ learning behavior was assessed via an adapted questionnaire (based on Wild & Schiefele, 1994) assessing the self-reported use of learning strategies in three representative categories. Learners had to rate how often they use certain learning strategies.

The quality of the implementation intentions was rated in the conditions with implementation intentions (implementation intention and scaffolded-implementation intention conditions). Each implementation intention was scored according to eight qualities: completion (if–then form), correct conditional clause, use of action verbs, naming a behavior relating to a learning strategy, quality of the described learning behavior, justification of the behavior, matching of trigger situation (if clause) and behavior (then clause), and anticipated contingency between trigger/cue and behavior. The maximum score for implementation intention quality was 5.

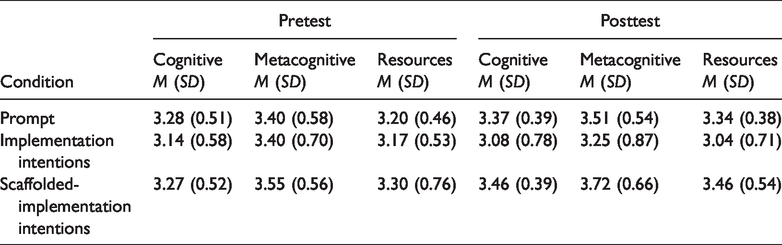

Results of Experiment 3

We conducted a MANOVA using the self-reported learning-strategy use in all three learning-strategy categories as dependent variables (see Table 3). There were no differences between groups before the intervention. The type of intervention influenced self-reported learning-strategy use, F(6, 202) = 2.79, p = .012, ηp2 = .077. This effect can be considered a medium effect. The conditions with prompts and with scaffolded-implementation intentions outperformed the implementation intention condition, F(3, 101) = 4.84, p = .003, ηp2 = .126. This effect can be considered a medium to large effect. There was no significant difference between prompts and scaffolded-implementation intentions, F(3, 69) = 1.34, p = .269, ηp2 = .056, BF01 = 0.92. As the overall quality of implementation intentions was still rather low (M = 3.06 of 5), we focused on improving them in the next experiment.

Table 3. Means and Standard Deviations (in parentheses) of Self-Reported Learning-Strategy Use in all Three Conditions (Experiment 3).

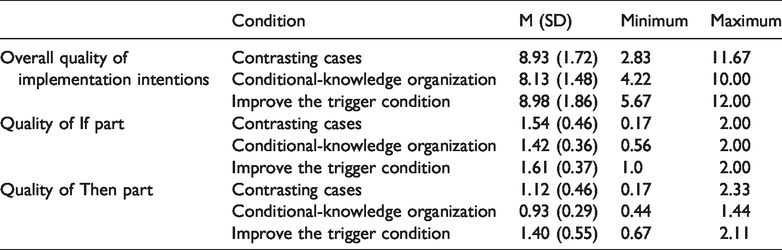

Table 4. Means and Standard Deviations (in parentheses) of Implementation Intention Quality in all Three Conditions (Experiment 4). Note that implementation intention quality comprised more aspects than the if part and the then part alone.

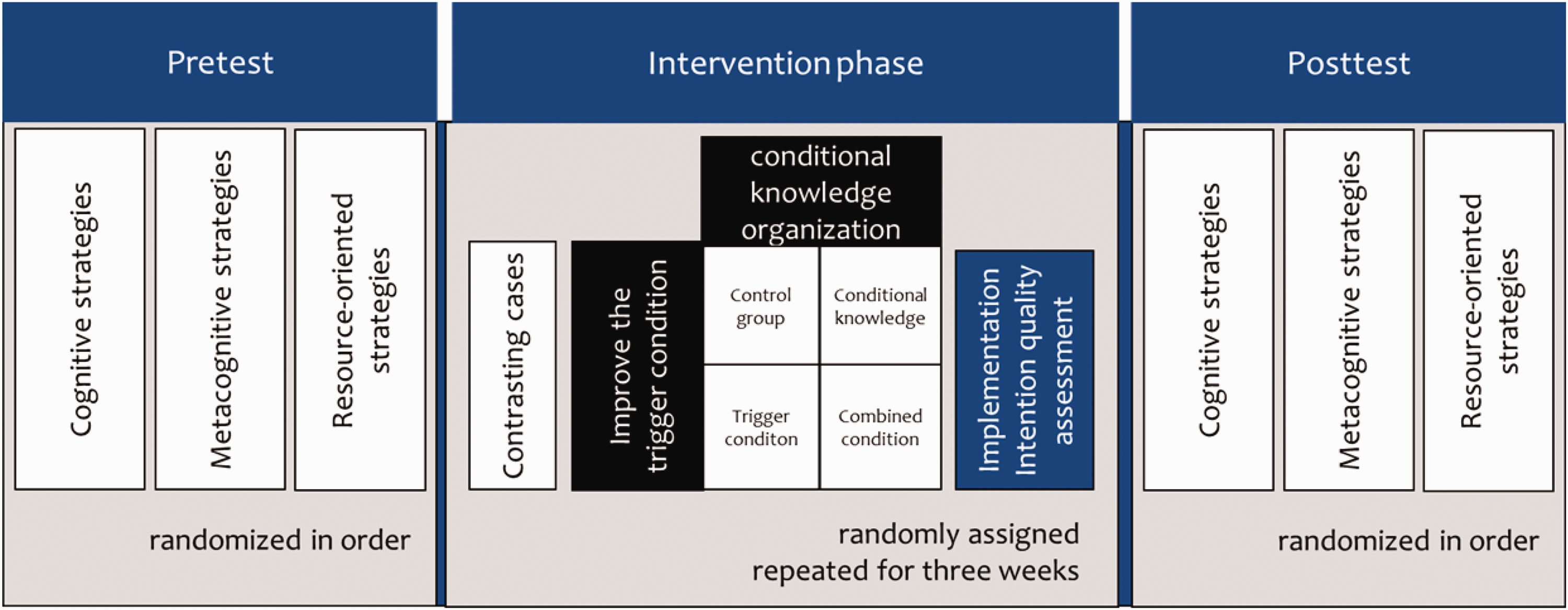

Method of Experiment 4

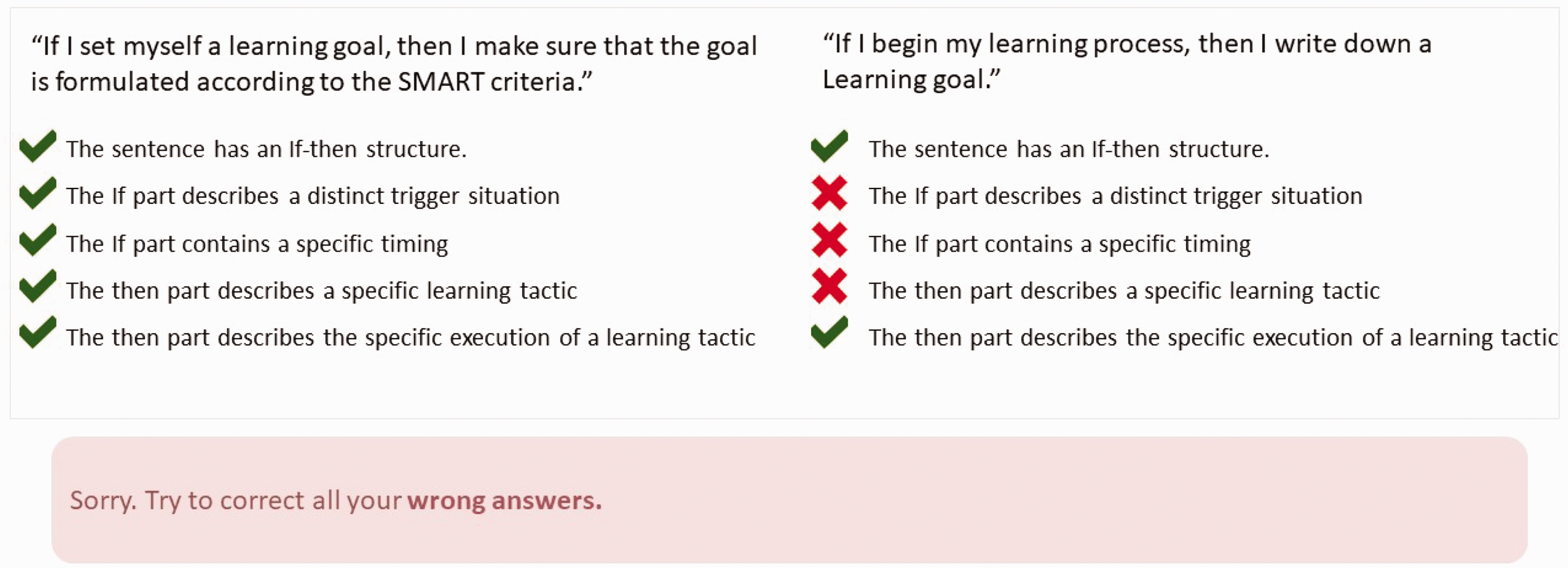

We compared two interventions to improve implementation intentions in a 2 × 2 between-subjects design (see Figure 6). Across all conditions, we implemented contrasting cases of good and poor implementation intentions. Students had to assess the contrasting cases by applying five rubrics to each of two contrasting examples. Learners had to decide whether or not each rubric category applied in the example they had just read (e.g., The “If” part of the sentence describes a distinct trigger-situation; see Figure 7 for all rubrics and an exemplary screenshot of our system). Each rubric had to be marked to show whether the category applied. There were three contrasting cases (six examples) of increasing difficulty. After marking, students got feedback for every rubric (see Figure 7 for English translations; please note that due to the translation, some text characteristics might have changed slightly. If you wish to see the original materials, please contact us).

Figure 6. Experimental design of Experiment 4.

Figure 7. Screenshot of contrasting cases presented in the learning environment (translated from German).

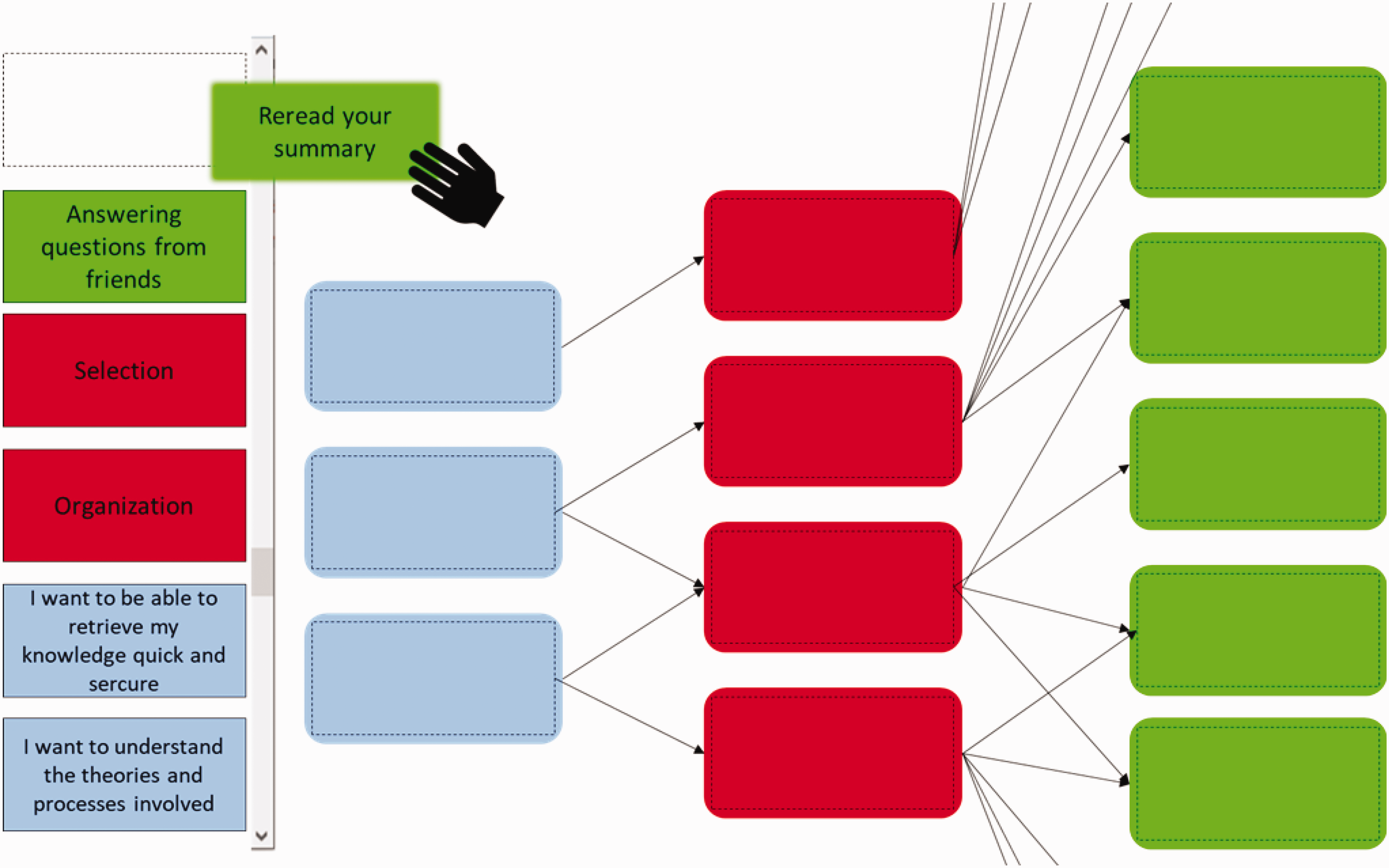

Figure 8. Screenshot of conditional-knowledge organization intervention in the learning environment (translated from German).

In the first intervention (conditional knowledge), we wanted to improve learners’ conditional-knowledge organization. In this intervention, the relation between certain learning goals and concrete learning strategies was emphasized. Students had to build a hierarchical structure using a drag-and-drop web interface (see Figure 8). Organizing content into this structure should improve conditional-knowledge organization.

The second intervention (trigger condition) should improve the trigger condition as part of a good implementation intention. From informal observations during our last experiment, we assumed that trigger situations would differ in their perceptibility. Some trigger situations require more metacognitive awareness (“If I realize I’ve misunderstood something …”) than others (“When I sit down at my desk …”). In this intervention, we first informed students about the difficulties in perceiving certain trigger situations. Afterward, we asked students to envision vividly certain trigger situations. For example, we asked them: “If you think of the last time you noticed a central concept in a text, how did you become aware of that?” or “How would you notice a central concept in a future learning situation?”

Undergraduate students (n = 103, age: M = 21.68 years, SD = 5.31) completed our learning-strategy-training acquisition and practice phase first. Afterward, we varied provision of the conditional-knowledge organization intervention and of the trigger-situation intervention in a 2 × 2 between-subjects design. In addition to the respective interventions, all students engaged in the contrasting-cases intervention. Over a 3-week period, students formed weekly implementation intentions for working on the contents of the related lecture on developmental psychology. We rated the quality of the implementation intentions as in Experiment 3. A questionnaire was used to assess student-perceived learning-strategy quantity and quality before and after the 3-week period. For this purpose, we developed a questionnaire based on several concrete learning strategies (e.g., mind mapping, thinking of one’s own examples). First, students answered binary items on several learning strategies. An item assessed whether they had used a certain learning strategy. If they answered yes to any of those items, they had to rate the self-assessed intensity and quality of the respective strategies on a 9-point Likert scale.

Results of Experiment 4

To analyze the effect of contrasting cases, we compared Experiment 3’s implementation intention quality (no contrasting cases) with Experiment 4’s implementation intention quality (contrasting cases, Table 4). There were no differences between groups before the intervention. Contrasting cases substantially improved implementation intention quality, F(1, 49) = 13.46, p < .001, ηp2 = .215. This effect can be considered a large effect. Neither the conditional-knowledge organization intervention nor the trigger-situation intervention influenced implementation intention quality: conditional-knowledge organization: F(1, 49) = 0.049, p = .827; trigger situation: F(1, 49) = 0.351, p = .556; interaction: F(1, 76) = 0.214, p = .645. Nor was there any difference in perceived learning strategy use between conditions. We observed no influence of implementation intention quality on learning-strategy application. Only contrasting cases improved implementation intentions and strategy application.

Implementation in the Tool

We found that it is important to provide guidance to students in forming specific implementation intentions by means of a step-by-step procedure. The scaffolding procedure increased the application of learning strategies more than the implementation intention intervention without scaffolding. Contrasting-cases guidance was most efficient in increasing implementation intention quality further. Forming an implementation intention does not, however, seem to be enough to change students’ habits completely. Students failed to apply the favorable strategies they had been learning about.

General Discussion

We have shown that our strategy-training module can increase students’ motivation, consolidate their knowledge about learning strategies, and help them apply these strategies in a connected seminar. As our training module was implemented in a real-life setting, and for ethical reasons, we wanted all our students to profit from the training intervention, which is why we did not implement a no-training control group for our research. The control groups, especially in Experiments 3 and 4, were not just passive control groups; they represented the level of training we provided in previous years. As these interventions are already superior to no intervention at all, the effect sizes of these studies would probably be even larger were they compared with passive control groups.

As students did not use the favorable strategies they had been learning about, even after our final intervention, we included a face-to-face intervention supporting habit-changing in a current experiment. More specifically, we implemented the Study Smart training awareness session (Biwer et al., 2020) into our training. This session’s main goal is to increase students’ intention to change their learning behavior. This stronger intention to change should, in turn, increase subsequent use of favorable learning strategies. Preliminary results support the hypothesis that additional face-to-face training improved the intention to change.

Because our training was applied in an authentic environment, several ethical issues prevented us from examining students’ actual performance in the semester with our training as an independent variable. We therefore cannot know whether, after our learning-strategy training, these students actually scored better in their exams; answering that question would also have extended the scope of our paper. To clarify this issue, we have to rely on evidence from the literature that the efficient application of learning strategies increases students’ success at university (e.g., Donker et al., 2014).

During our experiments, we noted that several students already possessed knowledge of learning strategies and were able to apply them. Most students, however, exhibited suboptimal learning behavior. Even those students with solid knowledge and already using favorable strategies improved during our training. These results suggest that, right now, learning-strategy education is considered more and more essential, even before university education. Nevertheless, there are great differences between different freshman students when entering university. The wide variety of prior knowledge had a strong effect on our training modules’ efficacy, thereby highlighting the role of adaptability when teaching students about learning strategies in university.

Current Use of the Learning Environment

Our strategy-training intervention has now been implemented in the curriculum for freshman students at the Department of Psychology, University of Freiburg (Germany) in its current form. Around 120 students take part in our program every year. We evaluate and improve the training intervention continuously. For interested participants outside our psychology course, there is a free online version that anyone can use (https://elis.vm.uni-freiburg.de/free/). The free version includes the learning and practice phases, and is used by students from different majors every year. We recommend implementing strategy training within a university course in which opportunities and incentives are provided to apply these strategies. This implementation is necessary to exploit our tool’s full potential.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Instructional Development Award. The grant was awarded to Tino Endres, Jasmin Leber and Alexander Renkl.