Abstract

The monitoring by teachers of collaborative, cognitive, and meta-cognitive student activities in collaborative learning is crucial for fostering beneficial student interaction. In a quasi-experimental study, we trained pre-service teachers (N = 74) to notice behavioral indicators for these three dimensions of student activities. Video clips of student interactions in collaborative learning settings served as learning opportunities in the training and as test scenarios for measuring monitoring competency in an assessment tool. Participants completed the assessment tool before and immediately after the training program. A control group (N = 33) completed the assessment tool twice but did not receive training in between. Results show that monitoring competency increased significantly in the training group but not in the control group. This provides initial evidence that a video-based training program can effectively enhance pre-service teachers’ noticing of behavioral indicators of collaborative, cognitive, and meta-cognitive student activities in a relatively short time. Our findings are of practical relevance as evaluating student interactions in a collaborative learning setting is challenging, yet also a skill much needed in teacher practice.

Keywords

Collaborative learning is becoming increasingly important in today’s classroom (Green & Green, 2010). However, teachers often feel they cannot effectively implement collaborative learning (e.g., Gillies & Boyle, 2010). Indeed, Fürst (1999) found that teachers’ interventions often interrupt student interaction, thereby hampering the collaborative learning process. Thus, there is a need for teacher training (Gillies, Ashman, & Terwel, 2008; Ruys, Van Keer, & Aelterman, 2011) that fosters teachers’ competencies regarding the implementation of collaborative learning in the classroom (ICLC) (Kaendler, Wiedmann, Rummel, & Spada, 2015). These ICLC teacher competencies comprise the abilities to: plan student interaction; monitor, support, and consolidate this interaction; and finally, to reflect upon it (Kaendler et al., 2015; see also Pauli & Reusser, 2000; Ruys et al., 2011). We investigated whether a teacher training program can enhance teachers’ competency of monitoring student interaction during collaborative learning. Specifically, we focused on supporting teachers in monitoring interactions that, based on principles from educational psychology, make collaborative learning effective for knowledge construction, such as elaboration or comprehension monitoring. The training program used videos of student interactions to illustrate these interactions and to let participants practice monitoring.

Teachers’ monitoring of student interactions in the classroom is a demanding task as a classroom is characterized by a large number of events which happen rapidly and often at the same time (Doyle, 2006). While diagnosing students’ activities during collaborative learning, a teacher faces high information load as multiple groups of students are simultaneously engaged in different activities and thus need individual and adaptive teacher support (cf. van Leeuwen, Janssen, Erkens, & Brekelmans, 2015). The more groups there are to be observed and supported simultaneously by the teacher, the more the teaching quality may be hampered (Blatchford, Baines, Kutnick, & Martin, 2001). One reason is that teachers cannot continuously monitor all groups at the same time, but have to divide their attention and decide which group to monitor and support at any given point in time. This switching between groups makes diagnosing and adaptive support more difficult (cf. van Leeuwen et al., 2015). For trainee teachers, dealing with multiple groups of students is likely too demanding because they first have to learn to monitor the different student activities that occur in quick succession within a single student interaction.

In our pre-service teacher training, we therefore focus on pre-service teachers’ monitoring of only one group of students at a time, as switching between groups is very challenging, especially for the target group of beginning teachers. Monitoring only one student group is already demanding because teachers have to assess complex activities that occur during the student interaction within the group. We regard this competency as a prerequisite for dealing with multiple groups.

In the following, we first present the definition of collaborative learning used in our training program and explain how student interaction can be monitored, drawing on the construct of teachers’ professional vision (Sherin, 2001). Then, we describe the situated approach to developing professional vision in pre-service teachers underlying the training program. We discuss existing research on video-based training of professional vision and teacher training programs that foster ICLC teacher competencies. Finally, we present the design of the present teacher training program in detail before reporting and discussing data on its effectiveness.

Collaborative Learning

Collaborative learning can be defined as the process of two or more students working together to solve the group task at hand (cf. Renkl, 2007). They can achieve this by sharing their knowledge and thus building common ground and joint knowledge (Roschelle, 1992). In this sense, collaborative learning goes beyond cooperative learning because cooperation is defined as a situation where a group task is divided into independent subtasks to be solved individually and then to be assembled to form the final solution (Dillenbourg, 1999; Kaendler et al., 2015). Cooperation can take place during collaboration, but through joint knowledge building, collaboration is more than the sum of its parts (Kaendler et al., 2015). In this article, we focus on collaborative learning.

Collaborative learning has proven to be highly effective and often superior to individual learning in terms of academic achievement and attitudes (Kyndt et al., 2013; see also classic review articles, e.g., Lou et al., 1996; Slavin, 1980). However, its effectiveness largely depends on the quality of student interaction (Dillenbourg & Tchounikine, 2007), which can be evaluated on three dimensions, namely students’ (1) collaborative, (2) cognitive, and (3) meta-cognitive activities as defined in the following (see Baker, Andriessen, Lund, van Amelsvoort, & Quignard, 2007; Meier, Spada, & Rummel, 2007; Persico, Pozzi, & Sarti, 2010): (1) When students successfully collaborate with each other, they are actively engaged, build common ground, and share information and ideas (Johnson & Johnson, 1998). (2) Asking targeted questions and giving elaborate explanations (Webb, 1989), providing reasons for a line of argumentation, and comparing different solution paths (cf. Baker et al., 2007) are visible indicators of cognitive activities. (3) Meta-cognitive activities are indicated by comprehension monitoring, checking for errors (cf. Persico et al., 2010), as well as critical checking of ideas and the final solution (Bannert, 2003). When teachers want to evaluate the effectiveness of student interaction, they should monitor it along these three dimensions (Kaendler et al., 2015).

Teachers’ Professional Vision

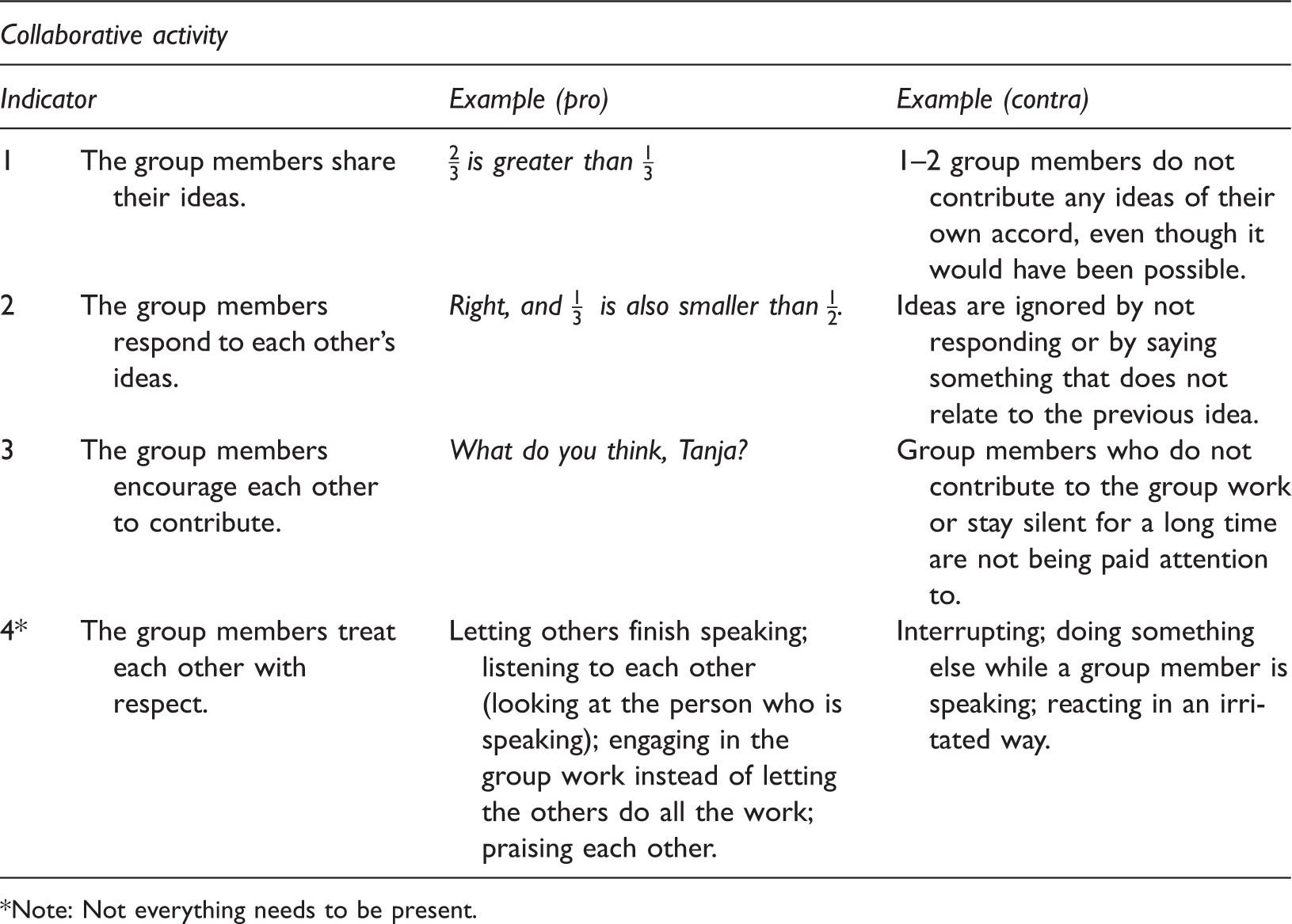

Table 1. Checklist of behavioral indicators (collaborative activity).

*Note: Not everything needs to be present.

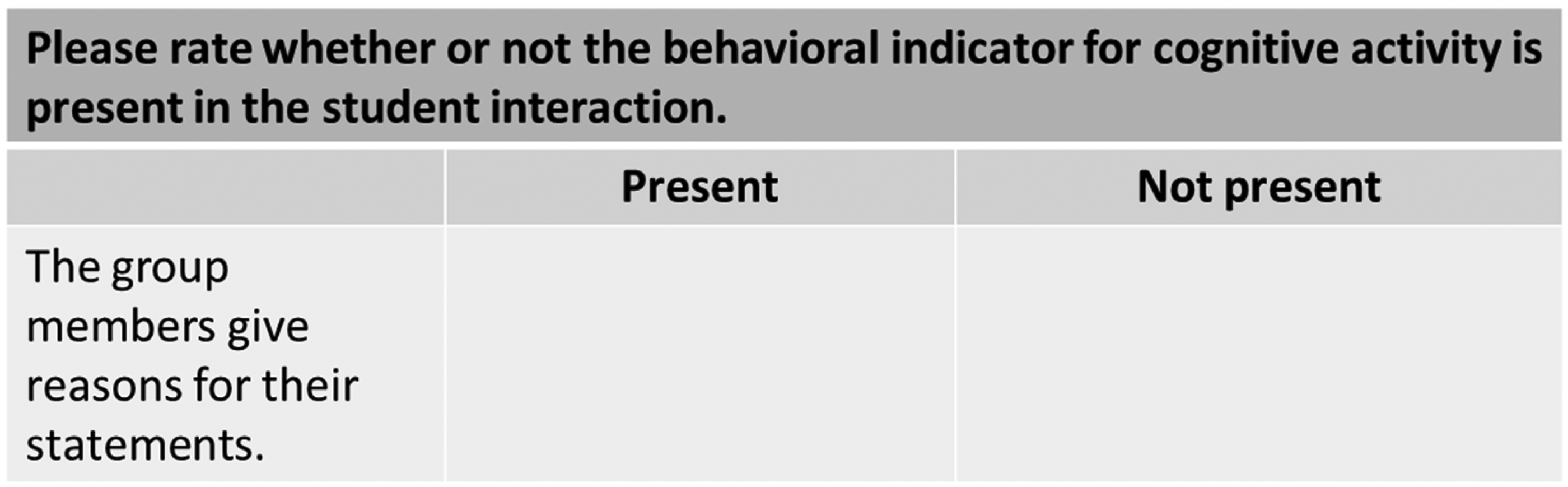

Table 2. Checklist of behavioral indicators (cognitive activity).

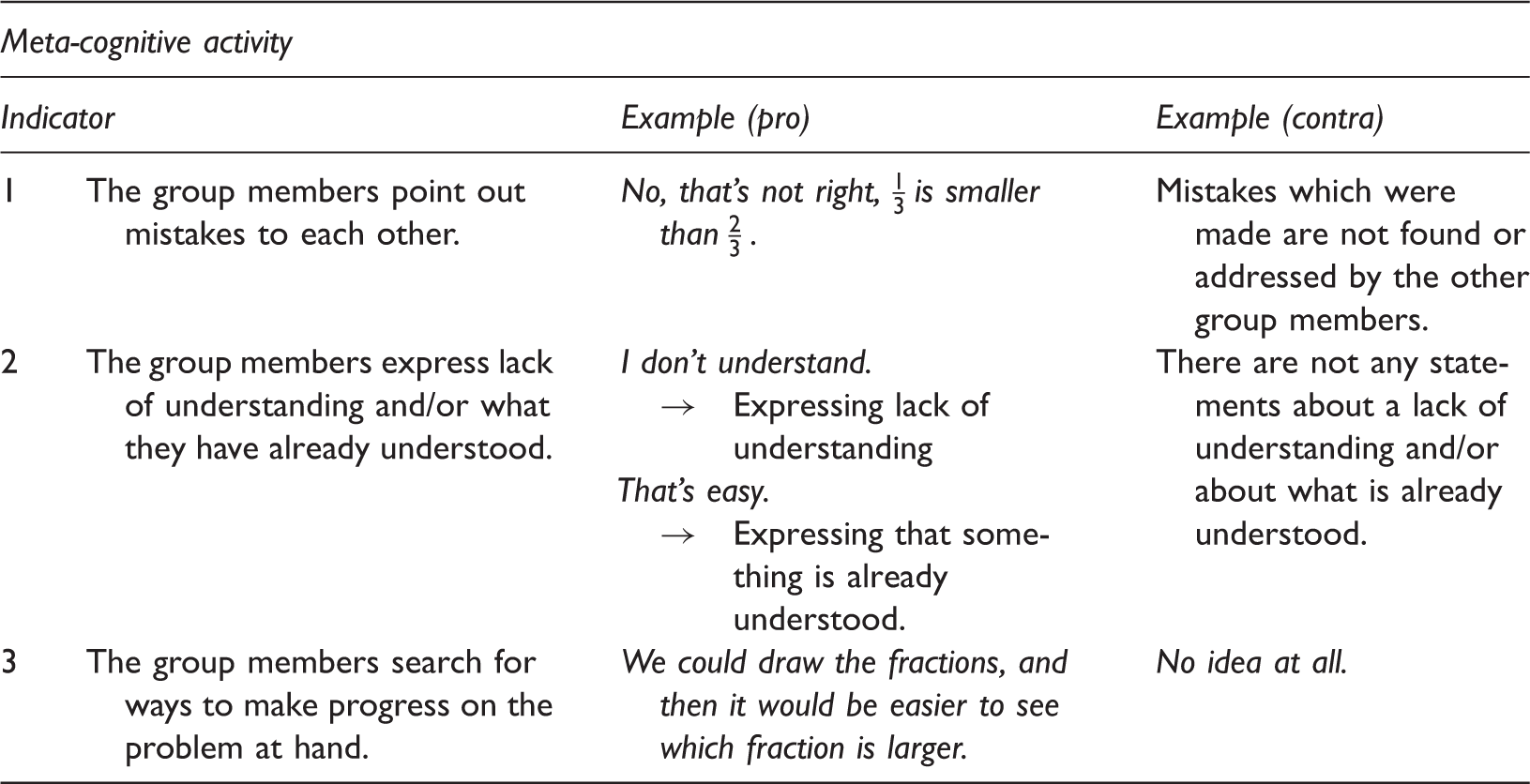

Table 3. Checklist of behavioral indicators (meta-cognitive activity).

The professional vision of teachers has been shown to positively affect student learning (e.g., Kersting, Givvin, Sotelo, & Stigler, 2009). One possible explanation is that the better teachers can monitor and evaluate their students’ needs, the better they can enhance their students’ learning by providing adaptive support. When monitoring student interaction, the teacher checks if students are following the prescribed activities, for instance of a given collaboration script (e.g., Fischer, Kollar, Stegmann, & Wecker, 2013), by attending to behavioral indicators. When the teacher observes a lack in beneficial student activities, he or she may decide to intervene and support the student interaction. Monitoring competency, regarded here as a form of professional vision, can therefore be seen as pertinent for enhancing beneficial student interaction, that is, enhancing students’ joint knowledge building and each group member’s individual learning gains. We use the notion of competency because, during the whole monitoring process, the teacher must act in a flexible and adaptive manner in response to the specific situation (cf. the definition of competency provided by Klieme and Leutner, 2006). For this competent behavior, situation-specific skills are needed, such as noticing, reasoning, and decision-making (cf. Blömeke, Gustafsson, & Shavelson, 2015). We concentrate on teachers’ noticing of crucial classroom events, which is the first step of professional vision and one facet of monitoring competency. This means in our study pre-service teachers are only required to detect students’ utterances and interpret them as indicators of collaborative, cognitive, or meta-cognitive activities during student interaction. Next, we describe video-based training programs to enhance professional vision.

Video-Based Teacher Training

Since professional vision and teacher competencies in general are specific to concrete classroom situations, training and assessing them require a situated approach (cf. Klieme & Leutner, 2006). Situated approaches postulate that learning must take place in the same situation in which the knowledge gained will be used in the future (Blomberg, Renkl, Sherin, Borko, & Seidel, 2013; Lave & Wenger, 1991).

One situated approach often applied to promote professional vision and competent teacher behavior is the vignette technique (Oser, Heinzer, & Salzmann, 2010). Written or video-based typical classroom scenarios are presented, and act as stimuli for the competencies, since the typical classroom scenarios evoke the performance of tasks which a teacher faces in the real classroom. These typical classroom scenarios simulate a real classroom situation, and thus prepare pre-service teachers for real life in the classroom (Butler, Lee, & Tippins, 2006). Pre-service teachers can work collaboratively on one scenario of a teacher–student or student–student interaction by observing and analyzing the situation, gathering strategies, and finally deciding on one strategy (Tribelhorn, 2007) without experiencing the pressure which they would be under in the real classroom.

A real classroom situation is very complex as many events happen at the same time. Acting in such a complex situation requires teachers to draw on general pedagogical knowledge to identify relevant student behavior in student interaction (Sabers, Cushing, & Berliner, 1991). In teacher education, videos serve to elaborate pedagogical concepts and situate them. That is, video scenes provide examples of crucial classroom events and thus make the pedagogical concepts behind these events more explicit, for example the concept of cognitive student activity behind students’ asking of questions and giving explanations. One effective instructional strategy is to first introduce the pedagogical concepts behind the crucial classroom events that pre-service teachers are expected to observe and then illustrate them with video clips (Gold, Förster, & Holodynski, 2013; Seidel, Blomberg, & Renkl, 2013). Thus, videos can be used for learning in a deductive way, as they illustrate pedagogical concepts for teaching that are explained before a video-based classroom scenario is watched (Seidel et al., 2013). Despite their proximity to real-life situations, videos remove the need for immediate action. Editing and segmenting classroom situations as well as giving control over the playback to the learners, as was done in the present study, reduces cognitive load and helps novices cope with the complexity of classroom instruction (Blomberg et al., 2013; Wouters, Tabbers, & Paas, 2007).

A video-based training format has often been implemented in the form of scheduled courses spanning one or two semesters (e.g., Gold et al., 2013; Seidel et al., 2013) and has proven to be effective for enhancing (pre-) service teachers’ professional vision and competencies. As an example of this use of videos, we refer to the video-based training by Seidel and colleagues (2013). Seidel et al. (2013) first introduced pedagogical concepts for teaching and then illustrated them with a concrete video-based classroom situation. They found that this training format enhanced descriptive pedagogical knowledge and the application of this knowledge for the purpose of evaluating video-based classroom situations. Further, this training format is appropriate for novice teachers as it is recommended that more instruction should be provided for novice teachers, while more experienced teachers should be provided with less instruction (Korthagen & Kessels, 1999).

Teacher Training for Implementing Collaborative Learning in the Classroom

The literature on teacher training for implementing collaborative learning in the classroom has mainly focused on essential pedagogical knowledge of collaborative learning (e.g., Gillies et al., 2008; Ruys et al., 2011), for instance collaborative principles such as individual accountability and social interdependence (Johnson & Johnson, 1998), or on communication skills to promote students’ thinking and learning (e.g., Gillies et al., 2008). Gillies and colleagues (2008) were able to show that teachers who were trained in communication skills asked more questions, thus better facilitating student interaction in the classroom, than teachers who had not received such training. Ruys and colleagues (2011) found that pre-service teachers’ self-reported skills in implementing collaborative learning such as organizational, socio-affective, and (meta-) cognitive guiding, as well as consolidating and evaluating collaborative learning, matured over the course of their induction phase.

The extensive literature regarding collaborative learning provides evidence for conditions under which collaborative learning is effective and for what beneficial student interaction in general should look like (e.g., Cohen, 1994). Students should possess basic collaboration skills, such as waiting their turn, listening to others, and responding to their ideas. These beneficial student interactions are described on a general level that applies across age and grade. The focus is mostly on cognitive and meta-cognitive engagement through collaboration (cf. Renkl, 2007; Van Leeuwen, Janssen, Erkens, & Brekelmans, 2013). To foster beneficial student interactions, the teacher should monitor and evaluate the quality of the ongoing student interaction by noticing indicators of collaborative, cognitive, and meta-cognitive student activities. In this article, we therefore focus on enhancing pre-service teachers’ monitoring competency, and more specifically on the first step of professional vision (see van Es & Sherin, 2002): noticing crucial behavioral indicators for the three dimensions of student activities (see Tables 1, 2, and 3).

Research Question

Research on video-based training found positive results regarding the enhancement of teacher competencies and pre-service teachers’ professional vision (e.g., Gold et al., 2013; Seidel et al., 2013). So far, training programs for implementing collaborative learning have been conducted without the aid of video. In particular, monitoring student interaction, which is a crucial competency for fostering beneficial student interaction, has not been focused on in teacher training programs. In our training study, we examined whether the first step of the competency to monitor student interaction, which is to notice beneficial behavioral indicators occurring in a collaborative learning setting, can be enhanced by a video-based training program.

Method

Research Design

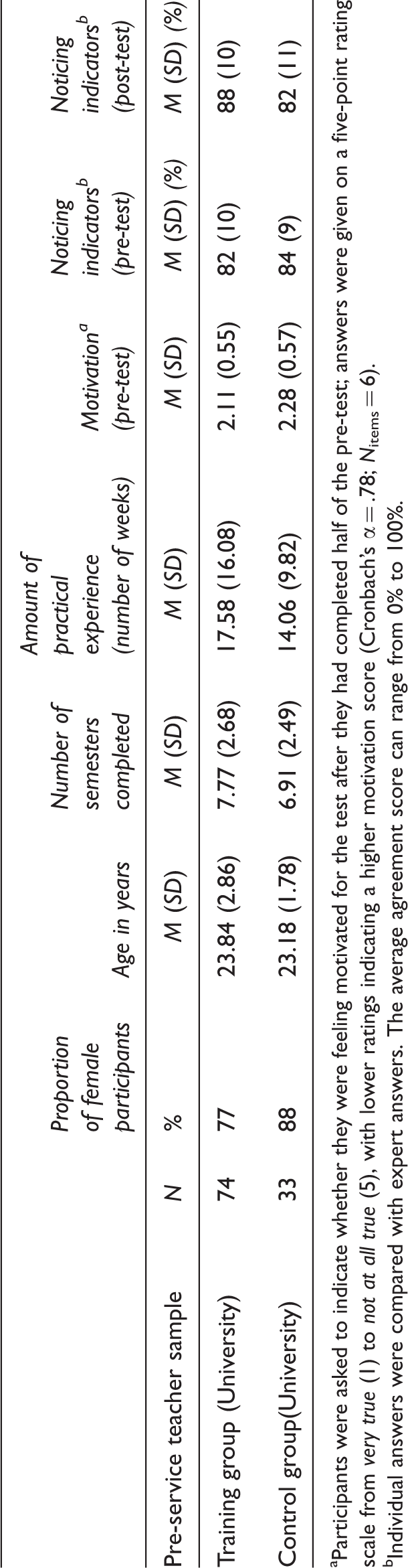

We examined the effectiveness of our training using a quasi-experimental control-group design. We compared a sample of pre-service teachers studying at university (on a course for future secondary education teachers) who had received the training (training group: N = 74) to a control group of pre-service teachers studying at the same university who had only completed the pre- and post-test (control group: N = 33).

Pre-service teachers were not randomly assigned to conditions (training group or control group), as the training program was implemented in scheduled pre-service teacher courses. Participation in the training study was voluntary. When the training took place in a course session, pre-service teachers received course credits or were paid for their time.

Participants

Characteristics of the two pre-service teacher samples.

Participants were asked to indicate whether they were feeling motivated for the test after they had completed half of the pre-test; answers were given on a five-point rating scale from very true (1) to not at all true (5), with lower ratings indicating higher motivation (Cronbach’s α = .78; six items).

Individual answers were compared with expert answers. The average agreement score can range from 0% to 100%.

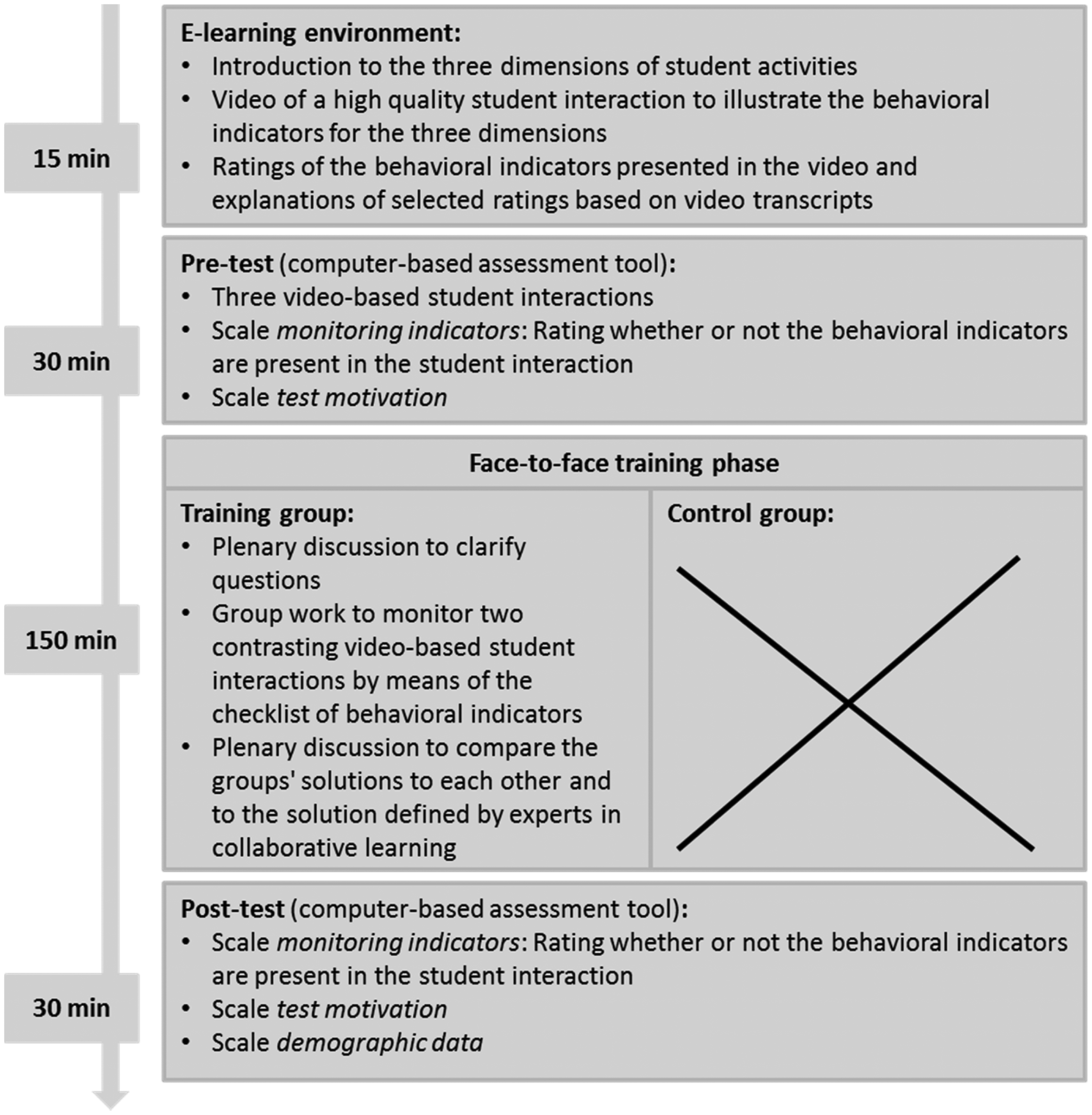

Procedure

Pilot studies had shown that prior knowledge of the concepts used in the assessment tool (see section Assessment Tool) is generally so low that without an introduction, the assessment cannot be meaningfully completed. Therefore, the study began with a brief introduction to the three dimensions of student activities and a video of a high quality student interaction with ratings of the behavioral indicators presented in this video. This introduction was delivered through an e-learning environment that participants could complete at their own pace. All participants then completed the assessment tool for the first time. Three video clips of student interaction were implemented in the computer-based assessment tool. To provide contextual information regarding the collaborative learning setting shown in each video, we presented the group task on which the students in the video are working and its solution. Group tasks were situated in mathematics, for instance tasks concerning fractions or equations.

The training group received training in noticing behavioral indicators in between the pre- and post-test phase. This training program took about 2.5 hours. The control group did not receive the training but participated in activities unrelated to the training for these 2.5 hours. All participants then completed the assessment tool with the same three videos for the second time. The study overall took about four hours. The procedure is presented in detail in Figure 1.

Figure 1. Training program lasting approx. four hours.

Implementation of the Training Program

We applied a situated approach to our training design by using the vignette technique to present video-based student interactions that occur in a collaborative learning environment. In our videos the participants were supposed to observe beneficial behavioral indicators for the three dimensions of student activities. Within the intervention social learning processes were encouraged, as we implemented collaborative learning and a plenary discussion to exchange ideas, reflect upon crucial student activities, and to develop a shared professional language about different indicators of student activities in collaborative learning (cf. Borko, Jacobs, Eiteljorg, & Pittman, 2008; see also Lave & Wenger, 1991).

The structure of our training followed the training format in which pedagogical concepts, namely the definition of the three dimensions of student activities and their behavioral indicators, are introduced first (cf. Seidel et al., 2013). The e-learning environment then provided a video of a high quality student interaction with ratings of the behavioral indicators presented in this video. Additionally, explanations of selected ratings based on video transcripts were presented. At this time, participants completed the pre-test. Then, the face-to-face phase of the training began: participants watched two contrasting video clips of student interaction (a better student interaction versus a worse student interaction) and decided whether or not the behavioral indicators for the three dimensions of student activities were present in the video clips. These were not the same videos as those used in the assessment tool. As for the videos in the assessment tool, participants received the group task on which the students in these new videos are working and its solution. During a collaborative learning phase, they exchanged their observations regarding the behavioral indicators, asked each other questions, explained their observations of the behavioral indicators, and finally decided on a joint group solution regarding the indicators which were present or not in the video-based student interactions. These solutions were then presented and contrasted in a plenary discussion that served to consolidate and reflect upon the pedagogical concepts, namely the three dimensions of student interaction and their specific behavioral indicators (cf. Borko & Putnam, 1996).

In the training phase, we provided pre-service teachers with a checklist of these behavioral indicators to facilitate their monitoring of the video-based student interactions (see Tables 1, 2, and 3). The checklist can be regarded as a form of scaffolding, as it directs pre-service teachers’ attention to crucial events that occur in a student interaction (cf. Kaendler, Wiedmann, Leuders, Rummel & Spada, 2013; Meier, Spada, & Rummel, 2007). The checklist includes a selection of beneficial behavioral indicators with positive and negative examples from student discourse.

Video Material

The video clips we used showed different types of student interaction that, according to the literature, promote learning to different extents depending on their quality. Each video focused on the collaborative efforts of one single group: three students at the age of 13 years were shown collaborating during a small group interaction in the video. To better demonstrate the three dimensions of student activities along with their behavioral indicators, we used video clips in which student interactions developed according to a screenplay written by the first authors. Our video clips showed only short excerpts of a potentially longer student interaction of the type that can occur in a collaborative learning environment. They demonstrated different student behaviors within a very short time (about one minute) in order to sensitize pre-service teachers to the behavioral indicators for the three dimensions of student activities. In our scripted scenes, the different indicators received more emphasis than would be the case in a collaborative learning setting taken from a real classroom. Furthermore, to prevent cognitive overload, we allowed pre-service teachers to stop the video or watch it again as often as they wished (see Wouters et al., 2007).

In the following, we provide an excerpt of a video-transcript. The video shows three students, Tanja, Sarah, and Marius, who are working together on a mathematics task.

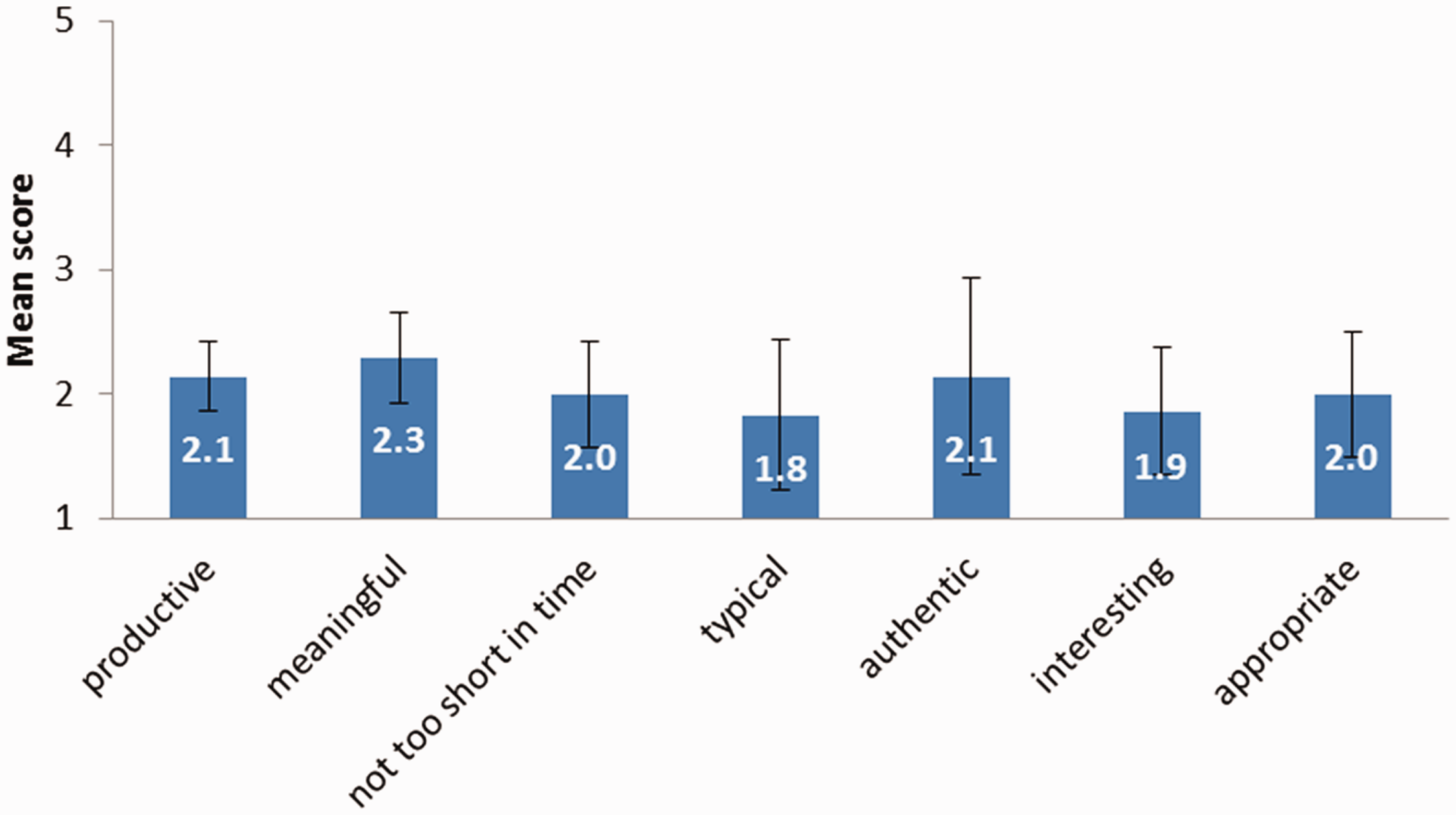

Figure 2. Teacher educators’ evaluation of seven characteristics of the video clips.

The goal of the task is to practice finding a common denominator. The task asks the students to compare several different fractions (

In this excerpt of a student interaction, students share ideas and respond to each other’s ideas, which indicates students’ collaborative activities. Further, Tanja and Sarah also explain their ideas, and thus show cognitive activities. Marius and Tanja express what they have already understood by commenting “That’s easy.” This utterance can be interpreted as indicating meta-cognitive activity. More meta-cognitive activity can be detected when Tanja searches for ways to make further progress on the task solution by drawing a pizza in order to see which fraction is larger.

The video material was newly developed for the training and therefore its appropriateness for training first had to be evaluated. Seven experienced teacher educators were asked to evaluate the usefulness of the video clips for teacher education. They were asked whether the clips were productive, meaningful, not too brief, typical, authentic, interesting, and appropriate on a scale ranging from 1 (= I agree) to 5 (= I do not agree). The mean scores of these seven items are displayed in Figure 2.

Figure 3. Example item of the scale monitoring indicators.

The characteristics of the video clips were evaluated positively, ranging from M = 1.8 regarding the typicality (SD = .75) to M = 2.3 regarding the meaningfulness (SD = .49). These positive evaluations were additionally confirmed by teacher educators’ statements on the characteristics of the video clips, such as:

“short sequences of a real collaborative learning environment,” “demonstration of diverse student behavior,” “focus on the three dimensions of student activities,” “didactic reduction with focus on one situation.”

Assessment Tool

The computer-based assessment tool which we used to evaluate our training was developed, evaluated, and refined in two pilot studies which are described in more detail by Wiedmann (2015). In the first pilot study, N = 67 pre-service teachers completed a first version of the assessment tool. Based on reliability analyses, a subset of items and videos was selected and presented to N = 11 pre-service teachers in a laboratory study using cognitive interviewing techniques such as retrospective probing (Ziegler, Kemper, & Lenzner, 2015). Items were further revised based on these results, resulting in the version of the assessment tool used in the present study. The assessment tool required participants to rate three videos of student interactions, similar to those used in the training, on 23 items on the scale monitoring indicators (Cronbach’s α = .66 at pre-test and .61 at post-test). The 23 items corresponded to the behavioral indicators for the three dimensions of student activities (collaborative, cognitive, and meta-cognitive activity); for an example item, see Figure 3. We did not use separate scales for the three dimensions because the evaluation of the assessment tool revealed that a single scale including all three dimensions was the most reliable.
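For readers unfamiliar with the reliability coefficient reported above, the following is a minimal sketch of how Cronbach’s α can be computed from item-level scores. It assumes dichotomous item scores (1 = rating matches the expert rating, 0 = it does not); the data and the function name `cronbach_alpha` are hypothetical and are not taken from the study.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a participants x items score matrix.

    scores: list of rows, one row per participant, one 0/1 score per item.
    Uses sample variances for both the items and the sum scores.
    """
    k = len(scores[0])  # number of items
    item_variances = [variance([row[i] for row in scores]) for i in range(k)]
    total_variance = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical data: four participants, three items.
scores = [
    [1, 1, 1],
    [1, 1, 0],
    [0, 1, 0],
    [0, 0, 0],
]
alpha = cronbach_alpha(scores)
print(f"alpha = {alpha:.2f}")
```

With 23 items and a much larger sample, the same formula applies; only the shape of the score matrix changes.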

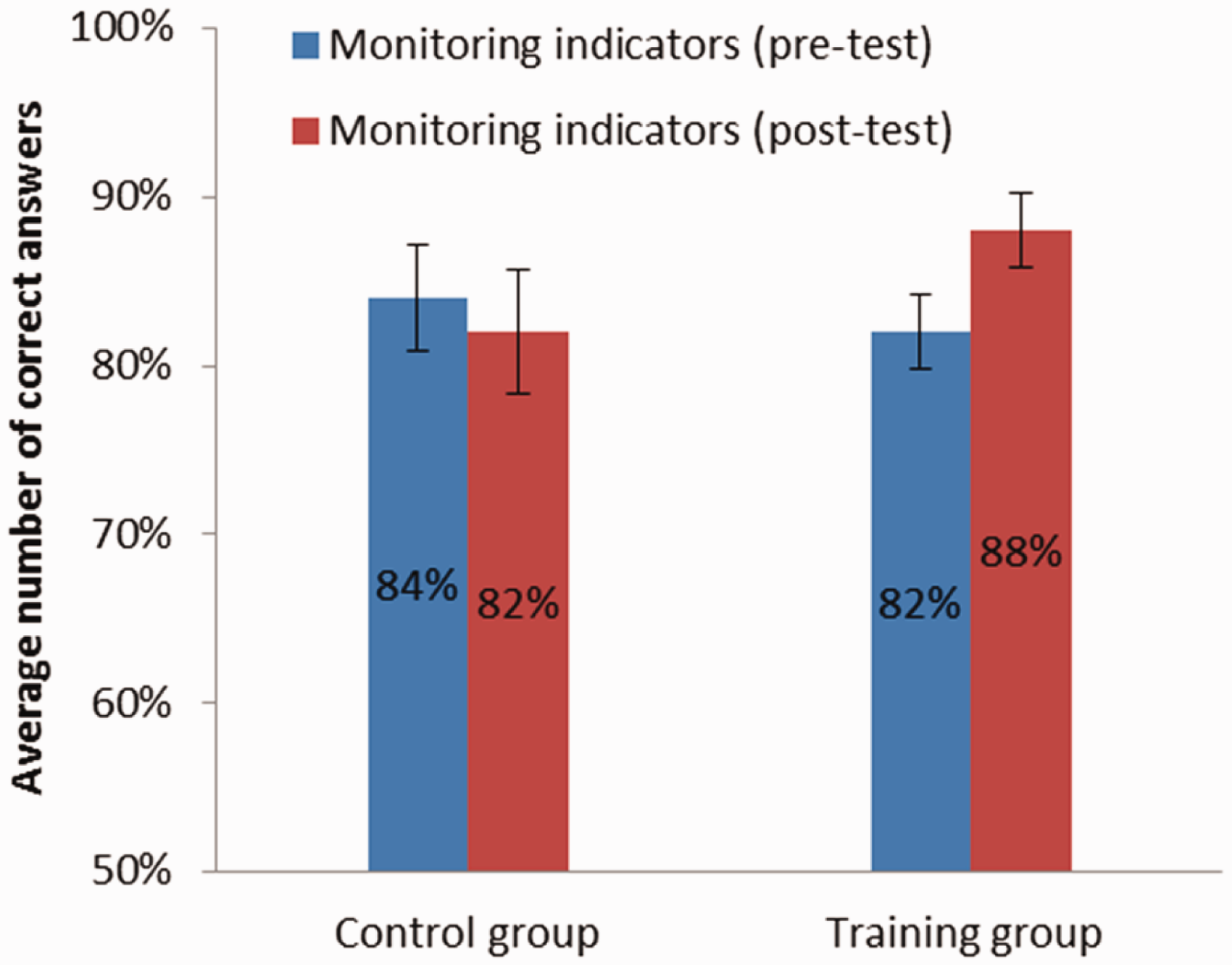

Figure 4. Competency in noticing behavioral indicators in student interaction at pre- and post-test.

In our training, we concentrated only on the competency of monitoring student interactions by noticing behavioral indicators for the three dimensions of student activities. In the pre- and post-test of our training study, participants worked on the same three video clips of student interaction which were implemented in the computer-based assessment tool. Similar to the group work in the training phase, participants rated whether or not the behavioral indicators for the three dimensions of student activities were present in the video clips. Participants’ ratings were compared to an expert rating, and an average agreement score was calculated by adding the number of matches (matching the expert rating was indicated by 1 and missing the expert rating was indicated by 0) and calculating the mean score.
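The scoring rule described above can be sketched as follows. This is an illustration only: the ratings are hypothetical, the function name `agreement_score` is our own, and ratings are assumed to be coded as present (True) or absent (False) for each item.

```python
def agreement_score(participant, expert):
    """Share of items (0.0 to 1.0) on which a participant matches the expert rating."""
    if len(participant) != len(expert):
        raise ValueError("rating vectors must have the same length")
    # A match with the expert rating is coded 1, a mismatch 0.
    matches = [1 if p == e else 0 for p, e in zip(participant, expert)]
    return sum(matches) / len(matches)


# Hypothetical ratings for five items (True = indicator present in the clip).
expert = [True, False, True, True, False]
participant = [True, True, True, False, False]
score = agreement_score(participant, expert)
print(f"agreement: {score:.0%}")
```

Multiplying the returned value by 100 gives the percentage agreement, which can range from 0% to 100%.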

The expert rating was provided by three teacher educators who were experienced in implementing collaborative learning in the classroom. The experts individually worked through the assessment tool, which contained an introduction to the concepts of observing indicators of collaborative, cognitive, and meta-cognitive activities. The experts first rated all items as the basis for a discussion to develop a common understanding of the concepts. As was expected due to the complexity of the subject, interrater reliability of these ratings was unsatisfactory, Krippendorff’s α = .34 (Freelon, 2010; Hayes & Krippendorff, 2007). The experts then proceeded to discuss their ratings, and explicated and resolved differences in their understanding for all but seven items. For the remaining items, the experts provided a consensus rating which was then used to assess participant ratings as described above.
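To make the interrater coefficient more concrete, the following is a minimal sketch of Krippendorff’s α for nominal ratings without missing values, computed from the coincidence matrix. The source does not state which variant or settings were used, so the nominal level, the hypothetical data, and the function name `krippendorff_alpha_nominal` are all assumptions made for illustration.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data without missing values.

    units: list of lists; each inner list holds the ratings that all
    raters gave to one item. Undefined if only one category is ever used.
    """
    # Coincidence matrix: every ordered pair of ratings from different
    # raters within an item contributes 1 / (m - 1), m = raters per item.
    coincidences = Counter()
    for ratings in units:
        m = len(ratings)
        if m < 2:
            continue  # an item rated only once carries no agreement information
        for a, b in permutations(ratings, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    # Marginal totals per category and overall number of pairable ratings.
    totals = Counter()
    for (a, _), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    return 1 - observed / expected


# Hypothetical data: three raters, four items (1 = present, 0 = absent).
ratings = [[1, 1, 1], [1, 1, 0], [0, 0, 0], [0, 1, 0]]
alpha = krippendorff_alpha_nominal(ratings)
print(f"alpha = {alpha:.2f}")
```

A value of 1 indicates perfect agreement and a value of 0 indicates agreement at chance level, which is why a coefficient of .34 is regarded as unsatisfactory.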

Results

To compare the university training group with the university control group, we calculated a repeated-measures ANOVA with time of measurement (pre- and post-test) as the within-subjects factor, group (training versus control group) as the between-subjects factor, and the average number of correct answers on the scale monitoring indicators as the dependent variable.
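In a design with two groups and two measurement points, the time-by-group interaction of such an ANOVA is equivalent to an independent-samples t-test on the gain scores (post-test minus pre-test), with F = t² and degrees of freedom (1, N − 2). The sketch below illustrates this equivalence with hypothetical gain scores; it is not the analysis script used in the study, and the function name is our own.

```python
from math import sqrt
from statistics import mean, variance


def interaction_f_from_gains(gains_a, gains_b):
    """Time x group interaction F for a 2 x 2 mixed design.

    gains_a, gains_b: post-test minus pre-test scores for each group.
    Returns (F, (df_effect, df_error)), where F is the squared pooled-variance
    t statistic for the difference in mean gains.
    """
    n_a, n_b = len(gains_a), len(gains_b)
    df_error = n_a + n_b - 2
    pooled_variance = (
        (n_a - 1) * variance(gains_a) + (n_b - 1) * variance(gains_b)
    ) / df_error
    t = (mean(gains_a) - mean(gains_b)) / sqrt(
        pooled_variance * (1 / n_a + 1 / n_b)
    )
    return t ** 2, (1, df_error)


# Hypothetical gain scores in percentage points.
gains_training = [10, 20, 0, 10]
gains_control = [0, 5, -5, 0]
f_value, df = interaction_f_from_gains(gains_training, gains_control)
print(f"F{df} = {f_value:.2f}")
```

For the sample sizes in this study (74 and 33), the error degrees of freedom are 74 + 33 − 2 = 105.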

We found a significant interaction between time of measurement and group, F(1, 105) = 12.87, p = .001, partial η² = .11; see Figure 4. Follow-up analyses (t-tests) revealed a significant increase in the average number of correct answers on the scale monitoring indicators from pre- to post-test in the training group, t(73) = 4.05, p < .001, d = 0.60 (medium effect).
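As a plausibility check, partial eta squared can be recovered from the reported F value and its degrees of freedom using the standard conversion F · df_effect / (F · df_effect + df_error). The short sketch below applies this conversion to the reported interaction; the function name is illustrative.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Convert an F statistic and its degrees of freedom to partial eta squared."""
    return f_value * df_effect / (f_value * df_effect + df_error)


# Values reported for the time x group interaction.
eta = partial_eta_squared(12.87, 1, 105)
print(f"partial eta squared = {eta:.2f}")
```

The result agrees with the reported effect size when rounded to two decimals.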

Discussion

Collaborative learning is an effective instructional approach, but teachers often do not feel confident about implementing it successfully. One competency teachers may lack is the competency to notice beneficial student interactions taking place. This is the first step of monitoring student interaction, followed by reasoning about the student interaction. This study aimed to show whether video-based training can successfully foster monitoring competency, specifically noticing beneficial student interactions, in teachers during the first stages of their education. The results revealed a moderate learning gain in pre-service teachers at a German university in comparison to a control group.

Supporting evidence for the robustness of this training effect comes from a comparison with two other cohorts that participated in the training. The two cohorts were either enrolled in (N = 41) or had completed (N = 42) the teacher training program at a university of education for future primary school and lower/medium-track secondary school teachers. The latter cohort continued their training in a practical year in which they taught a reduced course load and received additional training. Appropriate control groups for these cohorts were not available, and we thus cannot be certain that differences between pre- and post-test result from the training and not, for example, from a testing effect. However, the differences found in these cohorts are comparable in size to the training effect observed in the cohort for which a control group was available (Wiedmann, 2015). This provides initial evidence that the training can also be used effectively in other cohorts of pre-service teachers.

While our theoretical model of monitoring competency consists of three content dimensions, we have not evaluated whether the training might impact these dimensions differently. The assessment tool uses items from all three dimensions, but will need to be further developed to measure each dimension reliably.

Advantages and Drawbacks of the Training Program

The results of this study are in line with other teacher training programs that successfully enhanced professional vision concerning classroom management, for instance Gold et al. (2013). A notable difference is that most of the other training programs on professional vision took place during a whole semester (e.g., Gold et al., 2013; Seidel et al., 2013), while the present training lasted only four hours including the assessment phases. It can thus be regarded as very economical. This short duration enables the integration of the training into a scheduled course. Furthermore, the training can also be carried out in the framework of blended learning designs (see Lipowsky, 2010), as the e-learning environment is also suitable for working individually at home.

The current study investigated the training program as a whole. Two components of the program may have been particularly effective: the group work and the plenary discussion. They fostered the exchange of ideas, the reflection upon crucial student activities, and thus the development of a shared professional language about student activities in collaborative learning (see also Borko et al., 2008), that is, introducing the three dimensions and their behavioral indicators to pre-service teachers’ vocabulary.

Noticing behavioral indicators in student interactions is the first step towards a professional vision of student interaction. The next step is reasoning about these indicators. This could include evaluating the interaction, explaining what influenced the quality of the interaction (e.g., the collaboration script used and how well students followed it), and predicting what type of additional teacher support may be effective in improving the collaboration.

Monitoring competency thus interlinks with supporting competency, which is also relevant for teachers’ successful implementation of collaborative learning in their own classrooms. General pedagogical support strategies such as prompting and scaffolding should be complemented by the provision of instructional explanations, which requires additional pedagogical content knowledge. While we used videos focusing on student behavior for the noticing of behavioral indicators in student interactions, videos focusing on teacher behavior may be better suited to training supporting competency. We recommend using videos of best practice to explain different support strategies, followed by videos that show coping peer models (Schunk, 1987) and thus demonstrate typical teacher behavior in the classroom.

A limitation of our training study is that we used a quasi-experimental design without randomized assignment to conditions. Further, the training effect was measured directly after the training. Because the training was short and the assessments took place directly before and after it, it is not yet known whether the training effect is sustained or whether it transfers to practice. Our study did not include follow-up assessments; these are needed to assess the sustainability of the training effect. Collecting pre-service teachers’ behavioral data in a real classroom during collaborative learning would allow the transfer from training to practice to be tested.

To promote a longer-lasting training effect, additional sessions after the initial training phase may be needed. A longer training program could also promote the transfer of noticing behavioral indicators in student interactions into practice (Lipowsky, 2010). For pre-service teachers who are still studying, regular exercises with video clips could be implemented over the course of a whole semester (cf. Gold et al., 2013; Seidel et al., 2013) to allow for continuous practice, and thus promote the sustainability of the training. It would be interesting to examine whether the professional vocabulary of the three dimensions (collaborative, cognitive, and meta-cognitive activities) and their behavioral indicators would also be used in real classroom practice.

A relevant research question for future studies also concerns the conditions under which the monitoring competency can be transferred to practice. A real classroom is more complex, as multiple groups of students are simultaneously engaged in different activities and thus need individual and adaptive teacher support (cf. van Leeuwen et al., 2015). In the present study, we enhanced and investigated pre-service teachers’ monitoring of only one group of students for a given time, without switching between different groups. We thus reduced the complexity of a real classroom situation in order to decrease cognitive load for trainee teachers.

Advantages and Drawbacks of the Video Material

Generally, videos can never fully represent a real classroom situation and present only a selection that guides the viewer’s attention (Krammer et al., 2006). In teaching practice, events cannot be revisited but rather happen quickly, and the teacher’s attention may be distracted by several groups of students who are working simultaneously in the classroom. However, when training novices, this limitation turns into an advantage: editing and segmenting classroom situations as well as giving control over the playback to the learners, as was done in the present study, reduces cognitive load and helps novices cope with the complexity of classroom instruction (Blomberg et al., 2013; Wouters et al., 2007). In our video material the complexity of a real classroom situation was reduced, because we showed only one student interaction instead of several groups at once. Further, the video-based student interaction was less complex as the different indicators received more emphasis in our scripted scenes than they would receive in a real collaborative learning setting. The reduced complexity of our video scenes limits the ecological validity of our training material. The more complex the video scenes are, the more challenging it is to monitor them. However, less complex video scenes are needed initially in order to sensitize pre-service teachers to the indicators of relevant student activities during collaborative learning. In future training, video scenes with different levels of complexity could be presented with increasing complexity.

The participants of our training did not watch videos of their own classroom but were shown unfamiliar scenarios situated in a mathematics classroom. The majority of participants in the present study were pre-service teachers who had not studied mathematics. The video material thus presented a challenge for noticing behavioral indicators both in terms of subject matter and prior knowledge about the collaborative situation. However, Blomberg, Stürmer, and Seidel (2011) found that pre-service teachers’ familiarity with the subject matter in the video does not affect the quality of their professional vision. Still, as a precaution, participants received not only the group task before each video, but also its solution.

Presenting the group task also provided contextual information about the collaborative situation. However, the students shown in the video clips were unknown to the pre-service teachers. In teaching practice, teachers know their students and this prior knowledge facilitates their professional vision of crucial events in the classroom (cf. Van Es & Sherin, 2002). This is a limitation common to studies of professional vision (e.g., Seidel & Stürmer, 2014).

Conclusion

We designed and evaluated a video-based pre-service teacher training program to enhance teachers’ noticing of behavioral indicators in student interactions, which is the first step towards monitoring student interaction in collaborative learning. A situated approach, namely the vignette technique, was applied and didactic methods such as collaborative learning were implemented. To examine the effectiveness of our training, we used a quasi-experimental control-group design. The video-based training program was effective in terms of enhancing the first step of the monitoring competency of pre-service teachers. Our training can be regarded as a first and important step towards enhancing teachers’ competency in fostering beneficial student interactions, as noticing behavioral indicators is a necessary precondition for reasoning and thus for adaptive and individual support of student interaction. The program showed how videos can be used effectively in a relatively short time to enhance pre-service teachers’ noticing of behavioral indicators. As teachers often do not know what to observe and how to evaluate crucial student behavior in student interactions, this training provides a first approach to developing these skills, which are needed in teacher practice.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research reported in this article was supported by the Graduate School Pro|Mat|Nat (Educational Professionalism in Mathematics and Natural Sciences). Pro|Mat|Nat was a project of the Competence Network Empirical Research in Education and Teaching (KeBU) of the University of Freiburg and the University of Education, Freiburg. The Graduate School was funded by the federal state of Baden-Wuerttemberg, Germany.