Abstract

In three separate introductory psychology classes over a three-year period, I tested whether the exam scores of over 600 students were associated with their use of textbook technology supplements (TTSs). Each class used a different textbook and a different TTS (Learnsmart, PsychPortal, and Aplia). In general, students who used TTSs more performed better on their exams (controlling for GPA). Additionally, higher scores on TTS assignments correlated with higher exam scores (controlling for GPA). Students reported not having enough time or adequate motivation as the top reasons for not using TTSs. I present suggestions for the use of such systems based on how I required the aids over the years.

Introduction

Most introductory psychology textbooks have corresponding textbook technology supplements (TTSs; Sellnow, Child, & Ahlfeldt, 2005). Does using these TTSs help students learn better? Although the content and structure of these aids vary with the publishing company, each provides an attractive set of options to enhance student study behavior, metacognition, and learning (albeit at some additional financial cost). Whereas publishers claim the materials are designed using cognitive psychology best practices, and company white papers document learning system effectiveness, few independent classroom-based tests of these systems in psychology courses exist. Currently, efficacy studies are either ‘published’ as poster presentations or round-table topics, or are restricted to tests of single products (Van Camp & Baugh, 2014). I examined three different TTSs to determine whether students who used them scored better on exams, and I also examined student attitudes toward the software and their reasons for not using it.

A wealth of learning and memory research, both historical and contemporary, indicates that additional contact and interaction with material enhance understanding and retention (Ambrose, Bridges, DiPietro, Lovett, & Norman, 2010; Hattie, 2009; National Research Council, 2000). TTSs are a convenient way for students to gain additional contact with the material and to assess their knowledge. Different types of TTS are available, and several studies across different disciplines have tested the effectiveness of such software (e.g. Biktimirov & Klassen, 2008; Johnson, Gueutal, & Falbe, 2009; Luik, 2012; Sellnow et al., 2005). Unlike online classes, TTSs are designed to supplement regularly scheduled class meetings (Buckley, 2003). These programs have been used to increase active learning and to provide “dual coding” of the course material (Ransdell, 1992). Most programs assess what each individual student has learned, what they need to spend more time studying, and how much time they need to spend studying it (Griff & Matter, 2013). All the programs also allow repeated testing on the material. Given that cognitive psychology identifies repeated testing as a key tool for learning (Dunlosky, Rawson, Marsh, Nathan, & Willingham, 2013; Winne & Nesbit, 2010), the different TTSs should facilitate metacognition and enhance learning via a testing effect (McDaniel, Anderson, Derbish, & Morrisette, 2007).

Previous research on TTSs shows mixed effects. Whereas some studies show no positive effects on students’ overall grades or exam scores (Griff & Matter, 2013; Halbert, Kriebel, Cuzzolino, Coughlin, & Fresa-Dillon, 2011; Sellnow et al., 2005), others do find TTSs effective (Burch & Kuo, 2010; Nguyen & Trimarchi, 2010; Smolira, 2008; Van Camp & Baugh, 2014). Whereas some of the mixed findings may be due to the different designs of the technology and the types of questions used (e.g. short answer versus multiple-choice), some of the differences may also result from how the technology is assigned by the instructors. Only one previous study looked at psychology courses. Van Camp and Baugh (2014) found that MyPsychLab use was associated with higher scores for individual students but making TTS use a requirement did not improve the overall class grade or passing rate. What about other TTSs in psychology?

I tested three TTSs – Learnsmart (McGraw-Hill Higher Education), PsychPortal (Worth), and Aplia (Cengage) – in three separate classes over three years. All three systems provide students with opportunities to test their knowledge using a variety of formats. Given the benefits of practice testing (Dunlosky et al., 2013), I tested whether students who made more use of TTSs, both by scoring higher and by spending more time on them (regardless of the points gained in the exercises), would also score higher on their course exams. I also wanted to extend previous research by examining student attitudes toward TTSs.

Method

Participants

Students from three of my introductory psychology classes at a mid-sized Midwestern North American university participated in this study over a period of three years. Although I required use of the online study aid as part of the class and counted it toward the course grade, I invited students to complete assessments of the software and of their study behaviors as a bonus activity (extra points awarded on the final exam). The university Institutional Review Board reviewed and approved all study materials and procedures.

In Year One, I tested Learnsmart with a class enrollment of 251 students (164 women and 87 men). I did not collect class year information. Of the 251 students in the class, 216 completed the entire study survey (see below) assessing TTS use. There were no differences in final class score between survey participants and nonparticipants.

In Year Two, I tested PsychPortal with a class enrollment of 247 students (177 women and 70 men). The majority were first-year students (65%); the remainder were second-year (18%), third-year (6%), and final-year students (9%). Of the 243 students in the class, 208 completed the entire survey. There was no significant difference in final class score between survey participants and nonparticipants.

Finally, in Year Three I tested Aplia with a class enrollment of 222 students (154 women and 68 men). The majority were first-year students (71%); the remainder were second-year (19%), third-year (7%), and final-year students (4%). Of the 222 students in the class, 167 completed the entire survey. There was a significant difference in final class score, F(1, 217) = 38.21, p < .001, with survey participants scoring higher (M = 86.80, SD = 8.22) than nonparticipants (M = 76.58, SD = 16.23).

Materials and Procedure

TTSs

Students completed assignments online at the publisher websites. The question formats available varied with the learning product, but I created two consistent types of assignments. Pre-lecture quizzes (PLQs) consisted of traditional multiple-choice quizzes, which the TTS graded automatically to yield a score equal to the number of questions answered correctly. Mastery-type assignments (MTAs) consisted of quizzes featuring a variety of question types. MTAs were spread out over the semester, each with a due date after which the assignment closed. Students received a free access code to the product with every new textbook; students purchasing used textbooks bought an access code for the product.

In Year One, students completed MTAs (10% of grade) and PLQs (15% of grade). This was the first time I had required MTAs or assigned part of the course grade to them. Accordingly, I decided to focus on how long students spent with the TTS rather than on their scores. The software provided a measure of the number of minutes spent on MTAs, which comprised a blend of question formats (multiple choice, true/false, fill in the blank, matching). Students had to take each PLQ (10 in total, each on a separate chapter) before I discussed the material in class. Students had three attempts per PLQ, and I counted the highest-scoring attempt. Each quiz had 10 multiple-choice questions based on the book, and students had 8 min per attempt. To reduce anxiety about forgetting a quiz or doing poorly on one, I told students I would drop their lowest quiz score (of 10) across the semester.
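As an illustration of how the Year One PLQ rules combine, the following Python sketch computes a PLQ percentage from a student's attempt scores. It is a hypothetical helper, not the grading code Learnsmart used: the function name `plq_percentage`, the equal weighting of quizzes, and the treatment of an untaken quiz as zero are assumptions.

```python
from typing import Sequence


def plq_percentage(attempts: Sequence[Sequence[int]],
                   n_questions: int = 10, max_attempts: int = 3) -> float:
    """Hypothetical PLQ percentage under the Year One rules as described:
    the best of up to three attempts counts for each quiz, and the single
    lowest quiz score across the semester is dropped.

    attempts[i] lists the number of correct answers on each attempt at quiz i;
    an empty list means the quiz was never taken (assumed to score 0).
    Quizzes are assumed to be weighted equally.
    """
    if len(attempts) < 2:
        raise ValueError("need at least two quizzes to drop the lowest one")
    best = []
    for quiz in attempts:
        if len(quiz) > max_attempts:
            raise ValueError("more attempts recorded than allowed")
        best.append(max(quiz) if quiz else 0)  # highest-scoring attempt counts
    kept = sorted(best)[1:]  # drop the single lowest quiz score
    return 100.0 * sum(kept) / (len(kept) * n_questions)
```

For example, a student who scored 6, 8, and 7 on the first quiz, 10 on each of the next eight, and skipped the last would have the skipped quiz dropped and receive about 97.8%.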

In Year Two, students completed MTAs (5% of grade) and PLQs (15% of grade) on PsychPortal. I lowered the weight of the mastery assignments in Years Two and Three because I allowed students unlimited time and attempts on them. I told students to first read the assigned chapter or pages and then take the PLQ on the TTS site. Students had three attempts per quiz, and I counted the highest-scoring attempt. Each of the 10 quizzes had 15 multiple-choice questions based on the book, and students had 12 min per attempt. As in Year One, I told students I would drop their lowest quiz score. The mastery quizzes were diagnostic study assignments designed by Worth to help students learn the material, with questions in formats such as true/false, matching, and applied multiple-choice questions based on videos or research.

In Year Three, students completed MTAs (5% of grade) and PLQs (15% of grade) on Aplia. The nine PLQs were designed to foster the ‘practice testing’ effect (Dunlosky et al., 2013) and to remind students that such testing is also a learning aid (hence I allowed multiple attempts). Students answered multiple-choice questions based on material presented in the textbook. Each quiz consisted of 10 questions drawn from a larger pool provided by the publisher. Students could attempt each quiz as many times as they wanted until they answered all questions correctly. Course points were awarded only for answering all questions on a quiz correctly, and all quizzes had to be completed to earn the full 5% of the grade. Students had access to each PLQ for a four-week period.

On each of the eight MTAs, students had three attempts at each question and received feedback on their answer along with an explanation. MTAs expanded on the concepts presented in the textbook and consisted of fill-in-the-blank, matching, true/false, and higher-level multiple-choice questions based on applied examples. Students could also save their work, return later to finish a question, and check their answer. The program automatically graded each quiz at its due date. The number of questions per quiz varied. Students had access to the quizzes for a three-week period.

Classroom assessments

In all three classes, participants completed in-class group exercises and participated in out-of-class research (a department requirement), in addition to taking four multiple-choice exams. Each of the first three exams consisted of 65 multiple-choice questions randomly selected from a pool of 100 and covered only one portion of the textbook (three chapters for Exam 1, two chapters each for Exams 2 and 3). The cumulative final exam consisted of 100 multiple-choice questions randomly selected from a pool of 225, 30% of which covered material from the first three exams. I used the same exams in all three years of the study.

Student survey

I created a survey to evaluate many elements of the class (e.g. instructor, class lectures) and to measure student attitudes and behaviors (e.g. note-taking, texting) as part of a larger study of learning. One section is relevant to the current study: to examine possible reasons for not using the TTS, I asked students to rate on a scale from 1 (Not at all) to 7 (Extremely) the extent to which each of 14 items (e.g. time, motivation) limited their use of the product.

During finals week in all three years, I emailed students a link to the student survey, created with Qualtrics software. The email read: “I would like to get a sense of what worked well and what could be done better. Your responses will help me understand your learning and improve this class. Completing them will give you 5 bonus points on the final exam.” On reaching the survey, students first read a consent form, which also informed them that they could earn the bonus points without completing the survey by reading a research article on studying and answering a series of short-answer questions about it. I told students that participation was voluntary, that I would not review responses until after I had submitted final grades, and that their answers would have no effect on their class grades or exam scores. I also told students that their grades and high school GPA would be linked to their survey responses. A third party downloaded the names of participants from the survey software. Once I had linked class scores and survey data, I deleted student names from the data file. The instructions also stated that I would use and discuss only group-level information and that individual responses would remain confidential.

Results

Year One

The amount of time spent on Learnsmart MTAs ranged from 0 to 1800 min (M = 304.38, SD = 347.01). The average MTA score was 8.58 (SD = 1.14), although I had told students that I would reward time spent online, not the number of correct answers. Nearly 20% of the class spent 30 min or less on the TTS across the entire semester. This may be because I did not set a priori guidelines for how much time online on mastery assignments would correspond to what number of points (e.g. 840 min and above = AB, or 88%). In response to this low participation rate, I increased the percentage of the class grade the assignments would count for in Year Two. Students’ scores on PLQs ranged from 0 to 100 (M = 85.84, SD = 11.40).

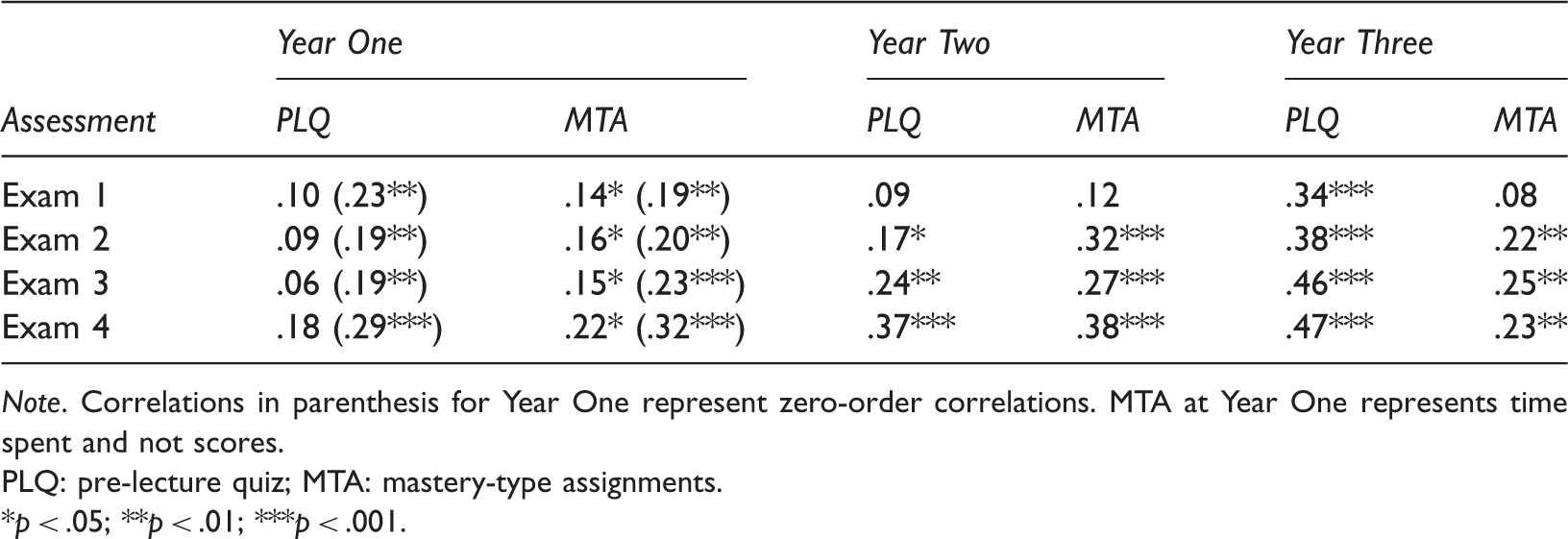

Correlations Between Graded and Practice Assignment Scores and Exam Scores Controlling for GPA.

Note. Correlations in parentheses for Year One are zero-order correlations. MTA in Year One represents time spent, not scores.

PLQ: pre-lecture quiz; MTA: mastery-type assignments.

*p < .05; **p < .01; ***p < .001.

In general, higher scores on the PLQs and MTAs were significantly associated with higher exam scores before controlling for GPA, but not once I controlled for GPA. Furthermore, the association grew stronger over time for graded assignments (the correlations with later exams were higher), although the differences between correlations were not statistically significant. Because a number of students did not report GPAs, it is not prudent to interpret the non-significant partial correlations for PLQs.
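The text does not state which test was used to compare correlations. If the comparison is between the correlations of one assignment score with an earlier and a later exam in the same class, the two correlations share a variable and come from the same students, so a test for dependent correlations is needed rather than a test for independent samples. One standard option is Steiger's (1980) Z, sketched below in Python under that assumption; `r_kh`, the correlation between the two exams, is not reported in the table and would have to come from the raw data. The sketch applies to zero-order correlations; for partial correlations, a common adjustment is to reduce `n` by the number of controlled variables.

```python
import math


def steiger_z(r_jk: float, r_jh: float, r_kh: float, n: int) -> tuple[float, float]:
    """Steiger's (1980) Z for two dependent correlations sharing variable j.

    r_jk: correlation of j (e.g. an assignment score) with k (an earlier exam)
    r_jh: correlation of j with h (a later exam)
    r_kh: correlation between k and h (the two exams)
    n:    number of students contributing to all three correlations
    Returns (Z, two-tailed p) using the normal approximation.
    """
    z_jk, z_jh = math.atanh(r_jk), math.atanh(r_jh)  # Fisher r-to-z transform
    r_bar = (r_jk + r_jh) / 2  # pooled estimate of the two correlations
    psi = r_kh * (1 - 2 * r_bar**2) - 0.5 * r_bar**2 * (1 - 2 * r_bar**2 - r_kh**2)
    c = psi / (1 - r_bar**2) ** 2  # scaled covariance of the two Fisher z values
    z = (z_jk - z_jh) * math.sqrt(n - 3) / math.sqrt(2 - 2 * c)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-tailed p under N(0, 1)
    return z, p
```

For instance, `steiger_z(0.40, 0.20, 0.60, 200)` tests whether a correlation of .40 differs from one of .20 when the two exams correlate .60; these numbers are invented for illustration. The more strongly the two exams correlate, the smaller the difference needed to reach significance.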

Year Two

PsychPortal did not allow easy access to time spent on the system, so I focus on students’ scores. Students’ MTA scores ranged from 0 to 10 (M = 7.29, SD = 3.06), and their PLQ scores ranged from 0 to 100 (M = 73.93, SD = 24.05). I computed partial correlations to test whether TTS use was associated with each of the four exam scores, controlling for student GPA gathered from university records with student permission. Because these GPA records were reliable, I do not report zero-order correlations. Students with higher MTA and PLQ scores had significantly higher scores on all exams except the first.
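The partial correlations reported here remove the variance that TTS scores and exam scores share with GPA. A minimal Python sketch of the first-order partial correlation formula, r_xy.z = (r_xy - r_xz*r_yz) / sqrt((1 - r_xz^2)(1 - r_yz^2)), follows; the function and variable names are illustrative, and the statistical software actually used in the study is not stated.

```python
import math
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_corr(x: Sequence[float], y: Sequence[float], z: Sequence[float]) -> float:
    """First-order partial correlation of x and y controlling for z,
    e.g. x = TTS score, y = exam score, z = GPA (illustrative names)."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz**2) * (1 - r_yz**2))
```

With `x` as a TTS score, `y` as an exam score, and `z` as GPA, the result equals the Pearson correlation between the residuals of `x` and `y` after each has been regressed on `z`, which is what "controlling for GPA" means here.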

Year Three

Cengage was not able to provide data on how long each student used the TTS material, so I again focus on students’ scores. Out of a possible 563 TTS PLQ points, students’ average score was 452 (SD = 113.96). Out of a possible 1000 TTS MTA points, students’ average score was 439.53 (SD = 374.28). Although this latter mean is low, students received points only if all questions were correct (no partial points were awarded). Many students did use the TTS assignments but may not have received points for them if they stopped before attaining mastery.

I conducted partial correlations to test if Aplia use was associated with each of four exam scores, controlling for student high school GPA (gathered from university records with student permission). Aplia PLQ scores were significantly associated with all exam scores. Aplia MTA scores were significantly associated with all exam scores except the first one after controlling for high school GPA. In general, the more students used the online study aid, the better they did.

General comparisons

For a more nuanced look at the results that accounts for variance shared among predictors and limits the family-wise error rate, I also conducted multiple linear regression analyses predicting final exam scores from TTS use or scores, prior (high school) or college GPA, and gender. TTS use and scores significantly predicted final exam scores (from p < .05 in Year One to p < .001 in Year Three), but these analyses add little detail beyond the correlations reported above, so full results are omitted for brevity (available on request).
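To make the regression step concrete, the sketch below fits an ordinary least-squares model in plain Python and returns the coefficients and R², the proportion of variance in the outcome accounted for (the kind of figure discussed in the limitations below). The function name, the predictor coding (e.g. gender as 0/1), and the choice to solve the normal equations by Gaussian elimination are assumptions made to keep the example dependency-free; the author's exact model specification is not reported.

```python
from typing import Sequence


def ols_fit(rows: Sequence[Sequence[float]], y: Sequence[float]) -> tuple[list[float], float]:
    """Ordinary least squares with an intercept; returns (coefficients, R^2).

    rows[i] holds the predictors for student i (e.g. TTS score, GPA, gender
    coded 0/1) and y[i] the outcome (e.g. final exam score).
    """
    X = [[1.0, *map(float, r)] for r in rows]  # prepend intercept column
    n, k = len(X), len(X[0])
    # Normal equations: (X^T X) b = X^T y
    A = [[sum(X[i][p] * X[i][q] for i in range(n)) for q in range(k)] for p in range(k)]
    rhs = [sum(X[i][p] * y[i] for i in range(n)) for p in range(k)]
    # Gaussian elimination with partial pivoting
    for col in range(k):
        piv = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[piv] = A[piv], A[col]
        rhs[col], rhs[piv] = rhs[piv], rhs[col]
        if A[col][col] == 0:
            raise ValueError("predictors are linearly dependent")
        for r in range(col + 1, k):
            f = A[r][col] / A[col][col]
            for c in range(col, k):
                A[r][c] -= f * A[col][c]
            rhs[r] -= f * rhs[col]
    # Back substitution for the coefficient vector b
    b = [0.0] * k
    for r in range(k - 1, -1, -1):
        b[r] = (rhs[r] - sum(A[r][c] * b[c] for c in range(r + 1, k))) / A[r][r]
    # R^2 = 1 - SS_residual / SS_total
    fitted = [sum(bj * xj for bj, xj in zip(b, row)) for row in X]
    mean_y = sum(y) / n
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return b, 1.0 - ss_res / ss_tot
```

For real data, a library routine such as `numpy.linalg.lstsq` or a statistics package is preferable, since solving the normal equations directly can lose precision when predictors are highly correlated.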

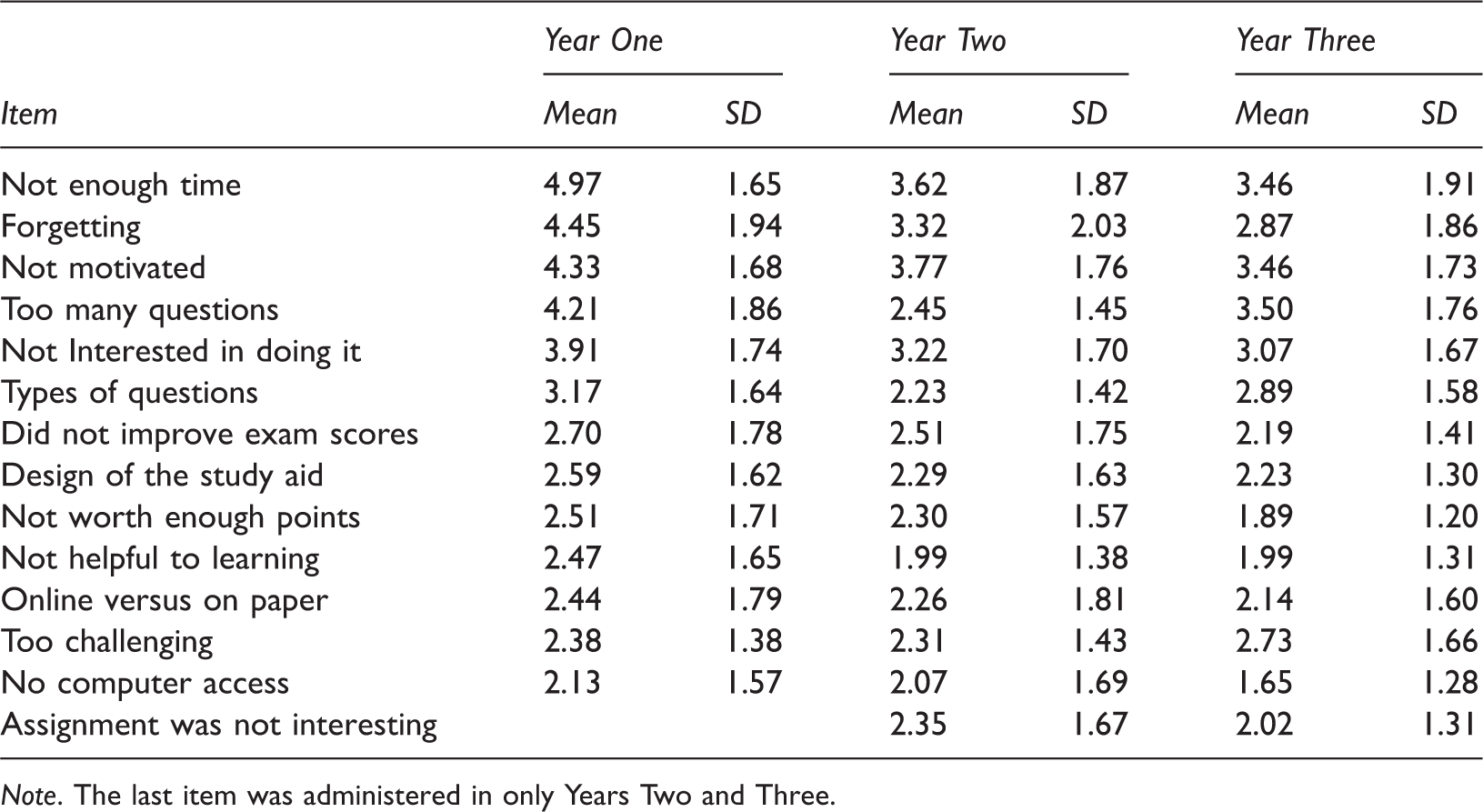

Reasons that Limit Student Use of Online Learning Study Aids (Means and Standard Deviations).

Note. The last item was administered in only Years Two and Three.

Although using different textbooks in each year precludes a formal comparison of the systems, changes in students’ reasons for not using the system may reflect deliberate changes I made in the class in response to the research. For example, in Year One students rated forgetting as a major reason for not using the system (M = 4.45, SD = 1.94). In the following years I instituted more reminders, and scores on this item dropped in Year Two (M = 3.32, SD = 2.03). After noting in Year One that not having enough time was a highly rated reason (M = 4.97, SD = 1.65), I increased the share of the course grade that TTS use counted for from 10% to 20%. This increase was associated with a drop in average scores on this item to 3.62 in Year Two and 3.46 in Year Three, a drop in scores on the ‘not worth enough points’ item from Year One to Year Three, and a drop in scores on the ‘motivation’ item.

Discussion

All told, a consistent pattern of results emerged across the three separate case studies: greater use of, and higher scores on, the required TTSs correlated with higher exam scores. For the most part, this association held even after controlling for GPA. The positive correlations could be influenced by how much students use the site and whether usage is required or rewarded. Online homework increases performance on exams and quizzes if students use the site regularly (Biktimirov & Klassen, 2008; Van Camp & Baugh, 2014), and students are more likely to use online study aids when they are required as part of a course (Sellnow et al., 2005).

The evidence is consistent with the testing effect (McDaniel et al., 2007) and with the possibility that TTSs serve as successful metacognitive tools (Ambrose et al., 2010; National Research Council, 2001, p. 78). In general, having students complete online exercises tied to their textbooks may improve their awareness of where they need to improve and where they have achieved mastery (Ehrlinger, Johnson, Banner, Dunning, & Kruger, 2008; Gurung & McCann, 2012). Furthermore, TTSs effectively shift control of these processes from the instructor to the learners themselves (Schunk, 2008). By having students complement their textbook reading, note-taking, and class attendance with active engagement with the content via the online systems, instructors can ensure that students have many opportunities to discover what they know and what they do not know.

Using TTSs is not without problems. One of the biggest problems associated with online homework is technical issues (Bridge & Appleyard, 2005): sometimes the learning systems crash, or students’ personal computers do. Students’ comfort with the site itself can be an important factor not only in usage but also in overall comprehension (Peng, 2009); students who feel less comfortable navigating the site are less likely to use it.

As higher education makes more use of technology to enhance the face-to-face experience, online learning systems are evolving. Over the last few years, publishers have modified their TTSs; for example, PsychPortal has been revised significantly, and a few years ago Aplia did not exist for psychology. The results of this study suggest that it is well worth the instructor’s effort to facilitate the use of online learning systems. Students appeared to benefit from each of the three learning systems, even though there were clear reasons why some students did not use the TTSs. My results suggest that changing how TTSs are introduced and the incentives for their use can change how much they are used. The more instructors can do to encourage TTS use, the better learning should be, although it is still not clear whether class grades will improve in general or only for individual students (Van Camp & Baugh, 2014).

The results are tempered by some key limitations. Foremost, the data are correlational, and many factors that influence exam grades were not measured in the current design. In fact, the multiple regression analyses did not account for more than 35% of the variance in exam scores. The study design also lacked a non-online control condition, in which a class did not use such a medium but had the study aids available in another format (hard copy) or had no study aids at all. Because TTSs get students to actively engage with the material, repeatedly test themselves, and space their learning (all facilitated by software), creating a true, ethically sound control is difficult. Although one can give students hard copies of material or study questions, or have them create their own study aids, none of these alternatives affords the interactivity of online software.

The fact that different textbooks accompanied each of the three systems introduces a major confound that is hard to control for. Differences in associations across the years could be due to the textbook used, although this possibility is limited because I kept exam questions constant and based them primarily on classroom lectures (whose content was mostly constant). At the same time, keeping a textbook constant would prevent the instructor from seeking the best textbook for students and from comparing different products.

These issues aside, the results of three separate cases and the consistent correlations between TTS use and exam scores suggest that instructors should require students to use TTSs and provide sufficient course credit for doing so. The results of this study provide strong correlational data linking online usage and exam scores. Using three different textbooks and three cohorts of students, and varying the reinforcement for online aid use across the three cases, allows for greater generalization of these findings, although the study was conducted at a single university and the instructor was constant. Because different textbooks accompanied each of the three systems, the systems cannot be compared across cases; such a comparison is a key step for future research. Learning platforms are now ubiquitous, and focused research comparing platforms with adequate control of important related variables (e.g. familiarity with technology, motivation) is critical for the advancement of teaching and learning.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Author biographies

Introductory psychology, Model Teaching Competencies, How students study.