Abstract

The advent of the integrated learning system (ILS) offers instructors opportunities to provide students with feedback-enabled, interactive learning exercises as online supplements to assigned textbooks. Major college publishers are now bundling an ILS with many of their textbook offerings in undergraduate courses in psychology and other fields. We incorporated an ILS in two separate classes in introductory psychology and evaluated the learning benefits of requiring ILS quizzing and concept-building exercises embedded within the ILS. The two classes were taught by different instructors using the same textbook and course examinations. We incentivized use of these ILS resources by allocating a substantial portion of the student's grade to performance on online assignments. Our findings suggest potential learning benefits of requiring these ILS resources. As learning benefits were limited to students in the upper third of the class distribution, additional efforts may be needed to help poorer performing students utilize online resources more effectively.

Introduction

The use of online technology to support student learning has undergone a number of changes that reflect the emergence of different pedagogical approaches (Amemado, 2014). Initially, online learning technology was primarily used to allow student access to course content (e.g., syllabi, lectures, announcements, assignments) through course management systems such as Blackboard and Canvas. The advent of interactive online technology offers a pedagogical advancement by incorporating feedback-enabled, knowledge-building activities (Stahl, 2006). An example of a more comprehensive digital learning solution is the integrated learning system, or ILS (also called integrated teaching system), which delivers instructional content along with student-paced interactive exercises designed to help students gauge their progress (Grabe & Grabe, 2003). In this report, we describe the incorporation and evaluation of two key features of an ILS, online quizzing and concept-building exercises, within two classes in introductory psychology.

Many publishers today offer integrated learning systems bundled with their textbooks in major fields of study. An example is WileyPLUS Learning Space, which combines academic content with interactive learning exercises that provide feedback to both student and instructor regarding the student's performance (Heider, 2015). Other ILS platforms include McGraw Hill's SmartBook and Cengage's MindTap. These platforms were created with the common goal of enriching online learning, but they differ in layout and design, resources offered, instructional content, and pedagogical approaches.

The adoption of online learning technology as an instructional resource requires time, effort, and added cost. Instructors need to evaluate the means of integrating these resources in course instruction, and their associated learning benefits, in order to best inform their decision to incorporate online instructional elements in their classes.

Despite the growing interest in online learning platforms, evidence of associated learning benefits remains inconclusive (Van Camp & Baugh, 2014). Prior research shows that students who more consistently use online quizzing, which is a major constituent of the ILS, tend to perform better on course examinations (Gurung, 2015; Van Camp & Baugh, 2014). This may warrant an effort on the part of instructors to facilitate more consistent use of ILS resources by students.

Early classroom-based research on online quizzing incorporated within an ILS platform was limited to single classes (Brothen & Wambach, 2001; Grimstad & Grabe, 2004; Johnson & Kiviniemi, 2009). Other studies that compared classes using online quizzing with traditionally taught classes lacking these online resources produced inconsistent results (see Bartini, 2008; Becker-Blease & Bostwick, 2016; Daniel & Broida, 2004; Van Camp & Baugh, 2014). Providing more meaningful incentives, such as crediting a greater portion of the student's grade to completion of online assignments, may be needed to boost utilization of online resources and potential learning benefits (Van Camp & Baugh, 2014).

Nevid and Gordon (2018) recently demonstrated significant learning benefits of an ILS (MindTap by Cengage Learning) in which substantial incentives were provided for completing online assignments incorporating quizzing and concept-building exercises. However, further evaluation is needed to gauge the learning benefits of using ILS resources in course instruction across different instructors and with students serving as their own controls.

In this report we describe the integration of an ILS in the context of a blended course involving regular classroom instruction with supplemental ILS assignments comprising online quizzing and concept-building exercises. Two instructors in introductory psychology (J. S. N. and M. T.) used MindTap (Cengage Learning), an interactive, online, student-paced ILS that provides students with individualized feedback. We also conducted an initial evaluation of ILS learning benefits by comparing student exam performance during alternating phases in each class in which these resources were either required or made optional. Additionally, we explored whether ILS learning benefits applied to both better-performing and poorer-performing students and evaluated utilization and perceived helpfulness of ILS resources.

Methods

Class Composition

The two introductory psychology classes comprised 144 undergraduate students at a large northeastern university. The university has a mission of service to low-income and traditionally disadvantaged and marginalized populations and registers the highest Pell-eligible rate among similar institutions in the USA (large Catholic universities). Many of our students are academically underprepared for college-level work, which underscores the importance of developing new educational techniques to help them succeed in the classroom.

The study was approved by the university institutional review board as meeting criteria for exempt status because the instructional materials and data collected were part of regular course offerings involving normal educational practices. The classes consisted of 57 first-year students, 53 second-year students, 20 third-year students, and 14 fourth-year students. The gender distribution of the student sample was 69.44% female and 30.56% male. As the evaluation of the ILS learning resources was incorporated within regular course offerings, no other demographic information about students was collected.

Procedure and Course Structure

The teaching method we evaluated is an ILS called MindTap (Cengage Learning), which is bundled by the publisher with electronic or print versions of some of its textbooks, including the text used in the present study (Nevid, 2015). The ILS is equipped with feedback-enabled quizzing and other study resources, such as concept-building exercises, flashcards for key terms, application exercises, author podcasts that preview each chapter, and links to content-related videos with accompanying quizzes. For the purpose of our evaluation, we limited the scope of the required ILS exercises to self-scoring quizzes (both module-based quizzes and full chapter quizzes) and concept-building exercises (Mastery Training by Cerego). Though these were the only required ILS instructional components, students had access to the full range of ILS resources and were encouraged to use them as study tools.

To maintain consistency between classes, we used the same textbook, ILS learning resources, and course exams in each class. To control for differences among students in different classes, as well as content differences across textbook chapters and exams, we used an alternating treatments reversal design (ABAB for one class versus BABA for the other) to assess ILS learning benefits. ILS assignments were required during “A” phases in each class and were made optional but recommended during “B” phases. By alternating phases, we were able to control for order effects and for differences in text material across different phases of the course. We administered in-class exams after each phase to assess student knowledge of textbook chapters assigned during that phase. Unlike previous research using non-equivalent classes as the basis of comparison, the present method relied on participants serving as their own controls by comparing their performance during “A” phases (ILS-required) and “B” phases (ILS-optional).
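The counterbalancing described above can be illustrated with a minimal sketch. The class labels `class_1` and `class_2`, and the assignment of ABAB to one and BABA to the other, are illustrative assumptions; the report does not state which class followed which order.

```python
# Illustrative sketch of the counterbalanced reversal design.
# Assumption: "class_1"/"class_2" are hypothetical labels; which class
# followed ABAB versus BABA is not specified in the report.
PHASE_LABELS = {"A": "ILS-required", "B": "ILS-optional"}


def phase_schedule(pattern):
    """Map each exam number (1-4) to the ILS condition of the phase it covers."""
    return {exam: PHASE_LABELS[code] for exam, code in enumerate(pattern, start=1)}


def exams_by_condition(schedule):
    """Group exam numbers by condition so each student's scores can be paired."""
    grouped = {label: [] for label in PHASE_LABELS.values()}
    for exam, condition in schedule.items():
        grouped[condition].append(exam)
    return grouped


schedules = {
    "class_1": phase_schedule("ABAB"),
    "class_2": phase_schedule("BABA"),
}

for name, schedule in schedules.items():
    print(name, exams_by_condition(schedule))
```

Because each exam is taken under the ILS-required condition by one class and under the ILS-optional condition by the other, differences in exam content are balanced across the two conditions.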

ILS-required assignments included online module and chapter quizzes for each assigned chapter in the accompanying textbook. The textbook consisted of 14 chapters corresponding to key topic areas in psychology, with each chapter further subdivided into instructional units or modules. The publisher-provided ILS quizzes were similar in style, format, and difficulty to the types of questions on the in-class exams, which assessed knowledge of textbook content from each of the chapter modules and the chapter as a whole. In total, there were 56 module quizzes of six multiple-choice questions each and 14 chapter quizzes ranging from 13 to 17 questions each. All quizzes were untimed. As a mastery-learning exercise, students had open access to the textbook while completing online quizzes, and they were permitted two retakes on each question in the quizzes. During ILS-required phases, students were also required to complete concept-building exercises (Cerego Mastery Training) incorporated in the ILS, which assessed mastery of 25 key concepts per chapter by successfully matching concepts to definitions.

To balance effort involved in completing ILS-required homework, we required students during ILS-optional phases to view psychology-related videos and complete multiple-choice quizzes based on the content of each video. We also assigned additional learning activities during ILS-optional phases that required students to post comments on the course management system (Blackboard) for assigned readings and designated TED talks that related to the content of corresponding chapters. However, knowledge of materials included in homework assignments during ILS-optional phases was not assessed in course exams.

We measured student performance on four non-cumulative, multiple-choice, in-class exams assessing knowledge of textbook material. Exam questions were drawn from the publisher-provided test bank and sampled items corresponding to lower, middle, and higher levels of cognitive complexity in Bloom's taxonomy of educational objectives (Anderson & Krathwohl, 2001; Bloom, Engelhart, Furst, Hill, & Krathwohl, 1956). Upon completion of the last exam, students responded to a survey question in which they rated the perceived helpfulness of the ILS in preparing for exams, using a four-point scale ranging from “not at all helpful” to “very helpful.”

Students obtaining 100% correct scores on ILS quizzes during ILS-required phases received two points per chapter credited to their final grades, or proportional credit based on percentage of items answered correctly. Students also received one point for achieving mastery of 25 concepts per chapter, or proportional credit for the number of concepts successfully mastered.

To create comparable incentives for non-ILS homework assignments during ILS-optional phases, students received 1.5 points per chapter for 100% correct performance on video quizzes, or proportional credit for less-than-perfect performance. They also received 1.5 points per chapter for posting comments on assigned readings and TED talks.

In total, a substantial portion of the student's overall grade (42 points out of a 100-point composite) was allocated to performance on homework assignments during ILS-required and ILS-optional phases of the course. The remaining points in the student's composite final grade were based on in-class minute quizzes (10 points total) and the four in-class exams (48 points total).
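The point allocation can be checked with a short arithmetic sketch. The per-chapter values and totals are taken from the text; the function name `proportional_credit` and the assumption that each of the 14 chapters falls in exactly one phase type are illustrative.

```python
# Point values as stated in the text.
CHAPTERS = 14
ILS_QUIZ_POINTS = 2.0      # per chapter, ILS-required phases
ILS_MASTERY_POINTS = 1.0   # per chapter, ILS-required phases
VIDEO_QUIZ_POINTS = 1.5    # per chapter, ILS-optional phases
COMMENT_POINTS = 1.5       # per chapter, ILS-optional phases
MINUTE_QUIZ_TOTAL = 10
EXAM_TOTAL = 48

# Assumption: each chapter is assigned under exactly one phase type, so a
# chapter is worth the same homework credit whichever phase it falls in.
required_per_chapter = ILS_QUIZ_POINTS + ILS_MASTERY_POINTS
optional_per_chapter = VIDEO_QUIZ_POINTS + COMMENT_POINTS


def proportional_credit(max_points, fraction_correct):
    """Credit earned when fewer than 100% of items are answered correctly."""
    if not 0.0 <= fraction_correct <= 1.0:
        raise ValueError("fraction_correct must be between 0 and 1")
    return max_points * fraction_correct


homework_total = CHAPTERS * required_per_chapter
composite_total = homework_total + MINUTE_QUIZ_TOTAL + EXAM_TOTAL

print(required_per_chapter, optional_per_chapter)
print(homework_total, composite_total)
```

Both phase types carry three points per chapter, which is what makes the incentives comparable and yields the 42-point homework total within the 100-point composite.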

Evaluation

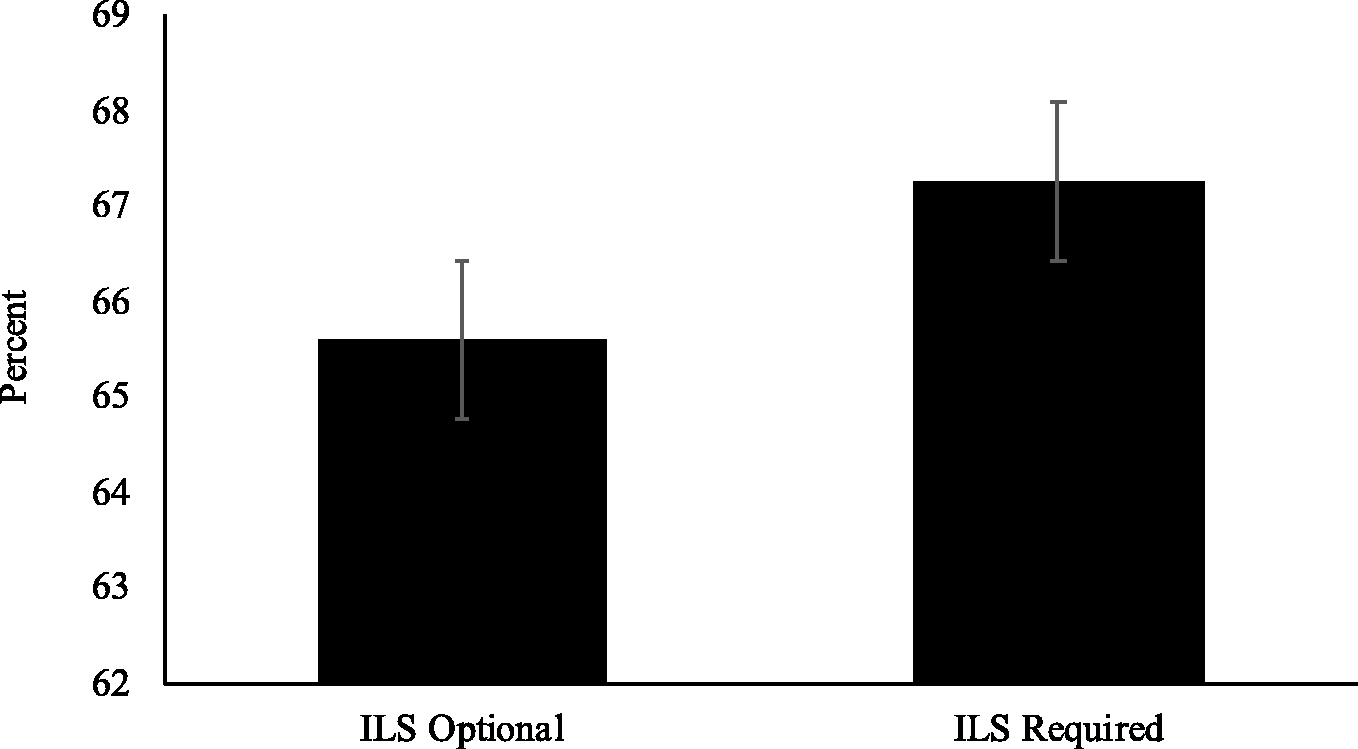

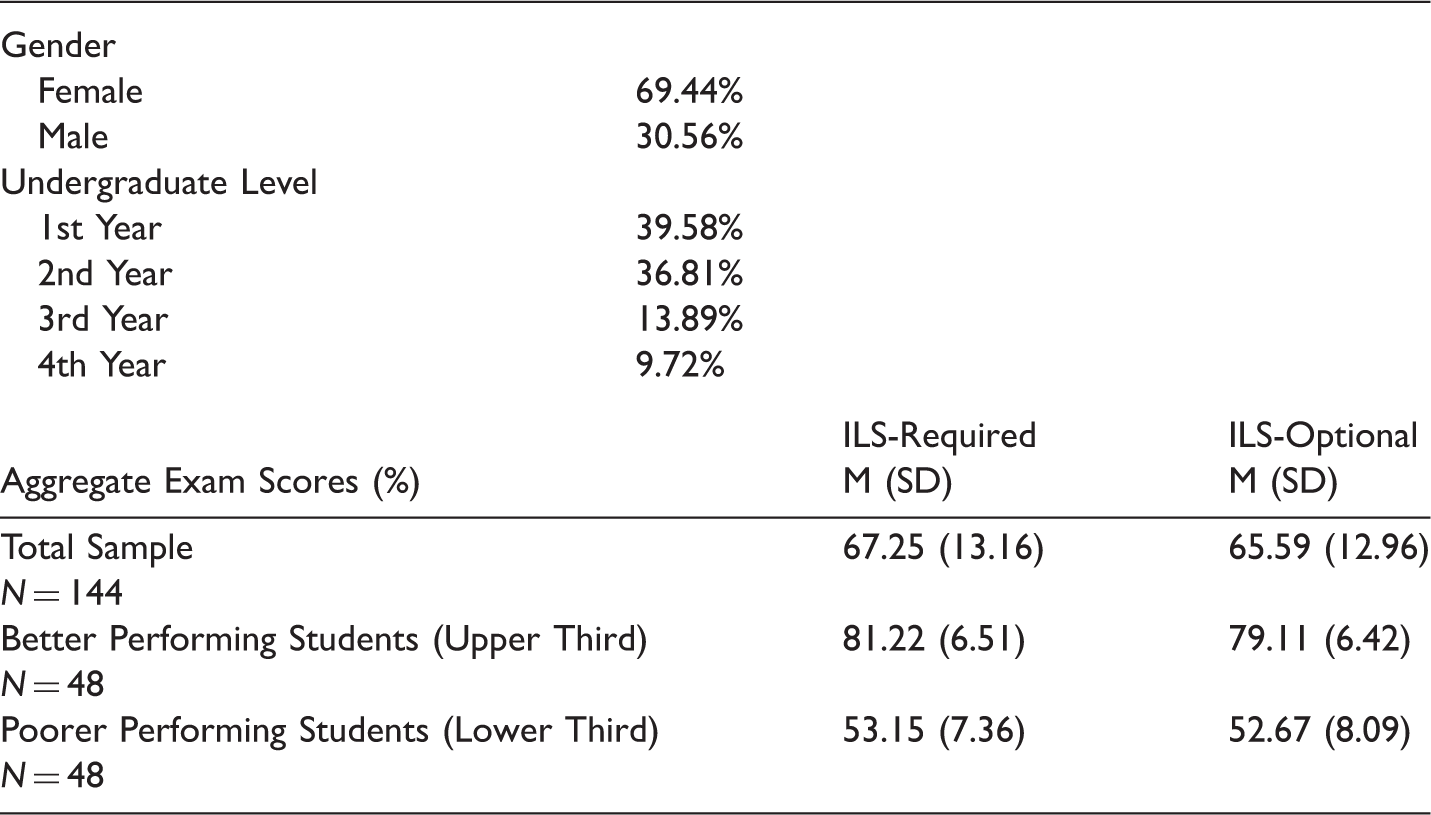

Descriptive statistics for demographics and exam performance are shown in Table 1. Preliminary analysis showed no significant differences in student performance across exams, F(3, 141) = 2.12, Wilks' λ = .96, p = .10. We then compared student performance on exams during alternating phases of the course in which ILS resources were required versus those in which online assignments were optional. Student exam performance was analyzed using a 2 (ILS condition: optional vs. required) × 2 (Class) analysis of variance, with ILS condition as the within-subjects factor and class as the between-subjects factor. Across classes, students performed significantly better on course exams during ILS-required phases than during ILS-optional phases, F(1, 142) = 6.08, Wilks' λ = .96, p < .05, d = .13. A graphical display of the results is shown in Figure 1. We also found a significant effect for Class, F(1, 142) = 10.10, p < .01, η² = .07, but no significant Class by ILS interaction, F(1, 142) = .66, Wilks' λ = 1.00, p = .42, indicating that effects of ILS condition did not vary significantly across classes.

Figure 1. Aggregate Exam Performance by ILS Condition across Classes.

Table 1. Descriptive Statistics for Demographics and Exam Performance (N = 144).

Exploratory Analyses

We also conducted an exploratory analysis examining ILS effects for students in the upper and lower thirds of the grade distribution based on overall exam performance. Descriptive information is displayed in Table 1. Analysis of exam performance of students in the upper third of the class showed a significant effect for ILS condition, t(47) = 2.38, p < .05, with a modest effect size of d = .33. By contrast, ILS condition failed to show a significant effect (p = .69) for students in the lower third of the class distribution. This suggests that learning benefits of requiring online quizzing and concept mastery resources in an accompanying ILS may be limited to better performing students.
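A within-student comparison of this kind can be sketched as a paired t-test on each student's mean exam score under the two conditions. The scores below are made-up toy values, not study data, and the effect size computed here (mean difference divided by the standard deviation of the differences, often written d_z) is only one common convention; the report does not state which formula was used for d.

```python
import math
import statistics


def paired_comparison(required_scores, optional_scores):
    """Paired t statistic and d_z for ILS-required versus ILS-optional scores."""
    if len(required_scores) != len(optional_scores):
        raise ValueError("each student needs one score per condition")
    diffs = [r - o for r, o in zip(required_scores, optional_scores)]
    n = len(diffs)
    if n < 2:
        raise ValueError("at least two students are needed")
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD of the difference scores
    if sd_diff == 0:
        raise ValueError("difference scores have zero variance")
    return {
        "df": n - 1,
        "mean_diff": mean_diff,
        "t": mean_diff / (sd_diff / math.sqrt(n)),
        "d_z": mean_diff / sd_diff,  # assumption: one convention among several
    }


# Hypothetical toy scores for five students (NOT data from the study).
toy_required = [80, 75, 90, 60, 85]
toy_optional = [78, 70, 91, 58, 80]
result = paired_comparison(toy_required, toy_optional)
print(result)

# One third of the 144 students is 48, which gives the 47 degrees of
# freedom reported for the upper-third analysis.
upper_third_n = 144 // 3
print(upper_third_n - 1)
```

Pairing each student's scores removes stable between-student differences from the error term, which is the rationale for having students serve as their own controls.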

Utilization of Online Quizzing

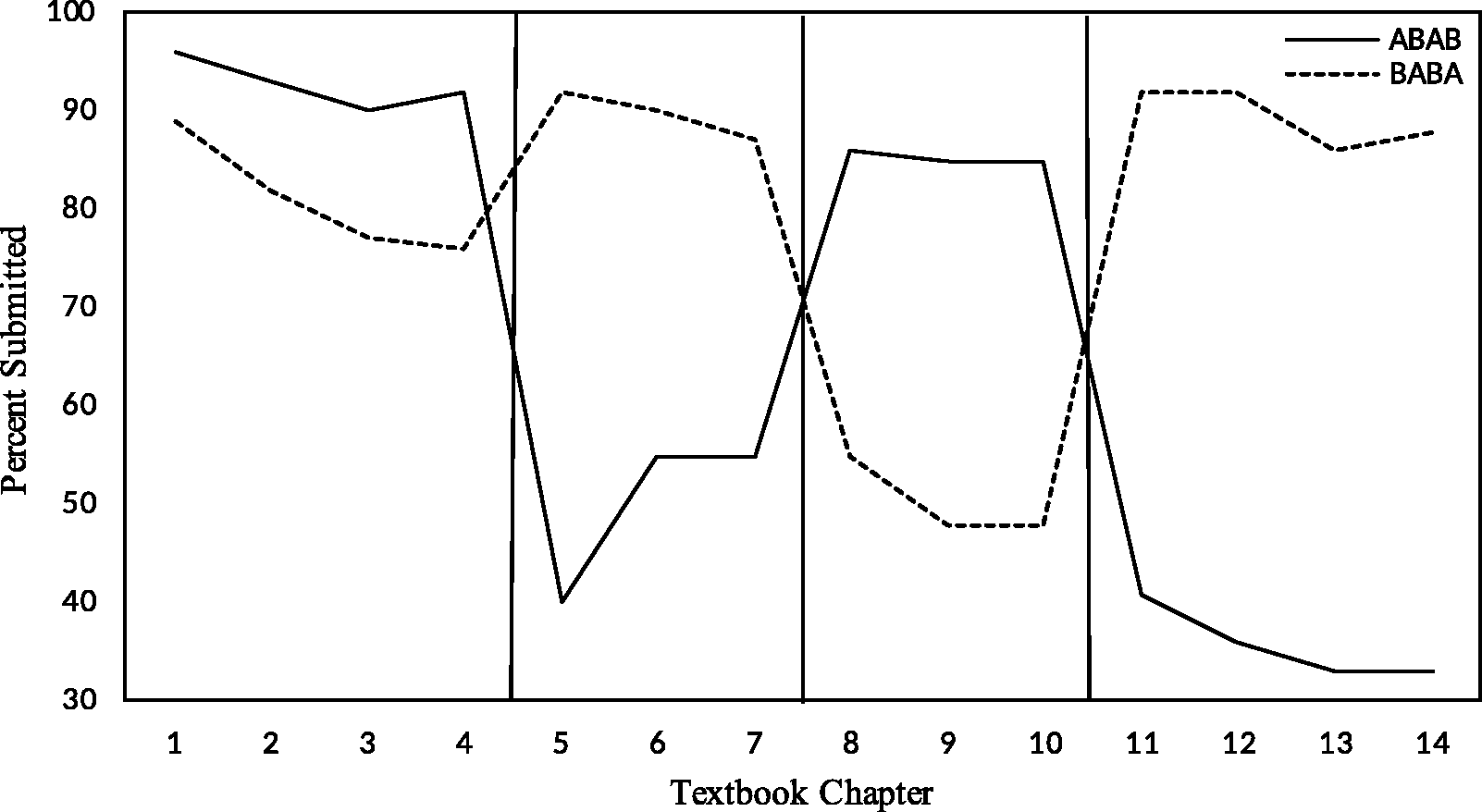

Both classes demonstrated relatively high rates of utilization of ILS resources, even during intervals when these resources were optional and not factored into student grades. Figure 2 displays the percentages of students who submitted online chapter quizzes throughout the semester. Vertical lines divide the semester into four assessment periods representing the chapters included on each of the four in-class exams. Utilization followed the expected pattern of higher rates of submission of online quizzes during phases (“A”) in which ILS assignments were required than when they were optional (“B”). Overall, an average of 90% of students submitted online chapter quizzes during phases when they were required, as compared to 55% during phases when they were optional. As shown in Figure 2, submission of chapter quizzes during ILS-optional phases declined for both classes from the first to second optional (“B”) phase. Moreover, 97% of students, on average, submitted required video quizzes during ILS-optional phases. On average, students earned 89% of points allocated for assigned learning activities during ILS-required phases (M = 18.68 points, SD = 4.41) and 81% for ILS-optional phases (M = 17.09 points, SD = 5.61).
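The reported percentages of points earned are consistent with a maximum of 21 homework points per condition. That maximum is an inference made here (7 of the 14 chapters per condition at 3 points per chapter) and is not stated explicitly in the report; the helper name `percent_earned` is likewise illustrative.

```python
POINTS_PER_CHAPTER = 3.0
# Assumption: the 14 chapters split evenly, 7 under each condition.
CHAPTERS_PER_CONDITION = 7
MAX_POINTS_PER_CONDITION = POINTS_PER_CHAPTER * CHAPTERS_PER_CONDITION


def percent_earned(mean_points, max_points=MAX_POINTS_PER_CONDITION):
    """Mean points earned expressed as a percentage of the points available."""
    return 100.0 * mean_points / max_points


required_pct = percent_earned(18.68)  # mean reported for ILS-required phases
optional_pct = percent_earned(17.09)  # mean reported for ILS-optional phases
print(round(required_pct), round(optional_pct))
```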

Figure 2. Submission of Chapter Quizzes by ILS Condition.

Survey Results

The survey of student opinion administered at the end of the last exam revealed a positive relationship between perceived helpfulness of ILS resources and exam performance, r(137) = .26, p < .01. Students who rated ILS resources as more helpful tended to do better on exams, perhaps because they used these resources more reliably.

Discussion

We implemented online quizzing and concept mastery exercises embedded within an accompanying ILS in two introductory psychology classes. In our evaluation, we compared student exam performance during alternating phases of the course in which these ILS activities were either required or optional. Our analysis of student performance on in-class exams suggests a potential learning benefit of requiring these ILS components, but with several important caveats. First, the overall effect size was small, amounting to a difference of only about two points on exams, on average, between ILS-required and ILS-optional phases. To strengthen the learning benefits of an ILS, instructors may need to assist students in using these learning resources more effectively, rather than just assigning them. Second, we recognize that the learning benefits may be limited to classes in which substantial course credit is allocated to student performance on the graded ILS activities. Third, we did not evaluate whether the MindTap platform produced better outcomes than other ILS platforms or online quizzing programs incorporated in course management programs. Fourth, requiring additional work during ILS-optional phases may have taken time away from using optional ILS resources more effectively.

Further efforts are needed to determine how students use ILS resources in practice and the underlying mechanisms explaining ILS learning benefits. We were not able to evaluate whether students relied on memory retrieval in completing online quizzes after reading the text (as recommended) or scanned quiz items first and then looked up relevant information in the textbook before submitting their answers. Students may also have relied on guessing as an initial strategy in responding to questions on online quizzes, as they had several attempts to supply the correct answer to each question. Limiting quiz responses to a single attempt may discourage guessing but would not prevent students from using the ILS to perform a type of look-up activity to find answers to quiz items in the text, not unlike using search engines like Google to find relevant information online. However, it is arguable that in this modern information age, the ability to find relevant information from a data resource is an important skill in its own right.

Learning activities in a typical ILS tend to focus on lower-level educational objectives involving acquisition of basic knowledge (concepts and definitions) and applications of knowledge (connecting concepts to examples), rather than more advanced levels of knowledge that require higher-level skills such as analysis and synthesis. Perhaps the next generation of ILS platforms will incorporate more assignments that target higher-level objectives, such as explaining underlying mechanisms and processes, solving problem sets, and analyzing behavioral interactions using learning principles. More efforts are needed to create and score assignments addressing these higher-level educational objectives and to provide feedback to students to help them develop these competencies.

The findings of our exploratory analysis suggest a learning benefit of requiring ILS quizzing and concept-building exercises for students in the upper third of the class, but not for those in the lower third. A desirable direction for future research would be to investigate innovative means of assisting lower-performing students in using online resources more effectively, perhaps by helping them improve metacognitive skills or providing access to tutors or coaches to assist them in developing more effective study skills. We should also note that exam performance overall was lower than would be preferred for both ILS-required and ILS-optional phases of the course. Examination of factors related to underperformance on course examinations among academically challenged students is an important goal going forward.

With these caveats in mind, the present evaluation provides limited support for the educational value of incorporating ILS assignments, at least when these assignments are required by the instructor and sufficient credit is attached to successful completion to motivate consistent use of these resources. We observed that when ILS materials were not required, usage predictably fell off, even with instructor encouragement to use these resources in preparing for exams. Still, during ILS-optional phases, 55% of students completed online chapter quizzes, indicating that many students continued to use these online resources even when they were not required to do so. We recognize that high rates of usage during optional phases may have attenuated the ability to demonstrate stronger learning benefits of requiring ILS resources. On the other hand, it also suggests that students may have recognized the learning benefits of the ILS and continued to use these online learning resources even when they were not required. Overall, more consistent use of these resources may well hinge on making them a course requirement and incentivizing their use accordingly.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Jeffrey S. Nevid is the author of an introductory psychology textbook published by Cengage Learning, which also publishes MindTap.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.