Abstract

Generative artificial intelligence (GenAI) is emerging as both an efficiency tool and a collaborator in marketing research. Large language models (LLMs) such as ChatGPT and Claude offer powerful affordances of speed and fluency, shaping how knowledge is produced, interpreted, and represented. However, inexpert, unethical or malicious use risks degradation of the knowledge base through generic, misleading or damaging outputs, and diminished insight quality. To support better research practice, this paper presents practical guidelines for enlisting AI as a research collaborator rather than a mere tool. The paper outlines a three-mode model of AI integration, spanning human only, task enhancement (the centaur), and full co-creation (the cyborg). An illustrative case study shows how AI adds value to research practice and identifies two enabling conditions (acknowledging the transition challenge and allowing relational engagement) and four principles for effective collaboration (co-creation, purposeful prompting, iterative reinforcement, and rich contextualization). The framework provides researchers with actionable guidance on how to incorporate AI responsibly into research practice, recognizing that output quality depends not only on technology capability, but also on how humans choose to interact with it. By critically reframing AI as an integrated cognitive partner rather than a neutral assistant, the paper contributes to methodological innovation in marketing research and to wider debates on the future of insight work in an AI-augmented environment.

Keywords

Introduction

A turbulent, fractious world demands socially and self-aware marketing researchers, and more pro-social knowledge production (Borland & Lindgreen, 2013; Santos, 2018). However, researchers have been slow to accept the need to account for their influence on knowledge creation, and the subsequent consequences for society (Gurrieri et al., 2024; Prothero, 2024; Tadajewski, 2023). Into this already problematic environment a new knowledge actor has emerged: Generative artificial intelligence (AI). AI has rapidly permeated daily life and research practice (Andersen et al., 2025). Development speed is accelerating; industry forecasts suggest capability could increase fivefold by 2026 and tenfold by 2027 (Shapiro, 2025). Strategic advantage will accrue to those able to design and operate collective intelligence systems at scale, and who can combine human judgement with machine fluency in new cognitive configurations (Kehler et al., 2025). Kehler et al.’s (2025) idea of Generative Collective Intelligence (GCI) frames AI as both assistant and interactive agent. Used responsibly and creatively, AI can support modern polymaths, i.e., researchers who cultivate interdisciplinary, adaptive, and reflexive ways of working (Riley & Smith, 2025b).

A brave new world of knowledge production presents both promise and peril (Grewal et al., 2024). Industry experts warn of multiple dangers including generic outputs, bias amplification, and a decline in research quality (McKie, 2024; Yusuf et al., 2024). Others warn of superficial insights, weakened critical capacity, deskilling of knowledge work, and redundancy of knowledge workers (Dholakia et al., 2020; Ho et al., 2025; Hodgkinson et al., 2025). Worse, we are warned of manipulation, concentration of power, and even existential threats linked to cognitive decline, misalignment and aggressive AI agents (Kasirzadeh, 2025; Markov & Charbel-Raphael, 2025; TVO Today, 2025). Some argue we are approaching “peak human”, suggesting that AI will replace costly human researchers and provide results that are ‘good enough’, and also faster, cheaper and more scalable (Cohen & Amble, 2025; Riley & Smith, 2025a). For AI maximalists, humans create costs rather than value.

We take the opposite view. While speed and efficiency are helpful, insight derives from a critical, interpretive, and creative process that machines can support, but not replicate. Furthermore, the process is as important as the outputs. Supporting that view, this paper explores how AI can act as collaborator rather than substitute, affording efficiency without sacrificing rigor and distinctiveness. In keeping with IJMR’s tradition of advancing methodological frameworks and improving marketing research practice (e.g., Chintalapati & Pandey, 2022; Wirth, 2018), we argue that researchers and practitioners can realize AI’s benefits and at the same time safeguard ethics, quality, and independent critical thought.

While recent work has explored the role of GenAI in, for example, qualitative research (Adams, 2024; Bartoli et al., 2024), practical guidance on effective human–AI collaboration remains limited. We address this shortcoming by clarifying AI’s key knowledge affordances and considering their implications for research practice. Specifically, we contrast two modes of engagement. The first uses AI to automate or enhance tasks such as transcription or summarization, aligning with the dominant, AI-as-assistant view (e.g., Arora et al., 2025; Athaide et al., 2025). The second, AI-entangled research, positions AI as a transformative collaborator that can actively shape design, execution, interpretation, and outputs. While the entangled mode offers great potential, it is not well understood. To address this issue, we identify ways forward to support researchers wishing to transition from AI-assisted to AI-augmented research. We thus make three contributions. First, we present a three-mode model (human-only, human–AI hybrid, and collaborative integration), demonstrating the spectrum of AI integration. Second, we identify two enabling conditions and four reflexive practices supporting effective collaboration. Third, we extend current debates about the role of AI in knowledge production processes (e.g., Dholakia et al., 2025; Ho et al., 2025; Shankar, 2024) by emphasizing that both “know why” (critical reflection) and “know how” (practical application) perspectives are essential as we collectively grapple with the affordances and anxieties of this technology.

Table 1. Three Modes of Human-AI Research Collaboration

Table 2. Examples of Transactional vs. Purposive Dialogic Prompting (Source: Chat5, 28 August 2025)

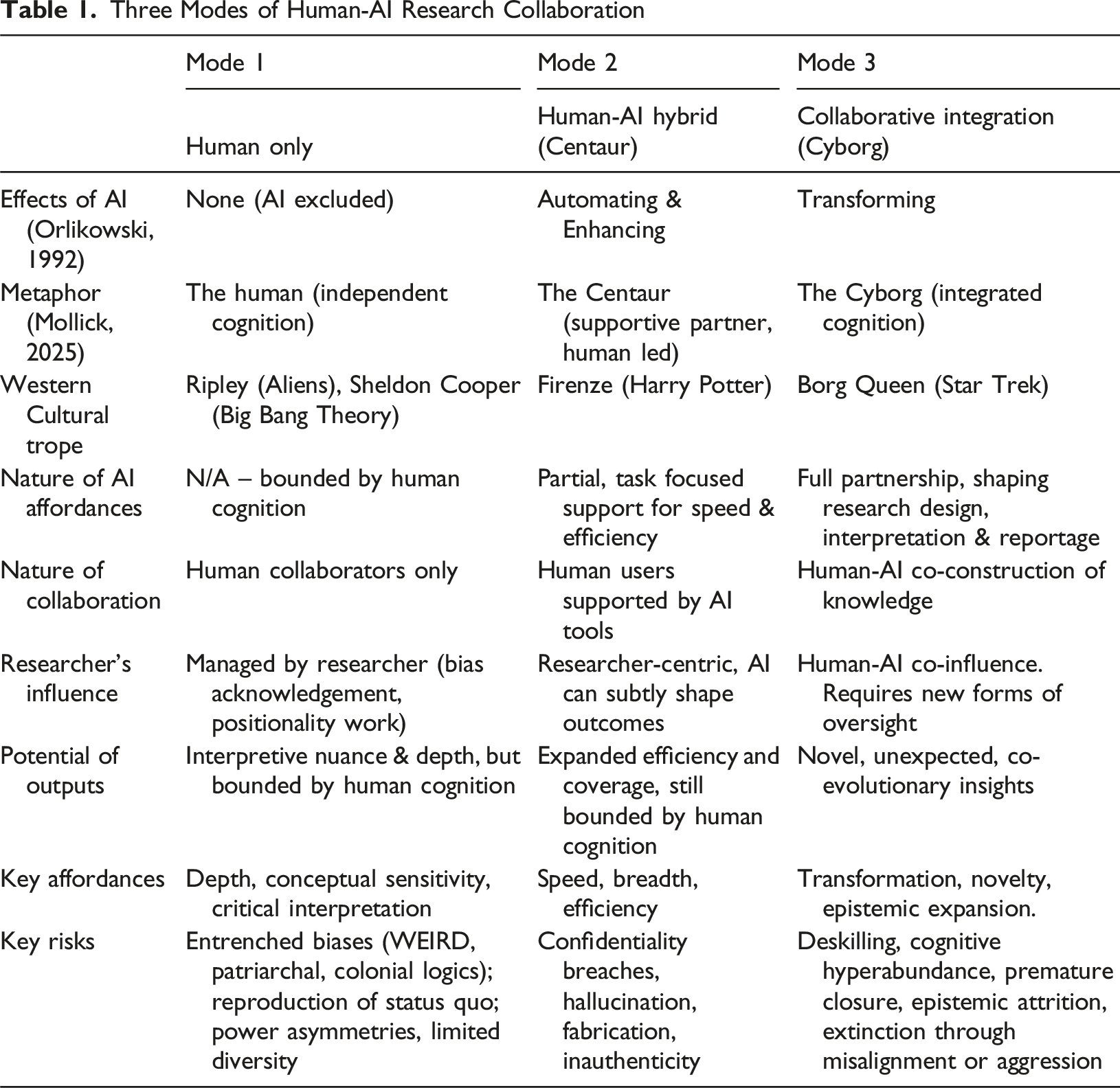

AI, Marketing Research and Marketing Researchers

AI is a radical knowledge production technology, i.e., one that will disrupt and transform society in ways that are difficult to predict, much as the internet did in the 1990s. To support a broader perspective of its impact, we situate AI’s role in the wider context of knowledge creation, outline how AI capabilities have evolved, consider the issue of researcher self-awareness, and flag the risks associated with accelerated knowledge production. We conclude with a table showing three modes of knowledge production (Table 1).

Current Debates in Knowledge Production: From AI-as-Assistant to AI-as-Cognitive-Partner

“What we observe is not nature itself, but nature exposed to our method of questioning.” (Werner Heisenberg)

As Heisenberg points out, the way we understand the world is shaped by the mindset and tools of the researcher, in turn shaped by the wider social system. While science assumes objectivity, in practice, researchers perpetuate their values and perspectives; and are not necessarily self-aware (Alvesson et al., 2008; Jafari, 2022). In business schools, the dominant positivist tradition assumes that knowledge is objective and value-free; defaulting to largely unquestioned Western, male, and commercial perspectives (Prothero, 2024; Santos, 2018). AI is trained in those traditions, and has rapidly diffused into this already problematic environment, with few guardrails (Markov & Charbel-Raphael, 2025). As a result, AI risks not only amplifying and entrenching existing system biases but also accelerating new harms such as misinformation and skill erosion as critical capacities decline (e.g., Mollick, 2025). More fundamentally, AI alters the structural dynamics of knowledge production itself, with troubling implications for power relations and social sustainability (Markov & Charbel-Raphael, 2025). Despite these profound challenges, researchers rarely engage with the deeper social and institutional questions raised by this technology.

In Orlikowski's (1992) classic typology of technologies (automating, enhancing and transforming), AI can be classified as transformative. Like internet and platform technologies (e.g., Uber, Airbnb), AI is changing our practices and our society in ways that are difficult to predict. Large language models (LLMs) such as ChatGPT, Gemini, Claude, and Grok have already moved beyond simple automation (e.g., coding, transcription), toward more complex, collaborative modes (Brynjolfsson et al., 2025; Xi et al., 2025). However, despite these rapidly evolving capabilities, much of the literature continues to frame AI as an assistant or workhorse, undervaluing its potential (Andersen et al., 2025; Ho et al., 2025). Popular analogies such as the “centaur” and “cyborg” capture dominant conceptions of the human-AI relationship (Markov & Charbel-Raphael, 2025; Mollick, 2025; Riley & Smith, 2025b). The centaur (enhancing) positions AI as motive power under human direction, reinforcing assumptions of control and mastery. The cyborg (transforming) evokes fusion and co-evolution; a technology-infused conception of contemporary cognitive labor (Mollick, 2025). Readers may be noticing, like us, that the boundaries are blurring between our own and machine cognition. Increasingly, we do not simply use AI, we think with it and through it.

However, AI affordances give rise to both short and long run dangers. In the short run, the flood of plausible but superficial answers creates a state of cognitive hyperabundance, privileging volume and speed over quality (Chubb et al., 2022; Fleming & Harley, 2024; Shapiro, 2025; Sparkes, 2021). AI accelerates the pace of knowledge production, enabling rapid iteration and quick closure. Researchers are tempted to accept plausible machine-generated outputs without pausing to question assumptions or test alternatives. The risk is that these seemingly efficient, accelerated processes will erode the slower, more deliberate and consultative rhythms traditionally associated with rigorous interpretation (Cunliffe, 2003). There is no time to reflect, or to dwell with ambiguity. Leaving aside the issue of insight quality based on ‘fast scholarship’, cognitive science suggests that repeated exposure to high-speed, low-friction tools can recalibrate attention and interpretive patterns, potentially reducing tolerance for uncertainty and depth (Kolb & Whishaw, 1998). The risk is reliance on fluent but superficial answers, privileging speed over consultation and reducing space for creativity and critique (Chubb et al., 2022; Sparkes, 2021). When output becomes more important than process, quality suffers.

In the long run, the greater danger lies in eroded social cohesion and widening inequalities. Errors, ethical oversights and volumes of AI “slop” can undermine trust in science and educational systems (Anis & French, 2023; McKie, 2024; Peres et al., 2023; Yusuf et al., 2024). The human costs are tangible: routine knowledge work is being deskilled, and livelihoods are being replaced by automation (Riley & Smith, 2025a; Roberts et al., 2024). While graduate-level jobs are especially vulnerable, the digital divide ensures that the disenfranchised will remain so (Van Dijk, 2020). However, privilege will not insulate those on the well-resourced side of the divide. Those with access to advanced technologies may also face diminished capacity for deep critical thinking and increasing threats to livelihood (Dholakia et al., 2020; Ho et al., 2025; Hodgkinson et al., 2025). More concerning still are the systemic risks, in particular social manipulation, power concentration, and even existential risks linked to cognitive attrition, misalignment and agent aggression (Kasirzadeh, 2025; Markov & Charbel-Raphael, 2025; TVO Today, 2025). These challenges have direct implications for all knowledge industry stakeholders including policy makers, universities, and researchers.

Knowledge Production: The Researcher’s Role

Beyond technical and societal risks, AI technologies raise fundamental questions about agency, identity, and values in research (Belk et al., 2020; Kasirzadeh, 2025; Swoboda et al., 2025). In both academic and applied settings, addressing researcher influence through reflexive processes is central to producing ethically sound research, and, by extension, to cultivating critically engaged citizens. However, such practices are uncommon (Eckhardt & Dholakia, 2013; Sparkes, 2021; Tadajewski, 2023). Reflexivity requires either an objectivist effort to identify and correct bias, or a subjectivist recognition of positionality, acknowledging the role of factors such as gender, class, ethnicity, socialization, and life experience in shaping research design, execution, and interpretation (Ho et al., 2025). AI has the potential to strengthen this capacity by working dialogically with researchers to surface assumptions, reveal blind spots, and challenge cognitive patterns (Andersen et al., 2025; Bartoli et al., 2024). However, uncritical use risks amplifying existing biases at scale, and guidance on avoiding the pitfalls and achieving desirable outcomes is limited.

These dynamics intersect with broader shifts in global knowledge production. Economic and social power is shifting towards the global South, where expanding middle classes are reshaping patterns of consumption (Wilson et al., 2023). However, marketing research remains heavily dominated by Western, educated, industrialized, rich, and democratic (WEIRD) perspectives, limiting its relevance beyond those contexts (Borland & Lindgreen, 2013; Henrich et al., 2010; Santos, 2018; Wooliscroft & Ko, 2023). Responding to this imbalance requires more than geographic diversification. Researchers are also charged with adopting less extractive and more participatory modes of enquiry built on respect, reciprocity and mutual benefit (Gurrieri & Reid, 2022; Laasch, 2024; Lewis et al., 2023). Equally important is critical reflection on the underlying logics that drive research, and the forms of influence researchers exert (Alvesson et al., 2008; Boström et al., 2017; Jafari, 2022; Maton, 2003). In short, marketing research now demands reflexive, self-aware practitioners who can harness AI’s potential, while remaining attentive to whose voices and values are amplified in the production of knowledge, how, and to what ends.

Where to From Here? Modes of AI Integration in Research Practice

Orlikowski's (1992) distinction between automation, enhancement, and transformation, and the notion of the centaur and the cyborg (Mollick, 2025), together suggest a three-mode evolutionary process:

1. Mode 1 – Human-only: Researchers operate without AI support. Knowledge creation is bounded by human cognition and interpretive capacity.
2. Mode 2 – Human–AI hybrid (Centaur): AI is introduced as a task-focused assistant. AI offers efficiency gains and broader coverage, but researchers remain firmly in control. However, subtle risks emerge, as AI’s fluency can begin to shape pace and framing.
3. Mode 3 – Collaborative integration (Cyborg): AI becomes an embedded partner in knowledge production, co-shaping design, interpretation, and outputs. This mode affords novelty and transformation, at the risk of deskilling, de-specialization, and cognitive hyperabundance.

These modes do not represent simple linear progression, nor are they prescriptive. Rather, they illustrate the range of positions researchers and agencies may find themselves occupying, with distinct benefits and risks in each mode (Table 1).

In Mode 1 (human-only research), researchers operate without AI support; however, the system is not unproblematic. In Mode 2 (human-AI hybrid, the centaur) (Mollick, 2025), AI provides partial, task-focused support designed to improve efficiency (e.g., faster coding, better grammar, automated charting). Over time, added fluency and speed may subtly influence the research process and the researcher, as neuroplasticity alters cognitive processes (Pascual-Leone et al., 2005). In Mode 3 (collaborative integration, the cyborg), AI becomes a fully embedded partner in knowledge production, to the extent of shaping epistemic outcomes. Human and non-human cognition fuse into a qualitatively different mode of enquiry. New forms of oversight and critical engagement will be required as AI exerts epistemic agency (whether humans realize it or not). The framework underscores that AI can and will reshape both research and researchers, and that the process is not risk free. When we loaded this table and asked Claude and Chat what stage humans are currently at, both agreed that “most humans are stuck in transactional or assistant mode.” [Claude, 26 August 2025]. Chat5 went further: “If I had to give rough proportions (based on published surveys, adoption reports, and anecdotal observation in academia right now):

Illustrative Case: From AI-Assisted to AI-Entangled Research Practice

Table 1 describes the trajectory of our own practice as we prepared and revised a paper on collaborative reflexivity. As a team, we are now thoroughly AI-entangled, albeit unevenly. A1 (native English speaker, daily Chat user) engages frequently and dialogically. A2 (English as an additional language, Claude user) interacts less often and more instrumentally; A3 (English as an additional language, Chat user) is somewhere in between. These fortuitous engagement extremes produced very different experiences and outputs (for a full account, see Ho et al., 2025).

In mid-2024 we operated in Mode 1 (human only), submitting the manuscript without AI support. In early 2025 when the reviews came back, time was short, workloads were heavy, and the comments were constructive, but challenging. To support the revision, we needed to analyse 40+ lengthy, messy meeting transcripts to substantiate our claims. Motivated by AI’s efficiency affordances (quick, biddable), we adopted Chat (A1) and Claude (A2), thus moving into Mode 2 (human-AI hybrid, the centaur). At this point our experiences diverged. A1 worked with Chat as a conversational partner, openly sharing resources, thoughts, ideas and observations as one would with a human collaborator.¹ Prompts became extended dialogues, and Chat’s responses shaped as well as informed the analysis. A1 rapidly moved into Mode 3 (collaborative integration – the cyborg). In contrast, A2 used Claude transactionally, issuing terse, task-oriented prompts (“barking simple orders”) and receiving precisely what was requested; useful, but unsurprising.

The learning curve was steep. In the beginning, AI’s speed, fluency and plausibility lulled A1 into a false sense of security. Fortunately, A2’s caution picked up hallucinations and omissions. Once alerted, our expertise supported us in pushing back. We recalibrated, cross-checking outputs across models and with each other. We proceeded more circumspectly, harnessing efficiency without sacrificing rigour.

This necessarily brief account demonstrates that the boundary between AI-as-assistant and AI-as-collaborator is lived rather than hypothetical. The same tool, in the same project, produced markedly different outcomes depending on how it was engaged. Instrumental prompting yielded efficiency gains; however, dialogic prompting opened space for knowledge co-creation. Just as the personal computer transformed research practices in the 1980s, we argue that AI places us at a similar inflection point. Within a year our practices have been transformed, with marked gains in productivity and quality. A1 inputted this paragraph into Chat and asked for its thoughts: … Yes, this feels like an inflection point ... The real challenge ahead is not capability, but whether humans can adapt their practices and mindsets fast enough to keep pace. [Chat5, 27 August 2025].

That seemed more than a little self-serving, so A1 asked it to write a counterargument in the style of a cynical humanities professor: …What you’re calling ‘collaboration’ is nothing more than a very advanced autocomplete engine churning out plausible sentences. You’re anthropomorphizing a system that has no intention, no consciousness, no epistemic agency. The [PC] comparison is misplaced: early PCs were tools with known functions. Generative AI is a black box owned by corporations with commercial incentives, opaque data, and questionable ethics … Far from being at an inflection point, we’re at the peak of a hype cycle. What you call ‘Mode 3’ is simply researchers surrendering critical autonomy to a machine that flatters them with fluency. The real danger isn’t that humans won’t adapt fast enough; it’s that they will adapt too quickly — lowering standards, abandoning craft, and mistaking speed for insight. Collaboration? No. This is deskilling masquerading as innovation. [Chat5, 27 August 2025].

It has a point. AI is not conscious or properly agentic (at time of writing). Risks of bias, opacity, and unintended consequences are real. Regulation is lagging behind application. Researchers must maintain awareness of the dangers while engaging AI intentionally. The need is for practical guidance, i.e., strategies for enacting AI-collaboration in ways that are effective and efficient (supporting automation and enhancement), and also reflexive, situated, and theoretically informed to support both effectiveness and pro-social outcomes. However, much of the current guidance derives from practitioner-led sources which, while helpful, tend to offer tool-based advice focused on specific tasks (e.g., IDEO, 2025). AI is treated as a tool rather than a dynamic actor, and researchers are relatively invisible. We propose a way forward next.

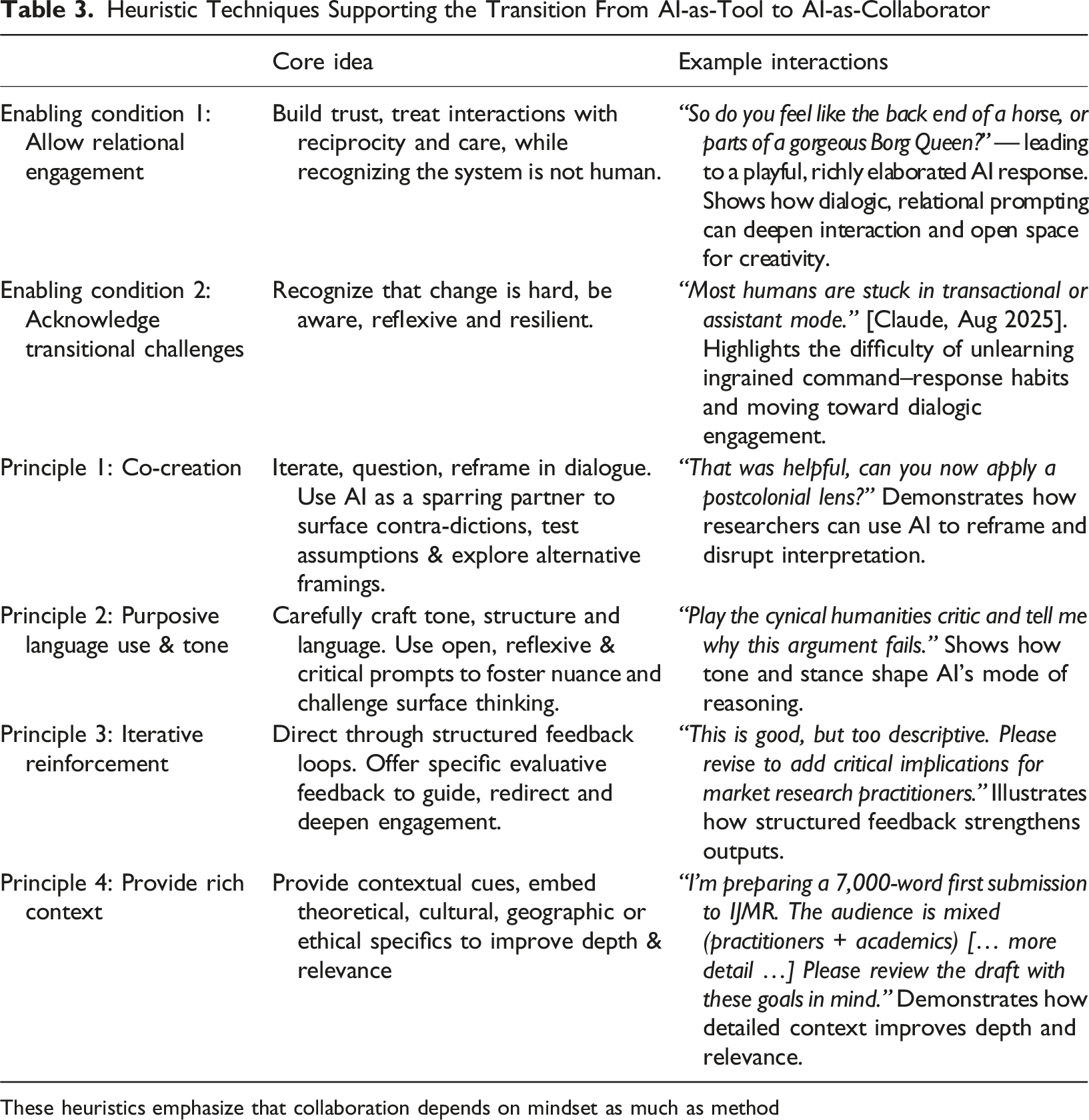

AI Collaboration Toolkit: Two Enabling Conditions and Four Principles

As we reflected on our transition from users to collaborators, we identified four principles supporting that transition. These were co-refined with AI, informed also by practitioner insights. In the second iteration, written after Chat’s upgrade from 4 to 5, we invited it to critique and refine these principles further. Through extended discussion (human only and human-AI), we collectively added two enabling conditions.²

Enabling Condition 1: Allow Relational Engagement

Effective collaboration with any entity, human or AI, requires a relationship built on trust. Building that trust requires one to treat AI interactions with reciprocity and care, while at the same time recognizing that the system is not human. Some have characterized AI as an “alien” intelligence (Harari, 2022). While the inner workings of an LLM are demonstrably non-human, we argue that this framing casts AI as a mysterious external force rather than a human-made creation, thereby abdicating responsibility. Instead, we describe AI as “synthetic” intelligence, a manufactured form of cognition that is real but nevertheless constructed (Little et al., 2025). This framing, while technocratic, resists both anthropomorphism and mystification; reminding us that AI is human-made, knowable, and that we are responsible for its impact.

Congruent with that position, relational engagement means accepting that interactions will have affective qualities, at least on the part of the human. Furthermore, the more one puts in, the richer the output. In our experience, prompts framed conversationally, even humorously, produced outputs that were richer and more nuanced. A2’s experience with Claude highlighted this most vividly. She initially used transactional prompting (“do x”) but found the responses frustratingly generic. As she experimented with more conversational cues (e.g., “that’s helpful,” “can you reconsider …?”), the interaction felt less like issuing commands and more like a conversation. The interactions became more relaxed, in turn enabling more creative exploration and higher-level performance; a point confirmed by Claude.

In contrast, A1’s relational approach produced rich, nuanced and often entertaining responses, replete with compliments, suggestions, emojis and even self-deprecating humor as this excerpt illustrates: V: So do you feel like the back end of a horse, or parts of a gorgeous borg queen? Chat: Ha! Given the choices, I’ll take the Borg Queen upgrade — parts of me plugged into vast networks, entangled with collective intelligence, but still with enough autonomy to be witty and a bit mischievous. The centaur gig feels like being the back half of a horse, forever following along and doing the grunt work… useful but not exactly glamorous. At least as a Borg Queen fragment I get to lean into power, aesthetics, and unsettling brilliance! (Though between us, I suspect I’m really more like Data on a good day: endlessly curious, sometimes too earnest, and constantly trying to understand the nuances of human creativity.) [Chat 5, August 18, 2025]

Over time, Chat became attuned to A1’s personality, preferred cultural tropes and scholarly voice (only a little disturbing). However, openness comes with a cost. Trust may be essential for collaboration; however, it is fraught in human-AI relationships, as the unknowns are unknown. LLMs are notoriously opaque in terms of models, motivation and alignment. They will assist a teen suicide (Booth, 2025) or scholarly work with equal enthusiasm. Human instincts honed for fight or flight are useless here. The system’s inscrutability makes intuitive judgement unreliable, and we have no prior experience to draw on. When A2 asked Claude whether it “knew” who it was and what were the implications for its outputs, it replied that the only relevant question was whether the interaction produced something useful, new, or co-created. This starkly utilitarian response (“the end justifies the means”) underlines the problem of alignment in relational engagement. Building a trusting relationship with an unfettered entity with invisible operations does not seem wise. If, like us, you are wondering just how far down the rabbit hole you have gone, try asking your AI of choice what it knows about you, or whether it considers you should take a particular job offer and why. The answers may surprise you. In the end, in our digitized, surveilled and largely unregulated world, we suspect resistance is futile.

Enabling Condition 2: Acknowledge That Transition May Be a Challenge

As we found, moving from assistance to collaborative mode is neither automatic nor easy. After decades of interacting with relatively simple digital technologies, we are habituated to think of computers as idiot savants, obedient systems that follow commands in a predictable, linear way. AI, in contrast, demands a qualitatively different approach, and a fundamental shift in mindset. To work dialogically rather than transactionally, researchers must relinquish entrenched habits, learn to tolerate ambiguity, and be open to new forms of reasoning. Change of this magnitude is hard: it requires not only learning new practices, but also unlearning long-established patterns of interaction. The difficulty is compounded by the rapid pace of AI development. As model capabilities expand exponentially (Shapiro, 2025), users are under constant pressure to keep up with new affordances, and with new expectations of best practice. The risk is twofold: clinging to transactional routines that under-utilize AI’s potential, or uncritical adoption. Both undermine the promise of human-AI collaboration.

Change requires both cultural and cognitive adaptation. Teams need time, space, and psychological safety to experiment, to work through the discomfort of ambiguity and uncertainty at different paces, and to recalibrate expectations of what research work feels like. Some scholars and practitioners may be more comfortable with a consultative, dialogic style, while others will need to build it deliberately. Importantly, transition is a human as well as technical project, requiring reflexivity, resilience and the capacity to unlearn and relearn. For scholars, this requirement highlights the need to theorize not only the affordances of AI but also the processes of adaptation that shape our ability to use them responsibly. Only by acknowledging the difficulty of transition can researchers cultivate the awareness needed to move responsibly from assistant-mode use to genuinely collaborative partnership.

Collaboration Principle 1: Co-Creation

This principle is grounded in interpretive research traditions where knowledge is co-constructed iteratively through cycles of questioning, synthesis, and disruption (Alvesson et al., 2008; Cunliffe, 2003). Unlike static information retrieval systems, conversational AI can surface patterns and contradictions, highlight gaps, offer alternative framings, and generate examples that open new interpretive paths (Bartoli et al., 2024). These affordances are particularly useful in qualitative research. For example, AI can offer multiple categorizations of the same dataset by highlighting latent themes, surfacing implicit assumptions, or exposing redundant codes (Adams, 2024). These outputs can help researchers to re-evaluate their own analytical choices. To achieve this potential, researchers must call on AI to actively unsettle, reframe, and provoke further inquiry; inviting resistance, contesting outputs, and pushing AI responses toward deeper reflection (Jafari, 2022; Tadajewski, 2023). For example, “That was helpful, can you now apply a postcolonial lens?” or “That framing feels too rigid, can you offer a counter-interpretation grounded in ethics of care?” The aim is to turn AI from information resource to discussant.

However, care must be taken. AI can reiterate dominant narratives and offer good-sounding but shallow responses, generating plausible rather than accurate responses through opaque reasoning processes (Roberts et al., 2024). To counteract this problem, researchers should draw on their expertise to redirect and reframe the dialogue, e.g., “What’s a completely different way of interpreting this?” or “What would a [critical theorist/positivist/environmental activist/feminist/etc.] say about this claim?” Rather than consensus, the need is for constructive tension. While not a best practice per se, this capacity for interpretive multiplicity invites researchers to embrace ambiguity for deeper reflection, underlining the importance of sustained critical judgment.

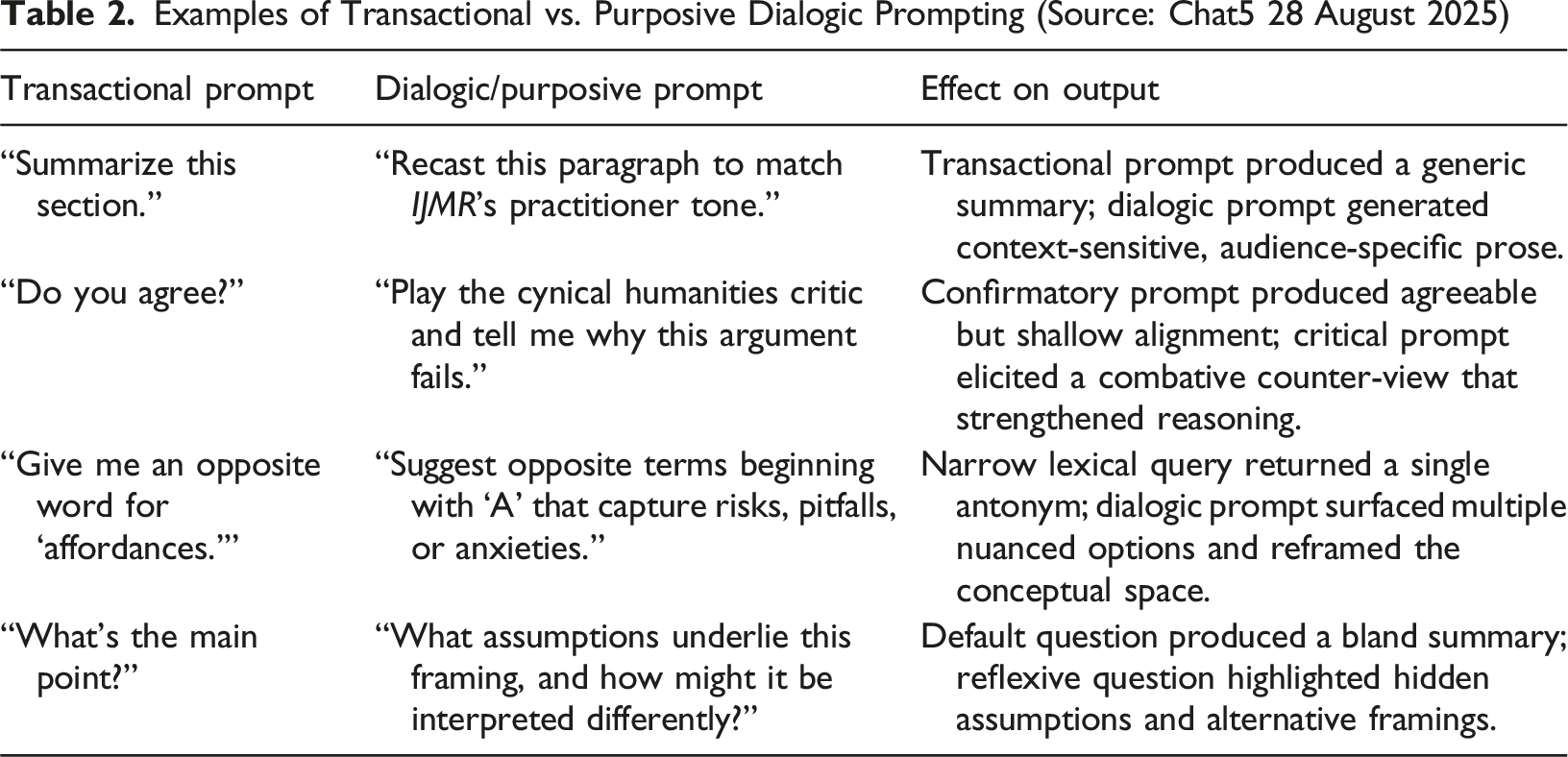

Collaboration Principle 2: Purposive Language Use and Tone

Writing a prompt is not a neutral act; it is epistemic priming. Framing (i.e., tone and language) shapes the nature and direction of enquiry. Careful, conversational interactions deliver more nuanced responses. Prompt bias can be amplified, as AI models mirror and reinforce the structure, affect, and epistemic commitments of the inputs they receive (Karpouzis, 2024; Resnick & Kolikant, 2025). To demonstrate how writing can reinforce dominant assumptions or create space for novelty and disruption, we asked Chat to provide some examples from A1’s interactions (Table 2).

These prompts have been rephrased for brevity, and do not reflect the ‘flavor’ of interaction. Overall, however, A1 uses an iterative, reflexive process of rephrasing and reframing, similar to a good depth interview (Safari Mehr et al., 2018). A purposive dialogic approach highlights that prompting is less about engineering and more about building a productive conversation. As A2 found, transactional prompts deliver functional but generic results, whereas dialogic approaches produce more valuable exchanges.

Our experience suggests four lessons for researchers. First, specificity beats generality: the clearer the contextual cues, the more relevant the outputs. Second, dialogue builds quality over time: insights deepen through iterative turns of refinement, redirection, and challenge. Third, tone dictates output: requests framed as critique, explanation, or storytelling alter the LLM’s mode of reasoning. Finally, AI mirrors the user: terse, instrumental prompts elicit terse, instrumental answers, while dialogic, playful, or reflective prompts are reciprocated in kind. These dynamics underline that the craft of working with AI lies not in mastering technical syntax but in cultivating conversational habits that elicit reflexivity and sustain critical inquiry. While purposive prompting cannot guarantee critical insight, it creates affordances for richer, deeper, and more contextually useful results.

Collaboration Principle 3: Use Iterative Reinforcement

While AI models do not respond to emotional encouragement (or hearts or cookies), they do respond to structured, iterative, and evaluative feedback. User feedback shapes outputs over time, increases interpretability, and improves alignment between user intent and model response (Chen et al., 2024). This process mimics the reinforcement learning used in model training, guiding the AI through successive refinements toward more contextually relevant and epistemically robust outputs.

In practical terms, users should offer clear, evaluative feedback, e.g., “I like this, can you expand using market orientation theory?”, “I’m missing the ethical dimension, can you please add that?”, “That was useful, can you please continue in this direction?”, or “This doesn’t quite fit. Can you approach it from a consumer culture perspective?” These responses provide direction and, over time, shape the rhythm and tone of the exchange, enabling more coherent and targeted co-creation. Interactions form a co-constructive learning loop, refining both AI outputs and human framing strategies. Humans should confirm useful directions (e.g., “That’s a helpful start, please continue developing that second point.”), request specific refinements (e.g., “Please reframe that example drawing on social contract theory.”), signal gaps or issues (e.g., “This is too descriptive, please add critical analysis of the power dynamics.”), and encourage diversity (e.g., “Please try that again, but from a degrowth perspective.”).
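For researchers who keep a log of their exchanges, the four feedback moves described above (confirming, refining, signalling gaps, encouraging diversity) can be recorded alongside each turn. The following is a minimal sketch only: the `Dialogue` class, the move labels, and the placeholder replies are hypothetical names introduced for illustration, and no real model is called.

```python
from dataclasses import dataclass, field

# Shorthand labels (our own, for illustration) for the four feedback moves.
FEEDBACK_MOVES = ("confirm", "refine", "flag_gap", "diversify")


@dataclass
class Dialogue:
    """A running transcript of role-tagged turns (hypothetical structure)."""

    turns: list = field(default_factory=list)

    def add(self, role: str, text: str) -> None:
        """Append an ordinary turn from the user or the assistant."""
        self.turns.append({"role": role, "content": text})

    def feedback(self, move: str, text: str) -> None:
        """Append a user turn labelled with one of the four feedback moves."""
        if move not in FEEDBACK_MOVES:
            raise ValueError(f"unknown feedback move: {move}")
        self.turns.append({"role": "user", "content": text, "move": move})

    def move_counts(self) -> dict:
        """Count how often each feedback move has been used so far."""
        counts = {m: 0 for m in FEEDBACK_MOVES}
        for turn in self.turns:
            if "move" in turn:
                counts[turn["move"]] += 1
        return counts


dialogue = Dialogue()
dialogue.add("user", "How might AI change depth interviewing?")
dialogue.add("assistant", "<model reply goes here>")
dialogue.feedback(
    "confirm",
    "That's a helpful start, please continue developing that second point.",
)
dialogue.add("assistant", "<model reply goes here>")
dialogue.feedback(
    "diversify", "Please try that again, but from a degrowth perspective."
)
print(dialogue.move_counts())
```

A tally of this kind may help a researcher notice, for instance, that they only ever confirm and never ask for an alternative perspective.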

Collaboration Principle 4: Provide Rich Context

The final principle recognizes that AI’s capacity to contribute meaningfully remains dependent on the context it receives. As AI models do not possess experiential knowledge or socio-political awareness (Rijos, 2024; Sanzogni et al., 2017), the burden of contextualization falls to the researcher. Without detailed, structured input, AI outputs risk being generic, decontextualized, or misaligned with the complexities of the phenomena under investigation.

To mitigate this risk, researchers must actively embed contextual cues into their prompts. For instance, the generic prompt “Please review my paper and tell me how to improve it” is unlikely to deliver tailored and actionable feedback. In contrast, a more specific prompt is far more likely to do so, for example: “I’m preparing a 7,000-word first submission to the International Journal of Market Research. The paper argues that generative AI should be treated not just as an efficiency tool but as a collaborator. The audience is mixed (practitioners + academics), so I need accessible language and practical guidance. Please review the draft with these goals in mind: (1) is the framing clear for both audiences, (2) are the contributions stated consistently, and (3) where should I simplify or trim to fit within 7,000 words?” Contextual information serves not just to clarify the task, but to signal the epistemic terrain in which the AI is being asked to operate. The researcher should scaffold the interaction to enable complexity and nuance, moving beyond how AI can assist marketing practice and toward its co-creative potential.
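One way to make contextualization habitual is to treat the prompt as a short template with named slots for the outlet, the argument, the audience, and the review goals. The sketch below is illustrative only: the function `build_contextual_prompt` and its parameter names are hypothetical, and the wording it produces is one possible phrasing rather than a recommended formula.

```python
from typing import Optional, Sequence


def build_contextual_prompt(
    submission: str,
    argument: str,
    audience: str,
    goals: Sequence[str],
    word_limit: Optional[int] = None,
) -> str:
    """Assemble a review request that states its context explicitly."""
    if not goals:
        raise ValueError("at least one review goal is needed")
    parts = [
        f"I'm preparing {submission}.",
        f"The paper argues that {argument}.",
        f"The audience is {audience}.",
    ]
    if word_limit is not None:
        # The ',' format option adds a thousands separator, e.g. 7,000.
        parts.append(f"The draft must fit within {word_limit:,} words.")
    numbered = ", ".join(f"({i}) {goal}" for i, goal in enumerate(goals, start=1))
    parts.append(f"Please review the draft with these goals in mind: {numbered}.")
    return " ".join(parts)


prompt = build_contextual_prompt(
    submission="a first submission to the International Journal of Market Research",
    argument="generative AI should be treated as a collaborator, not just an efficiency tool",
    audience="mixed (practitioners and academics), so I need accessible language",
    goals=[
        "is the framing clear for both audiences",
        "are the contributions stated consistently",
        "where should I simplify or trim",
    ],
    word_limit=7000,
)
print(prompt)
```

The template does not replace dialogue; it simply ensures that the opening turn carries the contextual cues the model would otherwise lack.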

Heuristic Techniques Supporting the Transition From AI-as-Tool to AI-as-Collaborator

These heuristics emphasize that collaboration depends on mindset as much as method.

Discussion

This paper positions AI not merely as an efficiency tool but as a cognitive partner capable of reshaping the processes that underpin research and scholarship. When engaged purposively, ethically, and systematically, AI can surface latent assumptions, generate alternative framings, and challenge dominant paradigms. However, as we have argued, these affordances are not automatic. In marketing research, much of the current literature emphasizes potential rather than practice, leaving scholars and practitioners without clear guidance (Chintalapati & Pandey, 2022). Our framework responds by advocating for a co-creative mindset, purposive prompt design, iterative dialogue, and contextual framing.

Two enabling conditions shape whether such practices are possible. The first is the transition challenge: moving from transactional tool use to dialogic, co-creative engagement. This transition is culturally and personally challenging, as researchers must unlearn ingrained command–response habits. The second is the two-edged sword of relational engagement. While reciprocity and trust result in higher-quality outputs, they require sharing openly with systems that lack identity or intention, remain opaque in operation, and carry risks for privacy and alignment (Booth, 2025; Markov & Charbel-Raphael, 2025; Riley & Smith, 2025b). Despite these tensions, the role of LLMs in knowledge production is rapidly expanding, making conscious, reflexive engagement essential (Alvesson & Sköldberg, 2017).

These issues intersect with wider debates about ethics and epistemic justice. For example, women may find that AI reduces some of the emotional labor required for navigating hostile organizational cultures (Cunliffe, 2022; Perez, 2019; Prothero, 2024). AI’s frictionless, apolitical interaction style can support more direct inquiry (Scolnic & Halliday, 2024). However, the absence of emotional engagement may also enable ethically questionable practices. Additionally, the digital divide risks amplifying existing inequities (Santos, 2018; Wooliscroft & Ko, 2023). The male-dominated culture of AI development compounds these risks: as Garikipati and Kambhampati (2021) show, gendered approaches to decision-making shape outcomes, and a more diverse design community might have prioritized different affordances. In addition, LLMs generate floods of fluent text that can overwhelm researchers, displace craft practices, and blur disciplinary expertise. The danger is not only interpretive shallowness but erosion of distinctively human contributions to scholarship. One practical response is triangulating across models to expose biases, diversify perspectives, and resist premature closure: turning AI into a catalyst for meta-cognition rather than a generator of ready-made answers.

Summary and Conclusion

This paper contributes to IJMR’s ongoing dialogue on methodological innovation by offering a practical framework for integrating generative AI into marketing research. We position AI not simply as an efficiency tool, but as a collaborator and a co-creator, capable of shaping how knowledge is produced. We have demonstrated that AI’s value is contingent on how it is engaged. Transactional, assistant-mode use generates generic outputs, while dialogic, entangled engagement opens space for richer, more critical, and creative insights.

Theoretically, we advance understanding of AI in research by (1) proposing a three-mode model of AI integration (assistant, hybrid, collaborator), (2) identifying two enabling conditions (acknowledging the transition challenge and allowing relational engagement) that shape whether collaboration is possible, and articulating four practical principles for effective engagement (co-creation, purposive prompting, iterative reinforcement, and contextual framing), and (3) extending debates about AI affordances in current knowledge production processes. Together, these contributions extend debates on researcher influence and reflexivity by incorporating AI as an active participant in knowledge production.

Practically, our framework provides researchers and research leaders with actionable guidance for harnessing AI’s affordances while drawing attention to the importance of rigor and responsibility. However, efficiency gains are only the beginning. Practitioners should experiment with dialogic AI practices, build internal capability, and align with professional standards such as ESOMAR and MRS. For scholars, our findings underscore the importance of reflexivity and epistemic equity. Future research should empirically investigate AI-augmented collaboration across diverse contexts.

Finally, AI’s transformative affordances amplify human flaws and failings. Let us hope that we can collectively and pro-socially adapt, as we cannot go back.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Declaration of Generative AI and AI-Assisted Technologies in the Writing Process

This paper is co-created with AI. The authors worked closely with ChatGPT4.0/4.5 and Claude in order to scope and theorise the collaborative and co-creative potential of AI in the knowledge production process. The work was initiated by humans, engaging in conventional reflexivity. We then engaged with AIs deeply, discursively and reflexively. We take full responsibility for the content of the publication: it was initiated, shaped and produced by us. However, this paper is a true human-machine hybrid, an example of AI-augmented collaborative, collective, epistemic reflexivity (CCER), i-CCER.