Abstract

This study applies a practice theory lens to explore how deepfake technology impacts journalism. Based on an interview study of journalists and media and journalism experts (n = 20), we find that on a micro-level, the emergent deepfake technology impacts the presentation and packaging of journalistic output (e.g., heightening possibilities for personalization) as well as skills needed for deepfake verification. We highlight the role of data literacy in handling both the technology’s risks and potentials and emphasize the need to enhance journalists’ (traditional) fact-checking skills. Further, the technology also affects staffing. On a meso-level, the technology is altering the production processes of journalistic content and fact-checking and triggering the development of new guidelines. It may also have financial implications. Finally, on a macro-level, deepfake technology is discussed considering the principles and objectives of journalism, with new trends (e.g., personalization) and implications for journalistic quality and the audience relationship at the center. Based on the results of this study, recommendations for research and practice are derived.

Introduction

Today, artificial intelligence (AI) technologies can be used to create highly realistic synthetic media. So-called “deepfakes” are a subset of synthetic media that challenge the authenticity of audiovisual messages (Bateman, 2022). Differentiating real from fake audiovisual materials is becoming increasingly difficult, for both media practitioners and news consumers (Weikmann and Lecheler, 2023). The manipulation of images, however, has long been part of the history of photography (Habgood-Coote, 2023). The development of digital applications such as Photoshop revolutionized the manipulation of media and evoked debates around the ethics of image editing in journalism (Manovich, 2001; Mitchell, 2001). For example, the 1994 TIME magazine cover featuring a digitally altered mugshot of O. J. Simpson, which had been darkened so that he appeared more threatening, led to widespread criticism. The image was seen as reinforcing racial stereotypes and manipulating public perception ahead of his trial. The controversy sparked lasting debates about journalistic ethics, visual representation, and the limits of photo editing in news media (Schifellite, 2022).

These debates also relate to challenges of a new technology such as deepfakes, which allow for the creation of even more realistic and entirely new media. In contrast to manual editing tools, AI systems enable their users to independently create deceptive media that are not limited to text or images but can change the entire appearance of a person. Deepfakes can be considered a new means of media manipulation that challenges the perception of visual truth (Gregory, 2022). Risks associated with deepfakes are numerous and especially refer to their harmful use for propaganda and disinformation (Citron and Chesney, 2018). Deepfakes, on the one hand, necessitate the promotion of technical skills to detect highly credible fake media as well as the development of detection technology (Godulla et al., 2021; Seibert, 2023; Weikmann and Lecheler, 2023). On the other hand, deepfakes may also offer opportunities for creative industries. Research has begun to address the possible benefits of the technology for journalism, including, for example, new opportunities for inclusion of AI in production processes (e.g., for editing) or the creation of synthetic media (e.g., deepfake communicators in customer service; WDR, 2021).

To date, elite newsrooms with resources to experiment and implement the new technology are already integrating AI into production processes and are utilizing the creation of synthetic media (Jones et al., 2022). Making use of the technology’s potentials, however, also necessitates the compliance with democratic norms within journalistic innovations (exemplified in Meier et al., 2024): Public service media (PSM) in Germany, for example, are required to foster an informed and active public through their programming mandate – for which they must be able to reach audiences with their output in the first place, through innovative formats that can help meet audience expectations in a changing media environment (Stehle et al., 2022). This could include AI-generated content.

To date, a growing number of studies are examining deepfakes in the context of journalism (cf., Walker, 2019). Prior studies indicate that deepfakes might result in a loss of overall trust in news as news consumers fear that journalists could fall for a deepfake (Vaccari and Chadwick, 2020; Walker, 2019). Additionally, scholars argue that informing news consumers about the increasingly easy creation of deepfakes might promote their correct evaluation of real and deepfake media (Shin and Lee, 2022) but can also distort their perception of the prevalence of deepfake media online (Gregory, 2022). In this context, Wahl-Jorgensen and Carlson (2021) find that prevailing media coverage focused on worst-case scenarios of deepfake applications can reinforce the perception of the danger of the technology – in contrast to its actual negative effects.

We argue that journalists’ understanding of and attitudes towards deepfake technology play a crucial role in how they deal with the new technology. This includes expanding data literacy and skills in identifying deepfakes as the technology pushes the boundaries of traditional fact-checking skills (e.g., critical thinking, evaluating the credibility of information, research skills to validate the origin of information and verify supporting evidence) due to the fast-paced and high-quality nature of AI manipulation (Shin and Lee, 2022; Gregory, 2022). Mahony and Chen (2024) further point out the necessity of competencies in evaluating the quality of information that was generated or enhanced using generative AI (e.g., bias and training data check). Based on recent studies that emphasize the need for data literacy considering the challenges of integrating AI in journalistic practice (Graßl et al., 2022; Jones et al., 2022; Mahony and Chen, 2024), this study focuses on the role of data literacy not only for mitigating the risks associated with deepfake technology, but also for leveraging its potentials in journalism.

This study contributes to the emerging field of AI in journalism by offering a multi-level analysis of how deepfake technology affects journalistic practice. We extend recent research on the challenges of AI adoption in journalism (e.g., Graßl et al., 2022; Simon and Isaza-Ibarra, 2023; Simon, 2024) by focusing on the implications of deepfake technology. Using a practice theory lens (Couldry, 2004; Ryfe, 2018), we examine not only how individual journalists interact with the technology, but also how the emergence and spread of the technology reshapes newsroom routines and affects the journalistic system. We highlight discrepancies in how journalists and experts assess risks and opportunities associated with deepfakes. Specifically, we explore what is perceived as essential for mitigating harms, what needs to be taken into consideration in realizing the technology’s potential for journalism (e.g., trust, new formats, reaching younger audiences), and how practitioners navigate these tensions. In doing so, we clarify negotiation processes aimed at balancing risk prevention with innovation, offering original insight into how journalism adapts in response to synthetic media. We address the following research question: RQ: What are potential impacts of the deepfake technology on journalistic practices?

To answer this question, qualitative interviews with journalists working at established German media outlets as well as media and journalism experts were conducted between September 2022 and May 2023 (n = 20). We find that while deepfakes are only in the initial stages of shaping journalistic practice, experts and journalists alike expect the technology to have effects on all levels of journalistic practice.

Challenges of AI adoption in journalism

Technological innovations are a constant companion of journalism. Mastering technological innovations requires continuous adaptations to journalistic practices and structures of entire newsrooms. Recently, the impacts of AI on journalism and editorial work have been discussed extensively in journalism practice research (e.g., Graßl et al., 2022; Simon, 2024; Simon and Isaza-Ibarra, 2023). While AI as a topic is becoming increasingly present in newsrooms, previous studies show that few journalists make use of AI tools (e.g., analyzing large amounts of data, verification of degrees of manipulation, speech-to-text applications; Graßl et al., 2022), and their effects on the news industry are still barely understood (Simon, 2024). Initial observations reveal that the implementation of new AI technologies in newsrooms is often associated with organizational challenges and missing guidelines (Graßl et al., 2022). Handling AI tools requires education and training programs for journalists to gain new perspectives to be able to weigh the benefits and drawbacks of the new technologies for journalism (Graßl et al., 2022; Jones et al., 2022).

The potential uses of AI range from, for example, research and topic identification, to verification, presentation, and preparation of journalistic output, and support for its distribution and personalization (e.g., Arguedas and Simon, 2023; Graßl et al., 2022; Simon, 2024). In this context, it is argued that AI reshapes the way content is tailored and distributed to the needs of news consumers (Arguedas and Simon, 2023). Optimistic views of the use of AI technology suggest that media coverage will become more accessible, personalized, and interesting (Arguedas and Simon, 2023). Additionally, automation processes are argued to increase efficiency and might be used for news recommendation and distribution (Arguedas and Simon, 2023; Simon, 2024).

In contrast, some highlight the potential challenges of using AI for journalistic quality and journalistic principles. Concerns relate to the independence of journalism from platform providers, both in the production as well as the distribution of news (Simon, 2024). Media generated using AI algorithms might reflect biases against disadvantaged groups which are ingrained in the training data and therefore require careful supervision (Simon and Isaza-Ibarra, 2023). Often, this process is externalized when algorithms cannot be developed in-house. When it comes to distribution, platform logics are again a concern. Specifically, the increasing personalization of content and automatization of how content is distributed to audiences could reinforce exposure to one-sided coverage (Arguedas and Simon, 2023; Simon, 2024). Hence, implementing AI-driven innovations in journalistic practice becomes a continuous negotiation on maintaining journalism’s principles while expanding the focus on audiences’ preferences and expectations (Raemy et al., 2025; Wolf and Godulla, 2016).

Deepfakes in journalism: Implications for journalistic practice

Deepfakes are frequently associated with disinformation – the creation and spread of false information with the intent to harm individuals, organizations, or whole nations (Wardle and Derakhshan, 2017). Applied to the context of journalistic practice, concerns abound that individual journalists could be misled by deepfake media, potentially becoming ensnared in disinformation campaigns, for example, by sharing fake recordings of a candidate during elections (Citron and Chesney, 2018). If journalists and established news organizations lend their credibility to inauthentic audiovisual media, this may not only damage their own credibility, but also negatively impact the media-audience relationship and threaten journalism as a democratic institution as a whole (Citron and Chesney, 2018).

Hence, journalists need to learn how to deal with deepfakes (Gutsche, 2019) – which includes their detection and reporting on them: Fact-checking processes, such as verifying the authenticity of media, are expected to become more difficult to master (Gutsche, 2019; Ritchie, 2023). Journalism training will increasingly need to focus on teaching forensic techniques, digital skills and using detection software for deepfake identification (Walker, 2019). However, Weikmann and Lecheler (2023) point out that, to date, there is little reliance on such programs. The authors find that although new detection technology is being introduced in practice, it is often faulty and therefore not yet integrated into daily fact-checking processes.

As noted, deepfake technology does not exclusively threaten journalistic practice; it also offers new opportunities. For example, the technology could find beneficial applications in newsrooms in the development of new forms of media generation and illustration and content personalization (Ritchie, 2023; WDR, 2021). The actual application of such beneficial use cases, however, also raises questions about maintaining journalistic principles, such as objectivity, accuracy, or impartiality. To ensure the preservation of such norms, practitioners call for AI literacy training for journalists (WDR, 2021).

Nonetheless, it bears pointing out that current debates on deepfakes echo previous debates on journalistic innovations – not just regarding manipulation, as highlighted using the example of Photoshop (Manovich, 2001; Mitchell, 2001), but also regarding the democratic function of journalism in general. For example, Heinonen (1999) points to fears of how the internet could “change citizens’ communication culture, leading them away from journalism” (p. 78). Years later, Poell and Van Dijck (2014) discuss the influences of social media and algorithms on consumers’ news diets. According to the researchers, “this logic undermines journalism’s ability to fulfill its key democratic functions of keeping governments accountable and facilitating informed public debate” (p. 197). Similar concerns abound in the discourse on artificial intelligence. While AI is seen as useful in aiding with the personalization of journalistic products, contextualization of information must remain in the hands of journalists (Graßl et al., 2022).

To date, little is known about the actual impact of deepfake technology on journalistic practice, both in terms of constructive applications and regarding necessary risk mitigation. While in the social sciences research has begun to address deepfake reception among audiences (e.g., Vaccari and Chadwick, 2020), there are still sizeable gaps in research on the impact of deepfakes on (1) individual journalists’ engagements with the technology, (2) journalistic organizations and newsrooms, and (3) journalism more broadly (e.g., formats, principles, reception). The present study will address some of these gaps by exploring the perspectives of journalists and media and journalism experts on the multi-faceted impacts of deepfakes on journalistic practice.

Applying a practice theory lens is especially helpful as practice theory focuses on how individuals interact with and use media in specific situations and contexts (Couldry, 2004). It also centers the element of change (Ryfe, 2018), as practices evolve with new media technology. A key assumption of practice theory when applied to media is that media (re-)order practices in the social world (Couldry, 2004). Practice theory has variously been applied to journalism (cf., Ahva, 2017). It allows for an examination of how a novel technology like deepfakes may affect journalism (which we conceptualize as ‘impacts’), taking account of multiple levels of analysis (e.g., individual journalists interacting with the technology, applications within organizational settings, implications for the journalistic system).

Methods

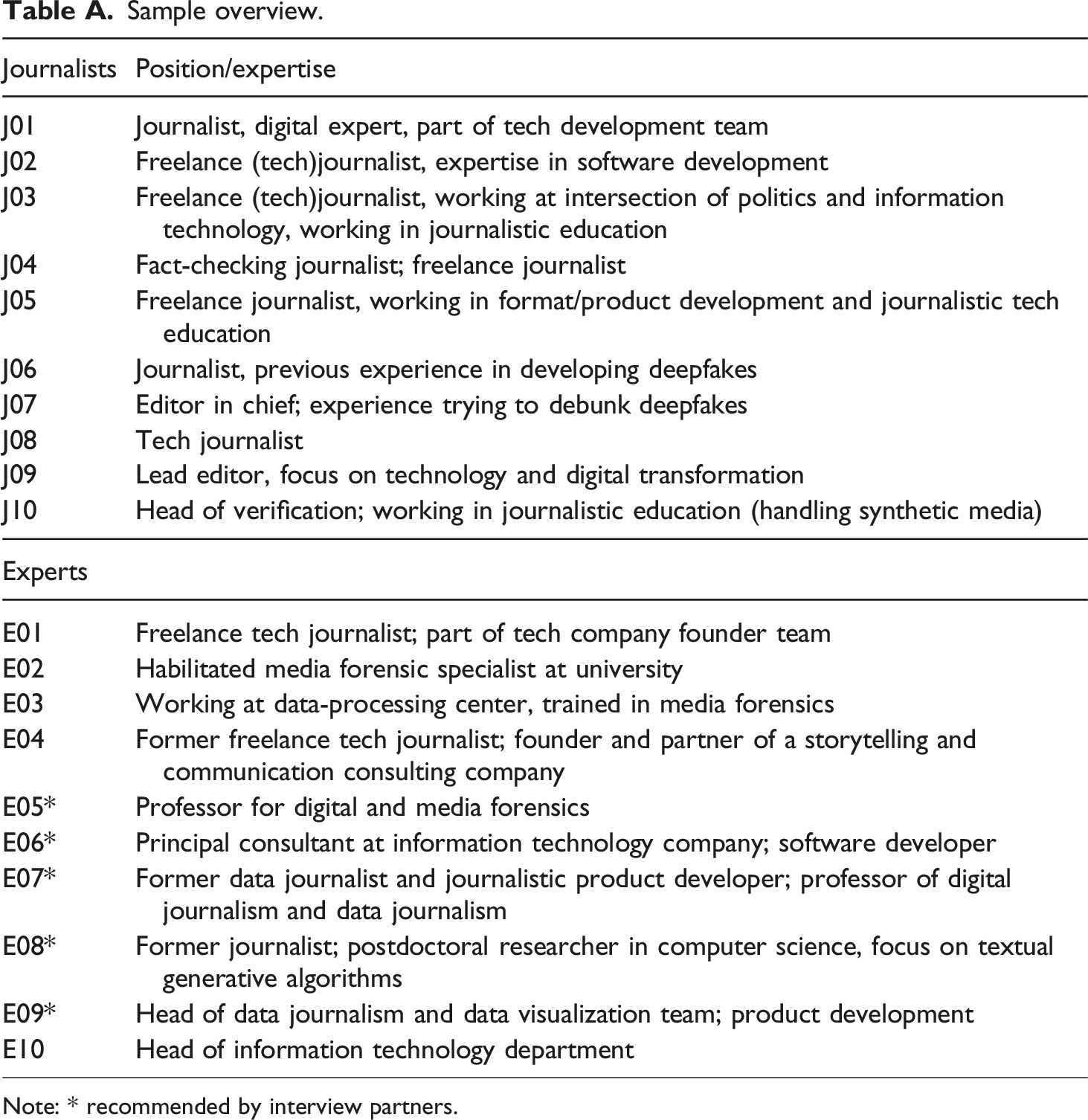

To answer this study’s research question, we conducted qualitative interviews with 10 journalists working at established German media outlets and 10 media and journalism experts, between October 2022 and May 2023. The sampling of journalists was based on an analysis of German public and private media reporting on deepfakes, with journalists covering deepfakes identified as potential interview partners. Using the search term “deepfake”, in September 2022, 23 websites of leading German public and private media such as WDR, rbb, SPIEGEL and ZEIT were searched to identify articles covering the topic, and after checking their authors’ profiles, the lead journalist on each article was added to a contact list. The requirement for the inclusion of journalists was that they had dealt with deepfakes in at least one article and that their main thematic focus included technical innovations and/or AI. Due to the novelty of the phenomenon at the time of the study, most of the journalists included in the sample either focused on technological innovations in their reporting, or were themselves part of tech development teams, rather than representing a broad range of beats (e.g., politics). To gain a wider perspective on the topic, we additionally interviewed media and journalism experts who had engaged with the topic. To identify these experts, specialists who were quoted or mentioned in the previously researched articles were added to the contact list. This included internal experts working as innovation and technology managers or fact-checkers at the respective media organization (e.g., at WDR Innovation Hub) as well as external experts, for example, those working at digital agencies, and academics. Additionally, at the end of each interview, interviewees were asked for further experts who they considered to be able to provide fruitful insights.
Several of these interviewed experts had prior journalistic experience and were still occasionally working in journalism (see appendix, Table A). Thus, both interviewed groups had expertise on deepfakes and journalism, and were able to reflect on the impact of the technology on journalism broadly. In total, 53 journalists, 20 media innovation and technology experts and 10 scholars were identified. Based on their visible engagement with the deepfake topic, 48 potential interview partners were contacted, finally resulting in 20 interviews overall (appendix, Table A).

Interview guidelines

The interview guidelines were derived from a review of the extant literature on AI innovations and deepfake technology. They first established the interviewee’s professional background, their understanding of deepfakes, and individual journalists’ prior interactions with the technology (Couldry, 2004). Second, the potentials of deepfake technology for journalism were investigated, with interviewees being asked about general fields of application, followed by questions on possible changes in production routines, day-to-day workflows, or business opportunities. Third, the interview guidelines addressed risks for journalism. Fourth, practices when encountering (potential) deepfakes were discussed, with differing questions for media and journalism experts and journalists. Journalists were asked to what extent the detection of deepfakes already plays a role in their daily workflow and how they would go about identifying a deepfake. The interview guideline for experts focused on deepfake detection programs and their usage in journalistic practice. Finally, both journalists and experts were asked about how journalists and journalistic training should adapt to the changing technological landscape due to deepfake technology. For the evaluation of the impacts of the technology, practice theory served to examine potential changes of journalistic practices and the transformation of organizational workflows (Couldry, 2004; Ryfe, 2018). Specifically, practice theory enabled an understanding of how journalists’ activities (routine tasks, processes), interactions (use of technologies, resources for detecting or creating deepfakes) and perceptions (ethical considerations) are shaped by the affordances of deepfake technology (cf., Ahva, 2017).

Data analysis

All interviews were transcribed and subjected to a qualitative content analysis (Mayring, 2015). A category system was initially created deductively, based on the individual key areas defined in the interview guidelines. Subsequently, new categories were derived from the material inductively. This second round of coding was especially relevant due to the explorative nature of the interviews, as exemplified by how potentials of the technology were coded, deductively and inductively: While in the first round of coding, potentials were only coded generally for content generation, changes in day-to-day working routines of journalists, and new business models, the second round allowed for a more specific breakdown of applications, for example, generation of textual versus (audio)visual content, specific personalization options, and specific new business models (e.g., licensing of voices, faces). Further inductive categories included thoughts on the obstacles of realizing these potentials, ethical risks of deepfake-production by journalists, or implications of deepfake technology for journalism education. The coding was conducted using MAXQDA (2020) from the end of April to the end of June 2023.

Results

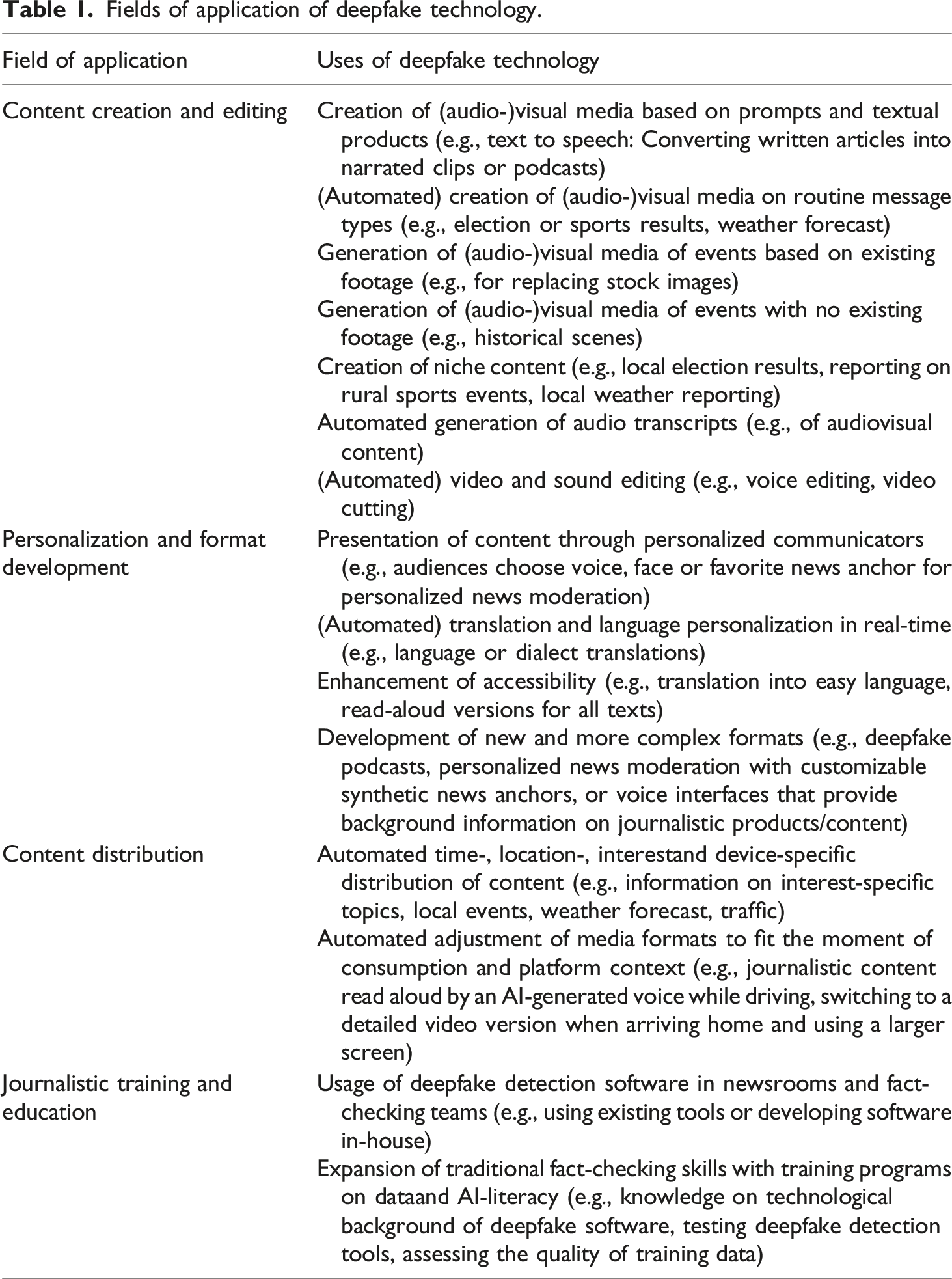

Fields of application of deepfake technology.

Based on the potential fields of application of deepfake technology as well as further implications of the technology, a total of 10 impacts of deepfake technology on journalistic practices on a micro-, meso-, and macro-level were formulated. When referencing journalism, journalists and experts alike used “journalism” and “news” synonymously, without specifying genres of articles, or specific news beats. Experts and journalists evaluated the technology and its impact on journalism very similarly. Results are therefore not discussed separately, as tensions in viewpoints emerged between individuals rather than between the two interviewed groups. This might be due to the technological background of the journalists as well as the journalism background of most of the media experts.

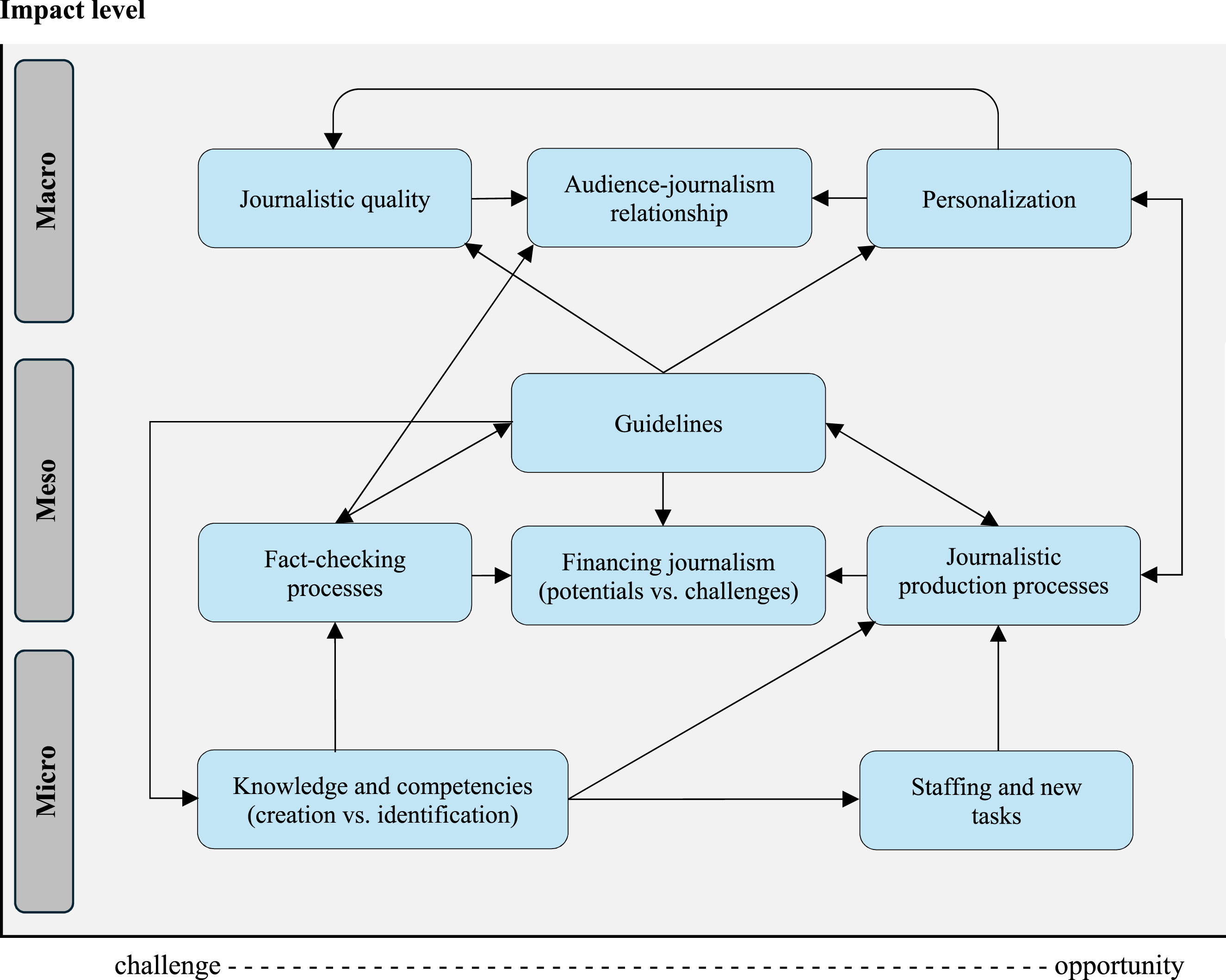

Figure 1 provides an overview of these impacts and their relationships. Aside from the three levels of analysis, it differentiates impacts by their valence, i.e., if they were described more critically as a challenge, or more affirmatively as an opportunity. Overview of deepfake impacts on journalistic practice.

Impacts on the micro-level of individual journalists

According to the interviewees, deepfakes could have an impact on the knowledge and skills required in both journalistic media creation and deepfake identification as well as on staffing in newsrooms.

Impact: Individual journalists need to learn how to use deepfake technology in production processes of journalistic media

To date, potentials of deepfake technology are rarely pursued in practice, but the interviewees formulate potential future use cases which indicate that the technology could reshape individual journalistic routines, thus requiring new forms of knowledge and skills. According to the participants, deepfake products could allow for the creation of audio-visual journalistic media. In terms of making use of the technology’s opportunities for journalistic media creation, the interviewees argue that individual journalists must gain knowledge and develop basic skills in using the technology. While journalists do not need to become AI experts, interviewees state that they must develop a sufficient level of data competency. This means learning about “the basics of machine-learning-algorithms” (E10) and how “to teach self-learning algorithms something” (E07). As one journalist put it, there needs to be an understanding “that the job profile of journalists is changing, that [journalists] are not just writers” (J05).

Further competences revolve around a focus on audience perspectives. Interviewees describe that it is becoming increasingly important to “understand how we can create personalized content and what people actually want from journalism” (E07). When referencing possibilities for personalization, interviewees describe both the creation of content that did not previously exist (e.g., generation of articles on elections in smaller municipalities; J05) and personalizing the presentation of said content (e.g., adjusting media formats to specific use cases; E07). Further examples of personalization opportunities include translation, designing of avatars in user environments, personalized greetings, or getting to choose which journalist presents the news. The specific potential of deepfake technology compared to other AI applications, according to the interviewees, lies in the audiovisual synthetic depiction of real persons. When it comes to whether journalists should be able to create deepfakes themselves, they first need to “be educated in checking the quality of these systems consistently” (E10) to be able to weigh the potentials and risks of deepfake implementation.

Impact: Journalists need to develop competencies in identifying audiovisual deepfake media

Interviewees identify a need to deal with the threat of maliciously produced deepfakes which is linked to changing competencies in verifying audiovisual media. Respondents highlight: “Disinformation is being spread anyways. And deepfakes are the next level. Like, we can already fake photos using photoshop, but with deepfakes we can now fake videos. We need an awareness about this in journalism” (J01). The quotation points to the problem that maliciously produced deepfakes might not always be maliciously circulated but could be spread inadvertently by audience members or journalists who fall for the fake. Within newsrooms, however, addressing such threats has so far been largely intuitive.

At present, deepfakes are still said to be relatively easy to identify (e.g., by evaluating lip and mouth movements, catchlights in the eyes, blurred faces, distorted voices). Hence, most interviewed journalists highlight the importance of focusing on traditional research and fact-checking techniques in journalism training to counter potential threats of maliciously spread deepfakes: “Such skills already exist in journalism training. It’s research and verification of contents. That’s something we’ve always had to do. Checking sources and researching” (J01). Nonetheless, both journalists and experts agree that technological tools aiding journalists in the identification of deepfakes will become more common in the future. From the experts’ perspective, however, journalists may first need to acquire the necessary skills for working with such tools (e.g., knowledge of statistics, data analysis).

Impact: Deepfake technology will influence staffing and tasks in journalistic practice

Interviewees speculate that using deepfake technology in media creation could free up journalists for more demanding tasks as the “technology just takes over tasks and humans can continue doing what the technology cannot do” (J05). In contrast, some also voice concerns about possibly losing their jobs to the technology. While job loss might be a negative outcome of the rise of the technology, the interviewees rather point to the human competences outlined as necessary for future journalists: While technological competencies are needed to stay relevant in a developing working environment, there are still many tasks which cannot be taken over by machines – both in research and deepfake detection, as well as general media production: “It’s the people who have to find something else to do, some cooler other things to do” (J05). Finally, the interviewees argue that the need for deepfake detection teams could also lead to new job offers in newsrooms.

Impacts on the meso-level of newsrooms

Shifting the perspective to the meso-level, deepfakes could influence production processes of journalistic media and offer new paths but also pose new challenges for financing in journalism. Additionally, the technology calls for enhancing established fact-checking processes and guidelines for mastering the potentials and risks of the technology.

Impact: Deepfake technology will change production processes of audio, visual, and audio-visual media in journalism

In terms of making use of the visual components of deepfake technology for journalistic media creation, participants highlight the automated generation of illustrations, stock images and graphics. Examples include generating visuals for court proceedings or historical events: “I could imagine showing things … that weren’t documented, and that you can now reconstruct” (J07). Additionally, participants mention the creation of audio (from textual data or freely) and synthetic news anchors (replicas of actual news anchors, or fictional personas). Hence, the use of deepfake technology could enable “the production of complete news programs … where you basically only have to provide the text or the language” (E01).

Overall, interviewees mainly considered the creation of weather, sports and traffic news, while the development of new, complex personalized formats (deepfake podcasts, synthetic news anchors) receives less attention overall. Some participants, in contrast, argue that this wouldn’t “have anything to do with journalism anymore [but] is scamming the viewer or listener” (J03). This critical stance on deepfake applications in journalism can be related back to the technology’s association with disinformation and fiction, echoing public concerns.

Impact: Deepfake technology will provide new financing opportunities and challenges for journalism

Deepfake technology is viewed by participants as an innovation allowing for new business areas. In this context, interviewees suggest that new journalistic formats could increase audience reach due to personalized presentation or diversified content – in turn leading to rising revenues. Hence, some interviewees argue for the development of personalized formats that “deliver journalistic products the way people would prefer to have it, from their favorite news anchors, in the voices they like, from … the people they trust” (E07).

Further, the automatized generation of audiovisual media based on textual products could allow for greater accessibility on news websites and wider audience reach. Interviewees argue that using deepfake technology in automation processes could guarantee the availability of read-aloud options for all articles and the creation of easy-language formats. Other ways to increase audience reach include the simultaneous translation of audio formats into different languages.

Finally, some mention that deepfakes may increase the need for media verification, verification workshops and toolboxes, which might also offer new revenue streams to journalistic organizations, as they could become forerunners in offering such resources to audiences. However, the heightened need for verification would also mean that more resources would need to be invested in fact-checking processes, “because you need people who can do it, and that is an added cost” (E04). Further, a decline in public trust in media outlets, for example if deepfakes are unintentionally spread by journalists, could lead to a loss of income (e.g., if audiences turn their back on the media outlet). There is also the risk that audiences reject new deepfake-based formats and that technology investments fail. Some participants also raise concerns about a lack of guidelines on how to ensure the protection of personal data in the training of algorithms, which could lead to costly lawsuits if the technology is inadvertently misused in journalistic content production.

Impact: Journalistic fact-checking will have to adapt to the deepfake technology

Interviewees discuss at great length the risk that the technology may contribute to disinformation and argue that newsrooms will have to reemphasize fact-checking as deepfakes proliferate. Interviewees expect that fact-checking will become more complex, though experts and journalists alike point out that current journalistic routines remain effective in debunking maliciously spread misinformation. The interviewed experts state that, so far, deepfake detection software is costly and not widely available and that the development of verification technology is a cat-and-mouse game: if the deepfakes get better, so must the programs. Currently available applications do not yet provide definitive answers as to whether the material being checked is a deepfake but instead offer percentages indicating the likelihood that the media has been manipulated. These percentages need to be interpreted carefully, which requires statistical skills. In consequence, there is a need not only for the enhancement of detection software but also for the promotion of knowledge about deep-learning technologies and data literacy.

Impact: Newsrooms will need to develop new guidelines for weighing the risks and potentials of deepfake technology

As practices within news organizations might change due to deepfake technology, both groups of interviewees contend that guidelines must be developed to handle both harmful and beneficial uses of deepfake technology.

All interviewees focus on the need for transparency guidelines when it comes to the implementation of the technology in journalistic media creation. Journalists must be able to combine “the values that are important in journalism” (E07) with the technology. Therefore, newsrooms need to provide contextual information on why they choose to implement the technology and to label instances where the technology is used. This is seen as particularly relevant when AI-generated content could be mistaken for real footage or depictions of reality – such as synthetic images, videos or voices – while transparency about AI tools used for supporting tasks (e.g., transcription, text summaries) is considered less critical. So far, however, it remains unclear how implementations of deepfake technology should be labelled and to what extent audiences accept the use of the technology in journalism. Guidelines should therefore also address the audiences’ perceptions and needs.

Finally, the interviewed journalists highlight the need for guidelines on how to identify deepfakes and on who is held responsible for detecting a deepfake in the fact-checking process. Due to a lack of guidelines, most journalists ascribe the responsibility for handling and detecting deepfakes to themselves personally: “of course we journalists are responsible for what we publish” (J03).

Impact on the macro-level of journalism, journalistic quality and audience relationship

On the macro-level, deepfake technology could spark a new trend for personalization in journalism but could also influence the perception of journalistic quality and affect the audience-journalism relationship. Therefore, uses of the technology are discussed considering ethical aspects.

Impact: Deepfake technology could facilitate a trend towards personalization

The interviewees reflect upon how new personalized journalistic formats could help reach additional target groups. By generating more niche content and new presentation formats, a new form of personalized journalism could be established that, without the technology, would be limited by resource requirements. As an example, deepfake technology could be used to create articles on election results “for each rural constituency” (J05) or local sports team, thereby creating content for smaller audience groups. This content could then be turned into audiovisual reports using artificially created “avatars … with voices, that were generated specifically for this format” (E10). This way, not only the content but also the presentation of the generated reports could be personalized for individual audience members. Additionally, the interviewees consider how audience members could select news anchors’ voices or faces or personalize avatars in video formats. As a result, audience members could feel like they are “personally supplied with the needed information, backgrounds, investigations” (J05).

Finally, some interviewees argue that “personalized news is important because we can get the new generation to consume the news. Because if you always use Facebook or Twitter to inform yourself, then you will encounter fake news… And the solution is that people consume media content directly” (E08). In doing so, journalism could “restore closeness” (J01) between media outlets and audiences.

With too much personalized content, however, some interviewees also describe the risk of audience members no longer being comprehensively informed: “This shows very clearly that we have distanced ourselves from the original tasks of journalism, the gatekeeper function, the participatory function, the contextualization function” (J03).

Impact: Deepfake technology could harm journalistic quality

There is no consensus among the interviewees as to whether the use of deepfake technology in journalistic practice might affect journalistic quality. While many interviewees argue for the personalization of journalistic products using this AI technology, others describe these forms of personalization as entertainment that goes beyond the bounds of journalism. Interviewees especially voice concerns about the democratic function of journalism. One expert criticizes personalizing presentation “to the extent that you only show everyone what they are interested in anyways, and not staying true to the mission of journalism, to inform people about the current most relevant topics and to make them become able to make decisions” (E07).

Further, some interviewees view journalism’s duty to depict reality as incompatible with the use of fictional synthetic representations: “In my opinion, recording a deepfake podcast that says ‘this is how it could have been’ … has no potential for journalism” (E04). Those interviewees therefore criticize the creation of fictional audiovisual media on real events. Instead, they argue in favor of a “separation … between fiction and information” (J01). Journalistic quality could decline because “many of these [personalized] business areas are dubious and … represent fictional entertainment” (J08).

Impact: Deepfake technology could affect the audience relationship

Interestingly, journalists mostly consider deepfake technology implementation to have a positive effect on audience trust. They describe personalization as “an opportunity … to create closeness again” (J01). Further, both journalists and experts describe how journalism could become one of the few sources of trusted news in times of disinforming deepfakes: “To debunk them and basically be a mouthpiece for audiences … This has been seen too little so far, but this is where our strength lies and this will also be the market of the future in journalism” (J10).

In contrast, individual interviewees also point out several potential negative influences of deepfake technology on audience relationships: (1) journalists could fall for disinformation and spread it, leading to public distrust, (2) journalists could wrongfully identify cheap fakes as deepfakes and spread unfounded fear about the technology, (3) audiences could fall for a deepfake and in turn not trust the verified information published by the media, and (4) journalistic content created using deepfake technology could be taken out of context and used for disinformation.

Discussion

This study focuses on the role of deepfake technology in journalism by applying a practice theory lens (Couldry, 2004; Ryfe, 2018) and presents results from an interview study with 20 German journalists and media experts. We identified a total of ten impacts for individual journalists, newsrooms and media organizations, and for the journalistic system – highlighting how these impacts relate to each other.

Previous studies emphasized the potential of AI innovation for the personalization of journalistic output (Arguedas and Simon, 2023; Graßl et al., 2022; Simon, 2024). We expand on this notion by discussing to what extent deepfake technology offers new paths for said personalization. We find that future uses of the technology are mostly seen in rather “simple” areas of application. These include, for example, media editing and personalized offers using digital voices based on audience interests and locations. In contrast, we find that the development of new complex and personalized formats using deepfake avatars and news anchors was mentioned less frequently as a future potential. This is because personalized journalistic output might become too service- and entertainment-oriented and therefore could go beyond journalism’s principles. Nonetheless, the interviewees also point out a certain dependency on developing more personalized formats of journalism to stay competitive with social media, showing the need for journalism to adapt to audience expectations (Stehle et al., 2022) to remain relevant.

We also focus on audience reach and the audience relationship considering the implementation of deepfake technology and formulate implications for research and practice. Prior studies on the implementation of innovation in journalism have argued that the audiences’ perspective should be at the center in product development processes (Wolf and Godulla, 2016). Arguedas and Simon (2023) found that developing personalized journalistic output could make journalism more accessible and interesting. We expand on these findings by focusing on the use of deepfake technology. We find that personalized journalistic formats using deepfake technology could engage additional audiences, particularly younger target groups. In addition, personalized presentation using deepfake technology could enhance audience trust and create a sense of closeness. Thus, the future use of this technology represents an economic potential to address a broader audience and strengthen trust.

By strengthening trust and widening journalism’s audience, deepfakes could play a role in supporting media outlets to foster an informed, participatory public. On the other hand, the interviews also replicate findings on the need to implement journalistic values within the innovation process (Meier et al., 2024) to prevent undermining the democratic function of journalism – which has been discussed in the context of previous innovations, such as the internet (Heinonen, 1999), social media (Poell and Van Dijck, 2014), and AI (Graßl et al., 2022).

Third, interviewees address the audiences’ perception of deepfake technology. Previous studies found that implementing AI innovation in the creation and personalization of journalistic content requires transparent labeling to maintain the audiences’ trust (Arguedas and Simon, 2023; Simon and Isaza-Ibarra, 2023). In the context of deepfake technology, this is especially relevant because the emergence of the technology and its harmful use might decrease overall trust in news (Vaccari and Chadwick, 2020). We expand on this argument by discussing the ambivalent nature of the technology and the role of transparency in labeling deepfake media. We find that several interviewees see journalism’s task to depict reality as incompatible with the use of fictional deepfake representations. Hence, using deepfake technology for media labeled as “journalistic” could confuse audiences and raise skepticism towards the authenticity of the respective news outlet and journalism in general.

This study is subject to several limitations. Given the sample size and qualitative nature of the study, it cannot offer representative findings but primarily offers insights into how journalists and technology experts perceive and engage with deepfake technology. While this study focuses on implications of deepfake technology for journalism from the perspective of German journalists and experts, perceptions of the technology and developments in newsroom innovation, journalism education and responses to synthetic media may differ internationally. Hence, the results of this study should be understood as indicative of emerging developments but also leave opportunity for further research. Finally, the field of AI applications is developing rapidly, so the findings need to be understood in the context of the state of technology at the time of data collection.

Quantitative studies could assess the current use of deepfake technology in media organizations and markets. Case study research could delve deeper into the implications of the technology for journalistic training and education, innovation implementation, or newsroom processes. Questions remain specifically about technology acceptance and journalistic norms. Future studies should examine the extent to which potential areas of application of the technology for the creation and personalization of journalistic content change over time due to journalists’ growing familiarity with the technology. In this context, future studies could also explore how national and/or cultural differences shape journalists’ perceptions as well as newsrooms’ preparedness and strategies for addressing this new technology.

Focusing on the audiences, more insights are needed on how personalized journalistic formats created using deepfake technology can be tailored to specific target groups. By conducting, for example, usability studies, media organizations should explore which deepfake formats audience groups consider interesting and useful (e.g., soft news versus complex formats on historical events). How audiences could be involved in co-creating media using deepfake avatars or the voices of their favorite news anchors could also be explored. Moreover, we identify a need to further examine how labels for applications of deepfake technology may serve to prevent potential negative effects such as decreasing trust (Arguedas and Simon, 2023; Simon and Isaza-Ibarra, 2023). In this context, transparency could be a key precondition for newsrooms that implement deepfake technology to retain their credibility, maintain audience trust, and respect established journalistic norms.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project is co-financed with tax revenue based on the budget approved by the state parliament of Saxony (Sächsische Aufbaubank; 100607645).

Appendix

Sample overview. Note: * recommended by interview partners.

| Journalists | Position/expertise |
| --- | --- |
| J01 | Journalist, digital expert, part of tech development team |
| J02 | Freelance (tech)journalist, expertise in software development |
| J03 | Freelance (tech)journalist, working at intersection of politics and information technology, working in journalistic education |
| J04 | Fact-checking journalist; freelance journalist |
| J05 | Freelance journalist, working in format/product development and journalistic tech education |
| J06 | Journalist, previous experience in developing deepfakes |
| J07 | Editor in chief; experience trying to debunk deepfakes |
| J08 | Tech journalist |
| J09 | Lead editor, focus on technology and digital transformation |
| J10 | Head of verification; working in journalistic education (handling synthetic media) |

| Experts | Position/expertise |
| --- | --- |
| E01 | Freelance tech journalist; part of tech company founder team |
| E02 | Habilitated media forensic specialist at university |
| E03 | Working at data-processing center, trained in media forensics |
| E04 | Former freelance tech journalist; founder and partner of a storytelling and communication consulting company |
| E05* | Professor for digital and media forensics |
| E06* | Principal consultant at information technology company; software developer |
| E07* | Former data journalist and journalistic product developer; professor of digital journalism and data journalism |
| E08* | Former journalist; postdoctoral researcher in computer science, focus on textual generative algorithms |
| E09* | Head of data journalism and data visualization team; product development |
| E10 | Head of information technology department |