Abstract

The emergence of deepfakes has made it increasingly challenging to discern the authenticity of sources and messages. Despite the rapid accumulation of research on deepfakes in recent years, detailed reviews of the existing literature that integrate empirical findings in this area and guide future research are sparse. To address this issue, we conducted a systematic review of 261 quantitative and qualitative studies described in 219 articles on deepfakes across different disciplines. The results showed that most studies have been conducted in Western countries, with relatively little attention given to Asian countries and even less to African countries. Although there was diversity in the methodological approaches, the research on deepfakes found in the sample was still in the early stages of theory building and testing. Furthermore, the examined research focused primarily on the political domain and topics related to deepfake identification and detection. In light of this evidence, we propose an agenda for future research in this interdisciplinary field.

In late 2017, a Reddit user calling themselves “DeepFakes” posted pornographic videos in which the faces of celebrities were realistically superimposed on those of porn actors using open-source face-swapping technology. Since then, the concept of deepfakes has entered the public eye and received increasing attention. Deepfakes, a portmanteau of “deep learning” and “fake,” are produced using artificial intelligence (AI), particularly machine-learning techniques that “merge, combine, replace, and superimpose images and video clips to create fake videos that appear authentic” (Westerlund, 2019: 39). While deepfakes are among the most recognized examples, they belong to a broader category known as synthetic media. This term encompasses a diverse range of digital audiovisual artifacts generated from new or preexisting digital information. The outputs of synthetic media are often substantially distinct from their original data sources, but to the average viewer, they can be almost indistinguishable from authentic content (Barnes & Barraclough, 2020). Deepfakes thus exemplify the potential of synthetic media to produce highly realistic yet fabricated audiovisual content that depicts someone saying or doing something that never actually occurred.

Deepfake technology is a double-edged sword. On the positive side, it can provide benefits to many industries, such as entertainment and business. For example, it can be employed to produce more realistic visual effects in films, television programs, and other media forms, thereby delivering a more immersive and engaging experience for audiences. It also enables brands to provide highly personalized recommendations to meet consumer needs and lowers the cost of producing video campaigns for marketers, as in-person actors may no longer be required. However, this technology brings serious challenges to assessing visual authenticity and could become a major threat to individuals and society as a whole. For instance, deepfakes have been widely used to produce nonconsensual pornographic content, with 96% of online deepfakes being porn targeting specific women as subjects (Ajder et al., 2019). In addition, deepfakes open up new possibilities for the production and spread of disinformation, which is defined as false information deliberately created to deceive or mislead others (Wardle & Derakhshan, 2017). Previous research has shown that deepfakes have the potential to amplify the power of disinformation to confuse audiences, distort reality, and influence public opinion (Dobber et al., 2021; Hameleers et al., 2024; Vaccari & Chadwick, 2020).

Faced with the opportunities and challenges posed by deepfakes, a growing number of studies have investigated deepfakes in a wide range of contexts, such as politics (Appel & Prietzel, 2022; Hameleers et al., 2022), entertainment (Feffer et al., 2023; Singh et al., 2023), business (Powers et al., 2023; Sivathanu & Pillai, 2023), and sexuality (Fido et al., 2022; Flynn et al., 2022). These studies have evolved across disciplines and have become increasingly diverse in terms of concepts, theoretical perspectives, and methodologies. For instance, some scholars highlight the element of deceptive intent in their definitions of deepfakes, referring to them as “AI-enabled multimodal disinformation” (Lee & Shin, 2022: 533) or “advanced forms of visual disinformation” (Weikmann & Lecheler, 2024: 4). In contrast, other researchers take a more neutral perspective, defining deepfakes as synthetic media that present highly realistic content depicting individuals saying or doing things that never actually occurred, without necessarily implying malicious intent or deliberate deception (Preu et al., 2022; Westerlund, 2019). The lack of consensus on a definition of deepfakes was almost inevitable, given that researchers from various disciplinary backgrounds typically define deepfakes differently based on their disciplinary traditions and primary concerns. Even so, it is necessary to examine the conceptual elements on which most researchers in the field agree to promote a consistent and coherent understanding of deepfakes.

Naturally, research on deepfakes has been characterized by diverse theoretical perspectives and methodological approaches. Theories from different disciplines, such as psychology, communication, and sociology, have been adopted to study the phenomena and issues related to deepfakes. Likewise, a variety of methods, both qualitative and quantitative, have been used to empirically examine deepfakes. Some studies have utilized classic methods of social sciences, such as surveys, experiments, interviews, and focus groups, to collect data from various groups of people, including deepfake creators, audiences, and fact-checkers (Ahmed, 2022; Vaccari & Chadwick, 2020; Weikmann & Lecheler, 2024). Others have investigated deepfakes via digital artifacts using techniques such as discourse analysis, thematic analysis, and topic modeling to extract data from online resources (Brooks, 2021; Tang et al., 2023; Wahl-Jorgensen & Carlson, 2021). The complex patterns of differing theoretical frameworks and methodological approaches highlight the importance of systematically reviewing and summarizing the existing literature on deepfakes across disciplines to identify research gaps and help guide future research.

While previous systematic reviews have provided valuable insights into trends and patterns in deepfake research (Godulla et al., 2021; Vasist & Krishnan, 2022, 2023), relatively little effort has been made to synthesize empirical evidence in this area. To the best of our knowledge, only the study by Birrer and Just (2025) has systematically analyzed both quantitative and qualitative research on deepfakes. Because empirical studies have continued to accumulate since the February 2024 cutoff date for the articles included in that review, there is an urgent need to integrate findings from the latest literature and develop an up-to-date agenda for future research. Furthermore, to the best of our knowledge, no systematic review has yet mapped out the guiding theoretical frameworks and main research themes of empirical investigations in this area. Understanding the current state of theoretical applications and research interests can lay the groundwork for future advancements in theory and knowledge. Therefore, we aimed to conduct a systematic review to summarize existing empirical research on deepfakes, clarify key conceptual elements, identify dominant theories and methods employed, and examine the primary research areas and topics of interest. Specifically, the following research questions were proposed:

RQ1: How has deepfake research progressed in terms of years, disciplines, and national contexts?

RQ2: How have deepfakes been conceptualized in existing research?

RQ3: Which theories have been most commonly used in existing research on deepfakes?

RQ4: Which methodological approaches have been used in existing research on deepfakes?

RQ5: Which research areas and topics have been examined in existing research on deepfakes?

Methods

Database search

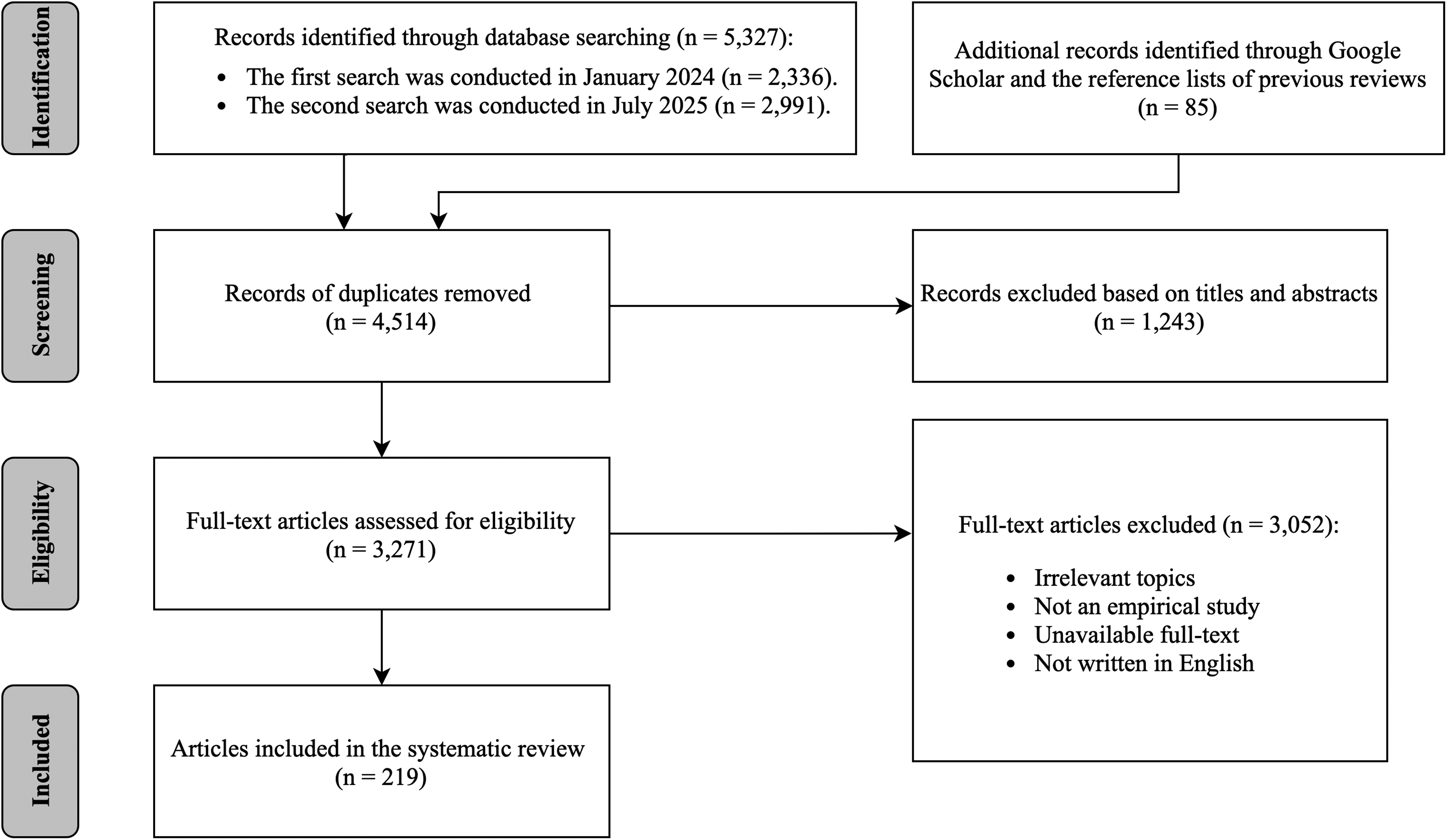

Guided by the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Page et al., 2021), we conducted an extensive search for relevant published (journal articles and conference papers) and unpublished (theses and dissertations) works across several electronic databases: EBSCO, ProQuest, Scopus, and Web of Science. This search was performed using specific terms (e.g., “deepfake,” “face-swap,” “image manipulation,” “synthetic media,” “video manipulation,” and “voice manipulation”), along with their derivatives, in article titles, abstracts, and keywords. These terms were selected based on prior systematic reviews (e.g., Rana et al., 2022; Stroebel et al., 2023; Vasist & Krishnan, 2022). The search was limited to articles written in English and available online before 26 July 2025. The initial database search generated 5327 articles.

To locate additional articles not included in the databases listed above, we performed a search with Google Scholar using the same keywords and scrutinized the reference lists of previous review articles on deepfakes (e.g., Godulla et al., 2021; Vasist & Krishnan, 2022, 2023). This process yielded an additional 85 articles, bringing the overall total to 5412 articles.

After removing 898 duplicate articles, 4514 articles remained for initial screening. We examined the titles and abstracts to exclude articles that were clearly irrelevant to our research questions and retained those that appeared to be eligible. A total of 1243 articles were excluded at this stage, and the remaining 3271 articles were included. The full-text versions of these articles were then retrieved, examined, and screened based on the following inclusion and exclusion criteria. Figure 1 summarizes the search process and selection steps.

Figure 1. Flow chart of study retrieval and selection procedures.

Inclusion and exclusion criteria

Articles were included in the current systematic review if they: (a) focused on topics related to deepfakes, (b) presented empirical studies involving the collection and analysis of primary data, (c) were available in full text to the researchers, and (d) were written in English. We excluded articles that focused solely on technical aspects of deepfake production or detection, such as developing algorithms, models, or systems to identify deepfakes (e.g., Ilyas et al., 2023; Islam, 2022), as these were not the focus of the current research. Articles that included nonempirical research, such as review papers, conceptual work, or editorials, were also excluded.

After applying these criteria, the final sample consisted of 219 articles (159 journal articles, 44 conference papers, and 16 theses/dissertations) that documented 261 empirical studies.
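The screening counts reported above can be checked with simple arithmetic. The following is a minimal sketch, not part of the original analysis: the variable names are illustrative, and the number of articles excluded at the full-text stage is not reported in the text, so it is derived here from the other figures.

```python
# Counts as reported in the Methods text; variable names are illustrative.
database_hits = 5327            # EBSCO, ProQuest, Scopus, Web of Science
additional_hits = 85            # Google Scholar and reference lists
duplicates_removed = 898
excluded_title_abstract = 1243
final_articles = 219

identified = database_hits + additional_hits
screened = identified - duplicates_removed
full_text_assessed = screened - excluded_title_abstract

# Assumption: not stated in the text, only implied by the figures above.
excluded_full_text = full_text_assessed - final_articles

print(identified, screened, full_text_assessed, excluded_full_text)
```

Each intermediate total matches the figure given in the text (5412, 4514, and 3271), which implies that 3052 articles were excluded during full-text screening.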

Coding

The articles used for analysis were coded by two independent coders. First, basic study information, including article title, year of publication, publication venue, and country of study, was recorded. Additionally, article type (i.e., whether it was a journal article, conference paper, or thesis/dissertation) and journal discipline (i.e., journal categories as classified in the Web of Science Journal Citation Report) were coded.

Second, variables related to the way the concept of a deepfake was conceptualized were coded, such as whether a definition of “deepfake” was provided, and if so, what conceptual elements (e.g., modality, technique, and purpose) were used in the definition. Moreover, whether cited references were used to define deepfakes was coded; if so, those specific references were also coded.

Third, variables related to the theoretical framework were coded. Specifically, we focused on whether theories were used in the study to guide the empirical investigation, and if so, which theories.

Fourth, variables related to methodological approaches, including research design (e.g., quantitative or qualitative), method (e.g., survey, experiment, or interview), and sampling strategies (e.g., convenience, quota, or purposive), were coded.

Finally, variables related to the research area (e.g., politics or entertainment) and research topic (e.g., production, sharing, or identification of deepfakes) were coded.

Intercoder reliability was assessed based on a random subsample of 123 articles (approximately 56% of the total sample) and calculated using Krippendorff's alpha. The reliability scores were satisfactory, with alpha values ranging from .82 to 1.00 across all variables. Disagreements were resolved through discussion.
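For readers unfamiliar with the statistic, the sketch below shows one way Krippendorff's alpha can be computed for nominal codes. It is an illustration under stated assumptions, not the procedure or software used in this review: the text does not report the level of measurement, so the nominal form is assumed; the function name is hypothetical; and the toy codes are invented for demonstration.

```python
from collections import Counter
from itertools import permutations

def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    `units` is a list in which each element holds the codes that the
    coders assigned to one article, e.g. ["survey", "survey"].
    """
    # Coincidence matrix: every ordered pair of codes within a unit,
    # weighted by 1 / (number of codes in that unit - 1).
    coincidences = Counter()
    for codes in units:
        m = len(codes)
        if m < 2:
            continue  # a unit coded by one coder cannot be paired
        for a, b in permutations(codes, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    # Marginal totals per category and overall number of pairable codes.
    totals = Counter()
    for (a, _), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(totals[a] * totals[b]
                   for a in totals for b in totals if a != b)
    if expected == 0:
        raise ValueError("alpha is undefined when only one category occurs")
    return 1 - (n - 1) * observed / expected

# Hypothetical toy data: two coders, ten articles, one disagreement.
coder_a = ["quant"] * 5 + ["qual"] * 5
coder_b = ["quant"] * 4 + ["qual"] * 6
alpha = krippendorff_alpha_nominal(list(zip(coder_a, coder_b)))
print(round(alpha, 3))
```

In this toy case the coders agree on nine of ten articles and alpha is about .81. The value is lower than the raw agreement rate of 90% because the statistic corrects for agreement expected by chance.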

Results

Development of the research field

RQ1 asked about the progress of deepfake research in terms of years, disciplines, and national contexts. In our sample, the earliest article was published in 2018. From 2019 to 2020, only a few articles were published on this topic, with none in 2019 and 10 in 2020. However, since the beginning of 2021, this area has received increasing scholarly attention, as evidenced by the markedly larger number of studies published each year that met the criteria for inclusion in our review (34 in 2021, 35 in 2022, 55 in 2023, 41 in 2024, and 43 in 2025).

The research on deepfakes that we examined spanned multiple disciplines. Among the 159 journal articles in our sample, the largest share (n = 43, about 27%) was published in communication journals, followed by psychology (n = 28), computer science (n = 16), multidisciplinary sciences (n = 14), information science and library science (n = 11), and business (n = 8). The journal with the most published articles in the analysis was Cyberpsychology, Behavior, and Social Networking (n = 8). Two journals, Computers in Human Behavior and Porn Studies, each published seven articles. Five articles were published in Convergence: The Journal of Research into New Media Technologies. Four articles were published in each of the following journals: Information Communication and Society, PLoS ONE, Scientific Reports, and Telematics and Informatics.

Regarding the national context in which the data were collected, our results show a pattern that focused primarily on Western countries, with 24.5% of empirical studies coming from North America (n = 64) and 19.9% from Europe (n = 52). Within these two regions, the United States (n = 63) was the country in which the most deepfake research in our sample was conducted, followed by Germany (n = 15), the United Kingdom (n = 9), and the Netherlands (n = 8). Moreover, in the articles in our sample, scholars investigated deepfakes across Asia (n = 40, 15.3%), including in China (n = 11), India (n = 8), Singapore (n = 4), South Korea (n = 4), and Japan (n = 2). However, much less scholarly attention was given to Africa, with only one study from Egypt (El Mokadem, 2023). It is noteworthy that only 4.2% of the sample studies (n = 11) gathered data from multiple countries using a cross-country comparison approach.

Conceptualization

RQ2 asked how deepfakes have been conceptualized in existing research. The results indicate that, among the 219 articles in our sample, the majority (n = 163, 74.4%) provided a definition of the term “deepfakes.” A closer look at these definitions revealed several patterns in the conceptual elements they included. Depending on the study context, most definitions specified the modalities in which deepfakes were typically displayed (e.g., images, audio, or video), while a few (n = 30, 18.4%) broadly defined deepfakes as synthetic media. Among the various modalities, video (n = 107, 65.6%) was the most frequently recognized, followed by images (n = 43, 26.4%) and audio (n = 36, 22.1%). Only two definitions took text into account when conceptualizing deepfakes (Preu et al., 2022; Uchendu, 2023).

Additionally, the majority of definitions (n = 126, 77.3%) highlighted the use of advanced AI-powered techniques, such as deep learning (Khan et al., 2023) and generative adversarial networks (Brooks, 2021), in generating deepfakes. When it comes to the content of the output, most definitions (n = 120, 73.6%) underscored its inauthentic nature, describing it as “fabricated images, videos, or audio” (Sharma et al., 2023: 1728) or “video and audio of real people doing or saying untrue things” (Ahmed, 2023: 2). A realistic appearance was another conceptual element often noted, with 38.7% of definitions pointing out that deepfakes were media content that appeared realistic. Regarding the purpose of deepfakes, only a limited number of definitions (n = 14, 8.6%) emphasized malicious intent, such as misleading viewers (Rodríguez, 2021), deceiving individuals, and providing false evidence (Weikmann & Lecheler, 2024). Consistent with prior research (Birrer & Just, 2025), most definitions (n = 149, 91.4%) did not include (malicious) intent as a conceptual element.

Of the 163 articles that provided a definition of deepfakes, 62.6% used definitions introduced in the previous literature. The most frequently cited definition in our sample (12.9%) was that of Westerlund (2019: 39), who proposed that deepfakes were “hyper-realistic videos that apply artificial intelligence (AI) to depict someone say and do things that never happened.” This definition was largely in line with the most commonly used conceptual elements observed in the current analysis.

Theoretical perspectives

RQ3 asked about the most commonly used theories in existing research on deepfakes. Of the 219 articles in our sample, 35.6% (n = 78) applied theories to examine deepfakes and related issues, while 64.4% (n = 141) did not describe employing any specific theory in their empirical investigation. The most popular theoretical framework used was dual-process theories (n = 12). The overarching assumption of such theories is that the mental processes underlying social judgment and behavior can be divided into two distinct categories depending on whether they operate in an automatic or controlled manner (Gawronski & Creighton, 2013). Automatic processing is characterized by unintentionality, uncontrollability, unconsciousness, and high efficiency, whereas controlled processing is characterized by intentionality, controllability, consciousness, and low efficiency. Under this theoretical framework, some researchers have explored how individual characteristics (e.g., cognitive ability and political interest) influence the ways in which individuals process and respond to deepfakes (Ahmed, 2021; Appel & Prietzel, 2022). Drawing on one of the most prominent dual-process theories, the elaboration likelihood model (Petty & Cacioppo, 1986), Goh (2024) investigated how individuals identify deepfakes by using various strategies that correspond to different information-processing routes. MacKenzie et al. (2025) examined how preexisting attitudes toward a message source affect an individual's ability to distinguish between authentic and deepfake videos.

The second most frequently used theory was the modality–agency–interactivity–navigability (MAIN) model (n = 11), which provides a framework for understanding how technological affordances influence persuasive outcomes. The MAIN model classifies technological affordances into four primary categories: modality, agency, interactivity, and navigability (Sundar, 2008). It posits that these affordances may convey various cues that activate heuristics, thereby shaping user judgments and behaviors. As shown by examples in our sample, research on deepfakes focused primarily on the realism heuristic, a mental shortcut in which people tend to perceive information presented in richer modalities (e.g., videos) as more credible because it resembles the real world more closely. Some studies applied the realism heuristic to the context of deepfakes by examining whether and how false information conveyed through video was more likely to deceive individuals than the same information presented via text or audio (Ahmed & Chua, 2023; Hameleers et al., 2022; Lee & Shin, 2022). Additionally, Jin et al. (2025) employed the bandwagon heuristic (i.e., if others think something is good, then I should think so, too) to investigate the effects of system-generated cues (e.g., number of followers, likes, and views) on credibility judgments of deepfake videos.

In addition to these two theories, media richness theory (n = 5), a conceptual framework that describes the capacity of communication media to accurately transmit information with minimal misunderstanding (Daft & Lengel, 1986), was utilized to investigate factors influencing individuals’ intentions to engage in online shopping (Sivathanu et al., 2023a), make hotel reservations (Sivathanu & Pillai, 2023), and visit tourist destinations (Sivathanu et al., 2023b) following exposure to deepfake videos. Similarly, the theory of planned behavior posits that an individual's likelihood of engaging in a particular behavior is determined by their intention to perform that behavior, with this intention being shaped by attitudes, subjective norms, and perceived behavioral control (Ajzen, 1991). This theoretical framework (n = 5) was applied to identify predictors of various behaviors associated with deepfakes, such as viewing and sharing content (Li & Zhao, 2024) as well as adopting protective measures (Pramod et al., 2025). Furthermore, the theory of motivated reasoning (n = 5), which asserts that individuals tend to interpret and distort incoming information in alignment with their preexisting beliefs, often driven by tension between the motivation for accuracy and the desire to reach preferred conclusions (Kunda, 1990), was used to examine how different motivational factors affect individuals’ responses to deepfakes in health (Lee & Hameleers, 2025) and political (Hameleers et al., 2025) contexts.

Methodological approaches

RQ4 concerns the methodological approaches used in existing research on deepfakes. The results reveal that quantitative design (n = 191, 73.2%) was dominant in terms of frequency among the studies in our sample, followed by qualitative (n = 61, 23.4%), mixed (n = 6, 2.3%), and multiple designs (n = 3, 1.1%). Among quantitative methods, experiments (n = 127) were by far the most commonly used. Most experiments were conducted online (n = 108); only a few were conducted offline (n = 13). In addition to self-report measures, researchers also collected experimental data through physiological measures, such as eye tracking (Ramachandran et al., 2023; Wöhler et al., 2021) and electroencephalography (Eiserbeck et al., 2023; Tarchi et al., 2023). The second most frequently used quantitative method was surveys (n = 56), most of which were conducted online (n = 49). A detailed examination of the methodological aspects of the studies using experiments and surveys in our sample revealed several patterns. First, only a small proportion of the studies (n = 47, 25.7%) conducted an a priori power analysis to justify their sample sizes. Second, most studies (n = 176, 96.2%) used a cross-sectional design, whereas only seven studies (3.8%) employed a longitudinal design. Third, the majority of studies (n = 159, 86.9%) utilized nonprobability sampling techniques, such as convenience and quota sampling, whereas a limited number (n = 11, 6%) employed probability sampling methods, including stratified sampling.
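To illustrate the first of these patterns, an a priori power analysis estimates the sample size a study needs before data are collected. The sketch below is illustrative only and does not reproduce any calculation from the reviewed studies. It assumes a two-sided comparison of means between two equally sized groups and uses the normal approximation, which slightly underestimates the result of an exact t-test calculation. The function name and the effect sizes, alpha, and power values are conventional placeholders.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate participants needed per group for a two-sided,
    two-group comparison of means (normal approximation).

    effect_size is the standardized mean difference (Cohen's d).
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # critical value
    z_power = standard_normal.inv_cdf(power)          # desired power
    return ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)

# Placeholder effect sizes, not taken from any reviewed study.
medium = n_per_group(0.5)
small = n_per_group(0.2)
print(medium, small)
```

Under these assumptions, detecting a medium effect (d = 0.5) requires roughly 63 participants per group, whereas a small effect (d = 0.2) requires roughly 393. This gap shows why an explicit justification of sample size matters when effects are expected to be small.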

The qualitative studies in the sample relied mainly on interviews (n = 26) and case studies (n = 14). For example, several studies utilized interviews to explore how individuals identified deepfake videos and found various strategies used for detection. These included scrutinizing imperfect visual and auditory cues in videos, verifying information across multiple online sources, and applying personal knowledge to assess the authenticity of videos (Goh, 2024; Thaw et al., 2020). Additionally, some case studies were conducted to analyze the malicious and nonmalicious uses of deepfakes and their implications for individuals and organizations (Cleveland, 2022; de Rancourt-Raymond & Smaili, 2023). Other techniques, including thematic analysis (n = 10), discourse analysis (n = 4), and online ethnography (n = 2), were occasionally used to provide a nuanced understanding of deepfakes.

Sample sizes, whether of human participants or nonhuman materials, varied widely across the studies. For example, in experiments involving human participants, the sample size ranged from as few as 10 to as many as 9492. Similarly, in content analysis studies, the sample size of online content ranged from 10 videos to 86,425 comments. The populations most frequently observed in the empirical research included adults and college students. Some studies focused on more specialized samples that were less prevalent but involved the purposive sampling of individuals with specific characteristics, such as fact-checking experts, filmmakers, tourism industry employees, and patients experiencing moral injury due to sexual violence.

Research areas and topics

Regarding RQ5, the empirical studies in our sample covered a diverse array of areas. Politics (n = 48) was the most frequent area of deepfake research, with a particular focus on how individuals process and react to deepfake videos depicting false claims made by politicians (Appel & Prietzel, 2022; Hameleers et al., 2022; Vaccari & Chadwick, 2020). Furthermore, deepfakes were often studied in the area of sexuality (n = 23), with a particular focus on issues related to pornography (Fido et al., 2022) and sexual abuse (Flynn et al., 2022) caused by deepfakes. Other areas, such as entertainment (n = 16), education (n = 16), and media (n = 12), also received some attention in the deepfake research in our sample.

In terms of research topics in the sampled articles, the identification and detection of deepfakes was examined in the largest number of empirical studies (n = 88). Specifically, the researchers investigated various factors that influenced individuals’ ability to distinguish between deepfakes and authentic media content, including demographic factors (e.g., age, gender, and education; Tahir et al., 2021), personal attributes (e.g., personality traits; Abraham et al., 2022), and video characteristics (e.g., length and quality; Wöhler et al., 2021). In addition, several studies explored this topic from other angles. For example, Korshunov and Marcel (2021) compared the accuracy of humans and algorithms in detecting deepfake videos to identify similarities and differences in their perceptions of deepfakes. Sundström (2023) compared the ability of individuals to detect two different types of deepfake (face-swapping and lip-syncing) to see which was more deceptive.

The second most frequent category of research topics focused on the effects of exposure to deepfakes on audience responses, including perception, emotion, physiology, and behavior (n = 72). For instance, Pataranutaporn et al. (2022) examined how exposure to deepfake videos of instructors who resembled people students might like affected their emotions, perceived qualities of the instructor, and learning performance. Others, such as Eiserbeck et al. (2023), investigated viewers’ physiological (brain activity) and behavioral (reaction times and facial expression ratings) responses to deepfake images with smiling, neutral, and angry faces.

The third most frequent category of studies explored public opinion and understanding of deepfakes (n = 46). Some studies conducted surveys to assess public awareness and opinions about deepfakes (Ahmed, 2023; Blancaflor et al., 2023). Others analyzed public discourse on digital media platforms, such as Twitter, Reddit, and YouTube, to investigate how people perceive, evaluate, and make sense of deepfakes (Bode, 2021; Gamage et al., 2022; Twomey et al., 2023). In addition to the general public, several studies have examined the perceptions and understanding of deepfakes among specific populations, such as college students (Murillo-Ligorred et al., 2023), fact-checking experts (Weikmann & Lecheler, 2024), and low-income community members (Shahid et al., 2022).

The fourth most frequent category of research topics involved the prevention and intervention of deepfakes (n = 20). At the individual level, a body of research, which increased during the time period represented by the sample, examined the effectiveness of various strategies to improve people's resilience to deepfakes, such as displaying warning labels indicating the falsehood of information (Lee & Shin, 2022), providing strategies for detecting deepfakes (Somoray & Miller, 2023), and conducting media literacy education (Hwang et al., 2021). At the organizational level, studies provided insights into how media outlets and internet companies combat the spread of deepfakes (Vizoso et al., 2021) and how some European Union countries legally regulate and protect victims of deepfake pornography (Mania, 2024).

The fifth most frequent category included studies examining the sharing and dissemination of deepfakes (n = 15). Specifically, researchers focused primarily on the antecedents of deepfake sharing and identified various facilitating and inhibiting factors, including political brand hate (Sharma et al., 2023), self-regulation (Ahmed et al., 2023), and deepfake recognition (Iacobucci et al., 2021). In contrast, relatively little attention was paid to the consequences of deepfake sharing, with only one study investigating the relationship between the unintentional sharing of deepfakes and social media news skepticism (Ahmed, 2023). In terms of sharing behavior, some studies distinguished between intentional sharing (i.e., deliberately sharing deepfakes that people knew at the time were fabricated) and unintentional sharing (i.e., accidentally sharing deepfakes that people later discovered were fabricated). For example, Ahmed (2022) examined the role of social media news use, fear of missing out, and cognitive ability in predicting the intentional sharing of deepfakes. Another study by Ahmed (2021) explored how factors such as political interest and social network size were associated with the unintentional sharing of deepfakes.

Studies in the sixth most frequent category focused on the creation and generation of deepfakes (n = 12). One aspect that was frequently explored was the factors that explain why individuals create deepfakes. For example, Fido et al. (2022) examined how victim status, victim sex, and participant sexual orientation affected individuals’ likelihood of creating deepfake pornography. Flynn et al. (2022) investigated the relationship between individual characteristics (e.g., gender, age, and sexuality) and the generation of deepfakes used for sexual abuse purposes. Moreover, several studies analyzed the intentions and rationales for using deepfakes in documentary filmmaking (Lees, 2024) and YouTube video production (Ayers, 2021).

The final category of studies concerned media representations of deepfakes, namely, how deepfake-related issues were portrayed in media outlets (n = 9). This category focused on examining the topics, themes, frames, narratives, and sentiments surrounding deepfakes in the news media. For instance, by analyzing 4920 news articles related to deepfakes between 2000 and 2022, Tang et al. (2023) identified the major topics discussed and prevalent sentiments expressed in the news articles and discovered how they evolved over time and in different contexts. Yadlin-Segal and Oppenheim (2021) explored the narratives constructed by journalists when reporting on deepfake technology and the regulatory actions associated with such narratives. Additionally, two studies focused specifically on the negative aspects of deepfakes, examining how news media frame the problems and challenges posed by deepfakes (Brooks, 2021; Gosse & Burkell, 2020).

Discussion

As one of the initial attempts in the field to synthesize both quantitative and qualitative research on deepfakes, the current systematic review provides an overview of the field's development, conceptual frameworks, theoretical perspectives, methodological approaches, and research areas and topics under investigation. Our results provide valuable insights for scholars and practitioners to theoretically and empirically explore the evolving field of deepfakes. In this section, we elaborate on our key findings and provide recommendations for future research.

The results showed that empirical research on deepfakes has grown considerably over the past four years, spanning diverse disciplines ranging from communication and psychology to computer science and information science. Despite the recent proliferation of studies in this area, most have been conducted in Western countries, with relatively little attention paid to Asian countries and even less to African countries. Given the high illiteracy rates in South Asia and sub-Saharan Africa (UNESCO, 2017), it is not far-fetched to imagine that people living in these areas could be more vulnerable to the negative impact of deepfakes. For instance, in 2019, the Gabonese government released a video of President Ali Bongo to dispel public rumors about his health. However, many critics and political opponents claimed that the video was a deepfake. This allegation led to a military coup in the country, which, although ultimately unsuccessful, still posed a considerable threat to domestic stability (Breland, 2019). Our findings highlight the importance of expanding the scope of current deepfake research to more diverse geographic regions, particularly those with relatively low literacy rates.

Moreover, although the growth of deepfakes around the world has become an emerging global issue, previous empirical studies have primarily adopted a single-country approach and overlooked cross-country comparisons. To this point, comparative studies across countries have primarily focused on investigating the effects of exposure to deepfakes on audience responses (e.g., Ahmed et al., 2025; Hameleers et al., 2024) and examining the antecedents of sharing deepfakes across nations (e.g., Ahmed, 2021, 2022). However, there remains a notable lack of empirical research on cross-country differences in the capability to identify deepfakes. Recent studies have documented variations across nations in the ability to detect AI-generated media—specifically text, images, and audio. For example, Frank et al. (2024) found that German participants exhibited greater proficiency in detecting AI-generated audio compared to participants from the United States and China, whereas Chinese participants were more adept at identifying AI-generated images than their counterparts in the United States and Germany. Future investigations should expand this research trajectory into the domain of deepfakes to examine whether and how detection capabilities differ across various cultural and societal contexts. Furthermore, more cross-national comparative studies are needed to evaluate the effectiveness of intervention strategies aimed at combating deepfakes. Rather than treating intervention strategies as one-size-fits-all solutions, scholars should carefully consider context-specific differences and systematically examine how economic, cultural, political, and social factors influence the effectiveness of these strategies.

Our evidence suggests that the majority of empirical studies attempt to define the concept of a deepfake, but the definitions diverge in some respects. While there is no general consensus on how to define deepfakes, our results revealed several core conceptual elements of existing definitions, including video modality, advanced AI-driven technique, inauthentic content, and realistic appearance. Based on these elements, deepfakes can generally be defined as the digital manipulation of video content that employs advanced AI-driven techniques (e.g., deep learning and generative adversarial networks) to create inauthentic content with highly realistic appearances of individuals saying or doing things that never actually occurred. This definition provides a starting point for clarifying what researchers actually mean by deepfakes. It also helps distinguish deepfakes from other forms of deceptive media, such as cheapfakes (i.e., audiovisual manipulation generated using conventional editing software, including Adobe Photoshop or Premiere Pro, without the involvement of any AI technology; Paris & Donovan, 2019). Future research could use this definition to guide theoretical development and empirical investigation in the field of deepfakes.

Although there has been a burgeoning body of studies on deepfakes in recent years, the extant research is still at a nascent stage of theory building and testing. Our results indicated that most studies did not adopt or develop any theories in their empirical investigation. As Kerlinger (1986: 8) pointed out, “The basic aim of science is theory. Perhaps less cryptically, the basic aim of science is to explain natural phenomena. Such explanations are called theories.” According to Craig (2013: 45), “an important function of theories is to explain the regularity of empirical phenomena with reference to the functional or causal processes that produce them.” Given the pivotal role of theory in generating scientific knowledge, more theory-driven research is warranted to develop a theoretical understanding of deepfake phenomena and contribute to theory advancement and synthesis. Future research on deepfakes should devote more effort to the refinement, extension, and critique of existing theories. For example, as the current media and technological environments have changed dramatically from those that gave rise to dual-process theories, it is worthwhile to explore how the cognitive processing of deepfakes confirms, extends, or challenges the foundational assumptions of these theories. To illustrate, Appel and Prietzel (2022) developed a deepfake detection model grounded in two-system models of information processing, investigating how individual differences in analytic thinking and political interest affect cognitive processes and the ability to identify deepfakes in a political context. In a similar vein, Lee's (2020) authenticity model of (mass-oriented) computer-mediated communication presents another promising theoretical lens. This model provides an integrative conceptual framework that illustrates the antecedents and consequences of authenticity judgments in digitally mediated communication. Such a framework is particularly relevant to deepfake research, as deepfake technology increasingly blurs the boundaries between genuine and fabricated content. Future research should consider leveraging and extending these frameworks to examine how message, source, contextual, and technological factors, independently and in combination, shape users’ responses to deepfakes across various contexts.

Additionally, given the interdisciplinary nature of deepfake research (Godulla et al., 2021), it offers numerous opportunities to expand the boundaries of theories across various disciplines and to provide rich theoretical perspectives to guide future work. Thus, we advocate for closer collaboration among deepfake researchers with diverse disciplinary backgrounds—including communication and media studies, computer science, education, ethics, law, political science, and psychology—to facilitate the integration of theories and the development of new theoretical models and frameworks. Each of these disciplines contributes unique theoretical perspectives that, when integrated, have the potential to deepen the current understanding of deepfakes. For instance, social psychological theories, such as motivated reasoning theory and reactance theory, provide valuable insights into the cognitive and psychological mechanisms through which individuals process and respond to deepfake content. Political science draws upon conceptual frameworks centered on governance, power dynamics, and deliberative democracy to examine the political and societal impact of deepfakes. Law enforcement and criminology utilize concepts from digital forensics and cybercrime victimization to understand the detection, investigation, and prevention of the malicious use of deepfakes. Collaborative engagement with these theories and concepts should encompass both empirical and nonempirical approaches; for example, nonempirical research from disciplines such as law (e.g., Chesney & Citron, 2019) and technology ethics (e.g., Diakopoulos & Johnson, 2021) provides crucial insights into the legal and ethical implications of deepfakes.

Some notable methodological gaps may impede a comprehensive understanding of deepfakes. Although an a priori power analysis is generally considered an optimal method for controlling Type I and II errors to validate hypotheses (Kang, 2021), it was largely lacking in the surveys and experiments in our sample. This absence may limit our ability to determine whether nonsignificant results for a relationship are due to insufficient statistical power to detect it or because such a relationship truly does not exist in the population. Furthermore, the scarcity of longitudinal studies poses considerable challenges in identifying patterns and trends over time. This shortcoming is particularly concerning in studies assessing the efficacy of intervention strategies against deepfakes, as the primary purpose of interventions is not merely to produce immediate effects but to equip individuals with the necessary tools and knowledge for their long-term application in daily life. In addition, there was a predominance of quantitative studies using nonprobability samples, suggesting that the conclusions drawn from these studies may lack generalizability to a wider population. In light of these gaps, future quantitative research should not only conduct a priori power analyses before data collection to justify the sample sizes used but also employ longitudinal designs and more representative samples.
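As a rough illustration of the a priori power analysis recommended above, the following Python sketch estimates the sample size needed per condition in a simple two-group experiment (e.g., deepfake exposure vs. control). It is a minimal sketch under stated assumptions, not a procedure taken from the reviewed studies: it assumes a two-sided comparison of two independent group means, uses the normal approximation, and expresses the expected effect as a standardized mean difference (Cohen's d). The function name `n_per_group` and its default values are hypothetical choices made for illustration.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate participants needed per group (illustrative helper).

    Assumes a two-sided test comparing two independent group means and
    relies on the normal approximation, so the result is a lower-bound
    style estimate rather than an exact t-test calculation.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    # Critical value for the two-sided Type I error rate (alpha).
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    # Quantile corresponding to the desired power (1 - Type II error rate).
    z_power = NormalDist().inv_cdf(power)
    # Standard approximation: n = 2 * ((z_alpha + z_power) / d) ** 2
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Assumed medium effect (d = 0.5), alpha = .05, power = .80
print(n_per_group(0.5))  # prints 63
```

Because this sketch uses the normal approximation, dedicated power-analysis software based on the t distribution will typically return a slightly larger number. The expected effect size is itself an assumption that should be justified from prior studies or a smallest effect size of interest before data collection.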

Another important trend in the deepfake research in our sample was a predominant focus on the political domain. While deepfakes were not originally created for political purposes, political deepfakes seemed to attract greater academic attention than their use in other areas. This may be because the malicious use of deepfakes in political contexts poses serious democratic threats by manipulating public opinion and interfering with electoral processes (Chesney & Citron, 2019; Diakopoulos & Johnson, 2021). Political deepfakes are often created to purposely spread false information about candidates, discredit opponents and rival parties, and disrupt political campaigns. Previous research has shown that exposure to political deepfakes can heighten uncertainty about information content, reduce trust in online news (Vaccari & Chadwick, 2020), undermine attitudes toward the depicted politicians (Dobber et al., 2021), and increase sharing intentions (Ahmed & Chua, 2023). Such potential consequences, along with an intense focus on political deepfakes in news media (Gosse & Burkell, 2020), may amplify public concerns about the misuse of deepfake technology in politics and attract increasing scholarly attention. However, it is worth noting that in reality, the majority of deepfake videos circulating online are nonconsensual pornographic content targeting specific women as subjects (Ajder et al., 2019). Deepfakes have emerged as a relatively new form of gender-based violence, leveraging AI technology to humiliate, demean, and objectify women. Targets of sexual deepfakes often suffer reputational damage and experience emotional and psychological distress, such as anxiety, depression, and even suicidal ideation (Kugler & Pace, 2021). The prevalence of sexual deepfakes and the harm they cause highlight the urgent need for future research to devote more effort to investigating and addressing this issue.

When it comes to research topics, our results identified several knowledge gaps and opportunities for future research. First, existing research has focused more on the identification and detection of deepfakes than on prevention and intervention. Further work is needed to explore various intervention strategies and assess their efficacy in improving the human detection of deepfakes. Second, there is a limited understanding of the psychological processes underlying the impact of deepfakes on audience responses. Most studies have focused on direct effects while overlooking potential psychological mechanisms. Additional research is thus necessary to elucidate these mechanisms and test their effectiveness in explaining deepfake effects. Third, the consequences of sharing deepfakes remain understudied. According to Barasch (2020), sharing information with others leads to two types of consequences: intrapersonal outcomes and interpersonal outcomes. The former focuses on how sharing influences the sharer's internal psychological states, while the latter concerns how sharing affects the sharer's relationship with others. These consequences largely depend on whether the information shared is positive or negative in valence. In light of this framework, future research could examine the intrapersonal and interpersonal consequences of sharing deepfakes and how such consequences vary with the valence of the shared deepfake content (e.g., entertainment vs. disinformation).

Limitations

The current results should be interpreted in light of several limitations. First, although we attempted to account for potential publication bias by including unpublished theses and dissertations in our sample, other types of unpublished work (e.g., preprint articles) were excluded. To provide a more complete picture of deepfake research, future systematic reviews should take these unpublished materials into account. Additionally, due to the qualitative nature of this systematic review, we were unable to quantify the risk of bias in the included studies. Lastly, our sample was limited to articles published in English, so the results may not be representative of empirical research on deepfakes published in other languages. Future work should broaden the inclusion criteria to cover articles published in multiple languages.

Conclusion

Powered by recent advances in AI technology, deepfakes have made it easier than ever to generate highly realistic and believable media content that blurs the line between authenticity and fabrication. This study conducted a systematic review of 261 empirical studies on deepfakes to identify research trends and gaps and to point out directions for future research. In conclusion, we call for more research in non-Western and nonpolitical contexts, along with comparative approaches, to provide nuanced insights into deepfakes. We hope this review will spur more interdisciplinary research that integrates theoretical and methodological insights from multiple fields. For instance, collaboration among communication scholars, computer scientists, and psychologists could help assess how advanced deepfake detection tools affect users’ trust in detection warnings and their engagement with media content. By systematically mapping the current state of research and categorizing empirical studies into seven topics—including the identification of deepfakes, the impact of deepfake exposure, and public perceptions of deepfakes—this review provides a navigational resource for scholars. Empirical researchers can leverage this analysis to identify underexplored areas and topics, develop more robust study designs, and situate their work within a broader interdisciplinary context. For scholars dedicated to advancing theoretical frameworks for deepfakes, this review uncovers patterns and gaps that inform more thorough theorizing.

Footnotes

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by the General Research Fund (GRF) of the Research Grants Council (RGC) of the Hong Kong SAR (Project No. 12612624).

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.