Abstract

In the post-truth age, political conspiracy theories circulate rapidly on social media, cultivating false narratives and challenging the public’s ability to distinguish truth from fiction. ‘Deepfakes’ are one of the most recent forms of misinformation: they present deceptive representations of events that lead audiences to believe in fabricated realities. Research on deepfakes in political communication remains limited. As the technology progresses, deepfakes look increasingly authentic, so it is necessary to explore their effects on public perceptions. This study examines viewers’ comments on an Instagram-published deepfake video of Hillary Clinton to understand the impact of this technology. The results indicate that individuals struggle to identify deepfake videos and that their opinions are affected by this persuasive type of misinformation. The study also explores the ethical concerns posed by political deepfakes. By offering insights into public reactions to manipulated content, it contributes to our understanding of the political effects of AI-fabricated content.

Introduction

Artificial intelligence and machine-learning technologies are pushing the boundaries of what computers can learn and achieve, and they are changing our everyday encounters with visual media. Although video and audio recordings have long been considered trustworthy evidence, current software that uses deep-learning algorithms to manipulate digital media makes authenticity harder to discern. Such ‘deepfakes’ are highly realistic videos, images or audio generated with artificial intelligence and deep-learning techniques to present misleading representations of events or statements. Advanced machine-learning algorithms, such as Generative Adversarial Networks (GANs),¹ are used to generate high-quality deepfakes (Singh & Dhiman, 2023). These algorithms learn from enormous amounts of data how to alter, blend and produce content that shows individuals taking part in activities they never did or saying things they never said. The first deepfake video is generally traced to 2017, when a Reddit user shared a manipulated video featuring a celebrity in a compromising situation (Chadha et al., 2021; Deshmukh & Wankhade, 2020; Rana et al., 2022). Artistic and educational institutions have also used deepfake technology positively, as a means of training and creative expression. For example, face-swapping applications have been used to reimagine historical events and in interactive museum exhibitions. Deepfakes also benefit the film and media industries (Westerlund, 2019). Nevertheless, this technology is mostly used for malicious purposes. Deepfakes, for instance, can be used for blackmail or to create pornographic images of celebrities (Harris, 2018; Kugler & Pace, 2021). In addition, employees can create phoney images or videos of their co-workers for amusement or retaliation (Albahar & Almalki, 2019).
Deepfakes thus offer a new means of spreading misinformation, with the potential to cause extensive harm such as political interference, propaganda, fraud and reputational damage.

AI applications have simplified the process of creating different types of deepfakes. Neither advanced technical skills nor high-end equipment is required to use this type of technology (Matern et al., 2019; Mirsky & Lee, 2021; Venema & Geradts, 2020). For example, Sora is an artificial intelligence programme that creates original videos from textual instructions. Sora generates complex scenes with several characters, distinct types of motion, and precise background and subject details. According to OpenAI, the model understands not only what the user has asked for in the prompt but also how the requested items look in the physical world. The OpenAI team that created Sora is developing tools to help detect misleading content and text prompts that violate its usage policies. Content and prompts that call for extreme violence, sexual content, hateful imagery, celebrity likenesses or the intellectual property of others will be flagged by its text classifier and rejected (OpenAI, 2023). However, Sora could still facilitate the production of advanced video deepfakes by individuals with malicious intentions, improving their capacity to craft videos intended for harmful use (Cerullo & Lee, 2024). As this technology becomes more accessible, the ethical and legal implications of deepfakes continue to provoke significant debate among scholars, technologists, policymakers and the public.

This study explores how social media users respond to deepfakes and discusses the risks and ethical implications of their influence in the political context. Most deepfake analyses have concentrated on types of manipulation (Akhtar et al., 2023; Malik et al., 2022; Masood et al., 2023; Seow et al., 2022; Yu et al., 2021) and detection methods (Almars, 2021; Das et al., 2022; Deshmukh & Wankhade, 2020; Mirsky & Lee, 2021; Montserrat et al., 2020; Rana et al., 2022). Although recent empirical studies have begun to examine the effects of deepfakes on the political landscape (Dobber et al., 2021; Hameleers et al., 2024; Vaccari & Chadwick, 2020b), further research is needed on deepfakes’ broader societal effects. Because sophisticated political deepfakes have only recently begun to circulate on social networking sites, examining how audiences respond to them addresses an emerging and urgent political problem.

On 11 April 2023, a deepfake video circulated across social media showing Hillary Clinton endorsing Republican Florida Governor Ron DeSantis for the presidency. The video was shared on social networking sites such as Instagram, Twitter (now X), YouTube and TikTok as the governor of Florida was preparing to launch a bid for the 2024 U.S. presidential election. This study examines comments on an Instagram-published version of the video to understand how individuals react to political deepfakes and how such videos shape citizens’ opinions. Moreover, it reviews current deepfake generation and detection techniques and explores the ethical concerns and risks posed by political deepfakes, including misinformation, impacts on elections, privacy violations, polarisation and security threats.

Deepfake Generation and Detection Overview

Scholars have examined different methods for generating and detecting deepfakes to explain how self-evolving technologies such as artificial intelligence are used to manipulate digital media (Chadha et al., 2021; Seow et al., 2022; Yadav & Salmani, 2019). For instance, several scholars have explained how GANs are used to transform existing digital content into new content (Gatys et al., 2016; Melnik et al., 2024; Mitra et al., 2024; Paul, 2021; Singh et al., 2020). GANs are considered the most successful algorithms for producing deepfakes (Goodfellow et al., 2020; Yadav & Salmani, 2019). These deep-learning models are trained to generate new visuals that look realistic, so their output can appear genuine to human observers. Different techniques are used to produce deepfakes, including face swapping, facial attribute manipulation, entire face synthesis, puppet mastery and lip-syncing (Falcon-Lopez et al., 2023; Masood et al., 2023). For example, GANs are commonly used to produce highly realistic face swaps, in which one person’s face is transposed onto a video of another person (Singh & Dhiman, 2023). Several easy-to-use applications for generating deepfakes are available, some of them open source, including HeyEditor, Deepfakes Web, DeepFaceLive, Reface, DeepSwap.ai and Avatarify. All these techniques complicate deepfake detection (Kim et al., 2018; Rehaan et al., 2024; Seow et al., 2022; Suwajanakorn et al., 2017; Thies et al., 2016). For example, Rehaan et al. (2024) explain that limited lip movement in a video reduces detection accuracy.
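For readers unfamiliar with GANs, the adversarial principle can be summarised by the standard minimax objective introduced by Goodfellow and colleagues, shown below. It is included only to illustrate the general idea; the deepfake tools named above may use different architectures and loss functions. Here $G$ is the generator, $D$ the discriminator, $p_{\text{data}}$ the distribution of real images and $p_z$ the random noise distribution from which the generator draws its inputs.

$$
\min_{G}\,\max_{D}\; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator $D$ is trained to assign a high probability to real samples $x$ and a low probability to generated samples $G(z)$, while the generator $G$ is trained to make $D(G(z))$ as large as possible. In principle, as training proceeds the generated content becomes progressively harder to tell apart from real data, which helps explain why GAN output can look convincing to human viewers.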

The progress of deepfake technology has drawn researchers’ attention to the need for detection techniques that counteract the challenges created by its malicious use. Scholars have identified various methods for distinguishing manipulated files that look deceptively real (Afchar et al., 2018; Almars, 2021; Das et al., 2022; Guarnera et al., 2024; Masood et al., 2023; Mirsky & Lee, 2021; Montserrat et al., 2020; Rana et al., 2022). Proposed approaches include machine-learning algorithms (Rana et al., 2021; Taeb & Chi, 2022), digital watermarking (Qureshi et al., 2021) and blockchain technology (Rashid et al., 2021). These methods determine whether an image, video or audio clip has been modified from its original form (Engler, 2019). For instance, researchers use deep-learning models to detect inconsistencies in facial expressions, speech patterns and body language in deepfake videos. Agarwal et al. (2019) note that people display characteristic facial expressions and movements when they speak, and these often appear artificial in deepfake videos. These distinctive patterns can therefore serve as a basis for deepfake detection.
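As a simplified illustration of the provenance idea behind watermarking- and blockchain-based approaches (not a reproduction of the cited systems), the sketch below registers a SHA-256 fingerprint of an original media file and later checks whether a circulating copy still matches it. The in-memory `registry` dictionary is a hypothetical stand-in for a tamper-evident ledger, and the function names are illustrative assumptions.

```python
import hashlib

# Hypothetical in-memory registry mapping a content ID to the SHA-256 digest
# of the original file; a real provenance system might use a signed or
# blockchain-backed ledger instead.
registry: dict[str, str] = {}


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the raw media bytes."""
    return hashlib.sha256(data).hexdigest()


def register_original(content_id: str, data: bytes) -> None:
    """Record the fingerprint of the authentic file at publication time."""
    registry[content_id] = fingerprint(data)


def is_unmodified(content_id: str, data: bytes) -> bool:
    """True only if the bytes match the registered original exactly."""
    expected = registry.get(content_id)
    return expected is not None and expected == fingerprint(data)


if __name__ == "__main__":
    original = b"...original video bytes..."
    register_original("speech-001", original)
    print(is_unmodified("speech-001", original))            # True
    print(is_unmodified("speech-001", original + b"edit"))  # False
```

An exact hash flags any change, including harmless re-encoding by a platform, so it can show that content differs from a registered original but not whether the change is malicious; robust watermarking schemes aim to survive such benign transformations.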

Rana et al. (2022) review current deepfake detection systems and classify them into four groups: ‘machine learning-based methods’, ‘deep learning-based methods’, ‘statistical measurements-based methods’ and ‘blockchain-based methods’ (p. 458). Their study shows that deep learning models have been exceptionally effective in deepfake detection. Masood et al. (2023) likewise note that the most recent detection techniques use deep learning, ‘showing robust performance close to 100%’. Appel and Prietzel (2022) claim that, ‘in the absence of relevant context such as prior knowledge about the deepfake or its source’, recipients can draw on three sources of information to identify deepfakes: ‘context, audio-visual imperfections (i.e., technological glitches), and content’ (p. 2). Using an unsupervised learning technique, Gao et al. (2024) improved the accuracy of detecting heavily compressed deepfake files, narrowing the gap in detection performance between high-quality and low-quality deepfake data. Guarnera et al. (2024) developed a detection system based on a hierarchical ResNet-101² model organised into three levels, each aimed at a specific detection task. The first level determines whether multimedia content is genuine or a deepfake. The second level identifies the technology used to create the deepfake. The third level has two divisions, each dedicated to recognising the architecture of the generative models used to produce deepfakes.
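The three-level design described by Guarnera et al. (2024) can be pictured as a cascade in which each level is consulted only if the previous one has flagged the content. The sketch below shows only that routing logic, under stated assumptions: the classifier functions are hypothetical stand-ins for trained ResNet-101 models, and the labels (`"GAN"`, `"diffusion"`, the architecture names) and feature names are illustrative, not taken from the authors' code.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical stand-in for a trained model (e.g. a ResNet-101 head): it takes
# a sample, here a dict of precomputed features, and returns a label string.
Classifier = Callable[[dict], str]


@dataclass
class Verdict:
    is_fake: bool
    technology: Optional[str] = None    # level-2 label, e.g. "GAN" or "diffusion" (assumed)
    architecture: Optional[str] = None  # level-3 label, e.g. a specific generator


def hierarchical_detect(
    sample: dict,
    level1: Classifier,
    level2: Classifier,
    level3: dict[str, Classifier],
) -> Verdict:
    """Route a sample through three levels, stopping early for genuine content."""
    # Level 1: genuine vs. deepfake.
    if level1(sample) == "real":
        return Verdict(is_fake=False)
    # Level 2: which family of generative technology produced the deepfake.
    technology = level2(sample)
    # Level 3: one division per technology family recognises the architecture.
    division = level3.get(technology)
    architecture = division(sample) if division is not None else None
    return Verdict(is_fake=True, technology=technology, architecture=architecture)


# Toy usage with hypothetical rule-based classifiers and made-up feature names.
level1 = lambda s: "fake" if s["artifact_score"] > 0.5 else "real"
level2 = lambda s: "GAN" if s["periodic_artifacts"] else "diffusion"
level3 = {"GAN": lambda s: "gan-architecture-A", "diffusion": lambda s: "dm-architecture-B"}
print(hierarchical_detect({"artifact_score": 0.9, "periodic_artifacts": True}, level1, level2, level3))
# Verdict(is_fake=True, technology='GAN', architecture='gan-architecture-A')
```

One advantage of this cascade is that the more specialised models at levels 2 and 3 are only run on content already judged to be fake, and each third-level division only has to distinguish architectures within a single technology family.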

In summary, developing detection tools is not only a technical challenge; it also raises questions about the ‘arms race’ between deepfake creation and detection. As deepfakes overcome their technical limitations and become more realistic, detecting them will become more challenging.

Methodology

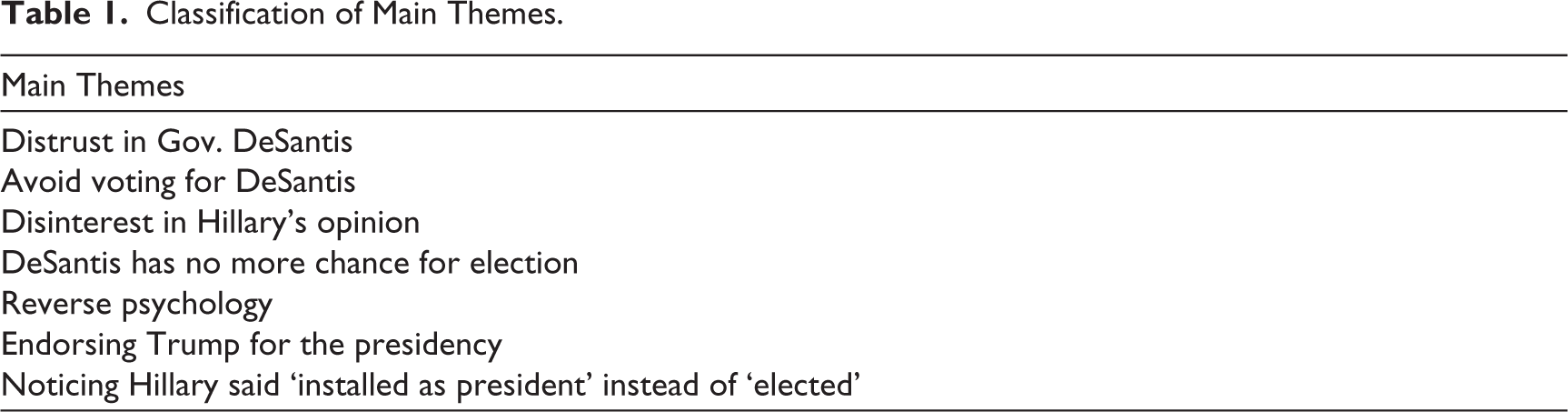

This study examines comments posted on Instagram in response to a deepfake video of Hillary Clinton endorsing Florida Gov. Ron DeSantis for president. The data were extracted from the @c3pmeme account, which initially posted the video on Instagram and received a substantial number of comments. The account has 105k followers. Approximately 1,670 comments were manually collected for this study. The post’s caption reads ‘Hillary Endorsed DeSantis? #deepfake #hillaryclinton’, acknowledging that the video was manipulated.

To identify and classify themes in the comments, I adopted the methodology of Madden et al. (2013), which classifies comments according to their primary themes. Madden et al. (2013) provide a taxonomy for classifying user comments on social media. The scheme is developed iteratively: the researcher examines the data, identifies potential categories and tests these categories on additional data to determine whether they apply in their current form. Content analysis is an effective method for studying text and visuals (Burnard, 1996; Cole, 1988; Tesch, 1990), and I created a classification scheme based on qualitative content analysis. First, the comments underwent thematic analysis and were organised into three primary categories: (a) recognition that the video was a deepfake, (b) belief in the content of the video and (c) comments unrelated to the video’s authenticity, neither disputing nor endorsing its content. Then, comments that indicated trust in the video were reviewed and analysed to uncover their information and meanings (e.g., reacting to the content of the video or stating a political opinion based on viewing it). Through manual analysis, I identified the specific types and functions of these comments and classified them into seven themes: expressing distrust in Gov. DeSantis (e.g., calling him a traitor or claiming he had been bought by Democrats), no longer intending to vote for DeSantis, expressing no interest in Hillary’s opinion, stating that Hillary had ruined DeSantis’s chances in the election, noting that Hillary said ‘installed as president’ instead of ‘elected’, suggesting reverse psychology and endorsing Trump for the presidency (Table 1). Categorising the comments that indicated trust in the video into these themes allowed this study to analyse users’ responses and reactions to the deepfake in greater detail.

Table 1. Classification of Main Themes.

I chose to analyse user comments because they allowed me to study the data in their original media context and to show how users reacted to the deepfake on social media, which helps to clarify the outcomes and consequences of deepfakes in politics. My analysis focuses on the media scene itself, with user comments as an integral component of that ‘scene’; extending beyond this context would be outside the scope of the study.

To supplement my approach and strengthen my analysis, I reviewed statistics and reports published by the Pew Research Center in 2020 and 2023. These resources provided valuable insights into the public’s awareness of deepfakes, which helped to contextualise my methodology. The Pew Research Center conducted a digital knowledge survey of 5,101 U.S. adults from 15 to 21 May 2023. According to this survey, Americans’ digital knowledge varies widely by subject and across age groups. Notably, 50% of U.S. adults are not sure what a deepfake is, while 60% of Americans under 30 know about deepfakes and large language models. In addition, Pew’s report on its 16th ‘Future of the Internet’ survey provides experts’ insights on vital digital issues. These reports (2020, 2023) indicate that experts have emphasised the challenges posed by generative AI systems, which can be used to produce misinformation and deceive individuals. I draw on Pew’s data and findings in several sections of this study to support my analysis.

Findings and Discussion

The deepfake video of Hillary Clinton that was posted on Instagram is around 16 seconds long. Clinton can be heard saying the following in the clip:

You know, people might be surprised to hear me saying this, but I actually like Ron DeSantis a lot. Yeah, I know. I’d say he’s just the kind of guy this country needs, and I really mean that. If Ron DeSantis got installed as president, I’d be fine with that.

The Instagram post did not include any information about the creator of the video. However, on X (formerly Twitter), the user @Ramble_Rants posted the video and indicated that it was created with the help of another user, @C3PMeme.

Based on my analysis of the comments on the Hillary Clinton post on the Instagram account @c3pmeme, 60% of the commenters believed that the video was real: their comments reflected a belief that Clinton supported DeSantis, and they expressed dissatisfaction with her endorsement. In addition, 29% mentioned that the video was manipulated or that deepfake technology had been used to create it. The remaining 11% of comments were single words, emojis or GIFs supporting other candidates, or comments in which the commenter’s belief about the video’s authenticity was unclear. The results of the analysis are shown in Figure 1.
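The shares above are simple proportions of the coded comments. The sketch below shows that calculation on a list of category labels. Because exact per-category counts are not reported, the example counts (1,002, 484 and 184, totalling 1,670) are approximations back-calculated from the rounded percentages and the approximate total; they are not the study's raw coding data, and the category label strings are illustrative.

```python
from collections import Counter


def category_shares(labels: list[str], decimals: int = 1) -> dict[str, float]:
    """Return each category's share of all coded comments, as a percentage."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {category: round(100 * n / total, decimals) for category, n in counts.items()}


# Illustrative counts back-calculated from the reported percentages of
# roughly 1,670 comments (approximations, not the study's raw data).
coded = (
    ["believed real"] * 1002
    + ["identified as deepfake"] * 484
    + ["unclear / unrelated"] * 184
)
print(category_shares(coded))
# {'believed real': 60.0, 'identified as deepfake': 29.0, 'unclear / unrelated': 11.0}
```

Applying the same arithmetic within the roughly 1,002 comments coded as believing the video, the 17% and 10% sub-themes reported below would correspond to approximately 170 and 100 comments respectively.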

Figure 1. Types of Comments Users Posted for Hillary Clinton Deepfake Video, 11 April 2023.

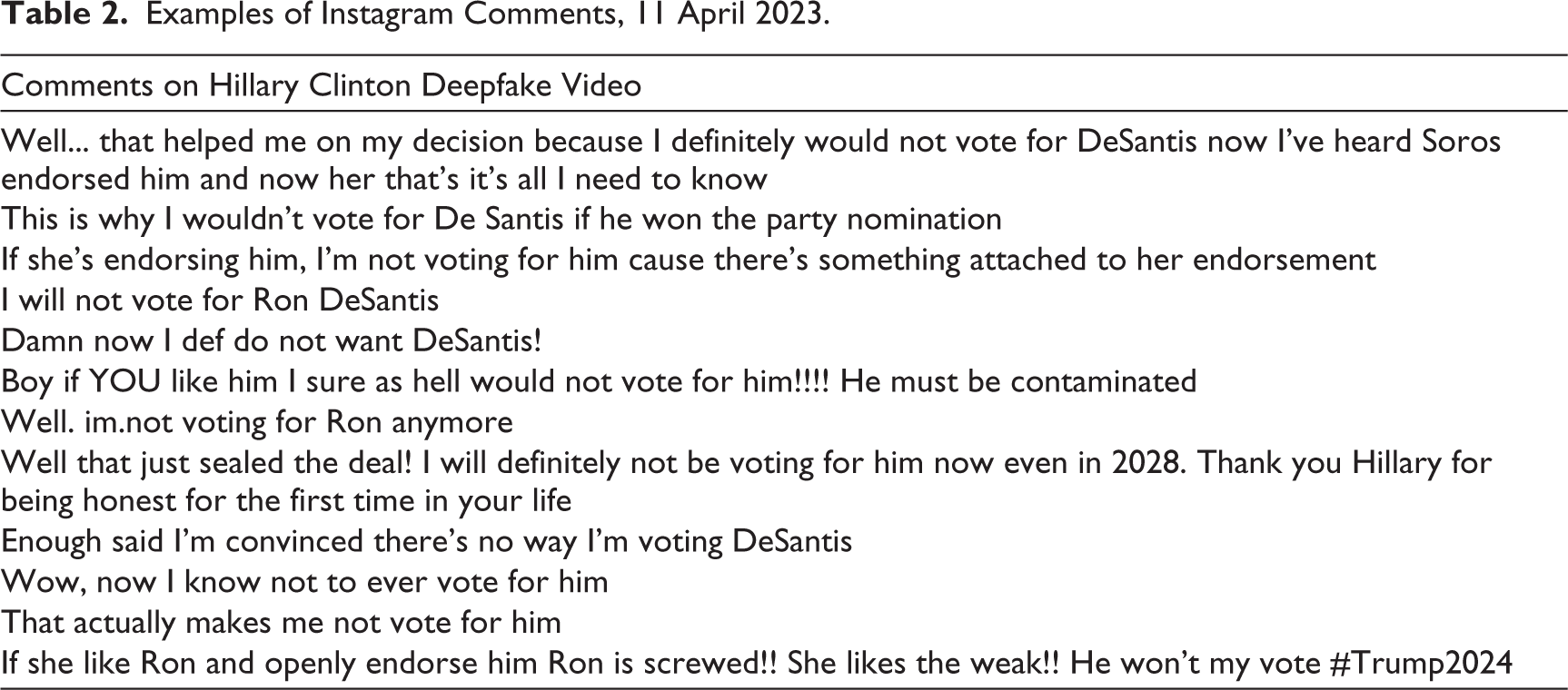

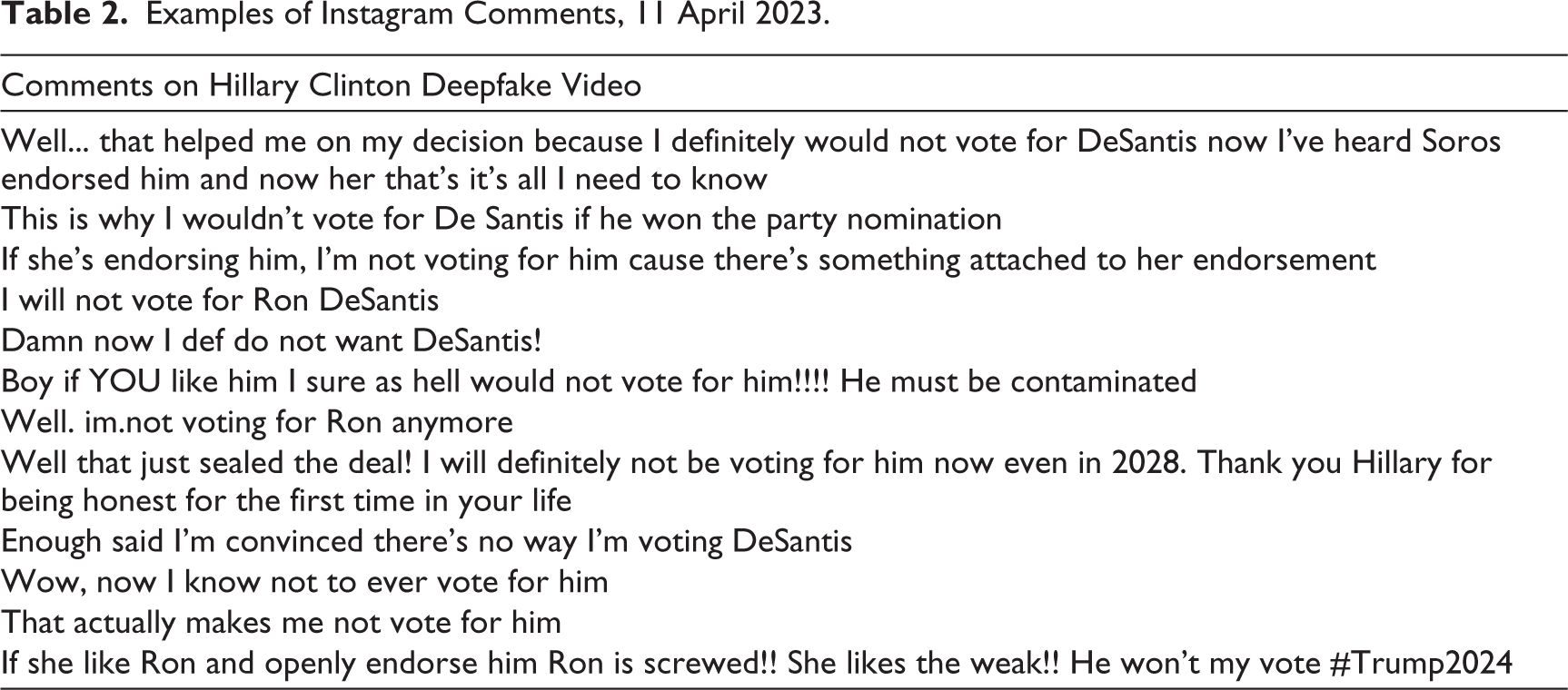

Although the account stated that the video was a deepfake (by including #deepfake in the caption), most commenters ignored or missed this information and were convinced that the video was real and that Hillary Clinton had actually endorsed DeSantis. This finding aligns with the report by the Pew Research Center (2023a), which indicates that 50% of American adults are unsure of what a deepfake is. Some commenters clearly stated their decision not to vote for DeSantis based on the video they saw (Table 2).

Table 2. Examples of Instagram Comments, 11 April 2023.

Hillary Clinton is a prominent member of the Democratic Party who served as the 67th United States Secretary of State in the administration of Barack Obama. Ron DeSantis is an American politician and member of the Republican Party who has served as governor of Florida since 2019. It would therefore be unexpected for Clinton to support a Republican for the 2024 presidency, as this deepfake video portrays. Nevertheless, 17% of individuals who assumed that the video was real claimed that DeSantis was supported and controlled by Democrats. They made comments such as ‘He’s already been bought and paid for’ (@g_kingeter) and ‘That’s because he’s a Democrat in Republicans clothing. Don’t trust him’ (@sherri_esco), and called him a ‘Trojan Horse’ and a ‘puppet’. These responses indicate that the commenters viewed DeSantis as a disloyal member of the Republican Party. In this way, deepfakes could erode trust in a candidate and eventually affect voters’ decisions had he been nominated.

The analysis of Instagram comments reveals that many users could not differentiate between manipulated and authentic content. Despite the video’s imperfections and controversial statements, they accepted it as genuine. This shows how deepfakes can convincingly blur the line between authentic and altered content, making it difficult for viewers to discern the truth. For those who believe it, fabricated content can come to function as a credible source of information. The inability to detect deepfakes is also related to a lack of knowledge about this technology, as reported by the Pew Research Center (2023a).

Around 10% of the commenters who thought the video was authentic indicated that they would no longer vote for Gov. DeSantis. This highlights how deepfakes can sway public opinion and political judgement by shaping voters’ perceptions. As Diakopoulos and Johnson (2021) argue, ‘false information about candidates may distort a voter’s reasoning about how to vote’ (p. 9). Dobber et al. (2021) also state that ‘the attitude toward the politician is directly affected by the deepfake’ (p. 82). This perspective supports the idea that deepfakes can influence the behaviour of their audiences (Dobber et al., 2021; Hwang et al., 2021). The Pew Research Center (2023b) also reports that some experts are concerned about the relentless speed and reach of digital technologies and their potential to destroy the information environment and challenge democratic systems, particularly through deepfakes. The integrity of elections can thus be undermined; as Appel and Prietzel (2022) note, political deepfakes pose a serious challenge to democracies.

When audiences regard a political deepfake such as the Clinton video as authentic, negative consequences can follow. For instance, when false representations are created, disseminated and even partially believed, they can dominate social media and deceive the public for extended periods. Furthermore, many social media users may not recognise content as artificially created; as they share it, misinformation can spread easily and extend its influence over communities, making discussions based on accurate information increasingly difficult. In addition, individuals are frequently willing to accept information that aligns with their existing opinions while dismissing content that conflicts with their views (Edgerly et al., 2020; Metzger et al., 2020). It might therefore be easier for some people to believe misinformation when it targets the opposing political party. When groups toe an ideological line driven by a shared belief in misinformation, the polarisation caused by fabricated content is aggravated.

Among commenters who identified the video as fake, the majority noticed that Hillary’s lip movements did not match her words. For example, one user wrote: ‘Fake. Her mouth isn’t even close to saying those words’. This supports Agarwal et al.’s (2019) observation that facial expressions and movements often seem artificial when individuals speak in deepfake videos. Additionally, 30% of these comments stated that the video was AI-generated or a deepfake but did not explain how the commenters had identified it as fake.
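The commenters’ main cue, a mismatch between lip movements and audio, also underlies some automated checks. As a deliberately simplified illustration (not Agarwal et al.’s method, which models speaker-specific facial and head movements), the sketch below correlates a per-frame mouth-opening measurement with per-frame audio loudness; a weak or negative correlation hints at a mismatch. The feature sequences and the 0.3 threshold are hypothetical; a real system would extract such features with face-landmark and audio tools and calibrate any threshold on labelled data.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant signal carries no synchronisation information
    return cov / math.sqrt(var_x * var_y)


def lip_sync_suspicious(mouth_opening: list[float], audio_energy: list[float],
                        threshold: float = 0.3) -> bool:
    """Flag a clip whose mouth motion tracks the audio poorly.

    threshold is a hypothetical cut-off, not a validated value.
    """
    return pearson(mouth_opening, audio_energy) < threshold


# Toy per-frame values (hypothetical): speech loudness rises and falls.
audio = [0.1, 0.8, 0.9, 0.2, 0.7, 0.1]
synced_mouth = [0.0, 0.7, 0.8, 0.1, 0.6, 0.0]
mismatched_mouth = [0.6, 0.1, 0.0, 0.7, 0.1, 0.6]
print(lip_sync_suspicious(synced_mouth, audio))      # False
print(lip_sync_suspicious(mismatched_mouth, audio))  # True
```

Such a check only captures gross desynchronisation; as Rehaan et al. (2024) note, limited lip movement reduces detection accuracy, and a near-constant mouth signal gives this heuristic little to work with.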

The Ethical Implications and Risks of Political Deepfakes

Political deepfakes raise multiple ethical concerns and risks that affect individuals and communities in different ways. Previous studies have underlined the harmful effects of deepfake technology (Chesney & Citron, 2019; Diakopoulos & Johnson, 2021; Meskys et al., 2020). For instance, Chesney and Citron (2019) identify two main categories of deepfake impacts: those that affect individuals and organisations, and those that affect society. Diakopoulos and Johnson (2021) refine these categories as they explore the impact of deepfakes in the context of elections, classifying harm into three types: ‘harms to viewers/listeners’, ‘harms to subjects’ and ‘harms to social institutions’ (p. 6). These studies also indicate that social media platforms play an important role in circulating deepfakes on a large scale, which amplifies the harm. Momeni (2020) further argues that social media use affects individual and group involvement in online political activities. Holding platforms accountable is therefore an effective way to address these ethical issues. Drawing on Diakopoulos and Johnson’s (2021) analysis, this section explains the key ethical concerns presented by political deepfakes.

Misinformation and Truth Decay

Deepfakes intensify the problem of misinformation by challenging the public’s ability to distinguish reality from fiction. Hyper-realistic but entirely manipulated audio-visual content can mislead and deceive audiences on an unprecedented scale. This undermines faith in legitimate information sources, leading to greater disbelief in news media and political communication. Trust is a crucial component of a strong democracy (Warren, 1999), and the ‘absence of trust paralyses collective action, democratic or otherwise’ (p. 17). This ‘truth decay’ diminishes public trust in the media, institutions and factual political discourse, all of which are prerequisites for a functioning democracy and for making informed political decisions. As Kavanagh and Rich (2018) argue, declining public trust in sources that were previously regarded as reliable providers of factual information is one of the trends that define ‘truth decay’. As truth decay increases and citizens can no longer make informed decisions, they may elect unqualified leaders, which can gradually erode the stability of society. Misinformation in political discourse can also reduce political accountability and compromise social unity (Jerit & Zhao, 2020; Vaccari & Chadwick, 2020a). According to the Pew Research Center (2020), some technology experts believe that the information and trust environment will worsen by 2030 because of deepfakes and other misinformation strategies. They worry that a drift towards greater disbelief and despair will create further challenges for candid, independent journalism.

At the same time, a degree of distrust also plays an important role in sustaining democracy. When citizens question information shared online and try to verify its accuracy, they are less likely to be affected by misinformation. A reasonable level of scepticism fosters critical thinking, making individuals less likely to accept uncritically whatever information circulates online. As Warren (1999) argues, ‘distrust is essential not only to democratic progress but also, we might think, to the healthy suspicion of power upon which the vitality of democracy depends’ (p. 310). Scepticism can prompt continuous evaluation and improvement of democratic processes and institutions.

Impact on Elections

Deepfakes increasingly threaten democratic practices because they can influence and undermine electoral processes. Deepfakes ‘are a powerful new tool for those who might want to (use) misinformation to influence an election’ (Ivascu & Iftemi, 2019). Malicious actors can sway public opinion and votes by generating fake and inaccurate video or audio files. As Pawelec (2022) argues, the fear that deepfakes will be used endangers the reliability of elections.

As documented by the German Konrad-Adenauer-Stiftung and the Counter Extremism Project, manipulated content has interfered with how people participate in elections and has incited civil unrest, illustrating how fake content can influence democracy (Farid & Schindler, 2020). The difficulty of distinguishing fakes from genuine content allows misinformation to spread before most social media users recognise its inaccuracies. This harms politicians’ reputations by generating false narratives about them. Ray (2021) argues that the number of voters who might be influenced by a deepfake cannot be determined with certainty. However, by targeting swing seats during elections, deepfakes could affect outcomes even if only a small number of voters (such as a hundred) were swayed. In addition, election-time misinformation campaigns that use deepfakes, which are potentially more persuasive than other forms of misinformation, can breed disbelief and could have a chilling effect on free and open political discourse. As Wilkerson (2021) asserts, outside the pornography industry, the most important risk posed by deepfakes is their capacity to damage democracy.

Privacy Violations

A significant ethical issue arises from the production and distribution of content that exploits an individual’s image or voice without their permission, resulting in a violation of personal boundaries and potentially causing significant harm. Unauthorised uses can range from altering someone’s appearance in a video, putting words in their mouth, swapping the face of one person with another or even more malicious applications such as defamation and blackmail. According to Albahar and Almalki (2019), the ‘breach of privacy is a major ethical concern associated with deepfake. Almost any digital human trace can be faked, which poses a threat to the privacy of individuals’ (p. 3247). The accessibility of deepfake technologies has exacerbated these concerns, because it is possible for almost anyone to create convincing fake videos or audio files and violate an individual’s privacy. This issue is also emphasised in a report published by the Pew Research Center (2023b), which indicates that new threats to human rights will arise as privacy becomes more difficult or even impossible to maintain.

The relationship between deepfakes and consent is problematic because it touches on individuals’ fundamental rights to autonomy and to control over their own images and voices. Stronger technological safeguards are needed to protect people’s rights and to ensure that the use of any videos, images and audio is consensual. Balancing such protections against innovation and freedom of expression is challenging; nevertheless, it is important to promote the responsible use of emerging technologies.

Social Polarisation

Spreading digital misinformation on social media exacerbates polarisation and divides users on these platforms (Del Vicario et al., 2016; Quattrociocchi et al., 2016; Zollo et al., 2017). As the Clinton deepfake case shows, political deepfakes can lead groups of users to form negative opinions about a political figure. A support system for the fake information then develops, drawing more attention to the fabricated content. Political deepfakes, as new and persuasive forms of misinformation, can thus intensify political polarisation and deepen divisions within communities.

Individuals tend to show bias when they evaluate political statements and discussions, dismissing views that contradict their opinions and favouring those that align with their existing beliefs (Nyhan & Reifler, 2010; Taber & Lodge, 2006). As Morris et al. (2020) note, ‘individuals are more likely to accept or reject misinformation based on whether it is consistent with their pre-existing partisan and ideological beliefs’, supporting the argument made by Nyhan and Reifler (2010). When the content of a deepfake aligns with individuals’ pre-existing beliefs, it entrenches their opinions and reinforces their ideological bubble. In contrast, when they encounter deepfakes that clash with their perspectives, they may reject them or become suspicious of opposing viewpoints. This dichotomy builds an environment of distrust and resentment between social groups and deepens societal divisions. According to the Pew Research Center (2023b), the spread of deepfakes and disinformation, as well as widening social and digital divides, are emerging threats. Political polarisation enabled by fabricated content weakens democratic values and obstructs civil, productive political debate and consensus-building.

Security Threats

Political deepfakes can threaten society’s security, particularly as they become more advanced. As Singh and Dhiman (2023) argue, deepfakes can pose security risks when used for cyberattacks, propaganda campaigns and intelligence activities, and they can therefore compromise national security. By fabricating the statements or actions of political leaders or military officials, well-crafted deepfakes can misrepresent them, create misunderstandings and lead to escalating conflicts or false narratives about national or international events. For instance, a deepfake video could falsely show a governor making comments about other leaders or provocative statements. Such a video could trigger confusion, influence foreign policy or create diplomatic crises. Langa (2021) argues,

Lawmakers have expressed concerns about the implications of the quick spread of technology enabling the creation of deepfakes, calling on the Director of National Intelligence to assess threats to national security and argue that such technology could be used to spread misinformation, exploit social division, and create political unrest. (p. 770)

The emerging risks of political deepfakes make it essential to reach a global consensus on a consistent and well-considered approach to regulating political deepfakes in order to protect democracy. Wilkerson (2021) claims that ‘suppression of deepfake technology may mean suppression of rights. But, an absence of regulation leaves the nation vulnerable to election tampering and political dismantlement’ (p. 432). In the United States, the Federal Trade Commission (FTC) has proposed new protections against AI-generated deepfakes (Torkington, 2024). Further policies would need to be crafted to set well-defined boundaries and create accountability for generating and distributing deepfakes, especially those generated to manipulate or cause harm.

According to Langa (2021), deepfakes could also provoke armed conflicts, endangering both domestic and international security. For instance, in 2019, a video of Gabon’s President, Ali Bongo Ondimba, that was widely suspected to be fake raised doubts about his health and led to an attempted coup (Chuming et al., 2022). This event shows how easily political deepfakes, or even the suspicion of them, can be used to discredit authorities. In addition, misuse of deepfake technology can undermine security systems that rely on video or audio authentication, since high-tech forgeries could deceive these systems. Political deepfakes therefore pose several threats to state stability and security worldwide, underscoring the need for practical solutions to limit their negative effects.

Mitigating the Negative Consequences of Deepfakes

Deepfake technology has created several significant problems that require prudent solutions. Stakeholders such as technology companies, researchers, social networking platforms and authorities should collaborate to define clear rules for the use of AI tools. For example, technology companies and social media policymakers could develop impartial, practical guidelines for deepfake generation that include penalties for creating malicious deepfakes. In addition, governments could provide more grants to support research on AI.

It is also necessary to increase public awareness of the different types of digital manipulation and forgery and how they are used to influence society. As Appel and Prietzel (2022) assert, people need to learn what deepfakes are and how to identify them. Educational programmes that improve citizens’ ability to evaluate media content are therefore important. For example, videos explaining how deepfakes are created could help viewers recognise the distinctive ways in which deepfakes entice them. As Doss et al. (2023) explain, without education, confusion in society will grow if people are constantly exposed to deepfakes. Furthermore, authorities and social media platforms can organise campaigns that reach diverse audiences to inform the public about the existence and risks of deepfakes. Individuals should also be more cautious and verify the accuracy of any visual or audio content before sharing it on any platform.

In addition, social media platforms such as YouTube, Instagram, X (formerly Twitter) and Facebook could commit more effort to detecting deepfakes, labelling them as fabricated content and removing them when they mislead the public. Most recently, Elon Musk, owner and executive chairman of X, shared a manipulated video that mimicked the voice of U.S. Vice President Kamala Harris, making her appear to say things she did not. Musk’s post has been viewed more than 130 million times and retweeted 242,000 times, and its only caption is ‘This is amazing’ with a laughing emoji. The sharing of this video demonstrates the need to establish and enforce social media policies that combat the spread of misinformation. Finally, deepfake creators should be required to label their content with digital watermarks so that viewers can identify deepfakes. Because AI technologies are developing rapidly, clear guidance is needed on how they may be used to generate digital content, and closer attention must be paid to how these machine-learning systems are trained.

Conclusion

The findings of this study make important contributions to the current literature and advance our knowledge of the social impact of political deepfakes. First, the study illustrates that individuals may struggle to identify AI-generated content, which makes fabricated content more likely to be trusted. Hameleers et al. (2024) reached a similar conclusion, arguing that audiences ‘have a hard time distinguishing a deepfake from a related authentic video’ and that ‘deepfakes are seen as relatively believable’ (p. 67). The analysis of comments on Clinton’s deepfake video shows that many viewers no longer trusted Governor DeSantis and would not consider voting for him if he won the race and became a presidential candidate. This finding supports the Pew Research Center’s (2020) report that an increase in misinformation undermines public trust.

Comments that individuals post on social networking sites do not necessarily indicate how they will vote, but they still reflect people’s reactions to what is shared on these platforms during political campaigns. This finding underlines the potential of deepfakes as persuasive and deceptive content capable of shaping citizens’ perceptions and affecting their political judgements. In addition, this study discusses the ethical concerns and threats raised by political deepfakes to explain their capacity to reshape the landscape of misinformation on social media. Deepfakes can reduce public trust in the media, invade the privacy of politicians, influence elections, aggravate political division and pose significant threats to society’s stability.

A limitation of this study is that it does not include comments on this deepfake video from other social media platforms. The deepfake video of Hillary Clinton was also shared on Twitter, and it would be valuable to compare comments from both platforms. However, the data used in this study were limited to Instagram, because Twitter’s new policies make collecting responses to tweets very difficult. Instagram may not be representative of other social networking sites, and this study would have been stronger had it been possible to include Twitter comments as well.

Advances in AI technologies and their ease of access have made new forms of content creation far simpler, elevating deepfake technologies to new heights. Some applications allow users to swap faces in videos with a single click (Thaware & Agnihotri, 2018). Consequently, more deepfakes can be generated and shared across our digital media ecosystem, increasing doubt about the authenticity of any visual content. Deepfakes will continue to present new issues as the technology develops further and its outputs become increasingly realistic. It is therefore important to develop adequate concepts to explain our interactions with deepfake technologies, prepare feasible plans to increase public awareness, and define new requirements and ethical guidelines for the responsible use of artificial intelligence and synthetic media.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author received no financial support for the research, authorship and/or publication of this article.