Abstract

This study investigates how journalists perceive and adopt artificial intelligence (AI) technologies in their professional routines, offering timely insights into a field undergoing rapid technological change. Drawing on 20 semi-structured interviews with journalists from a range of media organizations in India, this research situates its analysis within the Global South context to explore (1) how journalists make use of AI technologies in their newswork and (2) what factors shape journalists’ adoption behavior toward AI. Acknowledging that individual experiences and contextual factors shape technology adoption, the study adopts a qualitative approach to the Technology Acceptance Model 3 (TAM3) as a guiding framework. The findings show that AI is increasingly used in journalistic communication processes, with its adoption shaped by journalists’ perceptions of its usefulness and ease of use. By combining a well-established technology acceptance framework with qualitative insights, this study contributes to a better understanding of the multifaceted factors influencing AI adoption in journalism.

Introduction

Journalism has been continuously shaped and transformed by technological advancements. Among the most significant developments is the rise of artificial intelligence (AI) technologies, with generative AI (e.g., ChatGPT, Gemini) becoming an integral part of journalistic communication processes. AI is generally described as “a collection of ideas, technologies, and techniques” (Brennen et al., 2018: 1) that allow software or hardware systems to imitate human intelligence by replicating human performance and behaviors, typically for tasks that demand human cognitive abilities within specific areas of application, relying on AI techniques such as machine learning (European Commission, 2024). AI’s transformative potential has been increasingly recognized by media organizations worldwide (Newman and Cherubini, 2025). While AI offers significant potential for supporting core journalistic tasks, its adoption also raises important challenges and uncertainties that must be navigated (Amigo and Porlezza, 2025; Cools and Diakopoulos, 2024; Wu, 2024). Whether AI will become a lasting game-changer or merely a passing technological trend, however, largely depends on journalists’ perceptions of its relevance and impact, as the actual adoption of new technologies is rarely determined by technical functionality alone but is instead shaped by individual judgments and contextual influences (Venkatesh and Bala, 2008).

Despite growing scholarly interest in AI’s transformative role in journalism, research still lacks a comprehensive understanding of how journalists perceive these technologies and adopt them in their newswork (Sarısakaloğlu, 2025b). Additionally, scholars have emphasized the need to investigate diverse regional contexts to challenge dominant Western-centric discourses and foster more global knowledge production about the integration of AI in journalism (Sarısakaloğlu, 2025a). Although research on AI use in newsrooms is growing across Global South countries, research focusing specifically on journalists’ perceptions and adoption of AI in India remains notably scarce (Sarısakaloğlu, 2025b).

Investigating the factors that facilitate or hinder AI adoption is essential for understanding its impact on journalism and for designing workflows and tools that align with journalists’ needs, expectations, and professional standards. To explore these dynamics, the Technology Acceptance Model (TAM), and in particular its refined version, the Technology Acceptance Model 3 (TAM3), provides a valuable theoretical foundation (Venkatesh and Bala, 2008). TAM3 offers considerable explanatory power for predicting the intention to use and the adoption of innovative technologies, positing that perceived usefulness and perceived ease of use are the two principal determinants of individuals’ acceptance behaviors (Venkatesh and Bala, 2008). In this context, adoption refers to the actual behavior of journalists beginning to use AI technologies in their work, while acceptance captures the cognitive and attitudinal processes through which they assess AI’s usefulness and ease of use, shaping their intention to adopt it. Although the TAM has been widely employed across various fields to explain patterns of technology adoption, its application to journalism, especially in studies exploring how journalists perceive and integrate AI into their work, remains limited (Sarısakaloğlu, 2025b). Moreover, the few existing studies mainly employed quantitative approaches, such as surveys, which tend to examine general attitudes and behavioral intentions and offer only limited insight into contextual dimensions (e.g., organizational constraints, technical or infrastructural challenges) that further shape technology adoption in practice.

This study takes an initial step toward addressing this gap by developing a more context-sensitive exploration of how AI is adopted in journalistic practices. It (1) explores how journalists integrate AI into their newswork and (2) identifies the factors they perceive as facilitating or hindering adoption, with a focus on their assessments of the technologies’ usefulness and ease of use. Drawing on 20 semi-structured interviews with journalists in India, the study offers in-depth insights into AI adoption within journalism in a Global South context.

As such, the contribution of this study is threefold: First, it demonstrates that TAM3 can be fruitfully applied in journalism research as a guiding framework to qualitatively explain how journalists’ perceptions influence AI adoption. Second, it advances theoretical understanding of AI’s usefulness and ease of use in shaping the future trajectory of journalism. Third, by focusing on India, it expands the geographical scope of research and contributes to a more globally balanced perspective that moves beyond Western media environments.

The article is structured as follows: First, it provides insights into the use and perception of AI in journalism. It then illuminates how TAM3 can support an understanding of journalists’ perceptions and adoption of AI. The methodology section outlines the empirical approach, followed by the findings organized in alignment with the research questions. Finally, the article concludes with a discussion of key results, limitations, and future research directions.

Use and perception of AI technologies

AI is becoming increasingly integrated across all stages of the journalistic value chain, supporting media organizations worldwide in preproduction, production, postproduction, distribution, consumption, as well as in administrative and business workflows. Although the benefits and drawbacks of AI are widely acknowledged in journalism research, studies focusing specifically on journalists’ perceptions and their adoption of AI remain scarce (Oh and Jung, 2025; Sarısakaloğlu, 2025b). This makes it all the more important to investigate how they perceive new technologies, given that they are central actors in producing news content, and that their perspectives influence the adoption of technologies, inform their integration into newsroom routines, and shape the role such technologies play in journalistic practice (Boczkowski, 2004). Perception can be defined as the subjective process in which individuals organize, identify, interpret, and judge sensory information “to form a mental representation” (Schacter et al., 2011: 127).

Research has primarily examined how journalists perceive the role of AI in supporting or challenging existing newsroom practices. For instance, Schapals and Porlezza (2020) found that journalists often view AI as a supportive tool that assists in streamlining workflows. At the same time, research examining journalists’ perceptions of AI’s impact on their profession and their views on its ethical dimensions revealed that initial optimism is tempered by concerns about job security, the erosion of professional autonomy, and a perceived decline in journalism’s authority (Amigo and Porlezza, 2025; Olsen, 2023; Shin et al., 2025).

Møller et al. (2024) show that journalists perceive human capacities like emotional intelligence, ethical judgment, and creativity as essential strengths AI cannot replicate. Similarly, Cools and Diakopoulos (2024) analyzed journalists’ perceptions of generative AI and reported that while many acknowledged the efficiency gains AI can bring to content creation, they also raised concerns about accuracy, credibility, and algorithmic bias.

In the Global South, research on journalists’ perception of AI, conducted in countries such as Vietnam (Trang et al., 2024), Pakistan (Jamil, 2020), Jordan (Sharadga et al., 2022), and parts of Latin America (Soto-Sanfiel et al., 2022), points to additional layers of complexity surrounding AI implementation, including inadequate infrastructure, limited resources, and the lack of a clear governmental strategy for promoting AI. Jamil (2020) illustrates how Pakistani journalists face compounded difficulties due to limited access to training and low levels of AI literacy. These findings suggest that journalists’ views of AI are not influenced only by technological affordances but also by broader contextual factors in which they operate.

Overall, existing research offers insights into journalists’ perceptions of AI but often focuses on isolated aspects and lacks a comprehensive view. How journalists in India experience and respond to AI integration in their professional routines remains underexplored. A notable contribution is the study by Rahi et al. (2024), who identify awareness, training, usage, and integration as key factors in AI adoption through exploratory factor analysis. While their study provides valuable quantitative findings, it does not address how journalists navigate AI in daily practice, a gap that calls for qualitative inquiry given India’s fast-evolving digital media environment and growing involvement in AI-driven innovation.

Despite these trends, a significant gap remains in understanding how journalists perceive AI’s usefulness and ease of use, and how personal and contextual factors shape its adoption. Gaining insight into these perspectives is essential to understanding how emerging technologies reshape journalistic practices in one of the world’s largest and most dynamic media markets.

Understanding AI adoption through the lens of the Technology Acceptance Model

To understand how individuals perceive and adopt new technologies, a wide range of theoretical models has been developed (e.g., Rogers, 2003). Among them, TAM is widely recognized as one of the most influential and commonly applied frameworks for studying how individuals adopt emerging technologies (Venkatesh and Bala, 2008). TAM explains technology adoption by identifying two key predictors of individuals’ intention to accept or reject new technologies: perceived usefulness (PU), defined as “the degree to which a person believes that using a particular system would enhance his or her job performance” within an organizational context, and perceived ease of use (PEOU), referring to “the degree to which a person believes that using a particular system would be free of effort” (Davis, 1989: 320). Accordingly, journalists may be more likely to adopt AI in their work if they perceive it as useful for improving their work (PU) and easy to use without requiring significant effort or training (PEOU).

Over time, the original TAM has been expanded by integrating additional variables to account for contextual and individual differences (Lim and Zhang, 2022). An important extension of the TAM was introduced with TAM2 (Venkatesh and Davis, 2000) and later with TAM3 (Venkatesh and Bala, 2008) to enhance the model’s explanatory power. While the original model focused on PU and PEOU as the primary predictors of technology adoption, TAM2 positioned PU as the key determinant of intention and PEOU as an influencing factor of PU, extending the model by incorporating social and cognitive instrumental variables, such as subjective norm, image, job relevance, output quality, and result demonstrability, to emphasize that individuals’ intentions to adopt a technology are shaped not only by their perceptions of its usefulness and ease of use, but also by how others around them perceive the technology and how relevant the technology is to their work tasks (Venkatesh and Davis, 2000). Moreover, voluntariness and experience of use were incorporated as moderating variables, acknowledging that the strength of these relationships can vary depending on whether system use is mandatory or voluntary, and on the individual’s level of experience with the technology. Building on this, TAM3 further advanced the model by adding perceived computer self-efficacy, external control, computer anxiety, computer playfulness, perceived enjoyment, and objective usability as key determinants of PEOU to better explain how individuals form ease-of-use perceptions (Venkatesh and Bala, 2008).

Despite its continued development and widespread use, TAM has been criticized for its limited adaptability across technologies and user groups, and for relying heavily on quantitative methods that often overlook the contextual, social, and emotional factors influencing technology adoption (Bagozzi, 2007).

In journalism, the TAM has been employed to examine various forms of technology adoption. However, its use in studying AI adoption among journalists remains limited and is largely confined to a few quantitative studies (Sarısakaloğlu, 2025b). A notable example is Soto-Sanfiel et al.’s (2022) quantitative study on Latin American journalists’ attitudes toward AI. A qualitative exploration grounded in the TAM is still lacking, despite its suitability for capturing the context-dependent ways in which journalists engage with AI and for overcoming the limitations of its predominantly quantitative approach.

As AI adoption occurs within daily routines, this study uses TAM3 as a guiding framework to examine how journalists interpret and assess its opportunities and challenges in newswork. Since journalists are more likely to form judgments about AI’s usefulness through experiences, it is essential to move beyond abstract behavioral intentions and investigate their actual practices (Venkatesh and Bala, 2008). To establish a grounded understanding of how AI is concretely applied and implemented in newsrooms, the study begins by asking:

RQ1: How do journalists working in Indian newsrooms make use of AI technologies in their work?

Beyond synthesizing their experiences with AI, it is crucial to understand what shapes journalists’ engagement with these technologies. Drawing on TAM3’s determinants of PU and PEOU, this study explores facilitating conditions and potential barriers to AI adoption by examining:

RQ2: What factors derived from PU and PEOU shape the adoption behavior toward AI technologies among journalists in India?

Method and data

To address the research questions, this study employed a qualitative approach using semi-structured interviews, which ensured consistency across key topics while allowing interviewees to express their perspectives in their own terms and the researcher to follow relevant leads as they emerged in conversation (Bryman, 2016).

Sampling method, interviewees, and data collection

This study employed a combination of sampling strategies to recruit journalists who met the purposeful criterion of having experience with AI in their professional work. Initially, a purposive sampling approach was applied by identifying media organizations known for AI experimentation and contacting journalists affiliated with them who met the selection criteria. Given the emerging nature of the phenomenon and the challenge of reaching suitable journalists, additional participants were recruited through convenience sampling by drawing on accessible contacts and snowball sampling. This combined approach allowed for strategic relevance and practical accessibility, ensuring that all interviewees could offer informed, experience-based insights.

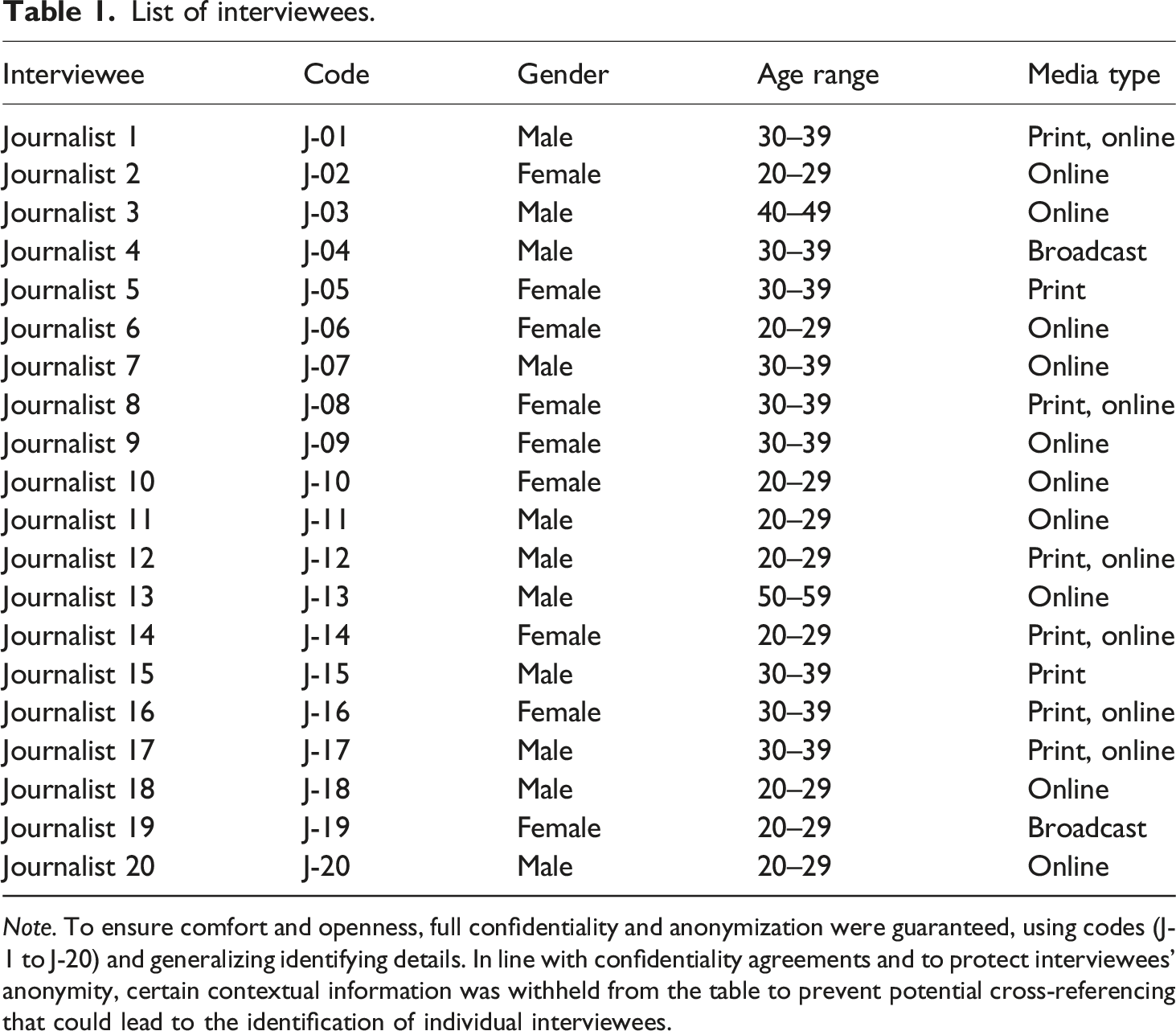

Table 1. List of interviewees.

The number of interviews was determined by the principle of thematic saturation, aiming to reach the point at which additional interviews no longer yielded new insights. This decision was informed by Guest et al. (2006), who found that data saturation often occurs within the first 12 interviews, and by Warren (2001), who suggests that 20 participants are sufficient to capture diverse perspectives. Aligned with these benchmarks and the study’s scope, 20 interviews were deemed sufficient to ensure valid and thematically rich findings.

The interviews were conducted via online video conferencing platforms (Google Meet, Webex, or Zoom) between December 2024 and February 2025.

The final sample comprises 55% male and 45% female journalists, with an average age of 33 years. Notably, only two interviewees are over 40 years old. Several invited journalists in this age group reported not yet using AI in their work. While this introduces a potential bias in terms of age distribution, it may also reflect a broader generational trend in journalism, where younger professionals are more likely to engage with technological change as part of their everyday practice. The interviewees hold either a bachelor’s (

All interviews were conducted in English. The duration of the interviews ranged from 30 to 88 minutes. The interviews were recorded and transcribed verbatim using OpenAI’s Whisper model.

Interview guide and data analysis

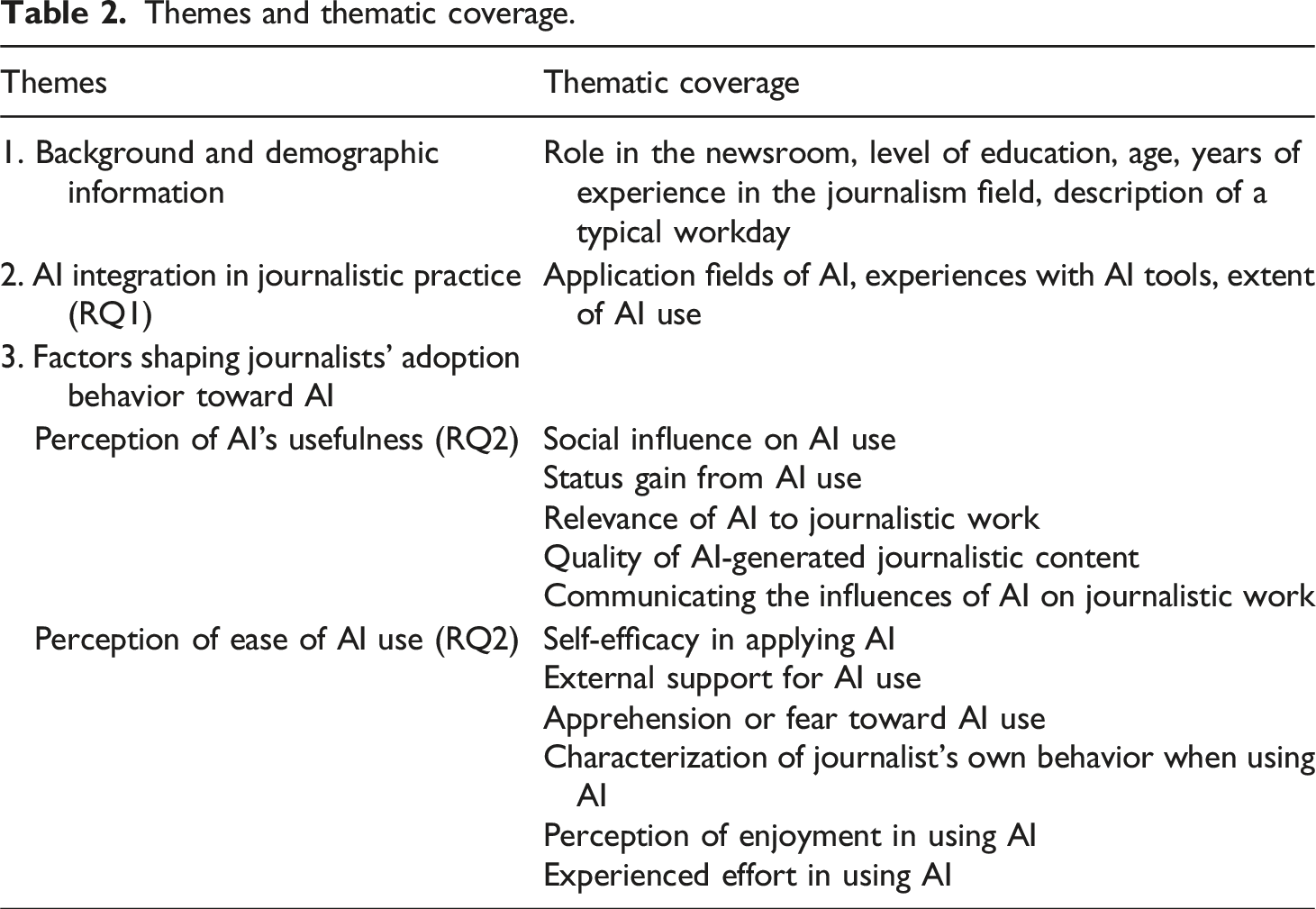

Table 2. Themes and thematic coverage.

To ensure analytical rigor, the interview data were analyzed using a two-stage coding process combining deductive and inductive approaches. Main categories were first derived deductively from the study’s theoretical framework, then refined inductively following Mayring’s (2014) summarizing qualitative content analysis method. This process facilitated the identification of emerging patterns and the development of new categories grounded in the data. The analysis was iterative, involving repeated scrutiny to better reflect the depth and complexity of the data and increase the reliability of the coding process. To support the analysis, the software MAXQDA was used, guided by Kuckartz’s (2014) approach to computer-assisted content analysis.

Results

Integration of AI technologies in journalistic practices (RQ1)

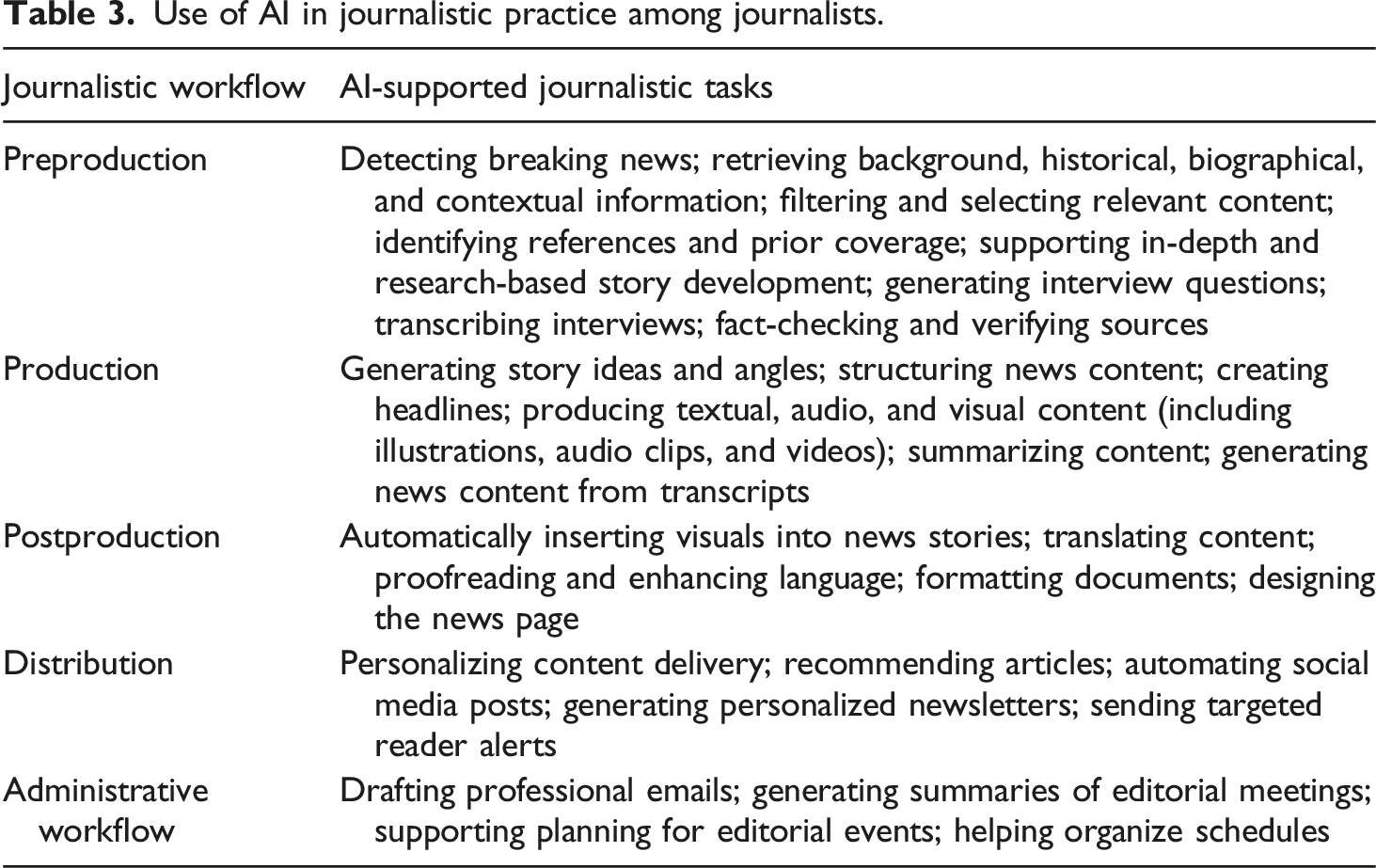

Table 3. Use of AI in journalistic practice among journalists.

The journalists tend to rely most on generative AI to streamline core editorial functions. They reported widespread use of ChatGPT (14 out of 20), followed by Gemini, Microsoft Copilot, and Grammarly. Additionally, two journalists noted the use of an “inbuilt AI tool provided by the organization” (J-01). Other tools, such as Canva, Dataminr, DALL·E, Fact Check Explorer, Flourish, Google Journalist Studio, Google Trends, InVideo, Meta AI, Microsoft Bing, PDF GPT, Pinpoint, and PlagCheck, are used more selectively.

Overall, the findings indicate that AI is embedded in the daily routines of more than half of the interviewed journalists (11 out of 20). As one journalist noted, “AI has become a part of my everyday life” (J-06). Others described its constant presence in their workday, with one stating, “Every time I’m at my desk, ChatGPT is always open on my system” (J-02). In contrast, seven out of 20 journalists reported using AI on a more occasional or task-specific basis. Notably, many of them were aged 30 to 59 and had more professional experience, which may reflect established routines rooted in traditional practices that make them more selective in adopting new technologies. As one journalist explained, “It is not a routine or a habitual part of the work process, but it helps to use AI” (J-07). Among the seven journalists who engage in both desk-based and field reporting, three stated that AI is not part of their routine, with one strongly emphasizing, “A field reporter and going to the field cannot be replaced by any AI” (J-13).

These findings suggest that, although some interviewees use AI only occasionally, the majority perceive it as a technology increasingly adopted in their professional routines. Many of them use various AI tools across the entire journalistic value chain, indicating a transformative shift in their field.

Perceived usefulness as a driver shaping journalists’ adoption of AI (RQ2)

To understand the factors influencing the adoption of AI, journalists were encouraged to reflect on their experiences and perceptions of how useful AI is in their work (see Table 2). The findings suggest that social influence significantly shapes journalists’ adoption of AI, as their perceptions of its value are partly informed by interactions with colleagues and the broader organizational culture. Journalists highlighted a shared, informal openness within newsrooms that encourages experimentation and gradual AI integration. As one journalist put it, “everybody promotes the incorporation of AI” (J-12), while others observed that their colleagues are generally supportive of its use. According to the journalists, this supportive environment helps to ease initial doubts, as colleagues “are helping change the perspective” (J-12). Such openness has led some journalists to overcome early hesitations, making AI a part of everyday practice. Observing how colleagues apply AI in their work has contributed to a more constructive view of its usefulness. In some cases, open conversations and shared experiences were identified as key factors in shifting attitudes: “consciously and subconsciously, there is an influence (…); their opinions do shape my perspective in some ways” (J-06). Tool sharing and collaborative experimentation further reinforce AI’s perceived benefits, especially for improving workflow. Only a few journalists emphasized that their decision to adopt AI is driven mainly by personal judgment and firsthand experience, rather than by the organization’s stance or colleagues’ behavior.

When asked about the perceived impact of AI on their professional standing within media organizations, journalists highlighted that its use can enhance their status, strengthening the belief that AI is a useful tool for advancing their reputation. AI adoption is associated with greater “status” (J-18), professional “value” (J-11), and “prestige” (J-20). One journalist stated that “those who use AI have more prestige than those who do not, because they are very up to date and tech-savvy” (J-02). In this context, AI use is seen to “give you more importance” (J-10), as colleagues proficient in AI are regarded as more efficient and professionally competent. In particular, journalists noted that those who use AI are perceived as working “smartly” and gaining a visible edge over colleagues (J-05). In contrast, others stressed that professional respect is grounded in human skill, authenticity, and journalistic depth, not tool proficiency. As such, AI is regarded simply as a tool rather than a status-enhancing “asset” (J-03).

Regarding the relevance of AI to journalistic work, all interviewed journalists consider AI applicable to their professional tasks, with one expressing, “it has become an important part of my job” (J-10). AI is broadly viewed as a supportive tool that enhances, but does not replace, core journalistic functions. Many journalists (15 out of 20) interpret AI as relevant when it streamlines repetitive and time-consuming tasks and offers tangible benefits across all stages of content production and distribution (see Table 3). AI was described as a “massive advantage” and even “a must-have” (J-03), with others emphasizing its ability to “reduce the workload considerably” (J-07), “help you wind up your work” (J-05), “accelerate your production efforts” (J-04), and “boost productivity and efficiency” (J-06). One journalist explained, “The proofreading time that it saves from my end (…) is very efficient to me” (J-02), while another reported that using AI to prepare interview questions “saves quite a lot of time” (J-11). AI is consistently portrayed as a tool that optimizes workflows, with one journalist emphasizing that “AI makes the work easy” and “AI makes us work easier” (J-01). Several journalists stressed AI’s role in overcoming language-related challenges in India’s multilingual environment. “It is making work faster, better, error-less,” one noted, emphasizing how it eliminates “the little mistakes that we do while writing” (J-16). Moreover, journalists emphasized that automating routine tasks frees up cognitive and emotional energy for more meaningful or creative aspects of their work, such as in-depth reporting. AI was also described as functioning like a “second brain” during periods of “writer’s block” (J-18), offering helpful suggestions, phrasing options, and structural support.

Despite acknowledging AI’s usefulness in supporting their daily work, journalists stated that the quality of AI-generated outputs is a decisive factor shaping their willingness to adopt AI more extensively. A central concern lies in the perceived creative and emotional limitations of AI. Many journalists highlighted that AI-generated writing lacks originality, cultural nuance, narrative variability, and human emotion, elements considered fundamental to high-quality journalism. Descriptions of AI output as “bland” (J-14), “formulaic” (J-14), “dry” (J-04), “monotonous” (J-05), “robotic” (J-18) or overly similar across users (J-09) reflect dissatisfaction with its inability to generate content that meets the narrative depth, variation, and creativity expected in professional storytelling. Journalists emphasized the need for empathy and human presence in storytelling, noting, “You have to humanize the stories (…) you are telling while presenting the facts” (J-06).

Concerns about factual accuracy were equally pronounced. Journalists flagged hallucinations, fabricated content, and lack of contextual awareness as significant drawbacks. As one journalist remarked, “My biggest fear and the biggest challenge will come from the hallucination they do” (J-19). While AI is seen as useful for basic editing tasks, such as grammar correction or sentence restructuring, its unreliability in fact-checking limits its application for more critical journalistic functions. Journalists mentioned that AI can even increase their workload, as the algorithms may often be insufficient for effective source verification. Additional challenges include a lack of transparency, biases in AI-generated content, and other ethical risks associated with visual stereotyping and skewed training data.

Finally, nine journalists expressed confidence in demonstrating and communicating the outcomes of AI use to colleagues, which may reinforce their perception of its value and support adoption in newsroom practices. They described guiding less experienced colleagues by discussing potential benefits and risks, discouraging overreliance, and pointing out policy issues, demonstrating a form of informal result demonstrability through peer mentoring. However, others reported reluctance or an inability to advocate for AI. This hesitation was often linked to the absence of formal newsroom policies, lingering professional stigma, or personal uncertainty about AI’s broader applicability.

Overall, many interviewed Indian journalists perceive AI as a useful and strategically relevant technology, particularly for streamlining routine tasks, boosting productivity, and enhancing professional status. While concerns about quality and ethics temper this enthusiasm, they appear not to outweigh the perceived value of AI for supportive functions. Informal peer mentoring and openness to experimentation further suggest a shift toward sustained adoption, indicating that AI may represent a potential game-changing development in their newswork.

Ease of use as a condition for AI adoption in newsrooms (RQ2)

In addition to exploring the perceived usefulness of AI in journalism, interviewees were asked to share their experiences with using AI tools, including how accessible they find them and how much effort is involved in their effective use.

The findings suggest that most journalists perceive AI tools as easy to use, which supports their integration into newswork. ChatGPT and Gemini are frequently described as user-friendly, with their chat-based interfaces making them more accessible and interactive. As one journalist noted, “It is very easy to use. It’s very human-like. You ask questions and you get answers. (…) You don’t have to use any level of coding” (J-04). While many journalists assessed their AI proficiency as moderate, noting competence in basic functions but recognizing the need for further learning, a smaller group considered themselves closer to proficient. Others characterized their proficiency as “immature” (J-17) or described themselves as being in a “learning phase” (J-20), expressing a willingness to develop their skills further.

Prompt engineering emerged as a key skill in 16 interviews. Journalists emphasized that the clarity and structure of prompts directly determine the quality of AI-generated output, as one stated, “the better your prompt (…), the better (…) the quality of work that ChatGPT produces” (J-07). Another remarked, “Prompt engineering, that’s the most important thing. If you give the right prompt, it’s only going to make your job easier (…) and help you use AI more effectively” (J-06). Prompt engineering was widely described as a “prerequisite skill” (J-20), underlining its growing perceived value in meeting the evolving technological demands of journalistic work. Interestingly, journalists acknowledged that prompting was largely learned informally, through trial and error, peer exchange, or self-directed experimentation, rather than formal training. Others called for more structured learning opportunities, stressing the importance of tool-specific training to strengthen prompt design skills.

Moreover, journalists highlighted the importance of critical thinking skills, fact-checking, and content verification as essential safeguards against misinformation, with several expressing interest in acquiring data analysis skills to strengthen investigative reporting. Many outlined the need for foundational AI literacy to understand how to ethically and effectively engage with AI.

However, journalists’ ability to use AI effectively depends not only on their motivation to learn but also on the level of organizational support available to them. Journalists expressed that a lack of formal training or dedicated workshops in their newsrooms undermines their confidence, restricts opportunities for experimentation, and hinders deeper integration. In contrast, those with access to training reported greater confidence and adaptability in using AI, underscoring how organizational investment enhances perceived ease of use and encourages broader adoption. Another key barrier to effective AI adoption is the widespread absence of formal organizational guidelines and ethical frameworks. Journalists described a lack of clear standards for responsible AI use, often resulting in reliance on personal judgment and cautious or discreet use. This gap is partly attributed to insufficient awareness among newsroom leadership. Journalists emphasized the urgent need for leadership to take initiative, calling for stronger regulation. They pointed to inconsistencies across AI tools, stressing that a lack of standardization and oversight weakens their reliability. Developers were urged to improve testing, and media organizations to implement clearer governance for responsible and consistent use. This lack of organizational support contrasts with newsrooms where clear internal policies and training are in place, where formalized standards enable more confident, transparent, and consistent use of AI, ultimately supporting its broader adoption. Some organizations address ethical concerns by imposing outright bans on AI use, which removes the need for regulation but limits opportunities for experimentation and hinders AI adoption.

While most journalists reported no major infrastructural barriers to AI use, citing stable internet access and sufficient technical resources as key enablers of smooth integration, a few pointed to occasional connectivity issues as a constraint. Slow or unreliable internet in certain regions was noted as a barrier, as it affected the responsiveness of AI tools and limited their consistent, effective use.

Technical barriers to accessing AI also appear to pose few restrictions, as many journalists described the tools as readily available, functional, and compatible with basic devices, especially in urban settings. However, several journalists identified high subscription fees as a major obstacle, with one stating, “When companies (…) reduce their prices, people will prefer to use it. Otherwise, the introduction of AI will be very slow in India” (J-11). Others pointed to outdated hardware, limited access to advanced AI for data analysis, and the lack of locally relevant data as critical issues. Journalists noted that AI tools are often trained on datasets primarily sourced from Western contexts. As one journalist explained, “We have enough data that is coming out of Europe, because there are so many consulting firms that are already working on that. But there’s not enough in our region” (J-06).

Many expressed concern that AI poses a threat to job security, particularly in roles involving repetitive tasks, seeing it as a potential driver of job displacement. Some warned against overdependence, suggesting it could erode creativity, critical thinking, and professional autonomy. As one journalist reflected, “the more I depend on AI for writing or creating anything, the more I (…) give AI the power to remove me (…) from the job I’m doing” (J-02). However, several journalists maintained that AI poses no threat to core aspects of journalism, such as field reporting or investigative work, which they believe still require human judgment, empathy, and ethical reasoning.

Journalists described their behavior when using AI as a combination of excitement, curiosity, and cautious optimism. Some journalists expressed enthusiasm and interest in exploring AI’s potential beyond basic tasks, whereas others took a more pragmatic or ambivalent stance, recognizing its usefulness while remaining mindful of ethical issues, risks of overreliance, and limitations in content quality.

In terms of personal enjoyment, journalists’ perceptions of AI use reflect intrinsic motivation and practical utility. Although AI is not typically perceived as inherently enjoyable, many journalists reported satisfaction from its ability to simplify repetitive tasks and increase efficiency. This enjoyment was often indirect, stemming from reduced effort and improved workflow rather than from interacting with the tool itself. While this task-related satisfaction may support AI adoption, several journalists also expressed emotional discomfort, pointing to fears of job displacement, which limit overall satisfaction and long-term engagement with AI tools.

When asked about the overall effort involved in using AI, journalists generally characterized it as easy to use and low-effort, highlighting intuitive interfaces and dependable access as key factors that facilitate smooth integration into daily tasks. Free versions of generative AI, particularly ChatGPT, were widely considered sufficient for routine tasks. Some, however, pointed out that advanced features in paid versions, such as memory retention, significantly improve efficiency in complex or iterative tasks by reducing repetitive input. Although cost was noted as a consideration, journalists who use AI regularly often viewed the investment as worthwhile, with one remarking, “It’s cheaper than a dinner outside” (J-03). These findings indicate a high level of objective usability, strengthening perceived ease of use and supporting broader AI adoption in newswork.

Taken together, journalists widely describe AI as easy to use and, despite existing skill gaps, show a strong willingness to learn. While challenges such as unclear newsroom policies, limited organizational support, ethical concerns, and a lack of locally relevant training data pose some barriers, journalists’ perceptions reflect intrinsic motivation, satisfaction, and a clear practical utility. These patterns suggest that AI is driving a lasting shift in their professional routines.

Discussion and conclusion

Based on a qualitative analysis of 20 semi-structured interviews with journalists from Indian media organizations, this study sheds light on journalists’ experiences with AI and the key factors influencing their adoption behavior, interpreted through the lens of TAM3.

Responding to

In response to

However, for more advanced and responsible use, the results reinforce earlier calls to improve AI literacy among journalists (Deuze and Beckett, 2022). Skills like prompt engineering enhance the quality of AI-generated content, while competencies in data analysis, fact-checking, and verification are critical for investigative work and combating misinformation. Strengthening these capabilities would further promote more ethical and critical engagement with AI (Olsen, 2023) and lead to greater adoption. To fully realize AI’s potential, media organizations must actively support journalists in developing algorithmic capital through training, resources, and guidance (Sarısakaloğlu, 2025a); without such support, skills remain uneven and opportunities for innovation are missed.

The findings indicate that AI uptake is not solely an individual decision but is also influenced by the broader cultural environment within newsrooms. In line with previous research on TAM3 (Venkatesh and Bala, 2008), this study shows that a culture characterized by openness, informal knowledge sharing, and peer-driven experimentation plays a critical role in facilitating AI adoption. This points to the importance of fostering internal learning environments where shared experiences and visible results help build collective confidence in using AI.

Notably, journalists in India navigate technological change in ways that reflect practical adaptation and symbolic positioning, as AI is seen as a tool to support journalistic work and as a marker of professional capital and digital prestige. Journalists who adopt AI are often regarded as forward-thinking and tech-savvy, which can enhance their standing among peers and within their organizations (Venkatesh and Bala, 2008). This link between AI use and professional status appears to function as a social incentive, especially in competitive newsroom settings where adaptability and digital fluency are highly valued. This dynamic offers insight into how AI adoption reflects journalists’ efforts to maintain a coherent professional identity rooted in shared values and norms, revealing journalism as both a profession and an ideology (Deuze, 2005). Ultimately, this raises fundamental questions about what constitutes a journalist in the AI era or what characterizes an AI-savvy journalist (Sarısakaloğlu, 2025a).

In line with existing studies, the findings show that perceptions of AI as a threat to job security and professional autonomy remain a significant barrier to adoption (Amigo and Porlezza, 2025). This concern becomes even more pronounced when viewed through the human–machine communication approach, which highlights how AI is increasingly perceived as an interactive, communicative actor (Guzman, 2019). Such a perception may intensify anxieties about diminished agency and blurred boundaries between human and machine roles. These hybrid forms of newswork challenge traditional journalistic role conceptions (Hanitzsch and Vos, 2017) and contribute to fractured responsibilities and a partial fading of the classic understanding of journalism (Napoli, 2014; Sarısakaloğlu and Löffelholz, 2025). In this context, boundary work to redefine the domain of journalism becomes essential (Carlson and Lewis, 2015) to determine the respective roles of human and nonhuman actors, safeguard accountability, and protect the profession’s legitimacy.

Moreover, a major barrier to AI adoption stems from concerns about content quality. As observed in other parts of the world (e.g., Olsen, 2021), Indian journalists generally criticized AI outputs as formulaic, linguistically limited, and lacking empathy or cultural nuance, which they view as essential to high-quality journalism. Although generative AI has improved linguistic fluency, shortcomings such as hallucinations, factual inaccuracies, opaque decision-making, algorithmic bias, visual stereotyping, and a lack of transparency persist, confirming earlier research (Diakopoulos et al., 2024). This prompts reflections about how traditional quality criteria in journalism can be safeguarded and what defines quality in AI-driven journalism (Sarısakaloğlu, 2022; Sarısakaloğlu and Löffelholz, 2025).

These challenges are amplified by the lack of formal organizational guidelines and ethical frameworks, which limit journalists’ confidence and hinder AI’s responsible use in Indian newsrooms. However, the findings show that journalists are increasingly aware of these issues and are engaging with AI more cautiously and critically. To support responsible adoption, media organizations in India must provide clearer regulatory guidance and embed journalistic standards into AI systems. Participatory approaches, involving journalists in algorithm design, offer a promising path to ensure ethical integrity and align technological innovation with journalistic values (Sarısakaloğlu, 2024).

In contrast to studies on AI use in newsrooms across the Global South (Beckett and Yaseen, 2023), such as in Pakistan (Jamil, 2020) or Latin American countries (Soto-Sanfiel et al., 2022), infrastructural barriers do not seem to significantly hinder AI adoption in India. Technical barriers were also minimal, possibly due to the free availability of widely used tools like ChatGPT or Gemini.

In addition, by bringing the Indian context into view, the study contributes to global debates by showing that AI tools developed without sufficient regional data may fall short in supporting contextually grounded journalism, thereby highlighting the importance of access to locally relevant datasets. Journalists observed that many AI systems are primarily trained on data originating from Western contexts, which reduces their relevance and applicability to the needs of Indian newsrooms.

Overall, the widespread and routine use of AI, along with journalists’ recognition of its potential value and their awareness of the associated challenges, points to growing acceptance and a continued path toward adoption. These findings suggest that AI is not a passing tech fad: it is not rarely used, nor is it generally perceived as difficult to integrate or low in usefulness, perceptions that would typically lead to skepticism. Rather than a temporary phenomenon or short-term experimentation, the integration of AI into newsroom routines reflects a strategic response to evolving professional demands and is further reinforced by significant national investment in AI (United Nations, 2025). When AI is frequently used and regarded as easy and effective in enhancing tasks, it is more likely to be adopted as a game-changer, that is, as a technology with the potential to fundamentally transform journalistic routines, roles, or newsroom structures in a lasting way.

Like all research, this study has certain limitations. Its qualitative approach, based on interviews with a limited sample of journalists, captured individual experiences and perceptions that should be understood as context-specific and may not be directly transferable to all journalistic ecosystems or countries. This limitation paves the way for future research across diverse organizational and cultural environments to validate and expand upon the findings, for instance, by examining how newsroom governance structures shape the perception and adoption of AI among journalists. Moreover, the study does not provide deeper insights into the organizational contexts of the journalists; future research should explore these contexts to understand how organizational culture shapes journalists’ perceptions and uses of AI.

The study qualitatively adapted TAM3, which was originally developed for quantitative research. While this approach enabled richer, context-sensitive insights, it also introduced limitations, as the model’s predefined constructs and causal assumptions are more difficult to trace in interview data. A mixed-methods approach could complement this by combining qualitative insights with standardized measures. Moreover, a follow-up focus group involving academic experts and journalists could have further enriched the findings through collective reflection and discussion. Longitudinal research is essential to trace how journalists’ perceptions, routines, and skillsets evolve with ongoing AI integration, and to assess whether initial enthusiasm or skepticism persists, intensifies, or shifts as technologies advance and institutional policies develop, ensuring that academic insights remain aligned with technological change.

Despite its limitations, this study offers guidance for newsroom managers, policymakers, and AI developers by highlighting the key factors that facilitate or hinder AI adoption in journalism. Only by considering these influences can AI adoption in journalism move forward in a responsible, meaningful, and context-sensitive way.

Supplemental Material

Supplemental material - Artificial intelligence as a game-changer or passing tech fad in journalism? A qualitative study on journalists’ perceptions and technology adoption in a Global South country

Supplemental material for Artificial intelligence as a game-changer or passing tech fad in journalism? A qualitative study on journalists’ perceptions and technology adoption in a Global South country by Aynur Sarısakaloğlu in Journalism

Footnotes

Acknowledgements

I would like to express my sincere gratitude to Professor Martin Löffelholz for his invaluable feedback on this manuscript. I am also thankful to Sanchit Dobhal, Fatema Ismail Khan, Mansoor Mahmood, Udisha Misri, Sakshi Panda, Gregorius William Suryaputra, and Valeriia Vilkina (listed in alphabetical order) for their assistance in conducting the interviews. Finally, I am sincerely grateful to the journalists for their participation in this study and their valuable insights.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Ethical consideration

This study was approved by the Institutional Ethics Review Committee of Technische Universität Ilmenau on November 15, 2024.

Supplemental Material

Supplemental material for this article is available online.
