Abstract

In Cope and Kalantzis's recent discussion in this journal concerning the organization and capabilities of Generative AI, they argue that such systems exhibit critical limitations in their ability to engage with meaning and multimodality because of an alleged deep foundation in digitized ‘written texts’. In response to this, I explain why the core components of Generative AI are inherently multimodal and are not restricted to written language, or any specific modality, at all. This supports their use in an ever broadening range of multimodal analysis contexts, of which I give several examples. I then point to a more general semiotic position from which to critically reflect on their capabilities in the future.

Introduction

Even for those of us who have worked in and with AI for many years (cf. Bateman 1985), the capabilities now being exhibited by Generative AI technologies came as a considerable surprise. As one standard ‘benchmark’ – i.e., an agreed set of challenges of more or less complex problems with specified ‘correct’ answers – after the other was performed more effectively, in many cases far more effectively, by Generative AI than by previous computational techniques, it was evident that a landslide was underway. Regardless of the unfortunately also present sales hype, this is technology that is truly ‘disruptive’ in every sense of the word and we are still only beginning to gauge something of its scale. Nevertheless there remain, even in technical circles, substantial disagreements concerning just what this technology is doing and what it is not.

In Bill Cope and Mary Kalantzis’ recent contribution to this area of discussion in this journal (Cope and Kalantzis, 2024, hereafter C&K), they approach the issue applying their own view of a ‘transpositional grammar’ developed for several years in the context of multimodal literacies, or ‘multiliteracies’ (e.g., Cope and Kalantzis, 2009; New London Group, 1996). More specifically, they apply transpositional grammar to computation, arguing that AI, now including Generative AI, makes certain things possible but is also strictly restricted in capabilities due to the inherent requirement that any sense-making proceeds via transposition into, and then out of, ‘binary computing’. Although making some useful points along the way, their tone is highly polemic throughout, bolstered by a variety of somewhat questionable claims concerning the nature of Generative AI and how it works.

My main focus here, therefore, as someone who is also equally anchored in semiotics, and in particular in multimodal semiotics (Bateman, 2018), will be to critically engage with C&K’s assessments of Generative AI concerning multimodality. I will discuss how Generative AI is intrinsically multimodal from the ground up, using several examples from our own and others’ current work involving multimodal data of various kinds. Although Generative AI systems can indeed exhibit several of the limitations that C&K discuss, the reasons for this are only tangentially related to those that C&K raise. To plan ahead and avoid being surprised as these limitations are successively removed, a more robust foundation is necessary drawing on appropriate views from both semiotics and computation.

As Gee & Zhang note in their own reflection on C&K, “It is important to realize that Generative AIs like ChatGPT-4 … have their own views on C&K’s claims. This is surely a unique situation in history. When we critique the design of a car, book, machine, or movie, we do not expect them to be able to reply.” (Gee and Zhang, 2024: 153) This is indeed unique, but it also points to a critical lack in current evaluations of what Generative AI is doing. Arguably for the first time in human history, we are now confronted with artificial systems that demand a high degree of semiotic sophistication in our treatments of them. The reason for this is slightly technical, but will nevertheless be sketched briefly below in non-technical terms because it is so crucial for understanding why any description of these systems as ‘just doing statistics’, on the one hand, or (inconsistently) ‘just operating on 1s and 0s’, on the other, falls short.

The path followed in the rest of this paper will consequently be as follows. First, several examples of the use of Generative AI in multimodal research settings will be given, suggesting something of the range of capabilities that are now emerging and how this is already contributing essentially to everyday research practice and methods. This will anchor the discussion primarily in use cases and applications for the study of multimodality. Second, I will explain very briefly how the specific capabilities relevant for multimodality come about in Generative AI and how these need to be seen as intrinsic properties of the technology. And third, I recommend a particular style of reflection on the capabilities of Generative AI that is better suited to understanding their nature(s).

Generative AI in multimodality research: The practice

Generative AI is already making an essential contribution in multimodality research that is now poised to explode in breadth and capability. Many tasks of multimodal analysis that would have been unthinkable even 5 years ago are now commonplace and readily usable by researchers, often with little technical investment. For present purposes, I will not consider any uses of Generative AI that are not motivated by multimodality research, for example for producing content, generating realistic or stylized pictures and videos from textual descriptions, writing poems, or similar, where considerations of accuracy or veracity are often less relevant. Many of these capabilities can be relevant in pedagogical contexts, but this is not my concern here. In multimodality research, we are typically engaged more in the task of trying to document and then explain how semiotically diverse expressive resources may be combined for particular communicative (including aesthetic) effects and how this might be more or less successful in particular contexts of use.

In more abstract terms, this can be characterized as a search for patterns. We are looking for regularly occurring patterns of resource deployment that allow us to see better what is going on when multimodality is at work. For this, we often start with some body of ‘data’ gathered from some communicative practices and then seek to draw regularities out of that data by noting particular features. Such features can be more or less abstract, more or less formalized. They have the purpose of allowing us to group cases so that generalizations are more readily apparent, which can be done at any scale and span both quantitative and qualitative approaches.

Analyzing data in this way can nevertheless be very time intensive. For larger-scale work, it has been logical to attempt to ‘automate’ basic annotation tasks, but this, until very recently, was inherently limited to very low levels of descriptive abstraction: for example, one might find parts-of-speech in texts, the color balance of images, cuts between shots in films, fundamental frequencies of speech signals, and so on. Each step higher in abstraction, from parts-of-speech to grammatical analyses, from color balance to objects, figures, and actions, from shots to scenes, from fundamental frequencies to text, rhythm, intonation, and so on, raised substantial challenges, was increasingly inaccurate, and difficult to scale. Generative AI has turned this situation on its head; one can ask for more or less formalized descriptions of data ranging across many media and their contributing semiotic modes and at various levels of abstraction.

In our own work on film, for example, we often wish to characterize film sequences in terms of what they are depicting and the technical devices being used so that we can pick out cohesive structures and other discourse organizations at a larger scale to reveal relevant design decisions. Consider the three images shown in Figure 1 taken from three consecutive shots from Wes Anderson’s 2014 film The Grand Budapest Hotel. Whereas the interpretation for any viewer is probably quite clear, producing cohesive chains and even the discourse relations between shots in any automatic manner would until very recently have been a complex computational challenge. Visually the images share very little in common and so lower-level descriptions in terms of pixels, color balance, camera movement, etc. would need substantial augmentation to deliver results at all. Frame captures from a sequence of three shots from The Grand Budapest Hotel (Dir: Wes Anderson, 2014, American Empirical Pictures).

Giving these three images to ChatGPT (4o, accessed via Academic Cloud at https://academiccloud.de/) and asking what the images show gives a straightforward textual description quite accurately: “a steam train traveling across a bridge over a frozen body of water”, “a view from the front of the steam train”, “the interior of a train compartment, where two individuals are seated at a small table” (abbreviated). Going further to be more explicit, when given the prompt: “How many different trains are shown in the sequence?” the response was: “The sequence of images shows one train throughout. The first image captures an exterior view of the train crossing a bridge, while the second image portrays the train from the front as it moves through the landscape. The third image then presents the inside of that same train, indicating continuity in the journey being depicted.” (ChatGPT-4o, 17 June 2025)

When prompted concerning how it ‘knows’ this, the answer refers to the sequence’s “continuity in both time and space, where the exterior journey naturally transitions into the interior experience of the journey, maintaining a unified narrative theme” (ChatGPT-4o, 17 June 2025). These are all so-called ‘zero-shot’ results, where the system was given no further information prior to these prompts and so is performing at a ‘minimal’, base level. This bares no relationship with the capabilities of any automatic recognition system from as little as 5 years ago.

When performing multimodal analysis, however, we generally do not want simple descriptions of this kind. We are looking for patterns and so it is necessary to group descriptions into more abstract classes and structures. We can do this with Generative AI systems by requesting more structure in the prompts: that is, we do not ask just for some description but for descriptions with particular structures. For example, we can perform an analysis of the filmic technical feature of camera distance/framing by asking in the prompt which of a pre-given set of analysis categories hold; ChatGPT then responded that the first image is a ‘long shot’, and the following two are ‘medium shots’. This analysis could be performed in other ways, but it is interesting to probe how far the general capabilities of the employed Generative AI can take us.

We can then ask for more structure for specific tasks, for example, when asked to identify the discourse referents in this visual sequence, giving each a broad semantic type and a unique label, ChatGPT produced the following list:

Which is exactly what one might want for a more formal discourse analysis.

And going further still, when asked to list the spatial relationships between these discourse referents in the form “relation (Object1, Object2)” using the specific spatial relationship in each case, one receives responses such as: (1) crossing(SteamTrain, StoneBridge): The SteamTrain is crossing the StoneBridge. (2) TravelingThrough (SteamTrain, SnowyValley): The SteamTrain is traveling through the SnowyValley. (3) over(SteamTrain, FrozenRiver): The SteamTrain is over the FrozenRiver.

And so on. Producing semantic descriptions of even this fairly shallow nature from visual materials used to be a considerable challenge and stood as a substantial bottleneck for multimodality research. Nowadays we freely mix structured analyses produced by these kinds of strategies, analyses produced by other technical means using non-Generative AI techniques, and manual analyses. Further examples from our treatments of film are discussed in Burghardt et al. (2024).

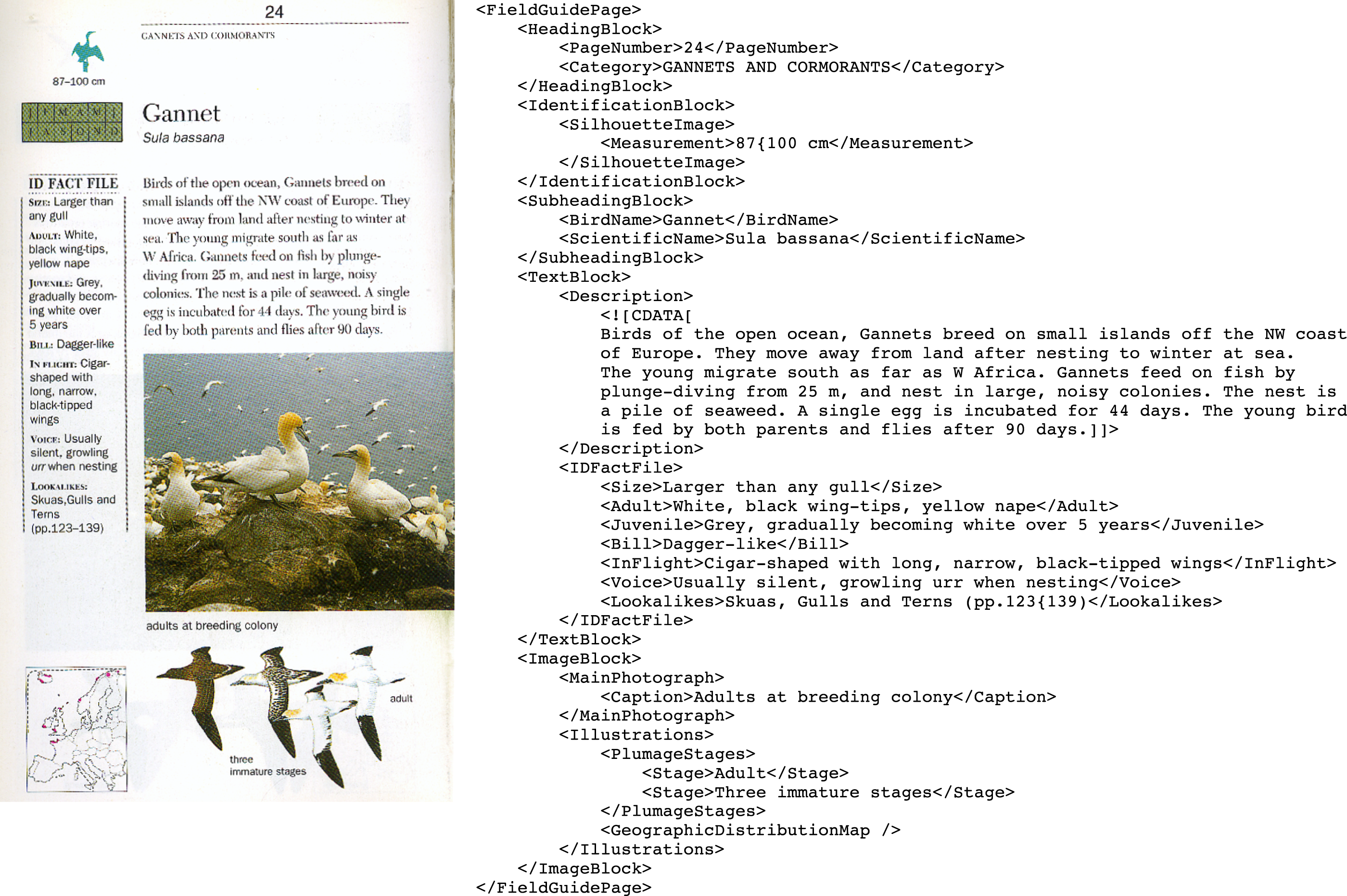

Crucially, the key to making ChatGPT responses useful for multimodality research lies in the way in which such structured results can be produced. This stands as a first step in the search for more abstract and extended patterns by which we can characterize meaning-making. The particular structures sought should be derived from theory and the empirical aims of research as always; the more precisely such targets can be formulated, the more likely it is that usable results are obtained. As a further illustration of this we can return to some of the challenges raised in the Genre and Multimodality (GeM) project that sought to construct a corpus of annotated page designs from several genres in order to investigate how the use of semiotic modes had changed in printed documents of specific genres over time (Bateman, 2008; Hiippala, 2015). A critique of the project at that time was the sheer amount of work involved in producing annotated corpus entries. The GeM scheme called for multiple layers of detailed coding, each layer expressed in the standard eXtensible Markup Language (XML) in order to support further data analysis. In Figure 2 on the left-hand side we can see one of the original pages treated in the GeM corpus taken from a field guide on birds. On the right-hand side of the figure is the ChatGPT-4o response to a prompt asking for an XML-like representation of the page’s visual compositional structure, the blocks it is made up of, and the kind of content in those blocks. Again, it must be emphasized that this is without any particular special setting up of context or training and reflects a baseline ChatGPT-4o result ‘out-of-the-box’. The result nevertheless compares favorably with the structures required (cf. Bateman, 2008: 84, 270). Left: A page from the original GeM corpus (Holden, P. Birds of Britain and Ireland. Collins Wild Guide. London: HarperCollins, 1996; used by permission); Right: An XML-representation of the layout structure of the page produced by ChatGPT-4o (June 17, 2025).

These kinds of results are very suggestive for the kinds of capabilities now being brought into multimodality research. The input to ChatGPT for the Gannet example was a just an unstructured graphic file of the page but, as we can see, the XML-representation nevertheless already includes textual renditions of relevant parts of the graphic. This is similar to other re-renderings of information, for example from spoken language to a textual transcript, which can often now be done as a simple matter of course as also reported in our film work cited above. In addition, for the Gannet page, a particular structure for the visual blocks is proposed as well, with all of the photographic and diagrammatic elements at the bottom of the page being grouped together as an ‘ImageBlock’ and the central textblock and the ‘ID fact file’ grouped together in a ‘TextBlock’, and so on. These can be compared favorably with corpus results produced previously largely by hand.

Many multimodal analysis frameworks are now being re-designed to make use of processing pipelines where distinct components of various kinds contribute, including multiple Generative AI solutions, are applied to an increasingly broad range of tasks. For example, one very powerful general component is Meta AI’s visually-based ‘segment anything model’ (SAM) that provides spatial regions for selected objects, also tracking these dynamically in videos. One might then take some video or other material, extract visual representations of particular entities in the video, and then use visual-based search to construct cohesive chains describing the occurrences of those entities. Burghardt et al. (2024) show some examples of how we are using this kind of approach as well. Other pipelines might be to take the textual components of the XML representation extracted above and pass these on to specific language processing components, or to take the diagrams and maps to pass these on to their own respective specialized processing and annotation steps, etc.

When employing any such results, however, it is still essential to bear in mind that they might not be correct. Simply because a result has been generated does not mean that we can accept it at face value. For reasons broadly discussed in the literature, the results produced need to be considered ‘plausible’ rather than definitive. This is in many respects quite similar to the situation with human coders and annotators. Analyses can be wrong. Fortunately, we have a host of methodological tools available to us for dealing with such situations, such as annotation reliability measures (cf. Bateman et al., 2017: 198–204) or checking against ‘ground truth’ test data. When employing Generative AI tools, therefore, one should always explicitly evaluate their reliability. This is best done when the outputs produced are sufficiently well structured and constrained so that comparisons can be drawn. Whenever responses are left more ‘open’, then it becomes likely that information that is driven more by plausibility than accuracy will result. Typical signs of this are when descriptive adjectives are included to ‘fill out’ any responses given. This is then no longer useful for multimodality research and corresponds more to the situation where a human annotator may be ‘guessing’ what they think might be an appropriate description. These may still sound quite technical and authoritative but should never be simply accepted: this is one of the most important lessons for those engaging with this technology for the first time.

Tasks that allow of reasonably objective descriptions and solutions are generally the safest areas of application for Generative AI. When asked, for example, “to provide a flowchart-like description for a beginning student explaining how they could classify a still frame from a film as either a close-up or a long-shot” ChatGPT-4o produces a six-step breakdown of decisions that quite accurately reflect what one might learn from film theory; when then asked further to render this as a Python program for doing the task automatically, a similarly plausible piece of code results. In contrast, when asked to perform a textual analysis of a pair of mirrored images following Kress and Van Leeuwen (2020 [1996, 2006]), the results tend to be generic and lack precision: e.g., “By using multimodal discourse principles, we understand that the mirrored images, while similar in content, may differ subtly in the viewer’s cognitive processing due to changes in directional orientation and compositional emphasis.” – which is plausible but hardly an incisive analysis. Again, it is critically important not to read too much into such generic responses.

Finally, one additional step can be taken by using the results produced by Generative AI as targets of analysis in their own right. Regardless of their accuracy on some matter, they do reflect generalizations obtained on the basis of considerable data. This leads to another area that has received considerable media coverage, i.e., their tendency to re-produce ideological prejudices and biases, even when these are politically or culturally objectionable. This can also, however, be put to positive use as a new source of information concerning culture. Garg et al. (2018), for example, use the simpler technique of ‘word embeddings’ that will be introduced below to explore 100 years of changing (and not changing) gender and ethnic stereotypes. This is now being taken further with critical and more focused analyses of Generative AI responses engaging several modalities.

Generative AI in multimodality research: The theory

The kinds of capabilities discussed so far do not occur ‘just by chance’ despite their basis in statistical distributions. Moreover, if we were to follow C&K’s characterization of ‘Generative AI’, few of them could be expected. It is therefore worthwhile explaining, even if extremely briefly, how they emerge. One important point to make is that it is absolutely not the case that such technology relies on some transposition into language labels as C&K suggest, so that all such capabilities are somehow reduced ‘anachronistically’ to written language. The reason why this is not so turns out, moreover, to have considerable theoretical consequences for multimodality as well.

To begin, most readers will probably have at least some idea that Generative AI relies on computational ‘neural networks’ – even though this is a concept more often criticized for its appropriation of the term ‘neural’ than understood. The analogy with the brain rests on two principal features. First, these components are called ‘neural’ because they are made up of relatively basic processing units connected together by links of varying strengths. Depending on the strengths, some input given at one end of the network will lead to some output at the other end of the network. Both inputs and outputs are simple numeric values which are modified as they pass through the network by virtue of the various connection strengths in play. What the numeric values stand for and where they come from is up to the designer to decide. Second, connection strengths are not pre-set but are derived during a training phase pairing inputs with desired outputs. Training operates in cycles where a batch of inputs is passed through the network and the output delivered is compared to what it should be. The connection strengths are then modified so that the next time round the actual output should be closer to the desired target. This process repeats until the actual result is considered sufficiently close to the desired result. Viewed more abstractly, this means that during training the neural network comes to approximate a function from the input to the output.

The networks relevant for Generative AI are also multiply layered so that values pass through successive groups of processing units. Some input, as a set of values, is passed into the first group, or layer, which then passes its results onto the next layer, and so on until the output layer is reached. Some fairly fundamental mathematical results concerning such systems demonstrated early on how one could configure training processes even for deeply layered networks so that a solution will be found (Rumelhart et al., 1986). This relies on a geometric interpretation where the sets of connection strength errors for layers can be seen as surfaces in space, and so minimizing error is equivalent to always moving ‘downhill’ on those surfaces; a good solution is then found combining the contributions of all of the layers. This is the basis for ‘deep learning’.

For multimodality, it is important to understand that there is nothing ‘linguistic’ in this process and there is no restriction to text, written or otherwise. The numeric values given as input and produced as output can correspond to anything: written text, spoken language, images, videos, weather patterns, blood pressure, gestures, movements in space, chemical molecules, or whatever. The solutions obtained will depend solely on the training task and so it is only at this stage that a discrimination according to semiotic modes occurs; the Generative AI process in general does not care and so is intrinsically multimodal.

There are then several ‘tricks of the trade’ that make training more responsive to properties of particular semiotic modes. For example, since moving connection strengths ‘downhill’ works in terms of continuous variation – i.e., making numbers smaller – it is better if the input has continuous properties as well. Clearly, individual words and their representations (in Unicode or anything else) do not have such properties as a small change in, for example, ‘spelling’ has no reliable relation with a small change in syntax, meaning, etc.: simply changing the first letter minimally across ‘bog’, ‘cog’, and ‘dog’ does not give us anything coherent. For this reason, when working with written text input, a preliminary translation is performed to produce a representation that does have continuous properties. This works in terms of what are technically called embeddings. An embedding is statistically derived from the distributional properties of some items in a collection of data. The result is best seen as a high-dimensional (typically 256, 512, or more) geometric ‘space’; in the case of word embeddings, each word is then represented by a vector to a point in that space, i.e., a sequence of 256, 512, or more real numbers.

Early on it was discovered that the dimensions and ‘shape’ of this space come to represent all sorts of interesting generalizations concerning the data (Mikolov et al., 2013). If we take the vector for ‘king’, for example, and subtract (a purely arithmetic operation) the vector for ‘male’ and add back in the vector for ‘female’, then the result is a vector pointing in a very similar direction to that of ‘queen’. Similarly, replacing the words by their respective vectors gives results such as ‘Berlin’-‘Germany’+‘France’ = ‘Paris’, ‘king’-‘kings’+‘queen’ = ‘queens’, and so on. C&K refer to such properties as ‘circumstantial’ and not related to meaning, but they can equally well be seen as a clear demonstration of the accuracy of Firth’s intuition that the meaning of words lies ‘in the company that they keep’ (Firth, 1957: 11). In any case, this representation is about as far from ‘binary’ as one can get. Small numerical changes correspond to small changes in ‘sense’ (construed very broadly). Multimodally this is interesting in that the first step in processing for written language is to move the ‘digitization’ of words more towards the continuous, i.e., non-digital, representations found in other modalities. An accessible introduction to the basics of word embeddings illustrated in some critical linguistic register analysis can be found in Bateman and Paris (2020).

The next step in processing is where the deep learning comes into its own. Whereas the overall goal is to acquire a model that includes substantial ‘knowledge’ of the regularities being expressed, or exhibited, in some modality or modalities, to train a model one needs some specific task so that ‘error’ can be measured – otherwise there is no basis for driving the connection strengths ‘downhill’ to reach solutions. Large quantities of training data are also necessary because this process is extremely ‘data hungry’. In the past, this was traditionally a bottleneck for deriving neural networks because the labeling of ‘correct’ solutions by hand is not feasible at the scale required. The solution, which can again be applied across modalities, is to take any coherent ‘whole’ – e.g., a picture, a block of text, a piece of music, a sequence of purposive actions, etc., – and to leave some part out. The training task is then to predict (i.e., ‘generate’) the missing piece. By these means, one can generate training data automatically and so provide input-output pairs at immense scale.

For language data, the missing piece has typically been the ‘next word’ in a sequence or some word in the middle of a block of coherent text. What was then a surprise to all was just how effective these simple tasks are, when done at scale, in driving networks to discover immensely rich sets of generalizations. In the case of language, these range freely over morphology, grammar, semantics, phonology, pragmatics, style, genre, and more. Simply stated, this is because all of these kinds of information are needed to do the best possible job at predicting the next word or finding the missing piece (for a further detailed but non-technical account, see Bateman, 2025). Exactly the same process leads to the discovery of deep visual generalizations when given purely visual input (cf., e.g., Dosovitskiy et al., 2021). This means that one can even use visual prompts and receive visual responses for tasks quite hard to put into words, such as object rotation, key point tracking, non-verbal visual IQ test questions, and more (Bai et al., 2024). In all cases, the process of training leads to the generation of complex higher dimensional ‘spaces’ that constitute the generalizations learned.

What is then particularly interesting multimodally is, first, that the ‘shape’ of the spaces acquired when training with different modalities can align in important respects, i.e., the spaces learned on language data and those learned on visual data can exhibit structural similarities reflecting deep connections across modalities, and second, as a partial consequence, the derived models can be improved further by cross-training and fusion across modalities. Again, in general this does not require tight labeling and can often be driven sufficiently by simple spatial and/or temporal co-occurrences – as when, for example, captions occur with images, diagrams, etc. or the visual field and language accompanying the visual field are presented simultaneously (Vong et al., 2024). Data of this kind is readily available and is currently being extended even further to include sensor data of various kinds to deal with common critiques of lack of embodiment. Crucially, contra C&K, there is simply no need to give ‘textual’ labels to data beforehand and so there is no restriction to written language as the model for all modalities considered.

Conclusion: Understanding generative AI

An effective way of engaging with the kind of complex models being constructed for Generative AI is to consider the most appropriate levels of abstraction to apply on both the computational and semiotic ‘sides’. For the computational side, technical details of the operations of large models are best characterized in terms of (higher order) matrix multiplication and differentiable functions (among others), while considerations of what that might mean are more productively characterized in terms of geometric/topological meaning spaces and trajectories and regions within those spaces. 1’s and 0’s are rarely, if ever, a useful level to consider. For the semiotic side, we have stratified models of human semiotic systems that may sensibly be applied (cf. Bateman, 2025). In C&K’s case, however, the semiotic abstractions are drawn from their ‘transpositional grammar’, and seem largely restricted to some particular aspects of the discourse semantics of a handful of semiotic modes (cf. Bateman et al., 2017: 117), while abstraction on the computational side is largely missing (1’s and 0’s). This creates a wedge between the two sides with the metaphors applied exhibiting more losses than gains.

One point with respect to which C&K are certainly correct, however, is in their emphasis on the ultimate source of any patterns and generalizations discovered lying in the organization of the data. In the case of language, it is indeed only because the strings of tokens analyzed reflect meaningful human discourse that there are patterns to be found. But whereas C&K see this as ‘circumstantial’ and distinct from meaning, the semiotic position taken here follows more the systemic functional or Hallidayan standpoint whereby patterns at any stratum correspond to patterns at other strata (so-called ‘meta-redundancy’). Thus sequences of tokens are at one and the same time co-instantiating patterns at higher levels of abstraction.

To understand the workings of Large Language Models, it is then important to see that the generalizations that they draw may well be situated at those higher levels of abstraction as well and should not be solely restricted to forms. The particular representations at the level of form, e.g., segmented tokens, are consequently less important: it is their overall large-scale patterning that drives generalization. The same applies to any data. To the extent that that data exhibits regularities, large models will derive corresponding generalizations, regardless of whether these are visual, behavioral, acoustic, haptic, one-, two-, or three-dimensional, static, dynamic, or any other.

To conclude, my advice to any wishing to engage with these technologies more theoretically would be to decide first of all on the levels of abstraction appropriate for their research concerns. For multimodal, social, and cultural issues, the abstract ‘shapes’ of the spaces constituting the models learned for particular modes are sure to bring to light a host of interesting regularities and generalizations. Probing potential congruences between these and the diverse semiotic systems involved then offers new methods for addressing multimodality as a phenomenon, as long as we are ready to listen.

Footnotes

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.