Abstract

News audiences’ acceptance of generative AI (GenAI) in journalism is shaped by their knowledge of (or direct experience with) what AI is, what it can do, and what implications its use has. Acknowledging this, the present study draws on the technology acceptance model to first explore what a sample of news audiences in two countries knows about GenAI and what their experiences, if any, with it have been to date. Next, it explores this sample's acceptance of use cases that demonstrate how AI is – or could be – used in journalism. It does this by using in-depth interviews with 60 participants to introduce or re-introduce 23 use cases to them and ask them how accepting they are of journalists using each. Acceptance depended on how AI was used, how transparent the use was, whether the use impacted accuracy, and whether legal and other ethical considerations were appropriately attended to.

Introduction

Across the world, people are encountering generative AI¹ (hereafter GenAI) in many sectors, including news and journalism. Some people are unaware that the content they’re seeing, reading, or hearing has been made, edited, or reconfigured using artificial intelligence. Indeed, GenAI, and/or the labour behind it, is often invisible to users, and its workings are often opaque (Usher, 2025). Others are more aware due to differences in AI and media literacies or journalistic practices (Notley et al., 2024). This study examines news audiences’ first-hand experiences with GenAI tools and how these experiences shape their acceptance of this technology being used in journalism across diverse use cases.

To ground our article more concretely, we share two anecdotes from research participants we interviewed for this study. The first anecdote comes from Janet,² a 22-year-old woman living in a coastal Australian city of about 35,000 people. Janet describes the first time she realised she had encountered GenAI in news content: I read an article not all that long ago … and at the bottom it said that this article had been generated by AI … There was nothing [besides that notice] that I could have noticed that would have been able to tell me [it was AI-generated], which is terrifying.

The second anecdote comes from Amy, a woman living in a city of about 70,000 people located some 1000 kilometres away from Janet. Amy reflects on her experience with GenAI in journalism: It's really highlighted the importance of still having a human involved in journalism. If I can discern that it is AI, it's usually because there's a problem with it. It's oversimplified. It feels like details are being missed, or like it's not understanding where it's coming from, or it loses that personal touch. It can feel like they're cheaping out, like they don't want to pay journalists, like they try to replace the journalists that are making up the company. I just feel disappointed. Using AI, it sows further distrust. I feel like there's almost no one taking accountability. No one's willing to put their name almost on the article when it's AI-generated, right?

These experiences highlight several key issues at the intersection of AI, journalism, and news audience experiences. These include transparency about whether and how AI is disclosed, the way AI is used (to generate text or other media, edit content, summarise content, and aggregate content, among others), and a range of audience reactions to the idea of AI in journalism, in general, or to a concrete experience with it. These reactions span the gamut from interest or indifference to disappointment or distrust. Janet's and Amy's stories also underscore that news audiences’ acceptance of AI in journalism is a product of their knowledge of (or direct experience with) what AI is, what it can do, and what implications its use has. We draw on the technology acceptance model (TAM) (Davis, 1986; Venkatesh and Davis, 2000) as a theoretical lens to better understand how news audiences decide whether to use technologies and to frame their acceptance (or lack thereof) of journalists using these in the process of researching, editing, or creating news.

This article addresses news audiences’ acceptance of a wide range of (largely visual and multimodal) AI in journalism use cases. We focus chiefly on visual and multimodal use cases because of the under-emphasis these have had so far in the scholarship compared to use cases focused on text (Theodosiou et al., 2024); because of the unique properties of visuals that affect how audiences perceive and regard them (Barry, 2020); and also because large-scale research suggests that users themselves have less experience with using AI tools for creating or editing visual and multimodal outputs, which indicates an opportunity to learn more from audiences about their visual and multimodal literacies and why they accept or don’t accept certain use cases beyond the more familiar examples of using AI to, for example, edit or condense writing (Notley et al., 2024). By privileging the audience perspective and asking audience members how accepting they are of each example as a representative of a larger use case, this study advances scholarly understandings of technology acceptance among news audiences related to visual and multimodal AI use cases.

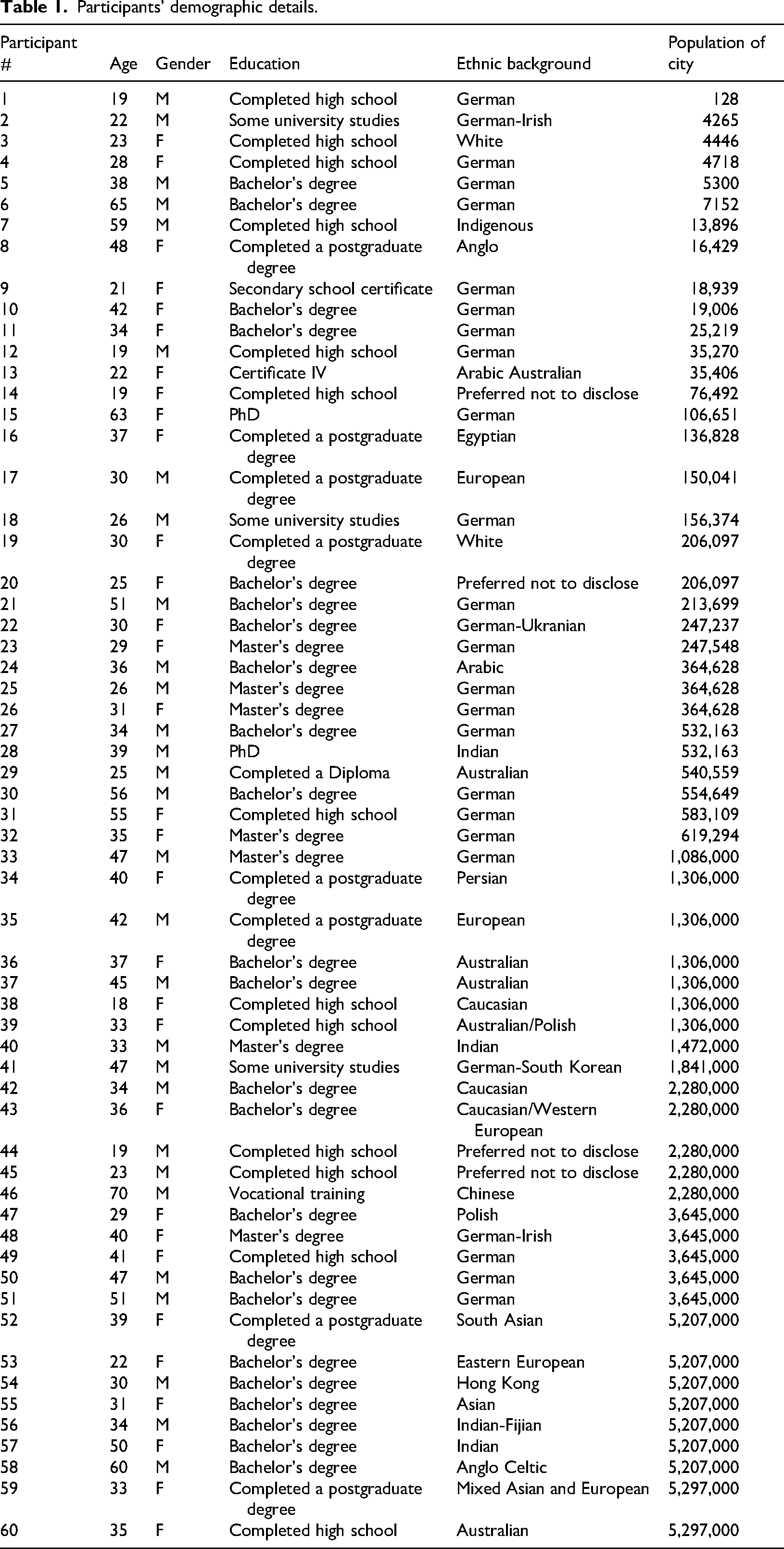

Table 1. Participants’ demographic details.

Literature review

While considerable attention has been paid to audience perceptions of AI in the production and delivery of text-based journalism (see, e.g. Cloudy et al., 2022; Marinescu et al., 2022; Wang and Huang, 2024; Wölker and Powell, 2021; Wu, 2019), that same level of attention hasn’t been afforded to visual and multimodal uses of GenAI in news (Strikovic and Cools, 2025). Additionally, not every part of the journalistic process in which GenAI might be integrated has received the same level of research attention. These areas are therefore explored more fully below, both to set the context for the study and to argue that a more comprehensive and systematic evaluation of GenAI across phases – from enriching and brainstorming to editing and creating – is needed to better understand GenAI's place in journalism and audience members’ acceptance of it.

Acknowledging this, the literature review identifies existing research in this area, providing context for the use cases in the present study. It comprises three sections based on the three domains of use cases we inductively identified and propose in this paper. The first domain, enriching and brainstorming, includes using GenAI for behind-the-scenes purposes, including image recognition, brainstorming, and storyboarding. The second domain of use cases, editing, describes processes where journalists use AI to edit, modify, or transform existing content. Specific use cases in this domain include using AI to remove watermarks, upscale images, expand a photograph's frame, and animate historical and contemporary images. The third domain of use cases, creating, describes operations where journalists use AI to generate new content from training or public data, from proprietary data, or a mix. Use cases in this domain include generating icons for a news infographic, generating images to illustrate an article, generating b-roll footage, and generating a virtual news presenter. We developed this three-domain typology to ensure we paid systematic attention to various parts of the journalistic process and believe this typology is a useful organising device, as opposed to treating the phenomenon as an undifferentiated mass.³

Technology acceptance model

The study uses TAM as a theoretical lens to guide its analysis. The model was proposed in the 1980s by Fred Davis, who theorised that technology acceptance can be explained by user motivation, which is affected by the technology's features and capabilities, and by how easy to use and how useful users perceive the technology to be (Davis, 1986). Other scholars have proposed modifications to TAM over the years to allow it to account for other relevant factors, such as subjective norms (the influence of others, such as colleagues or supervisors, on the user's decision to accept the technology), image (how the use affects the perceived reputation of the user), job relevance, output quality, and result demonstrability (Venkatesh and Davis, 2000). Some scholars have called for more research into how emotional factors, such as anxiety or fear, and cultural or age differences affect technology acceptance, as well as how users accept more complex technologies, such as GenAI (Marangunić and Granić, 2015). Some recent research has applied TAM to study how industry practitioners decide whether to integrate GenAI into their journalistic workflows (see e.g. Guenther et al., 2025; Sarısakaloğlu, 2025). This research found that social influence from peers and organisational colleagues was influential in shaping acceptance, as were perceptions that using AI could improve one's status within the organisation or could help with doing tasks more efficiently. However, applying the model to the context of news audiences is novel and is a direct contribution of our study.

Audience acceptance levels vary by how and where AI is used in news production

Research focusing on text-based applications of AI in newsrooms offers broader context for audience acceptance of visual and multimodal uses. Several studies have indicated that audiences perceive AI-written news as less biased than news written by human journalists (Cloudy et al., 2022; Wu, 2019). Other research indicates that news audiences perceive articles written by AI as less credible (Wang and Huang, 2024) and unable to achieve objectivity, reflecting ‘the unconscious bias of those who designed the algorithm’ (Marinescu et al., 2022: 305). Another study found no significant difference in audience perceptions between AI-written and human-written news (Wölker and Powell, 2021).

Industry research indicates that audience acceptance levels of AI being used to produce and deliver news vary greatly depending on the level of involvement, format, and amount of human oversight. In 2024, the Australian Broadcasting Corporation (ABC) and British Broadcasting Corporation (BBC) published a survey of over 150 people from Australia, the United Kingdom, and the United States that analysed audience perceptions of AI in news and other media content. The study included textual, audio, and visual use cases, and reported that audiences were generally nervous about the use of AI in the media, with concern varying by use case (BBC, 2024). In 2024, the Reuters Institute for the Study of Journalism (RISJ) published a similar study on public attitudes to the uses of GenAI in the news, which interviewed 45 news consumers in Mexico, the UK, and the US and presented them with 25 textual and visual use cases of how GenAI is used in the production of news. As with the BBC study, the RISJ report found that acceptance levels varied not just by audience type but also by how and where AI is used in news production (Collao, 2024). A recent study (Strikovic and Cools, 2025) used focus groups to probe news audiences’ encounters with AI-generated images in news and found that their participants had ‘deep concerns about the use of AI technologies in creating and disseminating visual information’ (p. 1), as these could disrupt the potential for more objective, shared realities that participants said they desired. As such, this research found that communication modality, alongside audience needs (and perceptions of risks or harms [Shrivastava, 2025]), can also influence audience acceptance (or rejection) of GenAI in journalism. In the following sections, the three domains of use cases of GenAI are discussed.

Behind-the-scenes use of GenAI for enriching and brainstorming

The RISJ study found that audiences were most comfortable with the use of GenAI ‘behind the scenes’ (what we refer to as enriching and brainstorming in our domain typology). This included using AI for automatic fact checking, identifying breaking stories, sub-editing, and transcribing and summarising interviews, among others. This ‘supporting’ function of AI, as identified by the RISJ report, ‘was palatable and mirrored how some participants were incorporating GenAI … e.g. using ChatGPT to finesse an email or a CV, using Grammarly to improve their writing’ (Collao, 2024: 35).

The present study expands on this line of research into how accepting audiences are of behind-the-scenes AI use to include more visual and multimodal use cases, such as brainstorming, storyboarding, and recognition of people and places in visual material. Existing research illustrates some of the ways newsrooms are already utilising AI for these purposes. For example, de-Lima-Santos and Ceron (2022) identified how computer vision, which simulates human vision by enabling a machine to learn to recognise abstract patterns in images, has been used in behind-the-scenes news production to process visual content in different ways.

To date, there is limited research into journalist perceptions of the use of AI for visual brainstorming and ideation. Interviews with photo editors by Thomson et al. (2024) identified that staff could envision how text-to-image generators could be used in their newsrooms to workshop visual ideas and, indeed, some were already doing this. However, to the best of our knowledge, no studies exist to date on how audiences perceive these visual, behind-the-scenes functions of AI in newsrooms.

Use of GenAI for editing

The second domain of AI use cases in this study focuses on editing visual and multimodal news materials. Studies have examined audience perceptions of using AI to edit visual materials more broadly, outside of news-specific contexts. For example, the BBC's report on audience perception of AI found that in the production of video content such as TV shows, audiences could see the benefits of using AI for backroom editing, particularly if there was cost reduction for audiences (BBC, 2024). However, participants expressed concern over loss of jobs and human artistry. In a section examining news content specifically, the BBC report found that audiences registered a ‘distinct discomfort’ with the use of AI to edit images and videos, with participants concerned it may misrepresent reality (BBC, 2024: 42). However, there is much diversity in the ways that AI can be used to edit images and videos, so these use cases require further unpacking and nuancing, which the present study tries to provide.

Use of GenAI for creation and public-facing purposes

The last domain of AI use cases in the present study, creating, included the creation of icons, infographics, data visualisations, illustrations, 3D models, b-roll footage, and virtual news presenters. The RISJ report evaluated audience comfort levels with some of the above-mentioned use cases. While the report identified that audiences were most comfortable with AI creating content when it was text-based, ‘the use of AI in graphics (infographics, maps, charts) and illustrations … was generally acceptable and even a benefit’ (Collao, 2024: 43). On the other hand, AI-created images and videos that appeared photorealistic were least acceptable, because ‘photos and videos are perceived to be “the truth”, [and] the camera doesn’t (or isn’t supposed to) lie’ (Collao, 2024: 42).

In a study examining the use of AI text-to-video in the news, Thurman et al. (2025) analysed how UK audiences evaluated short-form news made by both journalists and AI, with different degrees of automation. They found that, on average, respondents gave more positive ratings to human-made videos than to automated videos. However, in cases where humans post-edited AI-generated content, this difference disappeared, highlighting the importance of human oversight and input in audience perceptions of AI-generated content.

Following Xinhua News Agency's development of the first AI news presenter in 2018, considerable academic attention has been paid to audience perceptions of AI-generated news anchors, identifying mixed responses. For example, one US study found that audiences perceived a human newscaster as more credible than an AI newscaster (Kim et al., 2022), while a study of a Chinese audience identified a positive emotional response and perception of AI news anchors (Sun et al., 2022). Examining specific characteristics of AI news presenters, one study found that virtual, non-humanoid female AI news anchors with anthropomorphic voices were perceived as more attractive by a Chinese audience than humanoid AI anchors (Xue et al., 2022).

Synthesis and research questions

Current research into audience perceptions of AI in newsrooms for brainstorming, editing, and creation use cases indicates that competing factors influence audience comfort levels. These include where in the production process AI is used, the level of human oversight, and the extent to which AI is being used to represent ‘real life’ through photorealistic imagery and video. The present study fills a gap in the research on audience acceptance of AI in visual journalism, particularly in the behind-the-scenes and editing phases of production, where the RISJ and BBC studies indicate audiences may be more accepting of AI being used. To this end, and with the technology acceptance model of perceived ease of use and perceived usefulness in mind, the study's research questions ask:

Overall, this study seeks to bring journalists and audiences closer together. Headlines continue to champion GenAI's virtues and efficiency gains, while news organisations sometimes proceed with wholesale adoption or provide their staff with limited guidance on when, how, or whether AI tools should be used (Tarnutzer and Blassnig, 2025). This underscores the need to listen to and understand the experiences of news audiences regarding GenAI in journalism. Doing so allows scholars to identify audiences’ needs or desires and to consider how well or poorly advances in GenAI respond to those at different stages of the journalistic process and when applied in different communication modalities. Awareness of this can inform journalistic practice on areas for innovation that are perceived as more or less risky and identify which concepts – from privacy and accuracy to integrity and honesty – audiences seem to hold most dear.

Methods

Data collection

Following approval from the human research ethics body at the first author's institution, data collection consisted of in-depth interviews lasting 60–120 minutes with 60 participants in Australia and Germany (30 in each country) in late 2024. Germany, as a member of the European Union, which passed in December 2023 what has been called the world's first ‘AI law’, provides an interesting contrast to Australia, which has been called ‘at the back of the pack’ in regulating AI (Taylor, 2023). Australia also has one of the world's most concentrated media markets, which can make Australian media particularly susceptible to top-down AI integration, as seen with the example of NewsCorp Australia (Park et al., 2024; Schapals, 2024). In addition, exploring English and non-English-language cultures can be instructive considering that many GenAI systems were developed in English-speaking countries and with English-language training materials (Albeihi and Rice, 2025). Lastly, while Australia and Germany are similar in terms of the number of regular users of AI (50 and 51 percent, respectively), they differ in their citizens’ emotional valence toward the technology: only about 36 percent of Australians are excited about AI compared to more than half (about 57 percent) of Germans (Gillespie et al., 2025).

To recruit interviewees, we used a mixed purposive sampling logic (see Suri, 2011) to identify people who said they were news consumers and who said they were interested in discussing AI in journalism. We did this by designing and launching a paid social media campaign (on Facebook and Instagram) that served ads to people living in both countries who were 18 or older and invited potential participants to complete a short expression of interest if they were willing to be interviewed about this topic in exchange for a gift card of a modest value. Our approach follows other qualitative research that found ‘social media to be a viable tool for study recruitment’ (Sledzieski et al., 2023: 7).

We received more than 400 expressions of interest and, from these, tried to select participants who reflected gender parity, age diversity, and geographic diversity. Overall, the sample included participants aged 18–70 (the average age was 36.3 years), 31 women and 29 men, and included people living in Australia and Germany from Arabic, Asian, European, Indigenous Australian, and Pacific Islander backgrounds. Twenty-five percent of the sample had only high school education, 15 percent had a diploma or vocational training certificate, five percent had undertaken some university studies, 26.66 percent had an undergraduate degree, 25 percent had a master's degree, and the remaining 3.33 percent had PhDs. Participants were also geographically diverse, hailing from small regional German villages and Australian towns of a few hundred or thousand people to urban metropolises and capital cities. Full demographic details can be found in Table 1.

During the interviews, we first asked about participants’ awareness of GenAI technologies in general and whether they had first-hand experience with them. We asked participants to tell us about any AI systems they used, for which purposes, and how they felt about this use. Next, we showed participants 23 use cases, identified through previous research, demonstrating how AI is (or could be) used in journalism. These examples focused on multimodal applications of GenAI and were grouped into three categories: (1) Enriching and brainstorming, (2) Editing, and (3) Creating. Whenever possible, participants were asked to respond to how accepting they were of the use case generally and not just within the specific context shown. We also asked participants to rank the use cases they accepted most and least so we could obtain rich insights from their verbal reactions as well as a clearer sense of which use cases participants accepted journalists and news organisations using. Our full interview protocol is included as Appendix 1.

Data analysis

Upon completion of data collection, the interviews were translated and transcribed using AI⁴ and then manually checked and refined by native English and German speakers. The reviewed transcripts were imported into NVivo for thematic analysis, following a process of compiling, disassembling, reassembling, interpreting, and concluding (Castleberry and Nolen, 2018). Meanwhile, participants’ ranking decisions were visualised to allow for a sense of their acceptance across use cases.

Findings

First-hand experiences with and acceptance of GenAI

The study's first research question explored how much first-hand experience the sample had with GenAI tools and how these experiences affected their technology acceptance. Most participants reported first-hand experience using a range of GenAI tools, including ChatGPT, Gemini, Copilot, proprietary AI systems their workplaces used, Meta AI, DALL-E, and Midjourney.

In terms of how they used these tools, participants primarily used them for research and brainstorming, followed by writing items such as emails, letters to landlords, job application materials, and birthday or Christmas cards; editing, rewording, or adapting writing to different age levels; image generation; experimentation to see what the tools were capable of; translation; coding or debugging code; condensing writing; and fun or entertainment. Aspects such as cost, output quality, privacy, accuracy, environmental impact, and enabling new opportunities affected whether participants accepted the technology and integrated it into their daily life.

Regarding modality, participants reported using a text-based modality the most frequently, followed by visual and aural modalities. The participants’ use of AI was largely siloed by mode; very little cross-modal interaction or output use was reported. While AI systems can purportedly work across languages, participants had mixed or often disappointing reactions to the utility of this feature.

Acceptance of GenAI use cases in journalism

Our study's second research question explored how accepting our sample of news audiences was of journalists using AI for various use cases. We report our findings across three domains of use cases: enriching and brainstorming, editing, and creating.

Enriching and brainstorming

The first domain of use cases, enriching and brainstorming, contained three examples: using GenAI to recognise people, objects, animals, or environments in visual assets (photos or videos) and apply keywords to their associated metadata; brainstorming how to represent a hard-to-visualise topic; and creating a storyboard for narrative inspiration. Overall, about three-quarters of the participants were accepting of these use cases in journalism.

Image recognition

Most participants (46) were accepting of journalists using AI for feature recognition in news images. Some (6) expressed privacy or accuracy concerns and were only accepting of journalists using AI in this way for images without people in them, which they thought posed less risk. Participants in Germany, bound by the more restrictive regulations of the European Union, also wondered whether those depicted would have to consent to their likeness being processed by an AI tool.

Multiple participants noted how a similar image recognition technology was already being used in their own smartphones, such as in cloud photo storage services like Google Photos, or in software applications like Word or PowerPoint. This familiarity with the AI processes made them, they said, more accepting of journalists also using equivalent tools. They also recognised the potential efficiencies of these tools: I would be comfortable with that [journalists using AI to recognise and sort through images]. It's basically the same as having your own live photo library on your computer without having to individually name all the pictures, which would take you a long time to do and also at this stage you've got the right not use it. You can say, “No, I don't want to use that [picture]”. (Participant 7)

Brainstorming

Most participants (50) were accepting of journalists using AI to help them brainstorm hard-to-visualise topics. Participants said they appreciated how using AI for brainstorming could help ‘take away a bit of the fear of the blank page’ or help with resolving writer's block, as well as taking many ideas and condensing them into a more digestible summary: I believe AI is pretty good at brainstorming in general about certain things, because they just take so much information and condense it in really just a few hundred words. Therefore, I would say it's not a big deal. The only issue I personally see is of course the references because not all AI tools actually provide [the] references where they are taking the information from, which then is always a bit hard to understand. (Participant 18)

Storyboarding

Most participants (40) accepted journalists using AI to generate storyboards that could help inform a story's narrative: At first glance, I would actually say that it's okay, because I don't see the danger behind it. So, if someone had to do these storyboards anyway and actually already knows beforehand what should be in them or what message should be conveyed, then I think the work can definitely be taken over by the AI and then briefly checked afterwards to see if what came out is halfway right. (Participant 1)

Editing

The second domain of use cases, editing, contained ten examples: removing watermarks using AI, adding background blur in a photo using AI, using AI to upscale images, using AI to automatically process multiple photographs and composite together the best elements, using AI to edit a video through a transcript, using AI to expand the frame of a photograph showing a person, using AI to expand the frame of a photograph showing a landscape, using AI to animate a historical image, using AI to animate a contemporary image, and using AI to automate layouts. Overall, participants were less accepting of these use cases compared to the enriching and brainstorming use cases. Just under half (28) accepted journalists’ use of these applications.

Removing watermarks

Only a minority of participants (9) accepted journalists using AI to remove watermarks on images; most saw it as intellectual theft: The watermark is there for a reason. I feel like using AI to remove that is wrong. I feel like, you know, if a journalist wants an image without a watermark, they could, either, you know, purchase one from the photographer, or like, choose a different image that doesn't have a watermark. (Participant 53)

Adding background blur to a photo

Most participants (42) were accepting of journalists using AI to artificially blur the backgrounds of their photos. Participants reasoned that this is a common feature on many smartphones (such as ‘portrait mode’ on an iPhone) and mimics effects, such as shallow depth of field, achieved through legacy means by selecting particular aperture values, focal lengths, and camera-to-subject distances. They said using it could make the background less distracting or help with privacy concerns, if, for example, children were in the background. Some participants viewed the feature as empowering and couldn’t see any downsides to journalists using it: I also have that feature in my own phone and I like it a lot. I mean everyone should have easy access to tools like this in a world that is so technically empowered. I don't see any difference between me using that for a selfie and a journalist using that for a picture they are publishing. (Participant 47)

Upscaling images

Almost all the participants (57) accepted journalists using AI to resize or upscale images. The small minority who did not said they had potential privacy concerns with images of people's faces, feeling that this technology could be misused if it led to people in an image being identified and doxxed.

Making photograph composites

Few participants (9) were unequivocally accepting of journalists using ‘best take’ technology that automatically composites the best of several images, taken in quick succession, of the same scene. Most thought the resulting image would be ‘too artificial’ or would have been ‘manipulated’. These participants believed this technology, if deployed in journalism, threatened or even destroyed the integrity of the image. They regarded faces as ‘the most emotive part of us’ and said they needed to be treated with care and that editing together multiple images without disclosing it was dishonest: I don't think that [“best take” features] should be used in journalism, because I think that journalism and the news should be true and accurate and honest, and I don't think that editing different photos together and pretending that it's one photo is very honest. So, I don't really like that very much at all. (Participant 4)

Editing a video through a transcript

Most participants (47) were accepting of journalists changing the chronology of a video clip by editing an AI-generated transcript (as opposed to dragging and dropping clips around on an editing timeline). Their rationale was that ‘it could save a lot of time’, as participants assumed video would always have to be edited before publication anyway.

Expanding a photograph's frame showing a person

Most participants (53) were unaccepting of journalists using tools like Adobe's ‘generative expand’ with a photograph of a person to artificially expand the image's borders and imagine what lies beyond them. Some were more accepting if audiences were told what had been done or if consent had been obtained from the person(s) represented, but most were uncomfortable with this because of its potential to mislead, deceive, or defame. They found it ‘scary’ and said it made them ‘feel like [they’ve] been duped, been tricked’.

Expanding a photograph's frame showing a landscape

Participants were more accepting of journalists using a ‘generative expand’ or equivalent tool with a landscape photo lacking people compared to a photo showing people, particularly if the landscape looked generic rather than showed a recognisable landmark. Still, about half of the participants (31) were critical of this possibility, even for generic images: I don't like that, and the reason is related to climate change… and global warming. People have said the Barrier Reef is in bad shape. But you can use AI tools like this to manipulate the images and make what's occurring in nature look better than it actually is or hide the difficulties over there. You're not showing the full picture, so you're not getting the necessary or accurate background. I have concerns with this. I think it can lead to manipulation of things. (Participant 59)

Animating a historical image not showing people

Most participants (36) were accepting of journalists using AI to animate a historical landscape (not showing people) that was produced before video cameras existed, especially if the use of AI was disclosed. Some were unaccepting of this due to concerns over the accuracy of the animation (because ‘you're creating something out of nothing’) or because of labour concerns.

Animating a contemporary image

By comparison, participants were much less comfortable with journalists using AI to animate a contemporary image showing a person. Only a minority of participants (16) were accepting of this use case: Here we are again with deepfakes! I just need a photo of some person and then the AI makes some movement that the person has never made, so I don't know, the [middle] finger or something, that could get them into trouble, so I don't think that's good. (Participant 10)

Automating layouts

Participants (46) were generally accepting of journalists using AI to automate the placement of elements, such as headlines, photos, captions, and body text, as part of a layout or design process, especially if the end result was checked by a human: This is content that exists independently of this tool and where only the content formatting happens through it, so to speak. I’m happy to make my peace with it if it's at least a useful tool for the media organization. (Participant 50)

Creating

The third domain of use cases, creating, contained 10 examples: generating a colour palette for use in an editorial design, generating icons for a news infographic, visualising the past (text-to-image), illustrating an article in a non-photorealistic style, illustrating an article in a photorealistic style, visualising publicly accessible data (text-to-image), visualising user-uploaded data (spreadsheet-to-image), generating 3D models, generating b-roll footage (text-to-video), and generating a virtual news presenter. Overall, participants were less accepting of journalists using the applications in this domain compared to the previous two. Fewer than half of participants (27) were unequivocally accepting of these use cases in journalism.

Generating a colour palette for use in an editorial design

Most participants (52) were unequivocally accepting of using AI to generate a colour palette, saying the use case was ‘helpful, especially for people that might not really know very much about color theory’. A small group said they were accepting of this use case but worried about job loss, legibility (in the case of applications with insufficient colour contrast to be easily seen), or unintended associations (e.g. rainbow colours in an image could make the audience think the content is associated with the LGBTQ community when that might not be the intent).

Generating icons for an infographic

More than half of participants (39) were accepting of journalists using AI to generate icons for a news infographic, especially if cultural context was considered and no intellectual property was infringed: I see no problems with that, especially if the actual news article is still accurate and originally made by a human and authentic … because I think everyone knows that an icon is a cartoon. It's not a real thing, so it's not trying to trick anyone, whereas if it generated [photorealistic] images, that might be a bit different. (Participant 20)

Visualising the past (text-to-image)

Few participants (13) were unequivocally accepting of journalists using AI to visualise the past using some sort of written description (such as from a historical diary or account) because of job loss concerns or concerns about the accuracy of the representation, feeling that creators could use the tool ‘to push their own narratives and completely rewrite history’.

Illustrating an article in a non-photorealistic style

Fewer than half of participants (28) were unequivocally accepting of journalists using AI to generate non-photorealistic illustrations, particularly of places or objects rather than people. Those who were accepting of this use case said the ‘cartoonish’ nature of the images made it clear that ‘they're not intended to be interpreted as accurate or realistic interpretations of the world’ and, thus, likely weren’t going to deceive people who saw them.

Illustrating an article in a photorealistic style

Participants (49) were much less comfortable with using AI to generate photorealistic images compared to non-photorealistic ones. Some were more accepting of this if the AI-generated images were used as generic ‘stock’ images rather than for representing ‘facts’. About half of participants were not accepting because of labour concerns for photographers, because they felt AI-generated images were unnecessary, or because they felt uncomfortable not being able to distinguish between what's real and fake: There are millions of stock photographs out there. Why would we need one new AI picture? That's really beyond my understanding. So, I have no understanding of that and no wish for further creation of AI stock footage. (Participant 48)

Visualising publicly accessible data (text-to-image)

Under half of the participants (27) were unequivocally accepting of using AI to visualise publicly accessible data (e.g. ‘create a visualisation that shows the population of Afghanistan from 1990 to 2020’). Some noted that the interval scale the AI used could influence how the results looked and so were comfortable with this use provided the scale used was appropriate and didn’t mislead.

Visualising user-uploaded data (spreadsheet-to-image)

The participants were more comfortable with journalists using AI to visualise user-uploaded data (spreadsheet-to-image) compared to public data (text-to-image). Most participants (48) were accepting of this, particularly if the output was checked for accuracy or didn’t impact people's privacy, although some thought this wasn’t necessary since ‘You can do it using conventional tools’.

Generating 3D models

Most participants (42) were accepting of journalists using AI to generate 3D models, particularly if they were accurate: Probably I'd be feeling comfortable using [AI to generate 3D models] just because it can be really helpful to describe exactly what's going on. Like, if there was something that happened in a church or if there was a bombing, it could be really helpful to explain exactly what happened, because, especially for people who haven't been there, it can be, you might say, “Ohh, the north-west wing” and people will go, “Ohh, where is that? And how does everything kind of relate?” So, yeah, I'd be fairly comfortable with journalists using AI to come up with 3D models. (Participant 38)

Generating B-roll footage (text-to-video)

A minority of participants (17) were unequivocally accepting of journalists using AI to create b-roll (text-to-video). Some were more accepting if it was disclosed that AI was used, if the b-roll depicted generic rather than specific content, or ‘if there's no person shown’ in the b-roll.

Generating a virtual news presenter

Only a few participants (4) were accepting of journalists using AI to share the news via a virtual presenter. Almost all were not accepting of this use case because it felt ‘unnatural’, because of job loss concerns, because participants felt AI wasn’t as adaptable as humans, or because AI lacked human qualities, such as humour or empathy, that human presenters are capable of: I am not comfortable with an AI news presenter. I think that it is sort of a human job. AI can do all the data entry that exists in this world. A news presenter is a big person-facing role. It's in the homes of quite a lot of people. And I don't think that that's the role for AI. (Participant 13)

Discussion and conclusion

Overall, some of the factors that audiences in this study explicitly or implicitly said affected how accepting they were of GenAI in journalism converged with traditional journalistic tenets or with reasons journalists themselves have given for (not) adopting the technology (Guenther et al., 2025; Sarısakaloğlu, 2025; Thomson et al., 2024). These include reasons like efficiency and principles like accuracy, honesty, transparency, disclosure, and verifiability (the last of which participants implicitly invoked by mentioning that humans should check AI outputs before sharing them further). Some factors, however, such as identifiability, specificity in contrast to genericness, privacy, consent, comfort, adaptability, usefulness, accessibility, authenticity, and integrity, were distinct, while other factors that journalists say are important to them regarding technology acceptance, such as impact on reputation, were not shared by audiences in this sample (Sarısakaloğlu, 2025).

This research responds to calls for the study of technology acceptance in more complex systems, such as those offered by GenAI, as well as for cross-cultural research and qualitative work that can delve into users’ emotional experiences with technology (Marangunić and Granić, 2015). Participants’ qualitative accounts of their own perceptions and uses of GenAI informed how accepting they were of it being used in journalism. This research supports the notion that technology acceptance isn’t just a matter of rational cognitive assessment but is also sometimes governed by emotional responses, such as fears that AI makes humans less capable or feelings that some use cases of AI in journalism are too intimate or inauthentic to justify. The results enrich the scholarly understanding of news audiences’ acceptance of emerging technologies while also providing concrete feedback to journalists, editors, and managers who are experimenting, or are considering experimenting, with AI.

Recalling that technology acceptance can be explained by a mix of at least four factors (user motivation, technology's features and capabilities, how easy the technology is to use, and how useful people perceive it to be) (Davis, 1986; Venkatesh and Davis, 2000), it is worth reflecting on the findings and how these might contribute to scholarship and industry guidance.

User motivation

Participants’ reflections revealed a wide variety in user motivations, including ones related to efficiency and creativity. They were comfortable with outsourcing some routine tasks (writing Christmas or birthday cards as well as generating, editing, or restoring images) to technology in their personal lives but as a whole weren’t accepting of the idea of journalists outsourcing similarly routine tasks (such as visualising publicly available data or having the technology decide which photo in a series is the ‘best’) to technology when participants thought some risk of harm (including through being misled or deceived or having one's privacy compromised) existed. This shows that user motivation and technology acceptance can vary based not only on perceptions of benefit but also on perceptions of real or potential risk or harm for individuals or groups (Shrivastava, 2025).

Technology's features and capabilities

Because of their multimodal nature and the diversity of the disciplinary knowledge they are trained on, AI systems can have high potential relevance for a number of tasks across disparate fields. But just because an AI system can be used for a task, it doesn’t mean that users want it or will shift their behaviour when an existing option works just as well. This was the case with AI search, where some participants thought they could have found the same information through a legacy search engine or that the AI-powered feature was superfluous or frustrating, as some AI systems lack a ‘memory’ setting, which effectively forces the user to start from square one each time they start a new session. Participants’ reflections show that choice and customisation, then, are important components for influencing technology acceptance, in addition to factors like ease-of-use and perceived usefulness (Davis, 1986; Venkatesh and Davis, 2000).

Ease-of-use and perceived usefulness

The participants’ anecdotes and experiences underscore the importance of accessibility to the user experience and to eventual technology acceptance. Accessibility includes multiple forms of input beyond just text, such as the voice chatting option that ChatGPT offers, which a Polish woman with a heavy accent regarded as making her interactions with the technology ‘so easy and so helpful’, or being able to interact with a more intuitive graphical user interface rather than having to learn prompting conventions, which can be esoteric for those without computer science backgrounds (Zamfirescu-Pereira et al., 2023).

Accessibility also extends to the languages used in training data and in outputs. Although AI systems can purportedly work across some languages, participants’ perceptions of the accuracy or usefulness of cross-language features were mixed and often disappointing. A focus on accessibility can elevate the status of this attribute to equal footing with other concerns around AI systems, such as language biases being acknowledged alongside more widely known representational biases (Thomas and Thomson, 2025).

The findings reveal diverse reactions to AI being used in journalism across the domains of brainstorming and enriching, editing, and creating. Still, despite the wide range of reactions, some consistent patterns can be identified: audiences said they were more accepting when AI disclosures were in place, when human oversight was involved, when the use of AI didn’t impact the accuracy of the representation, when AI complemented rather than replaced human labour, and when AI use respected the privacy of individuals’ and organisations’ data. Notably, the hierarchy of comfort across the three domains (brainstorming/enriching > editing > creating) reflects varying perceptions of usefulness and ease of use, with behind-the-scenes applications being perceived as more useful and less disruptive to the news consumption experience.

Our study relies on a non-probability sample and, accordingly, we do not claim generalisability. Rather, we view these findings as the basis for probability-based studies, both within and across national contexts, as well as further qualitative research, to continue to flesh out the perceptions that users have of AI in journalism. Longitudinal research is also necessary to study how audience perceptions change as technologies and journalistic practices evolve.

Comparing our study's findings to previous research, several aspects become apparent. While growing attention has been paid to aspects such as where in the process AI is used, the degree to which it is transparently disclosed, whether AI misleads or deceives, and the effect of AI use on human labour, less attention has been paid to other aspects that affect news audiences’ acceptance or rejection of AI in journalism. For example, our study identified other factors that affected audience members’ acceptance of journalists using GenAI: their own use of or awareness of the AI tool or process in their personal or professional life; issues of consent, legality, and privacy; the temporality of the depiction; and AI's (in)ability to understand cultural context. These factors should inform newsroom principles and policies around AI use and lead to better outcomes for all stakeholders involved.

Acknowledgements

The authors wish to thank the participants who provided their perspectives for this research and the Australian Research Council, the Center for Advanced Internet Studies (CAIS), and the Global Journalism Innovation Lab for their financial support of this research.

Ethical approval and informed consent statements

This study received ethical approval from RMIT University (project ID #26704) on 18 July 2023, with further amendments approved on 16 April 2024, 17 April 2024, 26 June 2024, 2 July 2024, 11 July 2024, and 15 July 2024. Participants were given informed consent materials and provided verbal consent to participate in the research.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Australian Research Council through DE230101233, the Center for Advanced Internet Studies (CAIS), and the Global Journalism Innovation Lab.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The study's interview protocol has been included as an appendix.

Notes

Appendix 1. Interview Protocol

Part I: Introductory questions about the participant, their knowledge of and experience with GenAI, and awareness and sentiment of GenAI in journalism

Part II: Questions around specific applications of GenAI in journalism

For the last XX minutes of the interview, we’ll look at specific examples of how AI can be used across three different domains (enriching, brainstorming, and ideating; creating; and editing) and 23 use cases. I’ll ask you to reflect on how accepting or not accepting you are of journalists using AI for each of these cases.

Enriching, brainstorming, and ideating:

Part III: Concluding interview questions: