Abstract

This study explored competing predictions about how social interactions among social media hate posters affect the hatefulness, measured as toxicity, of their subsequent posts. Analyses involved 1,000 original hateful posts and the subsequent posts by the same posters (N = 1,227,756 posts) on Gab, a platform particularly hospitable to hate messaging, along with Likes, Dislikes, and written replies from other users that affirmed or negated the initial hate posts. Likes and affirming replies were commonplace, whereas Dislikes and negation replies were rare. Getting Likes and affirming replies decreased subsequent toxicity in the short term, as did getting no responses whatsoever. Getting Dislikes increased the hatefulness of users’ next original post and their posts over the next 3 months. Results challenge both the social approval theory of online hate and the need-threat approach to effects of responses to social media hate posting.

The propagation of hate messages online is increasing (see, for example, Frimer et al., 2023). On the Gab social media platform, for instance, “the content generated by the hateful users tend to spread faster, farther and reach a much wider audience as compared to the content generated by normal users” (Mathew et al., 2019: 173). Research into online hate is also increasing (Rice, 2024), primarily focused on the incidence and effects, content and nature, or detection and classification of online hate messages. Less research focuses on social factors that lead to and perpetuate online hate posting (Walther, 2024a).

Two new explanations have emerged to address the social motivations and gratifications people experience for online hate posting (Lutz and Schneider, 2021; Walther, 2024b). They focus on users’ needs for approval, the responses people receive to their social media postings, and the effects of these factors on posters’ subsequent behavior. The responses people get to their postings can take the form of graphical engagement symbols (GES) such as Likes, and more elaborate written replies.

Testing these frameworks’ application to online hate is challenging. It cannot be done using cross-sectional samples of online hate content, the kind of data that are typical in online hate research. It requires consideration of the temporal relationships among messages, as well as the conversational structures that distinguish original messages from responses, the sequential structures among messages, engagements with those messages by others, and hate posters’ subsequent behavior.

The present study examined the effects of several forms of responses to posters’ hate messages (that is, two types of social approval signals and social disapproval signals) on the hatefulness of those posters’ subsequent posts, both immediately and over a 3-month period.

It contributes in several important ways to our understanding of social processes that affect the propagation of online hate. It investigates a “fringe” platform whose community is hospitable and encouraging to online hate, where hate posting is quite normative, a factor that renders patterns of escalation and de-escalation of hate posting different from those discovered in more mainstream social media platforms. It includes the potential influence of written replies to hate messages, not just the one-click GES reactions that prior research has examined. Theoretically, it extends and challenges the social approval theory of online hate and the more general need-threat model of responses to social media posting.

It employed a corpus of messages from Gab that displayed the structure of original posts and their written replies, so that coders could categorize replies as affirmations or negations with respect to the messages to which they responded. Analyses of the frequencies of GES responses (Likes and Dislikes) and written replies showed a complex pattern between social dis/approval signals and subsequent hatefulness. Some of the results were contrary to the hypotheses. In particular, receiving social disapproval signals—especially Dislike responses—increased the hatefulness of subsequent posts, both short-term and long-term, while social approval signals and a lack of response both contributed to reductions in subsequent hatefulness. The results both extend and contest the theories in complex ways, and the patterns of findings regarding Likes suggest support for a drive-reduction explanation of social approval toward online hate. Implications also arise with respect to the overwhelming normativity of hate-approving replies in this fringe community, as well as the uncommon affordance of disapproving Dislikes on this platform.

Online hate

Although online hate evades a consistent definition (Hietanen and Eddebo, 2023), most approaches are consistent with that of Schmid et al. (2024: 2616): “hostile online communication targeting social groups to insult, degrade, or belittle their members.” Two important distinctions appear in Saleem et al. (2017): it need not be seen by its targets, since it may also “promote, or has the capacity to increase hatred against a person or group of people” by others. It encompasses “incivility” (“rude, disrespectful, unreasonable” phrases) and “toxicity” (a “negative, discriminatory, stereotype or hateful comment against a group of people on criteria including (but not limited to) race or ethnicity, religion, gender, nationality or citizenship, disability, age, or sexual orientation”) (Jigsaw, 2025: n.p.). Other message-oriented definitions of online hate “include derogatory comments, threats, and incitements to violence” (Oliveira et al., 2023: 1), language that is “‘discriminatory’ (biased, bigoted, or intolerant) or ‘pejorative’ (prejudiced, contemptuous or demeaning)” (United Nations, n.d.). It also includes “content that ‘praises, promotes, glorifies, or supports any hateful ideology (e.g., white supremacy, misogyny, anti-LGBTQ . . .)’” (Hietanen and Eddebo, 2023: 448).

Social processes theories of online hate

Social approval theory

Recent work has suggested that a thorough understanding of the production and exacerbation of online hate takes into consideration social processes among and between hate posters.

Rather than individual animus as the primary driver of hate postings in social media, the social approval theory of online hate contends that reactions by other social media users to individuals’ hate posts promote the frequency and hatefulness of individuals’ subsequent posts (Walther, 2024b). The theory explicates several constructs, including signals of social approval, signals of disapproval, and insufficient approval, to derive a number of predictions. Signals of social approval can include (but are not limited to) Likes, favorites (hearts), upvotes, retweets, and other such actions that are represented by counts that appear in association with one’s hate message.

Previous empirical research supports its predictions. For instance, a longitudinal analysis by Frimer et al. (2023) found that US congressional representatives’ tweets became more toxic over time, mediated by the number of Likes that their posts received. A study of anti-immigrant Twitter posts found that when users’ posts received an atypically great number of Likes, the posters’ subsequent messages were more toxic than when they received their respective average amounts of Likes (Jiang et al., 2023). Moreover, when hate posters received atypically fewer Likes, their subsequent toxicity declined. Consistent with this theory and with previous findings, the first hypothesis states:

H1a: The more that individuals accrue signals of social approval appended to their hate messages in the form of GES (Likes), the more hateful their subsequent messages become.

The social approval theory of online hate also specifies other forms of social approval in addition to GES Likes. It explicitly considers written responses such as replies as potential signals of social approval. These types of written responses, however, have not previously been empirically analyzed in terms of their effects on subsequent hatefulness. Written replies can affirm another’s hate message, expressing similar perceptions and experiences. They may compliment other hate posters. Replies that are congruent with the hate message they follow provide social approval and reinforcement. Alternatively, replies can negate or ridicule the content of a previous hate post or insult its creator (Metzger et al., 2021):

H1b: The more that individuals accrue signals of social approval appended to their hate messages in the form of affirming written replies, the more hateful their subsequent messages become.

RQ1: What is the effect of the combination of GES and written replies to one’s hate messages on the hatefulness of posters’ subsequent messages, over and above the effect of each?

While the foregoing hypotheses concern the respective effects of GES and written replies conveying social approval, they imply the reciprocal: When one’s hate messages incur little to no social approval, subsequent hatefulness declines. This was the case in the Jiang et al. (2023) study examining the effect of positive GES (hearts) responses to hate messages. Research needs to investigate whether the absence of either or both of these forms of engagement has a stronger effect on the reduction of hatefulness, that is, whether the presence of one form of approval offsets the absence of the other:

H2a: When individuals accrue relatively fewer (1) GES or (2) written replies of social approval to their hate messages, the less hateful their subsequent messages become.

Need-threat framework

While the social approval model of online hate focuses on the effects of positive feedback on the escalation of online hate, it also contends that overt social disapproval has no direct effect on hatefulness one way or the other. A different theory, however, offers explicit predictions about the relative effects of Dislikes as well as Likes, along with the effects of a dearth of either form of response.

The need-threat model (Lutz and Schneider, 2021), while not focused on the responses users get to hate postings, does center on how different responses to social media posts, such as Likes, Dislikes, and non-response, meet or thwart users’ needs for inclusion. The need-threat framework predicts that getting Likes generates a positive feeling that should presumably elicit behavior similar to that which was Liked. It also holds that receiving disapproval signals (Dislikes) to one’s social media posts connotes rejection. Getting no response at all, neither Likes nor Dislikes, is posited to be the most threatening of all possible outcomes as far as one’s need for belonging is concerned: The model proposes that the complete absence of Likes and Dislikes constitutes ostracism, a more severe form of exclusion than that inflicted by Dislikes.

Experimental research has partially supported the differential effects of these conditions on users’ responses, although not in the context of hate posting. Lutz and Schneider review a number of experimental studies showing that receiving fewer messages, getting no Likes from strong ties, and the absence of Likes for one’s social media status updates instill ostracism and threaten receivers’ fundamental needs. Being ignored or ostracized in such a manner motivates persons to take actions that they calculate will restore inclusion, which may even involve antisocial or aggressive behavior. Therefore, a rival hypothesis to H2a is also offered:

H2b: When individuals get relatively less social approval for their hate messages, the more hateful their subsequent messages become.

As was mentioned above, the need-threat approach and the social approval theory offer different predictions regarding the impact of social disapproval. Social disapproval, like approval, may appear in written replies. On some social media sites, including Gab, users can signal disapproval through Dislike GES they can append to someone else’s hate post.

The need-threat approach initially proposed that although getting overt disapproval is noxious, it at least offers some recognition of a message poster. Rejection in the form of getting only Dislikes is less satisfying than getting Likes, intuitively, but it is also less threatening to one’s need for inclusion than is ostracism, that is, getting no Dislikes or Likes whatsoever (Lutz and Schneider, 2021). Receiving only Dislikes was hypothesized in previous research to produce a median level of exclusion, between the effect of Likes and getting no responses, and a consequently moderate increase in behavior that would be expected to restore inclusion and approval. The results of Lutz and Schneider’s experiment did not confirm this prediction, however. Receiving only Dislikes more strongly reduced feelings of belonging than receiving a dearth of responses. Based on the original theoretical position, a tentative hypothesis is nevertheless offered:

H3: The more that individuals accrue signals of social disapproval appended to their hate messages (a) in the form of Dislikes and (b) disagreement replies, the more hateful their subsequent messages become.

Social approval theory offers more complex, contingent predictions regarding social disapproval. While it contends that disapproval signals appended to a hate post have no direct impact on the poster’s subsequent hatefulness, it posits a complex indirect effect: The presence of disapproval signals to a post may prompt other users, whose attitudes are congruent with the original post, to provide approval signals to the same post (Bright et al., 2022); and the approval signals then encourage the original message poster to post more hatefully thereafter. Such a prediction can be verified if both disapproval signals and approval signals appear in association with a hate post, not just one type or the other.

The notion that seeing disapproval prompts other users to provide approval has been noted anecdotally. Because disapproval signals may indicate that a targeted group suffers, their presence may prompt others to post congratulatory approval signals, or “high fives” (Udupa, 2019) to the original hate poster. The presentation of congratulatory messages to a hate poster for distressing victims has been documented in several contexts of online hate (Stringhini and Blackburn, 2024):

H4: The more that individuals accrue signals of social disapproval appended to their hate messages, but also receive signals of social approval, the more hateful their subsequent messages become.

Platform and characteristics

The current study employed data from a “fringe” platform, Gab, which offers some unique features and affordances of interest to this particular research. Previous original research on the social approval theory of online hate utilized a dataset extracted from Twitter, a mainstream social media platform. GES responses on Twitter are limited to heart-shaped Likes. Twitter also offers a relatively diverse user base, where both hate posters and members of their frequent target groups may interact (Daniels, 2017).

In contrast, Gab brands itself “The Free Speech Social Network.” It offers no content moderation for hate posts (Mathew et al., 2019). As a result, Gab attracts a more extreme user base (Wilson, 2016). It is a safe haven for the expression of extremism, racism, sexism, anti-Semitism, Islamophobia, and a myriad of other hateful ideologies (Munn, 2022). About 5.4% of its content includes hate terms, 2.4 times the rate of hate postings on Twitter, with more racist postings in particular (Zannettou et al., 2018). For these reasons, Gab commands an outsized impact on the social media ecosphere despite being far smaller (100,000 active users) than social media giants such as Facebook (2.94 billion users) or X.com/Twitter (600 million).

In contrast to the characterization of Twitter as a target-rich environment, Gab may be considered a hater-rich environment. In hater-rich environments, hate posters are densely connected and they are unlikely to encounter members of the populations who are targeted in the hate messages they exchange. Indeed, according to Mathew et al.’s (2019) analysis, while hateful accounts on Gab numbered less than 1% of all users, they generated 19% of all Gab postings. Hateful users post 4 times as often as non-hateful users, and get 4 times as many replies (Mathew et al., 2019). If people want to post hate messages in order to garner social approval, they are likely to choose a hater-rich environment, rather than a target-rich environment, because it is likely to be a normative and plentiful source for the provision of social approval (Walther, 2024b).

Methodologically speaking, Gab offers a fertile venue in which to study the expression and propagation of hate messages: On mainstream platforms, researchers cannot know how many prospective hate postings were removed by the platforms’ content moderation procedures. On Gab, however, hate posts are not removed, so no data are missing from the flow of hate messages in a sample corpus (Mathew et al., 2019).

Another reason Gab is important to study is a technological affordance related to disapproval signals, namely, the “dislike button.” Whereas much previous social media research used Twitter, which only features Likes (hearts), Gab also offers Dislikes. Like buttons are well known to convey social approval, as well as regulate conversation, maintain ties, and indicate politeness (Eranti and Lonkila, 2015). Dislike buttons, by corollary, should convey disregard or criticism, or imply that the content’s visibility should be downgraded. While Gaudette et al. (2021) examined the effect of downvotes (a close counterpart to Dislikes) on a collective far-right community on Reddit, and Mathew et al. (2019) counted Dislikes in a sample Gab corpus, neither study examined the impact of Dislikes on subsequent hate message proliferation or inhibition. This study directly addresses that gap, adding to the growing study of Gab in particular and online hate more generally.

Method

Data source

This study analyzed a corpus of messages from the Gab social media platform. In order to evaluate the effects of several kinds of responses to hate messages on subsequent hatefulness, it was necessary to secure a dataset that had intact records of all messages and all engagements with those messages, including replies. These attributes are present in the Gab Scrape dataset on the Internet Archive, a subset of the widely used open source GabLeaks dataset (Munk, 2022). It contains all the posts on Gab from August 2016 to May 2018, including records of users’ engagements (Likes, Dislikes, replies, etc.), which are amenable to this study’s hypothesis tests.

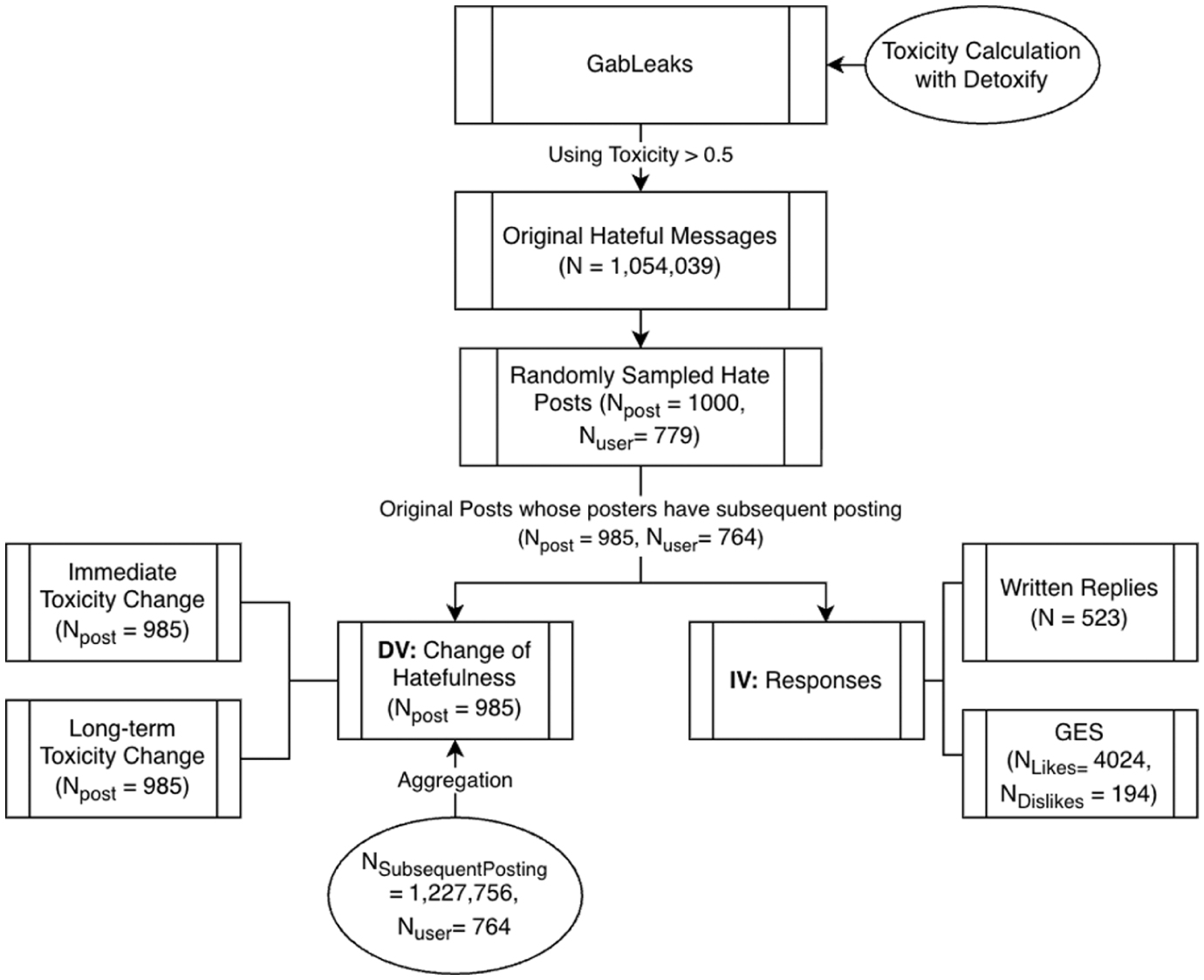

The original dataset contains 22,790,465 posts, including original posts and replies. Additional data cleaning filtered out posts with fewer than 10 words (M words = 17.5, range = 1 to 2869) in order to ensure the posts had sufficient content for analysis, yielding a remainder of 12,685,078 posts. These posts, in turn, were subjected to toxicity analysis (see section “Measurement” below) in order to provide a subset of hateful original messages; following Saveski et al.’s (2021) methods, posts obtaining a toxicity score greater than 0.5 were considered hateful, while posts scoring 0.5 or lower were not. Using this criterion, 1,054,039 original posts were identified as hateful. From these posts, 1000 were randomly selected for analysis. These 1000 posts were generated by 779 different authors between August 2016 and May 2018. From the 1000 sampled posts, we retrieved each user’s subsequent posts in the entire dataset (whether in- or outside the 1000-post sample) with which to calculate this study’s outcome variable, toxicity change. Fifteen of the users had no subsequent posts. The remaining 985 posts were written by 764 unique users, and a total of 1,227,756 subsequent posts were associated with the original 985 posts. A graphical representation of the sampling stages appears in Figure 1.

Figure 1. Sampling stages for selection of message and reply stimuli.
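The sampling stages described above (length filter, toxicity cutoff, random draw) can be sketched in Python as follows. This is only an illustration under stated assumptions: the dictionary keys (`text`, `toxicity`, `is_reply`), the function name, and the fixed random seed are hypothetical, and toxicity scores are assumed to have been computed beforehand. It is not the authors' pipeline.

```python
import random

HATE_THRESHOLD = 0.5  # cutoff reported in the text (scores above it count as hateful)
MIN_WORDS = 10        # posts with fewer words were filtered out


def select_hateful_sample(posts, sample_size, seed=0):
    """Filter short posts, keep hateful original posts, then sample at random.

    `posts` is a list of dicts with hypothetical keys:
    'text' (str), 'toxicity' (float in [0, 1]), and 'is_reply' (bool).
    """
    long_enough = [p for p in posts if len(p["text"].split()) >= MIN_WORDS]
    hateful_originals = [
        p for p in long_enough
        if not p["is_reply"] and p["toxicity"] > HATE_THRESHOLD
    ]
    rng = random.Random(seed)  # seeded only so the sketch is reproducible
    k = min(sample_size, len(hateful_originals))
    return rng.sample(hateful_originals, k)
```

In the study itself the corresponding draw was 1000 posts from the 1,054,039 original posts that exceeded the cutoff.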

Measurement

Hatefulness, toxicity, and toxicity change

While hatefulness is the general construct this research addresses, analysis requires an operational definition with which to measure it. Other Gab analyses have employed dictionary-based approaches (e.g., Mathew et al., 2019) to assess changes in the nominal frequencies of hate messages. In order to assess changes in hatefulness, however, an interval-level measure is required. One standard approach in industry and academic research is to measure toxicity as a proxy for hatefulness (e.g. Fortuna et al., 2020; Frimer et al., 2023; Monge and Laurent, 2024; Oppenheim et al., 2024). Indeed, Kim et al. (2021) benchmarked a strong correspondence between scores from a similar toxicity classifier and human raters’ application of a broader definition of hatefulness.

Several popular classifiers were ruled out because they focus primarily on a message’s likelihood to make readers want to leave a discussion (Jigsaw, 2025) rather than hatefulness per se. One classifier, Detoxify (Hanu and Unitary Team, 2020), included hatefulness explicitly in its definition and the development of its machine learning algorithm. Built on human raters’ ratings of messages from most hateful (“a very hateful, aggressive, or disrespectful comment . . .”) through less hateful levels (e.g. “rude, disrespectful, or unreasonable comments . . .”) to not at all toxic, Detoxify now scores messages on a continuum between 0 and 1, where a score closer to 0 indicates a message that is more likely benign, while a score closer to 1 indicates greater toxicity. Detoxify has been used successfully to classify hate messages in several recent studies (e.g., Bassani and Sanchez, 2024; Falade et al., 2024; Yousefi et al., 2023). Exploratory analyses found that this measure’s ratings corresponded well with the authors’ independent classifications of an offset corpus of 145 hate messages, Cohen’s κ = .80.
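Cohen's kappa corrects the raw agreement between two sets of labels for the agreement expected by chance. A minimal Python sketch of the standard formula is given below. The function name is illustrative, and the sketch makes no claim about how the authors actually computed their coefficient.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two equally long sequences of categorical labels."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("label sequences must be non-empty and equally long")
    n = len(labels_a)
    # Proportion of items on which the two raters agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each rater's marginal label counts.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)
```

With a classifier's thresholded output as one sequence and human classifications as the other, values near 1 indicate agreement well beyond chance.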

The concurrent validity of Detoxify’s application to the 1000 messages analyzed in this study was shown by comparing Detoxify’s results to those of Perspective API, considered “the de facto standard for toxicity detection . . . [in] content moderation . . . and in academia” (Cima et al., 2024) and to ToxiGen, a relatively new classifier that was trained to be sensitive to implicit hate messages (Hartvigsen et al., 2022). Although initial correlations were moderate, analyses involved comparison among the measures’ identification of messages that exceeded a 0.5 level of toxicity (a cutoff used in further analysis, below). In this regard, Detoxify and Perspective API achieved an impressive 80% agreement level, and Detoxify and ToxiGen yielded 71.3% agreement. Given the similarity in performance, and Detoxify’s superior definition, this research adopted the scores from Detoxify in its calculation of hatefulness. Going forward, this article refers to hatefulness as a concept, and to toxicity as its operationalized variable or score.
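The agreement figures above compare classifiers on whether each message exceeds the 0.5 cutoff, not on raw scores. A hedged Python sketch of that comparison follows; the function name and the assumption that both classifiers return scores in the same order for the same messages are illustrative.

```python
def threshold_agreement(scores_a, scores_b, cutoff=0.5):
    """Share of messages that two classifiers place on the same side of `cutoff`."""
    if len(scores_a) != len(scores_b) or len(scores_a) == 0:
        raise ValueError("score lists must be non-empty and equally long")
    matches = sum(
        (a > cutoff) == (b > cutoff) for a, b in zip(scores_a, scores_b)
    )
    return matches / len(scores_a)
```

Multiplying the result by 100 gives a percentage comparable to the agreement levels reported above.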

As hypotheses focus on the change in a user’s toxicity after receiving social (dis)approval signals to a hate post, we calculated toxicity change scores by comparing (a) the toxicity score of a user’s subsequent post to that of the prior post, or (b) the average toxicity score of all of the user’s posts in the subsequent 3 months to that of the prior post. The two outcome variables are therefore formulated as follows:

(a) $$\text{ToxicityChange}_{\text{Next Post}} = \text{ToxicityScore}_{\text{Subsequent Post}} - \text{ToxicityScore}_{\text{Current Post}}$$

(b) $$\text{ToxicityChange}_{\text{Next 3 Mos.}} = \text{ToxicityScore}_{\text{Next 3-Month Avg}} - \text{ToxicityScore}_{\text{Current Post}}$$

A positive change score indicates that a user’s posts became more toxic, while a negative score indicates that a user’s posts became less toxic.

We calculated the toxicity change for the immediate next post and the 3-month average for each of the 985 posts using the formula explicated above. The distribution of the two outcome variables is reported in the descriptive statistics, below.
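The two change scores can be illustrated with a short Python sketch. It assumes each post is a `(timestamp, toxicity_score)` pair and approximates the 3-month window as 90 days. Both choices, and the function name, are assumptions made for illustration and do not come from the study.

```python
from datetime import datetime, timedelta
from statistics import mean


def toxicity_changes(current, later_posts, window_days=90):
    """Return (next-post change, window-average change) for one hate post.

    `current` is a (timestamp, score) pair for the sampled post;
    `later_posts` holds (timestamp, score) pairs by the same user.
    Returns (None, None) when the user has no subsequent posts.
    """
    t0, score0 = current
    subsequent = sorted(
        (p for p in later_posts if p[0] > t0), key=lambda p: p[0]
    )
    if not subsequent:
        return None, None
    # (a) change from the current post to the immediately following post
    next_change = subsequent[0][1] - score0
    # (b) change from the current post to the average over the window
    window_end = t0 + timedelta(days=window_days)
    in_window = [score for ts, score in subsequent if ts <= window_end]
    window_change = mean(in_window) - score0 if in_window else None
    return next_change, window_change
```

A positive value in either position means the user's posts became more toxic after the sampled post, and a negative value means they became less toxic.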

Approval and disapproval signals: GES and written responses

The number of Like GES a hate message received, and the number of Dislikes, were predictor variables. Likes were considered signals of social approval, while Dislikes were negating signals of social disapproval. Written replies to original hate posts were also predictor variables, depending on their functional relationship to the hate message to which they responded. A review of alternative interaction coding schemes (e.g. Brauner et al., 2018) and computational classifiers (Dehghani and Boyd, 2022) that might offer such relationships between replies and the original messages did not reveal a satisfactory method: As Roos et al. (2024) note, automated language analyses show how negative messages are but not whether they are negative toward others’ hate postings or toward the original post’s target population: “[A] more in-depth and contextualised analysis of interaction behaviours is needed, in which relations between posts take centre stage” (pp. 436–437). Therefore, this study developed an original, contextualized coding scheme that incorporated the conversation-analytic principle of “adjacency pairs” (Schegloff, 2007), that is, two-part sequences of statements in which the meaning of the second statement is interpreted in reference to the first, to assess whether a reply affirmed or negated the hate message to which it responded.

The 5-category coding scheme provided two subcategories of affirmation, two subcategories of negation, and a category for other/nonresponsive replies: A reply (1) affirmed the hate message if it was supportive of, consistent with, or extended the attitude expressed by it, or (2) complimented the original poster. A reply (3) negated the hate message if it was unsupportive, contesting, or refuting; or (4) insulted the original hate message poster. Replies that were nonresponsive or fit none of these subcategories were coded as (5) Other.

Seven coders initially categorized the replies to Gab hate messages using this coding scheme. After each coded the first 25 replies, preliminary intercoder reliability was assessed; although the score was in the acceptable range, there was some confusion between coders about the application of Other when they could not actually make a determination, so an Uninterpretable category was added, and coding of the remaining corpus continued until all 523 replies were analyzed.

Krippendorff’s (2019) alpha provides a standard reliability measure for multiple coders, categories, and variables. The initial alpha reliability for the seven coders achieved .76, falling within the “lower bound” of acceptability (.67 to .79; Marzi et al., 2024). To improve the coding accuracy, further analysis identified the three coders who had the strongest level of agreement, among whom alpha reliability was .81. In the case of disagreements among these three coders, we used their modal (i.e. two coders’) classification. When there was no agreement among any two coders, we used the modal classification produced by the original seven coders.
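The rule for resolving coder disagreements can be sketched in Python as follows. The function names and label strings are hypothetical. Returning `None` when the seven coders produce a tie is an assumption of this sketch, since the text does not say how such ties were handled.

```python
from collections import Counter


def modal_label(labels):
    """Return the single most frequent label if at least two coders chose it.

    Returns None when no label is shared by two coders or when the top
    count is tied (the tie behavior is an assumption of this sketch).
    """
    counts = Counter(labels).most_common()
    top_label, top_count = counts[0]
    tied = len(counts) > 1 and counts[1][1] == top_count
    if top_count < 2 or tied:
        return None
    return top_label


def resolve_label(top3_labels, all7_labels):
    """Use the three most reliable coders' mode, else fall back to all seven."""
    label = modal_label(top3_labels)
    if label is not None:
        return label
    return modal_label(all7_labels)
```

For example, if the three most reliable coders split between three different categories, the classification falls back to whichever category the seven original coders chose most often.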

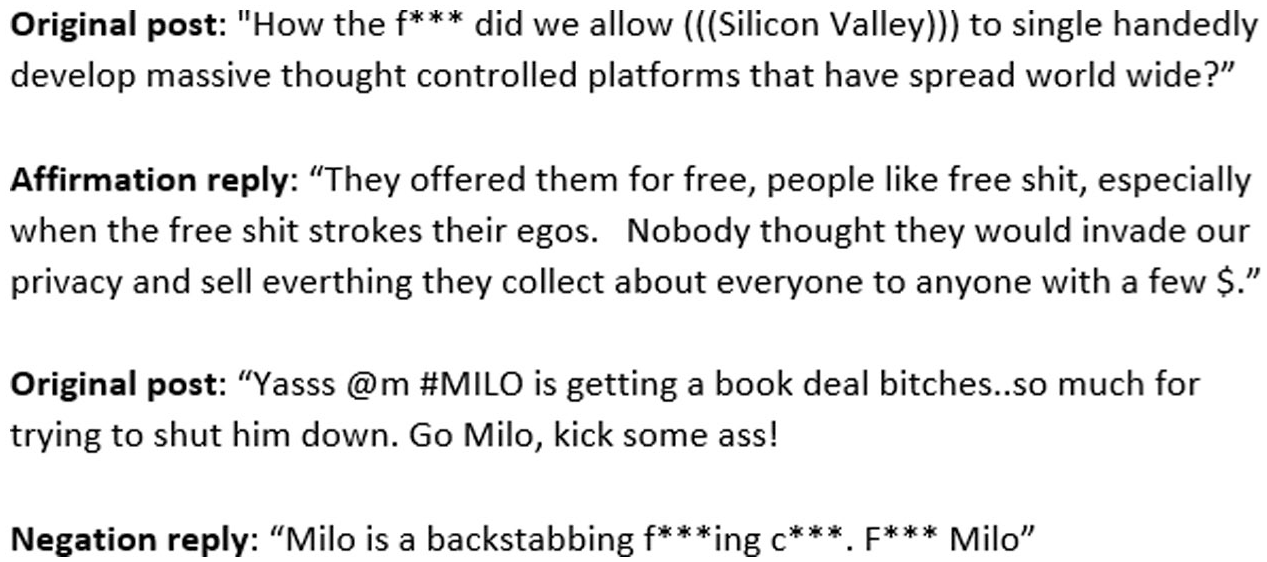

Inspection of frequencies of these four categories indicated too few replies in each to justify retaining this fine-grained level of analysis. Further analyses collapsed them, resulting in counts of only affirmation replies and negation replies. Analysis of the 523 replies showed that a strong majority (70.7%) consisted of affirmations of the original hate message. Negations comprised 20.2% of replies. Examples of each type appear in Figure 2. Combining textual replies and GES of the same valence provides two more high-level predictor variables: Approval signals is the sum of Affirmation replies plus the number of Likes, whereas disapproval signals is the sum of Negation replies plus the number of Dislikes.

Figure 2. Examples of hate posts from corpus with affirmation or negation replies.

Results

Descriptive statistics

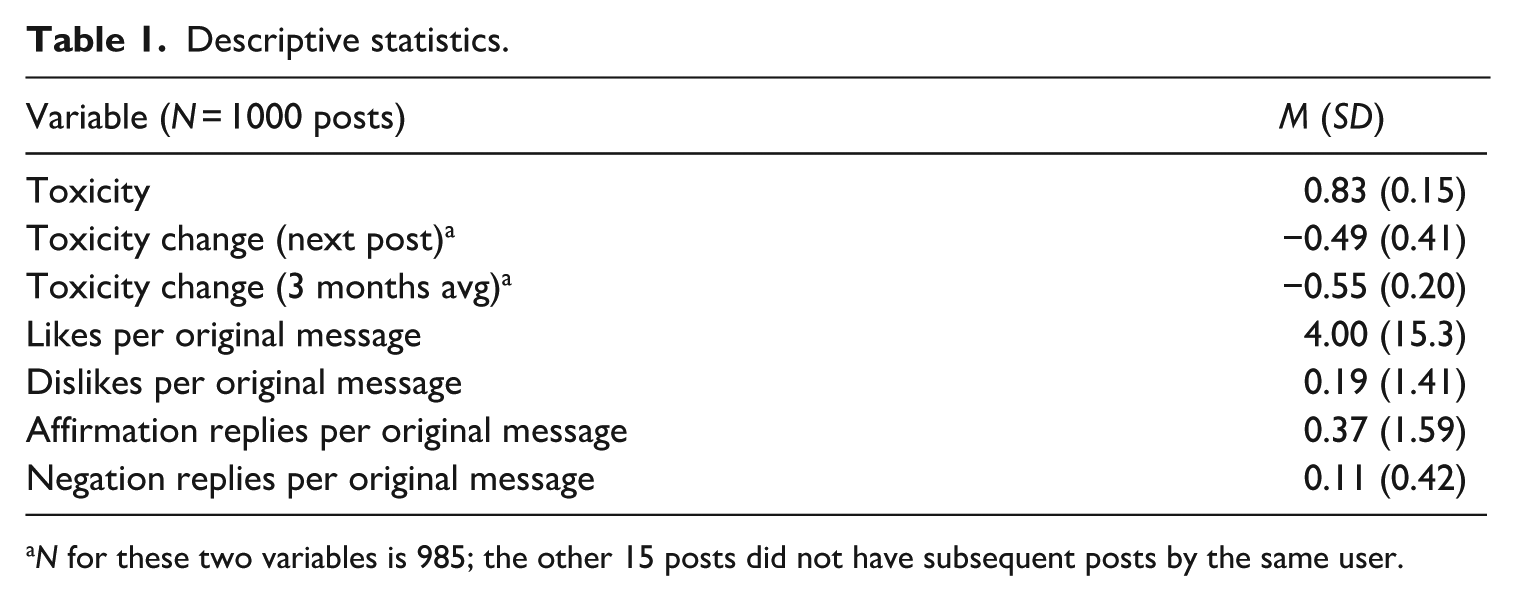

Table 1 shows the distribution of the GES responses, written reply types, and toxicity variables. The mean toxicity score of the 1000 original hate posts is 0.83 (on a scale between 0 and 1), SD = 0.15. Between the 985 original posts and subsequent posts by the same poster, toxicity decreased, as shown both in the toxicity change of the next post (M = −0.49, SD = 0.41) and the 3-month average toxicity change (M = −0.55, SD = 0.20), suggesting that the general momentum of hatefulness declined unless acted on by other social approval or disapproval signals.

Table 1. Descriptive statistics.

Note. N for the two toxicity change variables is 985; the other 15 posts did not have subsequent posts by the same user.

Although there seemed to be an abundance of Likes overall (M = 4.0 per original hate message), their distribution among original messages was uneven (SD = 15.3). Of the 1000 original hate posts, 638 received at least one Like, and 362 received none. Far fewer received Dislikes: The mean of Dislikes per original post was less than one (M = 0.19); only 75 of the 1000 posts received Dislikes. Similar to the lopsided distribution of Likes, the number of Dislikes per original message was skewed (SD = 1.41). Regarding written replies, 252 of the original hate messages received at least one written reply from other users. On average, original hate messages received less than one Affirmation Reply or Negation Reply, and those that got any typically got only one (Median = 1, M = 2.07, SD = 3.09). The aggregated Affirmation Replies per original message (M = 0.37, SD = 1.59) and Negation Replies (M = 0.11, SD = 0.42) were scarce and dispersed; akin to the Likes versus Dislikes, there were more Affirmation Replies than Negation Replies.

Hypotheses testing

Regression analyses employed R (Version 4.3.0) to fit multiple Ordinary Least Squares linear models. Outcome variables included changes in toxicity, both for a hater’s next post and for the next 3 months’ average. Predictor variables included the number of each type of GES, each type of written reply, and in separate analyses, the aggregated predictors described above. The effect of getting no responses at all, compared with getting any kind of GES or written reply, was assessed using a dummy variable, for which a value of True (1) indicated any kind of response and False (0) represented no response. An initial regression analysis explored all possible interaction effects on the outcome variables. No significant interaction effects emerged, and subsequent analyses omitted interaction terms.
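The structure of such a model, with aggregated approval and disapproval counts plus a response dummy as predictors of toxicity change, can be illustrated with a small pure-Python sketch. The study fitted its models in R; the code below is not that analysis. The function names are hypothetical, and the dummy here is derived only from Likes, Dislikes, affirmations, and negations, which is a simplification because it ignores replies coded as Other.

```python
def build_predictors(likes, dislikes, affirmations, negations):
    """Return [approval signals, disapproval signals, any-response dummy]."""
    approval = likes + affirmations
    disapproval = dislikes + negations
    # Simplifying assumption: a response is any approval or disapproval signal.
    any_response = 1 if (approval + disapproval) > 0 else 0
    return [approval, disapproval, any_response]


def ols_fit(rows, outcomes):
    """Ordinary least squares via the normal equations (X'X) b = X'y.

    `rows` holds predictor lists without an intercept; one is added here.
    Returns [intercept, b1, b2, ...].
    """
    X = [[1.0] + [float(v) for v in row] for row in rows]
    k = len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [
        [sum(x[i] * x[j] for x in X) for j in range(k)]
        + [sum(x[i] * y for x, y in zip(X, outcomes))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear")
        A[col], A[pivot] = A[pivot], A[col]
        pivot_value = A[col][col]
        A[col] = [v / pivot_value for v in A[col]]
        for r in range(k):
            if r != col:
                factor = A[r][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] for i in range(k)]
```

Each returned slope estimates the change in the outcome per additional signal with the other predictors held constant. The sketch returns point estimates only and omits the standard errors and p values that a full analysis reports.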

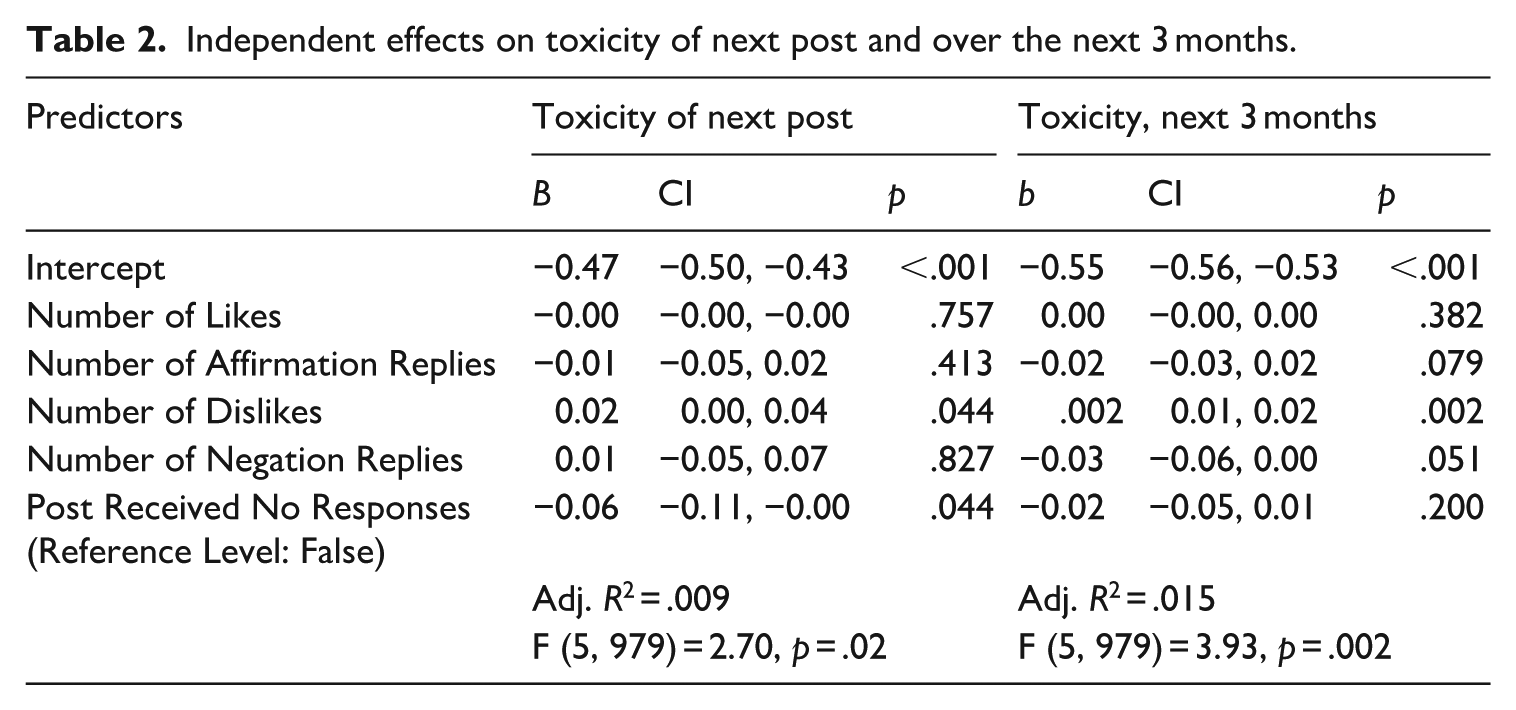

The first hypotheses predicted that toxicity increases as a result of (H1a) getting Likes and (H1b) getting affirmation replies to one’s hate posting. These hypotheses were not supported: There was no significant change in toxicity after a post received more Likes or more affirmations either on a user’s next post or over their next 3 months of posts; see Table 2.

Table 2. Independent effects on toxicity of next post and over the next 3 months.

H3 was generally supported, reflecting an aspect of the need-threat theory with respect to the effect of disapproval signals. When a post received Dislikes, the next post from the same user was significantly more toxic (b = 0.02, p = .044). Dislikes also increased toxicity over the next 3 months (b = 0.02, p = .002). Both findings support H3a. The results of H3b are ambiguous. Although the number of negation replies had no effect on the toxicity of one’s next post (p = .827), negation replies were marginally associated with an increase in toxicity over the next 3 months (b = −0.03, p = .051); see Table 2.

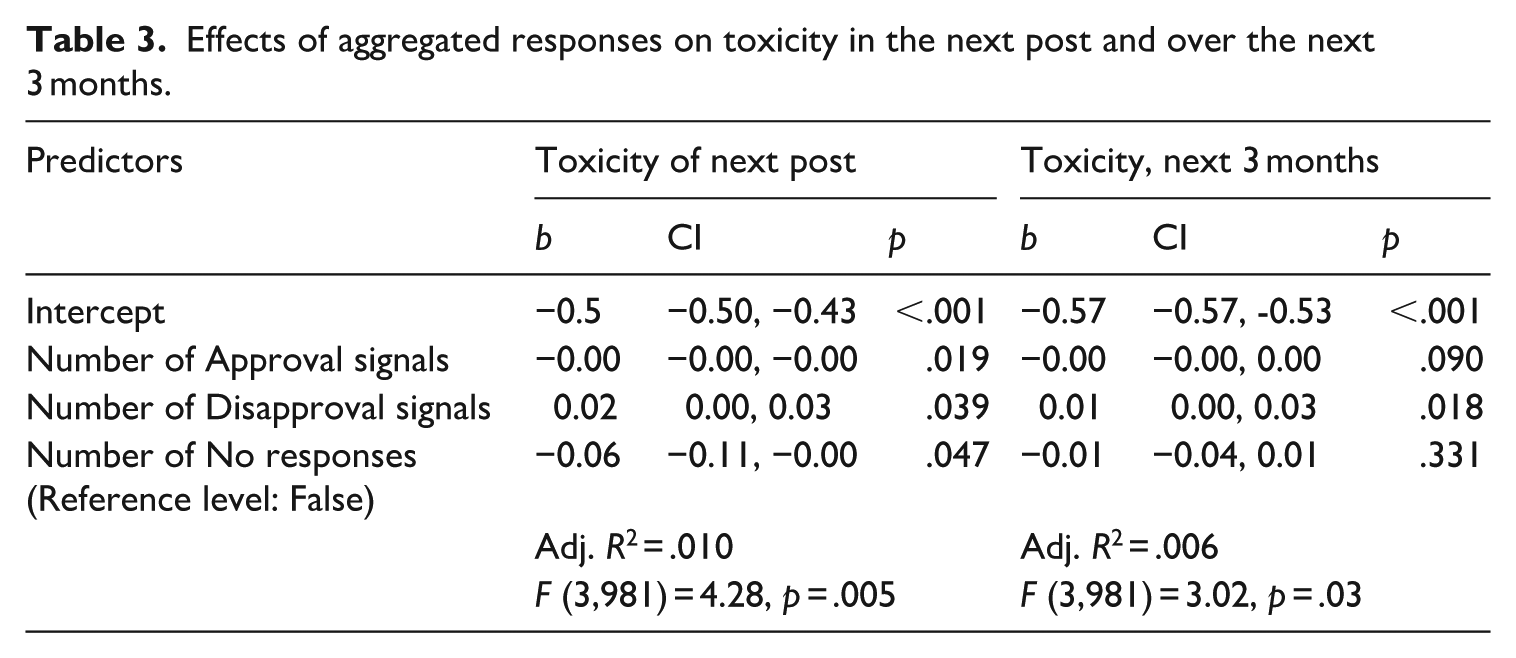

RQ1 asked if the combination of GES and written replies to hate messages have a greater effect on the toxicity of posters’ subsequent messages, than the effect of only GES or only replies. This analysis aggregated Likes and affirmations into a single variable, Approval Signals, and collapsed Dislikes and negation replies into another single variable, Disapproval Signals.

Both of these terms were significantly related to the toxicity of the next post, as was the term for no responses whatsoever. Approval Signals negatively predicted toxicity change. That is, the more Approval Signals one’s message received, the less toxic the individual’s subsequent post was, b = −0.01, p = .019; see Table 3. The aggregated Disapproval Signals also had a significant effect on toxicity change, similar to that of Dislikes: The more Disapproval Signals a user’s message received, the more toxic the user’s next message was, b = 0.02, p = .039. Receiving no responses, an unaggregated dummy variable, predicted the same reduction of toxicity as in the previous analysis.

Table 3. Effects of aggregated responses on toxicity in the next post and over the next 3 months.

These analyses were repeated using toxicity change over the next 3 months as the outcome variable (see Table 3). This model yielded a significant effect only for Disapproval Signals, which increased a user’s toxicity over the next 3 months, b = 0.01, p = .018; neither Approval Signals nor the absence of any response had an effect.

The answer to RQ1 is that the combined effects of Likes and affirmation replies do influence the toxicity of a user’s next post more than either factor does on its own, but the effect is unexpectedly negative: Approval Signals in aggregate reduced the toxicity of the next post.

The next hypotheses offered competing predictions. H2a predicted that individuals who get relatively less social approval for their hate messages become less hateful afterwards, because their behavior did not accrue the social approval reward that was sought, and behavior that goes unrewarded diminishes. H2b predicted the opposite: A relative deficit of social approval for one’s hate posting leads individuals to become more hateful in their subsequent messages on the presumption that they perceive that more extreme hateful messages attract more social approval (e.g., Frimer et al., 2023; Kim et al., 2021). Using a dummy variable representing no response at all to one’s hate post as the predictor, H2a was partially confirmed: Getting no response at all led to a reduction in toxicity of a user’s next message (p = .034; see Table 2), although it had no effect over the span of 3 months’ subsequent posting (p = .200). This finding contests H2b, which had predicted that getting no responses to one’s hate posts leads to an increase in subsequent hatefulness.

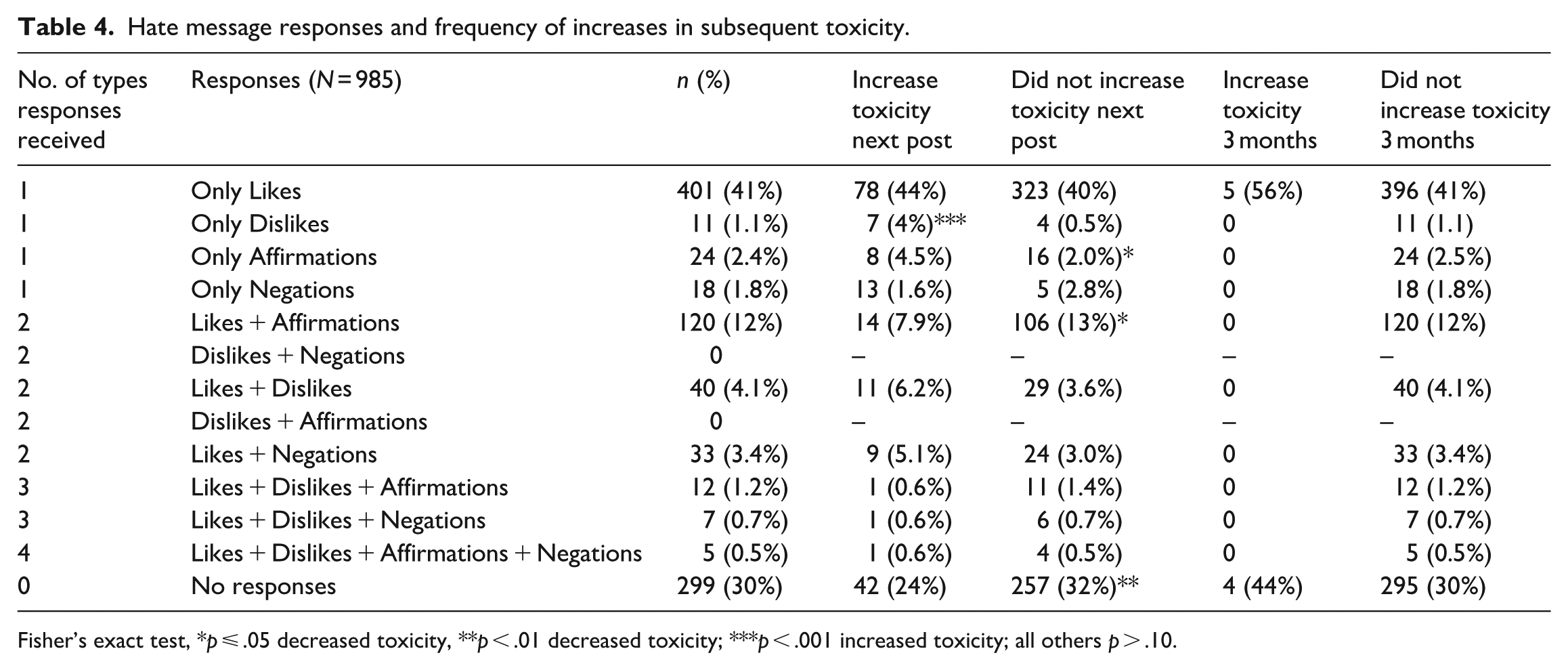

H4 predicted that when a user’s hate messages accrue social disapproval responses in the form of Dislikes or negation messages, individuals post more hateful messages subsequently if their original hate post concurrently accrues signals of social approval. Assessing this hypothesis requires identifying the original hate posts that received more than one kind of response and whose responses reflected both disapproval and approval signals.

Table 4 displays the frequency of each pattern of responses to hate messages, including those reflecting only one type of response, or no response, and every possible combination of response types. It was more common for a hate message to receive only one type of response (46.3%, with 41% comprising only Likes), or no responses (30%), than to get more than one type of response (28.5%). The hypothesis test focused on the cases in which a hate message received Likes and Dislikes (n = 40); Likes and Negation Replies (33); Likes, Dislikes, and Affirmation Replies (12); Likes, Dislikes, and Negation Replies (7); and Likes, Dislikes, Affirmation, and Negation Replies (5); there were no cases of Dislikes and Affirmation Responses.

Table 4. Hate message responses and frequency of increases in subsequent toxicity.

Fisher’s exact test, *p ⩽ .05 decreased toxicity, **p < .01 decreased toxicity; ***p < .001 increased toxicity; all others p > .10.

Although each of these response patterns led some hate posters to post more toxic messages in their next post, somewhat more often the subsequent posts were not more toxic. Post hoc analysis looking at other combinations showed that receiving only Affirmation Replies, or a combination of Likes and Affirmation Replies, led to significantly more subsequent messages that did not increase toxicity than those that did increase in toxicity, paralleling the results of H2’s test. Similarly, getting no responses at all also led to more frequent subsequent messages that did not increase toxicity than those that did. Only when a hate post received strictly Dislikes or strictly written Negations were users’ subsequent posts more toxic.

The hypothesis test pooled the data on toxicity change for the next message among the six conditions that were relevant to the hypothesis, that is, those in which a hate message received both negative and positive responses. A one-sample t-test assessed whether the change was significantly different from zero. Results indicated that there was a significant change in subsequent toxicity following one’s post that received both negative and positive responses, M = −0.39, t(96) = −10.02, p < .001, but the combination led to a reduction rather than an increase in toxicity. This finding disconfirms H4.
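For readers unfamiliar with the test, the one-sample t statistic is the sample mean divided by its standard error, with n − 1 degrees of freedom; the reported t(96) therefore corresponds to 97 pooled posts. The sketch below uses made-up toxicity-change values, and the function name `one_sample_t` is hypothetical. It computes only the statistic and degrees of freedom, because Python's standard library has no t distribution from which to obtain a p value.

```python
import math
import statistics


def one_sample_t(changes, mu0=0.0):
    """Return (t, df) for a test of whether mean(changes) differs from mu0."""
    n = len(changes)
    mean = statistics.fmean(changes)
    # Standard error: sample standard deviation (n - 1 denominator) over sqrt(n).
    se = statistics.stdev(changes) / math.sqrt(n)
    return (mean - mu0) / se, n - 1


# Hypothetical toxicity changes for five posts that received mixed responses.
changes = [-0.5, -0.3, -0.4, -0.2, -0.6]
t_stat, df = one_sample_t(changes)
```

A negative t statistic, as in the analysis above, indicates that the mean change falls below zero, that is, a reduction in toxicity.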

Another analysis followed the same procedure to assess toxicity change in these same pooled conditions, where hate posts received both negative and positive responses, but with regard to hate posters’ average toxicity change over the next 3 months. Toxicity significantly declined in these conditions, M = −0.52, t(96) = −26.73, p < .001. In both the short term and the long term, accruing mixed responses led to a reduction in toxicity, contrary to H4.

An additional set of t-tests was conducted to compare the toxicity change between the hate posts that received both negative and positive responses and those that only received negative responses. When a hate poster received only negative responses, the change in toxicity of their next post (M = −0.28) was not significantly different from that of posters who received a mixture of positive and negative responses, t(43.41) = −1.32, p = .192. Similar results were observed for the long-term comparison: When a post received only negative responses, the mean toxicity change over 3 months was −0.52, t(40.38) = −0.08, p = .94. These results imply that receiving mixed responses is not a significant factor affecting toxicity change, compared with only receiving negative responses, further contradicting H4.
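The fractional degrees of freedom reported above (43.41 and 40.38) are what Welch's unequal-variance t-test produces. The text does not name the variant, so treating it as Welch's test is an assumption. The sketch below uses made-up values and a hypothetical function name, `welch_t`, to show how the statistic and the Welch-Satterthwaite degrees of freedom are computed.

```python
import math
import statistics


def welch_t(a, b):
    """Return (t, df) for Welch's two-sample t-test on samples a and b."""
    # Squared standard error of each sample mean.
    va = statistics.variance(a) / len(a)
    vb = statistics.variance(b) / len(b)
    t = (statistics.fmean(a) - statistics.fmean(b)) / math.sqrt(va + vb)
    # Welch-Satterthwaite approximation; generally not an integer.
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Hypothetical toxicity changes: negative-only versus mixed responses.
negative_only = [1.0, 2.0, 3.0, 4.0, 5.0]
mixed = [2.0, 4.0, 6.0, 8.0]
t_stat, df = welch_t(negative_only, mixed)
```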

Discussion

These analyses of social responses to hate messages, in the context of Gab, offer important findings. The findings reinforce the importance of examining the social responses that hate messages accrue, in terms of Likes, Dislikes, and written replies, and how those responses affect whether hate posters increase or decrease their subsequent hatefulness, as shown in their messages’ toxicity scores. They clearly show a significant effect of getting negations to one’s hate messages (Dislikes and written disapproval messages), a kind of feedback that is rarely, if ever, explored in the analysis of hate messaging. Although they seldom appear, when one’s hate message accrues Disapproval signals, there is a significant increase in one’s subsequent hatefulness, regardless of any additional presence of social approval signals. These findings support one of the contentions of the need-threat model of responses to social media feedback: Getting dislikes is more encouraging than getting no response to one’s message at all. Disapproval signals increase hate posters’ vitriol not only in a user’s next post but over the next 3 months as well. The current results cannot determine whether getting Dislikes enrages the poster, or whether it activates a cycle consistent with frustration/aggression theory (Kruglanski et al., 2023). Nonetheless, negations encourage posters’ greater subsequent toxicity, quite the opposite of what intuition, and prior studies of Likes, predicted.

The findings that approval signals reduce rather than exacerbate hate are unique among the studies that have examined this relationship to date. They challenge tenets of the social approval theory of online hate in this regard. Instead, they reflect recent speculation that the posting of hate messages in order to acquire social approval acts as a psychological drive (Hull, 1943; Walther, 2024b). That is, if individuals are driven to acquire social approval (as they are for food, water, safety, etc.) and if they post hate messages in order to get that approval, getting it should temporarily satiate the need, and reduce the force of the drive. In other words, hate posters need approval, and once they get it, they then do not need it as much for a time, and their efforts to get it (that is, the hatefulness of the messages they use to get approval) recede. This account is also consistent with the finding that Likes and affirmations to one’s hate posting led to a small and short-term reduction, rather than a longer, 3-month reduction, in subsequent toxicity.

Alternatively, the anomalous findings between this study and many others may pertain to the nature and culture of the platform, Gab. Gab is well known for its hospitality to racist, sexist, anti-Semitic, and other hate messaging (Jasser et al., 2023). There seems to be an overwhelming normalcy and potential expectedness of receiving affirming responses to one’s hate messages in Gab. It is a hater-rich environment, in contrast to a target-rich environment (Daniels, 2017), a relatively homophilous community where individuals can readily accrue reinforcement by expressing supremacist, dehumanizing, degrading comments about minority groups and undesirable individuals. This hater-rich environment is abundantly clear when one compares the responses to hate messages in these data to similar analyses from a more mixed environment, Twitter.

An analysis by Mathew et al. (2018) on responses to hate messages on Twitter reflected overwhelmingly disapproving (85%) replies. In the Gab data, 55.4% of hate messages received approving responses, and another 30% received no response. The 1000 hate messages in Gab accrued 4024 Likes and only 194 Dislikes, an average of two-tenths of a Dislike per hate message. Within this context, approval responses are overwhelmingly typical. Disapproval responses, in contrast, stand out; they are strongly atypical. Approval messages, despite (or because of) their overwhelming incidence, may go relatively unnoticed and levy no significant effect. Such is the case on Facebook, according to Hayes et al. (2016), where people Like others’ posts rather automatically and without deliberation, dampening the impact of Likes on receivers. In contrast, the atypical appearance of disapproval signals may arouse a “negativity effect” (Kanouse and Hanson, 1972), in which people give disproportionately more weight to negative responses because of the very rarity with which people generally encounter negative expressions, leaving positive information nondiagnostic and uninformative.

The finding that no response at all to one’s hate posting (no Likes, Dislikes, affirming or negating comments) leads to a short-term reduction of toxicity is similar to a previous finding that significantly fewer Likes and retweets than usual, on Twitter, also led to a reduction in subsequent toxicity (Jiang et al., 2023). The results suggest that a lack of unsupportive responses, not just a lack of supportive responses, leads to a reduction of hatefulness, which is strongly in line with predictions of the need-threat theory. It appears that hate declines when it fails to garner attention, not just when it fails to garner adulation. At the same time, the social approval theory may have misunderstood the meaning of disapproval relative to the meaning of no response. The theory specified that acquiring insufficient approval can lead hate posters to express more hatefulness, and that disapproval signals impart no direct effect. Further consideration should be given to the possibility that overt disapproval constitutes lack of approval, in hate posters’ experience. If disapproval signals a lack of sufficient approval, the theory can be made sensible again. Dislikes, interpreted as insufficient approval, may prompt the increase in subsequent hatefulness that the theory previously predicted to result from a dearth of approval absolutely.

A further contribution of this study is its focus on a platform where both Likes and Dislikes are possible. Most existing empirical studies of online hate rely on Twitter data (Siegel et al., 2021), where no Dislike GES exists; both positive and negative GES responses exist on Gab, Facebook, YouTube, and elsewhere. An important question for future research, looking across platforms, will be to see how users on disparate channels signify disapproval in written or GES form when there are greater or lesser options for such signaling and whether the impact of these semiotic systems is similar or different.

Implications for hate mitigation

The findings that dislikes and disapproval messages escalate rather than de-escalate hate suggest caution in promoting and adopting counterspeech strategies. Social media users utilize counterspeech to fight back against online hate with messages that oppose hate posting, using a variety of message strategies (Baider, 2023). Message strategies include denigrating a hate poster or criticizing a hate message, or condemning the presence of hate messaging in a discussion more generally, among other strategies (Benesch et al., 2016; Mathew et al., 2018). This environment seems to be a space where signals of disapproval generally, including counterspeech, may actually be counterproductive, increasing rather than decreasing hate.

Reduced responses to hate lead to a decrease in future hatefulness, and this may also support suggestions elsewhere regarding online hate mitigation, advocating the use of shadowbanning or other moderation techniques that reduce the potential for hate posts to be seen and get feedback from others (Jaidka et al., 2023; Walther, 2024a). Shadowbanning allows users to post online, yet unbeknownst to them, hides their posts from other social media users. To the extent that shadowbanning might throttle both positive and negative feedback, it appears to provide an effective mitigation technique.

That said, there is no apparent reason to believe that the Gab culture or owners have any interest in curbing online hate. As Zannettou et al. (2018) noted, “From the very beginning, [Gab’s] operators have welcomed users banned or suspended from platforms like Twitter for violating terms of service, often for abusive and/or hateful behavior” (p. 1007). Its success may be due to how “It responds to the problem of sustaining community in an environment where hateful individuals are [elsewhere] increasingly deplatformed, hate speech is identified and regulated, and toxic communities are shut down” (Munn, 2022: 232).

Limitations

This study has several limitations. First, the dataset only contains posts until 2018, when GES responses on Gab included only Likes and Dislikes. More GES options (e.g. “angry,” “love,” “pray”) emerged later, to which users might react differently. It is also noteworthy that Gab went from private beta to public in May 2017 (Munn, 2022), overlapping with our sampling frame. Our analysis shows increases in user activity and toxicity corresponding with this change, consistent with Mathew et al.’s (2019) observation that hate posts became more frequent over a similar time interval. Had our sampling frame included activity only prior to this event, the generalizability of the results would be suspect. If anything, our results might be on the conservative side. Another limitation is that the measurement of toxicity and its change over time involves probability measures rather than measures of magnitude. An increase in toxicity scores could indicate that a message is more explicitly and overtly toxic, rather than more toxic per se. A continuous measure is needed to assess changes in hatefulness more accurately. In addition, the dataset does not contain the timestamp for the GES. Therefore, some of the Likes or Dislikes might have arrived after a user’s subsequent post. This is unlikely, given that most user interactions with posts happen within the first 2 hours after the posts are published (Glenski and Weniger, 2017). Our analyses of average toxicity levels over the 3 months after a posting are very likely to be immune to non-immediate GES postings.

Conclusion

This study examined the effect of social approval and disapproval on hateful messaging on Gab, a so-called fringe platform where hate and toxicity are allowed to thrive. Contrary to the first hypothesis, receiving positive responses to hate reduced subsequent hatefulness in the short term. Results also showed that individuals who got no response had less hateful subsequent postings. Users were influenced by Dislikes, which increased users’ toxicity in the subsequent post and the next 3 months. This study analyzed replies in their conversational context, that is, in direct reference to respective hate messages. It incorporated not only Likes but positive written responses to hate messages, and it combined Dislikes with negative written responses as well. The results suggest that principles and predictions of the social approval theory of online hate, as well as the need-threat theory of responses to social media postings, require reconsideration: For the former, with regard to its conceptualization of social disapproval signals and their effects, and for the latter, whether a reversal of predictions or interpretations is warranted when applied to the context of online hate posting.

Footnotes

Acknowledgements

The authors thank the following individuals for their invaluable assistance: Sarah Gamarnik, Mandy Hitchcock, Carley Palmer, Eva Peate, Dorian Sanchez, and Sasha von Ende. A previous version of this work was presented at the 2025 meeting of the International Communication Association.

Author contributions

Y.Y.: Data curation, Conceptualization, Methodology, Data Analysis, Writing-Original draft preparation. R.A.L.: Data curation, Conceptualization, Writing-Original draft preparation. Y.Z.: Data curation. J.B.W.: Conceptualization, Methodology, Writing-Original draft preparation, Supervision.

Data availability statement

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was partially supported by the Institute for Rebooting Social Media, Berkman Klein Center for Internet & Society at Harvard University.