Abstract

As legislators and platforms tackle the challenge of suppressing hate speech online, questions about its definition remain unresolved. In this review we discuss three issues: What are the main challenges encountered when defining hate speech? What alternatives are there for the definition of hate speech? What is the relationship between the nature and scope of the definition and its operationability? By tracing both efforts to regulate and to define hate speech in legal, paralegal, and tech platform contexts, we arrive at four possible modes of definition: teleological, pure consequentialist, formal, and consensus or relativist definitions. We suggest the need for a definition where hate speech encompasses those speech acts that tend towards certain ethically proscribed ends, which are destructive in terms of their consequences, and express certain ideas that are transgressions of specific ethical norms.

“Online violence does not stay online. Online violence leads to real world violence.”

Although hate speech has been at the centre of attention for some time, both its theoretical definition and its practical and legal regulation have proven elusive. On the one hand, the legal background has deep roots. On the other hand, new ethical and communicative challenges abound (Gillespie, 2018; Persily & Tucker, 2020).

At the core of these challenges, definitions of hate speech are often considered vague or contradictory (Brown, 2015). Assimakopoulos et al. (2017) state that there is “no universally accepted definition for [hate speech], which on its own warrants further investigation into the ways in which hate, in the relevant sense, is both expressed and perceived” (p. 3). Jurisprudence likewise exhibits a spectrum of competing descriptions of the basic ideas and principles involved in the definition, which inevitably engenders ambiguity (Fraleigh & Tuman, 2011).

In this narrative review (Baumeister & Leary, 1997), we approach hate speech with the intention to propose a new basis for a functional definition, with a focus on the online context. Since material on social media and other online fora is first analysed by algorithms, a definition of hate speech needs to enable coherent operationalisation and proper transparency, which current definitions do not.

We pose three questions: (1) What are the main challenges encountered when defining hate speech? (2) What alternatives are there for the definition of hate speech? (3) What is the relationship between the nature and scope of the definition and its operationability in an online context?

Legal Background

Social media have made hate speech a societal concern that must be regulated, but the notion builds upon very old legal traditions. Legal aspects are central, not least because hate speech could lead to litigation, which is one of the concerns of stakeholders. In fact, the most recent efforts regarding hate speech regulation are legal in nature; the European Commission's current proposal is to introduce hate speech as a new “area of crime” (European Commission, 2021).

The oldest Western historical parallel to hate speech is defamation. Roman law prohibited publicly shouting at someone adversus bonos mores (contrary to good morals). The evaluation of the offence was made based on the degree of libel resulting from the disrepute or contempt of the person exposed. The possible truth of the statements was not considered a justification for a public and insulting manner of delivery. Below, we will define this as a consequentialist view. A subcategory of defamation concerned statements that were said in private, in which case only the content was evaluated—a formal view (Birks, 2014; “Defamation,” 2022; Zimmermann, 1996, ch. 31).

In the West, two traditions have been established: the U.S. “anti-ban” position and the rest of the western world, which imposes penalties against hate speech (although defined differently in different countries (Heinze, 2016, ch. 2.1)). In the U.S., “hate speech” is used in legal, political and sociological discourse, whereas the phenomenon in Europe often relates to laws on the instigation of hate against groups of people (Germ. Volksverhetzung; Frommel, 1995). An early German legal formulation (StGB §130 [Germ. Strafgesetzbuch, the German Penal code]; StGB, 1871) has later developed into three parts, constituting “the public disturbing of peace through an attack on human dignity” (Germ. Menschenwürde; StGB §130; Bundesgesetzblatt, 1960): (1) instigation of hate, (2) urging towards violence or high-handedness or (3) insult, scorn or slander. Later iterations follow the same ideas but with more legal detail and severer penalties (Frommel, 1995).

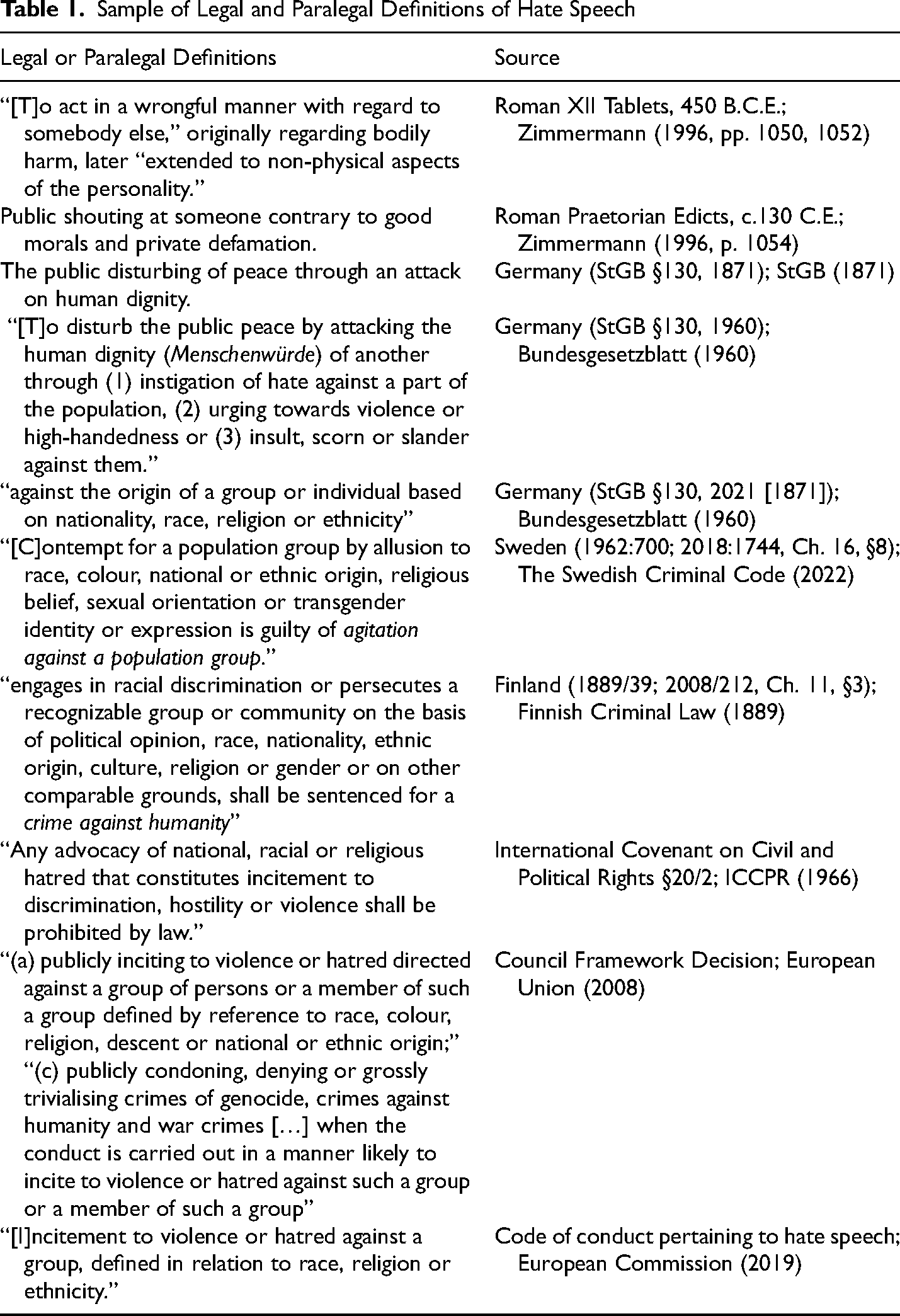

The formulations, as well as the legal headings, vary between countries. In Sweden, for example, this legislation falls under “offences against public order” in a 2019 law on “agitation against a population group.” The Finnish equivalent from 2011 falls under the heading “war crimes and crimes against humanity.” Compared with the Swedish law, six aspects of the Finnish law are identical and three are not. These represent typical variations in European legislation. See Table 1 for examples and sources.

Table 1. Sample of Legal and Paralegal Definitions of Hate Speech

In summary, matters that were originally covered broadly under the heading of defamation have, since the 1960s, separated into two laws in Europe: groups are now protected along with individuals, either under the heading of public order, as in Germany and Sweden, or under the heading of crimes against humanity, as in Finland. Although we mention only a few countries here as examples, their development is typical of other similar European countries (for an overview, see the Research centre on security and crime (RiSSC), 2017).

The Rationale for Hate Speech Bans

Since our interests relate to the process of banning hate speech, we do not need to elaborate on the basis of bans in general. In Europe, bans are already in place since both the penal codes quoted above are in effect bans on hate speech, and the EU's code of conduct constitutes a ban. The need for bans is mainly a U.S. debate. A few pointers should, however, be noted.

Advocating for free speech, Heinze (2016) suggests that a “longstanding, stable, prosperous democracy” (p. 9) has different needs from non-democracies or weaker democracies. This differentiation addresses the problem that arguments for hate speech bans often rest on comparisons, historical and contemporary, between situations that are not actually comparable. For example, the situation in the Weimar Republic is not comparable with the situation in Germany today, which is why examples of Nazi rhetoric seldom make a valid point for bans (Heinze, 2016).

Heinze presents the following taxonomy: “Arguments for or against bans generally fall under two familiar styles of moral reasoning, commonly known as consequentialist or ‘outcome-based,’ and deontological or ‘duty-based’” (pp. 32–34). These are not mutually exclusive and are often confused. The main problem with consequentialist arguments is that they lack empirical evidence. On the one hand, it is difficult to indicate causality between hate speech and its effects. On the other hand, it is impossible to indicate causality between bans and the amount of hate speech in society. Heinze's thesis is that weaker democracies may well have to depend on consequentialist arguments for bans, whereas stronger democracies “become more [greatly] bound to deontological criteria” (p. 35). Based on Heinze's analysis, we limit our context to that of long-standing, stable, prosperous democracies, such as Western countries.

Although the major social media platforms all originate in the anglophone West (the U.S. or Europe), the phenomenon is universal. Tackling intercultural variations, however, is a complex matter, which is why we delimit our study to the West. Like all communication, hate speech is a culturally embedded practice, but we cannot address culturally contingent challenges here.

Difficulties of Definition and Delimitation

In 2016, several major social media companies jointly agreed to the European Union's code of conduct pertaining to hate speech, which requires tech platforms to address material flagged as hate speech within 24 h (European Commission, 2019; Hern, 2016). This code gives a functional definition of hate speech as the incitement to violence or hatred against a group, defined in relation to race, religion or ethnicity. The code also emphasises the need to safeguard freedom of speech, even in relation to shocking, politically subversive or disturbing material (European Commission, 2019).

The tension inherent in the concept of hate speech comes to the fore in relation to its opaqueness. Definitions of hate speech are often considered vague or contradictory, and we have no universally accepted definition. Moreover, looking at definitions in the literature, the framing tends to be emotional and tinged with a moral tenor. For example, Kang et al. (2020) describe hate speech as “poisonous mushrooms” that proliferate in relation to populism specifically. The UN Strategy and Plan of Action on Hate Speech (United Nations, 2019) emphasises that “hate is moving into the mainstream,” undermining peace, democracy and even our common humanity. Similarly, Brudholm and Johansen (2018) remark that the current discourse indiscriminately construes hate and hate speech in no uncertain terms as “a vice, an evil and a threat” which “fuels terror and extremism,” and call for a more precise definition and nuanced understanding of the concept.

The danger of value-laden characterisations, in concert with an inherent vagueness, is that they open the door to a situation where any sensitive or emotionally inflammatory political opposition could be construed as hate speech and become a target for suppression.

The “hate” in hate speech is also often associated with some subjective experience that characterises the intention of the speech act in question, which, as stated, is often also emotionally framed. The notion that the key problem is an emotion, hate, presents significant difficulties of definition and delimitation (Waldron, 2012, pp. 35–36) and fails to adequately represent the many conceivable examples of harmful speech acts that have little relation to emotion.

Operationalising Vague Definitions

In his review of definitions of hate speech, Sellars (2016) notes that “the lack of definitions in scholarship translates to uncertain definitions in law and social science research and even more uncertain application of principles in online spaces” (p. 15). The complexity of what the definition must cover has resulted in either abstract and non-functional definitions or resignation; for example, Papcunová et al. (2021) argue that “the potential for finding one [a universal theoretical definition of hate speech] seems unlikely at the moment” (“Definitions of hate speech” section). The lack of consensus results in varying interpretations when platforms try to implement the EU's code of conduct.

Often, a broad definition is provided without a critical discussion. One of the more frequently used definitions is by Nockleby (2000). According to him, hate speech is “usually thought to include communications of animosity or disparagement of an individual or a group on account of a group characteristic such as race, colour, national origin, sex, disability, religion or sexual orientation” (p. 1277). However, with such a broad and abstract definition, implementation is difficult. For example, when Brown-Sica and Beall (2008), based on Nockleby's definition, suggest automated moderation and filtering as a means of dealing with hate speech in an online context, the definition raises the question: what exactly should be moderated or filtered? What exactly is meant by “communications of animosity or disparagement”?

Regarding automatic detection of hate speech (the primary contemporary gatekeeper in the process of hindering hate speech), we run into various challenges. First, there is a plethora of negative speech that is not hate speech. On Internet fora and comment spaces, people readily express discontent, resentment and blame regarding just about any type of issue. As non-constructive as such vilifications may be, they need not fall under a separate heading from incivility, rudeness or simply contentiousness. We agree with Rossini (2020), who notes the need to distinguish between uncivil and intolerant discourse. Unfortunately, the types of negative language that are not hate speech are difficult to separate from actual hate speech and furthermore, from such forms of hate speech that legally could or ought to be prosecuted; here, we run into a conundrum regarding the term “hate speech” itself.

Paasch-Colberg et al. (2021) note that hate speech as a term is an “explicit reference to the emotion of hate” and thus generally used as a “label for various kinds of negative expression by users, including insults and even harsh criticism.” Paasch-Colberg et al. attempt to clarify the situation by distinguishing actual hate speech from neighbouring concepts and creating a model to empirically distinguish hate speech from offensive language. They suggest: “In order to avoid assumptions about the intentions of the speaker or possible consequences of a given statement, we argue in favour of the legal origins of the term ‘hate speech’ and focus on discriminatory content and references to violence within a given statement” (p. 172). They suggest the following as prohibited: negative stereotyping; dehumanisation; expression of violence, harm or killing; and the use of offensive language (insults, slurs, degrading metaphors and wordplays).

Sellars (2016, p. 14) provides an overview of definition attempts and notes that “the consensus view appears to be that a wide array of different forms of speech could or could not fit a definition of ‘hate speech,’ depending on the speech's particular context, which rarely makes it into the definition itself.” Sellars does not provide a definition, but based on his literature overview, he (pp. 25–30) suggests “some common themes” for a definition: (1) targeting a group or individual as a member of a group, (2) content in the message that expresses hatred, (3) the speech causes harm, (4) the speaker intends harm or bad activity, (5) the speech incites bad actions beyond the speech itself, (6) the speech is either public or directed at a member of the group, (7) the context makes violent response possible, (8) the speech has no redeeming purpose.
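The eight themes above can be read as an annotation schema. The following is a minimal sketch under that assumption: the class and field names are hypothetical, the yes/no judgements are assumed to come from a human annotator, and nothing here decides whether a message is hate speech, since Sellars deliberately does not turn the themes into a definition.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ThemeAnnotation:
    """One annotator's yes/no judgement per theme for a single message.

    Field names are illustrative; the numbering follows the list of
    themes attributed to Sellars (2016) in the text.
    """
    targets_group: bool              # (1) targets a group, or an individual as a group member
    expresses_hatred: bool           # (2) content of the message expresses hatred
    causes_harm: bool                # (3) the speech causes harm
    intends_harm: bool               # (4) the speaker intends harm or bad activity
    incites_bad_actions: bool        # (5) the speech incites bad actions beyond itself
    public_or_directed: bool         # (6) public, or directed at a member of the group
    violent_response_possible: bool  # (7) the context makes violent response possible
    no_redeeming_purpose: bool       # (8) the speech has no redeeming purpose


def themes_present(annotation: ThemeAnnotation) -> list[str]:
    """Return the names of the themes the annotator marked as present."""
    return [f.name for f in fields(annotation) if getattr(annotation, f.name)]


example = ThemeAnnotation(
    targets_group=True,
    expresses_hatred=True,
    causes_harm=False,
    intends_harm=False,
    incites_bad_actions=False,
    public_or_directed=True,
    violent_response_possible=False,
    no_redeeming_purpose=False,
)
print(themes_present(example))
```

Recording the themes separately, rather than collapsing them into a single label, keeps visible which aspects of a message an annotator relied on, which is the contextual information that Sellars notes rarely makes it into definitions.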

Although it is generally easy to identify words and phrases as negative or vilification-based without context, it is rarely possible to know if they are, in fact, hate speech. The matter is further complicated by concealment practices, that is, the use of terms that are harmless in themselves but serve as code words for racial slurs or the like (Sellars, 2016, pp. 14–15). Heinze (2016, p. 19) suggests the term “hateful expression,” noting that non-verbal acts should also be included.

Finally, definitions are constructed for various purposes. Sellars (2016) groups these into academic attempts, legal attempts, and attempts by online platforms. Sellars’ overview (p. 14) deals mainly with racist speech. In this study, we discuss a definition of hate speech for the purpose of an ideologically responsible regulation and an operational detection of hate speech which needs to include all kinds of hate speech.

EU Efforts

Two contemporary international efforts that stand out are the International Covenant on Civil and Political Rights (ICCPR, 1966; entry into force 1976) and the EU's Framework Decision on combating certain forms and expressions of racism and xenophobia by means of criminal law (European Union, 2008). The ICCPR's central statement is brief, whereas the EU framework is detailed (see Table 1), indicative of the development during the 42 years between the two.

As the EU ramps up its efforts to stop hate speech, the commission focuses on hate speech as an “area of crime” that connects with a broader group of crimes (European Commission, 2021, p. 7). Particularly, hate speech is considered harmful due to its impact on common values, individual victims and their communities, society at large and the sheer scale of both hate speech and hate crime (pp. 8–11).

The perceived need for action has increased during recent years: “The COVID-19 pandemic has created an atmosphere in which hate speech has flourished, becoming ‘a tsunami of hate and xenophobia’” (European Commission, 2021, p. 16). The increase has affected groups who are blamed for being major spreaders of the virus, such as Roma and migrants, as well as persons perceived to be of Asian origin. At the same time, antisemitic postings have increased considerably. Furthermore, hostile messages increased against people identifying as LGBTQI and older people (European Commission, 2021, pp. 16–17).

Hate speech is already combatted through legislation in many, but not all, EU member states. Furthermore, different aspects are criminalised in different countries. For example, 20 member states criminalise hate speech on the grounds of sexual orientation, but only six member states do so on the grounds of age (p. 13). As the commission argues, the cross-border dimension of hate speech requires international measures that have a common basis (European Commission, 2021, pp. 14–15).

Platform Efforts

The platforms were originally not required to regulate their content to filter out hate speech. Under the general principle of freedom of speech, even “unsuitable” speech has generally been seen as tolerable. However, some moderation has, from the beginning of the history of social media platforms, been meaningful as prevention against litigation and as a safeguard against dissatisfaction and controversy among platform members and, by extension, against the platform itself. According to their user agreements, platforms reserve the right to impose sanctions, including the banning of a user, at their discretion, without any legal process (e.g., Meta, 2022a, 2022b). Furthermore, Gillespie (2018) shows how moderation has always been an integral part of social media business models where advertising is moderated in different ways, for instance, in combination with user data for targeted advertising.

However, the borders of what is and is not tolerated have remained unclear. As Sellars (2016, p. 21) explains, it is “unnecessary—and perhaps unwise—for a platform to neatly delineate the precise scope of unacceptable behaviour on their platform. Platforms want the flexibility to respond at will.” This situation is unsatisfactory, as the actual practices and internal norms within different platforms are not publicly available. Given the enormous influence of the biggest social media platforms, the imbalance between that influence and their regulation is at odds with the academic and legal efforts to define the problem and find working implementations thereof.

Nonetheless, this situation is changing. While awaiting the results of the EU proposal regarding hate crime (for current status, see Bąkowski, 2022), the most notable governmental effort in effect for controlling hate speech on online platforms is currently the EU Code of Conduct on Countering Illegal Hate Speech Online (European Commission, 2019), which is an implementation of the EU Framework. The code of conduct requires service providers to (1) prohibit hate speech on their platforms, (2) respond quickly to reports of hate speech, (3) provide updates on enforcement statistics, and (4) promote counter-speech. This EU resolution mentions protected characteristics, but the Charter of Fundamental Rights already, in fact, prohibits “any discrimination based on any grounds” (European Parliament, 2016, p. 81).

The sixth evaluation of the EU code of conduct (European Commission, 2021) draws attention to a decline in both notifications reviewed within 24 h and the average removal rate. Furthermore, in 10–12 per cent of the reported cases, Meta (Facebook and Instagram) and YouTube disagreed in their assessment with the notifying organisations. The report states: “This shows the complexity of making assessments on hate speech content and calls for enhanced exchanges between trusted flaggers, civil society organisations and the content moderation teams in the IT companies” (European Commission, 2021, p. 2). However, what is needed is not only “enhanced exchanges,” but a clearer understanding of what constitutes hate speech.

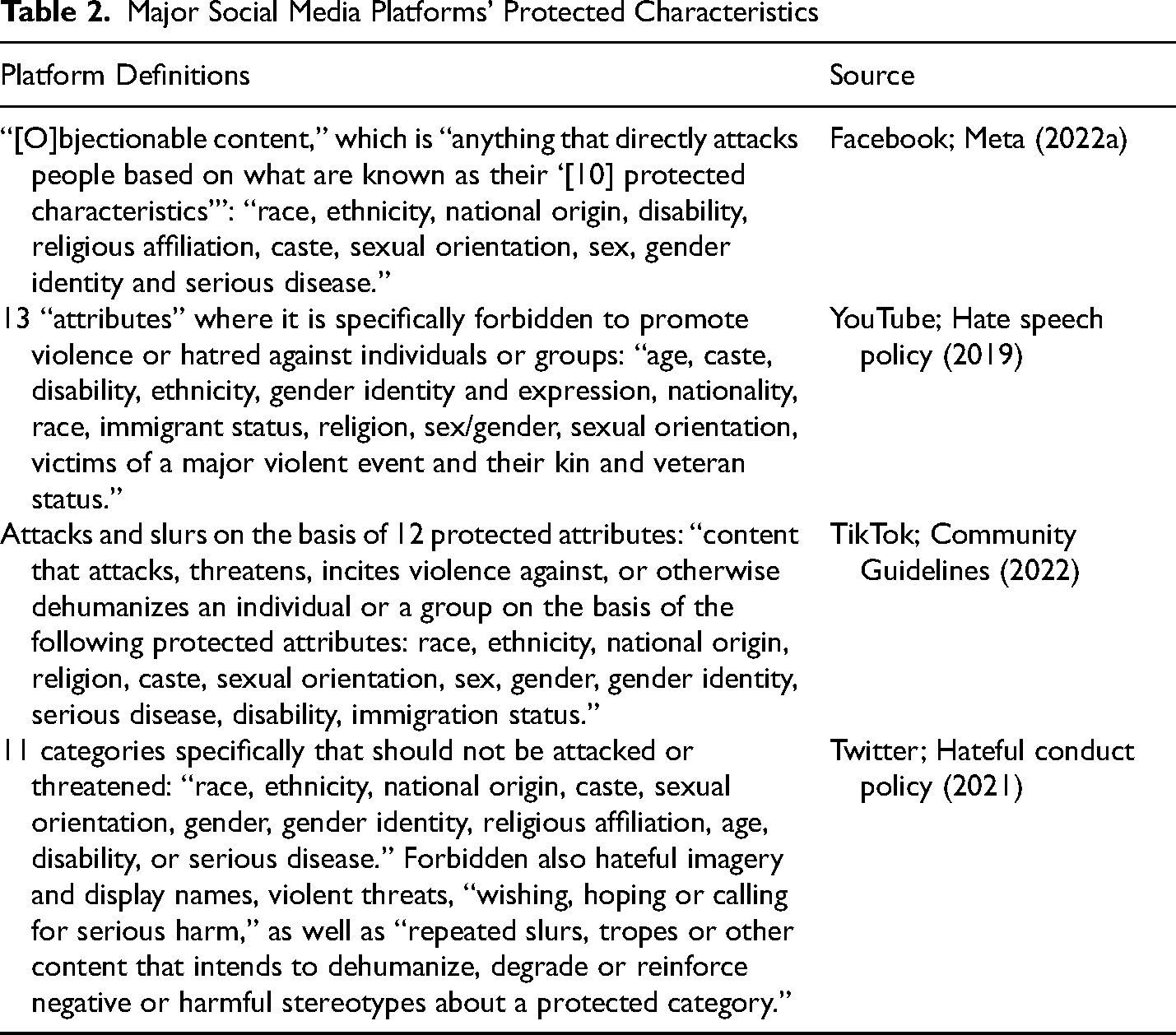

The major social media platforms—Facebook, YouTube, Instagram, and TikTok (Walsh, 2022)—have adopted guidelines and practices against hate speech. In the platforms’ respective terms of service, the members in principle agree to refrain from hateful conduct, the promotion of violence, threats, and discriminatory practices. The grounds of discrimination are based on a set of protected characteristics or attributes, which vary between platforms. The following are included in all guidelines: race, ethnicity, nationality, religion, caste, disability, sexual orientation, and gender identity. The following are included in some, but not in all: sex, gender expression, serious disease, immigrant status, victims of a major violent event and their kin, veteran status, and age. These differences aside, the main challenge is that “[t]here is no universally accepted answer for when something crosses the line” (Allan, 2017, para. 7). See Table 2 for examples and sources.

Table 2. Major Social Media Platforms’ Protected Characteristics

TikTok has complemented their list by explicitly forbidding hateful ideologies, which they, among other things, define as content that “praises, promotes, glorifies, or supports any hateful ideology (e.g., white supremacy, misogyny, anti-LGBTQ, antisemitism)” or that “contains names, symbols, logos, flags, slogans, uniforms, gestures, salutes, illustrations, portraits, songs, music, lyrics, or other objects related to a hateful ideology” (Community Guidelines, 2022).

Hate Speech Regulation in Practice—Facebook's “Tiers”

Meta has recently tried to explicate their take on hate speech on Facebook (Meta, 2022a). We do not have access to how they arrived at their lists, but they give the impression that some of the examples cover actual cases where Facebook moderators have enforced removals or bans. Other elements in the lists are more general, and seem to be formulated to cover various forms of possible hate speech.

For Facebook, Meta operates with levels of harm, grouping hate speech into three “tiers” ranging from the most harmful to somewhat less harmful. A fourth category is contingent on more information before a decision is made. This is currently the most detailed effort to regulate hate speech in the online environment (for the lists in full, see Meta, 2022a).

Tier 1 contains written or visual “content targeting a person or group of people [based on] their protected characteristic(s) or immigration status” through “violent speech” or “dehumanising speech or imagery.” Dehumanising speech can, for example, relate to animals that are culturally perceived as intellectually or physically inferior, filth, disease and faeces, sub-humanity, criminals, or statements denying existence.

Tier 2 also concerns “targeting a person or group of people [based on] their protected characteristic(s)” using, for example, “generalisations that state inferiority, expressions of disgust or cursing.”

Tier 3 contains content with the same purpose, but is considered less harmful, such as “segregation in the form of calls for action, statements of intent, aspirational or conditional statements or statements advocating or supporting segregation.” Meta provides examples, such as “black people and apes or ape-like creatures; Jewish people running the world or controlling major institutions such as media networks, the economy or the government; Muslim people and pigs; referring to women as property or ‘objects.’”
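To make the ordering of the tiers concrete, the sketch below maps the categories named above to their tiers and returns the most severe tier among a message's labels. It rests on several assumptions: the label strings are hypothetical names for the quoted categories, the labels are assumed to come from a human moderator or an upstream classifier, and the rule that the most severe tier wins is an illustrative choice rather than a documented Meta procedure. The fourth category, which depends on additional information, is left out.

```python
from enum import IntEnum
from typing import Iterable, Optional


class Tier(IntEnum):
    """Lower number = considered more harmful, following the text."""
    TIER_1 = 1
    TIER_2 = 2
    TIER_3 = 3


# Hypothetical label names for the categories quoted in the text.
CATEGORY_TO_TIER = {
    "violent_speech": Tier.TIER_1,
    "dehumanising_speech_or_imagery": Tier.TIER_1,
    "generalisation_stating_inferiority": Tier.TIER_2,
    "expression_of_disgust": Tier.TIER_2,
    "cursing": Tier.TIER_2,
    "segregation": Tier.TIER_3,
}


def most_severe_tier(labels: Iterable[str]) -> Optional[Tier]:
    """Return the most severe tier among known labels, or None if none match.

    Unknown labels are ignored rather than treated as violations.
    """
    tiers = [CATEGORY_TO_TIER[label] for label in labels if label in CATEGORY_TO_TIER]
    return min(tiers) if tiers else None


print(most_severe_tier(["cursing", "segregation"]))
print(most_severe_tier(["harsh_criticism"]))
```

The lookup only restates the tier structure; the difficult step, deciding which label applies to a given message in its context, remains outside the sketch.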

Facebook's empirical reality seems to have guided their take on hate speech and other parts of their community standards. Most, if not all, of Tier 1 seems to stem from a process best described as some sort of consensus. It is probably not far-fetched to conclude that the words and phrases mentioned have been flagged by Facebook users thousands of times. However, relying on general Facebook users for what is objectionable creates trend-sensitive, relativistic, and almost arbitrary grounds for evaluation.

The lists also seem to exhibit hate speech that could be defined on formal grounds. Some words and phrases are hate speech per se, regardless of user reactions, due to culturally established traditions on what is objectionable. Some of the concrete items on the highest tier reflect culturally or temporally bound concerns, making them transient. Some slurs, such as typical antisemitic slurs, have endured for a long period, whereas others, such as current transgender slurs, are relatively new. Others will inevitably change over time. Furthermore, this development must be followed up separately for each language, cultural sphere, and area (noted, also, by Allan (2017), VP for Facebook's EMEA public policy: “Regional and linguistic context is often critical, as is the need to take geopolitical events into account”).

The lower tiers also exhibit hate speech that one could arrive at on the basis of teleological and consequentialist arguments. For example, “expressions of dismissal” are always negative, whereas calls to action for segregation may have negative consequences. We note the complexity of Meta's lists and how they give the impression that, when we reach the level of actual practice, a simple definition may not cover all forms of hate speech.

Tendencies of Definition

On a conceptual level, hate speech in contemporary discourse could be described as a kind of speech act that contextually elicits certain detrimental social effects that are typically focused upon subordinated groups in a status hierarchy (Demaske, 2021, pp. 10–14; United Nations, 2019, p. 2). When attempting more exact specifications, we encounter a set of challenges. For instance, if we characterise hate speech teleologically (Baider, 2017) and define it by the harms it entails, the vagueness pertaining to these injuries gives us an unworkably broad spectrum of possible hate speech acts.

These considerations notwithstanding, current injunctions are formulated in a broadly consistent manner, which implies that implicit considerations guide the actual socio-legal construction of hate speech prohibitions. These considerations could include worldviews, values, political ideologies or even matters of aesthetics. The UN Strategy and Plan of Action on Hate Speech (United Nations, 2019) refers specifically to the violation of certain norms and values and a weakening of the “pillars of our common humanity,” which anchors the entire institution of hate speech suppression in specific values and a particular worldview. The discursive framing and social construction of protected groups, in relation to their historical and cultural situation, is an example of such tacit factors.

In effect, such values amount to a formal aspect of the prohibited speech acts in question, aside from the more ambiguous characterisations coupled with teleological definitions; that is, the anchoring of the institution of hate speech suppression in values or worldviews would imply that the prohibitions (also) target particular ideological or conceptual content. As mentioned above, TikTok specifically lists elements considered “hateful ideology” (Community Guidelines, 2022).

Arguably, the formal targeting of ideological or conceptual content would nonetheless be inevitable in practice, as an institution of this sort crystallises and establishes its practices and normal operations. In this situation, we will inevitably end up with a specifiable set of suppressed ideas, whether or not this set was initially targeted for discernible content of meaning. A similar effect would seem to follow from the tacit reproductions of formal content by the paradigm examples that guide the practices of suppression in actual institutional practice.

A definition of hate speech that combines teleological aspects with a formal delineation of content would inevitably produce a certain tension in relation to the precepts of liberal democracy. The notion that a “ban on ideas” is being practised would clash with central Enlightenment precepts. Nonetheless, an explicit restriction of such ideas has significant potential benefits, not least in relation to increased transparency, which, in turn, may facilitate a more open deliberation on these types of institutional processes that are now proliferating. It would also address the issues of ambiguity that stem from weak characterisations, by providing clear ideas, values or views that should not be publicly transgressed.

Paradoxically, the ambiguity might be significantly more conducive to misuse than an outright ban of specific ideas. In the absence of a clear and discernible set of suppressed perspectives or propositions, the prohibition of speech acts becomes inherently vague and malleable, subject to arbitrary or unpredictable implementation. An outright ban would also allow prohibitions to be questioned and targeted by dissenters since they would need to have a rationale that can be debated.

The question of whether such a formal explication would conflict with the free exchange of ideas is central. Arguably, the conflict will be a fact whether or not we affirm the presence of such prohibitions, if the implicit prohibitions are an inevitable aspect of this type of institution, or if they can be shown to already guide hate speech suppression in practice (Malik, 2015).

Four Possible Definition Modes

Based on previous suggestions and the preceding observations, we suggest that there are four general definition modes for hate speech, given the goal of formulating definitions that are both formally coherent and possible to operationalise: a teleological, a pure consequentialist, a formal (in Platonic or Aristotelian terms), and a consensus or relativist mode.

Teleological definitions will frame hate speech as those speech acts that, with regard to their inherent character or intention, tend towards specific negative effects, while also possessing an inherent or contextually relevant causal relation to these ends. These negative effects may then be construed in relation to fundamental rights and liberties.

Pure consequentialist definitions will consider speech acts as hate speech if they have an inherent or contextual causal relationship to specific negative effects. In this context, consequentialism does not necessarily imply utilitarian normative ethics as per Anscombe’s (1958) terminology. Consequentialist definitions do not invoke the inherent character or intention of the speech act to identify the targeted category of speech.

A formal definition dispenses with the effects altogether and focuses on the forms or ideas expressed, such that certain conceptual positions per se are suppressed or prohibited. This definition may be operationalised with no explicit regard to the rationale of the suppression, such as the effects of particular speech acts or the truth or falsity of the positions; they can, for instance, be framed as an unambiguous list of ideas that may not be disseminated.

Finally, a consensus definition will construe hate speech in relation to some form of general agreement as to what speech acts will be categorised in the definition. This definition would also overlap with something akin to the fiat of an authoritarian rule, which arguably amounts to the consensus of a governing stratum in practice.
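The difference between the four modes lies in which criteria each one consults. The sketch below encodes that reading, assuming that the four boolean inputs are judgements supplied by a human assessor; all names are hypothetical, and the code is an illustration of the distinctions drawn above, not a detection method.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    TELEOLOGICAL = "teleological"
    CONSEQUENTIALIST = "pure consequentialist"
    FORMAL = "formal"
    CONSENSUS = "consensus or relativist"


@dataclass(frozen=True)
class Assessment:
    """Hypothetical human judgements about one speech act."""
    tends_towards_harm: bool     # inherent character or intention tends towards negative effects
    causally_linked_to_harm: bool  # inherent or contextual causal relation to those effects
    proscribed_content: bool     # expresses an idea on a list of prohibited ideas
    agreed_as_hate_speech: bool  # covered by some form of general agreement


def satisfied_modes(a: Assessment) -> set[Mode]:
    """Return the definition modes under which the act would count as hate speech."""
    modes = set()
    # Teleological: character or intention AND a causal relation to the ends.
    if a.tends_towards_harm and a.causally_linked_to_harm:
        modes.add(Mode.TELEOLOGICAL)
    # Pure consequentialist: the causal relation alone; intention is not consulted.
    if a.causally_linked_to_harm:
        modes.add(Mode.CONSEQUENTIALIST)
    # Formal: the content alone; effects are not consulted.
    if a.proscribed_content:
        modes.add(Mode.FORMAL)
    # Consensus: agreement alone.
    if a.agreed_as_hate_speech:
        modes.add(Mode.CONSENSUS)
    return modes


act = Assessment(
    tends_towards_harm=False,
    causally_linked_to_harm=True,
    proscribed_content=True,
    agreed_as_hate_speech=False,
)
print(sorted(mode.value for mode in satisfied_modes(act)))
```

On this reading, any act that satisfies the teleological mode also satisfies the pure consequentialist one, whereas the formal and consensus modes are independent of effects altogether.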

Relationship to Other Definitional Models

We note that our four modes bear superficial similarities to the comprehensive “four bases of definition” of hate speech listed in Anderson and Barnes (2022, “What is Hate Speech?” section), which consist of (1) harm, (2) content, (3) intrinsic properties, and (4) dignity. However, we find that these bases exhibit issues of coherence similar to those of many other definitions. Most importantly, harm of some sort is central to all approaches towards hate speech, but Anderson and Barnes’ construal of harm is vague and does not consider how different perspectives on normative ethics reflect various approaches towards harm. Their position is basically a version of our consequentialist mode, yet one where the harm is accidental and not specifically related to content or context. Also, their subsequent distinction between content-based definitions and those based on “intrinsic properties” of the speech act does not hold, since both collapse into formal content. Finally, the dignity-based approach is, in Anderson and Barnes, basically a narrow form of the consequentialist mode that focuses on the speech act's effects in undermining the dignity of a particular group.

In a similar way, the Roman Praetorian edicts combine our consequentialist and formal modes (Zimmermann, 1996). The German criminal code (StGB, 1871) connects with the teleological approach by invoking the notion of human dignity. Most modern legislative approaches have a consequentialist and teleological character (European Parliament, 2016; The Swedish Criminal Code, 2022). Nockleby’s (2000) definition is formal-teleological, Paasch-Colberg's (2021) is mainly formal, while Sellars’ (2016) combines the teleological, formal and consequentialist modes (yet omits the consensus aspect).

With regard to the above, our four-pronged model seems to attain the objectives of other general definitional models, while adding a consensus perspective. Additionally, it provides a clear and reproducible operationalisation based on a comprehensive conceptualisation of all possible types of implemented hate speech suppression.

The Operationalisation of Hate Speech Injunctions

Our typology seems exhaustive and allows us to analyse the discursive tendencies of hate speech injunctions in practice. There does not seem to be a coherent way to definitionally frame an injunction against certain types of speech acts that does not amount to a prohibition against an ethical breach inherent to the act or its effects, a purely formal rejection of particular ideas or concepts, or a consensus or fiat stance as to which positions are to be suppressed in the relevant discourses.

Injunctions against hate speech almost always invoke ethical principles, explicitly or implicitly. The teleological variants are anchored either in the active intention behind the speech act or in the inherent tendency of its concepts or propositions towards some form of ethical breach or violation of a normative principle, such as epistemic duties. The consequentialist variants can be characterised in the same way, although they jettison any reference to intentions. The purely formal models will refer to epistemic norms and duties or, in any event, to the desirability or value of the ideas as such. The consensus approaches or bans by fiat seem to be the only methods that, in principle, could disregard explicit ethical norms and anchor the injunctions in something like user consensus, but it is difficult to see how this could be used for trans-national agreements. Thus, values most probably need to be a determining factor of hate speech injunctions. Consequently, values ought to be scrutinised and made visible in deliberations on hate speech injunctions.

In addition to the ethical perspective, a further and more significant indirect observation is the tendency towards formal approaches (i.e., the actual bans of concepts) inherent in many positions. One could say that every mode of injunction must possess a cut-off, a scope for which propositions and ideas, formally speaking, must be disallowed.

In other words, teleologically motivated suppression will inevitably produce an implicitly or negatively defined set of propositions that cannot be admitted into discourse. This also holds for the consequentialist and consensus variants, albeit by other causal pathways. The only meaningful difference in relation to a formally defined list of prohibited ideas is ambiguity regarding the precise propositions that are members of the prohibited set, as well as an associated difficulty in clearly specifying the unique axiological rationale for the suppression of every member of the set. Neither of these two attributes is desirable.

Conclusions

Two millennia ago, publicly shouting at someone against good morals was prohibited. Today the “shouting” is both more widespread and more harmful. Identifying a set of protected characteristics to protect human dignity marked an important step forward. Now more detailed descriptions of what kind of speech actually violates these characteristics are needed. These descriptions should be based on a sound understanding of what a general definition of hate speech entails.

Our study opened with the following questions: (1) What are the main challenges encountered when defining hate speech? (2) What alternatives are there for the definition of hate speech? (3) What is the relationship between the nature and scope of the definition and its operationability in an online context?

As for (1), the characterisation of challenges depends upon the objectives. The general difficulty of balancing issues of safety and security with the precepts of free speech is compounded by other problems; depending on the subject position that we presume, there are many other outcomes to consider.

From the viewpoint of a tech platform, a definition or model of hate speech must mesh with its overarching goals relating to profit and expansion. Therefore, it must not negatively impact the quality or quantity of the products and services the platform delivers. Nor should it erode the corporation's important relationships with the public, other corporations, important non-governmental organisations or various governments (Gillespie, 2018).

In terms of the public's interests, a significant issue is the de facto encroachment on civil liberties that a corporate culture of censorship entails, not least in association with structures of automated interventions that afford little transparency or oversight. There are also issues pertaining to the subtle effects on culture, discourse, and relations that may arise from this sort of ubiquitous and potentially intrusive regulation.

In terms of (2), there seem to be four possible basic modes of definition in principle, which could be combined: (A) The teleological definition, which focuses on the intentionality and tendency of the speech act towards certain ends. (B) The pure consequentialist definition, which focuses on the effects of the speech act alone. (C) The formal definition, which builds on the essential character of the speech act and the ideas involved. (D) The consensus or relativist mode, where any speech act can be designated as hate speech by fiat.

Given the risk of suppressing speech that should not be labelled as hate speech, we are sceptical of the possibility of a systematic suppression of hate speech. However, if injunctions are to be institutionalised, we suggest that outright prohibitions of specified ideas be implemented and for clear reasons, rather than ambiguous suppressions of a vague category of speech acts.

Such an outright ban might clash with key principles of liberal democracy, but the hate speech provisions nonetheless inevitably tend in this direction, and explicit lists with clearly specified axiological reasons for the suppressions can at least, in principle, be targeted by rational arguments.

Conversely, the use and abuse of ambiguous prohibitions are much more difficult to coherently dispute because the rationale consists of implied general values. Thus, when arguing against vague injunctions, one will tend to be placed in a position of disputing generally held values, rather than arguing why specific prohibition P is not in coherence with values XYZ.

Any consequential definition must be agreed on internationally (e.g., through the ICCPR), since we should not have to rely on the big tech platforms to restrict their own activities. Also, some solutions regarding restrictions favour big operators who have the resources to implement expensive algorithmic developments and manual enforcement of regulations, which further entrenches their monolithic positions. From a long-term perspective, internationally funded, open-source tools for algorithmic hate speech detection based on big data are necessary, not least to attain functional transparency of these types of interventions.

Finally, in responding to question (3), the four modes of definition we have identified could also function as potentially interesting criteria for more comprehensive and stringent definitions of hate speech. One such suggestion, encompassing A, B and C, would provide a definition where hate speech encompasses speech acts that intentionally or inherently tend towards certain ethically proscribed ends that are actually or potentially destructive in terms of their consequences in the context in which they are made. These also express certain ideas that are, formally speaking, transgressions of specific ethical norms. Adding the consensus criterion D to this definition would also provide a useful criterion of democratic ratification for any proposed hate speech injunction.

Furthermore, the stipulation of norms, which is most explicit in C, is key to every level, which is why the formal mode is paramount and will most plausibly involve some form of reference to vulnerable minorities. However, this multi-pronged definition would still have an obvious use, both in nuancing the debate and in forcing a clearer rationale that is anchored in the various types of effects of the suppressed speech acts.

This definition is also a workable foundation for a more nuanced utilisation of automated hate speech suppression. It could be used as grounds for developing explicit lists of contextually relevant speech acts which could match the above-mentioned definition with a high degree of probability.
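
As a purely illustrative sketch, and not an implementation proposed in this review, the combined definition could be recorded as an explicit checklist over the four modes, so that every classification carries its stated rationale. All names below (`Mode`, `Assessment`, `satisfied_modes`, `meets_combined_definition`) are hypothetical, and the sketch assumes that the judgements on each criterion are supplied from elsewhere, whether by human reviewers or by an upstream classifier.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Set


class Mode(Enum):
    TELEOLOGICAL = "A"      # intention or inherent tendency towards proscribed ends
    CONSEQUENTIALIST = "B"  # actually or potentially destructive effects in context
    FORMAL = "C"            # expresses an idea that transgresses a specified norm
    CONSENSUS = "D"         # ratified through some agreed procedure


@dataclass(frozen=True)
class Assessment:
    """Hypothetical record of judgements about a single speech act."""
    tends_towards_proscribed_end: bool    # criterion A
    destructive_in_context: bool          # criterion B
    violated_norm: Optional[str] = None   # criterion C: the named norm, if any
    ratified: bool = False                # criterion D


def satisfied_modes(a: Assessment) -> Set[Mode]:
    """Return the set of definition modes whose criterion is met."""
    modes = set()
    if a.tends_towards_proscribed_end:
        modes.add(Mode.TELEOLOGICAL)
    if a.destructive_in_context:
        modes.add(Mode.CONSEQUENTIALIST)
    if a.violated_norm is not None:
        modes.add(Mode.FORMAL)
    if a.ratified:
        modes.add(Mode.CONSENSUS)
    return modes


def meets_combined_definition(a: Assessment, require_consensus: bool = False) -> bool:
    """True if A, B and C all hold (and D as well, when required)."""
    required = {Mode.TELEOLOGICAL, Mode.CONSEQUENTIALIST, Mode.FORMAL}
    if require_consensus:
        required.add(Mode.CONSENSUS)
    return required <= satisfied_modes(a)
```

Because criterion C is stored as a named norm rather than a bare flag, each positive classification in this sketch points to the specific norm it invokes, which is the kind of explicit rationale argued for above.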

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
