Abstract

This paper seeks to understand how online interventions shape incivility and user engagement with news comments. Using a novel dataset of over 39 million news comments on Korea’s largest online news source (Naver News), we examine changes in the share of comments that are categorized as uncivil before and after the introduction of two automated interventions aimed at flagging incivility. We trained two deep learning models to categorize comments and replicate each intervention. The findings reveal significant decreases in uncivil content following each intervention. Interestingly, we find mixed effects of the interventions on total engagement: while the number of comments and commenters decreased after the first intervention, both metrics increased after the second. Examining individual-level data reveals that the aggregate reduction in incivility cuts across all users regardless of pre-intervention incivility or commenting frequency.

Introduction

In the past, reading the news was a mostly solitary activity. Thousands, or even millions, of individuals would consume the same story without discussing or sharing it beyond their immediate contacts. In today’s digital news environment, offline discussions may remain largely unchanged, but consumers are also given the opportunity to interact online with a much wider community of news readers. As a result, comments on news articles have the potential to significantly influence public opinion and shape broader news narratives (Lee, 2012; Stroud, Scacco, et al., 2016). News comments are prevalent; in a 2016 cross-national study of five European countries and the United States, researchers found that between 5% (Denmark and Germany) and 16% (the United States) of the adult population in each country reported leaving comments on news websites (Kalogeropoulos et al., 2017). The number is significantly higher for commenting on news articles through social media (ranging from 10% in Germany to 28% in Spain). A 2023 study spanning 46 countries found that 22% of the adult population reported having participated in news by posting or commenting on articles (Eddy, 2023). 1 And, a much greater share of the population reads comments. Nearly half of Americans (49.3%) reported having read or posted news comments online in 2016 (Stroud, Van Duyn, et al., 2016), a number we suspect may be even higher today.

While the option to comment on news stories opens the door for reader participation, it has also led to the proliferation of uncivil comments online (Coe et al., 2014). These uncivil comments can have deleterious effects on open-mindedness (Borah, 2014; Kim & Park, 2019), attitudes toward political trust (Mutz & Reeves, 2005), and deliberative democracy (Hwang et al., 2014). Incivility may drive some readers away from online articles, potentially causing them to abandon news altogether (Springer et al., 2015).

In reaction, news websites are using various methods to flag or remove uncivil comments (Gillespie, 2018). Moderation can take the form of editorial or journalistic review, which has been shown to improve the quality of online discussions (e.g., Boberg et al., 2018; Muddiman & Stroud, 2017; Stroud et al., 2015), or user-based efforts in which individuals flag inappropriate, hateful, or uncivil posts (e.g., Friess et al., 2021). More recently, news organizations have turned to automated text analysis to detect and remove uncivil language at scale (Drolsbach & Pröllochs, 2024). Yet, despite these interventions’ potential to shape public opinion, civic dialogue, polarization, and a range of other political behaviors, we have a limited understanding of their full impact. A key constraint when studying the effect of any intervention is that news websites typically only show those comments that successfully pass through their filters. Without access to data typically hidden inside the walls of news organizations, researchers have little hope of gaining a full picture of how and how well such interventions work.

This article makes use of a novel dataset of comments on South Korean news stories to examine how interventions aimed at reducing uncivil content shape user behavior. Over 87% of the Korean population accesses news through Naver News, the nation’s largest online news platform (IGAWorks, 2022; Korea Press Foundation, 2021). Several years ago, Naver introduced two interventions, Cleanbot 1 (2019) and Cleanbot 2 (2020), aimed at identifying and flagging uncivil content in news comments. Importantly, Naver does not remove uncivil content, but rather gives users options to hide or show all posts that Naver flags. Naver News articles are followed directly by comment sections that do not require people to actively search them out and are thus readily visible to readers.

We train two deep learning text classification models to detect comments that would be flagged by either of these interventions and apply the models to over 39 million comments posted by more than one million users. This method allows us to analyze posts that would have been flagged before the Cleanbot systems were introduced and compare them with comments flagged after the interventions. Beyond these aggregate counterfactual analyses, our data uniquely identify individual commenters, enabling within-subject comparisons before and after each intervention.

The paper proceeds as follows. We start by reviewing previous research on news commenting and online incivility. In doing so, we present a theoretical framework for understanding how these AI interventions may (or may not) shape users’ incentives to post uncivil content. Next, we provide a brief background of the online news environment in Korea, with particular attention to Naver News. The following section introduces and verifies our classification model, trained to identify comments flagged as uncivil by Naver’s Cleanbot algorithms. We then turn to the analyses, where we start by examining aggregate levels of incivility and engagement before and after each intervention. This section also investigates commenting behavior disaggregated by news section and examines how incivility relates to whether a comment garners numerous replies or likes and dislikes. Last, we examine individual-level data, identifying which users are most likely to change their commenting habits in response to each algorithm. The paper concludes by discussing the normative implications of AI interventions for political discussions, incivility, and an informed public.

Online News Comments: Incivility and Interventions

The online space was once seen as a promising platform for fostering deliberative participation and enhancing democratic engagement (Papacharissi, 2004). It has, however, often fallen short of these initial expectations. Although frequently in the form of implicit or covert messages, rather than explicit personal or group insults and threats of violence (Rieger et al., 2021), uncivil communication floods the digital landscape (e.g., Santana, 2015; Wulczyn et al., 2017). Many scholars attribute incivility to the absence of face-to-face communication (Papacharissi, 2004) or anonymity of online interactions (Halpern & Gibbs, 2013; Moore et al., 2012; Santana, 2014), though group salience and organizational norms may alter these effects (Gibson, 2019; Postmes et al., 1998; Postmes et al., 2001).

While terminology varies across the literature (e.g., Papacharissi, 2004; Rega et al., 2023; Rossini, 2022; Rowe, 2015), scholars generally agree that not all uncivil messages are equally harmful to democratic discourse (Chen, 2017; Masullo, 2023; Rossini, 2022). Recent research on incivility distinguishes intolerant messages, which involve harmful or discriminatory intent toward specific groups, from impolite messages, characterized by rude or offensive content (Rossini, 2022). These two types of messages often stem from differing motivations by the sender and may have unique effects on receivers. Both impolite and intolerant comments are generally viewed as disrespectful and violate standard communication norms, though the degree to which they are perceived as uncivil may differ by context and receiver (Bormann et al., 2022). In this article, we define uncivil discourse broadly to include both impoliteness and intolerance, and thus incorporate hate speech, abusive or profane language, and rude conduct. 2 However, because they are distinct, our empirical analyses differentiate between impolite and intolerant comments.

Prior research suggests that individuals who identify as male, as well as those with lower education or income, are more likely to post uncivil or hostile comments online (Küchler et al., 2022; Stroud, Van Duyn, et al., 2016). Frequent commenters are also reported to contribute disproportionately to toxic language (Kim et al., 2021). Yet other work shows that uncivil messages are widely distributed across users, including many low-activity commenters, rather than concentrated in just a handful of individuals (Wulczyn et al., 2017). These findings suggest that incivility is not merely driven by a few extremists but is instead a structural and widespread phenomenon. Indeed, some topics appear to provoke such behavior. Hard news subjects, such as politics, the economy, and crime, attract more uncivil comments (Coe et al., 2014), and contentious political issues like abortion and elections elicit the greatest hostility (Ksiazek, 2018). While some studies suggest that social media platforms such as Facebook contain more messages harmful to democracy than individual news websites (Rossini, 2022), others find the opposite (Rowe, 2015).

Given the prevalence of offensive online content, news organizations implement various interventions to remove incivility or moderate user behavior. The most common methods include editorial review, where journalists and editors themselves identify and address problematic content; community flagging, which relies on users to report offensive material; and automatic detection, which employs artificial intelligence to moderate content (Gillespie, 2018).

Many studies focus on editorial review, a costly process that has been found to improve the quality of online discussions. For example, in a quasi-experiment analyzing approximately 2500 comments on a local TV news station’s Facebook page, Stroud et al. (2015) found that active engagement by recognizable reporters, compared to anonymous organizational teams, fostered more civil and relevant discussions. Similarly, in a study of over 300,000 comments across 20 news websites, Ksiazek (2018) found that journalist participation led to an increase in comments and a reduction in hostility, although organizational commenting policies—moderation timing, reputation management features (e.g., displaying user rankings or badges to signal credibility), or private messaging—produced mixed results. On other platforms, too, moderation policies that guide user behavior can shape language. For instance, Gibson (2019) shows that Reddit forums with different rules foster contrasting tones, with r/lgbt (a “safe space”) featuring more positive language and the lightly moderated r/ainbow (a “free speech” forum) containing more negative language.

Despite these outcomes, editorial moderation has its limitations, as striking a balance between maintaining high discourse quality and avoiding censorship can be challenging (Gillespie, 2018). Organizational moderation may be perceived as arbitrary or dogmatic (da Silva, 2015), and moderators themselves often face dilemmas about where to draw the line, making inconsistent or subjective decisions (Boberg et al., 2018). Research also shows that different moderation strategies—such as pre- or post-publication moderation, requiring user registration, or enabling comment ratings to identify sensitive issues—do not consistently improve comment quality across news organizations (Ruiz et al., 2011). Most importantly, manual editorial review is simply impractical due to the sheer volume of online comments.

Given these limitations, many platforms rely on users to flag objectionable content (Gillespie, 2018). User-driven moderation is considered successful when it is timely and aligned with community norms (Watson et al., 2019). Yet user-driven moderation is not always effective. Cheng et al. (2014) found that negative feedback on user-generated comments (i.e., low up-vote ratios on a given comment) significantly decreased the quality of subsequent posts, while positive feedback had no significant effect. Han et al. (2018) found that exposure to metacommunication addressing incivility (e.g., comments about tone) did not increase subsequent civility (though it did prompt further metacommunication). In addition, user moderation requires considerable resources and is susceptible to subjective biases (Gillespie, 2018).

In light of the challenges associated with traditional moderation methods, automated interventions offer an attractive solution for many organizations. As the volume of comments continues to grow, manually monitoring all content is nearly impossible. Consequently, major platforms increasingly deploy automated moderation to manage content at scale (Gorwa et al., 2020), and recent studies show that Facebook, Instagram, YouTube, TikTok, Snapchat, X, LinkedIn, and Pinterest employ automated moderation widely (Drolsbach & Pröllochs, 2024). While this method has its own limitations—including non-transparent practices, challenges in safeguarding free speech, and biases in algorithmic decision-making (see, e.g., de Bittencourt Siqueira, 2024; Gillespie, 2018; Gorwa et al., 2020)—there is no question that AI content regulation is the future for most digital platforms. Yet, although AI moderation is already widespread, there is scarce empirical evidence that speaks to the effects of such interventions on user behavior. To understand how large-scale automated moderation systems operate and shape user-generated content, it is crucial to test their impact. This paper takes up that challenge by asking the following research question: What are the effects of AI moderation on incivility and user engagement in news comments?

To address this question, we examine the effects of two automated interventions implemented by Korea’s largest news aggregation website, Naver News. This case provides a unique opportunity to assess the effects of automated moderation systems deployed at scale, utilizing unique individual user identifiers. Cleanbot 1 primarily focused on detecting explicit forms of offensive language, such as slurs and profanities, while Cleanbot 2 expanded its scope to include more context-based assessments of uncivil language in news comment sections. By comparing user behavior before and after these two interventions, we aim to understand Cleanbot’s influence on both the prevalence of incivility and patterns of user engagement. Our analysis provides valuable insights into the efficacy of automated moderation systems and their broader implications for online discourse.

Automated interventions like Cleanbot can be effective in curbing uncivil comments by shaping perceptions of what constitutes typical and desirable behavior in comment sections. Social psychologists distinguish between descriptive norms, which identify what is socially typical, and injunctive norms, which describe what people ought to do. When they conflict, individuals follow whichever norm is most salient, which may itself be shaped by the surrounding environment (Kallgren et al., 2000). For example, previous research demonstrates that a person’s likelihood of littering depends on the relative salience of normative anti-littering messages as well as littering behavior among their peers (Cialdini et al., 1990). Previous evidence suggests that focal injunctive norms are more effective than descriptive norms in driving positive behavioral change (Cialdini et al., 1991; Reno et al., 1993).

In the case of online incivility, a descriptive norm around the frequency of uncivil comments may conflict with the injunctive norm that suggests users should make polite and respectful comments. While most users do not post disrespectful content, incivility is by no means infrequent. By highlighting whether a comment will be deemed inappropriate, Cleanbot may shift the focus from descriptive to injunctive norms and be particularly effective at curbing behaviors labeled as inappropriate. Consequently, fewer people may post uncivil comments, which (along with hiding flagged content) should further drive down the descriptive norm of frequent online incivility.

Our goal is to examine whether interventions like Cleanbot are actually effective in achieving the intended outcomes of reducing incivility. We therefore treat the material flagged by these automated interventions as representing distinct forms of norm violations: impoliteness for Cleanbot 1, and both impoliteness and intolerance for Cleanbot 2. We expect that by labeling inappropriate comments, Cleanbot will shift users’ focus to the injunctive norm against posting uncivil content and thus reduce incivility. Though we cannot test the mechanism directly, in what follows, we provide strong evidence of a causal relationship between the Cleanbot intervention and decreased incivility.

It is worth noting that various additional mechanisms may lead Cleanbot to increase civility and engagement. First, commenters seeking to maximize readership will likely adjust flagged content so that it remains visible to the large share of users who keep the Cleanbot filter active. Second, some individuals may not be aware that their messages could be seen as uncivil in the first place, and issuing a warning may simply provide the information individuals need to voluntarily adjust their behavior. 3 Third, there may be social contagion. Modifying or removing even a subset of uncivil content may have a cascade effect if people tend to post messages similar to those they have read. By discouraging some individuals from posting uncivil comments, moderation may indirectly influence others who, seeing fewer uncivil posts, are themselves more likely to post civil content. Consequently, those who previously avoided comment sections to eschew impolite or intolerant messages may now scroll down and consider commenting themselves. As we discuss below and show in the Supplementary Information, there is indeed evidence of contagion, suggesting that Cleanbot has both immediate and downstream effects on posts.

News Consumption in Korea

Historically, Korean media were tightly controlled under authoritarian regimes, with newspapers and broadcasters often serving as mouthpieces for the state. After democratization in 1987, however, media outlets gained greater autonomy and became highly influential, with the “Big Three” newspapers (Chosun, Joongang, and Donga) dominating the market (Kim & Kim, 2016). Like many other countries facing growing ownership concentration, Korea also experienced media cross-ownership (Park, 2021). In 2011, the removal of long-standing anti-concentration limits allowed major newspapers to launch their own television channels.

But it is Korea’s “exceptionally high rate of digital media penetration” that makes it stand out (Yoon, 2018, p. 1). Unlike many Western contexts where users directly visit outlet websites, Korean audiences largely rely on news aggregation platforms like Naver to access news (Reuters Institute, 2024), making platform policies such as moderation and ranking rules especially influential in shaping public discourse. This platform-centric ecosystem exemplifies broader trends of news media platformization, in which digital platforms increasingly mediate news distribution, visibility, and audience access (Nielsen & Fletcher, 2023).

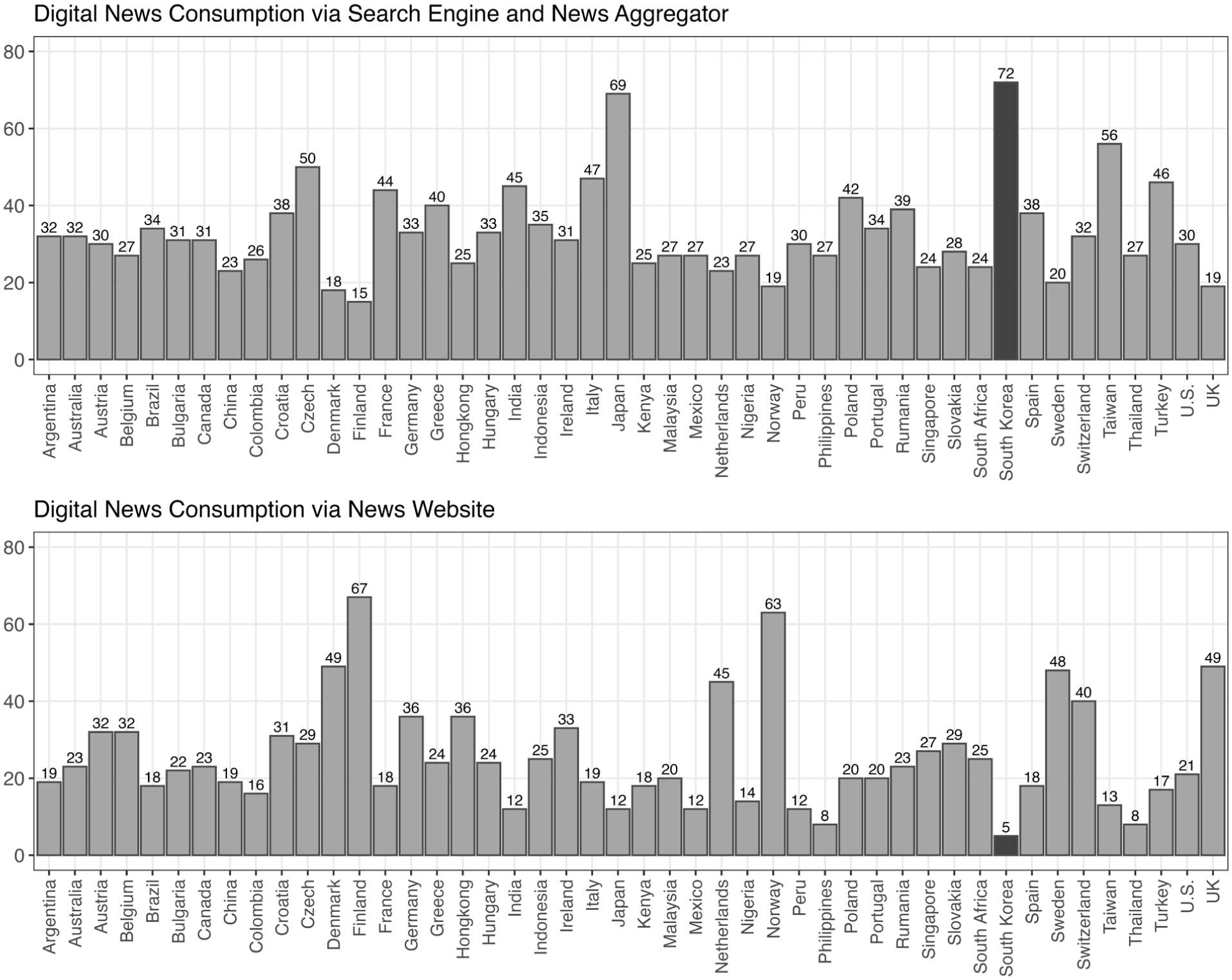

As illustrated in Figure 1, Korea in fact had the highest rate of news consumption via news aggregators and the lowest rate via individual news websites of 45 comparison countries (Oh et al., 2021). Over 90% of Koreans rely on Naver as their primary search engine, and 87% use Naver News to consume news online (Korea Press Foundation, 2021).

The top panel displays the percent of individuals who consume news through search engines or news aggregators at least once per week across 46 countries in 2021. The bottom panel shows the percent consuming news from news websites at least once per week across the same countries and time period. South Korea is highlighted in black.

Naver News provides in-links to articles from most Korean newspapers and TV news programs. 4 An in-link allows users to access a news article directly through Naver without being redirected to the original news website. Importantly, this means Naver users do not need to sign up for separate accounts on media websites to view full articles.

Below each article is a comment section where users can engage with one another by reading and leaving messages. The use of comment features has exploded over time. Figure 2 shows the total number of monthly comments (left) and commenters (right) on Naver from January 2012 to September 2020, based on the 30 most-read articles in each of six news sections per day. Both numbers increase significantly, with the average number of monthly comments nearly quadrupling over the eight-and-a-half-year period. Across all news stories (569,579 in total), each article received an average of 438 comments, and each user left an average of 43 comments over the entire period. 5

The number of monthly comments (left) and unique commenters (right) on Naver over time.

The Cleanbot Interventions

Naver’s first attempt at curbing uncivil content came in April 2019. The news site created an algorithm to block hate speech on portions of the platform primarily used by young people and known as “Junior Naver.” Later that year, in response to growing concerns about cyberbullying, the company expanded the intervention to the full news platform, launching Cleanbot 1 on November 13, 2019. Cleanbot 1 primarily targeted abusive language, conspicuous hate speech, impoliteness, and expletives. Although these elements fall under the broad definition of incivility, they are not exhaustive and exclude indirect intolerance. A second version, Cleanbot 2, was introduced on June 18, 2020, with the intention of covering all uncivil language. Specifically, Cleanbot 2 extended detection beyond abusive language to incorporate context and intent (Bizwire, 2020). 6 As we show below, the comments flagged by Cleanbot 1 appear to be almost, if not entirely, a subset of those that would be flagged by Cleanbot 2.

Cleanbot operates in real time and shapes the experiences of both commenters and their readers. When a person posts a comment, they are alerted immediately if their comment will be flagged by Cleanbot. The user is then given the option to edit the comment (as many times as they like) so that it no longer receives the Cleanbot flag. Alternatively, they can elect to post it as is and receive the flag, knowing that the comment will be identified as uncivil and therefore filtered by many users.

Comment readers have the option to turn the Cleanbot filter on or off (it is on by default). If the filter is turned on, the content of a flagged comment is not shown, but a notice indicating that a flagged comment was posted appears in its place. Users have the option to click on the flag and reveal the flagged comment if they so choose. Figure 3 shows the slider to activate Cleanbot in the foreground. In the background, we can see comments on an article as well as the statement “Cleanbot detects malicious comments.” Clicking on the icon or word “Cleanbot” in that statement brings up the pop-up to activate or deactivate Cleanbot. The Cleanbot setting can be adjusted whenever the user likes, and changes in one session are saved for future visits.

Option to activate Cleanbot (foreground) and Naver comment section (background). The website is translated from Korean to English using Google Translate.

Data and Methods

To assess how Cleanbot interventions shape commenting frequency and incivility, we downloaded every comment for 180 articles each day between August 13, 2019 and September 30, 2020. The 180 articles per day came from a list of the top 30 most-read articles in each of the six sections presented on Naver News: Politics, Society, Economy, World, Life/Culture, and IT/Science. Until October 2020, Naver News listed the top 30 most-read articles published that day in each of these six sections. This feature, called “Ranking News,” was updated throughout the day as attention patterns changed; once the day was over, the list was fixed. We downloaded all of the comments from the top 180 articles based on the ranking at the end of each day.

In addition to the text of each comment, our data include the date and time of posting, the commenting user’s unique ID, the comment’s number of likes, dislikes, and replies, and whether or not the comment was flagged by Cleanbot (for comments posted after the intervention[s] began). At the article level, we identify the title, source, article posting date and time, section, and the number of likes and dislikes each article received. In total, we collected 39,993,087 comments over 414 days from 1,767,547 unique users.

Classification Model

Deep learning approaches have gained prominence in communication and political science research for their capacity to analyze large volumes of text with high accuracy (Laurer et al., 2022). Accordingly, since Naver does not make its algorithm public, we developed two models to predict whether comments would be flagged by the respective version of Cleanbot. These models were trained using comments from the 3 months following the implementation of Cleanbot 1 (November 13, 2019, to February 12, 2020) and Cleanbot 2 (June 18, 2020 to September 30, 2020). For Cleanbot 1, the training dataset consisted of 64,000 comments; for Cleanbot 2, it consisted of 61,440 comments. We also created corresponding test datasets, comprising 8000 comments for Cleanbot 1 and 7680 comments for Cleanbot 2, ensuring that none of the test data overlapped with the training data.

We used KcELECTRA-base-v2022 as our base model, a transformer-based model specifically pre-trained on Korean-language data. The model was chosen for its strong performance on Korean text classification tasks. 7

We trained the base model on the respective training datasets for Cleanbot 1 and Cleanbot 2 to develop two classifiers that replicate their respective flagging behaviors. We then evaluated the classifiers’ performances by comparing model predictions (flag or not flag) against Cleanbot flags using each version’s test dataset. The models demonstrated high predictive accuracy, achieving identical overall accuracy rates for both Cleanbot 1 and Cleanbot 2 of 95.1%. Confusion matrices, presented in Figure 4, illustrate the models’ performance, detailing the proportions of true positives, true negatives, false positives, and false negatives. The corresponding

Confusion matrices showing the accuracy of the models for predicting flagged and not flagged comments under Cleanbot 1 (left) and Cleanbot 2 (right).
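The comparison between model predictions and Cleanbot flags can be illustrated with a short sketch. The code below is not the authors’ evaluation script: the class and function names are hypothetical, and the eight toy labels are invented purely for illustration (they are not drawn from the test datasets). It tallies the four confusion-matrix cells and derives overall accuracy as the share of true positives and true negatives among all comments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confusion:
    """Counts for one classifier evaluated against observed Cleanbot flags."""
    tp: int  # model predicts a flag, Cleanbot flagged
    fp: int  # model predicts a flag, Cleanbot did not flag
    fn: int  # model predicts no flag, Cleanbot flagged
    tn: int  # model predicts no flag, Cleanbot did not flag

    @property
    def accuracy(self) -> float:
        total = self.tp + self.fp + self.fn + self.tn
        return (self.tp + self.tn) / total if total else float("nan")


def confusion(predicted: list[bool], flagged: list[bool]) -> Confusion:
    """Tally the confusion matrix from paired predictions and true flags."""
    if len(predicted) != len(flagged):
        raise ValueError("predicted and flagged must have the same length")
    tp = fp = fn = tn = 0
    for p, y in zip(predicted, flagged):
        if p and y:
            tp += 1
        elif p and not y:
            fp += 1
        elif not p and y:
            fn += 1
        else:
            tn += 1
    return Confusion(tp, fp, fn, tn)


# Toy labels for illustration only (hypothetical, not the paper's data).
toy_pred = [True, False, True, False, False, True, False, False]
toy_flag = [True, False, False, False, False, True, True, False]
cm = confusion(toy_pred, toy_flag)
print(cm, cm.accuracy)
```

Accuracy alone can mask asymmetric errors when flagged comments are a minority of all posts, which is one reason to inspect the full matrix alongside the headline figure.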

We applied the two classification models to the comment dataset. Because classification is time-consuming, we prioritized the 24-week window around the Cleanbot 1 and Cleanbot 2 launches and classified all comments in those periods. In addition, we classified a random sample of 252,500 comments from the full time period (2012–2020), stratified by week (approximately 1000 per week).
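A week-stratified draw of this kind could be implemented roughly as follows. This is a minimal sketch under stated assumptions: the text does not describe how weeks were delimited or how the draw was performed, so the use of ISO calendar weeks, the fixed seed, and the names `sample_by_week` and `per_week` are illustrative choices rather than the authors’ procedure.

```python
import random
from collections import defaultdict
from collections.abc import Iterable
from datetime import date


def sample_by_week(
    comments: Iterable[tuple[str, date]], per_week: int, seed: int = 0
) -> list[str]:
    """Draw up to `per_week` comment ids at random from each ISO week.

    `comments` yields (comment_id, posting_date) pairs. Weeks with fewer
    than `per_week` comments contribute all of their comments.
    """
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    by_week: dict[tuple[int, int], list[str]] = defaultdict(list)
    for comment_id, posted in comments:
        iso = posted.isocalendar()
        by_week[(iso[0], iso[1])].append(comment_id)  # (ISO year, ISO week)

    sample: list[str] = []
    for week in sorted(by_week):
        ids = by_week[week]
        sample.extend(rng.sample(ids, min(per_week, len(ids))))
    return sample


# Toy input (hypothetical ids): 10, 10, and 2 comments in three weeks.
toy = (
    [(f"a{i}", date(2020, 1, 6)) for i in range(10)]
    + [(f"b{i}", date(2020, 1, 13)) for i in range(10)]
    + [(f"c{i}", date(2020, 1, 20)) for i in range(2)]
)
picked = sample_by_week(toy, per_week=3)
print(len(picked))  # 3 + 3 + 2 = 8
```

Sampling a fixed number per week keeps low-volume early weeks from being swamped by later, busier ones, at the cost of no longer weighting weeks by their comment volume.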

Using the random sample of comments, Figure 5 plots the percent of all comments that our models predict to be flagged according to Cleanbot 1 (gray) or Cleanbot 2 (black). As we can see, there is a clear decrease in the share of comments predicted to be flagged by Cleanbot 1 after its introduction in 2019, suggesting that commenters moderated their content. Because almost all comments predicted to be flagged by Cleanbot 1 are also predicted to be flagged by Cleanbot 2, we see a similar decrease for predicted Cleanbot 2 flags with the introduction of Cleanbot 1.

The percent of all comments predicted to be flagged using Cleanbot 1 (gray) or Cleanbot 2 (black) over time.

As with the introduction of Cleanbot 1, the share of comments predicted to be flagged by Cleanbot 2 decreases after its introduction; in this case, by approximately 8%. Again, it appears that commenters modified their behaviors by posting less uncivil content following the new intervention. Note that the share of comments predicted to be flagged by Cleanbot 1 remains constant after Cleanbot 2, implying that users adjusted to Cleanbot 1 by the time Cleanbot 2 was introduced. These patterns also show that Cleanbot 2 flags significantly more content than Cleanbot 1.

Content Validity

While our focus is on uncivil comments as identified by the Cleanbot algorithms, we also evaluated our classifiers using 400 randomly selected, manually coded comments from the 24-week period surrounding the launch of both Cleanbots. A native Korean coder manually classified each comment as one of the following: (1) a civil message, (2) an impolite message involving a rude or offensive tone or profanity, or (3) an intolerant message attacking democratic norms or using derogatory language targeting specific groups. Because Cleanbot 1 is understood to primarily detect impolite language, whereas Cleanbot 2 is intended to detect both impolite and intolerant messages, comments classified as impolite were coded as those that Cleanbot 1 would have flagged, while comments classified as either impolite or intolerant were coded as those that Cleanbot 2 would have flagged. Manual coding was conducted blind; the coder was unaware of whether Cleanbot flagged the comment. We then assessed how closely our classification models (replicating Cleanbot 1 and Cleanbot 2) matched the manual labels. The results yielded an accuracy rate of 86% for the Cleanbot 1 replication and 88% for the Cleanbot 2 replication. These findings not only corroborate Naver’s claims about the targets of Cleanbot 1 and 2, but they also provide a more concrete idea about what type of content users could expect to have flagged. 8
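The coding rule described above (impolite comments count as expected Cleanbot 1 flags; impolite or intolerant comments count as expected Cleanbot 2 flags) can be written out as a small sketch. The label strings, function names, and four toy comments below are hypothetical and serve only to make the mapping concrete; they do not reproduce the 400 coded comments or the reported 86% and 88% figures.

```python
CIVIL, IMPOLITE, INTOLERANT = "civil", "impolite", "intolerant"


def expected_flags(label: str) -> tuple[bool, bool]:
    """Map a manual label to (expected Cleanbot 1 flag, expected Cleanbot 2 flag)."""
    if label not in {CIVIL, IMPOLITE, INTOLERANT}:
        raise ValueError(f"unknown label: {label!r}")
    return (label == IMPOLITE, label in {IMPOLITE, INTOLERANT})


def agreement(manual_labels: list[str], model_flags: list[bool], version: int) -> float:
    """Share of comments where the replica's flag matches the manual coding.

    `version` is 1 or 2 and selects which Cleanbot replica is being checked.
    """
    if version not in (1, 2):
        raise ValueError("version must be 1 or 2")
    if len(manual_labels) != len(model_flags) or not manual_labels:
        raise ValueError("inputs must be non-empty and of equal length")
    matches = sum(
        expected_flags(label)[version - 1] == flag
        for label, flag in zip(manual_labels, model_flags)
    )
    return matches / len(manual_labels)


# Toy example (hypothetical labels and model outputs).
labels = [CIVIL, IMPOLITE, INTOLERANT, CIVIL]
replica1 = [False, True, False, True]   # one disagreement on the last comment
replica2 = [False, True, True, False]   # full agreement
print(agreement(labels, replica1, version=1))  # 0.75
print(agreement(labels, replica2, version=2))  # 1.0
```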

Results: Aggregate Analyses

To start, we examine aggregate changes in incivility and engagement before and after the introduction of each intervention. Daily-level data allow us to identify whether there were abrupt changes to commenting behavior in the time following the introduction of Cleanbot. Beyond civility and volume, we also examine how readers react to comments by engaging in direct replies or indicating their like or dislike of that comment.

Figure 6 shows the share of daily posts predicted to be flagged by Cleanbot 1 (left) and Cleanbot 2 (right) 2 months before and after each intervention. In both cases, the daily fraction of uncivil posts decreases significantly (

The daily percent of comments predicted to be flagged by Cleanbot 1 (left) and Cleanbot 2 (right) 2 months before and after each intervention. Dashed horizontal lines indicate the average over the respective time period.

In the case of Cleanbot 1, the percent of comments flagged falls from 11.4% to 10.2%. For Cleanbot 2, it falls from 17.8% to 16.4%. Although the absolute differences are less than 1.5 percentage points in both cases, they equate to meaningful relative reductions in uncivil posts of 11% and 8%, respectively. These findings suggest that (at least some) users adjusted their content after the introduction of Cleanbot.
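The distinction between the percentage-point change and the relative change can be checked with a few lines of arithmetic. The sketch below uses only the rounded shares reported in the text, so the results are approximate; the function names are illustrative.

```python
def point_change(before: float, after: float) -> float:
    """Absolute change in percentage points."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Change expressed as a fraction of the pre-intervention level."""
    return (after - before) / before


# Shares of comments predicted to be flagged, as reported in the text (%).
cb1_before, cb1_after = 11.4, 10.2
cb2_before, cb2_after = 17.8, 16.4

for name, before, after in [
    ("Cleanbot 1", cb1_before, cb1_after),
    ("Cleanbot 2", cb2_before, cb2_after),
]:
    print(
        f"{name}: {point_change(before, after):.1f} points, "
        f"{relative_change(before, after) * 100:.1f}% relative"
    )
# Cleanbot 1: -1.2 points, -10.5% relative
# Cleanbot 2: -1.4 points, -7.9% relative
```

Rounded to whole numbers, these correspond to the 11% and 8% reductions cited above.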

Beyond testing how interventions shape incivility, it is also important to assess how such interventions influence user engagement. A benefit of allowing people to hide uncivil content may be that doing so encourages more people to read or post comments in the first place. On the other hand, previous studies highlight a positive relationship between incivility and engagement (e.g., Masullo et al., 2021; Muddiman & Stroud, 2017), so restricting content may curb commenting overall. Unfortunately, we cannot test reader engagement because we do not know how many people visited each news article or scrolled to the comment section. However, we can examine active engagement with comments by looking at the overall number of posts before and after each intervention, as well as each comment’s number of replies and share of likes or dislikes received.

While the Cleanbot algorithms are clearly associated with a decline in uncivil content, their relationship with commenting engagement is less clear. The average number of daily comments, shown in Figure 7, decreased from 102,604 to 73,096 from the 2 months prior to the introduction of Cleanbot 1 to the 2 months after (

The number of comments posted per day (tens of thousands) in the 2 months before and after each intervention, along with a 1-week moving average. Dashed horizontal lines indicate the average over the respective time period.

Several possible explanations may account for differences in engagement across interventions. First, Cleanbot 1 may have turned people off who enjoyed posting impolite content, while at the same time it remained insufficiently strict to encourage users who wanted to avoid intolerant messages. Cleanbot 2, in comparison, may have encouraged more sensitive users to participate by assuring them that the intervention would hide offensive content. This theory aligns with a prior study showing that an exclusively negative tone can reduce user interactivity (Ziegele et al., 2014). Second, the initial introduction of content moderation may have deterred people from posting in 2019, whereas the rollout of an updated version of Cleanbot 7 months later was not a new technology and therefore had little effect on engagement. Third, the effect (if any) of Cleanbot on engagement may be too small to detect amid other environmental factors. Cleanbot 2 was introduced during the height of Covid-19 in Korea, a time when people were likely spending more time online—possibly reading and commenting on news stories, regardless of the online intervention (Casero-Ripollés, 2020; Van Aelst et al., 2021).

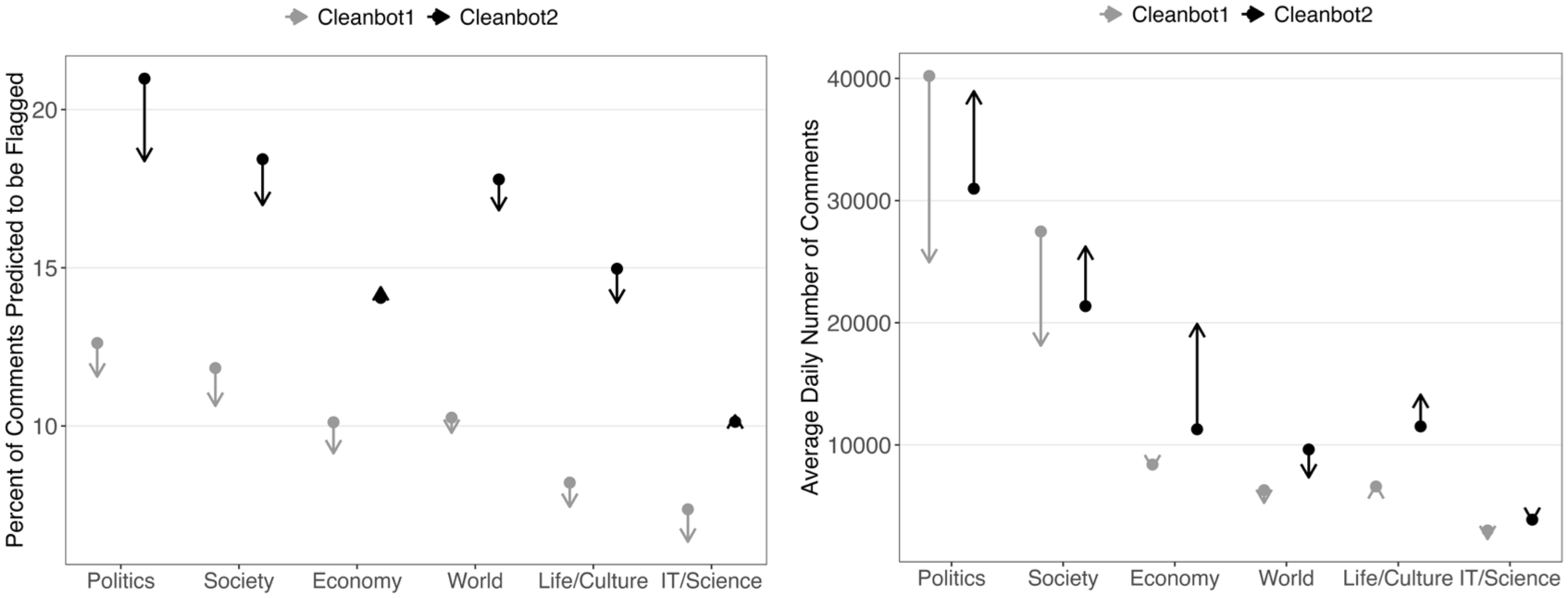

Figure 8 disaggregates the data on incivility (left panel) and engagement (right panel) by each of the six sections in Naver News for the 2 months before and after each intervention. As we can see, changes within each section generally replicate the findings shown in Figures 6 and 7. In the left panel, the percentage of flagged comments decreases after both interventions in every section except Life/Culture after Cleanbot 1 and IT/Science after Cleanbot 2. Not surprisingly, the less civil Politics section saw some of the greatest reductions, with a 9.6% drop after Cleanbot 1 and an 18.9% decrease after Cleanbot 2. Average daily comments (right panel) tended to drop after Cleanbot 1 and climb after Cleanbot 2 in each of the individual sections, with a few exceptions.

Pre- and post-intervention comparison of comment moderation outcomes across news sections. The left panel shows the percentage of comments predicted to be flagged, and the right panel shows the average number of daily comments posted. Arrows point from 2 months before to 2 months after each Cleanbot intervention, indicating the direction and magnitude of change. Gray symbols represent Cleanbot 1; black symbols represent Cleanbot 2.

At the comment level, we measure engagement through the number of direct replies. Across the primary 8-month time period (2 months before and after each intervention), the vast majority (90%) of comments received no replies, and an additional 6% received only one reply. Among comments with at least one reply, the average number of replies is 2.8. We expect that for any given article, comments attracting more replies are likely to be seen by more users because they spark a longer conversation thread. Beyond the number of replies, we can also measure a comment’s popularity by accounting for its like differential, that is, how many like votes it received (in the form of a thumbs up) minus its number of dislike (thumbs down) votes. Liking or disliking a comment is easier than writing a reply, and not surprisingly, most comments receive at least one like (58%). A sizable minority of comments receive at least one dislike (29%). The average like differential is seven, meaning that comments on average receive seven more positive than negative votes. 11

Figure 9 displays the average number of replies (left panel) and average like differential (right panel) for Cleanbot 1 and 2, separated by whether the comment was deemed civil or uncivil, as well as if it was posted before or after the relevant intervention. As we can see, civility plays a major role. For both interventions, civil comments attract more replies and receive higher ratings than uncivil ones. The differences are sizable; overall, civil comments receive about 50% more replies than uncivil comments, and their like differential is almost twice as high.

The average number of replies (left panel) and like differentials (right panel) for uncivil and civil comments, pre and post both interventions.

Figure 9 also suggests that the Cleanbot interventions play a role in engagement and popularity. In the left panel, we see that there is only a small difference in the number of replies for civil comments in the pre- and post-Cleanbot periods. (For Cleanbot 1, replies to civil comments are more frequent after the intervention; for Cleanbot 2, the opposite relationship holds.) In contrast, there are significant decreases in replies to uncivil comments after both interventions. These differences suggest that the intervention itself is in part responsible for decreasing engagement. Because many users employ Cleanbot to limit the comments displayed, these differences may simply reflect lower exposure. Interestingly, there is no consistent increase in the number of replies to civil content, suggesting that overall engagement through replies decreases following Cleanbot. 12

Although we do not have data on the content of direct replies, we do know the ordering of main comments for each article. In the Supplementary Information, we demonstrate that there is strong evidence of a contagion effect for uncivil discourse. Specifically, using a bootstrapping technique, we find that the probability that a given comment is uncivil is significantly greater if the previous comment was uncivil, controlling for the overall share of uncivil comments on the article. Consequently, limiting exposure to uncivil comments may indirectly reduce a person’s likelihood of posting their own uncivil comment, independent of the direct effect of Cleanbot flagging their content.
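To make the logic of such a contagion test concrete, the sketch below uses a within-article permutation test. This is a technique swapped in for illustration and is not the bootstrapping procedure reported in the Supplementary Information, whose details are not reproduced here. It assumes each article is represented as a list of 0/1 flags (1 = uncivil) in posting order; the function names and toy data are hypothetical. Shuffling comments within an article holds that article's overall share of uncivil comments fixed, so only the ordering varies.

```python
import math
import random
from typing import List, Sequence


def share_uncivil_after_uncivil(articles: Sequence[Sequence[int]]) -> float:
    """Share of uncivil comments among those that directly follow an uncivil comment."""
    followers = 0
    uncivil_followers = 0
    for flags in articles:
        for prev, curr in zip(flags, flags[1:]):
            if prev == 1:
                followers += 1
                uncivil_followers += curr
    return uncivil_followers / followers if followers else float("nan")


def permutation_p_value(articles: Sequence[Sequence[int]],
                        n_shuffles: int = 500, seed: int = 0) -> float:
    """One-sided p-value: how often shuffled orderings match or exceed the observed share."""
    observed = share_uncivil_after_uncivil(articles)
    if math.isnan(observed):
        raise ValueError("no comment follows an uncivil comment")
    rng = random.Random(seed)
    at_least_as_large = 0
    for _ in range(n_shuffles):
        # Shuffle within each article so its share of uncivil comments is unchanged.
        shuffled: List[List[int]] = [rng.sample(list(f), len(f)) for f in articles]
        if share_uncivil_after_uncivil(shuffled) >= observed:
            at_least_as_large += 1
    # Add-one correction keeps the estimated p-value strictly positive.
    return (at_least_as_large + 1) / (n_shuffles + 1)


# Hypothetical toy data: 20 articles in which uncivil comments arrive in a cluster.
toy_articles = [[1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0] for _ in range(20)]
observed_share = share_uncivil_after_uncivil(toy_articles)
p_value = permutation_p_value(toy_articles)
print(observed_share, p_value)
```

In the toy data, uncivil comments are deliberately clustered, so the observed share far exceeds what random orderings produce; real comment threads would show a much weaker pattern.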

Figure 9 reveals that a similar pattern to the number of replies holds for the like differential. While the like differential for civil comments increased after Cleanbot 1, it decreased after Cleanbot 2. But for uncivil comments, the like differential decreased after both interventions, with a particularly dramatic reduction following Cleanbot 2. Examining the data separately for likes and dislikes reveals that the decrease is primarily due to a drop in likes. After Cleanbot 2, the average number of dislikes decreased by 0.4 votes, but the average number of likes decreased by 1.4 votes. Interestingly, these aggregate data suggest that those who filter out comments flagged by Cleanbot may be missing a subset of comments they would have otherwise liked. In sum, examining engagement metrics through replies and the like differential suggests that interventions may have the potential (as shown in Cleanbot 1) to increase engagement with civil comments, while at the same time they decrease engagement with uncivil messages.

Individual Analyses: Who Responds to AI Interventions?

Of course, aggregate decreases in comment incivility could reflect various dynamics at the individual level. Perhaps the most uncivil commenters change their tone in response to Cleanbot, while moderate or rarely uncivil commenters remain unchanged. Alternatively, uncivil posters may already be aware that they are writing hateful or offensive comments and continue to write for the audience of users who leave the Cleanbot filter off. 13 A person’s commenting frequency may shape how they respond to the intervention. One advantage of the Naver data is that every comment can be matched with a unique user identification number. While commenters can change their displayed username, their id remains fixed. This feature allows us to track individual changes in behavior over time. 14

Commenting frequency is highly skewed, with a small fraction of users accounting for a large share of posts. For example, in the 1 month leading up to each intervention, the top 27% of users accounted for 80% of the comments, while 40% (Cleanbot 1) and 38% (Cleanbot 2) of users during the same period posted only one comment. The Gini coefficient, which measures inequality of commenting frequency on a scale ranging from 0 to 1, is 0.67 for the months preceding both interventions.

Incivility is even more uneven. Eighty percent of uncivil comments are made by only 10% of users in the month leading up to Cleanbot 1 and 13% of users in the month before Cleanbot 2. The Gini coefficients for the distribution of uncivil comments across users are 0.89 and 0.86 for the 30 days preceding Cleanbot 1 and 2, respectively.
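Both concentration measures used above can be computed directly from per-user comment counts. The following is a minimal sketch, assuming a list with one count per user; the function names and the toy counts are ours and are not drawn from the Naver data. The Gini coefficient uses the standard rank-weighted formula on sorted values.

```python
from typing import Sequence


def gini(counts: Sequence[float]) -> float:
    """Gini coefficient of nonnegative counts (0 = perfectly equal, near 1 = highly concentrated)."""
    values = sorted(counts)
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        raise ValueError("need at least one user and a positive total")
    # With ascending values x_1 <= ... <= x_n:
    # G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    weighted = sum(rank * x for rank, x in enumerate(values, start=1))
    return 2.0 * weighted / (n * total) - (n + 1) / n


def top_share_of_users(counts: Sequence[float], comment_share: float = 0.8) -> float:
    """Smallest fraction of users (most active first) who account for `comment_share` of comments."""
    values = sorted(counts, reverse=True)
    target = comment_share * sum(values)
    running = 0.0
    for k, x in enumerate(values, start=1):
        running += x
        if running >= target:
            return k / len(values)
    return 1.0


# Hypothetical comment counts for ten users (illustration only).
toy_counts = [1, 1, 1, 1, 1, 1, 2, 3, 9, 30]
print(gini(toy_counts), top_share_of_users(toy_counts))
```

Applying the same functions to counts of flagged comments per user, rather than all comments, would give the corresponding measures for incivility.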

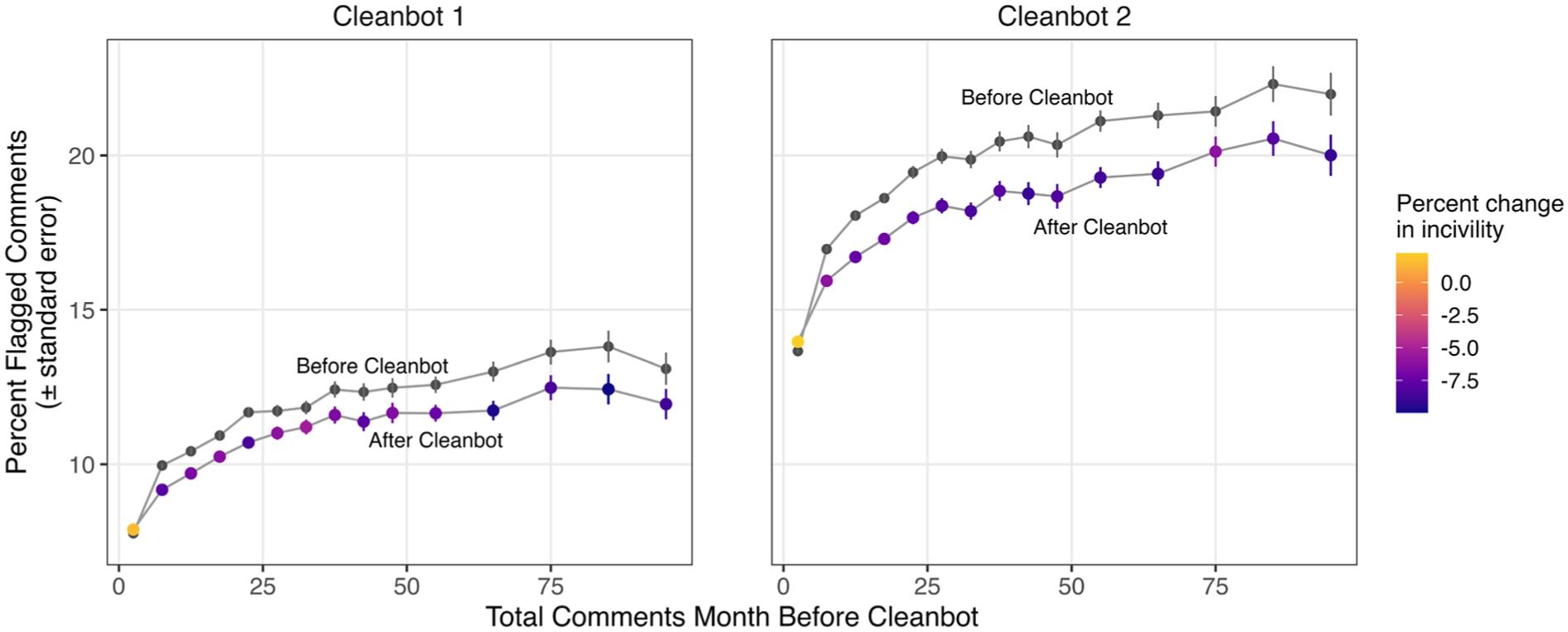

To examine how these two behaviors—commenting frequency and commenting incivility—relate to one another, Figure 10 plots the average percent of flagged comments in the one month period before and after each intervention as a function of comment frequency in the month before that intervention. As we can see, in both the pre- and post-intervention periods for Cleanbot 1 and Cleanbot 2, the fraction of uncivil comments increases with total comments made. That is, frequent commenters are, on average, less civil than moderate or infrequent posters. Comparing the pre- and post-intervention lines in each graph, all groups, except for those in the left-most bin (making the very fewest comments overall), show a decrease in incivility after the interventions. Infrequent and frequent commenters alike reduced their levels of incivility in response to the interventions. Observations are colored by the percent change in incivility, which also reveals little variation across commenting frequency aside from the most infrequent commenters. Overall, the reduction in incivility after each intervention is remarkably consistent. Thus, rather than responses to Cleanbot reflecting only the frequent (or infrequent) commenters changing their behavior, it appears that the overall decrease in incivility depicted in Figure 6 stems from a general reduction in uncivil comments across the board. This evidence suggests that interventions to curb incivility affect all news commenters similarly. (The exception is commenters who rarely post and are highly civil; by design, these individuals cannot reduce their incivility due to floor effects.)

Percent of comments flagged before and after each intervention by commenting frequency.

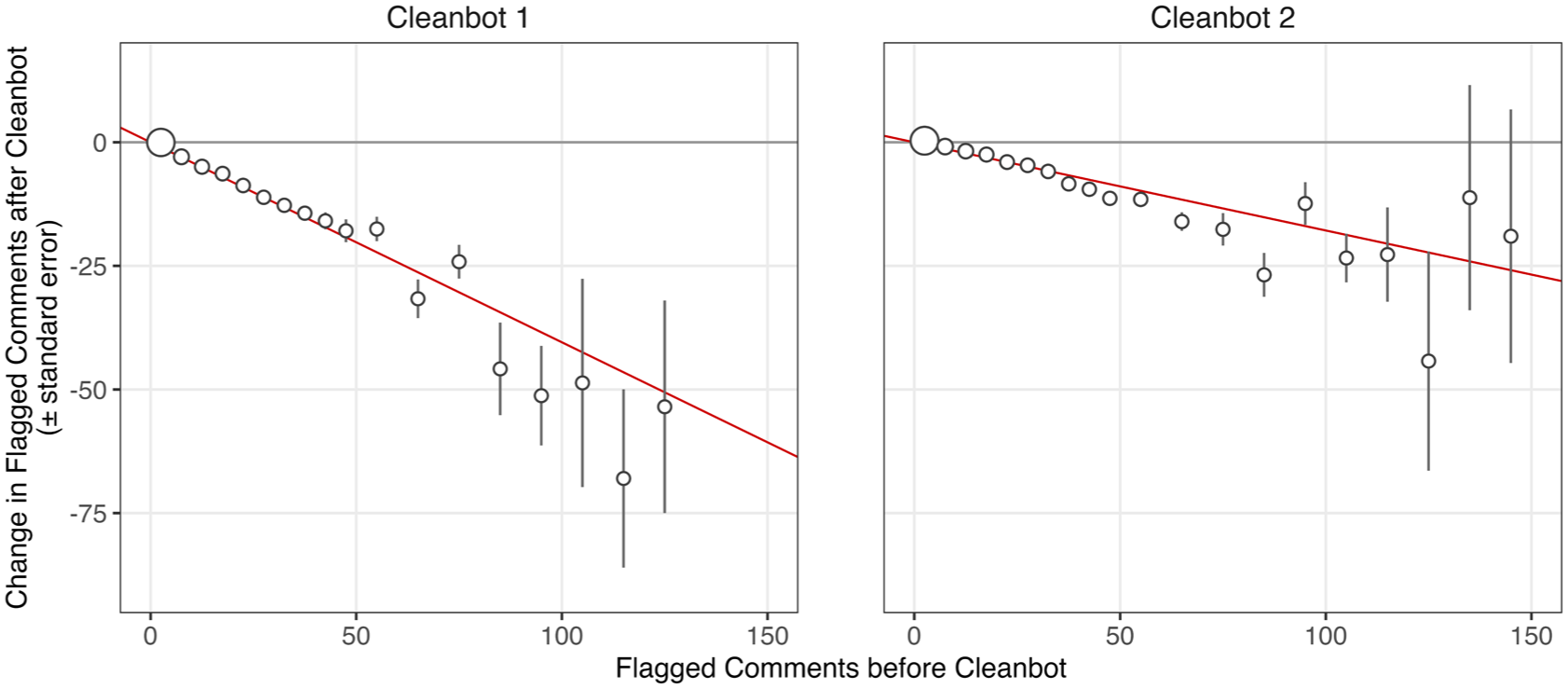

While parsing the data by comment frequency shows remarkably parallel shifts in uncivil content, changes in behavior may differ by baseline incivility levels. To examine this relationship, Figure 11 plots the change in uncivil comments against the number of flagged comments in the month preceding the intervention. As we can see, Figure 11 demonstrates that users who posted more uncivil comments pre-intervention exhibit greater absolute reductions in incivility. However, the fairly linear decrease indicates a consistent proportional reduction in incivility across most of the data’s range. (Both the horizontal and vertical axes identify the absolute number of comments, so any straight line on the plot corresponds with a change in incivility that is proportional to pre-intervention behavior.) Red lines in each panel show linear regression models fit to the individual data (including outliers not depicted in the plot) with intercepts constrained to equal zero. For Cleanbot 1, the estimated slope corresponds to a 40% reduction in incivility, while for Cleanbot 2 the corresponding reduction is 18%. This model fits the data especially well for users with fewer than fifty flagged comments in the pre-intervention month, which accounts for 73% of the users for Cleanbot 1 and 67% of the users for Cleanbot 2. Overall, it appears that most users, regardless of pre-intervention comment frequency or incivility, made a roughly equal percentage change in their incivility following the interventions.
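The link between the fitted slope and a proportional reduction can be illustrated with a least-squares fit through the origin, for which the slope has the closed form sum(x*y) / sum(x*x). The sketch below is an unweighted fit on hypothetical data in which every user reduces flagged comments by exactly 40%; the function name and data are ours, and any weighting or other modeling choices in the fits shown in Figure 11 are not specified here.

```python
from typing import Sequence


def slope_through_origin(x: Sequence[float], y: Sequence[float]) -> float:
    """Least-squares slope of y = b * x with the intercept constrained to zero."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    sxx = sum(xi * xi for xi in x)
    if sxx == 0:
        raise ValueError("x must contain at least one nonzero value")
    return sum(xi * yi for xi, yi in zip(x, y)) / sxx


# Hypothetical users: flagged comments in the month before the intervention,
# and the change (post minus pre) in flagged comments afterward.
pre_flagged = [5, 10, 20, 40]
change = [-2, -4, -8, -16]

slope = slope_through_origin(pre_flagged, change)
print(f"slope = {slope:.2f}, implied proportional reduction = {-slope:.0%}")
```

A slope of -0.40 on these axes means each user's flagged comments fall by 40% of their pre-intervention count, which is why a straight line through the origin corresponds to a uniform proportional change.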

Change in the number of predicted flagged comments after each intervention as a function of the number of predicted flagged comments pre-intervention. Red lines show linear models fit to data where intercepts are constrained to equal zero. Point sizes are proportional to the number of observations. Plot range does not include one outlier for Cleanbot 1 at

Last, we examine changes in overall engagement by separating users into three categories based on their commenting frequency in the month prior to the intervention. Infrequent commenters are defined as those who posted two or fewer comments in that period, moderate commenters made between three and fifteen comments that month, and frequent commenters posted sixteen or more times. These cutoffs were set based on the distribution of commenting frequency; infrequent commenters comprise the bottom 50% of all users, while frequent commenters make up the top 10%.
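The three categories follow directly from the stated cutoffs. A minimal sketch of the classification is shown below; it assumes every user in the sample posted at least one comment in the month before the intervention, and the function name and toy counts are hypothetical.

```python
from collections import Counter


def frequency_category(n_comments: int) -> str:
    """Classify a user by comments posted in the month before the intervention."""
    if n_comments < 1:
        # Assumption: only users with at least one pre-intervention comment are classified.
        raise ValueError("expected at least one comment")
    if n_comments <= 2:
        return "infrequent"   # two or fewer comments
    if n_comments <= 15:
        return "moderate"     # three to fifteen comments
    return "frequent"         # sixteen or more comments


# Hypothetical pre-intervention comment counts for eight users.
toy_counts = [1, 2, 2, 3, 7, 15, 16, 120]
category_counts = Counter(frequency_category(n) for n in toy_counts)
print(category_counts)
```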

Figure 12 plots the percent change in the number of comments from the month prior to the month following Cleanbot 1 (left panel) and Cleanbot 2 (right panel) as a function of incivility for users in each category. (Note that for infrequent commenters, the share of flagged comments is constrained to equal 0, 0.5, or 1, since these commenters posted two or fewer comments.) Comparing the left and right panels, we can clearly see the contrasting reduction and increase in comments following Cleanbot 1 and 2, respectively. In the case of Cleanbot 1, users from the three commenting frequency categories made similar proportional changes in engagement, and these changes varied little with respect to incivility. A greater decline in commenting frequency exists among the most uncivil commenters in the moderate or frequent commenter categories, but data scarcity in these groups makes any conclusion uncertain. Similarly, for Cleanbot 2, the increase in comments affects users across the incivility spectrum, though frequent commenters made the smallest proportional change to their commenting frequency.

Percent change in total comments posted from the month pre-intervention to the month post-intervention as a function of percent of flagged comments in the month before the intervention. Users are binned into three categories based on pre-intervention comment frequency indicated by colors: infrequent commenters (blue;

Discussion

This article examines how people modify their behavior in response to algorithmic interventions that flag incivility. We argue that Cleanbot’s detection system increases the salience of injunctive norms against incivility, thereby encouraging greater adherence to standards of civil conversation in online comment sections. By signaling when a comment violates platform norms, the system may also educate users about unacceptable forms of expression and generate broader spillover effects that reinforce community-wide commitments to civil discourse.

To examine these dynamics, we draw on a rare empirical setting that closely approximates a natural experiment: the introduction of two automated moderation systems on Naver News, South Korea’s largest news aggregation platform. Drawing on nearly 40 million comments posted by more than one million users, our data provide access to both filtered and unfiltered comments, as well as uniquely identified individual commenters, allowing us to observe behavioral changes surrounding the introduction of the interventions. We also use text classification models to identify comments that would have been flagged as uncivil (impolite or intolerant) prior to implementation of Cleanbot, allowing comparisons between pre- and post-intervention periods using a consistent measure of incivility.

Results show a decline in incivility following the AI interventions. After Cleanbot 1, the share of uncivil comments decreased by 11%; after Cleanbot 2, the decrease was 8%. Individual-level analyses demonstrate that behavioral changes were similar across users, regardless of previous engagement and incivility. This pattern is consistent with the argument that automated moderation increases the salience of injunctive norms of deliberation and respectful commenting, thereby contributing to a reduction of uncivil messages in the comment sections.

The effects of Cleanbot interventions on engagement were mixed: while overall commenting declined after Cleanbot 1, which primarily targeted impolite messages, comments increased after Cleanbot 2, which targeted both impolite and intolerant messages. Previous studies also show that the effects of moderation on engagement are not unidirectional. For instance, Kim et al. (2021) find that exposure to uncivil messages increases the toxicity of subsequent comments but does not significantly affect people’s willingness to comment. Similarly, Gervais (2015) shows that the extent of engagement varies depending on whether uncivil comments are like-minded. Some studies indicate that incivility can stimulate participation and engagement (e.g., Masullo et al., 2021; Muddiman & Stroud, 2017), while others find that an excessively negative tone can discourage user interactivity (Ziegele et al., 2014). Our study does not provide a definitive answer to these ongoing debates, but the mixed results between Cleanbot 1 and Cleanbot 2 suggest that moderation efforts that go beyond filtering impolite language to effectively address intolerant messages may, in some cases, foster greater user engagement.

The reductions in incivility nevertheless suggest that Cleanbot achieved Naver’s stated goal of fostering a more civil discussion environment. When situated in the broader literature on online incivility and moderation, these findings illustrate a notable advancement in what automated interventions can accomplish. Prior studies have shown that editorial and community-based moderation can improve deliberative quality (Stroud et al., 2015), but often at the cost of scalability and neutrality (da Silva, 2015). Human moderation requires continual subjective judgment and is limited by labor and consistency constraints, while user-driven moderation is susceptible to subjective biases (Gillespie, 2018). Cleanbot’s results suggest that large-scale algorithmic interventions may replicate some of the civility-promoting effects of human moderation (reducing impolite and intolerant language) without the same institutional or resource burdens.

Importantly, Cleanbot achieves this while preserving user choice: commenters can still post flagged content if they wish, and readers can choose whether to reveal such comments. Unlike previous moderation systems that operated post hoc, Cleanbot’s real-time flagging and visibility filtering—while giving both parties agency—appear to influence users’ own commenting norms, potentially through subtle educational or norm-priming effects that prior studies have rarely been able to observe empirically. The findings lend support to the idea that automated systems, when transparently deployed, can both enforce and communicate injunctive norms of civility at scale, fostering not only immediate reductions in incivility but also potentially longer-term normative learning among users.

These findings also highlight what remains unknown and thus suggest several avenues for future research. First, we still know relatively little about whether such interventions can sustain civility without discouraging participation or marginalizing dissenting voices. As scholars note, not all forms of incivility are equally harmful to democracy (Chen, 2017; Chen et al., 2019; Masullo, 2023; Rossini, 2022), and algorithmic filtering risks conflating passionate disagreement with hate or intolerance in ways that may inadvertently suppress political participation. Prior studies suggest that incivility can increase political interest (Brooks & Geer, 2007; Mutz & Reeves, 2005) or potentially raise individuals’ intentions to participate in politics (Chen & Lu, 2017). Future research should therefore examine how algorithmic moderation can distinguish between harmful incivility and productive disagreement, and whether such interventions can promote civility without dampening democratic engagement. In addition, the criteria for what constitutes incivility are not always clear-cut. Perceptions of incivility are subjective and may vary across individuals (Kenski et al., 2018), and filtering content deemed “uncivil” by AI systems may unintentionally reinforce existing societal biases. While our study provides strong evidence that Naver users generally alter their behavior in response to AI interventions, we are unable to identify which demographic groups are most responsive. It is crucial to examine whether comparable interventions function similarly in contexts with lower digital penetration or in political systems characterized by different commitments to, and constraints on, speech. 15

Beyond these issues, several open questions remain about the mechanisms and downstream consequences of algorithmic moderation. Because our data include only the content of main comments and not replies, we cannot fully assess how such interventions shape interactive conversations, where spillover effects may emerge among users engaged in direct back-and-forth interactions (Han et al., 2018). Nor can we disentangle the pathways through which interventions like Cleanbot operate; that is, whether users learn from being flagged through normative or educational cues or whether the visibility restriction itself motivates behavioral change. We also cannot observe how many people attempt a message and then, when flagged, decide to opt out completely. Understanding such disengagement is critical for evaluating the broader implications of automated moderation for democratic participation. Lastly, future work could compare the effects of automated and human feedback directly. While Cleanbot may be effective in part because it provides immediate responses, reactions from real individuals may be more influential in producing durable behavioral change. Addressing these questions will be essential for understanding how algorithmic interventions shape broader political outcomes, including knowledge, engagement, norm adherence, and offline discourse.

Supplemental Material

Supplemental material, sj-pdf-1-sms-10.1177_20563051261428916 for The AI Referee: How Online Interventions Shape Incivility and User Engagement in News Discussions by Georgia Kernell and Seonhye Noh in Social Media + Society

Footnotes

Acknowledgements

We thank participants at UCLA’s Institute for the Study of Hate, UCLA’s Department of Communication, the American Political Science Association Meetings 2024, and the University of Kansas’ Department of Political Science for helpful comments on previous drafts.

Ethical considerations

N/A.

Informed consent

N/A.

Author contributions

Both authors contributed to the analyses and writing of the manuscript. SN scraped all of the data from Naver and generated and ran the classification models. GK ran the aggregate and individual-level analyses. GK wrote the analysis section; SN wrote the literature review section, and both authors contributed to the remaining sections.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded in part by UCLA’s Institute for the Study of Hate.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.