Abstract

What does antisemitism look like in the context of political discussions on Twitter? In this article, we introduce the notion of platformed antisemitism. We first define it as a platform-agnostic concept, and then explore it through an exemplary case study of Twitter and its affordances by way of a mixed-methods analysis of discourse surrounding the 2018 US midterm election. Via qualitative textual analysis, we document how political discourse on Twitter is marred by antisemitic conspiracy theories that intersect with QAnon and Trump/MAGA support. Through quantitative content analysis of a sample of 99,062 tweets, we highlight a list of terms and hashtags most often associated with antisemitic speech on Twitter and show how specific affordances on the platform (quote-tweets, hashtags) amplify and/or diminish antisemitic speech. Using Lasso regression, we introduce an antisemitism classifier that can inform future efforts to detect antisemitic speech.

Keywords

Introduction

Discussions about race and religion on social media are often fraught with harassment and vitriol. Jewish people, a group with a history of weathering hate in the media and elsewhere, are often subjected to antisemitic communication online (Schwarz-Friesel, 2019). In 2019, the United Nations Special Rapporteur on freedom of religion or belief reported a worldwide rise in antisemitism (Shaheed, 2019). The worst antisemitic attack in US history, the mass shooting at the Tree of Life synagogue in Pittsburgh on 27 October 2018, was carried out by a person who had used the social media platform Gab to spread antisemitic communication before committing the atrocity (McIlroy-Young and Anderson, 2019).

In this study, we refer to pervasive anti-Jewish hate online as platformed antisemitism. We describe the particularities of the phenomenon conceptually and assess empirically how platformed antisemitism manifests on one exemplary platform and case study: Twitter. Researchers have found that major political events are often followed by surges in antisemitic content (Zannettou et al., 2020). Against this background, our study investigates how antisemitic speech manifested itself in political discourse on Twitter in the lead-up to the 2018 US midterm elections. We use content analysis of a sample of 99,062 tweets pertaining to the election to identify antisemitic themes and the terms and hashtags most prone to be used in antisemitic speech. To complement these quantitative results, we carry out a qualitative textual analysis of tweets, identifying themes and commonalities that arose in the discourse.

Conceptually, our study sheds light on platformed antisemitism, its definition, and its underpinnings. Empirically, we provide evidence on how platformed antisemitism operates on one platform that serves as an exemplary case study, Twitter. We document an antisemitism classifier and show how platformed antisemitism operates on Twitter by focusing on affordances (quote-tweets, hashtags) and themes.

Literature review

Hatred of Jews in America

Antisemitism, as Shaheed (2019) writes, is commonly understood as “prejudice against, or hatred of, Jews” (p. 4), which is packaged into conspiracy theories and stereotypes and honed through “religious doctrine and pseudoscientific theories” (p. 6). The International Holocaust Remembrance Alliance (IHRA) (2020: n.p.) has formulated a widely used working definition, referring to antisemitism as “a certain perception of Jews, which may be expressed as hatred toward Jews. Rhetorical and physical manifestations of antisemitism are directed toward Jewish or non-Jewish individuals and/or their property, toward Jewish community institutions and religious facilities.” Lipstadt (2019), describing contemporary antisemitism after the Holocaust, points out that “[w]hat should alarm us is that human beings continue to believe in a conspiracy that demonizes Jews and sees them responsible for evil” (p. x).

Antisemitism is a problem that permeates US society both on- and offline. In 2019, antisemitic hate crimes in the United States rose to their highest level since 2008 (Levin, 2020). Social media platforms are particularly popular vessels for antisemitic discourse (Ozalp et al., 2020; Zannettou et al., 2020). Public awareness of the problem, however, is uneven: a recent survey from the American Jewish Committee reports that almost half of Americans are unfamiliar with the term antisemitism (Mayer, 2020), and nearly 3 out of 10 Americans say “they are not sure how many Jews died during the Holocaust” (Alper et al., 2020: 6). Meanwhile, more than a third of American Jews say they have been a victim of antisemitism within the previous 5 years (Mayer, 2020). In 2019, the Pew Research Center (Doherty et al., 2019) found that 64% of Americans “say Jews face at least some discrimination” (p. 2), a share that had risen by 20 percentage points since 2016. Despite an apparent lack of familiarity with the particularities of anti-Jewish hate, a majority of Americans thus appears to recognize antisemitism as a serious issue.

Antisemitism, as some researchers argue, needs to be understood as a “cultural category deeply embedded in collective memory” (Schwarz-Friesel, 2019: 315). It permeates all aspects of society, finding expression on the left, right, and center of the political spectrum, and within Christianity as well as Islam (Fine and Spencer, 2017). In its most overt forms, antisemitism includes Holocaust denial propagated by state actors such as Iran and by neo-Nazis in the West (Litvak, 2006). Holocaust denial reaches into the core of right-wing thinking, for instance in Germany’s Alternative for Germany (AfD) party or the American “alt-right” (Gallaher, 2021; Salzborn, 2018). Nor is antisemitism a fringe issue: it has extended its reach into mainstream political organizations such as the British Labour Party under Jeremy Corbyn (Equality and Human Rights Commission, 2020). The theory of “new antisemitism” proposes that concealed versions of antisemitism use criticism of the State of Israel as a vehicle (Cousin and Fine, 2012). Importantly, criticizing Israel is not in and of itself antisemitic, but it can become “antisemitic under certain conditions” (Cousin and Fine, 2012: 177), depending on prejudice, stereotyping, and generalizations.

Hate speech and social media platforms

Hate speech conventionally refers to communication that disparages people on the basis of one or more identity factors. Even so, the concept resists easy classification (Fish, 2019; Sellars, 2016) and is “equivocal [in] that it denotes a family of meanings” (Brown, 2017: 562). The United Nations defines hate speech as “any kind of communication in speech, writing or behaviour, that attacks or uses pejorative or discriminatory language with reference to a person or a group on the basis of who they are” (p. 2), based on a range of identity factors. Building on this definition, antisemitic speech falls squarely within the parameters and family of meanings covered by the term hate speech.

Definitions of and repercussions for hate speech differ across national jurisdictions. For instance, in the United States, it may be legally permissible to make antisemitic statements in public. This differs from countries such as Germany, France, Austria, and Israel, where specific laws outright ban certain speech, for instance speech that denies the Holocaust (Carmi, 2008).

To protect their brands (Roberts, 2019) and their users, social media companies typically employ community standards and content moderation intended to weed out certain types of speech (Gillespie, 2018a; Nurik, 2019). Since social media platforms are private operators, they are free to remove any type and amount of speech they choose, including antisemitic speech. Companies such as Google’s parent company, Alphabet, have sought to create machine learning tools like Jigsaw’s Perspective API (Wakabayashi, 2017) to weed out toxicity. The solutionist premise of such proposals is that “there is nothing wrong with the tech that can’t be fixed by the tech” (Seymour, 2019: n.p.). But tools are not panaceas and can be riddled with bias. For instance, when auditing Perspective, the civil society organization AlgorithmWatch found that text that included “as a Black person” or “as a gay” had a higher chance of being considered toxic than phrases with other descriptors (Kayser-Bril, 2020). This illustrates that hate speech detection poses problems that are difficult to resolve. Simple lexicon-based approaches, for instance, may produce many false positives, because the same words are sometimes used in an antisemitic manner and sometimes not. Researchers therefore recommend approaches that are “based on the semantics of the problem rather than allowing them to be domain-agnostic” (Olteanu et al., 2017: n.p.), which is precisely what the present study sets out to do.

Online antisemitism in the Trump era

In the Trump era, antisemitic expression has appeared in communication from people in the highest echelons of the government, including within the president’s own tweets (Anspach, 2021). Researchers argue that this has contributed to a normalization of antisemitism (Tuters and Hagen, 2020). However, not all of Trump’s tweets about Jews are seen as antisemitic—some language he uses “humanizes Jewish oppression, demonstrates Holocaust remembrance, and advocates against the antisemitic hate crime” (Coe and Griffin, 2020: 7).

Antisemitism is especially virulent online (Schwarz-Friesel, 2019; Shaheed, 2019), marring mainstream social media platforms such as Twitter (Ozalp et al., 2020) as well as online video games (Ingersoll & Anti-Defamation League, 2020), and circulating largely unchecked on fringe platforms such as 4chan and Gab (Zannettou et al., 2020). For example, in the lead-up to the 2016 US presidential election, more than 800 journalists were targeted with antisemitic tweets (Anti-Defamation League, 2016).

Outlets for hate have had a foothold in online life for perhaps as long as people have had access to the Internet (Borgeson and Valeri, 2004; Daniels, 2009). Sometimes disguised rather than openly antisemitic, cloaked websites and online communities may “call into question the basis of what we say we know about ethnicity, racism and racial equality” (Daniels, 2009: 661), implicitly endorsing antisemitism. Research has found that major political events such as the 2016 US presidential election and the 2017 Charlottesville “Unite the Right” rally are often followed by surges in the spread of antisemitic content online, which makes its way from fringe forums onto mainstream platforms (Zannettou et al., 2020).

We focus on Twitter in this study because, for a long time, the platform has been defined by its “anything-goes approach” (p. 64) and has served as a critical avenue for white nationalists and antisemites to mainstream their ideas and shift the Overton window of acceptability (Daniels, 2018). Seymour (2019: n.p.) goes as far as suggesting that “something about social media is either incipiently fascistic, or particularly conducive to incipient fascism.” Meanwhile, the relationship between Twitter and white supremacy has been described as a “love story” (Daniels, 2017: n.p.). Panizo-Lledot et al. (2019) studied alt-right forums during the US midterms, identifying white supremacist, antisemitic, and Islamophobic themes in the discourse. With these prior findings in mind, the 2018 US midterms constitute a political event of sufficient magnitude that one could reasonably expect antisemitic speech to emerge. We ask the following research questions about both the nature and the semantics of antisemitic discourse on Twitter during the 2018 contest:

RQ1. Of a purposively selected list of terms, which (a) yield the highest shares of antisemitic discourse, and (b) are most predictive of a tweet being antisemitic?

RQ2. What antisemitic themes manifest themselves in the political discourse on Twitter around the 2018 US midterm elections?

Platformed antisemitism

When discussing antisemitism, it is crucial to locate it within a larger system of racisms (Cousin and Fine, 2012). The relationship between racism and antisemitism, however, is not simple to conceptualize. Both terms center on hostility toward specific groups in society (Banton, 1992). Racism originally formed around the idea of racial antisemitism (Bernasconi, 2021), though contemporary understandings of racism typically focus on anti-Black racism. Fanon (1967) once referred to Black people and Jewish people as “brothers in misery,” seeing both antisemitism and racism as indicative of “the same collapse, the same bankruptcy of man” (p. 86). Du Bois (1952), upon visiting Germany under Nazi rule, reflected on his impressions of the Nazi terror against Jewish people: “It had never occurred to me until then that any exhibition of race prejudice could be anything but color prejudice” (p. 251). Contemporary readings of antisemitism see it as a “form of racism” or “religious discrimination” (Moerdler, 2017: 1282). Rich (2016) summarizes that “antisemitism differs from other forms of racism because it uses conspiracy theories to claim that Jews are a powerful, controlling influence in society” (pp. 201–202). In this study, we build upon Matamoros-Fernández (2017), who coined the term platformed racism, which (1) casts platforms as “amplifiers and manufacturers of racist discourse by means of their affordances and users’ appropriation of them” (p. 940), and (2) argues that platforms reproduce inequality through their content policies and the way these rules are enforced (Matamoros-Fernández, 2017). The notion of platform, in the context of this study, refers to “sites and services that host, organize, and circulate users’ shared content or social exchanges for them” (Gillespie, 2018b: 254).

We take this as a point of departure to propose the concept of platformed antisemitism, acknowledging that racism and antisemitism, although related, are distinct from one another. Platformed antisemitism is a specific form of platformed racism that targets Jewish people and places a strong emphasis on conspiracy theories and tropes. It resembles platformed racism in that it depends for its distribution on platforms and on the affordances that allow it to flourish, but it differs in its subject matter and its specific forms of discrimination. We understand platformed antisemitism as the particular forms of antisemitic discourse, ridden with conspiracy theories, prejudice, stereotypes, Holocaust denial, and more veiled forms of hatred aimed at Jews, that are amplified by and move within and across platforms, on the fringes and in the mainstream.

Platformed antisemitism often manifests during major hate events on the Internet. For instance, during the sexist attacks that defined #Gamergate, the harassment campaigns waged against Los Angeles-based feminist media critic Anita Sarkeesian included instances of platformed antisemitism such as slurs and caricatures (Braithwaite, 2016). Platformed antisemitism can also be discerned in semantic developments that originate on specific social media platforms and later spread across a range of platforms. For example, triple parentheses placed around a name, as in (((name))), were and continue to be used by antisemites on social media platforms to call attention to a person’s Jewishness (Tuters and Hagen, 2020). These parentheses have taken on a life of their own, at times decoupled from an antisemitic context, a phenomenon that Tuters and Hagen (2020) describe as a floating signifier. Thus, even when users do not know what the parentheses originally signified, their usage normalizes and propagates antisemitic symbolism.

Affordances of platformed antisemitism: the case of Twitter

To understand platformed antisemitism, it is imperative to investigate the particular social media affordances that are used toward its ends. The concept of affordances in communication research generally refers to the range of opportunities and actions one can potentially derive from something. According to Davis and Chouinard (2016), affordances are “the functions attached to a given object—what, potentially, that object affords” (p. 242). Social media, for instance, afford human interaction (Evans et al., 2017). Individual features such as likes, hashtags, and retweets can also be understood as affordances (Bucher and Helmond, 2018). Ichau et al. (2019), studying representations of Jewishness on Instagram through hashtags such as #jew, #jews, and #jewish, identified antisemitic memes propagating stereotypes. Indeed, hashtags are relevant affordances, as they can “create an interest-based micro-network” (Ozalp et al., 2020: 8). They can be understood as “tools of activating certain interpretative frames” (Lindgren, 2019: 421). In a similar vein, researchers have argued that MAGA, the acronym of President Trump’s 2016 campaign slogan “Make America Great Again,” has served, when used as the hashtag #maga, as an affordance for community building among white supremacist organizations (Eddington, 2018).

Twitter as a platform allows users to retweet or quote-tweet other people’s posts. Retweeting is, in essence, “the Twitter-equivalent of email forwarding where users post messages originally posted by others” (boyd et al., 2010: n.p.). Retweeting allows other people to join threads and expands visibility beyond single publics. Besides simple retweets, the platform also affords quote-tweets, a feature with which users can add their own context or commentary to a retweet (Molyneux and Mourão, 2019). The possibility of adding commentary when resharing content as a quote-tweet has been described as an “understudied, yet common” (Wojcieszak et al., 2021: n.p.) phenomenon. Retweets have been shown to play an important role in the formation of alt-right networks for “identity construction” (Shahin and Ng, 2020: 2425). Against this background of affordances (tweets, hashtags, and retweets/quote-tweets) with the potential to allow platformed antisemitism to flourish, we ask the following three additional research questions:

RQ3. For tweets that are antisemitic, which hashtags are used most frequently?

RQ4. Which hashtags have the highest proportion of antisemitic usage?

RQ5. What dynamics can be discerned when considering antisemitism in original tweets as well as retweets?

These questions help us identify the most frequent hashtags in content coded as antisemitic (RQ3), the hashtags with high percentages of antisemitic associated tweets (RQ4), and differences in the prevalence of antisemitism between a set of original tweets and a set that also includes retweets (RQ5). Contrasting a dataset of original tweets with one of original tweets plus retweets/quote-tweets is important: the context that a quote-tweet adds may reframe an originally antisemitic tweet so that, when the original tweet and the comment are considered together, it is no longer antisemitic in its potential intent.

Methodology

Content analysis

We sought to capture political conversation on Twitter as it unfolded in the lead-up to the 2018 US midterm election. To construct our dataset, we followed a multi-step procedure. Starting from the hashtags #2018midterms, #vote, #bluewave2018, and #redwave2018, we first identified 228 election-related hashtags by way of purposive and snowball sampling. Delineating conversations by means of hashtags in this manner is an established way of conducting research on political conversations on Twitter (Bradshaw et al., 2020). In a second step, we used Tweepy, a Python library for accessing the Twitter API, to collect a population of 5,843,282 tweets pertaining to our hashtags, posted between 31 August and 17 September 2018. Third, we compiled a list of 50 terms hypothesized to be used in an antisemitic manner. We drew these terms from known antisemitic forums on 4chan and 8chan, direct expert consultation with the Anti-Defamation League (ADL), and the literature on antisemitism (UNESCO & OSCE, 2018). Of the more than 5 million tweets collected, 99,062 (1.7%) contained one or more of these hypothesized antisemitic terms. We conducted a content analysis of these 99,062 tweets to adjudicate whether each specific term usage was indeed antisemitic, since research recommends combining computational and manual methods in big data content analysis to preserve contextual nuance (Lewis et al., 2013). Our unit of analysis was a single tweet. Of the 99,062 tweets in our sample, only 14.5% are original tweets, whereas 85.5% are retweets (including quote-tweets, to which users may have appended their own commentary). Among original tweets, 17.3% were human-coded as antisemitic, whereas among tweets plus retweets, 25% were human-coded as antisemitic.
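The term-based filtering step can be sketched as a whole-word, case-insensitive lexicon match. This is a minimal Python sketch under stated assumptions, not the authors’ pipeline: the five entries in `TERMS` are a hypothetical subset of the 50-term list, and the tweet records with `text` and `is_retweet` keys are an assumed data layout. Note that the filter keeps “Soros donates millions to Democrats!”, which the coding rules treat as not antisemitic; this is why every matched tweet still had to be coded by hand.

```python
import re

# Hypothetical subset of the 50 hypothesized terms (the full list is not reproduced here).
TERMS = ["soros", "rothschild", "zionist", "nwo", "goy"]

# Whole-word, case-insensitive match, so "goy" does not fire on "Goya".
TERM_PATTERN = re.compile(r"\b(" + "|".join(map(re.escape, TERMS)) + r")\b", re.IGNORECASE)


def matched_terms(text):
    """Return the set of lexicon terms that occur in a tweet's text."""
    return {m.lower() for m in TERM_PATTERN.findall(text)}


def filter_sample(tweets):
    """Keep only tweets containing at least one lexicon term."""
    return [t for t in tweets if matched_terms(t["text"])]


def share(tweets, predicate):
    """Percentage of tweets for which predicate(tweet) is true (0.0 if empty)."""
    if not tweets:
        return 0.0
    return 100.0 * sum(1 for t in tweets if predicate(t)) / len(tweets)


# Toy population; "is_retweet" also covers quote-tweets, as in the study.
population = [
    {"text": "Soros donates millions to Democrats!", "is_retweet": False},
    {"text": "Go vote on Tuesday! #vote", "is_retweet": False},
    {"text": "RT: a history of the Rothschild bank", "is_retweet": True},
    {"text": "Goya painted this", "is_retweet": False},
]
sample = filter_sample(population)
print(len(sample), "of", len(population), "tweets matched")
print("retweet share in sample:", share(sample, lambda t: t["is_retweet"]))
```

The same `share` helper, applied to human codes instead of `is_retweet`, yields figures such as the share of antisemitic tweets among originals versus among originals plus retweets.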

In accordance with what we conceptually outlined in the literature review, human coders assessed whether a tweet carried either (1) a reference to a specific Jewish person as well as a trope, prejudice, or conspiracy, or (2) a reference to Jewish people writ large in combination with a trope, prejudice, or conspiracy. Coders were asked to assess whether a Jewish person seeing a tweet would reasonably perceive it as antisemitic. Negatively charged references to Jewish people or a particular Jewish person (e.g. “Soros donates millions to Democrats!” or “the Netanyahu government are corrupt!”) were considered harsh but not antisemitic political criticism; if such references also invoked antisemitic tropes, conspiracies, or generalizations about Jewish people (e.g., “ . . . the soros dream to your state of a country submissive to globalism and control” or “deep state cartel zionist”), they were classified as antisemitic. One co-author and one student research assistant coded 1000 tweets from the sample for training purposes and then a second set of 1000 tweets, which yielded sufficient intercoder agreement (Krippendorff’s α = .86). Each set of 1000 tweets represents roughly 1% of the sample. Research suggests drawing pretest content from a separate sample to assess intercoder reliability (Lacy et al., 2015), a practice that was not available to us. Therefore, another colleague provided tie-breaking votes to definitively resolve ambiguous cases during pretests. The rest of the sample was split evenly between the first and second coder, who carried out the coding between 4 and 21 May 2020. This manually annotated dataset forms the basis for the further analyses in our study.
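For readers who want to see how a reliability figure like the reported α is obtained, Krippendorff’s alpha for two coders, nominal labels (antisemitic / not antisemitic), and no missing codes can be computed from the coincidence matrix as alpha = 1 - (n - 1) * sum_{c != k} o_ck / sum_{c != k} n_c * n_k, where n is the number of pairable values. The Python sketch below implements only that special case; the article does not say which software the authors used, and the toy codes are invented.

```python
from collections import Counter
from itertools import product


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for two coders, nominal labels, no missing values."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of units")
    # Coincidence matrix: every unit contributes both ordered pairs of its two values.
    o = Counter()
    for a, b in zip(coder_a, coder_b):
        o[(a, b)] += 1
        o[(b, a)] += 1
    n = 2 * len(coder_a)  # number of pairable values
    # Marginal totals n_c per category.
    n_c = Counter()
    for (c, _k), count in o.items():
        n_c[c] += count
    observed = sum(count for (c, k), count in o.items() if c != k)
    expected = sum(n_c[c] * n_c[k] for c, k in product(n_c, repeat=2) if c != k)
    if expected == 0:
        raise ValueError("alpha is undefined when every value falls in one category")
    return 1.0 - (n - 1) * observed / expected


# Toy codes (1 = antisemitic, 0 = not) from two coders on four tweets.
alpha = krippendorff_alpha_nominal([1, 1, 0, 0], [1, 0, 0, 0])
print(round(alpha, 3))  # 0.533
```

Perfect agreement returns 1.0; chance-level agreement moves the value toward 0.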

Analytical pursuit

The analysis consisted of two components: Quantitative analysis via logistic regressions based on human annotations to yield terms and hashtags most often associated with antisemitic speech, and qualitative textual analysis to parse the content of tweets—what references were made, topics that were touched upon, and more.

First, to analyze which terms were predictors of antisemitic speech, we ran logistic regression analyses with the human-coded antisemitism classification as the dependent variable. Predictor variables consisted of 50 terms identified by the researchers as often used in an antisemitic fashion, entered into the model as dummy variables. In line with recent developments in communication research, we heed recommendations to use penalized regressions (Hindman, 2015), which are typically used in computer science and machine learning (e.g. Beltran et al., 2021). Regression models such as the one used here, the least absolute shrinkage and selection operator (Lasso), are “especially useful when the number of available variables for a regression model is very high” (Scherr and Zhou, 2020: 205). The Lasso improves model interpretability by reducing the dimensionality of the model. It shrinks regression coefficients toward zero and, unlike ridge regression, sets some of them exactly to zero. It thereby performs variable selection, removing redundant predictors that add no explanatory power.
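To make the shrinkage behaviour concrete, the sketch below fits an L1-penalized logistic regression on a tiny hypothetical set of term dummies. It minimizes the mean log-loss plus lam * sum(|beta_j|) with an unpenalized intercept, which is the objective glmnet uses when its elastic-net mixing parameter is 1. However, it solves that objective by proximal gradient descent with soft-thresholding rather than glmnet’s coordinate descent, so it illustrates the technique, not the authors’ code. With a large penalty every coefficient is exactly zero; with a small one, only the informative dummy survives.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def soft_threshold(x, t):
    """Proximal operator of t*|x|: shrink x toward zero, to exactly zero if |x| <= t."""
    if x > t:
        return x - t
    if x < -t:
        return x + t
    return 0.0


def lasso_logistic(X, y, lam, step=0.5, iters=5000):
    """L1-penalized logistic regression via proximal gradient descent (ISTA).

    Minimizes mean log-loss + lam * sum(|beta_j|); the intercept is not penalized.
    X is a list of rows of 0/1 term dummies, y a list of 0/1 codes.
    step=0.5 is small enough for this toy design; larger designs may need a smaller step.
    """
    n, p = len(X), len(X[0])
    b0, beta = 0.0, [0.0] * p
    for _ in range(iters):
        # Residuals: predicted probability minus observed code.
        resid = [sigmoid(b0 + sum(b * x for b, x in zip(beta, row))) - yi
                 for row, yi in zip(X, y)]
        grad0 = sum(resid) / n
        grad = [sum(r * row[j] for r, row in zip(resid, X)) / n for j in range(p)]
        # Plain gradient step for the intercept; gradient step followed by
        # soft-thresholding for the penalized coefficients.
        b0 -= step * grad0
        beta = [soft_threshold(b - step * g, step * lam) for b, g in zip(beta, grad)]
    return b0, beta


# Hypothetical data: column 0 is an informative term, columns 1-2 are unrelated to y.
X = [[1, 1, 1], [1, 0, 1], [1, 1, 0], [1, 0, 0],
     [0, 1, 1], [0, 0, 1], [0, 1, 0], [0, 0, 0]]
y = [1, 1, 1, 0, 0, 0, 0, 1]

for lam in (0.5, 0.02):
    b0, beta = lasso_logistic(X, y, lam)
    print(lam, [round(b, 3) for b in beta])
```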

Using the R package glmnet (Friedman et al., 2010), we performed 10-fold cross-validation with deviance as the evaluation criterion. We chose the shrinkage parameter λ according to the one-standard-error rule, that is, the value of λ that yields the most regularized model whose cross-validation error is within one standard error of the minimum. We then ran a logistic regression using the predictors selected by the Lasso (X_p where |β_p| > 0 at λ = lambda.1se).
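The λ choice described here can be written out directly. In glmnet, `cv.glmnet` reports this value as `lambda.1se`; the Python sketch below re-implements the one-standard-error rule only for illustration, and the λ grid, cross-validated deviances, and standard errors are invented numbers. It also shows the follow-up step of keeping only the terms whose coefficient is nonzero.

```python
def lambda_1se(lambdas, cv_mean, cv_se):
    """One-standard-error rule: the largest lambda (most regularized model)
    whose mean CV error is within one standard error of the minimum."""
    i_min = min(range(len(lambdas)), key=lambda i: cv_mean[i])
    threshold = cv_mean[i_min] + cv_se[i_min]
    return max(lam for lam, err in zip(lambdas, cv_mean) if err <= threshold)


def selected_terms(terms, coefs):
    """Terms with a nonzero Lasso coefficient at the chosen lambda."""
    return [t for t, b in zip(terms, coefs) if b != 0.0]


# Invented cross-validation results (mean deviance and its standard error per lambda).
lambdas = [0.1, 0.05, 0.02, 0.01, 0.005]
cv_mean = [1.10, 0.95, 0.90, 0.88, 0.89]
cv_se = [0.03, 0.03, 0.03, 0.03, 0.03]

best = lambda_1se(lambdas, cv_mean, cv_se)
print(best)  # 0.02
print(selected_terms(["soros", "nazi", "vote"], [4.1, -0.5, 0.0]))
```

Preferring 0.02 over the error-minimizing 0.01 trades a negligible loss in fit for a sparser, more interpretable set of terms.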

Second, we performed a qualitative textual analysis of all tweets. We employed coding techniques from grounded theory research such as open and axial coding to parse through the data (Corbin and Strauss, 2008). Open coding refers to a process in which researchers form associations and come up with abstract terms and heuristics to describe phenomena and observations in the data. Axial coding refers to interrelating these open codes in a next step, finding commonalities and dominant categories, and working toward the grouping of the most prevalent dimensions relevant to the research question. Constant comparison, a strategy that emphasizes the continuing re-evaluation of coding schemata, allowed us to identify three themes representative of the discourse, which we present in the results.

Results

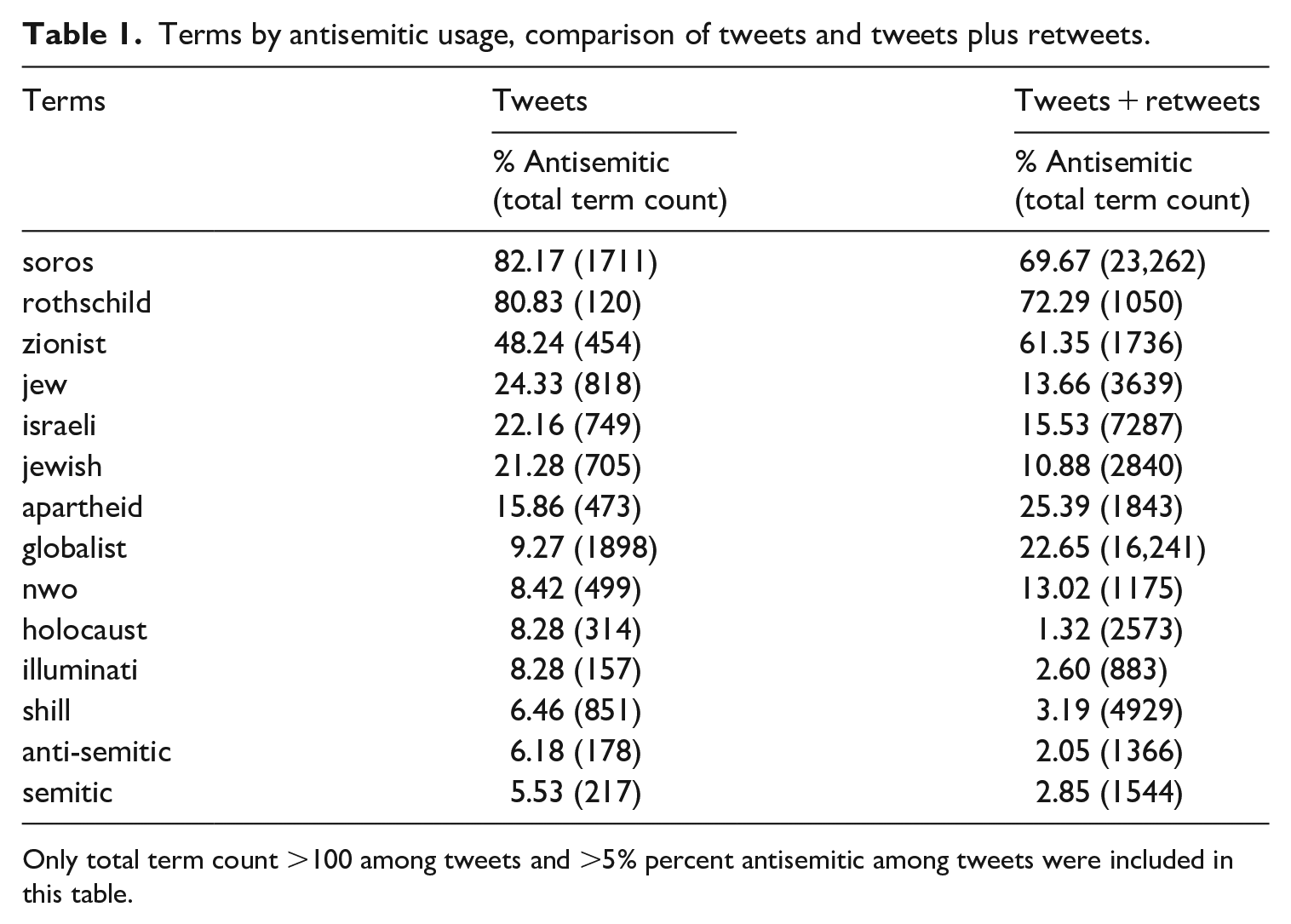

To answer RQ1a, we first conducted a descriptive analysis of the incidence of antisemitic tweets across our sample. Among 14,379 original tweets, 17.3% were coded as antisemitic in the human coding process. We then assessed which terms had the highest share of antisemitic use. The words “soros” and “rothschild” had shares of more than 80% antisemitic use. “Zionist” yielded almost 50% antisemitic use. “Jew,” “jewish,” and “israeli” ranged between 20% and 25% antisemitic use, while “apartheid” held at slightly above 15%. Terms such as “holocaust” (8.28%) and “nwo” (8.42%), the latter an abbreviation referring to the antisemitic “new world order” conspiracy theory, yielded relatively low shares of antisemitic use (Table 1).

Table 1. Terms by antisemitic usage: comparison of original tweets and tweets plus retweets.

Only terms with a total count >100 among tweets and >5% antisemitic use among tweets were included in this table.
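The per-term shares in Table 1 amount to a grouped proportion. The sketch below assumes that each coded tweet is represented as the set of lexicon terms it contains plus its human code, and that a tweet containing several terms counts toward each of them; the article does not spell out how multi-term tweets were handled. The default thresholds mirror the table’s inclusion rule (count > 100, share > 5%).

```python
from collections import defaultdict


def term_shares(coded_tweets, min_count=100, min_share=5.0):
    """Share (%) of antisemitic use per term, filtered by the Table 1 inclusion rule.

    coded_tweets: iterable of (terms, is_antisemitic) pairs, where `terms` is the
    set of lexicon terms in a tweet and `is_antisemitic` is the human code.
    A tweet containing several terms counts toward each of them (an assumption).
    """
    total = defaultdict(int)
    flagged = defaultdict(int)
    for terms, is_antisemitic in coded_tweets:
        for term in terms:
            total[term] += 1
            if is_antisemitic:
                flagged[term] += 1
    kept = []
    for term, count in total.items():
        pct = 100.0 * flagged[term] / count
        if count > min_count and pct > min_share:
            kept.append((term, pct))
    # Highest share first, as in the table.
    return sorted(kept, key=lambda item: item[1], reverse=True)


# Toy data; min_count is lowered so the tiny example is not filtered out entirely.
toy = [({"soros"}, True), ({"soros"}, True), ({"soros", "holocaust"}, False), ({"holocaust"}, False)]
print(term_shares(toy, min_count=1))
```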

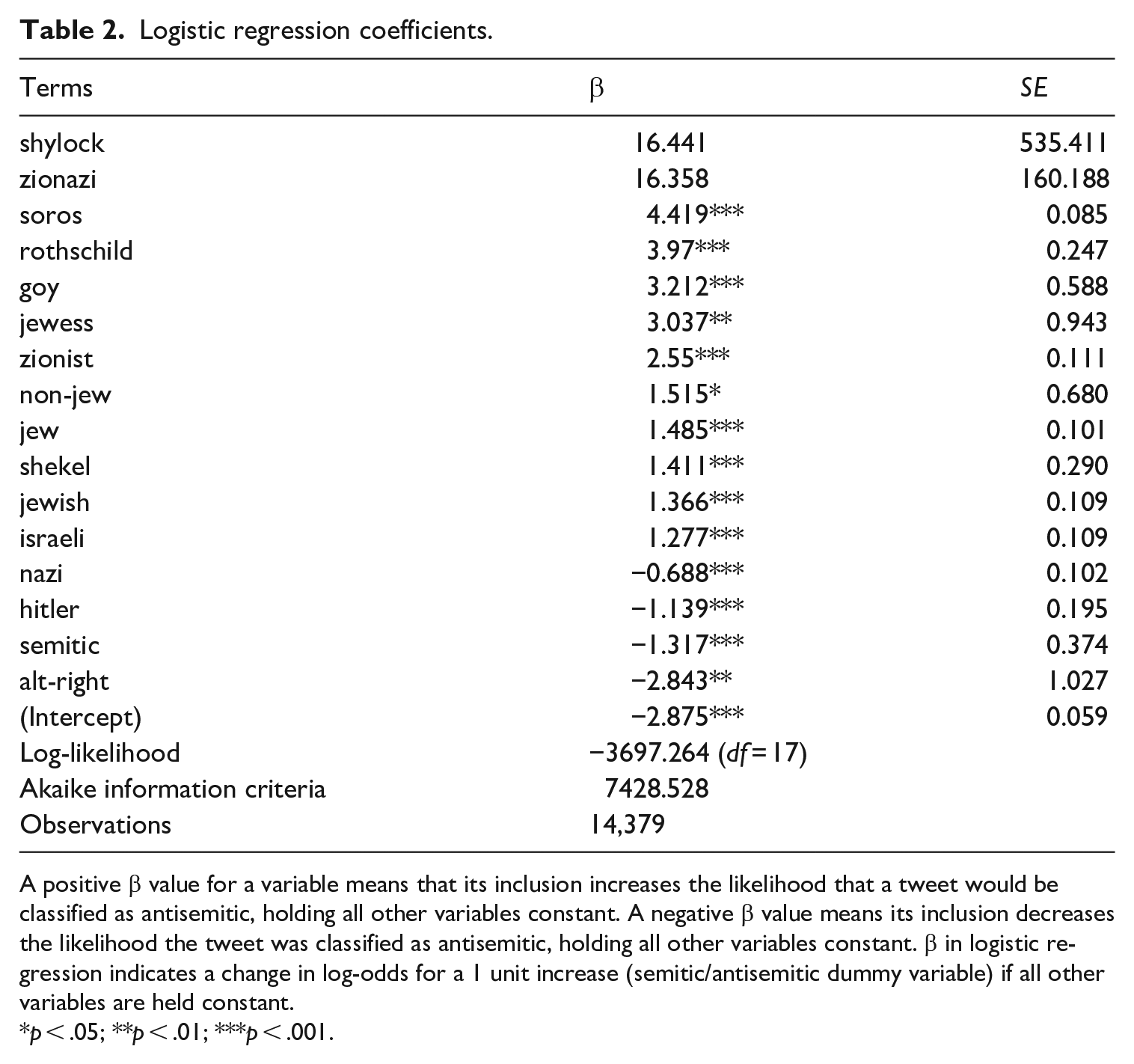

To answer RQ1b, we first applied the Lasso and then ran a logistic regression on the variables selected by the Lasso, using the sample of 14,379 original tweets. The presence of the term “soros” in a tweet was the strongest predictor (β = 4.419, SE = 0.085) of a tweet being antisemitic. The second strongest predictor was “rothschild” (β = 3.97, SE = 0.247), followed by “goy” (β = 3.212, SE = 0.588), “jewess” (β = 3.037, SE = 0.943), and “zionist” (β = 2.55, SE = 0.111). The inclusion of the terms “nazi” (β = −0.688, SE = 0.102), “hitler” (β = −1.139, SE = 0.195), “semitic” (β = −1.317, SE = 0.374), and “alt-right” (β = −2.843, SE = 1.027) decreased the likelihood of a tweet being classified as antisemitic (see Table 2 for the full list of regression coefficients).

Table 2. Logistic regression coefficients.

A positive β value for a variable means that its inclusion increases the likelihood that a tweet would be classified as antisemitic, holding all other variables constant. A negative β value means that its inclusion decreases that likelihood, holding all other variables constant. In logistic regression, β indicates the change in log-odds for a 1-unit increase in the predictor (here, a term dummy switching from absent to present), with all other variables held constant.

*p < .05; **p < .01; ***p < .001.
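Because each β is a change in log-odds for a term dummy, exponentiating it gives an odds ratio, which is often easier to read. The sketch below does this for the coefficients reported in the text (a subset, not the full Table 2). For example, exp(4.419) ≈ 83, so the presence of “soros” multiplies the odds of a tweet being coded antisemitic by roughly 83, holding the other terms constant, while negative coefficients yield odds ratios below 1.

```python
import math

# Log-odds coefficients as reported in the text (a subset of Table 2).
COEFFICIENTS = {
    "soros": 4.419, "rothschild": 3.97, "goy": 3.212, "jewess": 3.037,
    "zionist": 2.55, "nazi": -0.688, "hitler": -1.139, "semitic": -1.317,
    "alt-right": -2.843,
}


def odds_ratio(beta):
    """Multiplicative change in the odds when a term dummy goes from 0 to 1."""
    return math.exp(beta)


for term, beta in sorted(COEFFICIENTS.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{term:>10}  beta={beta:+.3f}  OR={odds_ratio(beta):.3f}")
```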

This logistic regression model can be used to predict the probability that a tweet is classified as antisemitic. For example, on the basis of the model, the following tweet would be predicted as having a 2.8% chance of being antisemitic: #vote against racist holocaust denying types, trump murdered 3000 people twice, just like any nazi did, pretending no one died, it’s easier to pretend killing thousands isn’t real. #republicanracist neglect, gop is failed in any leadership skills.

To compare, the model would predict this tweet to have an 82.4% chance of being antisemitic: alex soros #evilliveshere #embedded.. son of globalist #nwo #agenda30 george soros making freinds #ussenate .@realdonaldtrump @transformativev @hulkanator11 @patriotnewschan @qblueskyq @freenaynow @itsshannonz @peacesells7 @ironmaidenman1 @kerryjrn @ilobbyist #maga
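Such predictions come from the logistic link, p = 1 / (1 + exp(-(β0 + Σ β_j x_j))), applied to a tweet’s term dummies. The fitted intercept β0 and most coefficients of the full model are not given in the text, so the sketch below uses a hypothetical intercept and only a few reported coefficients. Its outputs illustrate the mechanics and will not reproduce the 2.8% and 82.4% figures. Because the predictors are presence dummies, a term that appears twice (as “soros” does in the second example) counts once, which the sketch reflects by taking a set of terms.

```python
import math

# A few coefficients reported in the text; the full model has more terms.
COEFFICIENTS = {"soros": 4.419, "rothschild": 3.97, "zionist": 2.55,
                "nazi": -0.688, "hitler": -1.139}
# Hypothetical intercept: the fitted intercept is not reported in the text.
INTERCEPT = -3.0


def predict_probability(terms_present):
    """P(antisemitic) under a logistic model with presence dummies.

    terms_present: set of lexicon terms found in the tweet (each counts once).
    Terms without a coefficient here contribute nothing.
    """
    eta = INTERCEPT + sum(COEFFICIENTS.get(t, 0.0) for t in terms_present)
    return 1.0 / (1.0 + math.exp(-eta))


print(round(predict_probability({"nazi", "hitler"}), 3))
print(round(predict_probability({"soros"}), 3))
```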

RQ2 inquired what antisemitic themes manifest in the political Twitter discourse around the 2018 US midterm elections. Our qualitative, textual, in-depth manual analysis revealed the following themes: (1) Age-old antisemitic tropes and conspiracy theories rehashed, (2) discourse that dips into the debate around Zionism, and (3) term usage that diverged from expectations.

The first theme we identified pertained to age-old antisemitic tropes and conspiracy theories. Tweets promoted conspiracy theories that proclaimed that prominent Jewish leaders such as George Soros or the Rothschild family would seek to impact and influence events to gain control over the world. This ties in with prominent conspiracy theories such as those promoted in the Protocols of the Learned Elders of Zion, a Russian propaganda pamphlet published in the early 1900s which posits that Jewish people aim for world domination (Farkas et al., 2018). Antisemitic tweets concerning George Soros were the most common refrains in the dataset (Table 1). He was called “the face of the deep state” and a “#puppetmaster,” among other slurs, and repeatedly accused of controlling the Democratic party, paying protestors, paying members of Black Lives Matter, and paying to manipulate voting machines, all with the intention of establishing a “globalist” “new world order” by overthrowing democracies with “immigration” and “open borders.” Particularly blatant conspiracy theories included accusations that Soros himself had been a Nazi collaborator and that the perpetrator who murdered Heather Heyer at counterprotests to the 2017 white supremacist rally in Charlottesville was sponsored by Soros.

The second theme we identified pertains to the debate around Zionism, which surfaced as a wedge issue that is both highly contested and highly partisan. As our prior analyses reveal, “zionist” both appears in a high percentage of antisemitic discourse and is highly predictive of a tweet being antisemitic. Antisemitic usage occurred predominantly in anti-Trump tweets. For example: “donaldtrump is a zionist puppet who is being told what to do by bibi nutanyahoo” and “don used #americafirst as a guise for his #israelfirst agenda. he is only interested in delivering for his zionist sugar daddies.” Notably, antisemitic usage of “zionist” was common in content related to QAnon. For instance: “the revolution against the zionists is coming. everyone is awake now and we know who you are . . . #wwg1wga.”

Given that QAnon is based upon racist and anti-elite conspiracies (Zuckerman, 2019) and emphasizes a battle of “good” against “evil,” this is perhaps not all that surprising.

The third theme that emerged was the unexpected usage of particular terms. For instance, we expected terms such as “sieg” and “heil” or “Aryan” to be used in a derogatory manner toward Jewish people; instead, they were appropriated, for instance, to mock Donald Trump or Republicans. Tweets such as “SIEG HEIL, MEIN FÜHRER! #NAZIS” revealed their satirical nature once we considered contextual information on individual user profiles. As outlined before, usage of terms such as “nazi,” “hitler,” “semitic,” or “alt-right” in fact decreased the likelihood of a tweet being antisemitic. This was because the majority of tweets with these terms called for followers to “rise up & resist” Nazism, accused others of being like Hitler or a Nazi, or refuted accusations (e.g. “Maga is not the KKK or the Nazis”).

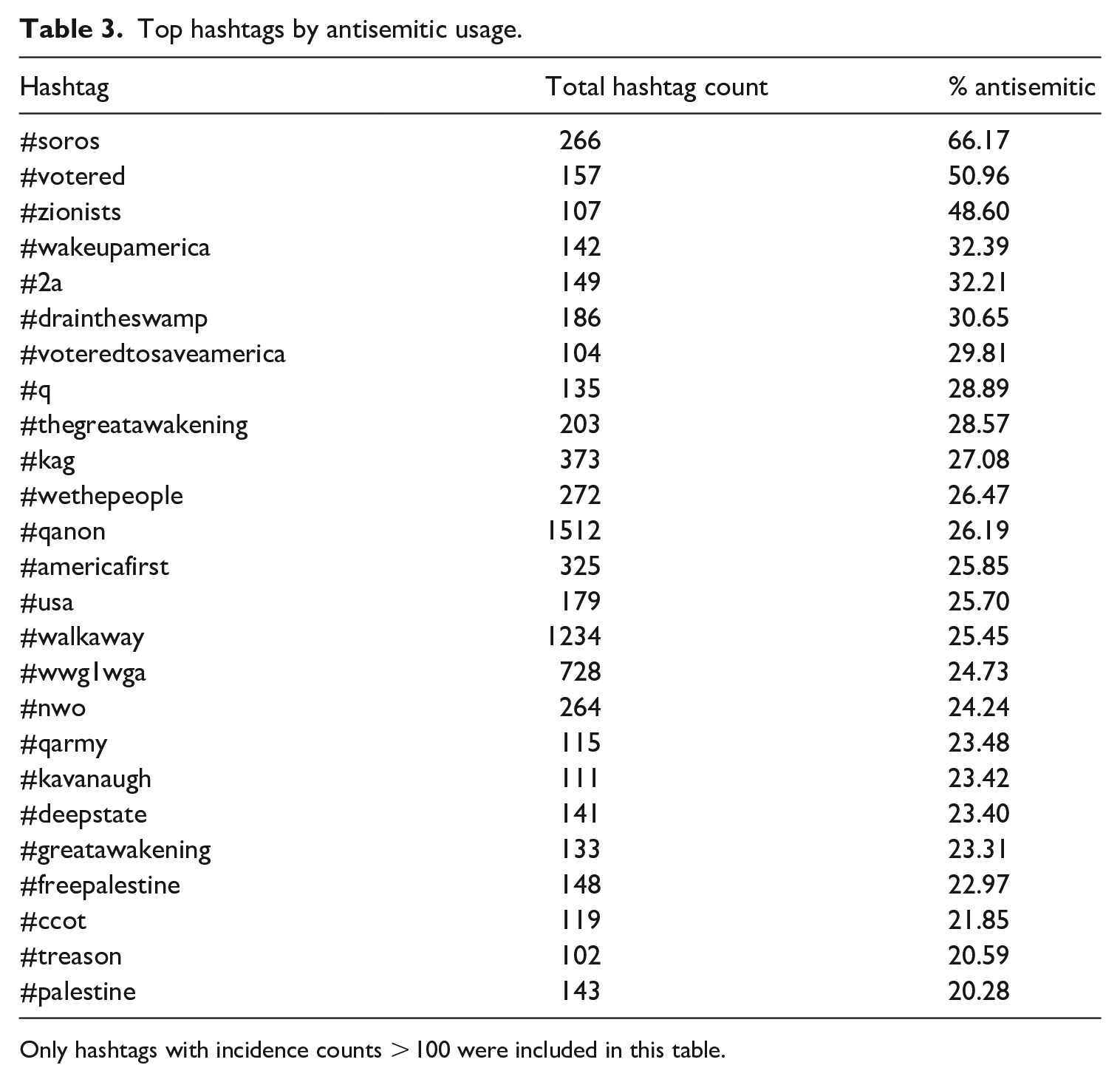

RQ3 asked which hashtags were used most frequently among the tweets labeled as antisemitic. Among antisemitic tweets, the most frequently used hashtags were #maga (680), #qanon (396), #walkaway (314), #bds (227), #wwg1wga (180), #soros (176), #trump (166), #kag (101), #americafirst (84), and #tcot (82). These hashtags indicate that, within this antisemitic subset, a significant share of speech congregated around MAGA/Trump support (#maga, #walkaway, #trump, #kag, #americafirst, #tcot), around the conspiracy theories surrounding QAnon (#qanon, #wwg1wga), and around antisemitic dog whistles relating to George Soros (#soros). The list also included the hashtag #bds, referring to the Boycott, Divestment and Sanctions movement, which protests Israeli occupation of Palestine and promotes economic boycotts of Israel and Israeli products.

RQ4 asked which hashtags had the highest proportion of antisemitic usage among all tweets (not only among those classified ex-ante as antisemitic). Only two hashtags, #soros and #votered, showed antisemitic usage above 50%. Other hashtags with high percentages of antisemitic content (>30%) were #zionists (48.6%), #wakeupamerica (32.4%), #2a (32.2%) and #draintheswamp (30.7%). Content pertaining to the QAnon conspiracy theory also appeared among the top hashtags by antisemitic use, for instance #q (28.9%), #thegreatawakening (28.6%), #qanon (26.2%), #wwg1wga (24.7%) or #qarmy (23.5%). Other conspiracy theories emerged as well among the hashtags with the highest proportion of antisemitic usage, for instance #nwo (24.2%) and #deepstate (23.4%) (see Table 3). These results indicate that while some hashtags are indeed vectors for higher shares of antisemitic use (e.g. #soros, #votered, #zionists, #wakeupamerica, or #draintheswamp), lexicon-based restrictions are no solution for targeting antisemitic speech, as these percentages also show that many uses of such hashtags are not antisemitic.

Table 3. Top hashtags by antisemitic usage.

Note: Only hashtags with incidence counts > 100 were included in this table.
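RQ3 and RQ4 rank hashtags in two different ways that are easy to conflate: RQ3 by raw frequency within the antisemitic subset, RQ4 by the share of all of a hashtag's uses that were coded antisemitic, subject to a minimum incidence. The minimal Python sketch below illustrates both rankings under stated assumptions: each tweet is a hypothetical `(hashtags, is_antisemitic)` pair carrying a human-coded label, each hashtag is counted once per tweet, and `hashtag_stats` and the toy data are illustrative names, not the authors' code or data.

```python
from collections import Counter


def hashtag_stats(tweets, min_count=100):
    """Return (RQ3-style frequency ranking, RQ4-style share ranking).

    `tweets` is an iterable of (hashtags, is_antisemitic) pairs, where
    `hashtags` is a collection of lowercase hashtags and `is_antisemitic`
    is a human-coded boolean label (hypothetical input format).
    """
    total = Counter()        # uses of each hashtag across all tweets
    antisemitic = Counter()  # uses among tweets coded antisemitic
    for hashtags, label in tweets:
        for tag in set(hashtags):  # count each hashtag once per tweet
            total[tag] += 1
            if label:
                antisemitic[tag] += 1
    # RQ3-style: raw frequency within the antisemitic subset
    most_frequent = antisemitic.most_common()
    # RQ4-style: antisemitic share of each hashtag's uses, above the incidence threshold
    shares = {tag: antisemitic[tag] / n for tag, n in total.items() if n > min_count}
    by_share = sorted(shares.items(), key=lambda kv: kv[1], reverse=True)
    return most_frequent, by_share


# Hypothetical toy data: #a is used 10 times (3 antisemitic), #b twice (both antisemitic).
toy = [({"#a"}, True)] * 3 + [({"#a"}, False)] * 7 + [({"#b"}, True)] * 2
freq, share = hashtag_stats(toy, min_count=1)  # threshold lowered for the tiny sample
print(freq)   # [('#a', 3), ('#b', 2)]
print(share)  # [('#b', 1.0), ('#a', 0.3)]
```

On the toy input, #a ranks first by frequency among antisemitic tweets but last by share, which is how a hashtag can rank high on one list and low on the other.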

RQ5 asked about the dynamics that emerge when considering antisemitism among original tweets versus among a population that includes both tweets and retweets. To explore the amplification or diminution of antisemitic content, we draw on Table 1, in which we tracked term counts and their percentage of antisemitic usage. When retweets are factored in, the percentage of antisemitic content connected to certain terms diminishes: “soros” (from 82.2% to 69.7%), “rothschild” (from 80.8% to 72.3%), “jew” (from 24.3% to 13.7%), “israeli” (from 22.2% to 15.5%), “jewish” (from 21.3% to 10.9%), “holocaust” (from 8.3% to 1.3%), “illuminati” (from 8.3% to 2.6%), “shill” (from 6.5% to 3.2%), “anti-semitic” (from 6.2% to 2.1%), and “semitic” (from 5.5% to 2.9%). Diminution indicates that the text people added when quote-tweeting antisemitic content contextualized or countered, rather than reaffirmed, that content, and/or unpacked what is problematic and despicable in the language of the original tweet. We also observed amplification for the terms “zionist” (from 48.2% to 61.4%), “apartheid” (from 15.9% to 25.4%), “nwo” (from 8.4% to 13%), and “globalist” (from 9.3% to 22.7%). Amplification indicates that while some original uses of a term may not have been antisemitic, quote-tweets retweeting and contextualizing them piled on further antisemitic speech, or information that made the tweet and its quote-tweet framing, taken together, antisemitic.
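Read this way, amplification and diminution are the sign of the change in a term's antisemitic share between the two populations. The sketch below makes that comparison explicit for a subset of the percentages reported above; the `compare` helper and the choice to express the change in percentage points are illustrative assumptions rather than the authors' procedure.

```python
# Share of antisemitic usage per term (%), a subset of the values reported above:
# (original tweets only, tweets plus retweets).
TERM_SHARES = {
    "soros": (82.2, 69.7),
    "rothschild": (80.8, 72.3),
    "jewish": (21.3, 10.9),
    "holocaust": (8.3, 1.3),
    "zionist": (48.2, 61.4),
    "apartheid": (15.9, 25.4),
    "nwo": (8.4, 13.0),
    "globalist": (9.3, 22.7),
}


def compare(original_pct, combined_pct):
    """Label the shift in antisemitic share and return it in percentage points."""
    delta = round(combined_pct - original_pct, 1)
    if delta > 0:
        return "amplification", delta
    if delta < 0:
        return "diminution", delta
    return "unchanged", delta


for term, (original_pct, combined_pct) in TERM_SHARES.items():
    label, delta = compare(original_pct, combined_pct)
    print(f"{term:<11} {original_pct:5.1f}% -> {combined_pct:5.1f}%  {label} ({delta:+.1f} pp)")
```

A relative change (for instance, the combined share divided by the original share) would be an equally defensible summary; the sign, and hence the amplification/diminution label, is the same either way.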

Discussion

This study sought to accomplish two things: to provide a working, platform-agnostic definition of platformed antisemitism, and to document how platformed antisemitism manifests in an exemplary case study of political discourse on Twitter during the 2018 US midterm elections, with particular emphasis on platform-specific affordances such as hashtags and the dynamics surrounding tweets and retweets/quote-tweets. We draw on the dynamics of antisemitism in the United States, social media governance, and the notion of platformed racism (Matamoros-Fernández, 2017) to further the conceptual understanding of what we refer to as platformed antisemitism: antisemitic discourse, ridden with conspiracy theories, prejudice, stereotypes, Holocaust denial and more veiled forms of hatred aimed at Jews, that is amplified by and moves within and through platforms, on the fringe and in the mainstream.

Our in-depth qualitative, textual analysis of 99,062 tweets and retweets allowed us to substantiate this definition of platformed antisemitism, showing how age-old antisemitic conspiracy theories concerning Jewish “puppeteers” are rehashed to express hatred of Jewish people, and how Zionism serves as a productive vector for hatred. Furthermore, we found these themes to be enmeshed with relatively novel conspiracy theories surrounding QAnon, as well as with Trump/MAGA support. The Pew Research Center (2020) found that in early 2020, only about a quarter of people in the United States knew what QAnon was, a share that roughly doubled by September of the same year. Our analyses reveal that already in 2018, ahead of the midterm election, QAnon had very much arrived in antisemitic political speech online, as is manifest in hashtags such as #wwg1wga, #qanon, or #qarmy.

Although our study was not originally intended to examine QAnon, it found evidence of QAnon discourse enmeshed with antisemitic speech on Twitter as early as 2018. Scholars describe QAnon as “a meta narrative that knits together contemporary politics and hoary racist tropes with centuries of history behind them” (Zuckerman, 2019: 3). It purports that a cabal of elites is attempting to undermine US democracy, aided by people such as George Soros or the Rothschild family. Zuckerman (2019) argues that this is “more anti-elite than explicitly anti-Semitic” (p. 4). We argue that, much as the triple parentheses became a floating signifier taken from antisemitic discourse and brought into the mainstream (Tuters and Hagen, 2020), conspiracy theories such as QAnon contribute to a normalization of platformed antisemitism in US society. When considering platform-specific affordances operating as vectors for platformed antisemitism on Twitter, we found that certain hashtags relating to President Trump and his agenda, such as #maga, #trump, or #americafirst, surfaced as frequently used among tweets that were labeled antisemitic. This is in line with research showing how #maga has served as a tool for online community building among white nationalists (Eddington, 2018).

Another important contribution of this study is that, through our use of penalized regression (Hindman, 2015), we identify a set of predictors that increase a tweet’s likelihood of being antisemitic (e.g. “soros,” “rothschild,” “goy,” “jewess,” “zionist”), as well as predictors that decrease such likelihood (e.g. “nazi,” “hitler,” “alt-right”). At face value, the predictors for a decrease are not intuitive. It may be that antisemites are unlikely to describe themselves with terms such as “nazi,” “hitler,” or “alt-right,” and that these terms are instead more likely used by others to refer to antisemites or antisemitic discourse. The use of humor by antisemites long predates the web (e.g. Sartre, 1948), and humor is an important category among propagators of hateful content (Farkas et al., 2018; Milner, 2013), with 4chan jokes serving as a launchpad for white supremacists (Tuters and Hagen, 2020). The appropriation of humor to counter antisemites, and to make fun of them by employing these very terms, is a noteworthy finding of our research.
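To illustrate how penalized regression can yield predictors in both directions, the following is a minimal Python sketch of L1-penalized (Lasso) logistic regression fit by proximal gradient descent. It is not the authors' model: the binary term-indicator features, the penalty `lam`, the step size `lr`, the vocabulary, and the toy labels are all hypothetical, and this section does not state whether a linear or logistic Lasso was used. The L1 penalty shrinks coefficients and sets weakly informative ones exactly to zero, so the sign of each surviving weight marks a term as raising or lowering the predicted likelihood of a tweet being antisemitic.

```python
import math


def fit_l1_logistic(X, y, lam=0.01, lr=0.5, n_iter=2000):
    """Fit an L1-penalized logistic regression by proximal gradient descent.

    X: list of feature vectors (here 0/1 term indicators), y: list of 0/1 labels.
    Minimizes mean log-loss + lam * sum(|w_j|); the intercept is not penalized.
    Returns (weights, intercept).
    """
    n, p = len(X), len(X[0])
    w, b = [0.0] * p, 0.0
    for _ in range(n_iter):
        grad_w, grad_b = [0.0] * p, 0.0
        for xi, yi in zip(X, y):
            z = b + sum(wj * xj for wj, xj in zip(w, xi))
            err = 1.0 / (1.0 + math.exp(-z)) - yi  # predicted probability minus label
            grad_b += err / n
            for j in range(p):
                grad_w[j] += err * xi[j] / n
        b -= lr * grad_b  # plain gradient step for the unpenalized intercept
        for j in range(p):
            v = w[j] - lr * grad_w[j]  # gradient step on the log-loss
            # soft-thresholding: the proximal operator of the L1 penalty
            w[j] = math.copysign(max(abs(v) - lr * lam, 0.0), v)
    return w, b


# Hypothetical toy data: columns are indicators for the terms in `vocab`.
vocab = ["soros", "nazi", "election"]
X = [[1, 0, 1], [1, 0, 0], [0, 1, 1], [0, 1, 0], [0, 0, 1], [0, 0, 1]]
y = [1, 1, 0, 0, 0, 1]

w, b = fit_l1_logistic(X, y)
for term, coef in sorted(zip(vocab, w), key=lambda t: t[1], reverse=True):
    print(f"{term:<10} {coef:+.3f}")
```

On this toy input, “soros” ends up with a positive weight and “nazi” with a negative one. For real data, an established implementation (for example, scikit-learn’s `LogisticRegression(penalty="l1", solver="liblinear")`) with the penalty strength chosen by cross-validation would be the more practical choice.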

This research sought to shed light on Twitter affordances and their roles in platformed antisemitism. We compared a population of original tweets with one of tweets plus retweets and found that the share of antisemitic content increased from 17.3% to 25% when retweets were added. We also found differences between individual terms: some showed a diminution in antisemitic usage once retweets (including quote-tweets that add context) were taken into account (e.g. “soros,” “jewish,” or “holocaust”), while others showed amplification (e.g. “zionist,” “apartheid,” or “globalist”). This indicates that some tweets that were not antisemitic were ultimately made so by people adding antisemitic references or context in their quote-tweets, while some tweets that were antisemitic were couched in language contesting them, so that the combination of tweet and quote was not antisemitic. This finding is particularly relevant as it aligns with emerging research on quote-tweets and the contextualization of speech through sharing. In their study on networked frame contests around #BlackLivesMatter discourse, Stewart et al. (2017) showed that quote-tweets “directly challenge the other side’s narrative or frame” (p. 15). Our study also mirrors research documenting that people add negative comments in quote-tweets when sharing out-group tweets (Wojcieszak et al., 2021). Building on Shahin and Ng’s (2020) understanding of retweets as a means of “identity construction” (p. 2425) among alt-right networks, we suggest that some terms might provide more fertile ground for platformed antisemitism than others, and indeed serve to construct identity and reinforce antisemitic speech. This dynamic, however, can be counteracted when content on Twitter gets quote-tweeted and annotated with comments that contradict the original tweets.

Limitations and future research

Like all studies, this one has limitations. We delimited a large population of tweets and retweets (including quote-tweets) at a specific point in time, guided by the pragmatics of Internet research and a selection of keywords and hashtags rooted in the literature. Therefore, our study only provides a snapshot of platformed antisemitism on one particular platform. Methodologically, the definition of antisemitism applied in our coding of tweets rests on the assumption that a mere reference to a Jewish person or to Jewish people is not in itself antisemitic; we considered such references antisemitic only when they appeared in conjunction with the invocation of tropes, conspiracies, or generalizations. Other definitional approaches may draw this line differently.

Our results help shed light on platformed antisemitism and point to avenues for future research, notably its intersections with new conspiracy theories such as QAnon, as well as with Trumpism. We are cognizant that developing tools to weed out antisemitic speech is a highly contested issue (e.g. York and Greene, 2021). Although we provide a speech classifier in this study, we want to emphasize that our findings also underscore that lexicon-based approaches are insufficient for weeding out antisemitic speech and risk over-including content that should not be banned. This points to the need for context- and language-sensitive human moderation efforts by platforms. Important conversations must be had about how the focus on certain terms contributes to the silencing of legitimate political criticism, for example, pro-Palestinian speech critical of Israel (Biddle, 2021). For instance, while we substantiate here that the term “zionist” is an important predictor of speech being antisemitic, our study also showed that in many cases, it is not used in an antisemitic manner. Technological approaches to weeding out antisemitic hate speech must therefore emphasize nuance and context.

This study provided a snapshot of antisemitic discourse on one platform and of how it metastasizes there. It is our hope that our conceptual definition of platformed antisemitism and our methodological contributions aid the understanding of the mechanics of hatred and further motivate the prevention of its propagation.

Supplemental Material

sj-pdf-1-nms-10.1177_14614448221082122 – Supplemental material for Platformed antisemitism on Twitter: Anti-Jewish rhetoric in political discourse surrounding the 2018 US midterm election

Supplemental material, sj-pdf-1-nms-10.1177_14614448221082122 for Platformed antisemitism on Twitter: Anti-Jewish rhetoric in political discourse surrounding the 2018 US midterm election by Martin J. Riedl, Katie Joseff, Stu Soorholtz and Samuel Woolley in New Media & Society

Footnotes

Acknowledgements

The authors thank Katlyn Glover for her help in coding tweets for this project.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Samuel Woolley was supported as a Belfer Fellow at the Anti-Defamation League (ADL) during the course of this research; the findings do not represent the opinions of ADL. This study is a project of the Center for Media Engagement (CME) at Moody College of Communication at the University of Texas at Austin, where research is supported by the Open Society Foundations, Omidyar Network, The Miami Foundation, as well as the John S. and James L. Knight Foundation.

Notes

Author biographies

References
