Abstract

This article introduces the notion of platformed conspiracism to conceptualize reconfigured forms of conspiracy theory communication as a result of the mutual shaping between platform specificities and emergent user practices. To investigate this relational socio-technological shaping, we propose a conceptual platform-sensitive framework that systematically guides the study of platformed conspiracism. To illustrate the application of the framework, we examine how platformed conspiracism unfolds on BitChute and Gab during the COVID-19 pandemic. Our findings show that both platforms have positioned themselves as technological equivalents to their “mainstream” counterparts, YouTube and Twitter, by offering similar interfaces and features. However, given their specific services, community-based and politically marketed business models, and minimalist approaches to content moderation, both platforms provide conspiracy propagators a fertile refuge through which they can diversify their presence and profit monetarily from their supply of conspiracy theories and active connection with their followers.

Introduction

Historically, conspiracy theories circulated mostly in small and local “socio-cultural niches” (Asprem and Dyrendal, 2018: 227). Their pervasive flourishing in contemporary media and platform environments, however, demonstrates that this has changed. Conspiracy theories have flowed from the hidden margins of society to the forefront of public and scholarly debates. The logic of digital platforms (Van Dijck and Poell, 2013) and the connectivity of the platform ecosystem (Van Dijck et al., 2018) arguably brought about detrimental changes to the culture of conspiracy theories, including their distribution, visibility, connectivity, and language. In this study, we introduce the notion of platformed conspiracism to conceptualize reconfigured forms of conspiracy theory communication, which we understand as the product of both the specificities of platforms and the emergent practices of users.

Platformed conspiracism is multifaceted, dynamic, and can take a variety of shapes. However, despite the wealth of studies that have interrogated the impact of platforms on the communication of conspiracy theories (for an overview, see Mahl et al., 2022), there has been little systematic investigation of how conspiracy theories evolve on different platforms and why certain spaces have established themselves as fertile refuges for extreme or marginalized views. Most research has analyzed single platforms or aggregated several platforms under the umbrella of “social media,” neglecting potentially crucial differences between platforms (notable exceptions are, for instance, Forberg, 2022; Theocharis et al., 2021; Zeng and Schäfer, 2021). Given the distinct specificities of platforms, this perspective does not seem sufficient to study conspiracy theories in contemporary platform environments.

We aim to (partly) close this gap by providing a twofold contribution. Conceptually, we propose an analytical framework that assumes a mutual shaping between platform specificities and user practices. Empirically, we apply this framework in a cross-platform analysis and assess how platformed conspiracism unfolds on two under-researched “alternative” platforms 1 —BitChute (the “censorship-free” alternative to YouTube) and Gab (the right-wing equivalent to Twitter)—during the COVID-19 pandemic. Both platforms have been shown to be fertile breeding grounds for conspiracy theories (Trujillo et al., 2020; Zannettou et al., 2018).

Platforms, conspiracy theories, and platformed conspiracism

Digital platforms, which we understand as programmable architectures designed to “host, organize, and circulate users’ shared content or social exchanges” (Gillespie, 2018: 254), have drastically penetrated and transformed how we interact with each other, how information is produced and distributed, and how decision-making is organized. However, platforms are by no means neutral conduits of online sociality (Van Dijck et al., 2018). Rather, they are shaped by techno-cultural, economic, political, and social dimensions from which they should not be separated analytically.

The transformation of digital platforms into the “dominant infrastructural and economic model” (Helmond, 2015: 1) of today’s social web is commonly referred to as platformization. Importantly, the notion of platformization recognizes the ways in which entire social sectors and spheres of life are transforming as a result of the mutual shaping that occurs between platforms and their users (Poell et al., 2019). This transformation has been studied with respect to cultural production (Nieborg and Poell, 2018), mobile media and apps (Kaye et al., 2021), and the news industry (Poell et al., 2022). In addition, platformization has been used as a theoretical lens to investigate how the technological affordances and governing logics of social media contribute to the proliferation of toxic discourses online. For instance, Matamoros-Fernández (2017) coined the term platformed racism to describe a new form of racism amplified by the culture and politics of social media. Inspired by this concept, Riedl et al. (2022) investigated how Twitter’s affordances contribute to what the authors describe as platformed antisemitism.

We argue that the logic of platforms has also fundamentally modified the ways in which conspiracy theories are communicated. First, conspiracy theories, along with other forms of deceptive content, are more visible than before, circulate faster, and generate more user engagement than verified or scientific information (Bessi et al., 2015; Vosoughi et al., 2018). Second, due to their connective architecture, platforms enable conspiracy propagators to build and sustain virtual networks of like-minded individuals (Mahl et al., 2021), which can ultimately evolve into transnational movements (Forberg, 2022). Third, vernacular practices of online milieus give rise to new ways of communicating and encrypting conspiratorial messages, such as through memes and slang expressions (Tuters and Hagen, 2020).

To capture the new dynamics and complexity of conspiracy theories online, we introduce the notion of platformed conspiracism. Building upon platformization theory (Helmond, 2015; Nieborg and Poell, 2018; Van Dijck et al., 2018), we define platformed conspiracism as reconfigured forms of conspiracy theory communication that are contingent on platforms’ technological, economic, and regulatory specificities and receptive to emergent user practices. Accordingly, platformed conspiracism can vary spatially and temporally depending on platform and user cultures. Similar to the concepts of platformed racism (Matamoros-Fernández, 2017) and platformed antisemitism (Riedl et al., 2022), platformed conspiracism addresses the appropriation of platform specificities by social groups to promote harmful discourses, and by implication, the accountability of technology companies for facilitating such practices. The term conspiracism acknowledges that, first, narrative elements of conspiracy theories are disseminated rather than fully elaborated theories (Rosenblum and Muirhead, 2019), and second, individuals who participate in the culture of conspiracy theories do not necessarily believe wholeheartedly in such narratives, but may also be driven by attention-seeking, monetary, or entertainment motives (Dyrendal, 2016).

Conceptual framework to study platformed conspiracism

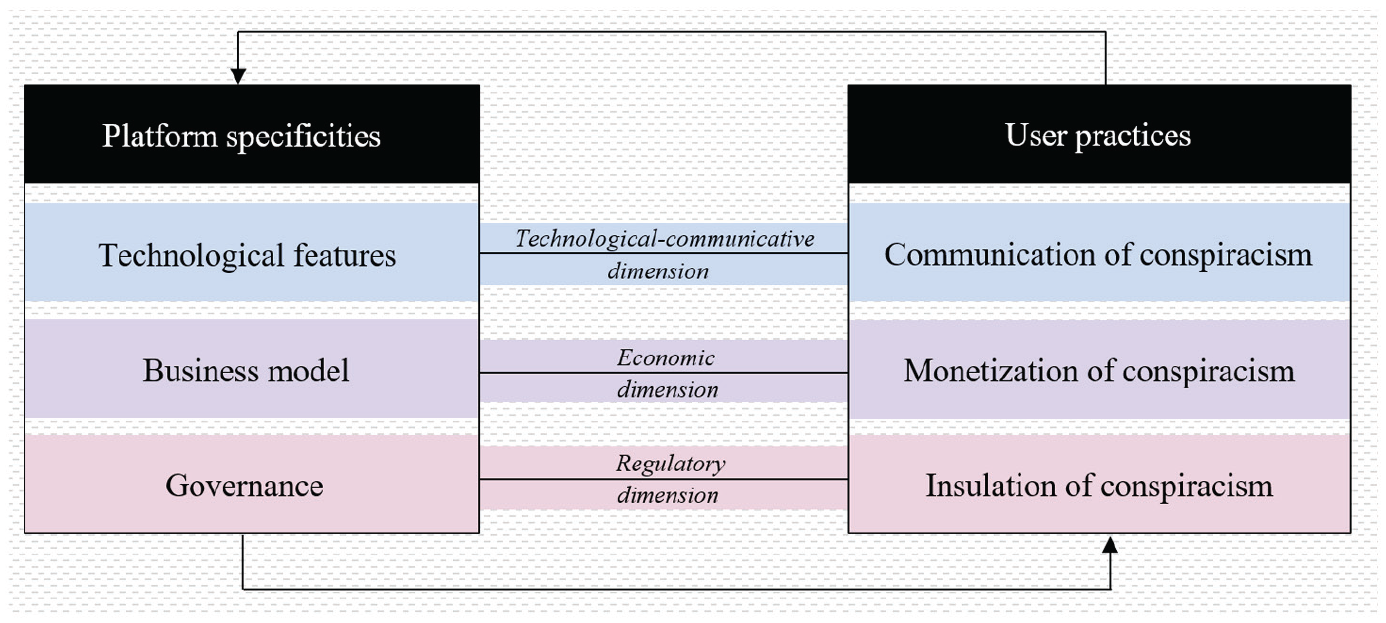

To empirically investigate platformed conspiracism, we propose a conceptual framework that encompasses two main building blocks—platform specificities and user practices—and their underlying analytical dimensions (see Figure 1). Importantly, the framework rests on the idea that platforms and users are interrelated and mutually condition each other, forming a relational socio-technological fabric. This linkage encompasses technological-communicative, economic, and regulatory dimensions. The technological-communicative dimension comprises the platform’s technological features, which in turn afford certain communicative practices for users. At the same time, the way users adopt these features can stimulate the (re)configuration of (new) features. With regard to the economic dimension, the platform’s business model and users’ monetization opportunities can be contrasted. While platforms can enable or constrain monetization strategies of users, they can simultaneously benefit from so-called “conspiracy entrepreneurs” (Sunstein and Vermeule, 2009: 212) who can expand the active user base. Finally, the regulatory dimension contrasts the governance by platforms and the ways in which conspiracy propagators employ strategies to circumvent these regulatory efforts. This, in turn, may lead to a readjustment of governance strategies.

Figure 1. Conceptual framework for studying platformed conspiracism.

This framework can advance critical platform studies and conspiracy theory research alike. First, it brings into focus the interplay between platforms and users, which has led to reconfigured forms of conspiracy theory communication (platformed conspiracism), and systematically guides its analysis. Simultaneously examining platform specificities and emergent conspiracism-related user practices allows for a more nuanced and coherent picture of how (diverse) conspiracy theories are communicated in platform environments. Second, since the framework is “platform-sensitive” (Bucher and Helmond, 2018: 234) but not platform-specific, it can provide a useful resource for cross-platform investigations of various public discourses, for example, around extremist or right-wing communities, vulnerable or marginalized populations, and creator cultures in general.

Platform specificities

The specificities of platforms afford, shape, or constrain certain modes of use and types of cultures. 2 Drawing on critical platform studies (Gillespie, 2010; Nieborg and Poell, 2018), we distinguish between three analytical dimensions: platforms’ technological features, business model, and governance.

Technological features

The dimension of technological features considers how platforms are purposefully designed to orchestrate how users can interact with each other both on and across platforms (Nieborg and Poell, 2018). This ranges from the format of content that is preferred (e.g. textual or [audio-]visual content) to specific buttons (e.g. like or share) that afford engagement of users and dissemination of content. In addition, services that offer the integration of multiple platforms within one environment facilitate the distribution of content across platforms simultaneously, which can lead to higher visibility and faster proliferation. From the perspective of platformed conspiracism, the latter feature is vital as it allows conspiracy propagators to expand their community and reach (Innes and Innes, 2021). Depending on the constellation of such features and services, content creators can utilize platforms in very different ways and for specific purposes and modes of communication. For instance, while micro-blogging platforms such as Gab are mainly used as information-sharing tools (Zeng and Schäfer, 2021), video-sharing platforms such as BitChute primarily host content that users on other platforms refer to through outlinks (Trujillo et al., 2020).

Business model

The business model of platforms takes into account how platforms commodify user activities, social relations, and various forms of transactions (Nieborg and Poell, 2018). Platforms’ business models, however, can vary widely. While “mainstream” platforms such as Twitter or YouTube often rely on targeted advertising, the revenue model of “alternative” platforms such as BitChute and Gab is commonly based on memberships or donations (Ballard et al., 2022; Trujillo et al., 2020). From the perspective of platformed conspiracism, understanding these business models is crucial to evaluating how a sense of community is created, as donations to or investments in the platform can indicate a strong engagement and commitment. Consequently, the platform’s choice of a particular revenue model is often justified by strategic decisions and marketed in a politicized way (Jasser et al., 2021).

Governance

Governance refers to strategies and interventions employed by platforms to moderate and internally police user interactions and distributed content (Nieborg and Poell, 2018). Three aspects of platform governance need to be distinguished (Buckley and Schafer, 2022). First, platforms codify prohibited content or behavior such as pornography or hate speech in their terms of use (ToU), terms of service (ToS), or community guidelines (Nieborg and Poell, 2018). Second, platforms have various options to enforce their rules or respond to violations of them. These options include appending warnings to content, reducing the visibility of content, imposing demonetization strategies, or banning users from the platform (Gillespie, 2022). Third, platforms apply different mechanisms to detect content that might violate their policies, including the use of algorithms, human moderators, users, or a combination of the three (Nieborg and Poell, 2018). In light of platformed conspiracism, it is important to note that although all platforms moderate content and monitor user behavior, their policies vary greatly due to strategic and economic interests. For instance, while “mainstream” platforms have increasingly stepped up their content moderation efforts, as problematic content not only discourages users but also deters advertisers (Gillespie, 2018), “alternative” platforms deliberately advertise themselves as defenders of “free speech.”

User practices

User practices emerge from, and are significantly shaped by, the ways in which users appropriate and leverage the specificities of platforms. By drawing on the concepts of platform vernaculars (Gibbs et al., 2015), micro-celebrity (Senft, 2013), self-branding (Khamis et al., 2017), and re-platforming (Innes and Innes, 2021), we distinguish three conspiracism-related user practices: users’ communication, monetization, and insulation of conspiracism.

Communication of conspiracism

The communication of conspiracism encompasses (platform-)specific communicative practices of users to propagate conspiracy theories. This dimension can be understood through the lens of platform vernaculars (Gibbs et al., 2015), that is, “shared (but not static) conventions and grammars of communication, which emerge from the ongoing interactions between platforms and users” (p. 257). Although each platform affords a unique form of communication, it is important to note that “the vocabulary and grammars of vernaculars migrate between social media platforms” (Gibbs et al., 2015: 257). Depending on the specificities of platforms and how user communities embrace them, the communication of conspiracism can take many shapes. For instance, Tuters et al. (2018) found that the technological design of 4chan—an anonymous imageboard known as a hotbed for conspiracy theories—“grammatises” new forms of collective action. De Zeeuw et al. (2020) examined the cross-platform diffusion of the QAnon conspiracy theory, showing that while 4chan and 8kun functioned as “incubators,” YouTube and Reddit acted as “bridge platforms.” With regard to the QAnon movement, Zuckerman (2019: 5) emphasized that it “fully embraced the participatory nature” of platforms.

Monetization of conspiracism

The monetization of conspiracism focuses on the ways through which conspiracy propagators convert their content, visibility, and influence into revenue. As documented in prior research on creator culture (Duffy, 2016; Glatt, 2022; Johnson and Woodcock, 2019), monetization strategies commonly used on social media include advertising, gifting, sponsorships, and subscriptions. With the rise of “conspiracy entrepreneurs” on digital platforms, it has become increasingly relevant to study conspiracy propagators through the lens of content creators, shedding light on their platform-specific financial strategies. In this sense, the monetization of conspiracism strongly relates to the concepts of micro-celebrity (Senft, 2013) and self-branding (Khamis et al., 2017). Both conceptualize how users embrace self-presentation strategies to strategically gain status and develop an “online identity as if it were a branded good” (Senft, 2013: 346). Alongside self-branding, a multi-platform monetization model is key to sustainable revenue as it allows content creators to diversify their income streams (Glatt, 2022). However, from the perspective of content creators, today’s platform economy is replete with uncertainties (Duffy et al., 2021; Glatt, 2022), which are also particularly relevant to conspiracy propagators. In recent years, “mainstream” platforms have increasingly employed interventions to demonetize extremist and conspiratorial content creators, such as terminating revenue-sharing partnerships, which has largely boosted the popularity of “alternative” platforms and their monetizing programs (Trujillo et al., 2020).

Insulation of conspiracism

The insulation of conspiracism refers to circumventive strategies deployed to protect conspiracy theory content and accounts from content moderation and platform governance. Similar to Innes and Innes’ (2021) discussion of re-platforming strategies, attempts to insulate conspiracism on platforms can be understood as a form of countermeasure to deplatformization, allowing conspiracy propagators “to persist in their activities ‘on-platform’, albeit in a moderated form, while simultaneously driving a diversified ‘cross-platform’ presence” (p. 3). Like other types of content creators, conspiracy propagators need to constantly modify their practices to remain visible. As Duffy et al. (2021) point out, creators’ practice and experience are shaped by “the promises and precarity of visibility” (p. 2). For many conspiracy propagators, “mainstream” platforms remain an important venue because of their promising potential for reaching a mass audience despite their increasingly strict content moderation. At the same time, to mitigate precarity, extending or migrating their presence to various “alternative” platforms has become an important part of their survival strategies. However, content creators’ cross-platform negotiation of visibility should not be simply understood as attempts to maximize their exposure. Remaining “below the radar” is not only a key logic in influencer (sub)cultures (Abidin, 2021), but also an important insulation strategy used by conspiracy propagators to circumvent content moderation (Zeng and Schäfer, 2021). Spreading content below the radar allows creators to achieve what Abidin (2021) describes as “silosociality,” that is, when the “intended visibility of content is intensely communal and localized” (p. 4). In the context of platformed conspiracism, silosociality means cultivating localized visibility in conspiracy theory communities while avoiding attracting the attention of content moderators. This can be achieved through using “alternative” platforms, niche hashtags, and (semi)closed communication channels.

Empirical case study

Platform selection

To illustrate the application of the framework through an empirical case study, we chose to focus on “alternative” platforms. In the aftermath of events such as the Unite the Right Rally in Charlottesville in 2017 or the storming of the U.S. Capitol in Washington in 2021, pressure on platforms from the public, civil rights groups, and regulators has grown to curtail the ways in which extremist figures and communities use them to organize and radicalize (Van Dijck et al., 2021). Consequently, “mainstream” platforms have cracked down on controversial content creators for violating platform policies. These creators have subsequently flocked to new virtual refuges, ranging from encrypted instant messaging services like Telegram and livestreaming services like DLive to “alternative” platforms like 4chan, 8kun, BitChute, Gab, Parler, or Voat. As these spaces advertise themselves as bastions of “free speech,” they have gained immense popularity among extremist and radical communities (Jasser et al., 2021) and have become a breeding ground for conspiratorial content (Zeng and Schäfer, 2021).

Despite their significance for the propagation of conspiracy theories and the emergence of related user communities, “alternative” platforms are still largely under-researched (Mahl et al., 2022). 3 We aim to remedy that by studying platformed conspiracism on two marginalized radical platforms that differ critically in terms of the format of communication they facilitate, potentially fostering different forms of platformed conspiracism: the video-sharing platform BitChute and the micro-blogging platform Gab. BitChute, launched in 2017 by Ray Vahey in the United Kingdom, pledges to “put creators first and provide them with a service that they can use to flourish and express their ideas freely” (BitChute, 2022a). Under the flag of “free speech,” BitChute is especially attractive to extremist content creators who were deplatformed from its “mainstream” counterpart YouTube (Trujillo et al., 2020). Founded in 2016 by Andrew Torba in the United States, Gab touts “free speech, individual liberty and the free flow of information online” (Gab, 2022b). As such, Gab established itself as an alternative to Twitter and a refuge for suspended voices (Zannettou et al., 2018). Both platforms target similar user communities, which feature hateful, radical, antisemitic, right-wing, and conspiratorial actors and content (Trujillo et al., 2020; Zannettou et al., 2018).

In applying our conceptual framework to study platformed conspiracism on BitChute and Gab, we focus on a specific use case for better comparability: COVID-19-related conspiracy theories. Based on the two main building blocks of our framework—the specificities of platforms and the practices of users—the following research questions (RQ) guide our analysis:

Data and method

To answer RQ1, we investigated each platform’s specificities, that is, its technological features, business model, and governance. We conducted an in-depth examination of BitChute’s and Gab’s functionality, interface, and services alongside a documentation analysis of media and annual business reports, the platform’s own news, its terms of service, and community guidelines.

To interrogate RQ2, we examined the curated practices of users, that is, the communication, monetization, and insulation of conspiracism, based on data originating from an earlier project by the authors (Zeng and Schäfer, 2021) that investigated COVID-19-related conspiracy theories on Gab and 8kun during the early phase of the pandemic (January until July 2020). To compile our corpus for BitChute, we first identified channels based on BitChute videos 8kun users linked to in their posts. Identifying and merging BitChute channels associated with these videos ultimately resulted in 18 unique English-language conspiratorial channels that were still accessible at the time of our study. In a second step, we manually identified all COVID-19-related videos shared by these channels (n = 1,213), ranked them by the number of views, and selected the 20 most viewed videos for each channel. 4 This resulted in 282 COVID-19-related conspiratorial videos that, together with the channel and video descriptions, were examined in depth. That is, we applied an inductive qualitative content analysis (Mayring, 2015), including an iterative process of interpreting, paraphrasing, and aggregating the material under investigation. For Gab, we used the same original dataset (Zeng and Schäfer, 2021), ranked accounts by their number of followers, and selected only public and still-existing accounts that contained more than 10 COVID-19-related posts. For better comparison, we selected the same number of unique conspiratorial accounts as we did for BitChute, which published a total of 1,427 COVID-19-related posts, all of which were coded.
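The sampling steps described above can be illustrated with a short sketch. It is not the authors' code: the record types `Video` and `Account`, their field names, and both function names are hypothetical, and the sketch only assumes that views, follower counts, and post counts are available as plain numbers.

```python
from collections import defaultdict
from typing import NamedTuple


class Video(NamedTuple):
    # Hypothetical record for one COVID-19-related BitChute video.
    channel: str
    title: str
    views: int


class Account(NamedTuple):
    # Hypothetical record for one Gab account.
    name: str
    followers: int
    covid_posts: int
    public_and_existing: bool


def top_videos_per_channel(videos, k=20):
    """Return, for each channel, up to k videos ranked by views (descending)."""
    by_channel = defaultdict(list)
    for video in videos:
        by_channel[video.channel].append(video)
    return {
        channel: sorted(items, key=lambda v: v.views, reverse=True)[:k]
        for channel, items in by_channel.items()
    }


def select_accounts(accounts, n=18, min_posts=10):
    """Keep public, still-existing accounts with more than min_posts
    COVID-19-related posts, then take the n accounts with most followers."""
    eligible = [
        a for a in accounts
        if a.public_and_existing and a.covid_posts > min_posts
    ]
    return sorted(eligible, key=lambda a: a.followers, reverse=True)[:n]
```

In this sketch, a channel with fewer than `k` qualifying videos simply contributes all of them, which is one way a sample can end up smaller than the upper bound of 18 × 20 = 360 videos.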

Findings and discussion

We will first discuss the specificities of BitChute and Gab by comparing their technological features, business model, and governance. Then we will turn to the respective practices of users by investigating users’ communication, monetization, and insulation of conspiracism.

Specificities of BitChute and Gab (RQ1)

Technological features

Aesthetically, BitChute is strikingly similar to its “mainstream” counterpart, YouTube: it is organized around channels and horizontally presents video-based content. Besides commonly known user-level information such as the name of the channel owner and a short description of the channel’s purpose, the number of subscribers and links to personal websites or other social media platforms are prominently displayed. Content creators’ success in reaching a large audience as well as their support of the platform in the form of paid memberships is visualized by corresponding badges in users’ profiles. The latter can be especially indicative of a strong commitment to the platform and its user communities and reinforces a sense of belonging (Jasser et al., 2021: 13). Forms of user interaction are similar to those offered on YouTube: users can watch, like, dislike, or post editable comments on videos. Content creators can also add hashtags or descriptions to their videos and select topics (e.g. news and politics) and sensitivity settings (e.g. normal: content suitable for ages 16 and up) to categorize videos. In addition, depending on the type of membership, users can create several channels and synchronize their YouTube channel, which automatically back-loads all previously and newly uploaded YouTube videos to BitChute. Against the backdrop of YouTube’s recent deplatforming strategies, such cross-platform infrastructural services are of great importance for extremist and conspiratorial content creators (Klinenberg, 2022).

Like BitChute, Gab shares many features with its “mainstream” counterpart. Gab users (known as “Gabbers”) maintain a profile page with a visual layout almost identical to Twitter. It includes the user’s name, a profile and background picture, a profile summary, and information about the number of posts (called “Gabs”), followers, and followees. Gabbers can create editable posts that are limited to 3,000 characters (and thus resemble Facebook posts more than tweets) and include hashtags, images, links, polls, or safety tags such as a “not safe for work” (NSFW) indication. In addition, users have access to a common set of interaction forms such as liking, reposting, or quoting content. Besides the aesthetic imitation of Twitter, Gab has also adopted signature features from Reddit, such as the symbol “g/” to mark groups, which directly refers to Reddit’s subreddit symbol “r/.” Despite its pronounced similarities to Twitter, Gab provides features that make the platform especially appealing to conspiracy propagators. Gab Pro, for example, is a paid upgrade that allows users to join Gab’s “Parallel Economy,” which includes benefits such as an automatic Gab certification. This certification, which is aesthetically identical to the Twitter verification icon, is particularly enticing given that “mainstream” platforms have routinely denied extremist content creators such technological symbols of “cultural capital” (Kor-Sins, 2021: 14). In addition, Gab has continuously expanded its infrastructure. Among other services, it offers users an end-to-end encrypted messaging chat, a peer-to-peer payment service, a video conferencing and live-streaming service, and a web browser extension that is designed to bypass content moderation by enabling users to comment on any website via an overlay that is only visible to other users of this specific browser. By building and integrating various copycat versions of online services, Gab creates a place that unites the most essential forms of interaction and transaction. Moreover, the “infrastructuralization” of Gab, that is, the platform’s transformational process of turning into an infrastructure for users (Van Dijck, 2021), strengthens its position within the wider platform ecosystem.

Business model

While the business models of BitChute and Gab are very similar, they differ significantly from those of “mainstream” platforms. On BitChute, content creators can support the platform by purchasing a membership, which comes with several benefits such as access to additional rights or technological features, or by donating cryptocurrencies. Like BitChute, Gab’s revenue model is community-based and fully supported by its users through premium subscriptions, donations, merchandise sales, and affiliate partnerships. Thus, both platforms explicitly distance themselves from the business model of “mainstream” platforms based on targeted advertising, data mining, and dataveillance. However, it should be noted that both platforms might rely on advertising in the future if they became more attractive to large companies. In fact, Gab is already expanding into this market with its ad service. Far more than BitChute, Gab markets its revenue model in explicitly political terms. By calling for building one’s “own parallel economy” in the fight against the “corrupt system” around banks, tech companies, media companies, schools, or the government (Torba, 2021), Gab maintains the image of a common enemy, which in turn can further strengthen community cohesion and the construction of an ideological identity (Jasser et al., 2021).

Governance

In line with BitChute’s mission to offer a service that is intended to “foster an inclusive environment where users can express themselves, their thoughts and opinions for open discussion, without discrimination” (BitChute, 2022b: 2), the platform’s governance follows certain principles such as the freedom of expression, individual responsibility, or empowerment of creators (BitChute, 2022b). These principles are reflected in BitChute’s community guidelines, which specify prohibited content and forms of platform misuse (BitChute, 2022a). Accordingly, BitChute claims it does not allow, for instance, animal cruelty, harassment, harmful activities, or violent extremism. Violations of these guidelines must be reported by users, and it is the responsibility of content creators to ensure that they adhere to the platform’s community guidelines. With respect to moderation practices, BitChute claims that it implements several steps, including setting appropriate sensitivity labels, blocking material, or suspending channels (BitChute, 2022b).

Gab’s politically marketed strategy is to claim that its governance practices follow the First Amendment to the U.S. Constitution. Accordingly, the platform ensures that its content moderation “does not punish users for exercising their God-given right to speak freely” (Gab, 2022a). In line with this, prohibited content that is specified in the platform’s terms of service includes, for example, violations of any applicable law of the United States, harming minors, or engaging in actions that may result in physical harm or offline harassment. In addition, Gab claims to set content standards in which users’ posts may not, for instance, be unlawful, obscene, or pornographic. Although Gab rhetorically invokes the absolutism of “free speech,” the platform’s allegedly prohibited content and content standards are not consistent with it—even though actual governance practices seem to paint a different picture. The platform’s policy on hate speech is to allow “hate speech, as defined in New York law, if it is lawful” (Gab, 2022a). This means Gab does not trace hate speech or provide any mechanism to report it, nor does the platform respond to related reports. Like BitChute, Gab hands over the responsibility for reporting unlawful content to users, allows group admins to set and moderate their own guidelines, and only takes actions it deems “appropriate” (Gab, 2022a).

Summary of BitChute’s and Gab’s specificities

BitChute and Gab have positioned themselves as technological equivalents to their respective “mainstream” counterparts, YouTube and Twitter, by offering similar interfaces and functionality, with Gab also adopting signature features from Facebook and Reddit. However, both also offer services that are particularly attractive to conspiracy propagators. Moreover, Gab has continuously expanded and “infrastructuralized” its services. The community-based, politically marketed business model of BitChute and Gab clearly underscores their strategic positioning within the broader platform ecosystem. In addition, both differ from their “mainstream” counterparts in that they present themselves as defenders of a twisted version of “free speech” and fighters against “big tech censorship” and “cancel culture” (Gab, 2022b; Torba, 2022). At the same time, both platforms claim to prohibit certain content such as harassment or harmful activities, contradicting their “free speech” absolutism, while studies have shown that hate speech is extensively present on BitChute (Trujillo et al., 2020) and Gab (Zannettou et al., 2018). This minimalist approach to content moderation has gained the platforms the favor of conspiracy propagators.

User practices on BitChute and Gab (RQ2)

Communication of conspiracism

Besides well-known conspiracy celebrities like David Icke, “The World’s Best Known Conspiracy Researcher” (according to his BitChute channel), and Alex Jones, “The MOST BANNED person on the internet” (according to his Gab profile), “professional conspiracy theorists,” “free speech fundamentalists,” “investigative reporters,” and “truthers” participated in conspiracy theorizing about the COVID-19 pandemic on BitChute and Gab. Seeking to “bring the truth to light,” “help others wake up,” and “connect the dots,” content creators disseminated numerous conspiracy theories about the origin of COVID-19, its causes, consequences, and containment measures.

Our investigation of how users communicated conspiracism on BitChute and Gab revealed five distinct practices: truth construction, identity building, calls for action, crowdsourcing, and entertainment. These practices, however, differed between the two platforms. On BitChute, users most often engaged in truth construction by claiming to uncover the “truth” about the “PLANdemic/SCAMdemic” that existed only in the “mainstream media,” harnessing “hidden knowledge,” sharing “huge discoveries,” and challenging “official” explanations about the origin or cure of COVID-19. In doing so, content creators often cultivated a sense of transparency (cf. Lewis, 2018) and strengthened their claims by citing or interviewing “canceled” and “censored” experts, sources, or (vaccination) victims. Moreover, hosting ideologically like-minded guests allowed conspiracy propagators to multiply audiences (cf. Lewis, 2018).

In addition, BitChute users commonly employed identity-building practices by using the platform as a vehicle for engaging in “othering” (Harmer and Lumsden, 2019), that is, practices that seek to (re)draw boundaries in, around, and between individuals and groups. Prominent enemy images were “corrupt fake mainstream media,” scientists, or politicians, who, as “dictators” or “puppets,” only do what “the invisible enemy [. . .] behind the curtain” tells them to do. To develop a strong sense of “we,” users also utilized identity markers like “WWG1WGA” or coded vernacular expressions like “sauce” (instead of “source”), “juice” (instead of “Jews”), and “cen$0red” (instead of “censored”). Moreover, users created a sense of community by acting as “ideological testimonials” (Lewis, 2018: 25), sharing personal experiences of awakening (“red-pilled”).

Identity-building practices were often tied to the negotiation of power and knowledge and the question of which forms of knowledge are accepted, as illustrated in this excerpt from a BitChute video:

I keep hearing people exclaim in frustration that none of this makes any sense. Well, that depends on how you look at it. [. . .] There are people out there who have cracked the code. We are not in the cult, but we have learned to read the language. [. . .] We do understand it and we are trying to explain it, and do you know what they call us? We are called tinfoil hats, conspiracy theorists, right-wing extremists, terrorists, misinformation artists, disinformation artists.

Another strategy to communicate conspiracism on BitChute involved various calls for action. Content creators urged their community to “be critical,” “do your own research and don’t let the media manipulate you,” “stand up for your freedom,” and “spread the word.” In addition, users engaged in crowdsourcing by providing “insight knowledge” or “investigative reports” to the community, such as information on “HOW TO MAKE YOUR OWN HYDROXYCHLOROQUINE.”

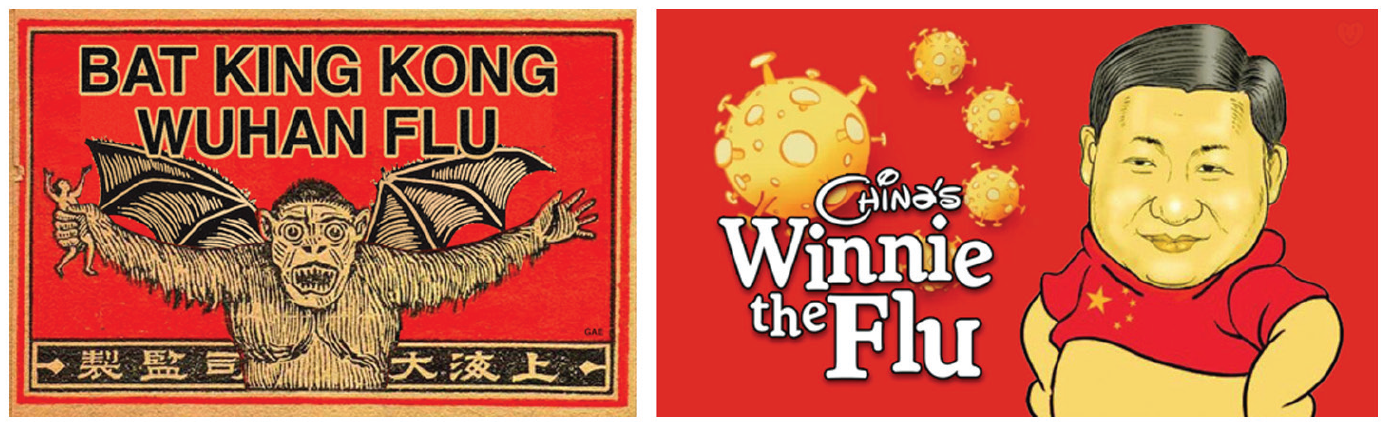

Unlike BitChute, Gab was predominantly used as a broadcasting platform to disseminate information about the pandemic (cf. Zeng and Schäfer, 2021). This means Gabbers primarily communicated conspiracy theories by participating in crowdsourcing through sharing links to other platforms and sources, including “alternative” and “mainstream” social media as well as file-sharing platforms, news media, scientific or governmental websites, or personal sites. Besides that, users engaged in truth construction and identity building—albeit to a much lesser extent than on BitChute. For instance, content creators shared “proofs” for an alleged “premeditated biological terror attack by powerful political elements” or claimed that the Chinese Communist Party “infected the world with the deadly #Wuhanvirus.” They urged their community that it is their responsibility to bring the truth to light and “not blindly follow the gov’t like a #sheep.” In addition, content creators directed various calls for action to the community, but again much less frequently than on BitChute. Finally, unlike BitChute users, Gabbers communicated conspiracy theories by employing entertaining practices—most often in the form of memes (see Figure 2).

Figure 2. Examples of COVID-19-related conspiratorial memes shared on Gab.

Monetization of conspiracism

Our investigation of how users monetized conspiracism on BitChute and Gab revealed two common practices: donations via in-platform features and strategic self-commodification. Unlike YouTube with its YouTube Partner Program (YPP), BitChute does not have a platform-wide revenue-sharing system where content creators profit from advertising. Instead, the platform allows direct monetization through in-platform donations: Content creators can receive one-time tips or recurring donations from the community through a number of payment services such as PayPal, SubscribeStar, or Patreon. In addition to donations, conspiracy propagators promoted products, their own merchandise, publications, and exclusive content. For instance, some content creators promoted conspiratorial explanations of medical issues, health supplements, or alternative medicine, while others used BitChute and Gab to sell conspiratorial media products (e.g. [audio]books). Although Gabbers can use Gab Marketplace to list products, most conspiracy propagators directly promote them by providing links.

As the examples above demonstrate, the strategies employed by conspiracy propagators on BitChute and Gab are similar to monetization practices documented in previous research on influencer culture (Duffy et al., 2021; Johnson and Woodcock, 2019). This further implies the relevance of discussing platformed conspiracism within the context of the platform economy and creator culture. “Alternative” platforms tolerate and facilitate content creators’ monetization of conspiracism. Rather than taking a direct commission on transactions, BitChute and Gab profit from the growing number of ideologically like-minded users—potential donors to the platform. More importantly, for platforms whose business models are not yet diversified, the priority remains generating content and traffic, without which they will have difficulty attracting investors or advertisers.

Insulation of conspiracism

On both platforms, we found numerous incidents of users stating that they were banned by “mainstream” platforms, highlighting the financial loss they suffered. While users instrumentalized their deplatforming as a “badge of honor” within the community (Innes and Innes, 2021), they simultaneously employed strategies to circumvent content moderation and deplatformization efforts. Our investigation revealed that BitChute and Gab users employed two main practices to insulate their communication of conspiracism: the development of a collective network of conspiracism and the diversification of their presence (see Innes and Innes, 2021, for similar findings). On BitChute, users built a collective network of conspiracism by linking to external sources or other content creators who are ideologically close to them or by mirroring content at risk of being banned. The latter was often indicated by phrases such as “mirror of” or “mirrored from,” followed by links to the original source. In this context, content creators frequently called for the distribution of mirrored or banned content across a wide range of platforms and communities: “Download and re-share this video everywhere,” “Let’s make this go globally viral.” This strategy for insulating conspiracism was also found on Gab, but mainly in the form of shared links or quoted and tagged users.

Another practice we observed on BitChute and Gab is that users diversified their presence on and across platforms. They promoted links either to their other profiles on the same platform (“LINK TO MY OTHER VIDEO CHANNEL”) or to their personal websites or profiles on other platforms. While in some cases the content on these profiles or sites was an identical copy of the original profile, others simply contained lists of active links to all of their online presences. Notably, BitChute allows creators to link their profile and YouTube channel, which allows conspiracy propagators to automatically mirror and archive content from YouTube to BitChute, serving as safety insurance against YouTube’s content moderation.

Although similar practices of using sub-accounts (Kang and Wei, 2020; Weinstein, 2018) and cross-platform branding (Duffy et al., 2021; Glatt, 2022) are commonly employed by content creators, their function as safety insurance against content moderation and demonetization is particularly important for conspiracy propagators. One related trend we observed among conspiracy propagators on BitChute and Gab is the revival of more closed communication channels. For instance, instead of aiming for maximum visibility on all major social media, some conspiracy propagators focus on promoting their personal blogs or mailing lists. For the communication of conspiracism, the main advantage of such channels is that they are below the radar and therefore more insulated from regulations. As discussed earlier, retreating to more localized online spaces allows users to create silosociality (Abidin, 2021). Negotiating between maximizing visibility and cultivating silosociality is an important feature of conspiracy propagators’ content creation practices.

Summary of user practices on BitChute and Gab

We found that unique and platform-specific communicative practices emerged through the use of each platform’s specificities. While conspiracy propagators on BitChute primarily engaged in truth construction and acted as dot-connectors, which the format of long videos significantly encourages, Gab predominantly served as a broadcasting platform to share information by linking to external sources. In addition, our findings clearly underline that both platforms provide conspiracy propagators a fertile refuge through which they can maintain their presence and connection with their followers—which also allows them to profit from their visibility by receiving monetary rewards.

Conclusion

Digital platforms provide fertile infrastructures for the dissemination of conspiracy theories. Consequently, the logics of platforms (Van Dijck and Poell, 2013) have greatly changed the culture of conspiracy theories, including their visibility, connectivity, and language. To conceptualize these changes, we introduced the notion of platformed conspiracism, that is, reconfigured forms of conspiracy theory communication that emerge from the confluence of the specificities of platforms and the emergent practices of users.

To conceptually investigate platformed conspiracism, we proposed a framework that identifies its key analytical dimensions: platform specificities, which include the platform’s technological features, business model, and governance; and user practices, which encompass users’ communication, monetization, and insulation of conspiracism. By integrating both building blocks, we acknowledge the socio-technical nature of platforms and users and how they mutually shape and co-construct each other.

To empirically study platformed conspiracism, we assessed how it unfolds on two under-researched “alternative” platforms, BitChute and Gab, during the COVID-19 pandemic. Our results indicate that both platforms shaped platformed conspiracism in slightly different ways. This is likely due to the platforms’ technological features, which allow for different formats of content (long-format video content vs short posts), as well as the monetization opportunities that BitChute and Gab offer their users—and the different ways in which users embrace them. Differences between BitChute and Gab were most apparent with respect to their “mainstream” counterparts. Consistent with previous research (Innes and Innes, 2021; Rauchfleisch and Kaiser, 2021), our study suggests that the effects of deplatforming may merely be short-term. While it takes time for conspiratorial content creators and “alternative” platforms to build an “alternative” ecology, in the long run, conspiracy propagators may be able to rebuild their network through cross-platform content distribution.

The current study yielded important insights into how BitChute and Gab constituted different forms of conspiracism by interrogating how particular platform specificities and related user practices mutually articulate each other. However, it needs to be considered in light of some limitations that may guide future research. First, it should be noted that the conceptual framework does not allow for a precise determination of how or to what degree platforms promote the visibility of content, the emergence of specific forms of exchange, or connections between users. Second, while comparing BitChute and Gab with one another elucidated unique platform specificities and user practices, we did not systematically compare both platforms with their “mainstream” counterparts. Thus, we could not see, for example, how conspiratorial, ideologically biased, and partly deviant the communication on “alternative” platforms is compared to the “mainstream” equivalents. Future studies may thus expand on this work by examining platformed conspiracism in “mainstream” spaces.

Notwithstanding these limitations, we hope that our conceptual framework and empirical findings inspire future research aims and agendas and stimulate the development of digital methods to systematically interrogate how platformed conspiracism diffuses across platforms.

Acknowledgements

We would like to thank our two anonymous reviewers and the editor, whose thorough comments and valuable feedback tremendously improved this article.

Authors’ note

All authors have agreed to this submission. We confirm that the article is not currently being considered for publication by any other print or electronic journal.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the Swiss National Science Foundation (SNSF) as part of the project “Science-related conspiracy theories online: Mapping their characteristics, prevalence and distribution internationally and developing contextualized counter-strategies” (Grant: IZBRZ1_186296).