Abstract

This article deals with YouTube’s advertiser-friendly content guidelines – the rules that define what YouTube deems advertiser (un)friendly and that YouTube creators seeking to monetise their content through advertising have to follow. Specifically, this study addresses the textual composition of YouTube’s regulations with a focus on occurrences of vagueness. Methodologically, I take a corpus-assisted discourse analytical approach to YouTube’s texts on advertiser-friendliness: I take a wide-angle view of all concordance lines of ‘content’ and identify occurrences of different forms of vagueness. Findings suggest that at least 26% of the lines of ‘content’ detailing YouTube’s ad-friendly content guidelines exhibit at least one of eight forms of vagueness. Consequently, content creators, left unsure about the monetisability of particular content, may choose not to push any boundaries and instead produce noncontroversial content, which, in turn, may impede content plurality on YouTube.

Keywords

Introduction

Social media (SM) are not tabulae rasae 1 that allow users/content creators to do and say what they will. Rather, the majority are for-profit businesses that limit user/creator behaviour through technological and policy intervention. Hence, although ‘it remains tempting to study social dynamics on platforms while ignoring the platforms themselves, treating them as simply there, irrelevant, or designed in the only way imaginable’ (Gillespie, 2015: 1), discourse analysts have stressed the importance of exploring how SM platforms’ design, affordances and governance restrict users’/creators’ activities and have begun to study elements connected to this (Bouvier, 2015; KhosraviNik, 2017; Kopf, 2019).

This article complements this existing discourse analytical research by examining YouTube, a platform that has enjoyed popularity since the 2000s (Alexa, 2021). Motivated by the site’s prominence in the SM landscape and by the persistent lack of discourse analytical research on SM’s content policies generally, and YouTube’s policies in particular, this paper sheds light on the textual composition of YouTube’s advertiser-friendly content guidelines, that is, the policies applied to creators who wish to monetise their content by having advertising inserted into it. The article’s objective is twofold. First, it examines whether and how vagueness is realised in YouTube’s policies, homing in on the textual means by which the SM provider establishes a discourse of vagueness and imprecision (section ‘Key findings – a discourse of imprecision’). Second, building on this, the article discusses the broader implications of YouTube’s use of vagueness in its policy texts (section ‘Implications and conclusion’).

Several factors make this study particularly relevant. First, for linguistics and discourse analysis, it illustrates the different textual means that can be used to establish a discourse of imprecision and vagueness. Second, the importance of the analysed policies makes this study especially pertinent. As advertising is a key source of revenue for YouTube and monetising creators (Spangler, 2020; also see Frade et al., 2021 for more on advertising on YouTube), the policies regulating the advertiser friendliness of content touch on the core of what drives the for-profit venture, its operations and the actions of its monetising creators. Therefore, these policies, and how YouTube may employ vagueness strategically, deserve particular research attention. Third, and connected to this, the study matters because the presence or absence of vagueness may influence monetising creators’ decisions regarding content production and, consequently, may affect content plurality on YouTube. A lack of unambiguous information on what precisely YouTube considers advertiser-friendly content leaves room for interpretation and may leave creators uncertain whether their content is advertiser friendly enough for monetisation (see more on this in the sections ‘Social media: arenas for free debate versus corporate censorship’ and ‘Implications and conclusion’). It is also worth noting that SM offer a unique opportunity to gain insight into content policies that traditional media typically do not make public. Traditional media, whose content tends to be produced by employees, do not need to publish their policies on advertiser friendliness. In contrast, SM rely on users’ content production, that is, on content produced by people or entities outside the business. The policies therefore have to be made publicly available and are thus accessible for research.
Despite this notable opportunity provided by SM, a lack of discourse analytical research on SM content policy texts persists – a dearth the following study seeks to redress.

Past research and theoretical background

Social media: arenas for free debate versus corporate censorship

These days, humanity faces issues, such as climate change and the COVID-19 pandemic, that require us to band together transnationally, formulate global responses and make concerted efforts to address them. Arguably, we need spaces that allow us to share and access information and to debate solutions to these global challenges. That is, humanity needs transnationally accessible arenas – possibly even public spheres as envisioned by Habermas (1992) and other theorists 2 – where people can discuss issues of socio-political import, express and form opinions and possibly even decide on collective action (p. 436).

The participatory web and SM especially seem to provide spaces that could give rise to such transnational, even global, arenas. After all, a substantial part of humanity has access to SM (see Johnson, 2021 for more on Internet access worldwide). Possibly, then, SM could function as global public spheres – if not as strong ones, that is, spheres for political action (Fraser, 1990: 75), then at least as general ones dedicated to discussion of opinions without necessarily translating that into political action (Eriksen, 2005: 345). Indeed, the Internet and SM specifically have inspired research regarding how and why they can(not) function as transnationally accessible spaces that allow private citizens to share information, engage in critical debate and even proceed to activism (Papacharissi, 2002; Salvatore, 2013).

Some researchers take an optimistic view and highlight the democratising and empowering potential of SM (e.g. Castells, 2013; Tufekci, 2013). Shirky (2011), for instance, argues that SM strengthen civil society, not least because they offer a venue for the coordination of protests. Examining Spanish Twitter data, Hermida and Hernández-Santaolalla (2017) stress the micro-blogging site’s potential to give rise to citizen journalism and to give voice to views other than those presented in mainstream media. Especially notable in the context of this article, Antony and Thomas (2010), in a relatively early study of citizen journalism on YouTube, suggest that YouTube may serve as a public sphere understood as ‘a network for communicating information and points of view’ (Habermas, 1996: 360 quoted in Antony and Thomas, 2010: 12). Another vein of research cautions against hailing SM as venues for free debate, deliberation and political engagement. For example, Kruse et al. (2017) highlight the digital divide and identify the lack of global access to SM as problematic. Poell and Van Dijck (2015) acknowledge that SM are valuable tools for activism, but also underscore that activists are still subject to the ‘technological mechanisms and algorithmic selections operated by large SM corporations’ (p. 534). Connected to this, a number of scholars have argued that SM’s profit orientation impedes their potential to support the free dissemination of information and unhindered debate, not least as the for-profit focus leads SM platforms to prefer or disprefer particular types of content (Allmer, 2015; Bouvier, 2015; Fuchs, 2015).

Altogether, across the literature discussing SM as spaces that may support global public spheres, citizen journalism and activism, one prerequisite for such uses of SM has not been called into doubt: people’s ability to select topics for debate freely. In other words, the importance of citizens’ freedom of expression and freedom from censorship on SM has remained unchallenged. However, as the critical line of research discussed above suggests, SM do not provide spaces free from content control, that is, censorship. 3 As early as 2006, Klang concluded that ‘[t]he threat presented by the privatized censorship of service providers should not be underestimated’ in online contexts (Klang, 2006: 189). One form of privatised censorship is SM businesses’ moderating content, or at least providing a set of content policies (Gillespie, 2018: 5). Here, Gillespie (2018) argues that some censorship is necessary for the good of society (pp. 5–7). 4 However, as SM sites are typically run by for-profit companies, SM content is ‘subject to both the commercial logics of, and restrictive interventions by’ SM companies (Hintz, 2015: 119). SM businesses do not merely censor content for the good of society but engage in economically motivated content control and restriction of freedom of expression – in corporate censorship 5 (Sell, 2016: 177).

In connection with this, Fuchs (2015) also argues that capitalist media’s content is tailored in accordance with their profit objective: he finds that such media present ‘[c]ommercialised and tabloidised content’ (p. 332), as content funded through advertising tends to focus on entertainment rather than critical engagement with controversial issues (Habermas, 1990: 267). Indeed, SM companies generate profit by offering opportunities for other businesses to advertise on their SM platforms. This explains why SM companies engage in corporate censorship, for example, by applying policies that guide creators’ content production with the aim of attracting large numbers of visitors, which, in turn, ought to attract advertisers. Besides ensuring SM’s appeal to visitors and thus to advertisers, SM’s censorship in the form of content regulations arguably aims to produce content uncontroversial enough for various brands to wish to run advertising alongside it, for example, alongside YouTube videos. Thus, SM companies – such as YouTube with its ‘advertiser-friendly content guidelines’ (see below) – seek to control content to protect their advertisers from association with controversies and ideologies that could alienate customers. Kumar (2019) even argues that SM businesses’ reliance on advertising leads to excessive content control, that is, control that limits ‘content plurality’ (p. 2).

While research has acknowledged that SM’s content control affects content plurality, empirical studies have so far not addressed how the textual composition of SM policies may influence content plurality. Hence, my analysis of YouTube’s ‘advertiser-friendly content guidelines’ is not merely an end in itself, elucidating the textual realisation of vagueness. Rather, building on the empirical findings, I consider how YouTube’s use of vagueness ties in with, and may exacerbate, the loss of content plurality, and how this may limit YouTube’s ability to provide an arena for free debate and information exchange.

YouTube: past research and the advertiser-friendly content guidelines

YouTube, the video-sharing platform, has inspired research since its rise to prominence in the 2000s. To name but two research strands: first, researchers have examined YouTube’s characteristics, the site’s discourse and how various discourses are realised on YouTube; second, research has focused on activism and critical engagement, that is, on how YouTube may serve as an arena for information sharing and debate as discussed in the section ‘Social media: arenas for free debate versus corporate censorship’.

Regarding the former strand, Burgess and Green’s (2018) examination of YouTube deals with the platform as a research object on multiple levels, ranging from how YouTube is used to how it has been received by the traditional media 6 and cultural production on the site in light of its for-profit orientation. Taking a narrower view, Benson (2019) homes in on the discourse of YouTube and presents a framework for dealing with the site’s complexity of multimodal semiosis and multi-authored content production (also see Ng, 2018). Moreover, research has addressed various discourses on YouTube, for example, YouTube discourse around topics such as makeup (Riboni, 2017), YouTube discourse of a particular function such as discriminatory discourse (Chen and Flowerdew, 2019) and content creators’ discursive behaviour as performances of identity and authenticity (Yueh, 2020).

Concerning the latter strand, Raby et al. (2017) focus on young Canadian vloggers and find traces of civic engagement in their videos. Similarly, Garcia and Vemuri (2017) study how SM in general, and YouTube in particular, may serve as spaces for creators to challenge rape culture and Mallapragada (2017) highlights YouTube’s potential in making visible disadvantaged social groups and raising awareness of social problems. While these studies suggest that YouTube may serve as a space for free information sharing and civic engagement, it is worth noting that they focus on YouTube before 2017, that is, before YouTube introduced stricter policies on what is ‘advertiser-friendly content’ (see more on the ‘adpocalypse’ in Dunphy, 2017).

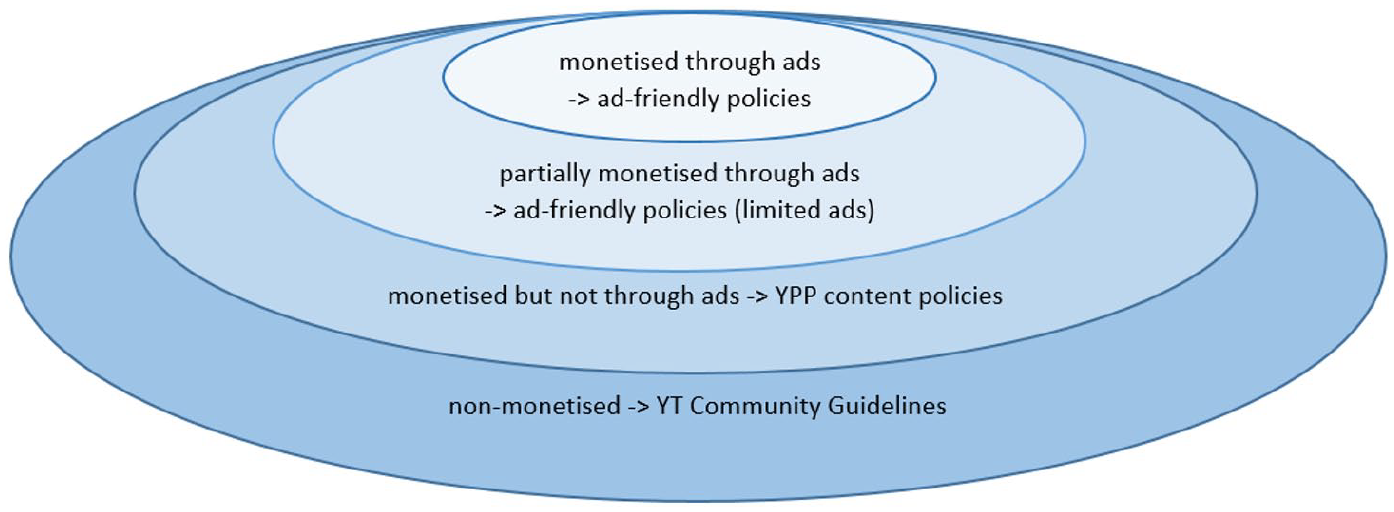

Generally, YouTube’s body of content policies illustrates that the SM provider engages in corporate censorship motivated by its reliance on advertising: YouTube even has a dedicated policy set regulating what content is monetisable through advertising, the ‘advertiser-friendly content guidelines’. Notably, YouTube does not apply its ad-friendly content policies to all creators but has policies for different types of creators (see Figure 1). The outermost circle of Figure 1 refers to the ‘community guidelines’, which regulate the content posted by all YouTube creators and users. The second circle refers to the policies designed for creators who seek to monetise their content and who are part of the ‘YouTube Partner Programme’ (YPP). The YPP policies apply to all content creators aiming to earn money, for example, from sponsorship deals, selling merchandise and advertising (YouTube Help, 2021). The two innermost circles refer to the ‘advertiser-friendly content guidelines’ only. The third circle refers to content that can be monetised through some advertising but is not deemed appropriate for all advertisers. The innermost circle refers to content that complies fully with the strictest of YouTube’s ad-friendly content guidelines and can be fully monetised through advertising. Overall, content that yields advertising revenue, a notable revenue stream for creators as well as YouTube (Spangler, 2020), is subject to the strictest level of content control, as creators have to comply with all the more general policies (all layers depicted in Figure 1) on top of the ad-friendly content guidelines.

Figure 1. Layers of (non-)monetised content and the policies applied.

The following sections home in on YouTube’s advertiser-friendly content guidelines as a form of privatised corporate censorship.

Data and method

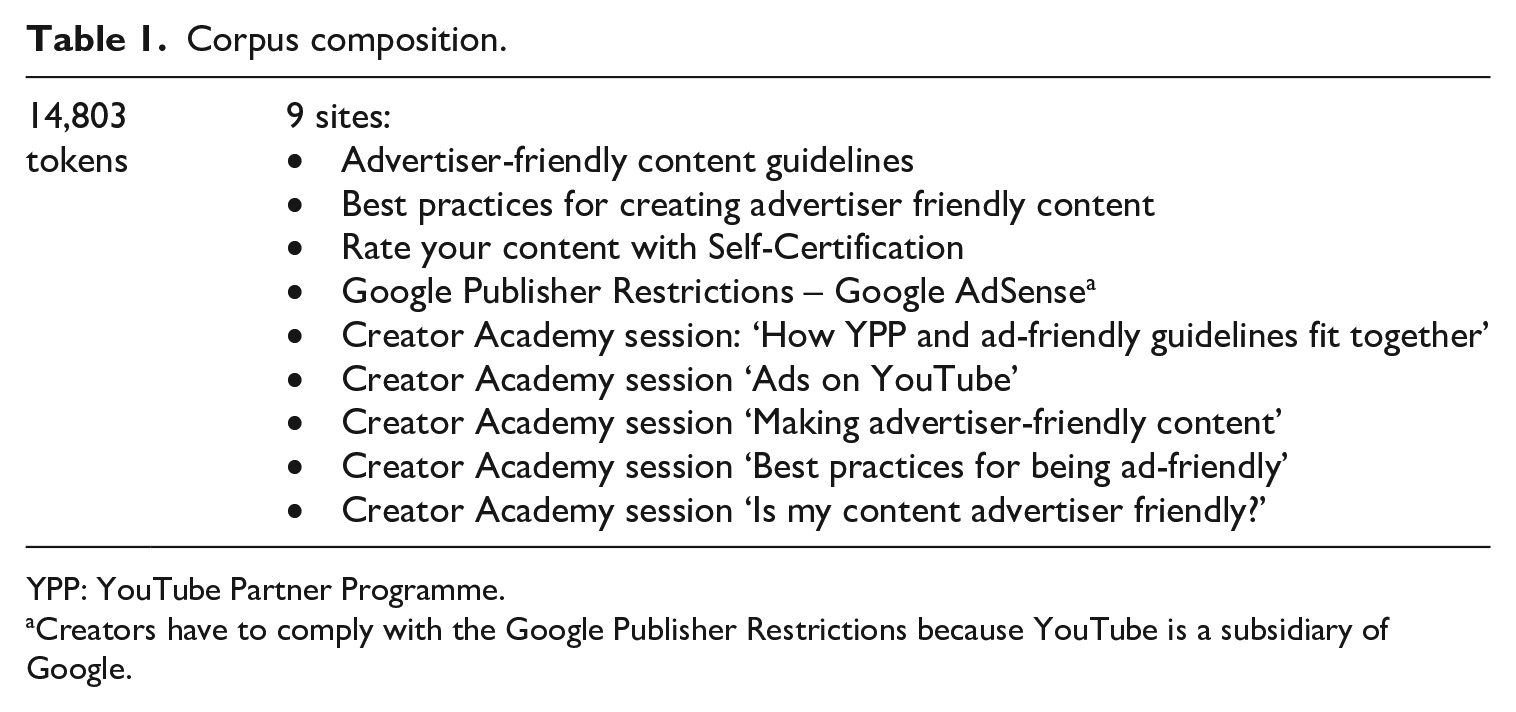

The data consist of a corpus encompassing YouTube’s ad-friendly content policies and further information on these (Table 1). In March 2020, I sampled the ad-friendly content policies as detailed in the context of the YPP, together with ‘Creator Academy’ 7 content focusing on these policies.

Table 1. Corpus composition.

YPP: YouTube Partner Programme.

Creators have to comply with the Google Publisher Restrictions because YouTube is a subsidiary of Google.

Regarding data analysis, I take a corpus-assisted discourse-analytical approach, in which the corpus tools merely serve as the entry point into the data, which are then examined qualitatively. Using AntConc (Anthony, 2020), I create a frequency list and identify the most frequent term used to refer to creators’ uploaded material, as this item offers insight into how YouTube discusses the advertiser (un)friendliness of uploaded material and how the SM provider presents its guidelines to readers (i.e. creators). YouTube overwhelmingly uses the term ‘content’ to refer to creators’ material: the item is the most frequent content word in the corpus and ranks fifth in the frequency list overall. I then examine ‘content’ in more detail. While I attend to this item’s collocational profile (t-score, span of four), my focus is on the qualitative investigation of all 351 concordance lines of ‘content’ in co-text. For this, I take a wide-angle view ‘equivalent to text extracts’ (Partington et al., 2013: 18) of the concordance lines of ‘content’, with at least 40 tokens to the left and right of the node, to ensure a comprehensive understanding of how YouTube discusses ad-(un)friendliness.
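The frequency-list and concordance steps described above can be sketched in a few lines of Python. This is a minimal illustration, not AntConc’s implementation: the tokenisation rule (lower-cased alphabetic runs with internal apostrophes and hyphens) is an assumption, and `tokenize`, `frequency_list` and `kwic` are hypothetical helper names. The `window` parameter corresponds to the co-text kept on each side of the node; the study uses at least 40 tokens, while the toy example uses 3 for readability.

```python
import re
from collections import Counter

def tokenize(text):
    """Lower-case the text and split it into word tokens.

    Assumed rule: alphabetic runs, keeping internal apostrophes and hyphens
    (e.g. "don't", "f-word"); AntConc's own token definition may differ.
    """
    return re.findall(r"[a-z]+(?:['’-][a-z]+)*", text.lower())

def frequency_list(tokens):
    """Return (word, frequency) pairs, most frequent first."""
    return Counter(tokens).most_common()

def kwic(tokens, node, window=40):
    """Key-word-in-context lines as (left co-text, node, right co-text).

    Up to `window` tokens are kept on each side of every occurrence of `node`.
    """
    lines = []
    for i, token in enumerate(tokens):
        if token == node:
            left = tokens[max(0, i - window):i]
            right = tokens[i + 1:i + 1 + window]
            lines.append((" ".join(left), token, " ".join(right)))
    return lines

sample = ("This type of content is not suitable for any of our advertisers. "
          "Content that is satire or comedy may be exempt.")
tokens = tokenize(sample)
freq = frequency_list(tokens)
lines = kwic(tokens, "content", window=3)  # small window for the toy sample
for left, node, right in lines:
    print(f"{left:>20}  [{node}]  {right}")
```

Lower-casing before counting means ‘Content’ and ‘content’ are treated as one item, which matches how a single node ‘content’ is discussed here; a case-sensitive analysis would simply skip `.lower()`.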

The focus of my data discussion below is on occurrences of vagueness in these 351 lines (Channell, 1994). 8 By vagueness, I mean language use that is semantically or epistemically unclear: language that describes ad-(un)friendly content in terms that lack ‘sharp boundaries’ (Raffman, 2014: 2) and whose meaning is ‘contextually sensitive’ (Raffman, 2014: 19–20). Specifically, I home in on occurrences of vagueness regarding the nature of ad-friendly content and the monetisability of content through advertising. Examining all 351 concordance lines of ‘content’, I identify eight types of vagueness in the data:

Vague quantification (e.g. ‘some’, ‘most of the time’) (Drave, 2002: 26);

Gradable adjectives used without a frame of reference that sheds light on the ‘cutoff’ point (e.g. ‘tall’, ‘strong’) (Égré and Klinedinst, 2011: 12);

Vague expressions, that is, expressions that by their very nature are not defined (e.g. ‘thing’) (Koester, 2007: 41);

Modal verbs that serve to mitigate the certainty of a statement’s proposition (e.g. ‘may’, ‘might’);

Epistemic stance adverbials that introduce uncertainty into a proposition (e.g. ‘possibly’) (Rozumko, 2017: 76);

Indetermination in the representation of social actors, that is, instances where actors’ ‘unspecified’ representation (Van Leeuwen, 1996: 51) leads to a lack of clarity regarding monetisability of content, for example, who and how many brands are willing to advertise on certain content;

Vague categorisation, that is, when a particular classification is provided/evoked but the borders of this category are blurred and some form of openness regarding this category is suggested (e.g. ‘nudity and stuff like that’) (Channell, 1994: 119ff);

Statements whose applicability depends on regional context although YouTube’s regulations apply globally (e.g. ‘illegal drugs’).

In addition to these elements, whose linguistic realisation is relatively apparent on the text surface, 9 I address a ninth category, namely vocabulary whose referents are culturally dependent. With its texts about ad-friendly content, YouTube addresses a global audience of content creators, that is, individuals from various cultural backgrounds. Still, YouTube might use lexis that requires a ‘shared cultural frame of reference’ (Evison et al., 2007: 139); because its referents vary with cultural context, such lexis may constitute vagueness in texts addressed to a global audience. It is important to note that, in contrast to the eight types above, I do not quantify this ninth phenomenon: assessing whether certain vocabulary is culturally dependent is highly subjective, arguably more so than identifying the eight types above, which are visible on the text surface. Observing that the phenomenon occurs matters for its implications in terms of vagueness, but rigorous quantification is impossible and is not attempted.
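Only some of the eight types listed above have stable surface exponents. For readers who want to apply a similar coding scheme to other policy texts, the sketch below flags candidate markers in concordance lines as a pre-filter for manual reading. It is a hypothetical aid, not the procedure used in this study, which relied on qualitative reading of every line: the seed patterns in `MARKERS` are illustrative assumptions drawn largely from examples cited in this article, and gradable adjectives, vague categorisation, indetermination of social actors and culturally dependent lexis cannot be detected this way.

```python
import re

# Illustrative seed patterns (assumed, not an exhaustive coding scheme).
# Only marker types with fairly stable surface forms are included.
MARKERS = {
    "vague quantification": [r"\bsome\b", r"\bmost of the time\b",
                             r"\bin most cases\b", r"\bfor the most part\b"],
    "modal verb": [r"\bmay\b", r"\bmight\b", r"\bshould\b", r"\bcould\b"],
    "epistemic stance adverbial": [r"\bpossibly\b", r"\blikely\b",
                                   r"\bnot necessarily\b"],
    "vague expression": [r"\bthings?\b", r"\bstuff\b"],
    "regional legality": [r"\billegal\b", r"\bregulated\b"],
}

def flag_candidates(line):
    """Return the sorted marker types whose seed patterns occur in `line`.

    A hit is only a candidate: 'may' can be deontic rather than epistemic,
    and 'some' can be a plain determiner, so each flagged line still has to
    be read in its co-text before it is coded.
    """
    text = line.lower()
    return sorted(kind for kind, patterns in MARKERS.items()
                  if any(re.search(p, text) for p in patterns))

print(flag_candidates("Educational content in most cases should be safe to monetize"))
```

Keeping the patterns in a plain dictionary makes the (assumed) lexicon easy to inspect and extend, which matters because any such list embodies coding decisions that should remain open to scrutiny.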

Key findings – a discourse of imprecision

Before detailing how YouTube’s texts exhibit vagueness, two preliminary observations deserve mention. First, YouTube discusses whether content can be monetised in terms of ‘suitability’ instead of explicitly prohibiting certain types of content for advertising. This is already indicated by ‘content’ collocating with ‘suitable’ (t-score 3.5), and the concordance lines of ‘content’ drive the point home, for example, ‘This type of content is not suitable for any of our advertisers’, ‘that content may still not be suitable for advertising’ and ‘certain types of content may not be suitable for all brands’. Using intensive attributive relational processes with advertisers/brands as alleged attributors 10 (Halliday and Matthiessen, 2014: 265) (e.g. ‘for all brands’/‘for [. . .] our advertisers’), YouTube evokes the impression that the advertising brands themselves review content for advertiser friendliness and judge suitability. It thereby shifts responsibility to the advertisers and disguises the prominent role that YouTube, as platform operator, undoubtedly plays in assessing suitability, curating content for advertising and designing the ad-friendly content guidelines.
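For readers less familiar with the measure, the t-score compares how often a collocate actually occurs near the node with how often it would be expected to by chance. A common formulation is t = (O − E) / √O, with E = f(node) × f(collocate) × S / N, where S is the number of collocate positions per node occurrence and N is the corpus size. The sketch below assumes S = 8 (a span of four tokens on either side); AntConc’s exact computation may differ in detail, and the frequencies in the example are hypothetical, not taken from this corpus. A widely used rule of thumb treats t ≥ 2 as indicating a non-random association.

```python
import math

def t_score(observed, f_node, f_coll, corpus_size, span_positions=8):
    """t-score for a node-collocate pair.

    observed       -- co-occurrences of the collocate within the node's span
    f_node         -- corpus frequency of the node (e.g. 'content')
    f_coll         -- corpus frequency of the candidate collocate (e.g. 'suitable')
    corpus_size    -- total number of tokens N
    span_positions -- collocate slots per node occurrence; 8 assumes a span
                      of four tokens on either side
    """
    if observed <= 0:
        raise ValueError("t-score is undefined when the pair never co-occurs")
    expected = f_node * f_coll * span_positions / corpus_size
    return (observed - expected) / math.sqrt(observed)

# Hypothetical frequencies, chosen only to illustrate the arithmetic.
t = t_score(observed=16, f_node=100, f_coll=10, corpus_size=10_000)
print(round(t, 2))  # (16 - 0.8) / 4 = 3.8
```

Because the denominator is √O, the t-score rewards frequent co-occurrence, which is why it tends to surface common collocates such as ‘suitable’ rather than rare but exclusive ones.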

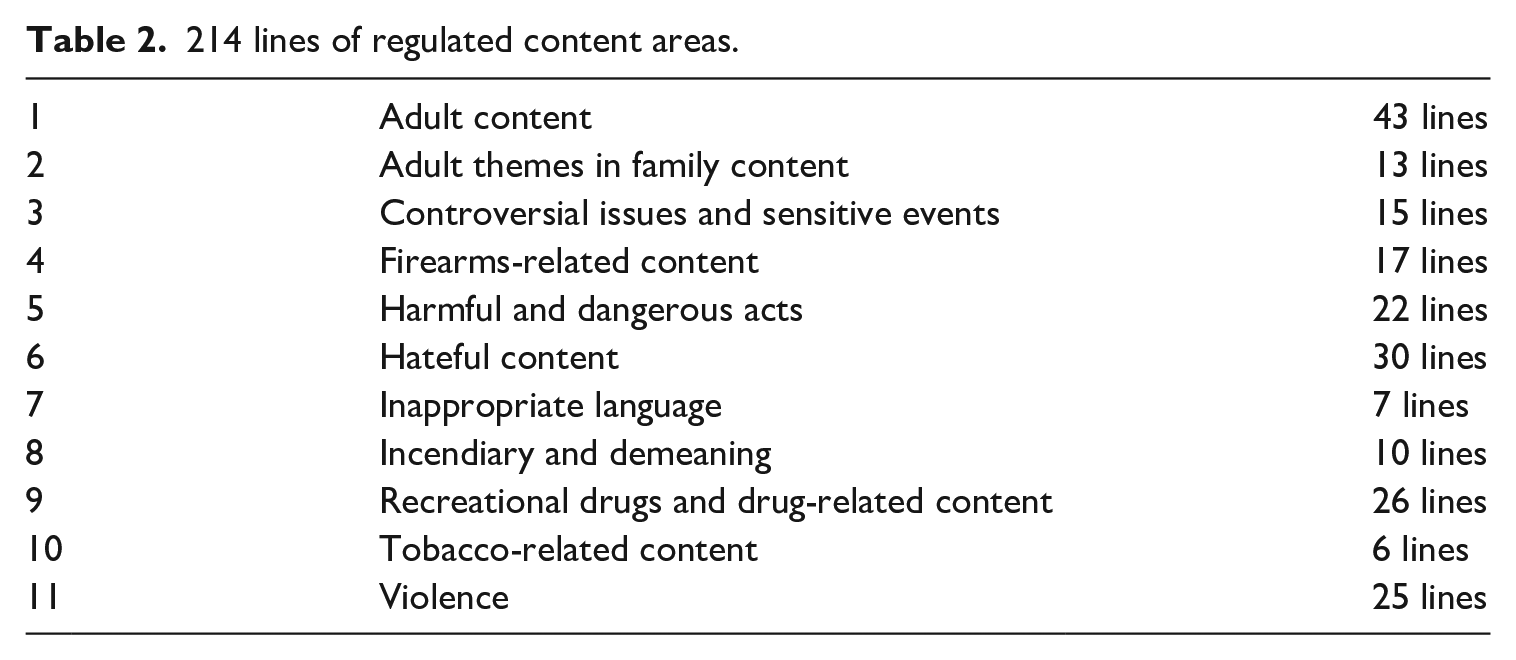

Second, a preliminary reading of the corpus shows that YouTube regulates the content areas listed in Table 2. Turning to the concordance lines of ‘content’, 35 of all 351 lines can be discarded, as they do not use ‘content’ in reference to the ad-friendly guidelines (e.g. ‘as content creators, we want to make sure that we’re doing all that we can to get educated’). Another 24 lines can be discarded as they are merely headings used in YouTube’s texts (‘Adult content’, ‘Hateful content’). Of the remaining 292 lines, 214 address specific regulated content areas, and together these lines cover all the areas listed in Table 2, albeit to varying degrees. 11 Since the corpus-assisted perspective on the data offers insight into YouTube’s treatment of all regulated areas, the chosen method of data analysis appears well suited to achieving an adequate understanding of how the SM provider discusses its various content regulations.

Table 2. 214 lines of regulated content areas.

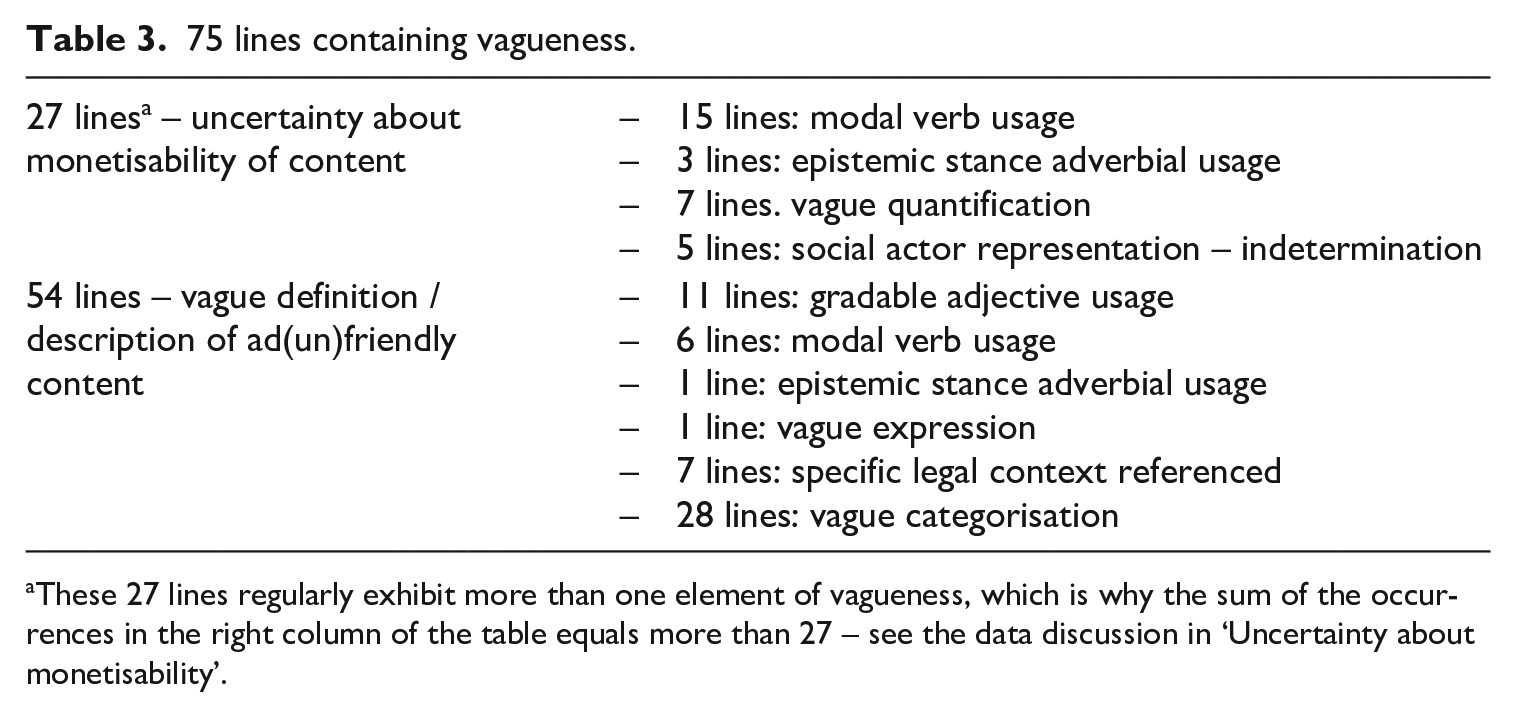

Returning to all 292 lines of ‘content’ that discuss the advertiser-friendly content regulations, 75 of them (26%) exhibit at least one of the eight markers of vagueness listed in the section ‘Data and method’ (Table 3). These eight types of vagueness create a lack of clarity regarding two aspects: on the one hand, 27 lines introduce uncertainty about the monetisability of content; on the other hand, 54 lines contain vagueness regarding the nature of ad-(un)friendly content. 12

Table 3. 75 lines containing vagueness.

These 27 lines regularly exhibit more than one element of vagueness, which is why the occurrences in the right column of the table sum to more than 27; see the data discussion in ‘Uncertainty about monetisability’.
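The line counts reported in this section can be checked with simple arithmetic. The sketch below uses only figures stated in this article: it derives the 292 relevant lines and the share of them exhibiting vagueness, and it shows that the per-type counts reported in ‘Uncertainty about monetisability’ (15 modal verbs, 3 epistemic stance adverbials, 7 vague quantifications, 5 indeterminate advertisers) add up to more than the 27 lines concerned, because a single line can carry several markers. The variable names are illustrative.

```python
# Figures as reported in this article.
all_lines = 351   # concordance lines of 'content'
off_topic = 35    # 'content' not used in reference to the guidelines
headings = 24     # headings such as 'Adult content'
relevant = all_lines - off_topic - headings

vague_lines = 75
share = vague_lines / relevant

# Occurrences per marker type in the 27 lines on monetisability.
monetisability = {"modal verb": 15, "epistemic stance adverbial": 3,
                  "vague quantification": 7, "indetermination of advertisers": 5}
occurrences = sum(monetisability.values())

print(relevant)               # 292
print(f"{share:.1%}")         # 25.7%, reported as 26%
print(occurrences, ">", 27)   # 30 > 27: some lines carry several markers
```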

Uncertainty about monetisability

Vagueness regarding the monetisability of content was found in 27 lines of ‘content’. These lines are vague about whether, and to what degree, particular content is monetisable through advertising. One phenomenon reducing certainty about monetisability is YouTube’s use of modal verbs. In 15 lines of ‘content’, YouTube uses ‘may’, ‘might’ and ‘should’ to this effect:

(1) Educational content in most cases 13 should be safe to monetize;

(2) Content that is satire or comedy may be exempt;

(3) This video content might not be suitable for advertising;

(4) Content that contains frequent uses of strong profanity or vulgarity throughout the video may not be suitable for advertising. Occasional use of profanity won’t necessarily result in your video being unsuitable for advertising.

Examples (1)–(4) show YouTube stating that content ‘should’ or ‘might’/‘may’ (not) be safe or suitable for monetisation through advertising. Examples (1) and (2) suggest that particular content can be monetised despite specific policies: (1) by referring to content as ‘safe’ for monetisation and (2) by referring to a possible exception from a content regulation, that is, satirical/comedic content ‘may be exempt’ from the regulation and thus acceptable for monetisation. Excerpts (3) and (4) exemplify instances where the non-monetisability of particular content is suggested but no conclusive statement to this effect is made.

Besides illustrating the use of modal verbs, (4) showcases another phenomenon, occurring in three lines overall, 14 namely the use of epistemic stance adverbials (Rozumko, 2017: 76). In this excerpt, after using the modal verb ‘may’ to decrease the certainty of the proposition ‘content that features X is unsuitable for advertising/monetisation through ads’, YouTube refers to a possible exception to the rule in the second sentence. Not only is the exception itself not conclusively defined (‘occasional’ as vague quantifier; see ‘Vagueness in describing ad-(un)friendly content’), but it is also unclear whether an element is indeed an exception, as YouTube’s use of the adverbial phrase ‘not necessarily’ implies that occasional profanity could, but need not, lead to ad unsuitability. To give another example of an epistemic stance adverbial introducing uncertainty about monetisability: ‘this content will likely receive less advertising than other, nonrestricted content’.

Excerpt (1) also illustrates another form of vagueness: vague quantification that reduces certainty regarding monetisability, which occurs in seven lines (Drave, 2002: 26). In addition to using a modal verb, in (1) YouTube decreases the certainty of monetisability by modifying the proposition ‘educational content is safe to monetise through ads’ with ‘in most cases’ and thus creates uncertainty about when precisely content is monetisable. Another example of vague quantification used to this effect is ‘For the most part, that content is safe to monetise’, where the prepositional phrase containing vague quantification introduces uncertainty into an otherwise unhedged statement.

A final phenomenon (five lines of ‘content’) is the lack of specificity in representing advertisers as social actors (see more in Van Leeuwen, 1996: 51). Examples (5) and (6) comment on monetisability implicitly, that is, by referring to advertisers’ willingness to have their advertisements appear on certain content, which then affects whether content can be monetised through advertising:

(5) Every brand is different, and some have different views on the kind of content 15 they are comfortable appearing alongside;

(6) Some advertisers may choose not to show their ads on particular content types that don’t match their brand.

Both examples constitute cases of indetermination in representing the advertisers. They thus obscure how many advertisers, and which ones in particular, may ‘choose’ not to have their brand associated with particular content, or are not ‘comfortable’ with such an association. As the number and nature of advertisers (un)willing to run advertising on particular content affect monetisability, the lack of concrete information here constitutes vagueness.

Vagueness in describing ad-(un)friendly content

Altogether 54 lines of ‘content’ exhibit one of the eight forms of vagueness in the sense of not giving precise information on the nature of advertiser-(un)friendly content.

Vagueness arising from the use of gradable adjectives occurs in 11 lines of ‘content’ (see more in Égré and Klinedinst, 2011: 12). Notably, even when defining a phrase, that is, when providing additional information for creators to understand YouTube’s policy texts, the SM provider diminishes the precision of the definition through use of gradable adjectives. For example, as a footnote to discussing the policy on violence, YouTube provides a definition of ‘mild violence’ as content acceptable for monetisation:

(7) Definitions: ‘Mild 16 violence’ refers to scuffles in real-life content or fleeting violence like punching;

(8) Inappropriate 17 language: Content that contains frequent uses of strong profanity or vulgarity throughout the video may not be suitable for advertising. Occasional use of profanity won’t necessarily result in your video being unsuitable for advertising;

(9) Content that’s meant for more mature audiences can still make money on YouTube.

The use of the adjective ‘fleeting’ 18 in (7) gives only a vague indication of how much time violent content may take up in a video, or how intense it may be, for it to be judged ‘mild violence’. Example (8) reproduces example (4) above: YouTube’s use of ‘frequent’ and ‘occasional’ does not clarify how often, precisely, a creator may use profanity (see Solt, 2011 for more on the relationship between expressions of quantity and gradable adjectives in the context of vagueness). Moreover, the gradable adjective ‘strong’ introduces vagueness regarding the intensity of profanity deemed acceptable for monetisation. Interestingly, in contrast to other instances of YouTube using gradable adjectives, a corpus search for ‘strong*’ shows that the SM provider does provide a frame of reference to aid interpretation of the phrase ‘strong profanity’: ‘Strong profanity refers to words such as the “f-word”, its derivations and any equivalents’. Still, this attempt at clarification hinges on an example (‘such as’) and makes clear that not only the ‘f-word’ but also its ‘equivalents’ count as strong profanity, a term whose referents remain unspecified. In example (9), apart from other factors (see more below), the lack of precision arises from the absence of a definitive frame of reference for the adjective ‘mature’ and the resulting impossibility of knowing what counts as a ‘more’ or less mature audience.

YouTube uses modal verbs to decrease precision in six instances. In examples (10) and (11), for instance, YouTube uses a passive 19 modal verb construction: (10) suggests that particular content ‘could be considered’ advertiser unfriendly (viz. matching the topic area ‘incendiary and demeaning content’), and in (11) content is deemed advertiser unfriendly if it ‘may be interpreted’ in certain ways:

(10) Ask yourself if your content focuses on shaming or insulting an individual or group. If so, this could be considered incendiary and demeaning;

(11) Content that may be interpreted as promoting a sexual act in exchange for compensation.

Two further forms of vagueness each occur in one line of ‘content’. In one line, an epistemic stance adverbial introduces a degree of vagueness regarding the nature of content that is not advertiser friendly (12). In another, the source of imprecision is a vague expression (see Koester, 2007: 41): in (13), YouTube uses a noun that has no conclusive definition (‘stuff’) and premodifies it with an adjective whose interpretation depends on subjective or culturally dependent assessment (see below):

(12) Content likely to offend a marginalised individual or group;

(13) [There is] content on YouTube that basically is just trying to trick kids into thinking the content is for them and then it contains some weird stuff.

YouTube discusses the types of ad-(un)friendly content in terms of vague categories in 28 lines. However, unlike the traditional vague category markers discussed in linguistics (Channell, 1994; Evison et al., 2007), which are tagged onto sets of elements that evoke or create a category (e.g. ‘Take orders and clean table and stuff’), YouTube’s texts realise vague categorisation through formulaic phrases that introduce lists of examples: the concordance view of ‘content’ shows that (14) is used in 22 lines and (15) in 6 lines:

(14) Some examples of content that also fall into this category;

(15) Examples (non-exhaustive) – Limited or no ads: Content that [. . .].

Here, the reference to ‘example’ suggests a degree of categorial openness, as an example is merely ‘an instance (such as a problem to be solved) serving to illustrate a rule or precept or to act as an exercise in the application of a rule’ (Merriam-Webster, 2021). In (14), the vague quantifier ‘[s]ome’ underscores that the lists of examples that follow do not cover every conceivable element of the topic areas. In (15), the word ‘example’ alone would have sufficed to express the incompleteness of the lists, so adding ‘non-exhaustive’ was not necessary to convey this point. This pleonastic addition emphasises and cements the lists’ incompleteness. Overall, YouTube’s introductions to its lists of examples work much like a vague category marker tagged onto a set of elements: readers of YouTube’s texts are left to make sense of the category and complete the set of elements that constitute it.

As an aside, (15) also bears on the issue discussed in the section ‘Uncertainty about monetisability’. The way YouTube introduces its lists of examples may create uncertainty about whether particular content can be monetised. YouTube groups ‘Limited or no ads’ together and does not clearly distinguish between content that is fully advertiser unfriendly and content that is acceptable for some advertising (see Figure 1). Creators producing such content therefore cannot be sure whether it will receive ‘limited’ advertising or ‘no’ advertising at all, along with the corresponding loss of revenue.

Seven lines of ‘content’ exhibit another form of imprecision. In these lines, YouTube refers to the legality of certain substances or actions without specifying which legal context and applicable law serve as its frame of reference. This may create uncertainty among creators because YouTube’s policies are applied globally. They therefore span numerous legal frameworks that differ in what they deem ‘illegal’ or treat as a ‘regulated’ substance. To give but one example:

(16) Content that features the sale, use or abuse of illegal drugs, regulated drugs, substances, or other dangerous products is not suitable for advertising.

Beyond the 75 concordance lines of ‘content’ that exhibit one or more of the eight forms of vagueness listed in the section ‘Data and method’, YouTube’s vocabulary deserves brief attention. The SM provider draws on lexis that may leave content creators unclear about its meaning, because that vocabulary requires a ‘shared cultural frame of reference’ (Evison et al., 2007: 139) between text producer and text audience. However, the policies are applied globally, and the texts setting them out address a global audience of content creators. Using signifiers whose signifieds depend on cultural context can therefore be seen as a form of vagueness that may leave creators uncertain about what content is advertiser friendly. 20 As noted above, quantifying this phenomenon is not the aim, but its recurrence across the text corpus deserves mention. In excerpt (17), the phrase ‘sexualised themes’ is one such case. Whether content features ‘sexualised themes’ is not an objective matter. It depends on cultural norms and on subjective views shaped by those norms:

(17) Content that features highly sexualised themes is not suitable for advertising;

(18) Controversial issues and sensitive events: Content that features or focuses on sensitive topics or events is generally not suitable for ads.

In (18), YouTube employs the same form of imprecision. As with ‘sexualised themes’, the referents of ‘sensitive events’/‘sensitive topics’ and ‘[c]ontroversial issues’ are culturally specific or even depend on subjective interpretation. 21 Moreover, the degree of sensitivity or controversy around particular events and topics may vary across local contexts. A topic may be controversial in one region but not in another. LGBTQI+ rights, for example, inspire controversy in some settings but not in others.

Implications and conclusion

Overall, the empirical analysis found that YouTube uses vagueness extensively in its policy texts. The examination of the concordance lines of ‘content’ has shown that 26% of them contain at least one of eight forms of vagueness regarding (1) what YouTube considers advertiser-friendly content and (2) the monetisability of particular content. 22 I would argue that this extensive use of vagueness is not entirely accidental, since the vagueness benefits YouTube. The company is, of course, generally free to decide what content to (de)monetise. Even so, the vagueness of its policy texts gives it more argumentative ‘wiggle room’. Vaguely phrased policies leave room for manoeuvre and make it difficult to challenge the SM provider’s decisions about what, precisely, counts as ad-friendly content and why. Given the large volume of content uploaded to YouTube every day, the interpretative freedom afforded by vagueness may be especially useful, if not essential. It helps keep creators’ challenges and objections to a minimum and thus keeps YouTube operational.

Before examining the effects of YouTube’s use of vagueness in detail, it is important to stress that the very existence of the ad-friendly content guidelines already constitutes a form of economic censorship. These policies encourage or discourage the production of particular content on economic grounds. Creators who wish to fully monetise via advertising must produce content that YouTube deems acceptable to advertisers and that can then generate advertising revenue. Creators therefore have to upload content that fully complies with YouTube’s ad-friendly content guidelines or bear the consequences, namely financial losses.

Moreover, the guidelines’ textual composition may encourage excessive (self-)censorship among creators. YouTube’s extensive use of vagueness may leave content creators uncertain whether their content can earn ad revenue and how to design it to ensure monetisability through ads. This uncertainty might intensify creators’ (self-)censorship. To avoid demonetisation, they may err on the side of caution and avoid producing any content that could be interpreted as violating YouTube’s ad-friendly content policies. They may instead aim for particularly ad-friendly content, that is, content that pushes no boundaries, avoids ideologically charged issues and falls safely within the narrowest category of ad-friendliness. 23 Overall, this may lead to a loss of ‘content plurality’ (Kumar, 2019: 2) on YouTube, at least for content monetised through advertising. Only popular YouTube channels are able to monetise through advertising in the first place (YouTube Partner Programme overview and eligibility, 2021). It is therefore precisely the popular and widely viewed channels whose content is most likely to become blander and less consequential.

This chain of circumstances may hamper YouTube’s ability to serve as a space for free, that is, uncensored, debate, let alone as a public sphere as envisioned by Habermas and others (see, e.g. Eriksen, 2005 for more on the characteristics and types of public spheres). In this chain, YouTube’s vagueness creates uncertainty among creators, which may lead to excessive (self-)censorship and, in turn, to a loss of content plurality. Creators who aim to monetise through advertising must consider carefully which topics to broach and how. Unless YouTube revises its texts to reduce vagueness, its ability to support critical engagement, free expression and information sharing about topical and potentially controversial social, economic or political issues will remain limited, at least for creators monetising through ads.

It may seem that YouTube could still support these aspects for non-monetising creators, but two considerations suggest otherwise. First, YouTube has an interest in foregrounding content that contains ads. It may, for example, have its algorithm prioritise recommending such content to the platform’s audience. For YouTube to generate advertising revenue, content containing ads has to be watched, so it is in YouTube’s interest to make such content especially accessible. I would therefore argue that the visibility of innocuous, tame ad-friendly content may increase. Second, the SM provider only permits creators who are already popular to monetise via ads (YouTube Help, 2021). Major creators who are widely viewed and dominate platform traffic are therefore likely to produce ad-friendly content. It may then fall to minor, more obscure and niche creators to provide critical engagement with difficult issues on YouTube.

Future research should address two questions. The first is whether this SM platform, with its for-profit orientation and its various affordances and characteristics, may still give rise to an arena of critical engagement of the kind discussed in the section ‘Social media: arenas for free debate versus corporate censorship’. The second is whether the theoretical considerations resulting from my empirical analysis are reflected in how YouTube content evolves. Research should therefore examine YouTube content for evidence of a loss of content plurality, especially among elite monetising content creators. This is an important avenue of research, not least because it can show whether Habermas’ (1990) and later Fuchs’ (2015) argument that the commercialisation of media leads to a tabloidisation of their content (see ‘Social media: arenas for free debate versus corporate censorship’) holds true.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.